Abstract

Over the past decade, many approaches have been introduced for traffic speed prediction. However, providing fine-grained, accurate, time-efficient, and adaptive traffic speed prediction for a growing transportation network where the size of the network keeps increasing and new traffic detectors are constantly deployed has not been well studied. To address this issue, this paper presents DistTune based on long short-term memory (LSTM) and the Nelder-Mead method. When encountering an unprocessed detector, DistTune decides if it should customize an LSTM model for this detector by comparing the detector with other processed detectors in the normalized traffic speed patterns they have observed. If a similarity is found, DistTune directly shares an existing LSTM model with this detector to achieve time-efficient processing. Otherwise, DistTune customizes an LSTM model for the detector to achieve fine-grained prediction. To make DistTune even more time-efficient, DisTune performs on a cluster of computing nodes in parallel. To achieve adaptive traffic speed prediction, DistTune also provides LSTM re-customization for detectors that suffer from unsatisfactory prediction accuracy due to, for instance, changes in traffic speed patterns. Extensive experiments based on traffic data collected from freeway I5-N in California are conducted to evaluate the performance of DistTune. The results demonstrate that DistTune provides fine-grained, accurate, time-efficient, and adaptive traffic speed prediction for a growing transportation network.

Traffic speed is a key indicator for measuring the efficiency of a transportation network. Accurate traffic speed prediction is therefore crucial to achieve proactive traffic management and control for transportation networks. During the past decade, many approaches and methods have been introduced for traffic speed prediction. Each of them can be summarized as learning a mapping function between input variables and output variables. These methods can be classified into two main types: parametric and nonparametric. Parametric approaches simplify the mapping function to a known form, i.e., they require a predefined model. A typical example is the autoregressive integrated moving average approach (ARIMA) ( 1 ). By contrast, nonparametric approaches do not require a predefined model structure. Typical examples include the k-nearest neighbors (k-NN) method ( 2 , 3 ), artificial neural network (ANN) ( 4 ), and recurrent neural network (RNN) ( 5 ).

However, providing fine-grained, accurate, time-efficient, and adaptive traffic speed prediction for a growing transportation network where the size of the network keeps increasing and new traffic detectors are constantly deployed on the network has not been well studied. To address this issue, this paper proposes a solution based on long short-term memory (LSTM) ( 6 ), which is a special type of RNN. Prior studies such as Ma et al. ( 7 ), Yu et al. ( 8 ), and Zhao et al. ( 9 ) have shown that LSTM is superior in time series prediction and provides better prediction accuracy than many existing approaches and neural networks, including Elman NN ( 7 ), Time-delayed NN ( 7 ), Nonlinear Autoregressive NN ( 7 ), support vector machine ( 7 ), ARIMA ( 1 ), and the Kalman Filter approach ( 10 ). Therefore, LSTM is chosen as our building block.

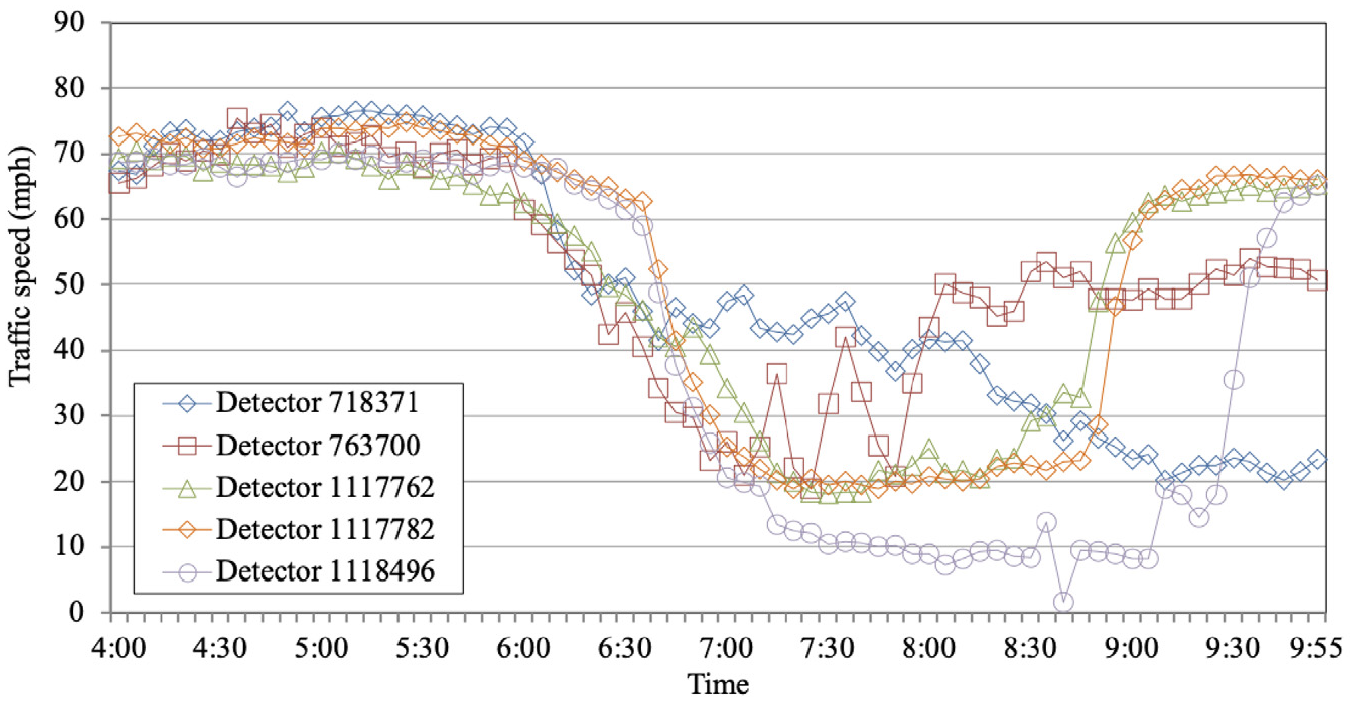

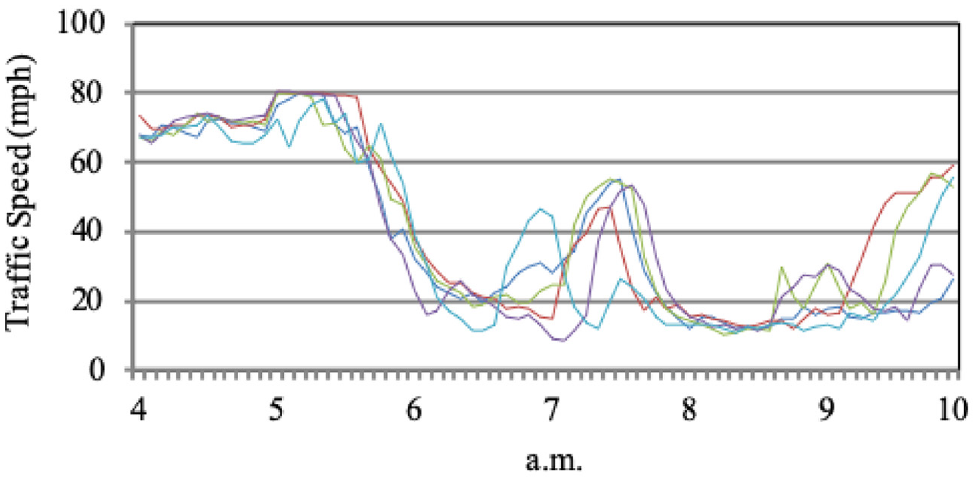

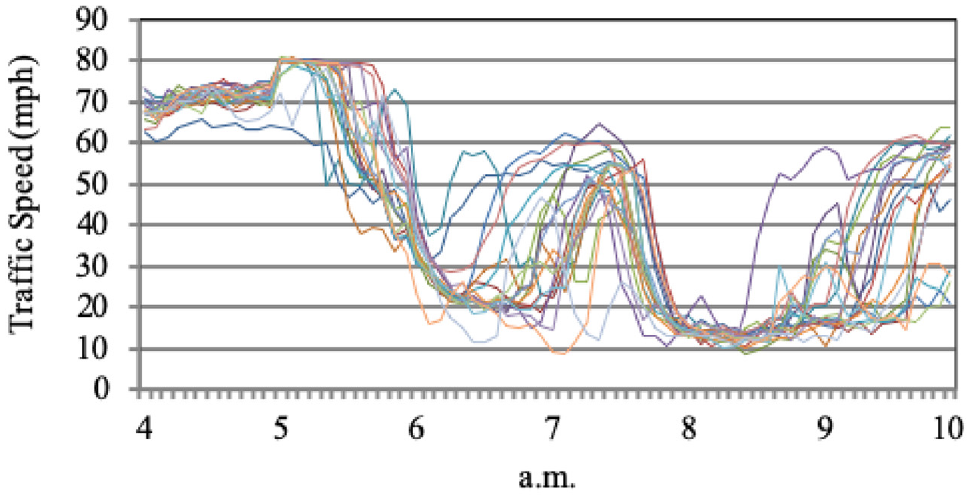

However, several challenges exist and several issues must be addressed to achieve the above-mentioned goal (i.e., fine-grained, accurate, time-efficient, and adaptive traffic speed prediction for a growing transportation network). For instance, detectors such as loop sensors and traffic cameras in a transportation network are deployed in different places to collect and monitor traffic data. Depending on the density of nearby population and other factors, the traffic speed observed/collected by detectors at different locations may not be the same. For example, Figure 1 illustrates the traffic speed observed by five detectors deployed on freeway I5-N in California ( 11 ) in a typical weekday. We can see that the observed traffic speeds were similar to each other between 4 a.m. and 6 a.m. However, the pattern of the traffic speed became different and diverse from 6 a.m. Therefore, we recommend that each detector should have its own LSTM model to predict the traffic speed of its coverage so as to provide fine-grained traffic speed prediction and achieve better transportation management and services. However, such an approach is expensive and impractical because training an LSTM model for each individual detector in the growing transportation network is required and that each training process is in general time-consuming.

The traffic speed collected by five detectors on freeway I5-N in California between 4 a.m. and 10 a.m. in a typical weekday ( 12 ).

Another issue is how to achieve satisfactory prediction accuracy and continuously maintain satisfactory accuracy over time for every single detector in the growing transportation network. It is well-known that the success of LSTM in achieving satisfactory prediction accuracy relies on an appropriately configured set of hyperparameters, which are parameters whose values are set before the training process of LSTM starts. These hyperparameters include the learning rate and the number of hidden layers. Determining appropriate values for LSTM hyperparameters is usually done manually by trial and error, which might be time-consuming, and may not be able to guarantee good prediction accuracy. In addition, an LSTM model might not be able to keep offering satisfactory prediction accuracy because the traffic speed pattern observed by the corresponding detector may change over time.

To summarize, this paper attempts to address the following challenges:

How can we automatically customize an LSTM for each single detector (i.e., appropriately configuring LSTM hyperparameters) in a growing transportation network such that the corresponding LSTM model provides satisfactory prediction accuracy?

How can we time-efficiently perform automatic LSTM customization for the increasing number of detectors in growing transportation networks?

How can we keep maintaining satisfactory prediction accuracy for every single detector in a time-efficient way?

To address the above challenges, in this paper, we propose DistTune, which is a distributed scheme to automatically customize LSTM models and constantly provide satisfactory prediction accuracy for every single detector in a growing transportation network. DistTune customizes an LSTM model for a detector by automatically tuning LSTM hyperparameter values and training the corresponding LSTM models based on the Nelder-Mead method (NMM) ( 13 ), a commonly applied method used to find the minimum or maximum of an objective function in a multidimensional space. The reason why NMM is employed will be explained later in this paper.

However, simply using NMM to gradually customize an LSTM model for every single detector is not a scalable solution since the number of detectors in a growing transportation network might be very large and increasing. To enhance scalability and efficiency, DistTune allows detectors to share the same LSTM model if these detectors have observed similar traffic patterns. In addition, DistTune is designed in an incremental, distributed, and parallel manner, and runs on a cluster of computing nodes to accelerate all required LSTM customization processes.

Whenever DistTune encounters an unprocessed detector Di, it checks if the traffic speed pattern observed by Di is similar to that observed by any other detector whose LSTM model has been customized by DistTune. If the answer is positive and the traffic speed pattern observed by Di is similar to that observed by detector Dj, DistTune directly shares Dj’s LSTM model with Di without customizing an LSTM model for Di. However, if the answer is negative, DistTune requests an available computing node from the cluster to customize an LSTM model for Di. To guarantee and maintain satisfactory prediction accuracy, DistTune keeps track of the prediction accuracy of all detectors. If any LSTM model is unable to achieve the desired prediction accuracy because of a change in the traffic pattern or another reason, DistTune requests an available computing node to re-customize an LSTM model for the corresponding detector.

To demonstrate the performance of DistTune, we conducted three extensive experiments on an Apache Hadoop YARN cluster using the traffic data collected by detectors on freeway I5-N in California. In the first experiment, we designed several scenarios to evaluate the auto-tuning effectiveness of DistTune. The results show that auto-tuning LSTM hyperparameters leads to higher prediction accuracy than manually configuring LSTM hyperparameters. In the second experiment, we evaluated the auto-sharing LSTM performance of DistTune. The results indicate that DistTune significantly reduces the time for LSTM customization. In the last experiment, we evaluated the LSTM re-customization performance of DistTune under two types of re-customization. One is to start from scratch. The other one is based on Transfer Learning ( 14 ). Surprisingly, the results show that following the concept of Transfer Learning does not bring significant benefits if time consumption and prediction accuracy are both considered. The contributions of this paper are as follows:

Two well-known hyperparameter optimization approaches are evaluated and compared through empirical experiments: NMM and the Bayesian optimization approach (BOA) ( 15 ). The results suggest that the former method suits DistTune better because of its efficiency in finding appropriate LSTM hyperparameter values for achieving the desired prediction accuracy.

The proposed DistTune enables detectors to share the same LSTM model, and it provides an LSTM re-customization service for all detectors when any of them fails to provide satisfactory prediction accuracy. The design of DistTune addresses the time-consuming and computation-intensive issues of LSTM customization for the tremendous number of detectors in a growing transportation network.

The performance of DistTune is carefully evaluated through extensive experiments based on traffic data collected by detectors on freeway I5-N in California. The results suggest that DistTune provides fine-grained, accurate, time-efficient, and adaptive traffic speed prediction for a growing transportation network.

The rest of the paper is organized as follows: the next section provides background and related work. We then evaluate NMM and BOA, and explain why NMM is chosen by DistTune to autotune LSTM hyperparameters. We then introduce the details of DistTune and evaluate the performance of DistTune, respectively. This is followed by a discussion of the training data set we chose for conducting our experiments. The paper concludes with an outline of future work.

Background and Related Work

In this section, we briefly introduce LSTM and discuss related work.

LSTM and its Hyperparameters

LSTM is a special type of RNN. Its architecture is similar to that of RNN except that the nonlinear units in the hidden layers are memory blocks. Each memory block consists of memory cells, an input gate, a forget gate, and an output gate. The input gate decides if the input should be stored in the memory cells. The forget gate determines if current memory contents should be deleted. The output gate decides if current memory contents should be output. This design enables LSTM to preserve information over long time lags, thereby addressing the vanishing gradient problem ( 16 ).

According to Lee et al. ( 12 ), the prediction performance of LSTM greatly depends on configuring appropriate values for the following hyperparameters:

Learning rate

The number of hidden layers

The number of hidden units

Epochs

The learning rate controls the amount the weights of LSTM are updated during training. The lower the value, the less is the possibility of missing any local minima, but it might prolong the training process. A hidden layer is where hidden units take in a set of weighted inputs and produce an output through an activation function. More hidden layers are usually required to learn a large and complex training data set. A hidden unit is a neuron in a hidden layer. An inappropriate number of hidden units might cause either overfitting or underfitting. An epoch is defined as one forward pass and one backward pass of all the training data. Too few epochs may underfit the training data, whereas too many epochs might overfit the training data.

It is clear that all the above-mentioned hyperparameters are important to the learning performance and computation efficiency of LSTM. Therefore, DistTune takes all these hyperparameters into consideration when conducting LSTM customization.

Related Work

Over the past two decades, many traffic prediction approaches have been proposed. As briefly mentioned in the introduction, they can be classified into two categories: parametric and nonparametric approaches. In parametric approaches, a model structure (i.e., the mapping function between input variables and output variables) needs to be determined beforehand based on some theoretical assumptions. Usually the model parameters can be derived from empirical data. The ARIMA model is a typical and widely used parametric approach ( 17 ). Many ARIMA-based approaches were also introduced to improve prediction accuracy, including Lee and Fambro ( 18 ), Williams ( 19 ), and Williams and Hoel ( 20 ).

Unlike parametric approaches, nonparametric approaches do not require a predefined model structure (i.e., there is no need to make assumptions about the mapping function). Typical examples include k-NN, ANN, RNN, hybrid approaches, and so forth. The k-NN method was used in Davis and Nihan ( 2 ) to forecast freeway traffic. Several variants of the k-NN and RNN methods were then introduced for traffic prediction, such as Bustillos and Chiu ( 3 ) and Cui et al. ( 21 ). Le et al. ( 22 ) addressed traffic speed prediction using big traffic data obtained from static sensors and proposed local Gaussian processes to learn and make predictions for correlated subsets of data. Jiang and Fei ( 23 ) introduced a data-driven vehicle speed prediction method based on Hidden Markov models. Ma et al. ( 7 ) employed LSTM to forecast traffic speed using remote microwave sensor data. However, all the above approaches focus on predicting traffic on a fix-length road section or a fix-sized region. Unlike these approaches, DistTune is a flexible scheme for a growing transportation network since it is able to incrementally customize LSTM models for any detectors that are newly deployed on a transportation network. This property makes DistTune a flexible and scalable solution for a growing transportation network.

Ma et al. ( 24 ) used deep learning theory to predict traffic congestion evolution in large-scale transportation networks. Furthermore, Ma et al. ( 25 ) predicted traffic speed in large-scale transportation networks by representing network traffic as images and employing convolutional neural networks to make predictions. However, both of these methods require the scale of the target transportation network to be fixed and specified in advance, which is not required when DistTune is employed. DistTune can handle an increasing number of detectors on the fly without requiring the scale of the target transportation networks to be specified beforehand.

More recently, Lee and Lin ( 26 ) formulated the problem of customizing an LSTM model into a finite Markov decision process and then introduced a distributed approach called DALC to automatically customize LSTM models for detectors in large-scale transportation networks. However, DALC only focuses on two LSTM hyperparameters (the number of hidden layers and epochs) using a fixed state transition graph. Unlike DALC, our DistTune considers two more LSTM hyperparameters without using a finite Markov decision process. DistTune is, therefore, more flexible than DALC. In 2020, Lee et al. ( 12 ) introduced DistPre to provide find-grained traffic speed prediction for large-scale transportation networks based on LSTM customization and distributed computing. However, the LSTM models generated by DistPre cannot be updated to adapt to traffic pattern changes. Therefore, DistPre might not be able to maintain satisfactory prediction accuracy for every single detector in a growing transportation network over time.

Evaluation of BOA and NMM

Hyperparameter optimization is the process of finding appropriate values for the hyperparameters of a training algorithm such that the algorithm can achieve desirable results. Existing approaches include NMM, the Bayesian optimization approach (BOA), grid search, random walk, random search, genetic algorithm, greedy search, simulated annealing, particle swarm optimization, etc. The evaluation of these approaches can be found in, for example, Matuszyk et al. ( 27 ) and Thornton et al. ( 28 ).

Among these approaches, BOA has gained great popularity in recent years in a wide range of areas as a result of its power and efficiency. BOA was designed to find the optimal value of a black-box objective function. In our context, the black-box objective function refers to an LSTM model, and the optimal value refers to a hyperparameter setting (i.e., assigning a value to each LSTM hyperparameter we consider). With this optimal hyperparameter setting, the LSTM model is able to provide satisfactory prediction accuracy.

In BOA, the uncertainty of the objective function across not-yet evaluated values is modeled as a prior probability distribution (called prior), which captures our beliefs about the behavior of the function. After gathering the function evaluations (i.e., the prediction accuracy of an LSTM model under a particular hyperparameter setting), the prior is updated to form the posterior distribution over the objective function. The posterior distribution is then used to construct an acquisition function that will select the most promising value (i.e., the most promising hyperparameter setting) for next evaluation. The above process iterates toward an optimum. Examples of acquisition functions include, for instances, probability of improvement, expected improvement, and Bayesian expected losses ( 29 ). All of them try to use and balance exploration and exploitation to minimize the number of function queries. This is why BOA is suitable for functions that are expensive to evaluate. An in-depth review of BOA can be found in Brochu et al. ( 30 ).

NMM is another popular optimization method for nonlinear functions. This method does not require any derivative information, making it suitable for problems with non-smooth functions. NMM minimizes an objective function by generating an initial simplex based on a predefined vertex and then performing a function evaluation at each vertex of the simplex. Note that a simplex has

A sequence of transformations is then performed iteratively on the simplex, aiming to decrease the function values at its vertices. Possible transformations include reflection, expansion, contraction, and shrinking. The above process terminates when the sample standard deviation of the function values of the current simplex fall below some tolerance. We refer readers to Singer and Nelder ( 31 ) for more details about these transformations.

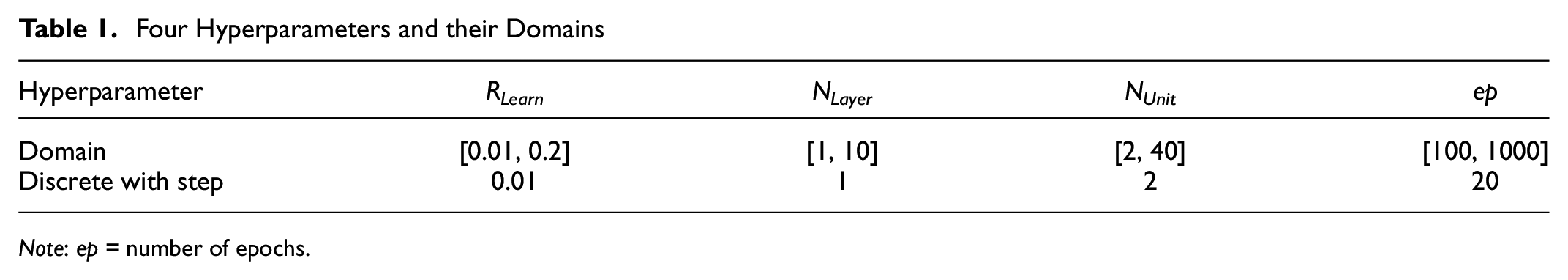

In this paper, we focus on evaluating BOA and NMM to see which of them suits DistTune better, i.e., which of them can more time-efficiently find LSTM hyperparameters for detectors such that the resulting LSTM models are able to achieve the desired prediction accuracy. In this experiment, BOA and NMM focus on auto-tuning the four above-mentioned LSTM hyperparameters: learning rate (denoted by RLearn), the number of hidden layers (denoted by NLayer), the number of hidden units (denoted by NUnit), and the number of epochs (denoted by ep). Table 1 presents the domains of these hyperparameters.

Four Hyperparameters and their Domains

Note: ep = number of epochs.

To fairly evaluate and compare BOA and NMM, we selected the five detectors shown in Figure 1 to be their targets. It is clear from Figure 1 that the traffic speed patterns observed by detectors 1117762 and 1117782 are similar to each other, and the traffic speed patterns observed by the other three detectors are diverse. These phenomena reflect the traffic speed patterns observed by detectors in a real transportation network. This is why we chose these five detectors to evaluate BOA and NMM. If one of these two approaches is able to more time-efficiently autotune LSTM hyperparameters for these five detectors than the other such that the corresponding models achieve the desired prediction accuracy, it is likely that this approach can offer better time efficiency than the other when it is adopted by DistTune in a growing transportation network.

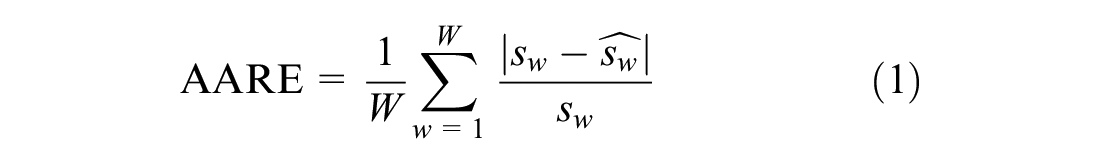

In this evaluation, each of these five detectors has the same size of training data (i.e., traffic speed values from five working days with the collection interval of every 5 min). Two metrics were used for the comparison: average absolute relative error (AARE) and LSTM customization time (LCT). AARE is a well-known measure for the prediction accuracy of a forecast method ( 32 ), which is defined as follows:

where

W is the total number of data points considered for comparison,

w is the index of data point,

sw is the actual traffic speed value at w, and

The lower AARE is, the higher prediction accuracy the forecast method has. The second metric (i.e., LCT) is the time taken by BOA and NMM to individually find an LSTM hyperparameter setting for a detector such that the prediction accuracy of the corresponding LSTM model satisfies a predefined AARE threshold, which is 0.05 in this paper. Note that this value is considered satisfactory according to Lee et al. ( 12 ), Lee and Lin ( 26 ), and Xia et al. ( 33 ).

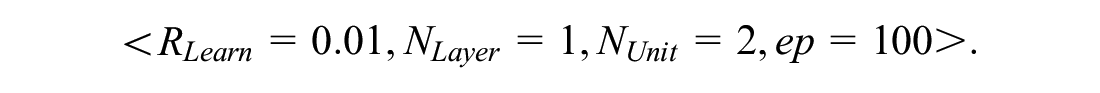

In this experiment, both approaches start with the following default hyperparameter setting, taken from Lee et al. ( 12 ):

As soon as they find a hyperparameter setting with which the corresponding LSTM model has an AARE less than or equal to the AARE threshold, they automatically terminate. However, if they have iterated 20 times without being able to find such a hyperparameter setting, they will be forcibly terminated. This experiment was performed on a laptop running MacOS 10.13.1 with 2.5 GHz Quad-Core Intel Core i7 and 16 GB 1,600 MHz DDR3.

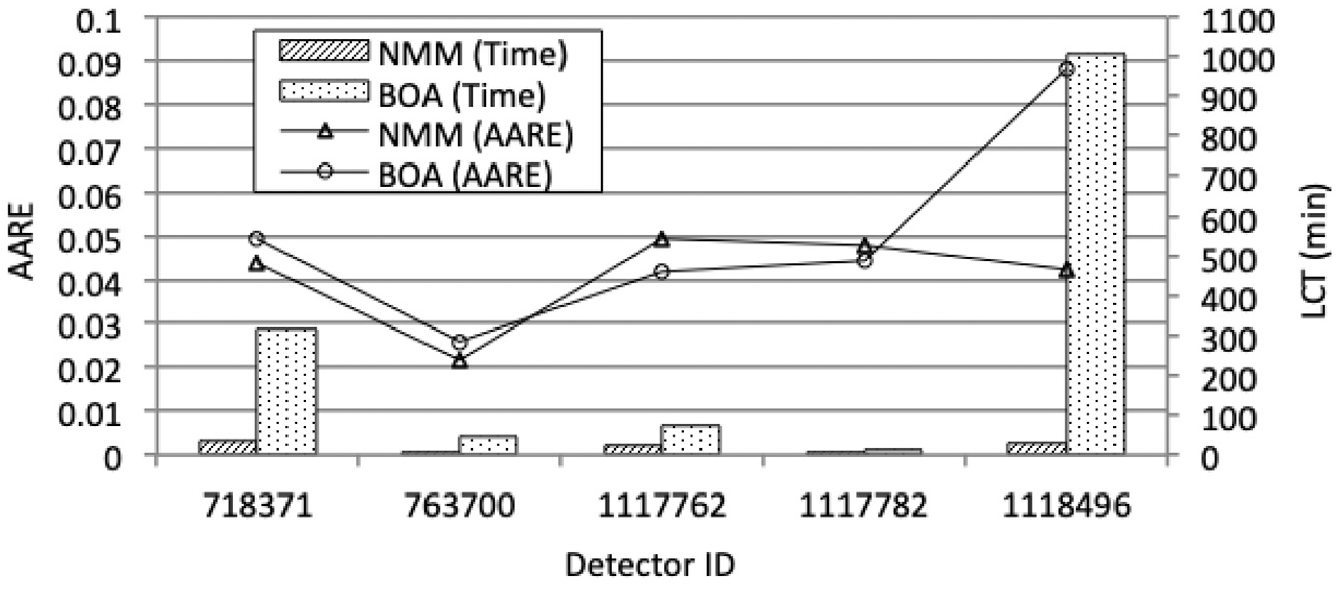

Figure 2 illustrates the performance of BOA and NMM in terms of AARE (presented as marked lines) and LCT (presented as clustered columns). We can see that all the LSTMs customized by NMM satisfy the AARE threshold because their AARE values are all lower than 0.05. However, not all the LSTMs customized by BOA achieve the same good result, especially for detector 1118496. After BOA iterates 20 times, the best AARE value for this detector is still higher than 0.05, and the LCT taken by BOA has reached 1,006 min.

The performance of NMM and BOA on customizing LSTM models for five detectors that observe different traffic speed patterns.

Based on the above results, we conclude that NMM is more suitable than BOA for DistTune because NMM has better efficiency and effectiveness in finding appropriate values for LSTM hyperparameters such that the desired prediction accuracy for detectors can be satisfied. Even though BOA is able to find optimal hyperparameter settings that might be able to reach higher prediction accuracy than NMM, its high time consumption is unaffordable for DistTune. This is why NMM is adopted by DistTune to autotune LSTM hyperparameters.

Methodology of DistTune

In this section, we firstly introduce all algorithms of DistTune and then suggest a customization protocol for practitioners to implement DistTune on their growing transportation networks.

Algorithms

DistTune is designed and implemented as an incremental LSTM auto-tuning, sharing, and re-customization system on a cluster consisting of a master server and a set of worker nodes. The master server determines whether to customize, share, or re-customize LSTM models for individual detectors. Each worker node waits for an instruction from the master server to conduct LSTM customization/re-customization for a given detector.

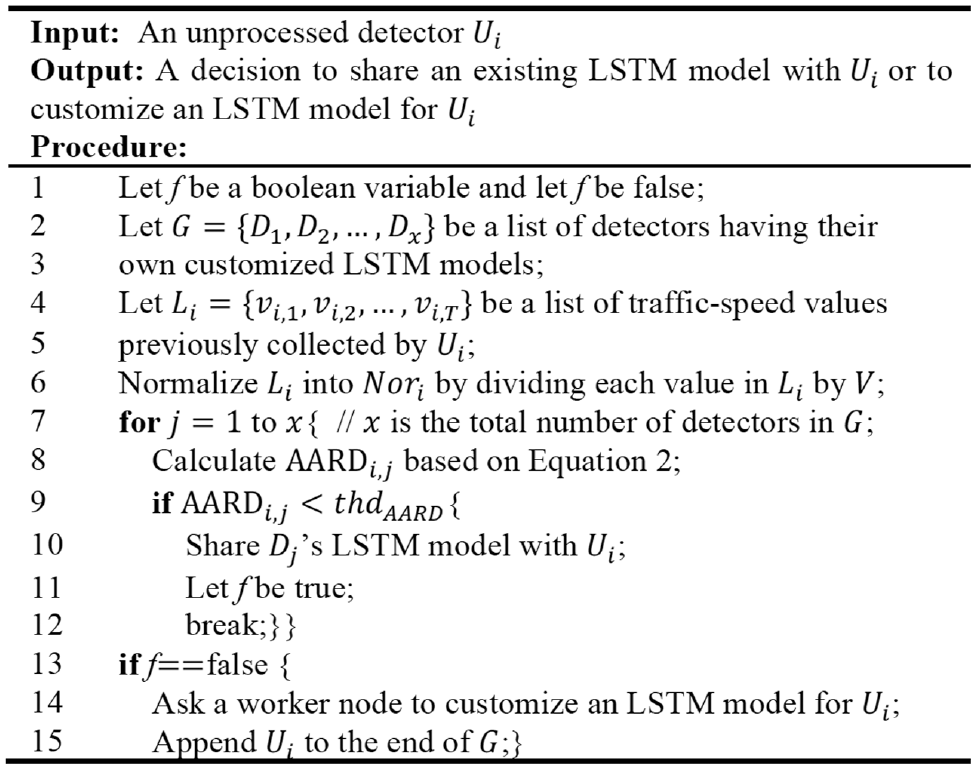

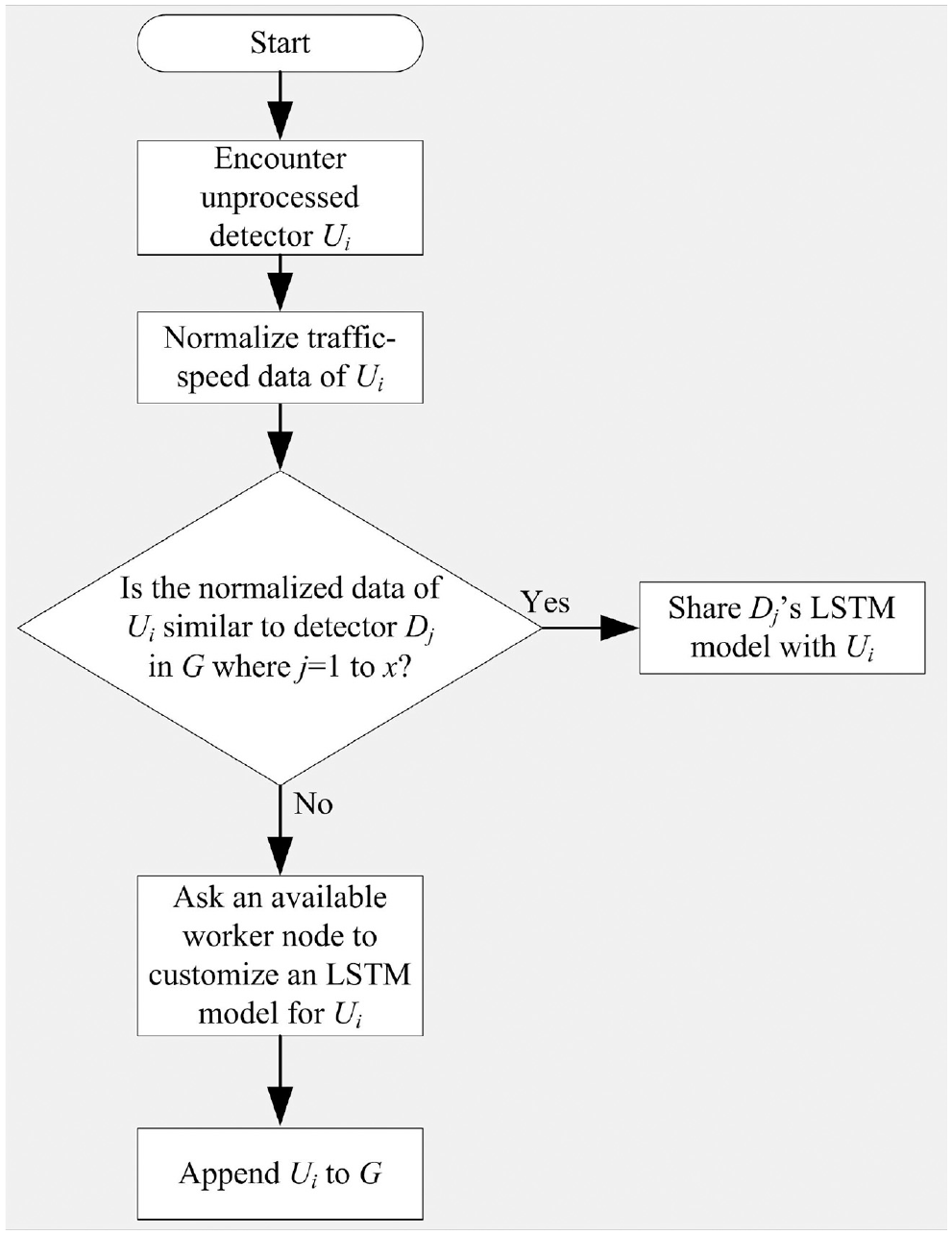

Figure 3 illustrates the LSTM auto-tuning and sharing algorithm running on the master server, and Figure 4 shows the high-level flowchart of the algorithm. Let G=D1,D2, ..., Dx be a list of detectors that have their own LSTM models customized by DistTune. Note that G is empty before DistTune is employed and launched. Whenever encountering an unprocessed detector (denoted by Ui), the algorithm first normalizes a list of traffic speed values observed and collected by Ui. Let Li be the list, and Li={vi,1,vi,2, ..., vi,T} where vi,t is the traffic speed value collected by Ui at time t, t=1,2,. . .,T. The normalization process divides vi,t by V, which is a predefined fixed value (e.g. 70 to represent the speed limit in mph). Let Nori be the normalization result, i.e., Nori={ni,1, ni,2, ..., ni,T} where

The LSTM auto-tuning and sharing algorithm performed by the master server.

The flowchart of the LSTM auto-tuning and sharing algorithm.

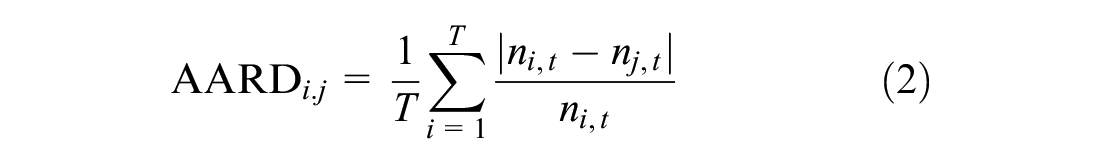

The master server decides if it should customize an LSTM model for Ui by sequentially comparing Ui with every detector (denoted by Dj, j=1,2,. . .,x) in G in terms of their normalized traffic speed pattern based on the following Equation ( 12 ):

where AARDi.j is the average absolute relative difference between the traffic speed patterns collected by Ui and Dj, and nj,t is the normalized traffic speed value collected by Dj at time t, where

In fact, Equation 2 is similar to the AARE equation shown in Equation 1. Recall that a smaller AARE value indicates that what the prediction method predicts is more similar to the actual one. We follow the same concept to propose Equation 2 and use it to measure if two detectors have observed similar normalized traffic speed patterns. If AARDi.j is less than a predefined threshold thdAARD (implying that Ui and Dj observe a similar normalized traffic speed pattern), the master server directly shares Dj’s LSTM model with Ui, without customizing an LSTM model for Ui (see Figure 3: line 9 to line 12).

However, if the master server is unable to find any detector that has observed a similar normalized traffic speed pattern with Ui (i.e., line 13 holds), the master server asks an available worker node to customize an LSTM model for Ui, and then appends Ui to the end of G to indicate that Ui has its own customized LSTM model. Based on how each detector is appended to G, it is clear that every detector in G must have observed a distinct traffic speed pattern.

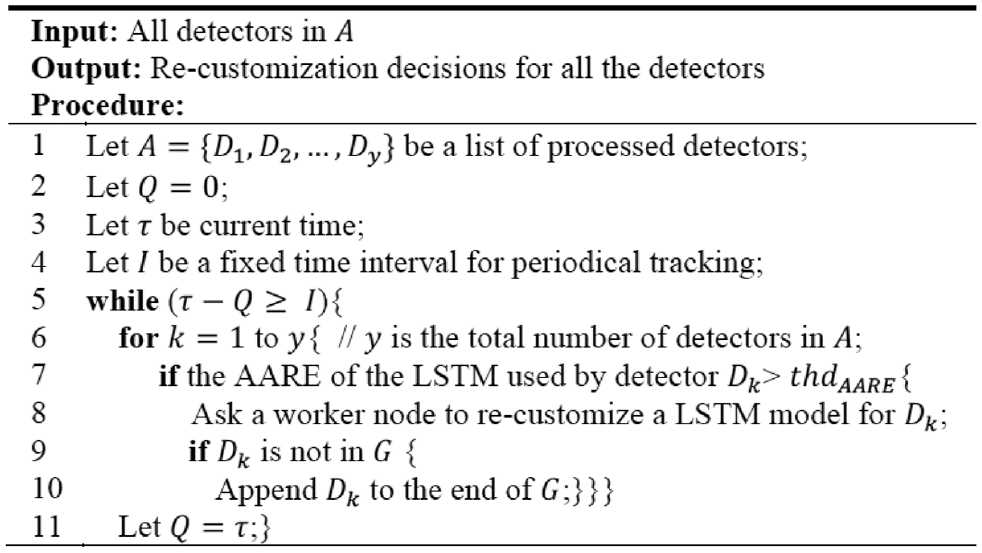

Several factors might stop an LSTM model from providing satisfactory prediction accuracy. For example, the traffic pattern collected by a detector has changed and no longer follows the previous pattern that is used to train the detector’s LSTM model. In order to guarantee fine-grained and satisfactory prediction accuracy for every detector in a growing transportation network, the master server keeps track of every single detector by using the tracking algorithm shown in Figure 5.

The tracking algorithm performed by the master server.

Let A be a list of detectors that have been processed by DistTune, and A=D1,D2, ..., Dy where y≥

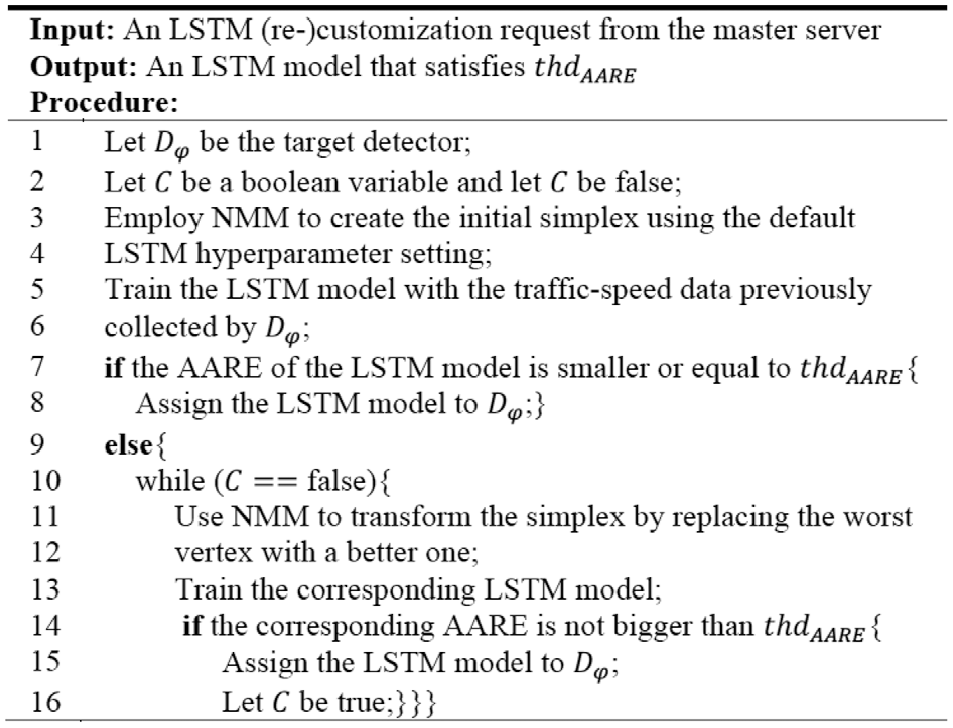

Whenever a worker node receives a customization or re-customization request for detector

The LSTM (re-)customization algorithm performed by every worker node.

However, if the AARE is higher than thdAARE, the worker node keeps using NMM to transform the simplex by replacing the worst vertex with a better one and repeats the same procedure until the function evaluation at a vertex of the simplex is satisfactory. In other words, the worker node terminates when it finds an LSTM model of which the AARE is less than or equal to thdAARE. The corresponding LSTM model is the final output, and it will be used to predict future traffic speed collected by

Customization Protocol

To apply DistTune to a growing transportation network, the following steps should be conducted beforehand:

Choose a target transportation network.

Collect the traffic speed data observed by all detectors in the target transportation network, and then determine the sizes of training data set and testing data set for LSTM customization.

Determine thdAARD. If the value of thdAARD is low, it means that detectors need to have high similarity in their normalized traffic speed patterns to be able to share a single LSTM model.

Determine thdAARE. The lower the value of thdAARE is set, the higher prediction accuracy will be achieved.

Once one has finished the above steps, DistTune can be launched to initiate its traffic speed prediction service.

Performance Evaluation

To evaluate DistTune, we conducted three extensive experiments using traffic data from the Caltrans performance measurement system ( 34 ), which is a database of traffic data collected by detectors placed on state highways throughout California. Freeway I5-N was chosen. This is a major route from the Mexico–United States border to Oregon with a total length of 796.432 miles. In these experiments, DistTune incrementally provides its service until 110 detectors on I5-N are covered to show that DistTune is able to be employed in a growing transportation network and to incrementally customize LSTM models for any detectors that are newly deployed to the network. Note that the distance between two consecutive detectors of the 110 detectors is around 5 miles, and each detector collected traffic data every 5 min. For each detector, we crawled its traffic data for six continuous working days, and then split it into a training data set (the first 5 days) and a testing data set (the last day). In other words, the training data set and testing data set for each detector consist of 1,440 and 288 data points, respectively. Since all the traffic data was aggregated at 5-min intervals, DistTune follows the same interval for prediction. The reason why the training data set is five continuous working days will be discussed in the next section.

In all the experiments, thdAARE=0.05 and thdAARD=0.1 ( 12 ). In this paper, if a detector is able to provide 95% prediction accuracy, we consider it satisfactory. This is why we set thdAARE to be 0.05. The same reason for thdAARD: if two detectors have 90% similarity in their normalized traffic speed patterns, we consider these patterns similar. This is why we set thdAARD to be 0.1. In fact, these two thresholds are configurable if one wants to change the degree of similarity or achieve a different level of prediction accuracy.

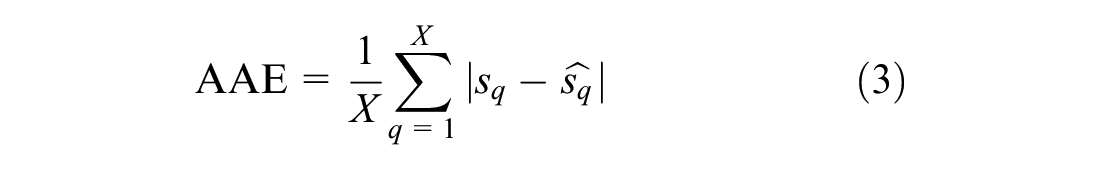

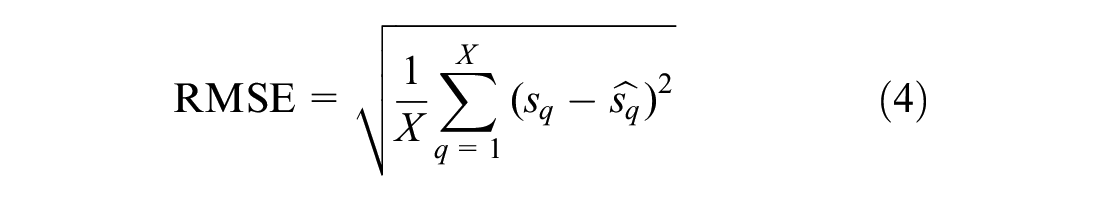

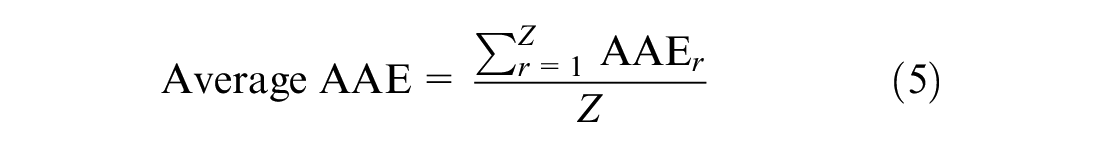

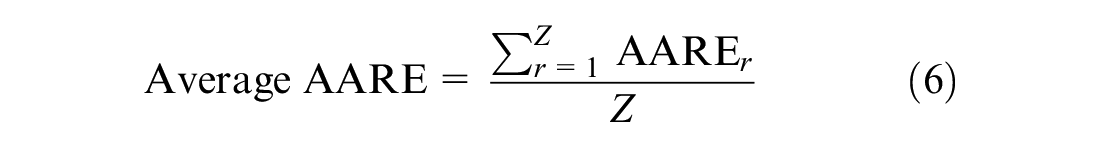

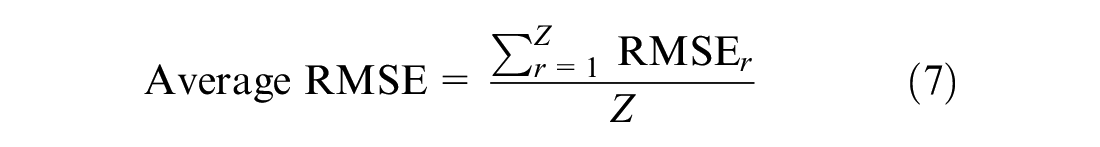

Three widely used performance metrics ( 32 ), which are average absolute error (AAE), AARE, and root mean square error (RMSE), were employed in all our experiments to evaluate LSTM prediction accuracy. Please see Equation 1 for AARE. The equations to calculate AAE and RMSE are as follows:

where

X is the total number of data points for comparison,

q is the index of a data point,

sq is the actual traffic speed value at q, and

Low values for AAE, AARE, and RMSE indicate that the corresponding LSTM model has high prediction accuracy.

Experiment 1

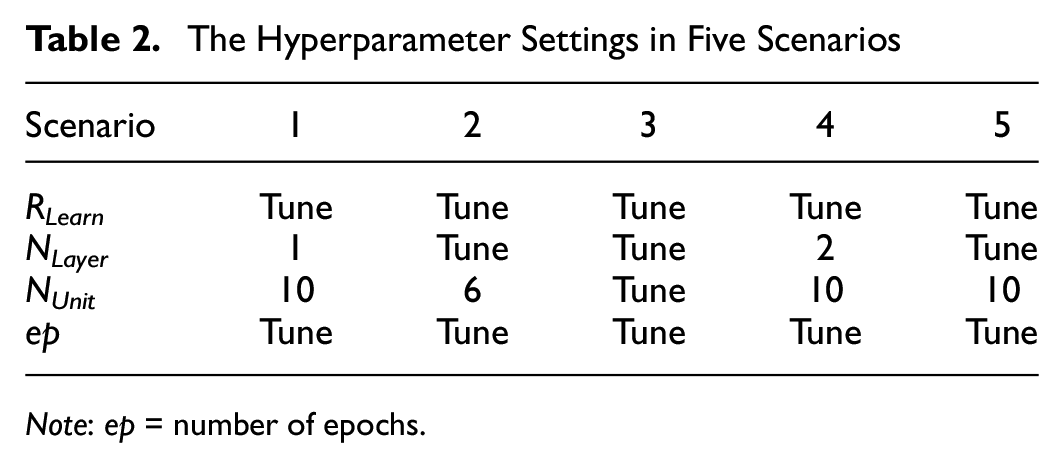

In this experiment, we designed five scenarios (see Table 2) to evaluate the auto-tuning effectiveness of DistTune by temporarily disabling the LSTM sharing function. In other words, every detector in this experiment will always get its own LSTM model customized by DistTune. In Scenario 1, DistTune only autotunes two hyperparameters. The other two hyperparameters are assigned with small values. In Scenario 2, DistTune autotunes one more hyperparameter. In Scenario 3, all of the four hyperparameters are automatically tuned by DistTune. Scenarios 4 and 5 are similar to Scenarios 1 and 2, respectively, but with an equal or a higher value for each non-autotuned hyperparameter. The goal is to see the impact of these increased values on DistTune.

The Hyperparameter Settings in Five Scenarios

Note: ep = number of epochs.

Four performance metrics were used in this experiment:

Cumulative LSTM customization time: the summation of the time taken by DistTune to customize an LSTM model for each detector such that the corresponding LSTM satisfies thdAARE.

Average AAE: the average AAE of all the LSTMs customized by DistTune. See Equation 5.

Average AARE: the average AARE of all the LSTMs customized by DistTune. See Equation 6.

Average RMSE: The average RMSE of all the LSTMs customized by DistTune. See Equation 7.

In the above three equations, Z is the total number of LSTMs (in this experiment, Z equals 110), and r is the index number of an LSTM, r = 1,2,...,Z.

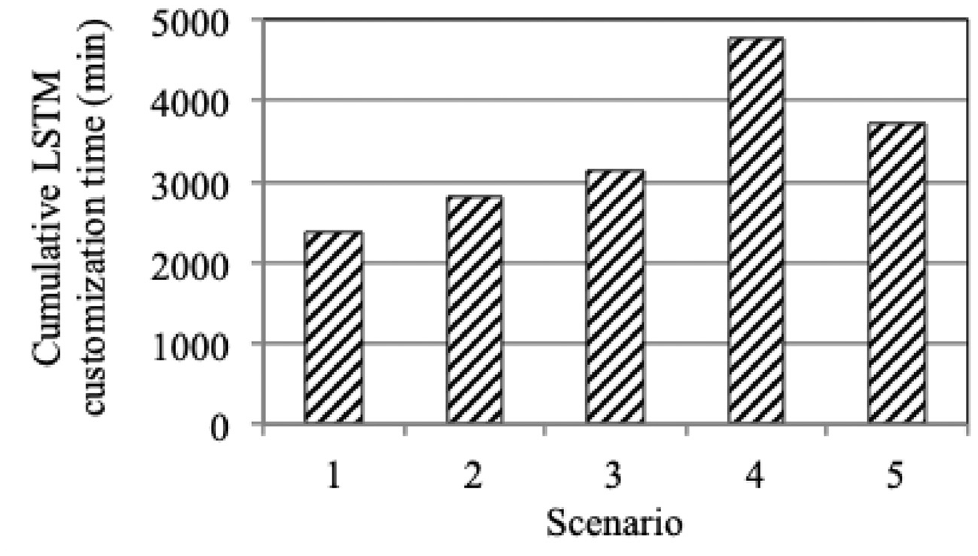

Figure 7 illustrates the cumulative LSTM customization time taken by DistTune in the five scenarios. From the results of the first three scenarios, it seems that the cumulative LSTM customization time increases when more hyperparameters are automatically tuned by DistTune. However, this is not true when the last two scenarios are further considered. We can see that Scenarios 4 and 5 cost more time than Scenario 3, even though they have fewer autotuned hyperparameters than Scenario 3. In other words, increasing the number of autotuned LSTM hyperparameters does not mean that the corresponding LSTM customization time will always increase. The reason why Scenario 4 resulted in the longest LSTM customization time is that the network structure of each LSTM model in Scenario 4 is more complicated than that in all the other scenarios. Therefore, using DistTune to autotune NLayer and NUnit would be more appropriate.

The cumulative LSTM customization time consumed by DistTune in five scenarios when the LSTM sharing function is disabled.

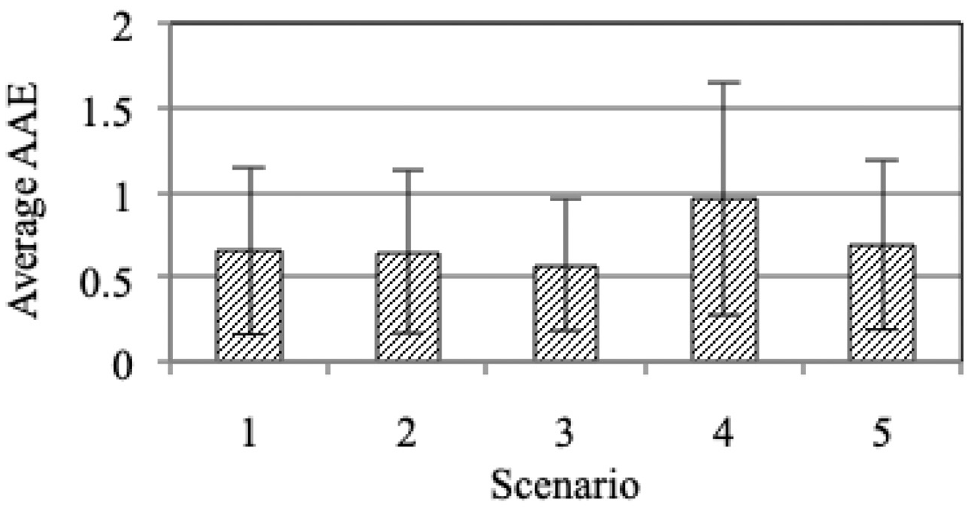

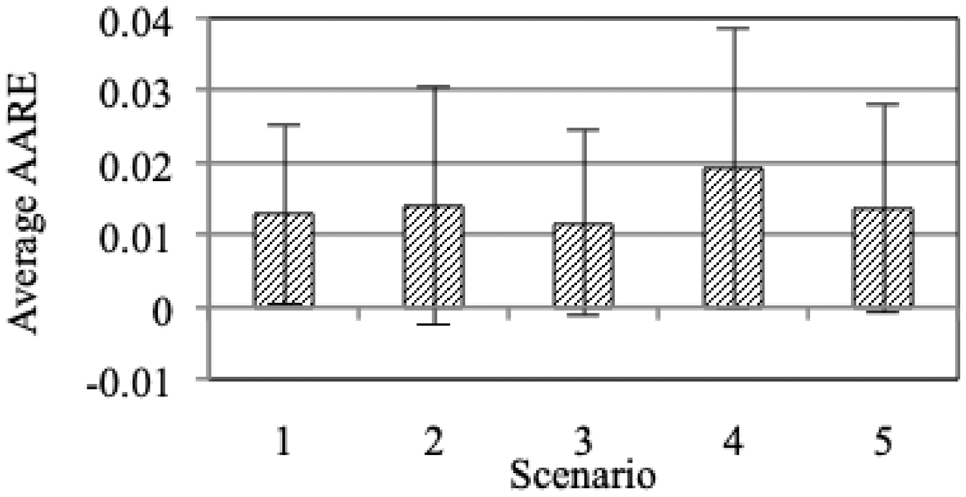

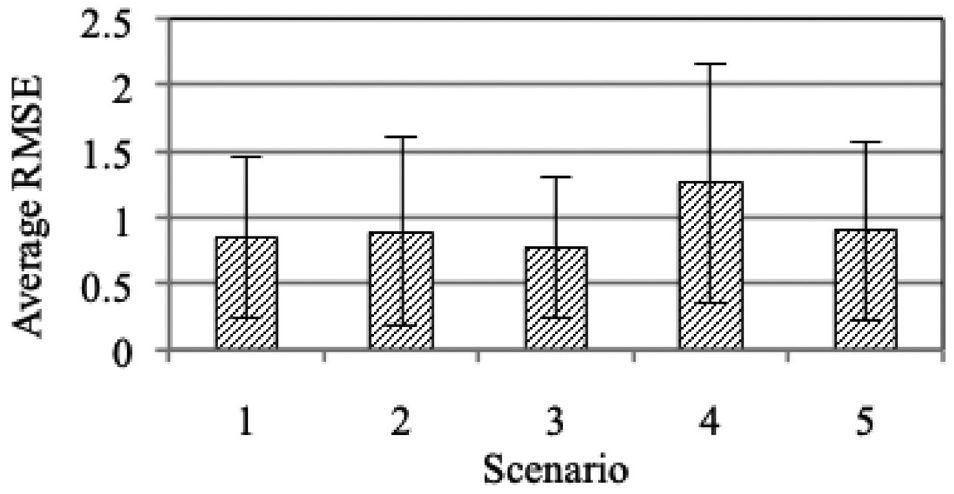

Figures 8 to 10 show the prediction performance of DistTune in the five scenarios when the LSTM sharing function is disabled. It is clear that Scenario 3 has the highest prediction accuracy since its average AAE value, average AARE value, average RMSE value, and the corresponding standard deviation values are the smallest among the five scenarios. The results confirm that auto-tuning all the considered hyperparameters leads to the best prediction accuracy. However, if the cumulative LSTM customization time shown in Figure 7 is further considered, it appears that Scenario 3 is more computationally expensive than Scenarios 1 and 2 due to its significant increase in computation time, compared with its slight improvement in prediction accuracy. This is because DistTune in this experiment disables the LSTM sharing function. DisTune needs to individually customize an LSTM model for every single detector, so the cumulative LSTM customization time of DistTune cannot have a significant improvement. In the next experiment, we will show how DistTune mitigates this issue by enabling the LSTM sharing function.

The average AAE results in five scenarios when the LSTM sharing function of DistTune is disabled.

The average AARE results in five scenarios when the LSTM sharing function of DistTune is disabled.

The average RMSE results in five scenarios when the LSTM sharing function of DistTune is disabled.

Experiment 2

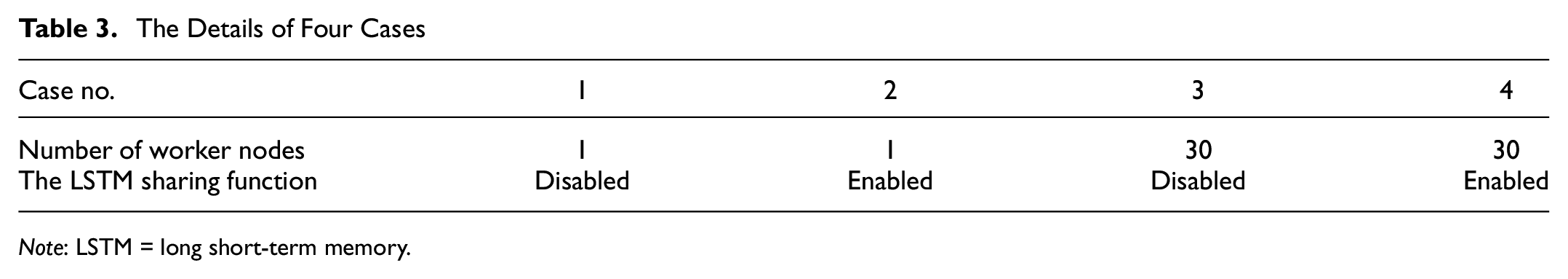

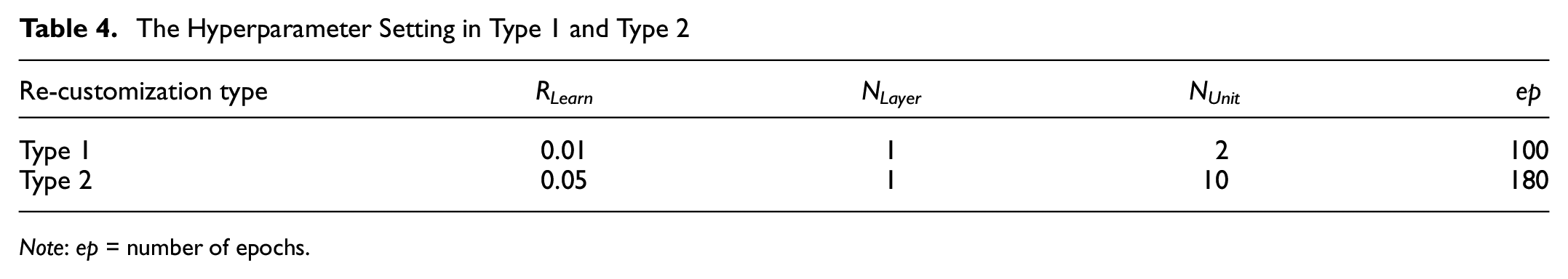

In this experiment, we studied the impact of the LSTM sharing function and the number of worker nodes on the performance of DistTune. To do this, we designed four cases as listed in Table 3. In Cases 1 and 2, the cluster running DistTune has a single worker node. However, the LSTM sharing function of DistTune was disabled in Case 1, whereas it was enabled in Case 2. In Cases 3 and 4, the cluster has 30 worker nodes, and the sharing function was disabled and enabled, respectively. Two performance metrics were employed in this experiment: total LSTM customization time and average AARE. Note that the former is the total elapsed time from when DistTune is launched until all 110 detectors have obtained LSTM models.

The Details of Four Cases

Note: LSTM = long short-term memory.

Figure 11 shows the performance results of DistTune in the four cases. Case 1 is the most time-consuming of the four cases. This is because only one worker node was employed to individually and sequentially customize LSTM models for each of the 110 detectors. This situation turns better in Case 2 since the sharing function was enabled. The total LSTM customization duration reduces by

The performance of DistTune in four cases.

When the 30 worker nodes were used and the sharing function was disabled, i.e. Case 3, the total LSTM customization time reduces to 224 min, meaning that increasing the scale of the cluster and using parallel computing also help improve the performance of DistTune. By further enabling detectors to share their LSTM models without individual customization, i.e. Case 4, the total time drops to only 56 min. The reduction is around

On the other hand, from the perspective of average AARE, both Cases 1 and 3 lead to the same result (around 0.012 as shown in Figure 11). This is because the algorithm used by DistTune to customize LSTM models (i.e., NMM) is deterministic. In other words, the result generated by NMM for a given detector is always identical no matter which worker node performs the task.

For the same reason, the average AARE results in Cases 2 and 4 are identical, but they are a little higher than those in Cases 1 and 3. The reason is that not all the detectors in Cases 2 and 4 have their own customized LSTM models.

In Cases 1 and 3, all the 110 detectors require no re-customization since each of them has a customized LSTM model. However, in Cases 2 and 4, seven out of the 110 detectors require re-customization because the LSTM models that they inherited from other detectors are unable to satisfy thdAARE. Nevertheless, the re-customization ratio is low

Based on all the above results, we conclude that DistTune in Case 4 provides the best trade-off between time efficiency and prediction accuracy, implying that enabling LSTM sharing and running DistTune on a large cluster are both important for DistTune.

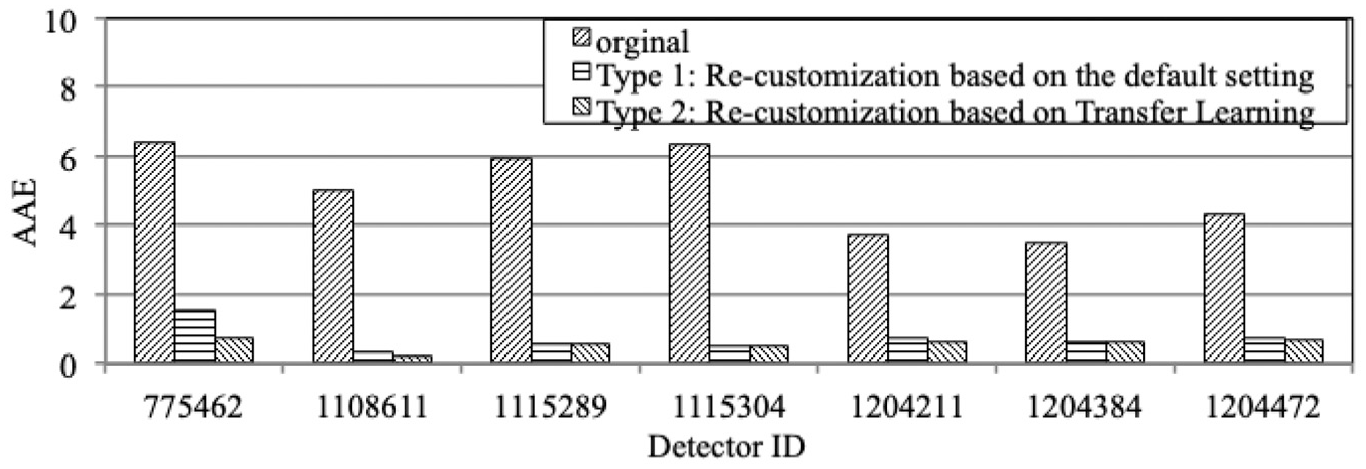

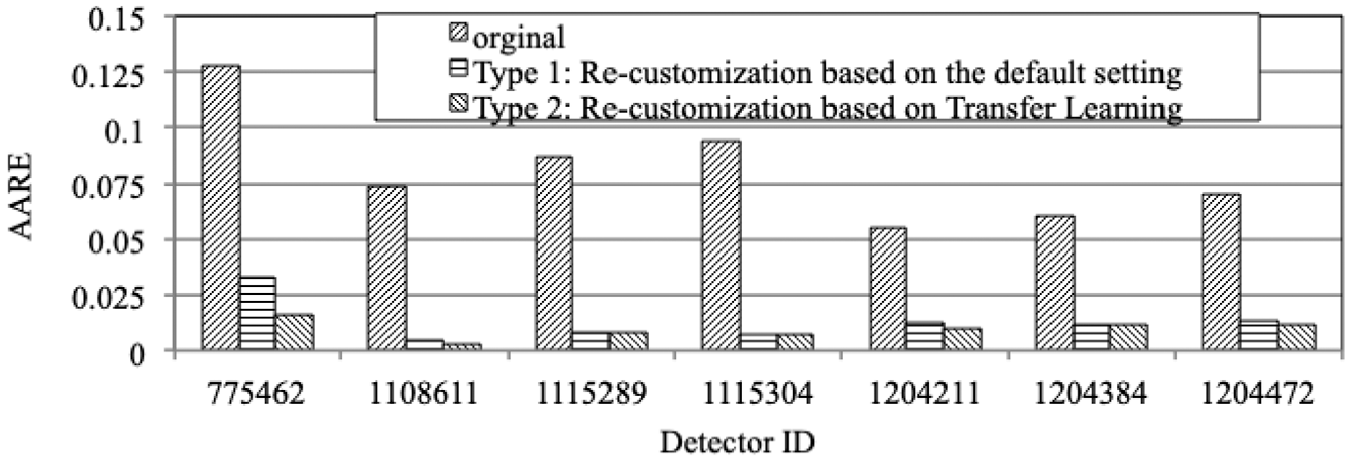

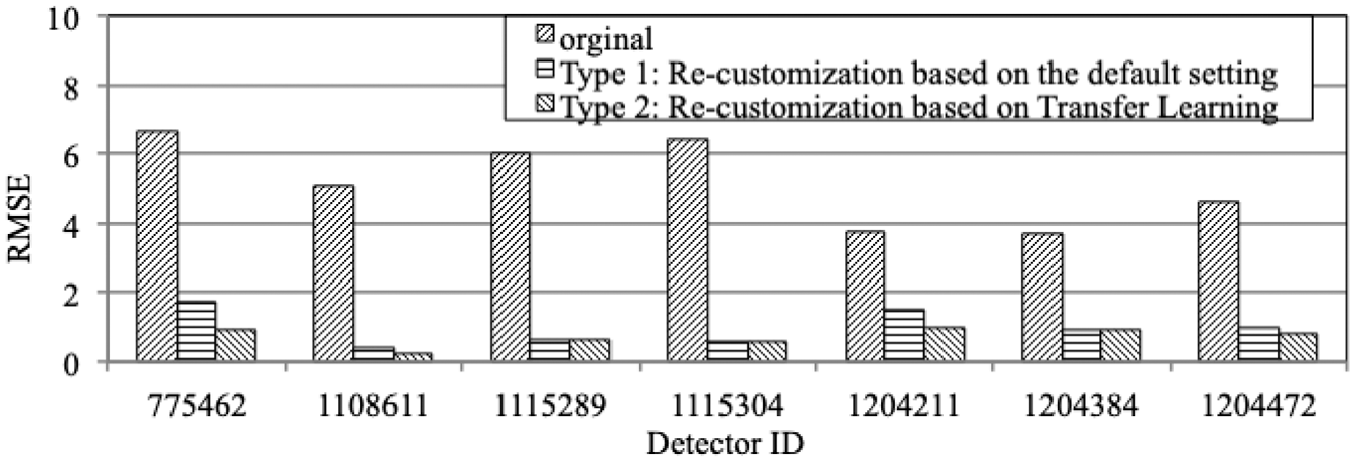

Experiment 3

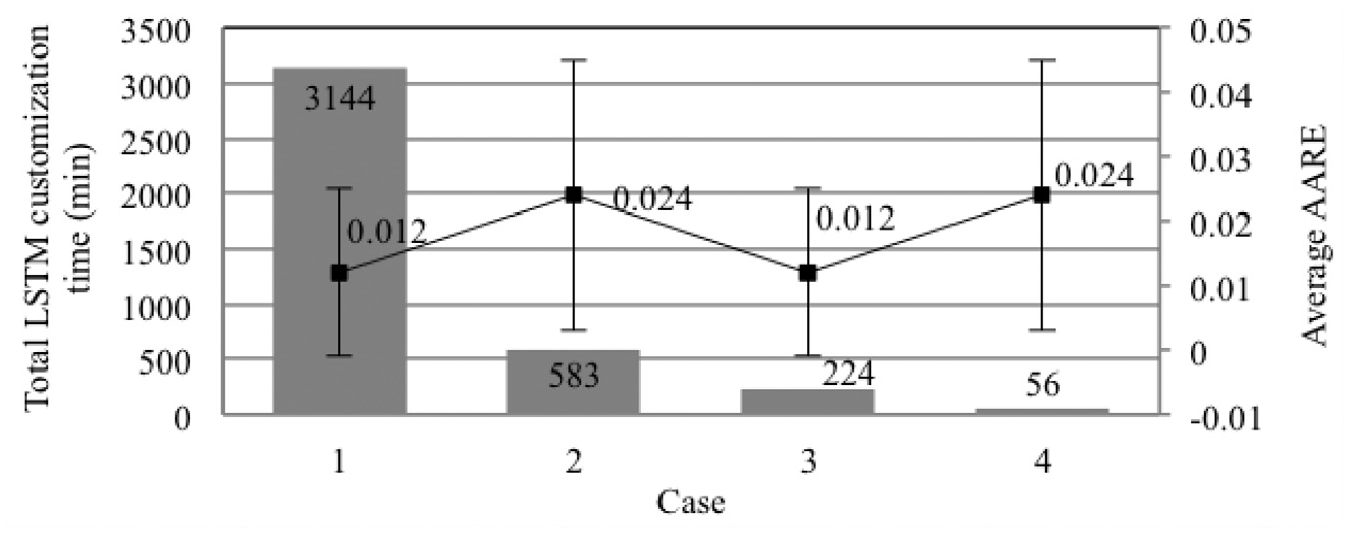

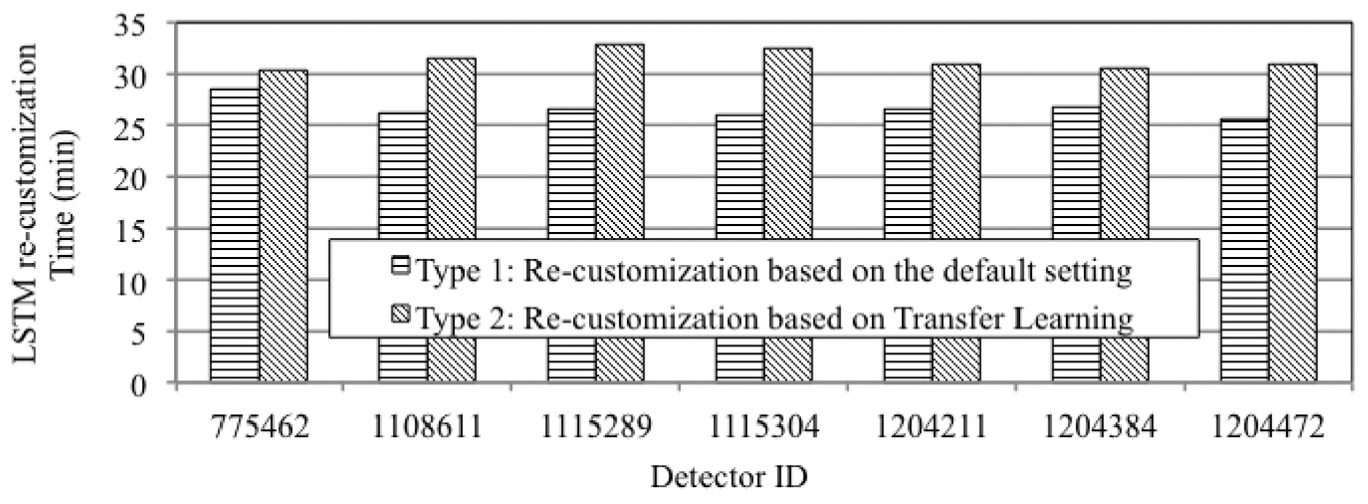

In this experiment, we evaluated the LSTM re-customization performance of DistTune by re-customizing LSTM models for the seven detectors that require re-customization in Cases 2 and 4 in Experiment 2. Two types of LSTM re-customization were considered:

Type 1: based on the default hyperparameter setting.

Type 2: based on Transfer Learning.

In Type 1, DistTune re-customizes an LSTM model for each of the seven detectors by using the default hyperparameter setting (i.e., RLearn=0.01, NLayer=1, NUnit=2, ep=100) to generate the initial simplex for NMM. In Type 2, DistTune re-customizes an LSTM model for detector i by using the LSTM hyperparameter setting of detector j to generate the initial simplex if the LSTM model currently used by detector i is shared by detector j.

Table 4 lists the hyperparameter settings separately used in Types 1 and 2 for the seven detectors. In fact, all the detectors inherited their LSTM models from the same detector (i.e., detector 1118333) since the traffic speed patterns they observed are similar to the one observed by detector 1118333. This is why these detectors have the same hyperparameter setting in Type 2.

The Hyperparameter Setting in Type 1 and Type 2

Note: ep = number of epochs.

Figure 12 shows the LSTM re-customization time for the seven detectors. Surprisingly, Type 2 consumes more time than Type 1 for every detector. The key reason is that the hyperparameter setting in Type 2 contains higher values for NUnit and ep, therefore prolonging the LSTM training time and increasing the required LSTM re-customization time for each detector.

The required LSTM re-customization time for the seven detectors.

Figures 13 to 15 illustrate the prediction performance of the seven detectors before and after the two types of re-customization were individually performed. Both Types 1 and 2 are able to customize an appropriate LSTM model for each of these detectors and considerably reduce all the AAE, AARE, and RMSE values. It is clear that Type 2 leads to slightly better prediction accuracy than Type 1, but Type 2 consumes more processing time than Type 1. Therefore, choosing Type 1 seems to be more economic. For this reason, the re-customization approach of DistTune is based on Type 1, i.e., the default hyperparameter setting.

The AAE results before and after two types of LSTM re-customization are individually performed.

The AARE results before and after two types of LSTM re-customization are individually performed.

The RMSE results before and after two types of LSTM re-customization are individually performed.

Discussion

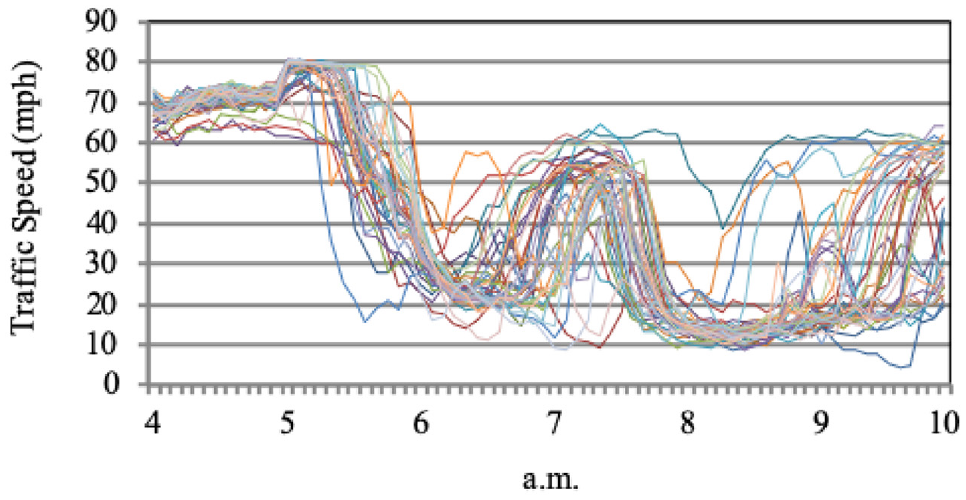

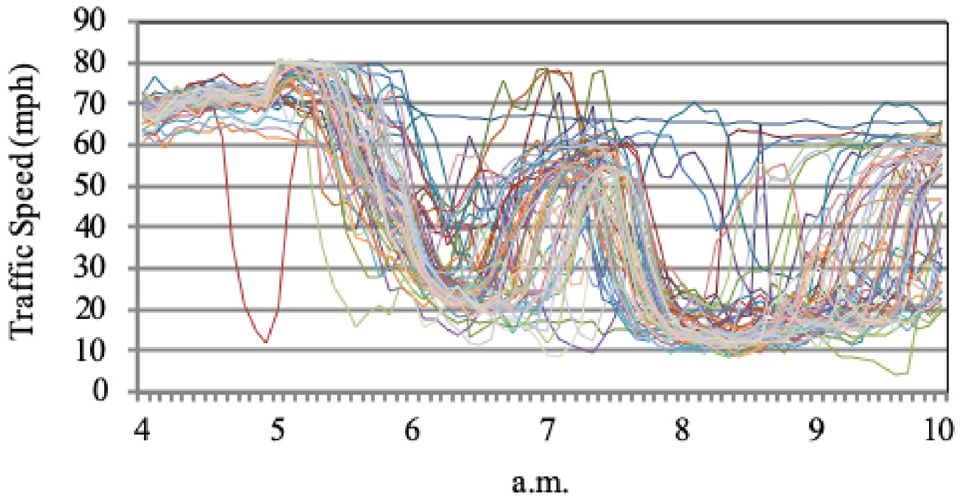

In this section, we discuss and explain why we chose to use the traffic speed data of five continuous working days to be the training data set for every detector in all our experiments, rather than using a longer period of data. According to our observation, not all detectors observe similar traffic speed patterns all the time. To demonstrate this point, we used one detector, i.e. detector 718086, from freeway I5-N as an example. Figures 16 to 19 illustrate the traffic speed data collected by this detector on freeway I5-N between 4 a.m. and 10 a.m. for 1-week working days, 4-week working days, 8-week working days, and 12-week working days, respectively. When the observation period is one week, we can see from Figure 16 that the traffic speed patterns of these five working days have some deviation. This situation gets worse when we increased the observation time. Please see Figures 17 to 19. It is clear that the traffic speed pattern collected by this detector has more variation as the observation period prolongs. Note that this phenomenon not only appears for this detector, we found that it also happens for many other detectors.

The traffic speed pattern of detector 718086 between 4 a.m. and 10 a.m. for 1-week consecutive working days (from Oct 16, 2017 to Oct. 20, 2017).

The traffic speed pattern of detector 718086 between 4 a.m. and 10 a.m. for 4-week consecutive working days (from Sept. 25, 2017 to Oct. 20, 2017, that is, the five working days of four consecutive weeks).

The traffic speed pattern of detector 718086 between 4 a.m. and 10 a.m. for 8-week consecutive working days (from Aug. 28, 2017 to Oct. 20, 2017, that is, the five working days of eight consecutive weeks).

The traffic speed pattern of detector 718086 between 4 a.m. and 10 a.m. for 12-week consecutive working days (from July 31, 2017 to Oct. 20, 2017, that is, the five working days of 12 consecutive weeks).

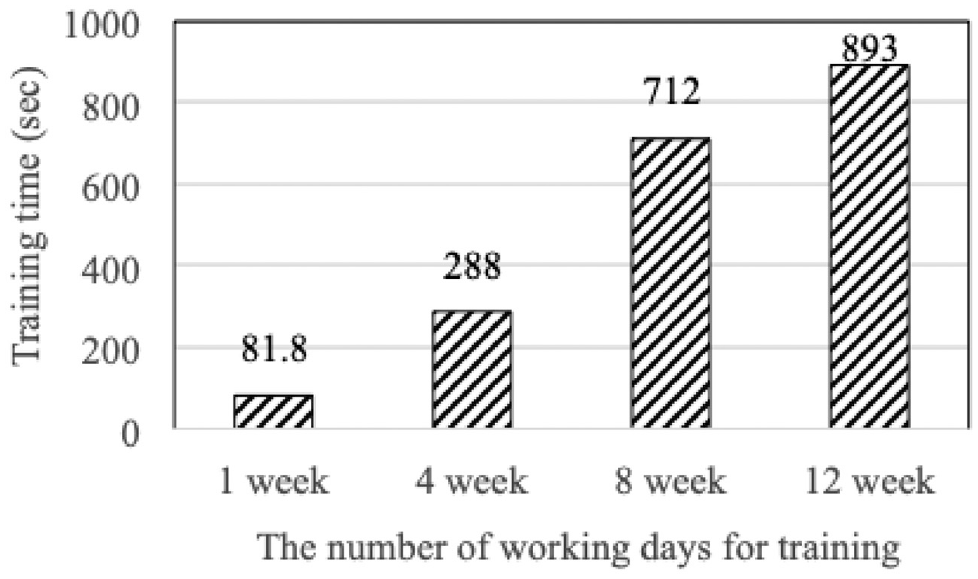

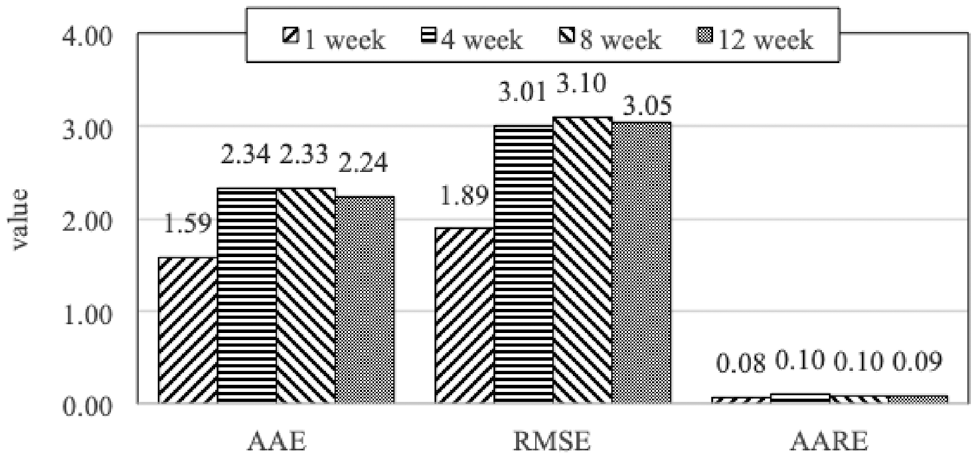

To show the effect of different lengths of training data, we evaluate the corresponding LSTM training time and prediction accuracy in terms of AAE, AARE, and RMSE based on the default hyperparameter setting (i.e., RLearn=0.01, NLayer=1, NUnit=2, ep=100). As shown in Figure 20, the 1-week scenario requires the shortest LSTM training time (around 81.8 s) because the size of the training data is only 5 days, which is the shortest among all the four scenarios. The time is only

The required training time given different lengths of training data.

On the other hand, from Figure 21, we can also see that the 1-week scenario outperforms the other three scenarios when it comes to prediction accuracy. Apparently, the 1-week scenario leads to the smallest value in AAE, AARE, and RMSE, implying that it leads to the best prediction accuracy among the four scenarios.

The prediction accuracy under different lengths of training data.

Based on the above results, we confirm that choosing 1-week traffic speed data as the training data size of LSTM not only saves time, but also achieves higher prediction accuracy because of less deviation in the traffic speed patterns. These two properties are essential since DistTune is designed to provide time-efficient and accurate traffic speed prediction.

Conclusion and Future Work

In this paper, we have introduced DistTune, a distributed scheme to achieve fine-grained, accurate, time-efficient, and adaptive traffic speed prediction for the increasing number of detectors deployed in a growing transportation network. DistTune automatically customizes LSTM models for detectors based on NMM, which was chosen over BOA based on our empirical comparison and evaluation. By allowing LSTM models to be shared between different detectors and employing parallel processing, DistTune successfully addresses the scalability issue, and enables fine-grained and efficient traffic speed prediction. Furthermore, DistTune keeps monitoring detectors and re-customizes their LSTM models when necessary to make sure that their prediction accuracy can always be achieved and guaranteed. Our extensive experiments based on traffic data collected by the Caltrans performance measurement system demonstrate the performance of DistTune and confirm that DistTune provides fine-grained, accurate, time-efficient, and adaptive traffic speed prediction for a growing transportation network.

In this paper, DistTune is implemented on a cluster consisting of only one master server and a set of worker nodes. Such a cluster might have the following risks or limitations. The master server is a single point of failure (SPOF), and it might crash or fail for diverse reasons. In addition, its computation resources might not be able to support the operation of DistTune if DistTune is employed in a large-scale transportation network. On the other hand, the number of worker nodes decides the execution performance of DistTune. In this paper, the number of worker nodes is fixed for simplicity. However, it should be dynamically adjusted over time according to the workload of DistTune.

Therefore, in our future work, we would like to further address the SPOF issue and suggest a highly scalable and elastic solution based on a cloud or a multi-cloud environment for DistTune. Furthermore, we plan to improve the performance of DistTune by considering proper scheduling approaches, such as Lee et al. ( 35 ) and Lin and Lee ( 36 ). In addition, we plan to investigate Early Stopping ( 37 ) and study its impact on the performance of DistTune. Furthermore, we would like to integrate DistTune with other novel techniques such as vehicle trajectory extraction ( 38 ) to provide better traffic managements and services for growing transportation networks.

Footnotes

Acknowledgements

The authors want to thank the anonymous reviewers for their reviews and valuable suggestions to this paper.

Author Contributions

The authors confirm contribution to the paper as follows: study conception and design: Ming-Chang Lee and Jia-Chun Lin; data collection: Ming-Chang Lee; analysis and interpretation of results: Ming-Chang Lee and Jia-Chun Lin; draft manuscript preparation: Ming-Chang Lee, Jia-Chun Lin, and Ernst Gunnar Gran. All authors reviewed the results and approved the final version of the manuscript.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the eX3 project—Experimental Infrastructure for Exploration of Exascale Computing, funded by the Research Council of Norway under contract 270053, and the scholarship under project number 80430060 supported by Norwegian University of Science and Technology.