Abstract

Meta-analyses are critical for synthesising research evidence, yet little evidence exists to confirm how closely published meta-analyses adhere to established methodological guidelines. Using a structured assessment of 100 meta-analyses published between 2023 and 2025 in top-tier journals, this article documents key features of contemporary practice, including data scale and structure, estimator and effect-size choices, approaches to detecting and adjusting for publication bias, methods for reporting and exploring heterogeneity, open science practices, and software usage. It reveals a substantial implementation gap between recommended methods and routine practice. Although most meta-analyses extract multiple effect sizes per primary study, fewer than 40% of those that acknowledge dependence employ multilevel or multivariate models. Correlation-based effect sizes dominate, but they rarely incorporate the recommended transformations or weighting strategies designed to avoid known algebraic distortions. Heterogeneity is extreme (median I² > 95%), yet it is often only partially reported or explored. Although publication bias is commonly tested, fewer than half of the studies report bias-adjusted estimates; they rely instead on low-powered diagnostic tools. The authors conclude by identifying inferential consequences that are particularly salient for ensuring the credibility and interpretability of meta-analytic evidence in management and marketing.

1. Introduction

Meta-analysis offers an essential tool for synthesising cumulative evidence in various fields, including management and marketing. Researchers and policymakers rely on meta-analyses to clarify theoretical debates, provide overall estimates of key phenomena, and explain why empirical findings vary across studies. Because meta-analyses often serve as authoritative summaries of entire research domains, the quality of the methods used to conduct them has direct consequences for the credibility of the cumulative knowledge available.

In management and marketing research, several features of the typical empirical environment create challenges for statistical inference in meta-analysis. Primary studies often report multiple effect sizes; effect sizes are frequently measured as correlations; heterogeneity across studies can be substantial; and multiple explanations for variation in results are plausible. These features make meta-analytic inference particularly sensitive to analytic choices. In practice, meta-analyses often treat multiple effect sizes from the same study as independent, use correlations without appropriate transformations, test for publication bias without incorporating it into the estimation, and only partially explore heterogeneity. Each of these choices can affect pooled estimates, measures of uncertainty, and the interpretation of average effects. Taken together, these considerations raise a broader question: How reliable are the cumulative conclusions produced by meta-analyses in management and marketing?

Abundant methodological guidance across multiple disciplines suggests how to conduct rigorous meta-analyses (Cheung and Vijayakumar, 2016; Field and Gillett, 2010; Havránek et al., 2020; Paul and Barari, 2022; Pigott and Polanin, 2020). These guidelines emphasise the need for careful choices regarding effect-size metrics and estimators, systematic examination of the extent and structure of heterogeneity, explicit treatment of dependence among effect sizes, and consideration of how selective reporting may distort results (Bartoš et al., 2025; Cheung and Vijayakumar, 2016; Higgins et al., 2013; Irsova et al., 2025; Pustejovsky and Tipton, 2018; Schmidt and Hunter, 2015; Van den Noortgate et al., 2013). The methodological foundations for conducting credible meta-analyses are now clearly articulated. Yet despite this abundance of methodological guidance, comparatively little evidence exists regarding whether researchers adopt these principles in practice. This gap raises two additional questions: Do contemporary meta-analyses in management and marketing actually adhere to these methodological recommendations, and to the extent that they do not, what are the implications for the credibility of the cumulative evidence available in these fields?

We address these questions by analysing 100 meta-analyses published between 2023 and 2025 in top-tier management and marketing journals, providing a contemporary snapshot of meta-analytic practices. By documenting current analytic practices and examining how routine methodological choices affect statistical inferences, this study provides a basis for assessing the credibility of cumulative evidence in these fields. Notably, the findings indicate that analytic practice often diverges from methodological guidance in ways that have important inferential consequences.

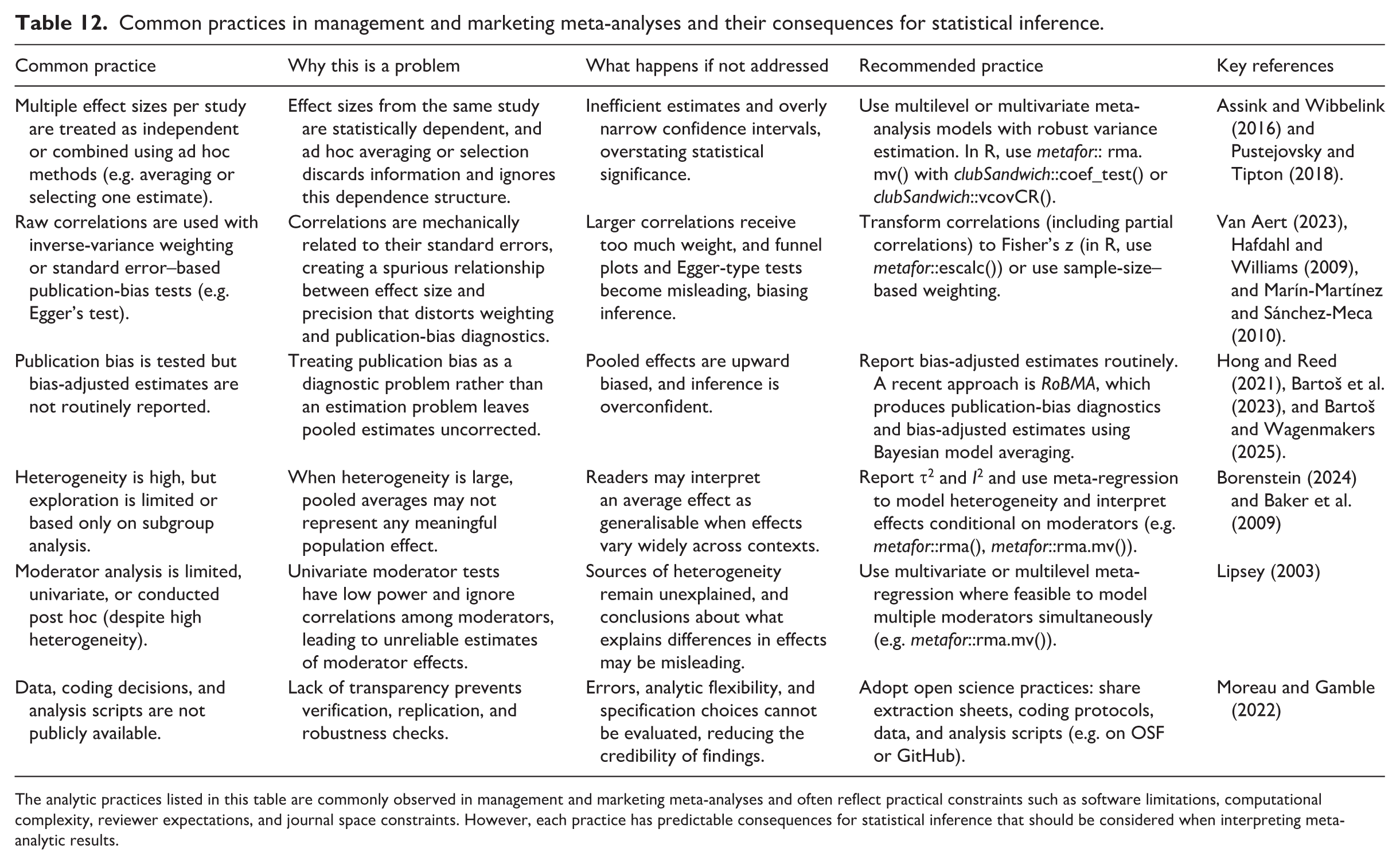

Rather than treating these patterns as a checklist of best-practice violations, we focus on their inferential consequences. The aim is not to propose another set of methodological guidelines but to identify which departures from recommended practice materially affect meta-analytic inferences and, in turn, the credibility of cumulative evidence in these fields. Accordingly, this study makes four main contributions. First, we provide a contemporary descriptive assessment of how meta-analyses in management and marketing are conducted. Second, we identify systematic gaps between routine analytic practice and generally accepted methodological guidelines. Third, we show how these gaps translate into distinct inferential consequences, including inefficient estimates, biased pooled effects, misspecified uncertainty, and limited interpretability when heterogeneity is extreme. Fourth, we synthesise these findings into a practical framework that links common meta-analytic practices to their inferential consequences and identifies which methodological choices are most consequential for statistical inference (Table 12). This framework provides applied researchers with guidance on how to prioritise methodological decisions when conducting and evaluating meta-analyses in management and marketing. We also trace how analytic choices interact with the empirical conditions typical of this literature to shape statistical inferences.

The remainder of this article is structured as follows. Section 2 describes the process used to identify and select the 100 meta-analyses that constitute our sample. Section 3 presents summary statistics describing how meta-analyses are currently conducted in management and marketing. Section 4 identifies gaps between these practices and generally accepted methodological guidelines. Section 5 examines the inferential consequences of these meta-analytic practices. Section 6 concludes this article.

2. Literature search

We identify meta-analyses using Scopus, one of the largest bibliographic databases and a widely used source for systematic literature searches. Scopus provides broad coverage of published research across management and related disciplines and offers filtering tools that allow users to restrict search results by subject area and keyword. Its coverage of business and management journals is comparable to that of Web of Science, but it offers more granular subject classifications. These features make Scopus well-suited for systematically identifying meta-analytic studies in management and marketing.

We query Scopus by searching the title, abstract, and keywords fields for the term “meta-analysis,” and restrict the results to publications from 2023 to 2025. Using subject-area filters, we retain only articles in Business, Management, and Accounting, yielding 874 records. Next, we restrict the pool to articles published in A-level or higher journals, as defined by the FT50 and ABDC journal quality lists for management and marketing. Because these top-tier journals exert substantial influence over methodological norms, they provide a useful lens for evaluating contemporary meta-analytic practices. After applying this restriction, 208 eligible meta-analyses remain. To avoid overrepresentation of any single outlet, we impose a cap of 10 meta-analyses per journal. From this pool, we retain 100 meta-analyses, approximately 48% of the 208 eligible studies.¹ This sample offers a large, broadly representative snapshot of current practice while remaining within the practical limits of in-depth coding. Studies are retrieved in order of Scopus relevance ranking, so that the final sample reflects prominent, recent meta-analytic work in leading management and marketing journals. Our approach aligns with Wu et al.’s (2026) analysis of meta-analytic practices across 10 disciplines (e.g. medicine, engineering, and the physical and social sciences). All coding decisions, extracted variables, and analytic scripts are documented and publicly available through the OSF repository cited in the Data Availability Statement.
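The capping and selection step can be sketched as follows. This is a minimal sketch of one reading of the procedure, not the authors' own code: each candidate record is assumed to be a dictionary with the hypothetical keys `journal` and `relevance_rank` (lower rank means more relevant). Walking the records in relevance order and skipping any journal that has already reached its cap yields the same sample as first keeping each journal's 10 most relevant records and then taking the 100 most relevant records from that capped pool.

```python
from collections import Counter


def select_sample(records, per_journal_cap=10, target=100):
    """Select up to `target` records in relevance order, with at most
    `per_journal_cap` records from any single journal.

    `records` is a list of dicts with the hypothetical keys
    "journal" and "relevance_rank" (lower rank = more relevant).
    """
    kept = []
    per_journal = Counter()
    for rec in sorted(records, key=lambda r: r["relevance_rank"]):
        if per_journal[rec["journal"]] >= per_journal_cap:
            continue  # this journal has already reached its cap
        kept.append(rec)
        per_journal[rec["journal"]] += 1
        if len(kept) == target:
            break  # sample is complete
    return kept


# Hypothetical pool: 15 journals with 14 candidate records each (210 records).
pool = [
    {"journal": f"J{j}", "relevance_rank": 14 * j + i}
    for j in range(15)
    for i in range(14)
]
sample = select_sample(pool)
print(len(sample), max(Counter(r["journal"] for r in sample).values()))  # prints: 100 10
```

If the capped pool holds fewer than `target` records, the function simply returns all of them.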

3. Characterising current meta-analytic practices

With this descriptive section, we seek to document how meta-analyses in management and marketing are currently conducted, without assessing whether these practices conform to methodological guidelines or considering their inferential consequences. We interpret these patterns in Sections 4 and 5.

3.1. Number of studies and estimates

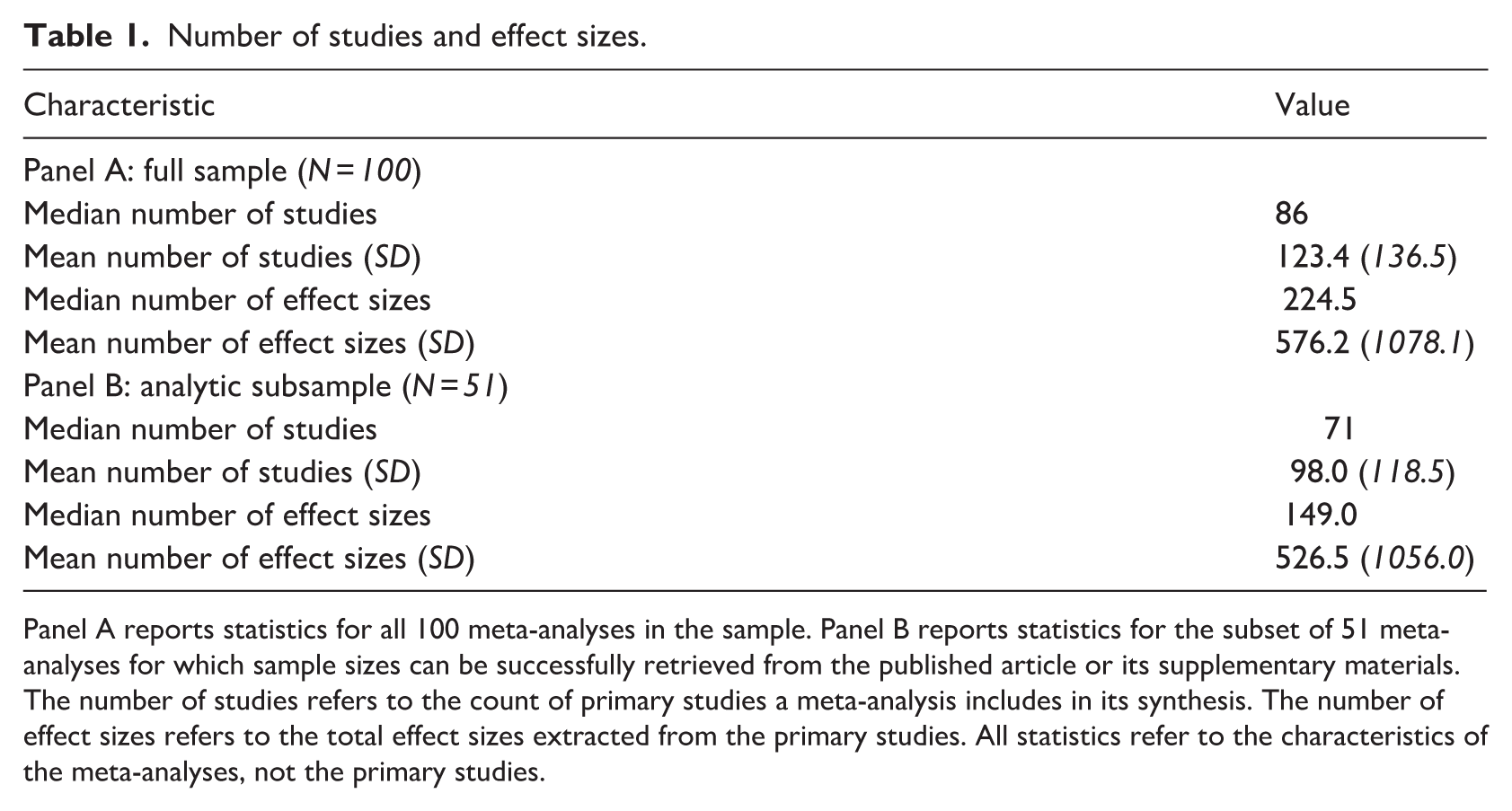

Table 1 summarises the distribution of studies and effect sizes across our sample. Considering all 100 meta-analyses, Panel A shows that, at the median, a meta-analysis includes 86 studies (mean = 123.4; SD = 136.5), but a substantially larger number of extracted effect sizes, with a median of 224.5 and a mean of 576.2 (SD = 1078.1). These values indicate considerable variation in data set size across meta-analyses.

Table 1. Number of studies and effect sizes.

Panel A reports statistics for all 100 meta-analyses in the sample. Panel B reports statistics for the subset of 51 meta-analyses for which analytic sample sizes can be successfully retrieved from the published article or its supplementary materials. The number of studies refers to the count of primary studies a meta-analysis includes in its synthesis. The number of effect sizes refers to the total effect sizes extracted from the primary studies. All statistics refer to the characteristics of the meta-analyses, not the primary studies.

Many meta-analyses initially report a larger collection of studies and effect sizes, but then base their substantive analyses on restricted subsamples. However, the sample sizes for these analyses – that is, the samples included in the statistical models – are not always explicitly reported. This distinction matters because our assessment characterises the focal meta-analyses according to the samples actually used in their statistical models. We use the term “analytic sample size” to denote the number of studies and effect sizes included in the statistical models reported by a meta-analysis. If these quantities are not explicitly stated, we reconstruct them by consulting supplementary materials, cross-checking tables and figures, and, where necessary, inferring them from descriptions in the text. When none of these sources proves sufficient, we default to the overall number of studies and effect sizes. This procedure reflects our effort to document the scale of each meta-analysis as consistently as possible.

Panel B in Table 1 contains the descriptive statistics for the subset of meta-analyses (N = 51) for which we can recover the analytic sample sizes. Even within this subset, the variation in the number of studies and effect sizes is substantial. Specifically, the median meta-analysis includes 71 studies and 526.5 effect sizes, with standard deviations of 118.5 and 1056, respectively.

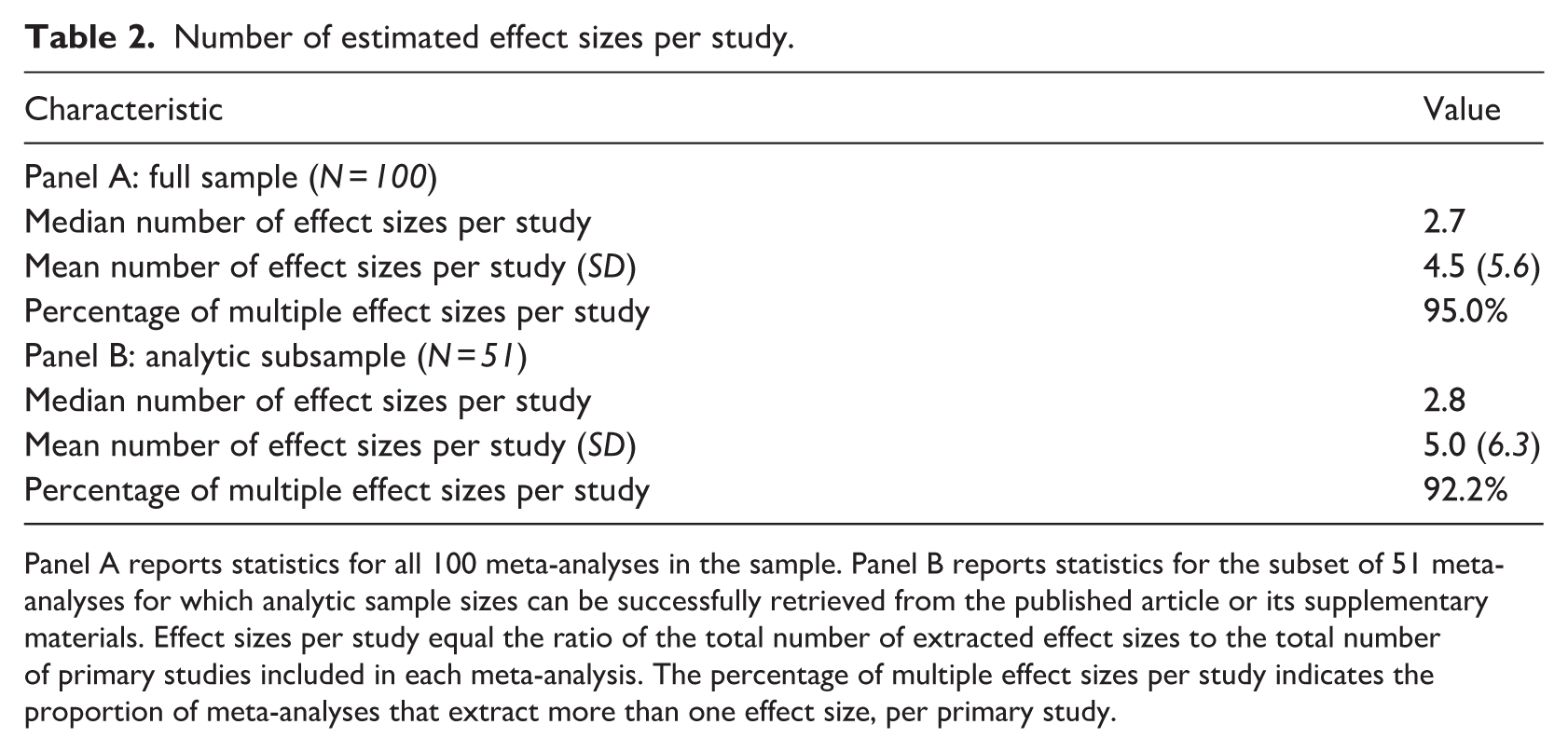

The notable gaps between the number of studies and the number of effect sizes indicate that most meta-analyses extract multiple estimated effects from individual primary studies. As Table 2 reveals, 95% of meta-analyses include more than one effect size per study, with a median of 2.7 effect sizes per study (mean = 4.5; SD = 5.6). The reconstructed analytic samples yield comparable results: a median of 2.8 effect sizes per study, with 92% of meta-analyses extracting more than one estimate per study. Extracting multiple estimates from a single primary study creates statistical dependence because the estimates often rely on the same underlying sample or measure related constructs. Such a nested structure is a common feature of meta-analytic data sets in management and marketing.

Table 2. Number of estimated effect sizes per study.

Panel A reports statistics for all 100 meta-analyses in the sample. Panel B reports statistics for the subset of 51 meta-analyses for which analytic sample sizes can be successfully retrieved from the published article or its supplementary materials. Effect sizes per study equal the ratio of the total number of extracted effect sizes to the total number of primary studies included in each meta-analysis. The percentage with multiple effect sizes per study indicates the proportion of meta-analyses that extract more than one effect size per primary study.
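The ratio defined in the Table 2 note can be computed as in the minimal sketch below. The input format (a list of hypothetical `(n_studies, n_effect_sizes)` pairs) and the example values are illustrative only and are not taken from the sample. The sketch also makes explicit that the reported median is the median of the per-meta-analysis ratios, which need not equal the ratio of the median counts in Table 1.

```python
from statistics import median


def effect_sizes_per_study(meta_analyses):
    """Summarise effect sizes per study across meta-analyses.

    `meta_analyses` is a list of (n_studies, n_effect_sizes) pairs.
    Returns the per-meta-analysis ratios, their median, and the share of
    meta-analyses that extract more than one effect size per study.
    """
    ratios = [n_es / n_studies for n_studies, n_es in meta_analyses]
    share_multiple = sum(r > 1 for r in ratios) / len(ratios)
    return ratios, median(ratios), share_multiple


# Hypothetical inputs, not values from the sample.
ratios, med, share = effect_sizes_per_study([(86, 224), (40, 40), (10, 45)])
print(round(med, 2), round(share, 2))  # prints: 2.6 0.67
```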

In summary, the number of studies and effect sizes varies widely across meta-analyses, such that a benchmark using a “typical” meta-analysis is uninformative. Furthermore, the routine extraction of multiple estimates from the same primary study generates a nested data structure, which must be addressed in meta-analytic modelling. We examine how such dependence has been handled in practice in Section 4.1.

3.2. Choice of estimators

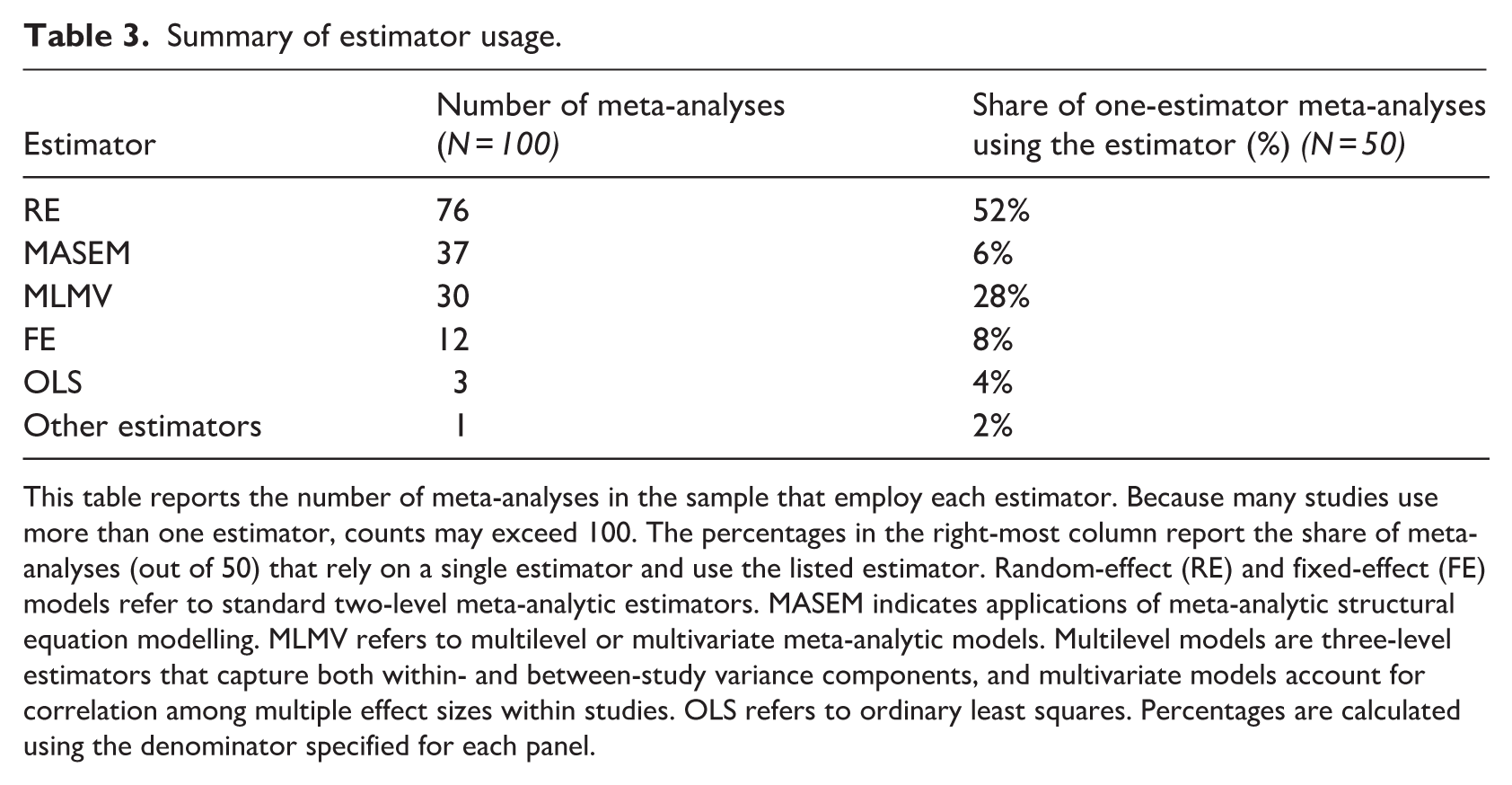

Table 3 summarises the estimators used across the meta-analyses in our sample. Standard textbook treatments present fixed-effect (FE) and random-effect (RE) models as two canonical estimators, usually under the assumption that every primary study contributes a single effect estimate. FE models assume that all studies estimate a common true effect, whereas RE models allow true effects to vary across studies. Our findings reveal that authors overwhelmingly prefer RE models: 76% of our focal meta-analyses apply an RE estimator. Among studies that rely on a single estimator, 52% use RE exclusively. FE models, in contrast, appear only 12 times in total, despite receiving comparable attention in methodological recommendations. They rarely function as the lone estimator in a meta-analysis (8%).

Table 3. Summary of estimator usage.

This table reports the number of meta-analyses in the sample that employ each estimator. Because many studies use more than one estimator, counts may exceed 100. The percentages in the right-most column report the share of meta-analyses (out of 50) that rely on a single estimator and use the listed estimator. Random-effect (RE) and fixed-effect (FE) models refer to standard two-level meta-analytic estimators. MASEM indicates applications of meta-analytic structural equation modelling. MLMV refers to multilevel or multivariate meta-analytic models. Multilevel models are three-level estimators that capture both within- and between-study variance components, and multivariate models account for correlation among multiple effect sizes within studies. OLS refers to ordinary least squares. Percentages are calculated using the denominator specified for each panel.
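To make the FE/RE distinction concrete, the sketch below pools effect sizes `y` with sampling variances `v` using inverse-variance weights. The FE estimate weights each estimate by 1/v; the RE estimate first estimates the between-study variance τ² and adds it to every sampling variance before weighting. For brevity we use the DerSimonian-Laird moment estimator of τ²; it is only one of several estimators (REML, for instance, is the default in R's metafor package), and the function names and toy inputs are our own.

```python
import math


def fixed_effect(y, v):
    """Inverse-variance fixed-effect (FE) pooled estimate and its standard error."""
    w = [1 / vi for vi in v]
    mu = sum(wi * yi for wi, yi in zip(w, y)) / sum(w)
    return mu, math.sqrt(1 / sum(w))


def random_effects_dl(y, v):
    """Random-effect (RE) pooled estimate using the DerSimonian-Laird tau^2."""
    k = len(y)
    w = [1 / vi for vi in v]
    mu_fe, _ = fixed_effect(y, v)
    # Cochran's Q: weighted squared deviations from the FE estimate.
    q = sum(wi * (yi - mu_fe) ** 2 for wi, yi in zip(w, y))
    c = sum(w) - sum(wi ** 2 for wi in w) / sum(w)
    tau2 = max(0.0, (q - (k - 1)) / c)  # truncated at zero
    # RE weights add tau^2 to each sampling variance.
    w_re = [1 / (vi + tau2) for vi in v]
    mu = sum(wi * yi for wi, yi in zip(w_re, y)) / sum(w_re)
    return mu, math.sqrt(1 / sum(w_re)), tau2


y = [0.1, 0.3, 0.5]
v = [0.01, 0.01, 0.01]
print(fixed_effect(y, v))
print(random_effects_dl(y, v))
```

With these toy inputs both estimators give a pooled mean of 0.3, but τ² is about 0.03 and the RE standard error (about 0.115) is twice the FE standard error (about 0.058), because the RE model attributes part of the dispersion to true differences between studies.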

This heavy reliance on RE models is consistent with the heterogeneity we observe in constructs, measurement strategies, and empirical contexts, because RE models allow true effects to vary across studies. Although RE models relax the homogeneity restriction imposed by FE models, they maintain assumptions regarding independence among effect sizes and the relationship between effect sizes and their standard errors. These assumptions motivate the use of alternative estimators, such as multilevel models, robust variance estimators, and meta-analytic structural equation modelling (MASEM), each of which relaxes constraints imposed by conventional two-level frameworks.

Among these alternative estimators, MASEM appears especially frequently in our sample: 37 meta-analyses employ it.² It integrates meta-analysis with structural equation modelling (SEM) to determine how multiple relationships fit within a broader theoretical process, rather than focusing on a single association in isolation (Cheung, 2014). Accordingly, MASEM is well-suited to process-oriented theories that feature mediating mechanisms and interrelated constructs, as are common in management and marketing research (Bergh et al., 2017). However, MASEM rarely functions as a stand-alone estimator (6%). Instead, it almost always appears in studies that report results from multiple estimators, suggesting that researchers use MASEM alongside, rather than in place of, more conventional analyses (e.g. RE models). Researchers appear to rely on FE or RE frameworks to estimate effects and assess publication-bias risks and then apply MASEM to explore the structure of those relationships within a broader theoretical framework. Because MASEM operates on pooled meta-analytic inputs, it cannot address publication bias and inherits any selection distortions in the underlying estimates.

We also identify multilevel and multivariate models (MLMVs)³ in 30 meta-analyses, driven almost entirely by multilevel applications. Unlike conventional FE or RE models, which treat effect sizes as independent, MLMV approaches are designed to accommodate the dependence that arises when multiple estimates come from the same primary study. Specifically, multilevel models apply when studies report multiple estimates of the same underlying effect (e.g. across alternative specifications or robustness checks), and multivariate models are used when studies report multiple, related outcomes or multiple measures of the same construct. In our sample, 29 meta-analyses feature a multilevel model; only one uses a fully multivariate model. Both approaches are designed to yield more efficient and reliable estimates than FE or RE models when the assumption of independence is violated (Van den Noortgate et al., 2013, 2015). Among meta-analyses that rely on a single estimator, MLMVs – and particularly multilevel models – form a non-trivial minority, appearing in 28% of studies. This pattern suggests that a substantial share of authors treat multilevel models as direct alternatives to conventional two-level FE or RE estimators when the data feature dependence among effect sizes.

3.3. Choice of effect sizes

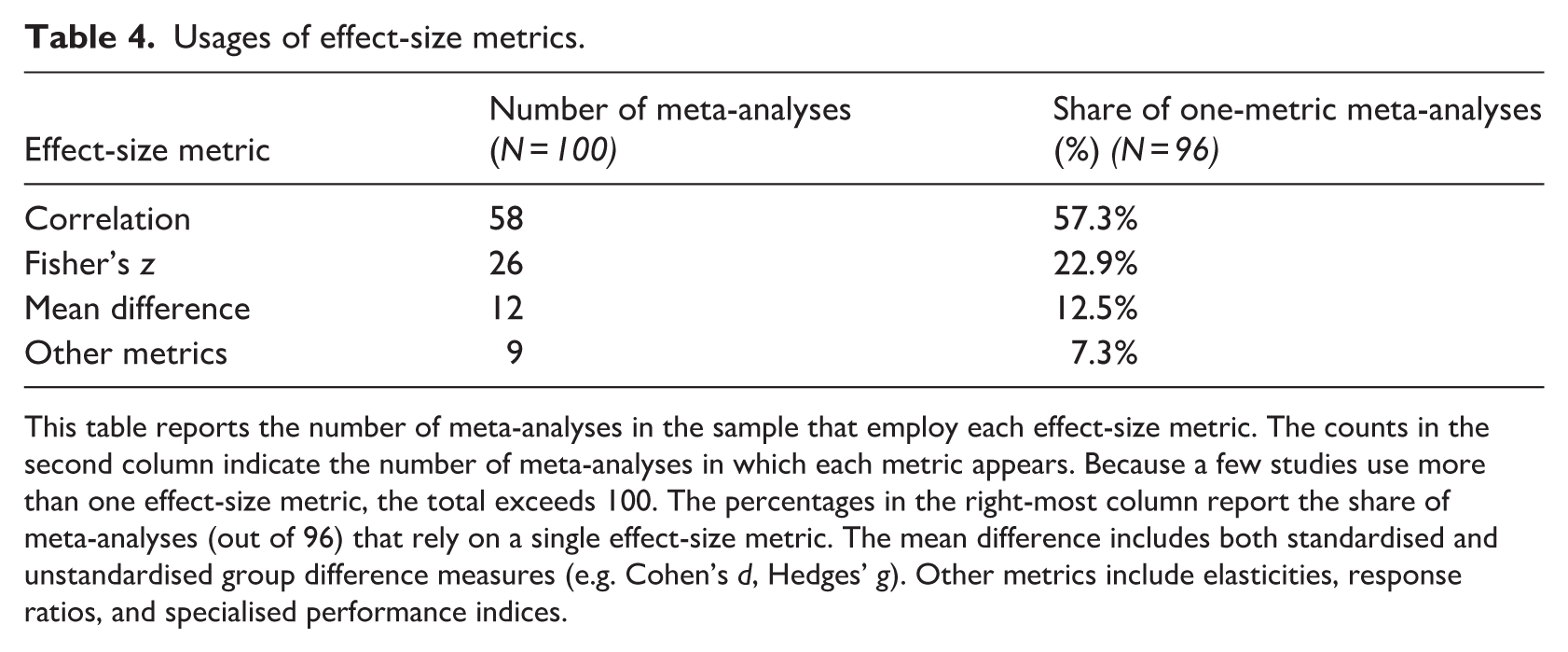

The distribution of effect-size metrics in Table 4 reflects the tendency of meta-analyses in our sample to employ more than one metric, such that total usage sums to more than 100. Correlations are by far the most commonly used effect-size metric, appearing in 58 meta-analyses. Approximately one-quarter of studies use Fisher’s z transformations. Far fewer use mean differences or other effect-size metrics, such as elasticities (12) or response ratios (9). Among meta-analyses that rely on a single metric, correlations account for approximately six of ten cases (57%).

Table 4. Usage of effect-size metrics.

This table reports the number of meta-analyses in the sample that employ each effect-size metric. The counts in the second column indicate the number of meta-analyses in which each metric appears. Because a few studies use more than one effect-size metric, the total exceeds 100. The percentages in the right-most column report the share of meta-analyses (out of 96) that rely on a single effect-size metric. The mean difference includes both standardised and unstandardised group difference measures (e.g. Cohen’s d, Hedges’ g). Other metrics include elasticities, response ratios, and specialised performance indices.

The prominence of correlations reflects the empirical orientation of management and marketing research; scholars frequently examine associations among constructs using cross-sectional survey data. Correlation matrices are routinely reported in primary studies, making correlation-based metrics a convenient and readily available choice for meta-analysis. We examine the implications of such reliance for weighting, heterogeneity, and publication-bias assessments in Section 4.2.
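The sketch below previews the weighting issue taken up in Section 4.2 and the Fisher's z remedy. It assumes one commonly used large-sample approximation for the standard error of r, (1 − r²)/√(n − 1); the exact expression varies across sources. Under that approximation, two correlations from samples of the same size receive different standard errors, whereas after the transformation z = atanh(r) the standard error 1/√(n − 3) depends on n alone. Pooled z values are back-transformed to the correlation metric with tanh. Function names are our own.

```python
import math


def se_r(r, n):
    """Approximate standard error of Pearson's r (assumed form: (1 - r^2) / sqrt(n - 1))."""
    return (1 - r ** 2) / math.sqrt(n - 1)


def fisher_z(r):
    """Fisher's z transformation of a correlation."""
    return math.atanh(r)


def se_z(n):
    """Standard error of Fisher's z; depends only on the sample size."""
    return 1 / math.sqrt(n - 3)


def z_to_r(z):
    """Back-transform a (pooled) Fisher's z to the correlation metric."""
    return math.tanh(z)


n = 100
for r in (0.1, 0.5):
    print(f"r={r}: SE(r)={se_r(r, n):.4f}, z={fisher_z(r):.4f}, SE(z)={se_z(n):.4f}")
```

With n = 100, SE(r) falls from about 0.0995 to about 0.0754 as r rises from 0.1 to 0.5, so under inverse-variance weighting the larger correlation receives roughly 74% more weight despite the identical sample size; SE(z) is about 0.1015 in both cases.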

3.4. Reporting heterogeneity

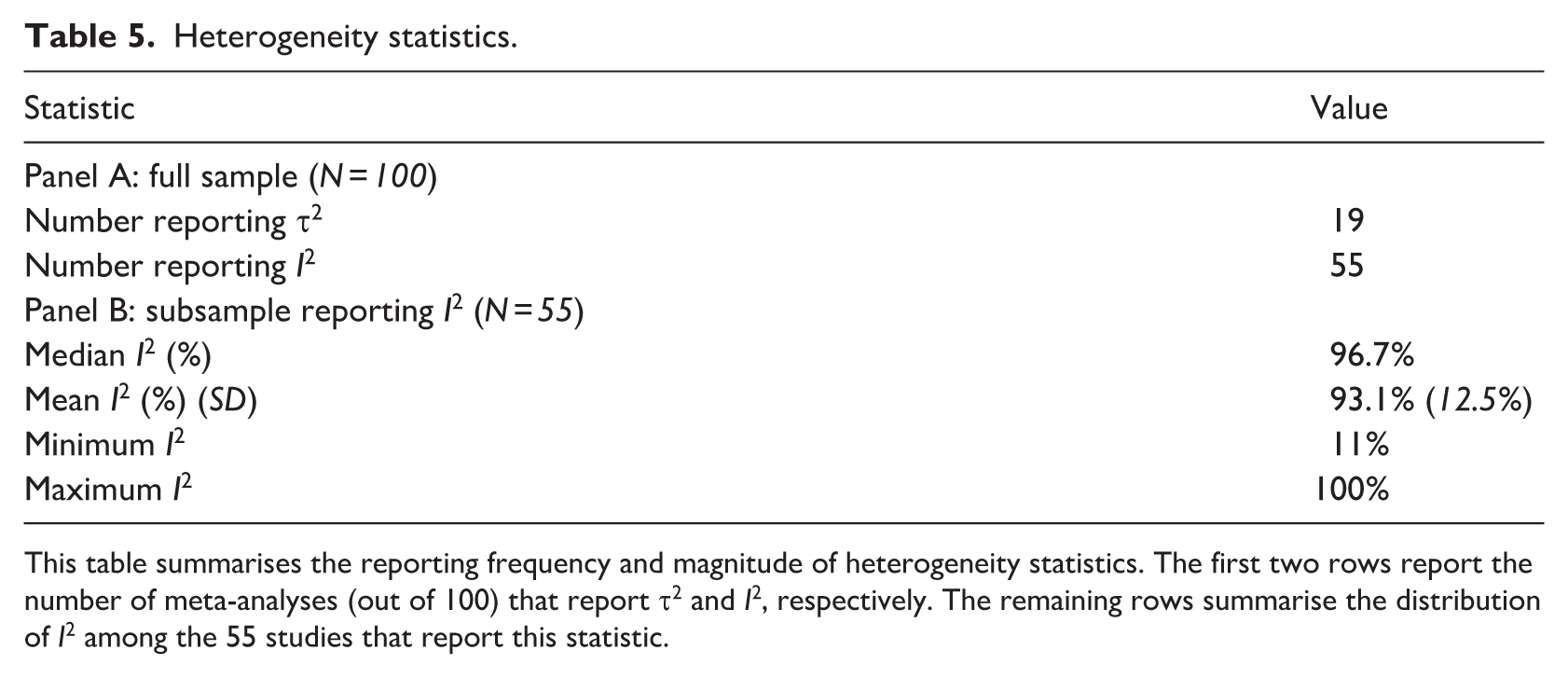

Heterogeneity in true effects is a central feature of meta-analytic data and is routinely summarised using τ² (tau-squared) or I² (I-squared). The τ² statistic measures the absolute magnitude of between-study variance in the squared units of the effect size, whereas I² expresses the proportion of observed variation attributable to true differences in effects rather than sampling error. Because I² is a relative measure, its value depends on the magnitude of sampling error; the same level of between-study variance can produce a low I² if sampling error is large and a high I² if it is small. For this reason, methodological guidelines commonly recommend reporting both τ² and I² to provide a more complete description of heterogeneity (Borenstein et al., 2017). Table 5 summarises how our sample of meta-analyses reports heterogeneity. Of the 100 studies, 19 report τ², and 55 report I². Among the latter, reported levels are high: the median I² is 96.7%, and the values range from 11% to 100%.⁴

Table 5. Heterogeneity statistics.

This table summarises the reporting frequency and magnitude of heterogeneity statistics. The first two rows report the number of meta-analyses (out of 100) that report τ² and I², respectively. The remaining rows summarise the distribution of I² among the 55 studies that report this statistic.

These summary statistics reflect substantial variation in the estimated effects across the studies that report heterogeneity measures. The I² values indicate that much of the observed dispersion can be attributed to between-study variation rather than sampling error. However, because I² is a relative measure, its magnitude must be interpreted in conjunction with other features of the data, including study precision and sample size. The evidence in Table 5 thus documents both uneven reporting of heterogeneity statistics and very high levels of heterogeneity in studies that report I². In Sections 4.3 and 5.5, we detail how these patterns relate to recommended reporting practices and how they affect estimation, interpretation, and inferences.
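The dependence of I² on study precision can be made explicit with the relation I² = τ²/(τ² + s²), where s² is a "typical" within-study sampling variance computed from the inverse-variance weights. When τ² is the DerSimonian-Laird estimate, this expression equals the familiar (Q − df)/Q. The sketch below, with illustrative values only and function names of our own, holds τ² fixed and varies only the precision of the studies.

```python
def typical_within_variance(v):
    """'Typical' sampling variance s^2 = (k - 1) * sum(w) / (sum(w)^2 - sum(w^2)), w = 1/v."""
    w = [1 / vi for vi in v]
    k = len(w)
    return (k - 1) * sum(w) / (sum(w) ** 2 - sum(wi ** 2 for wi in w))


def i_squared(tau2, v):
    """Share of total variance attributed to between-study heterogeneity."""
    s2 = typical_within_variance(v)
    return tau2 / (tau2 + s2)


tau2 = 0.01  # same between-study variance in both scenarios
small_studies = [0.04] * 20   # imprecise estimates (large sampling variance)
large_studies = [0.001] * 20  # precise estimates (small sampling variance)
print(f"I2 with imprecise studies: {i_squared(tau2, small_studies):.1%}")
print(f"I2 with precise studies:   {i_squared(tau2, large_studies):.1%}")
```

The same τ² = 0.01 yields I² = 20% when studies are imprecise and roughly 91% when they are precise, which is why a very high I² on its own says little about the absolute size of between-study variation.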

3.5. Detecting publication bias

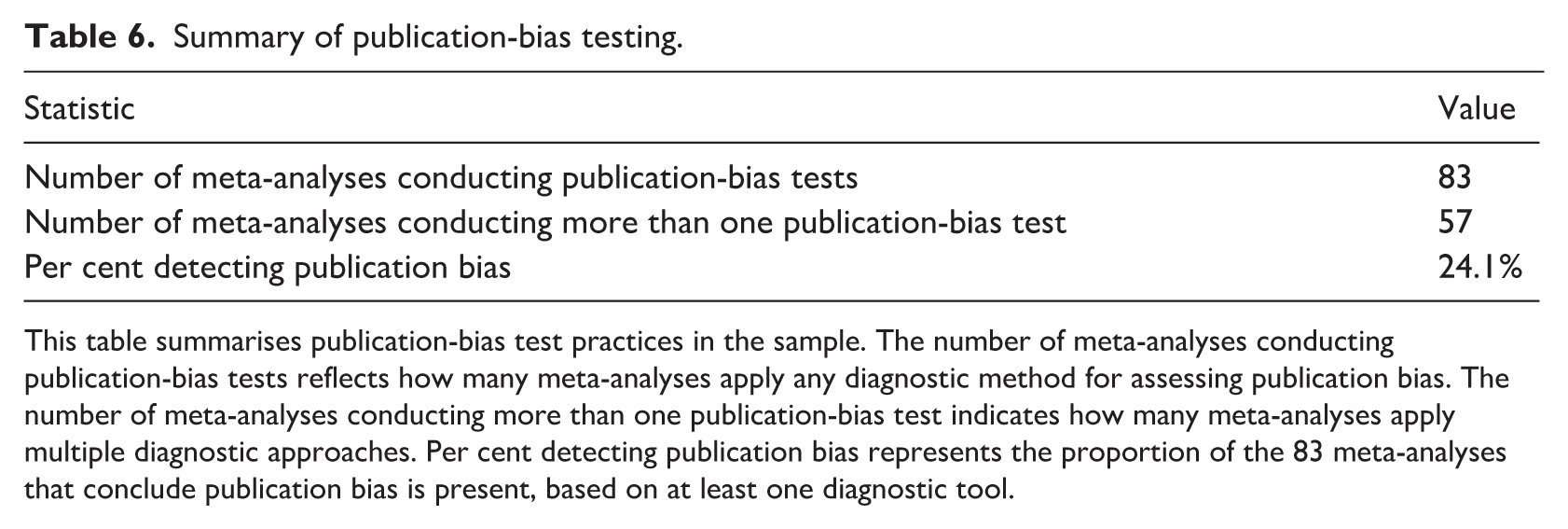

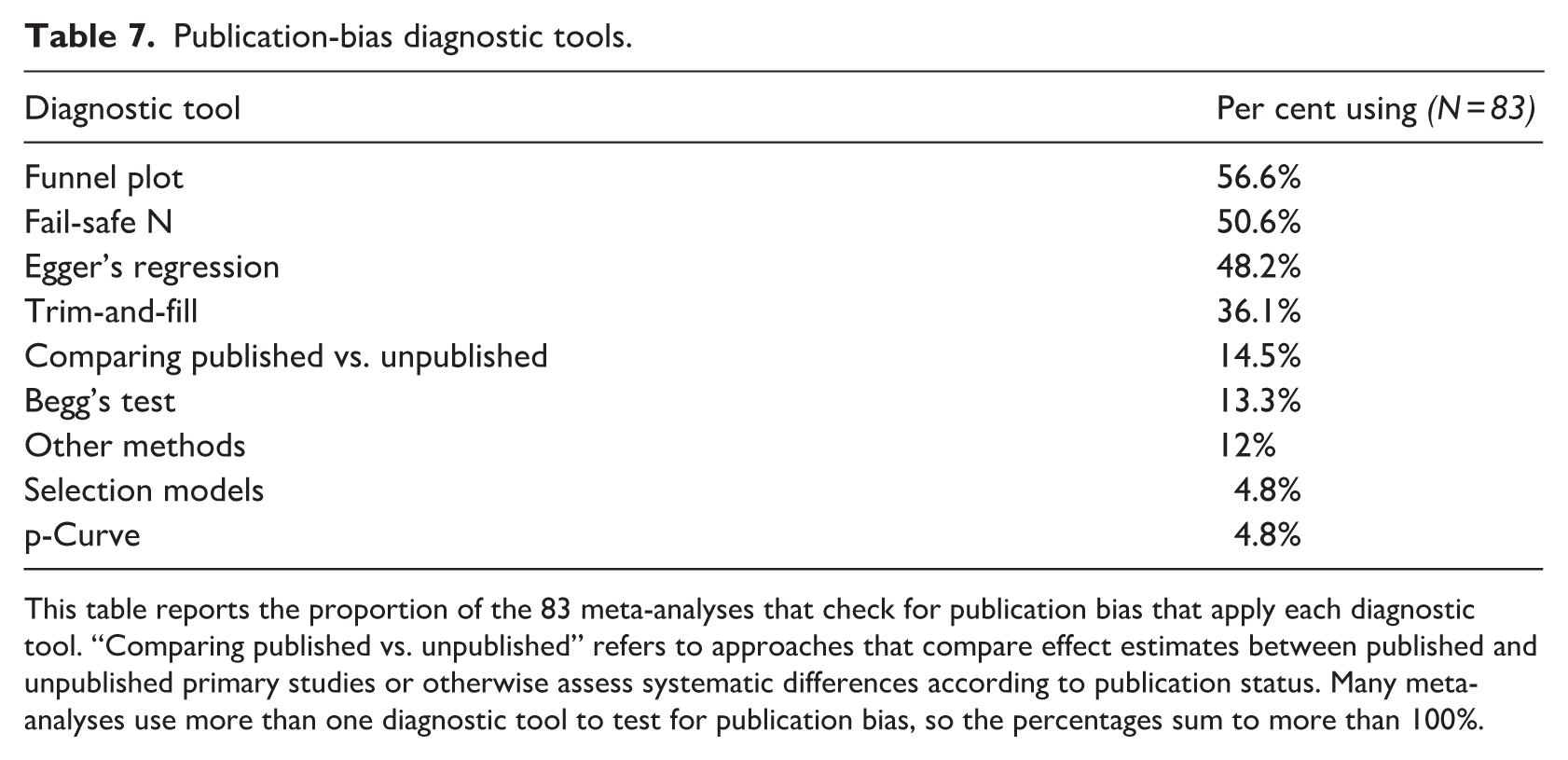

Systematic differences can arise between published and unpublished research when the likelihood of publication depends on the direction, magnitude, or statistical significance of the reported results. In meta-analyses, selection processes can influence the distribution of observed effect sizes and, thus, the properties of pooled estimates. Table 6 summarises publication-bias testing practices in our sample. Of the 100 studies, 83 conduct at least one diagnostic test for publication bias, and 57 apply more than one. Among studies that test for publication bias, 24% conclude that publication bias is present according to at least one diagnostic test. As shown in Table 7, funnel plots are the most frequently employed method (57%), followed by Fail-safe N (51%), Egger’s regression test (48%), and trim-and-fill methods (36%). Less frequently used approaches include comparisons of published and unpublished studies (15%), Begg’s rank correlation test (13%), selection models (5%), and p-curve analysis (5%).

Table 6. Summary of publication-bias testing.

This table summarises publication-bias testing practices in the sample. The number of meta-analyses conducting publication-bias tests reflects how many meta-analyses apply any diagnostic method for assessing publication bias. The number of meta-analyses conducting more than one publication-bias test indicates how many meta-analyses apply multiple diagnostic approaches. The percentage detecting publication bias represents the proportion of the 83 meta-analyses that conclude, based on at least one diagnostic tool, that publication bias is present.

Table 7. Publication-bias diagnostic tools.

This table reports the proportion of the 83 meta-analyses that check for publication bias that apply each diagnostic tool. “Comparing published vs. unpublished” refers to approaches that compare effect estimates between published and unpublished primary studies or otherwise assess systematic differences according to publication status. Many meta-analyses use more than one diagnostic tool to test for publication bias, so the percentages sum to more than 100%.

These results document substantial variation in both the frequency and the choice of publication-bias diagnostics across meta-analyses in management and marketing. We consider the interpretations of these diagnostics, their performance under typical empirical conditions, and the extent to which publication-bias assessments are incorporated into estimation results in Sections 4.4 and 5.4.
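Two of the diagnostics in Table 7 can be sketched in a few lines, as a minimal illustration rather than a substitute for established implementations. Egger's regression test regresses each standardised effect y/SE on its precision 1/SE by ordinary least squares: an intercept far from zero signals funnel-plot asymmetry, and the slope is the effect extrapolated to a perfectly precise study (the PET estimate, one simple bias-adjusted estimate). Rosenthal's fail-safe N counts how many additional null results would make the combined one-tailed test non-significant at α = .05. The data are hypothetical and constructed so that less precise studies report larger effects; the sketch returns only the intercept's t statistic (to be compared with a t distribution on k − 2 degrees of freedom) and omits p-values. Function names are our own.

```python
import math


def egger_test(y, se):
    """Egger's regression: OLS of y/se on 1/se.

    Returns (intercept, t statistic for the intercept, slope). The slope is
    the PET-style estimate of the effect for a study with se -> 0.
    """
    k = len(y)
    t = [yi / si for yi, si in zip(y, se)]  # standardised effects
    p = [1 / si for si in se]               # precisions
    p_bar, t_bar = sum(p) / k, sum(t) / k
    sxx = sum((pi - p_bar) ** 2 for pi in p)
    sxy = sum((pi - p_bar) * (ti - t_bar) for pi, ti in zip(p, t))
    slope = sxy / sxx
    intercept = t_bar - slope * p_bar
    resid = [ti - intercept - slope * pi for pi, ti in zip(p, t)]
    sigma2 = sum(r ** 2 for r in resid) / (k - 2)  # residual variance, k - 2 df
    se_intercept = math.sqrt(sigma2 * (1 / k + p_bar ** 2 / sxx))
    return intercept, intercept / se_intercept, slope


def fail_safe_n(y, se, z_alpha=1.645):
    """Rosenthal's fail-safe N for a one-tailed test at alpha = .05."""
    z_sum = sum(yi / si for yi, si in zip(y, se))
    return max(0.0, (z_sum / z_alpha) ** 2 - len(y))


# Hypothetical data: smaller (less precise) studies report larger effects.
se = [0.05, 0.10, 0.15, 0.20, 0.25]
y = [0.26, 0.29, 0.35, 0.41, 0.44]
intercept, t_stat, pet = egger_test(y, se)
print(f"Egger intercept={intercept:.2f} (t={t_stat:.1f}, df={len(y) - 2}), PET={pet:.3f}")
print(f"Fail-safe N = {fail_safe_n(y, se):.0f}")
```

Here the PET estimate (about 0.21) sits well below every observed effect, while the fail-safe N (about 70) suggests the result is robust; the two diagnostics answer different questions. With only five studies Egger's test generally has very little power; the intercept is clearly non-zero here only because the toy data were built with a strong small-study pattern.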

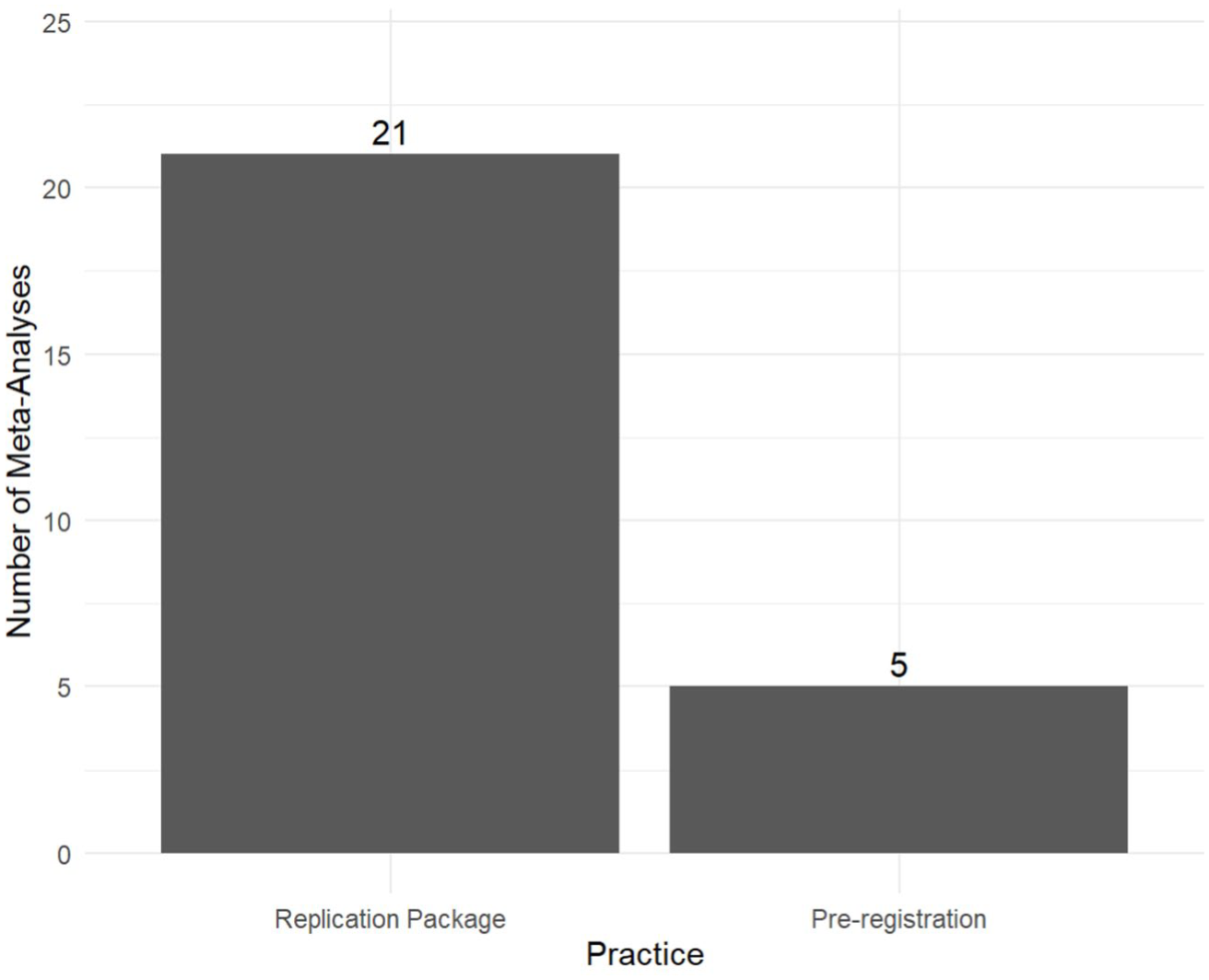

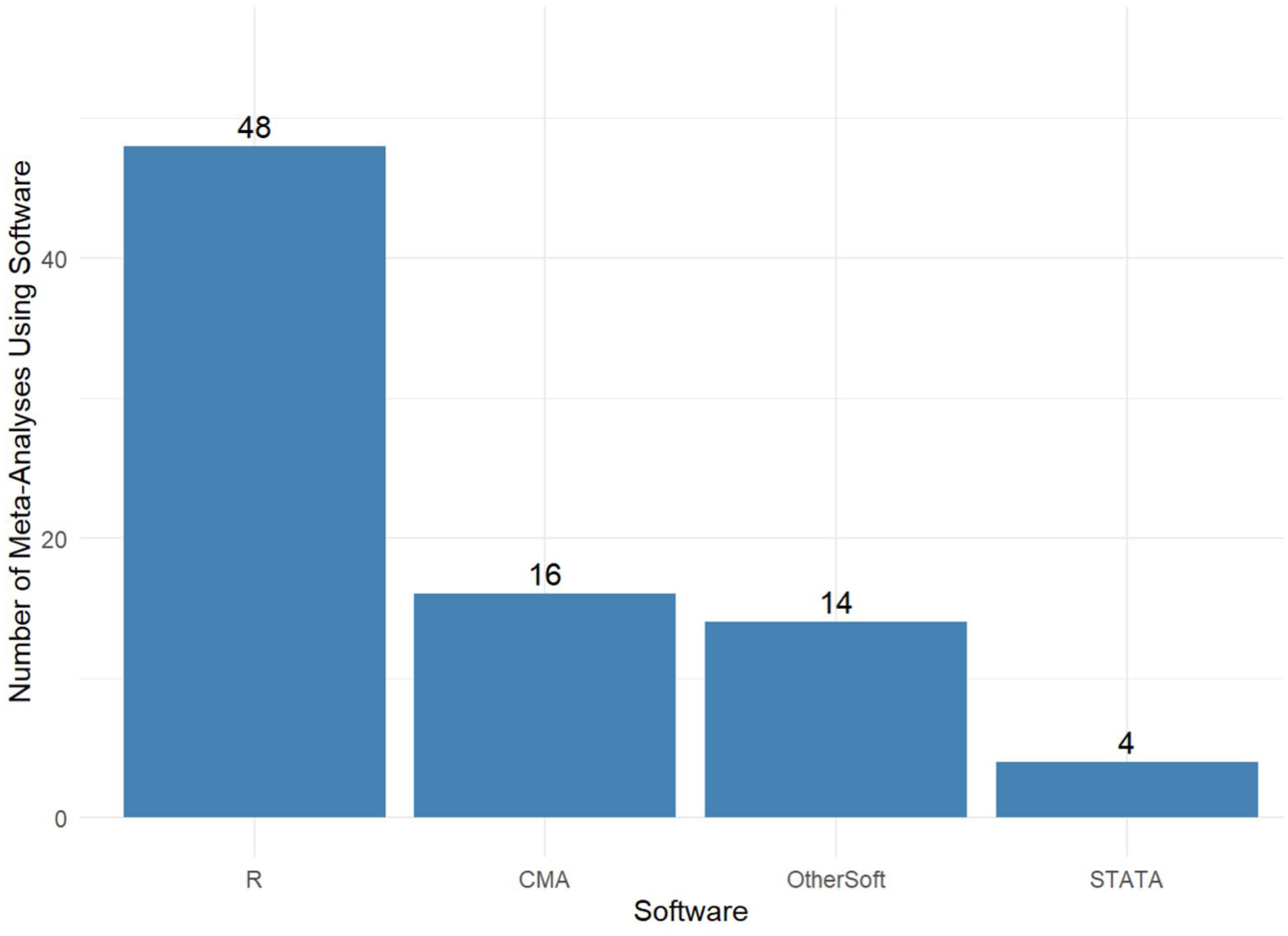

3.6. Replication materials

To identify meta-analyses in management and marketing that provide replication materials or are preregistered, we determine whether each study includes an explicit data availability statement and provides an accessible link to the data or code, or supplies the data or code as supplementary materials. Figure 1 summarises the data sharing and preregistration practices among the meta-analyses in our sample and shows that 21 studies share their data sets or analysis code and 5 are preregistered. These rates are notably low compared with the self-reported rates in broader surveys of social scientists. For example, Ferguson et al. (2023) report that 77% of 3257 social scientists assert that they share their data or code and that 25% claim they preregister their studies. Similarly, Logg and Dorison (2021) find that among 248 active social and behavioural science researchers, 54% report having shared data and 50% report having preregistered a study.

Figure 1. Open science practices.

Such limited transparency may restrict other researchers’ ability to reproduce results, evaluate alternative modelling decisions, or update meta-analyses as new evidence emerges. Many of the methodological issues we discuss subsequently, including effect-size dependence, estimator choice, and publication bias, require access to underlying data sets and analytic code for reanalysis. Thus, making replication materials available is critical for cumulative research. We return to the implications of transparency practices for methodological gaps and inferential reliability in Sections 4.6 and 5.6.

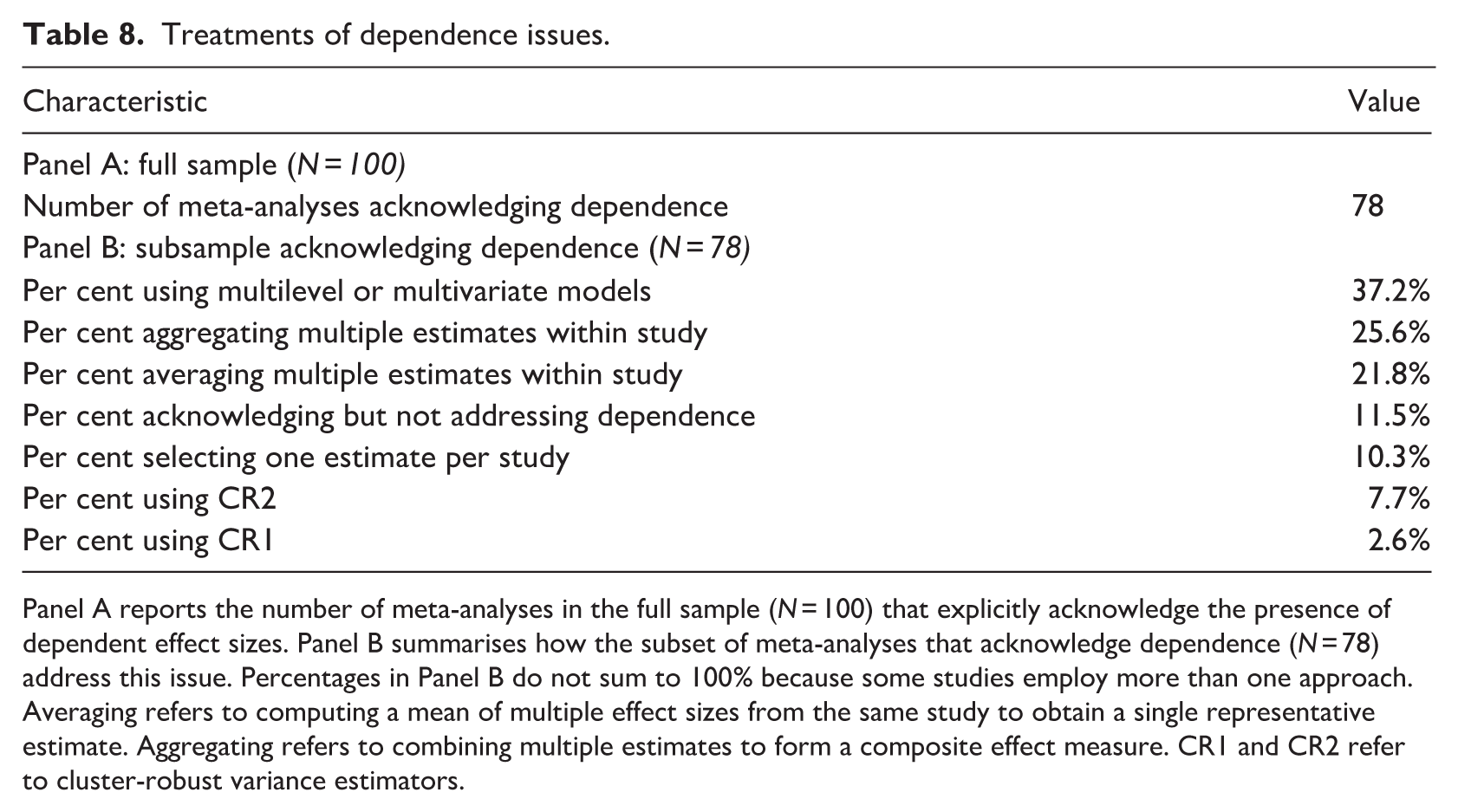

3.7. Software and implementation environment

Figure 2 depicts the software environments that management and marketing researchers use to implement meta-analyses. In our sample, 48 meta-analyses are implemented in R (R Core Team, 2024), making it the most commonly used platform, followed by Comprehensive Meta-Analysis (CMA; Borenstein et al., 2022), employed in 16 studies.

Figure 2. Software usage rates.

The choice of software influences the types of analyses researchers can undertake because different software environments support different methodological capabilities. For example, CMA provides user-friendly implementation of conventional FE and RE models and common publication-bias diagnostics. However, it offers limited support for complex dependence structures and newer, model-based, bias-correction methods.

In contrast, programmable statistical environments such as R allow researchers to implement a wider range of models through specialised packages, including MLMV meta-analyses, robust variance estimation (RVE), and SEM-based meta-analyses. As a result, methodological variation may reflect differences in software capabilities, as well as differences in researchers’ training and disciplinary norms. Quantitatively oriented researchers may be more likely to use programmable environments, whereas applied researchers without programming backgrounds may prefer dedicated graphical software.

4. Methodological priorities and gaps in current practice

Section 4 evaluates current practices relative to generally accepted methodologies to identify systematic gaps (i.e. areas where routine analytic choices diverge from recommended standards). Section 5 then examines the inferential consequences of these gaps. We argue that these gaps are particularly important given common empirical features of the management and marketing literature, including large numbers of extracted estimates, within-study dependence, extreme heterogeneity, and strong incentives to report statistically significant results.

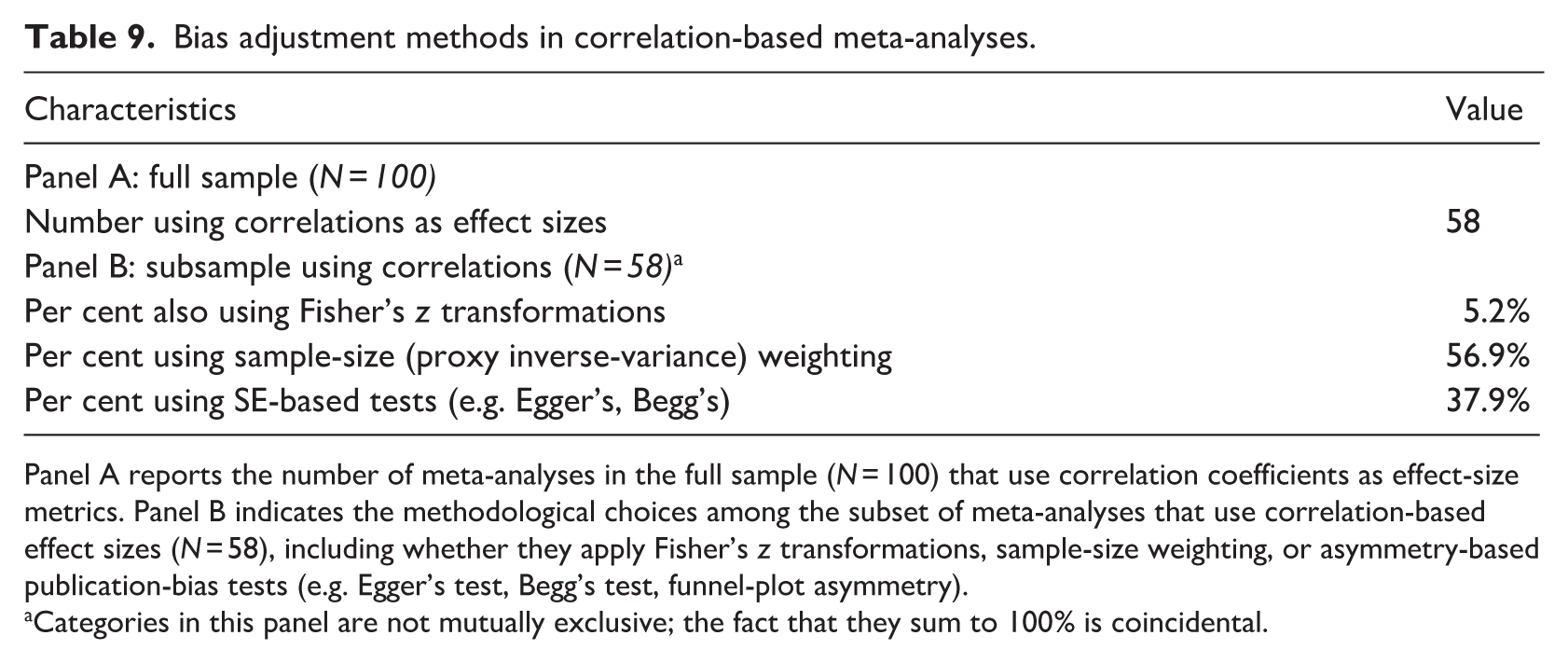

4.1. Dependence among effect sizes: recognised but poorly addressed

Methodological guidance is explicit: Dependence among effect sizes should be addressed using models or variance estimators that account for within-study clustering. However, although most meta-analyses in management and marketing extract multiple effect sizes per study (Section 3.1) and acknowledge the resulting dependence, relatively few account for it in their models. Such dependence may arise from two main sources. First, when multiple effect sizes are drawn from a single primary study, they often reflect repeated observations of a common underlying study-level effect (Van den Noortgate et al., 2013). Second, effect sizes derived from the same respondents and data set may share sampling error. In such cases, their residuals covary, violating the independence assumptions that underlie conventional FE and RE models (Cheung, 2019).

Several methodological approaches exist for addressing dependence. Multilevel (i.e. three-level) meta-analytic models treat effect sizes as nested within studies and decompose variance into within- and between-study components (Van den Noortgate et al., 2013, 2015). Multivariate models represent dependence with structured variance–covariance matrices, which capture correlations among outcomes or repeated measures drawn from the same sample (Jackson et al., 2011). MASEM can also incorporate dependence by jointly modelling multiple relationships within studies, but only when it serves as the primary modelling framework; this feature is lost when MASEM is applied after conventional pooling.

Other approaches instead adjust standard errors to account for clustering. For example, RVE adapts cluster-robust variance estimators to meta-analytic settings with inverse-variance weighting and common data structures (Hedges et al., 2010; Tanner-Smith et al., 2016). Two generations of small-sample adjustments to RVE have emerged. The first, CR1 (a cluster-robust variance estimator), applies a simple multiplicative correction to the variance estimator; it is asymptotically valid but offers limited protection in finite samples. The second, CR2, is a bias-reduced cluster-robust variance estimator designed to improve finite-sample performance for smaller sets of studies (Pustejovsky and Tipton, 2018; Tipton, 2015). Methodological guidelines typically recommend pairing CR2 with Satterthwaite degrees-of-freedom adjustments for hypothesis testing (Tipton, 2015), and simulation evidence indicates that it outperforms CR1 at conventional sample sizes (Tipton et al., 2019).
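To show what the CR1 and CR2 corrections do, the sketch below computes both for the simplest case: an intercept-only meta-analysis (a weighted mean) with inverse-variance weights and an independence working model, with estimates clustered by primary study. In this special case, CR2 reduces to dividing each study's squared sum of weighted residuals by one minus that study's share of the total weight (its leverage), which makes the variance estimator exactly unbiased when the working model is correct; CR1 instead inflates the uncorrected sandwich estimator by m/(m − 1), where m is the number of studies. The sketch omits the Satterthwaite degrees-of-freedom step and does not generalise to meta-regression; in R, the clubSandwich package implements CR2 with Satterthwaite tests for general models. All names and data here are hypothetical.

```python
import math
from collections import defaultdict


def cluster_robust_se(y, v, study):
    """Weighted mean with CR1 and CR2 cluster-robust standard errors.

    Assumes an intercept-only model, inverse-variance weights w = 1/v and an
    independence working model; `study` gives each estimate's cluster ID.
    """
    w = [1 / vi for vi in v]
    total_w = sum(w)
    mu = sum(wi * yi for wi, yi in zip(w, y)) / total_w

    score = defaultdict(float)    # sum of w * residual within each study
    w_study = defaultdict(float)  # sum of weights within each study
    for wi, yi, sid in zip(w, y, study):
        score[sid] += wi * (yi - mu)
        w_study[sid] += wi

    m = len(score)  # number of clusters (primary studies)
    meat_cr0 = sum(s ** 2 for s in score.values())
    # CR1: scale the uncorrected sandwich by m / (m - 1) (one coefficient).
    se_cr1 = math.sqrt(m / (m - 1) * meat_cr0) / total_w
    # CR2 (this special case): rescale each study's term by 1 / (1 - leverage).
    meat_cr2 = sum(score[s] ** 2 / (1 - w_study[s] / total_w) for s in score)
    se_cr2 = math.sqrt(meat_cr2) / total_w
    return mu, se_cr1, se_cr2


# Hypothetical data: eight estimates nested in four primary studies.
y = [0.20, 0.25, 0.22, 0.50, 0.55, 0.10, 0.30, 0.35]
v = [0.02] * 8
study = ["A", "A", "A", "B", "B", "C", "D", "D"]
mu, se_cr1, se_cr2 = cluster_robust_se(y, v, study)
print(f"mean={mu:.3f}, SE(CR1)={se_cr1:.3f}, SE(CR2)={se_cr2:.3f}")
```

Both corrections inflate the uncorrected cluster-robust variance, but CR2 does so study by study, giving larger adjustments to studies that carry more of the total weight.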

Although these approaches are well established, their adoption remains limited, as Table 8 illustrates. Panel A shows that 78% of meta-analyses explicitly acknowledge dependence concerns, yet Panel B reveals that relatively few studies address it with these approaches. Among studies that acknowledge dependence, approximately 12% do not indicate how they handle it. More than half rely on ad hoc strategies, such as aggregating (25.6%), averaging (21.8%), or selecting (10.3%) estimates, to collapse the multiple results obtained from each primary study.⁵ That is, they do not adopt the model-based and variance-adjustment approaches called for in methodological recommendations (Cheung, 2019). Only 37.2% of the 78 meta-analyses that acknowledge dependence employ multilevel or multivariate models. Even fewer adopt RVE: 2.6% apply CR1 and 7.7% apply CR2, despite methodological guidance recommending CR2 in typical meta-analytic settings.

Treatments of dependence issues.

Panel A reports the number of meta-analyses in the full sample (N = 100) that explicitly acknowledge the presence of dependent effect sizes. Panel B summarises how the subset of meta-analyses that acknowledge dependence (N = 78) address this issue. Percentages in Panel B do not sum to 100% because some studies employ more than one approach. Averaging refers to computing a mean of multiple effect sizes from the same study to obtain a single representative estimate. Aggregating refers to combining multiple estimates to form a composite effect measure. CR1 and CR2 refer to cluster-robust variance estimators.

In summary, within-study dependence is widely recognised in management and marketing meta-analyses, but it is often addressed using approaches that diverge from established methodological recommendations. The inferential consequences of these departures are examined in Sections 5.1 and 5.2.

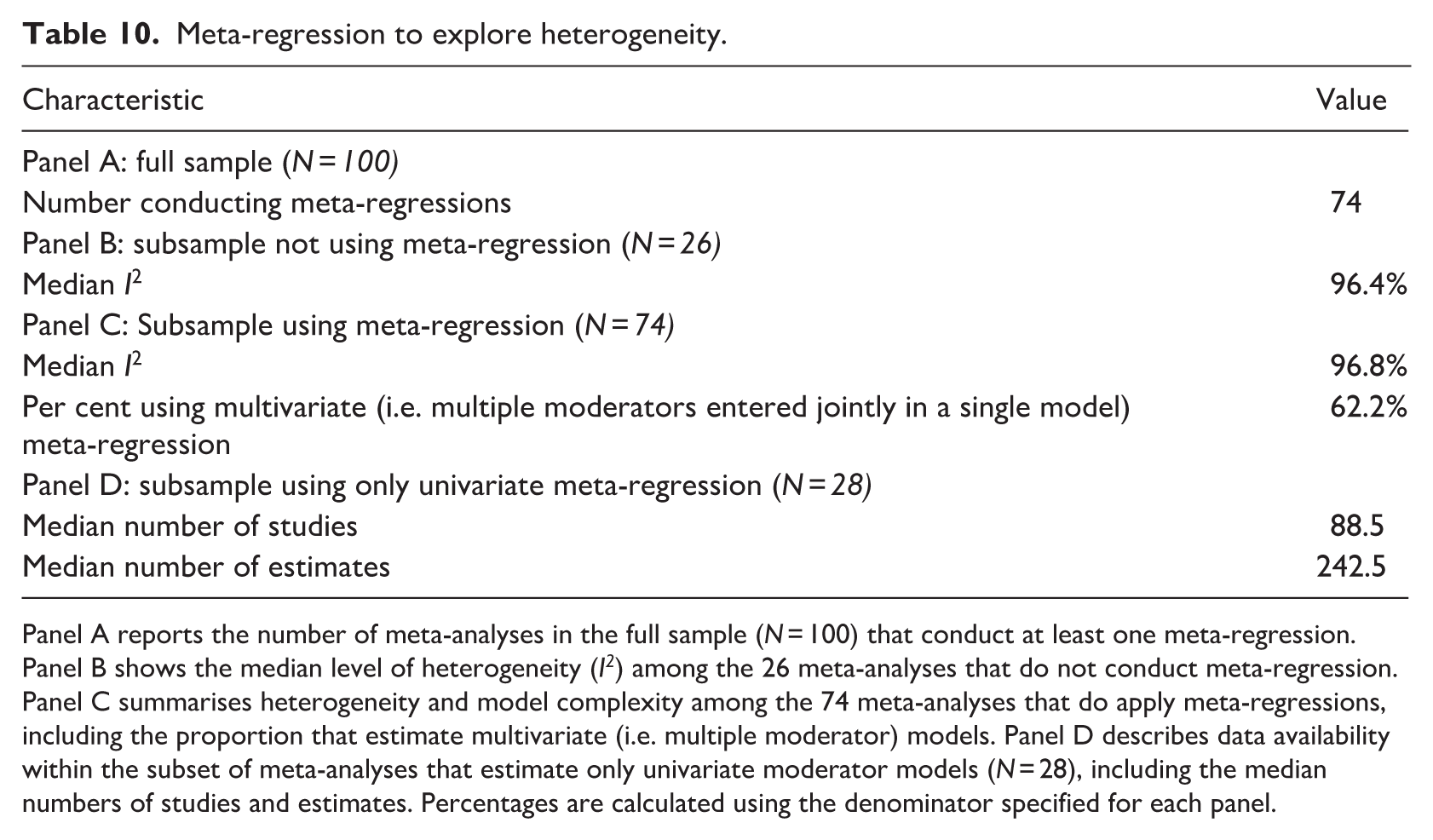

4.2. Correlation-based effect sizes: prevalent but weakly aligned with guidance

Correlation coefficients often appear as effect sizes in management and marketing meta-analyses, reflecting both their intuitive interpretability and their common appearance in primary studies. In our sample, nearly 60% of meta-analyses rely on correlations as effect sizes (Table 4), despite the complications they create for meta-analysis. Specifically, meta-analyses estimate mean effects using inverse-variance weighting, which assigns greater weight to more precise estimates. Ideally, the precision of an estimate should depend on pertinent factors, such as sample size, rather than on the magnitude of the estimated effect. With correlations, however, estimated precision depends on the magnitude of the correlation, such that larger correlations appear mechanically more precise than smaller correlations, even when they are estimated from the same sample size. This relationship is evident in the commonly used expression for the standard error of Pearson’s r (Hafdahl and Williams, 2009; Wiernik and Dahlke, 2020):

$$\mathrm{SE}(r) = \frac{1 - r^2}{\sqrt{n - 1}},$$

where n denotes the primary-study sample size.

Because the standard error depends on the size of the correlation, larger correlations mechanically produce smaller standard errors (holding sample size constant), so inverse-variance weighting assigns greater weight to larger correlations. If meta-analyses use raw correlations as effect sizes, this mechanical relationship can bias pooled estimates upwards in absolute value. Similar concerns arise when raw correlations are used as inputs to secondary modelling approaches, such as MASEM, which typically operate on pooled meta-analytic estimates rather than constructing effect sizes in accordance with methodological recommendations.
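A short numerical check illustrates the mechanism. It assumes the common large-sample expression SE(r) = (1 − r²)/√(n − 1) and, for comparison, the standard result that Fisher’s z = atanh(r) has a standard error of 1/√(n − 3). Two hypothetical studies with the same n = 100 receive very different raw-r weights but identical Fisher-z weights.

```python
import math


def se_raw_r(r, n):
    """Large-sample standard error of Pearson's r: (1 - r^2) / sqrt(n - 1)."""
    return (1 - r ** 2) / math.sqrt(n - 1)


def se_fisher_z(n):
    """Standard error of Fisher's z = atanh(r): 1 / sqrt(n - 3), unrelated to r."""
    return 1 / math.sqrt(n - 3)


n = 100  # identical sample size for both hypothetical studies
for r in (0.10, 0.50):
    w_raw = 1 / se_raw_r(r, n) ** 2  # inverse-variance weight on the raw-r scale
    w_z = 1 / se_fisher_z(n) ** 2    # inverse-variance weight on the Fisher-z scale
    print(f"r = {r:.2f}: raw-r weight = {w_raw:.1f}, Fisher-z weight = {w_z:.1f}")
# prints:
# r = 0.10: raw-r weight = 101.0, Fisher-z weight = 97.0
# r = 0.50: raw-r weight = 176.0, Fisher-z weight = 97.0
```

On the raw scale, the larger correlation receives about 74% more weight purely because of its magnitude.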

Widely used publication-bias diagnostics, such as Egger’s regression, Begg’s rank correlation test, and funnel-plot asymmetry, are also affected by this algebraic relationship between correlations and their standard errors. That is, these diagnostics rely on an assumption of independence between effect sizes and their standard errors, but this assumption does not hold when raw correlations are used without adjustment. Best-practice guidelines caution against applying standard, asymmetry-based diagnostics to untransformed correlation coefficients. Instead, they offer two solutions. The first is to transform correlations into Fisher’s z values prior to estimation, which breaks the deterministic link between effect magnitudes and standard errors (Stanley et al., 2024; Van Aert, 2023). The second is to define the applied weights according to the sample size, rather than estimated standard errors, and use sample size as a proxy for information content (Schmidt and Hunter, 2015). Both approaches are well established.
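The two remedies can be sketched as follows. The helper names and correlations are hypothetical. For simplicity, the sketch computes common-effect weighted means (no between-study variance) and implements only the "bare-bones" sample-size-weighted mean rather than the full Hunter–Schmidt procedure with artefact corrections. A raw-r inverse-variance mean is included as the problematic benchmark.

```python
import math


def pool_fisher_z(rs, ns):
    """Inverse-variance pooling on the Fisher z scale, back-transformed to r.

    Weights are n - 3, the inverse of Var(z) = 1 / (n - 3); they do not depend on r.
    """
    zs = [math.atanh(r) for r in rs]
    ws = [n - 3 for n in ns]
    z_bar = sum(w * z for w, z in zip(ws, zs)) / sum(ws)
    return math.tanh(z_bar)


def pool_sample_size(rs, ns):
    """Sample-size-weighted mean correlation ('bare-bones' mean)."""
    return sum(n * r for n, r in zip(ns, rs)) / sum(ns)


def pool_raw_inverse_variance(rs, ns):
    """Problematic benchmark: inverse-variance weights computed from raw r."""
    ws = [(n - 1) / (1 - r ** 2) ** 2 for r, n in zip(rs, ns)]
    return sum(w * r for w, r in zip(ws, rs)) / sum(ws)


rs = [0.05, 0.10, 0.20, 0.45]  # hypothetical correlations
ns = [120, 120, 120, 120]      # equal sample sizes
print(f"raw r, inverse-variance weights: {pool_raw_inverse_variance(rs, ns):.3f}")
print(f"Fisher z, back-transformed:      {pool_fisher_z(rs, ns):.3f}")
print(f"sample-size weights:             {pool_sample_size(rs, ns):.3f}")
```

With equal sample sizes, the raw-r weights pull the pooled value towards the largest correlation, whereas the other two approaches weight the studies equally.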

Observed meta-analytic practice in management and marketing, however, shows only partial uptake of these approaches. Among the 58 meta-analyses in our sample that rely on correlation-based effect sizes, only 5% apply Fisher’s z transformations, although approximately 57% rely on sample-size weighting (Panel B in Table 9). In addition, 38% of correlation-based meta-analyses employ standard error–based publication-bias diagnostics, such as Egger’s or Begg’s tests, without adjusting how the effect sizes are constructed.

Bias adjustment methods in correlation-based meta-analyses.

Panel A reports the number of meta-analyses in the full sample (N = 100) that use correlation coefficients as effect-size metrics. Panel B indicates the methodological choices among the subset of meta-analyses that use correlation-based effect sizes (N = 58), including whether they apply Fisher’s z transformations, sample-size weighting, or asymmetry-based publication-bias tests (e.g. Egger’s test, Begg’s test, funnel-plot asymmetry).

Categories in this panel are not mutually exclusive; the fact that they sum to 100% is coincidental.

These patterns document substantial gaps between recommended analytic practices and routine implementation in correlation-based meta-analyses. The implications of these gaps for estimation, diagnostic interpretation, and inferences are detailed in Section 5.3.

4.3. Heterogeneity: routinely reported but rarely explored

As we documented in Section 3, heterogeneity in management and marketing meta-analyses is substantial (e.g. among studies reporting I², the median value is 96.7%; Table 5). According to methodological standards, such heterogeneity signals that variation in effect sizes needs to be examined and, where possible, explained (Baker et al., 2009; Stanley and Doucouliagos, 2012). Furthermore, related guidelines suggest investigating sources of heterogeneity using a moderator analysis or meta-regression. Meta-regression in particular allows analysts to examine multiple study, sample, and measurement characteristics simultaneously and assess their relative contribution to observed variation. Unlike subgroup analyses, meta-regression can accommodate complex empirical structures and overlapping sources of heterogeneity.
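For reference, the heterogeneity statistics discussed here can be computed from Cochran’s Q. The sketch below, with a hypothetical helper name and toy data, computes Q, I² = max(0, (Q − df)/Q), and the DerSimonian–Laird estimate of the between-study variance τ², assuming independent effect sizes with known sampling variances.

```python
def heterogeneity(y, v):
    """Cochran's Q, I^2, and DerSimonian-Laird tau^2.

    y: effect sizes; v: their sampling variances (at least two studies).
    """
    w = [1 / vi for vi in v]
    s1 = sum(w)
    s2 = sum(wi ** 2 for wi in w)
    mu_fixed = sum(wi * yi for wi, yi in zip(w, y)) / s1
    q = sum(wi * (yi - mu_fixed) ** 2 for wi, yi in zip(w, y))
    df = len(y) - 1
    # Share of total variation attributed to true heterogeneity rather than sampling error
    i2 = max(0.0, (q - df) / q) if q > 0 else 0.0
    # Method-of-moments estimate of the between-study variance
    tau2 = max(0.0, (q - df) / (s1 - s2 / s1))
    return q, i2, tau2


q, i2, tau2 = heterogeneity([0.10, 0.30, 0.50], [0.01, 0.01, 0.01])
print(f"Q = {q:.2f}, I^2 = {i2:.0%}, tau^2 = {tau2:.3f}")
# prints: Q = 8.00, I^2 = 75%, tau^2 = 0.030
```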

To assess implementation of these recommendations, we identified meta-analyses that regressed estimated effect sizes on study-level, sample-level, or estimation characteristics. We excluded univariate Egger-type regressions, which regress estimated effect sizes on their standard errors, because they primarily diagnose publication bias rather than explain substantive heterogeneity. Table 10 reveals that 74 meta-analyses estimate at least one meta-regression. One possible explanation for the 26 meta-analyses that do not conduct any meta-regression is that heterogeneity in these cases is modest. However, Panel B shows that the median I² among these meta-analyses is 96.4%, nearly identical to the median value of 96.8% among those that do estimate meta-regressions (Panel C in Table 10). Thus, the magnitude of heterogeneity does not appear to determine whether researchers undertake the recommended heterogeneity analyses.

Meta-regression to explore heterogeneity.

Panel A reports the number of meta-analyses in the full sample (N = 100) that conduct at least one meta-regression. Panel B shows the median level of heterogeneity (I²) among the 26 meta-analyses that do not conduct meta-regression. Panel C summarises heterogeneity and model complexity among the 74 meta-analyses that do apply meta-regressions, including the proportion that estimate multivariate (i.e. multiple moderator) models. Panel D describes data availability within the subset of meta-analyses that estimate only univariate moderator models (N = 28), including the median numbers of studies and estimates. Percentages are calculated using the denominator specified for each panel.

Even when meta-regressions are employed, they often fall short of established standards. Among meta-analyses that estimate meta-regressions, only 62% include more than one moderator. When multiple sources of heterogeneity are plausible, multivariate specifications are important because univariate models cannot distinguish among correlated explanations or assess their joint effects. The reliance on univariate meta-regressions in the remaining 38% could reflect limited degrees of freedom. However, Table 10 shows that among the meta-analyses relying only on univariate meta-regressions, the median numbers of studies (88.5) and effect sizes (242.5) exceed the sample-size thresholds typically recommended for reliable multivariate meta-regressions (Hedges and Pigott, 2004; Tipton et al., 2019).
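The following sketch shows why univariate moderator tests can mislead when moderators are correlated. It fits a weighted meta-regression through the normal equations, using hypothetical moderators x1 and x2 and toy effect sizes constructed so that only x1 matters. The weights are taken as given (in a random-effects analysis they would be 1/(v + τ²)), and standard errors and τ² estimation are omitted, so this illustrates point estimates only.

```python
def wls(X, y, w):
    """Weighted least squares coefficients from the normal equations (X'WX) b = X'Wy.

    X is a list of rows, each beginning with 1 for the intercept.
    """
    p = len(X[0])
    # Augmented matrix [X'WX | X'Wy]
    M = [[sum(wi * row[a] * row[b] for row, wi in zip(X, w)) for b in range(p)]
         + [sum(wi * row[a] * yi for row, wi, yi in zip(X, w, y))]
         for a in range(p)]
    # Gaussian elimination with partial pivoting
    for col in range(p):
        pivot = max(range(col, p), key=lambda r: abs(M[r][col]))
        M[col], M[pivot] = M[pivot], M[col]
        for r in range(col + 1, p):
            factor = M[r][col] / M[col][col]
            for k in range(col, p + 1):
                M[r][k] -= factor * M[col][k]
    # Back substitution
    b = [0.0] * p
    for i in range(p - 1, -1, -1):
        b[i] = (M[i][p] - sum(M[i][k] * b[k] for k in range(i + 1, p))) / M[i][i]
    return b


# Hypothetical study-level moderators (e.g. design features), correlated with each other
x1 = [0, 0, 1, 1, 0, 1]
x2 = [0, 1, 1, 1, 0, 0]
y = [0.10 + 0.20 * a for a in x1]  # only x1 affects the effect size
w = [1.0] * 6                       # illustrative weights

joint = wls([[1, a, c] for a, c in zip(x1, x2)], y, w)
x2_only = wls([[1, c] for c in x2], y, w)
print("joint model (intercept, x1, x2):", [round(v, 3) for v in joint])
print("univariate model (intercept, x2):", [round(v, 3) for v in x2_only])
```

The univariate model attributes a spurious positive effect to x2 because x2 is correlated with x1, whereas the joint model assigns x2 a coefficient of zero.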

Thus, management and marketing meta-analyses routinely report very high heterogeneity, but they do not consistently follow recommended practices to identify its sources. When meta-regressions are conducted, they are often limited in form. In Section 5.5, we consider the implications of this gap between methodological guidelines and routine practice for interpretation and generalisability.

4.4. Publication bias: widespread testing with limited adjustment

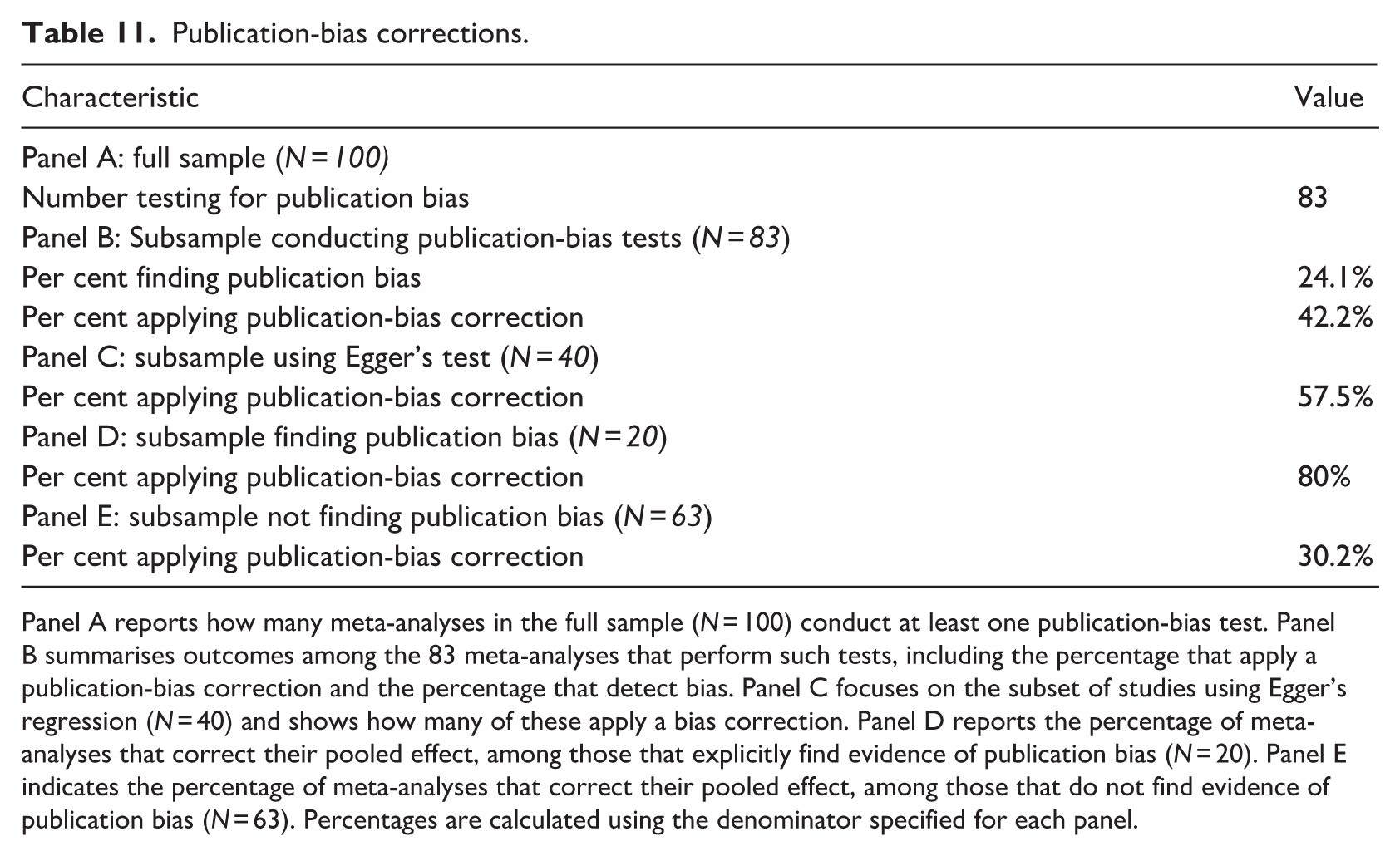

As documented in Table 7, management and marketing meta-analyses usually check for publication bias, often using funnel plots or Fail-safe N. However, these two approaches lack any mechanism for estimating publication bias–adjusted effect sizes. While Fail-safe N was an ingenious pioneering device for addressing the “file-drawer problem,” current guidelines recommend abandoning it in favour of more informative statistical methods (Becker, 2005). This recommendation is due to several critical weaknesses: it lacks an underlying statistical model or formal criterion for interpretation, ignores heterogeneity in study results, and relies on the problematic assumption that missing studies have an average effect of zero. Furthermore, it focuses narrowly on statistical significance rather than the practical or clinical magnitude of the effect. Because significance depends strongly on sample size, the Fail-safe N can misleadingly suggest that results are robust even when the pooled estimate itself is biased.
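Rosenthal’s fail-safe N makes these criticisms easy to see. It asks how many unpublished studies averaging z = 0 would be needed to pull the Stouffer combined z below a one-tailed critical value z_α, which gives N = (Σz_i)²/z_α² − k. The sketch below (hypothetical function name and data) shows that quadrupling each study’s sample size, which roughly doubles each z for a fixed underlying effect, more than quadruples N even though the effect itself is unchanged.

```python
def rosenthal_fail_safe_n(z_values, z_alpha=1.645):
    """Number of null (z = 0) studies needed to push the Stouffer combined z,
    sum(z) / sqrt(k + N), down to z_alpha (one-tailed alpha = 0.05 by default)."""
    k = len(z_values)
    return sum(z_values) ** 2 / z_alpha ** 2 - k


# Ten hypothetical studies with the same true effect; the second set has
# four times the sample size, so each z is roughly twice as large.
small = rosenthal_fail_safe_n([2.0] * 10)
large = rosenthal_fail_safe_n([4.0] * 10)
print(f"fail-safe N, smaller samples: {small:.0f}")
print(f"fail-safe N, larger samples:  {large:.0f}")
```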

Among the 83 meta-analyses that conduct at least one publication-bias test, fewer than half (42.2%) estimate a bias-adjusted effect (Panel B in Table 11). Panel D in Table 11 documents how researchers respond when they detect publication bias: among the 20 meta-analyses that find such evidence, 80% implement some form of adjustment, but 20% report only unadjusted pooled effects, leaving the magnitude of the bias unaddressed.

Publication-bias corrections.

Panel A reports how many meta-analyses in the full sample (N = 100) conduct at least one publication-bias test. Panel B summarises outcomes among the 83 meta-analyses that perform such tests, including the percentage that apply a publication-bias correction and the percentage that detect bias. Panel C focuses on the subset of studies using Egger’s regression (N = 40) and shows how many of these apply a bias correction. Panel D reports the percentage of meta-analyses that correct their pooled effect, among those that explicitly find evidence of publication bias (N = 20). Panel E indicates the percentage of meta-analyses that correct their pooled effect, among those that do not find evidence of publication bias (N = 63). Percentages are calculated using the denominator specified for each panel.

Adjustments are even less common when diagnostic tests fail to detect publication bias (Panel E in Table 11). Among the 63 meta-analyses that do not find evidence of publication bias, only 30.2% report bias-adjusted estimates. This asymmetry suggests that most authors make their use of bias-correction methods conditional on the outcome of diagnostic tests, treating publication-bias adjustment as an optional robustness check rather than a routine estimation requirement. Such conditional applications also rely on diagnostic tests that offer limited power, particularly in settings characterised by extreme heterogeneity and flexible model specifications.

Such practices directly contradict recommendations to treat publication bias as an estimation problem and to report bias-adjusted estimates routinely, regardless of whether formal tests detect asymmetry (Andrews and Kasy, 2019; Carter et al., 2019; McShane et al., 2016). Thus, we identify another systematic gap: publication bias is widely tested, but adjustments are applied inconsistently and often treated as optional. We address the implications of this gap for statistical inferences in Section 5.4.

4.5. Comparison with other disciplines

It is useful to situate these patterns relative to meta-analytic practices in other disciplines. Using the cross-disciplinary assessment provided by Wu et al. (2026), we compare the frequency with which meta-analyses in management and marketing comply with best-practice recommendations with the corresponding frequencies in business and economics and in psychology. Business and economics are the closest intellectual neighbours to management and marketing; psychology is a closely related social science field where correlation-based syntheses and construct-level relationships are common.

As the results in Appendix 1 reveal, the patterns are broadly similar across the three fields. We find somewhat greater adoption of certain publication-bias practices in management and marketing, including the use of multiple publication-bias diagnostics and the avoidance of standard error–based diagnostics when correlations are used. Yet management and marketing meta-analyses appear less likely to address dependence among effect sizes. These comparisons should be interpreted cautiously, because the relevant studies draw on different samples, journal sets, and publication windows. Nevertheless, they provide a useful benchmark for assessing actual methodological practices in management and marketing, relative to related disciplines.

5. Key inferential consequences of current meta-analytic practices

In this section, rather than scoring studies against a checklist of best practices or conducting a compliance audit, we identify the consequences of the gaps between recommended methods and routine practices in management and marketing meta-analyses. In particular, we focus on how these divergences can translate into inefficiency, bias, misspecified uncertainty, and reduced interpretability. The consequences we discuss are selective rather than exhaustive and reflect areas where our review reveals consistent gaps between recommended and actual practices, and where the implications are particularly important for management and marketing research.

5.1. Inefficient estimates due to ignoring effect-size dependence

As noted, meta-analyses in management and marketing routinely extract multiple effect sizes from individual primary studies. Because these effect sizes are derived from the same samples, measures, or research designs, they are statistically dependent. Ignoring such dependence by treating correlated observations as independent produces inefficient estimates that do not reflect the true structure of the data. As a result, these estimates effectively double-count information and fail to achieve the minimum variance attainable under correct modelling of the data-generating process.

To address this inefficiency, model-based approaches such as multilevel and multivariate meta-analytic models can decompose variance into within- and between-study components and identify correlations among effect sizes drawn from the same study. These approaches retain all available estimates while explicitly accounting for dependence. As a result, they preserve information and yield more efficient estimates than ad hoc strategies (e.g. averaging, aggregating, or selecting a single estimate per study).
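In generic notation (ours, for illustration), a three-level model for effect size i from study j can be written as below, where τ²₍₃₎ and τ²₍₂₎ are the between-study and within-study variance components and v_ij is the known sampling variance. Effect sizes from the same study share the study-level term u_j, which is what induces their covariance. This common formulation assumes independent sampling errors within a study; multivariate models additionally specify their covariances.

$$
y_{ij} = \mu + u_j + \omega_{ij} + \varepsilon_{ij}, \qquad u_j \sim N\!\left(0, \tau^2_{(3)}\right), \quad \omega_{ij} \sim N\!\left(0, \tau^2_{(2)}\right), \quad \varepsilon_{ij} \sim N\!\left(0, v_{ij}\right),
$$

$$
\operatorname{Var}(y_{ij}) = \tau^2_{(3)} + \tau^2_{(2)} + v_{ij}, \qquad \operatorname{Cov}(y_{ij}, y_{i'j}) = \tau^2_{(3)} \quad (i \neq i').
$$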

5.2. Invalid inferences due to misspecified uncertainty

Another serious consequence of ignoring dependence among effect sizes is misspecification of uncertainty, which leads to invalid statistical inferences. Conventional meta-analytic variance formulas assume independence among observations, but correlated effect sizes drawn from the same primary study violate this assumption, resulting in understated standard errors, overly narrow confidence intervals, and hypothesis tests that reject null hypotheses too frequently. Such consequences are particularly salient in management and marketing meta-analyses, which often include a moderate number of studies while also leveraging multiple effect sizes per study. Even if the pooled effect estimates are unbiased, misspecification of uncertainty creates a false impression of precision that undermines the credibility of statistical inferences.
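The size of the understatement can be gauged with a simple calculation. Suppose, hypothetically, that a study contributes k equally precise effect sizes with sampling variance v and a pairwise correlation ρ between them (compound symmetry). Their average then has variance v[1 + (k − 1)ρ]/k, whereas an analysis that assumes independence uses v/k. The sketch below evaluates both for k = 3 and ρ = 0.5.

```python
def variance_of_mean(v, k, rho):
    """Variance of the average of k estimates, each with sampling variance v,
    that are pairwise correlated at rho (compound symmetry)."""
    return v * (1 + (k - 1) * rho) / k


v, k = 0.01, 3  # hypothetical: three effect sizes from one study
naive = variance_of_mean(v, k, 0.0)   # what an independence assumption implies
actual = variance_of_mean(v, k, 0.5)  # hypothetical within-study correlation of 0.5
print(f"naive SE = {naive ** 0.5:.4f}, correct SE = {actual ** 0.5:.4f}, "
      f"ratio = {(actual / naive) ** 0.5:.3f}")
# prints: naive SE = 0.0577, correct SE = 0.0816, ratio = 1.414
```

In this example, the naive standard error is about 29% too small.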

To avoid this problem, researchers can use MASEM as their primary inferential framework, because it explicitly incorporates dependence. Yet, as we documented in Section 3, MASEM rarely serves as the sole basis for inference. Instead, it is typically applied after conventional pooling has already imposed independence assumptions on the data. In that scenario, a downstream modelling approach is not sufficient to produce valid statistical inferences; valid inferences require explicit adjustments at the estimation stage. Cluster-robust variance estimators can correct standard errors without requiring full specification of the dependence structure. In particular, the second-generation CR2 corrections, combined with appropriate small-sample degrees-of-freedom adjustments, can yield reliable confidence intervals and hypothesis tests in typical meta-analytic settings.

5.3. Biased pooled estimates due to correlation-based effect sizes

Although correlations provide an intuitive descriptive measure of association, their use as the dominant effect-size metric in inverse-variance–weighted meta-analyses produces systematic bias in pooled effect estimates. This inferential consequence arises from the deterministic relationship between a correlation coefficient and its standard error. Larger absolute correlations mechanically generate smaller standard errors, independent of study design or information content. Inverse-variance weights then assign greater influence to larger correlations by construction, which confounds effect magnitude with precision. This relationship also violates a core assumption underlying conventional meta-analytic estimators, namely that effect sizes are independent of their estimated standard errors and hence of their weights. As a result, pooled effect estimates may be systematically inflated, even in the absence of selective reporting. Furthermore, standard publication-bias diagnostics that rely on independence between effect sizes and their standard errors (e.g. funnel-plot asymmetry, Egger-type regressions) yield misleading signals when applied to untransformed correlations.

Established remedies include transforming correlations to Fisher’s z or basing weights on study sample sizes to separate effect magnitude from the standard error and thereby restore the validity of standard meta-analytic assumptions. Yet despite these long-standing recommendations, we find few such adjustments in management and marketing meta-analyses. Because this bias directly affects substantive conclusions, it represents a particularly consequential inferential concern.

5.4. Overconfident conclusions from treating publication bias as diagnostic

As we documented in Section 4, management and marketing meta-analyses often test for publication bias. However, fewer than half of those that test for it incorporate it into the estimation of pooled effects, so the reported summary estimates often remain unadjusted, sometimes even when selective reporting is evident. Furthermore, we find indications that researchers use diagnostic outcomes as a decision rule for conducting bias adjustments, even though the diagnostics they use tend to be low-powered and sensitive to specification choices. In such cases, the meta-analyses are at risk of producing overconfident inferences. For example, if weak diagnostic tests fail to detect selective reporting and researchers therefore do not adjust their estimates for bias, the reported summary effects are likely to overstate the magnitude of the underlying relationships and understate their uncertainty.

To address this concern, studies should routinely include bias-adjusted estimates. Several methods are available to move beyond diagnosis and produce bias-adjusted pooled estimates. Regression-based approaches, such as the procedure combining the funnel asymmetry test, the precision-effect test, and the precision-effect estimate with standard error (FAT-PET-PEESE), can both test for small-study effects and generate corrected estimates (Stanley and Doucouliagos, 2012). Selection models can also be used to estimate adjusted summary effects while retaining a diagnostic role (Andrews and Kasy, 2019). More recently, robust Bayesian meta-analysis (RoBMA) extends this logic by applying Bayesian model averaging across multiple publication-bias models, rather than relying on a single correction method (Bartoš et al., 2023). This approach allows researchers to combine evidence across alternative bias-adjustment procedures and obtain both publication-bias diagnostics and bias-adjusted estimates within a unified framework.
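As a rough sketch of the regression-based approach, PET regresses effect sizes on their standard errors and PEESE on their squared standard errors, both with inverse-variance weights 1/SE². The intercept estimates the effect expected from a hypothetical, infinitely precise study, and the PET slope provides the FAT. The helper names and toy data are hypothetical; the sketch returns point estimates only (no standard errors and no conditional PET-to-PEESE switching rule), and for correlations the inputs would be Fisher’s z values with their standard errors.

```python
def wls_line(x, y, w):
    """Intercept and slope of the weighted least-squares line y = a + b * x."""
    sw = sum(w)
    x_bar = sum(wi * xi for wi, xi in zip(w, x)) / sw
    y_bar = sum(wi * yi for wi, yi in zip(w, y)) / sw
    sxy = sum(wi * (xi - x_bar) * (yi - y_bar) for wi, xi, yi in zip(w, x, y))
    sxx = sum(wi * (xi - x_bar) ** 2 for wi, xi in zip(w, x))
    slope = sxy / sxx
    return y_bar - slope * x_bar, slope


def pet_peese(effects, ses):
    """Return (PET intercept, FAT slope, PEESE intercept)."""
    w = [1 / s ** 2 for s in ses]
    pet, fat_slope = wls_line(ses, effects, w)              # effect = b0 + b1 * SE
    peese, _ = wls_line([s ** 2 for s in ses], effects, w)  # effect = b0 + b1 * SE^2
    return pet, fat_slope, peese


# Toy data in which small (high-SE) studies report systematically larger effects
ses = [0.05, 0.08, 0.10, 0.15, 0.20, 0.25]
effects = [0.10 + 1.5 * s for s in ses]
pet, fat_slope, peese = pet_peese(effects, ses)
print(f"PET = {pet:.3f}, FAT slope = {fat_slope:.2f}, PEESE = {peese:.3f}")
```

Because these toy effects are exactly linear in the standard error, PET recovers the 0.10 used to generate them.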

5.5. Limited interpretability due to extreme heterogeneity

Extremely high heterogeneity is a defining feature of management and marketing meta-analyses. As we have documented, reported values of I² routinely exceed 90%, indicating that most observed variation reflects real differences in underlying effects rather than sampling error. As a result, the substantive meaning of any pooled average effect is inherently limited. If such heterogeneity is documented but not systematically explored – for example, when meta-analyses rely on sparse, univariate, or post hoc moderator analyses – pooled estimates provide limited guidance about where, when, or for whom the identified effects are likely to hold.

Meta-regression offers a viable way to translate heterogeneity into context-specific inferences because it can link effect sizes to study characteristics, contexts, or design features. When implemented within frameworks that account for dependence and uncertainty, multivariate meta-regressions can yield interpretable conditional effects. However, isolated moderator tests often lack power, ignore correlations among moderators, and fail to deliver meaningful explanations of effect variation.

5.6. Consequences of limited transparency and replicability for inferences

We identify limited transparency and replicability as cross-cutting issues that amplify all of the preceding problems. When researchers do not share their data, code, and analytic decisions, it is difficult to evaluate whether they have handled dependence appropriately, specified uncertainty correctly, constructed and weighted effect sizes appropriately, or incorporated publication bias into their estimation procedures. Such constraints are particularly acute for meta-analyses, whose results depend on various analytic choices made at multiple stages of the research process. Without transparent reporting and accessible materials, readers cannot assess the robustness of the conclusions or determine how sensitive the results are to alternative, equally plausible specifications. Limited transparency thus acts as a catalyst that magnifies other inferential consequences by preventing their detection, correction, or reassessment. It constrains cumulative knowledge by limiting the field’s ability to update, reanalyse, or extend meta-analytic evidence with emerging methods and data.

5.7. Summary and example of compounded inferential consequences

Table 12 summarises commonly observed gaps between routine meta-analytic practices and methodological best practices, together with their consequences for statistical inference and the corresponding methodological adjustments. It thus shows concisely how departures from recommended practices can affect the credibility and interpretability of meta-analytic evidence. Although we discuss these gaps separately, we acknowledge that they rarely occur in isolation. Meta-analyses typically combine several features; for example, they may extract multiple dependent effect sizes, use correlation-based effect-size metrics without transformation, and undertake publication-bias correction only when low-powered diagnostic tests suggest the need to do so. When these elements occur together, their inferential consequences interact. The resulting effects are not necessarily additive; their joint influence depends on the data structure and the analytic choices made by the researcher. In general, multiple departures from recommended practice increase the risk that pooled estimates, standard errors, and diagnostic tests together produce a misleading impression of the underlying evidence.

Common practices in management and marketing meta-analyses and their consequences for statistical inference.

The analytic practices listed in this table are commonly observed in management and marketing meta-analyses and often reflect practical constraints such as software limitations, computational complexity, reviewer expectations, and journal space constraints. However, each practice has predictable consequences for statistical inference that should be considered when interpreting meta-analytic results.

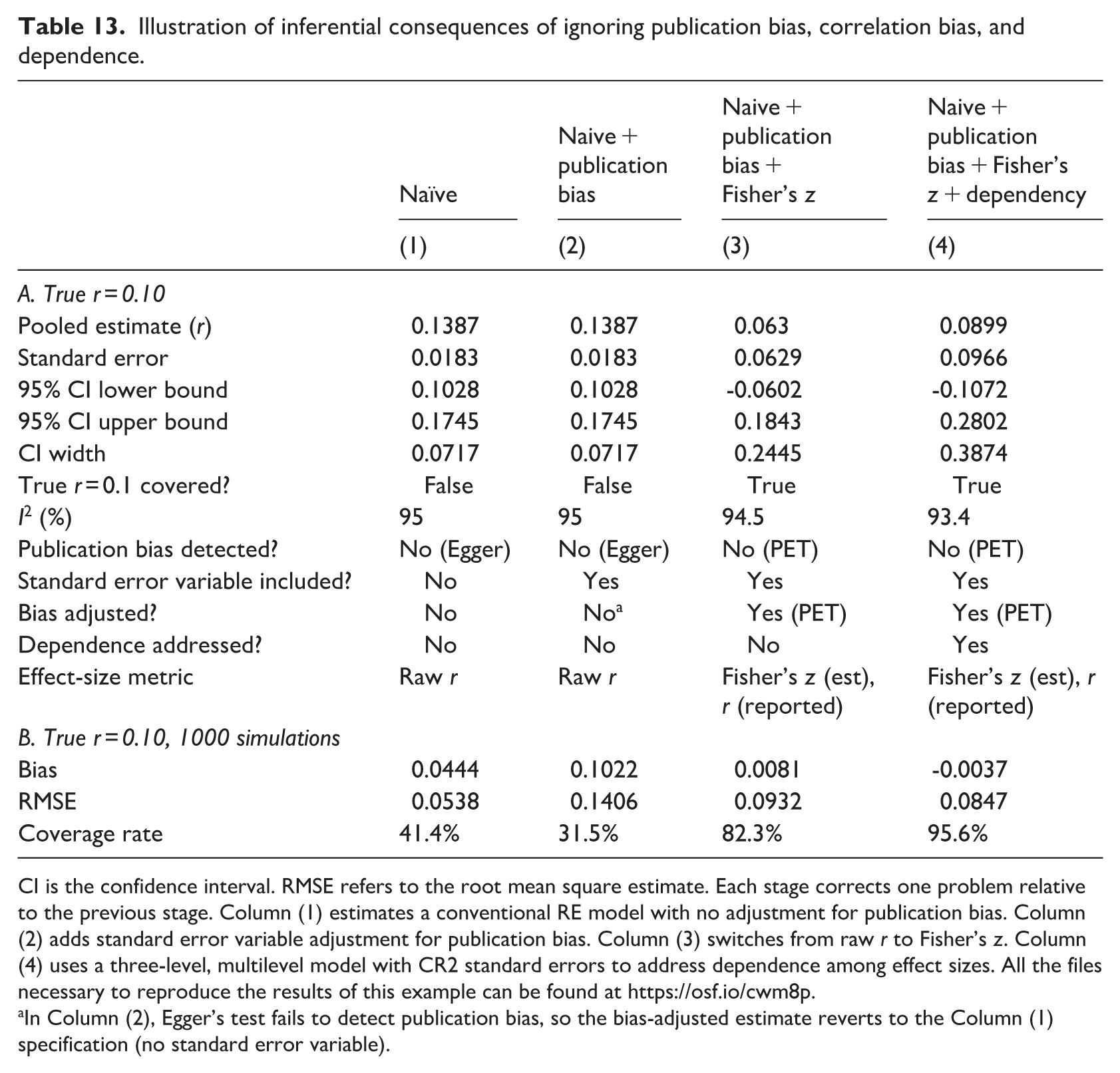

To illustrate how inferential consequences might interact and compound, we present a hypothetical meta-analysis constructed with parameters calibrated to the sample meta-analyses. This stylised example demonstrates how each correction alters inferences under conditions that are typical of management and marketing meta-analyses, in which correlation-based effect sizes, selective reporting, bias corrections based on diagnostic tests, and multiple dependent effect sizes per study occur simultaneously.

The simulated dataset comprises 87 studies (the median number of studies per meta-analysis in our review), each of which contributes three effect sizes, for 261 estimates in total. We set the true underlying correlation to r = 0.10, which Cohen (1988) classifies as a small effect, impose extreme heterogeneity (I² ≈ 95%), and introduce publication bias by suppressing 80% of the statistically non-significant estimates, to mimic the selective-reporting pressures in management and marketing research.
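A data-generating process of this kind can be sketched as follows. This is not the authors’ code (their files are available at the OSF link given with Table 13). The sample-size range, the split of heterogeneity into between- and within-study standard deviations on the Fisher z scale, and the significance rule are our illustrative assumptions, and the resulting I² is not calibrated to 95%. Dependence enters only through the shared study-level effect; for simplicity, sampling errors within a study are drawn independently.

```python
import math
import random


def simulate_meta_data(n_studies=87, per_study=3, rho=0.10, tau_between=0.20,
                       tau_within=0.15, suppress=0.80, seed=1):
    """Hypothetical generator for one meta-analytic dataset.

    Returns (study_id, r, n) tuples for the estimates that survive selective
    reporting. tau_between, tau_within, and the sample-size range are
    illustrative assumptions, not the authors' calibration.
    """
    rng = random.Random(seed)
    mu = math.atanh(rho)  # true mean effect on the Fisher z scale
    rows = []
    for study in range(n_studies):
        n = rng.randint(50, 400)                       # assumed sample-size range
        study_effect = mu + rng.gauss(0, tau_between)  # shared by the study's estimates
        for _ in range(per_study):
            theta = study_effect + rng.gauss(0, tau_within)
            z = theta + rng.gauss(0, 1 / math.sqrt(n - 3))  # sampling error of Fisher z
            significant = abs(z) * math.sqrt(n - 3) > 1.96  # two-sided 5% test
            # Non-significant estimates are dropped with probability `suppress`
            if significant or rng.random() >= suppress:
                rows.append((study, math.tanh(z), n))
    return rows


data = simulate_meta_data()
print(len(data), "of", 87 * 3, "estimates survive selective reporting")
```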

Table 13 presents the results across four specifications that progressively apply corrections for publication bias, effect-size scaling, and dependence. Column (1) represents the naive estimate, namely a standard RE model applied to raw correlations, with no adjustment for publication bias and no correction for dependence. Column (2) adds Egger’s test for publication bias but, consistent with the low-power diagnostic problem (Section 5.4), fails to detect any bias and therefore reverts to Column (1). Column (3) switches from raw correlations to Fisher’s z and adds the standard error as a regressor, as in a PET regression, to adjust for publication bias. Finally, Column (4) addresses dependence by fitting a three-level multilevel model with CR2 standard errors; it represents the fully corrected specification.

Illustration of inferential consequences of ignoring publication bias, correlation bias, and dependence.

CI is the confidence interval. RMSE refers to the root mean square error. Each stage corrects one problem relative to the previous stage. Column (1) estimates a conventional RE model with no adjustment for publication bias. Column (2) adds a standard-error regressor to adjust for publication bias when Egger’s test detects bias. Column (3) switches from raw r to Fisher’s z. Column (4) uses a three-level multilevel model with CR2 standard errors to address dependence among effect sizes. All the files necessary to reproduce the results of this example can be found at https://osf.io/cwm8p.

In Column (2), Egger’s test fails to detect publication bias, so the bias-adjusted estimate reverts to the Column (1) specification (no standard error variable).

The results clearly illustrate the compounding nature of the inferential failures. The naive estimate in Column (1) equals 0.139, nearly 40% larger than the true effect of 0.10, and its 95% confidence interval [0.103, 0.175] excludes the true value entirely. This exclusion is a direct consequence of the upward bias created by selective reporting and the artificially narrow standard errors that result from ignoring dependence. The transition from Column (1) to Column (2) produces no change: with extreme heterogeneity, the low-powered diagnostic test fails to flag the bias and therefore adds nothing. Correcting the correlation bias and adjusting for publication bias, as in Column (3), substantially reduces the point estimate to 0.063, and the confidence interval covers the true value. The fully corrected specification in Column (4) yields an estimate of 0.090, the closest to the true effect. Its substantially wider confidence interval [−0.107, 0.280] reflects the genuine uncertainty that the naive approach concealed. Notably, once dependence and heterogeneity are correctly accounted for, the pooled effect is no longer statistically distinguishable from zero, a finding that the naive approach entirely obscures.

To check whether these patterns generalise beyond a single simulated dataset, we conduct 1000 Monte Carlo replications using the same parameters and report the results in the bottom panel of Table 13. The results confirm and sharpen the findings. Column (1) produces a mean bias of 0.044 and a coverage rate of only 41.4%, meaning the 95% confidence interval fails to include the true effect in nearly six of ten replications. Column (2) performs even worse: the bias rises to 0.102, and coverage falls to 31.5%. This counterintuitive result appears to reflect the instability introduced by conditioning the decision to adjust on a low-powered diagnostic test. In the few replications where Egger’s test identifies bias, the PET regression, applied to the raw correlations, overcorrects and produces estimates even farther from the truth than the unadjusted naive approach. This inferential hazard is acute: in this setting, adjusting for publication bias only when a noisy diagnostic detects it does not improve inferences on average and, if anything, makes them less reliable.
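The summary measures used in this comparison can be computed from the replication output with a few lines of code. The sketch below (hypothetical function name and toy inputs) returns the mean bias, the root mean square error, and the share of 95% confidence intervals that contain the true effect.

```python
import math


def performance(estimates, intervals, true_value):
    """Mean bias, RMSE, and CI coverage across Monte Carlo replications.

    estimates: point estimates, one per replication
    intervals: (lower, upper) confidence limits, one pair per replication
    """
    reps = len(estimates)
    bias = sum(e - true_value for e in estimates) / reps
    rmse = math.sqrt(sum((e - true_value) ** 2 for e in estimates) / reps)
    coverage = sum(lo <= true_value <= hi for lo, hi in intervals) / reps
    return bias, rmse, coverage


# Toy output from four replications with a true effect of 0.10
est = [0.14, 0.12, 0.09, 0.15]
cis = [(0.11, 0.17), (0.08, 0.16), (0.03, 0.15), (0.12, 0.18)]
bias, rmse, coverage = performance(est, cis, 0.10)
print(f"bias = {bias:.3f}, RMSE = {rmse:.3f}, coverage = {coverage:.0%}")
# prints: bias = 0.025, RMSE = 0.034, coverage = 50%
```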

Column (3) substantially improves performance, reducing bias to 0.008 and raising coverage to 82.3%, but it still falls short of the nominal 95% rate because it leaves dependence among effect sizes unaddressed, so its standard errors remain understated. Column (4) achieves both near-zero bias (−0.004) and coverage of 95.6%, essentially the nominal rate. Thus, in this setting, jointly addressing all three problems is necessary for reliable inferences. As the Monte Carlo results illustrate, departures from methodological guidance can be deeply consequential in the data environments that are typical of management and marketing meta-analyses.

6. Conclusion

With this systematic assessment of contemporary meta-analytic practices in management and marketing and their (mis)alignment with established methodological guidelines, we document recurring patterns in data structures, estimator choices, effect-size approaches, heterogeneity reporting, publication-bias assessments, and transparency practices. Across 100 meta-analyses published in top-tier journals, we find consistent evidence that authors acknowledge key methodological challenges but only partially address those challenges in practice.

Not every departure from methodological best practices indicates a lack of awareness or care on the part of researchers. In many cases, practical constraints shape analytic choices. For example, the software limitations of commonly available packages make it difficult to implement advanced estimators; multilevel and model-based bias-correction methods impose substantial computational complexity; reviewers might demand familiar analytic approaches; a small sample of studies limits the degrees of freedom available for moderator analyses; and journal space constraints might prevent the reporting of extensive robustness analyses. We recognise that the analytic choices we document likely reflect pragmatic trade-offs in many cases.

Still, these deviations from generally accepted methodological recommendations have serious inferential consequences. Several common empirical features of this literature (multiple dependent effect sizes, extreme heterogeneity, and strong incentives to publish statistically significant results) interact with conventional analytic choices to produce systematic threats to estimation, inference, and interpretation. These threats include inefficient estimates, misspecified uncertainty, invalid inferences, algebraic distortions in correlation-based analyses, and overconfident conclusions drawn from unadjusted pooled effects.

With this assessment, we seek to complement, rather than replace, existing guidelines and thereby help authors, reviewers, and editors identify the consequences of methodological choices, given the empirical conditions typical of management and marketing research. By focusing on inferential vulnerability rather than procedural compliance, our framework provides a practical means to prioritise methodological improvements without imposing any single or uniform standard.

Given the central role of meta-analyses in developing theory, synthesising evidence, and offering meaningful managerial recommendations, we consider it important to close the gap between recommended best practices and routine implementation. Addressing the inferential consequences we raise involves recognising that common analytic choices can generate inefficiency, bias, misspecified uncertainty, and limited interpretability. By making these consequences explicit, this assessment establishes a basis for more informed analytic judgements and, ideally, for greater credibility, transparency, and cumulative value of meta-analyses in management and marketing.


Appendix

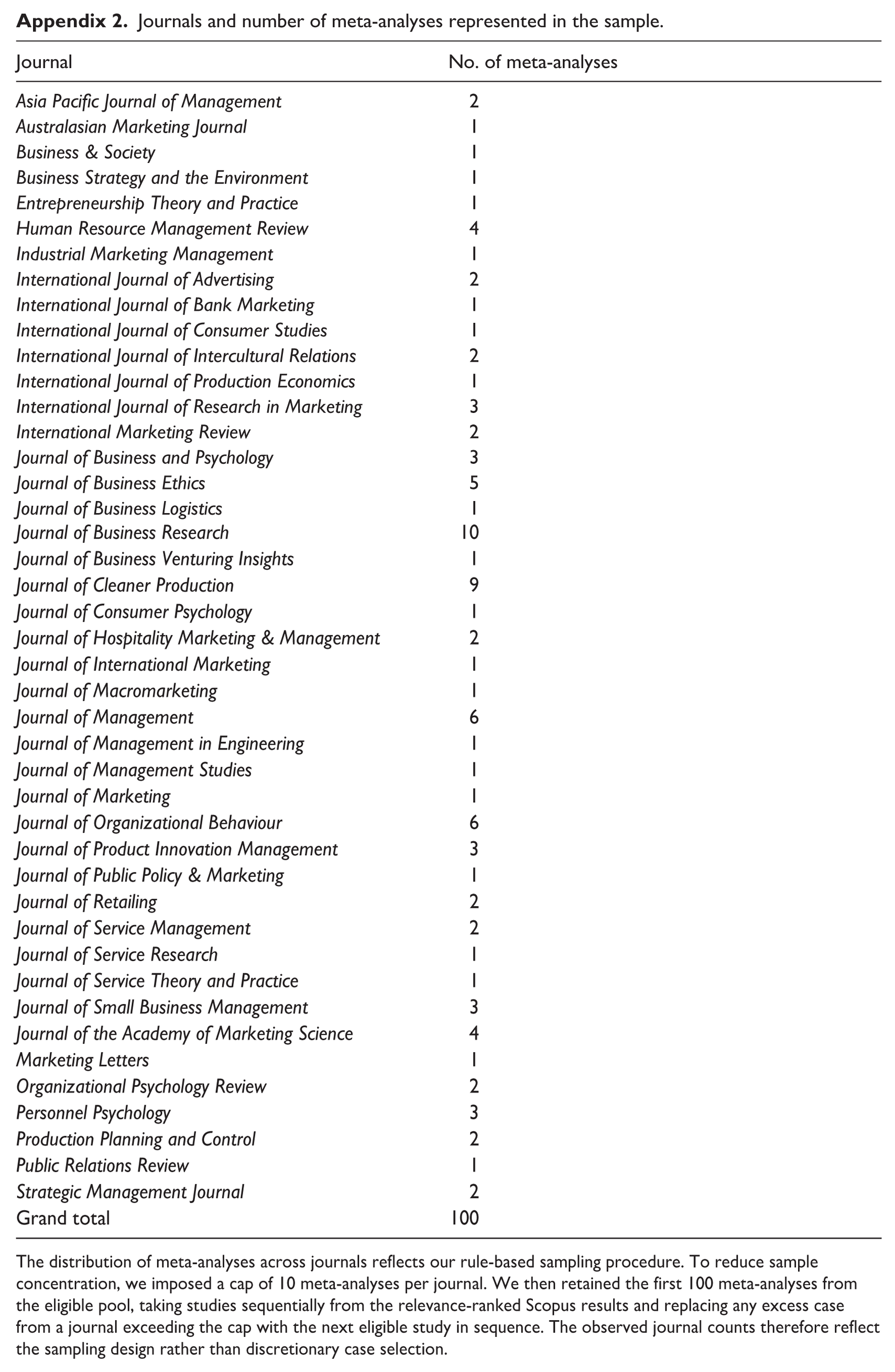

Journals and number of meta-analyses represented in the sample.

| Journal | No. of meta-analyses |
|---|---|
| Asia Pacific Journal of Management | 2 |
| Australasian Marketing Journal | 1 |
| Business & Society | 1 |
| Business Strategy and the Environment | 1 |
| Entrepreneurship Theory and Practice | 1 |
| Human Resource Management Review | 4 |
| Industrial Marketing Management | 1 |
| International Journal of Advertising | 2 |
| International Journal of Bank Marketing | 1 |
| International Journal of Consumer Studies | 1 |
| International Journal of Intercultural Relations | 2 |
| International Journal of Production Economics | 1 |
| International Journal of Research in Marketing | 3 |
| International Marketing Review | 2 |
| Journal of Business and Psychology | 3 |
| Journal of Business Ethics | 5 |
| Journal of Business Logistics | 1 |
| Journal of Business Research | 10 |
| Journal of Business Venturing Insights | 1 |
| Journal of Cleaner Production | 9 |
| Journal of Consumer Psychology | 1 |
| Journal of Hospitality Marketing & Management | 2 |
| Journal of International Marketing | 1 |
| Journal of Macromarketing | 1 |
| Journal of Management | 6 |
| Journal of Management in Engineering | 1 |
| Journal of Management Studies | 1 |
| Journal of Marketing | 1 |
| Journal of Organizational Behavior | 6 |
| Journal of Product Innovation Management | 3 |
| Journal of Public Policy & Marketing | 1 |
| Journal of Retailing | 2 |
| Journal of Service Management | 2 |
| Journal of Service Research | 1 |
| Journal of Service Theory and Practice | 1 |
| Journal of Small Business Management | 3 |
| Journal of the Academy of Marketing Science | 4 |
| Marketing Letters | 1 |
| Organizational Psychology Review | 2 |
| Personnel Psychology | 3 |
| Production Planning & Control | 2 |
| Public Relations Review | 1 |
| Strategic Management Journal | 2 |
| Grand total | 100 |

The distribution of meta-analyses across journals reflects our rule-based sampling procedure. To reduce sample concentration, we imposed a cap of 10 meta-analyses per journal. We then retained the first 100 meta-analyses from the eligible pool, taking studies sequentially from the relevance-ranked Scopus results and replacing any excess case from a journal exceeding the cap with the next eligible study in sequence. The observed journal counts therefore reflect the sampling design rather than discretionary case selection.
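
The capped sequential selection rule can be expressed as a short loop. In this sketch, the record structure (dicts with a `"journal"` key), the function name `capped_sequential_sample`, and the assumption that eligibility screening has already been applied to the relevance-ranked list are all hypothetical. Skipping a record from a journal that has already reached the cap is equivalent to replacing it with the next eligible study in sequence.

```python
from collections import Counter


def capped_sequential_sample(ranked_records, target=100, cap=10):
    """Walk a relevance-ranked list of eligible records and keep them in order
    until `target` records are retained, skipping any record whose journal
    has already contributed `cap` records (hypothetical helper)."""
    counts = Counter()
    sample = []
    for record in ranked_records:
        journal = record["journal"]
        if counts[journal] >= cap:
            continue  # excess case: effectively replaced by the next eligible record
        sample.append(record)
        counts[journal] += 1
        if len(sample) == target:
            break
    return sample


# Toy ranked list: 15 records from one journal, then 5 from another.
ranked = ([{"id": i, "journal": "Journal A"} for i in range(15)]
          + [{"id": 15 + i, "journal": "Journal B"} for i in range(5)])
sample = capped_sequential_sample(ranked, target=12, cap=10)
print(Counter(r["journal"] for r in sample))
```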

Acknowledgements

We thank Editor Tania Bucic for her insightful comments. Any remaining errors are our own.

Final transcript accepted 30 March 2026 by Tania Bucic

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.