Abstract

Information infrastructure plays a critical role in providing the information necessary to achieve compliance with sustainability reporting standards; however, there remains limited understanding of the reciprocal relationship between these two activities. In this study, using a qualitative research methodology, we investigate the nature of adaptation and development of information infrastructure and the variations in the data-sourcing practices firms engage in while complying with different reporting standards. We find that institutional pressures that drive the decision to report can influence the development of new infrastructure and that how the standard addresses data-sourcing practices plays an important role in the relationship between reporting and infrastructure. We further observe that standards focusing on the information needs of external stakeholders may inadvertently decouple reporting from the management of sustainability challenges.

Keywords

1. Introduction

Reporting on sustainability performance is increasingly recognised as essential to the effective evaluation of corporate performance. Reflecting the need for reliability and transparency in reporting, there has been significant progress over recent years in the development of reporting standards, with companies now able to draw upon several voluntary standards (such as the CDP, GRI and SASB) as well as an increasing number of mandatory reporting standards (e.g. European Commission, 2014; NGERs in Australia) to inform the content and structure of their reports (KPMG et al., 2016). Despite the pervasiveness of sustainability reporting standards and substantially more performance information being reported, there is increasing criticism of the quality of information (Boiral et al., 2021; Ding et al., 2023; Haji et al., 2023), of reporting as symbolic or ‘greenwashing’, and of its failure to meet stakeholders’ needs (Balluchi et al., 2021; European Commission, 2021; Khan et al., 2021; Michelon et al., 2015). Significantly, research has questioned whether reporting reflects actual management practices (Cho et al., 2015; Khan et al., 2021; Michelon et al., 2015) or is being used as a marketing or public relations tool (Seele and Gatti, 2017), and whether it assists in managing sustainability challenges (Boiral et al., 2019). In response, there has been a push for new standards that further refine reporting to meet external stakeholders’ information needs (Christensen et al., 2021; IFRS, 2020).

Alternatively, it has been argued that as sustainability reporting evolves, greater emphasis should be placed on internal processes, addressing emerging challenges related to current capabilities, and expanding the information infrastructure necessary to support the demands of reporting standards (Brooks et al., 2012; GRI, 2017; Garcia et al., 2016; Herremans and Nazari, 2016; IIRC, 2018; Kasperson and Johansen, 2016; Troshani and Rowbottom, 2024). To address potential challenges, it is crucial to acknowledge gaps in our understanding of practice, particularly when accounting expands into new domains (Power, 2015) that include identifying relevant data-sources for sustainability reporting, integrating unstructured sustainability data into traditional accounting functions (Kocsis, 2019), and understanding how sustainability reporting regulations influence existing management systems (Haji et al., 2023; McNally and Maroun, 2018; Troshani and Rowbottom, 2024). While there is an emerging literature exploring the relationship between sustainability reporting and information infrastructure, this has not explicitly focused on the relationship between the specific requirements of a reporting standard and the bespoke information infrastructure supporting compliance reporting.

We contribute to this literature by exploring the reciprocal relationship between a sustainability reporting standard and the information infrastructure that supports compliance reporting. We adopted a qualitative methodology, interviewing report preparers within 12 Australian companies to investigate how information infrastructure is impacted in achieving compliance with two divergent standards, the GRI and the NGERs. This enabled us to observe and understand where and how information infrastructure was developed or adapted, where and how reported information was affected by legacy information infrastructure and, importantly, how differences between the standards may affect this relationship. For data analysis and interpretation of our findings, we draw upon Krasikov and Legner’s (2023) framework focusing on data-sourcing practices (sense-making, data collection and reconciliation) ‘which pave the way for reliable and trustworthy sustainability reporting’ (p. 164). Extending prior research, our study provides evidence of the reciprocal relationship between a reporting standard and information infrastructure (Krasikov and Legner, 2023; Senn and Giordano-Spring, 2020; Troshani and Rowbottom, 2024). We observed that information infrastructure is shaped by institutional pressures (Bui and Fowler, 2022) and by how each standard addresses data-sourcing, most critically in the sense-making phase (definitions, metrics and measurement methods), and that such interactions vary across different standards (Krasikov and Legner, 2023).

The remainder of the paper proceeds as follows. Section 2 presents a review of the literature. Section 3 discusses the theoretical anchor. Section 4 explains the research design. Section 5 provides the results and analysis, followed by a summary of findings and conclusions.

2. Literature review

Sustainability reporting, while having a long history, is relatively recent in its demands for more comprehensive and comparable data and information as companies become more reliant upon reporting standards (Senn and Giordano-Spring, 2020). This has motivated the exploration of an organisation’s information infrastructure and its relationship with sustainability reporting (Brooks et al., 2012; GRI, 2017; Garcia et al., 2016; Herremans and Nazari, 2016; IIRC, 2018; Troshani et al., 2022). Information infrastructure comprises information systems, software tools, data repositories, organisational practices and routines (Leonardi, 2011), provides the platform shaping the way organisations collect, store and disseminate sustainability data (Pan et al., 2022), and is essential for accurate and reliable data capture and for consistent and comparable reporting (Garcia et al., 2016; Herremans and Nazari, 2016). However, there have been questions as to the capacity of information infrastructure to support sustainability reporting (EDM Council, 2024; GRI, 2017; IIRC, 2018). Issues such as limited data accessibility and poor data quality (Machado Ribeiro et al., 2022; Melville et al., 2017), data-collection from diverse internal and external data sources (Seethamraju and Frost, 2016), identification of relevant data sources and inclusion of unstructured data (Kocsis, 2019), and insufficient data integration and consolidation (Zampou et al., 2022) are seen as obstacles to improved reporting. These data-related problems are likely to be accentuated if the organisation is required to report against legislated standards and to be externally audited (Krasikov and Legner, 2023).
However, despite a growing awareness of the challenges, intra-organisational factors such as information infrastructure, data-sourcing, use and adaptation of information systems, technologies and tools in sustainability reporting are relatively unexplored (Adams and Frost, 2008; McNally and Maroun, 2018; Senn and Giordano-Spring, 2020; Troshani and Rowbottom, 2024).

Two issues pertinent to understanding the interaction between sustainability reporting and information infrastructure emerge. The first is the reliance upon existing information infrastructure to support sustainability reporting. The materiality of the firm’s impact on sustainability often forms the basis for issues identified for reporting, particularly for ‘inside-out’ reporting standards (such as the GRI). This will encompass material issues that are already the subject of management attention, with established information systems containing robust measurement and reporting processes often tailored for the internal user. Compliance with reporting standards may, in the first instance, seek to source data from existing management systems. As such, legacy systems may shape sustainability reporting with respect to metrics, measurement and the robustness of the reporting data (Kasperson and Johansen, 2016; Troshani and Rowbottom, 2024).

The second issue is how existing information infrastructure is adapted or new infrastructure developed to create ‘new’ performance data to achieve compliance. A key issue in the development of sustainability reporting standards has been the move towards standards equivalent to financial reporting with an emphasis on ‘comparable and consistent reporting’ (IFRS, 2020). This ‘outside-in’ approach focuses more on the external users’ information needs. Prior research has observed that the required data may not be readily available, or that such data may not be collated in a way that is sufficiently consistent or robust to achieve compliance (Troshani et al., 2022). There is an emerging literature exploring the effect financial reporting standards have on accounting information systems and the importance of quality information systems to support reporting, including sustainability reporting (Ionascu et al., 2014; Liu et al., 2018; Nguyen et al., 2021). However, little is known about how sustainability reporting regulation affects internal processes (Haji et al., 2023; Seethamraju and Frost, 2019; Troshani and Rowbottom, 2024). It is argued that the data requirements emanating from the sustainability reporting standard motivate adaptation or the development of new information infrastructure to produce the required data. To address these issues, the following research questions are explored:

3. Theoretical anchor

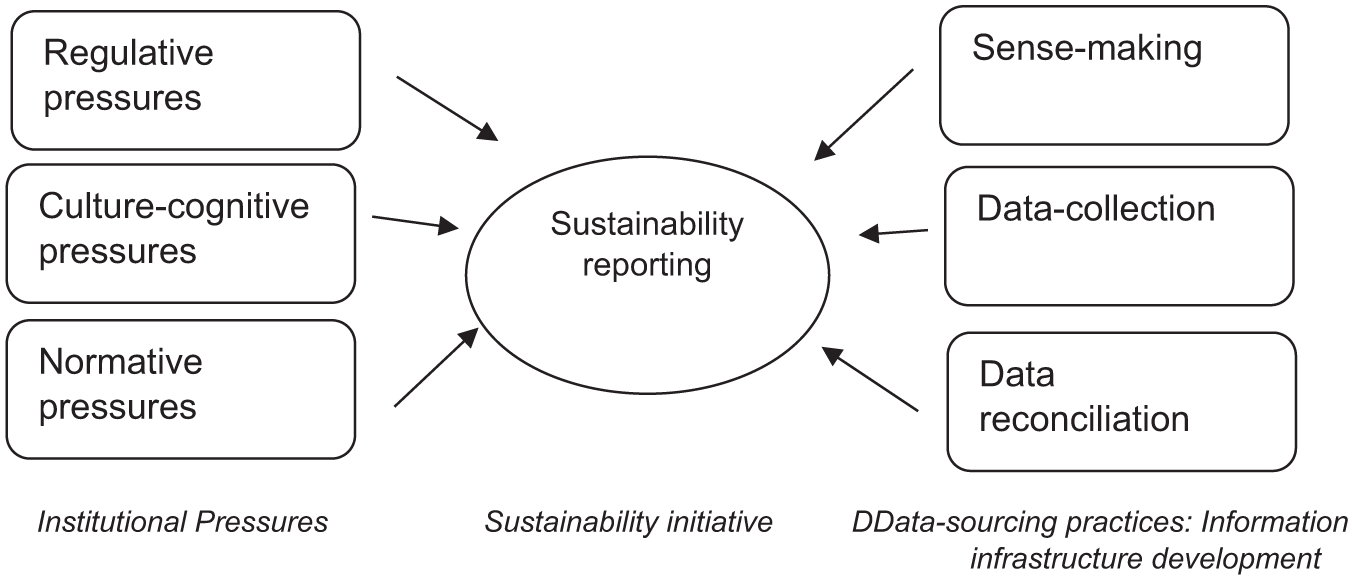

When addressing the relationship between information infrastructure and sustainability reporting, there has been a focus on the technical artefacts (Troshani and Rowbottom, 2024) or the design of systems, with little attention to the data (Machado Ribeiro et al., 2022). To redress this limitation, we draw upon Krasikov and Legner’s (2023) framework (Figure 1), which first draws upon institutional theory to explain the decision to adopt a sustainability initiative (why does the firm report against the standard) and second presents a data-sourcing perspective to explain the organisational response in the development of information infrastructure to support sustainability reporting (how does the firm achieve compliance reporting). Widely used in the management and sustainability literature (Butler, 2011) and in accounting information systems research (Schiavi et al., 2024), institutional theory seeks to explain how organisations are influenced by an environment that comprises regulative, normative and cultural-cognitive pressures and organisational structures, actions, behaviours, processes and rules (Scott, 2013). Although institutional theory has been applied to various organisational initiatives, including sustainable behaviour and sustainability management accounting (Chen and Roberts, 2010), expanding its application to other subfields of accounting is recommended because of its significance to current technological and organisational contexts (Schiavi et al., 2024). The other side of the framework provides a structured means of observing the impact of data-sourcing practices on the development of information infrastructure purposed to support compliance with sustainability reporting.

Figure 1. Institutional pressures and sustainability reporting.

Sustainability reporting is a tangible sustainability initiative, emerging in response to institutional pressures. However, it is the development of the firm’s underlying information infrastructure, shaped by the data-sourcing practices, where we may observe changes in practice.

4. Research design

4.1. Study context and focus

Our study explores the reciprocal relationship between information infrastructure and sustainability reporting standards using a qualitative research methodology. As both researchers are based in Australia and funded by an Australian university, we targeted large Australian firms to understand how they have responded to two reporting standards with differing requirements for implementation and compliance. The two standards are the GRI, the most widely adopted voluntary reporting framework both globally and in Australia (KPMG, 2020), and the NGERs (the National Greenhouse and Energy Reporting Scheme), the Australian government’s mandatory standard for organisations that reach certain thresholds on carbon emissions.

The GRI provides guidelines on a range of topics including energy use, workplace diversity, anti-corruption and human rights, while not requiring standardised data, performance definitions or measurement. It leaves to the firm the identification of material topics, the choice of identification method, the determination of boundaries and the breakdown of the data, and provides a series of issue-specific standards (for example GRI302: Energy) to help firms ‘prepare a sustainability report that is in accordance with the standard’ (GRI, 2016: 3).

The NGER Scheme was established in 2007 to create ‘a single national framework for reporting and disseminating company information about greenhouse gas emissions, energy production, energy consumption’ (CER, 2021b) and is administered by the Australian government’s Clean Energy Regulator (CER). The NGERs, through specified thresholds, identifies compliant reporting entities, prescribes definitions as to the types of emissions (fuel, and production and consumption of energy), and provides measurement guidelines and a standardised online reporting template (via the Emissions and Energy Reporting System (EERS)) to enable creation of a national set of accounts with respect to greenhouse gas emissions and energy usage (CER, 2021c). Auditability is a key requirement with respect to Scope 2 emissions (CER, 2021a), requiring data capture at the point of purchase. This, together with standardised guidelines for converting data to CO2 equivalents and the requirement to comply with the data management processes, allows little latitude with respect to data capture and collation.

These two standards represent different forms of sustainability reporting (Rowbottom, 2023), presenting different challenges to achieve compliance. The next section explains the method.

4.2. Method

Given the form of research questions, a qualitative approach using multiple case study organisations (Yin, 2009) was adopted. As the systems and practices adopted are temporal requiring the researcher to uncover the phenomenon in specific organisations, our approach enables an understanding of the informants’ experiences and perceptions (O’Dwyer et al., 2005) within the sustainability reporting process.

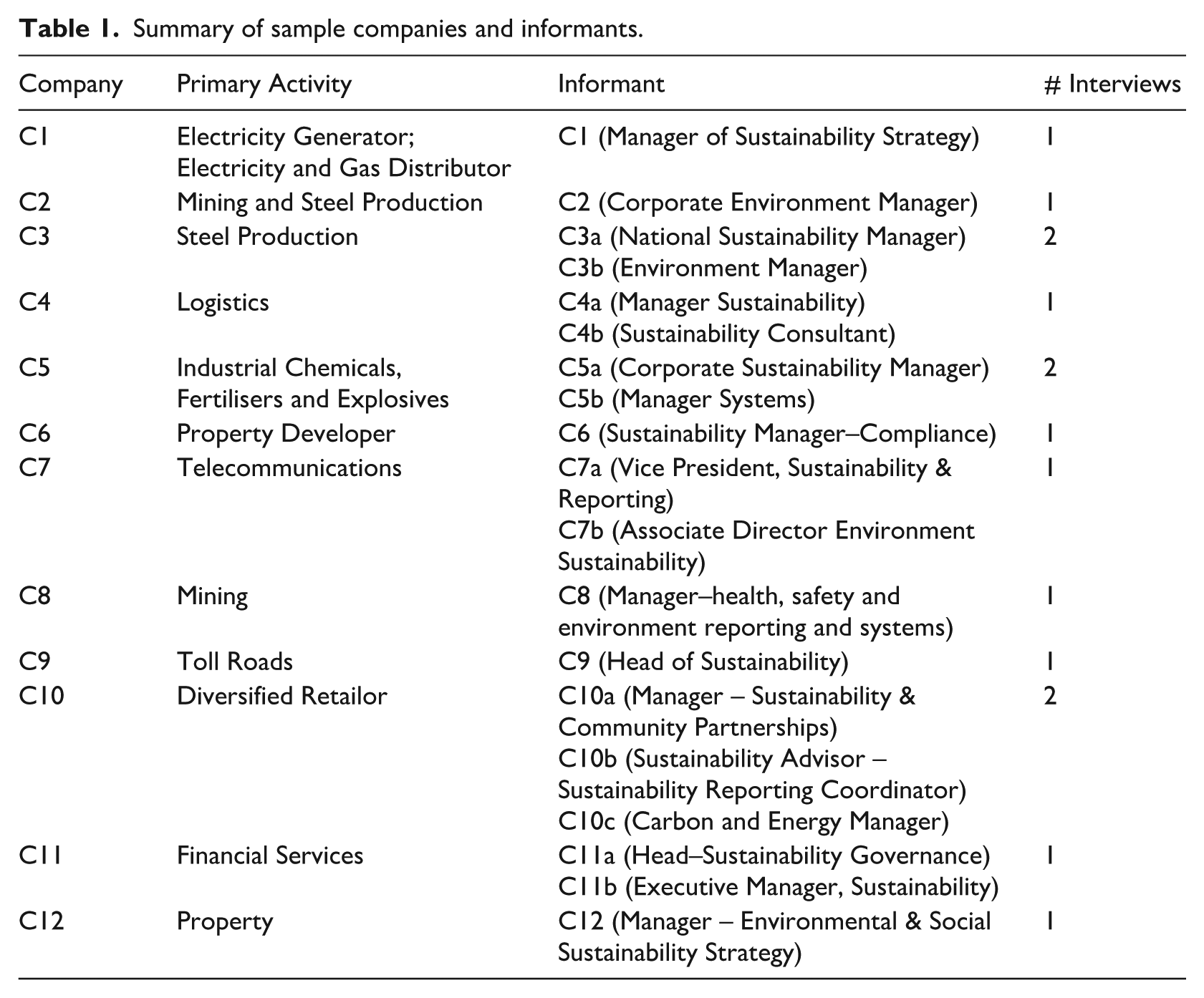

Using a theoretical, non-random sampling approach, case study organisations were chosen for their history of publishing GRI reports as well as the mandated report under NGERs, and thus for their ability to provide empirical evidence on the issues the study is exploring (Eisenhardt and Graebner, 2007). Identified companies were contacted with a request to interview individuals responsible for the preparation of the sustainability report and/or NGERs compliance report. For some companies these roles overlapped; in other companies there were degrees of separation between the roles. Participants are responsible for sustainability reporting, including the ongoing data-sourcing practices essential to support it, and can therefore provide insights into the information infrastructure deployed to support sustainability reporting. In addition, they are expected to have a perspective on why the company may continue to comply with reporting standards and on the tension that may arise from this commitment. The final sample comprised 12 Australian companies and 19 informants; the informants’ positions and the companies’ industries are presented in Table 1. Interviews ranged from 40 to 90 minutes (one hour on average) and were conducted during the period 2018 to 2020.

Table 1. Summary of sample companies and informants.

An interview protocol consisting of a semi-structured interview guide, participant information statement, letter inviting participation and consent form, approved by the ethics committee at the university with which the authors were affiliated, was used in the study. Using this interview guide, two pilot interviews were conducted to test the process and structure (Yin, 2009), and the interview guide was refined (see Appendix). Interviews were recorded with prior permission and transcribed; verbatim transcripts were sent to individual respondents for corrections and validation and then anonymised for analysis. Using transcripts and notes of observations made during the interviews, a chain of evidence was established, thereby validating the data. Interview questions were loosely structured, allowing flexibility in responding, and broadly covered themes aligned with the three data-sourcing practices, starting with the background and role of the informants to understand the context and their perspective. The first theme aimed to elicit informants’ perceptions and beliefs on sustainability reporting against standards in the firm. The second theme focused on the reporting processes, including data-sourcing and collection, challenges and the information infrastructure employed. The third theme aimed at understanding the way information is processed and reconciled and reports are compiled and submitted. These were then further elaborated upon to ascertain the informants’ perceptions of the relationship with, and impact on, other management practices and overall sustainability performance.

Data were analysed using qualitative procedures (Huberman et al., 2014) that started from the interview itself and consisted of an iterative cycle of interview-analyse-refine-interview (Miles and Huberman, 1994). After every interview, the authors held a debriefing and exchanged notes, allowing them to identify themes, contrast perceptions in responses and compare with later interviews. The transcribed data were then coded independently by the researchers using NVivo software and the themes identified. The themes were further developed by condensing and conceptually grouping identified patterns (Myers and Newman, 2007). Findings were derived by triangulating the patterns with the interview data iteratively until a coherent understanding of the phenomenon was developed, while maintaining a logical chain of evidence (Yin, 2009). One informant from a case study organisation, one independent researcher and a colleague who did not participate in the study reviewed the analysis and a summary of findings, and their feedback was incorporated. Quotes are presented in the results section, wherever appropriate, to signify richness of data (Yin, 2009), offer credibility and transferability (Guba and Lincoln, 1994), and provide context to demonstrate how findings have arisen from the data (Paton, 2002).

5. Results and analysis

Sample selection ensured that all case study organisations are legislated to comply with the NGERs and have also chosen to report against the GRI. Significantly, we also observed that the case companies had signed up to additional reporting standards, each with their own data requirements and targeted stakeholders, often with overlapping information requirements. For example, C5 report against NGERs, NPI (National Pollutant Inventory), IFA (International Fertilisers Association), DJSI (Dow Jones Sustainability Index), CDP and the GRI. Analysis of interview data and findings are presented with reference to the institutional environment and the data-sourcing practices – sense-making, data-collection and data-reconciliation.

5.1. The GRI

5.1.1. Institutional environment

Adoption of the GRI is a response to social responsibility (normative pressures) and to follow ‘orthodoxy in its organisational field’ (cultural-cognitive pressures) (Higgins and Larrinaga, 2014). The GRI standards enable a company to report on what it believes are its most significant impacts within any jurisdiction and can extend beyond existing regulatory reporting requirements (GRI, 2024). Participants, who saw their role as achieving compliance, did not seem to delineate between regulated and voluntary reporting when seeking to understand the scope of sustainability reporting. Reporting against a ‘portfolio’ of sustainability standards resulted in a varying commitment to the GRI, with some respondents expressing a degree of cynicism towards standardised reporting and questioning the value of reporting in improving sustainability performance. This was reinforced by a concern that the resources committed to enterprise-wide reporting were distracting from stakeholder engagement and management at the operational level (Kasperson and Johansen, 2016; Kazemian et al., 2022). The following quote by C8 exemplifies this view:

I get concerned about the amount of time and complexity . . . we’ll put this extra effort into reporting and that’ll help manage things? No. What it means is you take the people away from managing it and you get them to spend their time reporting. We report a lot of it. We report to it a pretty good high quality. (C8)

This view, while not sufficient to challenge the company’s ongoing commitment to the reporting standard, may influence ongoing data-sourcing practices and subsequently the quality of reporting (McNally and Maroun, 2018):

When we produced our first GRI standalone report, that was done to the GRI standard. And then I came in and started producing them, I questioned the value of getting the external GRI check. So I dropped the GRI check, and I didn’t receive a single comment or query from anyone. (C3a)

. . . One of the key drivers for continuing to produce this report is reputation. So we’re not out to be a leader in this area, but we also don’t want be actively lacking. So there’s certainly a corporate image component of it. (C3a)

As elaborated by the respondent from C3, there was a waning of interest in the GRI (cultural-cognitive pressures) with a subsequent winding back of the quality of reporting, with no observable consequence to the company. The firm continued to produce these reports mainly because of corporate image and reputation (normative pressures). Thus, peer pressure and corporate image, factors of cultural-cognitive and normative pressures, respectively, may have driven the initial adoption of the GRI rather than any sustainability performance objectives (Kasperson and Johansen, 2016; McNally and Maroun, 2018). What we did observe, with respect to the voluntary reporting standard, was a potential waning of the power of institutional pressures. Although this is not reflected in overall compliance with the standard, it is observed in the underlying practices in and around the data-sourcing essential to supporting sustainability reporting (Bui and Fowler, 2022). The variation in data-sourcing practices that support compliance with the GRI observed in our study is explained below.

5.1.2. Sense-making

The GRI does not require standardised measurement or metrics, but instead allows companies to identify relevant issues and associated data management methods. The initial emphasis is on stakeholder engagement and inclusiveness, captured through a materiality analysis, where relevant stakeholders are connected with issues of significance. Through this process the company identifies material topics for reporting purposes (GRI, 2016; see also Kasperson and Johansen, 2016). For many of the case companies this was an important element in the sense-making process:

We then do the GRI’s materiality determination which the (Big4 Audit firm) have assured in the past to say that we meet it. Using that and what our risk department gives us . . . We marry in what we get back from each group, and we do a materiality determination, identify the top six to ten. We can determine where they have issues that are the same, and then rank them accordingly, . . . and that’s all signed off by the sustainability committee, then the executive leadership team, and then the board. (C4a)

. . . a structured materiality process . . . I don’t want it to be a process that exists solely for the purpose of reporting. I’d rather they did a slightly different version of it which is useful for their internal strategy development that we then fit in with our reporting– otherwise, I think it’s a cart pulling a horse. (C10b)

The flexibility of the GRI led to varied interpretations during the sense-making process. A key tension arises between using data for operational management and using it to populate an external report. Although report preparers aimed to add value through the process, this flexibility prevented a single, shared approach to sense-making from being established across the case organisations.

5.1.3. Data-collection

While each company had an established history of GRI compliance, we observed considerable divergence in the nature and quality of infrastructure supporting the data collection process. In this context, three broad but related challenges emerged from the data: systems ownership, data silos, and cost considerations.

The first challenge was that report preparers did not have ownership of the systems and data collection process at the operational level. There were exceptions where preparers also held responsibility at the corporate level for performance; however, this often did not extend across the full range of sustainability issues reported. Below are experiences from C7 and C9:

Our source systems, multiple source systems . . . many are legacy systems that means they report to what and how the business uses them historically. Whereas what we need to report and in the form the external stakeholders want, it’s sometimes quite different. We’ve had to factor in the practicality of sourced data and how the business can generate the reports. (C7a)

There’s not really a centralised reporting system. It has been fairly ad hoc. There are sets of data which are held with our facilities group and certain other data held by HR . . . we pull all the data together as a sustainability team. But the data are held and managed by different parts of the business. (C9)

A tension between data requirements for reporting and ownership of systems that capture data for supporting current management practices emerges. While the tension was not resolved, the need to report against the GRI alone was not deemed sufficient justification to reorientate data collection to support the diverging needs of reporting.

A second challenge was the diversity of systems and data silos purposed to support operational management, accessible only via the responsible manager and with limited connectivity and integration. This can in part be explained by issues emerging from the sense-making process: recognition that different norms of performance exist and that material issues are not consistently defined across business units. Issues of prominence were more likely to be supported by mature systems, whereas an issue with less relevance was often not supported by a formal system. Experiences from C4 and C7 are highlighted below:

. . . we still have a very disparate system because sustainability encompasses different areas, . . . HR, community, environmental and governance information that relates to the business . . . we rely on a few different systems. We’ve got our own finance system. HR was recently upgraded to a new system, but it’s not yet global. (C4b)

. . . some systems have more maturity . . . something like WHS systems, the platforms put in place by our HR colleagues captures a lot of data. WHS – I worry less, because even without a central system, we already get a lot of sourced data and calculated data. But other areas, . . . it’s a whole myriad of different platforms. Network has one way stream, IT device group has another, they’re all different combinations. Some are actual systems, some spreadsheets . . . some manual to some automated, and some semi-automated. (C7a)

Where sense-making did not provide unified criteria for recognising and reporting data, data collection often drew upon information systems that were unconnected and provided incompatible data. To resolve the challenges arising from this divergence of data, either the development of an enterprise-wide reporting system or data reconciliation was considered necessary.

Cost considerations presented a third challenge, constraining the development of enterprise-wide reporting systems. Below is C4’s experience with a bespoke ‘global’ environment reporting system:

We looked at environmental systems in the past – It was all in different systems. At the same time, our safety management area was upgrading to a new system. We ended up going along with their system. We were presented with a few different environmental options. There were some that were better, but because safety was using a particular one and it could include our environmental data, we went with that one. The idea is that it’s global. However, it doesn’t work well, or even at all. It’s not optimum and it wasn’t optimum when we looked at it. (C4b)

C4 now have an enterprise-wide ‘environmental reporting system’ that is an extension of the health and safety system. However, it was acknowledged that this was a suboptimal choice, driven by resource availability and convenience rather than fitness for purpose. This is not a reflection on C4’s commitment to resourcing information infrastructure that supports performance management; rather, the commitment to enterprise-level reporting was not sufficient justification to develop bespoke information infrastructure. To overcome the limitations of sense-making, organisations then often had to rely upon data reconciliation processes.

5.1.4. Data reconciliation

For the GRI, data are sourced from divergent components of information infrastructure, ranging from manual processes to systems and software tools, that generate data at different times in the year and in different formats. Three challenges were observed.

The first challenge was recognising the variability of the data sources, reflecting the diversity of practices endemic in a large organisation with diverse operations:

So our preference is that the things that we report are numbers that we’re actually using and our reflecting of what we are doing, our interactions. (C11b)

. . . there are different norms in different industries as to how you calculate that data. So to some extent, there will always be – we’ll never have perfect consistency across the group but we take a pragmatic approach to that. (C10b)

As there had been no prior conversation as to the nature of the required data, such as definitions, metrics or methods of measurement, there had been no prior effort to standardise data for the purpose of reporting. Hence the data collected, while representative of operational performance, now required manipulation.

The second challenge was that report preparers often had to engage in data manipulation to represent the whole of the organisation in a meaningful way. As pointed out by respondents C4a and C10b below, manipulation of the information was required to reconcile the variations and differences in data delivered from divergent systems:

The next question is how do we calculate it and there have been times in the past, where something like male to female salary ratios has never been reported and that involved entire manual calculation. So it required going out to every individual country, getting their salary spend, number of employees, exchange rates and manually calculating it in an excel spreadsheet. (C4a)

We have some issues with numbers coming up differently. The number of employees, for example, entered into Envizi for safety calculation purposes do not match the number of employees that the divisions are talking about when they announce their full year results. So, we are trying to have one source of data for each type so that we don’t have reconciliation issues. At present, yes we have reconciliation issues. (C10b)

The third challenge was aggregating data presented in a multitude of formats and drawn from divergent systems and technologies.

HR has their own data collection on their statistics and finance have their own data collection. And then, community have just a spreadsheet. (C5a) So we have actually quite a lot of systems that we collect data from . . . we kind of almost own the environment and some of community data. . . . all the other information that we report basically comes from different parts of the business, they all have their own systems and we have to adapt to those processes and ways of capturing data. It means manually collecting the data and putting it together in a spreadsheet because our sustainability reporting is global. (C4b)

While sense-making may respect the underlying norms of performance across the organisation, it may require data manipulation to meet the requirements of the GRI so that stakeholders at a distance can make informed decisions. However, the validity of the reported information could be questioned as it may not be supported by clear and consistent definitions or an understanding of the organisation’s boundaries or indeed reflect exposure of all controlled operations. Consequently, the data that exists in a particular format suitable for reporting may not be useful for operational purposes and may only exist for the purpose of external reporting.

With regard to the GRI, there was little evidence of a desire to go beyond the minimum requirements, primarily due to a diluted commitment to a portfolio of sustainability reporting standards, lack of a value proposition, and the pressure to be indistinguishable from peers and/or to mimic other corporate actors. Information infrastructure to support data-sourcing practices is adopted at the companies’ discretion based on firm-specific circumstances. Confirming prior findings by Boiral et al. (2019), our study observed a disconnect between information reported and sustainability challenges and found the disclosures to be selective and inconsistent. The existence of the report and the firm’s GRI status, rather than content with context and relevance, are the primary motivations to continue reporting. Limited commitment of those responsible and lack of resources resulted in the information infrastructure remaining underdeveloped (Troshani and Rowbottom, 2024).

5.2. NGERs

5.2.1. Institutional environment

NGERs was adopted due to the coercive pressures of the underlying regulation, ongoing compliance monitoring and fear of penalties for non-compliance (CER, 2021a; Choi et al., 2021; Stubbs et al., 2013). While there are cultural-cognitive and normative pressures to report greenhouse gas emissions and energy usage more broadly (indeed other voluntary reporting standards overlap on these issues) (Frost et al., 2023), compliance with NGERs is designed solely to meet the regulated needs of the CER (2021a).

5.2.2. Sense-making

NGERs is prescriptive with regard to types of emissions, definitions and measurement guidelines, and provides a standardised online reporting template (CER, 2021b). Sense-making experiences from C1 and C5 are highlighted below:

NGERs there’s all different criteria . . . There’s criteria for being measured by invoices, there’s criteria for particular grades of meters, there’s criteria for estimates and it’s all very clear when you’re allowed to use a particular criteria and then you actually have to specify when you’re reporting that information what criteria you have used . . . it’s quite clear what you have to use. (C1)

. . . with NGERs there’s quite strict rules on what does and doesn’t pass . . . plenty of documents and there’s plenty of process . . . we have manuals from a corporate level, we also have facility level manuals and procedures which explain exactly what needs to happen. So any other competent person could pick it up and understand what’s done. (C1)

The NGER legislation asks that the energy you report be auditable back to supplier invoices. So where there is an invoice, you have to use the invoice amount of kWh. At sites where there’s no invoice, where we’ve burned gas to make kilowatt hours . . . then they read the meter to obtain the kWh. (C5a)

Thus, the prescriptive nature of NGERs, regulative pressure and the fear of penalties for non-compliance constrained sense-making conversations. Consequently, there was recognition in the sense-making stage that data created for other purposes were not fit for purpose for NGERs compliance:

The PRS (production reporting system) at large manufacturing sites gives a real time ratio of how much gas is being used to make each tonne of ammonia. It uses meters throughout the plant. But these are site based, so I do not usually use them. They also do not use a calendar month in PRS, so the PRS site systems cannot be used for NGER reporting. (C5a)

This has consequences for the data-collection phase, as discussed in the next section.
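The sourcing rule that C5a describes, together with C1's point that the criterion used must be declared, can be sketched as follows. This is an illustration of the respondents' description only. It is not a statement of the NGER legislation or of any participant's system, and the record structure, function name and criterion labels are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional, Tuple


@dataclass
class SiteEnergyRecord:
    """Energy figures available for one site and period (hypothetical)."""
    site: str
    invoice_kwh: Optional[float] = None  # from a supplier invoice, if one exists
    meter_kwh: Optional[float] = None    # from an on-site meter reading


def select_reportable_kwh(record: SiteEnergyRecord) -> Tuple[float, str]:
    """Pick the figure to report and name the criterion that was applied.

    Assumed rule, following C5a: an invoice figure takes precedence over a
    meter reading; the meter is used only where no invoice exists.
    """
    if record.invoice_kwh is not None:
        return record.invoice_kwh, "invoice"
    if record.meter_kwh is not None:
        return record.meter_kwh, "meter"
    raise ValueError(f"no auditable energy figure for site {record.site!r}")
```

Under this sketch a site holding both an invoice and a meter reading reports the invoice figure, which mirrors C6's later remark that reporting is "all off invoice data and not off metering data". The operational meter reading is set aside even where it exists.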

5.2.3. Data-collection

Although the regulative pressure provided focus for sense-making, it did not always overcome existing data silos or have an impact on other management information systems. For example, the reliance upon invoice data for NGERs reporting would suggest a logical link with sourcing data from the financial systems:

I think where one of the flaws might be is that people who own SAP tend to be those people in the financial areas. And they don’t have much of a vested interest in getting this data. . . . we do have other ways we can get the data, which are relatively simple for us. . . . what we can do through SAP is clarify who’s provided diesel. So, you can run a report and 90 percent of our diesel comes from one vendor . . . they provide us a monthly report which the hydrocarbons team validate. And then that report has all of the information that we need. (C8)

The supplier now provides an invoice for accounting purposes and a second report with data to enable NGERs compliance reporting. In effect, NGERs reporting for C8 was decoupled from the accounting system even though emissions data could be sourced from existing management systems. Following is a summary of C6’s experiences:

So initially on a very high percentage of the buildings, they’re all metered . . . sort of 80% and 90%. So they’re all electronic and they all get fed into a–metering dynamics and all organisational metering dynamic. Everything is then cross checked via invoicing. So when we report for our NGERS, it’s basically all off invoice data and not off metering data. (C6)

The regulative pressure to report ‘reliable’ data for NGERs justified the development of new information systems and elevated the report preparer’s status to owner of these systems. For C10, NGERs compliance fast-tracked the rollout of information systems:

. . . generally our system for NGERS was set up after NGERS had actually started, so, we actually built it to meet the requirements rather than the other way around. (C10b)

While some organisations developed and/or extended existing systems to manage energy use and other relevant sustainability data, other organisations have outsourced data-collection. For example, C9 outsourced data collation and reliability testing on energy usage and costs, an arrangement unique to energy, partially due to cost, but also the need for compliance reporting.

5.2.4. Data-reconciliation

Compliance with NGERs created an additional challenge for data-reconciliation, not for compliance but in repurposing the data for other stakeholders. This became relevant when reconciling data across boundaries, between different organisational levels and with outsourced systems and data. For C9, outsourcing has consequences for operational management:

I suspect that we will probably be heading towards establishing our own system, so we’ve got greater visibility. Because we can’t say what the trends are. I don’t think we’ve also got sufficient metering across our assets. So we can’t see, for example, when there’s a spike in energy consumption and investigate what’s going on. (C9)

The data are now fit for purpose with respect to compliance reporting but provide little insight for operational management and therefore fail to support managers’ ability to analyse performance.

Where NGERs provided a catalyst for the development of information infrastructure, development often did not extend beyond the boundaries of NGERs. Because of its specified requirements, NGERs data existed as an anomaly within the data collated for sustainability reporting:

. . . that doesn’t cover us, for instance, for the operations in the US. So we’d be sourcing the data directly from those facilities teams in the US. (C9)

For C5, NGERs shaped how data within Australian operations are managed and influenced boundaries for auxiliary reporting:

So we don’t do any Scope 3 data. We only do what’s under our operational control, so Scope 1 and Scope 2, which is electricity. No Scope 3 yet. And again, the reason why; the legislation has demanded Scope 1 and 2. (C5a)

While some companies reported Scope 3 (air kilometres, for example), Scope 3 data were recorded only where there was an operating imperative, and even then these data were not necessarily included within the sustainability report. C9, for example, operates toll roads and monitors emissions from vehicles in tunnels as part of its operating agreement with the State Government. These emissions are not reported under NGERs. Technology is deployed to monitor and disperse the emissions, and it is the energy used in these functions that is defined as Scope 2 and reported under NGERs.

The primary data source utilised for management is therefore sidelined for the purpose of NGERs reporting, a choice justified by the ‘accuracy’ of the data produced. Interestingly, where technology was already deployed for improved management, there was no discussion of replacing it with the data sources supporting NGERs. The emphasis is thus on improved reporting rather than improved performance.

6. Findings and conclusion

To complement and extend research on sustainability reporting, we have examined the relationship between information infrastructure and sustainability reporting and their reciprocal effects. By investigating data-sourcing practices, our research extends prior research, which has primarily focused on the technology (Krasikov and Legner, 2023), and addresses the need for research on the internal processes and practices that support sustainability reporting, as advocated by researchers and professionals (EDM Council, 2024; Haji et al., 2023; Machado Ribeiro et al., 2022). By applying institutional theory to new accounting fields (Schiavi et al., 2024) such as sustainability reporting and its supporting information infrastructure, our study contributes to advancing institutional theory. Our findings contribute to an understanding of the institutional pressures in relation to data-sourcing practices for sustainability reporting and the development of effective information infrastructure. Our study also contributes to the literature by explaining the nature of adaptation of information infrastructure and the variations in the data-sourcing practices firms engage in while complying with different reporting standards.

Addressing the first research question, we observed that while there was sufficient institutional pressure to adopt the GRI, this often did not subsequently provide sufficient incentives to develop bespoke information infrastructure to support reporting. Extending prior research (Kasperson and Johansen, 2016; Troshani and Rowbottom, 2024), our study found sustainability reporting to be reliant upon the legacy information infrastructure originally designed to support operational management. Although it does not address data sourcing, the GRI gives preference to reporting that reflects internal processes, with the intent of providing transparency of practice. Consequently, we observed that enterprise-wide reporting required conversion of metrics, capturing of new data to fill gaps, manual intervention to connect data, ‘aggregation’ of divergent data and manipulation into a standard format and structure. We observed limited interconnection of sustainability reporting with sustainability performance (McNally et al., 2017) and operations (O’Dwyer, 2011), with the aggregated data frequently not fully reflecting the multiplicity of sustainability performance and management practices within the organisation (Kasperson and Johansen, 2016).

In addressing the second research question of how sustainability reporting standards affect information infrastructure, we observed the development and adaptation of information infrastructure to address the information needs of legislated reporting, consistent with prior research (Adams and Frost, 2008; McNally and Maroun, 2018; Nguyen et al., 2021; Troshani and Rowbottom, 2024). We did not, however, observe such development for voluntary reporting in our study. The institutional environment, specifically regulatory pressure for reporting (e.g. carbon emissions) or for improved performance (such as risk management or workplace health and safety), proved to be instrumental in providing the impetus for the development of bespoke information infrastructure built upon standardised data-sourcing practices enabled by technology. Consistent with previous research (Kasperson and Johansen, 2016; Troshani and Rowbottom, 2024), we observed that the boundaries of reporting were determined by the regulation and that the information infrastructure, when needed, was developed and extended to capture the required data because of the compliance requirements and associated penalties. In addition, where the standard is explicit in the definition, metrics, measurement method and final presentation of this information to an external stakeholder (in the sense-making phase of data sourcing), we observed development of information infrastructure for data-capture, collection and reconciliation at the operational level (Kasperson and Johansen, 2016; Senn and Giordano-Spring, 2020). This has enabled uniform data-collection and minimised the need for data-reconciliation at the entity level. Further research is necessary to investigate whether this relationship can be observed where the reporting standard prescribes sense-making but is not legislated (e.g. CDP).

A key implication for standard setters is that the combination of design and regulation can provide the impetus for companies to address challenges related to the development of information infrastructure critical to improving the quality of reporting. Regulatory pressure creates an institutional environment which can overcome internal barriers to the development of information infrastructure (Krasikov and Legner, 2023) such as availability of resources (Troshani and Rowbottom, 2024) and data characteristics (Senn and Giordano-Spring, 2020). Conversely, the GRI illustrates how flexibility during the sense-making phase resulted in challenges in the data-reconciliation phase. Lacking guidance from the standard on information infrastructure, report preparers often had to rely on prevailing siloed information systems and databases, and incompatible technologies, which frequently resulted in gaps and inconsistencies in the reported performance data. Where the characteristics of data are not explicitly addressed, aggregated performance data are not considered representative of the multiplicity of performance information used to inform management practices, even though they were constructed to represent corporate-level ‘comparable and consistent reporting’.

A second key implication is the potential for unintended consequences where the requirements of the legislated standard usurped information infrastructure development. In some organisations, this occurred without consideration of existing information infrastructure, and the resulting systems at times operated independently of, or in parallel to, management systems purposed for performance management. Systems supporting NGERs, we found, have become disconnected from and unsupportive of the company’s focus on operational performance and appear to be distracting from core performance issues (for example reporting on energy used to monitor emissions, but not including data from the monitored emissions). However, with an emphasis (and penalties) focused on reporting, there were limited incentives to link the reporting systems more effectively with performance management. This presents a challenge to standard setters: to develop standards that not only provide meaningful information to external users but also provide data that serve the needs of internal users and reflect sustainability performance management (Vigneau et al., 2015).

A key limitation is the focus on two reporting standards, which were purposely selected to represent the disparity in the compliance requirements of current standards. While this enabled us to highlight the differences in the way information infrastructure is developed, our understanding would be enhanced by extending the scope to include other standards and other countries where the institutional environment varies. Further research is needed on the impact of operational complexities, disparity of data and the development of relevant taxonomies (Rowbottom, 2023) in more complex environments on data-sourcing and management practices, particularly where sustainability data are to be provided to both external and internal stakeholders. Another limitation is that we did not consider the possible dynamic nature of the stakeholders’ information demands (Bui et al., 2024). Standardised external reporting tends to be static by design, whereas stakeholders often operate in more dynamic environments. Future research with in-depth case studies and surveys could deepen our understanding of the tensions between stakeholders and explore the implications for information infrastructure in increasingly dynamic settings (Bui and Fowler, 2022; Bui et al., 2024).

Finally, the information infrastructure supporting enterprise-wide reporting is not necessarily connected with performance management, and there is limited evidence that standards focusing on the needs of external stakeholders will drive an improved understanding of performance at the operational level. The pursuit of a ‘compliant’ report purposed for an external audience may inadvertently result in new data ‘silos’, as reporting systems, built on imposed singular ‘sense-making’, could become decoupled from operational management and performance improvement. Given the potential decoupling of reporting from performance management and the disparity of the systems supporting reporting, should users of these reports be confident in the reporting they observe? The transparency on display is not necessarily reflective of management practice and is often created to serve an anecdotal perception of external ‘stakeholder’ information needs, with the sustainability performance improvement of the organisations largely ignored. Indeed, the resistance by some of the informants to such reporting suggests the need for further exploration of the nature and purpose of sustainability reporting, as reporting standards seek to deliver standardised performance data to external stakeholders rather than reflect the complexity and diversity of sustainability performance management. It is little wonder that there continue to be calls for improved reporting.

Key practical and research implications

The study explores the reciprocal relationship between sustainability reporting standards and information infrastructure, and uncovers how reported information is affected by legacy information infrastructure and how differences between standards may affect this relationship.

Report preparers and management must explicitly address the characteristics of data in the adaptation and development of information infrastructure so that it can produce aggregate performance data that are representative of the multiplicity of performance information and useful for performance management.

Researchers and external users should consider the nature of the standard and its potential connection with information infrastructure, including data-sourcing practices, when using and evaluating the quality of sustainability reporting.

Standard setters and external users should be aware that voluntary standards that do not explicitly address the definition and measurement of data typically lead to inadequate development of information infrastructure and to manipulated data that are not necessarily representative of the data used to manage operations.

Management must recognise that the information infrastructure that supports enterprise-wide reporting is generally not connected with sustainability performance management and the focus of standards on external stakeholders alone may not drive improved understanding and management of sustainability performance.

Standard setters, when developing standards, must take into account the needs of internal users in addition to those of external users to help firms manage sustainability performance.

Acknowledgements

The authors would like to thank the research participants for generously sharing their perceptions, views and knowledge and the reviewers for their constructive and insightful comments.

Final transcript accepted 16 February 2025 by Andrew Jackson (Editor-in-Chief).

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.