Abstract

COVID-19 poses an infectious risk to healthcare workers, especially during airway management. We compared the impact of early versus late intubation on infection control and performance in a randomised in situ simulation, using fluorescent powder as a surrogate for contamination. Twenty anaesthetists and intensivists intubated a simulated patient with COVID-19. The primary outcome was the degree of contamination. The secondary outcomes included the use of bag-valve-mask ventilation, the incidence of manikin cough, intubation time, first attempt success and heart rate variability as a measure of stress. The contamination score was significantly higher in the late intubation group (mean (standard deviation, SD) 17.20 (6.17), 95% confidence interval (CI) 12.80 to 21.62) than in the early intubation group (mean (SD) 9.90 (5.13), 95% CI 6.23 to 13.57; P = 0.005). The contamination score was higher after simulated cough occurrence (mean (SD) 18.0 (5.09) versus 5.50 (2.10) in those without cough; P < 0.001), and when first attempt laryngoscopy failed (mean (SD) 17.1 (6.41) versus 11.6 (6.20); P = 0.038). The incidence of bag-valve-mask ventilation was higher in the late intubation group (80% versus 30%; P = 0.035). There was no significant difference in intubation time, incidence of failed first attempt laryngoscopy or heart rate variability between the two groups. Late intubation in patients with COVID-19 may be associated with greater laryngoscopist contamination and more potential aerosol-generating events compared with early intubation. There was no difference in performance as measured by intubation time and incidence of first attempt success. Late intubation, especially when resources are limited, may be a valid approach. However, strict infection control and appropriate use of personal protective equipment are recommended in such cases.

Introduction

Coronavirus disease 2019 (COVID-19) is a pandemic with a high incidence of respiratory failure. 1 The severe acute respiratory syndrome coronavirus 2 (SARS-CoV-2) virus is highly infectious and can be spread by airborne aerosol, droplet and fomite transmission. 2 In the current pandemic there have been high numbers of healthcare worker infections and deaths. 3,4 In Italy, it was reported that up to 20% of responding healthcare workers were infected; 5 in Spain an estimated 26% of confirmed COVID-19 infections were in healthcare workers. 6

Data from the severe acute respiratory syndrome (SARS) pandemic and other viral respiratory disease outbreaks showed that transmission to healthcare personnel was associated with exposure to potentially aerosol-generating procedures, carrying a significantly increased odds ratio (6.6) of transmission. 7 Staff who directly participated in intubation had the highest and most consistently increased risk of developing SARS. 8 Intubation is particularly high risk because of the close proximity of the laryngoscopist to the patient’s airway, 9 especially if there is coughing of infectious aerosols and droplets, and the need for participation in other potentially aerosol-generating procedures during intubation such as bag-valve-mask ventilation and open airway suctioning. 10 These factors all lead to a high risk of transmission. 11

In particular, the timing of intubation is controversial. Some centres advocate delaying intubation until oxygenation can no longer be maintained despite high flow oxygen 12 or non-invasive ventilation, whereas others propose intubation be considered earlier, once the disease trajectory suggests intubation is likely. 13 Although both approaches may be justified, it is unclear which is superior.

In the event of late and emergency intubation, the patient will likely be unstable with severely impaired ventilation–perfusion matching and/or shunt, and rapid apnoeic oxygen desaturation may be anticipated. The laryngoscopist will thus be under increased time pressure to achieve successful intubation. This may lead to the operator inadvertently shortening the interval between induction and muscle relaxation and the intubation attempt, resulting in suboptimal intubating conditions. Under such circumstances it is plausible that aerosolisation of droplets 9 may occur as a result of patient coughing due to incomplete paralysis, or if rescue bag-valve-mask ventilation is required because of hypoxia. Thus, potential mechanisms for staff contamination during the intubation procedure are exacerbated.

Particularly while managing severely ill and rapidly deteriorating patients, the excess stress and cognitive load may adversely influence the ability of staff to adhere to meticulous infection control procedures. Studies of the impact of stress on performance in emergency scenarios using simulation14,15 found that increased stress was associated with worse performance. Worse performance may affect not only staff safety, but also patient safety.

We hypothesised that early intubation of a more stable COVID-19 patient in whom disease trajectory suggested the need for intubation would lead to a more controlled and efficient intubation process, with less risk of contamination of staff.

We performed an in situ simulation 16 to compare the outcome of early versus late intubation of COVID-19 patients, in particular measuring the degree of operator contamination and intubation success. An objective measure of operator stress was also recorded to assess the relationship between operator stress and outcomes.

Materials and methods

This study was carried out at the Department of Anaesthesia and Intensive Care, Prince of Wales Hospital, a tertiary referral teaching hospital in Hong Kong with experience in the management of critically ill COVID-19 patients. It was approved by the survey and behavioural research ethics board of the Chinese University of Hong Kong (SBRE-19-656). Informed, written consent to participate was obtained from all participants.

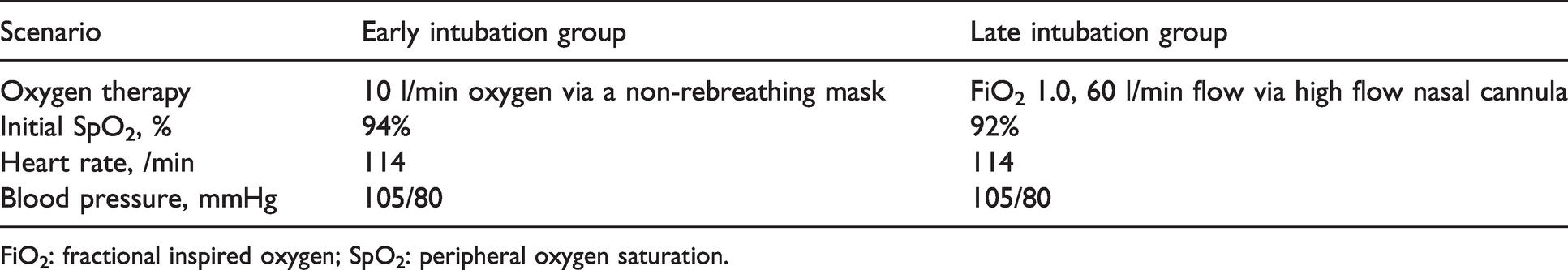

We designed two independent scenarios, one to mimic an ‘early’ intubation and one a ‘late’ intubation, as outlined in Table 1. The early intubation scenario simulated a patient with moderately high oxygen requirement. In contrast, the late intubation scenario simulated a more critically ill patient with worse respiratory failure and more rapid apnoeic desaturation. The patient in both scenarios also suffered from acute kidney injury 17 and hyperkalaemia.

Table 1. Comparison of vital signs of the simulated patient in each scenario.

FiO2: fractional inspired oxygen; SpO2: peripheral oxygen saturation.

We carried out the simulations in situ in a negative pressure airborne infection isolation room in the intensive care unit identical to that used for real patients. A high-fidelity manikin (3G SimMan; Laerdal Medical, Stavanger, Norway) was used. Fluorescent powder (Glo Germ™; Glo Germ Company, Moab, UT, USA) was used as a surrogate marker of contamination.18,19 Standardised areas on the manikin (face, intra-oral region and head) were covered in fluorescent powder. An intranasal atomiser device (MAD Nasal; Teleflex, Morrisville, NC, USA) was surreptitiously placed in the nares of the manikin and connected to a syringe containing dissolved fluorescent powder which was triggered manually to produce a spray of droplets. 20

The manikin was designed to have a modified Cormack–Lehane laryngoscopy grade 2A view on direct laryngoscopy and grade 1 view on videolaryngoscopy. To simulate suboptimal paralysis, the manikin’s jaw was electronically made rigid prior to complete muscle relaxation (designated as less than 60 seconds after administration of rocuronium). 21 Furthermore, the cough atomiser was triggered if laryngoscopy was attempted before complete paralysis.

Participants first watched a video of our departmental COVID intubation procedure guideline. 22 A standardised scenario brief was then read to the participant, who was told to manage the patient as they would for a real COVID-19 patient. They were not aware of the study design. They donned a clean set of personal protective equipment (PPE) including cap, face shield, mask, gown, and gloves. Airway equipment including a videolaryngoscope (McGrath MAC videolaryngoscope, Medtronic, Dublin, Ireland, with disposable blade size 3), bougie, i-gel® (Intersurgical, Berkshire, UK), and bag-valve-mask resuscitator (Laerdal silicone resuscitator; Laerdal Medical, Stavanger, Norway) were all available. The vital signs monitor also included a timer function, identical to that in routine use in our intensive care unit (ICU) and operating theatres.

All other elements of the scenarios were standardised including the change in peripheral oxygen saturation (SpO2) reading of the manikin at a pre-specified rate 23 (Supplementary Appendix I). The scenario was complete after the endotracheal tube was successfully placed. Upon completion of the scenario, the participant’s body and PPE were examined using ultraviolet light to look for the presence of fluorescent dye as a surrogate marker of contamination.

We wished to measure the level of stress during the scenario and so chose to use heart rate variability (HRV) as a physiological measure, which has been reported as a reliable objective measure.24,25 Because of the study design, it would not have been feasible to ask participants to report their own stress scores during the simulation. HRV is defined as the variation in the time interval between successive heart beats (the R-R interval on electrocardiography). 25 It was measured using a chest strap heart rate monitor (Polar H10 heart rate monitor; Polar, Kempele, Finland) and the Elite HRV application 26 for a smartphone. We measured the root mean square of successive differences (RMSSD), 27 a commonly used time-domain measure of HRV which is a surrogate for autonomic nervous system activity. A low RMSSD signifies stress. 28

A baseline HRV reading at rest was taken while the participant was watching the intubation video prior to the scenario. Another reading was taken during the scenario, and the percentage change from baseline was calculated. Qualitative comments from participants during debriefing were also recorded.
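The RMSSD calculation and the change from baseline can be illustrated with a minimal sketch. The function names and the R-R interval values below are hypothetical and chosen only for illustration; this is not the implementation used by the Elite HRV application.

```python
import math

def rmssd(rr_intervals_ms):
    """Root mean square of successive differences between R-R intervals (ms)."""
    if len(rr_intervals_ms) < 2:
        raise ValueError("need at least two R-R intervals")
    # Differences between each interval and the one that follows it.
    diffs = [b - a for a, b in zip(rr_intervals_ms, rr_intervals_ms[1:])]
    return math.sqrt(sum(d * d for d in diffs) / len(diffs))

def percent_change(baseline, during):
    """Percentage change of a reading relative to its baseline value."""
    return 100.0 * (during - baseline) / baseline

# Hypothetical R-R series (ms): at rest and during the scenario.
baseline_rr = [800, 810, 790, 800]
scenario_rr = [600, 605, 595, 600]

baseline_rmssd = rmssd(baseline_rr)
scenario_rmssd = rmssd(scenario_rr)
change = percent_change(baseline_rmssd, scenario_rmssd)
print(round(baseline_rmssd, 2), round(scenario_rmssd, 2), round(change, 1))
```

In this made-up example the RMSSD halves during the scenario, which under the interpretation above would indicate increased stress.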

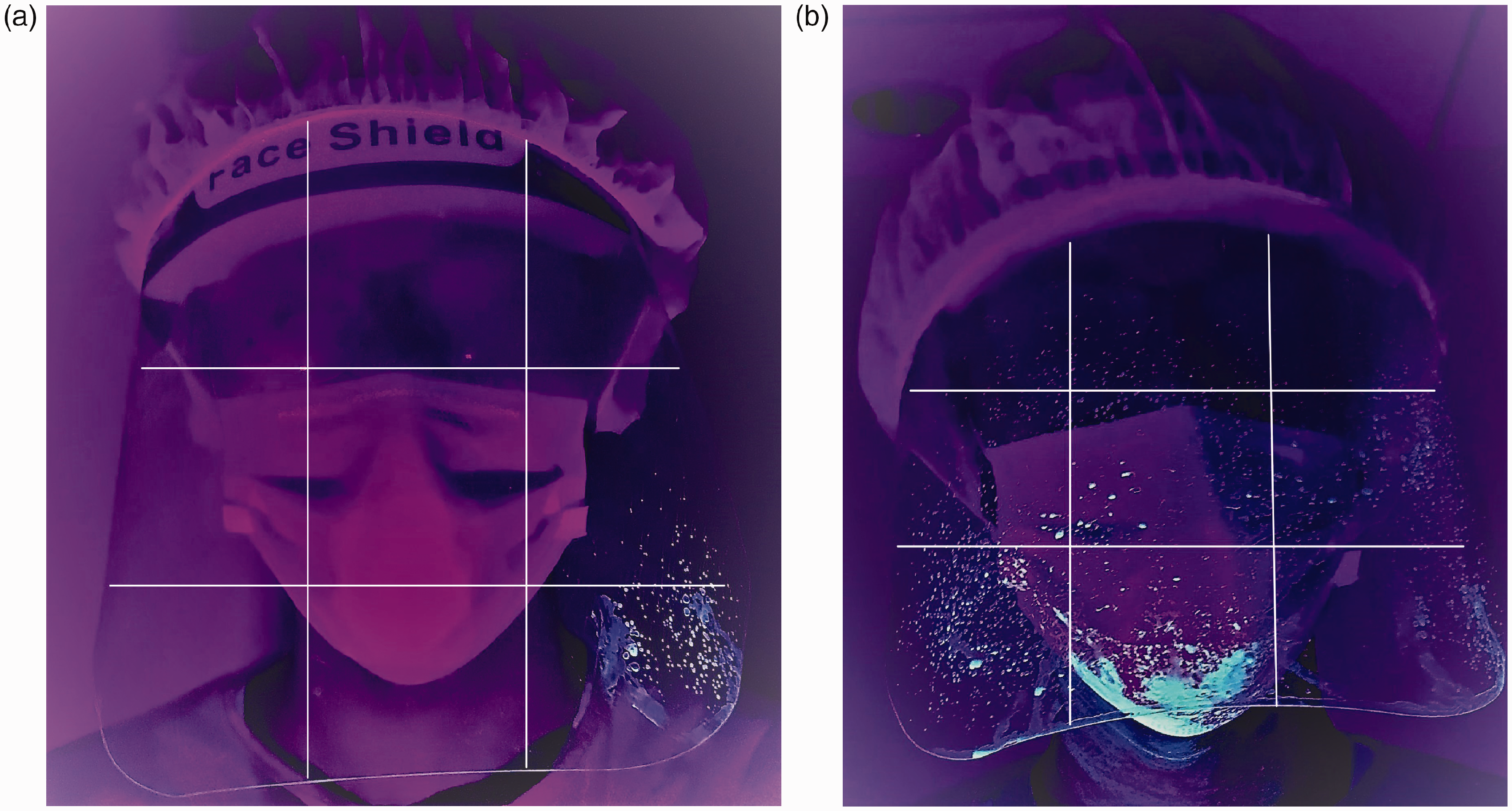

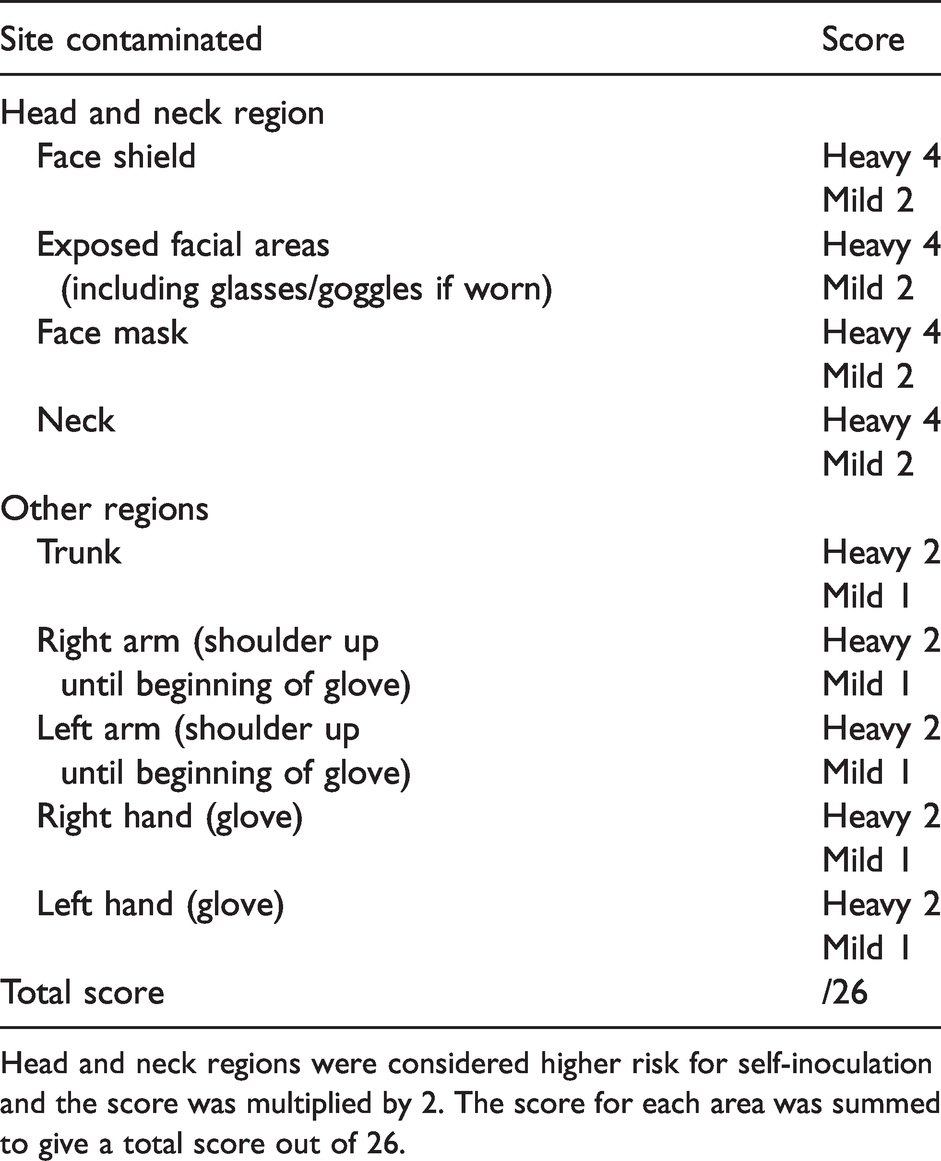

The primary outcome was the degree of laryngoscopist contamination. To allow quantitative comparison, we devised a contamination score. This was measured by using a predefined checklist of discrete areas on the participant’s body and PPE to look for the presence of fluorescent dye using ultraviolet light. For each area, the degree of contamination was rated as mild or heavy (see Figure 1). The presence of dye on the head and neck was considered as higher risk, and thus the score for these areas was multiplied by two. The score for each area was summed to give the total contamination score (Table 2).

Figure 1. Photographs illustrating the definition of (a) ‘mild’ contamination and (b) ‘heavy’ contamination. Each predefined body area (face shield shown as example) was divided into approximately nine squares as shown. Involvement of more than 50% of the squares was rated as heavy and less than 50% as mild.

Table 2. Contamination score.

Head and neck regions were considered higher risk for self-inoculation and the score was multiplied by 2. The score for each area was summed to give a total score out of 26.
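The scoring logic can be sketched as follows. The grading rule (more than 50% of squares is heavy) and the doubling for head and neck areas follow the description above. The point values for mild and heavy contamination and the example body areas are assumptions made for illustration only, as the checklist itself is given in Table 2.

```python
# Assumed point values; the actual values are defined in Table 2.
MILD_POINTS = 1
HEAVY_POINTS = 2
SQUARES_PER_AREA = 9  # each area was divided into approximately nine squares

def rate_area(squares_involved):
    """Grade one body area from the number of squares showing fluorescent dye."""
    if squares_involved == 0:
        return "none"
    if squares_involved / SQUARES_PER_AREA > 0.5:
        return "heavy"
    return "mild"

def contamination_score(areas):
    """Sum the area scores; head and neck areas are weighted by two.

    areas: iterable of (name, squares_involved, is_head_or_neck) tuples.
    """
    points = {"none": 0, "mild": MILD_POINTS, "heavy": HEAVY_POINTS}
    total = 0
    for _name, squares, is_head_or_neck in areas:
        weight = 2 if is_head_or_neck else 1
        total += weight * points[rate_area(squares)]
    return total

# Hypothetical participant; area names are illustrative, not the study checklist.
example = [
    ("face shield", 6, True),   # heavy, doubled
    ("neck", 2, True),          # mild, doubled
    ("gown front", 5, False),   # heavy
    ("gloves", 0, False),       # no dye
]
print(contamination_score(example))
```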

The secondary outcomes assessed included:

Staff safety and infection control

○ Incidence of the use of bag-valve-mask ventilation at any point.

○ Incidence of cough, which was triggered if laryngoscopy was attempted before complete paralysis (defined as 60 seconds after injection of rocuronium).

Patient safety and intubation process

○ Intubation time in seconds, defined as the time from administration of induction agent to first end-tidal carbon dioxide waveform.

○ Incidence of failed first attempt laryngoscopy, defined as withdrawing the laryngoscope from the manikin’s mouth before successful intubation.

○ Use of an intubating aid such as a bougie.

Stress

○ HRV, as measured using the RMSSD.

We also assessed if the above factors were associated with an increased contamination score.

We ran 20 simulations involving a total of 20 participants from the Department of Anaesthesia and Intensive Care. These included ten registrars and ten specialists in anaesthesia or intensive care, who were all previously trained in airway management for COVID-19 patients, and had three years or more of experience in airway management for critically ill patients. They were invited by electronic message in alphabetical order according to each day’s roster. They were then block randomised into the ‘early’ scenario or the ‘late’ scenario, with stratification by level of training. The exclusion criteria were any known cardiac disease or use of cardiac medications.

Data were analysed using SPSS version 1.0.0.1406 (IBM, New York, NY, USA). The data were tested for normality (Shapiro–Wilk test) and the appropriate statistical test was used to analyse differences between the early and late groups. In particular, we used a one-tailed Student’s t-test for parametric data, the Mann–Whitney U-test for non-parametric data, and a one-tailed chi-square test (Fisher’s exact test if cell count <5) for categorical data. We used the conventional P<0.05 as the threshold for concluding a statistically significant difference between the two groups.

We hypothesised it would be implausible that earlier intubation in a more stable patient would result in worse contamination or performance, and therefore chose to use a one-tailed power analysis. We calculated the required sample size using an a priori assumption of a mean (standard deviation, SD) contamination score difference of 13 (8) with a normal parent distribution, using a one-tailed t-test with an alpha of 0.05 and a power of 0.95. Power was calculated using G*Power version 3.1.9.7 (Heinrich Heine University, Düsseldorf, Germany). This gave a sample size of 18, and two additional participants were recruited in case of technical difficulties or missing data.
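The order of magnitude of this sample size can be checked with a minimal sketch that uses the standard normal approximation for a one-tailed comparison of two independent means. This is an approximation and not the noncentral t computation performed by G*Power; the function name is hypothetical.

```python
import math
from statistics import NormalDist

def n_per_group(mean_diff, sd, alpha=0.05, power=0.95):
    """Approximate group size for a one-tailed two-sample comparison of means.

    Uses the normal approximation n = 2 * (z_alpha + z_beta)^2 / d^2,
    where d is the standardised effect size (mean difference / SD).
    """
    d = mean_diff / sd
    z_alpha = NormalDist().inv_cdf(1 - alpha)  # one-tailed critical value
    z_beta = NormalDist().inv_cdf(power)
    return math.ceil(2 * (z_alpha + z_beta) ** 2 / d ** 2)

group_size = n_per_group(13, 8)   # assumed difference 13, SD 8
total = 2 * group_size
print(group_size, total)  # 9 18
```

With the stated assumptions the approximation gives nine participants per group, which agrees with the total of 18 reported above.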

Results

All 20 participants successfully intubated the manikin.

Primary outcome

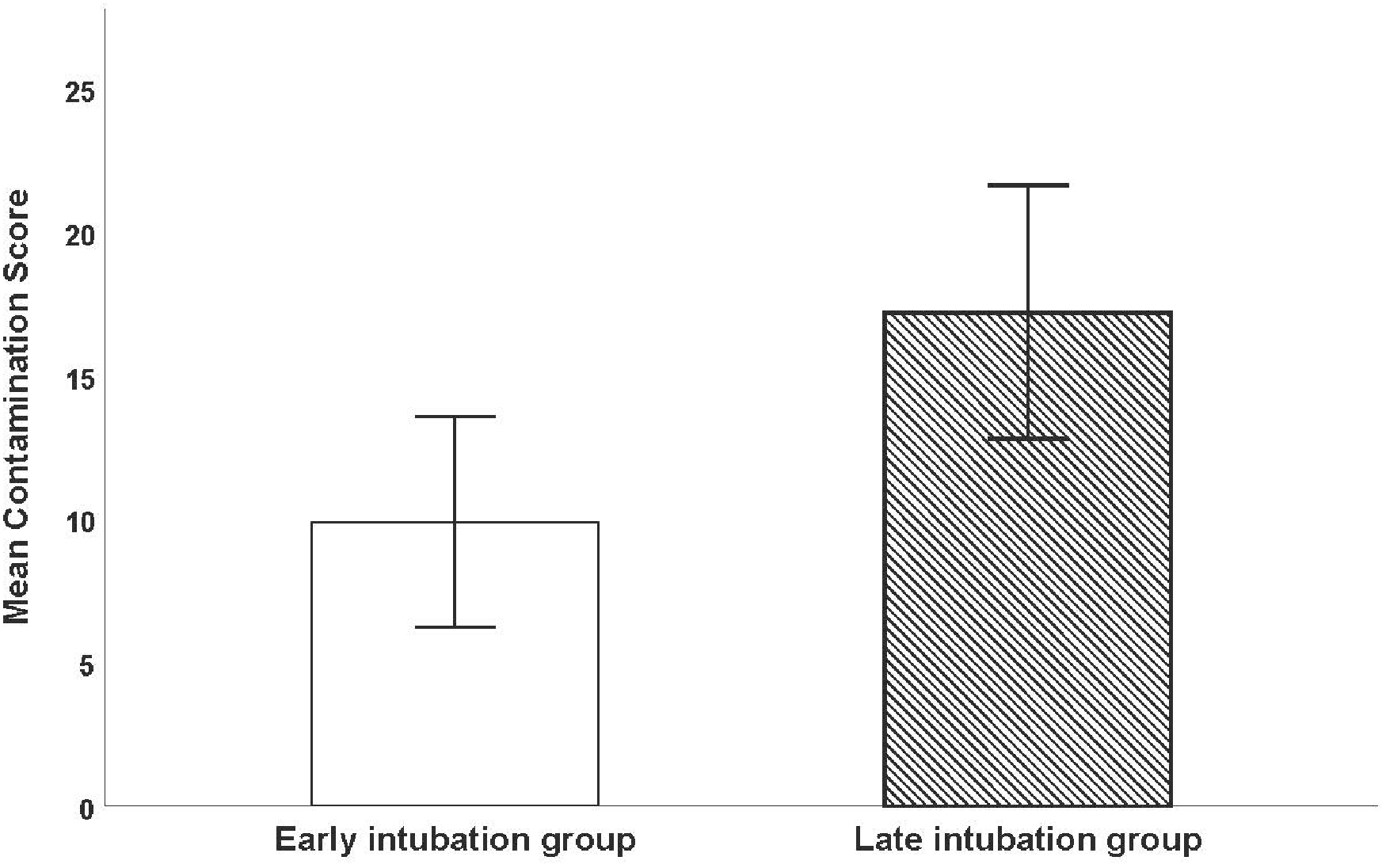

The contamination score in the late intubation group was significantly greater than in the early intubation group (mean (SD) 17.20 (6.17), 95% confidence interval (CI) 12.80 to 21.62 versus 9.90 (5.13), 95% CI 6.23 to 13.57; P = 0.005) (see Figure 2).

Figure 2. Comparison of contamination score in early intubation group versus late intubation group. Error bars show the 95% confidence intervals. The contamination score in the late intubation group was significantly greater than in the early intubation group.
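The reported intervals can be approximately reproduced from the summary statistics. The sketch below assumes ten participants per group (inferred from the 20 participants randomised into two groups) and uses the late-group mean of 17.20 given in the abstract. The function names are hypothetical, and the critical value 2.262 is the standard two-sided 95% value of Student's t for nine degrees of freedom.

```python
import math

T_CRIT_DF9 = 2.262  # two-sided 95% critical value of Student's t, 9 degrees of freedom

def ci95(mean, sd, n=10, t_crit=T_CRIT_DF9):
    """95% confidence interval for a group mean from summary statistics."""
    half_width = t_crit * sd / math.sqrt(n)
    return (mean - half_width, mean + half_width)

def pooled_t(mean1, sd1, mean2, sd2, n=10):
    """Student's t statistic for two groups of equal size n (pooled variance)."""
    pooled_var = (sd1 ** 2 + sd2 ** 2) / 2
    return (mean1 - mean2) / math.sqrt(pooled_var * 2 / n)

early = ci95(9.90, 5.13)
late = ci95(17.20, 6.17)
t_stat = pooled_t(17.20, 6.17, 9.90, 5.13)
print([round(x, 2) for x in early])
print([round(x, 2) for x in late])
print(round(t_stat, 2))
```

Under these assumptions the early-group interval matches the reported 6.23 to 13.57, and the late-group interval agrees with the reported values to within rounding of the summary statistics.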

Secondary outcomes

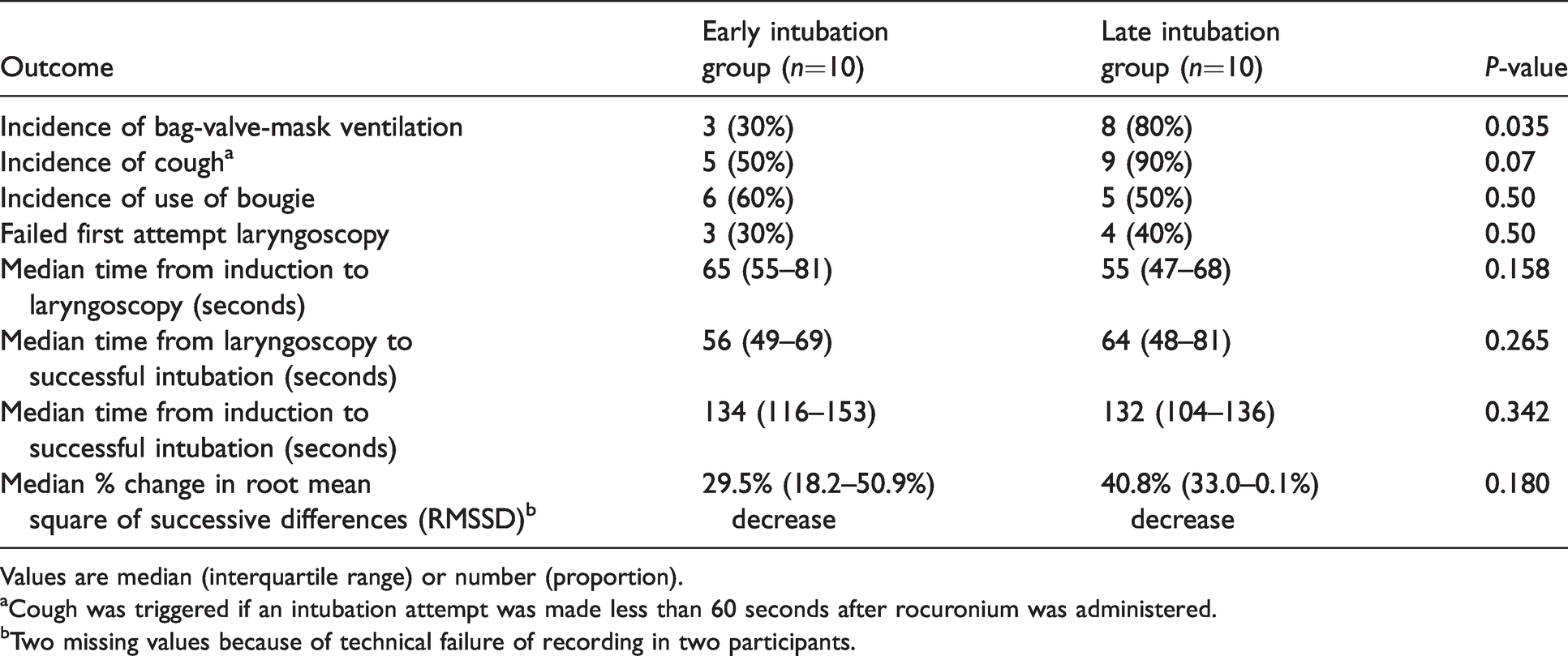

The incidence of bag-valve-mask ventilation was significantly increased in the late intubation group (80% versus 30%; P=0.035). Cough was triggered more frequently in the late intubation group (90% versus 50%; P=0.07); however, this did not reach the a priori defined threshold for statistical significance (Table 3).

Table 3. Secondary outcomes with comparison between early intubation and late intubation groups.

Values are median (interquartile range) or number (proportion).

(a) Cough was triggered if an intubation attempt was made less than 60 seconds after rocuronium was administered.

(b) Two missing values because of technical failure of recording in two participants.
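The P values for the two categorical outcomes are consistent with a one-tailed Fisher's exact test. The following is a minimal sketch, assuming ten participants per group so that the reported percentages correspond to counts of 8 versus 3 (bag-valve-mask ventilation) and 9 versus 5 (cough). The function name is hypothetical and this is not the SPSS procedure itself.

```python
from math import comb

def fisher_one_tailed(a, b, c, d):
    """One-tailed Fisher's exact P value for the 2x2 table [[a, b], [c, d]].

    Returns the probability, with all margins fixed, of observing a count
    of at least `a` in the top-left cell (hypergeometric upper tail).
    """
    row1 = a + b          # size of the first group
    col1 = a + c          # total number of events
    n = a + b + c + d
    upper = min(row1, col1)
    numerator = sum(
        comb(col1, k) * comb(n - col1, row1 - k) for k in range(a, upper + 1)
    )
    return numerator / comb(n, row1)

# Late group first row, early group second row (assumed counts, n = 10 each).
p_bvm = fisher_one_tailed(8, 2, 3, 7)     # bag-valve-mask ventilation
p_cough = fisher_one_tailed(9, 1, 5, 5)   # manikin cough
print(round(p_bvm, 3), round(p_cough, 2))  # 0.035 0.07
```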

There was no significant difference between the two groups in time to intubation, incidence of failed first attempt laryngoscopy, use of a bougie, or the percentage change in RMSSD from baseline during the simulation (Table 3).

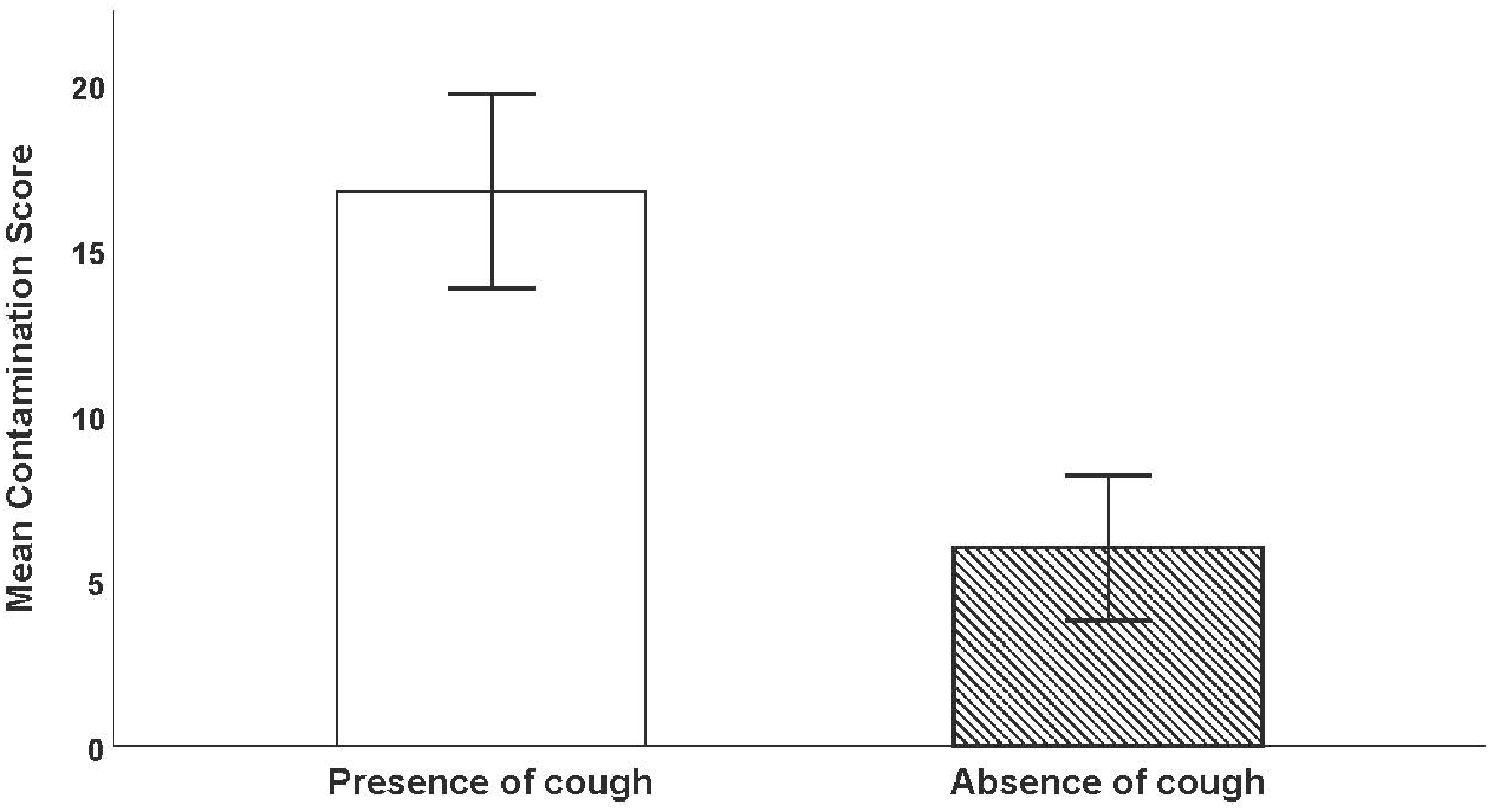

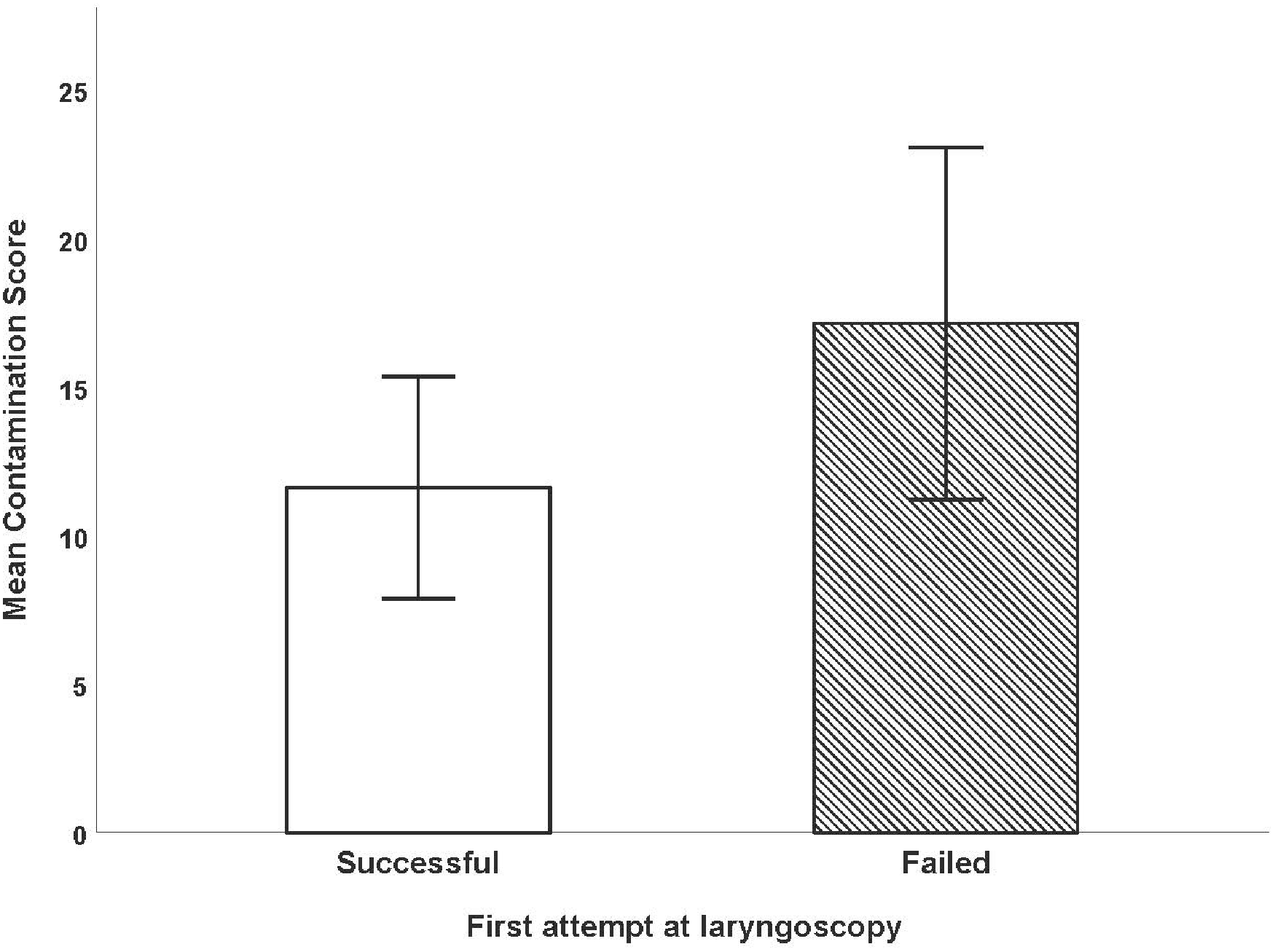

The contamination score was significantly increased in the presence of cough, with a mean (SD) of 18.0 (5.09) in the group with cough versus 5.50 (2.10) in those without cough; P<0.001 (see Figure 3). The contamination score was also significantly increased when first attempt laryngoscopy failed, with a mean (SD) of 17.1 (6.41) versus 11.6 (6.20); P = 0.038 (see Figure 4).

Figure 3. Comparison of contamination score when manikin cough was triggered by a laryngoscopy attempt less than 60 seconds after administration of neuromuscular blocking agent versus absence of cough. Error bars show 95% confidence intervals. The contamination score was significantly increased when cough occurred.

Figure 4. Comparison of contamination score when first attempt laryngoscopy succeeded versus failed. Error bars show 95% confidence intervals. The contamination score was significantly increased when first attempt laryngoscopy failed.

Quantitative values are shown in Table 3, and qualitative responses are shown in Supplementary Appendix II.

Discussion

We found that there was a significantly higher contamination score in the late intubation group compared to the early intubation group (P=0.005). We noted that the facemask and uncovered neck area were often contaminated, probably by cough droplets, despite use of a face shield. Increased contamination, in particular in the head and neck region, may increase the risk of transmission to healthcare workers, as infective virus at these sites may then be deposited onto mucous membranes via a process of self-inoculation. 11

The incidence of cough triggered in the late intubation group was higher, but the difference fell short of statistical significance. There was, however, a significant association between cough and higher contamination score, as well as between failed first laryngoscopy attempt and higher contamination score. We also found an increased incidence of bag-valve-mask ventilation use in the late intubation group (P=0.035). In isolation, the act of placing an endotracheal tube into a paralysed patient should not cause significant infectious aerosol spread and contamination. However, the process from induction to successful intubation often entails other events and interventions, such as coughing from inadequate paralysis, suction and bag-valve-mask ventilation. It is likely that this combination is what makes tracheal intubation such a high-risk procedure for infection transmission. 7 The increased contamination score in the late intubation group, together with the more frequent need for bag-valve-mask ventilation and the association with simulated cough, is consistent with our original hypothesis.

Participants reported that they perceived a dilemma between the need for good infection control and the clinical urgency of a desaturating patient. In the late intubation group, some felt compelled to attempt intubation despite knowing the patient may not yet be completely paralysed, or to attempt bag-valve-mask ventilation while apnoeic. This behaviour was both reported and observed, despite the departmental guidelines that have a heavy focus on healthcare worker safety including minimising these two situations whenever possible.

We found no significant difference between overall time to intubation for early and late intubation scenarios. There was also no significant difference for time from induction to laryngoscopy, and laryngoscopy to intubation between groups. The observed times are similar to those reported in clinical studies of intubation in critically ill patients 29 and in COVID-19 patients. 30 Participants thus did not display observably worse performance associated with the stress of managing a more unstable infectious COVID-19 patient. From this point of view, the initial use of high flow oxygen or non-invasive ventilation, with recourse to intubation only after further clinical deterioration, may be a valid approach, especially in areas with limited ventilator and ICU capacity, provided that the laryngoscopist has been previously trained and has substantial experience in airway management.

It appears that the level of stress experienced by the participants in the late intubation scenario, as measured by percentage change in RMSSD, was greater, but this difference did not reach statistical significance. Thus, we remain uncertain regarding the possible effect of stress on the performance of intubation or degree of contamination.

This study has several limitations. Firstly, because of the inherent nature of simulation, the stress of managing a real deteriorating patient and exposure to a real infective risk of COVID-19 may not be fully reflected. Secondly, the cough simulated by our device and the degree of projection of droplets may not be the same as that produced by a real patient. Thirdly, it was not possible to assess the relative contributions of bag-valve-mask ventilation and cough to contamination. Finally, we did not assess the duration and severity of hypoxaemia during intubation, which would be a more direct measure of patient safety. 31

In conclusion, our in situ simulation demonstrated a greater degree of staff contamination, probably from the increased exposure to potentially aerosol-generating events, during a late intubation scenario compared to an early intubation scenario. There was no definite difference in stress response or in objective parameters of performance such as intubation time and incidence of failed first laryngoscopy attempt. When faced with limited ventilator and ICU resources, late intubation may remain a desirable approach; however, strict infection control and appropriate PPE usage should be observed to minimise disease transmission.

Supplemental Material

sj-pdf-1-aic-10.1177_0310057X211007862 - Supplemental material for Early intubation versus late intubation for COVID-19 patients: An in situ simulation identifying factors affecting performance and infection control in airway management

Supplemental material, sj-pdf-1-aic-10.1177_0310057X211007862 for Early intubation versus late intubation for COVID-19 patients: An in situ simulation identifying factors affecting performance and infection control in airway management by Christopher P ee Yu-Yeung Yip Chan Albert KM Gavin M Joynt in Anaesthesia and Intensive Care

Supplemental Material

sj-pdf-2-aic-10.1177_0310057X211007862 - Supplemental material for Early intubation versus late intubation for COVID-19 patients: An in situ simulation identifying factors affecting performance and infection control in airway management

Supplemental material, sj-pdf-2-aic-10.1177_0310057X211007862 for Early intubation versus late intubation for COVID-19 patients: An in situ simulation identifying factors affecting performance and infection control in airway management by Christopher P ee Yu-Yeung Yip Chan Albert KM Gavin M Joynt in Anaesthesia and Intensive Care

Footnotes

Acknowledgements

The author(s) would like to thank their intensive care unit nurse consultant Shing Tak Poon for her assistance in running the simulation scenarios.

Declaration of conflicting interests

The author(s) have no conflicts of interest to declare.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Supplemental material

Supplementary material for this article is available online.
