Abstract

Transfusion, as we know it today, developed into a very sophisticated treatment modality as a result of centuries of experimentation. Intraoperative cell salvage is a transfusion technique where autologous blood lost during surgery is reinfused. The success of this process relies on specialised equipment and techniques to collect, process, anticoagulate, filter and reinfuse blood. Through a literature review, we collected information about the early origins of specific techniques relevant to intraoperative cell salvage: the ability to collect lost blood, to prevent collected blood from clotting, to remove debris through processing and other harmful aspects through filtering, the benefits of autologous blood transfusion, reinfusion and traditional concerns and contraindications. A culmination of knowledge specific to each of these techniques over centuries provides the background to the safe intraoperative cell salvage technique used today. In addition, we aimed to identify the reasons why specific equipment and techniques developed, why practice changed and what is still unknown. This article reviews relevant allogeneic transfusion and autotransfusion history, starting in Roman times, and includes landmark events through the centuries.

History of blood transfusion

Historical blood transfusion literature discusses blood baths used for recuperation by Egyptian princes and blood drunk by gladiatorial victors in Roman times. However, the practice of ‘transfusion’ by Egyptians, Hebrews and Syrians in those times may differ substantially from transfusion as we know it today. 1

Conflicting publications explain how blood from three young boys was ‘transfused’ to Pope Innocent VIII in 1492. It is very likely that these transfusions were actually the drinking of blood rather than intravenous transfusion. 1 , 2 Lindeboom wrote after a thorough investigation of the original literature that blood was taken from the boys, but no conclusion can be made that Pope Innocent VIII received this blood through transfusion. 2 The first detailed explanation of an actual intravenous blood transfusion is credited to Andreas Libavius, a chemist, physician and director of the College of Coburg, from Halle, Saxony, Germany (1615). 3 , 4

These events were before the discovery of circulation by William Harvey (1628), and therefore earlier accounts of blood transfusion may not imply what we now define as a blood transfusion. William Harvey, who was born in the UK, completed his medical degree in Italy and wrote his original work in Latin, ‘Exercitatio Anatomica de Motu Cordis et Sanguinis in Animalibus’, later translated into English (‘An Anatomical Exercise on the Motion of the Heart and Blood in Living Beings’). Harvey was the first to describe the heart and blood vessels functioning in a circle, receiving and pumping blood to organs. 5

Some initial animal-to-human and human-to-human blood transfusion experiments took place in the second half of the 17th century. However, many fatal incidents resulted in the practice of blood transfusion experimentation being declared illegal by the French parliament, by the Royal Society of England and in Rome. 3 , 6 , 7 A Swedish nobleman died from a lamb blood transfusion by Jean-Baptiste Denis, court physician to King Louis XIV in France, in 1667. Denis was arrested and put on trial for murder but was later exonerated. 6 For 150 years afterwards, blood transfusion was not mentioned in publications. 6

In 1874, a review article in the Philadelphia Medical Times mentioned the ‘imperfect physiological basis’ of the transfusion technique between animals and humans. 8 This leading article, ‘The Present State of Transfusion’, drew attention to other authors at the time who discussed the occurrence of agglutination and haemolysis when ‘heterologous’ blood was transfused, for example from a sheep to a human. 1 , 3 The incomplete understanding of asepsis, immunology and blood groups and the inability to prevent blood from clotting resulted in many incidents, and the common perception of transfusion at that time was that it was a hazardous procedure.

To overcome the issue of clotting and make blood available for reinfusion, investigators studied a range of techniques, including mechanical ‘defibrination’ 9 , 10 and additives, for example sodium phosphate, sodium bicarbonate and ammonia, 11 as well as sodium citrate-, sodium metaphosphate-, hirudin- and paraffin-coated tubes. 9 In 1868 in the UK, John Braxton Hicks gave four obstetric patients sodium phosphate in an attempt to prevent clotting. 11

Those who attempted vein-to-vein transfusion were usually unsuccessful, as blood would clot before reinfusion. Direct blood transfusion, where the artery of the donor was anastomosed directly with the vein of the recipient, or infusion through paraffin-coated tubes was therefore practised. 11 The first direct animal-to-animal transfusion was conducted by Richard Lower, an English physician, in 1665. 1 , 3 , 4 A device incorporating an animal bladder as a reservoir, with a receiving and an injecting limb, was developed by Sir Christopher Wren in England. 1 , 12

Parallels can be drawn between the obstacles researchers had to overcome when conducting allogeneic transfusion and intraoperative cell salvage (ICS). Researchers studied various saline solutions (and other alternatives to blood as replacement therapy), conducted experiments related to compatibility (during animal-to-human and, later on, during human-to-human transfusion), attempted to find ways to ensure the availability of a blood resource during urgent situations and studied techniques and equipment that allowed collection, pressurised reinfusion and tubing. For some of these obstacles, ICS may provide a solution. For example, salvaged blood provides an immediate (no need for storage and banking) source of compatible (a patient’s own) blood. On the other hand, both ICS and allogeneic blood transfusion (ABT) present similar challenges when equipment for collection and reinfusion is considered.

In the search for the ideal replacement fluid during blood loss, other alternatives to blood transfusion were studied. Lazarus-Barlow, demonstrator in pathology at the University of Cambridge, cites Hartog Jacob Hamburger, a Dutch chemist at Utrecht veterinary school in 1888, as the main authority who suggested 0.92% saline as ‘normal’ for mammalian blood and described the various osmotic consequences of increasing concentrations. 13 , 14 Hamburger called this fluid ‘indifferent fluid’, as it displayed no visible red blood cell lysis and had a similar freezing point to human serum. 14 It was observed that ‘physiological salt solution’ was capable of saving the lives of animals and humans suffering from significant haemorrhage. 14 , 15 The term ‘normal saline’ was first used in The Lancet on 29 September 1888. 16 Today, during ICS, most manufacturers still use 0.9% saline during processing cycles and for red blood cell suspension.

In 1900, Karl Landsteiner, an Austrian American immunologist, made a major breakthrough by demonstrating the presence of isoagglutinating and isoagglutinable substances in human blood and the ABO blood groups, and he received the 1930 Nobel Prize in Physiology or Medicine for his discovery. 11 By contrast, ICS (the patient’s own blood) provides a source of compatible blood without the need to cross-match, and the immunological compatibility of ICS blood may be one of its greatest benefits.

In 1818, when considering the challenge of finding blood in urgent situations, James Blundell commented: ‘What is to be done in an emergency? A dog might come when you whistled, but the animal is small; a calf might have appeared better suited for the purpose, but then it has not been taught to walk properly up the stairs’. 11 The ability to store blood for later use was not available at that time.

The creation of blood depots (the first blood bank), pioneered by medical scientist Oswald Robertson in 1917 in France during the First World War, was a major step towards a solution to this problem. 11 This ability to collect, anticoagulate and store blood also enabled the use and study of preoperative autologous donation (PAD), a technique where pre-donated units of a patient’s own blood could be stored, in the same way as allogeneic blood in a blood bank, for later use during surgery. PAD is, however, no longer commonly used. ICS blood, on the other hand (collected in a similar way to PAD), is used immediately during surgery and therefore does not require a storage and banking process.

In addition to these advances, other authors studied various methods of reinfusion, from syringes to valve-incorporating devices and tubing to enable vein-to-vein transfusion (described later). These studies provided valuable information, and today the ICS process uses collection, processing and reinfusion techniques that were developed over centuries.

Where did autotransfusion start?

James Blundell, obstetrician and lecturer of physiology at Guy’s Hospital in London in 1818, completed many experiments where he studied human and animal donor transfusion. 6 , 17 He was credited as the first to conduct autotransfusion. 18 Between 1818 and 1829, Blundell published more than 40 lectures in The Lancet and, in 1824, a 170-page book, Researches Physiological and Pathological. 19 , 20 Blundell’s work included detailed descriptions of blood-collection techniques, multiple pieces of equipment used to enable reinfusion and observations about potential adverse events (often in experiments on dogs), which are all very relevant to the development of cell salvage equipment and techniques.

During the 1800s, clinicians experimented with animal-to-human blood transfusion. What blood to use (human or animal), how to reinfuse collected blood, how to prevent blood from clotting before reinfusion, how much to infuse, equipment to enable this collection and infusion process and whether arterial or venous blood should be used were all unknown aspects at the time.

In 1818, Blundell described how a female patient died from ‘uterine hemorrhagy’ and reflected how she could have been saved by transfusion. He remained uncertain whether blood collected in this way would ‘remain fit for the animal functions’. 17 At this time, the allogeneic transfusion practice was still very experimental. Aspects such as air embolism, clotting and transfusion reactions were suggested rather than known.

In 1828, Blundell wrote the following, referring to blood transfusion as ‘the operation’: ‘In the present state of our knowledge respecting the operation, although it has not been clearly shown to have proved fatal in any one instance, yet not to mention possible, though unknown risks, inflammation of the arm has certainly been produced by it on one or two occasions; and therefore it seems right, as the operation now stands, to confine transfusion to the first class of cases only, namely, those in which there seems to be no hope for the patient, unless blood can be thrown into the veins’. 20

Blundell wrote that blood transfusion (‘blood thrown into the veins’) held a potential fatal risk and advocated its use only as a last resort (‘for those first class of cases’), given ‘in a regulated stream from one individual to another, with as little exposure as may be to air, cold, and inanimate surface’. 20 In 2010, Ashworth noted that Blundell used blood that had been lost postpartum for reinfusion. 18 It seems that Blundell may have received the credit for the first autotransfusion in 1818 in error. From reading Blundell’s writings, even though many important principles were discussed, it is not clear that he in fact conducted the reinfusion of lost or collected blood to the same patient (autotransfusion).

In 1874, William Highmore in London again suggested the ‘refund’ of blood lost during postpartum haemorrhage. 21 He proposed the transfusion (‘to refund blood’) of ‘defibrinated’ warmed autologous blood when he described the very unfortunate death of one of his patients and his inability to find a suitable donor to enable a timely transfusion for this patient. He believed that if this lost blood had been reinfused, the patient might have survived. 21

First successful autotransfusion

The first description of a successful autologous transfusion was in the USA by William Stewart Halsted, an American surgeon, and published as ‘Refusion in Carbonic-Oxide Poisoning’ in 1883. 22 , 23 Halsted described attempts at ‘refusion’ by other investigators: the Halle surgeon Richard von Volkmann, who suggested the reinfusion of blood during an ex-articulation of the hip joint in 1868, and Friedrich von Esmarch, the Kiel surgeon, who acted on this suggestion, although his patient died. 22 –24 Heuter ‘defibrinated’ blood and transfused 350 mL ‘centrifugally’ into the left posterior tibial artery of a patient with frostbite gangrene in an attempt to restore blood supply to the foot ‘and preserved a portion of the frozen part’. 22 , 23

‘Carbonic-oxide poisoning’ was the most common cause of fatal poisoning at the time. Halsted described a 57-year-old male patient with carbon monoxide poisoning. The patient arrived at the clinic unconscious, with poor respiratory efforts, a strong pulse, pale skin and mildly dilated pupils. Halsted withdrew 512 mL blood from the right radial artery (with proximal and distal ligatures to secure the cannula) and then ‘defibrinated’, ‘strained’ and reinfused 288 mL through a ‘transfusion apparatus’. Reinfused blood was kept at 37.5°C. The technique used to ‘defibrinate’ the blood is unclear. 22

Halsted observed increased temperature, pulse and respiration rate and post-transfusion rigors lasting an hour. He withdrew another 300 mL, mixed the patient’s own blood with 128 mL from another patient and reinfused (‘refunded’) 192 mL of this mixture (no comment was made why the remainder of the blood was not used). Twenty-seven hours after his admission to the clinic, the patient became conscious and passed urine, was discharged home at 50 hours and remained well five-and-a-half months later. Whether the recovery was the result of ‘bloodletting’ or reinfusion was unclear. 22

In 1821, Jean-Baptiste-André Dumas, a French chemist, used a churning device where blood was whipped to ‘defibrinate’ the blood. 25 Sir Thomas Smith (1873) at St Bartholomew's Hospital in London used a ‘wire egg-beater, a hair sieve, a three-ounce glass aspirator syringe, a fine blunt-ended aspiration cannula, a short piece of india-rubber tubing with a brass nozzle at either end connecting the syringe with the cannula, a tall narrow vessel standing in warm water’ and ‘a suitable vessel floated in warm water to contain the defibrinated blood’. 3 Similar devices may have been used by Blundell and Halsted for ‘defibrination’. A German article published in 1996 credited the first suggestion of autologous transfusion for the treatment of gas poisoning in 1866 to Eulenburg and Landois, and the revival of autotransfusion later by Leipzig gynaecologist Johannes Thies in 1914. 24

First successful autotransfusion procedure during surgery

The first successful surgical use of autotransfusion was published by John Duncan (1886), a surgeon at the Royal Infirmary in Edinburgh, UK, who used the technique during the amputation of a leg. 26

Suitable reinfusion equipment was unavailable and blood clotting problematic. Duncan considered mixing blood with saline or ‘phosphate of soda’, or ‘defibrinating’ it, to prevent clotting before reinfusion. These issues were considerable obstacles to transfusion at the time. In 1885, Duncan received a patient, eight hours after a railway accident, who had suffered a crush injury to his left leg. The patient had lost a considerable amount of blood and arrived in clinical shock. Duncan mentioned that blood donors were scarce at night, and the only alternatives were saline solution (that could only provide temporary benefit) or the patient’s own blood. After a chloroform and ether anaesthetic, he ‘removed’ the leg, collected the ‘3 ounces’ (approximately 85 mL) of blood lost during this procedure and mixed it in a dish with distilled water and ‘phosphate of soda’. 26 The arteries were tied, and an estimated ‘8 ounces’ (approximately 227 mL) of this mixture was reinfused.

Duncan, along with Carmichael and Miller, continued to perform amputations and reinfusions using the same technique, ‘saving many lives’. 26 Some of the principles recommended by Duncan are still practised today, for example the ‘fluidity’ (anticoagulation) of the blood and the complete sterility of instruments. He immersed glass equipment in a solution of bichloride of mercury, and metal equipment in carbolic acid, and then observed the absence of rigors and fever. 26

By 1886, Duncan was using very different equipment to Blundell. He captured donor blood in a graduated glass vessel with ‘phosphate of soda’, floating in warm water. One assistant collected the blood, and another reinfused it with a syringe while catgut ligatures were placed to occlude bleeding arteries. For reinfusion, they used a pencil-pointed glass cannula connected to rubber tubing and a glass syringe, kept warm with a boric lint covering. Equipment was washed first in antiseptic solution and then in aseptic water. 26 Duncan believed that if clean hands and instruments were used, the spraying of germicide in the air (a common practice at the time) could be delayed until after the procedure. 26 , 27

Duncan wrote that if blood was injected too fast, it resulted in discomfort for patients, and if injected too slowly, it would clot. To prevent blood from clotting before reinfusion, he used a 5% solution of ‘phosphate of soda’ at a ratio of one part solution to three parts blood (or slightly more). One case is described where an error occurred: the ‘phosphate of soda’ was unintentionally made double strength. The patient experienced significant back pain and ‘forcible cardiac action’, and the transfusion was stopped. 26
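
As a rough illustration of the dilution Duncan describes, and assuming that the 5% figure is weight per volume and that the volumes are simply additive (neither is stated in the source), the concentration of ‘phosphate of soda’ in the reinfused mixture would be approximately:

$$
c_{\text{mixture}} = 5\% \times \frac{1}{1 + 3} = 1.25\%
$$

On the same assumptions, the double-strength solution used in error would have given about $10\% \times \tfrac{1}{4} = 2.5\%$ in the mixture.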

The addition of an anticoagulant provided a significant benefit. In the 1800s, obstacles to the development of autotransfusion as we know it today included the absence of applicable tubing, reinfusion pumps or syringe devices to aspirate and inject larger volumes within shorter periods, and clinicians were therefore unable to reinfuse blood before it clotted.

Mechanical defibrination techniques used in the 1800s included agitation of blood with beads or by shaking and ‘whipping’, 9 for example by Sir Thomas Smith (1873) at St Bartholomew’s Hospital in London. 3 Subsequent lysis of blood was a concern. 3 As mentioned above, in 1868, John Braxton Hicks, a British obstetrician, described chemical techniques (additives within blood) to prevent blood from clotting during transfusion. 3

The anticoagulant properties of hirudin were discovered by John Berry Haycraft, a British professor in physiology, in 1884, 28 and it was suggested for use during transfusion by Leonard Landois, a professor of physiology at the University of Greifswald, Germany, in 1892. 3 Hirudin was later found to be toxic. 9 Found in the saliva of blood-sucking medicinal leeches (Hirudo medicinalis), hirudin is the most potent known natural thrombin inhibitor. However, it was only successfully isolated in the late 1950s. 28

In New York in 1916, Henry S Satterlee and Ransom S Hooker commented on the use of pipettes and cannulae with a lining of hardened paraffin or internal coating of anticoagulant solution such as ‘leech extract or herudin’. 9 These authors discussed various specific challenges, including the concern when large volumes of anticoagulant (specifically those dedicated to calcium inactivation) were used compared to the benefits of a more localised method where tubes used for transfusion were coated in paraffin. 9

An explanation of the anticoagulant properties of ‘herudin’, which Satterlee and Hooker call ‘the product of specialised glandular activity of the leech’, follows, including various potential mechanisms of action. These authors used the term ‘herudin’ to describe their own product and the term ‘hirudin’ to describe the commercially available version.

Uncertainty existed whether the toxicity seen after the use of hirudin/herudin in certain cases related to the dose used or the method of production. 9 Satterlee and Hooker believed that a smaller dose of hirudin, citrate and paraffin-coated tubes were safe but mentioned that during six out of 14 ‘herudin’ transfusions, patients experienced chills and febrile reactions, possibly associated with a batch or commercially available product called ‘hirudin’ by Marshall. Even though hirudin appeared to be an ideal physiological anticoagulant, the mechanism of action was uncertain, and a potential for danger existed related to dosing and contamination with ‘toxic substances’. Herudin was difficult to extract, destroyed by heat sterilisation and required the addition of thymol (as a preservative) to be stable in solution. 9 These authors also described a very specific technique whereby sodium citrate at a dose of 120 mg to 300 mL of blood was seen as an effective anticoagulant and the best option for general use during transfusion, even though the exact mechanism of action and potential toxicity were still unclear. 9

In 1917, Lewisohn described sodium citrate as a known anticoagulant used during animal experimentation. 29 In 1943, Loutit and Mollison described the use of acid-citrate-dextrose (ACD) as an anticoagulant, which was later used by the army in 1945 and allowed blood to be stored for 21 days. 11

The successful use of autotransfusion for the treatment of ruptured ectopic pregnancy was reported often between 1914 and 1934. 6 , 30 –34 In 1927, Van Schaik used autotransfusion for the first time during abdominal trauma during the Second World War. He mopped up or suctioned blood, anticoagulated it with citrate and used cheesecloth or fine gauze for filtration. 35 In 1982, studying patients in two different institutions, Thurer and Hauer found that those who received autologous blood after cardiac surgery had fewer infections and positive cultures than those who received ‘homologous bank blood’ and that ‘some mechanism of immunopotentiation may create a “protective effect”’. They suggested that immunological consequences of transfusion, complement activation and pathways of ‘defibrinogenation’ were poorly understood and required more study. 6

Transfusion-related immune modulation is a delayed (>24 hours) immune response present in the blood of patients receiving ABT. 36 In the 1970s, clinicians made an association between improved renal allograft survival and ABT. 37 Later, through epidemiological and animal studies, postoperative infection risk and cancer recurrence risks were also associated with ABT. 38 , 39 These associations were believed to be a result of subsequent immune suppression. The potential lower incidence of postoperative infection during hip replacement surgery when autologous blood was used instead of ABT was identified by Murphy in 1991, 40 , 41 although the exact mechanism is still unclear. 41 ICS is beneficial in many ways. The fact that a patient can receive his/her own blood may hold significant immunological benefit, but definitive evidence remains elusive. 41

Development of autotransfusion devices

Blood collection, anticoagulation and reinfusion techniques (also used during autotransfusion) developed over centuries. These advances, however, occurred decades before actual cell salvage devices became commercially available. Several transfusion experiments on dogs were conducted according to ‘Experiments on the transfusion of blood by the syringe’. 17 In 1818, Blundell explained that a femoral arterial puncture was made, allowing the dog to exsanguinate, and the blood was collected in a cup until the dog appeared dead. Blood from another dog or a human was then introduced into the femoral vein of the dead dog through a syringe (Figure 1). 17

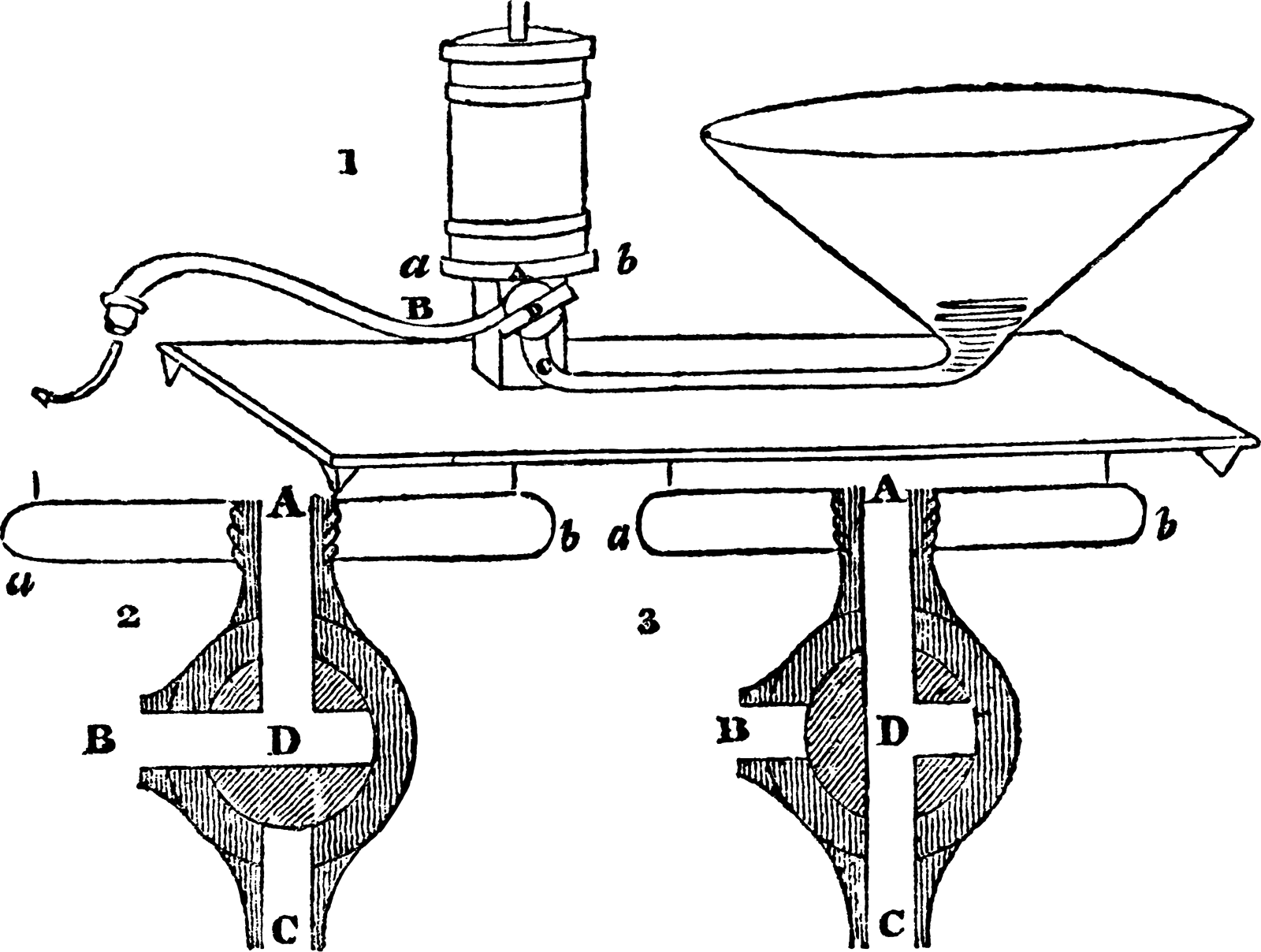

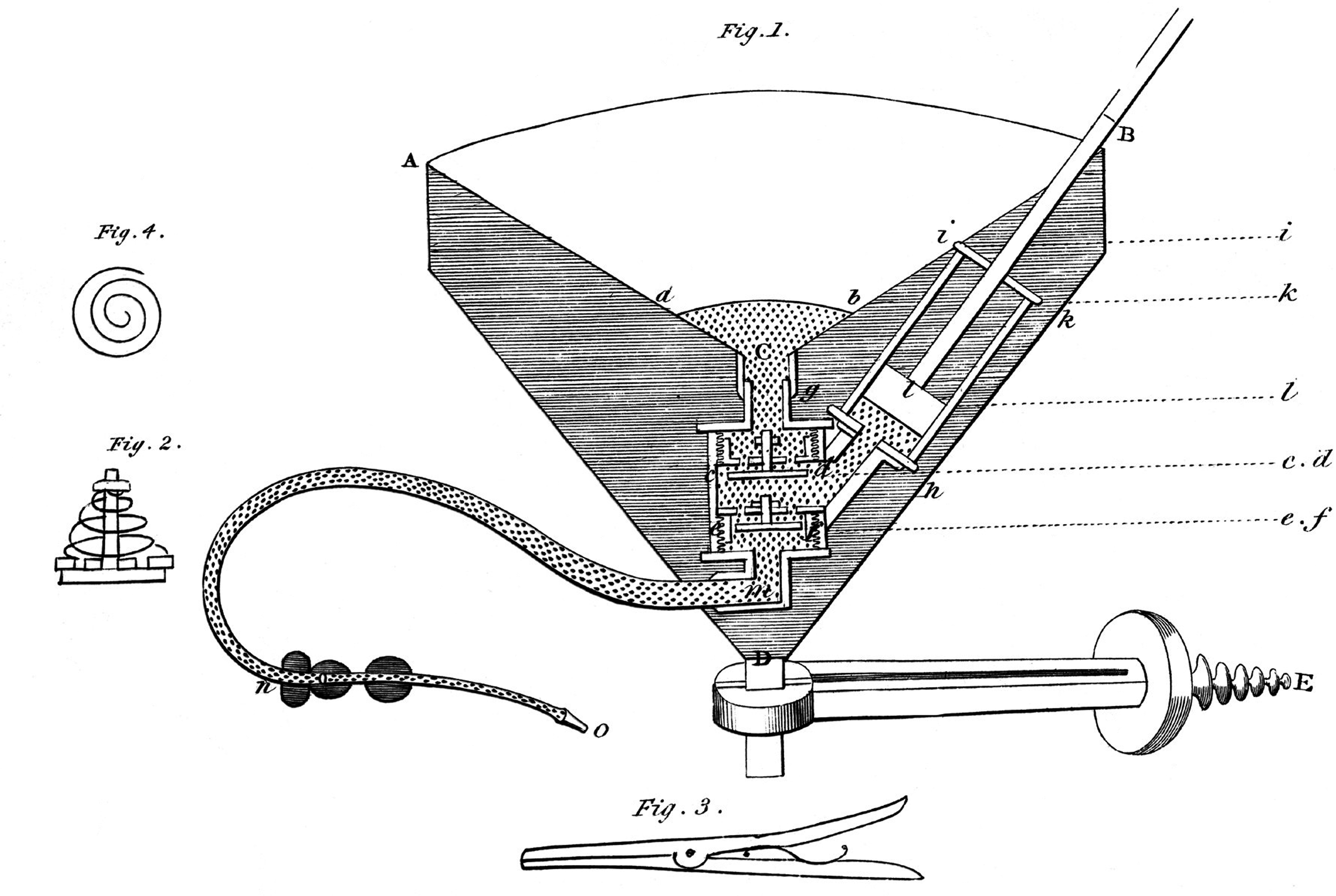

Early basic autotransfusion equipment consisted of a sterile basin to collect blood, which was mixed with ‘phosphate of soda’ and reinfused using a syringe (1885). 26 Despite modern technological advances, some principles remain unchanged. Blundell also developed a device called the ‘impellor’, which was used until the late 1800s. 19 , 42 Novel equipment used included a collection cup, tubes, wire springs and valves made of soft alum leather (Figure 2). 19

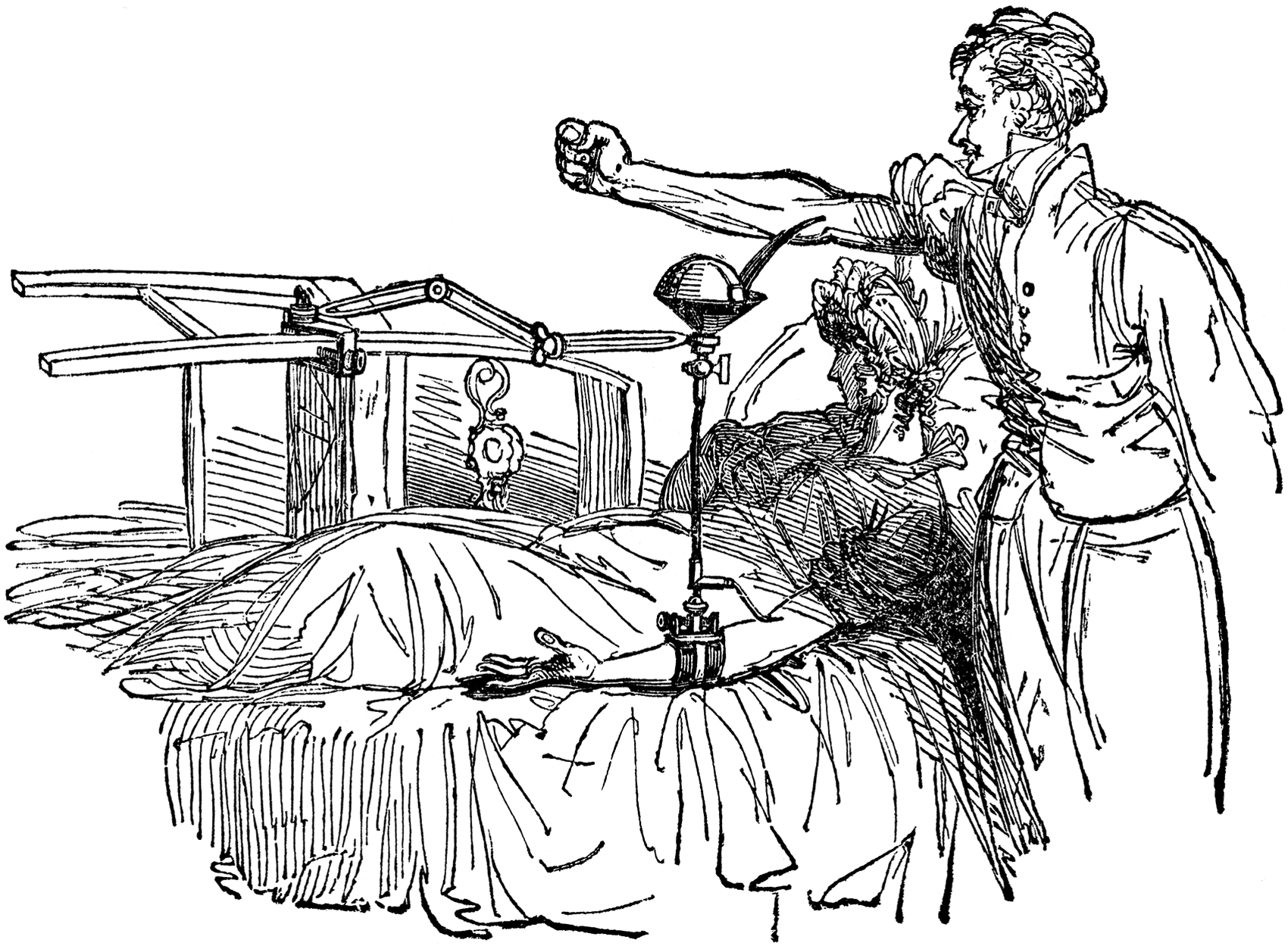

With Blundell’s ‘gravitator’, blood was transfused directly from a venepuncture/section in the donor arm, captured in a cuplike device (and warmed, ‘better if milk-warm’), with a stopcock/valve (‘on/off’ switch), through tubing into a 90° angle rigid device connected around the patient’s arm and then through a flexible silver tube, lying directly over the receiving vein, directed ‘towards the heart’. A probe was inserted under the ‘receiving’ vein to provide ‘stable substance to press upon’ when bleeding occurred during the insertion of the transfusion tube (Figure 3). 20

He described how blood, if clotted in the tube, could be expelled by warm water with a syringe while the patient was observed. ‘If the features are slightly convulsed, the flow of blood should be checked: and if the attack is severe, the operation must be suspended altogether’. Because of a concern that the heart may not be able to cope with a fast transfusion, a volume limit was identified. Flow could also be regulated by turning the stopcock to a partly open position. Reinfusion pressure was generated through gravity. 20

ICS technology development in the 1900s

In 1914, Thies from Germany conducted successful autotransfusion in three patients with ruptured ectopic pregnancies, 6 and in 1916 Lockwood used it for splenectomy. 43 Elmendorf reported the first successful use for the treatment of haemothorax, in a soldier. 6 , 44 By 1923, there were 164 reported cases, 30 and by 1936, there were 272. 45 Autotransfusion was reported for neurosurgery (1925), 46 the traumatic rupture of the spleen (1928), 47 thoracic surgery and heart surgery (1990). 48 Autologous transfusion-related adverse events were also reported, including haemoglobinuria and a fatality that resulted from a technical error. 30 In a report of 272 cases in 1936, Watson attributed the death of three patients to the reinfusion of old haemolysed blood. 45 Autotransfusion was commonly only used when allogeneic blood was unavailable, but Brown and Debenham commented: ‘We do not consider it to be true surgery to withdraw good blood and throw it away’. 49

Figure 1. Transfusion apparatus used by Blundell, 19th century. Source: Digital image from the Wellcome Collection (reproduced under CC BY 4.0 licence; wellcomecollection.org/works/h5z5tx6b)

Figure 2. Section of impellor injecting syringe. Source: Digital image from the Wellcome Collection (reproduced under CC BY 4.0 licence; wellcomecollection.org/works/xefvhvnc)

Figure 3. Blundell’s method of blood transfusion. Source: Digital image from the Wellcome Collection (reproduced under CC BY 4.0 licence; wellcomecollection.org/works/s5ppqhbf)

Preoperative autologous donation

‘Predeposit’ or PAD describes the process where a patient’s blood is collected, processed and stored in order to enable transfusion later during surgery. ICS and PAD are very similar, with one significant difference: ICS blood is collected and reinfused during the same surgery, whereas PAD blood is stored away from the patient for later use. PAD therefore requires strict labelling and safety procedures, in the same way as allogeneic blood does, and carries a significant risk of the wrong blood being given to the wrong patient. 50 ICS blood is not stored, and therefore the risks associated with storage and clerical errors are negligible. Due to serious concerns related to clerical error and cost, PAD is no longer used in Australia. 50 Those interested in ICS development can, however, learn a lot from the development of PAD and the obstacles encountered during its history. Similar aspects are relevant to both techniques, for example device technology (processing and filtering), anticoagulation, immunological consequences and reinfusion events such as air embolism.

In 1921, Grant studied a male patient undergoing neurosurgery for a suboccipital tumour. Before this surgery, the author described the removal of 500 mL of blood, which was kept in 0.2% sodium citrate solution in a refrigerator for 24 hours and reinfused after the surgery. The postoperative course was documented as ‘favourable’. 51 The popularity of this technique increased thereafter. In the 1960s, programmes related to PAD were used during lung, 52 vascular, 53 cardiac, 54 scoliosis and obstetric and gynaecological surgery. 55 , 56 Thurer and Hauer (1982) identified orthopaedic patients as an ideal group for inclusion in blood conservation programmes. 6 Orthopaedic procedures could be scheduled, which made PAD, iron supplements, perioperative haemodilution and salvage feasible. By using these conservation methods, good preoperative assessment and careful surgical technique, it became possible to avoid ABT. 6 These principles are still very valid today and were published as the Three Pillars of Patient Blood Management model described in The Lancet in 2013. 57

During the 1940s and 1950s, blood bank (allogeneic blood) transfusion developed into a safe modality and reduced the supposed need for autotransfusion. 6 In 1943, Arnold Griswold from Kentucky in the USA developed the first formal cell salvage autotransfusion device. Suctioned blood was collected in a bottle and then strained through a cheesecloth before being reinfused. This formed the basic principles on which modern cell salvage devices are designed today (without the centrifuge or washing step). Potential contaminants identified included bile, tissue fluid and bacteria. 58 Advocates and critics produced various reports from 1940 to 1960, identifying the clinical value and potential cost benefit of autotransfusion, 59 –63 but they also warned of concerns and potential complications. 59 , 64

A major development occurred in 1968 when Wilson and Taswell developed a machine to collect (through suction), process (through centrifuge to separate red blood cells and supernatant), wash (with saline 0.9% or Ringer’s lactate) and reinfuse blood continuously. 65 In 1969, Wilson et al. adapted the technique to include transurethral resection of the prostate (TURP) procedures and evaluated the properties of salvaged blood for the first time. 66 , 67 These authors did the first animal studies on a machine very familiar to us now, and in 1970, the Bentley Autotransfuser/autotransfusion system became commercially available. 67 Washed blood contained minimal clotting factors, and unwashed blood contained high amounts of fibrin degradation products. Therefore, a need arose to develop anticoagulation techniques, at first using heparin and thereafter citrate for local and systemic anticoagulation. 68 Collaboration between Dyer and Klebanoff enabled the development of a roller head pump and the cardiotomy reservoir, and Klebanoff’s autotransfuser was later used in Southeast Asia on ten patients. 67 The Bentley Autotransfuser, manufactured through Bentley Laboratories (Irvine, CA, USA), became the first commercially available device of its type, using simple filtration. 6

After the 1960s, technology developed towards specific formal autotransfusion devices, and investigators worked on solutions in the hope of overcoming previously identified risks such as air embolism and technical errors. There is a common thread through all transfusion literature. Technological development was fuelled by specific needs at each particular timepoint. Risks associated with ABT led to interest in ICS and, conversely, risks associated with ICS led to interest in ABT.

The autotransfusion technique used by Wilson and Taswell introduced the centrifuge and washing of lost blood during TURP for the first time, in addition to the filtration methods used during earlier cases. 69 Washing removed clots, platelets, drugs, free haemoglobin, cellular debris and clotting factors, and the resulting concentration of red blood cells reduced the risk of circulatory overload. 6

The scarcity of blood during emergencies and the known risks of allogeneic transfusion led, in the 1960s, to the development of a device used during traumatic haemothorax by Symbas et al. 70 Symbas’ technique collected blood from a traumatic haemothorax, through a 14 G needle or trocar, into a 480 mL container. Blood was anticoagulated with 120 mL ACD and reinfused using gravity. Concerns related to infection and the complicated nature of some autologous devices at the time were significant obstacles. 70

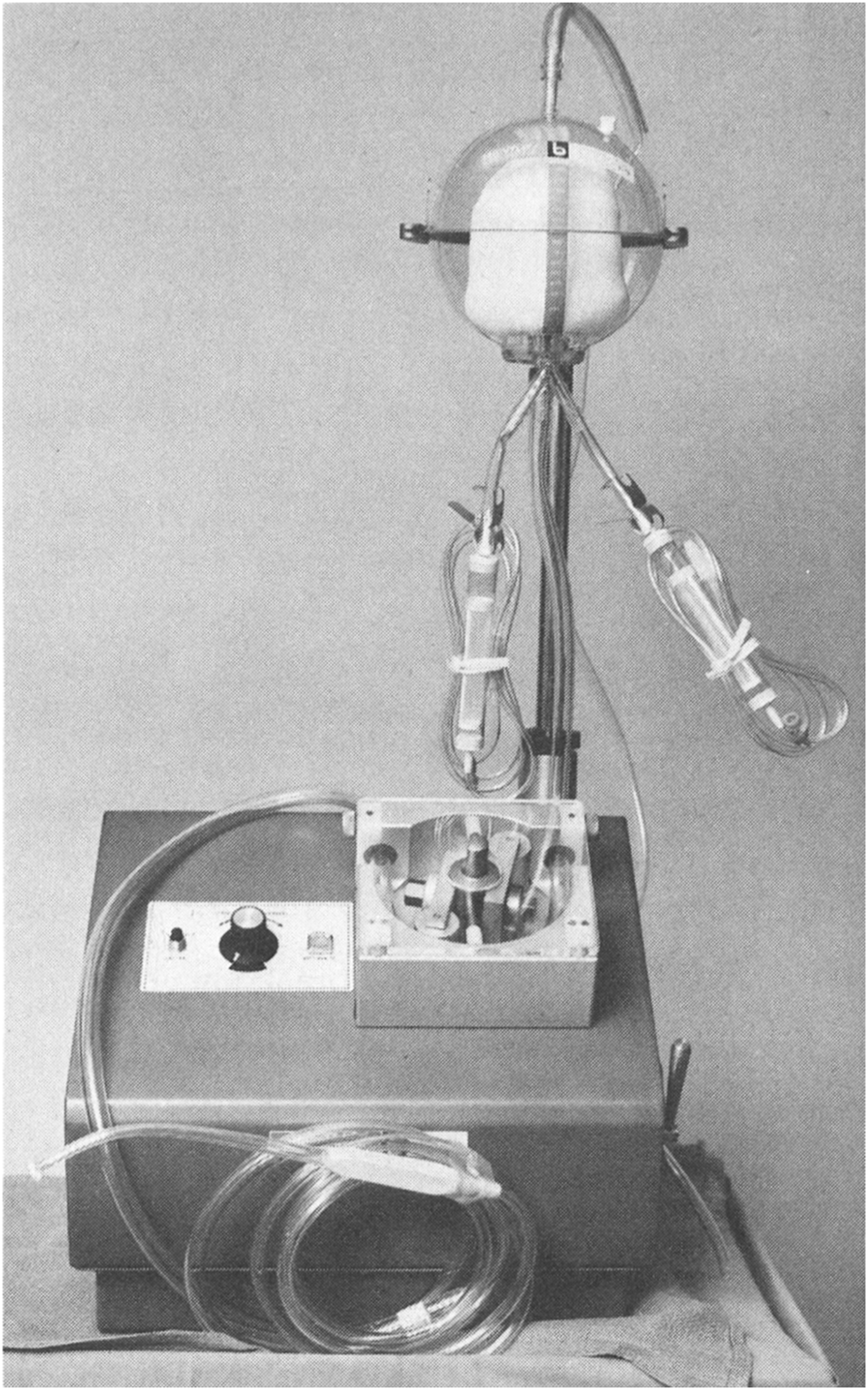

The Bentley autotransfusion system consisted of technology already in use by cardiac surgeons and included a Bentley cardiotomy reservoir (to collect blood) and a DeBakey roller head pump (to reinfuse the blood). The mechanism worked as follows: a roller-over-tube device produced negative pressure, and therefore suction, proximal to the roller. This suction pressure enabled aspiration of blood from the surgical field. A disposable reservoir with a 125 μ filter and pressure-relief tube enabled blood collection, and two infusion lines were connected. A pressure-relief valve overcame high-pressure situations, and a photoelectric device detected low-volume situations and reduced air embolism risk. The pressure generated distal to the roller pump enabled the reinfusion of collected blood. Unfortunately, this pressure mechanism also led to the many fatal air embolism events documented with this device at the time. 6 The Bentley autotransfusion system was highly operator-dependent, used citrate phosphate dextrose (CPD) as an anticoagulant and did not enable the processing of collected blood (Figure 4). 6 Further developments after 1975 reduced the risk of air embolism and allowed the production of red cell concentrates with the Sørenson autotransfusion system and the Haemonetics Cell Saver (Wilson, Watson-Williams and Gilcher). 6

Bentley autotransfusion system. Source: reproduced with permission from Wolters Kluwer. 56

Cardiac surgery became a frequent indication for the use of this technique. 6 The Sørenson autotransfusion system included suction and anticoagulation, and employed a rigid reservoir with a double-bag system where blood collected in the first bag filtered down (at 170 µ) into a second reinfusion bag and thereafter into the patient through gravity. This device was withdrawn by the Food and Drug Administration in the USA in 1976 due to the unpredictable rate of anticoagulant introduction through the ‘suction wand’. 6

The IBM Corp. (Armonk, NY, USA) blood cell processor was very similar to devices used today. Blood was collected with a Sørenson or standard cardiotomy reservoir, anticoagulated with heparin or CPD and drained into the processing section. There, air was removed, the blood was centrifuged, the supernatant was removed and saline was added to wash the cells. Wash cycles were repeated if significant debris was present. Uniquely, the same disposable bag was used to wash, centrifuge and reinfuse blood. 71 The Haemonetics Corp. system (Braintree, MA, USA) required an operator at the start and little assistance during processing (15 minutes), and collected blood via wall suction. Blood was anticoagulated and then centrifuged in a rigid Latham bowl. The supernatant was removed, and washed red cells were presented for reinfusion. 6 These specifications were so similar to devices used in modern autotransfusion that it seems little progress has been made since 1976. On the contrary, it seems that current devices require more monitoring and support.

Controversies related to ICS during obstetric surgery

Obstetric haemorrhage was of significant concern to Blundell in 1818 17 and is still a leading cause of direct maternal death in Australia and globally today (according to the Australian Institute of Health and Welfare). 72 A study in Australia and New Zealand (2010–2011) estimated 6.0/10,000 women who gave birth required a hysterectomy to control haemorrhage. 73

ICS was traditionally contraindicated during caesarean section 74 because of theoretical concerns that included the potential for amniotic fluid embolism syndrome, Rh immunisation and bacterial contamination. 75 Blood aspirated from the surgical field during ICS may contain amniotic fluid contaminants (foetal squamous cells, α-fetoprotein, lipid components, etc.) believed to be responsible for amniotic fluid embolism syndrome and the resulting disseminated intravascular coagulation (DIC). 75

In 1991, Thornhill et al. published an abstract at the American Society of Anesthesiologists conference (USA) where the potential of ICS to remove foetal products such as foetal squamous cells, lipids, amniotic fluid vernix, α-fetoprotein, trophoblasts, lanugo hair, vernix caseosa and tissue factor was assessed. It was, however, unclear exactly which of these markers or substances within amniotic fluid were the aetiological triggers for amniotic fluid embolism syndrome. 75

In 1998, Rebarber et al. published the first triple-centre cohort study comparing 139 obstetric patients undergoing caesarean section (one group received ICS, another ABT). They found no complications directly related to ICS, including no case of acute respiratory distress syndrome or amniotic fluid embolism, and identified no statistically significant difference between the study groups in the need for ventilator support, DIC or length of postoperative stay. 76

These authors were convinced that their study power was adequate to detect amniotic fluid embolism–related adverse events (mean incidence at the time 3/100,000) and infectious morbidity. 76 They also mentioned other in vitro studies (with no reinfusion) where the salvaged blood product was assessed: Thornhill studied α-fetoprotein and foetal cells, and Durand studied red cell structure and bacterial contamination. 76

Other potential benefits identified were the reduced risk of bacterial contamination and alloimmunisation, citrate and potassium toxicity, 2,3-diphosphoglycerate (2,3-DPG) deficiency and immunological complications. 76 The risk of Rh immunisation was an additional concern during the use of ICS in obstetric surgery. Foetomaternal haemorrhage and subsequent Rh immunisation may occur at any stage of pregnancy and delivery in Rh D–negative mothers with Rh D–positive foetuses if the maternal circulation is exposed to foetal red cells. Antibodies against the foetal red cells can potentially cause haemolytic disease of the newborn in subsequent pregnancies if left untreated. Consequently, all Rh-negative mothers of Rh-positive babies should have Kleihauer testing performed to assess the presence of foetomaternal haemorrhage after any at-risk event during pregnancy or following delivery. 77

Processing and filtering of ICS blood does not exclude this risk, as foetal red blood cells are present in salvaged blood.74,78 Mothers who receive cell salvage blood should therefore receive Kleihauer testing to evaluate the volume of red cells detected in the maternal circulation and to identify the required dose of subsequent anti-D immunoglobulin therapy to prevent alloimmunisation. 79

In 2000, Waters et al. published a landmark paper that provided in vitro evidence to dispute the theoretical concern that amniotic fluid embolism can be caused by ICS during obstetric surgery. These authors studied samples from 15 women undergoing caesarean section. The groups compared included unwashed blood from the surgical field (pre-wash), washed blood (post-wash), washed and filtered blood (post-filtration) and maternal central venous blood drawn from a femoral catheter at the time of placental separation. They assessed the squamous cell concentration, lamellar body count, quantitative bacterial colonisation, potassium level and foetal haemoglobin concentration and confirmed that ‘leukocyte depletion filtering of cell salvaged blood obtained from caesarean section significantly reduces particulate contaminants to a concentration equivalent to maternal venous blood’. 78

Waters et al. recommended the use of two suction devices, which are still commonly used: one to collect most of the amniotic fluid product, and the other thereafter to collect lost blood. The separation of amniotic fluid products and lost blood was recommended as an additional step to reduce the risk of contamination of the salvaged blood product with amniotic fluid-related factors. 78

In another study by Catling et al., a significant number of foetal squamous cells were found in the salvaged blood product after processing and filtering. 80 Waters et al. considered the possibility that a different leukocyte depletion filter was used: the Pall RC 100 filter by Catling et al. versus the Pall RS filter by Waters et al. The differences in the media of these two filters (fibre, fibre diameter, spacing between fibres, composition and charge) were considered to be the reason for the differences in foetal factors present in these two studies. 78

Landmark events during the development of intraoperative cell salvage.

The use of ICS during obstetric surgery was endorsed by the Confidential Enquiry into Maternal and Child Health (CEMACH) in the UK in 2007, 81 the Joint Association of Anaesthetists Great Britain & Ireland and Obstetric Anaesthetists Association (AAGBI/OAA) Guidelines in 2005, 82 the National Institute for Health and Care Excellence (NICE) in 2005, 83 within the guidance for the provision of intraoperative cell salvage document (Appendix V) 84 and the Australian National Patient Blood Management Guidelines, Module 5, Obstetrics and Maternity in 2015. 85 ICS use in obstetric surgery is therefore no longer contraindicated.

‘Filtering’ versus ‘processing’ development

In their 1982 article, Thurer and Hauer described ICS as being performed in one of two ways: either by collection, filtering and reinfusion or by collection, processing and reinfusion. 6 These principles still apply today. Processing occurred through the introduction of saline and repeated centrifugation. Anticoagulant was added to avoid initial clotting and was removed within the processing cycle. 6

Whole blood infusions (without processing) maintained the benefits of platelets and plasma and were faster and cheaper, but unfortunately they also retained debris, clots and other unwanted components of shed blood. Alternatively, when blood was processed into a red cell concentrate, debris, bone chips, cement, 86 irrigation solution, coagulants, platelets, free haemoglobin, fibrinogen degradation products, fat, plasma and activated factors were removed.6,87

Critical evaluation of technology at the time included confirmation of removal of these potentially harmful substances. 88 Haemolysis caused an increased plasma level of free haemoglobin, which processing reduced. Therefore, when surgical techniques were employed that increased the risk of haemolysis, processing became essential. 6 Processing itself, however, caused a degree of red cell trauma and subsequent haemolysis. 89 Newer technology caused less cell trauma and therefore improved the haematocrit and levels of 2,3-DPG, reduced the risk of free haemoglobin and renal failure, improved fibrinogen levels and reduced fibrinogen degradation products and therefore the risk of coagulopathy. 90 Advanced filter technology provided solutions to many of these concerns.54,67,69,91 Filter technology developed from the earlier use of steel wool and wash filtration,54,62,92,93 muslin gauze and sterile surgical sponges 69 to disposable sterile filters used in 1970 67 through to more advanced mesh filters 94 (similar to those used during the production of banked blood today).

In 1971, Patterson described a perceived benefit when using a polypropylene filter with a polyester fibre mesh and a pore size of ∼25 µ. Concern at the time related to microemboli impairing perfusion and causing subsequent neurological injury when standard 170 µ filters (during massive transfusion of banked blood) were used. 95 In 1973, Gervin recommended the use of a Dacron filter above a standard 40 µ grid filter for the removal of microaggregates, potentially harmful debris and non-functional platelets (potential microaggregates) during massive transfusion. No significant changes to the coagulation system (PTT, PT and TT) and no activation of the fibrinolytic system were identified. Having found that significant aggregate formation, which could lead to microembolism, occurred within two hours in fresh heparinised blood, the authors concluded that specific ultrapore filters would be applicable in most transfusion cases. 96 The type of material (Dacron, wool, polyester) and the size of the filter pore enabled variable removal of debris, platelets and microaggregates. 97 However, many filters used in modern autotransfusion technology, while removing a higher percentage of debris, bacteria and malignant cells, unfortunately also slow reinfusion significantly. 98

Thurer also commented that immunological consequences of transfusion, complement activation and pathways of ‘defibrinogenation’ were poorly understood and required more study. 6 Smith and Hauer reported safe transfusion of contaminated autotransfused blood and reduced clinical infections.99,100

ICS describes the technique where blood lost during surgery is captured, processed, filtered and reinfused at the time of surgery. ICS is beneficial in many ways. The fact that a patient can receive their own blood, which is immediately available within the theatre, may hold significant immunological benefit. Salvaged blood is fresh and therefore maintains membrane integrity and erythrocyte viability, beneficial levels of 2,3-DPG and ATP and physiological pH, and consequently offers improved oxygen-carrying capacity and tissue oxygen delivery.101–104

Conclusions

Developments in blood transfusion answered many questions, although many unanswered questions related to the best type of transfusion, technology, filters and the immunological consequences of allogeneic and autologous blood transfusion still remain.

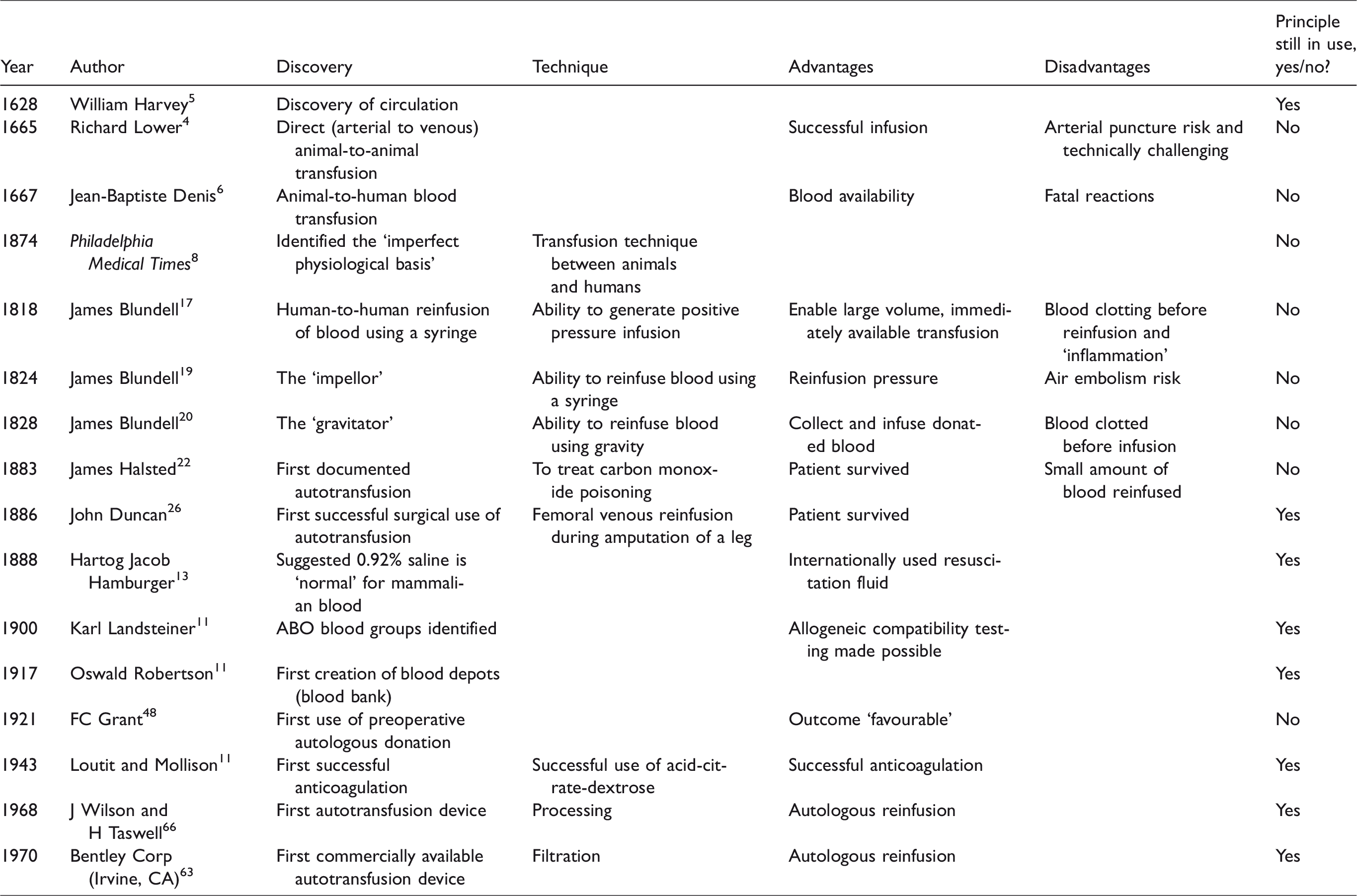

Throughout history, autologous transfusion developed when a specific need was identified or when supply-and-demand concerns or risks relevant to allogeneic transfusion demanded investigation. Anticoagulation was not understood in the 1800s, and equipment to enable safe blood transfusion did not exist. Developments in these areas thereafter enabled the growth of ABT and ICS (Table 1). However, with this increased use, the potential for adverse outcomes also became more apparent. When risks associated with allogeneic blood became of significant concern in the late 1900s, the idea of an ‘own blood’ transfusion avoided the later risks such as hepatitis and human immunodeficiency virus and made autologous transfusion favourable again. Research into the use of PAD, particularly to avoid the immunological consequences of ABT, increased but unfortunately led to some of the same concerns (e.g. incidents of the wrong blood being given to the wrong patient). This practice became unpopular again, resulting in a paucity of autologous blood transfusion studies. The significant potential described in the PAD literature, particularly to avoid the immunological consequences of ABT, may still be relevant for ICS and warrants future research.

Footnotes

Acknowledgements

The authors wish to thank the University of Queensland librarian, Mr Lars Eriksson.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship and/or publication of this article.