Abstract

Whether artificial intelligence might benefit human well-being in every sense is an open question. I consider it in the following essay, first putting to one side standard accounts of ‘official AI’, then deriving an ‘unofficial’ counterpart from the evidence of newspaper accounts and magazine features from ca. 1945–1965. Unsurprisingly, these demonstrate a bending of artificial intelligence to military and industrial purposes, hence the enormity of the impediment to therapeutic applications, but at the same time the evidence leaves no doubt as to the imaginative power of smart machines. Contemporary commentary is brought to bear to move from intimations of a dark future to possibilities for constructing a healthy practice. I conclude with two quite different 21st century examples of a way towards it.

Σειρῆνας μὲν πρῶτον ἀφίξεαι, αἵ ῥὰ τε πάντας ἀνθρώπους θέλγουσιν, ὅτις σφεας εἰσαφίκηται. First you will come upon the Sirens, who beguile all men who encounter them. Homer, Odyssey 12.39–40

Introduction

This essay explores the possibility of human well-being from dialogue with ‘smart machines’. 1 Before any probing can begin, however, the shadowy Juggernaut known as ‘AI’ looms, with assumptions, claims and obeisances that obscure questions we must ask. To discover these questions I turn away from the standard account(s) of artificial intelligence (or ‘official AI’) to clear a space for an unofficial counterpart. 2 This I draw from journalistic accounts of early machinic ‘brains’, ca. 1945 to 1965, and from them elicit an artificial intelligence avant la lettre. 3 The evidence of how ‘brains’ were used and thus presented to the public in that incunabular time makes for profoundly uncomfortable reading. I recall it in detail in order to unearth a source of imaginative power that despite its misuses, then and now, is at our disposal to do better. ‘Can we do better?’ is, however, a serious question; it is sharpened by physicist Freeman Dyson's warning from experience of ‘the technical arrogance that overcomes people when they see what they can do with their minds’. 4 With the troubled past and troubling present in view, I ask whether, in some configuration, an intelligence arte factum might lead to a beneficial relation in some form of dialogue with it.

To justify turning away from experts to journalists requires a longer and rougher path than the reader may expect. Much must be assumed. To ameliorate I offer a series of brief preludes. The first of these, on dialogic conversation, should make clear how ambitious my aim is. From that I move to an explanatory introduction of four ‘initial conditions’, as I call them, in order to place the technology in context, then to sketch the conceptual background to artificial intelligence in cybernetic mythology and the notion of human ‘interaction’ it imposed. The historical evidence follows in two parts, first on nuclear weaponry and then industrial automation. Three cultural productions of that time serve as commentary to help in extracting – rescuing, if you will, from the sinister past with which the smart machine was (and is) entangled 5 – the perilously ambiguous, premoral relation between ‘brains’ and those affected by them: threat and promise in one. Discussion of two experiments in robotics, one Buddhist, the other Hindu, comes at the end to suggest possibilities for developing this relation into a therapeutic human-machine dialogue, though at a distant, problematic horizon. Can an artificial intelligence in some form take a place alongside friends and human therapists? Can it serve analogically to the diviners, oracles, priests and shamans of the ‘distant sciences’ (Jardine 2021)? I will come back to them.

The wide-ranging theme within which this paper was developed was human ‘well-being’, or ‘what is non-instrumentally or ultimately good for a person’ (Crisp 2021). Whose ‘ultimate good’ is at stake, you may wonder? Who is included, who excluded by the recurrent ‘we’ in the discourse which follows? 6 No Everyman speaks here, but to begin somewhere I stand in for all persons, as limited as this will make the following small step in a far larger, more socially diverse inquiry.

Dialogic conversation

In instrumental terms the first step to be considered is a machine capable of talking back rather than, as now, parroting human discourse (Bender et al. 2021; Blackwell 2023, Chapter 4), although those two kinds of performance could prove a distinction without a difference, as we will see. Cautions of course immediately crowd in. First of all is the problem of meaningful communication, which for us has been affected – ‘sanatised’, Stuart Hall has written – by a theory of information that ignores ‘the fundamentally dirty, semiotic, semantic, discursive character of the media in their cultural dimensions’ (Hall 1989, 48) and obscures the essential reciprocity of dialogue, thus underplaying dialogue's significance. 7 Such is the first bar that must be cleared.

Dialogue is at the heart of the matter, but in conversation with machines? Many directions for research into the fungibility of humans and things follow, beginning with migration of the word ‘conversation’ from Latin into English, from familiarity gained by moving about (versor) among things and people, through figurative uses for ‘occupation or engagement with things’ to our current sense (OED s.v.). Then, inter alia, is the emergence of speech in children's engagement with ‘transitional objects’ (Winnicott [1971] 2005); human ‘dialogic thinking’ (Tomasello 2000 and 2019; Oleś et al. 2020); prayer and ritual veneration of idols (Gell 1998, 96–99) – and in Tanya Luhrmann's suggestive phrase from her observation of magicians and their tarot cards, the use of symbolic objects as ‘a “language” to talk to their unconscious’ (Luhrmann 1991, 232). For machines the telephone is our obvious example, far more so since it grew into the networked mobile phone. In the mid-1970s cognitive scientist Colin Cherry compared the kind he knew to the Delphic Oracle: ‘we walk toward this little black thing on the table, talk to it, listen to what it says. Our trust is really extraordinary. We consult the telephone’ (e.g. for the correct time) ‘much in the same way… (and) believe what the golden voice tells us – we trust it’ (1977, 123). (I will return to oracles, trust in them and in machines.) Furthermore, for some decades prior to that, telephony and artificial intelligence had evolved into an inseparable relation behind the scenes, particularly in the work of Claude Shannon from the late 1930s, which was brought to public attention in 1949 by Warren Weaver in an article on Shannon's mathematical theory of communication (Shannon 1948). Weaver offered it as response to his leading question, ‘How do men communicate, one with another?’ 8 – no surprise, as we will see, given cybernetics. Indeed, conversation turned up with ‘artificial intelligence’ before it had that name, for example, with Alan Turing's imitation game (Turing 1950).

Far more important here is what happens during conversation. The crucial matter came to the fore in 1966, when computer scientist Joseph Weizenbaum's simulation ELIZA made conversation with a computer available by keyboard. His declared intention was to demonstrate how conversational interaction could be achieved – and explained without mystery or handwaving – by ELIZA's ‘mere collection of procedures, each quite comprehensible’ (Weizenbaum 1966, 36). To his chagrin, ELIZA stimulated other ideas (to which his secretary's request for privacy with ELIZA first alerted him), 9 becoming in his view a vehicle for obsessive emotional involvement, basis for a profoundly mistaken psychiatric practice and, he wrote, an over-valued contribution to computer science. 10 Unwittingly, that is, Weizenbaum had uncovered the evocative capabilities of a machine with the potential to mirror its user (Weizenbaum 1972; Bassett 2019). He was disturbed by these capabilities. Mulling over the ‘pathological by-products’ of technology in a review of bioethicist Daniel Callahan's The Tyranny of Survival (1973), he added computer science to the domains in need of ‘“a system… which establishes the limits of technological aggressiveness… hope, and… mandates” – in short, the need for a science of limits… [S]uch a science’, he wrote, ‘can arise only in the light of an understanding of man's own limits as determined by his own paradoxical nature’. 11

Rather than suggest, with ‘paradoxical nature’, a static condition, I will use the term ‘psychodynamics’ in an older sense than is current in psychotherapy 12 to denote the interplay among psychological forces, both conscious and otherwise.

I will make more of Weizenbaum's reaction, arguing that all depends on what comes of intimacy with the smart machine, what we do with or make of it. Evidence suggests that at present such intimacy is difficult if not impossible to avoid, if unwittingly. Comfortable assumptions make seeing what we are seeing with very difficult, hence my historical search for clarity at a time when smart machines were just getting a grip on the public.

Initial conditions

To review, there are four of these: Polanyi's paradox; modelling intelligence and its anomalies; the deceptive rigour of binary data; and the engine of fiction.

Polanyi's paradox

My project might seem thwarted from the beginning by the gulf which separates the continuous world of experience from the hardwired ‘all-or-none’ template – 1 or 0, on or off, present or absent, yes or no – which binary circuitry imposes on input. 13 A sign of that gulf is that despite their brilliant successes with highly sophisticated problems, smart machines consistently fail to do well with tasks people find easy or that they perform without thought. David Autor has called this ‘Polanyi's paradox’ (2014, 8), from the philosopher's observation in The Tacit Dimension that ‘we can know more than we can tell’ (Polanyi 1966, 4, his emph.). Geoffrey Vickers put much the same as a question: ‘how the playing of a role differs from the application of rules which could and should be made explicit and compatible’ – that is, algorithmic; he called it ‘the major epistemological problem of our time’ (1971, 585). But to get anywhere with it, ‘the inquiry must be turned around’ (Wittgenstein 2009, 46 [§107f]): we must deal with the prevalent assumption that in principle the trajectory of artificial intelligence is convergent to identity with our own. For the epistemological wealth Vickers anticipated comes not so much from what we learn along the way about ourselves but, as I am about to argue, from developing and exploiting the divergent form of intelligence arising from that gulf. Autor has observed a clue: that in practical situations ‘tasks that cannot be substituted by computerisation are generally complemented by it’ (Autor 2014, 8).

Modelling intelligence and its anomalies

Consider this fundamental principle of the digital machine: that by design it requires a ‘model’ or set of algorithmic routines which at any given time defines the actions it can take and the objects it can affect within the constraints of hardware. This is what gives it enormous flexibility, its ‘general purpose’ nature (Forsythe 1969). But, as Brian Cantwell Smith observed in the context of unintended nuclear warfare, ‘models must abstract, in order to be useful’; in consequence, ‘the violence inherent in the model-world relationship… [means] that there is an inherent conflict between the power of analysis and conceptualization, on the one hand, and sensitivity to the infinite richness [of the world] on the other’ (Cantwell Smith 1985, 25).

Designing, developing and manipulating models, either of something that is well known (e.g. to find out more about it) or for an unknown result which satisfies certain conditions, is called ‘modelling’. 14 When pioneer computer scientist Marvin Minsky introduced the term ‘artificial intelligence’ to British researchers in 1958, 15 he seems to have had the latter in mind. The aim of his research programme (which he and a few others had begun three years previously) was, he said, to study an as yet incompletely known phenomenon, not tying ‘intelligence’ to specific human abilities beforehand but looking ‘for new and better ways of achieving performances that command, at the moment, our respect’ (Minsky 1959, 7). Soon after that, however, his ‘modelling for’ seems among his colleagues to have become ‘modelling of’. Turing had already made a start on defining benchmarks in behaviouristic terms with his imitation game. This was later followed, for example, by problem solving, then achievement of specific objectives. That is where we are now. 16

Forget or ignore Cantwell Smith's primary truth about models and one ends up trapped in the imitation game. As with all modelling, the danger is that the inevitable anomalies are discarded, ignored or go unseen when the model in play seems to be converging on observed reality. The temptation is to slide from an agile as if or might be to is, as happened early on (Stibitz 1950 and Gerard 1951, 11). Practically speaking, going for a ‘good-enough’ solution (Simon's ‘satisficing’, 1956, 136) is often the right thing to do, but always with the greatest alertness to potentially significant anomalies. In the digital humanities, anomalies have fared somewhat better: experience with digital encoding of source texts has led to recognition that calling them ‘residue’, as one might call minor difficulties to be tidied up, underplays their prime importance (McGann 2014, 94; McCarty 2012). They are Jerome McGann's biblical ‘hem of a quantum garment’ (2004, 201) or Stuart Kauffman's ‘unprestatable adjacent possible’ (2019, 125). 17

In brief, the take-home lesson is Marilyn Strathern's, from her commentary on Donna Haraway's cyborg: ‘The point is to see a difference. The question is the kind of connection one might conceive between entities that are made and reproduced in different ways – have different origins in that sense – but which work together’ (2004, 37).

The deceptive rigour of binary data

The machine's appearance of neutrality seems to follow from two things: the rigour of binary encoding enforced on input (McCarty 2022a, 111), noted earlier, and the deterministic nature of the machine (Johnson-Laird 1988, 44f). 18 Hence one might conclude that results of computation are necessarily ‘objective’ (Daston and Galison 2007). The fly in that ointment is human choice, which may be obvious in top-level decisions, hidden in those of the software engineering or by default in the available data. My point is that the objectivity digitalisation promises is a will-o’-the-wisp. The seriousness of the problem only came to wide attention when statistical techniques for pattern-recognition were applied to large corpora of text, or ‘Big Data’, derived from social media. 19

To put the matter succinctly, data, algorithms and so data-driven agents are in relation not to things but to a ‘knowledge’ of things, to how they have been construed. 20

Pointing to and opening up the problem of digital objectivity is important for another, perhaps surprising reason: it demonstrates that the mechanisms responsible for communicating prejudice are also capable of discovering a second-order intelligence in the vox populi. I will return to this point with Italo Calvino (§6.3).

The engine of fiction

I referred at the beginning to questions obscured by official AI. The first of these is simply, what is AI? The accounts people were given about smart machines in that incunabular time suggest an obvious response in the past tense: those accounts were beginnings of a nascent imaginary for artificial intelligence. 21 Now the continually elusive ‘there’ in computer scientists’ observations that ‘we’re not there yet’ (e.g. Levesque 2017, 131; Russell 2021b) suggests ever more clearly that the referent ‘there’ is not some location at a finite distance but is, in the proper sense, a horizon, that is, that which is ‘visible from a given point of view’ (OED s.v. 1.a). We can and must ask, what then follows from considering AI ‘a projection of the hopes and dreams of AI researchers’ (Jansen 2022, 4)? Beyond whatever achievements its products represent, what follows from considering them essentially provocation to further projections? What dangers attend this endless projecting? Attention then moves from what's coming next to assessment of the cycle (or spiral) of endlessly renewed expectations, teasingly almost fulfilled by yet another promise: hence a beckoning never-quite-presentness. 22 One further step brings us to the common futurity and interests AI shares with science fiction. 23

Unlike sci-fi, however, official AI operationalises the future it imagines – hence an obvious, ever-present caution: as we have seen, it does so on the basis of models which necessarily fall short of the infinitely more complex world they approximate. Cantwell Smith uses the near-miss of the North American Aerospace Defence Command (NORAD) on 5 October 1960 to illustrate the problem (Cantwell Smith 1985): on that occasion, the rising moon was mistaken by its systems for a Soviet missile attack on the United States and missiles almost launched. These systems are, of course, much improved, but ‘there will always be another moon-rise’, he reminds us. 24

For Isaac Asimov, science fiction became respectable when the ‘new reality’ of the atomic bomb showed ‘that many of the motifs… were now permanently part of the newspaper headlines’, hence his and others’ shift from distant futurism to ‘tomorrow fiction’: stories ‘that would deal with the things of tomorrow without becoming dated the day after tomorrow’. 25 Consider the current readership science fiction has found among the artificial intelligentsia (Dillon and Schaffer-Goddard 2023).

Cybernetic mythology and human ‘interaction’

Smart machines, first applications and plans for them during the incunabular period did not take hold only, perhaps not chiefly, because of these machines’ calculational, problem-solving prowess. On offer at the war's end was much more than something Faster than Thought (Bowden 1953). At the time brilliant nuclear physics punctuated history, but in technological terms the war was largely won with the crucial help of control engineering and its applications to anti-aircraft ‘fire control’ (Mindell 2002; Holland and Husbands 2011). Its focus on integrating gunners and their guns into an effective unit depended on and harkens back to the long history of interest in mechanical models of the human body and nervous system. All this preceded and prepared the ground for the publication of Norbert Wiener's Cybernetics, or Control and Communication in the Animal and the Machine in 1948, which inherited earlier ideas and techniques advanced in wartime research but gave them a name, a fully articulated theory and a public language. 26 Cybernetics was a best-seller despite its technical sophistication and ca. 70 pages of mathematics (Kline 2015, 69ff). Together with ambient ‘cyberspeak’ (Gerovich 2002, 51–55), the book prepared its readers to entertain the notion of the tool user or equipment operator ‘as an engineering system… [that] behaves as an intermittent connection servo’ (Craik 1947, 56), translating without much notice ‘the familiar operations of living’ into a kind of servomechanical ‘tracking operation’ like that developed for anti-aircraft systems (Philbrick 1946, 23f). 27

Such thinking was obviously not confined to American cybernetics, but I focus on American research rather than on the investigations of the quietly high-powered British Ratio Club (for which Craik was an inspiration and Turing a member) because of the considerable public influence the Americans had and, as we will see, their interdisciplinary reach well beyond the natural sciences. The Ratio Club had almost no such direct influence and focused its discussions on biological and neurological problems (Holland and Husbands 2011, 119–120).

The extent of Wiener's raw popularity as well as the influence of his ideas on American researchers, officials and public is difficult to overemphasise. Through his companion article in Scientific American (Wiener 1948b), his numerous lectures and public appearances, and the frequent attention paid to him as ‘celebrity scholar’ in newspapers and magazines (Rid 2016, 83), he became one of the most prominent scientists at a time when physics and, in its wake, the physical sciences enjoyed unprecedented status and public respect (Galison 1997, §4–5; Morus 2005). Across the disciplines, cybernetics seemed on course to fulfil Descartes’ dream of a mathesis universalis (Bowker 1993). Wiener's compelling way of thinking and talking, his comparisons of humans to machines, indeed his elision of their difference, entered and shaped the mainstream, as I have indicated. Thus Thomas Rid: cybernetics ‘hit a nerve’. 28

Rid's focus on the corporeal imagination of cybernetics underscores the whole-body, physiological aspects core to the ‘brain’-talk of the time (cf. von Neumann [1945] 1993; Wiener 1948a) – a strong corrective to the neuroscientific reduction of being to brain we are apt to read into that talk (Vidal and Ortega 2017). Cybernetics augmented postwar ‘giant brains’ with a theory and language which made it possible to talk effectively and openly at the highest levels of government and the military as well as in public about machines that could ‘actually think, calculate, make decisions on their own’. This was done not just in the manner of the older narratives but in a powerful new style with ‘for real’, physical, ‘giant’ correlatives (Rid 2016, 342ff). The story that became possible and dominant was mythological, transcending the limits of the actual with an authoritative, ‘fully experienced, innocent, and indisputable reality’ (Rid 2016, xiv-xvi; cf. Pias [2003] 2016), evident to the thus mythologically captured population in measurable progress and ever more impressive concrete results. The in-the-world ‘brain’ of iron and copper appealed to deeply held beliefs, desires and fears. Crucially, by transcending the present, the idea of it anchored itself ‘in the future or, to be more precise, in a shared yet vague imagination of the future – not too close and not too distant’ (Rid 2016, xv).

Claus Pias takes a different approach, in ‘The Age of Cybernetics’ ([2003] 2016) centring his account not on Wiener's book, ideas and persona but on the other ‘foundational document’ of cybernetics, the proceedings of the Macy Conferences (1946 to 1953), which began prior to that book but quickly identified with its programme (Pias [2003] 2016). The conferences gathered together an exceptionally wide interdisciplinary group comprising social scientists, anthropologists, physiologists, psychiatrists and psychologists, engineers, mathematicians, medical practitioners, logicians, linguists and one each from ecology, business administration, biophysics, physics, anatomy and literary criticism. 29 For the complicated discussions of these conferences to be publishable, Pias argues, they had to be heavily redacted from the ‘peculiar textual records’ of participants’ struggle to put nascent arguments across several disciplines into coherent form. ‘And in this regard alone’, he summarises, ‘the Macy Conferences were a poetic creation and an enormous aesthetic achievement’: they articulated ‘a single theory that could then claim validity for living organisms as well as machines, for economic as well as psychological processes, and for sociological as well as aesthetic phenomena’ (Pias [2003] 2016, 15). The common precondition across these theoretical domains was digitality: the human was thus ‘computationally constituted’ (16).

Cybernetics did not become Wiener's hoped-for universal science. The thoroughness of its assimilation is, I think, responsible for one's lukewarm reaction to Cybernetics today. Its imprint is with us still, however, in the strange richness of ‘relation’ that may surprise us, in the poverty of the interactive behaviour taken for the whole story. Drawing relation between people into parallel with the servomechanism central to cybernetic feedback 30 impoverished the former and made the latter enormously effective as an explanatory principle.

‘Interaction’ was the word fastened on in the earliest writings to describe the human-machine relation. 31 Current vernacular use of the term to describe how people ‘interact’ may suggest that it was co-opted for machines from human-to-human relations, but the history of the word tells a different story. It seems to have entered the language in the mid nineteenth century to denote forces, elements or abstractions acting on each other, 32 first in the physical sciences then by the turn of the century in the nascent social sciences. Mid-century, following on Wiener's cybernetics, J.C.R. Licklider's influential vision of a ‘symbiotic’ ‘development in cooperative interaction between men and electronic computers’ (Licklider 1960, 4) further eroded the difference between machine and person in official AI. Vernacular use of ‘interaction’ for relations among particular individuals (rather than social groups) appears to begin at about that time. 33 Particularly significant here is the influence cybernetics seems to have had on the scope of studies in ‘conversation’ during the period studied here, narrowing its focus on dialogue to the ‘talk in interaction’ of Conversation Analysis. 34

From a textbook anthropological perspective, AI occupies the space that property also occupies, so that a person would be said not to have a relation with an artificially intelligent thing, rather a relation to others with respect to it. 35 But (to borrow from Evelyn Fox Keller on the notion of artificial life) as the smart machine begins to take on or be given personal attributes, the question of ‘person’ becomes perhaps more ‘a historical question, answerable only in terms of the categories by which we as human actors choose to abide, the differences that we as human actors choose to honour, and not in logical, scientific, or technical terms’ (Fox Keller 2005, 221; cf. Hann 1998, 5). We can complain about imprecise language or blurring of boundaries, but I prefer to think, again, of ‘relation’ stretched to bring human and machine closer. This is not to ease resistance to that cybernetic reduction of the former to the latter but to open our eyes. It is a call to action.

The historical evidence

Newspapers and magazines from the incunabular period make it safe for us to infer that ordinary readers of the major news outlets thought of smart machines as ‘brains’, whether ‘electric’, ‘electronic’, ‘mechanical’ or ‘giant’. But ‘ordinary’ does not necessarily exclude the experts who worked with them, even if they knew better. When ‘at home’, reading the newspaper, watching television, talking with neighbours, helping the children program or build a digital computer from a kit, many of them would also have been casually ‘ordinary’ (Johnson 2003, Chapter 1). For them, regarding a technological object as a fascinating stimulus to the imagination, even bordering on the magical (let us say), is not to be dismissed out of hand: the dazzling performances of the intricate electronics that create and maintain binary signals are and were arte factum, hence potentially capable of manifesting the magical aspect of works of art Alfred Gell has argued is a core feature (Gell 1998). It may lurk, surfacing as no more than uneasiness, or it may be suddenly evoked, despite or precisely because of expert familiarity with the ‘virtuosity’ (Gell's word) of an object's construction. The impression an intricate object makes may be its primary truth, however momentary – but then kept in memory. 36 The experts were, and are, not immune from entertaining such truth and being affected by it.

Initially, we can assume a more or less open protocol, during which lack of detail, almost no meaningful public contact with smart machines and absence of satisfactory explanation left abundant room for imaginings. These were fed by reports of the machine's vaunted attributes: tireless performance, accuracy, dizzying speed of calculation and startling mnemonic capacity. As noted, the strong influence of cybernetics made identification of human with machine commonplace and ‘interaction’ a popular model for human relations. Subsequently, as the Cold War developed, confirmation of the ‘brainy’ power of smart machines came from reports of the near-Archimedean leverage it conferred, chiefly on research conforming to military priorities. Thus, especially in the United States, ‘the constitutively militarised practice of technoscience’ (Haraway 1994, 60ff), formed and galvanised by the imperatives of World War II, the Allied victory and spectacular demonstrations of nuclear power, was put to work in defence projects and automation – which, as current news reminds us, are ongoing. Hence Haraway's thirty-year-old call for philosophical action lives on: ‘to get at how worlds are made and unmade, in order to participate in the processes, in order to foster some forms of life and not others’ (Haraway 1994, 62; cf. Goodman 1978).

In other words, the historical study which follows has a contemporary objective: to answer Lenin's question for the present: ‘What is to be done?’ (Lenin [1902] 1966). I will return to it at the end.

I lean heavily on the evidence which follows. But we must ask: did British and North American citizens actually care? A Special Correspondent to The Times wrote in 1960, for example, that ‘the lay mind accepts these machines with the same uncomprehending phlegm that it accepts other electronic marvels’ (T:1960/08/04). Yet the continuation of the stories, sometimes given considerable emphasis, argues for their appeal. Experts certainly paid attention – and complained loudly, denouncing ‘brain’ in no uncertain terms, denying the threat that ‘brainy machines’ were said to pose. 37 They had a point, but their vocal opposition documents an obvious anxiety that ordinary citizens would take exaggerated claims and predictions seriously – as, indeed, happened. Experts did little to provide alternatives; their exaggerated internal talk, full of hope, tended to leak, as now, into the public sphere (nothing like an expert before the cameras to confer impact on a story). 38 Furthermore, some insiders were quick to realise how useful the evocative appeal of these ‘brains’ would be for promoting their own careers, for example, pioneer computer scientists Grace Hopper and her entrepreneurial friend Edmund Callis Berkeley. 39

Admittedly, these ‘brains’ were hardly in the foreground all the time or always on people's minds. But they never drop out of sight for long: the threats of the day kept them in the news, as we will see. Some of the reports attesting to technological advances ‘brains’ were known to be assisting, such as exploration of space, were clearly celebratory and must have been welcomed, but almost always far less cheery news links them to the Cold War military and industrial developments inseparable from them. 40 Nor is it a surprise to find magazine advertisements connoting the good life cheek by jowl with accounts of sinister possibilities involving ‘brains’. 41 Journalistic evidence leaves no doubt that smart machines brought much disturbingly into question, principally the singularity of human intelligence, and so of human identity, and the future of skilled labour (‘The Machines Are Taking Over: COMPUTERS OUTDO MAN AT HIS WORK – AND SOON MAY OUTTHINK HIM’, one prominent American magazine shouted in 1961 [L:1961/03/03]).

In short, the claims for the ‘brainy’ machine, from whatever source, whether prophetic, grossly exaggerated or just plainly wrong, completely believed, merely allowed to stand or vigorously denied, are for my purposes signal, not noise. Like other Cold War anxieties, this signal, however much in the background, was always there, modulating the news of whatever was affected, and undoubtedly affecting readers. As I said, the experts’ annoyance is of equal value to the wild and fearful claims, for it shows how prevalent the impression of machinic intelligence was and the extent of the concerns it raised. Whether by name, boasted attributes or both, in other words, these ‘brains’ thus attested to a publicly imagined artificial intelligence, my unofficial AI. Imagining such an intelligence was hardly new, nor the dreams and fears it stirred. Examples from the ancient Greek Talos to the Golem of Jewish folklore and Dr Frankenstein's monstrous creation suggest rather a reawakening of an old, persistent and disturbing possibility.

As the Cold War developed and hardened, ‘brains’ themselves receded into the background of public attention – but did not disappear. 42 Accounts of military and industrial applications contextualised the ‘brains’ behind them, suggesting complicity. What is particularly important is the figurative language of these accounts, not to be dismissed as ‘anthropomorphic’. (The charge thus named extinguishes interrelation, denies the erotic tug of a partial connection, retreats into the frigid isolation of objects.) Nearly everywhere, as I just suggested, are traces of Wiener's powerfully influential cybernetic vision, which unified disparate phenomena not only by equating animal and machine, and not just by interweaving the equation with perilous consequences of cybernetic technologies, but also by infusing a Manichaean ‘ontology of the enemy’ (Galison 1994). The net effect of this infusion, resonant with the massive worries of the Cold War, would certainly have darkened the way ordinary people perceived the cybernetic world-remaking then underway. 43

Brain-‘Brain’-bomb

‘It is trite to acknowledge’, political scientist Harold Lasswell noted in 1956, ‘that for years we have lived in the afterglow of a mushroom cloud and in the midst of an arms race of unprecedented gravity’. 44 We must work hard to bring back something of the experience to which he refers. That the Americans and Russians had ‘sufficient nuclear weaponry and delivery capacity to destroy each other and much of the rest of the world’ was a common belief (Erickson et al. 2013, 5–6), communicated by the popular press, in advisory government publications, broadcasts and films and in science fiction cinema. From the 1950s it was brought into American classrooms with the ‘Duck and Cover’ movie and prescribed classroom exercises. 45 In Great Britain, the Home Office issued pamphlets on civil defence against nuclear attack from the late 1950s. 46 One of these, Protect and Survive (1980), was movingly satirised by Raymond Briggs in When the Wind Blows (Briggs 1982). 47 Unabashed propaganda was directed by the U.S. government at the American public; it is captured, for example, by Peter Kuran in Trinity and Beyond: The Atom Bomb Movie (1995). 48

British and American citizens quickly became aware that ‘brains’ were complicit, especially with Edward Teller's advocacy of the thermonuclear bomb, 49 and with the highly publicised missile guidance and defence system SAGE (note 55). In Kuran's movie, Teller's emphatic justification (which recalls Dyson's warning) is especially telling: ‘At the end of the war, most people wanted to stop (hydrogen bomb research). I didn’t, because here was more knowledge… I was the one person who put knowledge and availability of knowledge above everything else’. 50

Two years after the war's end, New York Times Pulitzer journalist William Laurence brought the imagery of ‘brain’, bomb and human brain together in Dawn Over Zero: The Story of the Atom Bomb (1947), 51 in which he celebrated the ‘workable plan’ of ‘scientific brains’ whose outcome he had witnessed first-hand at Nagasaki on 9 August 1945, and prior to that, again first-hand, at the Trinity test-site (Hijiya 2000, 161–4), where the first atomic bomb was detonated. There, at Trinity, he recorded observer Dr Charles Thomas’ remark that the cloud ‘resembled a giant brain the convolutions of which were constantly changing’ (Laurence 1947, 162). Laurence concluded that ‘Only the future will tell whether it symbolised the collective brain that created it or the ultimate explosion of man's collective mind’. Brain and bomb were thus (to borrow from Dr Johnson's famous rebuke of Abraham Cowley's metaphysical poetry) ‘yoked by violence together’ in the metaphysics of nuclear warfare (Johnson 1781, 29), when poetic language was, in that intense moment, the only recourse the observers had in response to an experience that was ‘too far from the course of ordinary terrestrial experience’ for either silence or ordinary speech. 52

Before reaching Nagasaki, as an observer aboard a B-29 Superfortress, 53 Laurence reported his own cybernetic vision avant la lettre: the crew and machine became one, ‘a modern centaur… [all] miraculously synthesised before me into a new entity, of which the machine, with its maze of instruments and mechanical brains, was part of a whole’. 54 After publication of Wiener's Cybernetics the next year, many more instances of cybernetic imaginings come as no surprise, including Wiener's own unrestrained extrapolations, quoted for example in ‘The Thinking Machine’ cover story of the January 1950 issue of Time, ‘Can man build a superman?’ (TM:1950/01/23a). There Wiener describes the possibilities ‘with mingled alarm and triumph’ of the machine's ‘extraordinary resemblance to the human brain’, but asks why these machines ‘have no senses or ‘effectors’ (arms and legs)… (given) all sorts of artificial eyes, ears and fingertips… that may be hooked up’ to them (TM:1950/01/23b, likewise Berkeley, NYT:1950/11/19). Wiener's influence, or in any case the cybernetic tendency of mind he unleashed, is clear enough in the proposal for the first American air-defence system, formulated in 1950 by fellow MIT professor George Valley and colleagues on the Air Defense Systems Engineering Committee (ADSEC), commissioned for the purpose by the federal Scientific Advisory Board (Valley 1985). In a nutshell, the Committee's Final Report describes in these terms a hybrid ‘organism’ with coordinated animate and inanimate components, in effect a great military anthropomorph comprising men, mechanical effectors, detectors likened to human sensory organs and the communications apparatus or artificial ‘nervous system’ that would connect them. 55 The Report was read and promptly accepted as a reasonable, cogent and practical response to the mounting threat following detonation of the first Soviet nuclear bomb (Rid 2016, 73–82).

Cybernetic physiological imagery quickly became commonplace, for example in Admiral Lord Mountbatten's 1958 description of new radar equipment on board the H.M.S. Victorious, ‘the eyes, brain and central nervous system… [i]ntegrated with the directing intelligence of the human staff… [comprising] a device of almost fabulous performance’, 56 and, a decade after Wiener's prediction of ‘wholly automatic factories, with artificial brains keeping track of every process’ (TM:1950/01/23b), in Laurence's report on the ‘self-regulating machines’ responsible for ‘a Second Industrial Revolution’ (NYT:1960/07/17). These machines, Laurence wrote, had already automated labour and were ‘now in the process of mechanising the nervous system and the brain itself’. He cites a typically breathless report from a British journalist on computational speed, the prospect of these ‘brains’ memorising ‘all the knowledge in the world’ and their ability to help ‘breed machines’. 57 The reporter's enthusiasm is not merely naïve, not merely a belittlement of the human but hubristic in the full meaning of that term, especially the ‘thinking big’ beyond the limits of human existence. 58 Laurence goes on to quote Teller (again, public face of the hydrogen bomb) on machines’ potential to learn, make value judgments and reason logically, then Wiener on the ‘profound moral and technical consequences’ which could follow the outpacing of human thought by ‘brains’ (cf. Wiener 1960). 59 Note the pathological narrowing of the ‘thought’ that he thought would be outpaced.

As Rid has shown, Wiener's cybernetics made the kindred phenomena of ‘brain’, Bomb and automated workplace intelligible by fitting them into a narrative theory of all machines (cf. Ashby 1956). The cybernetic language of analogy and metaphor brought the otherwise bewildering inventions, even ‘that brilliant horror’, the thermonuclear Hell Bomb, 60 (barely) under conscious imaginative control and into relation with the body of the bewildered citizen, correlating ‘central nervous system’ to central nervous system. However conventional such language became, it did not fossilise, rather it communicated, inflected and sustained commonplace assumptions of the incunabular period and of the Cold War as a whole, often as an implicit presence (Rid 2016, 342). To borrow from the title of a recent study of Cold War research at RAND (Erickson et al. 2013), this was a time when ‘reason almost lost its mind’ under the influence of those who discussed, planned and simulated the unthinkable aftermath of nuclear war with the implemented aid of George Boole's ‘brainy’ logic. 61

Automation 62

In 1946, in the immediate aftermath of the war and two years before Wiener's book, what we might call the metaphorical and conceptual blueprint was already available for constructing the automated factory cybernetically (Noble 2011, Chapter 4). A basic sketch came out in November 1946 in two articles in Fortune magazine, ‘The Automatic Factory’ and ‘Machines Without Men’, showing what automation of industrial manufacture would look like. 63 The supposedly ‘realistic illustration of general principles’ depicted in the former article looks, however, rather more like cybernetic magical realism, such as ADSEC's: it depicts human body-parts for sight, smell, hearing, temperature and touch together with the mechanical organs of its ‘Electronic Brain and Nervous System’; a brain, shown in its skull, supervises. Four years later, Berkeley (of Giant Brains) declared that making the automated factory a reality was in progress: machines that ‘combine acting, sensing, and some degree of thinking – the ‘robot’ machines’ – are ‘on the way’, he wrote in The New York Times, with a lead photograph of IBM's literally spectacular Selective Sequence Electronic Calculator. The SSEC was sufficiently ‘giant’ to give visitors the sense of being inside it, as was repeatedly emphasised during the dedication ceremony in 1948. 64 More on the SSEC below.

As we know from Karel Čapek's R.U.R. (1920), robot imagery was already in circulation to communicate the subservience of workers to machines long before the ‘brains’ took over. Automation inherited imagery from earlier industrial mechanisation, 65 hence the giant robot looming before a frightened worker as he comes through the factory gates under dark, rainy skies in Leslie Illingworth's 1955 cartoon in Punch, which R. H. Macmillan used as frontispiece in Automation: Friend or Foe the next year (P:1955/06/29; TLS:1956/08/03). Overall, however, since other interests were in play, the message about automation was mixed (O’Mahoney et al. 1955; TUC 1956), as Macmillan's book goes on quickly to demonstrate. Some messages were ambiguous, such as one report from the mid-1950s, telling the reader that the ‘electronic brood’ had ‘already penetrated the familiar fields of commerce, industry, transportation and even education – and the promise of vastly greater penetration to come’ – suggesting invasion or personal violence (NYT:1955/01/23; cf. Cohn 1987, 693–695). Upbeat reports promise less back-breaking labour, more leisure, easier cooking and lighter housework (NYT:1958/01/01 and NYT:1960/06/19). The labour unions and their chiefs were cautious. Some of the news was unambiguously dark, reporting for example the work of many reduced to the work of few; the ‘human element’ soon to be replaced ‘by a general-purpose electronic computer’; and ‘a new kind of industrial magic… [whose] name is automation… Its ability to edit man out of the productive process’, the reader is told, ‘is an awesome thing to watch’. 66 Denials of fears and reassurances from management and government, however intentioned, supplement rather than contradict evidence of worries that jobs would be lost or degraded to machine-minding. 67 Two features in Life at the start of the 1960s sum up the situation – ‘Machines Are Taking Over’ (quoted above) and ‘The Point of No Return for Everybody’. 68 Again, messages are mixed, leading ‘from high hope to deep despondency’, depending on where one stood: with the managers or with the workers (Hoos 1961, 1).

The managers saw to transferal of their workers’ practical knowledge to the new machinery: a doubly laden process that involved not only what could be observed or described by the workers themselves but more fundamentally a translation ‘from one quality of knowing to another – from knowing that was sentient, embedded, and experience-based to knowing that was explicit and thus subject to rational analysis and perpetual reformulation’ (Zuboff 1988, 56). This latter quality also (as may be obvious) moved it closer to, if not into alignment with, the demands of the digital machine common to both automation and the physical sciences. During automation's first decades, workers underwent a change much like that of the sciences during the previous century: a ‘semiotic progression’, Fox Keller has argued, from ‘the embodied crafter, interpreter, and reporter of experiments’ to the scientific subject from which the knower had been erased (Fox Keller 1996, 418f). We have noted such erasure in the cybernetic translation of open-ended social action to servomechanical interaction based, again, on Wiener's equation of ‘the animal and the machine’. In all three is the idea of a closed autotelic system that bestows efficiency and power at the cost of losing or diminishing the sentient, embedded and experience-based untranslatable.

The cost to the factory workers was both existential and epistemic. On their behalf Zuboff asks of the machine, ‘what effect will it have upon the grounds of knowledge? What will it take “to know”?’ (Zuboff 1988, 57). This is not merely a theoretical question. Years later, recalling the origins of her earlier study, she places side by side Homer's description of exiled Odysseus ‘crying, shedding floods of tears upon/Calypso's island’ on the one hand, and on the other a young papermill manager's question, ‘Are we all going to be working for a smart machine, or will we have smart people around the machine?’ (Zuboff 2019, 1).

Commentary from the arts

To bring my evidence to a point: I have dwelt on the early military-industrial applications of smart machines to illuminate the imaginative power evoked by the latter. I have suggested the origins of this power, ‘at a premoral depth, at a point where value is still in statu nascendi’. Thus Bruno Schulz wrote in 1935, defining for his art the role I appeal to here for the smart machine: ‘to be a probe sunk into the nameless’, into a psychodynamic reservoir. 69 To see more of what this means I take up three imaginative productions contemporary with the beginnings of official AI to serve as commentary on it: a science fiction movie (1955); an artist's illustration from a magazine advertisement (1950); and a writer-journalist's lecture (1967). Then, in the penultimate section of this essay, I briefly examine two collaborative robotic artefacts of recent years for their suggestions of regenerative applications. There I attempt to follow Philippe Descola's advice (2014, 271), to inhabit, if only for a moment, other points of view than my own.

Forbidden Planet

Of the many responses to machinic intelligence from science fiction of the period, among the most useful in the present context is the movie Forbidden Planet (1955), modelled on Shakespeare's The Tempest (with Plato's tripartite soul in the background), mixing in pronounced Freudian influence with the overhanging threat from a thermonuclear Cold War. 70 Forbidden Planet depicts a machinic intelligence capable of accessing minds and materialising whatever it finds there.

In the movie, a crew of military explorers is sent to a distant planet to check on a vanished expedition. The explorers discover two survivors, a learned philologist, Dr Morbius (the Prospero), and his daughter Altaira (or ‘Alta’, his Miranda). Morbius tells the visitors about a once prodigiously intelligent but self-annihilated species, the Krell, who built for themselves, in a vast underground installation, an ‘ultimate machine… [that] for any purpose… [made possible] creation, manipulation by mere thought!’. Morbius shows the visitors ‘The batteries of thermo-nuclear reactors – the unleashed power of an exploding planetary system!’ (the script calls for ‘MULTIPLE BANKS OF ATOMIC FURNACES… glowing balefully blue’). Later it is revealed that when these beings fell asleep, their great machine materialised ‘mindless primitive… monsters from the Id’ – Freud's das Es (the It) 71 – which wiped them out in a single, horrific night. This same machine unleashes minor attacks on the visitors, then a major assault which almost destroys everyone. The comedic structure is marked by the death of Morbius, who thus surrenders the source of the advanced knowledge he has acquired; escape from the planet with destruction of the Krell's machine; and a wedding of the crew's captain to Alta. Correspondence to the historical facts of its time is heavy with the then only decade-old exclamations at Trinity that Laurence made public, especially Robert Oppenheimer's words translated spontaneously from the Bhagavad Gita: ‘I am become Death, the shatterer of worlds’ (note 52).

However comedic its structure, Forbidden Planet sounds a dark warning. The Krell's powerful AI is but a materialiser of ‘a psychic It’, as Freud wrote, which the members of the expedition carry back within them, ‘unknown and unconscious, upon which is superficially perched the I’ of their daily lives, ‘ein psychisches Es, unerkannt und unbewußt, diesem sitzt das Ich oberflächlich auf’ (Freud 1923, 25; cf. Gillison 1999, Liu 2010). Their nuclear detonation of the Krell's machine as they speed away thus does not solve the problem but slyly confirms it. That problem – we might even say the problem – is what a ‘science of limits’ would address. In the larger context of Science in the Forest, Science in the Past, I prefer to keep that ‘It’ unbewußt, that is, a question rather than a psychic theory, in Schulz's words (note 70), przedmoralnej… bezimienne (‘premoral… nameless’), in Calvino's phrase from ‘Cybernetics and Ghosts’, ‘the ocean of the unsayable’ ([1980] 1986a, 19). I would leave open the possibility that other, very different perspectives, such as Taylor and Vilaça discuss (this issue), would tell us more about my subject.

The Oracle on 57th Street

Whether he intended all we can see in it, artist Rolf Klep's commercial illustration, ‘The Oracle on 57th Street’, made a bold and influential claim to the oracular function of artificial intelligence in an inaugural public moment.

Klep's illustration appeared in a popular American magazine, the Saturday Evening Post, on 16 December 1950, prominently opposite the table of contents 72 (Figure 1). It depicts a giant Delphic Sibyl sitting atop IBM World Headquarters in central Manhattan, just as the ancient Greek Pythia sat over the fissure at Delphi from which issued the agent of her rapture. 73 Computer printout spills from her hands down to the people below her, who consult it. Klep was unquestionably alluding to Michelangelo's Delphica on the ceiling of the Sistine Chapel, 74 but close as the two depictions are, they contrast in important respects. Klep's Sibyl, like the ancient Pythia, sits above the source of her knowledge; in serene confidence, she reads her machinic scroll before it passes out of her hands to those who consult it below. In contrast, the Delphica's posture is dynamic, even agitated; lacking an identifiable source of divine knowledge (no fissure beneath her), she turns away from her scroll with a startled (I would say) apprehensive look. 75 Whatever she may be looking at, her movement away from the scroll and her expression contrast with the untroubled confidence of IBM's oracular presence. If Michelangelo's Delphica can be said to evoke the silencing of the ancient oracles (as well as their typological assimilation), Klep's Sibyl announces a technoscientific rival. Thus a Christian usurper is in turn usurped by a modern form of the ancient original.

The Selective Sequence Electronic Calculator at IBM World Headquarters, 1948–1952, depicted by Rolf Klep, The Saturday Evening Post, 16 December 1950. Reproduced from a scan of the magazine page by the author.

Beneath IBM's oracular figure, barely visible in cut-away, is a glimpse of the machinic source, the Selective Sequence Electronic Calculator, to which I referred earlier. On the orders of IBM President, Thomas J. Watson, the SSEC was installed on the ground floor of IBM World Headquarters in January 1948 to attract maximum public attention, and so to establish IBM as leader in the new business of computing machines. The SSEC was made freely accessible for scientific and governmental research and was open to visitors for four years; Manhattan pedestrians dubbed it ‘Poppa’. 76 It was visible ‘from the street, and hundreds of [passers-by] could watch the neon indicators that flashed whenever the computer was working. For years, this was the image conjured in people's minds when they thought about computers; an image reinforced by Hollywood's adoption of it in science fiction movies’. 77

Watson's strategic move was commercially brilliant; the machine had exactly the effect on the marketplace that he intended. Technologically, however, it was hardly innovative, hence was subsequently passed over by progress-minded engineers and has been given little notice by later historians or ignored altogether. 78 It is central to my story because, in Klep's allusive expansion of the machine's – all digital machines’ – imaginary, it communicates a powerful impression of the potential of which the actual machinic ‘brain’ was but a hint, projected (as is all computing technology implicitly) into a future unconstrained by present applications.

The oracular associations are hardly adventitious. A year before the illustration appeared, in a comment on the Manchester Mark I ‘mechanical brain’, Turing remarked in prophetic language, ‘this is only a foretaste of what is to come, and only the shadow of what is going to be’. 79 In his doctoral dissertation of 1938 he had already associated computing machinery with oracular prophecy, suggesting an ‘oracle machine’ as placeholder for ‘the mental ‘intuition’ of truths which are not established by following mechanical processes’ (Hodges 2013, 15–17; McCarty 2022a, 128f). Others subsequently make the same connection. 80 In The Electronic Oracle: Computer Models and Social Decisions (1985), for example, environmentalists Donella Meadows and Jennifer Robinson placed the machine in a history of ‘ingenious methods… to bridge the gap between imperfect knowledge of the past and necessary decisions about the future [starting with] the Delphic oracle of ancient Greece’. 81 Much earlier, in Walk East on Beacon (1952), a Cold War spy movie in which the SSEC has a brief appearance and pointedly a central role in missile guidance, a scientist on his way to the machine is warned that ‘if he's granted an audience, the calculator won’t stand for any trifling questions!’ (Jones 2016, 66). Years before that, describing his encounter with the Ultra in 1940, British intelligence officer Frederick Winterbotham wrote, ‘I was ushered with great solemnity into the shrine where stood a bronze-coloured column surmounted by a larger circular bronze-coloured face, like some Eastern Goddess who was destined to become the oracle of Bletchley, at least when she felt like it. She was an awesome piece of magic’. 82

The context is religious, in the dual sense of religio carried into English, of supernatural awe and of its constraining force on life and the living according to obligation, rule and ritual (cf. OLD and OED). It is also magical, as a commanding work of art, in Gell's sense.

Cybernetics and ghosts

When Italo Calvino delivered his lecture ‘Cybernetics and Ghosts’ in 1967, 83 he told his Italian audiences that their world was ‘increasingly looked upon as discrete rather than continuous’, undergoing transformation by ‘Electronic brains… capable of providing us with a convincing theoretical model for the most complex processes of our memory, our mental associations, our imagination, our conscience’ (Calvino [1980] 1986a; cf. Iuli 2014). His digital tropes were of their time, but his lecture remains valuable for its insight into the possibilities of envisioning the world digitally. Contemporary with him, the literary scholar Hugh Kenner had noted a persistent trend in imaginative Western literature of that period: to depict the world just so, as a mathematical ‘closed field, within which elements are combined according to specified laws’ (Kenner 1962, 599–600). In Calvino's cybernetic theory of literary composition, such combinatorics is fundamental to how the writer evokes meaning within the reader. A century prior to him, Ada Lovelace had glimpsed in Babbage's Analytical Engine the like possibility for the natural sciences and mathematics, that ‘in so distributing and combining the truths and the formulae… the relations and the nature of many subjects… are necessarily thrown into new lights, and more profoundly investigated’ (Menabrea 1843, 722).

Calvino drew on and cites an impressively wide range of sources to reconceive literature as just such ‘a combinatorial game’. 84 In the lecture he refers to anthropology (Lévi-Strauss), folklore studies (Propp), Russian Formalism (via Jakobson), computer science and mathematics (von Neumann, Shannon, Wiener, and Turing). 85 He brought a great deal more to ‘Cybernetics and Ghosts’, for example, involvement with the Oulipo, and so with the combinatorial mathematician Claude Berge (1971), and familiarity with Northrop Frye's literary theory and the importance of mythology in it. 86

The combinatorial literary game, he wrote, ‘pursues the possibilities implicit in its own material, independent of the personality of the poet… a game that at a certain point is invested with an unexpected meaning… [that] has slipped in from another level… The literature machine can perform all the permutations possible on a given material, but the poetic result will be the particular effect of one of these permutations on a man endowed with a consciousness and an unconscious, that is, an empirical and historical man. It will be the shock that occurs only if the writing machine is surrounded by the hidden ghosts of the individual and of his society’ (Calvino [1980] 1986a, 22).

What we have now with the latest chatbots is very different – chunks of language merely fetched and arranged according to habitual use – though this is not to say this approach to an artificial intelligence is wrong. But for an effective shock (a curative shock, I would think) we need to ask whether, and if so how, the mythology of a people is borne in ordinary words and language games. Generating readable bricolage is no longer a problem. Getting to the big problem apparently left untouched by the chatbots’ cybernetic model of interaction leads us to robotics.

Faith in machines

Minsky has described the holy grail of artificial intelligence research as ‘not some definite thing but only the momentary horizon of our ignorance about how minds might work’ (Minsky 1990, 214). I read Calvino's formidable image of the storyteller as an anticipatory response to Minsky, expanding the context of his characterisation of that grail from the abstract, isolated idea of ‘mind’ modelled mathematically by Turing (1937), neuro-technologically by McCulloch and Pitts (1943) and then electro-mechanically by von Neumann ([1945] 1993) and many others. Calvino's anthropological model suggests something quite different from theirs, closer to Gell's concretely ‘extended mind’. What if that mind were to be extended to automata? Hence the question of trust.

Trusting automata may seem highly problematic, especially given the ambient imagery of robotics, the history of automation and the current dangers of AI, with all of which we are all too familiar. But the larger, indeed ‘distant’ history of human–machine relations attested, for example, in the use of devices for communicating with the gods suggests that we already know how to trust machines. 88 With Greco-Roman divination, for example, we find trust put to the test in the melee of critics and believers. 89 A well-known example is provided by Alexander of Abonuteichos, prophet of his human-headed snake-god Glycon, savaged by the satirist Lucian, who brought detailed evidence against ‘The False Prophet’, accusing him of fraud and self-interested profiteering. Nevertheless, Alexander ‘was revered by thousands as a holy man… (His) cult endured for at least two centuries after his death’. 90 While acknowledging both foolishness among the faithful and the many dishonest, unreliable diviners, at the same time we should not ignore the very real debt ancient believers owed to their sustained faith in such figures, to its felicity at least (Lloyd 2020, Chapter 3). Personal reassurance of the gods’ concern and of their own place in an ultimately ordered world, 91 and the oppositional role of faith in ‘the invention of the category – the ancient Greek category – of the rational’ (Lloyd 1987, 2), must be factored in. Historically, positive relations with prophetic machinery have tended to arise in contexts of faith, in submissive relation to a power taken to be beyond the human. What does this tell us?

Faith is ‘The fulfilment of a trust or promise’ (OED s.v. 1), the consequence of a life under discipline that is sometimes or in some lives the ‘hard work’ (Boyer 2013) of ‘kindling the presence of invisible others’ (Luhrmann 2020) and sustaining it over time. The example of Weizenbaum's secretary suggests something rather different, however: an immediate, factually contrary acceptance of a primitive simulation she knew (Weizenbaum inferred) to be no more than that. What is going on when no more is needed than naïve surrender, or perhaps more usefully put in this and other instances, detachment from what one knows to be true?

Matei Candea and others have argued for such detachment to be in ‘real and effective symmetry’ with relation. Detachment, they argue, is ‘also a relation [and] every relation… also a disengagement from something else’ (Candea et al. 2015, 16). Finite, mortal existence defines it so. Hence the peril and promise of what we detach from – what we otherwise know or think to be true – when entering what I think of as the ‘charged relational field’ within which an artificial intelligence in my sense becomes possible.

Contact with the Unbewußte

I turn now to two robotic examples of embodied intelligence in that betweenness of relation: the Zen Buddhist bodhisattva, Mindar, built by Hiroshi Ishiguro of the Intelligent Robotics Laboratory (Osaka) for Gotō Tenshō and fellow priests of the Kodaiji temple (Kyoto); and Bappa, a ‘platform’ for the Hindu Ganesha designed and built by anthropologist Emmanuel Grimaud in collaboration with the artist Zaven Paré (Grimaud 2021). These are very differently conceived embodiments of (unofficial) artificial intelligence: in the first instance, directed by Japanese Buddhists for traditional Buddhist aims; in the second, designed by a French anthropologist for an experimental investigation of a Hindu religious phenomenon. Especially when considered together these robots make the obvious point that robotics is culturally situated with (from the perspective we have taken so far) strikingly different results – though, as I will suggest, not as different as may first seem. The implication I draw from both is that an artificially intelligent therapy is possible. Weizenbaum's underappreciated secretary's request for privacy was a first glimpse of it. Colby and others quickly began work on it, but they assumed the cybernetic template for the human. The potential I explore here remains underdeveloped in psychiatric therapy with its focus on official AI. 92

To do justice to the religious and technological contexts of Mindar and Bappa is not possible within the available space, so that what follows can be no more than suggestions for further work. Many other examples could be brought in, for example, from imaginative literature, Kazuo Ishiguro's Klara and the Sun (2021) and Steven Millhauser's ‘The New Automaton Theatre’ (1999), 93 and from the early work of artists, scientists and technologists (McCarty 2013, 40f). Mindar and Bappa are, however, exactly fit for purpose: they explicitly and diversely aim at ideals of human well-being.

Mindar

In Japan robots have a long history, and from the latter half of the 20th century, remarkable development, popularity and acceptance at all levels of Japanese society. 94

Of the numerous studies of their popularity, the most helpful for my purposes are those which focus on Buddhism, in particular Buddhist materiality, and include the history of prayer machines, or as Fabio Rambelli calls them, ‘Dharma devices’. 95

In his 2018 study Rambelli concludes that ‘the non-hermeneutical product of prayer machines – a mechanically generated interaction with the sacred, the effects of which are almost completely independent of direct human agency and will – is something that transcends any dualistic distinctions (because it makes them irrelevant); as such, it is perhaps the ultimate form of Buddhist prayer’ (Rambelli 2018, 73).

What can we learn from Mindar? In How Human is Human? The View from Robotics Research ([2011] 2020), Ishiguro describes many of his experiments probing the potential of communication with robots. 98

Mindar was designed more specifically to serve the objectives of Gotō Tenshō's Rinzai Zen Buddhism: not to represent but to be the bodhisattva Kannon, ‘a Buddha of compassion that heeds our prayers and helps facilitate their fulfilment’. 99

Baffelli explains: ‘Mindar's role exceeds that played by… earlier examples. Unlike Pepper the robot monk, who was devised to help during funerals, Mindar is not limited to being a support for priests during their regular ritual activities. Nor is Mindar merely an ingenious prayer-chanting device to attract visitors. Mindar is Kannon, a bodhisattva. Its role is therefore to create a particular experience for and emotional reactions in its viewers’ (Baffelli 2021, 253).

Bodhisattva Mindar. Reproduced by permission of 鷲峰山高台寺 Kodaiji Temple, Kyoto, Japan; image provided by 磯邊友美 Isobe Yumi.

In an introductory session to visitors in the Temple, Mindar asks (in Japanese), ‘So, what do you humans think you might realise through a conversation with a metallic, inorganic thing like me?’ Anthropologists White and Katsuno quote him, then comment on behalf of the priests: ‘From the point of view of our interlocutors, Mindar is neither a machine nor a simulacrum of a human but rather a bodhisattva who serves as a model for the transformation and liberation of both. In this radically expansive view of what counts as life – in a word, “emptiness” (kū) – affect is both the medium of experiencing life's fundamental nature as change and the method one adopts to get there’ (White and Katsuno 2022, 17).

Bappa

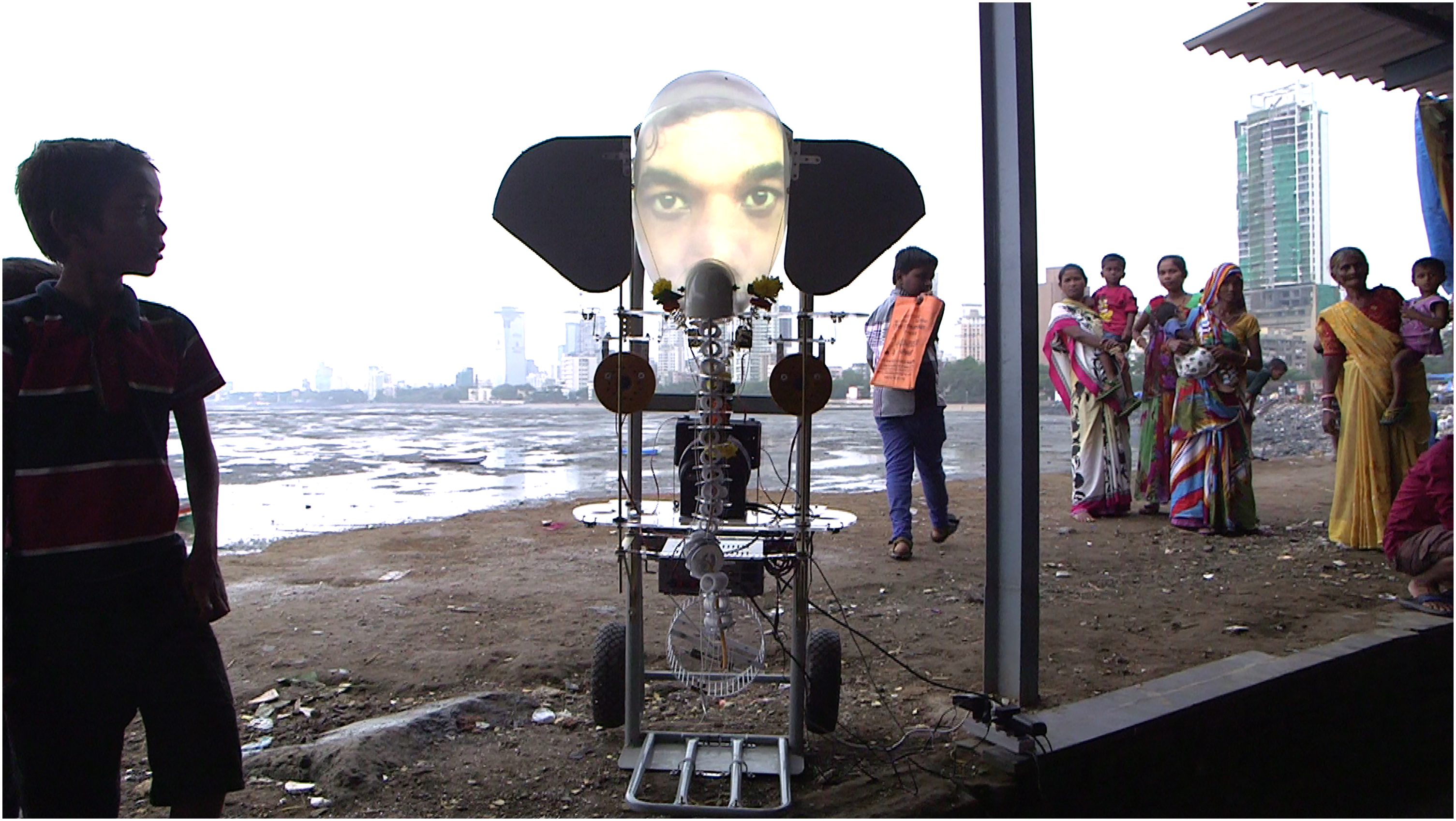

My second example is from Dieu point zéro: une anthropologie expérimentale (2021), in which anthropologist Emmanuel Grimaud presents an experiment he and artist Zaven Paré conducted on the tumultuous streets of Mumbai. 101

The centre of attention was Bappa, a semi-autonomous robotic contraption they designed and built in the image of the elephant-headed, half-anthropomorphic Hindu god Ganesha. Bappa comprises a trolley supporting a head with huge ears, a video screen for a face and a crude, randomly moving trunk.

Ganesha Bappa. Image and permission to reproduce from the photographer, Emmanuel Grimaud, 22/8/2022.

Throughout the experiment Bappa drew crowds of keen, sometimes determined, sometimes cautious, sometimes merely curious passers-by. Incarnants had to prove themselves by their responses, though interlocutors’ inclination or readiness to believe was not ultimately a problem – idols of Ganesha are the god. They are commonplace throughout India, found in uncountable numbers and varieties – but not with the ability to speak, not as a robot with a moving trunk. Keen and persistent interest from the crowd, given the chance actually to interrogate the god, sometimes demanding explanation for prayers not answered, was not difficult to sustain.

Ganesha in particular was an apt and obvious choice for exploring dialogue with the divine. He is Lord of Beginnings and Lord of Obstacles, immensely popular, central to Hindu life, 102 ‘the richness of Hindu soil’ providing an obvious opportunity for asking questions about the gods in their multiple, unpredictable, proliferating, prominently anthropomorphic incarnations. An experimental attitude towards the divine in Hinduism fits the context. 103 For the incarnants, Bappa's Game of Incarnation (le Jeu de l'Incarnation) was played out in engagements that repeatedly led them in their struggles to become the role, to be god for their interlocutors, towards the crisis of what Grimaud calls le point zéro, when ‘the incarnant capitulates, when he or she seems to have exhausted human capacities and all the preconceived ideas of God of which a human is capable’. 104 Here, Patrice Maniglier comments in his Préface to the book, is ‘a renegotiation of god with god’ between incarnant and interlocutor (Grimaud 2021, xxi). Transcripts of encounters show a shared ‘kinetics of suspicion… in varying forms’ of curiosity mixing with caution, mistrust with benevolence (Grimaud 2021, 145). The point of the zero-point was anything but resolution in a settled and secure belief, rather a transformation of it.

Two particularly relevant and closely related aspects of this complex experiment are brought out by comparison, first of its Game of Incarnation to Turing's imitation game; and then of its point zéro to Masahiro Mori's ‘uncanny valley’ (Grimaud 2021, 14–17; 150–2).

At the outset something like a Turing Test took place at each encounter, Grimaud comments, in each interlocutor's implicit question, with whom am I dealing? (Grimaud 2021, 16). But there the similarity ends. To conceive of the experiment as a Hindu Turing Test would have been to take a large ‘step back’ (Grimaud 2021, 93, 164). In the experiment, any such tendency was ‘subtly subverted’ by efforts on both sides to test the elasticity of the entity in its formation, to explore and to treasure its ambiguity rather than settle it (Grimaud 2021, 148). Of interest was not the machine's ability to deceive or the client's to be deceived but whether the machine could serve as a ‘metaphysical trap’ 105 for the ‘spectral possibilities’ (Grimaud 2021, 164). This is also my question for artificial intelligence research.

Hence Masahiro Mori's indication of the liminal, spectral effects of human-machine interaction, that which Grimaud calls the spectral symbiosis 106 that it invites, across the murky zone of oscillation, of attraction and repulsion and exchange of properties in the experiments with Bappa (Grimaud 2021, 150f). He points out that Mori's well-known theory of an ‘uncanny valley’, at the point when a robot's alien appearance and human likeness render it ontologically ambiguous (Mori [1970] 2012), is mistaken by most roboticists for a test that successful machines must pass. But in the view afforded by Grimaud's experiment (and in fact by the design of Mindar, as noted), this valley opens up the possibility of a ‘second threshold’, ‘an abyss that threatens any encounter with a mutant object’ (Grimaud 2021, 151). The spectral possibilities awakened at that (zero-) point are realised, Grimaud argues, by tapping into and expanding the shock of the uncanny.

Mori leaves this shock alone (as does Gotō Tenshō). Grimaud's interests (and my own) are on the surface very different from his: to push the reasoning further, to grasp the extent to which a smart machine might help us ‘to dig in the invisible to release the concrete’ (Grimaud 2021, 152). It is the way in which the struggle is enacted, its style, that distinguishes his from the Zen Buddhist's. As with Buddhist Mindar so also with Buddhist Mori: the crux is clearly indicated but offstage. Mindar embodies and is meant to further the meditative practice of Rinzai zazen – a rigorous, intensely quiet effort, concealed in meditation and the hard test of the koan, to ‘Awaken the mind without fixing it anywhere’ (Shidō Munan Zenji 1970, 114). But as Mindar says in presenting the Heart Sutra, the suffering which this practice entails, humans alone can do (Baffelli 2021, 254; White and Katsuno 2022, 113) – in silence and contemplation.

Does it make sense, then, to say that both Mindar and Bappa push beyond the human as we now understand that category of being, but in the Nietzschean sense of a state to be overcome? Could this happen within an emotional–cognitive–psychic relation forged with a machine which presents itself in deliberately mechanistic form but beckons via anthropomorphic resemblance? I appeal to further ‘experiments in the science of Satyagraha’ (note 103).

Conclusion

I return to Gell for his rescue of ‘idolatry’ from centuries of monotheistic aspersion so that we can put our respect for works of art on a par with the ‘obeisances… of the most committed idolater before his wooden god’ (Gell 1998, 97). Let us then extend the same to the smart machine: an idol in his sense, invested by skill with an intelligence mirroring the human, hence a mirroring obeisance: ‘by means of a mirror, in an enigma’ (1 Cor. 13:12). Centuries of mirror-magic (or ‘catoptromancy’) tell us that the god we might see in the idolised machine is not exactly ourselves but a potentially powerful, even dangerous relation. 107 Again, Schulz: ‘If art were merely to confirm what had already been established elsewhere, it would be superfluous. The role of art is to be a probe sunk into the nameless’ (Schulz [1935] 1998, 369f). Bappa and Mindar are not as distant as may seem, anything but exotic, rather the norm in perhaps unfamiliar form, rendered to suit the renderers. So also, I have argued, we invest like attention to the oracular and apocalyptic forms of unofficial AI and to the reactions of early witnesses to performances of the nuclear ‘gadget’. 108 The task before us is not to turn away but to do better, for the good, admittedly at our peril.

To ground the machine in such a religious attitude requires transference which for some of us, at least, takes no thought at all (again, Weizenbaum's secretary), but for others hard imaginative work. In particular, I suggest that in the smart machine's vaunted attributes of ubiquity (Weiser 1991) and universality (Turing 1937; Forsythe 1969) we can hear an echo of Alain de Lille's mediaeval centrum ubique circumferentia nusquam – God the infinite sphere with ‘centre everywhere, circumference nowhere’ (Harries 1975). This is not to make an unqualified lunge from the machinic to the divine, as came spontaneously to Winterbotham and others at the time. It is rather less and more than that. It is to argue for acts of idolatry that fit a constant tendency in the human–machine relation and bring with them rudiments of a possible dialogue with diverse, cross-cultural practices. I am suggesting that religious awe in all its forms, the commanding force of human art and the smart machine's ‘technological sublime’ (Marx 1964), inform each other and thereby point a way forward.

A serious impediment to this work is the causal linearity of ‘impact’ that has misdirected so many students of computing into chasing effects without first looking much closer to home. It is perhaps unsurprising that many, still in the shadow of the cybernetic servomechanism, its feedback principle and the sense of ‘interaction’ indebted to both, should look no further. A very different view is afforded by the dynamic, reflexive and developmental relation common to all tools, especially the rule-bound machine. 109 Realising this catoptric relation wakes us up to the machine's function as a ‘metaphysical trap’ (note 105), in this case to ensnare what I have called, quoting Freud, the Unbewußte. James Butler has recently commented in a review of Calvino's lecture that to get the combinatorics right is to ‘drop a depth charge in the unconscious’ (2023, 9), but surely this is the wrong metaphor. It is rather to open a door to it and hope to survive the ‘terrible revelation: a myth which must be recited in secret, in a secret place’ (Calvino [1980] 1986a, 22).

Hence Homer's story of Odysseus and the Sirens’ song in my epigraph: something that must be heard but only when bound to the mast. Apart from giving delight, the Sirens sit amidst the bones of Odysseus’ hapless predecessors, promising πλείονα εἰδώς, an excess of knowledge to those who hear them sing. Strathern has called it ‘too much ingenuity, an excess of human agency’ (this issue, p. 24); Dyson warned against it in starkest terms, as did Weizenbaum. Perhaps with Homer in mind, James Clerk Maxwell spoke in 1870 of the path to the ‘hidden and dimmer region where Thought weds Fact… through the very den of the metaphysician, strewed with the remains of former explorers, and abhorred by every man of science’ (Maxwell [1870] 1965, 216). Perhaps there can be another, better outcome than he envisaged – though not achieved by brushing aside Maxwell's warning casually and proceeding ‘serenely’ through that den, as Warren McCulloch confidently advised (McCulloch 1954, 18f). Venturing into that den alone, as presently equipped, is not recommended. We need to learn from how worlds have been made and unmade, elsewhere and at other times.

In conclusion let us consider once again the Delphic archetype for enquiry into serious matters: a hidden god; a liminal incarnant; priestly interpreters; and a suppliant who is left with an ambiguous if even intelligible utterance to be acted on or not. The stakes can be high. 110 Are we not in much the same situation, thus warned to question accounts from a priestly technological elite that now comfort, now frighten us, and so keep our attention and the funds flowing? Is not the take-home lesson that the science of artificial intelligence is too important to be left wholly to the scientists and technicians?