Abstract

Over the past two decades, a number of digital platforms have been developed with the aim of engaging citizens in scientific research projects. The success of these platforms depends in no small part on their ability to attract and retain participants, turning diffuse crowds of users into active and productive communities. This article investigates how the collectives of online citizen science are formed and governed, and identifies two ideal-types of government, based either on self-interest or on universal norms of science. Based on an ethnography of three citizen science platforms and a series of interviews with their managers, we show how different technologies – rhetorical, of the self, social, and ontological – can be diversely combined to configure these collectives. We suggest that the shift from individual projects to platforms is a defining moment for online citizen science, during which the technologies that sustain the collectives are standardized and automatized in ways that make the crowd appear to be a natural community.

‘Citizen science’ is an actor’s category that has been assertively taken up by the media and more recently by science funding and science policy agencies. It designates a wide range of practices, from citizens donating the processing power of their personal computer to perform scientific calculations (SETI@home from BOINC), to amateur naturalists collecting observational data about birds (eBird), to people analyzing light curve graphs in search of potential exoplanets (Planet Hunters from Zooniverse), to patients sharing quantified observations, symptoms, and experiences about their health (PatientsLikeMe), to biohackers attempting to produce insulin in a community laboratory (Counter Culture Labs).

Analysts of citizen science, who are often active promoters of the field, have attempted to make sense of such a diverse set of practices by asking, for example, what motivates the participants in citizen science projects (Jennett et al., 2014; Raddick et al., 2010), how power is distributed between professional and amateur participants (Bonney et al., 2009; Haklay, 2013; Shirk et al., 2012), how participation is shaped by the spaces in which amateur activities take place (Wiggins and Crowston, 2011) or how these activities embody different epistemic practices, each with a distinct genealogy (Strasser et al., 2018). This article introduces another analytic perspective to the study of citizen science by shifting the focus away from power, individual motivations, and the spaces and epistemic practices of citizen science, toward the constitution of participatory collectives. Throughout the article, we use the term ‘collective’ to refer to any kind of group that takes part in citizen science. In our usage, ‘collective’ is an abstract and general term that, unlike ‘community’, does not imply any a priori assumption regarding the nature and composition of the group, or the strength of its bonds. Although, as we show in the article, non-human actors certainly play a role in the constitution of the collectives of online citizen science, we are using the term to refer only to human collectives (Latour, 1993).

In our focus on the collectives engaged in the production of scientific knowledge in citizen science, we ask the questions: How are these collectives constituted and maintained? How are they portrayed and labeled? How are they governed? What kinds of social forms emerge out of citizen science? This study contributes not only to our understanding of citizen science but also, more generally, to our understanding of how crowds of individuals are transformed into online communities.

We explore these questions by looking at a specific, yet numerically important (involving several million participants) subset of citizen science – projects that take place exclusively online, are usually set up by mostly academic scientists and request participants to compute or analyze scientific data (Curtis, 2018; Holohan, 2013). Compared with other kinds of citizen science, such as ‘social movement-based citizen science’ (Ottinger, 2016), often focused on issues of environmental justice (Allen, 2003; Frickel, 2004; Kimura, 2016; Ottinger, 2014; Wylie, 2018) or on biomedical research (Callon and Rabeharisoa, 2007; Epstein, 1995), where the collectives take their starting point in pre-existing local communities that are strengthened and enlarged by the project, online citizen science aggregates individual volunteers who share a common interest but do not (yet) constitute a stable collective. When patient-based citizen science takes place primarily online, for example on the platform PatientsLikeMe, communities appear to emerge out of the very process of individual volunteers engaging in citizen science, rather than the opposite (Tempini, 2015). This also tends to be the case in hybrid contexts, for example in naturalist citizen science projects which do not rely on pre-existing communities, such as birders, but organize field activities for participants and also crucially depend on online databases (Cooper, 2016; Dickinson and Bonney, 2015; Pocock et al., 2017).

Instead of surveying all kinds of citizen science in order to produce yet another typology of it – this time in terms of the collectives engaged in, transformed by, and established through citizen science – we choose to focus on and compare three online citizen science projects – SETI@home, GalaxyZoo, EyeWire – for which the formation of durable collectives appears to be especially challenging and fragile. Indeed, such projects rarely draw on pre-existing, offline collectives such as local communities of amateur scientists (Tancoigne and Baudry, 2019). Instead, they gather what has been characterized as ‘crowds’ – a term which has been quite successful in ‘figuring the collective’ at play in the digital world since the 2010s (Kelty, 2012). Referring to both a large and diffuse mass of individuals, the crowd has recently been called on to represent the collectives of online citizen science; some authors now speak of ‘citizen crowd science’ or simply ‘crowd science’ (Franzoni and Sauermann, 2014; Scheliga et al., 2018).

In these distributed computing and crowdsourcing projects, scientific questions are broken down into repetitive micro-tasks that human and non-human crowds are asked to process. Unlike better-known crowdsourcing endeavors (Brabham, 2013), such as Amazon Mechanical Turk (Ellmer, 2015; Irani, 2015), participants on citizen science platforms receive no remuneration in exchange for the tasks they perform, raising the question of what sustains the collectives of online citizen science. This article shows how citizen science platforms enroll crowds of volunteers and turn them into active and industrious collectives, in the name of science. The crowd then takes on a new (ideal) form, that of the community, defined by stability, shared values, and self-organization. This article thus speaks more generally to the social construction of online communities in the digital age.

To show how such a process is achieved, the article presents case studies of three different citizen science projects and platforms, based on various types of sources: An ethnography/participant observation of the three projects/platforms, semi-structured interviews with the founders and managers of the projects, and an exploration of the literature from the fields of psychology and Human-Computer Interaction (HCI) that engages with the projects, a literature that we treat as a primary source. The three projects/platforms have different genealogies and they elicit different kinds of participation from their users.

The first project, SETI@home, was launched in 1999 at UC Berkeley and rapidly reached iconic status. The project uses the idle cycles of the participants’ computers to perform scientific calculations on astronomical radio signals, in the hope of finding signs of extraterrestrial intelligence. The process, known as ‘distributed computing’, consists of dividing large calculations into small tasks distributed among many computers. In the 1990s, thanks to the increasing speed of networks and popularity of personal computers, this approach was becoming a viable alternative to performing these calculations on a centralized supercomputer. In 2004, SETI@home was integrated into a broader platform for science-related distributed computing projects called BOINC (Berkeley Open Infrastructure for Network Computing). In 2020, the project entered a ‘hibernation’ phase, with no new tasks being distributed. The second project, GalaxyZoo, was launched in 2007 at Oxford University and has participants analyze and classify images of galaxies according to their shape. In 2009, it was integrated into the platform Zooniverse, which hosts other crowdsourcing projects in many fields of the natural sciences and the humanities. Finally, EyeWire was launched in 2012 at Princeton University, as a science game in which the users engage in complex 3D-mapping of neurons from 2D electron microscopic images. It is expected to form the basis of a platform for citizen science games about the brain, called Neo, yet to be launched.

To explore how the collectives of online citizen science are constituted and governed, we first present the three case studies in more detail, highlighting what kinds of user experience the projects/platforms offer to their participants, whether in terms of navigating the website, performing the tasks, reflecting on one’s activity or connecting with other users. We then build on these results in order to characterize, at the level of the participants, the different technologies that configure the social forms of citizen science, that is, that turn crowds into communities. These technologies can be diversely combined, but they ultimately point to two ideal-types of government, based either on self-interest or on shared norms (of science). Finally, moving to the level of the architecture and infrastructure of the collectives, we analyze the constant human and non-human mediation work involved in the technologies of crowd governing. We show how the shift from projects to platforms can be seen as a way of automatizing and, ultimately, invisibilizing this mediation work so as to make the crowd appear as a natural community.

Experiencing the interface

SETI@home/BOINC

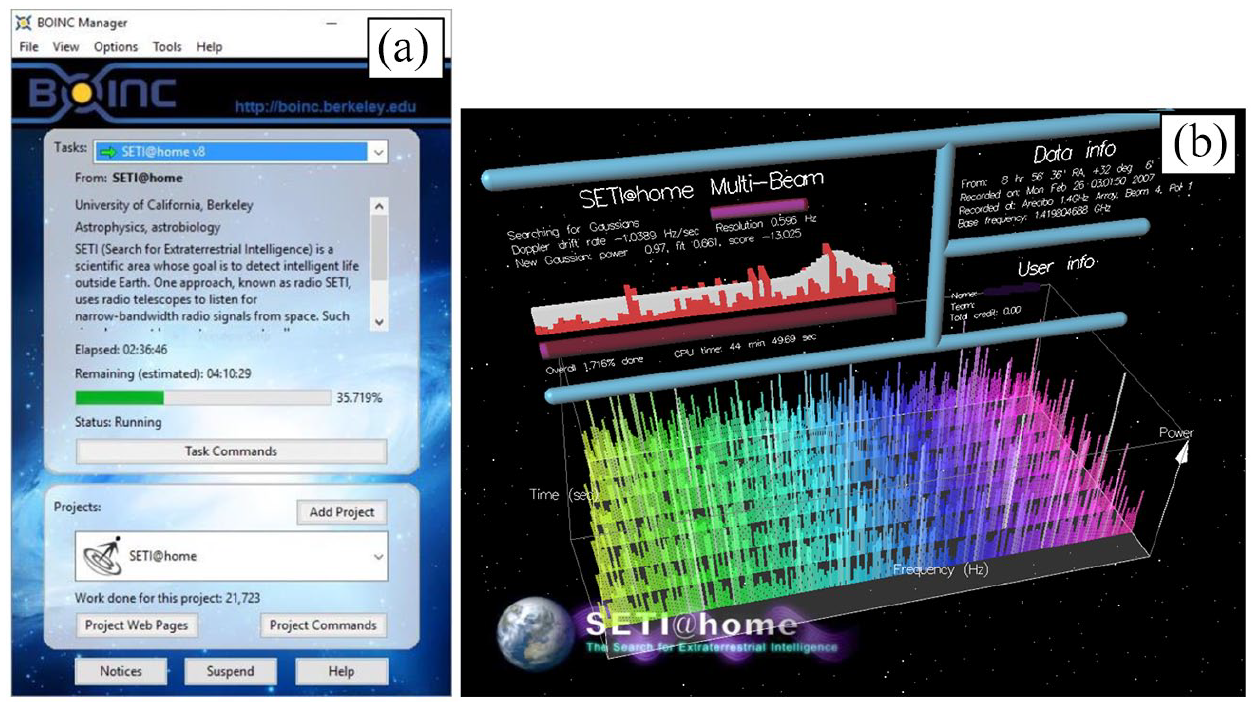

Welcome to <https://setiathome.berkeley.edu>! The website may look a bit old fashioned in 2021, but SETI@home still exists. ‘You can participate by running a free program that downloads and analyzes radio telescope data’. Once installed on your computer, this program, called BOINC manager, allows you to choose between different projects – some dedicated to astronomy (Milkyway@home), others to physics (LHC@home), biology (Rosetta@home), or climate science (Climateprediction.net). The interface of the BOINC manager is rather rudimentary (see Figure 1a); still, in addition to providing a short description of the project for which you are computing, it will display a bar showing your progress (35.719%, for now) in completing a ‘task’ or ‘work unit’. It will also remind you of the total ‘work’ you have dedicated to the project (21,723 in arbitrary units), information you can also access by looking at your account on the project website (where ‘work’ significantly becomes ‘credit’). External websites run by volunteers, such as BOINCstats, will also provide you with plenty of statistics on your activity. This information is public, so that you can easily compare your achievements to those of others. If you are in the top 1%, 10%, or 25% for recent average credits, you will also distinguish yourself with, respectively, gold, silver, and bronze ‘badges’.

Figure 1. (a) BOINC manager (running SETI@home) and (b) SETI@home screensaver.
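The badge rule described above (gold, silver, and bronze for the top 1%, 10%, and 25% of recent average credit) amounts to a simple percentile cutoff. The following Python sketch is purely illustrative: the function name `badge_for`, the input list of credits, and the handling of ties are our assumptions, not BOINC's actual implementation.

```python
from typing import Optional, Sequence

# Cutoffs taken from the description in the text: top 1%, 10%, 25%.
# The data structure itself is a hypothetical illustration.
BADGE_TIERS = [(0.01, "gold"), (0.10, "silver"), (0.25, "bronze")]


def badge_for(user_credit: float, all_credits: Sequence[float]) -> Optional[str]:
    """Return the badge a user would earn, or None if outside the top 25%.

    `all_credits` holds the recent average credit of every participant,
    including the user being ranked (an assumption made for this sketch).
    """
    if not all_credits:
        return None
    # Share of participants who have strictly more credit than this user.
    better = sum(1 for credit in all_credits if credit > user_credit)
    top_fraction = better / len(all_credits)
    # Tiers are ordered from most to least exclusive; the first match wins.
    for cutoff, badge in BADGE_TIERS:
        if top_fraction < cutoff:
            return badge
    return None
```

Because the ranking is relative, a participant's badge can change without any change in their own activity, simply because others compute more or less.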

Of course, you might find the BOINC manager rather austere to watch, and if you have something else to do on your computer, that is fine; your computer has plenty of processing power to handle your emails and look for extraterrestrial beings. But you can also leave your computer and go about other business. In that case, an eye-catching screensaver will automatically launch after a few moments of inactivity, offering a visualization of the data your computer is analyzing right now (see Figure 1b).

But as the menu of the SETI@home website makes clear, the BOINC experience is not just about ‘crunching’, it is also about being part of a community. Forums or ‘message boards’ will allow you to communicate with other participants. You may also want them to know more about your ‘backgrounds and opinions’ by filling out a public profile. You can even upload a picture of yourself! If you are lucky, you might be featured as the ‘user of the day’ and end up on the website’s main page. Last but not least, you will be able to compute and compete not only individually, but also in teams, which can be national (SETI.USA), linked to a specific school (UC Berkeley), company (Boeing), institution (US Navy), or be subject-specific (Raccoon Lovers).

GalaxyZoo/Zooniverse

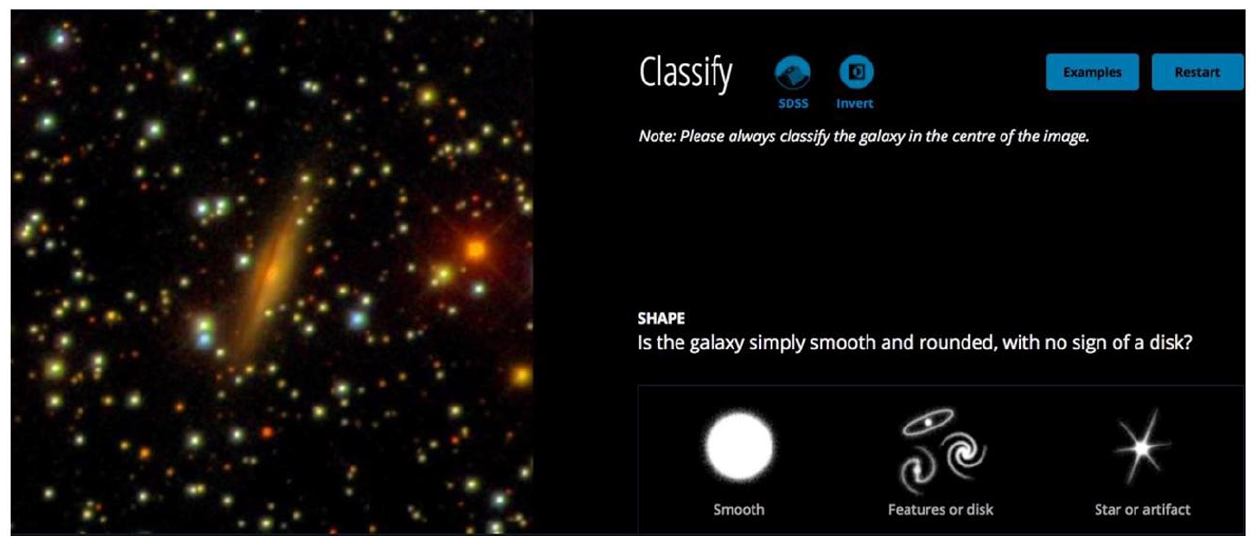

What if you, instead of your computer, were to perform scientific tasks and help to advance science? On Zooniverse, a ‘people-powered research’ platform, you will be able to choose between many different projects that allow you to analyze scientific data, generally in the form of images or texts. You will classify galaxies (GalaxyZoo), search for exoplanets (Planet Hunters), transcribe nineteenth-century ship’s logs (Old Weather) or identify animals in millions of camera trap images (Snapshot Serengeti). Each project offers a distinct interface, but the process is always the same. Let us try GalaxyZoo (see Figure 2). What appears on your computer or smartphone screen is an image featuring at least one galaxy in the center; your mission is to classify this galaxy, with the help of a series of questions that ask you to specify and further refine your choice. If the galaxy is ‘simply smooth’, would you say that it is ‘completely round’, ‘cigar shaped’ or in between? Do you see something odd, such as a ring or a merger? And so forth. Apparently, you are better at this pattern recognition task than some of the most powerful computers on earth (at least for now, because your classifications are being used to train AI algorithms further).

Figure 2. GalaxyZoo: Classifying galaxies.

On Zooniverse, unlike BOINC, there is no need to register if you just want to make classifications. However, if you do register, you will be able to ‘receive credit for your work’ and keep track of how many classifications you have completed. Contrary to BOINC, this information will be kept private; you will not be able to compare your achievements to others. Feedback is quantitative, not qualitative: You will learn how many classifications you have performed, but not how well you performed them (which can be known only a posteriori, when enough classifications of the same question have been made to allow for comparison and computation of the ‘right’ answer). However, you are always offered the opportunity, through the ‘Talk’ function, to discuss an image with your fellow classifiers. On this social space, with their help, you might even make a big discovery, as did the Dutch schoolteacher Hanny van Arkel, who observed in 2007 a quasar ionization echo now known as ‘Hanny’s Voorwerp’ (Lintott et al., 2009; Nielsen, 2012: 130–133). Importantly, you can only perform tasks individually, not as part of a team, even if from time to time Zooniverse will advertise short-term collective goals, such as ‘Let’s collectively classify at least X galaxies in the next Y hours!’
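The idea that the ‘right’ answer can be computed only once enough independent classifications of the same image are available can be sketched as a plurality vote with a minimum number of votes. This is a minimal illustration under stated assumptions: the function name `consensus_label` and the thresholds `min_votes` and `min_agreement` are hypothetical, and the sketch does not describe Zooniverse's actual aggregation method.

```python
from collections import Counter
from typing import Optional, Sequence


def consensus_label(
    votes: Sequence[str],
    min_votes: int = 5,          # hypothetical threshold, not from the source
    min_agreement: float = 0.6,  # hypothetical threshold, not from the source
) -> Optional[str]:
    """Return the consensus answer for one image, or None if undecided.

    `votes` holds the independent answers given by different volunteers
    to the same question about the same image.
    """
    # Too few classifications: no answer can be computed yet.
    if len(votes) < min_votes:
        return None
    # Most frequent answer and the number of volunteers who gave it.
    label, count = Counter(votes).most_common(1)[0]
    # Accept the answer only if a sufficient share of volunteers agree.
    if count / len(votes) >= min_agreement:
        return label
    return None
```

A scheme of this kind only works if each vote is made independently, which is consistent with discussion being kept on a separate page from the classification task.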

EyeWire

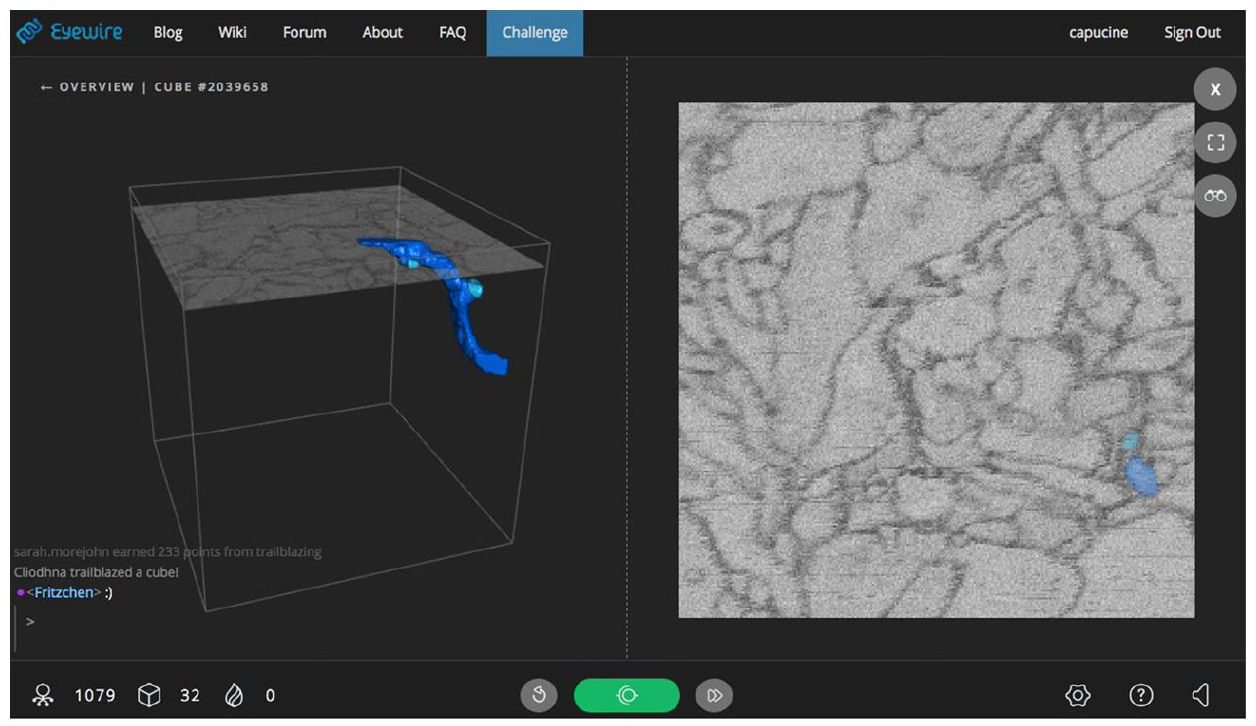

Tired of classifying galaxies? Try a game! On EyeWire, ‘a game to map the brain’, you will be spared the sight of austere scientific images and complex graphs. Instead, you will directly act by reconstructing models of neurons in the form of 3D puzzles. A meditative soundtrack will help you relax while you are playing. A short tutorial will show you how to navigate the virtual ‘cube’, an arbitrary delimitation of a brain, with the black-and-white electron microscope section of the neuron at the right side of the screen; all you will need to do is to color inside a gray outline of a neuron branch, and it will generate volumetric reconstructions (see Figure 3). Branch by branch, you will gradually map an entire cube.

Figure 3. EyeWire: 3D mapping of neurons.

Worried that the tasks are going to be repetitive? Well, not at all! On EyeWire, everything – from cubes to players – is organized in terms of levels, so that playing the game assiduously will give you access to new ‘challenges’ and ‘powers’. Once you’ve passed the tutorial, cubes will be ‘artifacts’, and then, if you go on performing well, ‘relics’, and you’ll be able to ‘trailblaze’ cubes (i.e. be the first person to trace a cube). Your performance or ‘stats’ will be closely monitored (both in terms of points and ‘accuracy’ – how well you traced a cube) and made publicly available, so that everyone can compare – and compete! Users can also enter temporary teams and participate in collective competitions (e.g. Stripes vs Spots in June 2020). Communication spaces are also important: Alongside the traditional forum, an in-game chat will allow you to discuss with other players online, asking them for advice and support (or anything else). Finally, a regularly updated blog, run by the EyeWire HQ and featuring illustrations inspired by sci-fi and heroic fantasy, will keep you on track with the latest developments in the game.

Interfaces

By describing the interfaces of these three platforms in terms of the general experience of the participant (navigating the platform, performing tasks, measuring one’s activity, and connecting to others), we have in mind a sociology of interfaces that goes beyond the vernacular understanding of the term or even the conception articulated in the HCI literature. In the field of HCI, interface usually refers to the graphical user interface – the carefully designed features, tools, and buttons that either express content or provide a set of instructions for action (Garrett, 2000). This understanding of the interface is task-oriented, focused on feedback loops and aimed at maximizing efficiency. In this mechanistic vision of the interface, the participant is cast as a ‘user’ rather than a subject (Drucker, 2014: 142). In this context, a key concept is that of ‘affordance’, originally introduced by ecological psychologist James Gibson to describe the relations between individuals and the environment through what it ‘provides and furnishes’ (Gibson, 1979: 127). Gibson used the term to develop a theory of perception in which animals and humans do not perceive the environment as such, but rather through its affordances, the possibilities for action it may offer. In the field of HCI, the meaning of the term has often been restricted (Bucher and Helmond, 2018) to the ‘perceived affordances’ (Norman, 1990) that designers build into their artifacts in order to configure (Woolgar, 1990) and determine the action possibilities of the users. At best, the interface is seen as a space where a two-step mediation takes place – between, first, an apparatus that seeks to frame and to constrain the behavior of a pre-defined user and, second, a participant who interprets and plays with the action possibilities offered by the apparatus (Badouard, 2014; Mackay et al., 2000). 
Following Hookway (2014: 12–15), the interface of HCI might better be called a ‘surface’ (as in inter*face*), rather than a relation between things (as in *inter*face).

Instead of seeing interfaces as things or as a surface, the literature in media studies has understood them, for example, as ‘autonomous zones of activity’ (Galloway, 2012: vii) or ‘form[s] of relation’ (Hookway, 2014: 2). For Hookway, the qualities of an interface lie not in a specific device or feature but in the relation between distinct entities that, at the same time, circumscribe the interface (interface as in ‘between faces’) and are shaped by the interface (interface as in ‘facing between’), producing in the end a ‘supplementation or augmentation of agency’ (Hookway, 2014: 17). Defined as ‘the point of juncture between different bodies’, interfaces ‘describe, hide, and condition the asymmetry between the elements conjoined’ (Cramer and Fuller, 2008: 150). Building on this conceptualization of the interface, we propose to analyze the interfaces of our three citizen science projects/platforms as relational spaces where not only the relations between humans and machines are (re)made, but also where the very nature of the human (collectives) and machines is produced. Such a research program partly reconnects with the original, fundamentally relational notion of affordance, but goes beyond in that it acknowledges that the digital environment of platforms is much more dynamic and malleable than the natural environment studied by Gibson (Bucher and Helmond, 2018) and that ‘users’ do not preexist their presence and interactions on the interface.

From crowd to community: Four technologies

If interfaces are less things – well-designed arrangements of features such as buttons, images and hyperlinks – than a relational space, then their functions should be redefined as well. Instead of studying how interfaces are made to catch, hold, and capitalize on the attention of pre-existing (or imagined) individual users (Citton, 2014; Lanham, 2006), we propose to examine how interfaces manufacture the social or, more precisely, how, depending on what types of relations are produced inside them, interfaces create, stabilize, reproduce and govern different collectives. We distinguish between four ‘technologies’ that produce the relations inside the interface: Rhetorical technologies, technologies of the self, social technologies, and ontological technologies. We use this distinction as a useful analytical tool; however, in reality, each technology embeds the others and they generally co-exist. By using the term ‘technology’, we do not mean to imply that these technologies are fundamentally technical (Shapin, 1984; Shapin and Schaffer, 1985), nor that they are inescapably top-down, designed by some to shape the behavior of others (although, of course, agency is usually not equally distributed among actors). Rather, we use the term ‘technology’ in order to show how online collectives are an artifact of the discursive, personal, social, and ontological relations between humans and machines inside the interface.

Rhetorical technologies

Communities are never just ‘taxonomic’ (the community as made of members who have similarities in the mind of the classifier) or ‘relational’ (the community as made of interrelations between members in reality) (Harré, 1981), they are also ‘rhetorical’: They exist by being ‘invoked, represented, presupposed’ in ‘discursive projection[s]’ and ‘rhetorical construct[s]’ (Miller, 1994). In our three online citizen science projects, rhetorical technologies help to define the basic entities inside the interface by ascribing fixed meanings to them.

One first venue for this discursive work is the project description page, where the project presents itself to the outside world. Zooniverse, for example, purports to be a ‘platform’ for ‘people-powered research’, that is, an open space for ‘people [to] come together’ and ‘work together’. Once they have joined the platform, those people are neither referred to as ‘users’ nor as ‘participants’; rather, they are systematically characterized as ‘volunteers’. There is much at stake in these labeling processes; in particular, framing a gathering of actors as coherent or harmonious is crucial to the creation of a durable collective. This can be realized by appealing to a common moral purpose that transcends (Douglas, 1986) the social heterogeneity of the crowd. In the case of Zooniverse, actors are ‘volunteers’, meaning that they are all driven by a disinterested desire to help (science). In the project EyeWire, it is not shared altruism, but exalted curiosity which is put forward to cement the community. A visual from the EyeWire website accomplishes the same rhetorical effect as the ‘volunteer’ label in Zooniverse. The cartoon represents a group of ostensibly diverse people, in terms of gender, age, ethnicity, and professional background, gathering around a young-looking woman (wearing glasses) who is using a computer on which the EyeWire logo is affixed. For all their diversity, the participants are unified through the ascription of a common cognitive property: The caption reads, ‘gamers * students * teachers * scientists WE ARE WONDERERS’ (EyeWire, 2020).

Another rhetorical technology through which the relations inside the interfaces are shaped is the creation and diffusion of fictional personae who embody the defining characteristics of the collective. The function of these personae is internal to the collective insofar as they offer members of the collectives a means of common self-identification, but it is also external, in that they act as rhetorical spokespersons for the collective, thereby doing important boundary work between the inside and the outside. One example is EyeWire’s ‘Heroes of Neuroscience’, a group of seven personae whose design is heavily indebted to space opera, heroic fantasy and gaming (EyeWire, 2016). The role that these personae play in policing the boundary between users and non-users is clearly acknowledged by one of our interviewees (who are managers and founders of the projects/platforms):

I love the EyeWire heroes!! They’re my favorite! So these are the EyeWire heroes. They’re the heroes of neuroscience. […] We want the players to feel like they are… like you know, they’re a superhero amongst, you know, the people who are not, you know, doing neuroscience. [#E1]

Here the rhetorical personae, the ‘heroes of neuroscience’, not only help to materialize the boundary between users and non-users, or between the collective and the outside, but also act as a reminder of the internal structure of the collective. By inviting comparison between the different kinds of users, they contribute to shaping the self of each individual user.

Technologies of the self

Technologies of the self (Foucault, 1988) are indeed pervasive in online projects and platforms, such as those devoted to citizen science examined here. Centered on the individual, these technologies shape how the user thinks about themselves and their activity. One such technology is the metrics the user can employ to measure their performance. For example, on Zooniverse, each user, after logging in, can see how many classifications they have done, but since this information is deliberately kept private, the user cannot compare their achievements with those of others:

We have a credit system. We get credits to people, that’s important … but we don’t turn that into points and we don’t label you at that point. [#Z1]

We’re very pro getting people their own stats. People are very interested in those stats, and when we see them they say, I want to know everything, I want to know how many classifications I have done, how much time I’ve spent on the site … But they’re not that interested to know how they compare to the other volunteers. [#Z2]

Those two interviewees address a contentious issue in crowdsourcing, including citizen science projects and platforms. Once metrics are made public, they act less as individual feedback mechanisms than as a collective credit system that organizes competition between the members of the collective through leaderboards and contests. Such technologies can strongly shape the behavior of users, as is suggested by the satirical website ‘Carolyn’s Clinic’, which opened as early as 1999 to treat ‘Setiholics’ through ‘treatment programs’ for, among other problems, ‘low self-esteem due to a low standing in the SETI@home statistics’ (Ehlen, 2000). Equally revealing, some organizers fear that if competition becomes a motive for participation, users will attempt to game the system to maximize their score rather than data quality.

Points, badges, differentiated roles, and statuses are technologies of the self insofar as they purport to shape not only the behavior, but also the identity of the user as individual, in comparison with the other individuals in the collective. BOINC projects routinely deliver badges to individual users and teams for a number of different accomplishments (total credit, length of participation, number of message-board posts), and these badges are sometimes displayed publicly by users, for example through banners embedded in forum messages, as marks of distinction and of authority which show how seriously some of the users take their participation in the platform. While no such technology exists in Zooniverse, EyeWire, in contrast, is an even more stratified community than BOINC, with users divided between normal ‘users’, ‘scouts’, ‘scythes’ (and, recently, ‘mystics’). Out of approximately 200,000 registered users, only 200 are scythes. Scouts and scythes not only have a higher status – ‘in glowing turquoise armor, Scythes are EyeWire’s most powerful players next to Admins’, according to the EyeWire blog (EyeWire, 2014) – they also play distinct roles in the project and enjoy a whole different user experience. While regular users complete cubes, scouts have access to a ‘heat map’ through which they can flag problematic cubes, and scythes review and correct the cubes that have been flagged. In the end, this stratification acts both as a division of labor and a social hierarchy based on distinction and past achievements.

Social technologies

In addition to rhetorical technologies and technologies of the self, social technologies help to build and sustain the collective. Social technologies include tools that allow communication to flow easily between users, such as message boards, private messages, or chats. In all three projects, message boards and chats are in general very active, with conversations spanning a wide range of topics which are often unrelated to science. In Zooniverse, the ‘Talk’ section of the platform has been carefully crafted in order to foster a sense of community, without endangering the classification activity which needs to remain individual for data quality purposes. ‘Talk’ allows users to discuss their classifications, but only after they have been made, and on another webpage:

I think without that discussion area there isn’t really a community. Because without this discussion area we deliberately keep people separate. Because we want them to make an individual classification, we don’t want them to be influenced by anything that anyone else is saying. So, by definition, without Talk the Zooniverse isn’t a community. [#Z2]

Chats, as found on countless online platforms, by contrast, do not separate individual activity from communication and therefore play an important role as coordination tools. They are used by individuals while they are performing their tasks, which, in effect, become products of the collective. In EyeWire, the interface integrates a chat section, overlaid on the task page, that can be used during the completion of a cube; the collective is constructed not only through communication but through common action:

[Showing laptop’s screen] So right now the players are talking in Scouts, which is a private channel for the ranked players. And so they’re coordinating what cell they’re going to go and work on. [#E1]

Here, communication tools are supplemented by coordination tools, such as spreadsheets, collective goals or special contests between teams, which are either made up for the contest, as in EyeWire, or more permanent. In BOINC, each team typically forms a sub-community, with its own message boards, website, and events. Some of these teams are now more than twenty years old and have been very active agents of socialization among participants, for example by organizing offline get-together events. In the words of one interviewee, these ‘social mechanisms’ allow ‘participation’ to move beyond the software and the interface to become ‘an ongoing activity’ [#B3].

These different implementations of social technologies reflect contrasting views on the nature of the community and its role in the process of knowledge production. In the first one, exemplified by Zooniverse (Kasperowski and Hillman, 2018; Rohden et al., 2019), the community is a discursive space defined by shared norms and values, a place where the exchange of private utterances has the potential to produce new, or better, knowledge: When the first project was launched, there was a discussion forum. And so instead of just getting people to classify galaxies on their own, they were able to talk to their fellow volunteers. And just through that, within a few weeks, two discoveries were made. [#Z2]

In the second view, represented by EyeWire or BOINC, the community is first and foremost a community of action, a place where divergent actors and interests are held together toward a single goal through coordination mechanisms rather than shared norms and values. Competition plays a substantial role in this view of the community; paradoxically, the more competition, the more community, as is revealed by this chat exchange initiated for us by an EyeWire manager during an interview:

<user1> my first science puzzle thingy was foldit, then i moved on to projects from zooniverse
<administrator> nice!
<user1> but neither had any community, really, and zooniverse refused to show public stats because they believed they shouldn’t turn things into a competition, as it might skew results
<user1> naturally, i disagreed
<user1> i mean, i dont care for points, but the competition factor does help
<user1> i dont think folding@home, or any BOINC project would be as popular as it is, were it not for points
<administrator> wow, so interesting. you are stimulating discussion here!
<user3> Accuracy! i want accuracy! :p
<user4> pointz! dem pointz!
<user2> yeah, thats the only value i care about!
<user5> well, i am not a competing person but when i win Happy Hour I am happy
<user5> and those badges….
<user3> think points are good for the competition aspect, cause it’s a game after all
<user3> and badges yeah
<user3> i’m on a rush for one right now…
<user4> achievements + competitions + promotions = fun!!!
<user3> better than smoking!

The individuals on EyeWire form a community because they all compete within the same interface and in the same game.

Ontological technologies

Finally, what we call ontological technologies are technologies that help shape and define what kind of world the user is thrown into when they accomplish online tasks and participate in a project. In citizen science projects, such technologies must tackle the difficult question of data representation. How should scientific data be presented to the user? Should the user live and act in a world where the scientific nature of the data at hand is emphasized, or, on the contrary, invisibilized, and translated into a more mundane ontology? For example, the Zooniverse platform prides itself on hosting projects that are true to science – that do not misrepresent their data, as austere as they may be, to make them more appealing to users: We tell science teams not to worry about whether their image is engaging or whether it will be interesting. We tell them to put up the thing that will give them the most useful classifications for their science case. [#Z1]

This issue is particularly salient for BOINC. Since the task is accomplished by the computer, both the data and the workflow (the processing of the task) are largely invisible to the user. In a sense, nothing seems to be happening, and this situation is a deterrent to long-term, ‘ongoing participation’. One way of working around this issue has been the introduction of screensavers, which do not merely illustrate, but faithfully represent the task and scientific data being processed: In Einstein@home, they have this neat deal where they show you a sphere, and that sphere has a bunch of constellations on it, and so the idea is, in the center of that sphere would be Earth, and if you’re looking up you would see all these constellations, and … there would be this little orange dot that appears at different places at different times in that sphere, and that’s the area of the sky your BOINC client is currently looking at, to determine if it’s found a binary pulsar. … For all the big projects, I would say that what you see on the screen is what your computer is currently processing. They do try to make that happen. [#B2]

EyeWire has a very different view on what world should be offered to users – and how science and scientific data should fare inside that world. Reflecting on an earlier version of EyeWire, one interviewee derided the scientists’ naivety in taking their world for granted: You could tell it had been written by researchers. [laughs] Like, what’s a ganglion, what’s a synaptic bouton? the name of the neurons were like gc3125, you know. [#E2]

Because SETI@home and EyeWire draw some of their inspiration not only from academic science but also from, respectively, science fiction (the graphics of the original SETI@home screensaver were modeled on the computer consoles of the early 1990s TV show Star Trek: The Next Generation) and gaming, both projects have seen a blossoming of fan art, in the form of illustrations, poems, and even music – showing that participants can indeed be captivated by the virtual worlds offered in online citizen science. With ontological technologies, these citizen science projects and platforms compose different worlds based on different understandings of efficiency and scalability, of the public and their desires, and of the meaning of the scientific endeavor.

At bottom, these different ways and technologies of world-making (Goodman, 1978) are linked to divergent ideas about the social and about what constitutes relevant motives for action – ultimately pointing toward two ideal-typical ways of governing the crowd. Zooniverse’s world of scientific facts and data is premised on the belief that science is, in itself, a prestigious, dignified, and worthwhile activity, and that, accordingly, users go to Zooniverse because they want to contribute to science and to the advancement of knowledge.

There are two aims of Zooniverse: One is to get science done and the other is to change how people think about science. I think it’s very hard to do the latter, if you’re disguising it. [#Z1] The original call of the Zooniverse project is to help progress science and to get through the data faster than ever before, by employing a crowd. And that’s the goal, and people realize that. And they come on and like, if I submit these classifications, I’m helping researchers go through their data. If you make a game, you change the goal, you make the game the goal. And the game is to get to the top of the leaderboard, for example. [#Z2]

In this model, the appeal to values and (Mertonian) norms of science is thought to be a – rather economical – way of governing the crowd. While rhetorical technologies play a decisive role in labeling actors and ascribing value to their activity on the platform, other technologies (of the self, social, ontological) are deployed on Zooniverse only insofar as they do not encroach on the alleged purity of scientific activity. The community that emerges out of the crowd mimics an ideal vision of the scientific community, in which cooperation and disinterestedness guarantee the reliability of scientific results and draw the boundaries of science.

In contrast, BOINC and EyeWire rest on the idea that dedication to science is not sufficient to sustain user engagement and, as a consequence, participation needs to be supplemented by exogenous motives for action: It’s just completely unscalable. So that kind of got us thinking outside the world of neuroscientists, outside the scientific box, into the world of games. Drawing inspiration from games. Because people are collectively spending billions of hours a year playing games. [#E1]

In this model, self-interest and competition are thought to be the best way of governing the crowd. Here, technologies of the self and social technologies are central: Public systems of credit, differentiation of roles and status, competition, and coordination tools provide the incentives that fuel the users’ self-interest. In EyeWire, this basic configuration is enriched by powerful ontological technologies that aim to create a highly codified and esoteric world (Juul, 2005; Wolf, 2012). In both BOINC and EyeWire there emerges an idea of the community centered on action rather than on rational discussion; that the community is engaged in producing science rather than something else appears to be incidental rather than essential to the logic of collective action.

However, behind these two ideal-typical ways of governing the crowd and of stabilizing it into a community, for the organizers and the participants, lurks a common peril – that of lapsing from participation to work. Citizen science projects and platforms constantly have to negotiate the tension between, on the one hand, productivity and efficiency (the ‘original call of the Zooniverse project’ is to help scientists ‘by employing a crowd’ [#Z2], and games offer a powerful model because ‘people are collectively spending billions of hours’ playing them [#E1]), and, on the other hand, (serious) leisure and voluntary participation. We argue that this danger is overcome at the price of a very substantial, and at the same time insistently invisibilized, mediation work.

Human and technical mediators

Moving from the technologies at play at the level of the participants to the higher level of the architecture and infrastructure of the collectives, it appears that turning a crowd into a community and governing communities in the long run requires a continuous effort – this is an ongoing process that can never be taken for granted. The social can fall apart at any time. Rhetorical technologies, technologies of the self, social technologies and ontological technologies are not simply technical, but involve elaborate socio-technical assemblages that allow them to operate. Two such assemblages, community management and platformization, have become particularly prominent in online citizen science.

Community management

One important point about this mediation work, both human and technical, is that it has been, in recent years, subject to a process of professionalization based on a specialized body of knowledge – the field of HCI. In this respect, the different time frames of our three projects/platforms (BOINC’s first project started in 1999, Zooniverse in 2007, and EyeWire in 2012) are quite telling. While all of them were initiated by scientists (respectively, computer scientists, astronomers, and neuroscientists), the two more recent ones acknowledged from the start that building a platform and a community required specialized and professionalized work and knowledge that simply cannot be improvised, even by scientists.

So really what’s driven the design, I think, from Zooniverse onwards is the decision to employ professional web developers, rather than try, I think … A more natural academic model is always to try to work with students, because they’re around and they’re cheap. … And so a lot of the low-level design, the how should this site work, what are the principles, come from a decent understanding of user experience, rather than from sort of an astronomer’s sense of what should be done. [#Z1]

While BOINC has essentially relied on a handful of dedicated computer scientists (between two and five), improvising their way into the construction and management of a community, both Zooniverse and EyeWire have hired professional teams (respectively, of twenty and ten people) composed of developers, infrastructure engineers, graphic designers, UX designers, illustrators, ‘embedded academics’ [#Z1], and, of course, ‘community managers’.

Community management and community managers play an important role in all online platforms, including citizen science platforms, although their role is somewhat distinct from that of the traditional community managers of social media (Gillespie, 2018) and online games (Kerr and Kelleher, 2015). They are responsible for maintaining an active and well-ordered community, as well as for catering to the needs and demands of the users. This includes actions such as discussing with users on chats, forums, social media and via emails, posting new content on the web and especially on social media, policing public spaces, launching new contests and competitions, introducing new features to users and so forth. However, a crucial part of their work involves another constituency – the scientists. As online citizen science moves from single-project to multiple-project platforms, teams of scientists have to be managed, like the users. Community managers in online citizen science not only make sure that the scientists’ concerns are taken into account (for example about data accuracy), but they also guide them in turning their research questions and raw data into a meaningful and attractive citizen science project for a broad audience, drawing on research about their own online communities (see Conclusion). Community managers encourage scientists to interact with the users on a regular basis, feeding the users with information on the project and on the results of their research. The community manager’s role is essentially to mediate between different constituencies whose interests sometimes conflict: So I’ve basically ended up as communications lead, community manager and, and liaison with researchers, and project manager with the web development team. Because everything comes together, right? Everything has to meet to build a kind of successful project. … My job is to be an interface, essentially that, between different groups. [#Z2]

In this extended notion of community, which comprises both users and scientists, the community manager imagines themselves as the embodied interface of the project.

Interestingly, most of the actors whom we have interviewed (all organizers, at different levels, of citizen science projects and platforms) are uncomfortable with the notion of community management and with the very term of ‘community manager’. Part of their reluctance comes from the corporate and hierarchical connotations of the term ‘manage’: ‘Manage, manage is probably the, the wrong phrase for that. There was collaboration between the volunteers and us’ [#B2]. The reluctance also comes from the fact that community management conflicts with the idea of an unmediated access to scientists, which is part of the appeal of these citizen science projects. By introducing community managers (but also UX designers and illustrators) these projects run the risk of compromising their feel of scientific authenticity. But we want to argue that the project managers’ averseness toward the lexicon of community management derives from the fact that the term too overtly reveals the artificial or constructed nature of the community. Community management is human, all too human, and our interviewees are eager to emphasize the technical, rather than social, nature of mediation on the platform: A community manager should be seen as a ‘community engineer’ [#Z1] or, even more abstractly, as part of a ‘social machine’ [#Z1]. The ideal community is a community that is self-regulated through built-in tools and ‘systems’: It’s our job to build systems that allow the team to engage effectively with a community but don’t require that kind of full-time investment. [#Z1] I would say that the best projects we have are completely self-regulating. [#Z2]

The goal of self-regulation should not be seen simply as a matter of freeing up time for the community managers and the project organizers, although this consideration certainly plays a role: [About setting up message boards] So because basically what happens when you have about 200,000 people using a software and there’s only two of you, is your inbox becomes unmanageable, if you try to take it on all yourself. So, the idea was to give people a place where they can go and talk about all things BOINC and you could get the community to do some of that support for you. [#B2]

More fundamentally, self-regulation is thought to be the defining characteristic of a (virtual) community, as Rheingold (1993) originally defined it, with all its libertarian overtones. With adequate built-in mechanisms, enough social differentiation and specialized roles will be produced so as to ensure the perpetuation and maintenance of the community, through its own means. Wikipedia has often been presented as an exemplary case of a virtual community that relies on self-regulation, although in reality its organizational structure is more complex and its politics messier (Tkacz, 2015). In our three case studies of online citizen science, this ideal of the self-regulating community is part and parcel of the process of becoming a platform.

Platformization

BOINC, Zooniverse, and EyeWire all started as standalone projects, and their respective technologies for making community were tailored to their scientific needs. However, when SETI@home came to form the basis for BOINC and Galaxyzoo for Zooniverse, the original projects became platforms – infrastructures and technologies for making community that can host several individual projects, each with a distinct scientific goal. EyeWire is also expected to become a platform for various citizen science games in the future. By using the term ‘platformization’ we point less to the hegemonic rhetoric of ‘platforms’ (Gillespie, 2010) or the ‘material-technical’ perspective on programmability through APIs (Helmond, 2015) and more to the socio-technical formatting that becoming a platform entails (Kelkar, 2018; van Dijck, 2013). Before platformization, project organizers and lead scientists were often the same person and in a direct relation with participants. With platformization, the scientists become another kind of user of the platform, along with the participants, and both are now in relation with the platform and those who maintain it, rather than with one another. Platform organizers and the platform itself assume a mediating role between users and scientists. Automating the platform, not just in its relations with the participants but also with the scientists, becomes a priority. Both BOINC and Zooniverse have created tools that enable research teams interested in using their platform to build, from scratch, their own projects.

These days, projects don’t … Projects can start up without me, without help from me. In the early days I needed to make changes to BOINC to support pretty much every project, in various ways. So, I worked closely with a lot of the big projects, like Einstein@home, and CERN, and Climateprediction.net. But these days you know, the software kind of … does everything people want. And it’s well documented. [#B3]

In particular, Zooniverse has developed a tool called the ‘Project Builder’ that enables anyone to create a project without any coding knowledge. By contrast, building a project for BOINC still comes with a high entry cost in terms of specialized knowledge, reflecting its pre-Web 2.0 origins and its deep entanglement with computer culture. With the Zooniverse Project Builder, scientists create a project out of a series of ‘workflows’ (‘the sequence of tasks that you’re asking volunteers to perform’) that are in turn made of four generic ‘tasks’: ‘question’ (answering a question about an image), ‘drawing’ (marking features in the image), ‘text’ (transcribing a text) and ‘survey’ (recognizing specific items in an image).

With the generalization inherent to platformization comes an inevitable standardization of the code infrastructure and, consequently, of the kind of data and analysis that can be performed. In their recommendations to project builders, the organizers of Zooniverse point out that ‘Ideally, a 10- or 12-year-old child should be able to understand and do your project’, calling for the utmost simplicity, and uniformity, in terms of tasks performed. At Zooniverse, the last iteration of the infrastructure has been aptly named Panoptes (‘Panoptes, um … [laughs] so, it was from the Greek myth, so the idea of the all-seeing um … creature’ [#Z3]), with the ‘same code run[ning] the project and the back end’ [#Z1]. An interesting consequence of this standardization is that citizen science platforms, although they morally remain devoted to science, are structurally less and less related to scientific activity. Once platformization generalizes and reduces the analyses performed by the volunteers of citizen science into a limited number of basic tasks, these tasks become generic – that is, not necessarily linked to a specific scientific goal, or even to science: It’s designed for specific interaction with data. So, it’s designed to show you something and ask you to do simple tasks or questions about it. That happens to be a pattern that’s common in a lot of science, because that’s how we’ve end up doing that. But yeah, there are other possibilities there …. [#Z2]

In other words, both the BOINC and the Zooniverse infrastructures are open to whoever is interested in using them, not necessarily for (citizen) science. To be publicized on the BOINC or Zooniverse websites and to tap into their respective communities, projects need to be science-related and approved (in Zooniverse, in part by the users), but otherwise, the two platforms are thought of as generic, neutral intermediation spaces: The very nature of BOINC being an open-source project and having all the source code available online means that anybody anywhere can set up a BOINC project that they want. … That was one of the design goals for BOINC, it was to be a generic platform that anybody could use to do volunteer computing. [#B2] So if you come along and build a project and you run it with your community, at the minute, I don’t care what you do. [#Z1]

Although they initially embed volunteering as well as scientific norms through different technologies – rhetorical, of the self, social, and ontological – platformization transforms citizen science projects into spaces whose central function is perhaps less the production of scientific knowledge than mediation between tasks, collectives and machines. Already almost twenty years ago, SETI@home founder David Anderson had highlighted such flexibility, imagining that citizen science platforms could become ‘decision markets’, in which users would vote for scientific projects not by casting a ballot but by choosing to contribute to a project, thereby revealing their preferences: ‘Because computer owners can contribute to whatever project they choose, the control over resource allocation for science will be shifted away from government funding agencies and toward the public’, he predicted (Anderson, 2003).

Conclusion: From citizen science to a science of the citizen

It is not uncommon for citizen science projects, online or not, to couch their activities in political terms. Under the aegis of democratization and participation, a twofold promise is offered. First, as science ceases to be a closed domain reserved for an expert elite, scientific work will accelerate, new research practices will emerge, and, perhaps, new questions will be asked that are more aligned with the public interest. Second, as scientific research becomes accessible to anyone, a new kind of citizen will emerge, one who brings their working knowledge of science into the realm of liberal-democratic politics (Ezrahi, 1990) and participation (Chilvers and Kearnes, 2015). Analysts and commentators of citizen science have been keen on taking up these political promises, sometimes in a celebratory tone, sometimes in a more critical way – asking, for example, if actual practices measure up to the promise (Mirowski, 2018). But what exactly do ‘politics’ or ‘political’ mean here? A first, rather direct, but also subtly normative answer has been to cast the politics of citizen science in terms of power and agency toward defining scientific agendas and methods, for example, by positing a scale of participation and analyzing how individual projects fare with it (with the implication that some ways of sharing power between citizen and scientists are intrinsically better than others). A second, more circuitous answer has been to uphold the STS adage that the true politics of (citizen) science lie neither in discourse nor in organization but in the epistemic practices, calling for thick descriptions of the actual practices of citizen science, especially in relation with the longue durée history of the role of amateurs, invisible technicians and the public in the development and professionalization of science (Strasser et al., 2018).

We believe that another way to investigate the politics of citizen science is to look at the social forms of citizen science, and to ask how they are fabricated, maintained and regulated. Citizen science projects and their advocates often speak of their ‘community’ as if it were naturally constituted, essentially through the mutual interests (science as leisure), shared virtues (science as a way of life) and common values (science as a civic ideal) of their members. Yet, through our three case studies of online citizen science we have seen that it takes a huge amount of work and many chains of human-technical mediators to fabricate and maintain this collective and its apparent naturalness. Of course, other studies on different kinds of citizen science, especially social movement-based and medicine-related citizen science, which are very likely to involve different community dynamics, would be needed to better understand the constitution and governing of collectives through citizen science. Still, as virtually none of these projects take place entirely offline today, we believe that the interfaces of online citizen science projects offer a good starting point to delve into what we have called the technologies that construct and govern the collective. Once interfaces are defined less as things and boundaries than as relational spaces in which constitutive processes take place, it becomes possible to study their structure, their topography and their generative outcomes – in other words, how a number of technologies, diversely arranged, configure individual and collective actors, behaviors and actions. Neither the interfaces nor their technologies are simply designed by project organizers, as HCI would see it, but are co-constructed by the organizers and the users.
Different combinations of rhetorical technologies, technologies of the self, social technologies, and ontological technologies – one may need to complement this typology for other kinds of citizen science, for example by examining political technologies – produce different kinds of collectives, but the case studies of BOINC, Zooniverse and EyeWire ultimately point to two ideal-typical ways of fabricating the community – one based on the shared values and norms of science, the other on self-interest and competition. These technologies demand substantial mediation work, accomplished by human and technical actors that engage in ‘community management’; in particular, community managers see their work as interfacing between two constituencies, the users and the scientists. The intensity of this mediation work, and the amount of artifice necessary to stabilize and regulate the collective, threaten the definition of the situation – that of a naturally occurring community in direct contact with science and scientists.

Platformization – the shift from individual projects to platforms – can be seen as an answer to this tension. Platformization not only answers the problem of scalability, it also generates the drive to generalize, standardize and, insofar as possible, automatize the functioning of the community. In the process, platforms lose their structural relation to their object, science, and tend to become neutral spaces that mediate between constituencies for the accomplishment of generic tasks. Moreover, as individual projects integrate platforms, they not only generate scientific results linked to specific scientific questions (whether in astronomy, climatology, history, and so forth), but they also tend to actively produce meta-knowledge on their users. The amount of HCI and psychology research that has been carried out on the community dynamics of citizen science, often with the involvement of the projects’ organizers and community managers, is quite striking. Such work typically tries to understand what motivates the users to contribute (Nov et al., 2014; Tinati et al., 2017), proposes new design and features in order to retain users and stabilize the community (Greenhill et al., 2014; Korpela, 2012; Luczak-Roesch et al., 2014; Simpsons et al., 2014), or evaluates the epistemic reliability of users (Eveleigh et al., 2013; Prestopnik and Crowston, 2011). Imperceptibly, in addition to producing scientific knowledge with the help of citizens, these platforms increasingly produce scientific knowledge about those very citizens. When they reflect on their past experience, organizers typically emphasize the knowledge they have gained in social engineering: I learned a tremendous amount about kind of the requirements. You know, this idea that you have to make a credit system cheat proof, the fact that you need all of these social mechanisms. … What I learned the most has to do with managing people. [#B3] Now [our interface] is used as an example. At MIT and now we have lots of researchers who want our advice on the crowdsourcing side of things. [#E1] It was a sort of attempt to do naïve social science. … It’s also a natural experiment into crowdsourcing. … We were interested in trying to branch out and try different things. … For our curiosity we wanted to know why GalaxyZoo worked. And so it made sense to change the science topic and see if that change, or build a harder project, or build a project where the images were even less appealing, or … [#Z1]

It is against this move – from citizen science to a science of the citizen, from crowdsourcing experiments to an ‘experiment into crowdsourcing’ – that the politics of citizen science can be cast in a new light. Citizen science is political not only because it promises to shift the balance of power from scientists to citizens, or because it promises to challenge the epistemological stance of contemporary science, but because it is, through and through, an exploration in the art of fabricating collectives and the social in the name of science.

Acknowledgements

We thank Nicolas Baya-Laffite, Alina Volynskaya, Simon Dumas Primbault and the organizers and participants of the panel ‘Politische Dimensionen digitaler Plattformen’ (Congress of the Swiss Sociological Association, 2017) for their productive feedback on earlier versions of this article. We are also grateful to the Social Studies of Science Editor Sergio Sismondo and the anonymous reviewers for their insightful comments and helpful suggestions.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This project has been made possible by the award of a Swiss National Science Fund Consolidator Grant (157787).