Abstract

This study explored how lyrics, participant-selected music, and emotion trajectory impact self-reported emotional (happiness, sadness, arousal, and valence) and physiological (heart, respiration, and skin conductance rates) responses. Participants were matched (based on sex, age, musicianship, and lyric preference) and assigned to a lyric or instrumental group. Each participant experienced one emotion trajectory (happy-sad or sad-happy), with alternating self- and experimenter-selected jazz music. Emotion trajectory had a significant effect on self-reports, where participants in the sad-happy trajectory reported significantly more sadness overall compared to participants in the happy-sad trajectory. There were also several interaction effects between the independent variables, which indicate the relevance of order as well as differences in processing musical emotions depending on whether music is instrumental or contains lyrics.

Through considerable musical emotion research, it is well documented that music elicits strong emotional responses subjectively and physiologically (White & Rickard, 2016), experienced both individually and commonly across participants (Egermann et al., 2011). Most research on felt emotions predominantly used instrumental music (Eerola & Vuoskoski, 2010; Västfjäll, 2002), leaving lyrics as a gap few have researched (Ali & Peynircioğlu, 2006; Brattico et al., 2011). Considering many prefer genres with lyrics (Gebhardt & von Georgi, 2015), and a third of people surveyed consider lyrics an essential way that music expresses emotions (Juslin & Laukka, 2004), researching lyrics and participant-selected (PS) music is pertinent. Furthermore, though it is known that emotions change in intensity over time (Hakim et al., 2013), few tested how the order of emotional music—emotion trajectory—impacts felt emotions (Altschuler, 1941/2001; Heiderscheit & Madson, 2015; Ratcliff et al., 2014). Understanding how emotion trajectories affect listeners seems paramount since many people use music to regulate their affect (van Goethem & Sloboda, 2011), usually putting the music in some order. Researching emotions with mainly instrumental experimenter-selected (ES) music, while overlooking emotion trajectory, leaves questions unanswered.

These variables were of interest because the first author noticed—as a board-certified music therapist—that patients with psychological disorders usually requested music with lyrics, yet sometimes their preferred music exacerbated their emotions. This author slowly changed the music’s mood to try to improve patients’ moods (iso principle; Altschuler, 1941/2001), yet this technique was not always successful. Music therapy research emphasizes the efficacy of client-preferred music (Hiller & Gardstrom, 2018), and ES music allows for comparison; thus, this study used PS and ES music. Some studies utilized PS music with lyrics, yet they either varied and did not analyze order separately (Fox et al., 2019), or it was often unclear whether the music’s order and/or emotional character were considered (Cepeda et al., 2006). Furthermore, the few iso principle studies (Altschuler, 1941/2001) have been case studies (Heiderscheit & Madson, 2015) or compared vaguely “unstructured” music playlists to iso-principle playlists (Ratcliff et al., 2014). As Leo Shatin (1970) said, “unless the existence of the vectoring effect can be validly demonstrated then the applied usefulness of the ‘Iso’ matching principle (where music is matched to the current mood that we would change) remains in doubt” (p. 84). This study aims to explore how lyrics, self-selection, and emotion trajectory affect physiological and self-reported emotional responses, for now investigating healthy participants, while considering their interactions.

Literature review

Musical emotions

One main reason people listen to music is to feel and express emotions (Simonton, 2010). Musically felt emotions are a complex phenomenon caused (Juslin, 2013; Juslin & Västfjäll, 2008) and influenced (Scherer & Zentner, 2001) by many factors. When reviewing musical emotion literature, one must distinguish between general affective states, longer lasting moods, and specific, shorter emotions (Scherer, 2004). Hereafter, the terms affect and mood are used only when reporting findings that previous research described in those terms. This study aims to investigate evoked emotional responses.

The BRECVEMA framework outlines that music evokes emotions through brainstem response, musical expectancy, entrainment, aesthetics, evaluative conditioning, memory, emotional contagion, and imagery (Juslin, 2013; Juslin & Västfjäll, 2008). Lyrics could elicit emotions through imagery and memory recalled from textual cues, or through emotional contagion of vocal expression (Juslin, 2013). Selection could elicit emotions through memory (Ashley & Luce, 2004), evaluative conditioning, emotional contagion, and musical expectancy (Juslin, 2013). Considering lyrics and selection support separate and overlapping BRECVEMA factors, it is important to understand how both variables affect emotions, and whether interactions exist.

Although BRECVEMA explains how music itself causes emotions, explaining variables like emotion trajectory requires theories that include both non-musical and musical elements (Scherer & Zentner, 2001). Non-musical factors like the listener’s current emotional or attentive state and listening context affect emotional intensity and valence (Scherer & Zentner, 2001). Emotion trajectory’s impact is highlighted since its components affect emotions (Heiderscheit & Madson, 2015; Ratcliff et al., 2014). Similar to this study’s focus on musical (lyrics) and non-musical features (emotion trajectory, self-selection), Scherer and Zentner (2001) assert that both features interact to elicit musical emotions.

Musical elements often have emotional associations, correlate with physiological responses, and therefore can evoke accompanying emotions (Coutinho & Cangelosi, 2011). After reviewing numerous studies, Gabrielsson and Lindström (2010) reported that louder, faster, higher pitched music in major modes is usually perceived as happy and the opposite as sad. Perceived emotions often coincide with felt emotions (Schubert, 2013). Therefore, choosing stimuli with corresponding musical elements should more likely express and thus evoke happiness and sadness.

Other experimenters aiming to evoke happiness and sadness also selected music with the aforementioned criteria (Bigand et al., 2005; Nyklicek et al., 1997). Target emotion (the emotion intended to be evoked) is often analyzed separately to ensure it was evoked (Vidas et al., 2018). Compared to sad music, happy music usually elicits lower sadness, positive valence, and higher happiness and arousal ratings (Eerola & Vuoskoski, 2010), which is not surprising since sadness is often a less arousing, more negative (lower valenced) emotion than happiness (Russell, 1980). Because physiology indicates arousal, it is consistent that past studies found happy music with louder, faster, high-arousal features can elicit a higher heart rate (HR), respiration rate (RR), and skin conductance rate (SCR) compared to sad music (Krumhansl, 1997). Target emotions are more likely to be evoked when experimenters select stimuli considering these musical features, and participants select stimuli likely considering personal associations.

The stimuli chosen impact whether target emotions are evoked and the generalizability of results. Considering many prefer music with lyrics (Gebhardt & von Georgi, 2015), lyrics must be explored.

Lyrics

Lyrics are a prominent feature in many pieces of music, yet most research uses classical instrumental music (Eerola & Vuoskoski, 2010; Västfjäll, 2002). Lyrics can enhance the significance listeners feel for familiar music (Thompson & Russo, 2004) and cause different neurological responses than instrumental music (Brattico et al., 2011). Yet melodies alone conveyed emotion more intensely than the same melodies with lyrics in Ali and Peynircioğlu’s (2006) study. Since lyrics and music elicit strong emotions and are processed differently, further exploration is warranted.

How strongly music elicits emotions may differ based on the presence or absence of lyrics. Instrumental music evoked stronger emotions for calm music, but not happy, sad, or angry music in Ali and Peynircioğlu’s (2006) study. Indeed, in two principal studies on lyrics and emotions, both brain scans (Brattico et al., 2011) and self-reported emotions (Ali & Peynircioğlu, 2006) suggested that instrumental music strongly evokes positively valenced emotions (happiness) and lyrics strongly evoke negative emotions (sadness). This implies that the strength of the emotional effect of lyrics depends on valence.

However, these findings seem limited considering their stimuli. Ali and Peynircioğlu (2006) used different genres for instrumental and lyric groups for some target emotions; thus, the difference could be due to genre, not lyrics. Also, creating 18-s instrumental excerpts from songs with lyrics (Brattico et al., 2011) limits ecological validity. These limitations call for more rigorous research on lyrics. Lyrics are valuable to research because preferring music, as many do with lyrics (Gebhardt & von Georgi, 2015), can impact the strength of a felt emotion (Kreutz et al., 2008).

Self-selected music

Self-selected music can be more engaging (Lynar et al., 2017) and can strongly affect emotional responses (Helsing et al., 2016). Preference is so influential that preferred music can improve negative moods, and strongly disliked music can worsen positive moods (Wheeler, 1985). Although PS music has benefits, comparisons to ES music are necessary to compare emotion-evoking efficacies.

Schubert (2013) compared numerous studies and deduced that PS music often produces stronger felt emotions than ES music. Self-selected music elicited higher average joy and engagement ratings and higher average HR and SCR than ES music in a study by Lynar et al. (2017). Preferred music also altered participants’ moods 77% of the time in van Goethem and Sloboda’s (2011) study (p. 214). Since PS music often induces and changes mood more effectively (Västfjäll, 2002), this study will compare PS to ES music while considering emotion trajectory.

Emotion trajectory

Music listening is a common, successful, adaptable affect-regulation strategy (van Goethem & Sloboda, 2011). People listen to music expressing and eliciting different emotions, depending on their desire to stay positive, intensify negative feelings, or moderate emotions (Thoma et al., 2012, p. 554). The regulation strategy chosen impacts the musical emotion trajectory and both impact the resulting emotion. A preliminary mood can cause another mood that amplifies, diminishes, or interacts with the initial mood somehow (Izard, 2007). Previous moods impact the type and intensity of perceived emotions, which impact felt emotions. Indeed, people with depression rated various musical emotions significantly more negatively than people without depression (Punkanen et al., 2011). Emotion trajectory can uncover nuances about music’s ability to evoke emotional responses.

Emotion trajectory determines how successfully music evokes desired emotions (Altschuler, 1941/2001). The iso principle is a music therapy practice where the listener’s current mood is matched to the musical mood, then the musical mood is gradually changed to change the listener’s mood (Altschuler, 1941/2001). In one study, patient moods and quality of life improved after employing the iso principle (Ratcliff et al., 2014). This is significant, since people use music to regulate affect with inherently different emotion trajectories (van Goethem & Sloboda, 2011).

Theories emphasize that musical and non-musical features interact to affect emotions (Juslin, 2013; Juslin & Västfjäll, 2008; Scherer & Zentner, 2001), yet most studies research one variable: lyrics (Ali & Peynircioğlu, 2006), self-selection (Salimpoor et al., 2009), or emotion trajectory (Altschuler, 1941/2001). Of the few studies that used PS music with lyrics, these variables were explored inseparably as a case study (Heiderscheit & Madson, 2015) or using only self-reports (McFerran et al., 2018). Self-reports are limited to each participant’s emotional awareness (McFerran, 2016), though multiple methods can provide a more robust/informed assessment.

Physiology and self-reports

Emotional responses can be measured through self-report, physiology, behaviors, and more. Self-reports offer subjective insight, yet inadequate emotional understanding inhibits participants from reporting emotions accurately (McFerran, 2016). Physiology could indicate emotional arousal (Egermann et al., 2015), where more arousing emotions like happiness often elicit higher HR, RR, and skin conductance level (SCL) than lower arousal emotions like sadness (Krumhansl, 1997). Nevertheless, varied physiological responses to the same music imply physiology alone insufficiently explains emotional responses (Salimpoor et al., 2009). Thus, although the two measures may not correlate, measuring emotions through both methods seems to offer a more comprehensive perspective of complex emotional responses (McFerran, 2016).

To interpret whether self-reports match physiology, past psychophysiological studies used the dimensional model of emotions (Eerola, 2011; Gomez & Danuser, 2007). Overall, inquiring about emotions through arousal (intensity) and valence levels (positivity or negativity of emotion)—though somewhat limiting—can allow for more direct comparisons of physiological and self-reported emotional responses.

Aims

An experimental study aimed to test the effect of lyrics, self-selection, and emotion trajectory on felt emotional responses, as measured through physiological and self-reported responses. In addition, a pre-study was conducted with the aim of determining the main study’s ES musical stimuli with two target emotions (happy and sad), with lyric and instrumental versions. The chosen genre was jazz, which was seen as a useful contribution to existing research studies that have often included classical instrumental music, film music, or popular music with lyrics. Jazz also seemed to be a sensible choice considering related lyric studies used both jazz and classical (Ali & Peynircioğlu, 2006), and electroencephalography (EEG) studies found the brain did not react significantly differently based on jazz, classical, or rock-pop genres (Altenmüller et al., 2002).

Methods

The University of Sheffield’s Research Ethics Committee approved this research, and all participants signed informed consents. Data can be accessed online in the Supplementary Documents.

Design

A mixed experimental design explored the effect of target emotion and selection (within-participants) and lyrics and emotion trajectory (between-participants) on physiological responses (HR, RR, and SCL) and self-reported happiness, sadness, arousal, and valence (on 7-point Likert scales). Self-selected and ES music were systematically varied across participants to counter-balance for order effects.

Participants

Jazz listening volunteers (N = 36) were recruited from public places in Sheffield, UK, and from social media because familiarity with music influences how strongly music elicits emotions (Berlyne, 1970). Some listened to jazz multiple times per day, some, a few times per year; the median response was a few times per month. The median response for liking jazz was 5 on a 7-point Likert scale (7 = very much).

Data were analyzed from 34 participants (18 females; median age = 25; mean age = 27.12 ± 8.22); 21 preferred lyrics, 6 preferred instrumental, and 7 had no preference between lyrics and instrumental music. Non-musicians (N = 21) never played or sang professionally, or did so over 5 years ago (likely not beyond childhood). Musicians (N = 13) were currently studying for a music degree, played an instrument, and/or sang or performed in the last year.

Participants abstained from alcohol, drugs, and caffeine for 4 hr before data collection (like Miu & Balteş, 2012). People with psychological, cardiovascular, hearing, neurological, and respiratory conditions were excluded to limit confounding variables, and for ethical considerations.

Participants were grouped into pairs of similar ages, and from those pairs, one was assigned to the lyric and the other to the instrumental group. A similar procedure was followed to match participants based on sex, musicianship, and lyric/instrumental preference, as these have been shown to affect emotional experiences to music (Gfeller & Coffman, 1991; Kreutz et al., 2008; White & Rickard, 2016). After being matched and placed in a lyric group, participants were randomly assigned to either the happy-sad or sad-happy emotion trajectory. There were no significant differences between the two lyric groups and two emotion trajectory groups, in terms of gender distribution, age, musicianship, or preference for lyrics (p > .05). Participants were given both target emotions twice within their assigned trajectory.
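The pair-then-split logic described above can be illustrated with a minimal sketch. It matches on age only and splits each pair at random; the random split, the `assign_matched_pairs` helper, the field names, and the four participants are all assumptions made for illustration, since the procedure actually used also matched on sex, musicianship, and lyric preference and is not specified in this detail.

```python
import random

def assign_matched_pairs(participants, seed=0):
    """Pair participants of adjacent age and split each pair across groups.

    participants: list of dicts with hypothetical keys "id" and "age".
    With an odd number of participants, the oldest one is left unassigned.
    """
    rng = random.Random(seed)
    ordered = sorted(participants, key=lambda p: p["age"])
    assignment = {}
    for i in range(0, len(ordered) - 1, 2):
        pair = [ordered[i], ordered[i + 1]]
        rng.shuffle(pair)  # assumed: random split within each matched pair
        assignment[pair[0]["id"]] = "lyric"
        assignment[pair[1]["id"]] = "instrumental"
    return assignment

# Hypothetical participants, not the study's sample
participants = [
    {"id": "P1", "age": 20},
    {"id": "P2", "age": 21},
    {"id": "P3", "age": 30},
    {"id": "P4", "age": 31},
]
groups = assign_matched_pairs(participants)
```

Each matched pair contributes one member to each group, so the two groups end up with the same size and a similar age distribution.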

Materials

The musical preference information form gathered participants’ attention to and preference for lyrics. Here, participants listed two jazz instrumental pieces and two jazz pieces with lyrics, one happy and one sad for each (similar to Brattico et al., 2011).

During data collection, participants rated four self-reported emotions on 7-point Likert scales. They rated happiness and sadness, to help confirm whether target emotions were evoked (Schubert, 2013), and arousal and valence to compare self-reports to physiological responses (Coutinho & Cangelosi, 2011). A reference sheet was provided to prevent semantic confounds, as is recommended (Gabrielsson, 2001). Here, Russell’s (1980) circumplex model (p. 1168) visually represented arousal and valence and the PANAS (Watson et al., 1988) provided additional valence synonyms.

Musical stimuli

A pre-study determined ES musical stimuli from 16 pairs of musical pieces of jazz music written before 1959 with vocal and instrumental versions. Jazz music was used because it significantly affects positive and negative mood management (Cook et al., 2019) and often has vocal and instrumental covers of the same songs (Jackson, 2002). Using naturally occurring instrumental and vocal jazz recordings increases ecological validity, which is strongly recommended for musical emotion research (Eerola & Vuoskoski, 2013), since familiar music most effectively evokes target emotions (van Goethem & Sloboda, 2011). Pre-1959 jazz was used since jazz stylistically changed after 1959 (Brubeck, 2002). Happy and sad pieces were selected using the criteria from Park et al. (2013). Musical stimuli were trimmed to 60 s to be relatively stable in emotional character (Timmers, 2017) and faded in and out for 2 s to avoid startling participants (Altenmüller et al., 2002). Lyric excerpts were trimmed to include 10 s or less of introductory instrumental music.

As a pre-test of the musical stimuli, 10 musical postgraduates listened to music and rated felt emotions from 1 (not at all) to 5 (very much) to determine happy, sad, and neutral stimuli (following Mitterschiffthaler et al., 2007). They currently studied music, played an instrument, or played and studied music. Musicians had 6–22 years of playing experience (M: 14.6 ± 4.95 years). No pre-study participants participated in the main study.

All 16 pairs of pieces’ emotional ratings were compared to verify the song’s lyric/instrumental partner also evoked the target emotion. When at least 30% of participants indicated a piece was neutral when it was intended to evoke happiness or sadness, that piece was removed from analysis for happy/sad stimuli. The two pairs with the highest averaged happiness and sadness were then selected. “Sing, Sing, Sing” (Prima, 1936/1938; Prima, 1936/1952) and “On The Sunny Side of the Street” (Fields & McHugh, 1930/1937; Fields & McHugh, 1930/1961) had the highest average happiness rating of 3.7 and 3.0, respectively. “Cry Me a River” (Hamilton, 1953/1955a; Hamilton, 1953/1955b) and “Strange Fruit” (Allan, 1939) had the highest average sadness rating of 2.9 and 2.6, respectively. Although this score is not high, it was accepted for the sad stimuli because sad music frequently elicits both positive and negative emotional responses (Kawakami et al., 2013). “Satin Doll” (Strayhorn & Ellington, 1953; Strayhorn & Ellington, 1953/1958) was rated “neutral” by most participants (45%, averaged) and, thus, became the main study’s practice stimulus. Supplemental Appendix A lists the pre-study’s complete jazz stimuli set. Additional pre-study details are available upon request from the first author.
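The selection rule described above (drop pieces that too many raters judged neutral, then keep the highest-rated ones) can be sketched as follows. The sketch simplifies by treating each piece separately rather than averaging across a lyric/instrumental pair, and the `select_top_pieces` helper, piece names, ratings, and neutral shares are hypothetical values used only for illustration.

```python
from statistics import mean

NEUTRAL_CUTOFF = 0.30  # share of "neutral" judgments at which a piece is dropped

def select_top_pieces(ratings, neutral_share, n_keep=2):
    """Return the n_keep piece names with the highest mean rating.

    ratings: dict mapping piece name -> list of 1-5 ratings for the target emotion
    neutral_share: dict mapping piece name -> fraction of raters who called it neutral
    """
    eligible = {
        name: mean(scores)
        for name, scores in ratings.items()
        if neutral_share[name] < NEUTRAL_CUTOFF
    }
    return sorted(eligible, key=eligible.get, reverse=True)[:n_keep]

# Hypothetical ratings, not the pre-study's data
happy_ratings = {
    "piece_a": [4, 4, 3, 4],
    "piece_b": [3, 3, 3, 3],
    "piece_c": [5, 5, 4, 5],
    "piece_d": [2, 3, 2, 3],
}
neutral = {"piece_a": 0.10, "piece_b": 0.20, "piece_c": 0.40, "piece_d": 0.00}
selected = select_top_pieces(happy_ratings, neutral)
```

In this made-up example, `piece_c` has the highest mean rating but is excluded because 40% of raters judged it neutral, so `piece_a` and `piece_b` are selected.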

Participant-selected stimuli were selected from pieces the participant indicated (per the form) as the most emotionally evocative within their assigned lyric group. If the participant gave the same happiness rating for both PS happy pieces, one was randomly chosen (and vice versa for sad pieces). To include more participants, participants could choose jazz from any time or sub-genre (contemporary, Latin jazz). Genres were validated through online streaming services’ genre categorization and an online database (www.allmusic.com; Hanser et al., 2016). Supplemental Appendix B lists all main study stimuli.

Apparatus

Main study participants heard music through BeyerDynamic DT231 Pro closed back headphones (BeyerDynamic, NY, USA). ProComp Infiniti sensors collected physiological responses through BioGraph Infiniti software (SA7900, Version 6.0.4; Thought Technology Ltd., Montreal, QC). The Ledalab software was used to analyze skin conductance (SC) data (Benedek & Kaernbach, 2010a, 2010b). Statistical analysis was conducted using SPSS Statistics (IBM, Subscription Version).

Procedure

Participants were given a reference sheet to help them respond to the self-report questionnaire. Participants could choose from two ES stimuli to prevent potential memory associations from evoking untargeted emotions (Marik & Stegemann, 2016). If the participant knew either ES piece, the most emotionally evocative piece was chosen. If both were unfamiliar, the experimenter chose one, counterbalancing stimuli across participants.

Sanitized physiological sensors were applied following manufacturer recommendations (Thought Technology Ltd., 2013), with the RR belt fastened around the lower ribs, and SCR sensors on the index and ring fingers and the HR sensor on the middle finger of the non-dominant hand. Participants were asked to remain still due to the sensitive nature of the sensors.

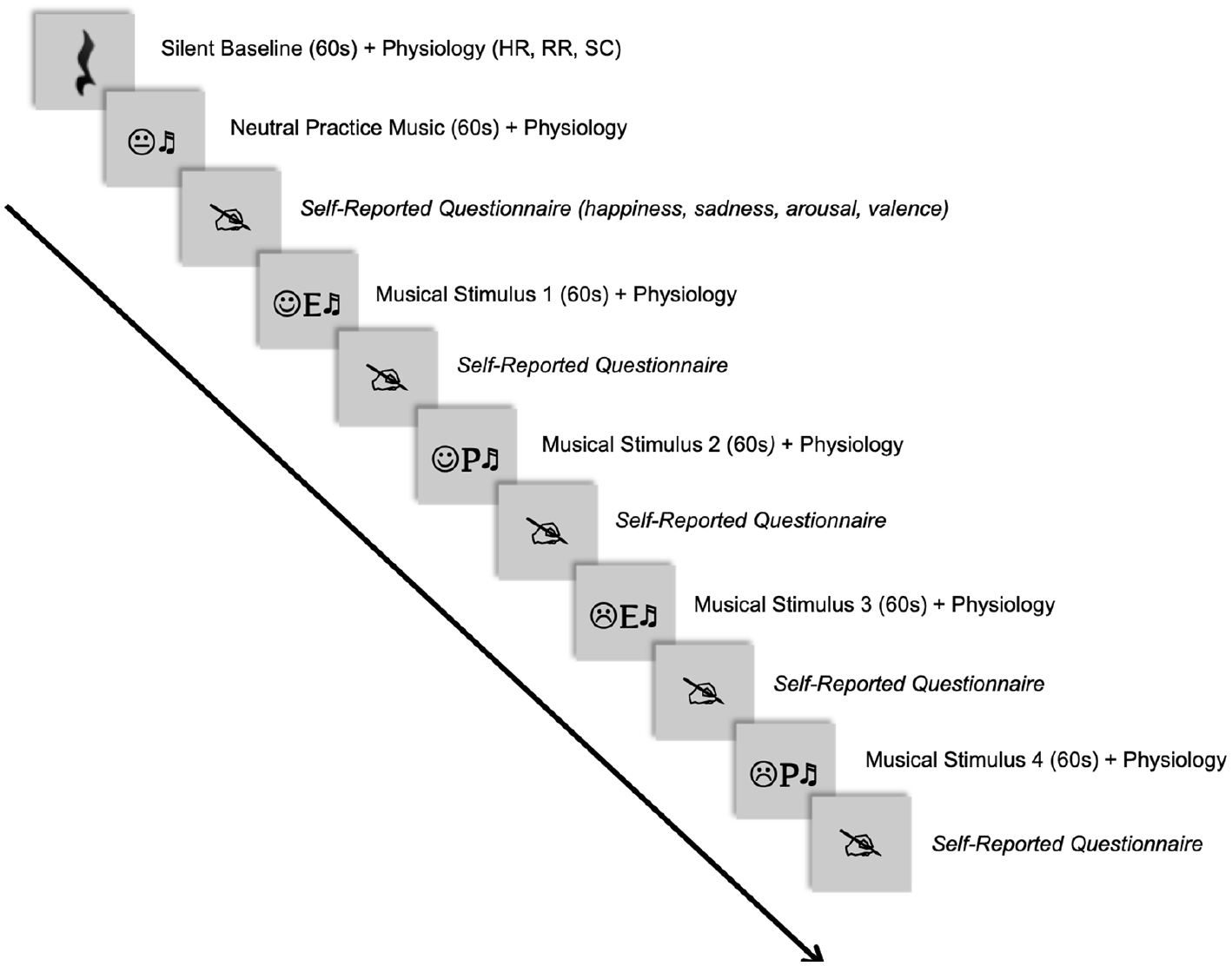

To normalize physiology, participants sat in silence for a minute before the 1-min silent baseline was collected (White & Rickard, 2016). Then, participants listened to neutral practice music to adjust the volume. Neutral music helps start participants at similar arousal levels to “reduce the orienting response” (Khalfa et al., 2008, p. 19). After the minute of music, participants completed a practice questionnaire. Then, participants heard four musical excerpts and completed the questionnaire after each piece. The sensors were removed, and participants were debriefed. Figure 1 displays an example of one order of the procedure.

Figure 1. Example of One Order of Musical Stimuli.

Data processing and analysis

Before data processing, data from 2 of the 36 participants were discarded. One said that she did not like either lyrical or instrumental jazz, and the other consumed caffeine an hour before data collection.

To begin physiological processing, HR, RR, and SC were extracted from BioGraph Infiniti software (Thought Technology Ltd., Montreal, QC). Separate means were configured for each musical excerpt (Egermann et al., 2015). The first 3 s of SC data were removed to account for the startle response (Salimpoor et al., 2009). All SC artifacts were identified using criteria from Taylor et al. (2015, p. 3), and affected time segments were corrected using Ledalab’s artifact correction function (Benedek & Kaernbach, 2010a, 2010b). Subsequently, SC data for each sample were smoothed manually using a Gaussian window. To extract global mean SCL, a continuous decomposition analysis was applied using the optimization function and default settings (Benedek & Kaernbach, 2010a). Where SC data exhibited movement artifacts, HR data were excluded for the same stimulus. If the remaining time was under 45 s, that participant’s data for that stimulus were excluded because the average was less reliable. Hereafter, abbreviations HR, RR, and SCL denote the mean difference from the baseline.
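The per-excerpt scoring described above can be summarized in a short sketch: skip the startle window, discard artifact-affected samples, reject the excerpt if less than 45 s of clean signal remains, and otherwise report the mean difference from baseline. The `baseline_corrected_mean` helper, the sampling rate, and the example values are assumptions for illustration. Whether the 45-s threshold was counted before or after removing the first 3 s is also not stated in the text, so the sketch counts it afterwards.

```python
from statistics import mean

def baseline_corrected_mean(samples, artifact, baseline_mean, rate_hz,
                            skip_s=3.0, min_valid_s=45.0):
    """Mean of artifact-free samples minus the baseline mean, or None.

    samples: signal values for one 60-s excerpt
    artifact: booleans of the same length, True where a sample is unusable
    rate_hz: sampling rate (hypothetical; depends on the recording setup)
    """
    start = int(skip_s * rate_hz)  # drop the startle window
    valid = [x for x, bad in zip(samples[start:], artifact[start:]) if not bad]
    if len(valid) / rate_hz < min_valid_s:
        return None  # too little clean signal for a reliable average
    return mean(valid) - baseline_mean

# Hypothetical 60-s excerpt sampled at 1 Hz
samples = [5.0] * 60
clean = [False] * 60
noisy = [False] * 40 + [True] * 20  # last 20 s affected by movement

score_clean = baseline_corrected_mean(samples, clean, 4.0, 1)
score_noisy = baseline_corrected_mean(samples, noisy, 4.0, 1)
```

Here the clean excerpt yields a difference from baseline, whereas the noisy excerpt retains only 37 s of usable signal and is therefore excluded.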

The effects of target emotion, lyrics, selection, and emotion trajectory were tested with one mixed-model analysis of variance (ANOVA) for the physiological variables (HR, RR, and SCL) and another ANOVA for the self-reported variables (happiness, sadness, arousal, and valence). The self-reported findings were verified with non-parametric tests. Two participants were physiological outliers, accounting for five physiological data points in total. These data points were removed because data collection notes confirmed an irregular physiological status for both participants (e.g., heavy smoker). All missing physiological data points, whether from these outliers or from other participants’ occasional movement artifacts, were replaced with the mean for their respective groups (participants in the same lyric and emotion trajectory groups). Replacing missing data with means is one way to prevent ANOVAs from eliminating entire participants listwise due to one missing data point (IBM Corporation, 2013, p. 6). Although replacing missing data with relevant means could affect the variation of a variable (Myers, 2011), 0–1 data points were replaced for most variables.
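Group-mean replacement of missing values, as described above, can be sketched in a few lines. The analysis itself was run in SPSS; the `impute_group_means` helper, the field names, and the example rows below are hypothetical and only illustrate the general technique.

```python
from collections import defaultdict
from statistics import mean

def impute_group_means(rows, value_key, group_keys=("lyrics", "trajectory")):
    """Return copies of rows with missing values (None) replaced by the group mean.

    A group is defined by the values of group_keys. A group with no observed
    values at all raises statistics.StatisticsError.
    """
    observed = defaultdict(list)
    for row in rows:
        if row[value_key] is not None:
            observed[tuple(row[k] for k in group_keys)].append(row[value_key])
    filled = []
    for row in rows:
        new_row = dict(row)  # leave the input rows untouched
        if new_row[value_key] is None:
            new_row[value_key] = mean(observed[tuple(row[k] for k in group_keys)])
        filled.append(new_row)
    return filled

# Hypothetical HR differences from baseline, not the study's data
rows = [
    {"lyrics": "lyric", "trajectory": "sad-happy", "hr": 2.0},
    {"lyrics": "lyric", "trajectory": "sad-happy", "hr": 4.0},
    {"lyrics": "lyric", "trajectory": "sad-happy", "hr": None},
    {"lyrics": "instrumental", "trajectory": "sad-happy", "hr": 1.0},
]
filled = impute_group_means(rows, "hr")
```

The missing value takes the mean of the two observed values in the same lyric and trajectory group. This keeps the participant in the analysis but, as noted above, reduces the variable's variance slightly.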

Results

Self-reports

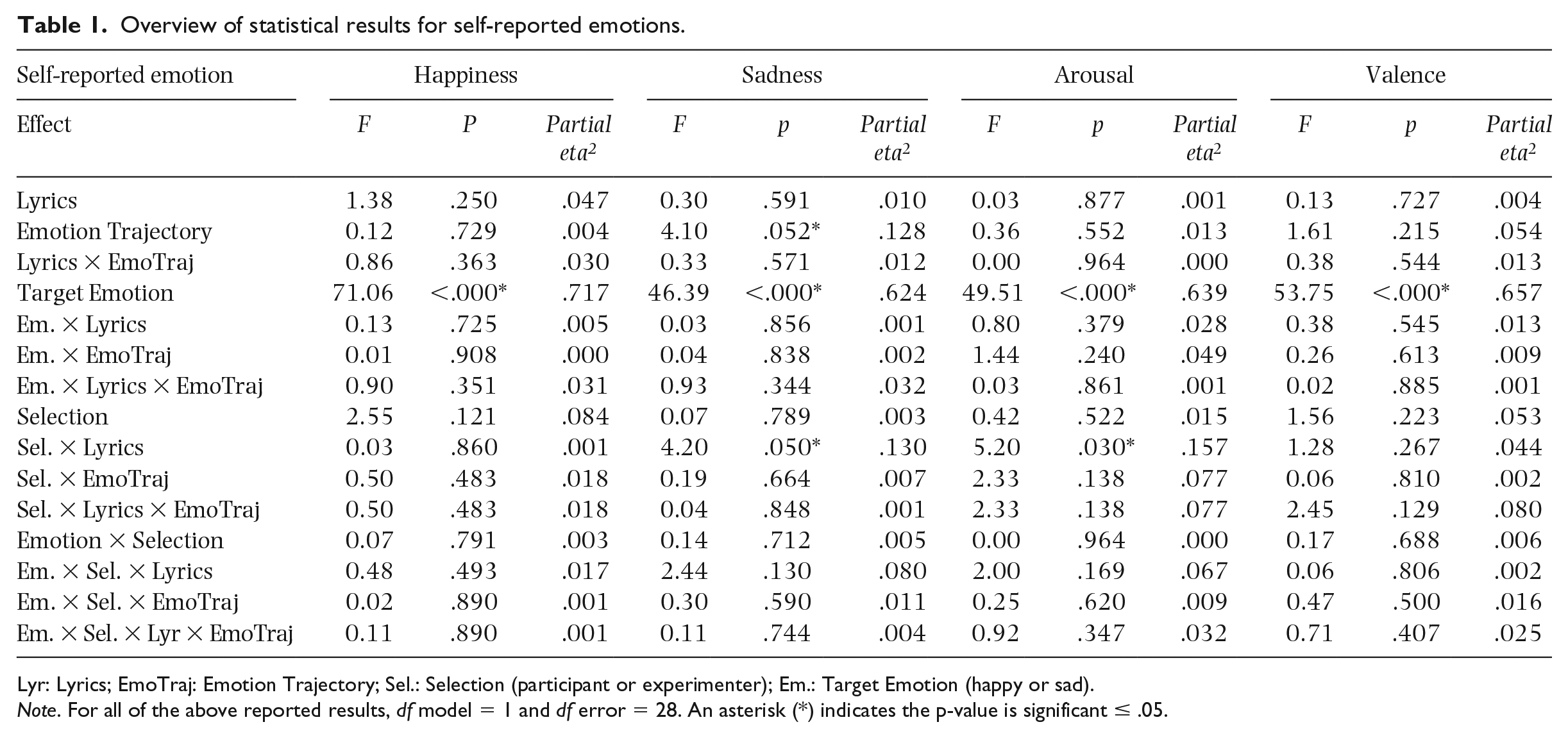

Table 1 shows results from the mixed-model ANOVA for self-reports. There was a significant main effect of target emotion (targeted happiness or sadness) on self-reported happiness, sadness, arousal, and valence. All effects were as expected and followed the direction of the target emotion (see Tables A1–A7 in the Supplemental Appendix for means and SE related to each main effect).

Table 1. Overview of statistical results for self-reported emotions.

Lyr: Lyrics; EmoTraj: Emotion Trajectory; Sel.: Selection (participant or experimenter); Em.: Target Emotion (happy or sad).

Note. For all of the above reported results, df model = 1 and df error = 28. An asterisk (*) indicates a significant p-value (p ≤ .05).

There was no significant main effect of lyrics or selection on any self-reported variables. There was a significant main effect of emotion trajectory on self-reported sadness, but not happiness, arousal, or valence. Participants in the sad-happy group rated sadness .6 points higher on average than the happy-sad group, across all stimuli.

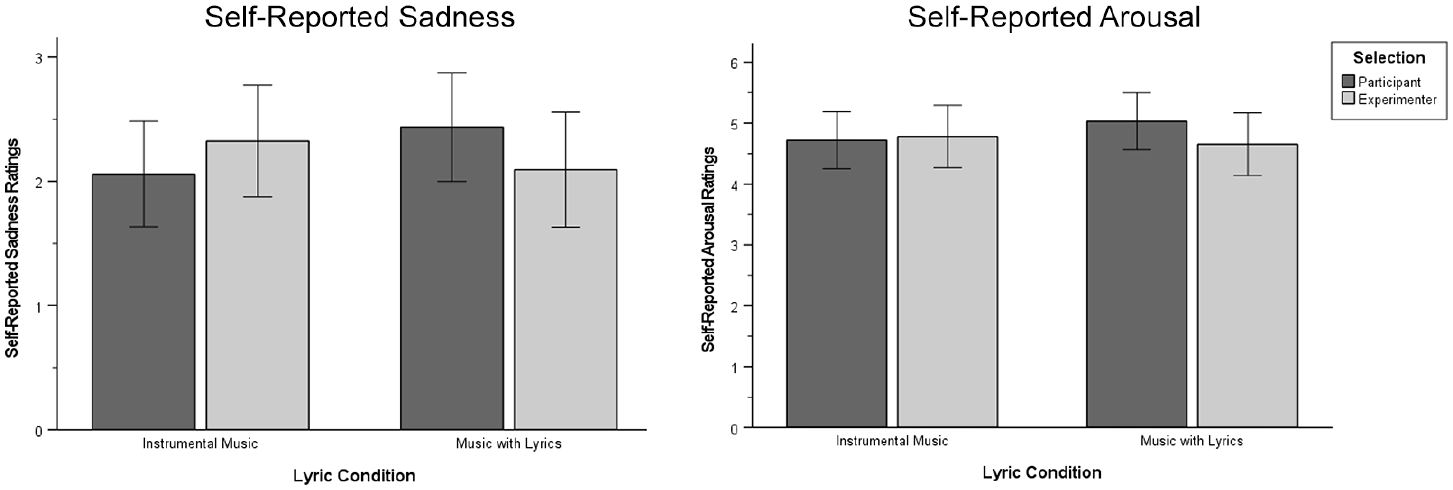

Selection and lyrics had a significant interaction effect for self-reported sadness and arousal. As Figure 2 shows, PS music with lyrics caused a mean difference of .3 higher sadness ratings than ES music with lyrics. This pattern was reversed for instrumental music, where ES music caused a mean difference of .3 higher sadness ratings than PS music. Figure 2 also shows mean differences of .4 higher arousal ratings for PS than ES music with lyrics, and .06 higher arousal ratings for ES than PS instrumental music. This particular interaction could not be confirmed with non-parametric tests, probably because of lack of statistical power. No other interactions were significant.

Figure 2. Interaction Effect between Selection and Condition.

Physiology

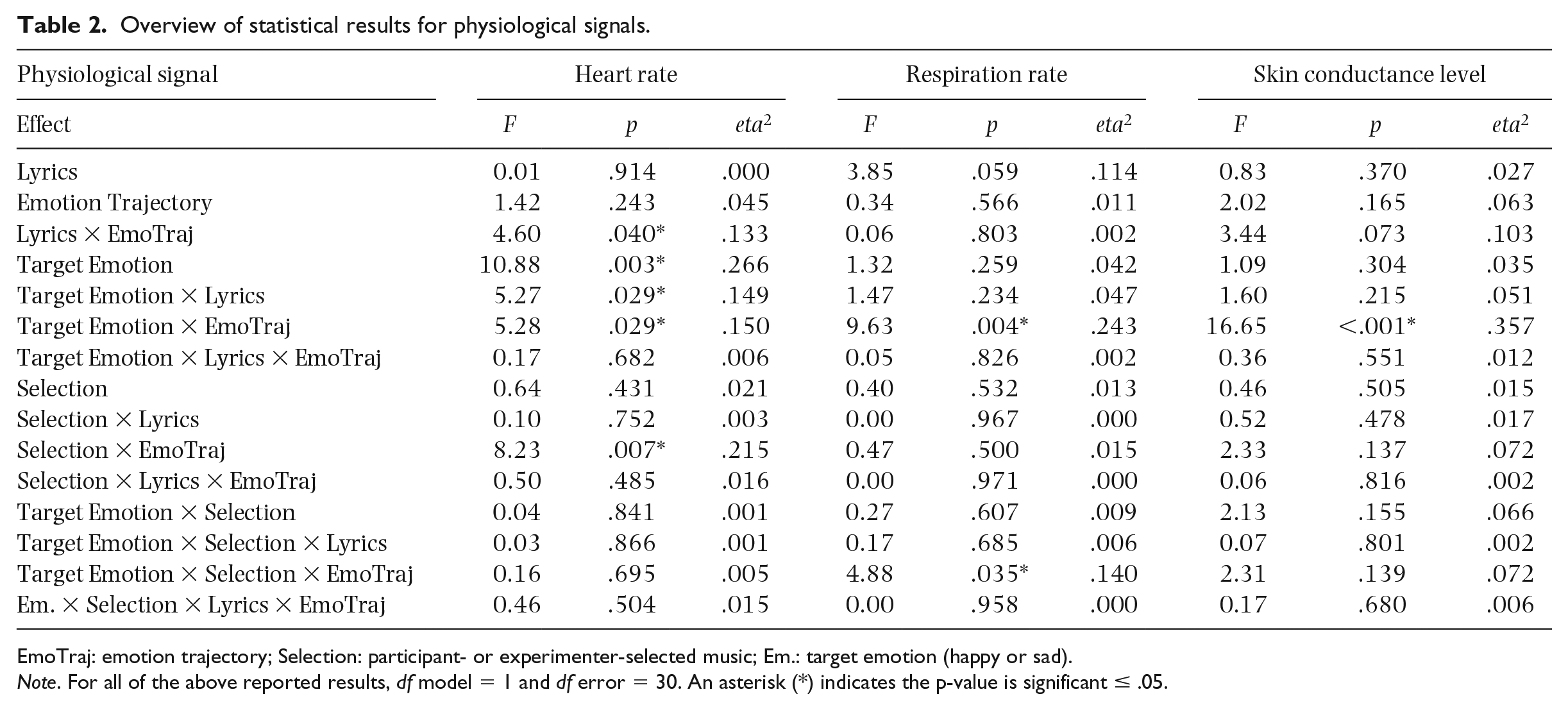

Table 2 displays results from the ANOVA for physiological measures. Target emotion had a significant main effect on HR, where HR was higher for happy music than sad music, a mean difference of 1.1 beats per minute. No other main effects were significant. However, many interaction effects were discovered.

Table 2. Overview of statistical results for physiological signals.

EmoTraj: emotion trajectory; Selection: participant- or experimenter-selected music; Em.: target emotion (happy or sad).

Note. For all of the above reported results, df model = 1 and df error = 30. An asterisk (*) indicates a significant p-value (p ≤ .05).

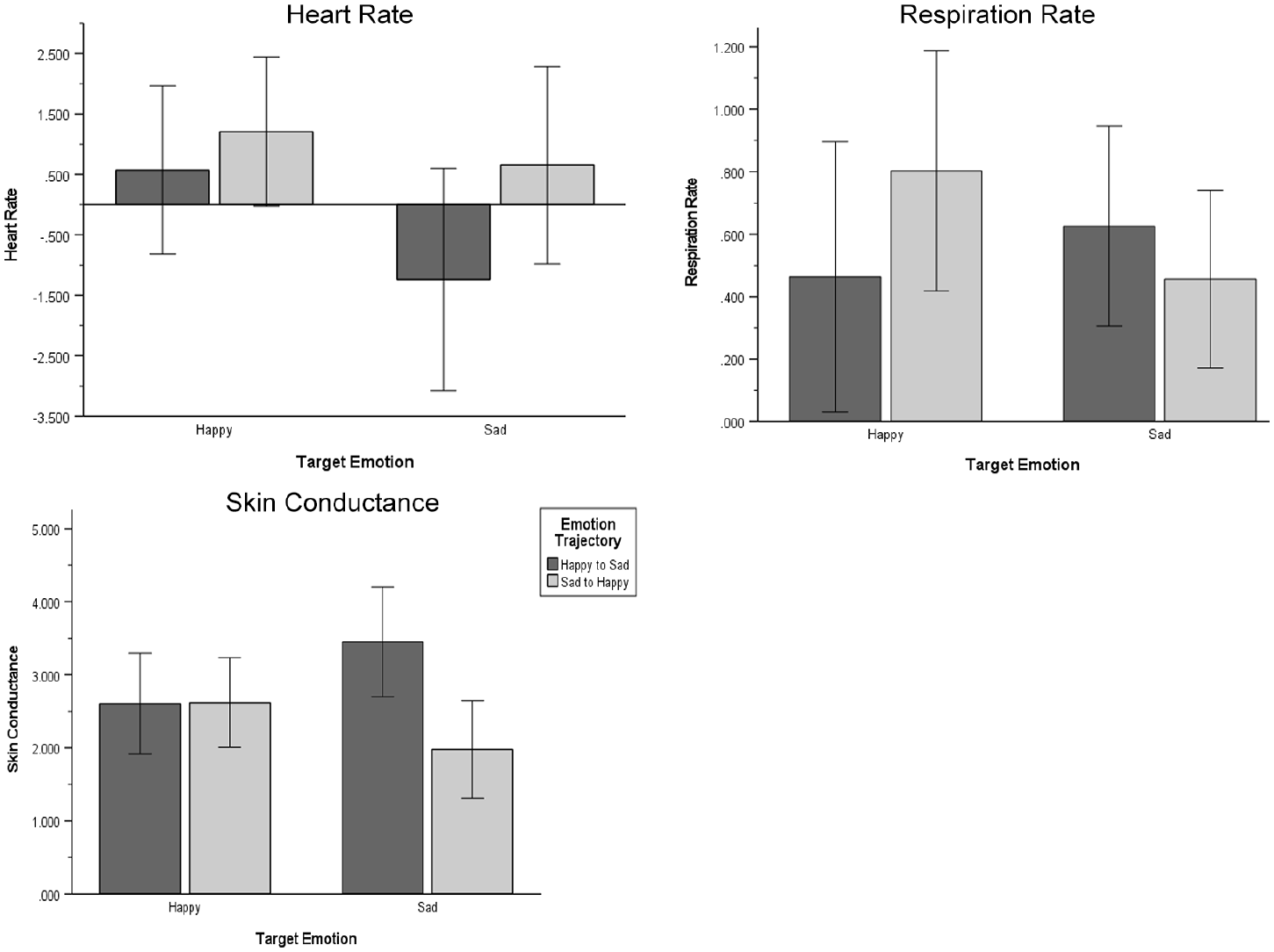

Figure 3 shows a significant interaction between target emotion and emotion trajectory for HR, RR, and SCL. The sad-happy group had mean HR differences of 1.9 beats per minute greater for sad music, and 0.6 beats per minute higher for happy music than the happy-sad group. For RR, the sad-happy group had a higher mean difference of 0.3 breaths per minute for happy music and a lower mean difference of 0.2 breaths per minute for sad music, compared to the happy-sad group. The happy-sad participants had a higher mean SCL difference of 1.47 micro-Siemens during sad music and a lower mean SCL difference of .01 micro-Siemens during happy music, than the sad-happy group.

Figure 3. Interaction Effects between Emotion Trajectory and Target Emotion.

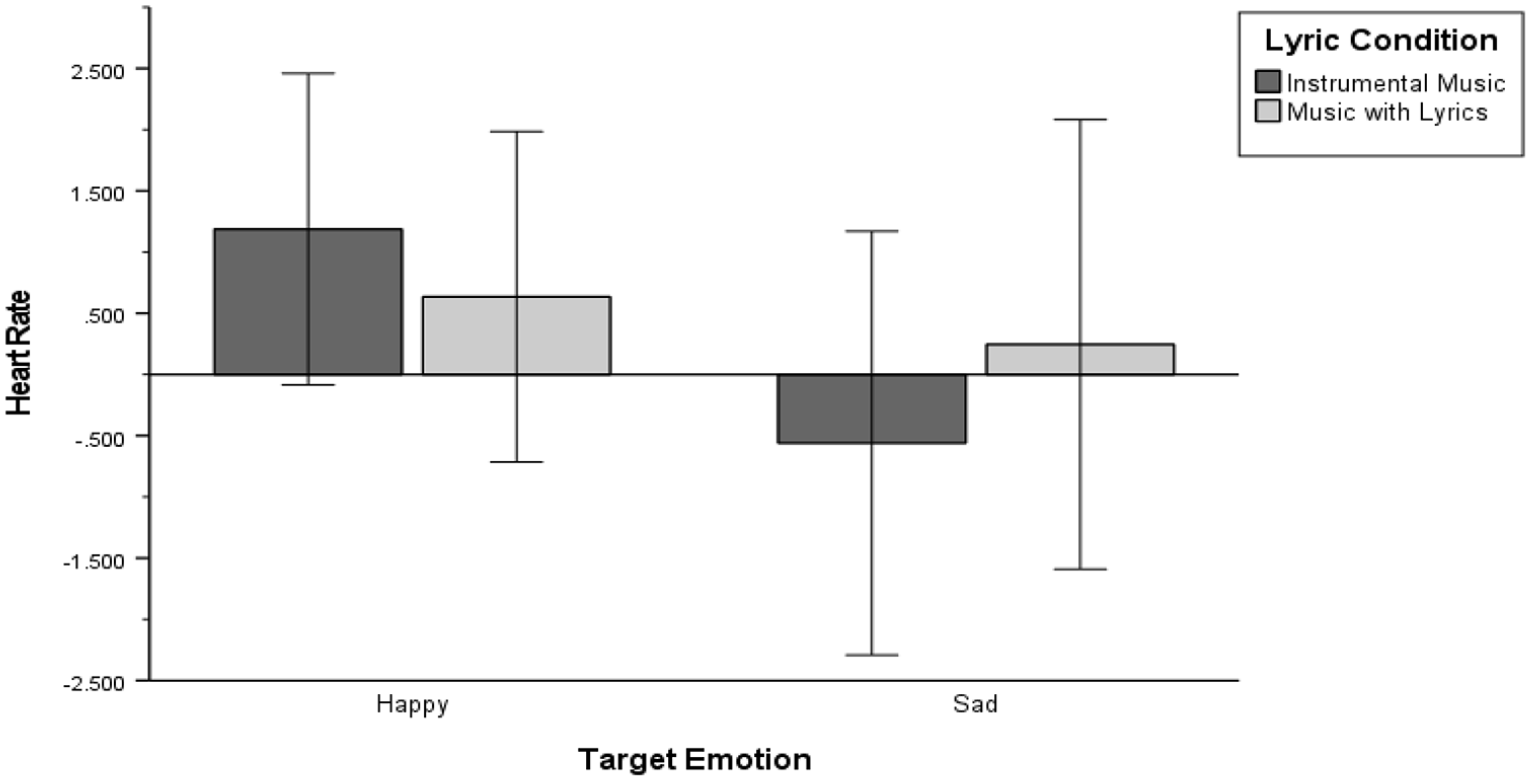

Target emotion and lyrics had a significant interaction for HR. As Figure 4 shows, both groups had their highest HR during happy music and lowest during sad, with the instrumental group having a mean HR difference 1.4 beats per minute higher than the lyric group overall.

Figure 4. Interaction Effect between Lyrics and Target Emotion.

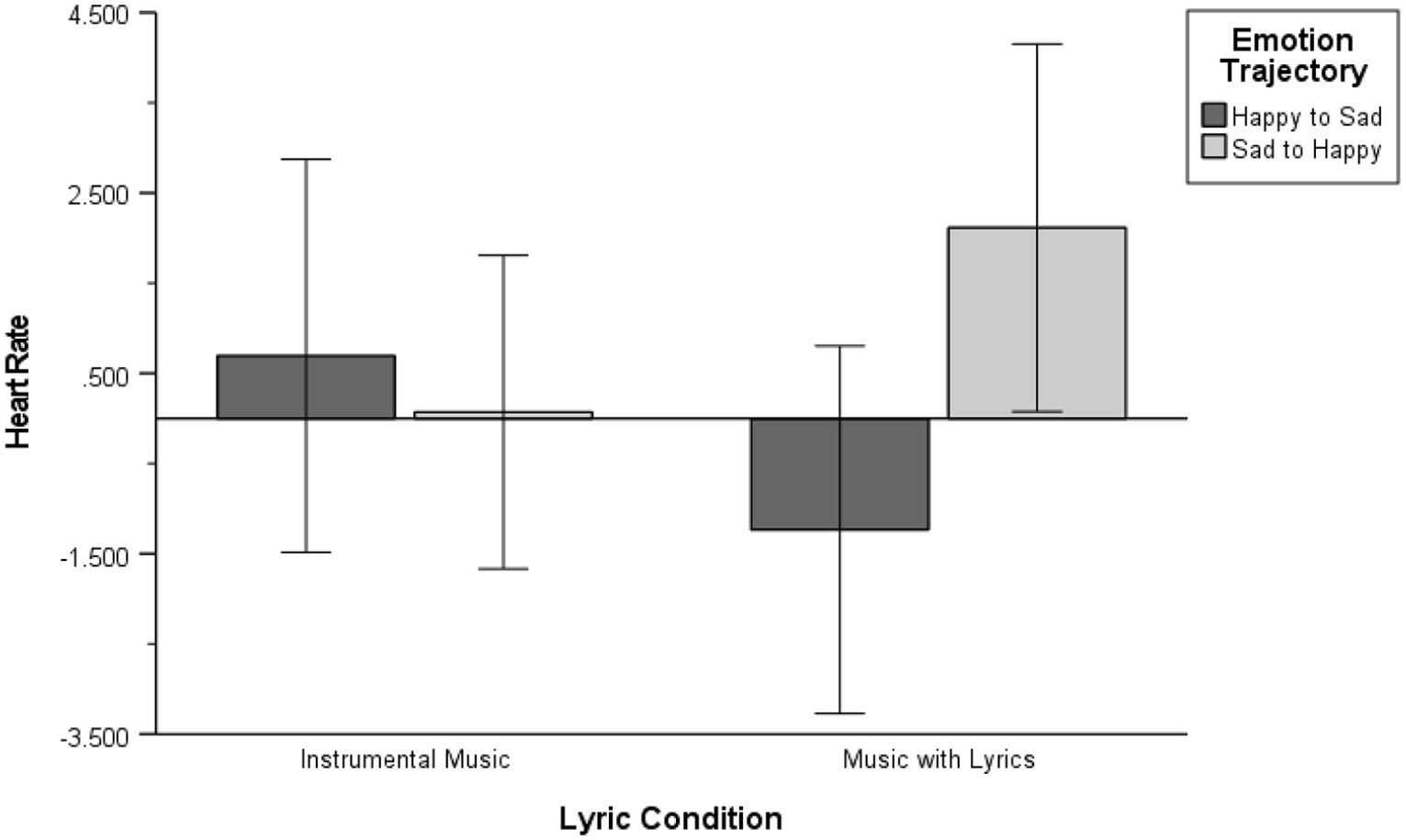

Figure 5 shows that emotion trajectory and lyrics had a significant interaction. The lyric group had a higher mean HR difference of 2.7 beats per minute than the instrumental group. Participants in the lyric group who heard the sad-happy trajectory had a mean HR difference of 3.3 beats per minute higher than the happy-sad lyric group. The happy-sad instrumental group had a mean HR difference of 0.6 beats per minute higher than the sad-happy instrumental group.

Figure 5. Interaction Effect between Lyrics and Emotion Trajectory.

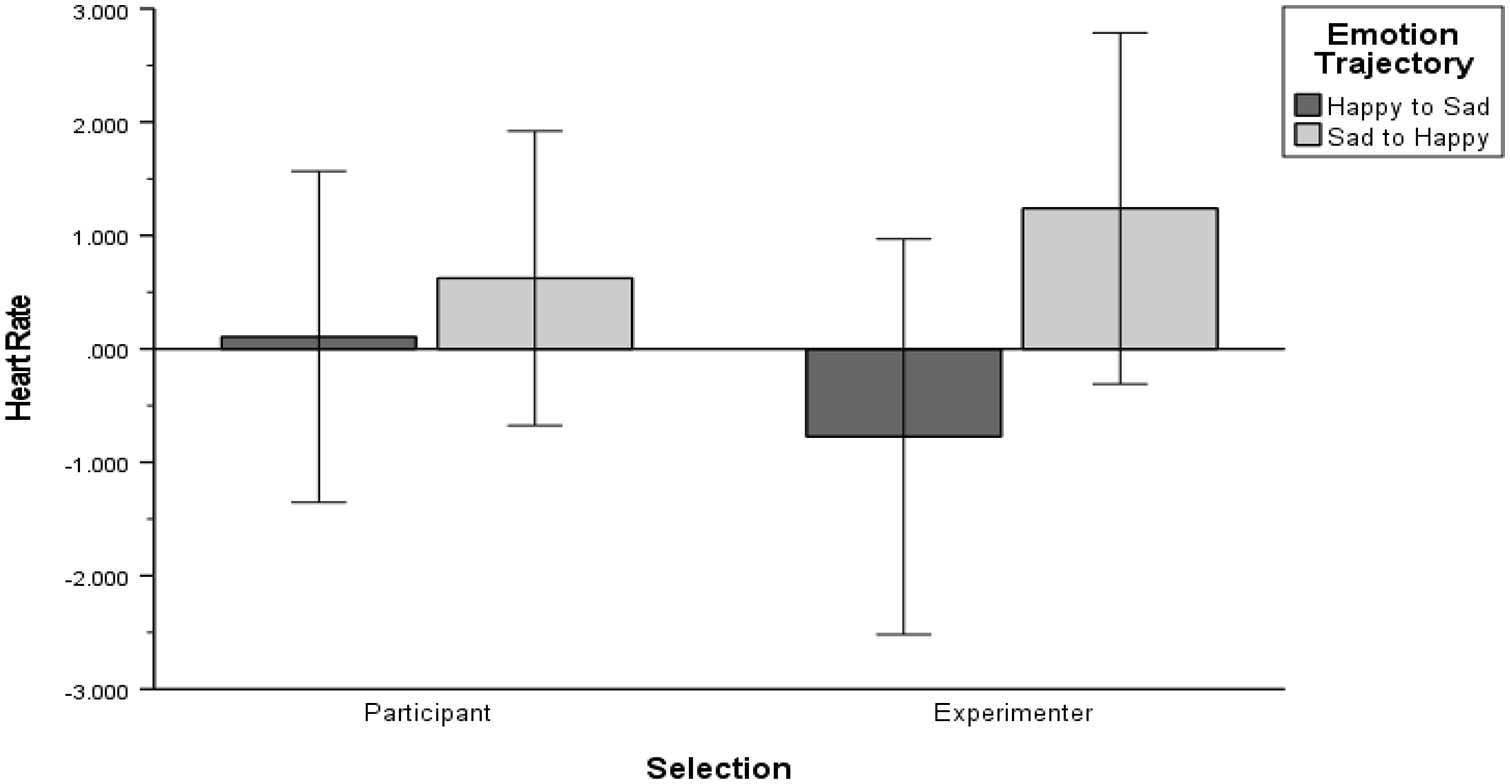

There was a significant interaction between selection and emotion trajectory on HR. Figure 6 shows that ES music had a mean HR difference of 1.5 beats per minute greater than PS music, where HR was highest for the sad-happy group and lowest for the happy-sad group during ES music.

Figure 6. Interaction Effect between Selection and Emotion Trajectory.

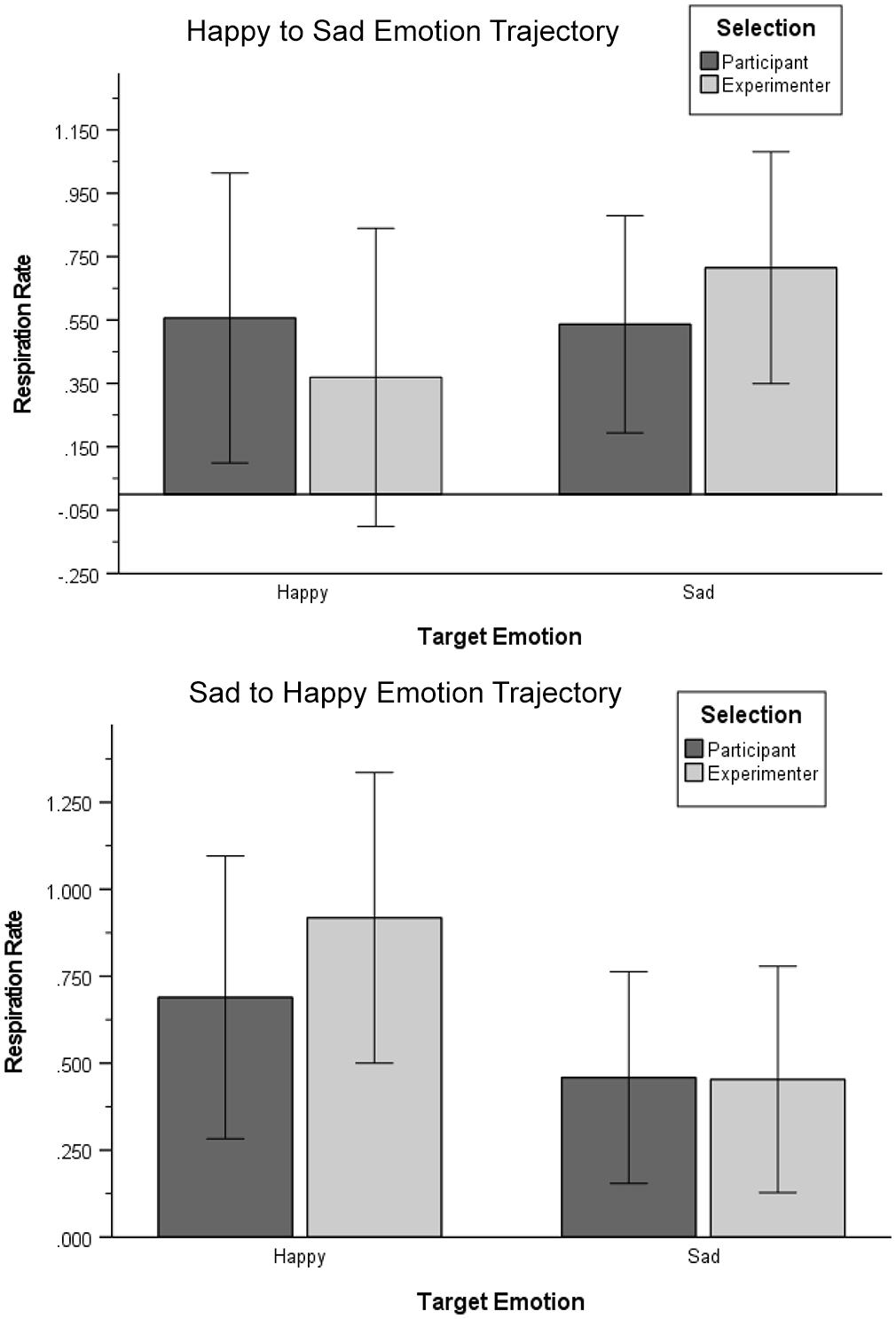

A significant three-way interaction was found between target emotion, selection, and emotion trajectory for RR. Follow-up separate two-way repeated measures ANOVAs revealed no significant simple two-way interaction effect of target emotion and selection for the happy-sad trajectory, F(1, 14) = 2.50, p = .136, r = .390; or the sad-happy trajectory, F(1, 18) = 2.93, p = .104, r = .374. As Figure 7 shows, the mean RR difference between the two trajectories was higher for happy music than sad music, where happy ES music had a greater mean RR difference of 0.4 breaths per minute compared to happy PS music, and sad ES music had a greater mean RR difference of 0.2 breaths per minute than sad PS music. The sad-happy group had the highest RR, and the happy-sad group had the lowest RR, both during happy ES music.
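The effect sizes reported for the follow-up tests can be approximated from the F statistics. The sketch below assumes the common conversion r = sqrt(F / (F + df_error)) for tests with one numerator degree of freedom; the text does not state which formula was used, so this is an illustration rather than a reconstruction of the original computation.

```python
import math

def effect_size_r(f_value, df_error):
    """Convert an F statistic with one numerator df into an effect size r.

    Assumed conversion: r = sqrt(F / (F + df_error)).
    """
    return math.sqrt(f_value / (f_value + df_error))

# F values and error degrees of freedom as reported for the two trajectories
r_happy_sad = effect_size_r(2.50, 14)
r_sad_happy = effect_size_r(2.93, 18)
```

Under this assumption, both values agree with the reported effect sizes to within rounding of the published F statistics.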

Figure 7. Three-Way Interaction Effect between Target Emotion, Selection, and Emotion Trajectory.

Discussion

Target emotion

Target emotion had significant main effects on self-reported emotions and HR. According to self-reports, it seems the target emotion was evoked since participants gave lower sadness, and higher happiness, arousal, and valence ratings for happy music. The main effect of target emotion on HR supports previous research where happy music (usually a higher arousal emotion) elicited a higher HR compared to sad music (Sokhadze et al., 2012), and also suggests the music successfully evoked the target emotion. Perhaps target emotion did not significantly affect RR or SCL because physiological signals do not always change as predicted. Indeed, Gomez and Danuser (2004) found that HR, RR, and SCL sometimes changed in different directions in response to the same music. Overall, since five variables changed in the expected directions based on target emotion, the musical stimuli seemed to successfully evoke both target emotions.

Lyrics

Lyrics did not significantly affect physiological or self-reported emotional responses, at least not in a simple way, as the presence of lyrics interacted with other variables. Perhaps ES stimuli (instrumental and lyric versions of the same song at similar tempos) were too much alike. Indeed, the same music with and without lyrics did not significantly alter exercise performance in a study by Sanchez et al. (2014). Two studies that reported main effects of lyrics on emotions used multiple genres (Ali & Peynircioğlu, 2006; Brattico et al., 2011). Although using only jazz eliminated a confounding variable, it could have made differences less distinguishable.

Participant-selected music

It was surprising that selection alone did not have a significant main effect on any variables, since PS music normally improves emotions significantly more than ES music (Lynar et al., 2017). Perhaps this was because participants enjoyed the experimenter’s music; many complimented it, and some unknowingly listed one ES stimulus for their PS music. In Gfeller and Coffman’s (1991) study, liking a piece correlated with self-reported mood; thus, if participants enjoyed ES music similarly to their own music, emotional responses could be similar. In addition, participants chose one ES stimulus if they knew both options. Although this prevented adverse memory associations, introducing choice for ES music likely obfuscated emotional response differences.

Emotion trajectory

There was a significant effect of emotion trajectory for self-reported sadness, where participants who heard sad music first had significantly higher sadness ratings than the group who heard happy music first. Sadness lingering then slowly fading supports the “tension-release” emotional experience theory (Russo et al., 2013, p. 7). This also supports the findings by Punkanen et al. (2011) that people with severe depression interpret music more negatively than people with mild depression. Intense sadness affects perceived musical emotions, and perceived and felt emotions often coincide (Schubert, 2013); perhaps this is why intense sadness lingered through two more pieces. Although emotion trajectory did not have a significant simple main effect for the other variables, it seems to play a meaningful role in affecting emotions since it was involved in many significant interaction effects.

Interaction effects

Emotion trajectory had significant two- and three-way interactions. Perhaps the sad-happy group had a higher HR and RR for ES music than the happy-sad group because beginning with sad music then shifting to happy music could be quite unexpected and arousing, especially for less-familiar ES music. Also, the happy-sad group had the lowest HR and highest SCL for sad music, and the sad-happy group had the highest HR and RR during happy music, and the lowest SCL during sad music. The HR and RR findings here suggest a contrastive valence effect, where happy music could sound exceptionally happy after hearing sad music (Huron, 2006). The SCL findings suggest more of a simple order effect, where SCL increases with musical exposure, and depends on the type of target emotion heard.

Lyrics also had many significant interaction effects. Participants who heard lyrics reported significantly higher sadness and arousal for PS than ES music. Music with lyrics has been found to evoke sad or negatively valenced emotions strongly (Ali & Peynircioğlu, 2006; Brattico et al., 2011). Perhaps music with lyrics is more personal and has more associations than instrumental music. Lyrics contain text, which could evoke memory associations, and memories can evoke strong emotions (Scherer, 2004). Interestingly, this study also found an interaction effect in which, within the lyric group, the happy-sad participants had a lower HR and the sad-happy participants had a higher HR. This supports the concept that music with lyrics is perhaps more personalized, and can strongly evoke sadness, as evidenced by self-reports and HR (higher physiological arousal). However, participants who heard instrumental music rated their sadness significantly higher for ES than PS music. Perhaps instrumental music has a more immediate effect on emotions, with all of its timbres, individual rhythms, and pitch contours coming together (Gabrielsson & Lindström, 2010). This study also found an interaction effect in which the instrumental group had significantly higher HRs for happy music and lower HRs for sad music, which were more extreme changes than for the lyric group. This could support the notion that instrumental music could quickly affect self-reports and HR when considering target emotion. These discussion points may be interesting questions to investigate in future research.

Overall, it seems many non-musical and musical variables interact to affect emotions, yet physiological variables do not always correlate or change in the expected direction. Perhaps the physiology varied because specific musical features (e.g., timbre, dynamics) affect physiology differently (Coutinho & Cangelosi, 2011), and because order effects are particularly strong for some physiological variables, like SCL. Also, participants were limited in self-reported emotion labels, and if they felt a slightly different emotion, their self-reports might not match their physiology. Nevertheless, these preliminary findings necessitate future studies with larger sample sizes to clarify the specific interactions.

Limitations

Even after diverse recruitment efforts, the majority of participants preferred music with lyrics. Although a potential confounding variable, this justifies further lyric research. Furthermore, laboratory access hours restricted participants to mostly students.

The restrictive nature of listening to music while being physiologically monitored in an unnatural laboratory limits this study’s generalizability. Replacing the few missing physiological data points with means affects the physiological data.
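To illustrate why mean replacement affects the physiological data, the following minimal sketch fills missing readings with the mean of the observed readings and compares the sample variance before and after. The heart-rate values are hypothetical, and the choice of which mean to use (here, the mean of one series) is an assumption, since the text does not specify how the replacement means were computed.

```python
from statistics import mean, variance


def impute_with_mean(values):
    """Replace None entries with the mean of the observed (non-missing) entries."""
    observed = [v for v in values if v is not None]
    fill = mean(observed)
    return [fill if v is None else v for v in values]


# Hypothetical heart-rate readings (beats per minute); None marks a missing point.
hr = [72.0, 75.0, None, 70.0, None, 78.0]

observed = [v for v in hr if v is not None]
imputed = impute_with_mean(hr)

print("observed variance:", variance(observed))
print("imputed variance: ", variance(imputed))
```

Because every filled value sits exactly at the mean, the imputed series keeps the same mean but shows less variability than the observed readings, which is one way mean replacement can influence later statistical tests.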

Using only jazz music limits generalizability since listeners may choose genres for specific emotional purposes (Cook et al., 2019). Allowing PS music from jazz subgenres introduced heterogeneity and improved generalizability, yet limited internal validity. The ES stimuli were selected from original audio recordings to promote external validity and accurately reflect pre-1959 jazz. Although the ES lyric and instrumental versions had tempos and keys identical or similar to those of their counterparts, they sometimes differed slightly in instrumental arrangement, which could have affected emotional responses. Nevertheless, original audio recordings seemed to provide more externally valid musical stimuli, which is imperative when trying to evoke musical emotions (Eerola & Vuoskoski, 2013).

To avoid adverse memory associations and aim to elicit strong emotional responses, participants familiar with ES stimuli were asked which piece evoked the target emotion more strongly. These demand characteristics could have affected this study’s results.

Conclusion

This study found significant effects for target emotion and emotion trajectory, and several interaction effects for both self-reported and physiological responses. The emotion trajectory findings suggest sadness lingers when measured subjectively, and perhaps HR and RR more closely follow the contrastive valence concept (Huron, 2006). The lyric findings suggest music with lyrics could elicit more self-reported sadness and arousal for PS music due to its personalized associations, and perhaps instrumental music has a quicker effect on HR and self-reports due to its combinations of musical features. Although there are many contributing factors, these results highlight the importance of emotion trajectory and the presence or absence of lyrics for emotional processing in music listening. The potential implications warrant future investigation.

Future research should explore how different genres with lyrics affect emotional responses, since people choose different genres to regulate mood (Greasley et al., 2013). Furthermore, future studies could test music with lyrics by comparing listeners’ emotional responses to the same version of a song with a vocalist singing the lyrics, then the instrumental version with that vocal track removed.

Although this study found that PS music alone affected emotional responses similarly to ES music, a systematic review found live patient-preferred music was so beneficial it improved immunocompromised patients emotionally and physiologically (Silverman et al., 2016). Although many studies advocate for PS music, more studies should compare it directly to ES music to better understand its impact.

The sad-happy emotion trajectory made participants subjectively feel significantly sadder after listening to happy music. Music therapists utilizing the iso principle to help people with depression transition and improve emotions should consider this finding. Future studies could explore these emotion trajectories over a longer duration, and later introduce other emotions.

The numerous interaction effects confirm that the independent variables indeed interact to affect felt emotions in listeners. Future research should explore combinations of these and other variables, and test whether preferred music with lyrics indeed causes personalized emotions, and whether instrumental music evokes physiologically felt emotions more quickly.

Understanding musical emotions has serious implications for the general population and those needing mental health support. Different emotional states and psychological disorders affect the music people choose and how it affects them (McFerran, 2016). Future studies should thoughtfully consider these different factors and their interactions to better inform research in music psychology and clinical practice in music therapy.

Supplemental Material

sj-pdf-1-pom-10.1177_03057356211024336: Supplemental material for The emotion trajectory of self-selected jazz music with lyrics: A psychophysiological perspective by Ashley Warmbrodt, Renee Timmers and Rory Kirk in Psychology of Music

sj-sav-1-pom-10.1177_03057356211024336: Supplemental material for The emotion trajectory of self-selected jazz music with lyrics: A psychophysiological perspective by Ashley Warmbrodt, Renee Timmers and Rory Kirk in Psychology of Music

