Abstract

This study examined the emotion recognition patterns as a function of facial expression speed and intensity by using dynamic facial expression stimuli. Ninety-six university students participated in an emotion identification task, viewing computer-presented facial expressions depicting six basic emotions. Each emotion was morphed to vary across four intensity levels (20%, 40%, 60%, and 80%) and four facial expression speeds (250 ms, 500 ms, 750 ms, and 1,000 ms). A repeated-measures ANOVA was conducted to compare the effects of emotion, intensity, and facial expression speed on accuracy, false alarm rate, sensitivity, and response time. Significant two-way and three-way interactions were observed. Simple effects analyses revealed that each emotion demonstrated distinct optimal combinations of intensity and facial expression speed for accurate, sensitive, and fast recognition. Furthermore, recognition was optimized when expression speed matched intensity within each intensity level: low-intensity expressions were better recognized at faster speeds, while high-intensity expressions were better recognized at slower speeds. These findings suggest that emotion recognition varies systematically with specific combinations of facial expression speed and intensity, highlighting the importance of dynamic features in understanding facial emotion perception.

Keywords

Introduction

Everyday emotional expressions vary greatly in their intensity and speed. Nonetheless, past studies on emotion recognition have mostly used static facial expression stimuli (SFES), which arguably have low ecological validity. To overcome this shortcoming, the use of dynamic facial expression stimuli (DFES) has gradually gained popularity in emotion recognition research. Recent studies revealed that DFES are not only ecologically valid but also yield better emotion recognition performance than their static counterparts (Krumhuber et al., 2013). However, few studies using DFES have investigated the effects of intensity and facial expression speed, which are critical features of dynamic facial expressions. Furthermore, the studies that did include these factors treated them separately, thus failing to account for their potential interaction effects on emotion recognition. This study, therefore, sought to examine the patterns of emotion recognition that vary according to intensity, facial expression speed, and their interactions in dynamic facial expression stimuli.

Dynamic stimuli are more advantageous for identifying emotions than static stimuli, as they reflect human sensitivity to spatio-temporal information. Motion over time guides attention to the face and helps observers to process how an expression unfolds, rather than simply adding more static snapshots within a given time (Bould & Morris 2008; Bould et al., 2008; Dobs et al., 2018). For example, Ambadar and colleagues (2005) inserted visual-noise masks between successive frames of dynamic stimuli to disrupt the perception of motion while preserving the total amount of image information. They found that performance dropped to a level comparable to single static stimuli. Also, recognition depended on the natural temporal ordering of facial-action components, and disrupting this order impaired recognition performance (e.g., Jack et al., 2014; Jack & Schyns, 2015). This evidence implies that the advantage of DFES does not come merely from the greater quantity of visual input. Furthermore, dynamic stimuli bring out representational momentum that makes an expression perceived as more intense (Yoshikawa & Sato, 2008). Compared with the performance on emotion recognition tasks using SFES, those using DFES showed higher accuracy and faster response time (Calvo et al., 2016), higher arousal and intensity perception (Sato & Yoshikawa, 2007), and even more activation in brain areas responsible for social cognition and emotion processing (Arsalidou et al., 2011).

Importantly, not all dynamic stimuli have the same degree of ecological validity. Video recordings of actual human faces capture spontaneous and natural facial movements and offer high ecological validity, but they severely limit experimental control, for example over facial motion parameters. Point-light face stimuli, which depict only moving markers corresponding to key facial features, reverse this trade-off. They allow precise manipulation of movement while sacrificing naturalness. Image-based morphing has emerged as a compromise approach, linearly morphing between neutral and peak facial expressions to create the impression of movement. Although morphs have been criticized for their linear trajectories that may not capture the idiosyncratic movement of real expressions (Korolkova, 2018), they enable fine-grained manipulation of parameters such as intensity and velocity, while better preserving natural facial form than point-light techniques (Dobs et al., 2018).

This methodological leverage allowed researchers to examine how quickly expressions should unfold to facilitate recognition. Indeed, previous literature has suggested that facial expression speed is one of the key determinants of perceived naturalness and recognition accuracy. Hoffmann and his colleagues (2010) have found that the speed of facial expression underlies human sensitivity to dynamic stimuli. Each emotion has a different optimal facial expression speed at which it is perceived as natural and recognized the most accurately (Hoffmann et al., 2010; Recio et al., 2013; Sato & Yoshikawa, 2004). For example, studies (Hoffmann et al., 2010; Kamachi et al., 2013) have found that sadness was considered to be most natural and recognized the most accurately when it was presented at slow speed (over 1 s). Furthermore, Kamachi et al. (2013) demonstrated that anger was recognized the most accurately when it was presented at medium speed (0.87 s), and then happiness and surprise at fast speed (0.2 s). Consistent with these findings, Sowden and colleagues (2021) observed that actors’ natural productions of anger and happiness unfold faster than sadness. Using the point-light technique to manipulate the original stimulus speed, they found that recognition of anger and happiness improved when sped up, whereas slowing down improved the recognition of sadness, suggesting that exaggerating an emotion's characteristic speed can facilitate recognition.

Intensity of facial expressions also plays a crucial role in emotion recognition. While emotional expressions can be very strong in some situations, people often show more subtle forms of expression. There have been several attempts to examine the difference between partial and full-intensity expressions using dynamic stimuli (Kessels et al., 2014; Recio et al., 2014; Wingenbach et al., 2016). Studies on how the intensity of expression influences emotion recognition found not only increased accuracy with higher intensity but also an interaction between intensity and type of emotion. For example, Calvo and his colleagues (2016) revealed that each emotion had a different recognition threshold, defined as the lowest intensity at which the emotion is identified above chance level: 20% intensity for happiness, 40% for sadness, surprise, anger, and disgust, and 50% for fear. They also found that the advantage of intensity on the recognition threshold of each emotion was more distinctive in DFES than in SFES. For instance, fear in SFES required higher intensity to be recognized above the chance level compared with that in DFES (60% vs. 50%, respectively).

While the above evidence suggests that both facial expression speed and intensity of emotional expressions influence emotion recognition patterns, only a few studies on these variables have been carried out with DFES thus far. Furthermore, to the best of our knowledge, only one study attempted to investigate facial expression speed and intensity together, although these two variables are expected to have interaction effects on the identification of DFES. Foisy et al. (2007) examined the impaired recognition ability in alcoholics according to two levels of expression intensities (30% vs. 70%) and durations (250 ms vs. 1,000 ms). However, the task was relatively easy because it used SFES without examining multiple levels of each variable. Lastly, most of the studies reported only accuracy and response time. However, the false alarm rate, which is the tendency to misidentify facial expression stimuli as a certain emotion, is likely to influence accuracy. For example, Calvo et al. (2016) found that surprise has high scores on both accuracy and false alarm. This indicates that while people identified surprise correctly in general, they also tended to categorize non-surprise faces as surprise, which might have inflated its accuracy score. This might bias the interpretation of the conditions that influence sensitive emotion recognition. To address these issues, this study investigated how emotion intensity and facial expression speed of six basic emotions affect accuracy, false alarm rates, sensitivity, response time, as well as the type of errors during emotion recognition by using DFES.

Method

Participants

Ninety-six native Korean-speaking university students in Gwangju, South Korea, participated in the experiment through an undergraduate research pool in exchange for course credit. There were 30 (31.3%) males and 66 (68.8%) females, and their average age was 20.3 (

Stimuli

The photographs of six emotional expressions (anger, disgust, fear, happiness, sadness, and surprise) from the Japanese Female Facial Expression (JAFFE: Lyons et al., 2020; Lyons, 2021) database were selected through a pilot study. At the initial stage, a pool of 50 pictures with readily identifiable emotional expressions was chosen by the researchers for further evaluation. Then, a total of 69 participants, consisting of university students, rated the perceived intensity of the six emotions appearing in each picture based on a scale of 0 (not intense at all) to 5 (most intense). The mean scores of intensities for each emotion perceived from each picture were thereby obtained. For the experimental stage, the faces that were perceived to be most typical of the given emotion were selected based on (1) relatively high mean intensity score for the target emotion and (2) less confusion with other emotions, as calculated by the difference between the mean intensity scores of the target emotion and non-target emotions. An exception was the expression of fear. Only the target emotion's perceived intensity was used as the selection criterion, because it had relatively small difference between the mean intensity scores of surprise and disgust. Through such a process, we finally derived four faces for each of the six basic emotions. The mean score of perceived intensity of target emotion in these 24 faces was 3.47 (

By using FantaMorph Software, Version 5.6.2 Deluxe (Abrosoft, Beijing, China), these photographs were subjected to morphing, where a neutral face progressively turned into an emotional face. For each emotional expression stimulus, we created a sequence of 60 frames progressively changing in equal gradients from a neutral face to the full-blown emotional face. From each sequence, we selected four frames: Frames 12, 24, 36, and 48, which represented, respectively, 20%, 40%, 60%, and 80% intensity in the development of the emotional expression. The 100% intensity level was discarded because a ceiling effect was expected. After creating video clips of 1 s at 60 frames with various intensities, we adjusted their facial expression speed. To set the range of facial expression speed, we considered the fact that micro-expressions take 260 ms on average as onset time (Yan et al., 2013) and that prototypical expressions take 1,000 ms to form on average (Pollick et al., 2003). Accordingly, we set 250 ms as the shortest and 1,000 ms as the longest expression duration. Video clips of 250 ms, 500 ms, 750 ms, and 1,000 ms in length were thus constructed, with the target intensity reached at the last frame. In total, 384 video clips were used as experimental stimuli (4 actors × 6 emotions × 4 intensities × 4 facial expression speeds) (see Figures S1 and S2).
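The frame-to-intensity mapping and the factorial stimulus count described above can be checked with a short sketch. The actor labels and the field names are hypothetical, and the rate of change (percent intensity per second) is a derived quantity computed here only for illustration; it is not a measure reported in the study.

```python
from itertools import product

TOTAL_FRAMES = 60                      # frames from neutral to full-blown expression
PEAK_FRAMES = (12, 24, 36, 48)         # frames selected as clip end points
DURATIONS_MS = (250, 500, 750, 1000)   # clip lengths used as "speed" levels
EMOTIONS = ("anger", "disgust", "fear", "happiness", "sadness", "surprise")
ACTORS = (1, 2, 3, 4)                  # hypothetical labels for the four selected faces


def frame_to_intensity(frame, total_frames=TOTAL_FRAMES):
    """Percent intensity reached at a frame of a linear (equal-gradient) morph."""
    return 100 * frame / total_frames


def build_stimuli():
    """Enumerate every actor x emotion x intensity x duration combination."""
    stimuli = []
    for actor, emotion, frame, duration_ms in product(
        ACTORS, EMOTIONS, PEAK_FRAMES, DURATIONS_MS
    ):
        intensity = frame_to_intensity(frame)
        stimuli.append(
            {
                "actor": actor,
                "emotion": emotion,
                "intensity_pct": intensity,
                "duration_ms": duration_ms,
                # Derived for illustration only: average rate of change,
                # assuming the target intensity is reached at the last frame.
                "rate_pct_per_s": intensity / (duration_ms / 1000),
            }
        )
    return stimuli


stimuli = build_stimuli()
print(len(stimuli))  # 4 actors x 6 emotions x 4 intensities x 4 durations
```

Under these assumptions, the slowest change is 20% intensity over 1,000 ms and the fastest is 80% over 250 ms, a 16-fold range in the derived rate, which illustrates why intensity and clip duration are not independent descriptions of how fast a face moves.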

Procedure

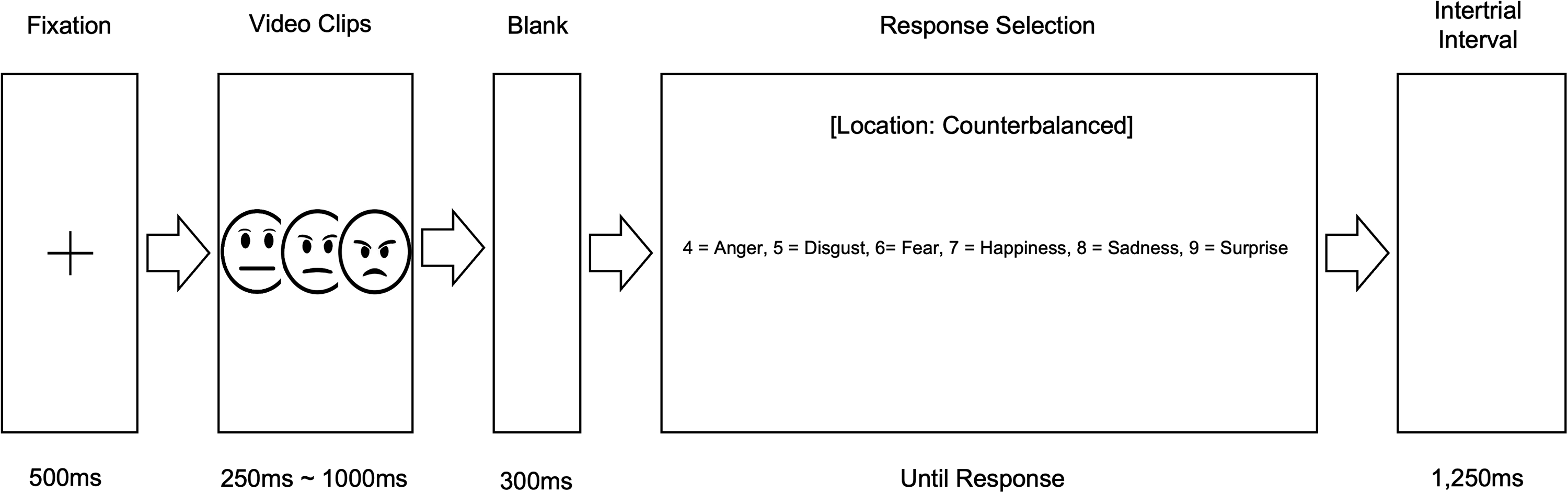

The facial stimuli were shown on a computer screen with E-Prime 2.0 software (Psychological Software Tools, Pittsburgh, PA). Participants were told that video clips of faces would be randomly presented with different emotions, intensities, and facial expression speeds. They were requested to identify and categorize the emotions by pressing one of the six keys and to respond as quickly and accurately as possible. The assignment of emotions to numbers was counterbalanced across participants (but kept unchanged across trials). For categorizing each emotion, the participants pressed one of the number keys (from 4 to 9) in the upper row of a laptop keyboard. We informed the participants about the six basic emotions and held a 10-min practice session for them to become used to the location of the respective keys. We used pictorial representations of facial expressions (emoticons) for the practice session.

The sequence of events on each trial can be seen in Figure 1. After an initial 500 ms central fixation cross on a screen, a video clip of different facial expression speeds appeared. The face subtended a visual angle of 9.5° (Height) × 6.5° (Width) at a 50 cm viewing distance. After the face offset, a 300 ms blank interval was given. Instructions for the response were then displayed on the screen. In the response screen, six numbers (from 4 to 9) were shown horizontally, with each number associated with a verbal label of an emotion category (e.g., 4: anger; 5: disgust). Before the new sequence began, a 1,250-ms intertrial interval was given. The participants were given a 1-min break halfway through the 384 trials. These procedures were similar to those used previously by Calvo and his colleagues (2016).
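For readers who want to reproduce the display geometry, the reported visual angles can be converted to on-screen size with the standard relation size = 2 · d · tan(θ / 2). The function name below is hypothetical, and the resulting sizes are derived values, not figures stated in the study.

```python
import math


def size_from_visual_angle(angle_deg, distance_cm):
    """Physical extent (cm) that subtends `angle_deg` at `distance_cm`."""
    return 2 * distance_cm * math.tan(math.radians(angle_deg) / 2)


VIEWING_DISTANCE_CM = 50  # viewing distance reported in the procedure

height_cm = size_from_visual_angle(9.5, VIEWING_DISTANCE_CM)
width_cm = size_from_visual_angle(6.5, VIEWING_DISTANCE_CM)
print(f"face height: {height_cm:.2f} cm, width: {width_cm:.2f} cm")
```

At a 50 cm viewing distance this corresponds to a face roughly 8.3 cm high and 5.7 cm wide on the screen.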

Figure 1. Sequence of events in the emotion recognition task.

The visual presentation of the experimental procedure and stimulus presentation is provided in Figure S3. The stimulus set consisted of 6 emotions varied in the combination of intensity (20%, 40%, 60%, and 80%) and facial expression speed (250 ms, 500 ms, 750 ms, and 1,000 ms) for a total of 96 stimuli for each of the four actors (384 stimuli). After the practice session, each participant was presented with 32 blocks, each containing the six emotions in random order, with the stimuli drawn randomly without replacement from the pool of stimuli of the first two actors. Two stimuli from the same emotion category were not allowed to be presented consecutively across block boundaries. Upon completing the initial 32 blocks, the participants were given a 1-min break and then proceeded to the latter part of the experiment, consisting of 32 blocks of emotion stimuli from actors 3 and 4. This stimulus selection process was repeated for each participant.
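The blocked randomization described above can be sketched as follows. This is a minimal illustration under stated assumptions, not the authors' E-Prime implementation: the actor labels, tuple layout, and function name are hypothetical, and the constraint is implemented by reshuffling a block whenever its first emotion matches the last emotion of the preceding block.

```python
import random
from itertools import product

EMOTIONS = ["anger", "disgust", "fear", "happiness", "sadness", "surprise"]
INTENSITIES = [20, 40, 60, 80]
DURATIONS_MS = [250, 500, 750, 1000]


def make_half(actors, rng):
    """Build one half of the session as blocks of six trials, one per emotion."""
    # One shuffled pool per emotion; stimuli are drawn without replacement.
    pools = {
        emotion: [
            (actor, emotion, intensity, duration)
            for actor, intensity, duration in product(actors, INTENSITIES, DURATIONS_MS)
        ]
        for emotion in EMOTIONS
    }
    for pool in pools.values():
        rng.shuffle(pool)

    n_blocks = len(actors) * len(INTENSITIES) * len(DURATIONS_MS)  # 32 for two actors
    trials = []
    for _ in range(n_blocks):
        order = EMOTIONS[:]
        rng.shuffle(order)
        # Avoid the same emotion on both sides of a block boundary.
        while trials and order[0] == trials[-1][1]:
            rng.shuffle(order)
        trials.extend(pools[emotion].pop() for emotion in order)
    return trials


rng = random.Random(0)  # fixed seed only so the sketch is reproducible
session = make_half([1, 2], rng) + make_half([3, 4], rng)
print(len(session))  # 2 halves x 32 blocks x 6 trials
```

Because every block contains each emotion exactly once, the boundary check is the only place where a repeat could occur, so a simple reshuffle is enough to satisfy the constraint.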

Measures

Hit rate (probability of correctly endorsing the target emotion when it is presented), false alarm rate (probability of endorsing a certain emotion when other emotions are presented), and response time (RT; from the offset of the 300 ms interval) were measured as dependent variables. For example, the hit rate for fear is the tendency to accurately categorize fear stimuli as fear, which represents accuracy. The false alarm rate for fear is the tendency to falsely categorize non-fear stimuli (i.e., anger, disgust, happiness, surprise, and sadness) as fear. We also examined the type of errors, that is, the probability of each incorrect response, which is distinct from the false alarm rate (see Figure S4). Furthermore, we assessed sensitivity using the nonparametric
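The hit and false alarm definitions above can be illustrated with a small sketch. The sentence describing the sensitivity index is truncated in this text, and the table captions refer to A′; the `a_prime` function below therefore assumes the commonly used nonparametric A′ formula, which may differ from the exact variant the authors applied. The function names and the toy responses are hypothetical.

```python
def hit_and_false_alarm(trials, target):
    """Return (hit rate, false alarm rate) for one target emotion.

    `trials` is a list of (presented_emotion, response) pairs.
    """
    target_responses = [resp for shown, resp in trials if shown == target]
    other_responses = [resp for shown, resp in trials if shown != target]
    hit = sum(resp == target for resp in target_responses) / len(target_responses)
    false_alarm = sum(resp == target for resp in other_responses) / len(other_responses)
    return hit, false_alarm


def a_prime(hit, false_alarm):
    """Nonparametric sensitivity A' (assumed formula; 0.5 = chance, 1.0 = perfect)."""
    if hit == false_alarm:
        return 0.5
    if hit > false_alarm:
        diff = hit - false_alarm
        return 0.5 + (diff * (1 + diff)) / (4 * hit * (1 - false_alarm))
    diff = false_alarm - hit
    return 0.5 - (diff * (1 + diff)) / (4 * false_alarm * (1 - hit))


# Toy data: 4 fear trials (3 correct) and 8 non-fear trials (1 labeled "fear").
toy_trials = [
    ("fear", "fear"), ("fear", "fear"), ("fear", "surprise"), ("fear", "fear"),
    ("surprise", "fear"), ("surprise", "surprise"), ("anger", "anger"),
    ("anger", "disgust"), ("disgust", "disgust"), ("happiness", "happiness"),
    ("sadness", "sadness"), ("sadness", "sadness"),
]
hit, false_alarm = hit_and_false_alarm(toy_trials, "fear")
print(hit, false_alarm, round(a_prime(hit, false_alarm), 3))
```

The toy data show why accuracy alone can mislead: an emotion label that is chosen liberally gains hits and false alarms together, and a sensitivity index that combines both rates penalizes that tendency.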

Statistical Analysis

We used repeated-measures ANOVA with a sphericity correction to compare the effects of emotions (anger, disgust, fear, happiness, sadness, and surprise), intensities (20%, 40%, 60%, and 80%), and facial expression speeds (250 ms, 500 ms, 750 ms, and 1,000 ms). Also, to analyze the type of errors, mixed-design ANOVA with 4 (intensity) × 4 (facial expression speed) as between-factors and 5 (nontarget emotions) as a within-factor was conducted for each target expression. To further examine the interaction effects, simple effect analysis was conducted on hit rate, false alarm rate,

Transparency and Openness

We report how we determined our sample size, data exclusions, all manipulations, and all measures in the study, and we follow JARS (Kazak, 2018). All the data is available at the link stated in the data availability section of this paper (Kim, 2022). For copyright reasons, the JAFFE Database is available only at [https://www.kasrl.org/jaffe_download.html]. Furthermore, as these stimuli are deeply linked to the experimental task, the task can be requested from the corresponding author only if users are granted permission to use the stimuli from the copyright holders. IBM SPSS Statistics for Windows, Version 23.0, was used for all statistical analyses (IBM SPSS, Armonk, NY). This study's design and its analysis were not pre-registered.

Statement of Research Ethics

The study procedures were carried out in accordance with the Declaration of Helsinki. The university research ethics committee reviewed and approved the design of the study. All participants were informed about the study, and all provided written informed consent for both participation and publication of this study prior to any research procedures.

Results

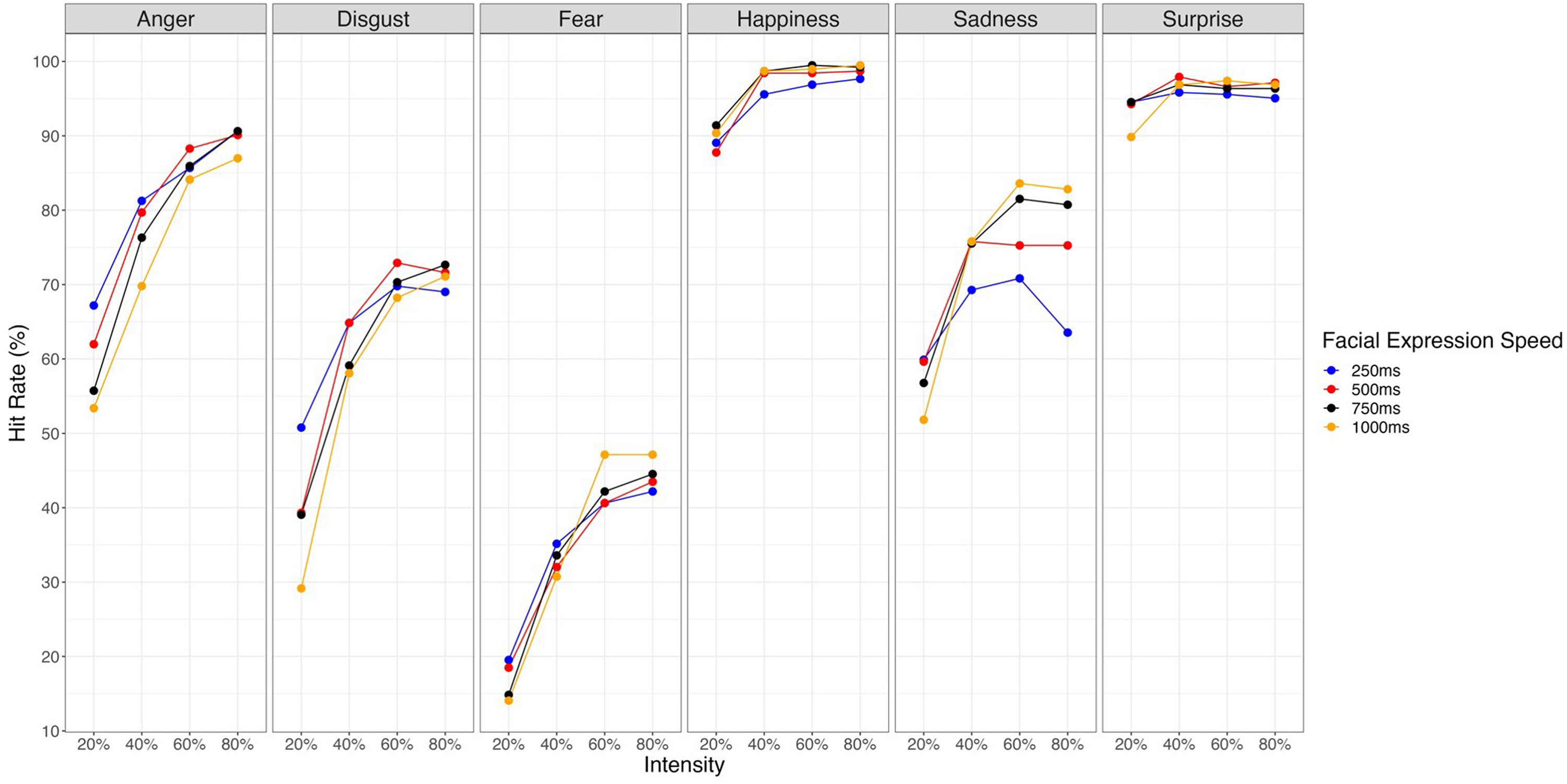

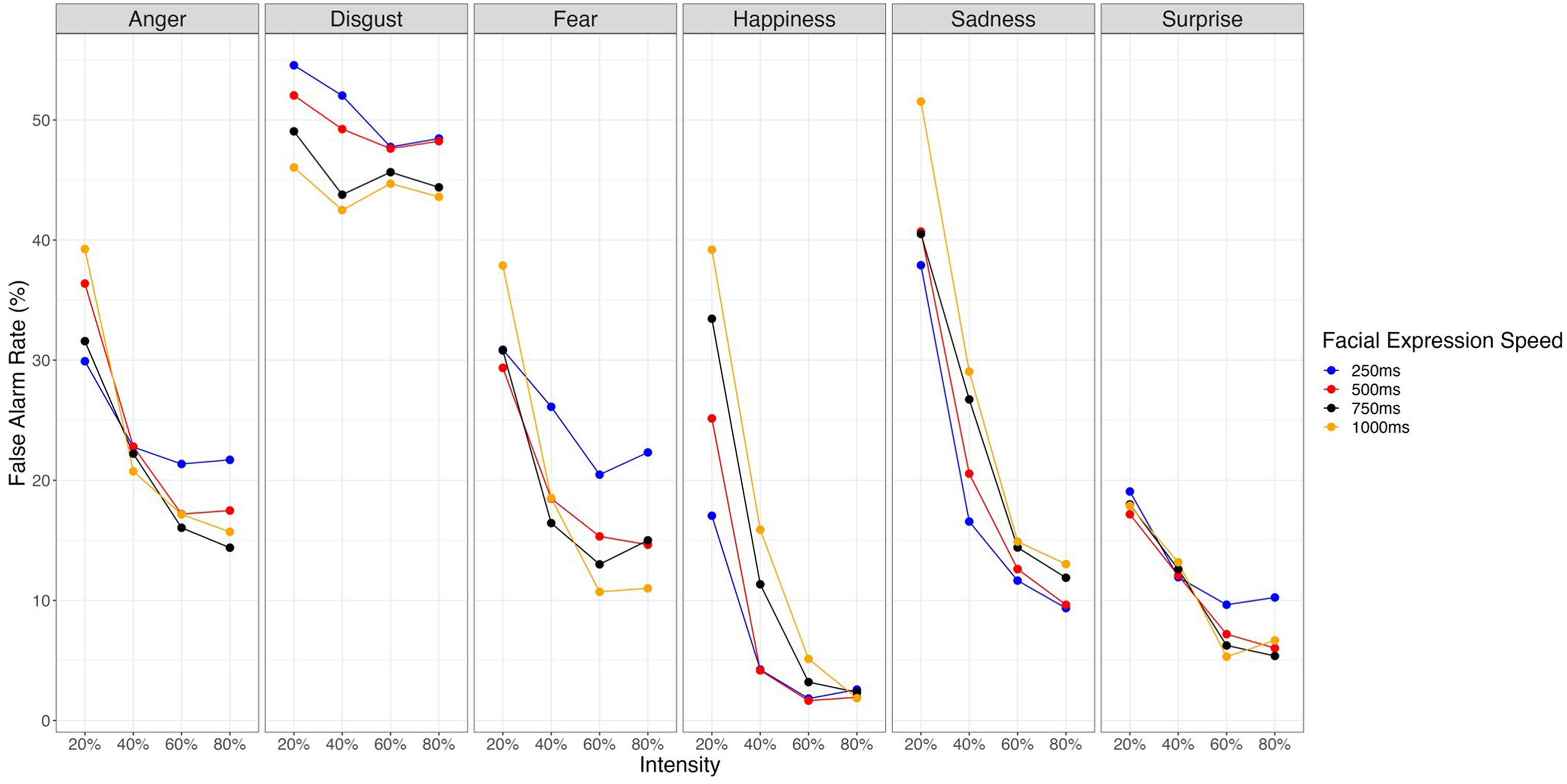

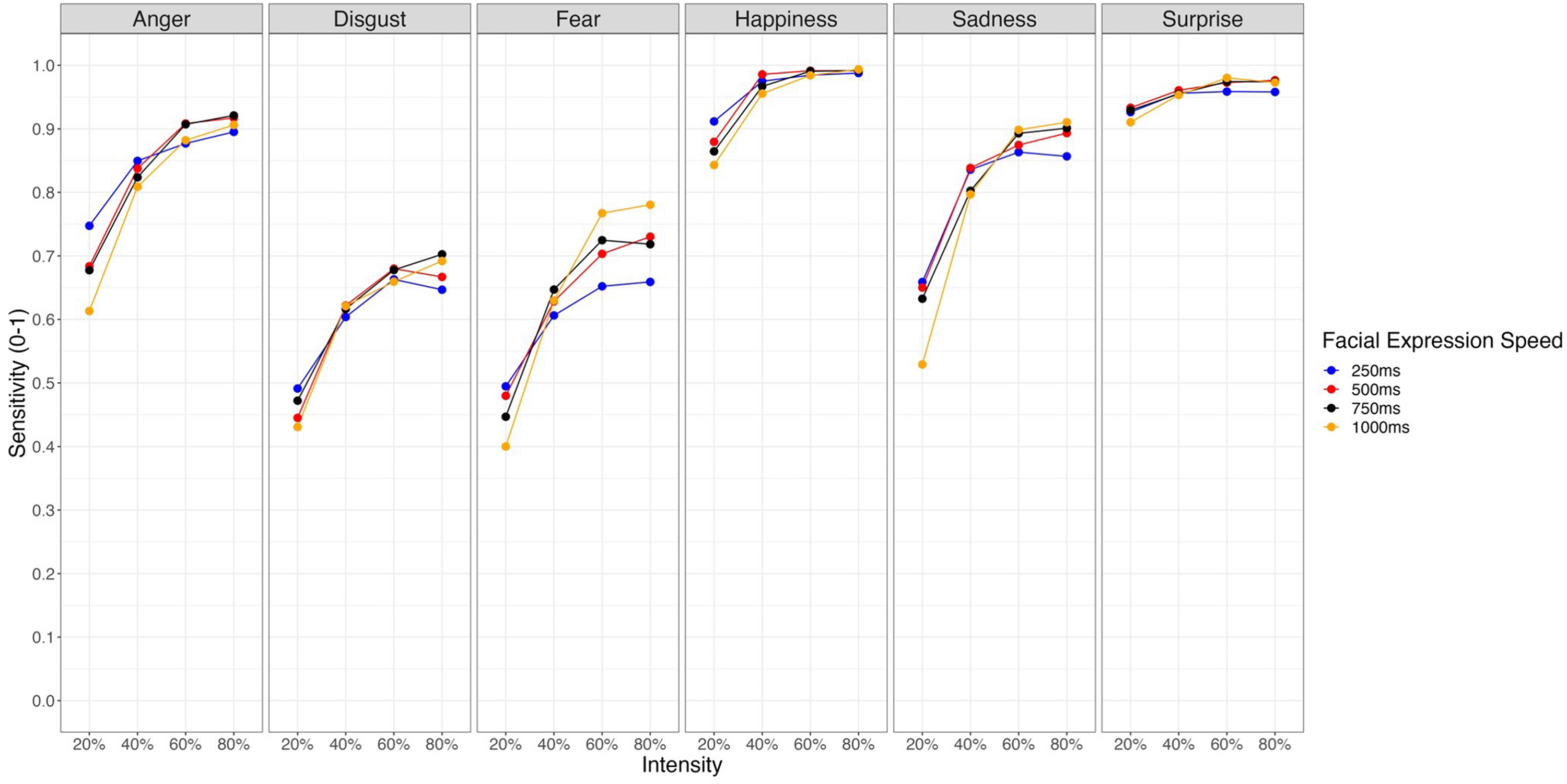

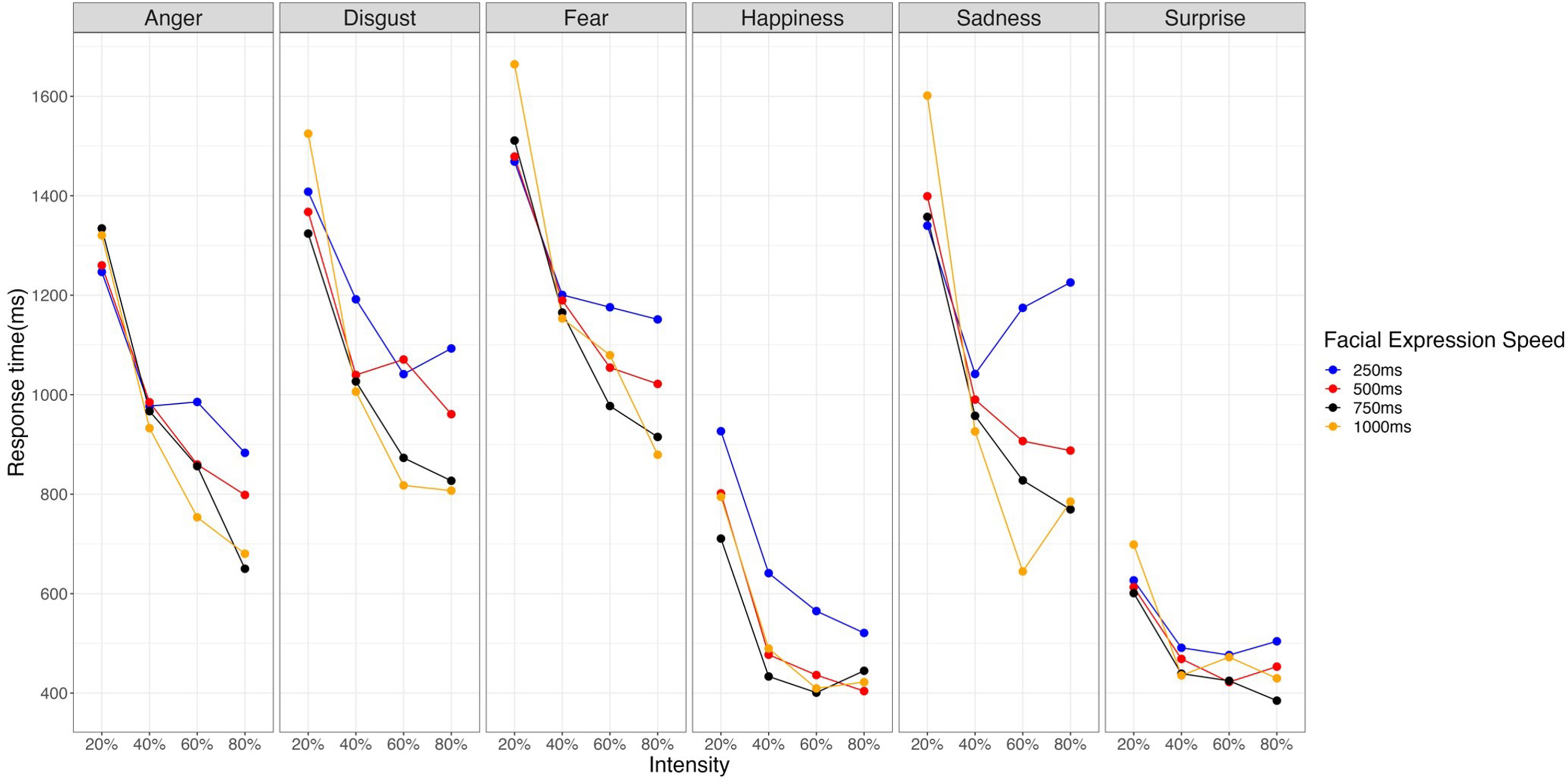

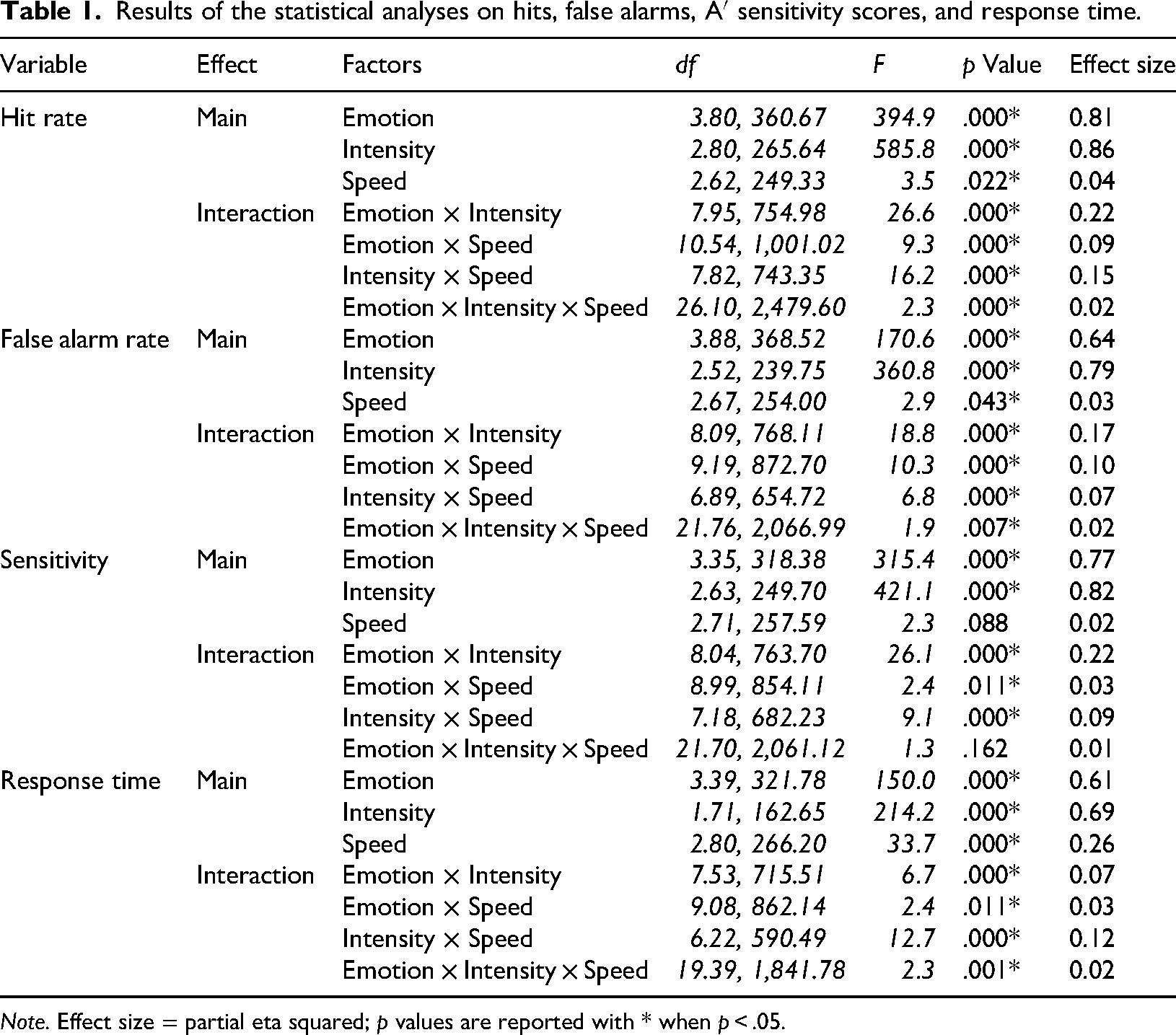

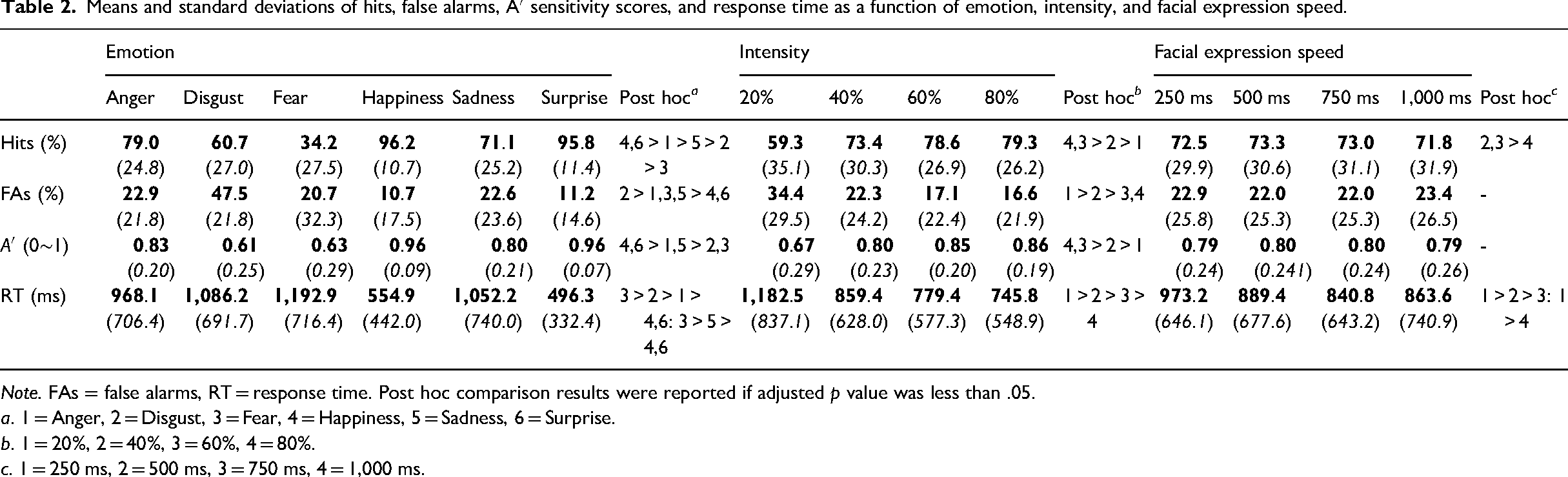

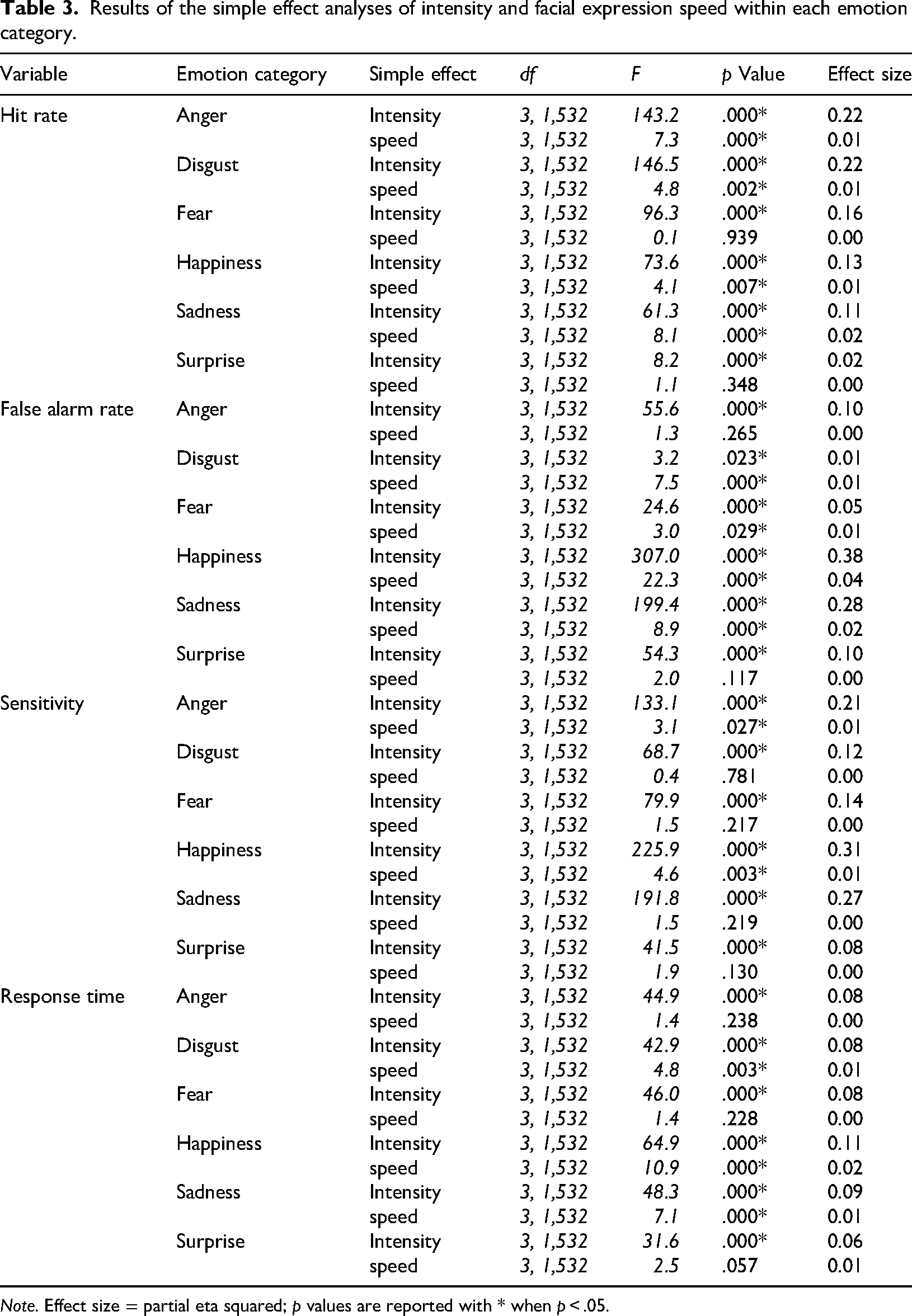

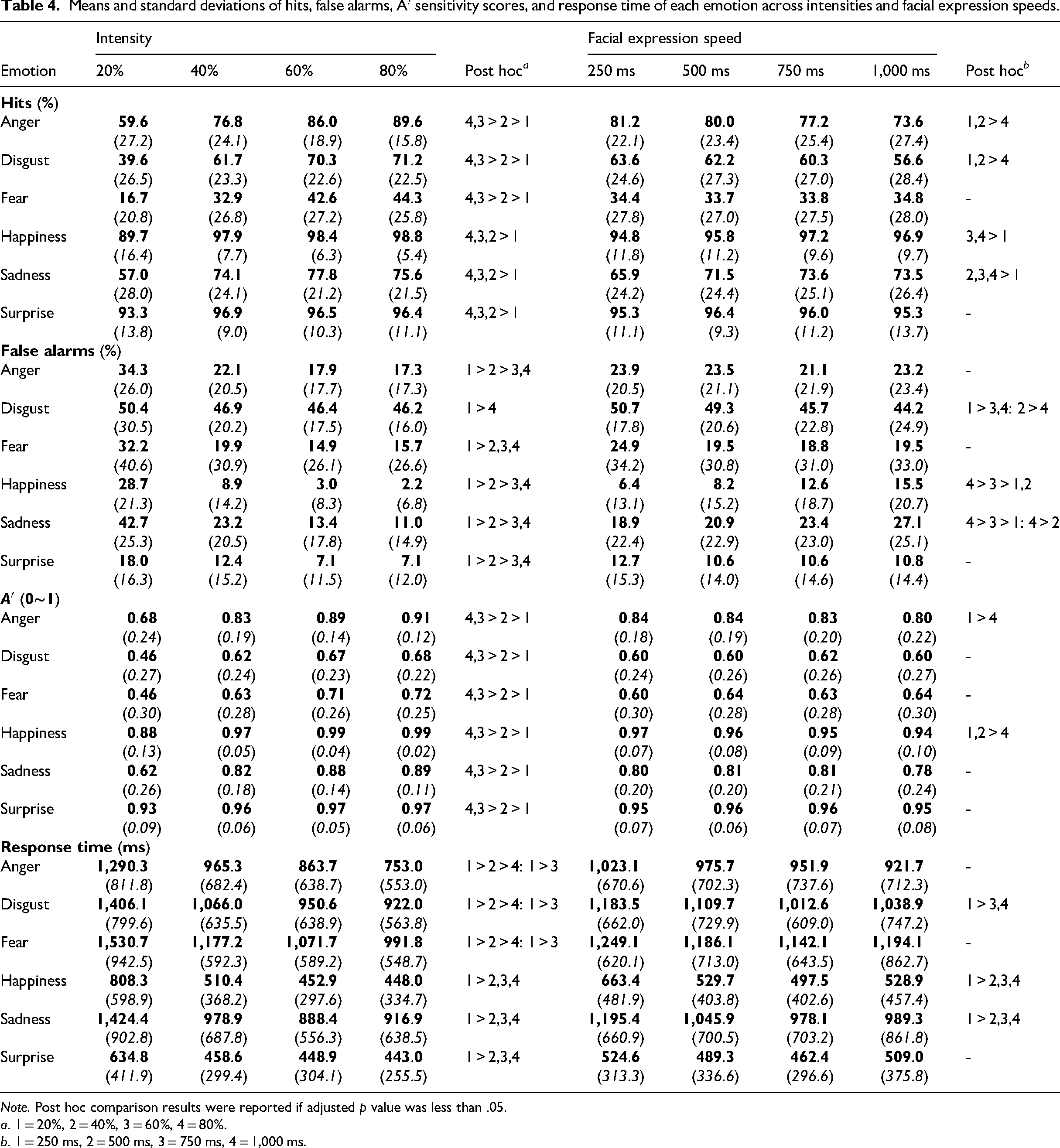

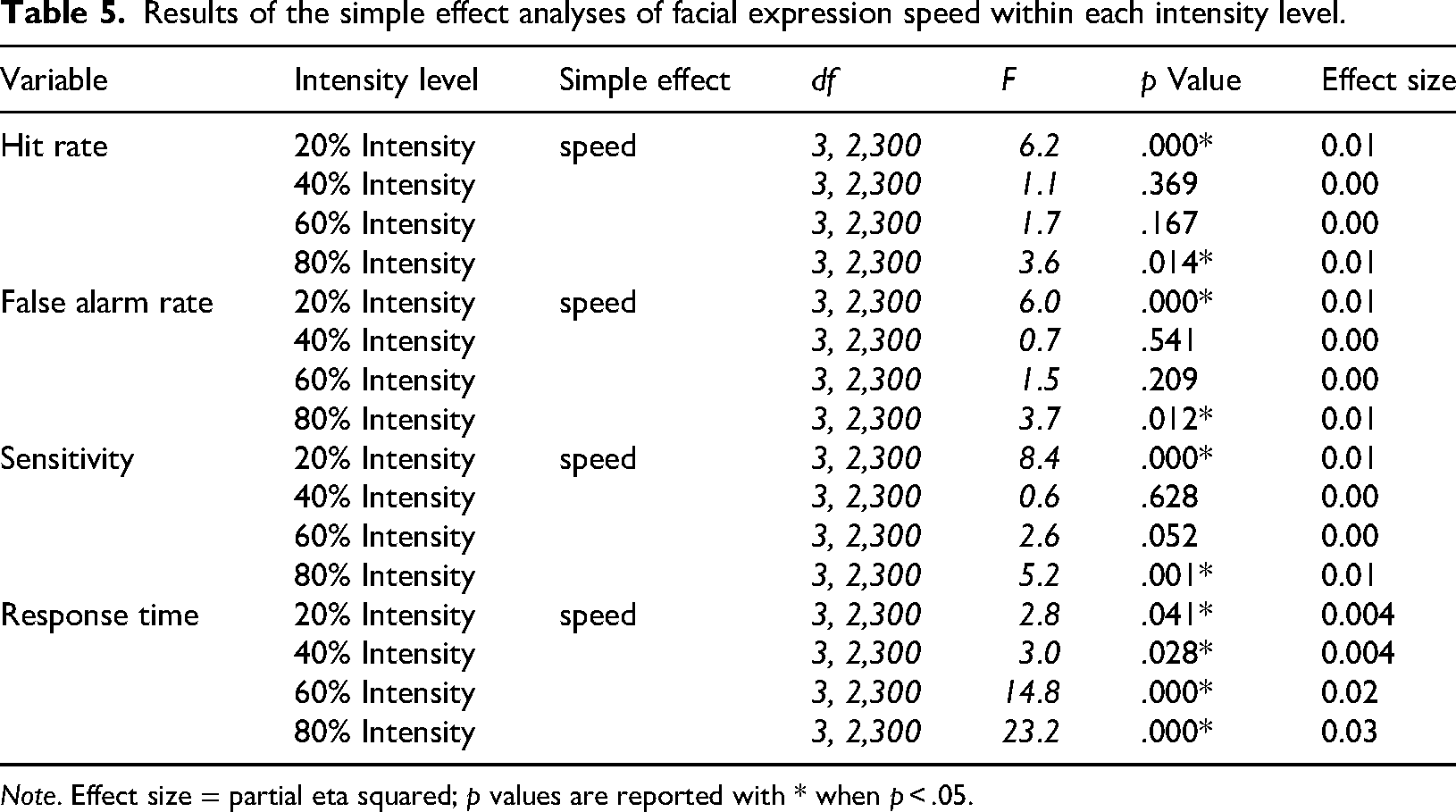

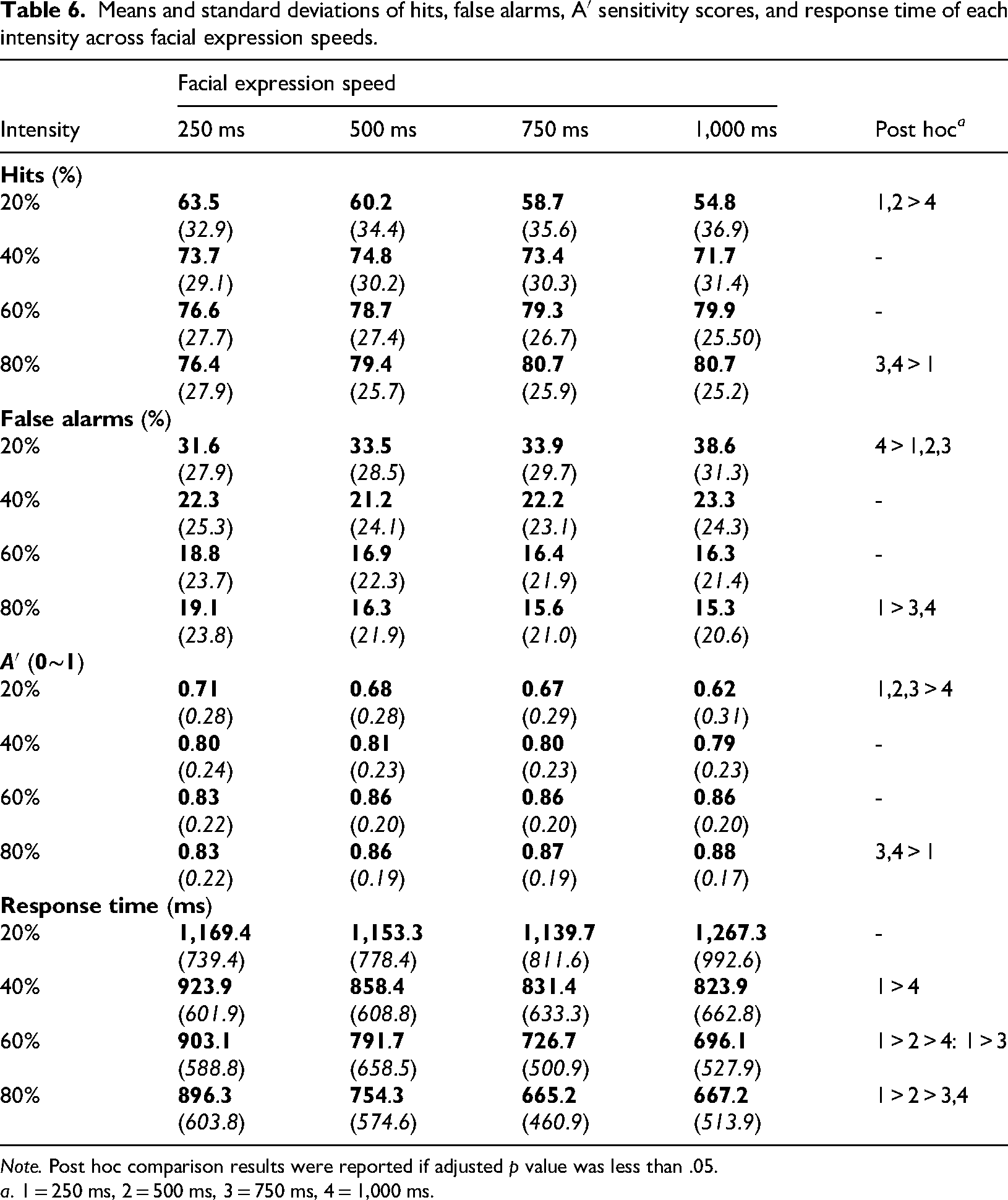

The mean and standard deviation values, as well as statistical analyses of hit rate, false alarm rate, sensitivity, response time, and type of errors, are presented in Tables 1 to 6 and Supplementary Table 1. Three-way interactions are visualized in Figures 2 to 5. Main effects and two-way interactions are visualized in the Supplementary Materials, Figures S5 to S8.

Figure 2. Mean hits according to emotion, intensity, and facial expression speed.

Figure 3. Mean false alarms according to emotion, intensity, and facial expression speed.

Figure 4. Mean sensitivity according to emotion, intensity, and facial expression speed.

Figure 5. Mean response time according to emotion, intensity, and facial expression speed.

Table 1. Results of the statistical analyses on hits, false alarms, A′ sensitivity scores, and response time.

Table 2. Means and standard deviations of hits, false alarms, A′ sensitivity scores, and response time as a function of emotion, intensity, and facial expression speed.

Table 3. Results of the simple effect analyses of intensity and facial expression speed within each emotion category.

Table 4. Means and standard deviations of hits, false alarms, A′ sensitivity scores, and response time of each emotion across intensities and facial expression speeds.

Table 5. Results of the simple effect analyses of facial expression speed within each intensity level.

Table 6. Means and standard deviations of hits, false alarms, A′ sensitivity scores, and response time of each intensity across facial expression speeds.

Hit Rate

Accuracy significantly varied by emotion [

False Alarm Rate

False alarm rates differed by emotion [

Sensitivity

Sensitivity significantly varied by emotion [

Response Time

Response time significantly differed by emotion [

Type of Errors

Error rates were significantly different by emotion [all

Discussion

The purpose of this study was to examine how emotion intensity and facial expression speed of six basic emotions affect emotion recognition by using DFES. Emotional facial expressions with four different intensities (20%, 40%, 60%, and 80%) and facial expression speeds (250 ms, 500 ms, 750 ms, and 1,000 ms) were shown, and accuracy, false alarm rate, sensitivity, response time, and error types of participants during the task were collected. We found that happiness and surprise were better recognized than negative emotions and that the expressions presented at higher intensities or relatively medium speeds showed better performance. Furthermore, each emotion had different optimal intensities and facial expression speeds for accurate, sensitive, and fast recognition. This study also showed an interaction effect between facial expression speed and intensity. At the lowest intensity, people identified the expressions with faster speed better than the ones with slower speed. At the highest intensity, on the other hand, performance was better for the expressions with slower speed than for the ones with faster speed. These findings have specific implications for understanding how people perceive emotional facial expressions as well as for future emotion recognition studies.

Our findings are in line with those from the emotion recognition literature. For example, we replicated the well-documented advantage for happiness and surprise over negative emotions on both accuracy and speed of recognition. These results might be explained in part by distinct features of the expressions (Becker & Srinivasan, 2014; Calvo & Marrero, 2009). For instance, happiness has a distinct feature in the smile, and surprise in the gaping mouth. Related to this, studies (Calvo et al., 2016; Recio et al., 2013) suggested that negative emotions are more often confused with each other, possibly because they share more morphological properties. Consistent with this, our data also suggested that negative emotions tended to be categorized as other negative emotions, whereas neutral and positive emotions did not. Another explanation is that negative emotions such as anger and fear draw more attention and demand more cognitive resources to assess the potential threats, which might have led to more errors and longer response times (Mancini et al., 2020, 2022; Mirabella, 2018; Mirabella et al., 2023). Lastly, it is possible that the recognition advantage of happiness and surprise might have come from more facial movements. A previous study that manipulated facial movement has suggested that spatial information, rather than speed, is crucial for emotion recognition (Pollick et al., 2003). However, that study varied these factors in separate stimuli and experiments, which might have caused selection bias. More recent studies manipulated them simultaneously and reported that speed significantly influenced recognition, whereas spatial information did not (Sowden et al., 2021). We also replicated robust intensity effects (Calvo et al., 2016; Kessels et al., 2014; Wingenbach et al., 2016). Increasing intensity improved accuracy and sensitivity, reduced false alarm rates and error rates, and shortened response time.

As for facial expression speed, accuracy was highest at the medium levels of 500 ms and 750 ms, and response time became shorter as the facial expression speed became slower. These results are similar to those of previous studies (Boloorizadeh & Tojari, 2013; Kamachi et al., 2013).

Furthermore, several emotion-specific patterns are consistent with the literature. This implies that each emotion has different optimal intensities and facial expression speeds for the best accuracy, false alarm rate, sensitivity, response time, and error rate. For example, disgust and fear needed 40% intensity to exceed the chance level performance, while other emotions showed the above chance level performance at 20% intensity. Also, happiness, sadness, and surprise were recognized the most accurately and fastest, starting from rather weaker intensity (40%), but anger, disgust, and fear required relatively stronger intensity (60%) to yield the best performance. These results regarding emotion and intensity interaction are similar to the previous finding (Calvo et al., 2016). Furthermore, happiness and sadness tended to be accurately recognized at a slower speed, whereas the accuracy of anger and disgust peaked around a faster speed (Bould et al., 2008; Hoffmann et al., 2010; Kamachi et al., 2013; Oda & Isono, 2008; Recio et al., 2013; Sato & Yoshikawa, 2004; Sowden et al., 2021).

Beyond these replications, the present study extends the literature by clarifying how speed and intensity affect not only accuracy but also patterns of false alarms. Prior research often interprets higher accuracy in dynamic facial expression recognition as evidence of improved perceptual discrimination. However, Calvo et al. (2016) demonstrated that accuracy alone can obscure meaningful response biases. For example, their study showed that some emotions, such as fear, were often miscategorized as other emotions, such as surprise, inflating the accuracy of one emotion while simultaneously elevating false alarms of the other. Similarly, our findings utilizing both accuracy and false alarm rates suggest that some of the previously reported speed advantages are at least partially driven by response bias. Specifically, slow speeds increased false alarms for happiness and sadness, and fast speeds increased false alarms for disgust. As a result, speed did not improve the sensitive recognition of sadness and disgust, even when accuracy improved. Nonetheless, our results suggested that faster speeds (i.e., 250 ms and 500 ms) did facilitate sensitive recognition of happiness and anger. These results underscore the importance of supplementing accuracy with false alarm and sensitivity measures, as relying solely on accuracy can mask the underlying perceptual processes and exaggerate the benefits of certain temporal manipulations.

In addition, we found a notable interaction effect between intensity and facial expression speed, demonstrating that recognition performance is shaped by the combined influence of these two parameters rather than by either one on its own. By systematically varying both factors across multiple levels, the present study extends earlier work that typically manipulated only one dimension of dynamic expressions. Our findings show that the perceptual system does not respond to speed or intensity in a uniform way. Instead, the effect of each parameter depends on the other. For example, in the low-intensity condition (20%), accuracy significantly increased as the expressions were shown faster, and the response time tended to shorten with faster facial expression speed, although this trend did not reach statistical significance in the pairwise comparison analysis. In the almost full-blown intensity condition (80%), the reversed pattern appeared for facial expression speed. This pattern is further supported by the lower false alarm rates and higher sensitivity in each of these conditions.

Although the current data is limited in providing conclusive evidence, human sensitivity to natural facial expressions may have yielded this unique pattern of response. For example, people have been found to identify DFES better than SFES as DFES are closer to real facial expressions (Krumhuber et al., 2013). Also, faster speeds may amplify perceptual clarity for weak emotional cues by providing sharper temporal contrast, whereas slower speeds may allow observers more time to extract rich spatial information from high-intensity expressions. Another possible explanation is that participants may rely on implicit expectations about the typical mapping between expression intensity and speed in natural emotional behavior. Subtle, low-intensity emotional cues in everyday life often appear briefly or rapidly, such as fleeting micro-expressions or partial expressions that do not fully develop. Conversely, strong, full-blown emotions tend to unfold more gradually, with more pronounced muscular changes that take longer to reach their apex. Thus, when stimulus timing was congruent with these ecological expectations, recognition benefited, whereas incongruent combinations led to poorer performance.

There are some limitations of this study that should be considered. Although our study did not show any effect of participant gender, it is important to consider potential gender-related differences in the recognition of facial expression intensity or speed. Some literature on dynamic facial expression recognition has reported gender-specific effects when expression dynamics are manipulated. For example, studies that examined expression speed (Hoffmann et al., 2010) and intensity (Calvo et al., 2016) have reported small gender-related differences in recognition performance. Future studies should therefore explicitly examine whether the effects of expression intensity and speed on emotion recognition vary as a function of participant gender. Furthermore, the stimuli we used comprised only female faces, and a potential bias from using only one gender cannot be ruled out. For example, one study found that male faces showing sadness or disgust yielded better identification than female faces, while happy female faces were more accurately recognized than their male counterparts (Hess et al., 1997). In addition, our study did not separate the influence of genuine motion information from the benefits of simply providing more visual information over time. Although our study focused on facial expression speed (i.e., motion information), the dynamic advantage may partially reflect the greater amount of information available. Some studies suggested that the recognition benefits of video stimuli may stem from the accumulation of static information, rather than the motion cues (e.g., Fiorentini & Viviani, 2011; Gold et al., 2013). For example, Gold et al. (2013) found similar recognition efficiency for dynamic expressions, temporally reversed or randomized expressions, and single static images. They suggested that the amount of available static information, especially the inclusion of the expression's apex, may be the most critical factor.

Another limitation concerns the ecological validity of morphed stimuli. Although morphing technology allows the control and manipulation of various expressions, the fixed time course of the morphed faces may engender unnaturalness or even unpleasant feelings (e.g., Uncanny Valley; Mori, 1970). Morphed faces change in a linear time course, whereby all facial features unfold at the same time and progress steadily toward the emotional expression. However, the time course of natural facial expressions varies greatly and affects the perceived naturalness of emotions (Oda & Isono, 2008). To overcome these limitations and to expand the generalizability of our outcomes, future studies should separate the contributions of information quantity and true motion cues, include standardized male emotion expression stimuli, and consider the time course of each emotion expression.

In conclusion, this study not only replicated prior results but also provided a more integrative framework for understanding dynamic emotion perception. By clarifying how speed and intensity influence not only accuracy but also false alarms and sensitivity, the present study showed that faster speeds can produce superficial performance gains that partially reflect response bias rather than actual improvements in perceptual discrimination. By incorporating false alarms and sensitivity measures alongside accuracy, we showed that faster speeds enhance discrimination only for certain emotions (e.g., happiness and anger) and provide no benefit for others. Furthermore, our study found that recognition performance is influenced jointly by intensity and presentation speed rather than independently. These results underscore the importance of aligning stimulus parameters with the natural dynamics of facial expressions. Taken together, these insights offer both methodological guidance for studies employing dynamic stimuli and theoretical implications for models of facial expression perception, emphasizing the need for multifaceted approaches that capture the complexity of dynamic facial expression perception.

Supplemental Material

Supplemental material, sj-docx-1-pec-10.1177_03010066261433424 for Recognition patterns of dynamic emotional facial expression stimuli by Yongguk M. Sarles and Samuel Suk-Hyun Hwang in Perception

Footnotes

Ethics Statements

The study procedures were carried out in accordance with the Declaration of Helsinki. The university research ethics committee reviewed and approved the design of the study (IRB No.1040198-200220-HR-023-04). All participants were informed about the study, and all provided written informed consent for both participation and publication of this study prior to any research procedures.

Author Contribution(s)

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data Availability Statement

All the data is available at [https://osf.io/bw4jn/]. For copyright reasons, the JAFFE Database is available only at [https://www.kasrl.org/jaffe_download.html]. Furthermore, as these stimuli are deeply linked to the experimental task, the task can be requested from the corresponding author only if users are granted permission to use the stimuli from the copyright holders.

Supplemental Material

Supplemental material for this article is available online.

References
