Abstract

The fovea, with its high concentration of cone photoreceptors, supports high sensitivity and visual acuity, whereas the periphery has lower contrast sensitivity and resolution but greater spatial summation. Despite these differences, our experience of the visual field is detailed and uniform, possibly supported by the influence of central vision on peripheral vision. There is evidence that recognition of simple shapes in the periphery is enhanced by the presence of a similar shape in central vision. However, it is unclear whether such mechanisms generalise to more complex stimuli, such as faces. In a face matching task, we found that matching performance for faces in the periphery was higher when the face in central vision was similar to the peripheral face. This suggests that general mechanisms govern the interaction between central and peripheral vision in recognising faces.

Introduction

The human retina processes visual information unevenly across its different regions, with the fovea exhibiting the highest density of cone photoreceptors, leading to enhanced sensitivity and visual acuity (Strasburger et al., 2011). Conversely, the periphery displays lower resolution and contrast sensitivity, but increased spatial summation. This uneven distribution can lead to significant variations in the appearance of basic visual features across eccentricities when measured with simple stimuli in controlled laboratory conditions (Davis, 1990; Greenstein & Hood, 1981; McKeefry et al., 2007; Valsecchi et al., 2013).

When we fixate on the centre of textures, they tend to appear uniform, despite systematic changes in the periphery (Otten et al., 2017), suggesting that local features are integrated across the visual field. In a recent study on the perceived brightness of objects in peripheral vision, we found evidence for a filling-out mechanism (Toscani et al., 2017): the brightness of peripheral areas on 3D objects was biased by the luminance at the fovea. In that study, we used a gaze-contingent display to force participants to look at a chosen dark or light point on a virtual object's surface. They were asked to adjust the luminance of a disk to reproduce the luminance of a peripheral target portion of the object. Although we explicitly told them to ignore the content of the scene and only to consider the brightness of the target portion, their matches were biased by the luminance at fixation. Crucially, we found no influence of fixated luminance when observers foveated outside the object's boundaries. These results indicate that our visual system uses foveal information to estimate the brightness of areas in the periphery, and that it does so only when a certain degree of continuity can be safely assumed, for example when two points lie within the same object boundary. Such a mechanism may explain why lightness perception is biased by lightness at fixation (Toscani et al., 2013a, 2013b). The influence of foveal information on peripheral vision goes beyond basic visual properties such as lightness or texture: we found that the perceived mood of individual faces in a crowd and the mood of the whole crowd are biased by the emotion of the face at fixation (Zoghlami & Toscani, 2023).

The functional role of this mechanism can be understood under the assumption that similar objects tend to appear in groups: for example, when looking at an apple on an apple tree, the fruits seen in the periphery are likely to be apples rather than pears. If the interactions between central and peripheral vision are governed by general mechanisms, we should expect them for a range of stimuli, from basic visual dimensions such as lightness to complex objects such as faces. In fact, an effect of information in central vision on perception in peripheral vision has been shown for size, orientation, density, blur, shape, colour, lightness (Otten et al., 2017; Toscani et al., 2017) and faces (Zoghlami & Toscani, 2023). These effects consist of perceptual biases; for example, the perceived facial emotion of peripheral faces in a crowd is biased by the emotion of the face at fixation (Zoghlami & Toscani, 2023). However, the influence of information presented in central vision on peripheral vision extends beyond perceptual biases: Yu and Shim (2016) found that presenting an object in the fovea can improve discrimination performance for a similar object presented in the periphery. This effect can be explained as a consequence of foveal feedback signals (Stewart et al., 2020). Evidence for such feedback comes from an fMRI study in which participants performed an object discrimination task: the object category could be decoded from BOLD activity in a foveal-retinotopic cortical area, even though the objects were presented in the periphery (Williams et al., 2008). Furthermore, interfering with foveal processing using transcranial magnetic stimulation impairs peripheral object discrimination (Chambers et al., 2013).
Similarly, a foveal noise mask that disrupts foveal processing impairs object discrimination in peripheral vision (Contemori et al., 2022; Fan et al., 2016), suggesting that the foveal representation in visual cortex plays a role in processing peripheral information. This may reflect a general mechanism linked to the remapping of receptive fields: the foveal-retinotopic cortex may be engaged in anticipation of a saccade, even when no eye movement occurs, allowing it to receive information from peripheral retinotopic cortex through predictive remapping without actual foveation (Kroell & Rolfs, 2022).

However, it is not clear whether the results of Yu and Shim (2016) generalise to complex stimuli such as human faces, as perceptual biases do (Zoghlami & Toscani, 2023). Faces are special visual stimuli that are processed differently from other objects.

Unlike basic geometric forms, face perception involves specialised neural systems, most notably the fusiform face area (FFA), that are selectively tuned to facial features (Kanwisher et al., 1997). This distinct processing is supported by robust behavioural and neurophysiological evidence. One key behavioural demonstration is the face inversion effect: inversion impairs the recognition of faces much more than the recognition of other objects, suggesting that faces are processed holistically rather than as isolated features (Yin, 1969). Such configural processing is also illustrated by the Thatcher effect (Thompson, 1980), in which distortions of the internal features of an inverted face go unnoticed until the face is shown upright. Although faces are detected, and attract attention, more quickly than other objects (Bindemann et al., 2005; Cerf et al., 2009; De Haas et al., 2019), possibly because detection relies on rudimentary and rapidly accessible information (Crouzet et al., 2010), holistic processing is slower than the processing of individual features and appears to require at least 200 ms, as the inversion effect is not observed with shorter presentation times.

Crucially, Yu and Shim (2016) demonstrated the positive influence of central stimuli on the recognition of similar peripheral stimuli using simple shapes, with the central stimulus presented for a very brief duration (33 ms). Although such a short presentation permits initial face detection and some early-stage face processing, it is unlikely to allow holistic processing, a crucial step in perceiving faces (Wang, 2019). Therefore, the effect might not generalise to more complex stimuli, such as faces.

Here, we test whether the recognition of faces presented in peripheral vision (measured as matching performance) improves when similar faces are shown in central vision. Such an improvement would suggest that the influence of central information on peripheral vision may be a general mechanism employed by the visual system to compensate for poor peripheral resolution.

Method

Participants

We recruited 33 volunteer participants, all of whom were Bournemouth University students aged over 18. Participants who needed course credits to complete their studies could be compensated with credits; the others were compensated with Amazon vouchers valued at £10 per hour.

Stimuli

Faces were taken from the Chicago Face Database (CFD) (Ma et al., 2015).

We used 10 female and 10 male faces, yielding 45 possible pairs per gender (10 × 9 / 2). While we balanced for gender, we did not balance for race, as this would have required a much larger pool of faces. However, although people are better at recognising faces of their own race (e.g., Chiroro & Valentine, 1995), face-specific holistic processing appears to be comparable for other-race faces; if anything, the inversion effect tends to be stronger when participants are presented with faces of a different race (Valentine & Bruce, 1986).

The similarity between each pair of faces within each gender group was estimated in a preliminary scaling experiment (N = 3, the three authors), analysed with a one-dimensional scaling model (a unidimensional variant of multidimensional scaling, MDS). As in Toscani et al. (2020), participants were presented with four comparison faces on the left side of the screen and one reference face on the right side, and used the mouse to select the comparison most similar to the reference. Once the participant clicked on a comparison, it was removed from the screen, and the participant selected the comparison closest to the reference among those remaining. This process was repeated until all comparisons had been chosen. All 252 possible groups of five faces (the number of ways to choose 5 of 10) were presented, separately for the male and the female faces, for a total of 504 trials. Each trial's ranking was converted into 6 paired comparisons (one for each pair of the four ranked comparisons), and these were pooled across participants. We then used the MATLAB (MathWorks, Natick, MA, USA) function fminsearch() to find the 10 parameters, the positions of the stimuli on a 1D axis, that best predicted the participants' choices. Figure 1 shows the stimuli ordered according to the scaling results.
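The conversion from rankings to paired comparisons, and the quantity a simplex search such as fminsearch() would minimise, can be sketched as follows. This is a minimal Python illustration, not the authors' MATLAB code. The choice model is not specified in the text, so we assume, hypothetically, that the probability of ranking one comparison above another is a logistic function of the difference between their distances to the reference on the 1D axis. The helper names (`ranking_to_triads`, `negative_log_likelihood`, `simulate_triads`) are illustrative. Which face in each group of five served as the reference is also our assumption; here it is the first face.

```python
import math
from itertools import combinations


def ranking_to_triads(reference, ranking):
    """Convert one ranking trial into paired comparisons.

    `ranking` lists the four comparison faces in the order they were
    selected (most similar first). Every earlier/later pair becomes one
    triad (reference, closer, farther): C(4, 2) = 6 triads per trial.
    """
    return [(reference, closer, farther)
            for closer, farther in combinations(ranking, 2)]


def _softplus(z):
    # Numerically stable log(1 + exp(z)).
    return max(z, 0.0) + math.log1p(math.exp(-abs(z)))


def negative_log_likelihood(positions, triads):
    """Negative log-likelihood of the triads given 1D face positions.

    Hypothetical choice model:
    P(closer judged nearer) = logistic(d_far - d_close),
    where d is the absolute distance to the reference on the 1D axis.
    """
    nll = 0.0
    for ref, closer, farther in triads:
        d_close = abs(positions[ref] - positions[closer])
        d_far = abs(positions[ref] - positions[farther])
        # -log(logistic(d_far - d_close)) == softplus(d_close - d_far)
        nll += _softplus(d_close - d_far)
    return nll


def simulate_triads(positions, n_faces=10, group_size=5):
    """Noise-free observer that ranks comparisons by their true distance."""
    triads = []
    for group in combinations(range(n_faces), group_size):
        reference, *comparisons = group  # assumption: first face is the reference
        ranking = sorted(comparisons,
                         key=lambda c: abs(positions[reference] - positions[c]))
        triads.extend(ranking_to_triads(reference, ranking))
    return triads


if __name__ == "__main__":
    true_positions = [float(i) for i in range(10)]  # hypothetical 1D layout
    triads = simulate_triads(true_positions)
    print(len(triads))  # 252 groups x 6 triads per group
    print(negative_log_likelihood(true_positions, triads))
```

To fit the model, `negative_log_likelihood` would be minimised over the 10 positions with a simplex optimiser. Examples are MATLAB's fminsearch(), as used in the study, or `scipy.optimize.minimize(..., method="Nelder-Mead")`. The solution is only defined up to a shift and a reflection of the axis, because neither changes any distance.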

Female and male face stimuli arranged according to perceived similarity. List of images: BM-011-016-N, BM-010-003-N, BM-013-002-N, BM-018-001-N, BM-020-001-N, BM-022-022-N, BM-026-002-N, BM-043-071-N, BM-0039-029-N, BM-037-033-N. BF-018-039-N, BF-036-027-N, BF-023-010-N, BF-247-179-N, BF-241-222-N, BF-250-121-N, BF-233-116-N, BF-228-212-N, BF-048-002-N, BF-221-223-N. Labels from the Chicago Face Database (CFD) (Ma et al., 2015).

We used the resulting space to determine the distance between each pair of faces, and we used these distances to split the pairs into three groups: ‘similar’, ‘moderately similar’, and ‘different’. Among the 45 pairs per gender, the 15 most similar were labelled as ‘similar’, the next 15 as ‘moderately similar’, and the 15 least similar as ‘different’.
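The grouping rule takes only a few lines. This is a minimal sketch that assumes the 1D positions from the scaling step are available as a list. The function name is hypothetical. The text does not say how ties in distance were broken, so here tied pairs simply keep their enumeration order.

```python
from itertools import combinations


def split_pairs_by_similarity(positions):
    """Split all face pairs into three equal-sized similarity groups.

    `positions` holds each face's coordinate on the 1D similarity axis.
    Pairs are sorted by distance (smallest = most similar); ties keep the
    order produced by itertools.combinations (list.sort is stable).
    """
    pairs = list(combinations(range(len(positions)), 2))
    pairs.sort(key=lambda pair: abs(positions[pair[0]] - positions[pair[1]]))
    third = len(pairs) // 3  # 45 pairs -> 15 per group
    return {
        "similar": pairs[:third],
        "moderately similar": pairs[third:2 * third],
        "different": pairs[2 * third:],
    }


if __name__ == "__main__":
    hypothetical_positions = [float(i) for i in range(10)]
    groups = split_pairs_by_similarity(hypothetical_positions)
    for label, members in groups.items():
        print(label, len(members))
```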

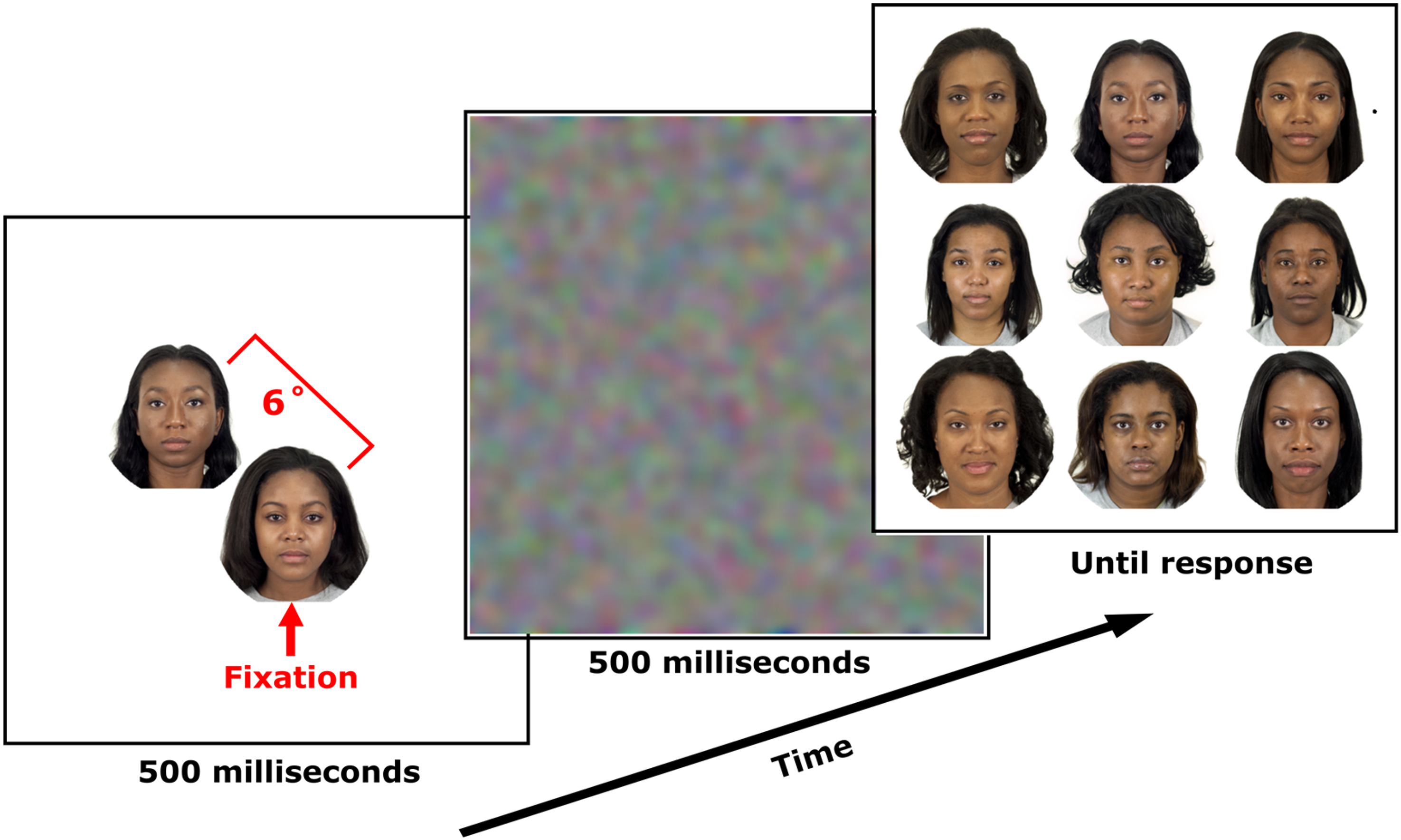

Procedure

Participants were asked to look at the fixation point displayed in the centre of the screen and press the space bar when ready. One face was then presented in the centre and one in the periphery, simultaneously, for 500 ms (Figure 2). The peripheral face was shown at a random location on a circle with a radius of 6 degrees of visual angle centred on fixation.

Procedure. After a 500-ms presentation of the central and peripheral faces and a subsequent 500-ms dynamic noise mask, participants viewed a 3 × 3 matrix of faces and selected the face they believed had appeared in the periphery.

To ensure that participants kept their gaze on the fixation point presented at the beginning of each trial, and then on the face presented at the same location, rather than looking at the peripheral face, we monitored gaze position in real time with an eye tracker. If the participant's gaze moved away from the central face, the trial was repeated.

After the peripheral and central faces disappeared, all faces of the same gender except the one shown at fixation in that trial (i.e., nine faces) were displayed in a 3 × 3 matrix. Participants were free to move their eyes and used the mouse to select the face they believed had been shown in the periphery. They were told to ignore the central face and to do their best to identify the peripheral face. Participants were instructed to prioritise accuracy rather than speed.

Between the presentation of the central and peripheral faces and the 3 × 3 matrix of faces, a mask was presented for 500 ms to disrupt brief sensory memory (Wong et al., 2021). The mask consisted of dynamic Gaussian noise. On every frame (frame rate 60 Hz), it was generated by filtering a white-noise RGB image with a Gaussian filter with a standard deviation (sigma) of 0.3 degrees of visual angle (dva).
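One possible way to generate such a mask is sketched below in plain Python, written for transparency rather than speed. Several details are our assumptions, not statements from the text. The noise is drawn independently for each colour channel from a standard normal distribution. Image borders are handled by clamping. The filtered values would still need to be rescaled to the display's range. With the reported display geometry (about 47 pixels per degree), a blur of 0.3 dva corresponds to roughly 14 pixels, and 500 ms at 60 Hz corresponds to 30 frames. All function names are hypothetical.

```python
import math
import random


def gaussian_kernel(sigma_px):
    """Normalised 1D Gaussian kernel truncated at 3 standard deviations."""
    radius = max(1, math.ceil(3 * sigma_px))
    weights = [math.exp(-(x * x) / (2 * sigma_px ** 2))
               for x in range(-radius, radius + 1)]
    total = sum(weights)
    return [w / total for w in weights]


def blur_1d(values, kernel):
    """Convolve one row with the kernel, clamping indices at the borders."""
    radius = len(kernel) // 2
    n = len(values)
    out = []
    for i in range(n):
        acc = 0.0
        for k, w in enumerate(kernel):
            j = min(max(i + k - radius, 0), n - 1)
            acc += w * values[j]
        out.append(acc)
    return out


def blur_2d(image, kernel):
    """Separable Gaussian blur: filter the rows, then the columns."""
    rows = [blur_1d(row, kernel) for row in image]
    cols = [blur_1d(list(col), kernel) for col in zip(*rows)]
    return [list(row) for row in zip(*cols)]


def noise_frame(height, width, sigma_px, rng):
    """One RGB frame: independent white noise per channel, Gaussian-filtered."""
    kernel = gaussian_kernel(sigma_px)
    return [blur_2d([[rng.gauss(0.0, 1.0) for _ in range(width)]
                     for _ in range(height)], kernel)
            for _ in range(3)]


def dynamic_mask(height, width, sigma_px, duration_s=0.5, refresh_hz=60, seed=0):
    """A fresh noise frame for every screen refresh (0.5 s at 60 Hz -> 30 frames)."""
    rng = random.Random(seed)
    n_frames = round(duration_s * refresh_hz)
    return [noise_frame(height, width, sigma_px, rng) for _ in range(n_frames)]
```

For a real display, `sigma_px` would be 0.3 multiplied by the pixels-per-degree factor. A vectorised library would be preferable at full screen resolution.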

Participants completed 90 trials in total, one for each of the 45 pairs of male faces and the 45 pairs of female faces. For each gender, there were therefore 15 trials in each similarity condition ('similar', 'moderately similar' and 'different'), each trial corresponding to one pair of peripheral and central faces, giving 2 × 3 × 15 = 90 trials. This way, each face served as a peripheral target and a central distractor an equal number of times. Trial order was randomised. The experiment took participants between 20 and 30 minutes to complete.

The face images were presented using custom software written in MATLAB and displayed on an LCD monitor with a spatial resolution of 1920 × 1080 pixels, subtending 41 × 23 degrees of visual angle, at a refresh rate of 60 Hz. Participants were seated 74 cm from the monitor with their heads stabilised on a head and chin rest. Each face stimulus was contained within a circular region subtending 6 degrees of visual angle.
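The reported display geometry implies roughly 47 pixels per degree. The sketch below makes the conversions explicit under a linear approximation: a constant pixels-per-degree factor, which ignores the slight compression of visual angle toward the screen edges. It also shows one way to place the peripheral face on the 6-degree circle described in the Procedure. Three things here are our assumptions: the function names, the reading of the 6-degree face region as its diameter, and the uniform distribution of the polar angle.

```python
import math
import random

SCREEN_PX = (1920, 1080)     # horizontal, vertical resolution (pixels)
SCREEN_DEG = (41.0, 23.0)    # horizontal, vertical extent (degrees of visual angle)
VIEWING_DISTANCE_CM = 74.0


def pixels_per_degree(axis=0):
    """Linear approximation: pixels divided by degrees along one axis."""
    return SCREEN_PX[axis] / SCREEN_DEG[axis]


def deg_to_px(deg, ppd):
    return deg * ppd


def extent_cm(deg, distance_cm=VIEWING_DISTANCE_CM):
    """Physical size that subtends `deg` degrees, centred on the line of sight."""
    return 2 * distance_cm * math.tan(math.radians(deg) / 2)


def peripheral_position_px(eccentricity_deg=6.0, ppd=None, rng=random):
    """Random point on a circle around fixation (screen centre = (0, 0)).

    Assumes the polar angle is drawn uniformly; the text only says
    'a random location along a circle'.
    """
    ppd = pixels_per_degree() if ppd is None else ppd
    angle = rng.uniform(0.0, 2 * math.pi)
    r = deg_to_px(eccentricity_deg, ppd)
    return r * math.cos(angle), r * math.sin(angle)


if __name__ == "__main__":
    ppd = pixels_per_degree()
    print(round(ppd, 1))                       # about 46.8 px per degree
    print(round(deg_to_px(6.0, ppd)))          # face size and eccentricity in px
    print(round(deg_to_px(0.3, ppd)))          # mask blur sigma in px
    print(round(extent_cm(SCREEN_DEG[0]), 1))  # implied screen width in cm
```

The horizontal and vertical factors (1920/41 and 1080/23) differ by less than 0.2 pixels per degree, so a single factor is a reasonable simplification.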

Eye Tracking

We used an EyeLink 1000 Desktop Mount eye tracking system (SR Research Ltd, Osgoode, Ontario, Canada) to track the right eye at a sampling rate of 1000 Hz. Before each session, the eye tracker was calibrated for each participant using a standard calibration procedure (Valsecchi et al., 2013). Eye position was monitored sample by sample, and the trial was repeated whenever the distance between the fixation point and the measured gaze position exceeded 1.5 degrees of visual angle.
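The online fixation check amounts to a per-sample distance test. This minimal sketch assumes gaze samples have already been converted to degrees of visual angle relative to the screen centre. The eye-tracker calls used to fetch samples are not shown, and the function name is hypothetical.

```python
import math


def trial_needs_repeat(gaze_samples_deg, fixation_deg=(0.0, 0.0), max_dev_deg=1.5):
    """Return True if any gaze sample strays more than `max_dev_deg`
    from the fixation point, in which case the trial would be repeated.

    `gaze_samples_deg` is an iterable of (x, y) positions in degrees;
    at 1000 Hz a 500-ms stimulus interval yields about 500 samples.
    """
    fx, fy = fixation_deg
    return any(math.hypot(x - fx, y - fy) > max_dev_deg
               for x, y in gaze_samples_deg)
```

In a real-time loop the same test would be applied to each new sample as it arrives, so the trial could be aborted at the first violation rather than after the stimulus interval.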

Results

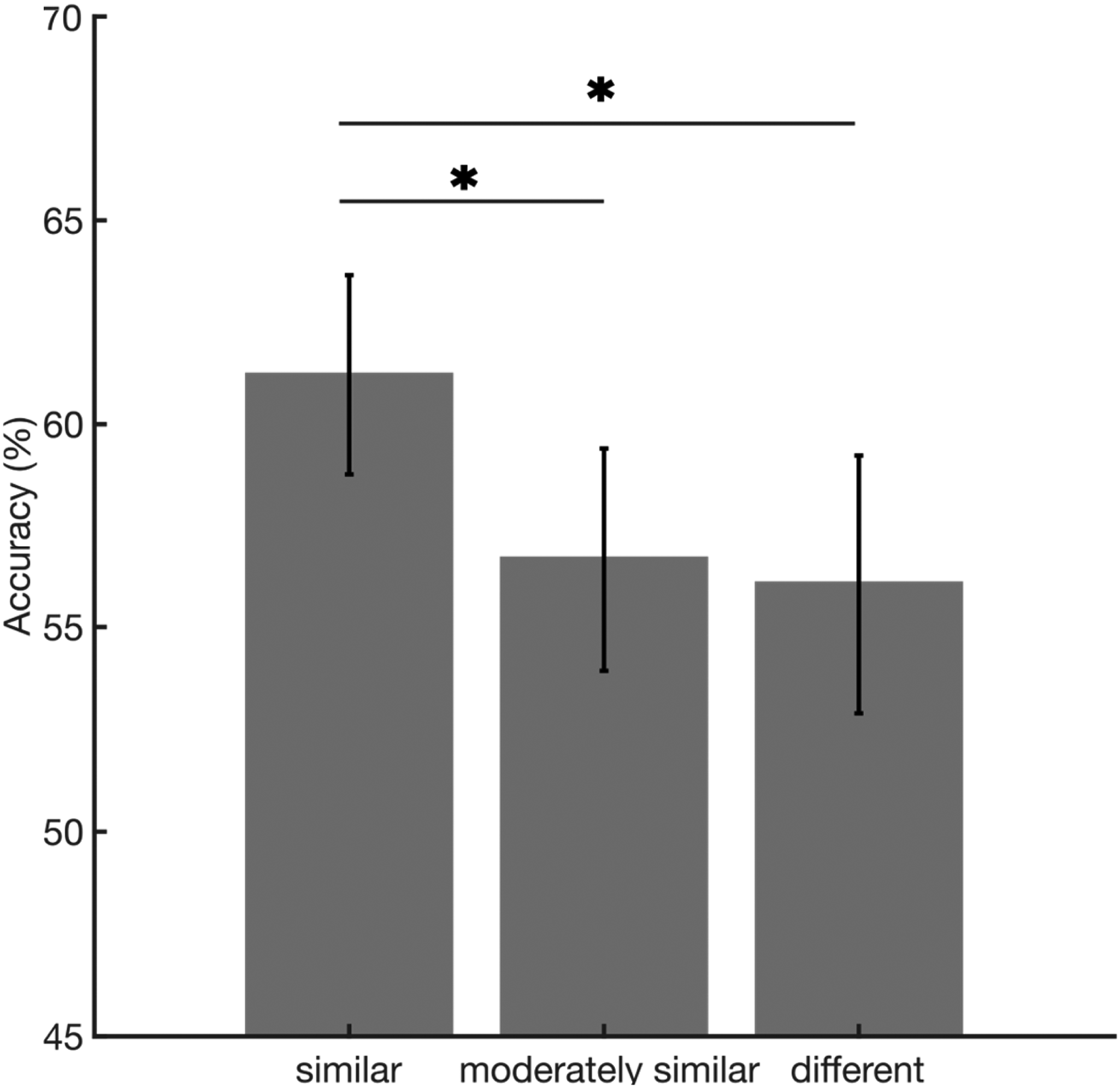

Figure 3 shows matching accuracy as a function of similarity.

Matching accuracy on the y-axis, as a function of similarity condition, on the x-axis. The horizontal lines with stars highlight the significant post hoc comparisons. The error bars represent the standard error of the mean.

Although in each trial only faces of the same gender as the target were presented as potential targets, gender was not an experimental factor of interest, so we averaged accuracy across genders for each of the three similarity conditions. Chance level was 11.11% (one correct face out of nine), and all participants performed above chance, with accuracy ranging from 20% to 93%.
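Per-condition accuracy and the chance level can be computed as follows. This sketch uses a hypothetical trial-record format of (condition, gender, correct) tuples. Because each gender contributes the same number of trials per condition, pooling trials across genders gives the same value as averaging the two gender means. The one-sided exact binomial test only illustrates how "above chance" could be checked for a single participant. The text does not state which test, if any, was used.

```python
import math
from collections import defaultdict

N_ALTERNATIVES = 9            # faces in the 3 x 3 response matrix
CHANCE = 1 / N_ALTERNATIVES   # 11.11%


def accuracy_by_condition(trials):
    """Mean accuracy per similarity condition, pooling male and female trials.

    `trials` is an iterable of (condition, gender, correct) tuples.
    """
    hits = defaultdict(int)
    counts = defaultdict(int)
    for condition, _gender, correct in trials:
        hits[condition] += int(correct)
        counts[condition] += 1
    return {c: hits[c] / counts[c] for c in counts}


def binomial_p_above_chance(n_correct, n_trials, p=CHANCE):
    """One-sided exact binomial test: P(X >= n_correct) under chance."""
    return sum(math.comb(n_trials, k) * p ** k * (1 - p) ** (n_trials - k)
               for k in range(n_correct, n_trials + 1))
```

For example, the lowest reported accuracy, 20% of 90 trials, corresponds to 18 correct responses, compared with the 10 expected by chance. `binomial_p_above_chance(18, 90)` gives the probability of reaching at least that score by guessing.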

A one-way repeated-measures ANOVA revealed a significant main effect of similarity (F(2, 64) = 4.54, p = .014, ηp² = .124), indicating that accuracy differed across similarity conditions. Post hoc comparisons, Bonferroni–Holm corrected for three comparisons, showed that accuracy was significantly higher for similar faces than for moderately similar faces (t(32) = 2.43, corrected p = .036) and than for different faces (t(32) = 2.76, corrected p = .023).
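The sketch below outlines the reported statistics. It computes a one-way repeated-measures ANOVA from first principles, partial eta squared, paired t statistics, and the Holm step-down adjustment. It is an illustration, not the authors' analysis script, and no sphericity correction is applied because the text mentions none. Obtaining p-values for F and t would require the F and t distributions (for example from `scipy.stats`). These are omitted to keep the example dependency-free. With the reported F(2, 64) = 4.54, partial eta squared is 4.54 × 2 / (4.54 × 2 + 64) ≈ .124, which matches the reported effect size.

```python
import math


def rm_anova_oneway(data):
    """One-way repeated-measures ANOVA.

    `data[i][j]` is subject i's score in condition j.
    Returns (F, df_effect, df_error, partial_eta_squared).
    """
    n, k = len(data), len(data[0])
    grand = sum(sum(row) for row in data) / (n * k)
    cond_means = [sum(row[j] for row in data) / n for j in range(k)]
    subj_means = [sum(row) / k for row in data]
    ss_cond = n * sum((m - grand) ** 2 for m in cond_means)
    ss_subj = k * sum((m - grand) ** 2 for m in subj_means)
    ss_total = sum((x - grand) ** 2 for row in data for x in row)
    ss_error = ss_total - ss_cond - ss_subj  # condition x subject residual
    df_effect, df_error = k - 1, (k - 1) * (n - 1)
    f = (ss_cond / df_effect) / (ss_error / df_error)
    return f, df_effect, df_error, ss_cond / (ss_cond + ss_error)


def partial_eta_squared_from_f(f, df_effect, df_error):
    return f * df_effect / (f * df_effect + df_error)


def paired_t(a, b):
    """Paired-samples t statistic and its degrees of freedom."""
    diffs = [x - y for x, y in zip(a, b)]
    n = len(diffs)
    mean = sum(diffs) / n
    sd = math.sqrt(sum((d - mean) ** 2 for d in diffs) / (n - 1))
    return mean / (sd / math.sqrt(n)), n - 1


def holm_adjust(p_values):
    """Holm-Bonferroni step-down adjusted p-values, in the input order."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])
    adjusted = [0.0] * m
    running_max = 0.0
    for rank, i in enumerate(order):
        # Multiply the r-th smallest p by (m - r) and keep adjustments monotonic.
        running_max = max(running_max, (m - rank) * p_values[i])
        adjusted[i] = min(1.0, running_max)
    return adjusted
```

With 33 participants and three conditions, `rm_anova_oneway` yields df = (2, 64), as reported. Each paired comparison between two conditions yields df = 32.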

Discussion

Our results show higher accuracy when the face presented in central vision was similar to the one presented in peripheral vision, even though participants were told to ignore the central face and identify the peripheral one. This is consistent with findings on shape discrimination (Yu & Shim, 2016) and may be related to foveal feedback signals (Chambers et al., 2013; Williams et al., 2008). The influence of foveal signals on peripheral discrimination may be part of a set of fovea-periphery interaction mechanisms. These mechanisms would help us perceive the visual scene as uniform across the visual field despite the differences between central and peripheral vision (Stewart et al., 2020; Toscani et al., 2021). This may be particularly important when peripheral stimuli occur in groups and are therefore affected by crowding.

We presented the central and peripheral faces simultaneously, and both were consciously visible, because we wanted to approximate natural conditions in which multiple similar complex objects appear at the same time. However, this introduces important differences from the study by Yu and Shim (2016). They presented the central stimulus very briefly, followed by a mask, so that participants did not perceive it consciously. This was meant to exclude the possibility that observers responded to the peripheral target based on what they consciously perceived centrally. In our study, we presented the central stimulus for 500 ms, ensuring that it was consciously perceived by participants. This duration was chosen to promote holistic processing, as evidence suggests that briefly presented faces are processed on the basis of individual features, and face-specific effects such as the inversion effect tend to disappear (Wang, 2019). Although we told participants to ignore the central stimulus, which was presented as a distractor, we cannot rule out the possibility that our results are driven by a conscious perceptual bias toward what was presented at the fovea. Indeed, all perceptual judgements of suprathreshold stimuli may be subject to response biases (Morgan et al., 2013). Our results are compatible with the hypothesis that information presented in central vision enhances recognition performance for similar peripheral stimuli, which is an emerging perspective (Stewart et al., 2020; Williams et al., 2008). However, further research is needed to provide evidence that does not rely on subjective report. For instance, one would expect established neural correlates of face recognition (Schweinberger et al., 2004) to be enhanced by the presence of a similar, centrally presented stimulus.

Another difference is that Yu and Shim (2016) did not find an effect of the central stimulus on discrimination of the peripheral stimulus when the shapes were presented simultaneously, as in our study. They found an effect only when the central stimulus was presented 150 ms after the onset of the peripheral target, and interpreted this result as a sign that foveal feedback takes some time to occur. Our results are not necessarily at odds with this finding: although we presented the central and peripheral stimuli simultaneously, we kept them on screen for much longer (500 ms), leaving more than 150 ms for foveal feedback to occur. Our study shows an effect of a central face on discrimination of a similar peripheral face under relatively natural conditions, in which both faces are consciously visible and presented at the same time; however, the temporal dynamics of this effect require further investigation.

Since we did not assess recognition performance for the peripheral stimulus in the absence of the central stimulus, we cannot tell how the central stimulus affects recognition overall. It may cause a general improvement or impairment, independent of the effect of similarity, or a similar face may increase performance while a different face decreases it. However, even if the central stimulus had an overall negative effect on recognition performance, the similarity effect we found could still be explained functionally in terms of promoting perceptual uniformity. There is indeed evidence of perceptual biases that reduce visual accuracy but make perception uniform across the visual field. In scotopic vision, the cones in our retina are not stimulated enough to respond to light and only rods are active, so the absence of rods in the centre of the fovea produces a foveal scotoma. However, the scotopic foveal scotoma is filled in with information from the immediate surround, and people trust this illusory percept more than veridical information (Gloriani & Schütz, 2019). Similarly, the missing information in the blind spot of the retina, where there are no photoreceptors, is filled in with information from the surround (Ramachandran, 1988, 1992). Participants judge this filled-in percept to be more reliable than a percept based on external input (Ehinger et al., 2017). Together with these findings on filling-in in the blind spot and the foveal scotoma, the notion that a perceptual effect like the one we report contributes to perceptual uniformity is consistent with the theory that perceptual uniformity is achieved through perceptual mechanisms (Toscani et al., 2021), rather than being a metacognitive phenomenon (Odegaard et al., 2018).

We believe that the effect of the centrally presented face on face recognition performance cannot be explained by known spatial contrast effects. When an attractive face is presented near average faces, it tends to look more attractive, and vice versa (Lei et al., 2020). This has been attributed to a contrast mechanism by which the attractiveness of a face in a group is compared with that of its surroundings. Such mechanisms could explain known group effects, such as the cheerleader effect (van Osch et al., 2015; Walker & Vul, 2014) or the friend effect (Ying et al., 2019). To the best of our knowledge, such contrast effects have not been reported for facial identity. Moreover, even if findings on attractiveness generalised to identity, they could not explain our results. Contrast with a similar central face would bias the appearance of the peripheral face away from the features the two faces share, potentially making the peripheral face harder to recognise.

We also believe that temporal contrast cannot account for our results. In our task, participants fixated on the central face for 500 ms. This could potentially lead to visual adaptation and aftereffects, which might influence the appearance of the target face and the distractors presented in the 3 × 3 layout. The 500-ms mask should prevent such aftereffects, but even if they did occur, they would be expected to reduce accuracy as similarity increases. Specifically, the well-known repulsive aftereffect (e.g., Webster & MacLin, 1999) predicts that, when the central face is similar to the target face, the target's appearance would be distorted away from the features of the fixated face, leading to lower matching accuracy.

While our investigation focused on a single peripheral face under relatively natural viewing conditions, faces are often viewed in groups. In such contexts, visual crowding likely affects face perception at multiple stages, including both the processing of individual facial features and holistic processing (Kalpadakis-Smith et al., 2018; Manassi & Whitney, 2018). Crucially, foveal noise affects the discrimination of peripheral objects impaired by crowding, suggesting a role for foveal feedback in mitigating crowding (Qian et al., 2024). This detrimental effect is prominent at both 100 and 500 ms after the onset of the peripheral stimulus. Given that holistic processing appears to require at least 200 ms, this suggests that foveal feedback may help reduce the effects of crowding in both feature-based and holistic face processing.

A late influence of foveal noise has also been reported for peripheral stimuli presented in isolation. Contemori et al. (2022) found an effect of foveal noise presented between 150 and 300 ms after peripheral stimulus onset, and Fan et al. (2016) reported a disruptive effect of noise introduced 250 ms after stimulus onset. These findings support the idea that the temporal dynamics of foveal feedback are compatible with the time course of face processing.

In conclusion, our study revealed higher accuracy in recognising peripheral faces when the face presented in central vision resembled the peripheral one, even though participants were instructed to focus solely on the peripheral face. This pattern is consistent with prior research on shape discrimination and may be attributed to foveal feedback signals. Such signals might contribute to the perception of a uniform visual scene across the visual field, despite the differences between central and peripheral vision. Our findings add to the evidence suggesting the existence of general mechanisms that govern interactions between central and peripheral vision.

Acknowledgments

The authors thank Alejandro Estudillo for his extremely helpful comments and suggestions, and Obaapa Owusu-Manu for their excellent work with data collection and help with conceptualisation and writing. This work was supported by the British Academy (grant SRG2324\240833).

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship and/or publication of this article.

Funding

The authors disclosed receipt of the following financial support for the research, authorship and/or publication of this article: This work was supported by the British Academy (grant number SRG2324\240833).