Abstract

1. Introduction

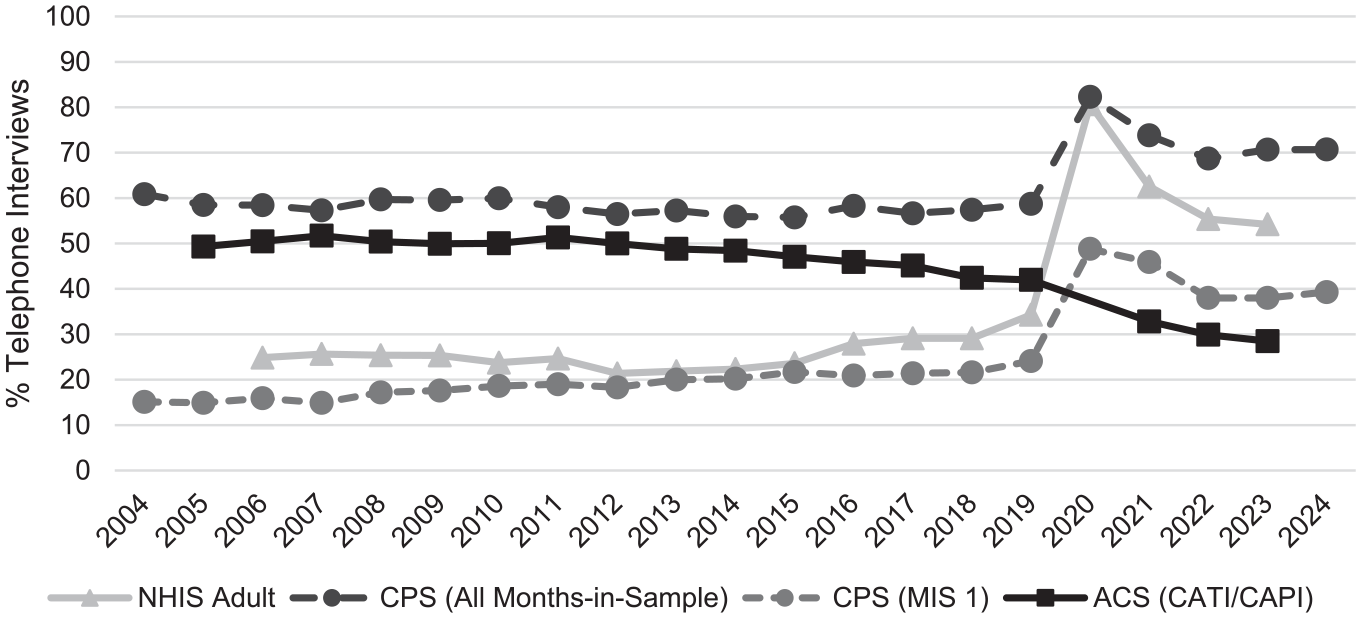

One of the most substantial shifts over the last decade has been in the use of in-person and telephone interviewers as a survey mode of administration. Surveys historically conducted in person have switched to telephone administration or have been mixed with self-administered web and paper questionnaires (de Leeuw 2005; Olson et al. 2012, 2021). As one example, Figure 1 shows the proportion of interviews conducted by telephone in three US government surveys: the National Health Interview Survey (NHIS), the Current Population Survey (CPS), and the American Community Survey (ACS). The NHIS and CPS have traditionally relied primarily on in-person interviews. Before 2020, fewer than one-third of NHIS interviews, under one-fifth of CPS interviews in the first month-in-sample (MIS1), and about 60% of CPS interviews across all months-in-sample (MIS) were conducted by telephone. After spiking in 2020 because of COVID-19 travel restrictions, telephone interviews now account for a plurality (CPS MIS1) or a majority (NHIS, CPS all MIS) of interviews in these two traditionally in-person surveys. The traditionally mixed-mode ACS uses a combination of telephone and in-person interviews, mail questionnaires, and (as of 2013) web questionnaires. In 2019 and earlier, about half of ACS questionnaires were completed in an interviewer-administered mode; after 2020, this share fell to 30%. Interviewer-administered surveys are not disappearing, but how they are used is changing. Against this backdrop, I ask: what are the future challenges and research needs for interviewer-administered surveys?

Figure 1. Percent of interviews conducted by telephone, US National Health Interview Survey, Current Population Survey (March, all months-in-sample and first month-in-sample), and American Community Survey (Sources: Table A1).

2. Interviewer-Administered Surveys and Coverage, Sampling, and Nonresponse Errors

Declining response rates and rising noncontact and refusal rates are an ongoing challenge for interviewer-administered surveys (Luiten et al. 2020). The challenge is amplified because interviewers vary in their ability to carry out sample creation, household and respondent selection, and recruitment tasks, which can produce both nonresponse bias and nonresponse error variance due to interviewers (e.g., Groves and Couper 1998; Kibuchi et al. 2019; Kołczyńska et al. 2023; Wagner and Olson 2018; West and Blom 2017; West et al. 2013; West and Olson 2010). Recruitment is further complicated by changing societal norms around talking with strangers, reduced trust in the legitimacy of science, and a proliferation of survey requests leading to “oversurveying” (Eggleston 2024).
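As a rough first check on whether interviewers’ response rates differ by more than chance, one can compute a Pearson chi-square test of homogeneity across interviewers. The sketch below is a minimal illustration, not the method of any cited study; the function names and toy data are hypothetical. It assumes each sampled case has a 0/1 response outcome and is assigned to exactly one interviewer. Differences can be attributed to interviewers only under random (interpenetrated) assignment of cases; otherwise, differences between interviewers’ areas are confounded with interviewer effects, and multilevel models with interviewer random effects are the more usual tool.

```python
from collections import defaultdict


def response_counts_by_interviewer(cases):
    """Tally [respondents, assigned cases] per interviewer.

    cases: iterable of (interviewer_id, responded) pairs, responded in {0, 1}.
    """
    counts = defaultdict(lambda: [0, 0])
    for interviewer, responded in cases:
        counts[interviewer][0] += responded
        counts[interviewer][1] += 1
    return dict(counts)


def homogeneity_chi_square(counts):
    """Pearson chi-square for the 2 x k table of response status by interviewer.

    Returns (statistic, degrees of freedom); compare against a chi-square
    reference distribution with k - 1 degrees of freedom.
    """
    total_resp = sum(r for r, _ in counts.values())
    total_n = sum(n for _, n in counts.values())
    p = total_resp / total_n
    if not 0 < p < 1:
        raise ValueError("overall response rate must be strictly between 0 and 1")
    stat = 0.0
    for r, n in counts.values():
        expected_resp, expected_non = n * p, n * (1 - p)
        stat += (r - expected_resp) ** 2 / expected_resp
        stat += ((n - r) - expected_non) ** 2 / expected_non
    return stat, len(counts) - 1


# Hypothetical toy data: interviewer A completes 8 of 10 cases, B completes 2 of 10.
cases = [("A", 1)] * 8 + [("A", 0)] * 2 + [("B", 1)] * 2 + [("B", 0)] * 8
stat, df = homogeneity_chi_square(response_counts_by_interviewer(cases))
print(round(stat, 2), df)  # 7.2 1
```

With one degree of freedom, a statistic of 7.2 exceeds the conventional 5% critical value of about 3.84, so these two toy workloads would be judged more different than chance alone suggests.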

To help understand householders’ participation decisions, future research on nonresponse in interviewer-administered surveys should examine what interviewer-respondent “doorstep” interactions look like in today’s in-person and telephone surveys. This research could examine whether and how the concerns householders express to interviewers have changed, how interviewers respond to these concerns, and how often householders make purely heuristic decisions not to respond before receiving information about the request (Schaeffer et al. 2018). If interviewers’ own attitudes toward their job (e.g., Durrant et al. 2010) follow the broader trends toward greater skepticism of science and away from in-person interaction, then declining response rates may reflect the attitudes of interviewers as well as those of sample members; understanding changes in interviewers’ attitudes is thus also important. Future research should also examine whether interviewer tailoring and maintaining interaction to avert refusals remain effective (Daikeler and Bosnjak 2020), and how interviewers differ in implementing survey design features intended to encourage cooperation, such as incentives (Kibuchi et al. 2019). Future qualitative research with responding and nonresponding households about their participation (non)decisions, as well as with interviewers, would complement and extend analyses of interviewer observations (Medway et al. 2022). This work should inform new training materials and interviewer observations.

In-person interviewers often record observations about sampled units for use in response propensity models or as proxy indicators of nonresponse bias. Future research could use natural language processing and other machine learning models to parse interviewer call notes for insights into, or predictors of, a sample unit’s response propensity or characteristics. Given contemporary advances in text analysis, survey organizations might also ask interviewers to answer one or two open-ended questions about the household, in addition to standard interviewer observations, whose answers may be easier to incorporate into models for field decisions (West and Li 2019).
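As a minimal sketch of what mining call notes for response propensity might look like, the code below fits a bag-of-words logistic regression by batch gradient descent in plain Python. It is a deliberately simple stand-in for the richer natural language processing and machine learning models discussed here, not the approach of any cited study. The call notes, outcomes, learning rate, penalty, and function names are all hypothetical; a real application would use an established library, far more data, and out-of-sample validation.

```python
import math
import re


def tokenize(note):
    """Lowercase a call note and return its set of word tokens."""
    return set(re.findall(r"[a-z']+", note.lower()))


def build_vocab(notes):
    return sorted(set().union(*(tokenize(n) for n in notes)))


def featurize(note, vocab):
    """Binary bag-of-words vector: 1.0 if the vocabulary word appears in the note."""
    toks = tokenize(note)
    return [1.0 if word in toks else 0.0 for word in vocab]


def sigmoid(z):
    # Numerically stable logistic function.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    ez = math.exp(z)
    return ez / (1.0 + ez)


def fit_logistic(X, y, lr=0.5, epochs=500, l2=0.01):
    """Batch gradient descent on L2-penalized log loss; returns (weights, bias)."""
    w, b, n = [0.0] * len(X[0]), 0.0, len(X)
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * len(w), 0.0
        for xi, yi in zip(X, y):
            err = sigmoid(sum(wj * xj for wj, xj in zip(w, xi)) + b) - yi
            grad_w = [g + err * xj for g, xj in zip(grad_w, xi)]
            grad_b += err
        w = [wj - lr * (g / n + l2 * wj) for wj, g in zip(w, grad_w)]
        b -= lr * grad_b / n
    return w, b


def predict(note, vocab, w, b):
    """Predicted probability that the case eventually responds."""
    x = featurize(note, vocab)
    return sigmoid(sum(wj * xj for wj, xj in zip(w, x)) + b)


# Hypothetical call notes paired with final outcomes (1 = completed interview).
notes = [
    "resp said call back tomorrow friendly",
    "hung up said not interested",
    "friendly asked about study call back",
    "no trespassing sign refused at door",
]
outcomes = [1, 0, 1, 0]
vocab = build_vocab(notes)
w, b = fit_logistic([featurize(n, vocab) for n in notes], outcomes)
p_warm = predict("friendly call back", vocab, w, b)
p_cold = predict("not interested refused", vocab, w, b)
```

With only four notes the model simply memorizes which words co-occur with completion; the point is the pipeline (tokenize, featurize, fit, score), which is where interviewer-recorded text would enter a propensity model.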

Making contact with households is an ongoing and growing challenge for interviewer-administered surveys, and updated research on effective days and times to contact households in current interviewer-administered surveys is needed. When a responsive or adaptive design provides interviewers with “optimal” call windows, interviewers need to follow these recommendations for the design to work. More research on how to encourage interviewer compliance with call timing and other recommendations from supervisors or the main office, and on the reasons interviewers deviate from these recommendations (Walejko and Wagner 2018; Wescott 2020), may help explain why some adaptive or responsive designs in interviewer-administered surveys fail to meet their goals (Tourangeau et al. 2016).
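A first step toward updated evidence on effective contact times is tabulating contact rates by call window from call-record paradata. The sketch below shows one hypothetical way to do this; the window boundaries (noon and 5 p.m., weekday versus weekend), the record layout, and the toy records are assumptions. Raw rates like these are descriptive only: cases called in the evening may differ from those called in the morning, so an operational model would also condition on case history and call number.

```python
from collections import defaultdict
from datetime import datetime


def call_window(ts):
    """Map a local call timestamp to a hypothetical (day type, time of day) window."""
    day = "weekend" if ts.weekday() >= 5 else "weekday"  # Saturday=5, Sunday=6
    if ts.hour < 12:
        part = "morning"
    elif ts.hour < 17:
        part = "afternoon"
    else:
        part = "evening"
    return (day, part)


def contact_rates(call_records):
    """call_records: iterable of (timestamp, contacted) pairs, contacted in {0, 1}."""
    tallies = defaultdict(lambda: [0, 0])  # window -> [contacts, attempts]
    for ts, contacted in call_records:
        window = call_window(ts)
        tallies[window][0] += contacted
        tallies[window][1] += 1
    return {window: c / n for window, (c, n) in tallies.items()}


def best_window(rates):
    """Window with the highest observed contact rate."""
    return max(rates, key=rates.get)


# Hypothetical call attempts (March 4, 2024 was a Monday; March 9 a Saturday).
records = [
    (datetime(2024, 3, 4, 10, 0), 0),
    (datetime(2024, 3, 4, 18, 30), 1),
    (datetime(2024, 3, 5, 19, 0), 1),
    (datetime(2024, 3, 9, 11, 0), 1),
    (datetime(2024, 3, 9, 11, 30), 0),
    (datetime(2024, 3, 6, 14, 0), 0),
]
rates = contact_rates(records)
print(best_window(rates))  # ('weekday', 'evening')
```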

Interviewer-administered modes are an important and effective tool for increasing response rates in mixed-mode surveys (de Leeuw 2005; Olson et al. 2021). Mixed-mode designs that include an interviewer-administered mode are most effective when that mode raises a sampled person’s response propensity above their (assumed) baseline propensity for the alternative mode(s) (Olson and Groves 2012). One presumed mechanism is that sample members have a latent preference for at least one mode (Smyth et al. 2014), which increases the probability that they cooperate when that mode is offered (Olson et al. 2012). Beyond whether mixed-mode designs increase response rates, future research is critical for understanding how and why the use and sequencing of interviewer-administered modes in a mixed-mode design affect survey participation decisions. For instance, it is not understood whether the type and order of interviewer-administered and self-administered modes affect whether sample members can be contacted. It is also unknown whether specific combinations of modes affect decisions to cooperate by changing perceptions of which mode is “preferred” or perceptions of the legitimacy, importance, and confidentiality of different surveys in different modes. How interviewer-administered modes increase response propensities in mixed-mode surveys among people who may find it difficult to participate (e.g., those who speak languages other than the primary language of the interview, or those with disabilities) is also worthy of future study. This kind of research will be expensive, because it cannot be done with vignettes in a nonprobability web sample (the mode of data collection itself shapes reported mode preferences), but it is critical for understanding the mechanisms through which interviewer-administered modes matter.
Whether interviewers vary in how effectively they offer alternative modes to reluctant respondents also warrants future research as mixed-mode surveys become the norm; examinations of “knock-to-nudge” approaches, such as those used in the United Kingdom in 2020 and 2021, may provide initial insights (Department for Transport 2023).
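The response-propensity argument for mixed-mode designs can be made concrete with a simple sequential design in which nonrespondents to a self-administered mode receive an interviewer follow-up. Under the simplifying assumption that each person has a known propensity p_self for the first mode and a conditional propensity p_int for the interviewer follow-up among first-mode nonrespondents, the person’s overall propensity is p_self + (1 − p_self) × p_int. The sketch below is illustrative only; the propensities and function names are hypothetical.

```python
def sequential_propensity(p_self, p_int):
    """Overall response propensity when nonrespondents to a self-administered
    mode receive an interviewer follow-up with conditional propensity p_int."""
    for p in (p_self, p_int):
        if not 0.0 <= p <= 1.0:
            raise ValueError("propensities must lie in [0, 1]")
    return p_self + (1.0 - p_self) * p_int


def expected_rates(people):
    """Expected response rate for self-administered-only vs. sequential designs.

    people: list of (p_self, p_int) pairs, one per sampled person.
    """
    n = len(people)
    single = sum(p_self for p_self, _ in people) / n
    mixed = sum(sequential_propensity(ps, pi) for ps, pi in people) / n
    return single, mixed


# Hypothetical people: one web-inclined, one who strongly prefers an interviewer.
people = [(0.6, 0.2), (0.1, 0.5)]
gains = [sequential_propensity(ps, pi) - ps for ps, pi in people]
single, mixed = expected_rates(people)
print([round(g, 2) for g in gains])  # [0.08, 0.45]
print(round(single, 3), round(mixed, 3))  # 0.35 0.615
```

Most of the gain comes from the second person, whose baseline in the self-administered mode is low. The sketch treats propensities as fixed, so it cannot capture the possibility that offering one mode first changes propensities in the next, which is exactly the sequencing question raised above.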

3. Interviewer-Administered Surveys and Measurement Errors

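Variable and biasing measurement errors due to interviewers are a persistent challenge for interviewer-administered surveys (Schaeffer et al. 2010; West and Blom 2017). Correlated variable measurement errors due to interviewers, akin to the effects of cluster sampling, increase the variance of the respondent mean by a factor of

$$\mathrm{deff}_{\mathrm{int}} = 1 + \rho_{\mathrm{int}}(\bar{m} - 1),$$

where $\rho_{\mathrm{int}}$ is the intra-interviewer correlation (IIC) and $\bar{m}$ is the average number of interviews per interviewer.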
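The interviewer design effect can be illustrated with a short sketch. Assuming the usual cluster-sampling analogue, deff_int = 1 + ρ_int(m̄ − 1), the code estimates ρ_int with the one-way ANOVA estimator (using the standard adjusted group size for unequal interviewer workloads) and converts a given ρ_int into a design effect. It assumes interpenetrated assignment of respondents to interviewers; the data and function names are hypothetical, and in practice ρ_int is more often estimated with multilevel models.

```python
from statistics import mean


def anova_iic(answers_by_interviewer):
    """One-way ANOVA estimate of the intra-interviewer correlation (rho_int).

    answers_by_interviewer: dict mapping interviewer ID to a list of numeric
    answers. Needs at least two interviewers and more answers than interviewers.
    The estimate can be negative; analysts often truncate it at zero.
    """
    groups = list(answers_by_interviewer.values())
    k = len(groups)
    n_total = sum(len(g) for g in groups)
    grand_mean = mean(y for g in groups for y in g)
    group_means = [mean(g) for g in groups]
    ss_between = sum(len(g) * (m - grand_mean) ** 2 for g, m in zip(groups, group_means))
    ss_within = sum((y - m) ** 2 for g, m in zip(groups, group_means) for y in g)
    ms_between = ss_between / (k - 1)
    ms_within = ss_within / (n_total - k)
    # Adjusted average workload, which handles unequal interviewer workloads.
    m0 = (n_total - sum(len(g) ** 2 for g in groups) / n_total) / (k - 1)
    return (ms_between - ms_within) / (ms_between + (m0 - 1) * ms_within)


def interviewer_deff(rho_int, mean_workload):
    """Variance inflation of the respondent mean due to interviewers."""
    return 1 + rho_int * (mean_workload - 1)


# Hypothetical answers (e.g., 1-7 ratings) grouped by interviewer.
answers = {"int01": [1, 2, 3], "int02": [4, 5, 6]}
rho = anova_iic(answers)
print(round(rho, 3))  # 0.806
print(interviewer_deff(0.02, 51))  # 2.0
```

The last line shows why even seemingly small IICs matter: with ρ_int = 0.02 and an average of 51 interviews per interviewer, the variance of the respondent mean doubles.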

Data quality indicators in interviewer-administered surveys tend to be explained more by question characteristics than by characteristics of interviewers or respondents (Olson et al. 2020). Yet how many important question or design features (e.g., length of the reference period, questions that are field coded or have volunteered response categories) affect interviewer-related measurement errors such as IICs, and how these errors differ across respondent subgroups (e.g., self vs. proxy reports; older vs. younger; higher vs. lower education), remain largely unexplored. Future research on measurement errors in interviewer-administered surveys should focus on question characteristics as informed by contemporary question taxonomies (e.g., Schaeffer and Dykema 2011, 2020); this research should start with a systematic review identifying which question features are missing from our current understanding of interviewer effects, to inform future observational and experimental research. Interviewer training materials can then target questions on which interviewers have larger effects, and questionnaire design elements (e.g., instructions, motivational statements) can be modified where questions or respondents have larger effects on measurement errors.

Mixed-mode surveys create potential problems for survey estimation. Research on measurement differences in mixed-mode surveys has largely focused on biasing effects (e.g., mean differences across modes) after accounting for differential selection into the modes (see Chapter 8 in Olson et al. 2019). The IIC may also be affected in a mixed-mode design, although, to my knowledge, no research has examined this question. IICs may increase because of differential selection into the interviewer-administered mode (e.g., similar respondents agree to be interviewed by certain interviewers) or because those who participate in an interviewer-administered mode are more sensitive to cues from interviewers (e.g., respondents with less education). IICs may also decrease, for instance, because more training or monitoring resources can be devoted to the interviewer-administered mode when less expensive modes are used for the rest of the respondent pool, because telephone interviews allow greater social distance (and fewer observable cues) between interviewers and respondents than in-person interviews, or because more attention is paid to the visual design of the interviewer’s instrument when respondents may use the same questionnaire. Future research should examine how variable and biasing interviewer effects manifest for different types of questions and respondents in mixed-mode surveys, including which questions or tasks are “best” (e.g., lowest item nonresponse, lowest measurement errors, highest consent rates) when administered by interviewers. This information could be used to develop evidence-based interviewer training and monitoring on question and survey administration.

4. Interviewers and Technology of the Future

One can only speculate on what new and emerging technologies may mean for interviewer-administered surveys of the future. Large-scale computing infrastructure facilitates the development of survey dashboards that visualize data collection progress and/or estimate response propensity models in real time (Murphy et al. 2018). Real-time algorithms paired with mobile devices allow survey organizations to steer interviewer behavior in the field (Walejko and Wagner 2018). Advanced computing power permits larger survey organizations to transcribe and code interviews through automated machine learning methods, facilitating detection of falsification or deviations from standardized protocols (Sun and Yan 2023). Future research should continue to improve these technologies; ideally, computing resources and software will expand so that smaller survey organizations with fewer resources can eventually use them as well.
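Once interviews are transcribed, one simple automated check for deviations from standardized wording compares each transcribed question reading with the scripted text. The sketch below uses word-level similarity from Python’s standard `difflib` module and flags readings below a threshold. The 0.9 cutoff, the example question, the transcripts, and the function names are hypothetical, and this is a simple stand-in for the machine learning methods mentioned above, not a reproduction of them. Speech-to-text transcription is assumed to have happened upstream.

```python
import difflib
import re


def words(text):
    """Lowercased word tokens, ignoring punctuation."""
    return re.findall(r"[a-z0-9']+", text.lower())


def reading_similarity(scripted, transcribed):
    """Word-level similarity in [0, 1]; 1.0 means a verbatim reading."""
    return difflib.SequenceMatcher(None, words(scripted), words(transcribed)).ratio()


def flag_deviations(scripted, transcripts, threshold=0.9):
    """Return IDs of interviewers whose reading falls below the threshold."""
    return sorted(
        interviewer
        for interviewer, text in transcripts.items()
        if reading_similarity(scripted, text) < threshold
    )


scripted = "In the past 12 months, how many times have you seen a doctor?"
transcripts = {
    "int01": "In the past 12 months, how many times have you seen a doctor?",
    "int02": "So you've seen a doctor, what, a couple times this year?",
}
print(flag_deviations(scripted, transcripts))  # ['int02']
```

Word-level similarity cannot distinguish a harmless slip from a change that alters the question’s meaning, so flagged readings would still need human review.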

Research on what new technology means for the future of interviewers is, at this writing, in its infancy. Work should continue on virtual interviewers through avatars, interviewers administering questionnaires through text message, and interviewing through video conferencing platforms such as Zoom (Conrad et al. 2020; Hillygus 2024; Schober et al. 2015). Much of this work has addressed the question “can we do interviews using this technology?” Future research would benefit from asking “under what conditions should we do interviews using this technology?” and exploring when such technology may or may not be fit for purpose.

Generative AI has accelerated work on “virtual AI” interviewers, in which generative AI platforms administer questionnaires using a form of conversational interviewing (e.g., Wuttke et al. 2024). Future research on generative AI interviews needs to disentangle automated versus human administration, “standardized” versus “conversational” administration, and text versus spoken responses. Future research should also examine how “conversational” administration manifests across generative AI programs (e.g., what kinds of directive or nondirective probes, affect, feedback, and rapport building they use), because different architectures have different default ways of interacting with users (Buskirk 2024). Other areas for future research include whether audio computer-assisted self-interviewing (ACASI) instruments may benefit from real-time clarification or follow-up from an AI-generated interviewer; whether web surveys with an AI interviewer can improve the quality of answers to questions that are hard to transition from interviewer-administered to self-administered modes (e.g., self-reported industry and occupation); and whether time diaries or event history calendars are easier to implement when a generative AI interviewer is programmed to anticipate parallel probing across life domains. Future interviewer-administered surveys could conceivably outsource some supervision and monitoring to automated systems that “listen” in real time to conversations captured through computer-assisted recorded interviewing, automatically code the interaction between interviewers and respondents, detect falsified interviews immediately, and provide feedback to interviewers on the quality of their question administration, probing, and feedback, although this kind of implementation seems many years away.

Ultimately, the future of surveys conducted by interviewers will be driven by costs. Human interviewers are expensive, and the labor market for interviewers is constantly changing. Finding employees who are willing to go to strangers’ homes or cold-call telephone numbers and ask for information about household members is increasingly challenging. Whether interviewer positions are structured as salaried full-time, part-time, or on-call or per-piece work varies across organizations, studies, and countries’ labor laws. Future research should examine interviewer employment, including the individual, study, supervisory, and institutional factors that predict interviewer attrition, promotion, and retention. Combined with research on interviewer-related survey errors that informs training and monitoring procedures, this work will allow organizations to develop survey protocols that account for interviewer-related costs and errors from job posting through the end of data collection.


Appendix

Table A1. Sources for Figure 1 on Data Collection Modes.

Funding

The author received no financial support for the research, authorship, and/or publication of this article.

Received: January 9, 2025

Accepted: January 21, 2025