Abstract

Underwater visual Simultaneous Localization and Mapping (SLAM) is essential for autonomous underwater navigation and close-range underwater inspection. However, the turbid and low-light conditions common underwater severely limit visibility and cause motion blurring, posing significant open challenges for visual SLAM approaches deployed underwater. On the other hand, the scarcity of public underwater multi-sensor datasets, coupled with the lack of 6-Degree-of-Freedom (6-DoF) ground truth data for SLAM evaluation, hinders the advancement of underwater visual SLAM research. To address these problems, this paper introduces an underwater dataset encompassing multi-sensor data from a stereo camera, an Inertial Measurement Unit, a Doppler Velocity Log, and a pressure sensor. To cover various difficulty levels for underwater SLAM evaluation, it provides eight sequences collected under different speed and illumination conditions. Extrinsic and intrinsic calibration parameters are also provided for multi-sensor fusion. Additionally, we present TankGT, a fiducial-marker-based SLAM system designed to provide highly accurate 6-DoF ground truth poses in underwater environments, enabling rigorous quantitative and qualitative benchmarking for underwater SLAM algorithms. We demonstrate the effectiveness of the proposed Tank dataset with four SLAM algorithms. The dataset is released to facilitate underwater SLAM research in the community at https://senseroboticslab.github.io/underwater-tank-dataset.

1. Introduction

Underwater Simultaneous Localization and Mapping (SLAM) is vital for enabling autonomous navigation and task execution in underwater environments. Two primary approaches in underwater SLAM are sonar-based SLAM and visual SLAM. Sonar systems offer a significantly longer operational range compared to cameras, making them effective in low-visibility conditions. However, their low resolution, high noise, and limited observability make it difficult to recover full 6-Degree-of-Freedom (6-DoF) poses accurately. Additionally, the high cost of sonar systems limits their widespread adoption.

In contrast, visual SLAM is attractive due to the widespread availability, low cost, and rich perceptual capabilities of camera systems commonly used on underwater robots for underwater inspection tasks. Nevertheless, underwater environments pose several unique challenges for visual SLAM. Turbid water reduces visibility, producing noisy, low-contrast, and textureless images that hinder feature extraction and matching. Motion blur, caused by poor lighting and external disturbances such as currents and waves, further degrades tracking performance. Featureless open areas provide little visual structure for SLAM algorithms to exploit. Additionally, suspended particles scatter light and introduce artifacts such as marine snow, which severely affect image quality and disrupt feature matching (Guth et al., 2014; Zhang et al., 2022). These challenges make vision-only SLAM systems unreliable or even non-functional in many real-world underwater scenarios. Consequently, multi-sensor fusion, using cameras with modalities such as Inertial Measurement Unit (IMU), Doppler Velocity Log (DVL), and depth sensor, has emerged as a promising strategy to enhance the robustness and accuracy of underwater visual SLAM systems.

In recent years, research in underwater visual SLAM has increasingly adopted a multi-modal approach (Vargas et al., 2021; Xu et al., 2021; Thoms et al., 2023; Zhao et al., 2023; Xu et al., 2025), integrating sensors such as camera, IMU, DVL, and pressure sensors, to address the challenges of the underwater environment. However, the lack of publicly available underwater datasets with accurate 6-DoF ground truth (GT) poses has significantly limited the benchmarking of SLAM methods and slowed progress in the field. Obtaining reliable GT poses underwater remains a major challenge. Motion capture systems, commonly used for aerial SLAM, suffer from limited operational range and coverage underwater because their performance degrades sharply in water. Acoustic positioning systems, such as Ultra-Short Baseline (USBL), offer an alternative but are expensive, difficult to deploy, and often unreliable in shallow water (Guth et al., 2014). Structure-from-Motion (SfM) techniques (Schönberger and Frahm, 2016) are frequently used to generate GT poses offline from visual data. For instance, COLMAP, a widely used SfM toolbox, has been employed in datasets such as AQUALOC (Ferrera et al., 2019) and Eiffel Tower (Boittiaux et al., 2023). However, SfM methods are effective only under ideal visual conditions, requiring good visibility, sufficient environmental features, and slow platform motion to avoid motion blur. As such, they are poorly suited for evaluating SLAM performance in challenging, real-world underwater scenarios. To the best of our knowledge, generating accurate and comprehensive 6-DoF ground truth for underwater SLAM remains an open and unsolved problem, presenting a key barrier to benchmarking and advancing state-of-the-art methods.

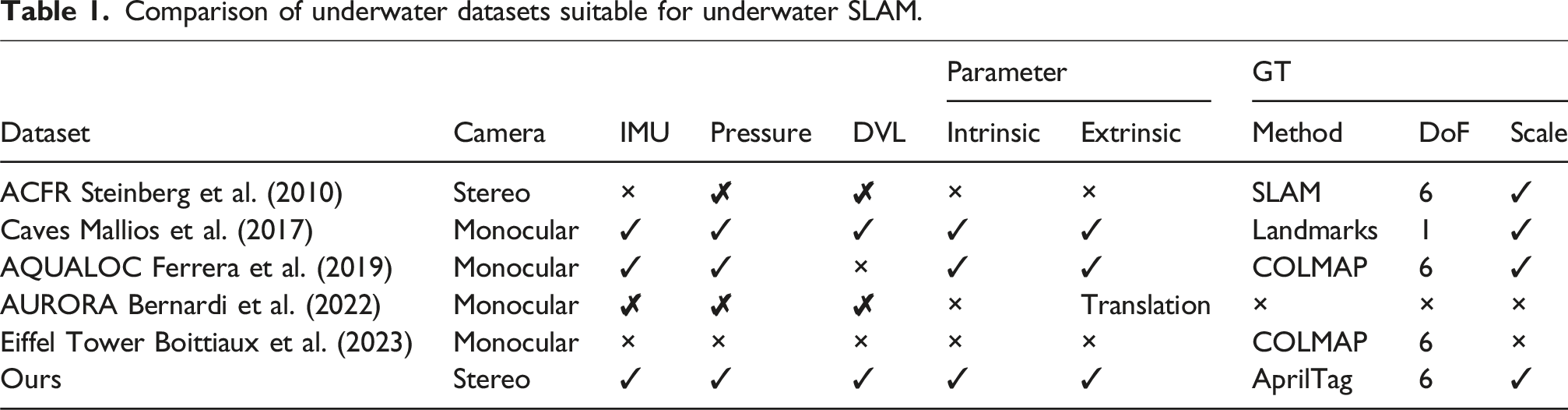

Comparison of underwater datasets suitable for underwater SLAM.

In this paper, we propose the Tank dataset, an underwater dataset that includes multi-sensor data from a stereo camera, an IMU, a DVL, and a depth sensor. To the best of our knowledge, it is the first public underwater dataset that incorporates this multi-sensor configuration and provides accurate extrinsic sensor calibration parameters. Moreover, a physical underwater structure and a fiducial-marker-based SLAM system, termed TankGT, are developed to generate accurate 6-DoF GT poses for underwater visual SLAM evaluation. Eight sequences are captured in different routes, velocities and lighting settings to reflect varying difficulty levels. A convenient evaluation tool set is also developed to generate visually appealing and publication-ready tables and figures for SLAM evaluation. We demonstrate the effectiveness of this Tank dataset on four state-of-the-art SLAM algorithms.

2. Related work

In this section, we provide a review of existing underwater SLAM datasets.

The ACFR dataset (Steinberg et al., 2010) includes 22 AUV dives off Tasmania in 2008, with annotations on every 100th image from over 100,000 stereo pairs. Each annotated image has 50 labeled points covering biological, abiotic, and ambiguous classes. Nevertheless, it lacks raw IMU readings and does not provide the original DVL or pressure sensor data, restricting its value for systems that perform their own fusion or sensor calibration. Furthermore, the dataset does not include camera intrinsic parameters or extrinsic transformations between the sensors. This is a significant limitation for benchmarking multi-sensor SLAM algorithms. In addition, the ACFR dataset employs an extended information filter-based SLAM algorithm (Mahon et al., 2008) to generate GT poses. However, this filter-based SLAM is no longer state-of-the-art, and may not be suitable to provide GT data for evaluating modern SLAM algorithms.

The Caves dataset (Mallios et al., 2017), collected in an underwater cave complex using a diver-guided AUV, includes sonar, DVL, IMU, depth, and downward-facing camera data. It provides both ROS bag files and processed text formats for ease of use. However, its GT data is only roughly measured, using 1-D distances between pairs of cones placed as landmarks. Moreover, it only employs a monocular camera.

The AQUALOC dataset (Ferrera et al., 2019) provides 17 sequences recorded at depths up to 380 m using ROVs equipped with a monocular camera, IMU, and pressure sensor. Data is provided as ROS bags and raw files, with offline SfM-based trajectories for benchmarking. However, the dataset lacks DVL data, which is crucial for underwater SLAM. The SfM-based GT poses are generated using COLMAP (Schönberger and Frahm, 2016), which may not be suitable for evaluating SLAM algorithms in challenging underwater conditions.

The AURORA dataset (Bernardi et al., 2022) offers a more diverse sensor suite, including sidescan sonar, multibeam echosounder, and visual data, collected during surveys in the Greater Haig Fras Marine Conservation Zone. However, it only provides fused navigation outputs rather than time-synchronized raw measurements from the DVL, IMU, and depth sensors. This omission limits its utility for evaluating or developing tightly-coupled SLAM pipelines that require direct access to raw measurements for accurate state estimation and uncertainty propagation.

The Eiffel Tower dataset (Boittiaux et al., 2023) provides only monocular camera data, which significantly limits its applicability to multi-sensor SLAM research. Without data from inertial sensors, depth sensors, or sonar, it cannot support the development or benchmarking of sensor fusion frameworks that are increasingly central to robust SLAM in challenging environments such as underwater domains.

As far as we know, no existing dataset combines a comprehensive multi-sensor configuration, calibration parameters, and accurate 6-DoF GT poses for underwater SLAM evaluation. The Tank dataset aims to fill this gap by providing a multi-sensor dataset with accurate GT poses, enabling the development and benchmarking of robust underwater SLAM algorithms.

3. Dataset collection and formation

3.1. Environment setup

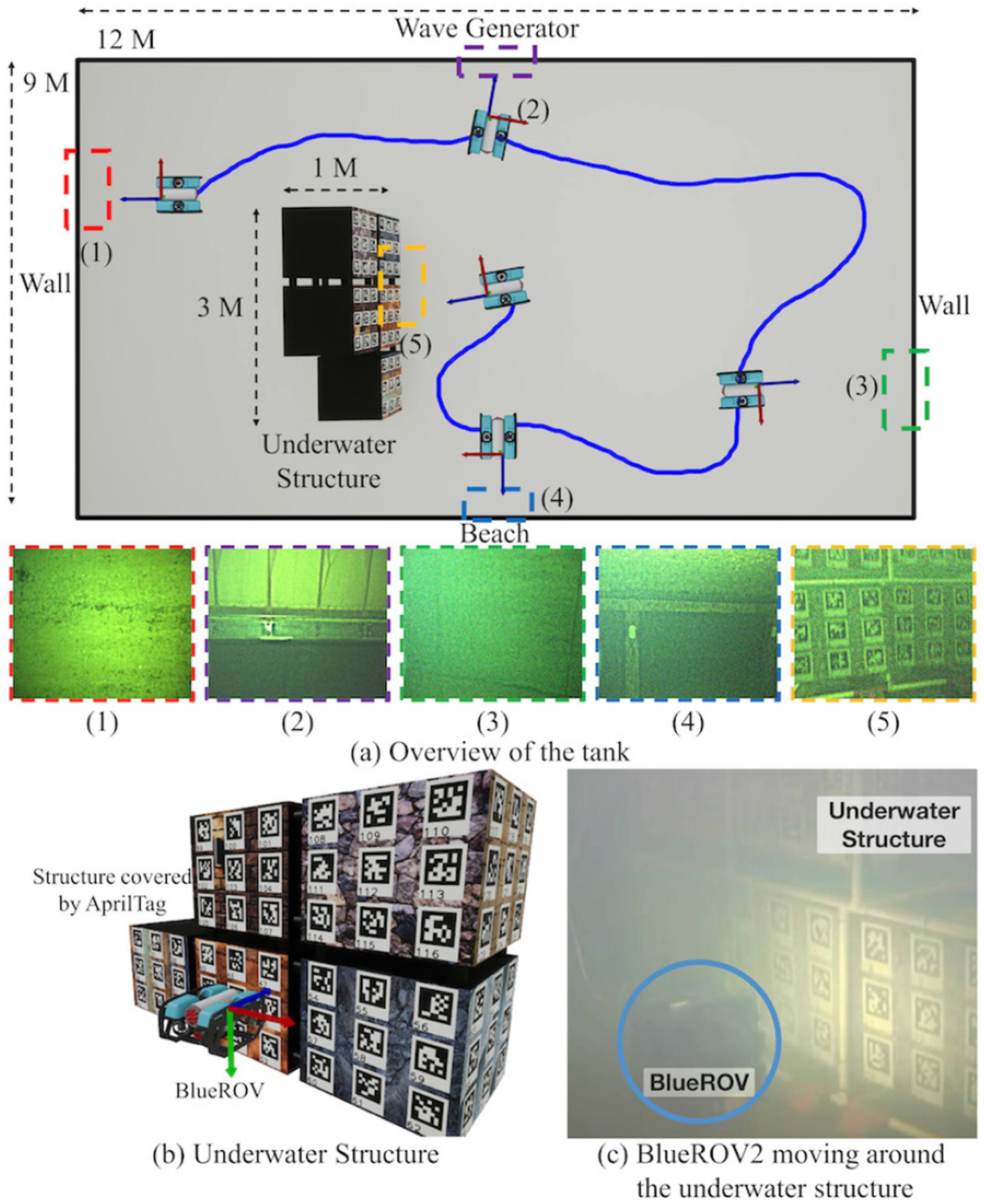

The data is collected in a 9 × 12 m water tank with a dedicated underwater structure in the middle, covered by AprilTag (Ferrera et al., 2019) markers. As indicated in Figure 1, the environment has five different areas: (1) textureless wall, (2) wave generator, (3) textureless wall, (4) beach, and (5) underwater structure. These areas provide a variety of scenarios: the beach area provides some visible features when the vehicle passes close to it, making it a relatively easy region; the wall and generator areas have little salient texture to track and are hence challenging for visual SLAM; the underwater structure provides clear features as well as AprilTag markers for generating GT poses.

Experiment setting for the data collection.

3.2. Sensor configuration

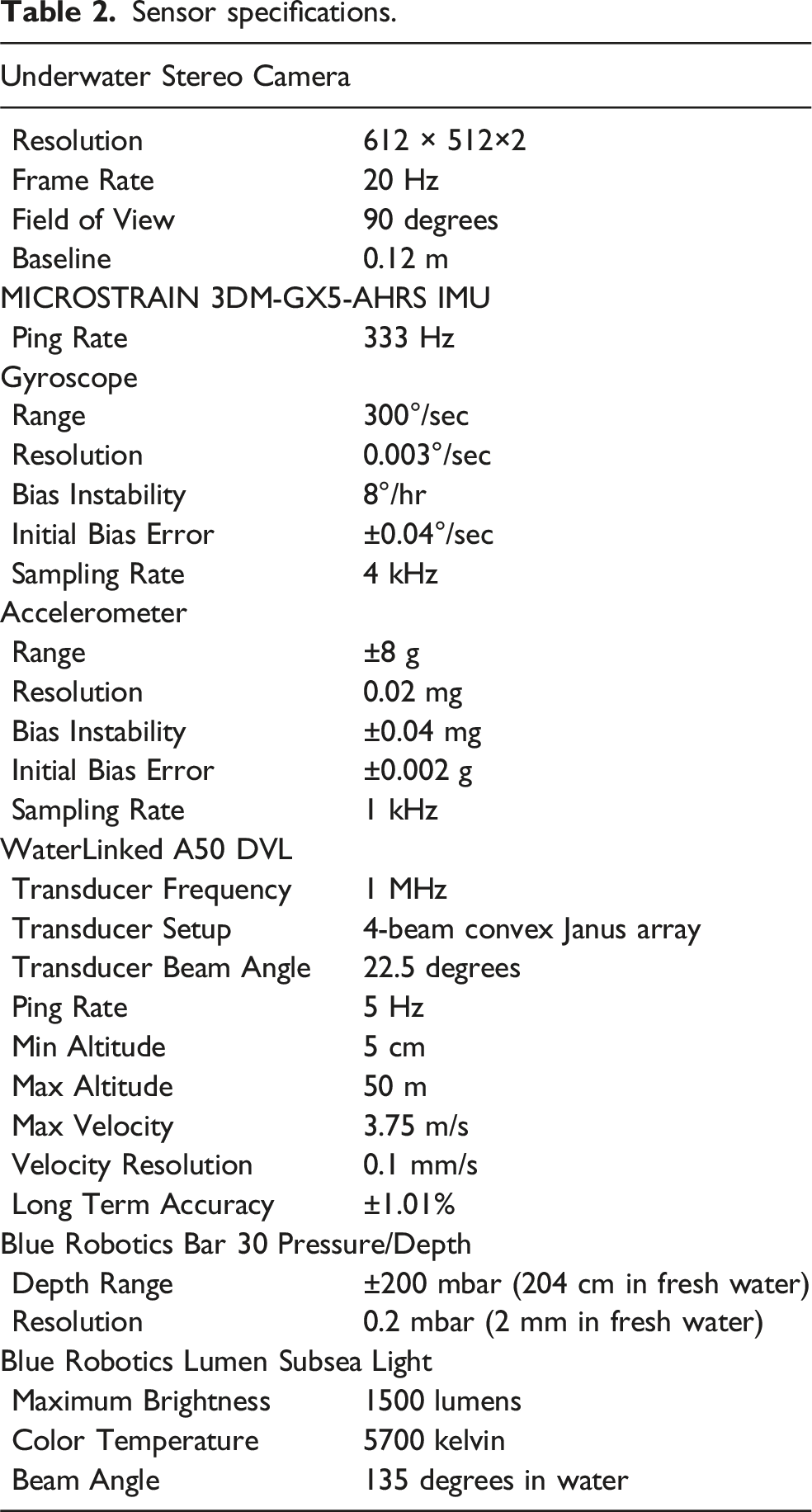

Sensor specifications.

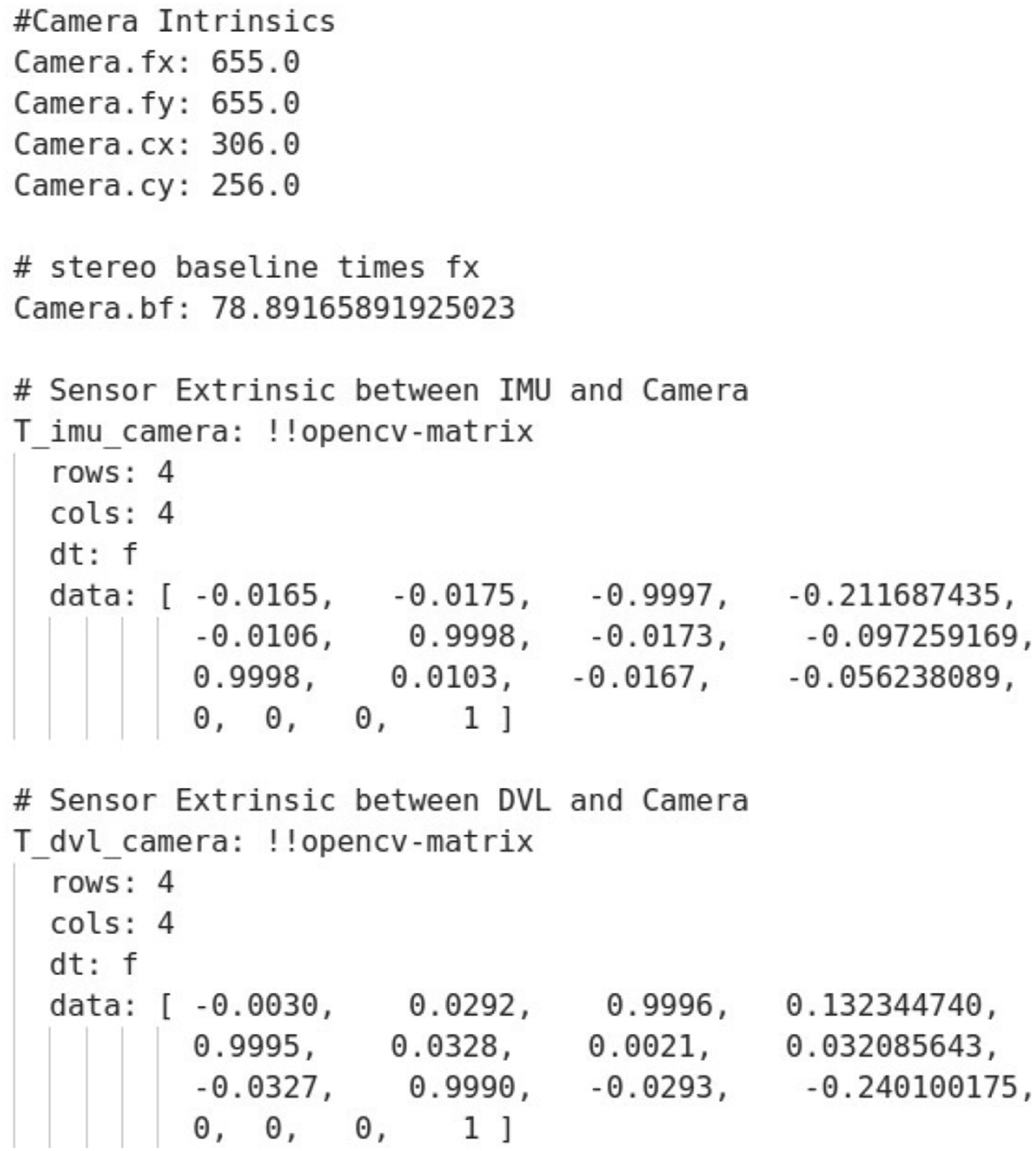

Camera intrinsic parameters

The intrinsic parameters of the stereo cameras are calibrated using the Pinax Model (Łuczyński et al., 2017) which corrects the refraction and distortion caused by the camera’s waterproof housing. An underwater dehazing algorithm (Łuczyński and Birk, 2017) is also enabled to enhance the camera visibility in the water.

Extrinsic parameters

The extrinsic parameters between sensors A and B are defined by a transformation matrix:

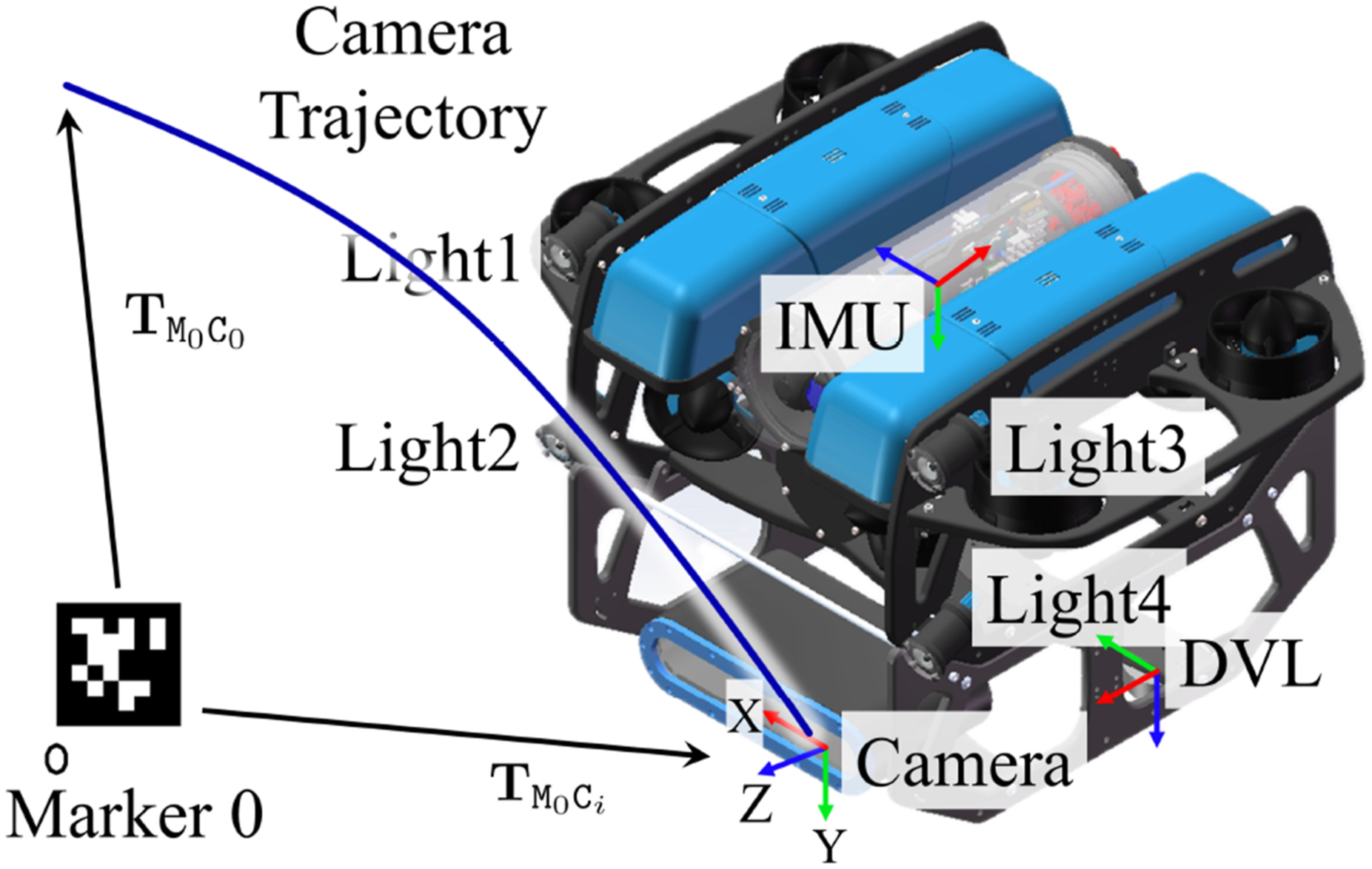

The sensor coordinate frames are shown in Figure 2. We provide

Coordinate frames of the sensors and the GT poses.
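As a minimal sketch of how such extrinsic transformations are typically used, the snippet below assembles, inverts, and chains 4 × 4 homogeneous matrices. It assumes that a matrix named `T_a_b` maps points expressed in frame b into frame a; the convention actually used by the dataset is the one given in the equation above and in the parameter files. All function names and numeric values are illustrative and are not the calibrated values.

```python
import numpy as np

def make_transform(R, t):
    """Assemble a 4x4 homogeneous transform from a 3x3 rotation and a 3-vector."""
    T = np.eye(4)
    T[:3, :3] = R
    T[:3, 3] = t
    return T

def invert_transform(T):
    """Closed-form inverse of a rigid transform: rotation R^T, translation -R^T t."""
    R, t = T[:3, :3], T[:3, 3]
    return make_transform(R.T, -R.T @ t)

def rot_z(angle_rad):
    """Rotation about the z axis."""
    c, s = np.cos(angle_rad), np.sin(angle_rad)
    return np.array([[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]])

# Hypothetical extrinsics (illustrative numbers only).
T_cam_imu = make_transform(rot_z(np.pi / 2), [0.05, 0.00, 0.10])
T_imu_dvl = make_transform(rot_z(-np.pi / 4), [0.00, 0.20, -0.15])

# Chaining camera <- IMU and IMU <- DVL gives camera <- DVL.
T_cam_dvl = T_cam_imu @ T_imu_dvl

# A point expressed in the DVL frame (homogeneous), mapped into the camera frame.
p_dvl = np.array([1.0, 0.0, 0.0, 1.0])
p_cam = T_cam_dvl @ p_dvl
```

Using the closed-form inverse avoids a general matrix inversion and keeps the rotation block orthonormal.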

Extrinsic calibration algorithm

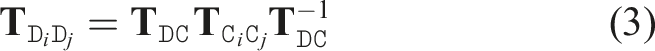

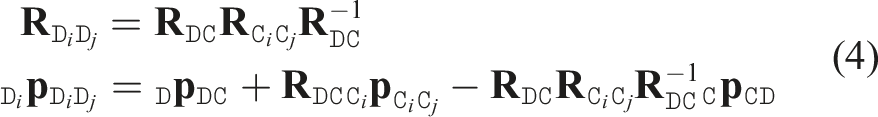

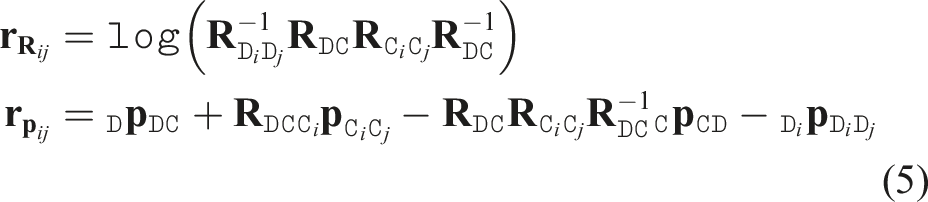

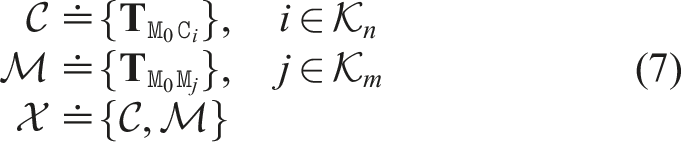

The extrinsic parameters between the DVL and the camera are obtained using our extrinsic calibration algorithm proposed in Xu et al. (2021), which is initialized with manual measurements for better convergence. Specifically, two trajectories are estimated separately, one from DVL-based dead reckoning and one from camera-based visual SLAM. The DVL and camera trajectories are defined as a set of relative transformations:
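The role of relative transformations can be illustrated with a short synthetic sketch. It is not the algorithm of Xu et al. (2021); it only shows the standard hand-eye relation that relative motions of two rigidly attached sensors satisfy, namely A_k X = X B_k, where A_k and B_k are the relative motions of the DVL and the camera and X is the DVL-to-camera extrinsic. The trajectories, the value of `X`, and the unknown world-frame offset `W` are all made up for the example.

```python
import numpy as np

def make_transform(yaw, t):
    """4x4 rigid transform with a rotation of `yaw` about z and translation `t`."""
    c, s = np.cos(yaw), np.sin(yaw)
    T = np.eye(4)
    T[:3, :3] = [[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]]
    T[:3, 3] = t
    return T

def relative_motions(poses):
    """Relative transform between consecutive poses: inv(T_k) @ T_{k+1}."""
    return [np.linalg.inv(a) @ b for a, b in zip(poses[:-1], poses[1:])]

# Hypothetical DVL-to-camera extrinsic X (illustrative values only).
X = make_transform(0.3, [0.10, -0.05, 0.20])

# Synthetic DVL trajectory in its own dead-reckoning world frame.
dvl_poses = [make_transform(0.1 * k, [0.2 * k, 0.05 * k * k, -0.01 * k])
             for k in range(6)]

# Camera trajectory of the same rigid body, expressed in a different world
# frame (the visual SLAM frame), offset by an arbitrary transform W.
W = make_transform(-1.2, [3.0, 1.0, 0.5])
cam_poses = [W @ T @ X for T in dvl_poses]

A = relative_motions(dvl_poses)  # DVL relative motions
B = relative_motions(cam_poses)  # camera relative motions

# Residual of the hand-eye constraint A_k X = X B_k for each step.
residuals = [np.linalg.norm(a @ X - X @ b) for a, b in zip(A, B)]
```

Because only relative motions enter the constraint, the unknown offset `W` between the two world frames cancels out, which is why the two trajectories can be estimated independently.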

Extrinsic calibration between the IMU and the camera is performed using the Kalibr toolbox (Furgale et al., 2013).

Sequence configuration

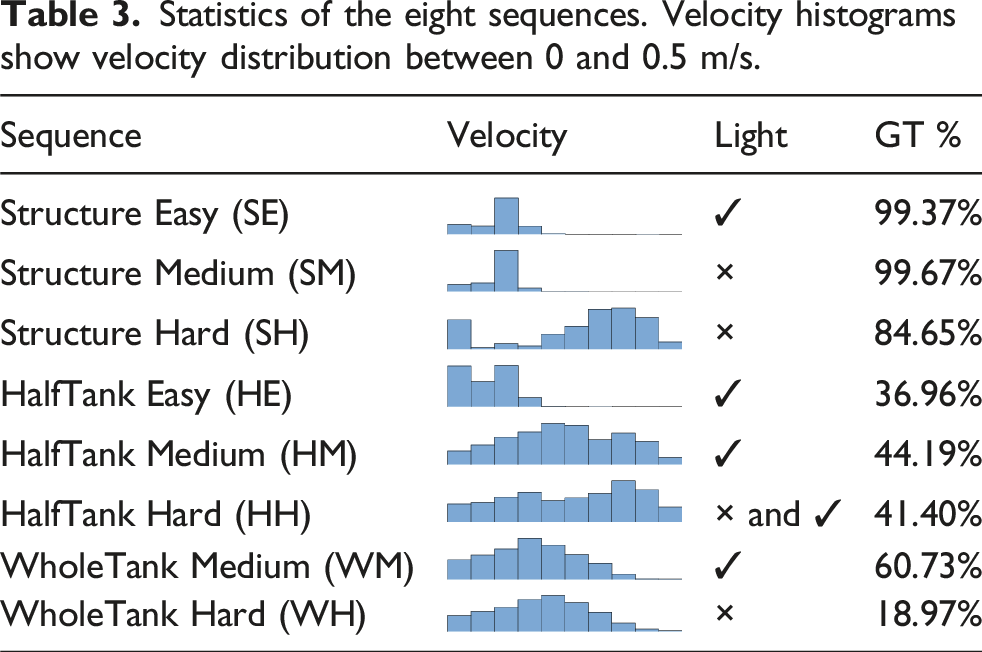

Statistics of the eight sequences. Velocity histograms show velocity distribution between 0 and 0.5 m/s.

Three difficulty levels are defined for evaluation:

• The easy level features low velocity, good lighting conditions, and appropriate distances between the camera and objects to minimize motion blur and ensure good visual input for SLAM systems.
• The medium level introduces challenges such as poor lighting, occasional aggressive motion, and a lack of structural features, making it more difficult for SLAM systems.
• The hard level combines aggressive motion, poor or varying lighting, and long-term structureless or textureless scenes, posing significant challenges for SLAM systems.

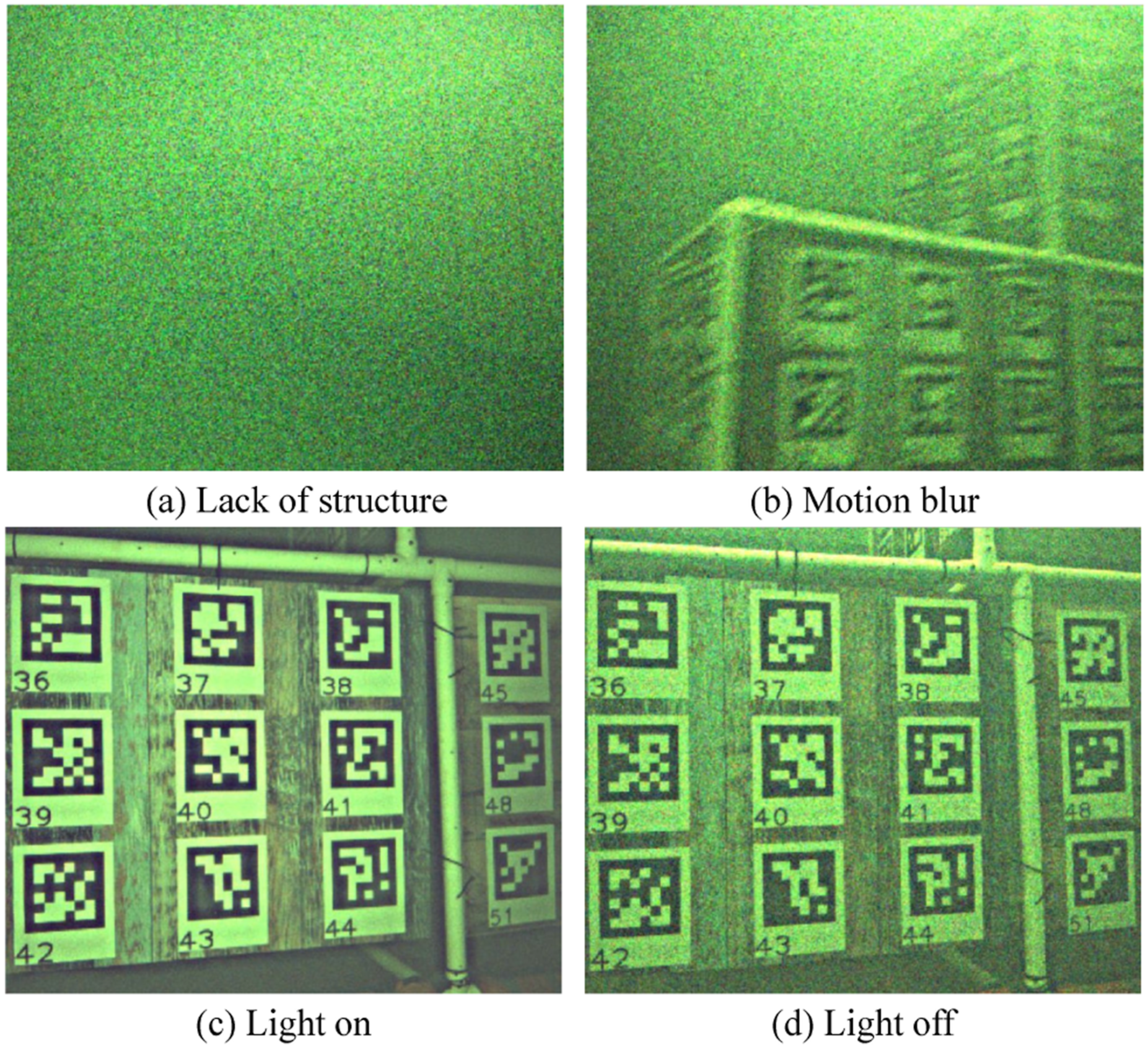

Examples of these challenging visual conditions, including lack of structure, motion blur, and lighting variations, are shown in Figure 3.

Some visually challenging scenarios in the dataset.

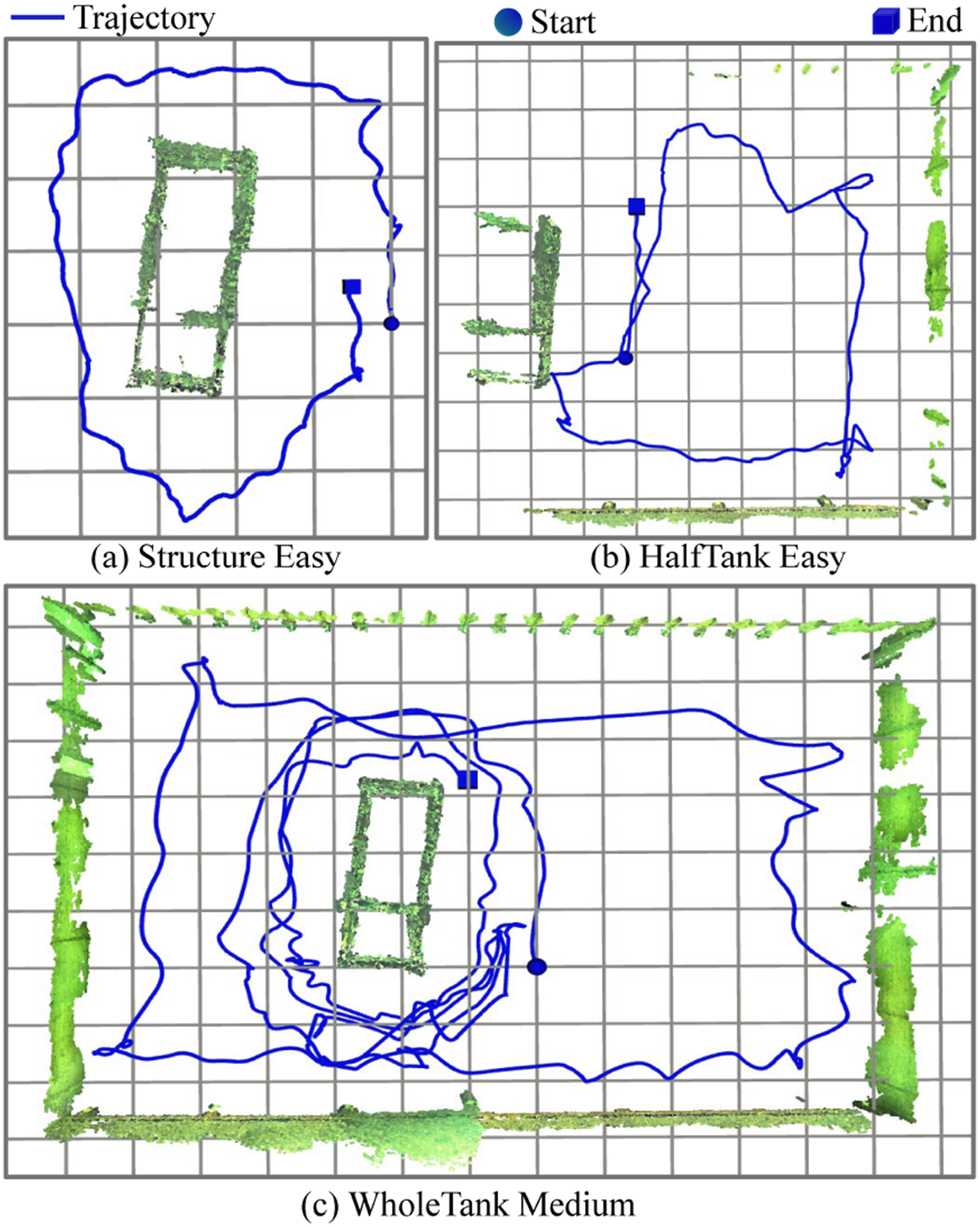

We designed three types of sequences: Structure (SE, SM, and SH), HalfTank (HE, HM, and HH), and WholeTank (WM and WH).

• The Structure sequences (SE, SM, and SH) are collected around the structure, with the vehicle following a looped trajectory, as illustrated by the SE sequence in Figure 4(a).
• The HalfTank sequences (HE, HM, and HH) cover a larger area, starting from the structure and traversing half of the tank, including the wall and beach areas. The trajectory and reconstruction of the HE sequence are shown in Figure 4(b).
• The WholeTank sequences (WM and WH) span an even larger area, where the vehicle begins near the structure, traverses the entire tank and returns to the structure for loop closure, as demonstrated by the WM sequence in Figure 4(c).

Trajectories and 3D maps of three sequences. A grid is with size 1

For the Structure sequences, 6-DoF GT poses are mostly available. For both the HalfTank and WholeTank sequences, the vehicle starts in the structure area with GT, moves through challenging textureless regions without GT, and then returns to the structure area where GT is available again. This setup allows us to evaluate visual odometry drift or visual SLAM errors by comparing GT poses before and after traversing challenging regions.

Data format

ROS bag

The data is provided as ROS bags and associated parameter YAML files. The ROS bag files contain the sensor data. Each ROS bag file has six topics:

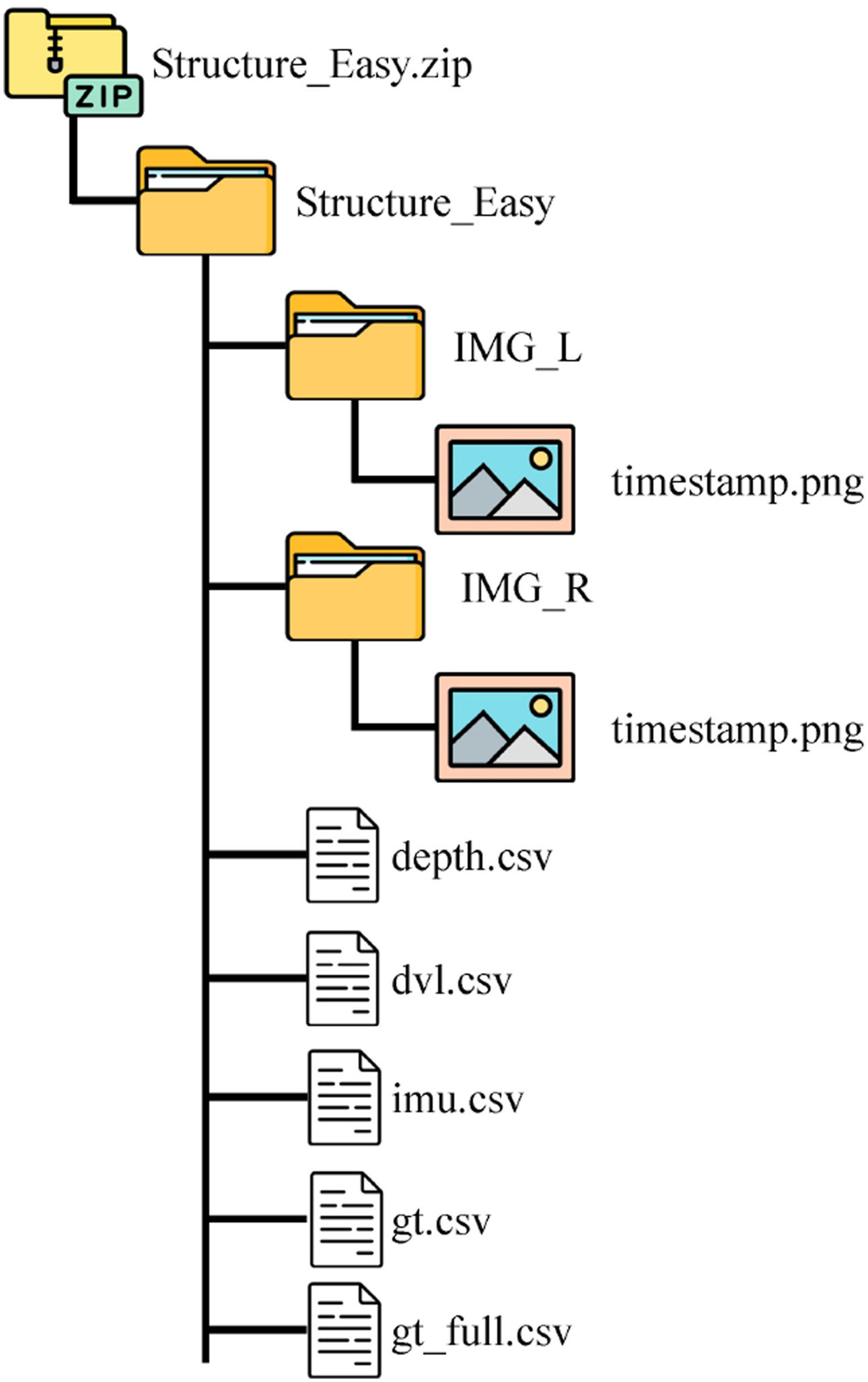

Raw data

In addition to the ROS bag files, the raw data is also provided in a folder structure. The raw data includes the images from the stereo camera, the depth data from the pressure sensor, the DVL data, the IMU data, and the GT data. An example of the folder structure of the Structure_Easy sequence is shown in Figure 5. Each image file is named by the timestamp of the image in nanoseconds. The depth data is stored in a CSV file with the timestamp and the depth value. The DVL data is stored in a CSV file with the timestamp, the 3-DoF velocity in the DVL body frame, and the four radial velocities along each transducer. The IMU data is stored in a CSV file with the timestamp and the acceleration and angular velocity values. The GT data is stored in a CSV file with the timestamp and the GT pose values.

The folder structure of the raw data.
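Since every raw measurement carries its own timestamp, a typical first step when working with the raw folders is to associate an image with the closest sample of another sensor. The following sketch shows one way this could be done. The folder name `left/`, the `.png` extension, and the `(timestamp_ns, depth_m)` row layout are assumptions made for illustration; the actual layout should be taken from Figure 5 and the CSV headers.

```python
import bisect
from pathlib import Path

def image_timestamp_ns(image_path):
    """Parse the nanosecond timestamp encoded in an image file name."""
    return int(Path(image_path).stem)

def nearest_index(sorted_stamps, query):
    """Index of the entry in `sorted_stamps` that is closest to `query`."""
    i = bisect.bisect_left(sorted_stamps, query)
    if i == 0:
        return 0
    if i == len(sorted_stamps):
        return len(sorted_stamps) - 1
    before, after = sorted_stamps[i - 1], sorted_stamps[i]
    return i if after - query < query - before else i - 1

# Hypothetical file name and depth rows (timestamp_ns, depth_m).
stamp = image_timestamp_ns("left/1650000000100000000.png")
depth_rows = [
    (1650000000000000000, 1.20),
    (1650000000090000000, 1.22),
    (1650000000200000000, 1.25),
]
depth_stamps = [row[0] for row in depth_rows]

# Depth sample closest in time to the image, and its time offset in seconds.
closest = depth_rows[nearest_index(depth_stamps, stamp)]
offset_s = (closest[0] - stamp) * 1e-9
```

Keeping the timestamps as integers until the final conversion avoids the precision loss that occurs when nanosecond epoch times are stored as floating-point seconds.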

Parameter YAML

The parameter files provide the camera intrinsic parameters and the extrinsic parameters between the sensors in the YAML format, as shown in Figure 6.

The parameter YAML file.

Ground truth generation

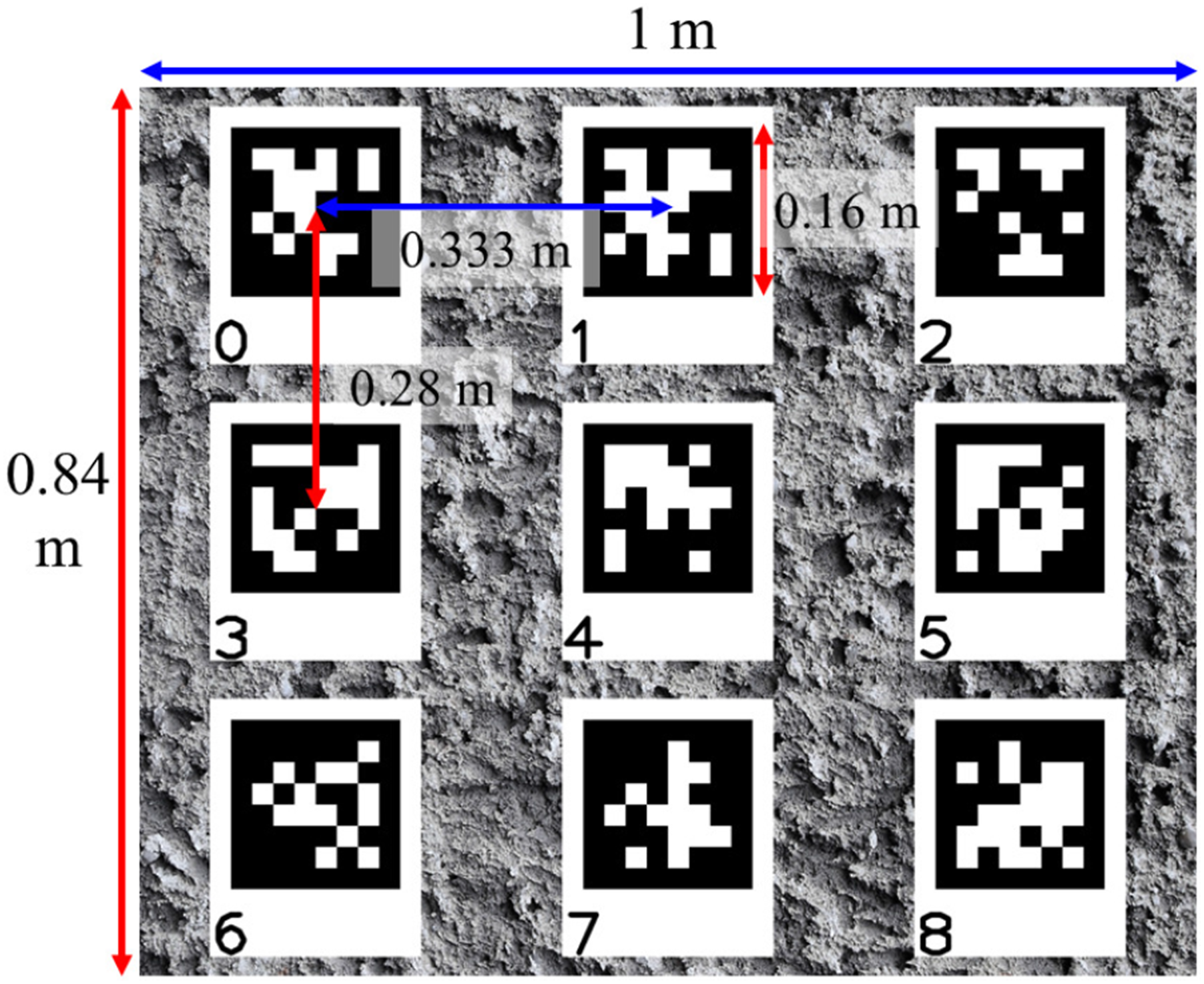

Underwater structure with AprilTag markers

The underwater structure is built with waterproof panels which are covered with AprilTag markers, as shown in Figure 1(b) and (c). As illustrated in Figure 7, a panel that measures 1 × 0.84 m has nine markers arranged with a horizontal interval of 0.333 m and a vertical interval of 0.28 m. This configuration allows multiple markers to be observed simultaneously as the robot moves around the structure, enhancing the robustness and accuracy of the GT generation.

The design of the AprilTag markers on each panel.
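The panel geometry fixes the theoretical relative position of any two markers on the same panel. The sketch below computes these positions under two assumptions that are not stated in the text: the nine markers form a 3 × 3 grid centred on the panel, and they are indexed row by row. Only the panel size and the two intervals come from the description above.

```python
import itertools
import math

PANEL_W, PANEL_H = 1.0, 0.84   # panel size in metres (from the text)
STEP_X, STEP_Y = 0.333, 0.28   # marker intervals in metres (from the text)

def marker_centres():
    """Centres of a 3 x 3 marker grid, assumed to be centred on the panel."""
    x0 = PANEL_W / 2 - STEP_X
    y0 = PANEL_H / 2 - STEP_Y
    return [(x0 + c * STEP_X, y0 + r * STEP_Y)
            for r in range(3) for c in range(3)]

centres = marker_centres()

# Theoretical centre-to-centre distance for every pair of markers on a panel.
pair_distances = {
    (i, j): math.dist(centres[i], centres[j])
    for i, j in itertools.combinations(range(9), 2)
}
```

With nine markers there are 36 such pairs per panel, each of which provides one independent check on the calibrated marker poses.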

TankGT algorithm

To obtain accurate and reliable GT pose data, we developed TankGT, a fiducial marker-based SLAM system. It utilizes the relative pose information from AprilTags to simultaneously estimate the camera poses and the poses of the AprilTag markers. It includes two modes: calibration mode and localization mode.
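The geometric relation that underlies marker-based localization can be sketched for a single detection. If the pose of a marker in the world frame, `T_W_M`, is known and a detector returns the pose of that marker in the camera frame, `T_C_M`, then the camera pose follows as `T_W_C = T_W_M @ inv(T_C_M)`. The snippet below is only this single-marker relation on synthetic data; it is not the TankGT optimization, which combines many such measurements. Marker ids, poses, and variable names are hypothetical.

```python
import numpy as np

def make_transform(yaw, t):
    """4x4 rigid transform with a rotation of `yaw` about z and translation `t`."""
    c, s = np.cos(yaw), np.sin(yaw)
    T = np.eye(4)
    T[:3, :3] = [[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]]
    T[:3, 3] = t
    return T

# Hypothetical calibrated marker poses in the world frame (T_W_M).
T_W_M = {
    7: make_transform(0.0, [1.000, 0.0, 0.5]),
    8: make_transform(0.0, [1.333, 0.0, 0.5]),
}

# True camera pose, used here only to synthesise noise-free detections.
T_W_C_true = make_transform(0.4, [0.2, -1.5, 0.6])

# Simulated detections: pose of each marker in the camera frame (T_C_M).
detections = {tag: np.linalg.inv(T_W_C_true) @ T for tag, T in T_W_M.items()}

# Each detection yields one camera pose estimate.
estimates = {tag: T_W_M[tag] @ np.linalg.inv(T_C_M)
             for tag, T_C_M in detections.items()}
```

With real, noisy detections the per-marker estimates disagree slightly, which is the motivation for solving for the camera pose jointly over all visible markers.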

Calibration mode

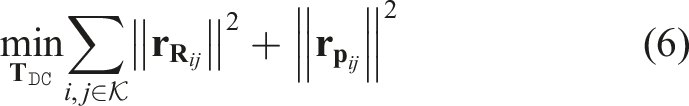

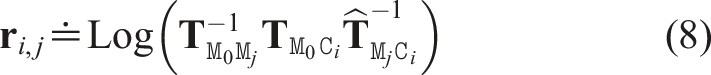

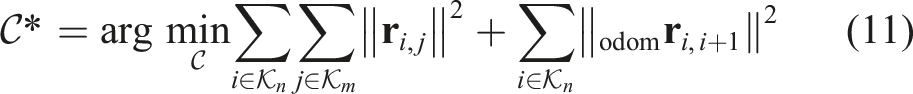

The calibration mode is designed to calibrate the poses of all AprilTag markers under ideal conditions. Challenging underwater conditions can compromise the accuracy of estimated marker poses. Therefore, we estimate the marker poses in a rather optimal scenario where the robot moves slowly around the underwater structure for good image quality with multiple loop closures to reduce errors. This calibration mode is formulated as a SLAM problem with a factor graph optimization. Specifically, considering all camera frames

Localization mode

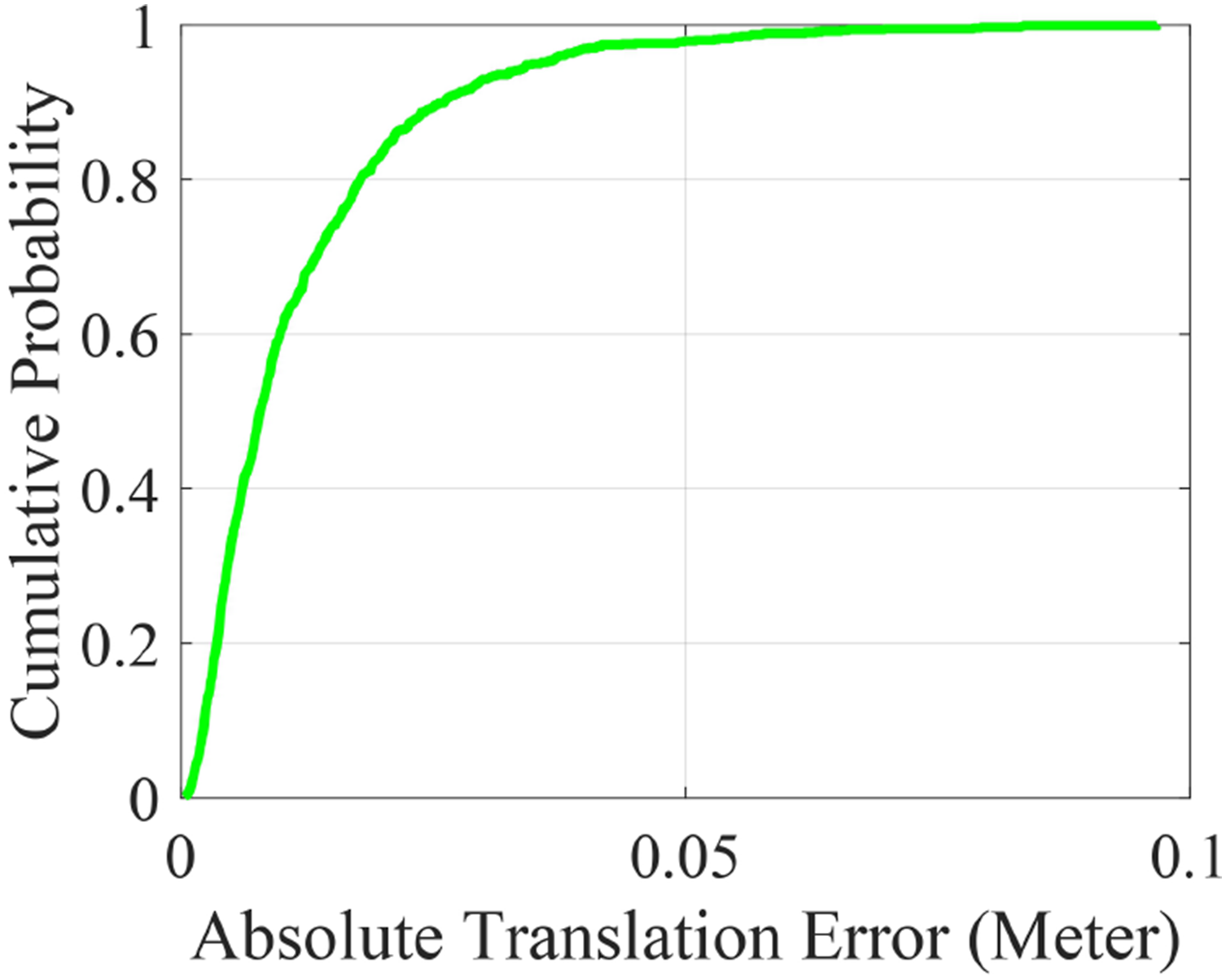

The localization mode estimates the camera poses directly using the known AprilTag poses obtained in the calibration mode. Hence, the objective function to solve is reformulated as

To provide a complete GT trajectory, we include optimized camera poses obtained by fusing the TankGT poses with the AQUA-SLAM algorithm (Xu et al., 2025). AQUA-SLAM is an underwater SLAM framework that fuses stereo camera, IMU, and DVL data in a tightly-coupled manner to achieve reliable pose estimation in challenging underwater conditions. For our purposes, we disable the loop closure module in AQUA-SLAM to obtain smooth odometry constraints between consecutive camera frames. To correct drift, the TankGT poses are fused as landmark measurements, serving as external references. The overall problem is then formulated and solved as a factor graph optimization, as described below:

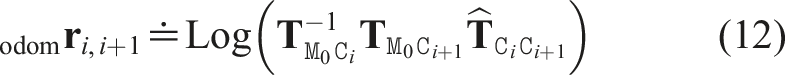

Accuracy of the generated ground truth

Since the AprilTag design on each panel is known (Figure 7), we have the theoretical relative pose between any pair of markers on the same panel. This allows us to compute an error against the calibrated poses of the AprilTag markers, which indirectly validates the accuracy of the generated ground truth camera poses. Figure 8 shows the cumulative distribution of the translation errors between the theoretical and the estimated AprilTag poses. Over 90% of the estimated marker poses are within 3 cm, which is considered sufficient for GT generation using measurement constraints from multiple AprilTag markers. Note that the actual errors are likely lower than this value, because the panels may experience slight deformation in water.

Cumulative translation error of the calibrated AprilTag poses.
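A statement such as "over 90% within 3 cm" is a single point on the empirical cumulative distribution of the errors. The following sketch shows how such a value could be computed. The error values are invented for the example and are not measurements from the dataset, and `fraction_within` is a hypothetical helper name.

```python
import numpy as np

def fraction_within(errors_m, threshold_m):
    """Empirical cumulative distribution of the errors evaluated at threshold_m."""
    errors_m = np.asarray(errors_m, dtype=float)
    return float(np.mean(errors_m <= threshold_m))

# Hypothetical per-marker translation errors in metres (not measured values).
errors = [0.004, 0.009, 0.012, 0.015, 0.018,
          0.021, 0.024, 0.027, 0.029, 0.041]

# Share of markers whose translation error does not exceed 3 cm.
share = fraction_within(errors, 0.03)
```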

SLAM evaluation using the dataset

In this section, we introduce our proposal to evaluate SLAM performance using the dataset and its tool set, before benchmarking four SLAM algorithms on the dataset.

Trajectory alignment

To evaluate a SLAM algorithm, its estimated poses are first paired with the GT poses by timestamp. An SE(3) transformation is then estimated to align the estimated trajectory with the GT trajectory by minimizing the distances between the paired estimated and GT poses. Specifically, given a set of estimated poses

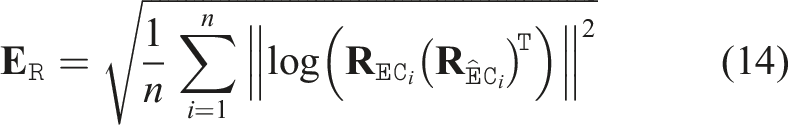
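The two steps of pairing and alignment can be sketched as follows. The alignment uses the standard closed-form least-squares solution based on the singular value decomposition (Kabsch/Umeyama without scale), applied to positions only; this is a common choice and may differ in detail from the procedure implemented in the evaluation tool set. The function names and the association tolerance `max_dt` are assumptions.

```python
import numpy as np

def associate(est_stamps, gt_stamps, max_dt=0.02):
    """Pair each GT stamp with the nearest estimate stamp within max_dt seconds."""
    est_stamps = np.asarray(est_stamps, dtype=float)
    pairs = []
    for j, t_gt in enumerate(gt_stamps):
        i = int(np.argmin(np.abs(est_stamps - t_gt)))
        if abs(est_stamps[i] - t_gt) <= max_dt:
            pairs.append((i, j))
    return pairs

def align_se3(est_xyz, gt_xyz):
    """Rigid alignment: R, t minimising sum ||R @ est_i + t - gt_i||^2."""
    est_xyz = np.asarray(est_xyz, dtype=float)
    gt_xyz = np.asarray(gt_xyz, dtype=float)
    mu_e, mu_g = est_xyz.mean(axis=0), gt_xyz.mean(axis=0)
    H = (gt_xyz - mu_g).T @ (est_xyz - mu_e)   # 3x3 cross-covariance
    U, _, Vt = np.linalg.svd(H)
    # Force det(R) = +1 so that the result is a rotation, not a reflection.
    D = np.diag([1.0, 1.0, np.sign(np.linalg.det(U @ Vt))])
    R = U @ D @ Vt
    t = mu_g - R @ mu_e
    return R, t

# Synthetic check: the estimate is the GT expressed in a shifted, rotated frame.
rng = np.random.default_rng(0)
gt = rng.uniform(-2.0, 2.0, size=(50, 3))
yaw = 0.7
R_true = np.array([[np.cos(yaw), -np.sin(yaw), 0.0],
                   [np.sin(yaw), np.cos(yaw), 0.0],
                   [0.0, 0.0, 1.0]])
t_true = np.array([1.0, -2.0, 0.3])
est = (gt - t_true) @ R_true        # row-wise R_true.T @ (gt_i - t_true)

R, t = align_se3(est, gt)
aligned = est @ R.T + t             # row-wise R @ est_i + t
```

Only poses that have a GT counterpart enter the alignment, so for sequences with partial GT coverage the transformation is determined by the segments recorded near the structure.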

Error calculation

After alignment, the absolute errors between the estimated trajectory and the transformed GT trajectory can be calculated as the RMSE rotation error

Notably, GT data is only available in the underwater structure area. For most sequences, such as the HalfTank and WholeTank sequences, GT data therefore covers only part of the sequence (see the GT percentage in Table 3). Relative errors computed across such gaps would not reflect the performance precisely. Therefore, the absolute error, rather than the relative error, is used for evaluation.
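For reference, absolute errors of this kind are commonly computed as sketched below: the translation error is the root mean square of the position differences, and the rotation error is the root mean square of the angle of the residual rotation between each aligned estimate and its GT counterpart. These are the usual definitions and are given here as an assumption; the exact formulas used by the tool set may differ. All names and values are illustrative.

```python
import numpy as np

def translation_rmse(est_xyz, gt_xyz):
    """RMSE of the Euclidean position differences (same unit as the input)."""
    diff = np.asarray(est_xyz, dtype=float) - np.asarray(gt_xyz, dtype=float)
    return float(np.sqrt(np.mean(np.sum(diff ** 2, axis=1))))

def rotation_angle_deg(R_est, R_gt):
    """Angle in degrees of the residual rotation R_gt^T @ R_est."""
    cos_angle = (np.trace(R_gt.T @ R_est) - 1.0) / 2.0
    return float(np.degrees(np.arccos(np.clip(cos_angle, -1.0, 1.0))))

def rotation_rmse_deg(R_est_list, R_gt_list):
    """RMSE of the per-pose residual rotation angles in degrees."""
    angles = [rotation_angle_deg(a, b) for a, b in zip(R_est_list, R_gt_list)]
    return float(np.sqrt(np.mean(np.square(angles))))

def rot_z(angle_deg):
    """Rotation about the z axis by an angle given in degrees."""
    a = np.radians(angle_deg)
    return np.array([[np.cos(a), -np.sin(a), 0.0],
                     [np.sin(a), np.cos(a), 0.0],
                     [0.0, 0.0, 1.0]])

# Synthetic example: constant 5 cm position offset and 10 degree yaw offset.
gt_xyz = np.zeros((4, 3))
est_xyz = gt_xyz + np.array([0.03, 0.04, 0.0])
t_rmse = translation_rmse(est_xyz, gt_xyz)
r_rmse = rotation_rmse_deg([rot_z(10.0)] * 4, [np.eye(3)] * 4)
```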

Evaluation tool set

To facilitate the evaluation, we also provide an evaluation tool set that generates publication-ready tables and figures for SLAM evaluation. It comprises five tools; representative outputs are reported in the evaluation results.

Evaluation results

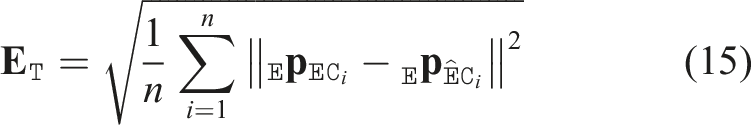

We evaluate the performance of four SLAM systems, namely Underwater Visual Acoustic SLAM (UVA) (Xu et al., 2021), SVIN2 (Rahman et al., 2022), ORB-SLAM3 (Campos et al., 2020), and VINS-Fusion (Lin et al., 2018), on the proposed Tank dataset and report the results generated with the evaluation tool set. UVA is an underwater SLAM system fusing stereo cameras, a DVL, and a gyroscope in a loosely-coupled framework. SVIN2 is an underwater SLAM system fusing a stereo camera, an IMU, and a sonar; we only use the stereo camera and IMU data since sonar data is unavailable in the dataset. ORB-SLAM3 and VINS-Fusion are two state-of-the-art visual-inertial SLAM systems. ORB-SLAM3 is a feature-based SLAM system, while VINS-Fusion is an optical-flow-based system.

Error table

SLAM performance (average of 10 runs) using the proposed Tank dataset.

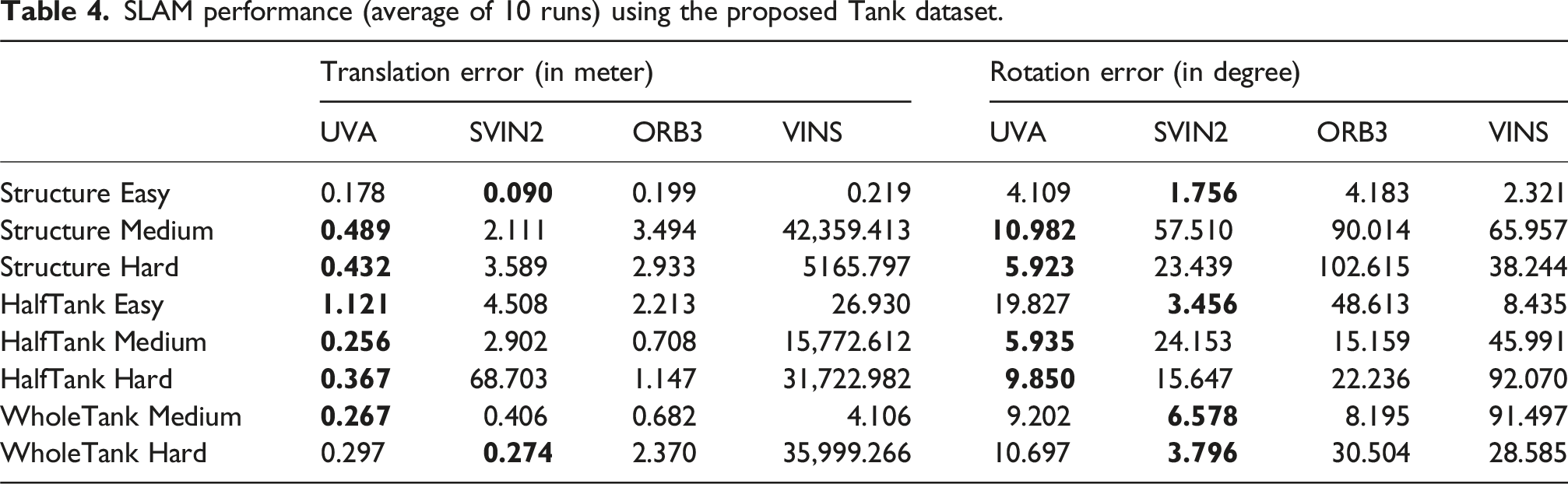

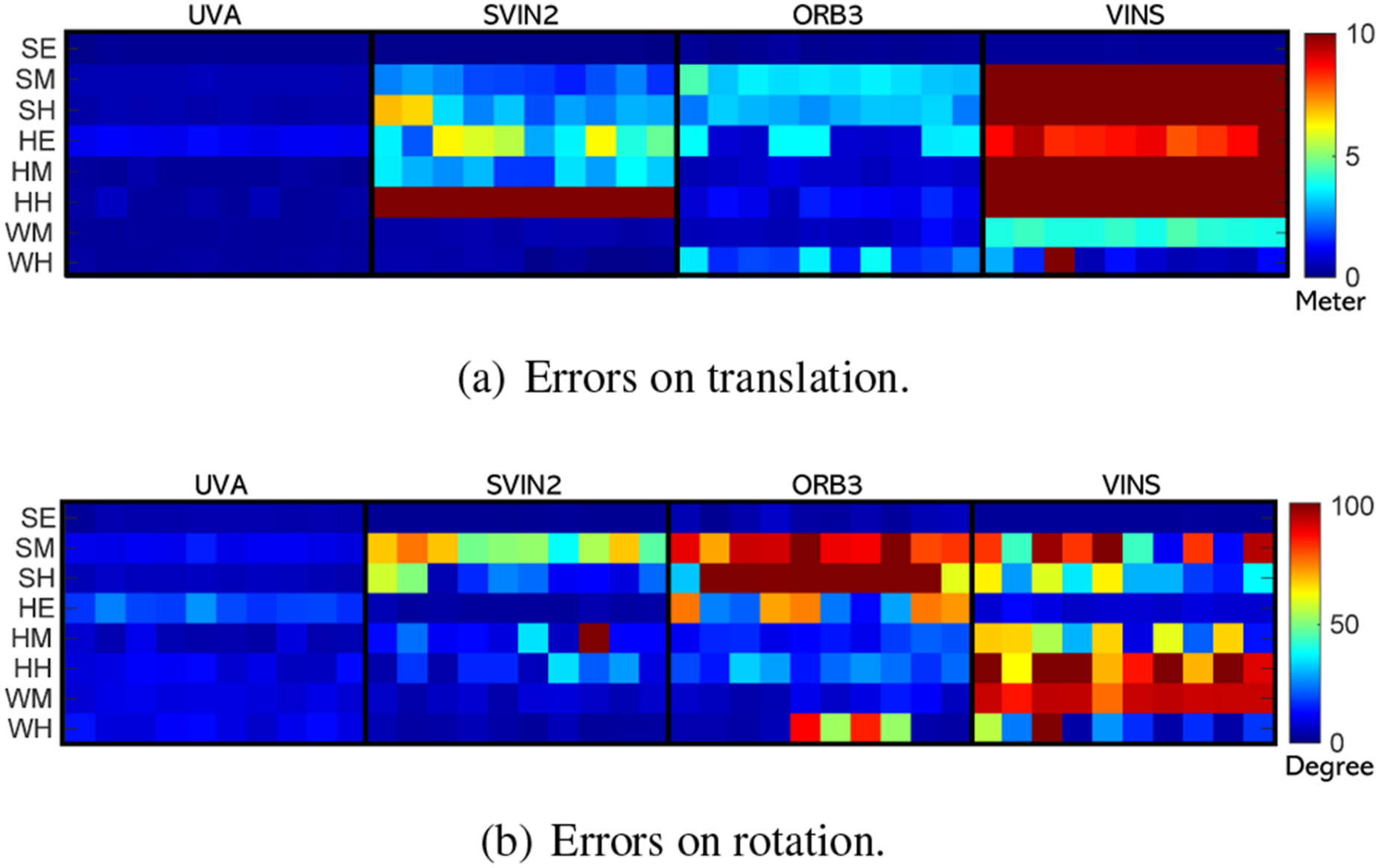

Error distribution heat map

The error distribution heat map, presented in Figure 9, illustrates the error deviations across 10 runs for each method. The UVA method demonstrates the highest robustness across the majority of the sequences.

Results of 10 runs on all Tank sequences.

Error with time

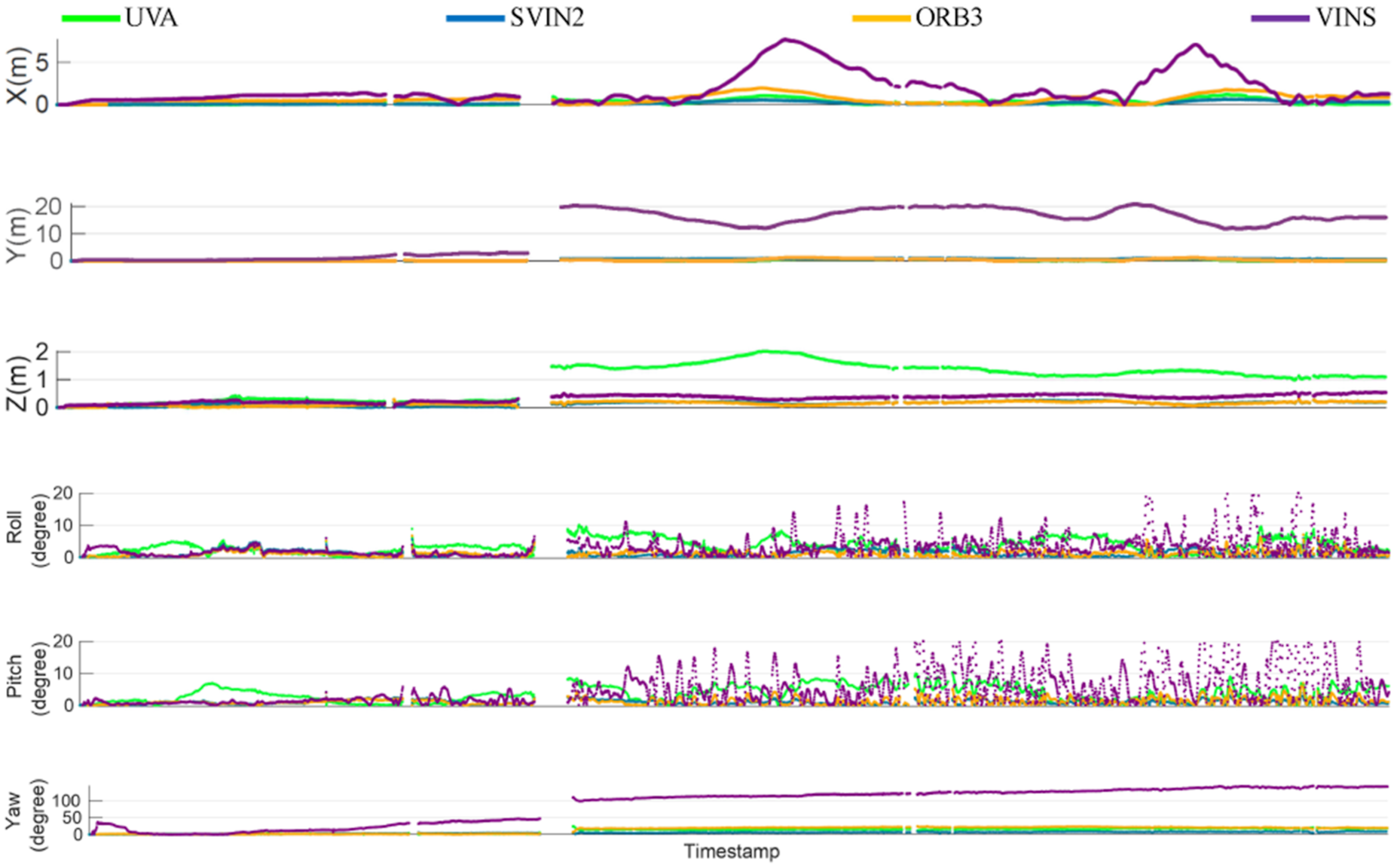

The pose errors on the WholeTank Medium sequence are shown in Figure 10.

Errors on WholeTank Medium sequence. The timestamp gaps indicate the times without GT poses.

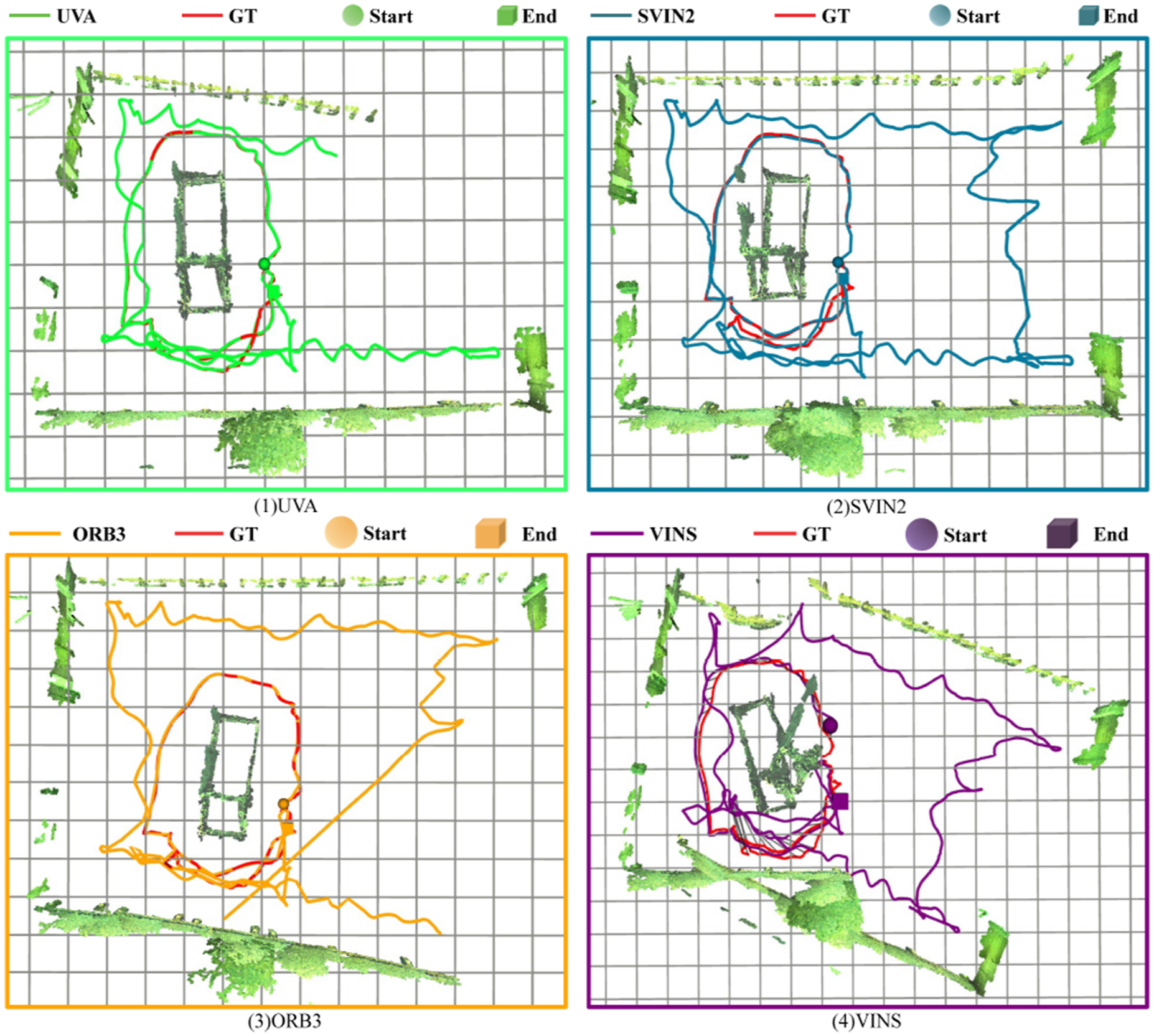

Stereo reconstruction

We provide a convenient script to generate 3D reconstructions from the camera trajectory estimated by SLAM and the stereo depth computed with a block-matching stereo algorithm. An example showing the 3D reconstruction, the SLAM trajectories, and the associated GT trajectories is given in Figure 11.

3D reconstruction and SLAM trajectories on the WholeTank Hard sequence.
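The geometry behind such a reconstruction can be sketched independently of the stereo matcher. For a rectified stereo pair, a disparity d in pixels corresponds to a depth Z = f·b/d, where f is the focal length in pixels and b the baseline; the resulting points are then moved into the world frame with the estimated camera pose. The snippet below is a minimal illustration of this step only, not the provided script. The intrinsics, baseline, and the tiny disparity map are hypothetical.

```python
import numpy as np

def disparity_to_points(disparity, fx, fy, cx, cy, baseline_m):
    """Back-project a disparity map (pixels) to 3D points in the left camera frame."""
    v, u = np.nonzero(disparity > 0)       # keep valid disparities only
    d = disparity[v, u]
    z = fx * baseline_m / d                # depth from rectified stereo
    x = (u - cx) * z / fx
    y = (v - cy) * z / fy
    return np.stack([x, y, z], axis=1)

def to_world(points_cam, T_world_cam):
    """Transform N x 3 camera-frame points with a 4 x 4 camera pose."""
    return points_cam @ T_world_cam[:3, :3].T + T_world_cam[:3, 3]

# Hypothetical intrinsics and baseline (not the calibrated dataset values).
fx = fy = 500.0
cx, cy = 2.0, 1.0
baseline = 0.1

disparity = np.zeros((3, 5))
disparity[1, 2] = 25.0   # at the principal point, depth 500 * 0.1 / 25 = 2 m
disparity[1, 4] = 50.0   # two pixels right of centre, depth 1 m

T_world_cam = np.eye(4)
T_world_cam[:3, 3] = [1.0, 0.0, 0.0]   # camera shifted 1 m along world x

cloud = to_world(
    disparity_to_points(disparity, fx, fy, cx, cy, baseline), T_world_cam
)
```

Accumulating the per-frame clouds over the trajectory gives the map, so any pose error shows up directly as misaligned or duplicated surfaces in the reconstruction.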

Conclusion

In this paper, we introduce the Tank dataset, which includes multi-sensor data from a stereo camera, an IMU, a DVL, and a pressure sensor. Accurate 6-DoF ground truth poses are also provided by using the proposed TankGT algorithm along with AprilTag markers on an underwater structure. We validate the effectiveness of the dataset by using it to benchmark four SLAM systems. The dataset and the evaluation tools are publicly available at https://senseroboticslab.github.io/underwater-tank-dataset.

Footnotes

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Supplemental Material

Supplemental material for this article is available online.

References
