Abstract

This work presents a novel approach for the optimization of dynamic systems on finite-dimensional Lie groups. We rephrase dynamic systems as so-called neural ordinary differential equations (neural ODEs), and formulate the optimization problem on Lie groups. A gradient descent optimization algorithm is presented to tackle the optimization numerically. Our algorithm is scalable, and applicable to any finite-dimensional Lie group, including matrix Lie groups. By representing the system at the Lie algebra level, we reduce the computational cost of the gradient computation. In an extensive example, optimal potential energy shaping for control of a rigid body is treated. The optimal control problem is phrased as an optimization of a neural ODE on the Lie group SE(3), and the controller is iteratively optimized. The final controller is validated on a state-regulation task.

1. Introduction

Many physical systems are naturally described by the action of Lie groups on their configuration manifolds. This can range from finite-dimensional systems such as rigid bodies, where poses are acted on by the special Euclidean group SE(3) (Murray et al., 1994), to infinite-dimensional systems such as flexible bodies or fluid dynamical systems, where the diffeomorphism group acts on the configuration of the continuum (Schmid, 2010).

Geometric control systems on Lie groups (Brockett, 1973; Jurdjevic, 1996) exploit the Lie group structure of the underlying physical systems to provide numerical advantages (Marsden and Ratiu, 1999). For example, PD controllers for rigid bodies were defined on SO(3) and SE(3) by Bullo and Murray (1995), and more recently geometric controllers were applied in the context of UAVs (Goodarzi et al., 2013; Lee et al., 2010; Rashad et al., 2019). Examples of efficient optimal control formulations on Lie groups include linear (Ayala et al., 2021) and nonlinear systems (Spindler, 1998), as well as efficient numerical optimization methods (Kobilarov and Marsden, 2011; Saccon et al., 2013; Teng et al., 2022).

In an orthogonal development in recent years, there has been a surge of machine learning applications in control (Dev et al., 2021) and robotics (Ibarz et al., 2021; Soori et al., 2023; Taylor et al., 2021). This surge is driven by the need for controllers that work in high-dimensional robotic systems and approximate complex decision policies that require the use of data. Implementing such controllers through classical control-theoretic approaches is prohibitive, which has led to a paradigm shift towards data-driven control (Taylor et al., 2021). Examples of machine learning within high-dimensional systems extend to soft robotics (Kim et al., 2021) and control of fluid systems (Paris et al., 2021). The literature also aims to address common concerns of safety (Brunke et al., 2022; Hewing et al., 2020) both during the training process and in the deployment of systems with machine learning in the loop.

The so-called Erlangen program of machine learning by Bronstein et al. (2021) stresses the importance of geometric machine learning methods: symmetries of data sets can restrict the complexity of functions that are to be learned on them, and thus increase the numerical efficiency of learning frameworks. This rationale also led to extensions of machine learning approaches to Lie groups (Fanzhang et al., 2019; Lu and Li, 2020), with recent applications by Huang et al. (2017); Chen et al. (2021); Forestano et al. (2023).

Indeed, the fundamental symmetry groups in robotics are naturally represented by Lie groups (Marsden and Ratiu, 1999). As such, Lie group-based learning methods are of interest to the robotics community. In an excellent example of a control application, Duong and Atanasov (2021b) extended neural ODEs to SE(3) and applied them to the adaptive control of a UAV in Duong and Atanasov (2021a). In their recent work, Duong et al. (2024) also highlight the practical use of neural ODEs on Lie groups.

However, a general approach for geometric machine learning in the context of dynamic systems on Lie groups is missing. We believe that such an approach would be of high interest, especially for control applications. In this paper, we address this issue by formalizing neural ODEs on Lie groups.

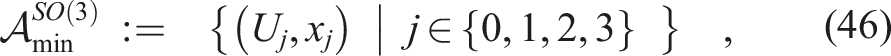

Our contributions are 1. the formulation of neural ODEs on any finite-dimensional Lie group, with a particular focus on matrix Lie groups; 2. computational simplifications with respect to manifold neural ODEs through use of a compact equation to compute gradients on Lie groups, and reduced dimension with respect to non-intrinsic approaches on Lie groups 3. a 4. the formulation of a minimal exponential Atlas on the Lie group SE(3).

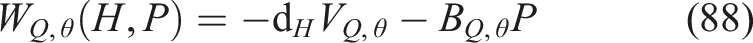

The article is divided into two parts (see also Figure 1): first the formulation of neural ODEs on finite-dimensional matrix Lie groups, and second an extensive example of optimal potential energy shaping on SE(3).

Overview of the main contribution and structure of the article. Given a parameterized dynamical system on a Lie group, the generalized adjoint method on Lie groups lets us compute the parameter gradient of a cost-functional over system trajectories by solving a set of differential equations. This parameter gradient can then be used to iteratively update parameters by gradient descent. In practice, we sample multiple initial conditions and approximate the parameter gradient of the expected cost

Section 2 presents the main technical contribution of the article: the generalized adjoint method on Lie groups, which is at the heart of a gradient descent algorithm for dynamics optimization via neural ODEs on Lie groups.

A number of technical tools are required to apply this algorithm on a given matrix Lie group, which are introduced in Section 3. The exponential Atlas allows us to implement a numerical procedure for exact integration on Lie groups, while a compact formula for the gradient of a function on a Lie group reduces the complexity of the gradient computation.

Section 4 presents the Lie groups SO(3) and SE(3), and gives concrete examples of the technical tools presented in the previous section. One aspect of this is the formulation of a minimal exponential Atlas on SE(3), which is used to formulate an integration procedure on SE(3). This treatment sets the stage for control optimization of a rigid body on SE(3).

Section 5 introduces the example of optimizing potential energy and damping injection controllers for rigid bodies on SE(3). The class of controllers is defined and it is shown that it guarantees stability by design. Afterward, the optimization of a cost-functional over the defined class of controllers is derived from the general procedure in Section 2.

Finally, Section 6 provides two examples of optimizing controllers for a rigid body on SE(3). The first example concerns pose control, without gravity, and results are compared to a quadratic controller of the type presented by Rashad et al. (2019). In the second example, the controller’s performance is investigated in the presence of gravity.

The article ends with a discussion in Section 7 and a conclusion in Section 8.

1.1 Neural ODEs and relation to existing works

Neural ODEs were first introduced by Chen et al. (2018), who derived them as the continuous limit of recurrent neural nets, taking inputs on

Massaroli et al. (2022) introduced a more general framework of neural ODEs, showing the power of state-augmentation and connections to optimal control, while also showing that the cost-functional can include integral cost terms. To this end, they presented the generalized adjoint method.

There are two highly relevant examples in the recent literature that extend neural ODEs to manifolds. The so-called extrinsic picture is presented by Falorsi and Forré (2020), who show that neural ODEs on a manifold

An intrinsic picture is presented by Lou et al. (2020), who show that neural ODEs on a manifold

An example of neural ODEs to control of robotic systems described on

Duong and Atanasov (2021a,b) apply neural ODEs to control optimization for a rigid body on SE(3). The work focuses on the formulation of an IDA-PBC controller, uses it for dynamics learning and trajectory tracking, and uses neural ODEs as a tool for this optimization. While the integration procedure used is not geometrically exact and the Lie group constraints are violated, the approach is highly successful. However, Duong et al. do not connect their contribution to geometric machine learning literature such as neural ODEs on manifolds. In recent work, Duong et al. (2024) take steps to extend the extrinsic approach to general matrix Lie groups, but without making full use of the geometric structure given by Lie groups.

With respect to Duong et al. (2024), we present neural ODEs on arbitrary finite-dimensional Lie groups. By extending the intrinsic formulation to Lie groups, our example on SE(3) has a reduced number of dimensions (24 instead of 36), and the use of local charts allows geometrically exact integration.

1.2 Notation

While the main results are accessible with a background of linear algebra and vector calculus, the derivations heavily rely on differential geometry and Lie group theory, see, for example, Isham (1999) and Hall (2015) for a complete introduction, or Solà et al. (2021) for a brief introduction with examples in robotics.

Calligraphic letters

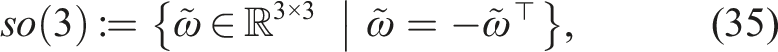

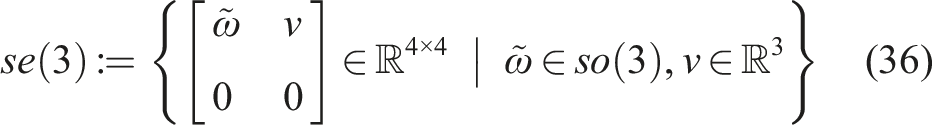

Upper case letters G, H denote Lie groups, while lower case letters g, h denote their elements. A lower case e denotes the group identity e ∈ G, and an upper case I denotes the identity matrix. The Lie algebra is

Furthermore

For

When coordinate expressions are concerned, the Einstein summation convention is used, that is, the product of variables with lower and upper indices implies a sum $a_i b^i := \sum_i a_i b^i$.

Let

2. Main result

After a brief introduction to Lie groups in Section 2.1, the optimization problem is introduced on abstract Lie groups in Section 2.2. A gradient descent optimization algorithm is presented in Section 2.3. Our main technical result, the generalized adjoint method on Lie groups, lies at the core of the gradient computation. For the sake of exposition, we present it in the context of matrix Lie groups, and relegate the derivations and the formulation on abstract Lie groups to Appendix A.

2.1 Lie groups

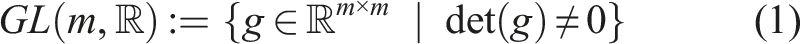

A finite-dimensional Lie group G is an n-dimensional manifold together with a group structure, such that the group operation is a smooth map on G (Isham, 1999). G is a real matrix Lie group if it is a subgroup of the general linear group

We denote the Lie algebra of G as

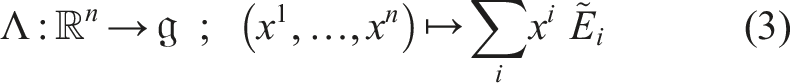

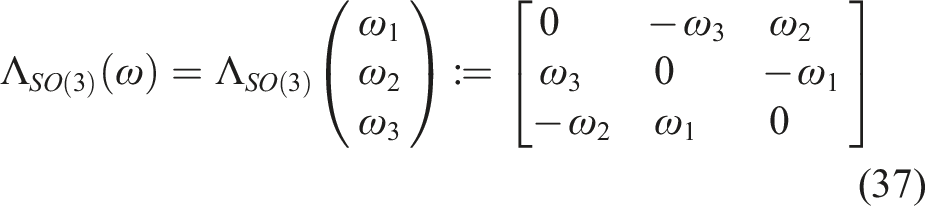

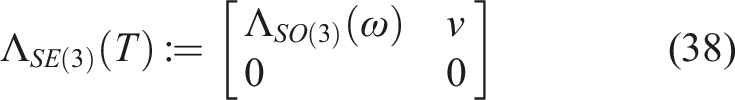

Define a basis

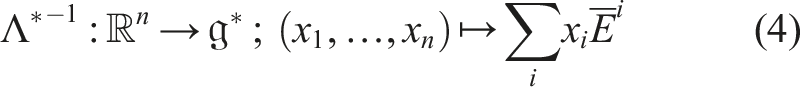

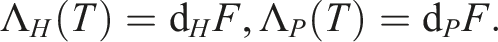

The dual of Λ is the map

For a matrix Lie group the Lie algebra

For

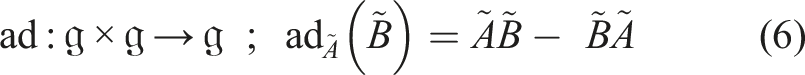

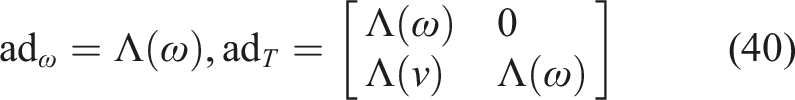

Using the operator Λ, a matrix representation of ad is obtained as

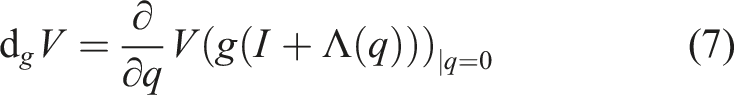

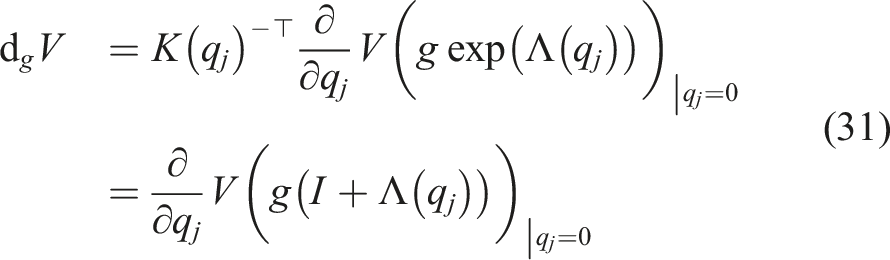

On matrix Lie groups and for functions

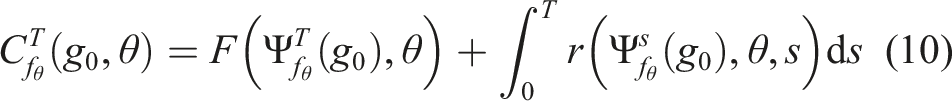

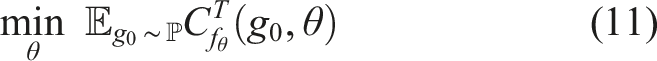

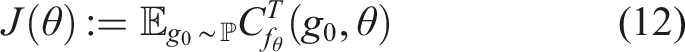

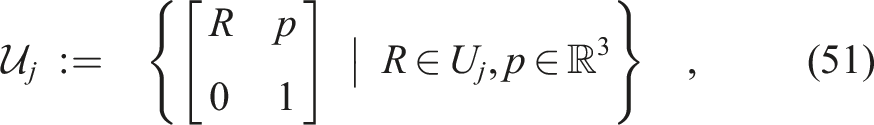

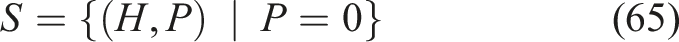

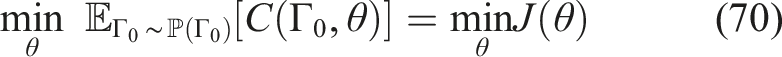

2.2 Optimization problem

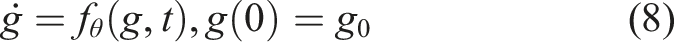

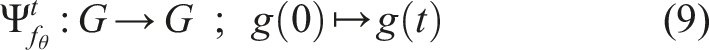

We consider a variant of the optimal control problem on a Lie group (Jurdjevic, 1996) with a finite horizon T. Given parameters

Indicating a probability space

The chief reason for our interest in this optimization problem is that it includes, as a sub-class, the optimization of state-feedbacks

The dynamics $f_\theta(g, t)$ can also be parameterized with neural nets, in which case $f_\theta(g, t)$ is referred to as a neural ODE on a Lie group. Indeed, for the Lie group

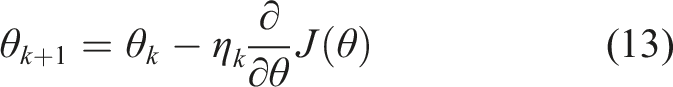

2.3 Optimization algorithm

We use a stochastic gradient descent optimization algorithm (Robbins and Monro, 1951) to approximate a solution to the optimization problem (11) on a matrix Lie group.
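The update loop of such a stochastic gradient descent scheme can be sketched as follows. This is a minimal illustration under stated assumptions, not the accompanying implementation: `grad_single` is a hypothetical stand-in for the per-trajectory parameter gradient that the adjoint method would supply, and the toy quadratic cost, learning rate, and batch size are chosen purely for illustration.

```python
import numpy as np

def sgd(grad_single, sample_initial_condition, theta0, lr=0.1,
        batch_size=32, epochs=200, seed=0):
    """Mini-batch gradient descent on an expected cost over initial conditions."""
    rng = np.random.default_rng(seed)
    theta = np.array(theta0, dtype=float)
    for _ in range(epochs):
        # Sample a batch of initial conditions.
        batch = [sample_initial_condition(rng) for _ in range(batch_size)]
        # Average per-trajectory gradients to estimate the gradient of the expectation.
        grad = np.mean([grad_single(theta, g0) for g0 in batch], axis=0)
        theta = theta - lr * grad
    return theta

# Toy stand-in (assumption): C(theta, g0) = 0.5 * ||theta - g0||^2,
# so the per-sample gradient is theta - g0.
def toy_grad(theta, g0):
    return theta - g0

def toy_sampler(rng):
    return rng.normal(loc=[1.0, -2.0], scale=0.1)

theta_opt = sgd(toy_grad, toy_sampler, theta0=[0.0, 0.0])
```

In the toy problem the minimizer of the expected cost is the mean of the sampling distribution, so `theta_opt` ends up close to it; in the setting of this article, `grad_single` would instead integrate the adjoint equations along one trajectory.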

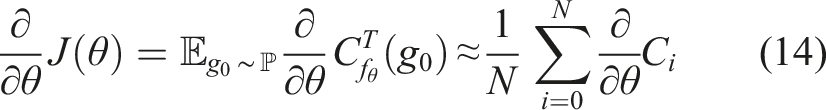

Denote the total cost in (11) as

Additionally, denote by

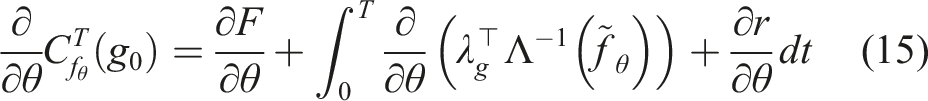

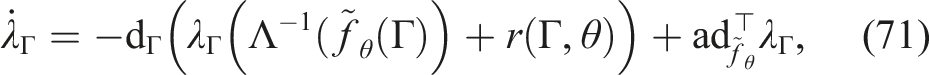

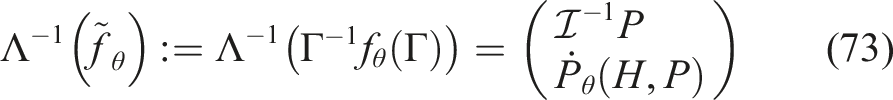

In order to compute the gradient $\frac{\partial}{\partial\theta} C_i$ of the cost for a single trajectory (10), we derived the generalized adjoint method on matrix Lie groups. It is the main technical result of this paper, and it is stated in the following:

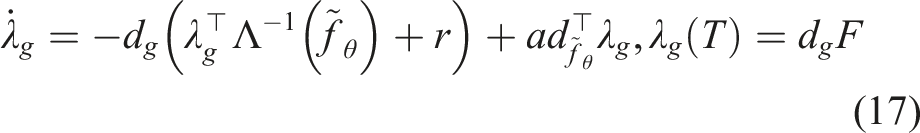

Generalized Adjoint Method on Matrix Lie Groups. Given are the dynamics (8) and the cost (10). Denote by

The generalized adjoint method (Massaroli et al., 2022) on

Just as the generalized adjoint method on

Various technical tools are required to apply Theorem 2.1 in practice. This includes the exponential Atlas for exact integration of

Equations (16) and (17) are solved by integrating (16) forward in time, computing $\mathrm{d}_g F$ at $g = g(T)$, and integrating (17) backwards in time, reusing $g(t)$ from the forward integration. Equation (15) is solved by integrating its differential alongside Equation (17). See especially Figure 1. The memory efficiency of neural ODEs stems from the fact that trajectories $g(t)$ and $\lambda_g(t)$ do not need to be stored, apart from a few way-points of $g(t)$, and that the dependency of $g(t)$ on parameters is largely ignored in the forward pass - this avoids overheads that arise, for example, through automatic differentiation over an ODE-solver.

The choice of group action for

3. Technical tools

A number of technical tools are presented in the context of matrix Lie groups. Given mild adaptations of the definitions these tools also apply to abstract finite-dimensional Lie groups (see Appendix A.4).

3.1 Atlas and minimal atlas on Lie groups

In this section the exponential and logarithmic maps will be used to construct an atlas of exponential charts for finite-dimensional Lie groups, and the concept of a minimal exponential atlas will be defined. Here an atlas is defined as follows:

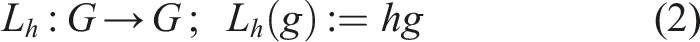

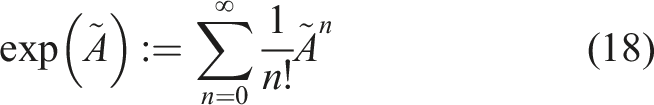

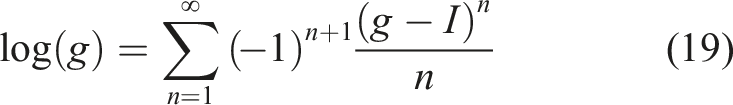

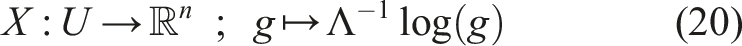

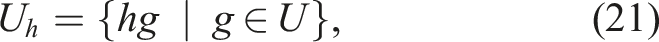

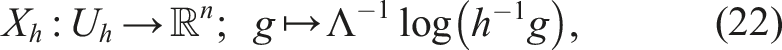

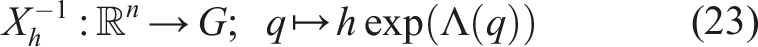

Atlas and Charts. An

For finite-dimensional Lie groups the exponential map

For a matrix Lie group, the exponential map is given by the infinite sum (Hall, 2015: Chapter 3.7):

Conversely, the log map for matrix Lie groups is given by the matrix logarithm, when it is well-defined (Hall, 2015: Chapter 2.3):

On a case-by-case basis the infinite sums in (18) and (19) can further be reduced to a finite sum by use of the Cayley-Hamilton theorem (Visser et al., 2006), which often allows one to find a closed-form expression of the exp and log maps.

The logarithmic map (19) and Λ in equation (3) can then be used to construct a local exponential chart (U, X) for G, where

To create a chart “centered” on any h ∈ G (i.e., the zero coordinates are assigned to h), both the region U and the chart map X can be left-translated by $L_h$ to define the chart $(U_h, X_h)$ with

The collection

In order to use a finite number of charts, we are interested in constructing a minimal exponential atlas. A minimal atlas is defined as follows:

Minimal Atlas. An atlas

Given a manifold

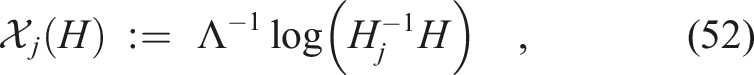

Given a minimal exponential atlas (which can be seen to be always countable) we use integers j to number the relevant chart-centers as g

j

, the corresponding charts as (U

j

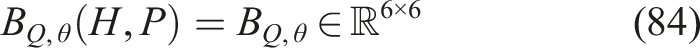

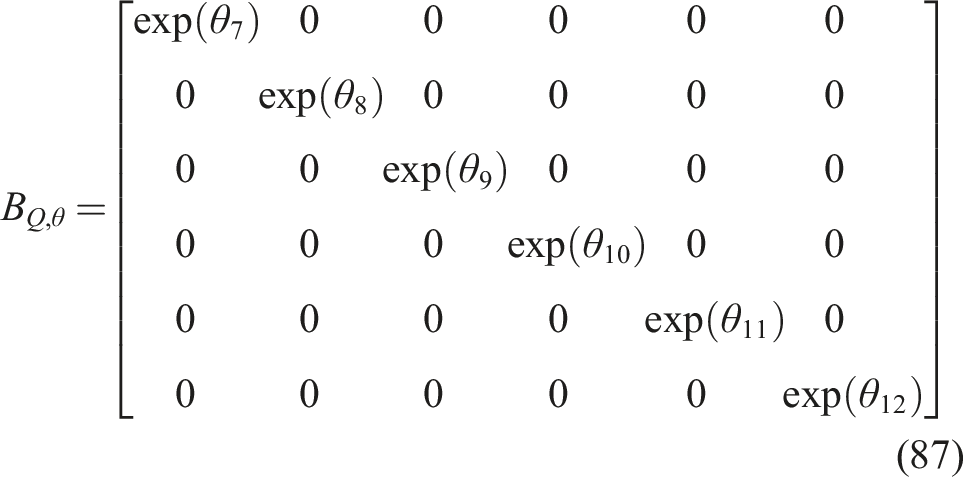

, X

j

), and denote the chart-coordinates in the j-th chart as

3.2 Vectors on Lie groups

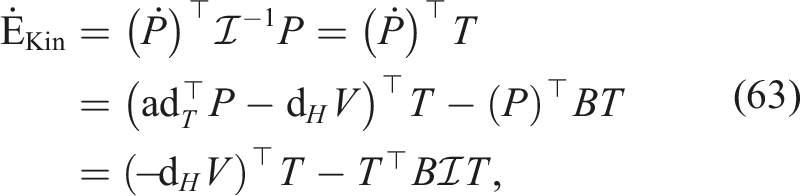

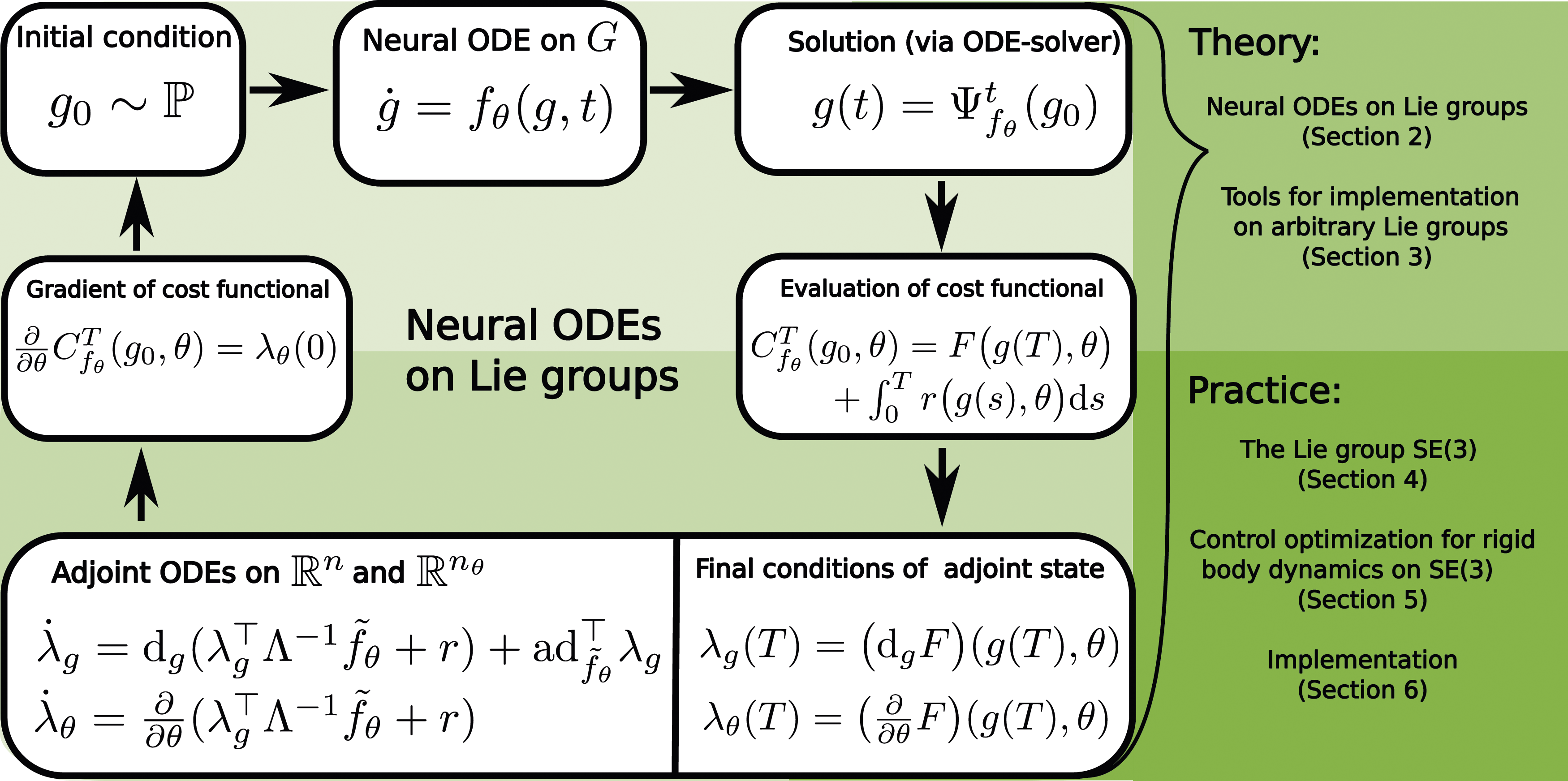

Given a curve

Commutative diagram of a generic Lie group G. Boxes represent sets, while arrows represent functions between sets. Relevant variables in a given set are indicated in red.

Recall that

The expression (24) is invariant under choice of exponential chart, that is, for any charts $(U_j, X_j)$ and $(U_k, X_k)$ one has that

3.3 Lie group integrators

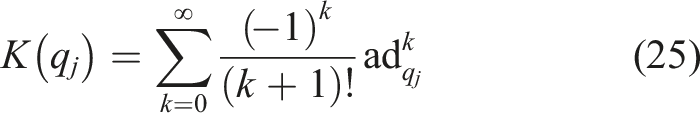

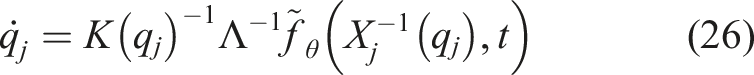

We adapt the Runge-Kutta-Munthe-Kaas (RKMK) method (Munthe-Kaas, 1999) for exact integration of the dynamics (8). The RKMK method uses the Runge-Kutta scheme on Lie groups—we instead allow for arbitrary numerical integration schemes. For an overview of Lie group integrators, see, for example, Iserles et al. (2000); Celledoni and Owren (2003); Celledoni et al. (2014).

Using equation (24), the dynamics (8) can be represented in a local exponential chart as (Celledoni et al., 2014)

Note that the chart-dynamics (26) are not necessarily well-defined for all

To make sure the state remains within the chart region we switch charts when needed by application of

Given a minimal exponential atlas, we choose to reduce the number of chart switches. To this end, we introduce indicator functions

The use of a minimal exponential atlas allows one to store way-points of a trajectory

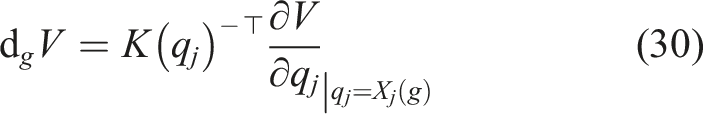

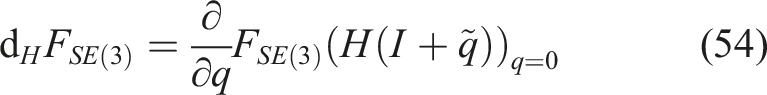
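To illustrate what group-preserving integration means in practice, the following sketch implements the simplest Lie group integrator, a Lie-Euler step on SO(3). It is not the chart-based scheme with chart switching described in this section. It assumes dynamics of the left-translated form $\dot{R} = R\,\hat{\omega}(R, t)$, and the function names `hat`, `exp_so3` and `lie_euler` as well as the constant test velocity are hypothetical choices made for illustration.

```python
import numpy as np

def hat(w):
    """Map a vector in R^3 to the corresponding skew-symmetric matrix in so(3)."""
    return np.array([[0.0, -w[2], w[1]],
                     [w[2], 0.0, -w[0]],
                     [-w[1], w[0], 0.0]])

def exp_so3(w):
    """Rodrigues' formula for the matrix exponential of hat(w)."""
    theta = np.linalg.norm(w)
    W = hat(w)
    if theta < 1e-8:
        # Second-order Taylor expansion near the identity.
        return np.eye(3) + W + 0.5 * W @ W
    return (np.eye(3) + np.sin(theta) / theta * W
            + (1.0 - np.cos(theta)) / theta**2 * W @ W)

def lie_euler(f, R0, t0, t1, steps):
    """Integrate dR/dt = R hat(f(R, t)) with the update R <- R exp(h hat(f))."""
    R, t = R0.copy(), t0
    h = (t1 - t0) / steps
    for _ in range(steps):
        # Each update multiplies by a group element, so R stays a rotation matrix.
        R = R @ exp_so3(h * f(R, t))
        t += h
    return R

# Example with a constant body angular velocity (hypothetical test dynamics).
omega = np.array([0.3, -0.2, 0.5])
R_final = lie_euler(lambda R, t: omega, np.eye(3), 0.0, 2.0, steps=100)
```

Because every update is a product of group elements, the orthogonality constraint holds up to round-off regardless of the step size. A vector-space Euler step `R + h * R @ hat(omega)` would instead drift off the group.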

3.4 Gradients on Lie groups

The gradient of a function

Equivalently, $\mathrm{d}_g V$ can be found from the computation in a chart $(U_j, X_j)$ as (indeed, dual to (24))

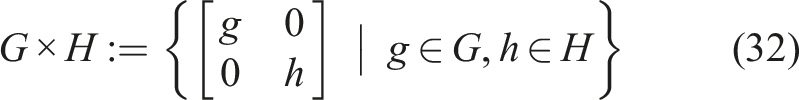
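As a hedged illustration of computing such a gradient on a matrix Lie group, the sketch below evaluates the components $\frac{\mathrm{d}}{\mathrm{d}s} V(g \exp(s E_i))|_{s=0} = \mathrm{tr}\big((\partial V / \partial g)^\top g E_i\big)$ on SO(3) and checks them against finite differences taken along the group. The expression follows from the chain rule under the assumption of a left-translated convention and may differ in form from the formula of this section. The basis ordering, the test function `V` and all function names are hypothetical.

```python
import numpy as np

def hat(w):
    """Map a vector in R^3 to the corresponding skew-symmetric matrix in so(3)."""
    return np.array([[0.0, -w[2], w[1]],
                     [w[2], 0.0, -w[0]],
                     [-w[1], w[0], 0.0]])

# Basis E_1, E_2, E_3 of so(3) (assumed ordering).
BASIS = [hat(e) for e in np.eye(3)]

def expm_series(A, terms=20):
    """Matrix exponential via the truncated power series sum_k A^k / k!."""
    result, term = np.eye(A.shape[0]), np.eye(A.shape[0])
    for k in range(1, terms):
        term = term @ A / k
        result = result + term
    return result

def lie_gradient(dV_dg, g):
    """Components tr((dV/dg)^T g E_i) of the differential of V at g."""
    return np.array([np.trace(dV_dg.T @ g @ E) for E in BASIS])

def lie_gradient_fd(V, g, eps=1e-6):
    """Central finite differences of s -> V(g exp(s E_i)) at s = 0."""
    return np.array([
        (V(g @ expm_series(eps * E)) - V(g @ expm_series(-eps * E))) / (2.0 * eps)
        for E in BASIS
    ])

# Hypothetical test function V(g) = tr(A^T g), whose matrix of partials is A.
A = np.array([[1.0, 2.0, 0.0], [0.5, -1.0, 3.0], [0.0, 1.0, 2.0]])
V = lambda g: np.trace(A.T @ g)
g = expm_series(hat(np.array([0.2, -0.4, 0.6])))
grad_formula = lie_gradient(A, g)
grad_fd = lie_gradient_fd(V, g)
```

The formula yields three numbers, one per Lie algebra direction, rather than the nine partial derivatives with respect to the matrix entries, which is the kind of dimension reduction an intrinsic formulation provides.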

3.5 Composition of Lie groups

We briefly review the composition of Lie groups. Lie groups G and H can always be composed to form a product Lie group G × H. For matrix Lie groups

The composition of matrix Lie groups has a block-diagonal structure. This block-diagonal structure reappears in the construction of the corresponding Lie algebra

4. The cases SO(3) and SE(3)

Prior theory is applied to the Lie groups SO(3) and SE(3).

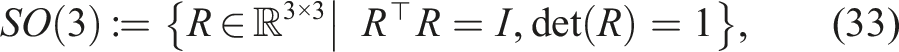

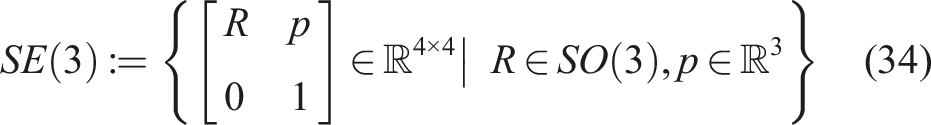

4.1 The matrix Lie groups SO(3) and SE(3)

Here, the special orthogonal group SO(3) and the special Euclidean group SE(3) are directly defined as matrix Lie groups that collect transformations of the Euclidean 3-space

Define

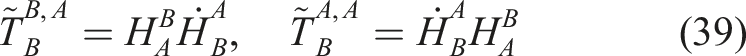

Concerning notation for relative poses of rigid bodies:

The Lie algebras of SO(3) and SE(3) are the vector spaces

The vector space isomorphism

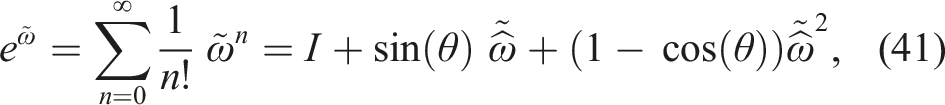

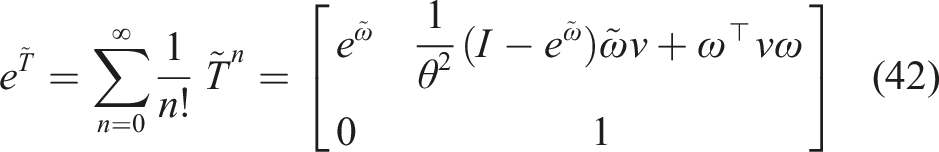

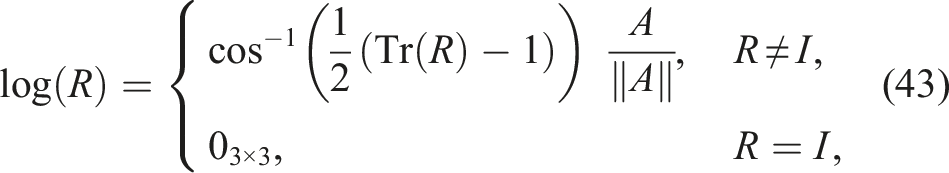

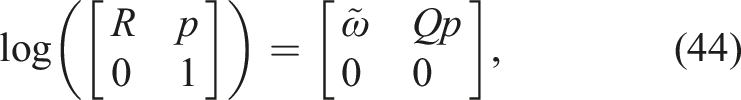

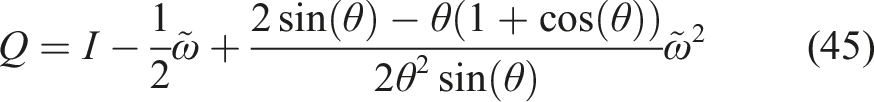

Concerning notation for the relative twists (velocities) of rigid bodies: Consider a curve

The adjoint representations of so(3) and se(3) follow from the definition (6) as

The exponential maps for SO(3) and SE(3) are almost-global diffeomorphisms that relate

For θ < π their inverses are presented in equations (43) and (44), respectively: the log map for SO(3) is (Murray et al., 1994, Appendix A, Section 2.3)

Denoting
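The closed-form exponential and logarithmic maps of SO(3) discussed in this section can be sketched as follows. The code uses the standard Rodrigues formula and its inverse for rotation angles θ < π. The function names and the component ordering of the `hat` and `vee` maps are assumptions made for illustration and may differ from the conventions of equations (43) and (44).

```python
import numpy as np

def hat(w):
    """Map a vector in R^3 to the corresponding skew-symmetric matrix in so(3)."""
    return np.array([[0.0, -w[2], w[1]],
                     [w[2], 0.0, -w[0]],
                     [-w[1], w[0], 0.0]])

def vee(W):
    """Inverse of hat: extract the vector from a skew-symmetric matrix."""
    return np.array([W[2, 1], W[0, 2], W[1, 0]])

def exp_so3(w):
    """Rodrigues' formula for the matrix exponential of hat(w)."""
    theta = np.linalg.norm(w)
    W = hat(w)
    if theta < 1e-8:
        # Second-order Taylor expansion near the identity.
        return np.eye(3) + W + 0.5 * W @ W
    return (np.eye(3) + np.sin(theta) / theta * W
            + (1.0 - np.cos(theta)) / theta**2 * W @ W)

def log_so3(R):
    """Exponential coordinates of R, valid for rotation angles theta < pi."""
    cos_theta = np.clip((np.trace(R) - 1.0) / 2.0, -1.0, 1.0)
    theta = np.arccos(cos_theta)
    if theta < 1e-8:
        # First-order approximation near the identity.
        return vee(0.5 * (R - R.T))
    return vee(theta / (2.0 * np.sin(theta)) * (R - R.T))

# Round trip for a rotation vector with norm below pi.
w = np.array([0.4, -1.1, 0.7])
R = exp_so3(w)
w_back = log_so3(R)
```

The logarithm divides by sin θ and therefore degenerates as θ approaches π, which is why a single exponential chart cannot cover the whole group.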

4.2 Minimal atlas

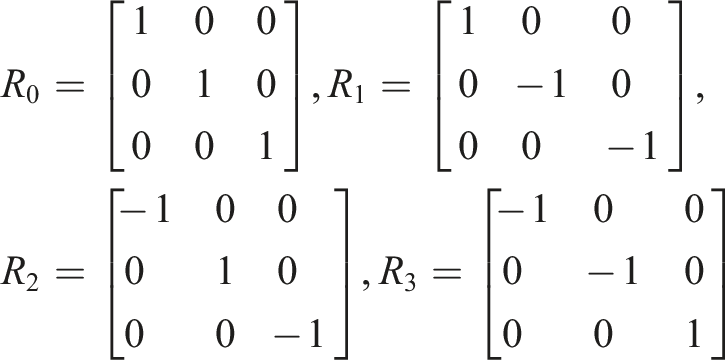

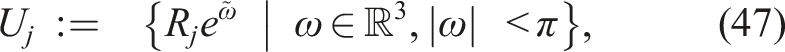

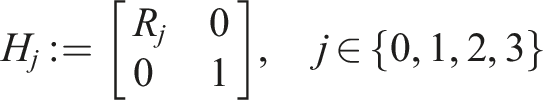

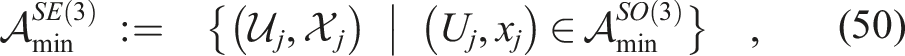

Here we construct minimal exponential atlases for SO(3) and SE(3) as special cases of the exponential atlas (21) – (23) for the respective Lie groups. Both atlases use four charts, which is the theoretical minimum size of an atlas for SO(3) and SE(3) (Grafarend and Kühnel, 2011).

For the atlas on SO(3) the four exponential charts are centered on the elements

Intuitively speaking, the open set $U_j$ contains all orientations that are reachable from $R_j$ by a rotation through an angle less than π.
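This membership criterion translates directly into a chart-selection rule, sketched below. The chart centers are an assumption made for this example: the identity together with the rotations by π about the three coordinate axes. The function names and the optional `margin` parameter are likewise hypothetical.

```python
import numpy as np

# Assumed chart centers: identity and rotations by pi about the x, y and z axes.
CENTERS = [np.diag(d) for d in ([1.0, 1.0, 1.0], [1.0, -1.0, -1.0],
                                [-1.0, 1.0, -1.0], [-1.0, -1.0, 1.0])]

def relative_angle(Rj, R):
    """Rotation angle of Rj^T R, i.e. the angle needed to reach R from Rj."""
    c = (np.trace(Rj.T @ R) - 1.0) / 2.0
    return np.arccos(np.clip(c, -1.0, 1.0))

def in_chart(j, R, margin=0.0):
    """True if R is reachable from the j-th center by an angle below pi - margin."""
    return relative_angle(CENTERS[j], R) < np.pi - margin

def best_chart(R):
    """Index of the chart whose center is closest to R."""
    return int(np.argmin([relative_angle(Rj, R) for Rj in CENTERS]))

# A rotation by pi about the x-axis lies outside the chart centered on the identity,
# but at the center of chart 1.
R = CENTERS[1]
j = best_chart(R)
```

For these four centers the traces of $R_j^\top R$ sum to zero for every rotation $R$, so the largest trace is non-negative and the closest center is at most $2\pi/3$ away. Selecting the closest chart therefore always keeps the state away from the chart boundary at angle π.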

A proof that

For the atlas on SE(3), define the centers of the exponential charts on SE(3) as

4.3 Expressing scalar functions

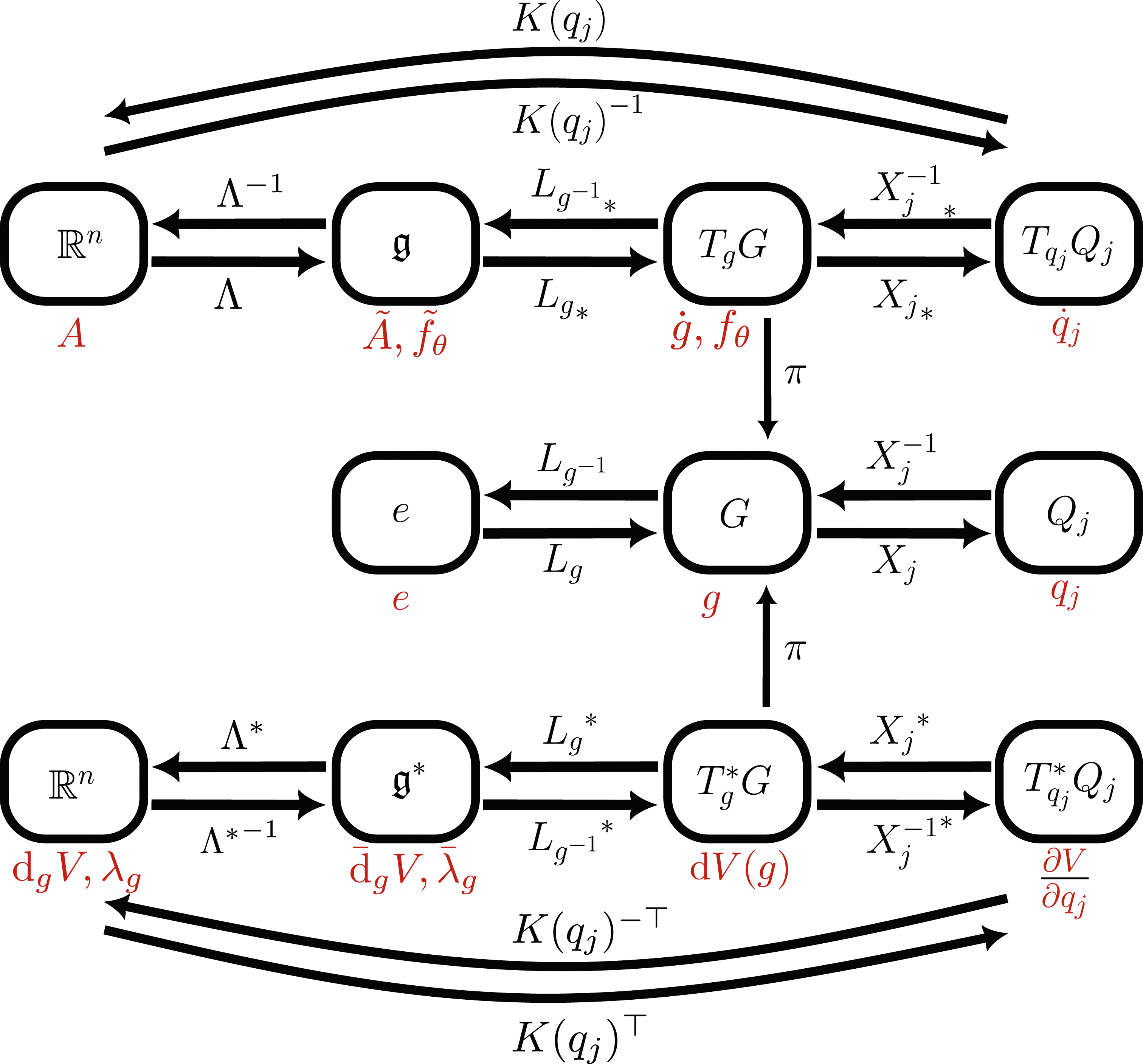

We briefly highlight how to represent scalar-valued functions

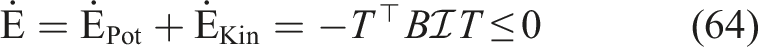

For example, on SE(3) one restricts a function

Commutative diagram highlighting how the natural embedding

The approach also holds for SO(3), which can be embedded in

5. Optimizing a rigid body control

The optimization procedure in Section 2 is applied to potential energy and damping injection based control of a fully actuated rigid body.

The core idea of potential energy shaping and damping injection is to combine advantages of energy-balancing passivity based control (EB-PBC) (Ortega and Mareels, 2000) and of control by interconnection (Ortega et al., 2008), which provide stability guarantees when interfacing with physical systems. Our article presents a class of controllers that generalizes the architecture presented by Rashad et al. (2019). We address common safety concerns about machine learning in control loops by optimizing a class of controllers that guarantees stability and a bounded energy by design.

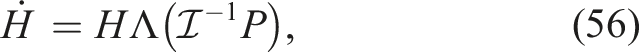

5.1 Control of a rigid body

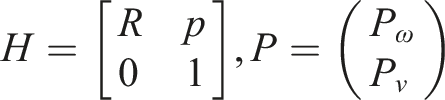

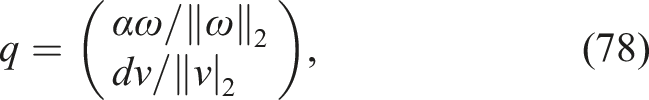

The trajectory of a rigid body in Euclidean 3-space is fully described by the curve

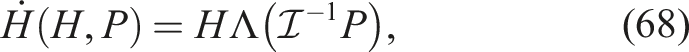

The dynamics of a rigid body follow from the Hamiltonian equations on matrix Lie groups ((100) and (101) in Appendix A.2) by setting G = SE(3) and letting

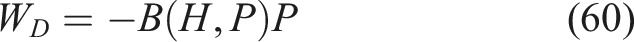

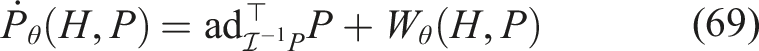

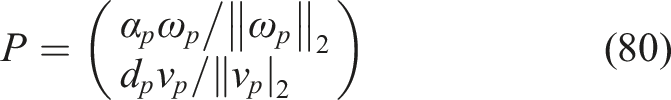

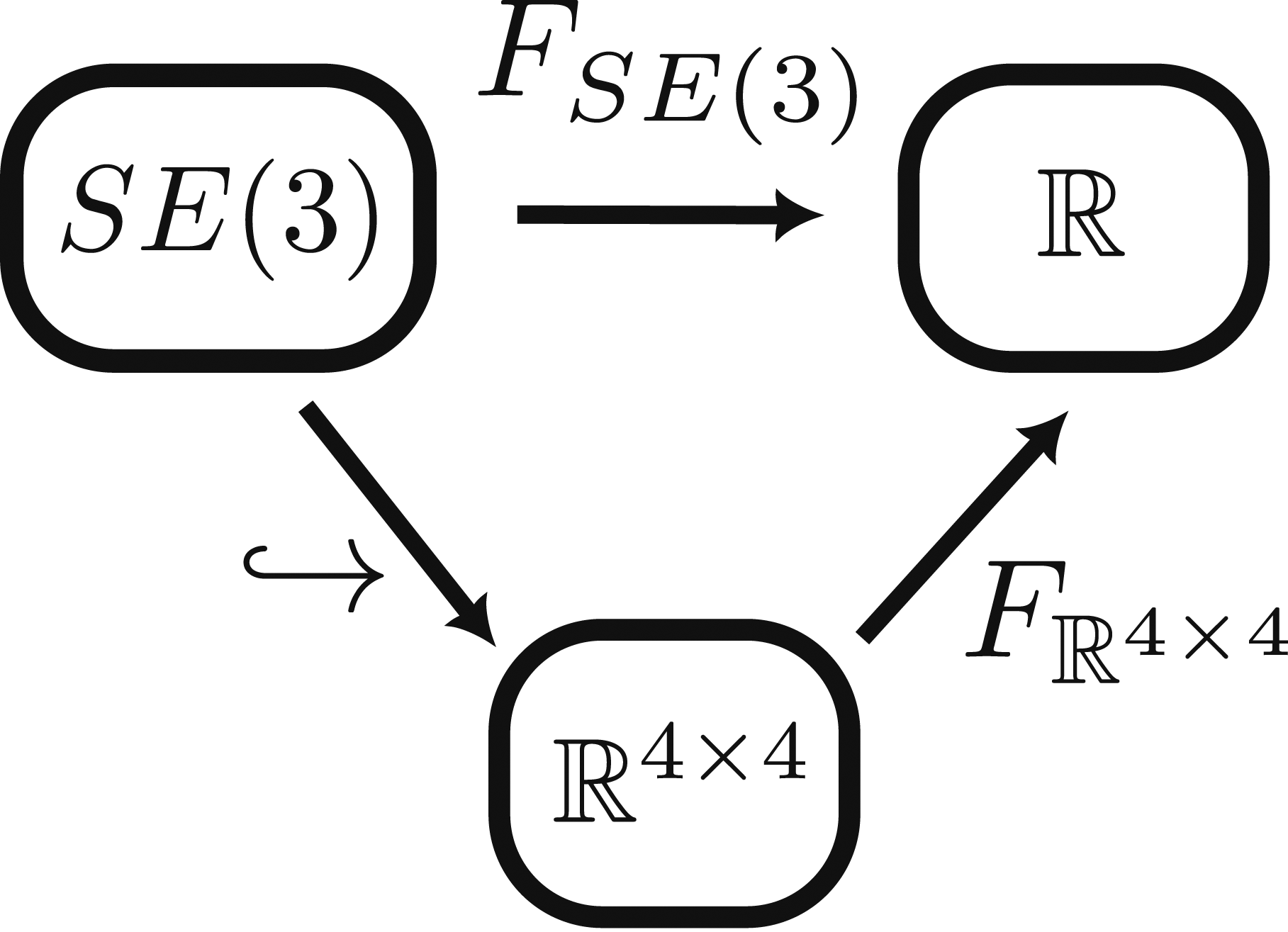

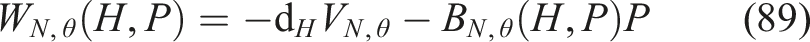

In control by potential energy shaping and damping injection, this external wrench is constructed as a sum of a potential gradient term $W_V$ and a damping term $W_D$:

Nonlinear, configuration-dependent viscous damping takes the form

In this context, the control architecture of Rashad et al. (2019) corresponds to the popular yet very particular choice of a constant B(H, P) for the damping injection, while their potential V(H) shows a quadratic dependence on translations and a nearly quadratic dependence on rotations. Their controller may be interpreted as a linear PD controller on SE(3), where our work may be seen as a nonlinear PD controller on SE(3).

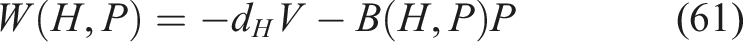

5.2 Stability

We present here a general proof of stability for the class of controllers.

Stability. Given the system (56), (57) together with the controller (58) given as

5.3 Optimization by the adjoint method on SE(3)

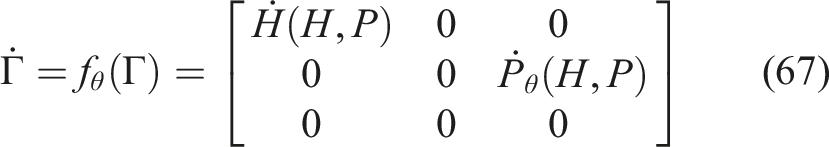

In order to apply the adjoint method on Lie groups (Theorem 2.1), we re-define the dynamics (56) and (57) on the Lie group G = SE(3) × se*(3). To this end, we choose to equip G with the element-wise composition $(H_1, P_1) \circ (H_2, P_2) = (H_1 H_2, P_1 + P_2)$.
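The element-wise composition and its block-diagonal matrix form can be checked numerically with a short sketch. It assumes that se*(3) is identified with $\mathbb{R}^6$ through a choice of basis, and that a vector under addition is represented by the matrix with blocks $[I, P; 0, 1]$. The helper names `pose`, `embed` and `compose` are hypothetical.

```python
import numpy as np

def pose(angle, p):
    """Homogeneous matrix for a rotation about z by `angle` and a translation p."""
    c, s = np.cos(angle), np.sin(angle)
    H = np.eye(4)
    H[:2, :2] = [[c, -s], [s, c]]
    H[:3, 3] = p
    return H

def embed(H, P):
    """Block-diagonal matrix representing (H, P) in SE(3) x R^6."""
    # A vector P in R^6 under addition is represented as [[I, P], [0, 1]].
    T = np.eye(7)
    T[:6, 6] = P
    G = np.zeros((11, 11))
    G[:4, :4] = H
    G[4:, 4:] = T
    return G

def compose(g1, g2):
    """Element-wise composition (H1, P1) o (H2, P2) = (H1 H2, P1 + P2)."""
    (H1, P1), (H2, P2) = g1, g2
    return H1 @ H2, P1 + P2

g1 = (pose(0.3, [1.0, 0.0, 2.0]), np.arange(6.0))
g2 = (pose(-1.2, [0.0, 0.5, 0.0]), np.ones(6))
g12 = compose(g1, g2)
```

Multiplying the two embedded matrices gives the same result as embedding the composed pair, which confirms that the element-wise rule is ordinary matrix multiplication on the block-diagonal representation. In practice one would store the pair $(H, P)$ directly rather than the 11 × 11 matrix.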

As a composition of matrix Lie groups SE(3) and

Given a cost

As in Section 2.3, approximate

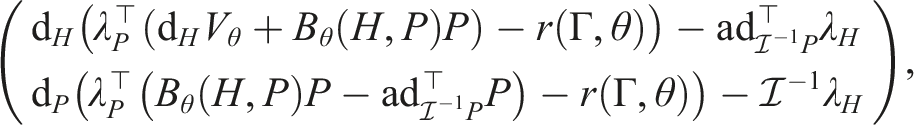

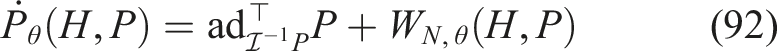

The dynamics of $\lambda_\Gamma$ follow from equation (17) as

The gradient

Further, split $\lambda_\Gamma = (\lambda_H, \lambda_P)$ into components

Note that the second derivative term

Rather than constructing the direct product group SE(3) × se*(3), the semi-direct product group SE(3) ⋉ se*(3) could have been defined using the Coadjoint representation

6. Simulations

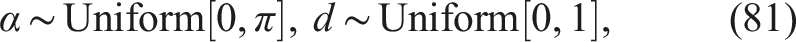

We numerically solve the optimization problem (11) for the dynamics (67). We investigate various choices of final and running costs, distributions

6.1 Quadratic vs. general potential shaping

A controller with quadratic potential and linear damping injection (Section 6.1.2) is compared to a controller with NN-parameterized potential and damping injection (Section 6.1.3).

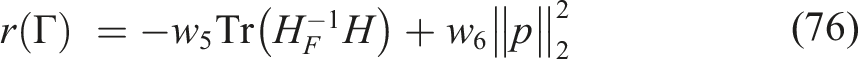

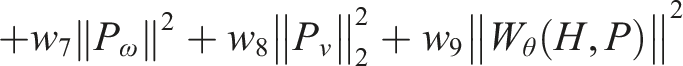

6.1.1 Choice of cost C and distribution

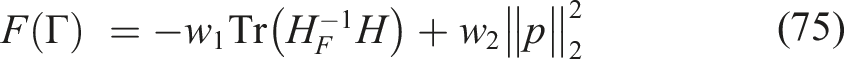

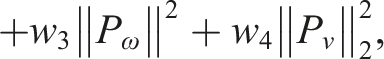

We determine a final cost F and a running cost r to stabilize a static target state with $H = H_F$, $P = 0$ over a horizon of T = 3 seconds. The key properties of F and r are that both are differentiable and have their minimum in the target pose. Denote components of H and P

Given scalars

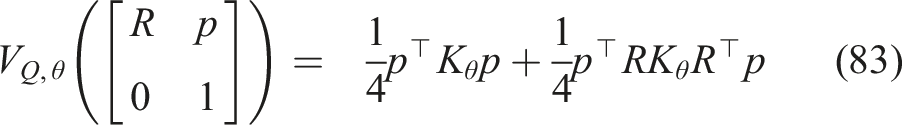

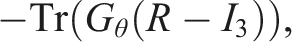

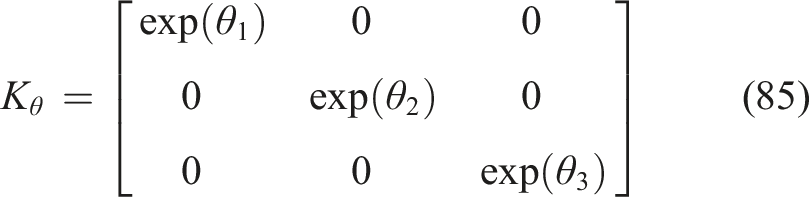

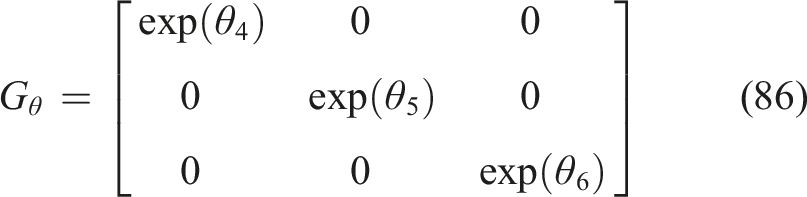

6.1.2 Quadratic potential and linear damping injection

The quadratic controller coincides with the controller presented by Rashad et al. (2019), in a setting of motion control. As such the quadratic potential

The control-law is then of the form

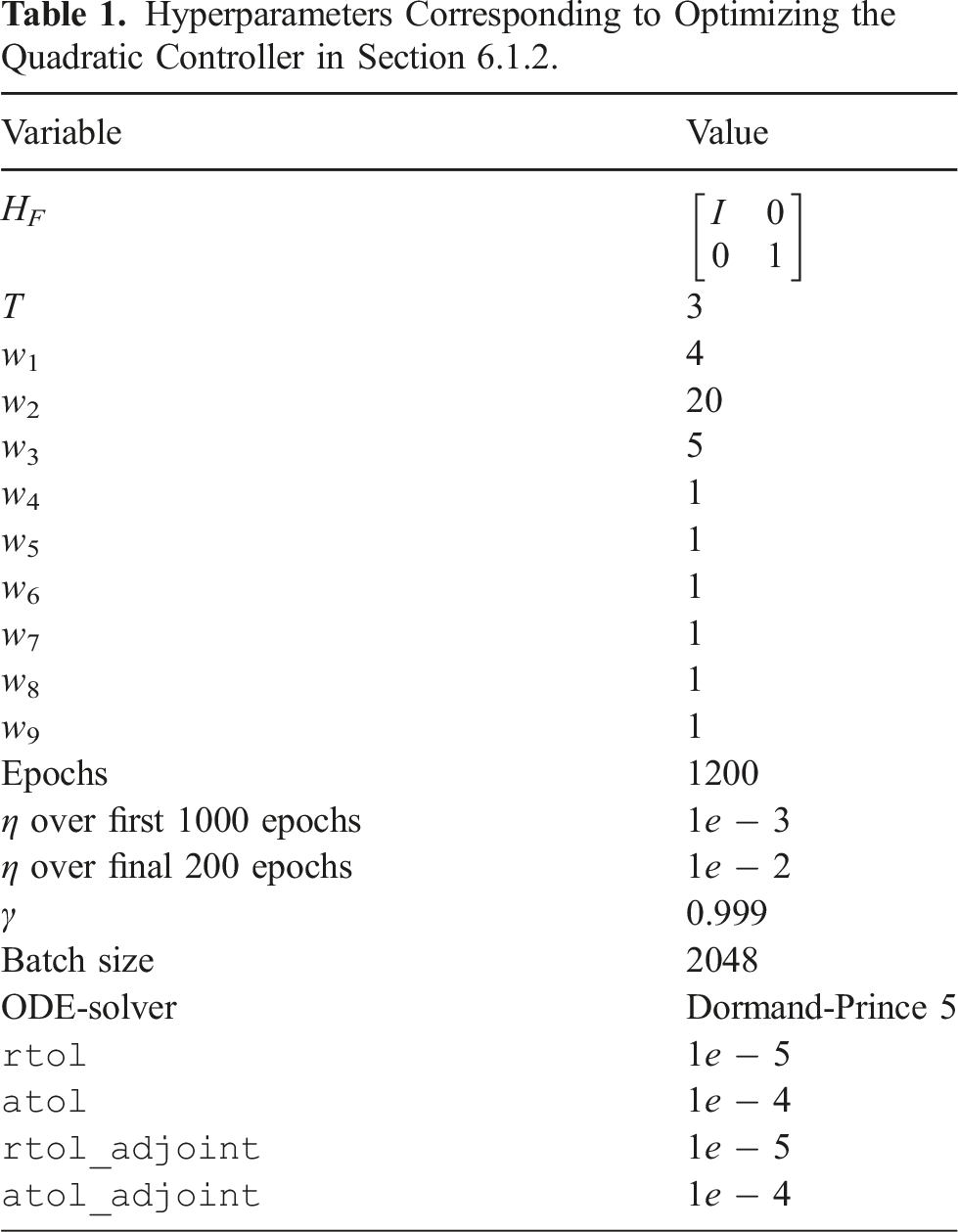

Hyperparameters Corresponding to Optimizing the Quadratic Controller in Section 6.1.2.

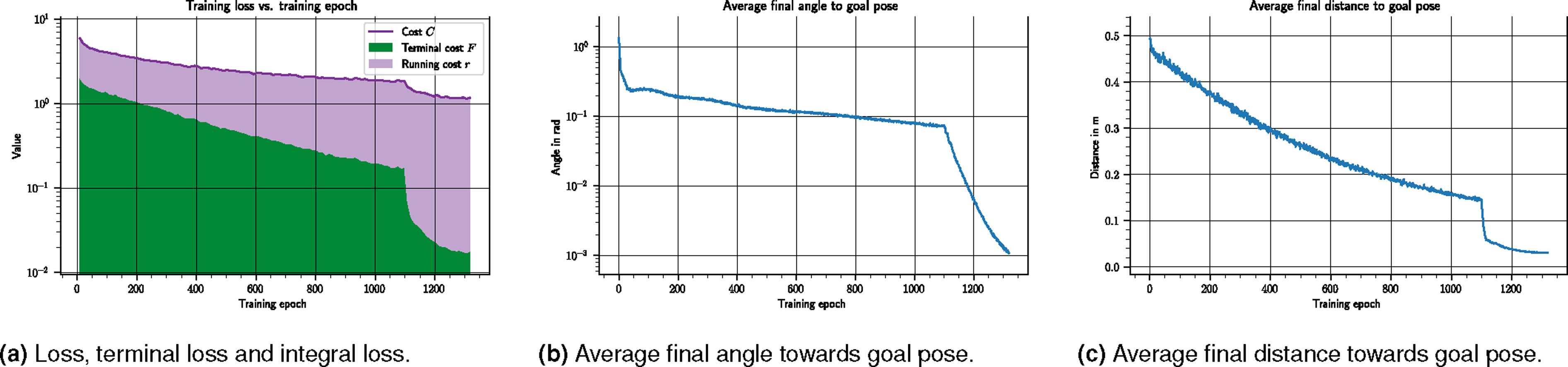

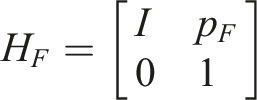

Visualization of the training progress of the quadratic controller characterized by $V_{Q,\theta}$ and $B_{Q,\theta}$. All figures show data averaged over 2048 sample trajectories at the given epoch, with initial conditions sampled from

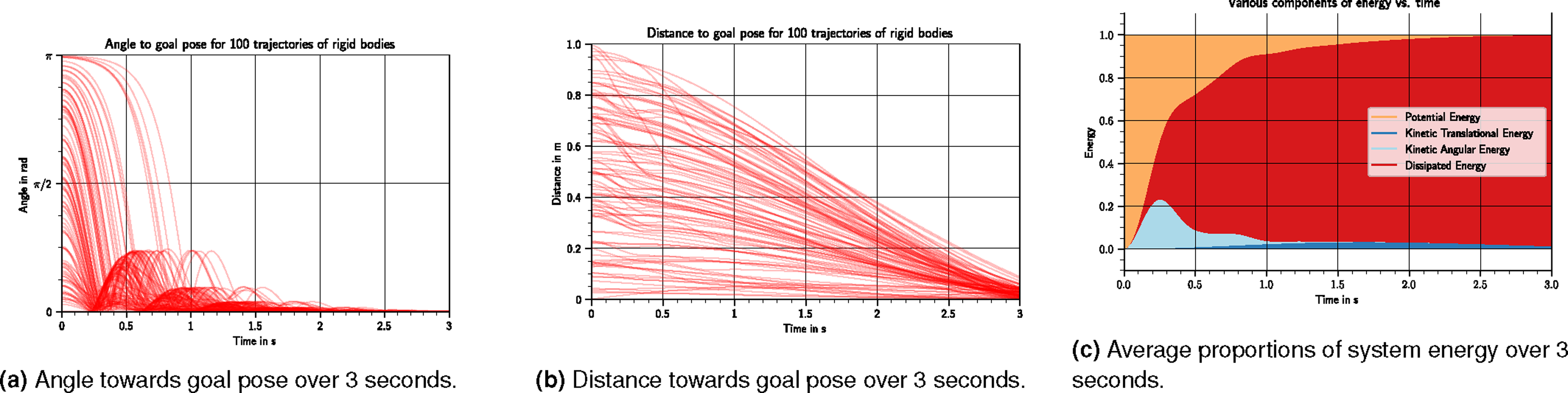

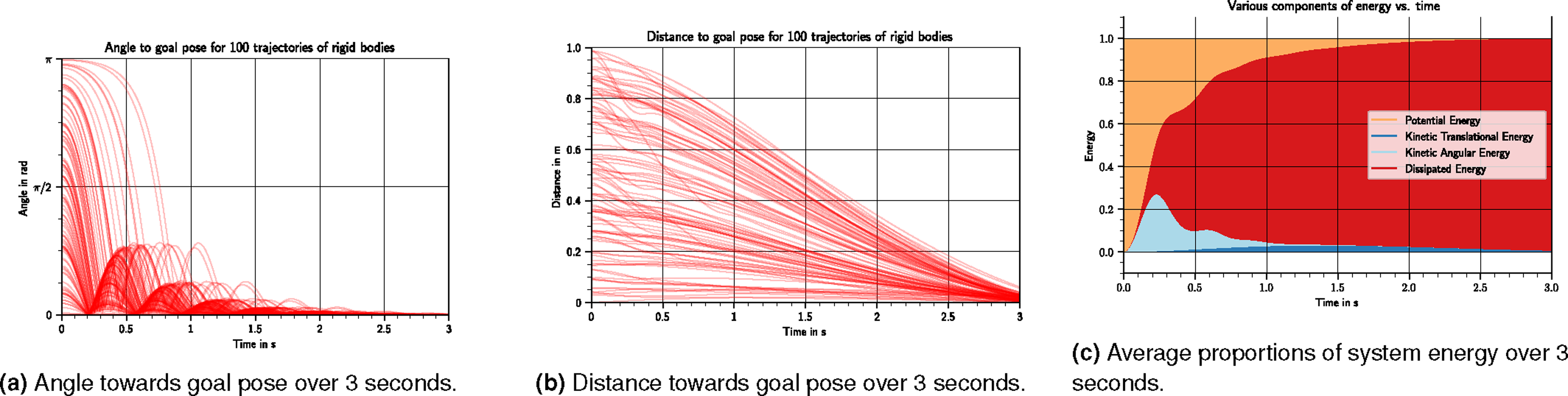

Visualization of the performance of the quadratic controller characterized by $V_{Q,\theta}$ and $B_{Q,\theta}$, over 100 trajectories of rigid bodies with initial conditions sampled from

6.1.3 Nonlinear potential and damping injection

Here we showcase the optimization of a nonlinear potential

The control-law is of the form

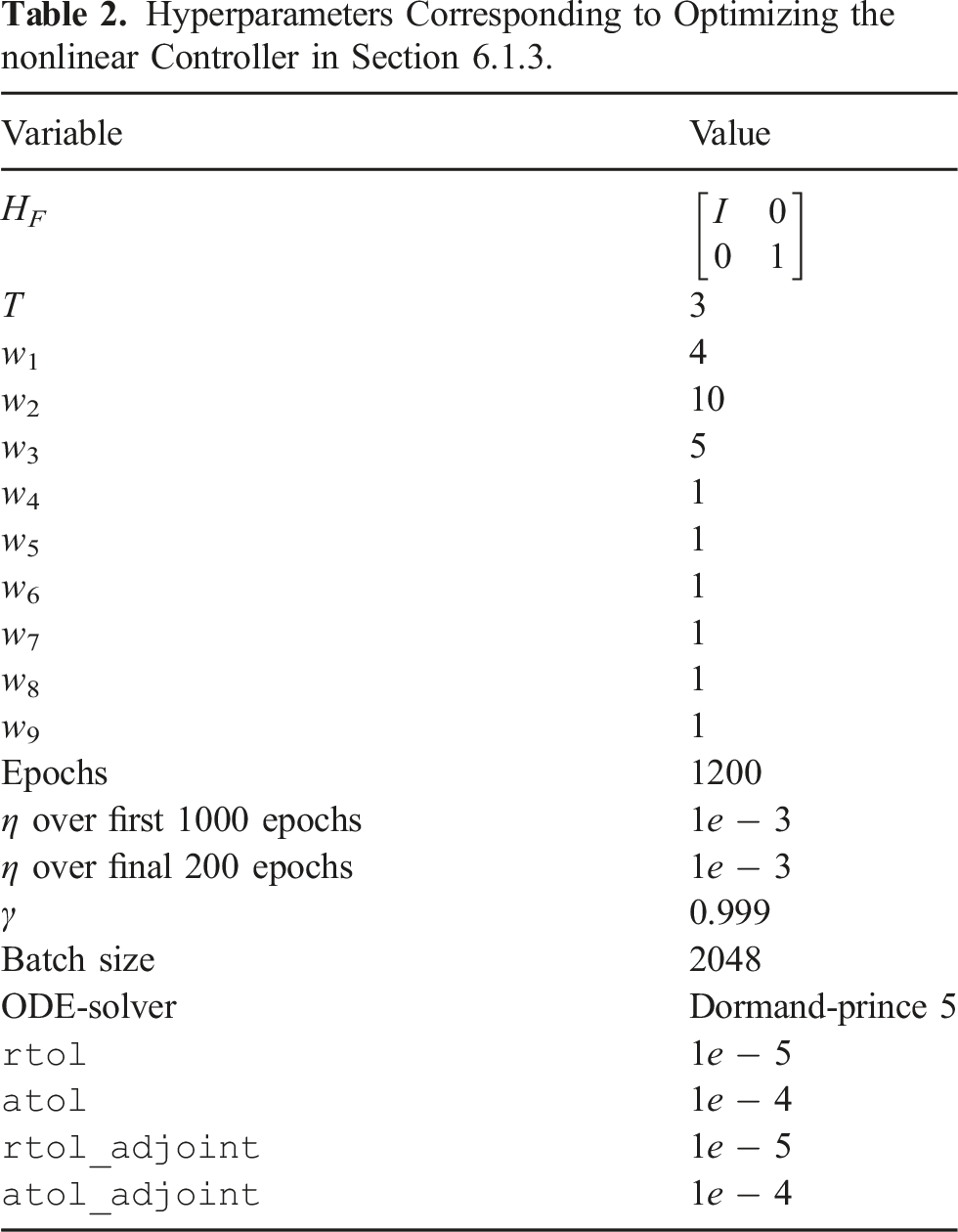

Hyperparameters Corresponding to Optimizing the nonlinear Controller in Section 6.1.3.

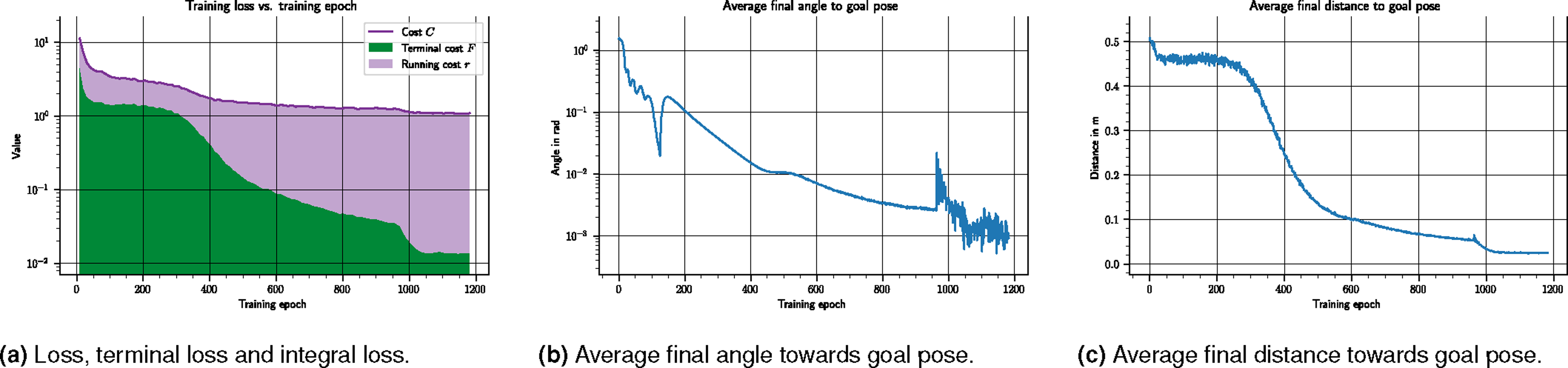

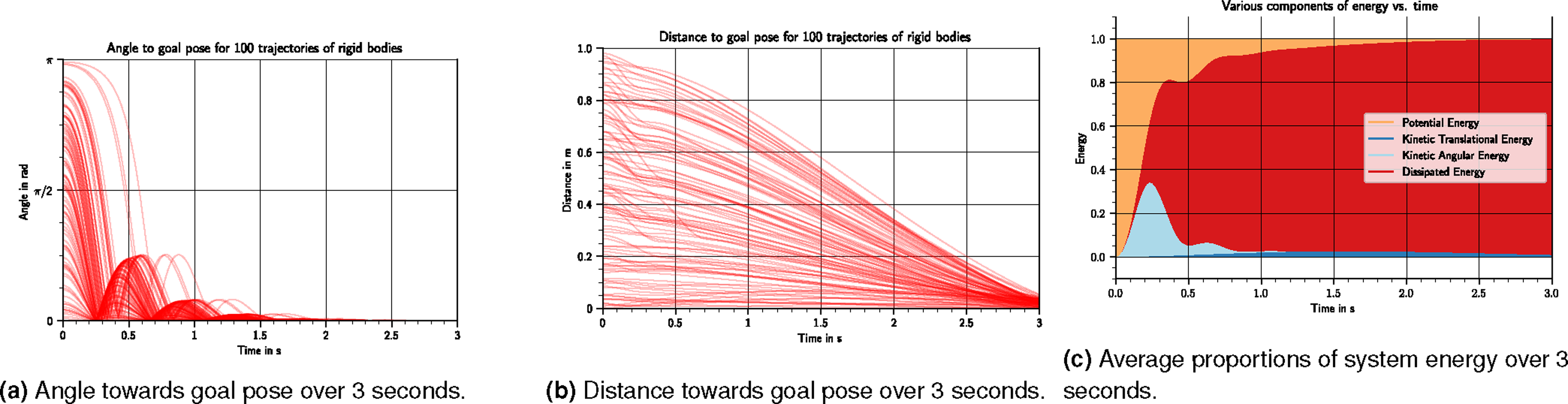

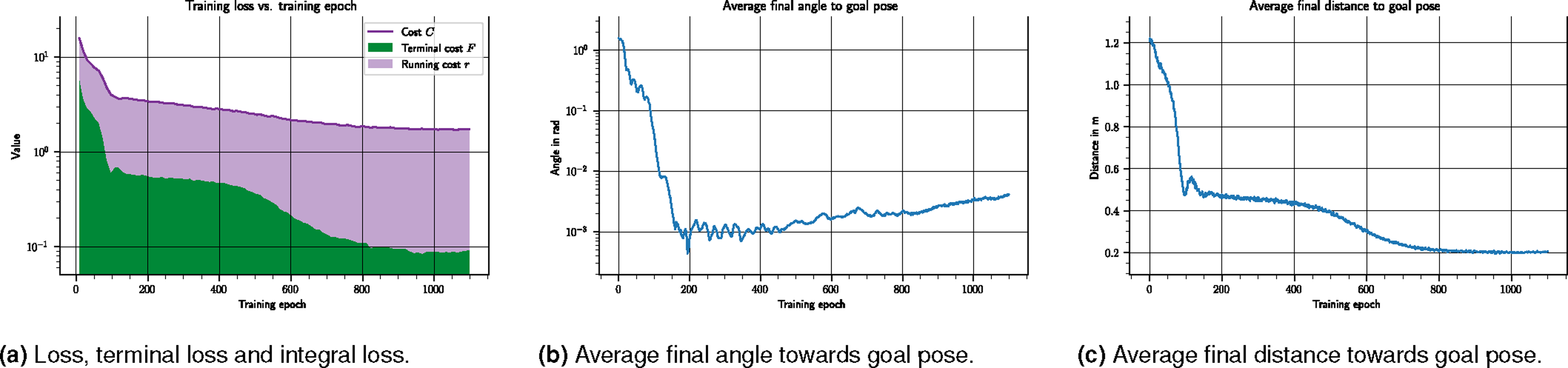

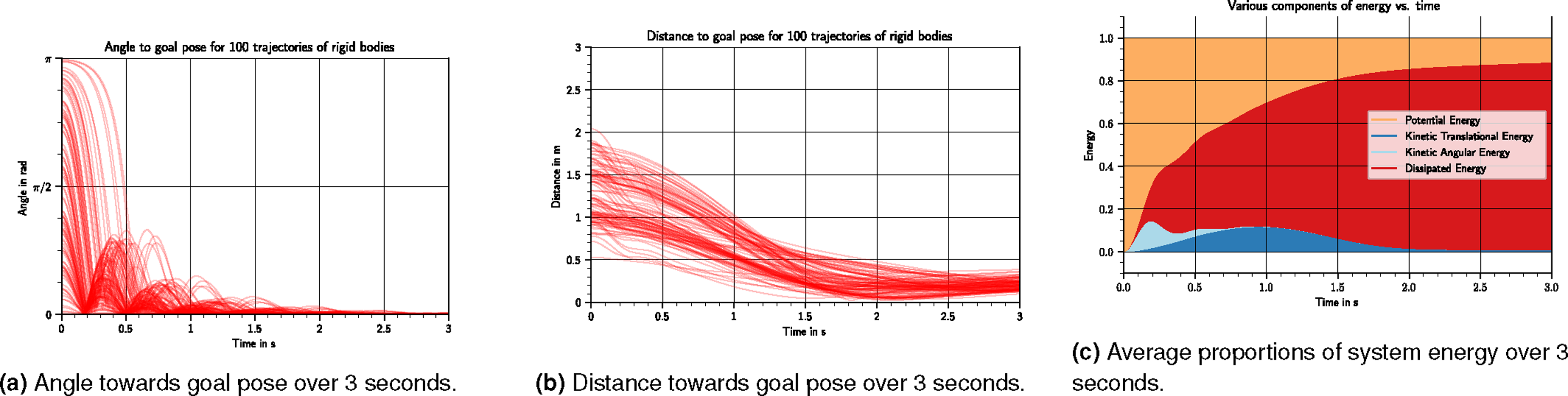

The training progress is summarized in Figure 6. It can be seen that the final loss of the nonlinear controller in Figure 6(a) is equivalent to that of the quadratic controller in Figure 4(a). In particular, the performances of the quadratic and the nonlinear controller in this scenario are close: the final angle and distance in Figures 6(b) and 6(c) are comparable to those of Figures 4(b) and 4(c), respectively. The resulting controller’s performance is shown in Figure 7. Here the qualitative behavior shown in Figures 7(a), 7(b) and 7(c) resembles that of the quadratic case in Figures 5(a), 5(b), and 5(c), respectively.

Visualization of the training progress of the nonlinear controller characterized by $V_{N,\theta}$ and $B_{N,\theta}$. All figures show data averaged over 2048 sample trajectories at the given epoch, with initial conditions sampled from

Visualization of the performance of the nonlinear controller characterized by $V_{N,\theta}$ and $B_{N,\theta}$, over 100 trajectories of rigid bodies with initial conditions sampled from

6.2 General potential shaping with gravity

We optimize an NN-parameterized potential and damping injection in a system with gravity in Section 6.2.2, and show the effect of an adapted target configuration in Section 6.2.3.

6.2.1 Adapted running cost r

In the presence of a gravitational potential

To take gravity into account in the optimization, the only required adaptation is to use the adapted $W_\theta(H, P)$ in the running cost (76).

Minimizing the term $\|W_\theta(H, P)\|$ indirectly minimizes the required gravity compensation by reducing the total wrench exerted on the plant. Indeed, when $\|W_\theta\| = 0$ the dynamics are

6.2.2 Nonlinear potential and damping injection

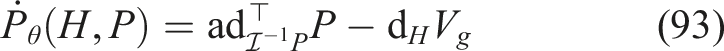

Hyperparameters Corresponding to Optimizing a nonlinear Controller Including Gravity in the Cost, as in Section 6.2.

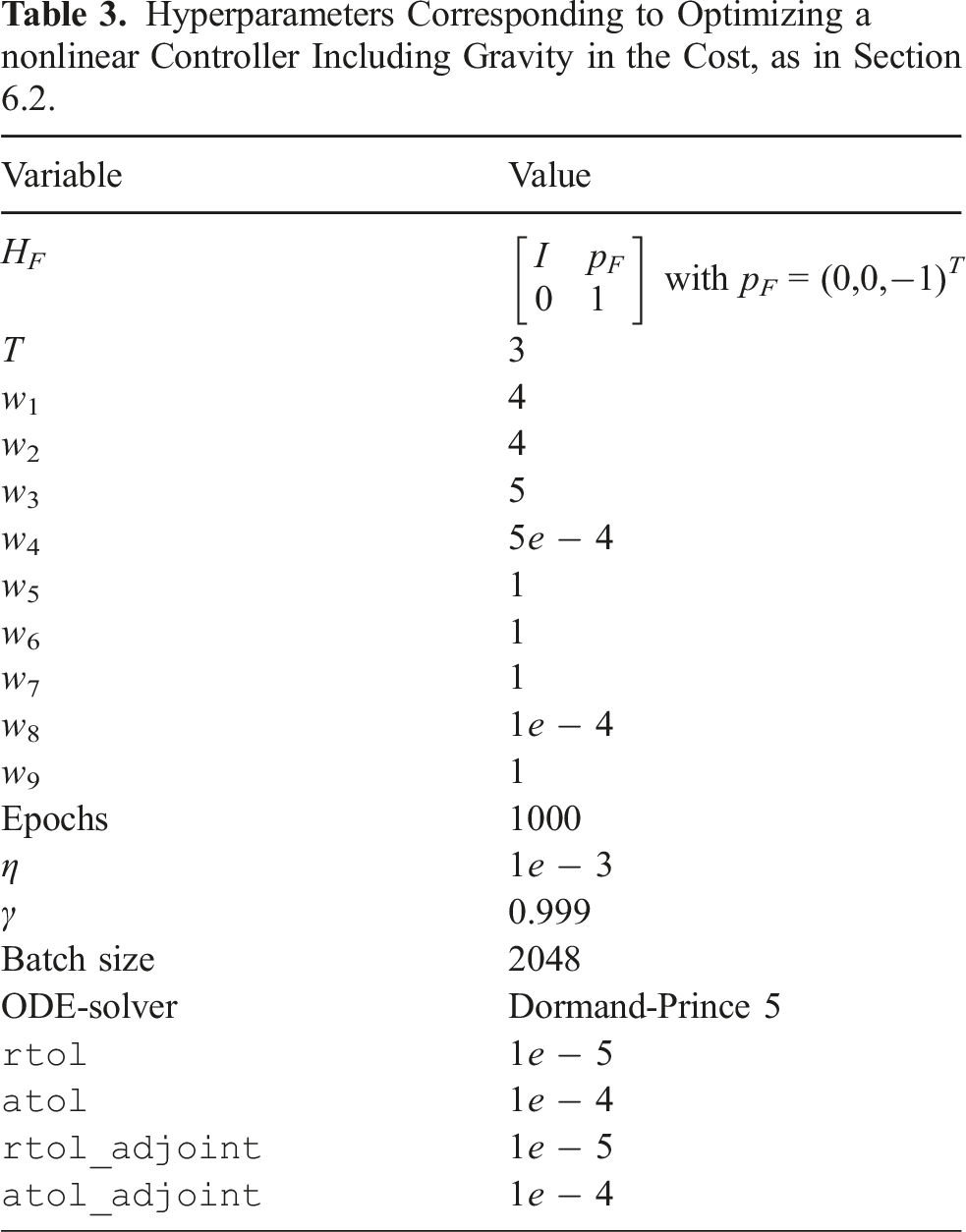

Visualization of the training progress of the nonlinear controller characterized by $V_{N,\theta}$ and $B_{N,\theta}$, in the presence of gravity. All figures show data averaged over 2048 sample trajectories at the given epoch, with initial conditions sampled from

Visualization of the performance of the nonlinear controller characterized by $V_{N,\theta}$ and $B_{N,\theta}$, in the presence of gravity. The results show 100 trajectories of rigid bodies with initial conditions sampled from

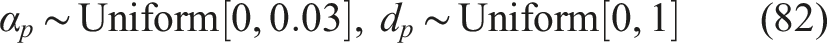

6.2.3 Asymmetric initial distribution

To better highlight the influence of the adapted running cost, and how the optimization in the presence of gravity differs from an optimization in the absence of gravity, an initial distribution asymmetric about the goal pose $H_F$ (77) is introduced by choosing

The parameters of this training coincide with those of the symmetric scenario, and are likewise summarized in Appendix C.1, Table 3. The training progress is summarized in Figure 10, and the resulting controller’s performance is shown in Figure 11.

Visualization of the training progress of the nonlinear controller characterized by $V_{N,\theta}$ and $B_{N,\theta}$, in the presence of gravity and with an initial distribution whose mean is above the target position. All figures show data averaged over 2048 sample trajectories at the given epoch, with initial conditions sampled from

Visualization of the performance of the nonlinear controller characterized by $V_{N,\theta}$ and $B_{N,\theta}$, in the presence of gravity and with an initial distribution whose mean is above the target position. The results show 100 trajectories of rigid bodies with initial conditions sampled from

7. Discussion

7.1 Neural ODEs on Lie groups

The proposed formulation of neural ODEs on Lie groups immediately applies to arbitrary matrix Lie groups, where parameterized maps can be learned with global validity. The optimization of neural ODEs on Lie groups by gradient descent via the generalized adjoint method is a scalable approach. The key aspects that contribute to this scalability are: First, the generalized adjoint method on Lie groups preserves the memory efficiency of the generalized adjoint method used for neural ODEs on

The work can be generalized further: Theorem 2.1 assumes the cost to be of the form (10), while the derivation in Appendix A.4 in principle allows for a more general choice of cost that may be of interest in, for example, learning of periodic trajectories (Wotte et al., 2023). The accompanying code is currently written specifically for the Lie group

7.2 Optimal potential shaping

The optimization of an NN-parameterized potential and damping injection was successful and the large number of parameters used in the optimization confirms that it scales to the large parameter scenario. The optimization was also successful when including gravity in a nonlinear running cost. Stability was guaranteed by design, by implementing the requirements of Theorem 5.1 on the level of architecture and activation functions. As a further advantage the resulting controller is global on SE(3), as opposed to only being applicable in a limited chart region.

Regarding limitations of the approach, the numerical stability of the adjoint method on SE(3) was observed to strongly depend on the smoothness of the running cost, which suggests added value in considering different Lie group integrators that accommodate this lack of smoothness. Lastly, while the structure of the presented controller is highly interpretable and the various components of the energy are readily visualized, the space of possible initial conditions and trajectories remains large, and the high-dimensional state-space obscures low-level properties and a deep understanding of the eventual controller, beyond safety guarantees and numerical verification of stability.

Alternative choices for the final and running costs, as well as the weights in these costs are worth investigating. The design space of possible controllers is also large and other control architectures may be advantageous. In future work the controller will be applied to a real drone, and other cost-functions and control structures will be investigated.

8. Conclusion

Lie groups are ubiquitous in engineering, and so are dynamic systems on Lie groups. We proposed a method for dynamics optimization that works on arbitrary, finite-dimensional Lie groups and for a large class of cost-functions. The resulting method is highly scalable, and more compact than alternative manifold formulations. The key steps in the formulation relate to using the canonical Lie group structure to create a compact gradient descent algorithm: we phrased the generalized adjoint method at the Lie algebra level, utilized a compact expression for the gradient as an element of the dual of the Lie algebra, and used a generic Lie group integrator for dynamics integration. The method was successfully applied to optimize a controller for a rigid body that is globally valid on the Lie group SE(3). A key aspect of choosing the class of controllers was stability by design, which guided the architecture of the neural nets that parameterize the potential energy shaping and damping injection controller.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

This research was supported by the PortWings project funded by the European Research Council [Grant Agreement No. 787675].

Notes

Appendix

A The generalized adjoint method on Lie groups

In this Appendix the generalized adjoint method on matrix Lie groups (Theorem 2.1) is derived in four steps.