Abstract

This paper addresses the lower limits of encoding and processing the information acquired through interactions between an internal system (robot algorithms or software) and an external system (robot body and its environment) in terms of action and observation histories. Both are modeled as transition systems. We want to know the weakest internal system that is sufficient for achieving passive (filtering) and active (planning) tasks. We introduce the notion of an information transition system (ITS) for the internal system which is a transition system over a space of information states that reflect a robot’s or other observer’s perspective based on limited sensing, memory, computation, and actuation. An ITS is viewed as a filter and a policy or plan is viewed as a function that labels the states of this ITS. Regardless of whether internal systems are obtained by learning algorithms, planning algorithms, or human insight, we want to know the limits of feasibility for given robot hardware and tasks. We establish, in a general setting, that minimal information transition systems (ITSs) exist up to reasonable equivalence assumptions, and are unique under some general conditions. We then apply the theory to generate new insights into several problems, including optimal sensor fusion/filtering, solving basic planning tasks, and finding minimal representations for modeling a system given input-output relations.

Keywords

1. Introduction

Accomplishing a robot’s tasks may involve designing or employing a combination of different parts: planning algorithms, sensor fusion or filtering methods, machine learning algorithms, and control laws. Given a problem expressed in terms of a well-defined task structure, the relationship between these different parts with each other, and their relation with the task is often ignored. Each part is developed rather in isolation, heavily motivated by the long lasting traditions in robotics. For example, navigating a mobile robot to a goal configuration is typically achieved by estimating the robot configuration in a known (or an unknown) map and applying a feedback policy that relies on the estimated configuration. Indeed, for some problems it may be possible that a simpler filtering approach that does not require estimating the full state could as well be sufficient to achieve the given task. This may lead many to believe that robotics itself does not have its own, unique theoretical core (on this, we agree with (Koditschek, 2021)) and it appears as an application area for other fields; designing and testing machine learning algorithms, planning algorithms, sensor fusion methods, control laws, and so on. In our quest towards a theory that is unique to robotics and that plays a similar role to Turing machines for computer science, or

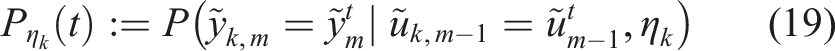

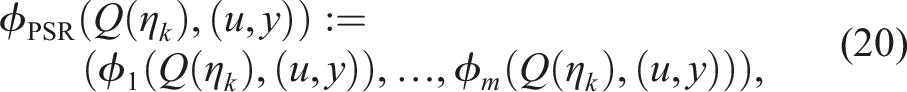

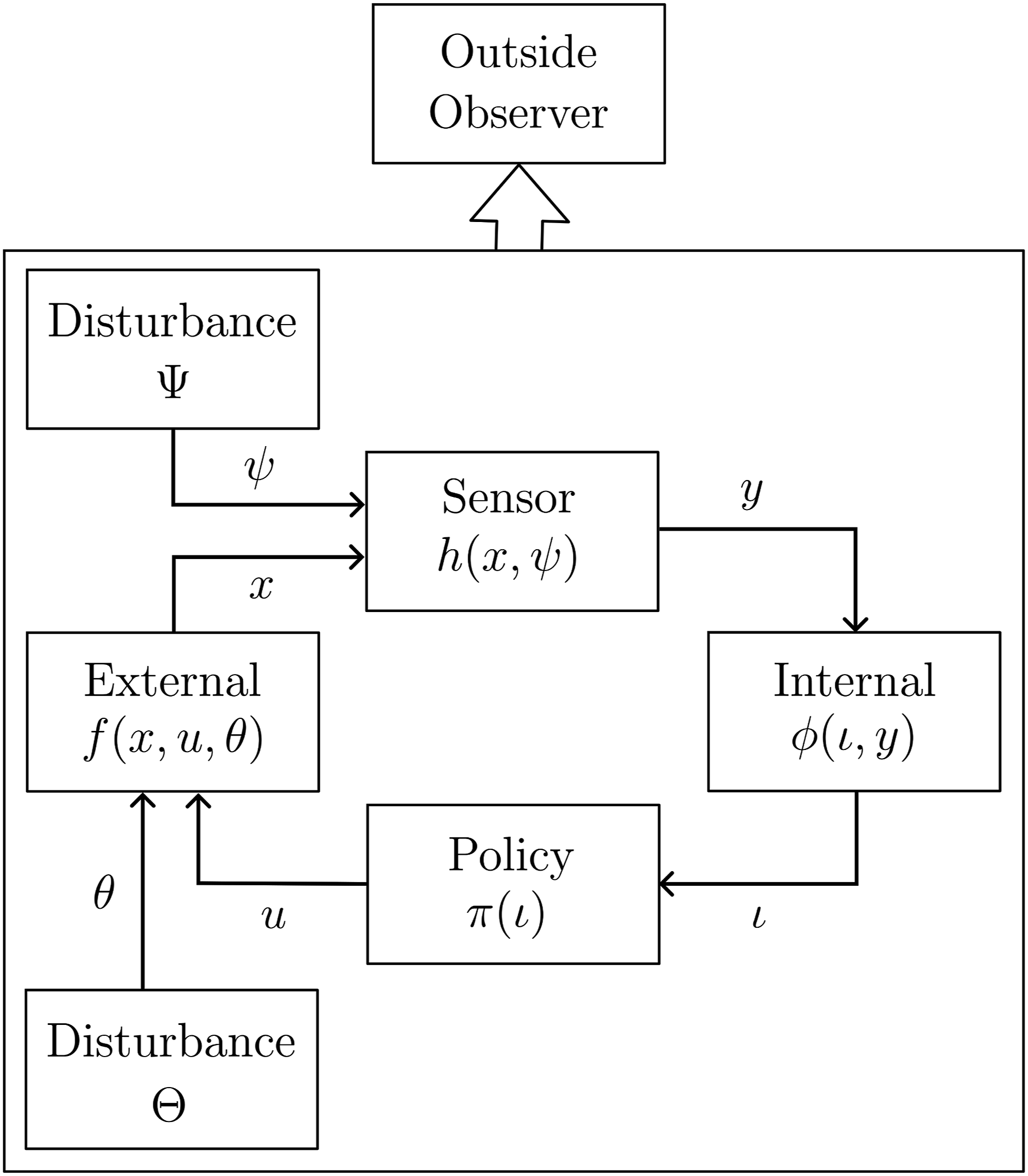

This paper proposes a mathematical robotics theory that is built from the input-output relationships between two (or more) coupled dynamical systems. For a programmable mechanical system (robot) embedded in an environment, the input-output relationships correspond to sensing and actuation between two coupled systems; an internal system (robot brain) and an external system (robot body and the environment). This relation is shown in Figure 1(a)–(b). We assume that the robot hardware is fixed, which means fixing the sensors and the actuators, and we focus on determining which necessary and sufficient conditions the internal system has to maintain for a task to be accomplished. In light of these conditions, we try to find a minimal sufficient internal system which corresponds to the weakest possible representation of the acquired information through interactions; reducing the internal system any further makes the problem unsolvable. (a) The internal robot brain is defined as an ITS that interacts with the external world (robot body and environment). (b) Coupled internal and external systems mathematically capture sensing, actuation, internal computation, and the external world.

At the core of our framework is the notion of an ITS which builds on the well-studied notion of transition systems. The information part of an ITS comes from information spaces (LaValle, 2006: Chapter 11) which is developed as a foundation of planning with imperfect state information due to sensing uncertainty. The concept of sufficient information mappings appears therein. It is generalized in this paper, and the state space of each ITS will in fact be an information space. An internal system will be modeled as an ITS and its sufficiency and minimality with respect to a problem will be analyzed using this framework.

We categorize tasks into two classes: active and passive. Informally, a passive task corresponds to filtering and an active one corresponds to planning or control. In our work an ITS can be seen as a filter and together with its underlying information space serves as a domain over which a plan or a policy can be expressed and analyzed. We will consider a variety of information spaces, which also encompass robot configuration spaces or phase spaces, that are typically used for planning tasks. A distinction between model-based and model-free formulations will be considered too, in line with the choices commonly found in machine learning. We characterize the problems corresponding to the active and passive tasks and define notions of feasibility and minimality for information transition systems (ITSs) that solve these problems. In our approach to finding minimal sufficient ITSs, we will analyze the limits of reducing or collapsing the information spaces, until the lower limits of task feasibility are reached.

1.1. Previous work

Many of the concepts in this paper build upon (Weinstein et al., 2022), in which we recently proposed an enactivist-oriented model of cognition based on information spaces. By enactivist (Hutto and Myin, 2012), it is meant that the necessary brain structures emerge from sensorimotor interaction, and do not necessarily have predetermined, meaningful components as in the classical representationalist paradigm (Newell and Simon, 1972). Despite introducing a general framework, the focus of (Weinstein et al., 2022) was on emerging equivalence classes of the environment states through sensorimotor interactions. In some sense, this corresponds to finding a (minimal) sufficient sensor or a representation for the external system. In this paper, we focus on the internal system which, in turn, refers to finding a minimal filter and/or a policy, given an appropriate task description.

Most approaches in filtering can be categorized into two classes: probabilistic and combinatorial. Probabilistic filters typically rely on Bayes’ rule to propagate the obtained information (Särkkä, 2013; Thrun et al., 2005). They have been extensively used in robotics; especially for state estimation, mapping, and localization (Dissanayake et al., 2001; Zhen et al., 2017). Combinatorial filters (Kristek and Shell, 2012; Tovar et al., 2008) do not rely on probabilistic models but instead make use of nondeterministic (possibilistic) ones. The notion of information spaces (LaValle, 2006: Chapter 11) provides a general formalization that encompasses both probabilistic and combinatorial filters. In our framework based on transition systems, the set of states of a transition system will indeed be an information space. Using the notion of information spaces, several works attempted to characterize different sensors defined over the same state space and compare their power in terms of gathered information (LaValle, 2012; O’Kane and LaValle, 2008; Zhang and Shell 2021). Despite providing an elegant and exact way of solving filtering problems, the space requirements for a combinatorial filter can be high. Considering a notion of minimality, some authors addressed algorithmic reduction of filters (O’Kane and Shell, 2017; Rahmani and O’Kane, 2021; Song and O’Kane, 2012). Reducing combinatorial filters have roots in the theory of computation, where decomposition (Hartmanis, 1960; Hartmanis and Stearns, 1964) and minimization of finite automata (Moore and Mealy machines) has been a topic of active research since the 1950s.

A gap still remains between analyzing the requirements of filters or sensors for pure inference (passive filtering) and the ones needed for active tasks (planning and control) such that an information-feedback policy can be described. Various representations were used in the literature as a domain to define the policy. For most robotic planning problems, the domain of the policy or a plan is fixed; which is the robot configuration or the phase space (Majumdar and Tedrake, 2017; Zhu and Alonso-Mora, 2019). This corresponds to the assumption that the robot state can be fully observed or estimated with high accuracy. For problems that the state is not fully observable, partially observable Markov decision processes (POMDPs) (Kaelbling et al., 1998; Ross et al., 2008) and belief spaces (Vitus and Tomlin, 2011; Agha-Mohammadi et al., 2014) have been considered for planning. Note that POMDP literature is mostly restricted to finite state and action spaces. There is a limited literature that studied the information requirements for active tasks which corresponds to determining the weakest notion of sensing or filtering that is sufficient to accomplish a task. A notable early work showed, especially for manipulation, that one can achieve certain tasks even in the absence of sensory observations (Erdmann and Mason, 1988). In (Zhang and Shell, 2020) the authors characterize all possible sensor abstractions that are sufficient to solve a planning problem. Minimality has been addressed for specific problems regarding mobile robot navigation in (Blum and Kozen, 1978; Tovar et al., 2007). Closely related to our work, a language-theoretic formulation appears in (Saberifar et al., 2019), in which, Procrustean-graphs (p-graphs) were proposed as an abstraction to reason about interactions between a robot and its environment.

Obtaining a model from input–output relations that represents the underlying system has been a common interest for many fields ranging from control theory, machine learning, and robotics. Different approaches to this problem in the context of finite state automata were reviewed by (Pitt, 1989). In diversity-based inference (DBI) for an input–output machine (Bainbridge, 1977; Rivest and Schapire, 1993, 1994), a model of the underlying system is constructed in terms of equivalence classes of tests which consist of one or more consecutive actions and observations. Its probabilistic counterpart, predictive state representation (PSR) (Boots et al., 2011; Littman and Sutton, 2001), addresses the analogous problem by considering (linear) combinations of prediction vectors, which represent probabilities for test success/failure. Other than relying solely on input–output relations, a parametric model (or a class of them) can be provided for learning a representation for the underlying system (Brunnbauer et al., 2022). We will also consider this distinction between model-based and model-free within our ITS framework.

1.2. Contributions

The main contribution of this paper is a novel mathematical framework for analyzing and distinguishing the interactions that emerge from a robotic system embedded in an environment. We introduce the notion of ITSs as a general way to characterize the internal system (“brain”) of the robot. We then proceed to establish conditions for sufficiency and the existence of unique minimal ITSs in a very general setting. Intuitively, we establish how small the robot brain could possibly be for given goals or tasks. Anything less results in impossibility. The framework addresses both filtering and sensor fusion problems, which are passive in the sense of no controls are applied, and planning or control problems, which are active. We illustrate the scope of the framework by applying it to several problems that shed light on relationships to many existing concepts, including Kalman filters, predictive state representations, combinatorial filters, and planning over reduced information spaces.

Many of the concepts in this paper build upon (LaValle, 2006: Chapter 11; Weinstein et al., 2022). The contributions of the present paper with respect to these works are listed in the following: • The framework based on transition systems introduced in (Weinstein et al., 2022) is adapted into a robotic setting. We define the notions of internal and external systems within this context, together with concrete examples. Moreover, we formulate the disturbances affecting the external system model and the sensor mapping, within this framework. • We formally define the notion of task description (distinguishing between finite and infinitary tasks, as well as between active and passive tasks), and filtering and planning problems. • The notion of minimality for a transition system describing the internal system under a planning (control) task is new. • The ITSs corresponding to some of the information spaces presented in (LaValle, 2006: Chapter 11), which were based on intuitions, are shown to be minimal applying the proposed framework. • We also formalize the model-based and the model-free information spaces using the notion of coupled internal-external systems.

This paper is an expanded version of (Sakcak et al., 2022).

1.3. Paper structure

The remaining of the paper is organized as follows. Section 2 provides a general mathematical formulation of robot-environment interaction as transition systems. We also introduce a notion of couplings which encode various types of interactions between an internal and an external system. Section 3 then develops central notions of sufficiency and minimality over the space of possible ITSs. Section 4 applies the general concepts to address what it means to solve both passive (filtering) and active (planning/control) tasks minimally. Canonical problem families are presented that aim to capture typical problem settings in filtering and planning, from the perspective of minimal sufficient solutions. Section 5 illustrates how the theory can be applied using simple examples. Section 6 summarizes the contributions and identifies important directions for future work.

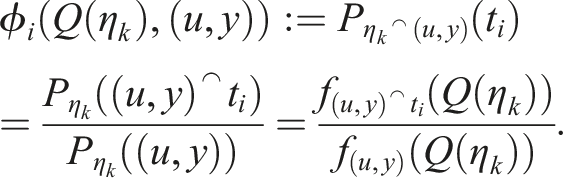

2. Mathematical models of robot-environment systems

2.1. Internal and external systems

In this paper, we consider a robot embedded in an environment and describe this system as two subsystems, named internal and external, connected through symmetric input-output relations. The external system describes the physical world, and the internal system describes the information processing “robot brain.” With “robot brain” we refer to a centralized computational component that processes sensor observations and actions. The interaction between the internal and external systems is shown in Figure 1(b). The input to the internal system is the information reflecting the state of the external system, obtained through observations (that is the output of the external system). The output of the internal system is a control command that in turn corresponds to the control input of the external system, and causes its state to change. In this sense, the state of the external system is similar to the use of the term state in control theory and the state of the internal system is similar to the use of the term in computer science.

The external system corresponds to the totality of the physical environment, including the robot body. Let X denote the set of states of this system; it could be for example, the configuration of a robot (e.g., position and orientation of a mobile robot or joint configuration of a robotic manipulator) within a known environment (or within a set of possible environments), or it can be extended to include also the (higher order) derivatives of its configuration. See (LaValle, 2012, Section 3.1) for possible state spaces of a mobile robot. Next, let U be the set of control inputs (also referred to as actions). When applied at a state x ∈ X, a control u ∈ U causes x to change according to a state transition function f : X × U → X. Indeed, an action u ∈ U refers to the control input to the system and corresponds to the stimuli created by a control command generated by a decision maker. Mathematically, the external system can now be expressed as the triple (X, U, f). The sets X and U can be finite or infinite discrete spaces, or they could be equipped with extra structure: they could be metric manifolds, vector spaces, compact, or non-compact topological spaces. In such cases, the function f may or may not be assumed to respect such structure: sometimes it is appropriate to assume continuity or measurability.

The internal system (robot brain) corresponds to the perspective of a decision maker. The states of this system correspond to the retained information gathered through the outcomes of actions in terms of sensor observations. To this end, the basis of our mathematical formulation of the internal system is the notion of an information space (I-space) presented in (LaValle, 2006: Chapter 11). Let

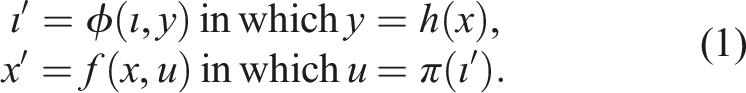

The external (X, U, f) and internal (

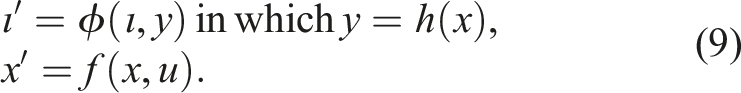

Suppose the system evolves in discrete stages. Then, the coupled dynamical system can be written as

For the external system, starting from an initial state x1, each stage k corresponds to applying an action u

k

which then yields the next stage k + 1 and the next state xk+1 = f (x

k

, u

k

). As the system evolves through stages, the tuples

2.2. Disturbances

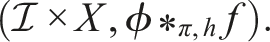

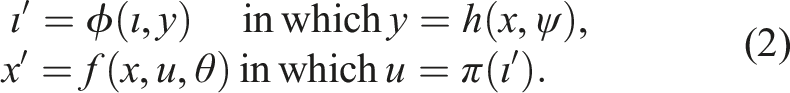

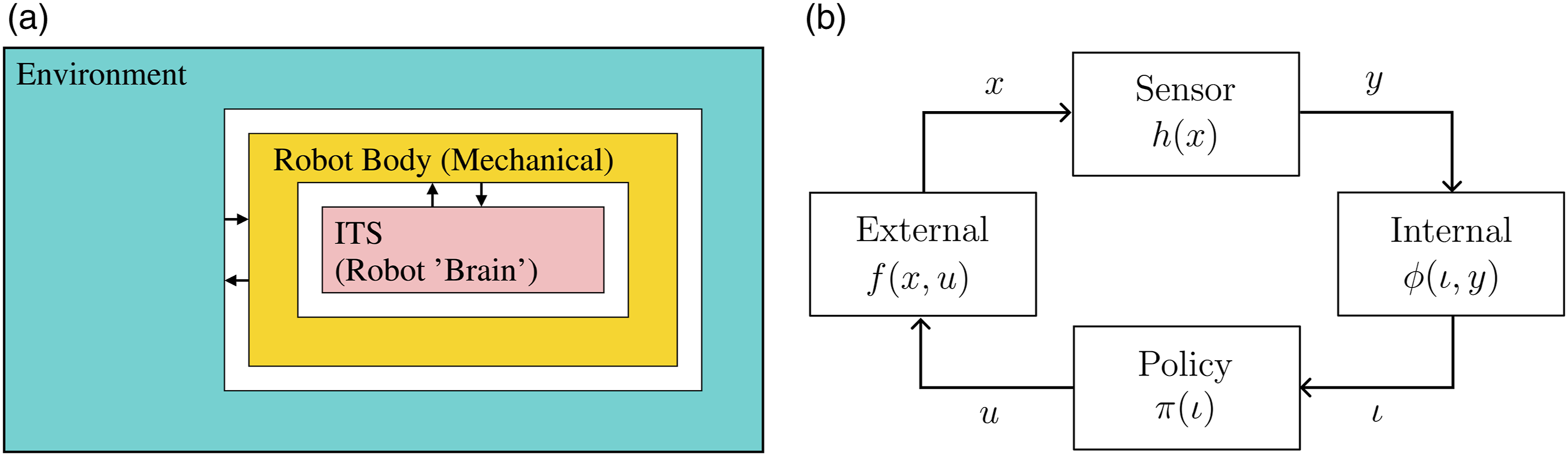

The coupled internal-external systems formulation can be extended to include disturbances affecting the external system and the sensor. In particular, we can define two disturbance generating systems, with outputs θ ∈ Θ and ψ ∈ Ψ, that are influencing the external system and the sensor, respectively (see Figure 2). Mathematically, the external system with disturbances is (X, U × Θ, f), where f : X × (U × Θ) → X is a state transition function for the external system under disturbances. Thus, the disturbances merely add a new dimension to the control parameters of the system. In the internal–external coupling, we also assume disturbances in the sensory mapping which takes the form Disturbances may affect the external system and the sensor. Note that conditioned on the realization of these, the internal system and policy remain deterministic. An outside observer (planner/designer) may perceive the coupled system as a whole.

Note that neither θ, which affects the state transition function of the external system, nor ψ, which affects the sensor mapping, is directly available to the internal system

There are two possibilities for how the information regarding the disturbances can be specified: nondeterministic and probabilistic. In the nondeterministic case, the set Ψ and possibly a subset Ψ(x) ⊆Ψ is specified for all x ∈ X, in which Ψ(x) represents the set of all ψ that can be realized for each x. In the probabilistic case, assuming that the disturbances are generated by a system that is Markovian, such that they do not depend on the previous stages, a probability distribution over Ψ can be specified for each x. This will be denoted as P(ψ∣x). The disturbances affecting the external system can be specified similarly to those that affect the sensor. In the nondeterministic case, the set Θ(x, u) ∈ Θ is known for each (x, u) ∈ X × U. In the probabilistic case, a probability distribution over Θ, that is, P(θ∣x, u), can be specified for each (x, u).

2.3. Generalizing to transition systems

A transition system is a triple (S, Λ, T), in which, S and Λ are some sets (possibly equipped with some structure, for example, topology), and T ⊆ S × Λ × S is a ternary relation. Here S is the set of states, Λ is the set of labels for transitions between elements of S, and a triple (s, λ, s′) belongs to T if there is a transition from s to s′ labeled by λ. A special case is when for each (s, λ) ∈ S × Λ there is a unique s′ ∈ S with (s, λ, s′) ∈ T. Then, T defines a function τ: S × Λ → S. These are called deterministic transition systems, and sometimes also (open) dynamical systems. An extensive analysis of those and their coupling is explored by (Spivak, 2015).

All models in Sections 2.1 and 2.2 are deterministic transition systems: the external, the internal, the disturbed versions, and their couplings are all deterministic transition systems. 2 Note that if Λ is a singleton, the system (S, Λ, τ) is equivalent to a discrete time autonomous dynamical system. If (S, Λ, τ) is a deterministic transition system, S and Λ are finite, s0 ∈ S, and F ⊆ S, then (S, Λ, τ, s0, F) is a finite automaton as defined in (Sipser, 2012, Definition 1.5). If not stated otherwise, we do not assume our systems to be finite. In Section 5.3, we explore connections between our theory with the theory of finite systems.

The following notion of state-relabeled transition systems was introduced in (Weinstein et al., 2022) to model the internal and external systems.

A state-relabeled transition system is the quintuple (S, Λ, T, σ, L) in which σ: S → L is a labeling function and (S, Λ, T) is a transition system. The function σ is the labeling function and L is the set of labels.

A state-relabeled transition system is closely related to the Moore machine which is a state-relabeled transition system with a fixed initial state, a finite set of states, and finite sets of input and output alphabets (finite Λ and L) (Moore, 1956).

In our framework, a labeling function σ serves two purposes; it enables a potential coupling by matching output of one system to the input of another, and acts as a categorization of the states of the system being labeled. Preimages of a labeling function σ induce a partition of the state space S into sets whose elements are indistinguishable through sensing. Let S/σ be the set of equivalence classes [s] σ induced by σ such that S/σ = {[s] σ ∣s ∈ S} and [s] σ = {s′ ∈ S∣σ(s′) = σ(s)}. Then, using these equivalence classes, we can define a new transition system called the quotient of (S, Λ, T) by σ.

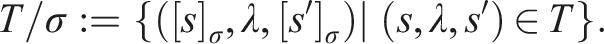

The quotient of (S, Λ, T) by σ is the transition system (S/σ, Λ, T/σ), in which

Note that (S/σ, Λ, T/σ) is a reduced version of (S, Λ, T), in the sense that the map s→[s] σ is onto, but not necessarily one-to-one. 3 We might be interested in finding a labeling function σ such that the corresponding quotient transition system is as simple as possible while ensuring that it is still useful. In the following sections, we will provide motivations for a reduction and discuss in more detail the requirements on σ for the quotient system to be useful.

The external and internal systems can be written as state-relabeled deterministic transition systems (X, U, f, h, Y) and

3. Sufficient information transition systems

3.1. Information transition systems

In the general setting, an I-state corresponds to the available (stored) information at a certain stage with respect to the action and observation histories. An I-space is a collection of all possible I-states. We will use the acronym ITS to refer to an information transition system, that is, a transition system whose state space is an I-space.

We have already used the notion of an I-space when modeling the internal system representing the robot brain, which we view as an ITS. Here, we extend the notion of an ITS to include different perspectives from which the external and the coupled systems can be viewed. In particular, we identify three perspectives; • a planner, • a plan executor, • and an (independent) observer.

With a slight abuse of previously introduced notation and terminology, we use the term internal to refer to any system that is not the external system and we use

Suppose

Before any policy is fixed, a DITS of the form

Consider the DITS

In this paper, an observation will refer to a sensor reading y. However, when we discuss an (independent) observer described over the coupled system, the input to this observer system can be a function of any variable of the coupled system, for instance action, information state or the state of the external. If the coupled system is disturbed, the disturbances can be observed by the observer too.

3.2. History information spaces

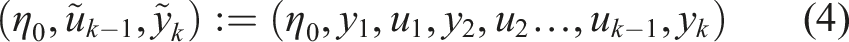

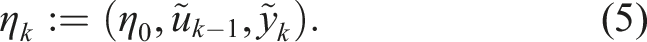

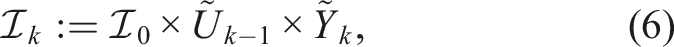

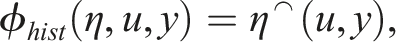

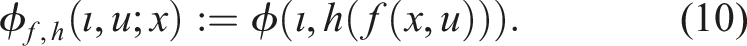

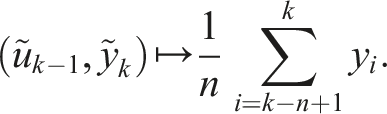

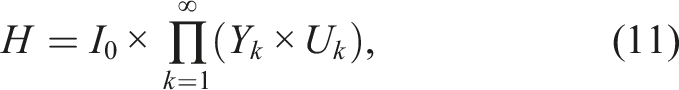

The most fundamental I-space is the history I-space, which we denote by

Let U and Y be the sets of possible actions and observations respectively. The elements of

The description of initial conditions in the set

3.3. Sufficient state-relabeling

In (Weinstein et al., 2022), we have introduced a notion of sufficiency that generalizes the definition introduced in (LaValle, 2006: Chapter 11) and is presented here for completeness.

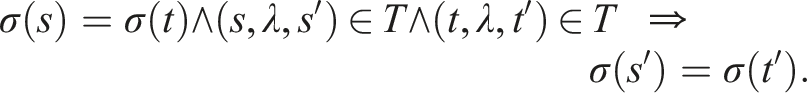

Let (S, Λ, T) be a transition system. A labeling function σ: S → L defined over the states of a transition system is sufficient if and only if for all s, t, s′, t′ ∈ S and all λ ∈ Λ, the following implication holds:

If σ is defined over the states of a deterministic transition system (S, Λ, τ), then σ is sufficient if and only if for all s, t ∈ S and all λ ∈ Λ, σ(s) = σ(t) implies that σ(τ(s, λ)) = σ(τ(t, λ)).

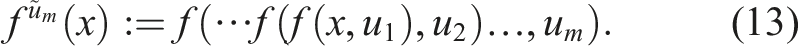

Consider the stage-based evolution of the state-relabeled deterministic transition system corresponding to the external system (X, U, f, h, Y) with respect to the action (control input) sequence

Now, consider an internal system with a labeling function

3.4. Derived information transition systems

Even though it seems natural to rely on a history ITS, the dimension of a history I-state increases linearly, and the size of the history I-space increases exponentially, as a function of the stage index, making it impractical in most cases. Thus, we are interested in defining a reduced ITS that is more manageable, due to, for example, lowered requirements for memory or computing power. Furthermore, this would largely simplify the description of a policy for a planner or a plan executor.

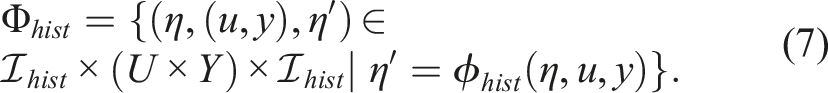

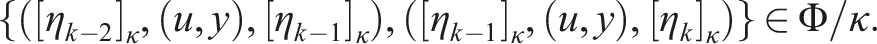

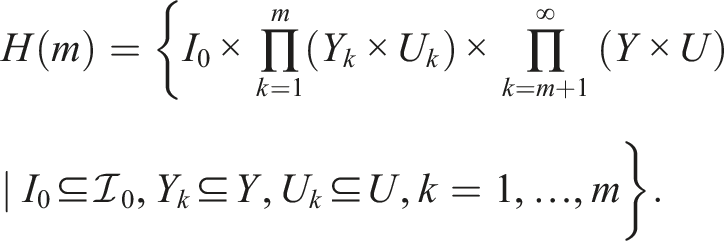

Recall the quotient of a transition system by a labeling function (see Definition 2). We rewrite

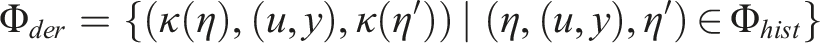

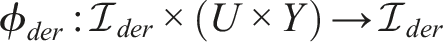

We can introduce an information mapping (I-map)

It is crucial that the derived ITS is a DITS so that the transition from the current label to the next can be determined using only the derived ITS, without making reference to the history ITS. The reason for this requirement is straightforward for an observer as the I-states correspond to what is inferred about the external system, given observation history (potentially accompanied by the action history). The same applies for the planner and the plan executor to be able to describe and execute a policy. Considering the quotient system derived by κ from the DITS (by definition)

■

Note that Proposition 1 holds also in the case of a generic ITS

Whether the quotient system derived from As ϕ

hist

is a function with domain

For an I-map

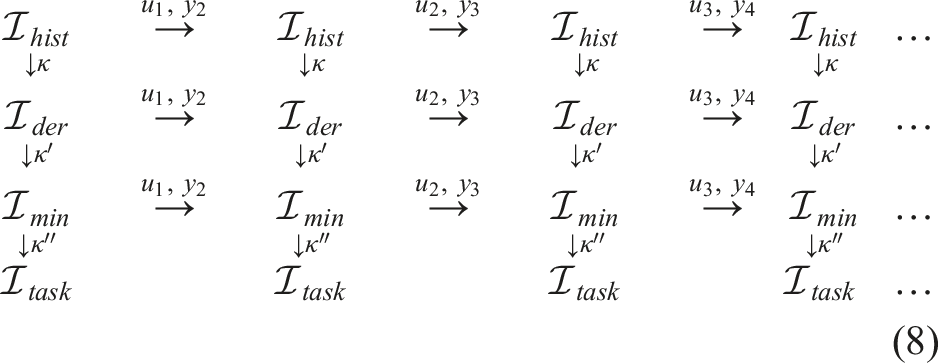

(Weinstein et al., 2022, Proposition 2.37). Thus, we can use the labels introduced by an κ as the new (derived) I-space and the corresponding quotient system as the derived ITS. Suppose an I-map κ is sufficient. Then, the derived ITS is a DITS, meaning that given an I-state ιk−1 in the derived space Note that we can similarly define an I-map that maps any derived I-space to another. An example is given in (8) as the mappings

3.5. Model-based and model-free

In machine learning, control, and robotics literature, methods are often categorized into model-based and model-free (or data-driven) ones. Informally, using our setup, a model-based scenario is one where the derived I-state is allowed to depend on knowledge about the external system, the sensor mapping, the initial state, and the disturbances acting on the external system or on the sensors (if there are any). The model-free scenario in contrast cannot depend on those, but can depend on data, which in our case is the history I-states, that is, the sequences of actions and observations.

In Section 2.1 we have defined internal-external coupled systems. Their coupling (1) produces an autonomous system

Note that the internal system only has access to the current information state

The distinction between model-based and model-free is also seen in the initial states η0 of the history I-space. In model-based setups, typically η0 is a subset of X, or a probability distribution over X while in model-free setups η0 is an empty sequence. Examples 9 and 11 are examples of model-free and model-based I-spaces respectively.

Note that this formalization implies that model-free methods are a subset of model-based. This is because the internal perspective is itself a function of the entire coupled system, so anything that is a function of the internal perspective is by transitivity also a function of the entire coupled system. This matches the intuition that model-based are ones where more information is available. We leave the exploration of more aspects of this distinction and its formalization for future work.

We now present two examples that illustrate model-based and model-free derived ITSs.

Suppose the initial history information state encodes a probability distribution over X such that η0 = P(x1). We refer to the coupled system including the disturbances described in (2). A Markovian, probabilistic model of the disturbances is given in the form of conditional distributions P(ψ∣x) over Ψ, and P(θ∣x, u) over Θ. In the former, conditioning takes place relative to external states x ∈ X, and in the latter relative to state-action pairs (x, u) ∈ X × U. Using the definitions of f and h given in (2), P(y

k

∣x

k

) and P(xk+1∣x

k

, u

k

) can be derived from P(ψ

k

∣x

k

) and P(xk+1∣x

k

, u

k

) for all stages k.

Let

Note that the Kalman filter is a special case of a Bayesian filter when f and h are linear and the disturbances are Gaussian. These specifications imply that all the posterior distributions are Gaussian as well. Therefore, in this special case, the range of κ

prob

is implicitly restricted to the set of all Gaussian distributions, denoted as

The following is an example of a model-free derived ITS.

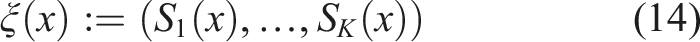

Let

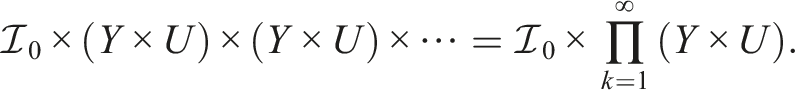

3.6. Lattice of information transition systems

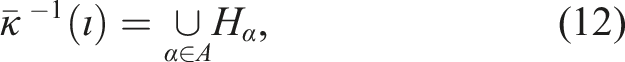

We fix

An I-map κ′ is a refinement of κ, denoted as κ′⪰κ, if

Let

At the top of the lattice, there is the partition induced by an identity I-map (or equivalently, by a bijection),

As motivated in previous sections, we are interested in finding a sufficient I-map such that the quotient ITS derived from the history ITS is still deterministic. Notice that the constant I-map κ

const

is sufficient by definition since for all (u, y) ∈ U × Y, and all

Let

It is shown in (Weinstein et al., 2022, Theorem 4.19) that the minimal sufficient refinement of κ defined over the states of a deterministic transition system (S, Λ, τ) is unique up to relabeling, namely if κmin and

4. Solving tasks minimally

4.1. Definition of a task

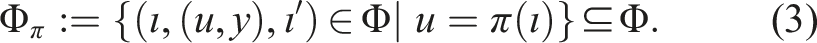

In this section, we formulate general planning and filtering tasks within the framework of information transition systems. We distinguish between two categories: 1) active, which entails planning and executing an information-feedback policy that forces a desirable outcome in the external system, and 2) passive, which refers to only observing the external system without being able to effect changes. We next describe active and passive tasks for the model-free and model-based I-space formulations, introduced in Section 3.5. In the model-free case, tasks are specified using a logical language over

Solving a passive task only requires maintaining whether a sentence is satisfied, rather than forcing an outcome; this corresponds to filtering. Whether the task is active or passive, if satisfiability is concerned with a single, fixed sentence, then a task-induced labeling (or task labeling for short), that is, κ

task

, over

Some naturally occurring robot tasks can only be described in terms of infinite sequences of actions and observations. These are called infinitary tasks. For example, cycling through a finite sequence of subsets of X indefinitely while avoiding others (Fainekos et al., 2009) can only be described in terms of infinite histories. For this task, whether the sentence of interest is satisfied cannot be determined based on a finite history of any given length. However, the histories that fail, that is, those for which the sentence of interest becomes false, can be defined in terms of finite histories (namely those that result in a state that needed to be avoided). The interested reader can refer to (Kress-Gazit et al., 2009) for examples based on linear temporal logic (LTL).

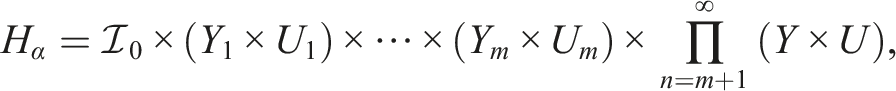

Infinitary tasks are defined on the set of infinite histories

The preimages of an infinitary task labeling

Now, let

To characterize infinitary tasks in terms of deciding their satisfiability, we rely on topology. Assume that some topology is defined for the sets

In the simplest nontrivial case, a task labeling

Due to the definition of the sets H(m), the membership of a given history in an open set is determined by some finite initial segment of that history. Therefore, based on a finite length segment of a given history, we can determine its membership in the success set of an open task, in the fail set of a closed task, and both the success and fail sets for a clopen task. In these cases, task satisfiability can be defined in terms of the elements of

Below are examples of typical model-based task descriptions with their corresponding definitions in terms of infinite histories that can be expressed using a task-labeling over

The success set of this task consists of those histories that correspond to the external system arriving to x in some finite time. If

The fail set of this task consists of those histories that correspond to the external system arriving at x from some initial state x0. Since this always happens in finite time (or not at all), the fail set can be expressed as the union

The success set of this task may be written as the union, over

An infinitary task is not necessarily either open or closed. One example of this are tasks that can be expressed as so-called G δ sets (Dugundji, 1978, Section 3.6), that is, infinite intersections of open sets (see Example 7).

In this case, neither the success set or fail set of this task can be defined in terms of the sets H(m), since no finite length history can rule out either success or failure. For each In this paper, we consider tasks that are expressed as a labeling over

4.2. Problem families

It is assumed that the state-relabeled transition system (X, U, f, h, Y) describing the external system is fixed, but it is unknown or partially known to the observer (a robot or other observer).

Filtering (passive case) requires maintaining the label of an I-state attributed by κ task . Since κ task is not necessarily sufficient, we cannot guarantee that the quotient system by κ task is a DITS (Propositions 1 and 2). This implies that relying solely on the quotient system by κ task , we cannot determine the class that the current history belongs to (see the last row in (8)). Hence, we cannot determine whether a sentence describing the task is satisfied (or which sentences are satisfied).

Suppose the sets U and Y are specified, and at each stage k, the action uk−1 is known and y

k

is observed. The following describes the problem for a passive task given a state-relabeled (history) ITS

Find a sufficient refinement of κ

task

. Note that

Suppose

Notice that Problem 1 does not impose an upper bound. At the limit, a bijection from

We now consider a basic planning problem for which

Let

Most problems in the planning literature consider a fixed DITS and look for a feasible policy for

We can further extend the planning problem to consider an unspecified internal system. This entails finding a DITS

Suppose We emphasize that finding a DITS for a planning problem differs from Problems 1 and 2 in the sense that we are not looking for a refinement of κ

task

. The reason for this difference is because κ

task

can already be sufficient; hence, it is the minimal sufficient refinement of itself. However, this does not necessarily imply the existence of a feasible policy defined over

Let

Informally, a minimal DITS for π implies that one cannot further reduce the quotient system by merging equivalence classes induced by κ, while simultaneously ensuring that when coupled to the external system that is initialized at the same state, the coupling would result in the same observation and action histories as

There may be multiple pairs of (κ, π) that solve the same problem. Given two DITS,

Suppose a feasible policy

■

4.3. Learning a sufficient ITS

Although learning and planning overlap significantly, some unique issues arise in pure learning (Weinstein et al., 2022). This corresponds to the case when

We can address both filtering and planning problems defined previously within this context, considering model-free and model-based cases. In the model-free case, the task is to compute a minimal sufficient ITS that is consistent with the actions and observations. Variations include lifelong learning, in which there is a single, long history I-state, or more standard learning in which the system can be restarted, resulting in multiple trials, each yielding a different history I-state. In the model-based case, partial specifications of X, f, and h may be given, and unknown parameters are estimated using the history I-state(s). Different results are generally obtained depending on what assumptions are allowed. For example, do identical history I-states imply identical state trajectories? If not, then set-based, nondeterministic models may be assumed, or even probabilistic models based on behavior observed over many trials and assumptions on probability measure, priors, statistical independence, and so on.

5. Applying the theory

In this section we provide some simple filtering and planning problems and show how the ideas presented in the previous sections apply to these problems. All problems defined in the previous section can be posed in a learning context as well. Then,

5.1. Red-green gates

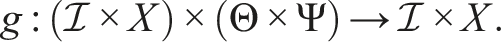

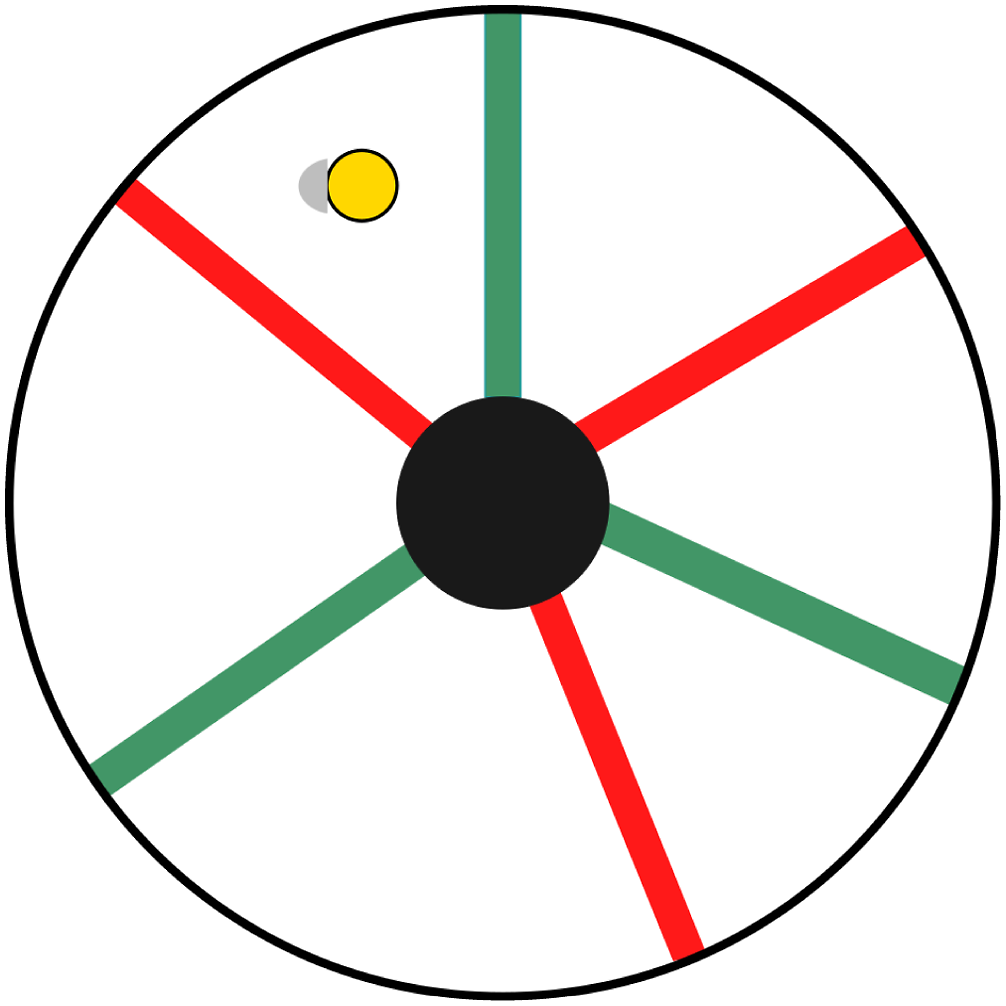

This example is inspired by (Tovar et al., 2008). Let Environment used in Examples 9, 10, and the obstacle (an open disk) is shown in black.

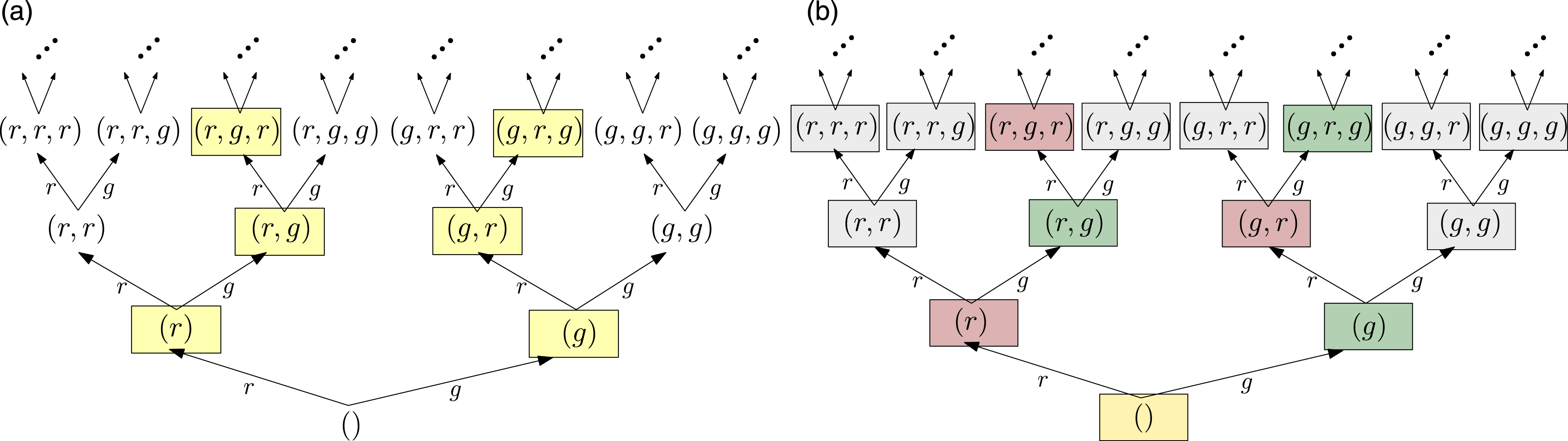

This example considers a filtering problem from the perspective of an independent observer. Suppose the actions taken by the robot are not observable and the only information about the system is the history of readings coming from the robot’s color sensor; for example, (r, r, r, g, r, g). Then, the history I-space is the set of all finite length sequences of elements of Y, that is,

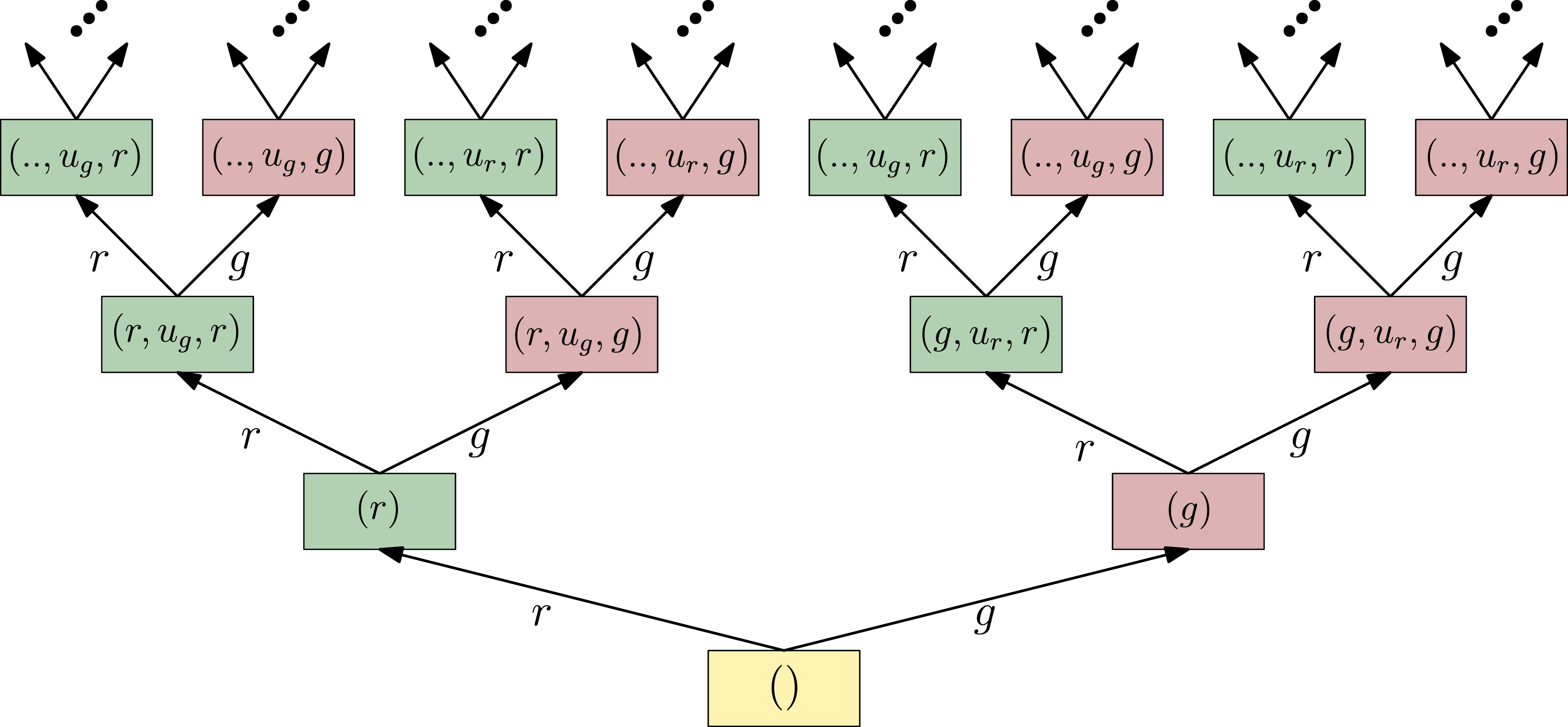

(a) State-relabeled history ITS described in Example 9, and the labeling function κ task . States colored yellow are the ones that do not violate the task description. (b) Equivalence classes induced by κ′; the minimal sufficient refinement of κ task .

Task labeling κ

task

defined in Example 9 is not sufficient.

■ We can obtain a sufficient refinement of κ

task

, defined as

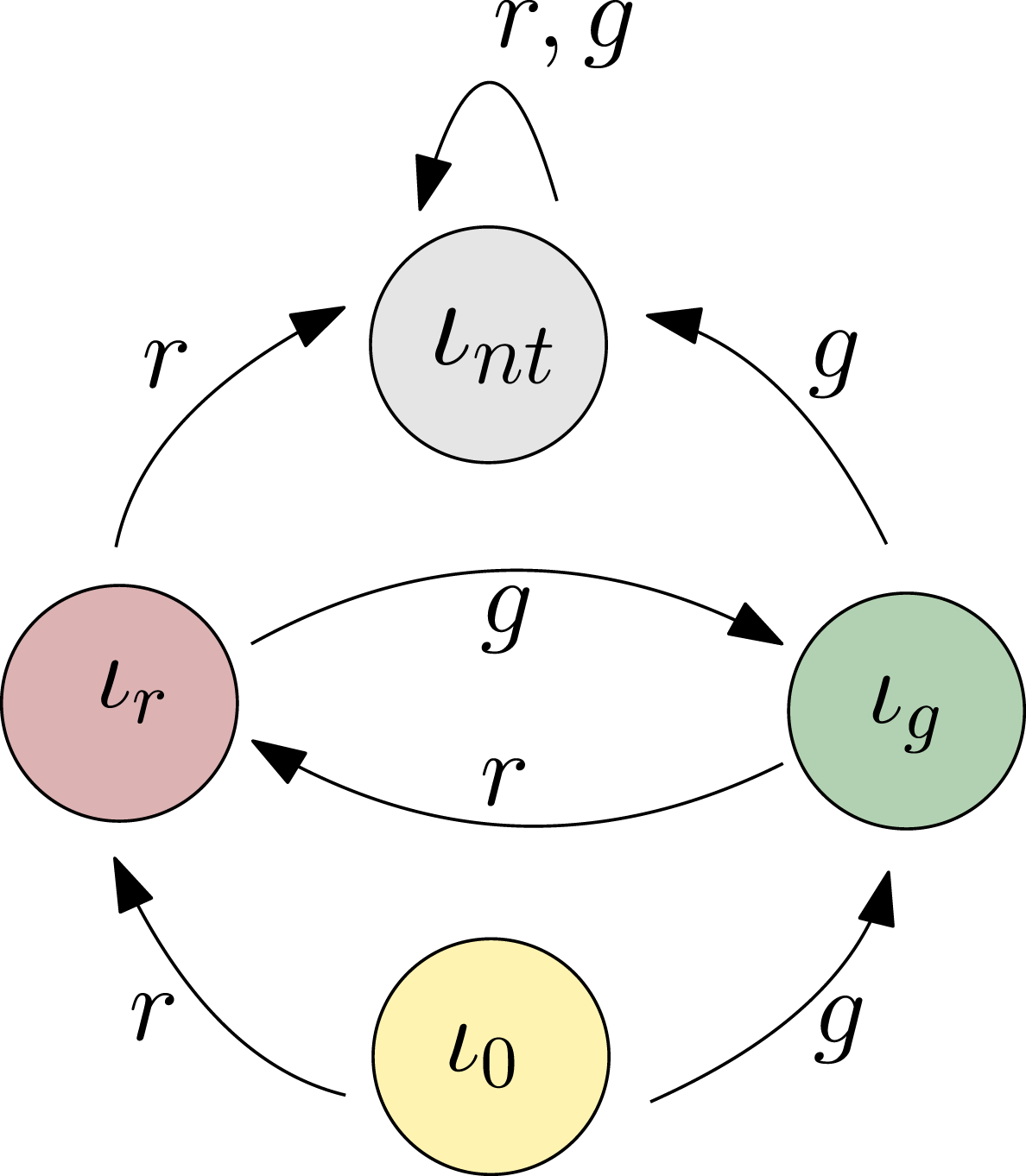

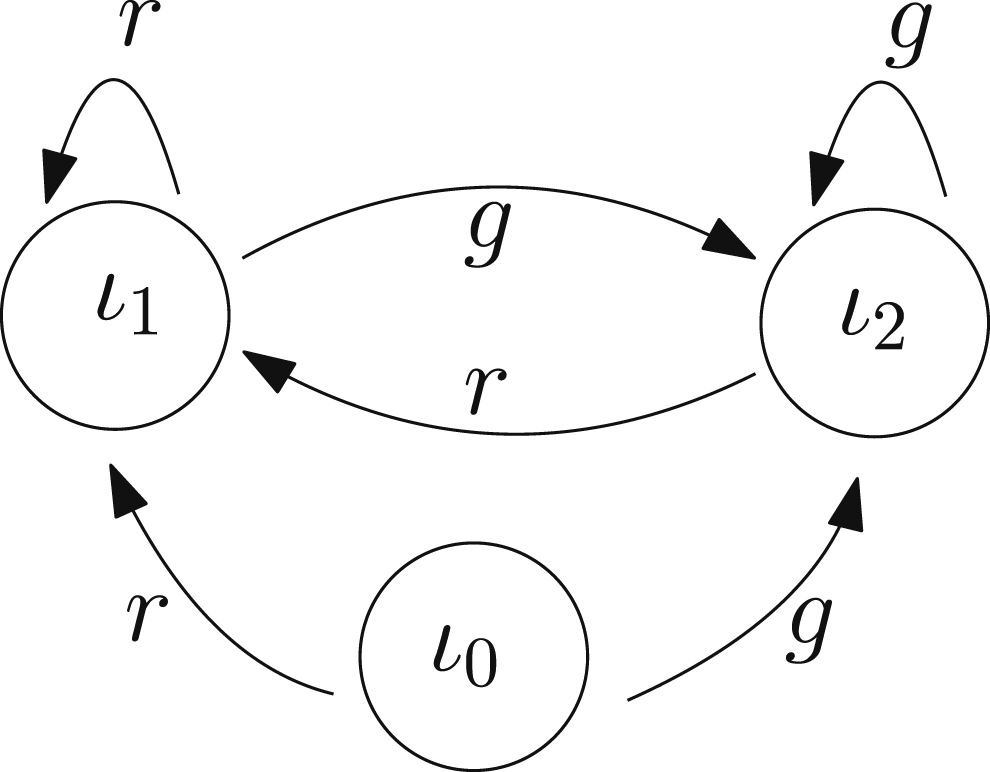

Quotient by κ′ of the state-relabeled history ITS shown in Figure 4(b).

κ′ as defined above is a minimal sufficient refinement of κ

task

.

■ Suppose the robot has a boundary detector, and it is capable of executing a bouncing motion that involves move forward and rotate in place. The set of actions is defined as U = {u

r

, u

g

}, in which u

g

represents a bouncing motion that allows the robot to traverse the green gate but not the red one, u

r

allows it to traverse the red gate but not the green one. For all the actions, the robot also bounces off of the boundary. We assume that the boundary detector and color sensor readings do not arrive simultaneously, and that the resulting trajectory will strike every open interval in the boundary of every region infinitely often, with non-zero, non-tangential velocities (Bobadilla et al., 2011).

We now consider a planning problem (that belongs to the class described in Problem 4) for which the goal is to ensure that the robot crosses the gates consistently. The history I-space of the planner is A policy

The minimal DITS for π is the quotient of The quotient system, that is the minimal DITS, is shown in Figure 7. Let

History ITS

DITS describing the internal system solving the planning problem described in Example 10.

5.2. L-shaped corridor

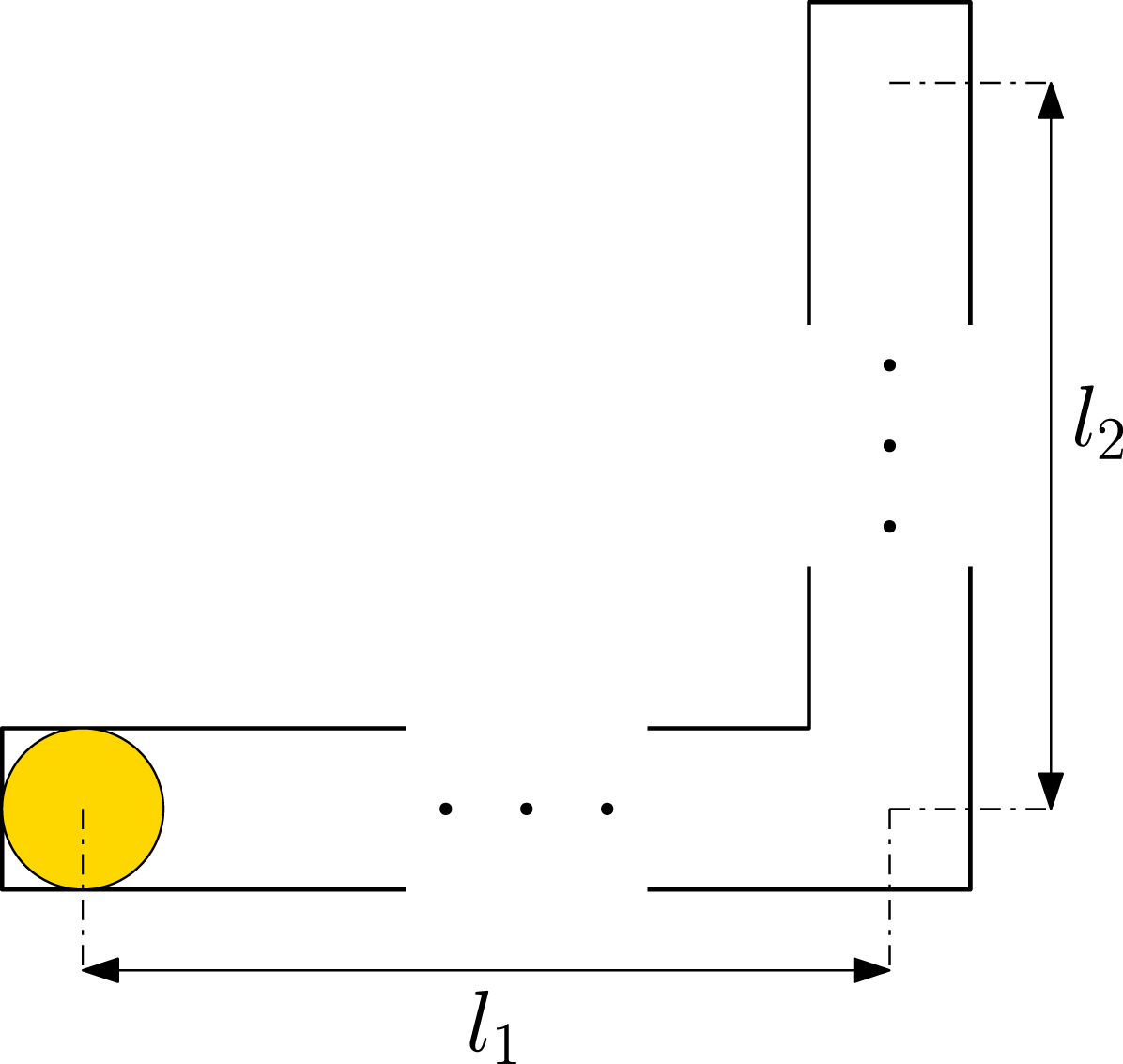

Consider a robot in an inverted L-shaped planar corridor (Figure 8). Let L-shaped corridor; l1, l2 ≤ l. For any corridor, the robot starts at the left-most part of the corridor which corresponds to the coordinates (0, 0).

Consider a model-based history ITS with η0 ⊂ X that specifies the initial position as q0 = (0, 0) which corresponds to the left-most bottom square of the mirrored L-shape (Figure 8) but does not specify the environment so that it can be any

Let κ

ndet

and κ

task

be the I-maps defined in Example 11. Then, κ

ndet

is a minimal sufficient refinement of κ

task

.

■ A policy can be described over

5.3. Diversity-based Inference (DBI) as a derived ITS

In this and the following section, we present DBI and its probabilistic counterpart PSR as deterministic ITSs. The core idea in DBI (Rivest and Schapire, 1993, 1994) is to gather information about the environment through action-observation experiments. The environment is modeled as a finite state Moore machine (finite state automaton), formally defined as a 6-tuple

The cardinality

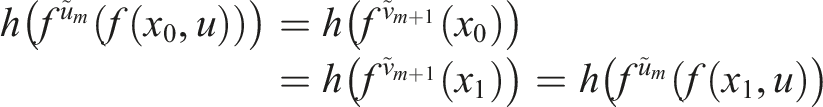

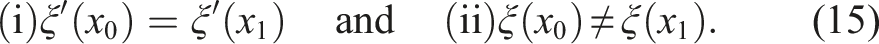

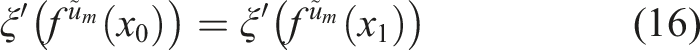

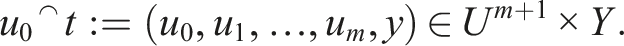

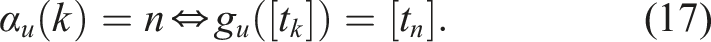

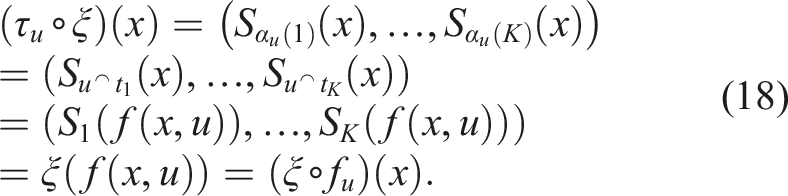

Suppose ξ is not the minimal sufficient refinement of h. Let ξ′ be a minimal sufficient refinement of h which always exists, see Remark 3. Thus, we have ξ ≻ ξ′⪰h, so there are x0, x1 ∈ X with Since ξ′ is sufficient, it follows from (15) (i) that ξ′(f(x0, u)) = ξ′(f(x1, u)) for all actions u ∈ U. Using the sufficiency of ξ′ again m times, it follows that ■ Now, denote the concatenation of an action u0 ∈ U and a test Since

Let • • • • s0 = (S1(x0), …, S

K

(x0)). A machine/environment

Then, ξ: X → S is onto by definition of the set S. To show injectivity, assume ξ(x) = ξ(x′) for some x, x′ ∈ X, which means that S

k

(x) = S

k

(x′) for all k = 1, …, K. Since We still need to show that for all (x, u) ∈ X × U, the functions f

u

(x):= f(x, u) and τ

u

(s):= τ(s, u) satisfy Since ξ is a bijection, the labeling function σ in Definition 8 is well defined, and satisfies (σ∘ξ) (x) = h(x) by definition. Finally, ξ(x0) = s0 by the definition of s0. ■ To simulate the environment If we remove the assumption that

■

5.4. Predictive state representations (PSRs)

Predictive state representation (PSR) (James and Singh, 2004; Littman and Sutton, 2001), like its deterministic predecessor DBI, is based on the idea of performing tests on the environment. The difference is that PSR assumes a statistical description of the internal-external coupling, expressed via success probabilities of tests, conditioned on past histories. Predictive state representations have been shown to be more general than POMDPs (Cassandra et al., 1997) in the sense that every POMDP model can be represented via the corresponding PSR.

Since the introduction of the original PSR, several variations of the concept have been proposed in connection to different learning algorithms that aim to discover the set of core tests and learn the associated prediction functions (Boots et al., 2011, 2013; James and Singh, 2004). So-called TPSRs (Rosencrantz et al., 2004) are adaptations of the concept where, instead of maintaining vectors of probabilities over a finite set of core tests, a linear combination of a larger set of tests is maintained instead.

We focus on the original formulation of the PSR model and show how it can be expressed in our formalism as a DITS. Let

The idea of PSR is to identify minimal core sets of tests Q = (t1, …, t

m

) which have the property that, given any test t∉Q and some history

Formally, a PSR is a 5-tuple (U, Y, Q, F, m0), where U is the set of actions, Y is the set of observations, Q is a core set of tests, F is the set of prediction functions, and m0 ∈ [0,1]|Q| is the initial prediction vector after seeing the null history η0 = (). A PSR model provides a complete (probabilistic) description of the action-observation dynamics because the prediction vector Q(η

k

) can be updated with each new action-observation. For this, only the (finite number of) prediction functions f(u,y) and

6. Conclusions and future work

This paper introduced a mathematical framework for determining minimal filters and minimal feasible policies by comparing ITSs over information spaces. The minimality results are quite general without imposing strong restrictions on the underlying dynamical system (external system). We show that a large class of problems can be posed and analyzed under this framework.

Nevertheless, there are several opportunities to expand the general theory. For example, we assumed that u is both the output of a policy and the actuation stimulus in the physical world; more generally, we should introduce a mapping from an action symbol σ ∈ Σ to a control function

It is also important to extend the models to continuous time. In this case, the sensing and action histories are time parameterized functions, rather than sequences. Sufficiency must be defined in terms information mappings that apply to any time slice from 0 to t′ < t for a history that runs from time 0 to t, rather than only over discrete time steps. Some ground work has already been done in (LaValle, 2006).

Another direction is to consider the hardware and actuation models as variables, and fix other model components. This is similar to the class of problems related to co-design for which the design process of a robot given resource constraints (sensors and actuators) is sought to be automated (Shell et al., 2021; Censi, 2016; Zardini et al., 2021).

In this paper, we considered the theoretical limits on the DITS necessary to express a policy defined over a history ITS. However, the problem of finding such a DITS remains as an open algorithmic challenge. Furthermore, we only considered feasible policies. An interesting direction is to analyze the information requirements for policies that are optimal with respect to a relevant objective and the trade-off between optimality and minimality. This will amount to an ordering (potentially a partial ordering) of policies in terms of (expected) cost and the minimal DITS to express such policy.

In an external-internal coupled system, the different components, I-map κ, the information transition function ϕ and the policy π share the total complexity (information content) of the internal information processing system. Of particular interest would be to explore the trade-offs between these components in terms of efficient encoding of data-structures and their successful decoding in terms of policies. Ultimately this could lead to fundamental characterizations of interaction system information content in the spirit of the minimum description length principle proposed in (Rissanen, 1978).

The mathematical theory of coupling as presented in this paper is very general. Coupling in discrete dynamical systems, and of finite automata, are special cases of it, and even continuous systems can be seen as such. Connections to other work on coupling such as (Spivak, 2015) are to be explored. Dynamic coupling has been proposed as a viable approach to a mathematical modeling of cognition from the enactivist perspective (Montebelli et al., 2008; Favela, 2020). The existing literature on the latter uses bits and pieces of dynamical systems with sporadic applications in different areas of cognitive science, but a systematic unifying study is still to be seen, especially one that has meaningful ramifications to robotics and algorithmic design. An attempt to connect philosophical ideas with those of this paper was presented by the authors in (Weinstein et al., 2022).

A grand challenge remains: The results here are only a first step toward producing a more complete and unique theory of robotics that clearly characterizes the relationships between common tasks, robot systems, environments, and algorithms that perform filtering, planning, or learning. We should search for lattice structures that play a role similar to that of language class hierarchies in the theory of computation. This includes the structures of the current paper and the sensor lattices of (LaValle, 2012; Zhang and Shell, 2021). Many existing filtering, planning, and learning methods can be formally characterized within this framework, which would provide insights into relative complexity, completeness, minimality, and time/space/energy tradeoffs.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the European Research Council Advanced Grant (ERC AdG, ILLUSIVE: Foundations of Perception Engineering, 101020977), Academy of Finland (projects PERCEPT 322637, CHiMP 342556), and Business Finland (project HUMOR 3656/31/2019).