Abstract

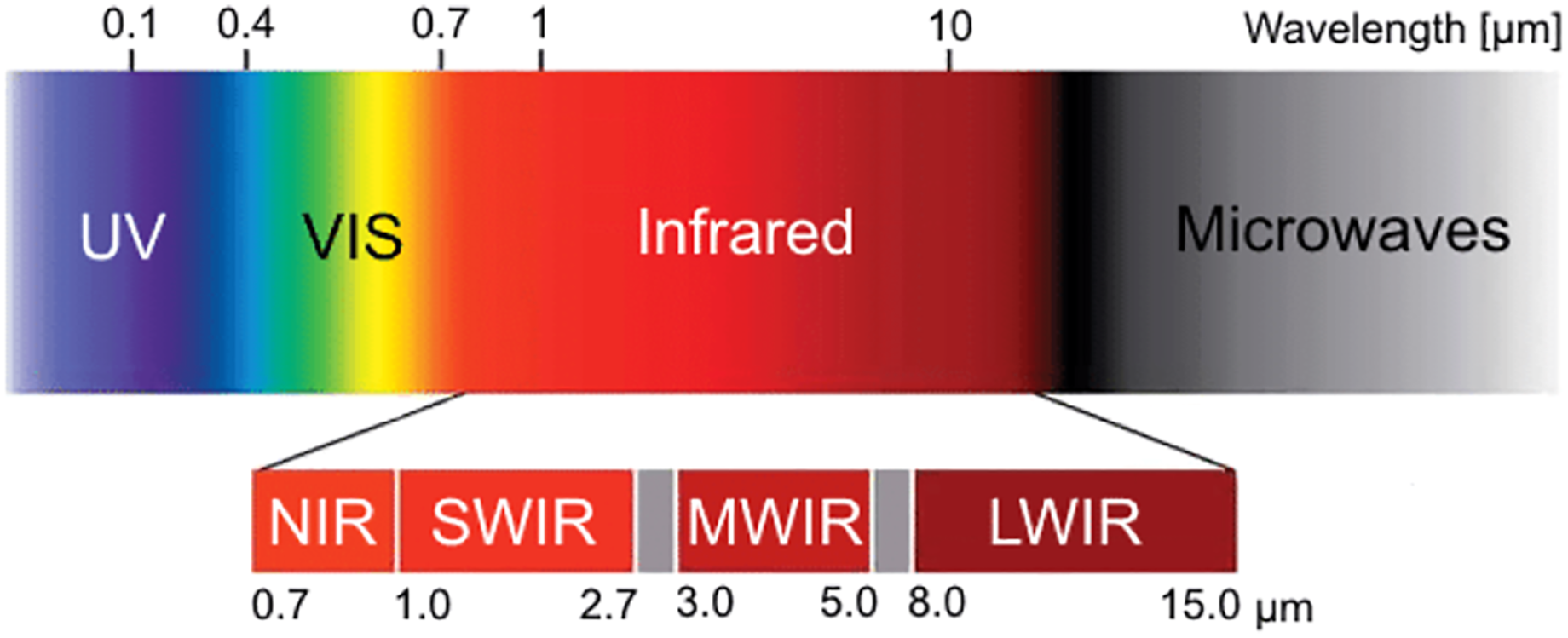

Recent advances in deep learning technology have triggered radical progress in the autonomy of ground vehicles. Marine coastal Autonomous Surface Vehicles (ASVs) that are regularly used for surveillance, monitoring, and other routine tasks can benefit from this autonomy. Long-haul deep-sea transportation is an additional opportunity. These two use cases present very different terrains: coastal waters contain many obstacles, structures, and human presence, while the deep sea is mostly devoid of such obstacles. Variations in environmental conditions are common to both terrains. Robust labeled datasets mapping such terrains are crucial for improving the situational awareness that can drive autonomy. However, only a limited number of such maritime datasets are available, and these primarily consist of optical images. Although long wave infrared (LWIR) is a strong complement to the optical spectrum that helps in extreme light conditions, a labeled public dataset with LWIR images does not currently exist. In this paper, we fill this gap by presenting a labeled dataset of over 2900 segmented LWIR images captured in a coastal maritime environment over a period of 2 years. The images are labeled using instance segmentation and classified into seven categories: sky, water, obstacle, living obstacle, bridge, self, and background. We also evaluate this dataset across three deep learning architectures (UNet, PSPNet, DeepLabv3) and provide a detailed analysis of its efficacy. While the dataset focuses on coastal terrain, it can equally help deep-sea use cases: such terrain has less traffic, and a classifier trained on a cluttered environment should be able to handle sparse scenes effectively. We share this dataset with the research community in the hope that it spurs new scene understanding capabilities in the maritime environment.

1. Introduction

In recent years, advances in deep learning algorithms have led to an exponential growth in the research on autonomy of land vehicles. Some of the key catalysts for this growth have been publicly available labeled datasets, open-source software, publication of novel deep learning architectures, and increase in hardware compute capabilities. The maritime environment, with an abundance of periodic tasks such as monitoring, surveillance, and long haul transportation presents a strong potential for autonomous navigation. Availability of good datasets is a key dependency for gaining autonomy. The challenge lies in interpreting this data and creating labeled datasets that can help train deep learning architectures.

Electro-Optical (EO) cameras are predominantly used to capture images because they are inexpensive and ubiquitous. In autonomous driving, sign posts, traffic lights, lane markings, etc. can be easily recognized from a color image. The abundance of Convolutional Neural Network (CNN) architectures (Krizhevsky et al. (2012)) that learn from labeled images has helped make EO cameras mainstream. Two methods are commonly used for annotating an image. The first detects objects of interest by drawing bounding boxes around them. The second semantically segments the image, assigning a class label to each pixel. The first method is faster as it focuses on specific targets; however, the second is more refined as it segments the entire scene.

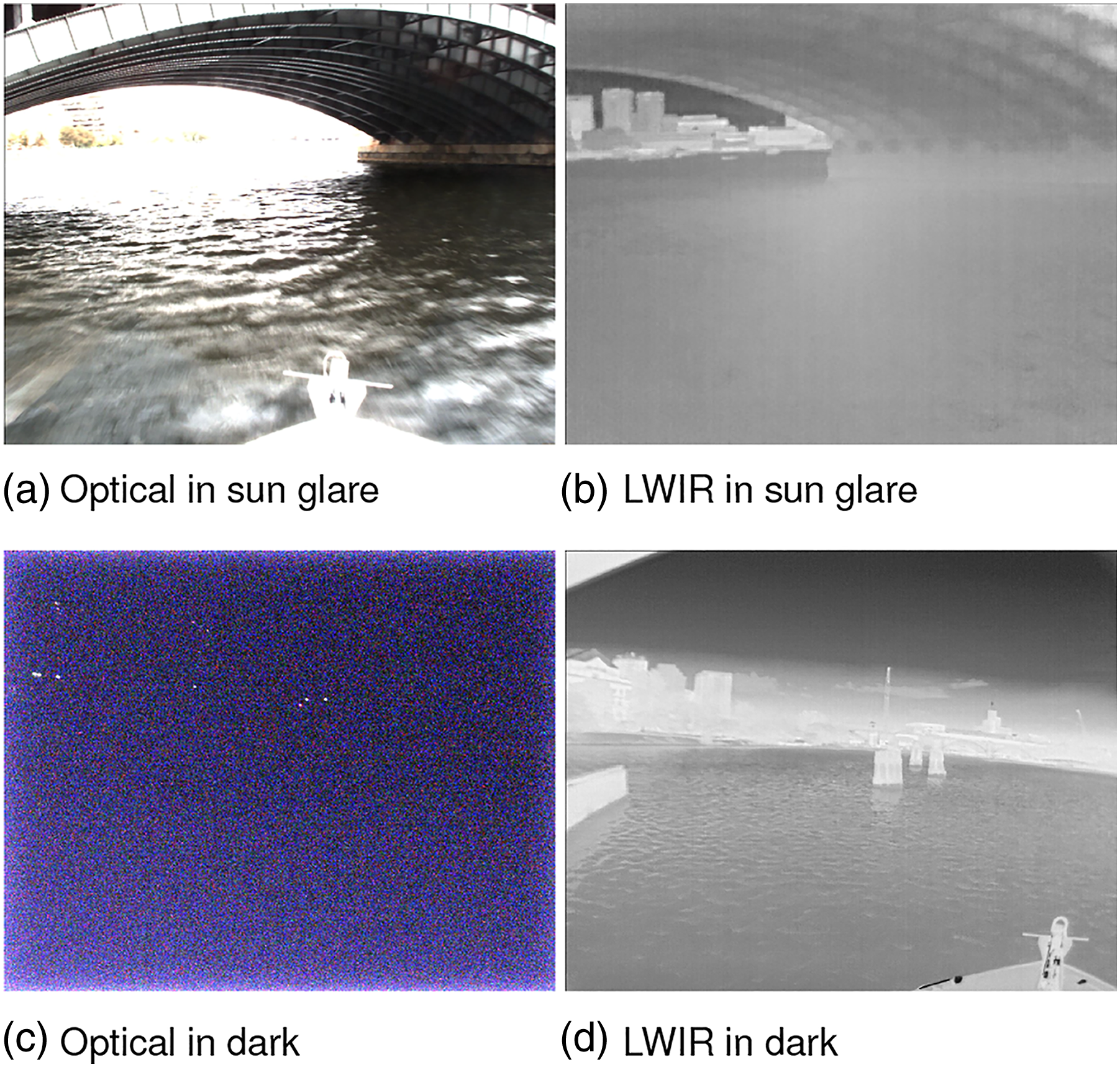

The maritime environment is exposed to sky and water, and the lighting conditions are therefore quite different from those on ground terrain. Glitter, reflection, water dynamism, and fog are common. These conditions deteriorate the quality of optical images. Horizon detection is also a common problem with optical images. On the other hand, LWIR images offer distinct advantages in such extreme light conditions, as shown experimentally by Robinette et al. (2019) and Nirgudkar and Robinette (2021), and as shown in Figure 1. Marine robotics researchers have used LWIR sensors in their work (Schöller et al. (2019); Rodin and Johansen (2018); Qi et al. (2011); Wang et al. (2017)). Most notably, Zhang et al. (2015) built a labeled dataset of paired visible and LWIR images of different types of ships in the maritime environment. However, this dataset has certain limitations, which are discussed in the next section.

Figure 1. LWIR versus optical cameras in extreme light conditions: In (a), the sun’s glare obstructs the view for the optical camera, while visibility is clear in LWIR (b). In (c), visibility from the optical camera is almost zero in the dark, whereas LWIR continues to give a clear picture (d).

In this paper, we present a dataset of over 2900 LWIR maritime images that capture diverse scenes such as cluttered marine environments, construction, humans, marine wildlife, and nearshore views across various seasons and times of the day in the Massachusetts Bay area, including the Charles River and Boston Harbor. The dataset images are segmented using instance and semantic segmentation into 7 classes. We also evaluate the performance of this dataset across 3 popular deep learning architectures (UNet, PSPNet, DeepLabv3) and present our findings with respect to obstacle detection and scene understanding. The dataset is publicly available and can be downloaded from https://github.com/uml-marine-robotics/MassMIND (Nirgudkar et al. (2022)). We aim to stimulate research interest in the field of perception in maritime autonomy through this dataset.

The remainder of the paper is organized as follows. Section 2 outlines the current state of the art in the maritime domain. Section 3 describes the hardware assembly used for data acquisition. Section 4 elaborates on the dataset and the segmentation methods, and Section 5 presents evaluation results against the 3 architectures. We draw conclusions and highlight future work in Section 6.

2. Related work

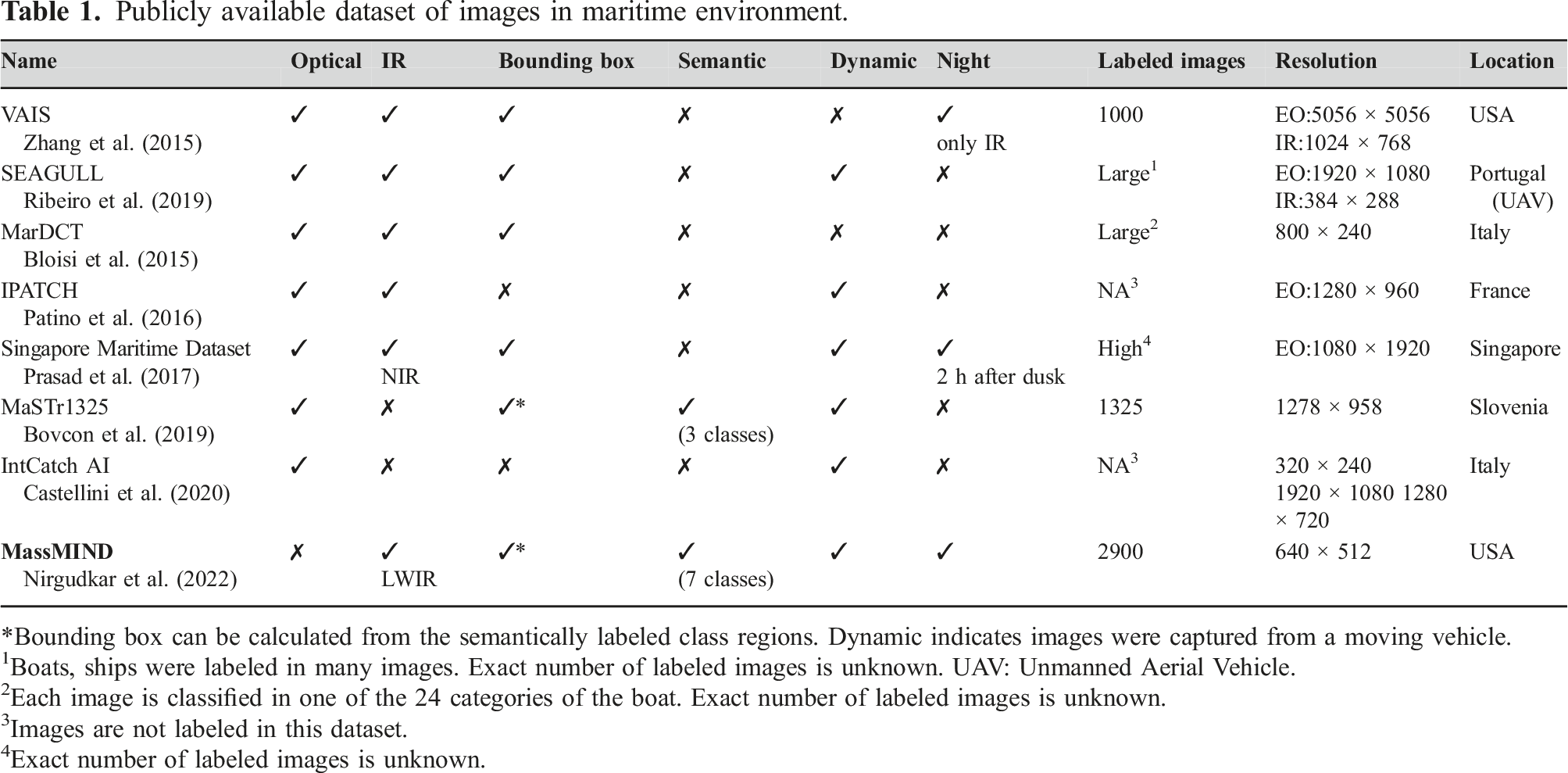

Significant research has been carried out in the field of marine robotics over the past few years, and researchers have developed multiple datasets that can aid autonomy. These datasets vary in size and complexity; however, their main purpose revolves around environment monitoring and surveillance. Castellini et al. (2020) used autonomous aquatic drones to capture multivariate time series data for water quality management. The dataset contains parameters such as water conductivity, temperature, and oxygen level, and is useful for time series analysis. Bloisi et al. (2015) used an ASV in Venice, Italy to create a dataset of optical images for boat identification and classification. The ground truth in this dataset is provided in the form of bounding boxes. Images in this dataset contain close-up views of boats, and each image is classified into one of 24 boat categories. Ribeiro et al. (2019) captured images from an unmanned aerial vehicle (UAV) 150–300 m above the ocean surface for research on sea monitoring and surveillance. Though multi-spectrum cameras, including IR cameras, were used, the dataset is not annotated and hence cannot be used readily for model training. Another problem is that the images are aerial pictures and therefore do not have the same perception properties as the view from a vehicle on the water surface. Zhang et al. (2015) created a dataset of paired visible and LWIR images of various ships in the maritime environment. The annotations are provided in the form of bounding boxes around ships. However, the selection is limited to images where ships occupy more than 200 pixels of the image area. The dataset is therefore not adaptable for general use, where obstacles can take any shape or form, such as buoys, small platforms, and construction in water. More importantly, the images were captured from a static rig mounted on the shore and therefore lack the dynamism found in real-life scenarios.

Prasad et al. (2017) created a dataset using optical and near infrared (NIR) cameras to capture maritime scenes from a stationary on-shore location. This dataset also has some video sequences recorded from a ship, but they are taken from a higher vantage point and hence do not depict the same view as that from an ASV. Additionally, not all images in the dataset are annotated; only a few are annotated, using bounding boxes. Patino et al. (2016) used multi-spectrum cameras to capture video segments of enacted piracy scenes. The emphasis is on threat detection, so the video sequences contain medium to large boats appearing in the frames. Since the scenes are staged, the dataset lacks the natural marine scenes that are critical for training a model. Steccanella et al. (2020) used pixel-wise segmentation to differentiate between water and non-water classes. The dataset contains around 515 optical images with these 2 classes. In recent years, two excellent datasets, MaSTR1325 and MODS, have been created by Bovcon et al. (2019) and Bovcon et al. (2021). The optical images in these datasets are semantically segmented into 3 classes and are suitable for maritime scene understanding. However, as pointed out by Bovcon et al. (2021), bright sunlight, foam, glitter, and reflection in the water deteriorate the inference quality. Small object detection poses a challenge even for the model developed by Bovcon and Kristan (2020), which is specifically designed for the maritime environment.

Publicly available datasets of images in the maritime environment.

*Bounding boxes can be calculated from the semantically labeled class regions. "Dynamic" indicates that images were captured from a moving vehicle.

¹ Boats and ships were labeled in many images. The exact number of labeled images is unknown. UAV: Unmanned Aerial Vehicle.

² Each image is classified into one of 24 boat categories. The exact number of labeled images is unknown.

³ Images are not labeled in this dataset.

⁴ The exact number of labeled images is unknown.

IR Spectrum.

In a nutshell, the existing NIR/LWIR datasets lack complete scene parsing capabilities, or have been created in a controlled environment or are not publicly available. MassMIND bridges this gap and is the largest labeled dataset of its kind containing real-life LWIR images. The images have been recorded in a busy marine area as well as in not so busy open waters during various seasons and times of the day over a period of 2 years. The dataset has been annotated into 7 classes using instance segmentation that can be used for scene parsing during autonomous navigation.

3. System setup

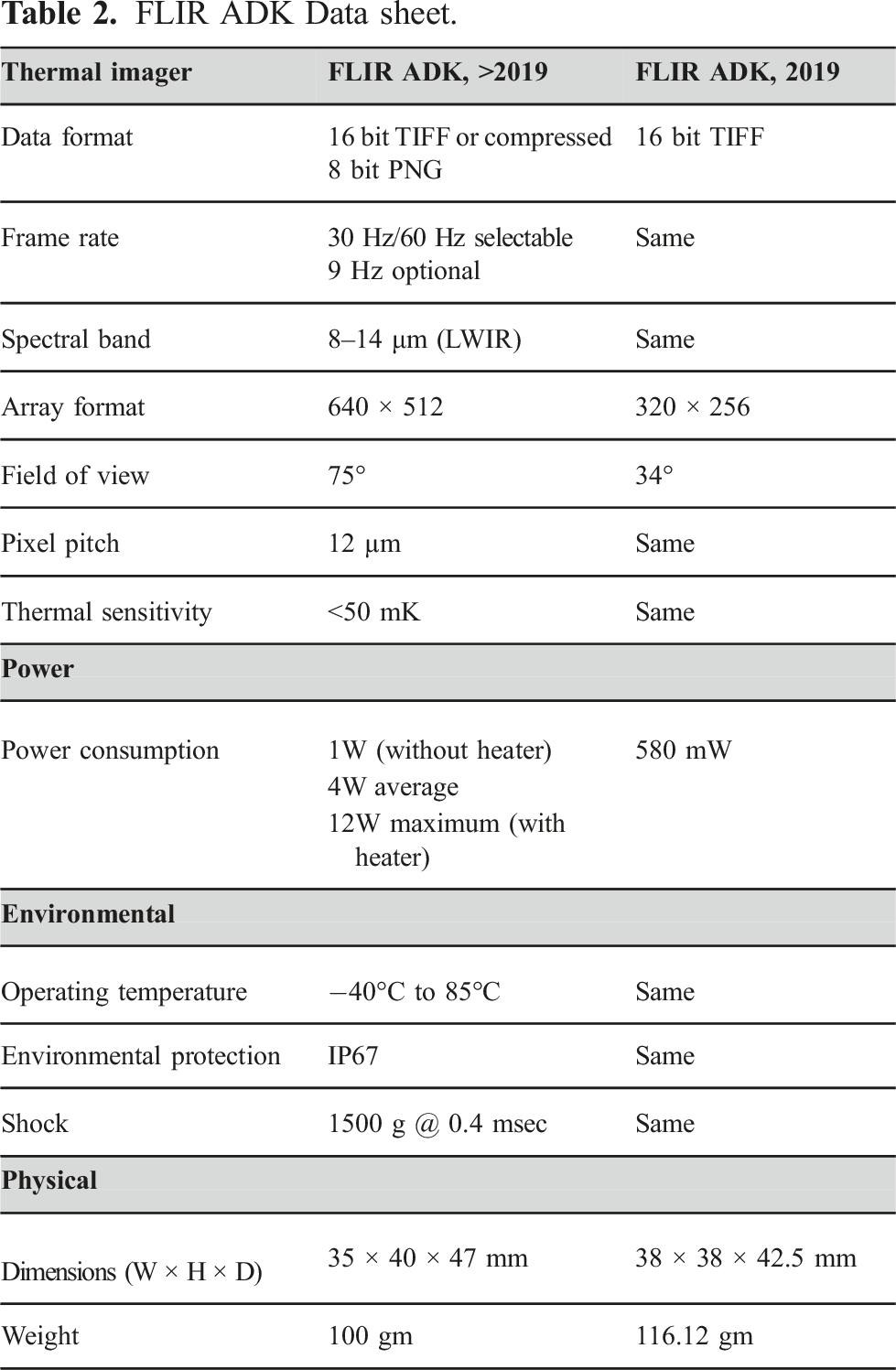

R/V Philos, an ASV owned by the Brunswick Corporation and operated by the MIT Autonomous Underwater Vehicle (AUV) Lab (AUVLab (1989)), was instrumental in collecting images for the MassMIND dataset. The ASV is equipped with 3 EO cameras, 2 forward-looking infrared (FLIR) LWIR cameras, a radar, a lidar, GPS, and an inertial measurement unit (IMU). The FLIR cameras used in 2019 were entry-level, providing a resolution of 320 × 256 (width×height) and a horizontal field of view of 34°. These cameras were upgraded in 2020 to provide a resolution of 640 × 512 (width×height) and a horizontal field of view of 75°. Images from these sensors are slightly offset from one another due to the placement of the EO and FLIR cameras, as shown in Figure 3. Table 2 shows the technical specifications of the FLIR ADK cameras used in the setup in 2019 and later. The vehicle was driven in and around Boston Harbor by a human operator to collect the data and was not in autonomous mode most of the time. However, limited autonomy for waypoint navigation and course holding was exercised. Additional details of the ASV can be found in DeFilippo et al. (2021). The unannotated raw dataset from the sensors was also released as part of that work (SeaGrant (2022)).

Figure 3. R/V Philos showing placement of LWIR cameras.

Table 2. FLIR ADK data sheet.

4. Dataset description

The strength of LWIR is its independence from visible light. Our main goal was to use this property to create a comprehensive marine dataset of real-life images that complements existing optical datasets. In this section, we first describe how the data was acquired and how images were selected. We then describe the segmentation scheme that was followed, along with the labeling process. Note that the image selection, labeling, and evaluation process was iterative. We started getting reasonable results with around 1500 images (excluding augmentation), but we kept increasing the size until we got robust inference from all the classifiers.

4.1. Data acquisition and selection

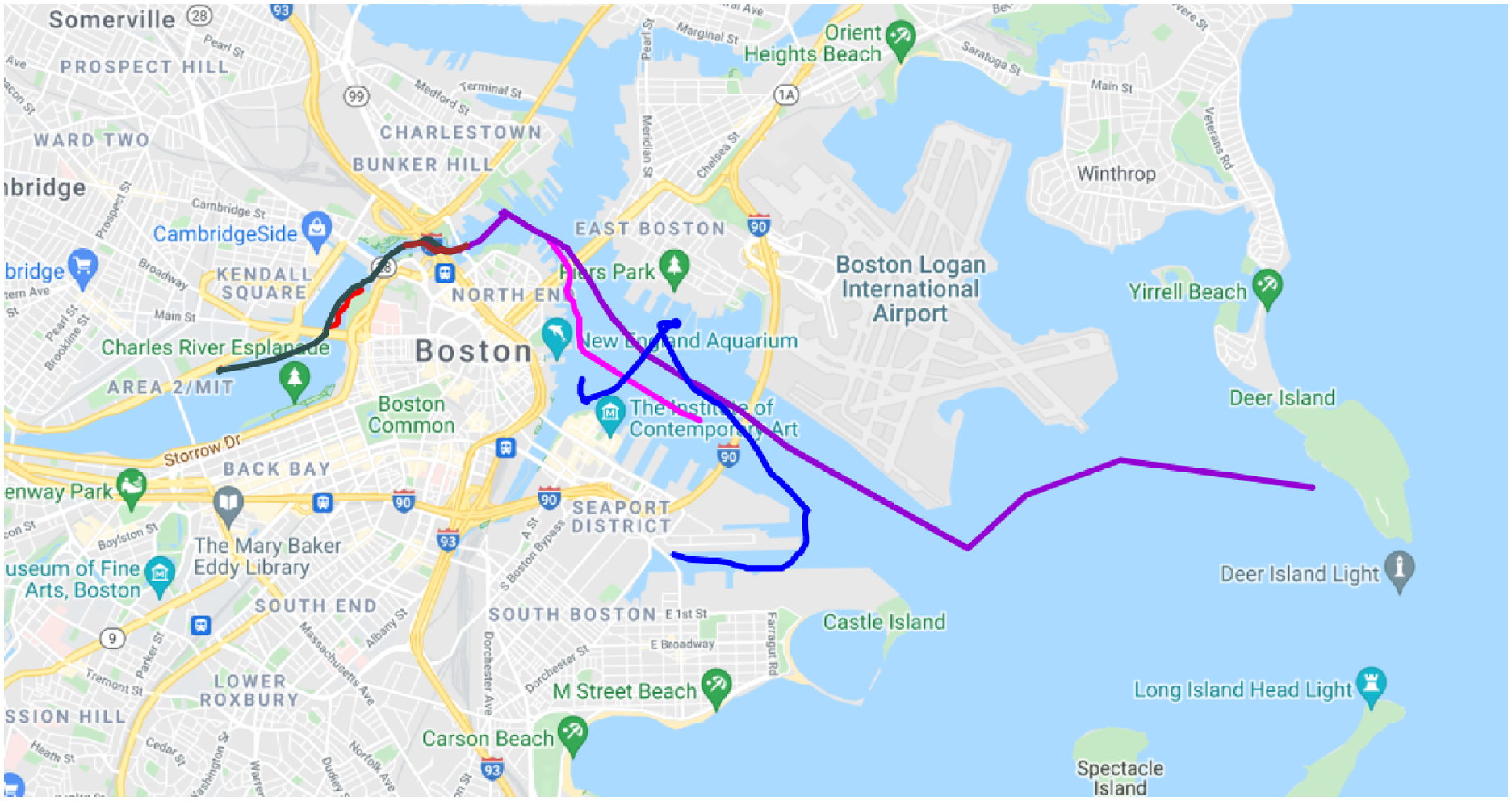

R/V Philos recorded scenes around the Massachusetts Bay area over a period of 2 years, from 2019 until November 2021. This gave us an opportunity to create seasonal and temporal diversity in the dataset. Nearshore scenarios were also captured, as they present more challenging scenes with construction and increased human and vehicle presence. This was in addition to the complexity due to weather and light patterns observed farther out at sea. Images were taken in a natural, uncontrolled environment, which makes the dataset applicable for practical use. Figure 4 shows some of the typical routes the ASV took on these trips. One of the routes was in open waters away from the crowded harbor, and as expected, the corresponding images show little traffic (Figure 9, last row).

Figure 4. Area covered by R/V Philos near Boston, Massachusetts.

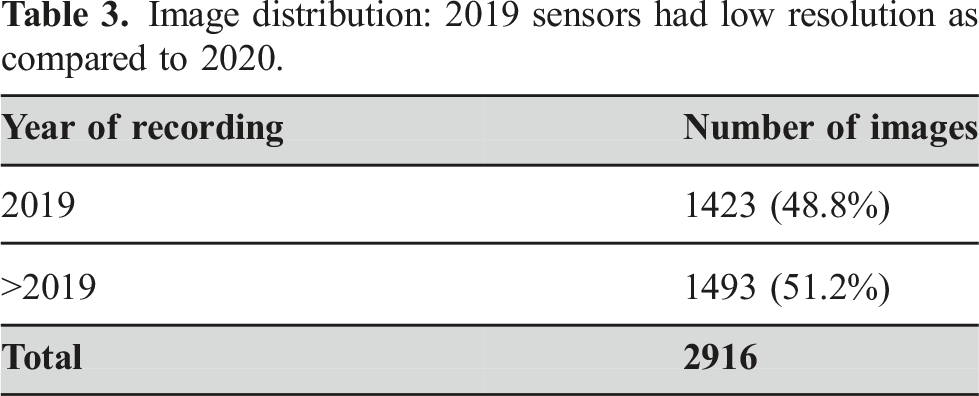

Image distribution: 2019 sensors had low resolution as compared to 2020.

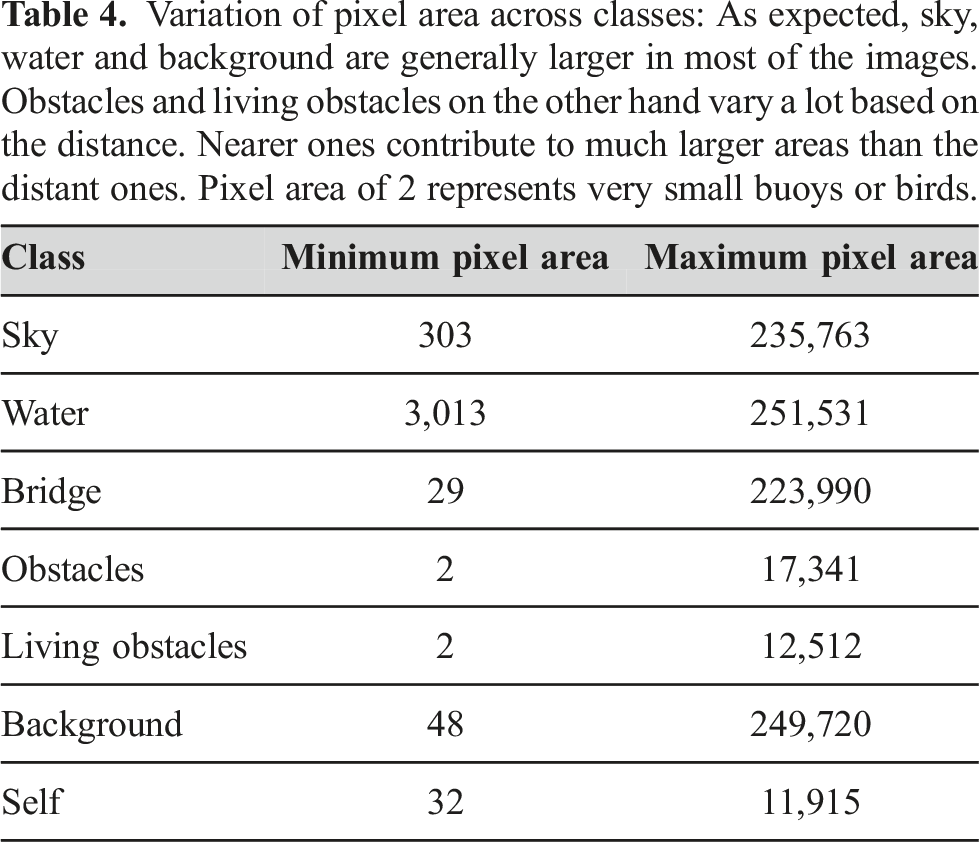

Variation of pixel area across classes: As expected, sky, water and background are generally larger in most of the images. Obstacles and living obstacles on the other hand vary a lot based on the distance. Nearer ones contribute to much larger areas than the distant ones. Pixel area of 2 represents very small buoys or birds.

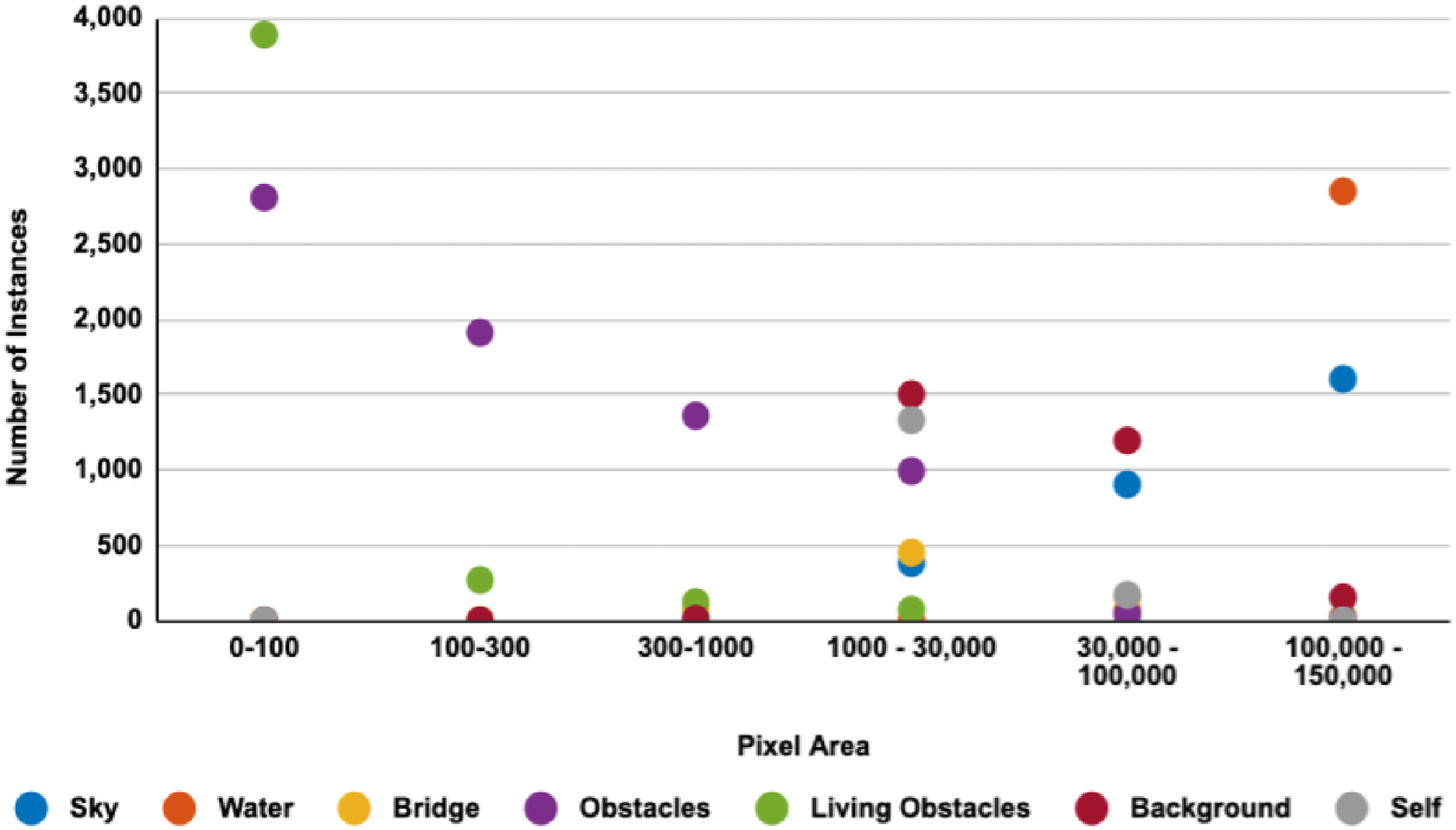

Distribution of instances across pixel area: As seen, sky, water, and background are prominently on the right end of the chart with larger area. Most of the concentration of living obstacles and obstacles is on the left indicating smaller pixel areas. There is a gradual progression toward the center which indicates cases where these obstacles are in close proximity and appear larger. Distance does play a role in bridge size as well but not to the same extent as that of obstacles. Training was therefore done on actual size images so that we can retain information for obstacles and living obstacles.

4.2. Image segmentation

The purpose of this and the next section is to share our experience of labeling the dataset with the research community. We believe there are key lessons from which the community can benefit. We analyzed the Cityscapes dataset (Cordts et al. (2016)) to understand its classification guidelines. To make our dataset meaningful for scene understanding, we finalized 7 classes for annotation: “sky,” “water,” “obstacle,” “living obstacle,” “bridge,” “background,” and “self.” Categorizing a maritime scene into such refined classes ensures that true obstacles are identified and are not combined with other non-essential entities. The “obstacle” class consists of inanimate objects surrounded by water, such as buoys, small platforms, sailboats, ships, and kayaks.

Animate obstacles such as humans and birds in the water are classified as “living obstacles.” Though this class may seem contrived, identifying humans and marine wildlife may be useful for surveillance and for environmental or ecological monitoring, respectively. Illegal trafficking of drugs, weapons, and humans through marine channels is a vexing problem, and such activities are often carried out at night. Detecting humans in such cases may prove useful. In a future world of USVs, being able to detect a human on board might also prove useful. Thus, the dataset can be used for multiple purposes.

Because of the urban setting of the dataset, bridges were frequent and posed a unique challenge. The piers of the bridge that are submerged in water and the girders of the bridge were relevant, as the ASV would need to maneuver around them. The top of the bridge, however, was not. Hence, we annotated the navigation-relevant portions of the bridge as the “bridge” class and marked the remaining portions as the “background” class. The “background” class covered any static or dynamic entities on land, such as trees, buildings, and construction cranes.

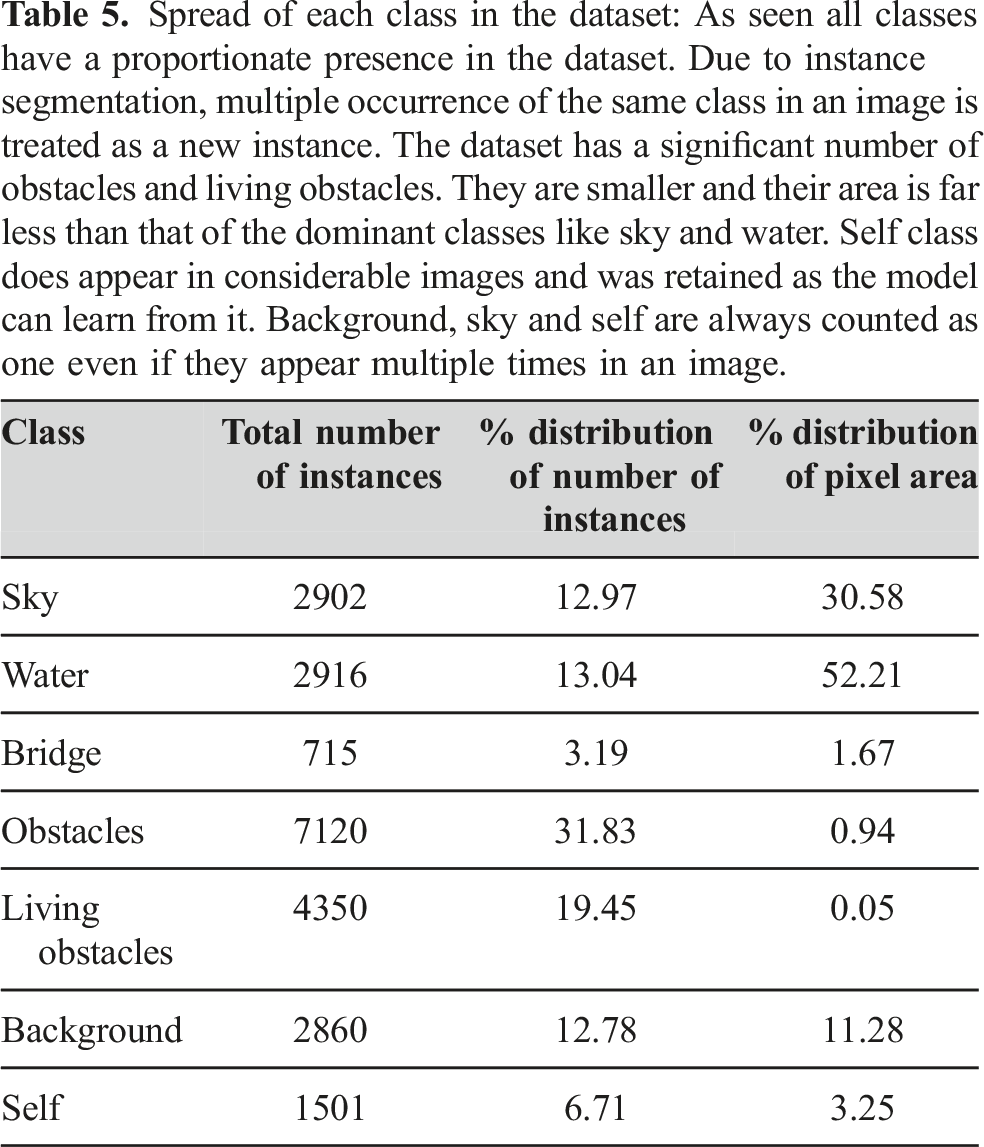

Spread of each class in the dataset: As seen, all classes have a proportionate presence in the dataset. Due to instance segmentation, each occurrence of the same class in an image is treated as a new instance. The dataset has a significant number of obstacles and living obstacles. They are smaller, and their area is far less than that of dominant classes such as sky and water. The self class appears in a considerable number of images and was retained, as the model can learn from it. Background, sky, and self are always counted as one instance even if they appear multiple times in an image.

As we worked through the annotations, many images gave us new insights that had to be incorporated. We had anticipated bridge complexity as part of our planning; however, we encountered a similar issue with obstacles. Initially, we defined an obstacle as an entity surrounded by water on all sides. However, we came across constructions that stretched from land into the water, such as gates that allow boats to pass through, piers and docks for mooring boats, or platforms connected to the land. These obstacles were pertinent for scene classification and hence could not be treated as background. Similarly, birds flying just above the water were categorized as “sky,” and birds in the water were categorized as “living obstacle.” Since the images are 2D and LWIR does not convey color and texture well, there were cases where an obstacle in the water blended into the background behind it and had to be reviewed thoroughly. Another challenge was the wide and deep line of sight in the marine environment. Many obstacles near the horizon were very tiny, and we had to enlarge such images and annotate them pixel by pixel. A person on a boat is annotated as a “living obstacle,” while the boat is annotated as an “obstacle.” In cases where the boat was far away and a human could not be easily identified on it, the human was not called out separately and was considered part of the “obstacle” class.

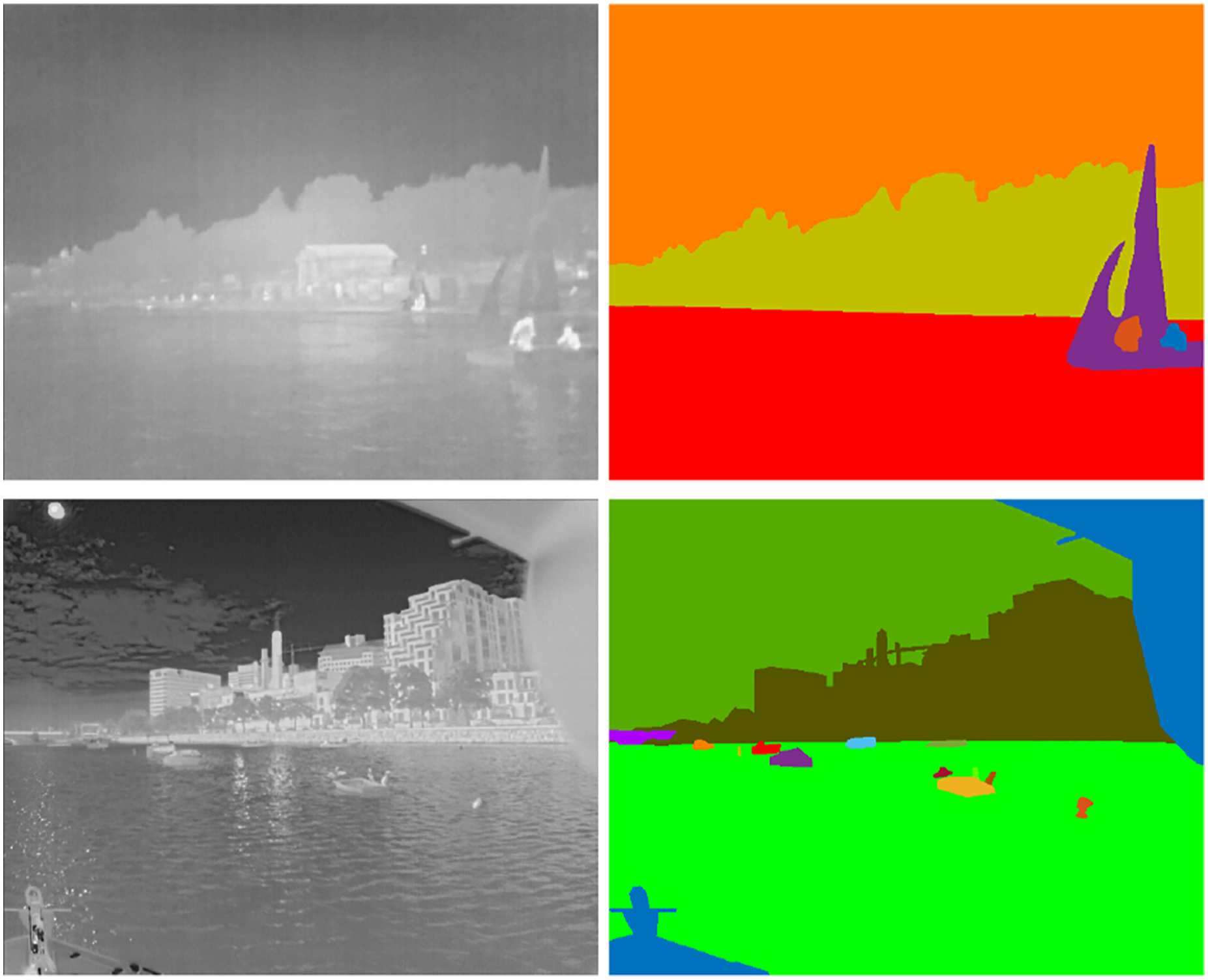

In addition to labeling, we also had to choose between semantic and instance segmentation. In semantic segmentation, every occurrence of a class is assigned the same class label, while in instance segmentation, each instance of a class is assigned a different instance ID. Unlike on land, the maritime environment has a wide and deep line of sight, so near and far objects may overlap in the 2D view of an image. They would be merged if semantic segmentation were used. By instance segmenting the image, the information about multiple overlapping obstacles is retained, which in turn can be used to evaluate or develop occlusion-aware classifiers vital for path planning. Lastly, a benefit of instance segmentation is that it can always be converted programmatically to semantic segmentation as needed. Figure 6 illustrates a few samples of raw LWIR images and their annotated masks.

Figure 6. LWIR images and their segmentation: Multiple occurrences of birds, humans, or boats each have their own instance ID and appear in different colors. Background, sky, and water each have only one instance in an image.
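The conversion from instance to semantic masks mentioned above can be sketched as follows. This is a minimal illustration under stated assumptions, not the dataset's actual tooling: the mask is assumed to be a 2D list of integer instance IDs accompanied by a mapping from instance ID to class ID, and the class ID values and the `instance_to_semantic` helper are hypothetical.

```python
# Hypothetical class IDs; the dataset's actual encoding may differ.
CLASS_IDS = {
    "sky": 1, "water": 2, "obstacle": 3, "living obstacle": 4,
    "bridge": 5, "self": 6, "background": 7,
}


def instance_to_semantic(instance_mask, instance_to_class, unlabeled=0):
    """Replace each pixel's instance ID with the class ID of that instance."""
    return [
        [instance_to_class.get(inst_id, unlabeled) for inst_id in row]
        for row in instance_mask
    ]


# Toy 2x4 mask: instance 10 is sky, 20 is water, 31 and 32 are two separate boats.
instance_mask = [
    [10, 10, 10, 10],
    [20, 31, 32, 20],
]
instance_to_class = {
    10: CLASS_IDS["sky"],
    20: CLASS_IDS["water"],
    31: CLASS_IDS["obstacle"],
    32: CLASS_IDS["obstacle"],
}

semantic_mask = instance_to_semantic(instance_mask, instance_to_class)
print(semantic_mask)  # the two boats now share the single "obstacle" label
```

The conversion is one-way: once the two boats share a label, their separate identities cannot be recovered from the semantic mask alone, which is why storing the instance-level annotation is the more general choice.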

4.3. Labeling process

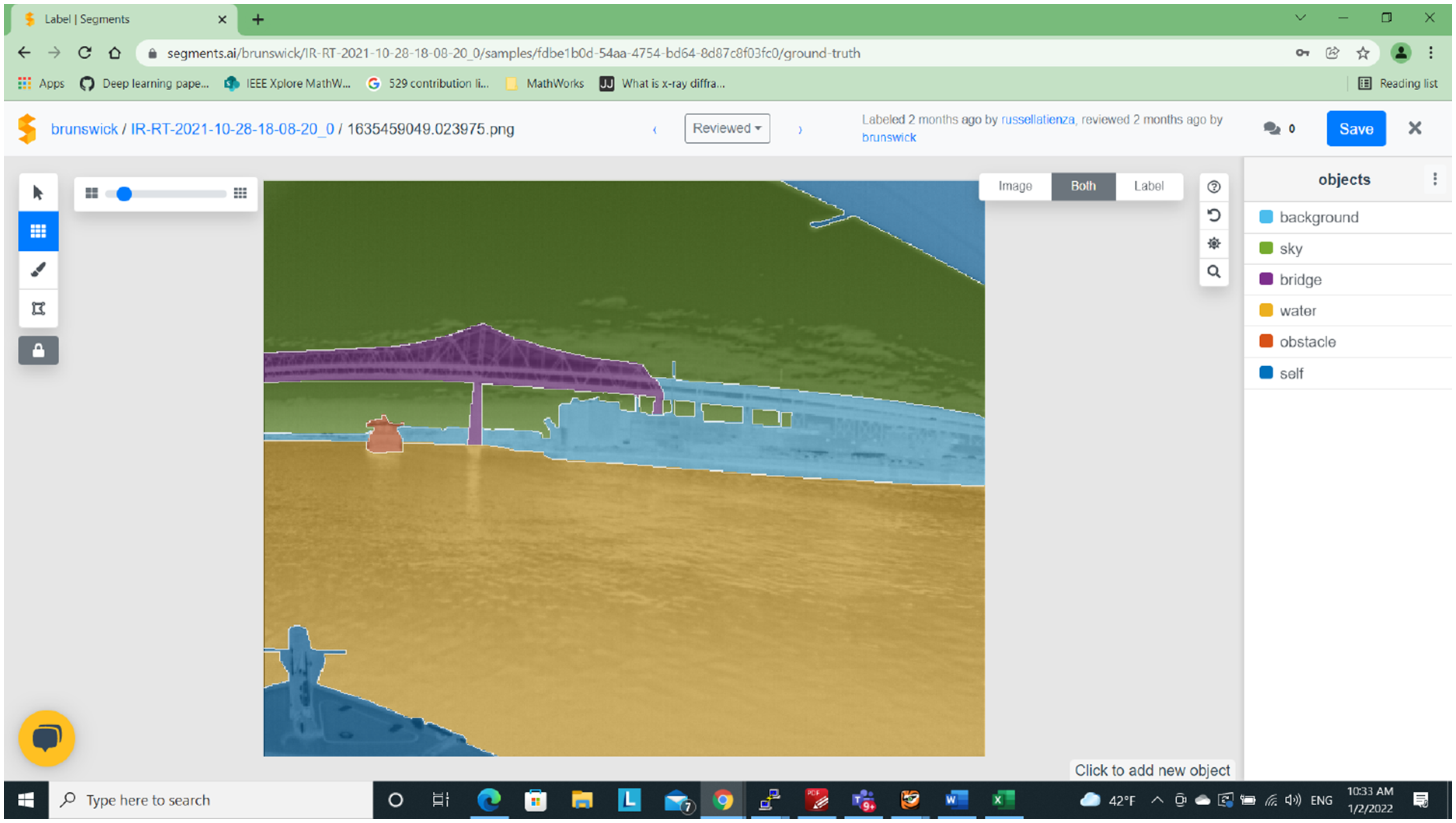

An independent organization was tasked with labeling these images. To achieve consistent results, we created and shared a detailed class label definition document so that the labelers would have a common understanding. We also labeled a batch of images ourselves to illustrate the desired outcome. There are many tools available for image segmentation, but we chose the Segments.ai platform (Debals (2021)) because of its ease of use. Labeling was quick because of its superpixel technology, and the API made working with the labeled dataset efficient. Figure 7 shows the tool in action. While the labeling tool was easy to use, there were some challenges, especially due to the nature of LWIR images. Color and texture assist the human eye in making a judgment, and their absence posed difficulties in image identification as well as annotation. This led to multiple reviews in some complex situations, such as cluttered sets of obstacles, clusters of parking poles, flocks of birds, or distant ships. For each LWIR video, the corresponding optical version was therefore provided to the labeling team for reference. Each image had to be classified into 7 classes, which made the labeling process quite elaborate and time consuming. Identifying the parts of a bridge that should be annotated as background was subjective and, as a result, there may be some deviations in some of the annotations. In summary, the semantic annotation exercise for LWIR images was more time consuming and needed a lot more attention than we had anticipated. On the positive side, the tool had a zoom-in capability that allowed us to annotate tiny areas of the order of a few pixels well. We wanted to account for obstacles of all sizes because we wanted our dataset to reflect real life. Rather than containing only perfect images, a dataset that includes extreme cases can be more robust.

Figure 7. Image segmentation platform: The Segments.ai tool that was used for annotation. It provides superpixels that improve productivity and reduce manual errors. The pipeline was automated using the API.

Two rounds of reviews were done to improve the accuracy of segmentation. Including the reviews, each image annotation took approximately 25 min. We also developed a script to check for any unclassified pixels and corrected such masks. Automated checks for validating the segmentation and gathering metrics were also developed.
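A check for unclassified pixels of the kind mentioned above could look roughly like the following. This is a minimal sketch, not the authors' script: it assumes a semantic mask held as a 2D list of integer class IDs, and the ID values and the `find_unclassified` helper are hypothetical.

```python
# Hypothetical IDs for the 7 classes; any other value counts as unclassified.
VALID_CLASSES = {1, 2, 3, 4, 5, 6, 7}


def find_unclassified(mask, valid_classes=VALID_CLASSES):
    """Return (row, col) positions whose value is not a known class ID."""
    return [
        (r, c)
        for r, row in enumerate(mask)
        for c, value in enumerate(row)
        if value not in valid_classes
    ]


# Toy mask with one unlabeled pixel (0) and one out-of-range value (9).
mask = [
    [1, 1, 0],
    [2, 9, 2],
]
bad_pixels = find_unclassified(mask)
print(bad_pixels)  # positions that would need manual correction
```

Returning positions rather than a simple pass/fail flag makes it easier to send the affected masks back for correction.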

5. Dataset evaluation

Though we have created an instance segmentation dataset, we used its semantic segmentation masks for the purpose of evaluation. To evaluate the efficacy of the dataset, we used 3 image segmentation architectures: UNet, developed by Ronneberger et al. (2015) for medical image segmentation; PSPNet by Zhao et al. (2017), for its improvement over fully convolutional network based segmentation; and DeepLabv3 by Chen et al. (2018), which is known for its overall superior performance. The dataset was split into 3 subsets: 70% for training, 20% for validation, and 10% for testing. The test images were used only during inference. Pretrained weights, such as those trained on ImageNet (Deng et al. (2009)), are generally available for optical images. They greatly reduce the time needed to train a model; however, they do not work well for LWIR image training, as shown by Nirgudkar and Robinette (2021). Another challenge was the characteristics of these images. The pixel area occupied by the boats (0.94%) is the second smallest because boats are tiny compared to the water or sky in an image. We therefore trained these architectures from scratch on our LWIR dataset to mitigate such issues. An image resolution of 640 × 512 (width×height) pixels was used across all 3 architectures for both the 2019 and the 2020 images. Note that even though the 2019 cameras had a resolution of 320 × 256, the saved images had a size of 640 × 512.
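A 70/20/10 split of this kind can be sketched as below. The file names, the fixed seed, and the `split_dataset` helper are illustrative assumptions rather than details from the source. Shuffling before splitting matters here because files named by capture time would otherwise be consumed in recording order.

```python
import random


def split_dataset(names, train_pct=70, val_pct=20, seed=0):
    """Shuffle file names, then cut them into train/validation/test lists."""
    shuffled = list(names)
    random.Random(seed).shuffle(shuffled)  # seeded for a reproducible split
    n_train = len(shuffled) * train_pct // 100
    n_val = len(shuffled) * val_pct // 100
    return (
        shuffled[:n_train],
        shuffled[n_train:n_train + n_val],
        shuffled[n_train + n_val:],  # the remainder is the test set
    )


# Hypothetical file names based on epoch timestamps.
names = [f"{1600000000 + i}.png" for i in range(100)]
train_set, val_set, test_set = split_dataset(names)
print(len(train_set), len(val_set), len(test_set))
```

Integer arithmetic is used for the partition sizes so that rounding cannot drop or duplicate an image; whatever is left after the training and validation cuts goes to the test set.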

5.1. Data augmentation

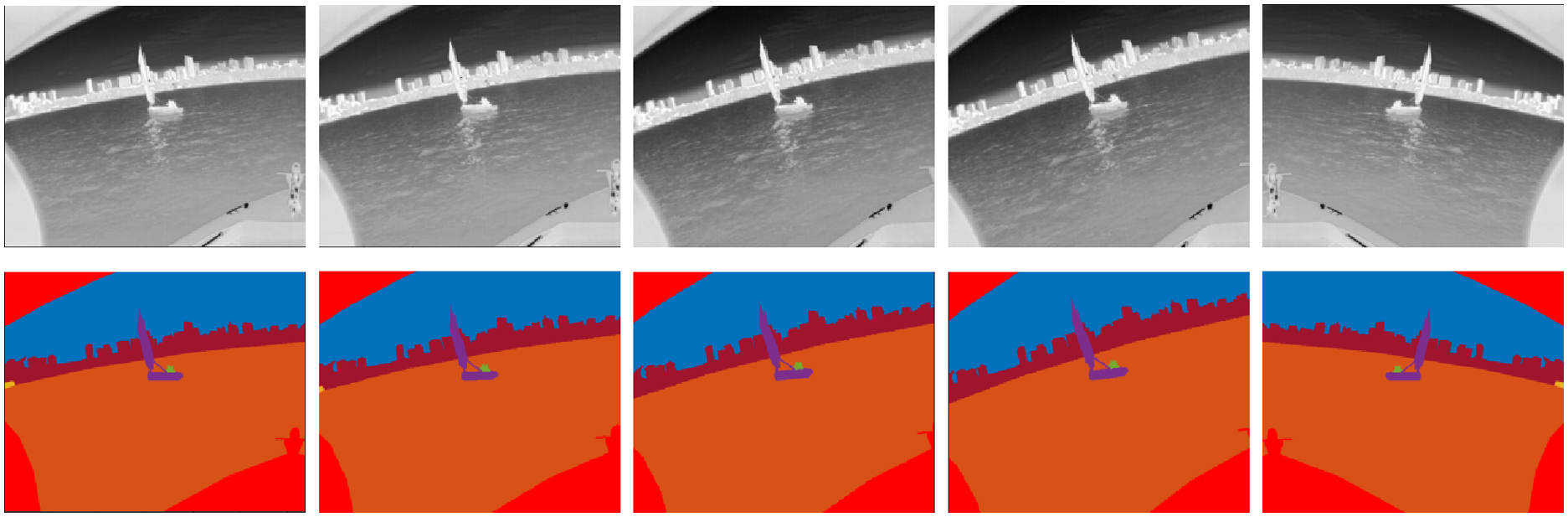

A robust, diverse, and large volume of data is a prerequisite for any successful model. However, creating a labeled dataset is a laborious and expensive task. A common technique for increasing dataset variety is data augmentation (Schöller et al. (2019); Bovcon et al. (2019)). We used the following two types of transformations for our dataset:

• Rotation: An image and its mask were rotated by ±2°, ±5°, and ±7°.

• Mirror: The original image and mask, and their rotated equivalents from the previous step, were mirrored about the vertical axis.

This scheme generated 13 additional images from each original image: 6 rotated copies, plus mirrored versions of the original and of each rotated copy. Accordingly, our dataset was augmented to 40,096 images. An illustrative example is shown in Figure 8.

Figure 8. Data augmentation: The first column shows the original image and its mask, followed by 3 rotations of 2°, 5°, and 7°, respectively. The last column shows the mirror operation. Images were also rotated by −2°, −5°, and −7°.
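The count of augmented variants can be checked by enumerating the rotation and mirror combinations described above. The sketch below only lists the combinations and does not transform any pixels; the `augmentation_plan` helper and the use of 0° to stand for the unrotated original are illustrative assumptions.

```python
from itertools import product

ROTATIONS_DEG = [0, 2, -2, 5, -5, 7, -7]  # 0 stands for the unrotated original
MIRROR = [False, True]


def augmentation_plan():
    """List every (rotation, mirrored) combination applied to an image and its mask."""
    return list(product(ROTATIONS_DEG, MIRROR))


plan = augmentation_plan()
# Everything except the untouched original is a newly generated variant.
new_variants = [p for p in plan if p != (0, False)]
print(len(plan), len(new_variants))
```

When such a plan is applied to real data, the mask is usually resampled with nearest-neighbor interpolation so that rotation does not blend neighboring class IDs into values that correspond to no class.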

5.2. Performance criterion

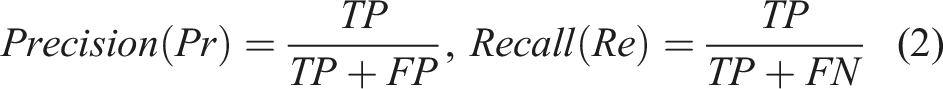

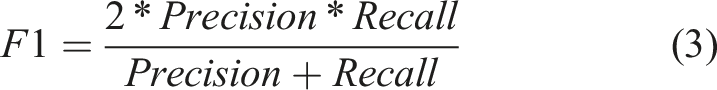

Intersection over union (IoU) is an important metric for segmentation inference (Csurka et al. (2013)). It is defined as the ratio of the area of intersection between the predicted segmentation mask (P) and the ground truth (G) to the area of their union:

$$\mathrm{IoU} = \frac{|P \cap G|}{|P \cup G|}$$

The IoU score ranges between 0 and 1. A threshold value can be chosen to determine whether an inference is treated as a true positive (TP). If the IoU score is greater than the threshold, the inference is treated as a true positive (TP); otherwise it is treated as a false negative (FN). If the ground truth does not contain a region that is present in the prediction, the inference is treated as a false positive (FP). Usually, a threshold value greater than 0.5 is considered optimal (Liu et al. (2021)); however, if the class sizes are tiny, as in the present case, the threshold can be relaxed to a lower value. The precision, recall, and F1 score are defined as follows:

$$\mathrm{Precision} = \frac{TP}{TP + FP}, \qquad \mathrm{Recall} = \frac{TP}{TP + FN}, \qquad F1 = \frac{2 \cdot \mathrm{Precision} \cdot \mathrm{Recall}}{\mathrm{Precision} + \mathrm{Recall}}$$
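The thresholding rule described above can be sketched in a few lines of Python. This is one plausible reading, not the authors' evaluation code: it assumes the IoU is computed per class and per image on semantic masks stored as 2D lists of class IDs, and the helper names and the toy class ID 3 are hypothetical.

```python
def iou(pred, truth, cls):
    """IoU of one class between a predicted and a ground-truth semantic mask."""
    inter = union = 0
    for p_row, g_row in zip(pred, truth):
        for p, g in zip(p_row, g_row):
            in_p, in_g = p == cls, g == cls
            inter += in_p and in_g
            union += in_p or in_g
    return inter / union if union else None  # None: class absent from both masks


def count_outcomes(pairs, cls, threshold=0.5):
    """Count TP/FP/FN for one class over (prediction, ground truth) mask pairs."""
    tp = fp = fn = 0
    for pred, truth in pairs:
        score = iou(pred, truth, cls)
        if score is None:
            continue
        if not any(cls in row for row in truth):
            fp += 1  # predicted region with no ground-truth counterpart
        elif score > threshold:
            tp += 1
        else:
            fn += 1
    return tp, fp, fn


def precision_recall_f1(tp, fp, fn):
    """Standard precision, recall, and F1 from the three counts."""
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    total = precision + recall
    f1 = 2 * precision * recall / total if total else 0.0
    return precision, recall, f1


# Three toy 1x4 image pairs for the hypothetical class ID 3.
pairs = [
    ([[3, 3, 2, 2]], [[3, 3, 3, 2]]),  # IoU 2/3: above 0.5
    ([[3, 2, 2, 2]], [[3, 3, 3, 2]]),  # IoU 1/3: below 0.5
    ([[2, 3, 2, 2]], [[2, 2, 2, 2]]),  # class predicted but absent from ground truth
]
counts = count_outcomes(pairs, cls=3)
print(counts, precision_recall_f1(*counts))
print(count_outcomes(pairs, cls=3, threshold=0.3))
```

Lowering the threshold from 0.5 to 0.3 turns the second pair from a false negative into a true positive, which illustrates why a relaxed threshold can be reasonable for classes that occupy only a few pixels.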

5.3. Performance metrics

Performance of various architectures: Size of the images and number of epochs were identical across the 3 architectures.

Quantitative results on the MassMIND dataset: Classes pertinent to scene understanding were also evaluated against a lower threshold. PSPNet performs on par with DeepLabv3 in all classes except “living obstacles,” as indicated by its poor recall. Most of the time it identifies the living obstacle but merges it into the obstacle (typically a boat). Training PSPNet for a larger number of epochs will probably alleviate this problem. However, its F1 score for obstacles is marginally better than that of DeepLabv3. Despite their tiny size, obstacles give a satisfactory recall. The F1 score of DeepLabv3 for the bridge, obstacle, and living obstacle classes is quite good, and it has performed well across all the classes.

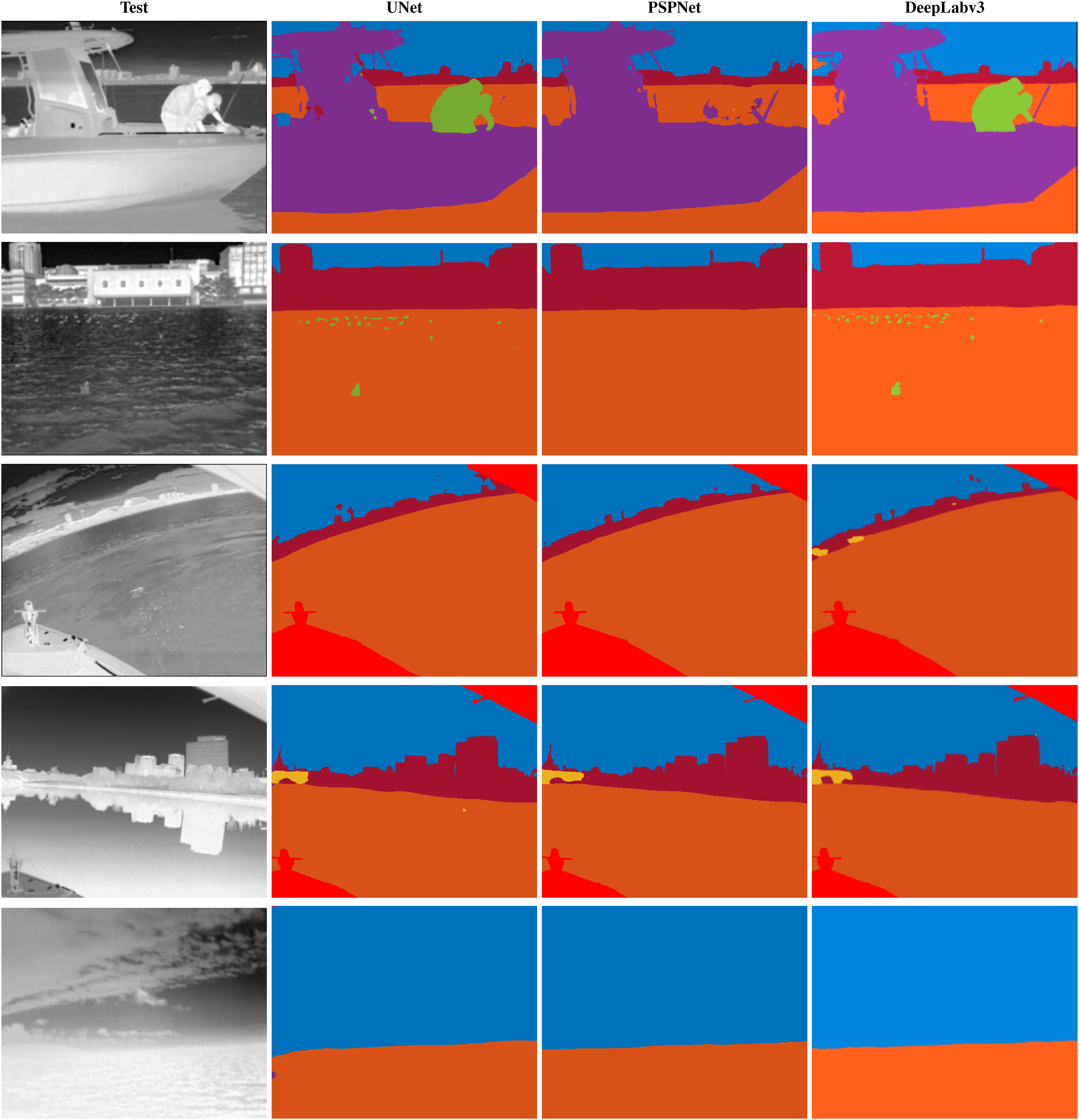

Figure 9 shows the inference results from all 3 architectures for a few sample images. Living obstacles (humans) have been inferred really well by both DeepLabv3 and UNet. The boundaries separating humans from the obstacle (boat) have been interpreted well. In the second row, very tiny obstacles (birds) in the water have been correctly inferred by DeepLabv3 and UNet. The third row indicates that curvature or waves in the water pose no issue to the models. Reflection in the water in the fourth row and the heavy cloud cover right on top of the sea in the fifth row have been interpreted properly by the models. PSPNet was found to have problems identifying “living obstacles.” While it works really well for all other classes, it needs a lot more training to consistently differentiate the boundaries between obstacles and living obstacles.

Figure 9. Qualitative comparison of the inference: First row: DeepLabv3 and UNet have a good inference other than a few traces of sky in the obstacle. PSPNet, despite the significant size of the living obstacles, has problems identifying them and will need significant additional training. Second row: Very tiny birds in the water have been correctly captured by both DeepLabv3 and UNet and are again missed by PSPNet. Third row: Curvature of the image and waves in the water have been correctly depicted by all 3 classifiers. DeepLabv3 does a good job of calling out the bridges as well. Fourth row: There is significant reflection in the water that is handled well by all, except for a few sporadic traces of bridge. Fifth row: There is a very obscure cloud and sky boundary. The image was taken in relatively open waters (see one of the routes in Figure 4). This has been handled really well by all 3 models. Overall, glitter, waves, and horizon boundaries that are a problem in the optical world are handled well in the LWIR dataset. DeepLabv3 performs best overall, closely followed by PSPNet (except for the problem with living obstacles) and UNet.

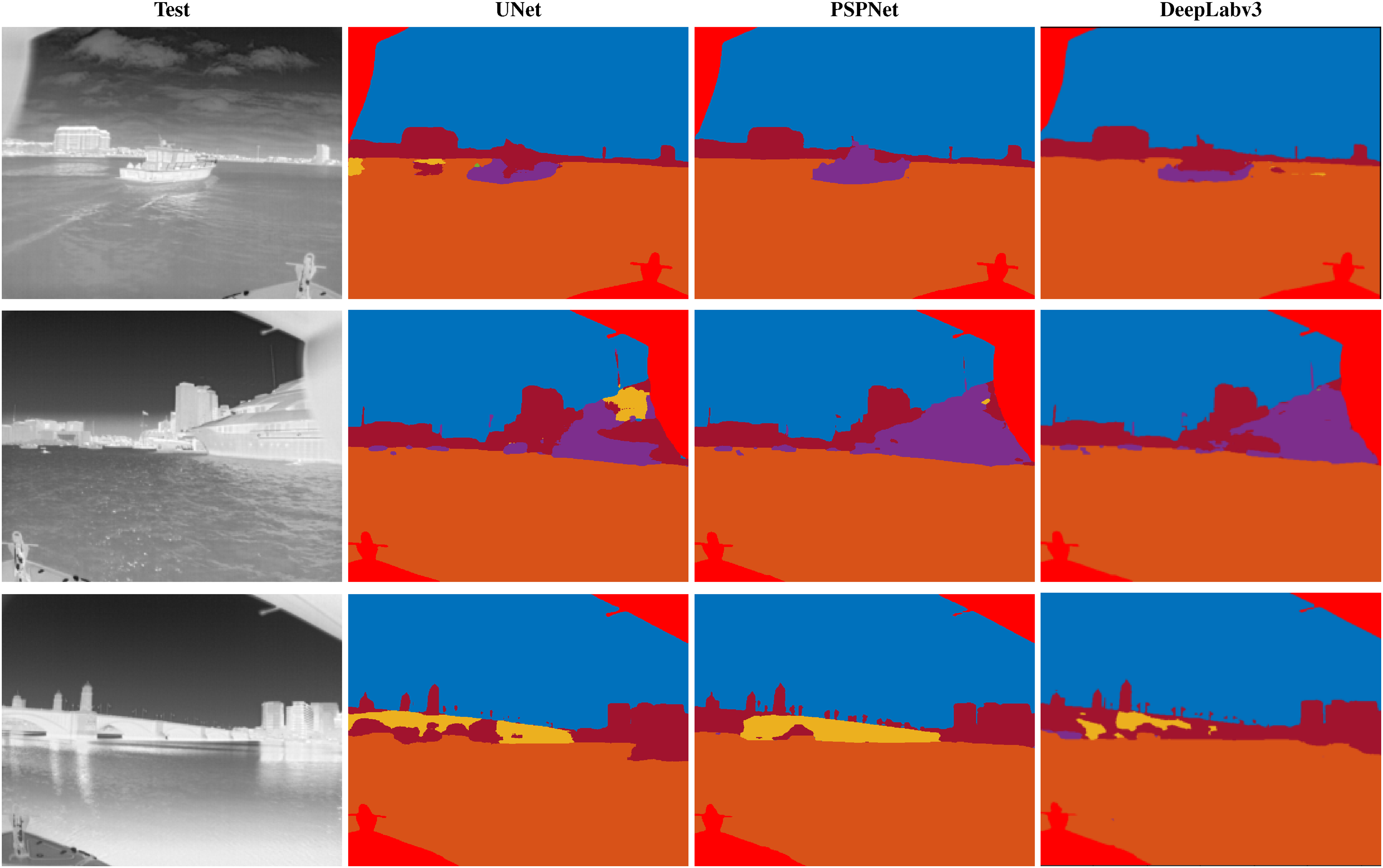

In addition to the above limitation in PSPNet, there were some situations where very tiny obstacles were missed or not completely identified. Bridges are another area where the classifiers sometimes gave inconsistent results. This was primarily because the ground truth for a bridge contained a portion annotated as “background” that is not pertinent to navigation. The classifiers therefore sometimes incorrectly inferred the navigable portion of the bridge as background and vice versa. Despite that, inference for the “bridge” class improved significantly after increasing the training to 50 epochs. Some of the challenging cases are shown in Figure 10.

Figure 10. Misrepresented inference: First row: A portion of the obstacle has been merged with the background by both UNet and DeepLabv3; however, it is represented properly by PSPNet. There are sporadic traces of bridge in UNet. Second row: Again, the hull of the larger ship is very close to the background, and all 3 architectures have problems detecting the boundaries. There are also a few spurious occurrences of bridge and living obstacles. Third row: PSPNet has a better inference than the rest, although the boundaries are not clear. Portions of bridge and background have been merged in the other two, along with a few sporadic obstacles. The reflection in the water has been handled well by all three.

To get consistent and meaningful performance from the deep learning models, various modifications were tried and parameters changed. A few of those key modifications are elaborated below:

Initially we had reduced the image size to 320 × 256 (width×height) to speed up the training phase. This resulted in a lot of smaller obstacles being missed in the inference. The obstacles were inherently small as shown in Table 4 and they got tinier with the reduction in image size leaving the classifier with very little information to train well. It was therefore decided to retain the original size of the images. We also experimented with excluding 2019 images because they were of low resolution. We expected improvement in the performance. However, the performance worsened as a lot of variation and information contained in the 2019 dataset was lost. We therefore retained both 2019 and 2020 images.

To increase the dataset size, data augmentation was used as described in Section 5.1. For optical images, color adjustment is another common augmentation operation. However, augmentation by changing the brightness of LWIR images had an adverse effect on the inference and was not incorporated. Larger rotations (±10°, ±15°) were tried, but they caused distortion in the 2019 images and deteriorated the overall results. The smaller range (±2°, ±5°, ±7°) seems to fit the dataset well. An important consideration that improved the performance drastically was shuffling. Since our images were named based on epoch times, without explicit shuffling they were fed sequentially to the classifier and gave poor results. Shuffling them randomly before training introduced variation that helped the classifiers improve their performance multi-fold.

As seen from the inference, horizon detection does not pose a problem in LWIR images. However, there are some sporadic instances of clouds being incorrectly inferred as water and vice versa. This happens mainly when white clouds in the sky appear similar to water and have similar pixel values, and are therefore labeled incorrectly. Bridge annotation remained challenging despite good F1 scores. Depending on the nature of the bridge and the position of the background with respect to it, the classifier sometimes classifies the navigable portion of the bridge as background. When the bridge has arches, the view beneath an arch could be sky, or background and water. Such minute details have been represented well most of the time, but do pose a challenge in other cases.

In summary, standard CNN architectures can be trained on LWIR images and can produce good inference for obstacle detection and scene understanding. Although EO cameras are better suited for boat classification, they detect obstacles poorly in extreme light conditions. In such cases, LWIR cameras can assist in detection, if not in categorization. Such detection may not be COLREG compliant, but it would at least detect the obstacles and indicate the relative positions of obstacles, water, background, etc. Thus, the LWIR dataset complements optical datasets in scenarios where lighting conditions are extreme and is therefore invaluable for the perception task.

6. Conclusion

We have presented MassMIND, a comprehensive dataset containing over 2900 diverse, segmented LWIR images, and made it publicly available. This is the first maritime dataset that segments LWIR images into seven classes, with emphasis on living and non-living, static and dynamic obstacles in the water. It can be used for a variety of use cases. Additionally, any label that is not of interest can be collapsed into another label programmatically. The dataset is evaluated and benchmarked against a few industry-standard architectures.
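
As an illustration of collapsing one label into another, the sketch below remaps a mask with a lookup table. The integer class ids and the `collapse_label` helper are hypothetical and chosen only for this example; the ids stored in the released masks may differ.

```python
import numpy as np

# Hypothetical class ids; the ids used in the released masks may differ.
CLASSES = {
    "sky": 0, "water": 1, "obstacle": 2, "living_obstacle": 3,
    "bridge": 4, "self": 5, "background": 6,
}


def collapse_label(mask, source, target, classes=CLASSES):
    """Return a copy of `mask` with every `source` pixel relabeled as `target`."""
    lut = np.arange(max(classes.values()) + 1)  # identity mapping by default
    lut[classes[source]] = classes[target]      # redirect the unwanted class
    return lut[mask]                            # apply the lookup per pixel


# Tiny 2 x 3 mask of integer class ids standing in for a real annotation.
mask = np.array([[0, 0, 4],
                 [1, 3, 2]])
merged = collapse_label(mask, "living_obstacle", "obstacle")
```

Because the lookup returns a new array, the original mask is left untouched and several classes can be collapsed by chaining calls.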

Of the three classifiers evaluated, DeepLabv3 (Chen et al., 2018) performs best, followed by UNet and PSPNet. PSPNet is comparable to DeepLabv3 in all classes except living obstacles. Our research highlights the significance of LWIR for problems such as extreme light conditions and horizon detection that are common in the optical domain. In addition, the dataset tries to encompass many of the critical aspects of maritime imagery, such as static and dynamic obstacles of varying sizes, crowded shorelines, deep seas, varying weather and light patterns, and life presence on the water surface. Such a diverse LWIR dataset can be useful for improving deep learning architectures for the maritime environment. At the same time, it can complement optical datasets. The present dataset also has some limitations. Certain obstacles, such as river barges or Sea-Doos, are not captured because of the ASVs’ urban setting. The geographic coverage of the dataset is also limited to one region in the USA. As a result, the dataset does not reflect the variety of navigational markers used across world regions. This gap can be bridged through collaboration with other researchers.

LWIR images lack texture and color information, so classifying boats into subclasses will take more effort. Nevertheless, we plan to refine the current ‘obstacle’ class to indicate the type of obstacle, such as sailboats, kayaks, and merchant ships. For tug boats, cargo ships, and tankers, an additional safe-zone criterion can be incorporated. We are also exploring the labeling of data from other sensors, such as radar or lidar, synchronized with the LWIR and EO cameras. This will enable the creation of datasets and classifiers that are useful for navigation. We demonstrated a prototype that integrates inference from the LWIR sensor, radar, and lidar in Nirgudkar and Robinette (2022). We plan to refine that research further so that it can work in real time. Lastly, we will also explore the creation of an instance-segmentation architecture dedicated to LWIR images in the marine environment.

Acknowledgements

We are grateful to Brunswick® Corporation for their support. The views, opinions, and/or findings expressed are those of the authors and should not be interpreted as representing the official views of Brunswick® Corporation. We also thank the team at segments.ai for providing early access free of charge and for providing a dashboard for tracking the progress of the labeling work.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.