Abstract

A fundamental question in creativity research is whether chance models of creativity align with the idea that cognitive ability can explain individual differences in creative cognition. Using two datasets (Dataset 1: N = 462; Dataset 2: N = 331), we extended previous work on the equal odds baseline (EOB; i.e., a chance model of creativity) by using a latent variable approach to model latent residual factors corrected for fluency. We compared three measurement models (the EOB latent variable model, a residualized model, and a ratio score model), examining their reliability and their associations with cognitive abilities (i.e., fluid intelligence and working memory capacity). We found that when a chance model of creativity fits a given dataset appropriately, as was the case for Dataset 2 in our study, a cognitive interpretation of the originality factor η is warranted (i.e., around 21% of its variance can be explained by working memory capacity). Our work thus refines discussions about the compatibility of chance models with cognitive explanations of creative cognition.

Ideas or products of high creative quality must be original and effective (Amabile & Pratt, 2016; Runco & Jaeger, 2012). Chance models of creativity suggest that generating more ideas increases the likelihood of generating a high-quality idea, or, as Osborn famously stated, “quantity breeds quality” (Osborn, 1963, p. 131). Chance models of creativity are ubiquitous in creativity research and have been applied to scientific productivity (Feist, 1997; Forthmann et al., 2020a; Simonton, 2004; Sinatra et al., 2016), brainstorming research (Briggs & Reinig, 2010; Nijstad et al., 2010; Osborn, 1963; Rietzschel et al., 2007), creativity in music (Hass & Weisberg, 2015; Kozbelt, 2005, 2008), and divergent thinking (Forthmann et al., 2021b; Mouchiroud & Lubart, 2001). Previously, researchers have argued that chance models are incompatible with cognitive psychological interpretations (Rietzschel et al., 2007); in other words, high-quality ideas should not be understood as the result of careful reasoning but rather as attributable to the random generation of a large number of ideas.

However, while this may be true for a special case of chance models (Forthmann et al., 2021c), recent developments in research on chance models of creativity allow for explicit modeling of individual differences in creative thinking (Caviggioli & Forthmann, 2022; Forthmann et al., 2021c), which, in turn, may allow researchers to evaluate the relationship between creative cognition and other cognitive abilities at the between-person level. Thus, the objective of this paper is to compare three different latent variable modeling approaches to separate originality (i.e., the primary component of creativity; Diedrich et al., 2015; Pichot et al., 2024; Zhou et al., 2017) from the number of responses (i.e., fluency) to a given prompt; the three models are the equal odds baseline (EOB) model, a residualized model, and a ratio score model. Furthermore, we aim to evaluate the association of a (residual) factor of originality in each model with general cognitive functioning, as operationalized by fluid intelligence (Gf) and working memory capacity (WMC).

In the following, we first outline the EOB model, which is the focal chance model of creativity in the current work. In this section, we also introduce a latent variable extension of the EOB that allows us to model and evaluate reliable individual differences in creative thinking while explicitly accounting for the complex relationship between quantity and quality. This model is then compared to two different modeling approaches that also account for the fluency contamination in originality (Forthmann et al., 2020b; Hocevar, 1979). We then outline the relationship between cognitive abilities and creative thinking. The three models are then applied to two distinct datasets to relate individual differences in originality (as captured by the different models) to latent variables reflecting cognitive abilities.

Chance Models of Creativity and Their Cognitive Psychological Interpretation

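Simonton's equal odds baseline model (Simonton, 2004, 2010), i.e., the chance model of creativity employed in the current work, proposes that the number of creative hits $H_p$ of creator p is a linear function of the total number of productions $T_p$ of that creator:

$$H_p = \rho \, T_p, \qquad (1)$$

where ρ is the average hit ratio, that is, the ratio of average quality (hits) to average quantity (productions).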

This very basic model has been termed the “strict EOB” in the literature (Forthmann et al., 2021c), and it is most likely this extreme special case of the EOB that Rietzschel et al. referred to (i.e., “the baseline approach in its strictest form,” 2007, p. 934) when discussing a potential incompatibility between chance models of creativity and cognitive capacity explanations of creative ideational behavior (e.g., as expressed while working on divergent thinking tasks).

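To see why a cognitive interpretation is not at odds with the EOB, one must consider the most recent version of the model (Simonton, 2004, 2010), which further incorporates a random error term $u_p$:

$$H_p = \rho \, T_p + u_p. \qquad (2)$$

The term $u_p$ captures how many more or fewer hits creator p produces than would be expected from their total productivity; systematic individual differences in $u_p$ are what a cognitive explanation of creative ideation could account for.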

However, when dealing with empirical data, it is important to consider sampling variation as an additional source of variance. It is critical to determine whether $\mathrm{Var}(u_p)$ exceeds the amount of variance expected from sampling error alone. This can be tested empirically when $H_p$ is operationalized as a simple count of “hits” (i.e., the number of original responses). In many cases, however, $H_p$ represents the sum of citations received for a researcher's scholarly papers or the sum score of subjective ratings in a divergent thinking task. In these situations, testing for residual variance beyond sampling error is not straightforward. Hence, for the purpose of the current work, we propose a latent variable extension of the EOB (Caviggioli & Forthmann, 2022) to explore reliable individual differences in the residual term.
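When $H_p$ is a count of hits, one classical way to check whether $\mathrm{Var}(u_p)$ exceeds sampling error is a Pearson dispersion statistic. The sketch below assumes, purely for illustration, that under a strict EOB each $H_p$ follows a binomial distribution with $T_p$ trials and success probability ρ, estimated as the average hit ratio. The helper `eob_dispersion` and the example data are hypothetical and do not reproduce any analysis reported here.

```python
def eob_dispersion(hits, totals):
    """Pearson dispersion check for a strict EOB with count-valued hits.

    Hypothetical helper. Assumes H_p ~ Binomial(T_p, rho) for every creator p,
    with rho estimated as sum(H) / sum(T) (the average hit ratio).
    Returns rho, the Pearson chi-square statistic, its degrees of freedom,
    and the dispersion ratio chi2 / df (about 1 if only sampling error operates).
    """
    if len(hits) != len(totals) or len(hits) < 2:
        raise ValueError("need at least two creators with matching data")
    if any(t <= 0 for t in totals):
        raise ValueError("every creator needs at least one production")
    rho = sum(hits) / sum(totals)
    if not 0 < rho < 1:
        raise ValueError("rho must lie strictly between 0 and 1")
    chi2 = 0.0
    for h, t in zip(hits, totals):
        expected = rho * t                    # EOB prediction: rho * T_p
        sampling_var = t * rho * (1 - rho)    # binomial variance of H_p
        chi2 += (h - expected) ** 2 / sampling_var
    df = len(hits) - 1                        # one estimated parameter (rho)
    return rho, chi2, df, chi2 / df


# Hypothetical data: hit counts that vary far more than binomial noise allows
rho, chi2, df, ratio = eob_dispersion([0, 10, 0, 10], [10, 10, 10, 10])
print(rho, chi2, df, round(ratio, 2))  # prints 0.5 40.0 3 13.33
```

The statistic can be compared against a χ² distribution with `df` degrees of freedom; a ratio well above 1 points to residual variance beyond sampling error, whereas a ratio near 1 is what a strict EOB would produce.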

A Latent Variable Extension of the Equal Odds Baseline That Allows for Item-Specific Quantity and Quality Scores

The EOB, represented by Equation 2, is a basic linear regression model. The intercept is fixed at zero and the regression slope, represented by the average hit ratio ρ, is fixed to the ratio of average quality and average quantity. Hence, it is straightforward to estimate the EOB model as a structural equation model, and previous work has explored this option empirically (Forthmann et al., 2020a; Forthmann et al., 2021a; Forthmann et al., 2021c). Latent variable extensions of the EOB have also been proposed by modeling multiple quality indicators for the same set of inventors’ patents (Caviggioli & Forthmann, 2022). For divergent thinking tasks, we propose a latent variable extension that considers item-specific quantity and quality scores, $T_i$ and $H_i$, which correspond to fluency and summative originality, respectively, in divergent thinking research.

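First, consider summative originality $H_{ip}$ of item i and person p as a linear function of fluency score $T_{ip}$:

$$H_{ip} = \rho_i \, T_{ip} + \varepsilon_{ip},$$

where the item-specific hit ratio $\rho_i$ is fixed to the ratio of average originality and average fluency for item i, and $\varepsilon_{ip}$ is an error term.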

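Next, the error term can be split into a latent originality factor $\eta_p$ (weighted by factor loading $\lambda_i$) and an item-specific residual $\delta_{ip}$:

$$H_{ip} = \rho_i \, T_{ip} + \lambda_i \, \eta_p + \delta_{ip}.$$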
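As a minimal sketch of the fixed part of this model, the code below computes item-specific hit ratios $\rho_i = \bar{H}_i / \bar{T}_i$ and the resulting EOB residuals $\varepsilon_{ip} = H_{ip} - \rho_i T_{ip}$, which are the quantities the latent factor $\eta_p$ is meant to explain. It uses plain lists (rows are persons, columns are items). The helper name and the data are hypothetical, and this is not the estimation code used in the analyses, which fit the full model by structural equation modeling.

```python
def eob_item_residuals(H, T):
    """Item-specific EOB residuals e_ip = H_ip - rho_i * T_ip.

    Hypothetical helper. H and T are lists of rows (one row per person,
    one column per item). rho_i is fixed to mean(H_i) / mean(T_i), so no
    intercept or slope is estimated.
    """
    n_persons, n_items = len(H), len(H[0])
    rhos = []
    for i in range(n_items):
        mean_h = sum(row[i] for row in H) / n_persons
        mean_t = sum(row[i] for row in T) / n_persons
        rhos.append(mean_h / mean_t)
    residuals = [
        [h_row[i] - rhos[i] * t_row[i] for i in range(n_items)]
        for h_row, t_row in zip(H, T)
    ]
    return rhos, residuals


# Hypothetical summative originality (H) and fluency (T): 3 persons x 2 items
H = [[2.0, 3.0], [4.0, 5.0], [3.0, 4.0]]
T = [[4, 6], [4, 4], [4, 5]]
rhos, resid = eob_item_residuals(H, T)  # rhos are 0.75 and 0.8
```

Because $\rho_i$ equals the ratio of the item means, the residuals of each item sum to zero by construction, even though no intercept or slope is estimated from the data.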

Models Competing with the EOB Extension

Building on the latent variable extension of the EOB model, which addresses the issue of fluency contamination of originality (e.g., Forthmann et al., 2020b; Hocevar, 1979), two additional models also arguably address this fluency-originality contamination and can be compared against the extended EOB approach: a residualized model and a ratio score model.

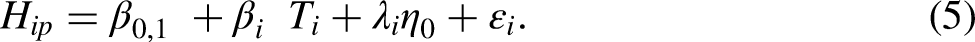

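While the EOB model is based on the assumption that “fluency breeds quality,” the residualized model offers an alternative perspective, aiming to isolate originality from fluency (“originality without fluency”). In this model, a latent originality factor η represents pure originality, with fluency being partialled out through residualization:

$$H_{ip} = \beta_{0i} + \beta_{1i} \, T_{ip} + \lambda_i \, \eta_p + \delta_{ip},$$

where the intercept $\beta_{0i}$ and the slope $\beta_{1i}$ are freely estimated for each item rather than fixed as in the EOB model.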

Competing chance models for creativity.
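For contrast with the EOB, the residualization step for a single item can be sketched as follows: intercept and slope are estimated by ordinary least squares rather than fixed, so the residuals are exactly uncorrelated with fluency in the sample. The helper `residualize_item` and the numbers are hypothetical; in the actual model these regressions are estimated jointly with the latent factor.

```python
def residualize_item(h, t):
    """OLS residuals of one item's originality scores on its fluency scores.

    Hypothetical helper. Unlike the EOB, intercept b0 and slope b1 are
    freely estimated by ordinary least squares.
    """
    n = len(h)
    mean_h = sum(h) / n
    mean_t = sum(t) / n
    sxy = sum((ti - mean_t) * (hi - mean_h) for ti, hi in zip(t, h))
    sxx = sum((ti - mean_t) ** 2 for ti in t)
    if sxx == 0:
        raise ValueError("fluency scores must vary")
    b1 = sxy / sxx
    b0 = mean_h - b1 * mean_t
    resid = [hi - (b0 + b1 * ti) for ti, hi in zip(t, h)]
    return b0, b1, resid


b0, b1, resid = residualize_item(h=[3, 5, 7, 10], t=[1, 2, 3, 4])
# b0 is about 0.5 and b1 about 2.3; residuals sum to ~0 and are uncorrelated with t
```

This in-sample orthogonality is the sense in which the residualized model removes fluency more completely than the EOB, whose fixed zero intercept and fixed slope can leave some linear association between residuals and fluency.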

Fluency, Originality, and Fluid Cognitive Abilities

Creative ideation describes the process of generating divergent ideas (Barbot, 2018; Fink & Benedek, 2014). Divergent thinking tasks, the most commonly used operationalization of creative ideation (Kaufman et al., 2008), require test takers to be both fluent (quantity of responses; e.g., Runco, 2020) and original (quality of responses; e.g., Carroll, 1993) in their responses to an open-ended task or problem. Both aspects of creative ideation have been subsumed under the larger first-order factor of retrieval from long-term memory in contemporary intelligence structure models (e.g., Carroll, 1993; Schneider & McGrew, 2018), but this classification has recently been questioned (Weiss et al., 2024). However, this possible reclassification of structural aspects does not necessarily change the fact that both aspects are inherently interwoven with fluid abilities that are essential for a wide range of intelligence-related performances, and that both may also contribute to generating original ideas (cf. Gerwig et al., 2021). In the following, we outline the current understanding of fluid abilities in order to establish how they might be related to a latent originality factor η derived from the models described above.

Fluid abilities play a critical role in contemporary models of intelligence (e.g., Marrs, 2011; Schneider & McGrew, 2018). In fact, fluid intelligence (Gf) is often considered the most crucial first-order factor of intelligence (Carroll, 1993), and it has even been equated with general intelligence (e.g., Gustafsson, 1984; Kan et al., 2011; Undheim & Gustafsson, 1987). The term “Gf” refers to one's capacity to solve novel problems in unfamiliar situations (e.g., Cattell, 1971). However, performance in prototypical Gf tests (Wilhelm, 2005) is known to depend on more refined cognitive operations, like maintaining, manipulating, and storing single units of information (Wilhelm & Schroeders, 2019). These cognitive operations align with contemporary definitions of working memory, such as the binding hypothesis of working memory (e.g., Wilhelm et al., 2013). Working memory is understood as a cognitive system that supports non-automatized mental processes by retaining information while, at the same time, also allowing for the processing of new inputs (Conway et al., 2008). With that, working memory can be understood as the fundamental basis of more complex cognitions, like reasoning (e.g., Kane et al., 2005; Kyllonen & Christal, 1990; Oberauer et al., 2005; Wilhelm et al., 2013) or retrieving information from long-term memory (cf. Goecke et al., 2024; Rosen & Engle, 1997). The limited capacity of working memory is often termed “working memory capacity” (WMC; cf. Benchmark 12.1 in Oberauer et al., 2018).

Both Gf and WMC can be considered important for creative ideation. Prototypical creativity tests, or divergent thinking tests, often require a combination of two processes: (a) retrieving existing information about a given problem from long-term memory and (b) restructuring this information and combining it into something novel. The latter process in particular may require fluid abilities, although the former also draws on fluid abilities such as reasoning ability or WMC (Goecke et al., 2024). For example, in tasks like the Alternate Uses Task (Wallach & Kogan, 1965), in which individuals are asked to come up with creative uses for an everyday object such as a brick, individuals must retrieve existing knowledge about the object from long-term memory (Gilhooly et al., 2007) and combine this information with novel thoughts (Paulus & Brown, 2007).

In this process, working memory might act as a bottleneck. That is, individuals with higher WMC may be better able to consider simultaneously the information that they draw from long-term memory and, hence, may have more opportunity to generate new ideas or novel thoughts. Thus, creative ideation, which involves activating stored knowledge and processing this information into novel outcomes, strains working memory. The ability to retain one piece of information while processing another is a key function of working memory in this context. As Gf is highly dependent on WMC (Kane et al., 2005; Oberauer et al., 2005; Süß et al., 2002), the role of Gf for creative ideation can be understood similarly.

Distinguishing between fluency and originality is theoretically straightforward, but empirically and psychometrically it is much more difficult (e.g., Acar, 2023; Forthmann et al., 2020b; Hocevar, 1979; Weiss et al., 2024). In particular, measuring originality is very challenging, as the methods available to control for fluency, i.e., residualization and ratio scores (Forthmann et al., 2020b; Hocevar, 1979; Hocevar & Michael, 1979), are theoretically (e.g., Cronbach, 1941; Morley, 1930) and empirically (e.g., Arndt et al., 1991; Hocevar, 1979; Hocevar & Michael, 1979) associated with decreases in reliability. Hence, the unique association of originality with fluid abilities like WMC is difficult to pin down. Although previous research has indeed investigated the relationship between these constructs (e.g., Gong et al., 2023), the available studies did not control for a possible fluency-originality contamination (Lu et al., 2022).
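To make the reliability concern concrete: under classical test theory, with standardized scores and measurement errors uncorrelated with each other and with true scores, the reliability of a score $y$ residualized on $x$ is $(\rho_{yy} + r_{xy}^2 \rho_{xx} - 2 r_{xy}^2) / (1 - r_{xy}^2)$. The sketch below implements this expression; the helper name and the input values are hypothetical and serve only to show the size of the drop.

```python
def residual_score_reliability(rel_y, rel_x, r_xy):
    """Reliability of y residualized on x under classical test theory.

    Hypothetical helper. Assumes standardized x and y, and measurement errors
    that are uncorrelated with each other and with true scores, so that the
    true-score covariance equals the observed correlation r_xy.
    """
    if not -1 < r_xy < 1:
        raise ValueError("r_xy must lie strictly between -1 and 1")
    # Residual e = y - r_xy * x; its true-score part is T_y - r_xy * T_x
    true_var = rel_y + r_xy ** 2 * rel_x - 2 * r_xy ** 2
    observed_var = 1 - r_xy ** 2
    return true_var / observed_var


print(round(residual_score_reliability(0.80, 0.80, 0.60), 3))  # prints 0.575
```

With two scores of reliability .80 that correlate .60, the residualized score retains a reliability of only about .58; when the correlation is zero, the expression reduces to the reliability of $y$.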

As stated above, theoretical and empirical evidence suggests that complex cognitions play a crucial role in creative ideation (cf. Sowden et al., 2015). However, relatively few studies have investigated the relationship between WMC and creative ideation, and the available results are conflicting (Benedek et al., 2014; De Dreu et al., 2012; Gerver et al., 2023; Gong et al., 2023; Lee & Therriault, 2013; Lu et al., 2022; Orzechowski et al., 2023; Weiss et al., 2021; Weiss et al., 2024). While meta-analytic studies tend to report a small, positive, and significant relationship (r = .09, 95% CI [.07, .10], k = 176, m = 29; Gerver et al., 2023; r = .08, 95% CI [.05, .12], k = 75, m = 28; Gong et al., 2023), individual studies often report stronger relations between divergent thinking and working memory (e.g., β = .29, Benedek et al., 2014; β = .53 for general cognitive abilities marked by WMC and originality, Weiss et al., 2024). The diversity in these findings might be attributable to different operationalizations in the assessments (e.g., fluency vs. originality, Reiter-Palmon et al., 2019; updating vs. binding, Wilhelm et al., 2013), different scorings, and different data treatments (Gerver et al., 2023; Weiss et al., 2024).

Because fluency and originality are confounded in divergent thinking tasks, it is difficult to disentangle the degree to which WMC, or cognitive abilities in general, might affect the quality of ideas (i.e., originality in this context). Given the theoretical importance of WMC in creative ideation and the heterogeneous findings, it is worthwhile to investigate this relationship further based on the models outlined above.

Aims of the Current Study

In the current study, we extend previous work on the EOB through a latent variable analytic approach and compare competing modeling approaches for the fluency-originality contamination. We compare three different models: an EOB model, a residualized model, and a ratio score model with respect to the interpretation of the latent originality factor η. The three models are evaluated in terms of their fit to two distinct datasets, the reliability of η, and its validity in light of its correlation with cognitive abilities (Dataset 1: Gf, Dataset 2: WMC).

First, we examined whether the EOB model could be fitted to divergent thinking data. Notably, however, previous correlational findings related to the EOB model have been rather heterogeneous (Acar et al., 2023; Forthmann et al., 2020b): Several studies have shown a positive correlation between fluency and ratio scores (e.g., Kleibeuker et al., 2013; Mouchiroud & Lubart, 2001; Plucker et al., 2014), some have shown a negative correlation between fluency and ratio scores (e.g., Plucker et al., 2011; Silvia et al., 2014), and others have shown correlations between fluency and ratio scores that were negligibly different from zero (e.g., Forthmann et al., 2021b). Thus, we did not necessarily expect a good fit of the EOB to the data.

However, if the EOB model displayed a reasonable fit to the data, we expected all three models to fit comparably well. In this situation, we further expected the reliability of η to be rather homogeneous across the models. Second, assuming that η reliably captures originality, we expected it to correlate meaningfully with individual differences in cognitive abilities (Gf and WMC). Overall, more heterogeneous findings were anticipated if the EOB did not fit the data satisfactorily. The aim of investigating these research questions empirically was to gain insight into the fundamental question of whether chance models of creativity align with a cognitive ability explanation of individual differences in creative cognition (cf. Rietzschel et al., 2007).

Method

Transparency and Openness

We report how we determined our sample size, all data exclusions (if any), all manipulations, and all measures in the study, and we follow JARS (Appelbaum et al., 2018). We provide all data and materials necessary to reproduce the analyses in an online repository: https://osf.io/tz9ge/. Data were analyzed using R, version 4.4.1 (R Core Team, 2024) and the following packages: lavaan (version 0.6-18; Rosseel, 2012), psych (version 2.4.3; Revelle, 2023), semTools (version 0.5-6; Jorgensen et al., 2022), and tidyverse (version 2.0.0; Wickham et al., 2019). This study's design and its analysis were not pre-registered.

Participants and Procedure

In this work, we conducted a secondary analysis of two openly available datasets.

Dataset 1

The first sample included adolescents from lower secondary and higher secondary schools (public and private; from rural areas and cities) from Austria (Neubauer et al., 2018). The data were provided by Neubauer and colleagues (2018; https://osf.io/v8e5x/). The measures analyzed in the manuscript at hand were part of a larger data collection regarding the self-other knowledge asymmetry. As described by Neubauer and colleagues, the study was approved by the Ethics Committee of the University of Graz (Austria) and the school council of the Austrian province Styria. All students gave informed consent. The final sample consisted of students recruited at 13 schools—after excluding participants with missing values as reported by Neubauer et al.—and included 462 students (55.4% female). Their ages ranged from 13 to 20 years.

Dataset 2

The second sample consisted of adult participants between 18 and 45 years. The study for the second dataset was advertised through multiple channels (e.g., social media, mailing lists, flyers, and advertisements). The measures analyzed in the manuscript at hand were part of a larger multivariate data collection. The computerized cognitive test batteries were administered in group testing sessions in a laboratory (see Goecke et al., 2024, and Weiss et al., 2024, for a more comprehensive description). All participants provided written consent, and the study was approved by the local ethics committee of Ulm University. The final sample we used for statistical analysis consisted of N = 331 participants. These participants were 65.6% female and ranged in age from 18 to 42 years (M = 25.35 years, SD = 5.41).

Measures

Dataset 1

Indicators of Divergent Thinking for Both Datasets.

Dataset 2

Analytical Approach

Structural equation modeling (SEM) was carried out with the R package lavaan (Rosseel, 2012). We used full information maximum likelihood estimation under the assumption of missing at random to combine handling of missing data and parameter estimation in a single step (Enders, 2001; Schafer & Graham, 2002). However, participants with missing values on all observed variables were excluded in each of the analyses. A maximum likelihood estimator with robust standard errors (MLR) was used to address potential deviations from multivariate normality.

The following fit statistics were considered to indicate a good model fit: CFI (comparative fit index) ≥ .95, RMSEA (root mean square error of approximation) ≤ .06, and SRMR (standardized root mean square residual) ≤ .08 (Hu & Bentler, 1999; West et al., 2012). For acceptable model fit, these boundaries were used: CFI ≥ .90, RMSEA ≤ .08, and SRMR ≤ .10 (Bentler, 1990; Browne & Cudeck, 1992).
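The cutoffs above can be expressed as a simple decision rule. This sketch assumes that a label applies only when all three indices meet the respective cutoffs, which the text implies but does not state explicitly; `classify_fit` is a hypothetical helper and not part of lavaan or semTools.

```python
def classify_fit(cfi, rmsea, srmr):
    """Label model fit with the cutoffs listed in the text (hypothetical helper).

    Assumption: a label requires all three indices to meet its cutoffs.
    """
    if cfi >= 0.95 and rmsea <= 0.06 and srmr <= 0.08:
        return "good"
    if cfi >= 0.90 and rmsea <= 0.08 and srmr <= 0.10:
        return "acceptable"
    return "poor"


# Hypothetical index values: excellent CFI and SRMR, but RMSEA above .06
print(classify_fit(cfi=0.99, rmsea=0.07, srmr=0.02))  # prints acceptable
```

Under this all-indices rule, a single index such as the RMSEA can downgrade an otherwise well-fitting model to "acceptable".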

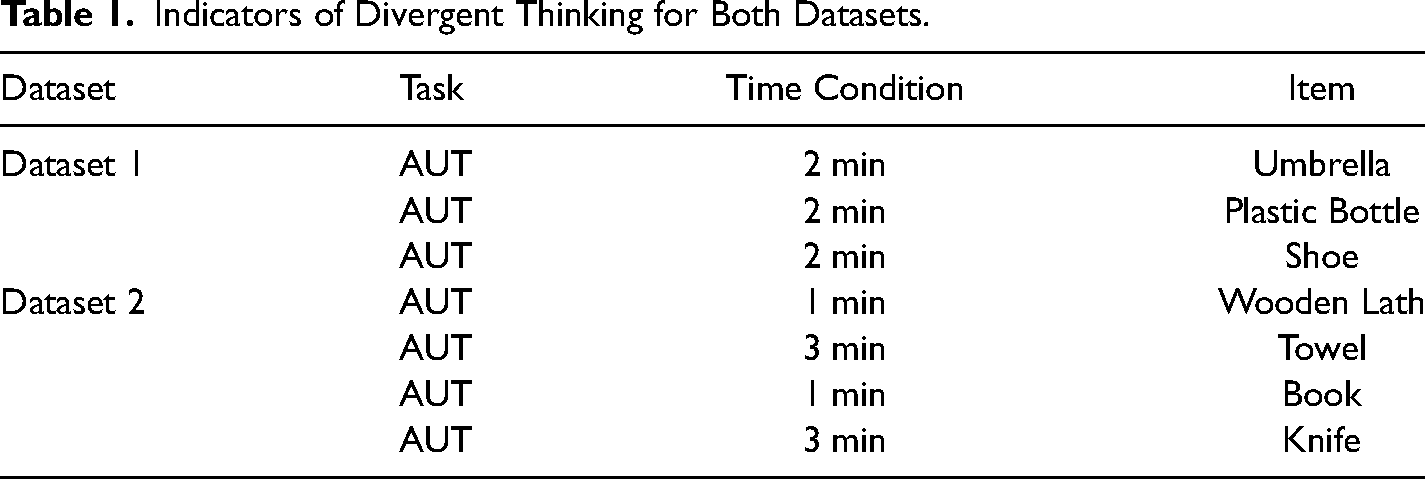

In order to examine the EOB model, item-specific originality scores were regressed on item-specific fluency scores (see Figure 2). The intercepts in these regressions were fixed to zero, and the regression coefficients were constrained to the ratio of average originality and average fluency (cf. Caviggioli & Forthmann, 2022; Forthmann et al., 2021a). In the EOB model, item-specific originality scores were additionally predicted by a latent residual originality factor. For model identification, the loading of the first item on this originality factor was fixed to one. Next, the residualized model was estimated analogously to the EOB model, except that the intercepts and slopes in the item-specific regressions of originality on fluency were freely estimated (cf. Figure 2); thus, the EOB model is a special case of the residualized model. Finally, the ratio score model was estimated as a simple unidimensional factor model with the item-specific originality ratio scores as observed indicators (see Figure 2). Here, too, the loading of the first item was fixed to one for identification purposes. We note that the ratio score model is not a special case of the other two models, and vice versa.

Schematic presentation of the EOB model, the residualized model, and the ratio score model for Dataset 1. Notes. Upper chart: EOB model; middle chart: residualized model; lower chart: ratio score model.
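With three indicators, as in Dataset 1, a one-factor measurement model with the first loading fixed to one is just identified: the three indicator covariances satisfy $c_{ij} = \lambda_i \lambda_j \mathrm{Var}(\eta)$ and determine the factor variance and the two free loadings exactly. Counting confirms zero degrees of freedom (six variances and covariances against two loadings, one factor variance, and three residual variances), which is consistent with the ratio score model being saturated in Dataset 1. The sketch below solves these moment equations, assuming uncorrelated indicator residuals; it illustrates marker-variable identification and is not the maximum likelihood estimator used in the analyses. The helper name is hypothetical.

```python
def one_factor_three_indicators(c12, c13, c23):
    """Solve a just-identified one-factor model with three indicators.

    Hypothetical helper. Model: y_i = lambda_i * eta + delta_i with
    uncorrelated residuals and lambda_1 fixed to 1, so that
    cov(y_i, y_j) = lambda_i * lambda_j * var(eta).
    """
    if c12 == 0 or c13 == 0 or c23 == 0:
        raise ValueError("all covariances must be non-zero")
    var_eta = c12 * c13 / c23   # equals lambda_1**2 * var(eta) = var(eta)
    lambda_2 = c23 / c13        # (lambda_2 * lambda_3) / (lambda_1 * lambda_3)
    lambda_3 = c23 / c12        # (lambda_2 * lambda_3) / (lambda_1 * lambda_2)
    return var_eta, (1.0, lambda_2, lambda_3)


# Hypothetical covariances generated from var(eta) = 2, loadings (1, 0.5, 1.5)
print(one_factor_three_indicators(1.0, 3.0, 1.5))  # prints (2.0, (1.0, 0.5, 1.5))
```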

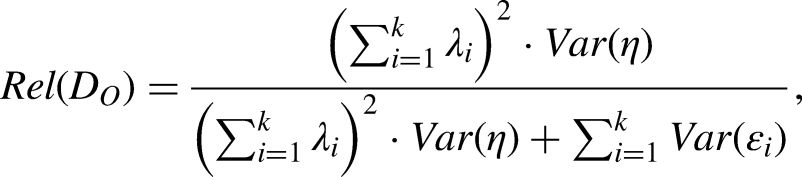

Reliability estimates were based on Raykov's approach to reliability estimation (Raykov, 2001), which we implemented in our syntax and adapted for all evaluated models. For example, the reliability of variation in a residual originality composite score (i.e., after statistically controlling for the fluency contamination effect) can be estimated by the following formula (cf. Raykov, 1997):
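As a hedged sketch, assuming the adaptation follows the usual congeneric composite form (the exact adapted expression may differ), the reliability of the unit-weighted composite of the indicators of η is $(\sum_i \lambda_i)^2 \mathrm{Var}(\eta) / [(\sum_i \lambda_i)^2 \mathrm{Var}(\eta) + \sum_i \theta_i]$, where $\theta_i$ denotes the residual variance of indicator i. The function below computes this quantity from model estimates; its name and the example values are hypothetical.

```python
def composite_reliability(loadings, var_eta, residual_vars):
    """Congeneric composite reliability of a unit-weighted indicator sum.

    Hypothetical helper. Assumes uncorrelated indicator residuals:
    true variance = (sum of loadings)**2 * var(eta),
    error variance = sum of residual variances.
    """
    if len(loadings) != len(residual_vars):
        raise ValueError("need one residual variance per loading")
    true_var = sum(loadings) ** 2 * var_eta
    return true_var / (true_var + sum(residual_vars))


# Hypothetical estimates: loadings (1, 0.5, 1.5), var(eta) = 2, residual variances 2 each
print(composite_reliability([1.0, 0.5, 1.5], 2.0, [2.0, 2.0, 2.0]))  # prints 0.75
```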

Results

Descriptive statistics and bivariate manifest correlations can be found in the OSF repository (https://osf.io/tz9ge/). First, we examined the three different modeling approaches that all account for fluency contamination in originality. The path models of the EOB model, the residualized model, and the ratio score model (see Figure 1) are displayed schematically for Dataset 1 in Figure 2. For Dataset 2, these models are expanded by one item, thus including four items in total (see Table 1). In all models, a latent variable η is estimated that represents a different interpretation of originality. As described in the introduction, in the EOB model, η represents the originality remaining after accounting for what can be expected from fluency; in the residualized model, η represents pure originality, free of fluency; and in the ratio score model, η represents originality weighted by fluency.

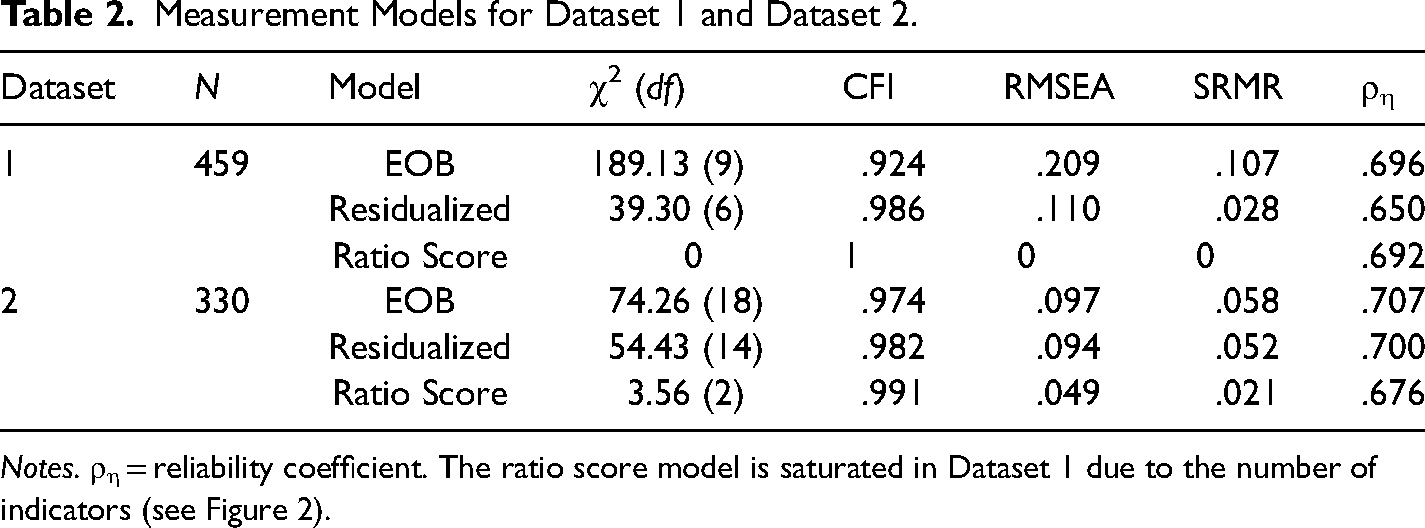

In Table 2 we display the fit indices for the competing models (illustrated for Dataset 1 in Figure 2). Furthermore, we present a reliability coefficient of the latent originality factor η that is based on the adaptation of Raykov's reliability estimate (Raykov, 1997). As displayed in Table 2, the EOB model does not fit the data satisfactorily in Dataset 1; however, the fit of the EOB model is acceptable in Dataset 2. The residualized model and the ratio score model fitted the data well in both datasets. In Dataset 1, the reliability of η in the residualized model (.65) was lower than in the ratio score model (.69). Given the misfit of the EOB model in Dataset 1, the reliability of η was overestimated (.70). The reliability estimates of η in Dataset 2 show less heterogeneous results across the three models compared to the results in Dataset 1.

Measurement Models for Dataset 1 and Dataset 2.

Notes. $\rho_\eta$ = reliability coefficient of η. The ratio score model is saturated in Dataset 1 due to the number of indicators (see Figure 2).

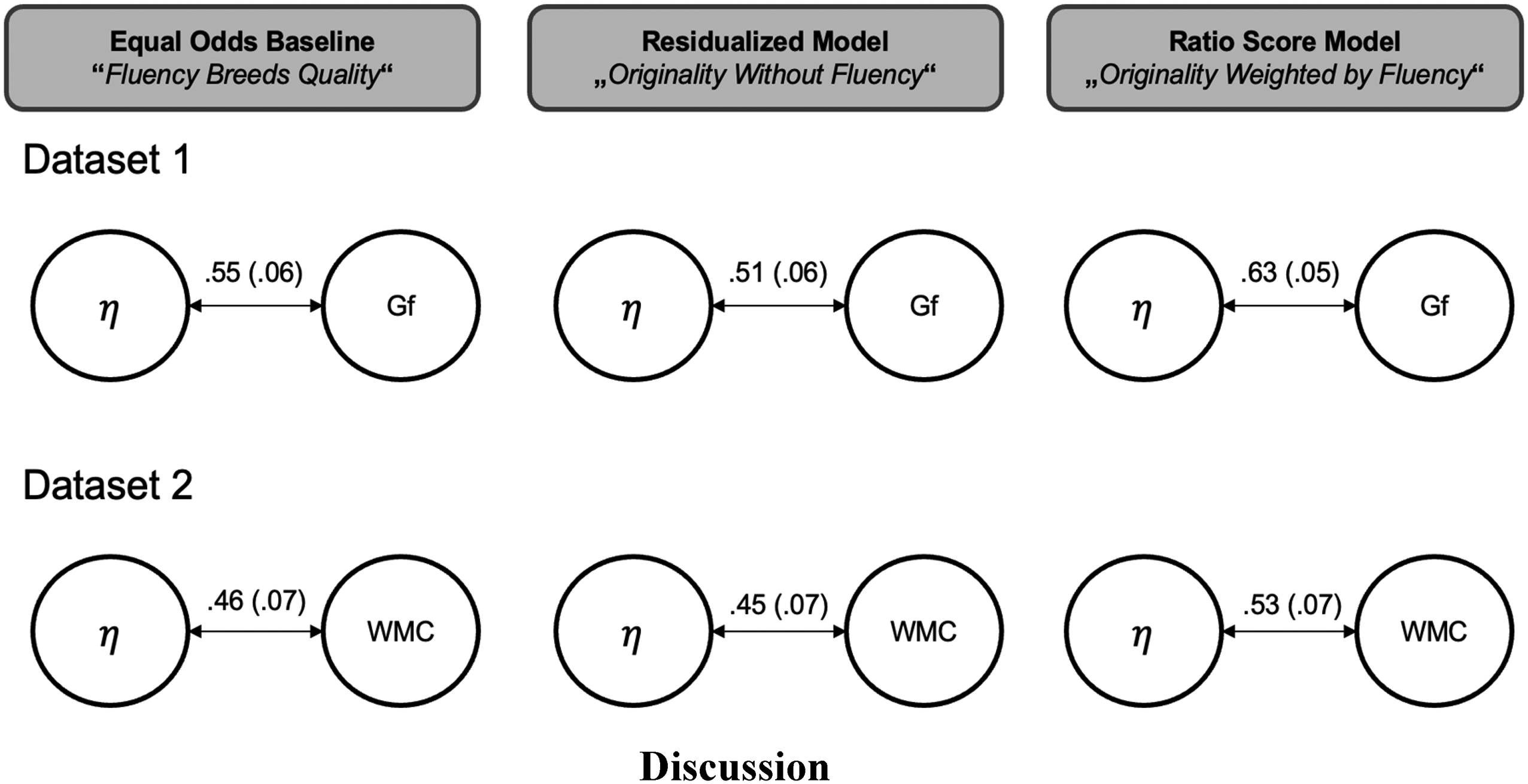

Second, we investigated the relationship between the latent originality factor η and Gf (Dataset 1) and WMC (Dataset 2) in all three models. This is shown schematically in Figure 3. In Dataset 1, the correlation between η in the ratio score model and Gf is higher than the correlation between originality and Gf in the models that disentangle fluency and originality (EOB model and residualized model). The same pattern emerges in Dataset 2 for the relationship between originality and WMC. Overall, the relationship between η and WMC is somewhat weaker than that between η and Gf, but both relationships are statistically meaningful.

Latent correlations between η and Gf in Dataset 1 and between η and WMC in Dataset 2 for each of the proposed models (standard errors in parentheses).

Discussion

In this manuscript, we examined the compatibility of a cognitive psychological interpretation of creativity with chance models of creativity, which had been previously questioned (e.g., Rietzschel et al., 2007). Using latent variable modeling, we conducted a comparative analysis between an EOB model (i.e., a chance model of creativity) and two alternative models, a residualized model and a ratio score model. Our findings suggest that when the EOB model fits the data, the results of the three models are largely comparable. It is precisely in this situation that we posited the existence of a reliable latent originality factor η that shares a substantial amount of variance with Gf or WMC. Consequently, our approach diverges from previous work that presumed a fundamental incompatibility between chance models of creativity and psychological cognitive interpretations.

Regarding our first research question, we found that the EOB model could not be fitted to Dataset 1, whereas the other two models (residualized model and ratio score model) fitted this dataset well. The misfit of the EOB model in Dataset 1 can be traced back to manifest positive correlations between T and H/T (see the OSF repository: https://osf.io/tz9ge/), which are not compatible with the underlying assumptions of the EOB model. In this context, the EOB model partials out fluency less completely than the residualized model does. Consequently, the reliability of η was overestimated in the EOB model relative to the residualized model in Dataset 1. The reliability estimates of η in the residualized model and the ratio score model in Dataset 1 differ slightly, which can be attributed to the partialling out of construct-relevant variance in the residualized model that is not partialled out in the ratio score model. As a result, the ratio score model displayed higher reliability. In Dataset 2, all three models fitted the data comparably well (with the RMSEA as a notable exception), leading to less heterogeneous results across the three models (see, for example, the reliability estimates for η).

Regarding the second research question, we analyzed whether the different interpretations of the originality factor η correlated meaningfully with Gf and WMC and, thus, with basic factors of cognitive ability. We found that η was significantly positively correlated with both constructs in all models; in fact, all analyses yielded moderate to large correlations. However, because the EOB model did not fit Dataset 1 well, the correlations with Gf are better interpreted based on the other two models. In the residualized model, η (i.e., the pure originality factor) correlated strongly with Gf, but this correlation was weaker than in the ratio score model. This difference in effect sizes can be attributed to the fact that more construct-relevant variance is removed from η in the residualized model when the EOB does not fit well due to positive correlations between fluency and manifest ratio scores. In this situation, a cognitive interpretation of the EOB model is not straightforward. In Dataset 2, where the EOB model fitted reasonably well, we found that WMC was similarly related to η in all three models. In sum, Dataset 2 shows that about 21% of the variance in η is shared with WMC, implying that a cognitive interpretation is not at odds with equal odds.

Are Chance Models of Creativity Compatible with a Cognitive Interpretation of Individual Differences in Creative Cognition?

The EOB model can be estimated using structural equation modeling (Forthmann et al., 2021a; Forthmann et al., 2021c), and in this paper we proposed a latent variable extension of the EOB for its application to divergent thinking tasks. The poor fit of the EOB model in Dataset 1 and its mostly good fit in Dataset 2 call into question the generalizability of the EOB model across different item sets and datasets. Nevertheless, Dataset 2 suggests that a cognitive interpretation of η in the EOB model is possible and meaningful.

Hence, our theoretical arguments and empirical findings support the notion that when a chance model of creativity is deemed to be an appropriate fit for a given dataset, as evidenced by Dataset 2 in our study, a cognitive interpretation of η is warranted. Conversely, when a chance model is deemed to be an inappropriate fit for a given dataset, as evidenced by Dataset 1 in our study, a cognitive interpretation of η is not straightforwardly applicable—at least not in the context of the EOB. Of course, a cognitive interpretation of individual differences in η further requires that there is substantial variance of the latent variable. In other words, the EOB model should not reduce to a strict EOB model in which residual variation can be solely explained by sampling error (Forthmann et al., 2021c). Thus, our work contributes to and refines related discussions that have previously viewed chance models of creativity as being incompatible with such a cognitive interpretation (Rietzschel et al., 2007).

Furthermore, we found that the ratio score model provided the best model fit across both datasets. Notably, the lower reliability in the residualized model suggests that attempting to isolate pure originality might introduce measurement instability. This calls into question the appropriateness of removing fluency (residualized model) or removing what can be explained by fluency (EOB model) and, in turn, raises the question of whether originality is more appropriately measured when fluency is accounted for but not entirely removed (e.g., originality weighted by fluency, as specified in the ratio score model). Nonetheless, the deterioration of model fit for the residualized and EOB models was small, indicating that removing fluency-originality contamination is possible, reliable, and interpretable.

(Pure) Originality and Cognitive Abilities

First, and importantly, the correlations between the specified originality factors and cognitive abilities (Gf and WMC) were of similar magnitude across models and datasets. This implies that cognitive abilities are important for being original, regardless of whether we consider originality as being free of fluency or whether originality is weighted by fluency. Based on three competing models and two datasets, we showed that the impact of cognitive abilities on different interpretations of originality might be even larger than previously expected (Benedek et al., 2014; De Dreu et al., 2012; Weiss et al., 2021). However, the stronger relationship between η (in the ratio score model) and fluid abilities emphasizes the importance of considering fluency when examining cognitive correlates of originality.

In this sense, our findings suggest that the ratio score can be interpreted as an indicator of the efficiency of the ideational process. Accordingly, by failing to account for the role of fluency in this process, the disentangling models (the EOB and residualized models) may underestimate the cognitive correlates of originality. However, prior work did aim to model individual differences in hit rates by adding a residual term to the EOB (Simonton, 2004, 2010), and differences in results between the EOB and the ratio score model (when the EOB model fits a given dataset reasonably well) could be due to technical reasons that are not yet fully understood. Thus, further methodological work on the latent variable extension of the EOB may be needed.

Finally, we note that the weaker but still meaningful relationship between WMC and originality (compared with the relationship between Gf and originality) suggests that, while originality is linked to cognitive abilities, fluid reasoning might be a more critical factor than working memory in the creative process. On the other hand, the correlation between originality and Gf might be inflated, because crystallized affordances are suspected to be higher in verbal and numerical Gf tests than in visual WMC tests (cf. Wilhelm & Kyllonen, 2021).

Limitations

Our study compared three measurement model approaches for addressing the fluency-originality contamination in two datasets that included different Alternate Uses Tasks. We used different datasets and stimuli to support the generalizability of our results. However, generalizability is limited to the Alternate Uses Task, and the misfit of the EOB model in Dataset 1 suggests that the model may not generalize across different sets of task prompts. Therefore, future studies should extend these analyses to other stimuli, especially to other divergent thinking tasks, because limiting a construct to one task (as here, where alternate uses served as the only marker of creative cognition) is always questionable (e.g., Campbell & Fiske, 1959; Saretzki et al., 2024).

Furthermore, the interpretation of the latent originality factor η varies between the models (e.g., pure originality without fluency in the residualized model). Despite these differing interpretations of η, the correlations of η with cognitive abilities were quite similar across models (e.g., in Dataset 2, ranging from .45 to .53). This implies that the influence of cognitive abilities does not vary much across different interpretations of originality. Future studies should, therefore, investigate further covariates for which the differences in the interpretation of η between the models lead to greater variation in the correlation with the covariate. Such covariates could include personality as well as covariates focused on originality, such as creative achievements (e.g., Diedrich et al., 2018).

Conclusion

Our results indicate that, while the EOB model did not fit all datasets reasonably well, the ratio score model showed the most robust correlations with cognitive abilities, perhaps underscoring the pivotal role of fluency in conjunction with originality, which together indicate the efficiency of the ideational process. However, the fact that the residualized model also fitted the data well in both datasets suggests that originality may be meaningfully represented free of fluency (depending on the reliability of the latent factor). Thus, depending on the research question, one might even choose a residualized model. We argue that theoretical deliberations should always guide the decision of whether construct-relevant variance needs to be partialled out.

Overall, this research advances the field's understanding of how fluency and originality in creative thinking can be modeled within a chance model of creativity (and related models) and demonstrates that cognitive abilities significantly relate to individual differences in creative performance. Future research should aim to refine these models further and explore their applicability across diverse creative tasks and populations, while also considering practical implications for assessing and fostering creativity in educational and organizational settings. For example, a timely question would be to evaluate whether individual differences in creative cognition persist and can be modeled and explained in accordance with the EOB, particularly in light of the potential impact of homogenization on creative performance in the age of artificial intelligence (Anderson et al., 2024).

Acknowledgements

We thank Amina Rajakumar, Jasmin Thelen, and Tim Trautwein for their support in data collection for Dataset 2. We are grateful for the opportunity to analyze the dataset published by Neubauer and colleagues (2018), and we thank Aljoscha Neubauer, Anna Pribil, Alexandra Wallner, and Gabriela Hofer for permitting publication of the results. We thank Celeste Brennecka for proofreading our manuscript.

Data Availability

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The research project was funded by Ulm University (Graduate and Professional Training Center Ulm, 029/126/P/IIP).

Ethical Approval and Informed Consent Statements

This work relies on secondary analysis of openly available datasets; ethical approval was therefore not required. The primary studies from which the data were taken, however, were approved by local ethics committees (cf. Neubauer et al., 2018; Weiss et al., 2024). In both primary studies, participants either gave written informed consent to participate (Weiss et al., 2024) or met the requirements for consent in accordance with country-specific laws (Neubauer et al., 2018).