Abstract

Public administration scholars are devoting increasing attention to the concept of reputation. The emphasis reflects a long-standing concern in the field with the sources of power and influence on administrative processes. This study extends the investigation of reputation from organizational reputation to reputational signals regarding public sector professions. We begin with a definition of reputational signals. We then develop a survey instrument that measures reputational signals from two signalers: elected officials and people close to respondents. Results are presented for internal consistency, exploratory and confirmatory factor analyses, convergent and discriminant validity, and average variance extracted. Next, we conduct a path analysis to test the effects of reputational signals regarding public school teachers on two outcomes using two staggered survey instruments with 588 US adults. We find that reputational signals from both types of signalers are positively and significantly associated with the perceived prestige of the teaching profession. Furthermore, reputational signals from people close to respondents are directly and positively associated with support for teacher autonomy. In contrast, reputational signals from elected officials do not have a statistically significant association with support for teacher autonomy. We conclude by discussing avenues for future research.

Keywords

Bureaucratic reputation has garnered increasing attention in public administration recently (Carpenter, 2010; Carpenter & Krause, 2012; Busuioc & Rimkutė, 2020; Bertelli & Busuioc, 2021), but the concept is rooted in the field's distant past. Although Norton Long (1949) did not use the term reputation, there is little doubt that the “administrative rationality” long associated with “the power to act” was grounded in reputation. As in Long's seminal essay, the unit of analysis in recent research about reputation is the agency or program, exemplified by research focusing on the power of the U.S. Food and Drug Administration (Carpenter, 2010) and the European Union's regulatory state (Busuioc & Rimkutė, 2020).

A line of research that predates both Norton Long and more recent attention to reputation is Leonard White's research on the “prestige value” of public employment (White, 1929). White, the founding editor of

Overall, the study of reputation has focused on the reputation of public agencies, not reputational signals regarding public sector professions. Thus, relatively little attention has been devoted to understanding whether criticisms and praise of public sector professions from signalers affect support for public employees, influence public employees’ behavior and attitudes, or whether reputational signals relate to organizational or bureaucratic reputation. Public employees are sensitive to signalers’ diverse assessments of their work and have incentives to protect their reputations and avoid any reputational damage (Abner et al., 2020). The way that signalers view public employees matters because reputation can provide them with a “protective shield” against antagonistic criticisms from external signalers (Carpenter & Krause, 2012).

In this study, we seek to fill gaps in the literature by proposing a definition of reputational signals based on a review of relevant literature. We then turn to developing a survey instrument that can be used to measure reputational signals from multiple signalers, including elected officials and people close to respondents. After identifying the contents of the scale, we explain the data and descriptive statistics associated with testing its reliability and validity. We present results for internal consistency, exploratory and confirmatory factor analyses, convergent and discriminant validity, and average variance extracted (AVE). Next, we conduct a path analysis to test the effects of reputational signals on two outcomes using two staggered survey instruments. We conclude by discussing future research that could be performed using the reputational signals instrument.

Defining Reputational Signals

We define reputational signals as what stakeholders have heard about a profession's work ethic, competence, accountability, and motives (Brown et al., 2006). These dimensions draw heavily on Hubbell's (1991) work. Hubbell reviewed Ronald Reagan's public papers to understand how Reagan succeeded in tapping into the public's ire with government employees. He found that Reagan described public employees using four symbols: loafers, incompetent buffoons, good ole’ boys, and tyrants. These symbols speak to public employees’ work ethic, competence, accountability, and motives.

These dimensions intersect with other conceptualizations of reputation directed at organizations and bureaucracy as the units of analysis. Carpenter (2010), for instance, conceptualizes organizational reputation as reflecting an organization's performance, morality, procedure, and technical competence. Other research about reputation suggests it represents the views of “signalers” regarding “perceived performance, moral values, and/or technical expertise” (Maor et al., 2013, p. 583) or “performance, technical expertise, procedural legality, or morality” (Gilad et al., 2018, p. 1).

Distinguishing Professional from Bureaucratic Reputation and Public Sector Stereotypes

Although reputational signals intersect with the concept of bureaucratic or organizational reputation, the two constructs also differ in important ways. The primary distinction, as noted above, is the unit of analysis. Organizational reputation focuses on the agency (Overman et al., 2020), organization, or bureaucracy (Lee & Van Ryzin, 2019). This is a meaningful difference, “given that judgments are made about whole public sector professions—not just about government bureaucracies” (Abner et al., 2020, p. 1025). While public sector stereotypes include the profession as the unit of analysis, neither bureaucratic reputation scales nor public sector stereotypes are designed to assess the reputational signals that individuals receive from other signalers (i.e., what respondents have heard from others) (Willems, 2020; Döring & Willems, 2023; Neo et al., 2023). By identifying the reputational signals that individuals receive, we can assess which signalers have the largest impact on the attitudes and behavior of the general public toward public employees and their comparative impact on the attitudes and behavior of public employees themselves.

A more subtle distinction is that research about organizational reputation often focuses not just on organizations but specifically on regulatory organizations or agencies. An important by-product of the focus on regulatory organizations is that it contextualizes the investigation of reputation in a policy arena where the choices of regulators may be accepted or contested based on their efficacy for achieving policy goals. But what about nonregulatory organizations, such as schools, police departments, fire departments, and other public service providers? Their reputations may depend more on the behavior of street-level bureaucrats who operate in a less stylized, more discretionary setting. Can their organizational reputation be predicted by the same mechanisms and influenced by the same levers? If not, then investigating reputational signals regarding the workforce can extend our understanding in meaningful ways that may not be discovered using current approaches to reputation research.

Factors Shaping the Reputation of Public Sector Professions

Regardless of the focal unit of analysis in reputation research, most studies share at least two commonalities. One, which we have already highlighted, is that reputation is grounded in assessments regarding a few salient features of the focal enterprise—typically features related to performance, technical expertise or competence, and morality. The second commonality is that reputation is affected by different signalers.

The nature of public sector employment makes public employees vulnerable to criticism, and such criticism can undermine the reputation of public sector professions and the organizations with which they are associated. Four characteristics of public sector employment, in particular, feed into criticisms about public employees: their job security, benefits, the perceived disconnect between their pay and performance, and their work being supported by tax dollars. These characteristics intersect with the more general attributes we identified earlier—work ethic, competence, accountability, and motives of public employees—as influential in shaping stakeholder perceptions about public sector professionals.

Public sector employment, in general, is characterized by high levels of job security to promote a politically neutral public service and protect public employees from dismissal “due to the whims of newly elected governments” (Hur & Perry, 2016, p. 265). However, external signalers such as elected officials and the general public question whether the high job security enjoyed by public employees undermines their work motivation, accountability, and competence (Battaglio Jr., 2010; Hur & Perry, 2016; Hur, 2022). At times, this questioning from citizens and elected officials escalates into verbal criticism, with claims that government employees are lazy and unaccountable to the public due to their job security.

In general, employees in the public sector enjoy greater retirement and health benefits than employees in the private sector (Reddick, 2009; Reilly, 2013; Chen et al., 2014; Finan et al., 2017). This is partly because offering higher pay to public employees is not always a politically feasible recruitment strategy (Perry et al., 2009). As disparities in benefit levels have grown between employees in the public and private sectors, criticisms suggesting that public employees care more about their pensions and benefits than their clients have also increased. This reflects on the inferred motives of public workers.

A third key feature of public sector employment is that pay is more often linked to tenure and experience, which some view as proxies for performance and others as a substitute for performance (U.S. Office of Personnel Management, 2019). It is challenging to implement successful performance-related pay (PRP) systems because success is contingent upon the observability of performance, the target(s) of the PRP system, pay design, and pay size, among other factors (Chen, 2018; Larsson et al., 2022; Park, 2022). Nevertheless, the difficulty in implementing successful PRP systems has not diminished the claim that the absence of PRP attracts less competent and less motivated employees to the public sector.

Lastly, citizens are forced to fund the work of public employees through taxation, regardless of whether they need or desire the services offered by public employees. Forcing citizens to fund the work of public employees heightens citizens’ expectations for transparency and accountability. The higher these expectations, the greater the difficulty in meeting them, likely leading to increased dissatisfaction with behavior coerced by the state (Zhang et al., 2022).

It is important to note that public employees are also often subject to praise. While Reagan was critical of government employees overall, he was quite complimentary of the military and the police. Therefore, any measure of reputational signals must account for the possibility of criticism and praise. Three characteristics of public sector employment, in particular, lead to praise for public employees: their work often involves emotional labor, their work is often in response to market failure, and they have a higher likelihood of experiencing workplace violence and aggression compared to employees in other sectors (Harrell, 2013; Sheppard et al., 2022).

Emotional labor occurs when employees “manage their emotions and create desired emotional states in themselves and in customers. Workers engage in emotional labor to meet expectations of service in customer interactions, to display deference to superiors, to make favorable impressions on others, or to maintain workplace norms of emotional expression” (Sloan, 2014, p. 274). Many jobs in the public sector necessitate interpersonal contact with citizen–customers, highlighting the importance of emotional labor for successful job performance (Cho & Song, 2017; Guy, 2017). Unfortunately, however, emotive work such as “caring, negotiating, empathizing, smoothing troubled relationships, and working behind the scenes to enable cooperation” is often “excluded from job descriptions and performance evaluations…and [goes] uncompensated” (Guy & Newman, 2004, p. 289). Many public sector employees, such as teachers and social workers, elicit praise because such professions require intense emotional labor yet are modestly compensated relative to the educational requirements of the professions.

Second, most public employees provide public goods and services that address market failures, including education, healthcare, public safety, national defense, and child welfare (Perry & Rainey, 1988). The fact that these prosocial services are vital and would be inefficiently or inequitably provided by the private sector sometimes elicits praise for those working in such professions. Opposition to contracting out these prosocial services highlights the idea that public employees and institutions embody important values and beliefs that are less central to the private sector (Goodsell, 2003; Perry & Wise, 1990; Van der Wal & Huberts, 2008; Van der Wal et al., 2008).

Lastly, public sector employees have a higher likelihood of experiencing workplace violence and aggression compared to employees in the private sector (Harrell, 2013; Sheppard et al., 2022). The higher prevalence of workplace violence and aggression in the public sector can be explained in part by the fact that inherently dangerous professions such as law enforcement and military service are disproportionately public sector professions. However, other professionals, such as nurses, doctors, and social workers, disproportionately work in the public sector as well and are subject to alarming rates of workplace violence and aggression (Rossi et al., 2023; Zelnick et al., 2013). Research suggests that the high rates of workplace violence in these professions are due in part to the fact that these jobs often require face-to-face contact and are high stress (Fischer et al., 2016). Given that workplace violence and aggression are high for certain public sector professions, the public often lauds people working in these high-risk public sector professions for their willingness to sacrifice their safety to help others.

Scale Development

As the reputation literature suggests, we posit that overall reputational signals are formed from reputational signals from multiple signalers. Scholars have developed instruments to measure bureaucratic reputation; however, these instruments measure the perceptions of specific signalers, such as citizens (e.g., Lee & Van Ryzin, 2019), rather than the signals these signalers have received from others. In our scale, respondents are asked what they have heard from two different signalers—elected officials and persons close to them—regarding public school teachers.

We chose elected officials and persons close to respondents based on two main criteria. First, we chose them for their salience, as respondents need to have heard something from the signaler. Second, a broad body of theory and empirical research outside of public administration, notably in psychology and political science, supports the effect of elite and personal influences on public opinion (Cislaghi & Heise, 2018; Zingher & Flynn, 2018).

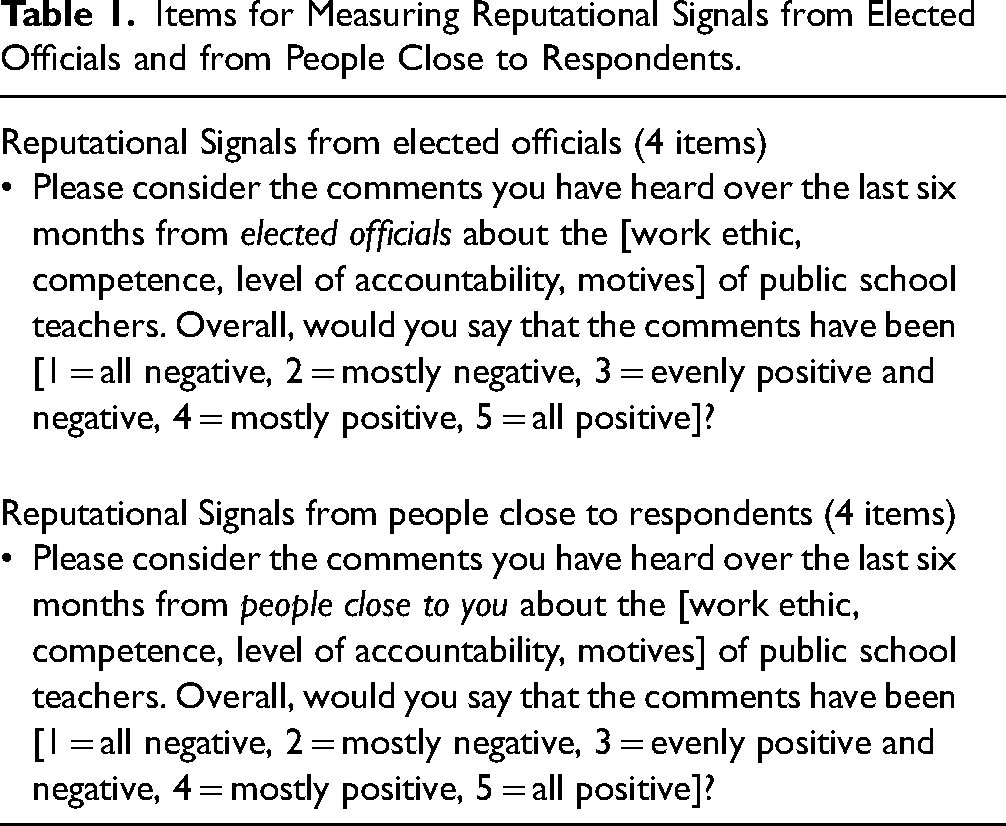

We chose not to specify the nature of the relationship for the “people close to you” prompt (e.g., family, friends, coworkers) because the human experience is quite diverse. Conceptions of what constitutes family, friends, or even coworkers differ across demographic groups, so defining the signalers using broad terminology is essential. Furthermore, we chose not to specify the level of government for the “elected officials” prompt (i.e., federal, state, local) because the more narrowly a signaler is defined, the greater the chance of recall error and the less likely respondents are to have heard from that signaler. Lastly, we chose not to identify the medium through which respondents received the signal. People are exposed to so much media (e.g., social media, podcasts, radio, television) on a daily basis that they are unlikely to recall accurately where they heard the signals. The items for measuring reputational signals from these two signalers are presented in Table 1.

Table 1. Items for Measuring Reputational Signals from Elected Officials and from People Close to Respondents.

As noted above, the reputational signals from each signaler are composed of four dimensions: work ethic, competence, level of accountability, and motives. As such, the survey items for reputational signals include eight questions (i.e., four reputation dimensions for each of the two signalers). Specifically, respondents were asked to indicate the overall valence of the comments they have heard over the last six months from a particular signaler (either elected officials or people close to them) about each of the four reputation dimensions (work ethic, competence, level of accountability, and motives) of public school teachers. Responses are provided on a 5-point scale ranging from 1 = “all negative” to 5 = “all positive.”

We used several criteria for scale construction: parsimony, readability, completeness, and specificity. First, concerning the parsimony criterion, the scale consists of only four items for each signaler to manage the cognitive burden on respondents. Longer scales can increase the cognitive burden on respondents, potentially leading to “attrition, measurement error, and satisficing” (Holyk, 2008, p. 657). Longer scales are also more costly to field. Using four items to measure a construct is in line with recommendations by Harvey et al. (1985), who note that four or more items are “needed to test the homogeneity of items within each latent construct” (p. 463). Similarly, Hinkin (1998) recommends retaining between four and six items for most constructs and contends that “scales should possess [a] simple structure, or parsimony” (p. 109).

With regard to the readability of individual items, the scale employs simple terms and concepts. It avoids compound sentences, double-barreled questions, and double negatives, as recommended by Holyk (2008) and others. With respect to completeness, we included five response categories, consistent with evidence suggesting that “five to nine response categories is best” (Holyk, 2008, p. 658). Lastly, concerning specificity, we avoided using vague quantifiers such as “none, some, many, or all” when assessing the quantity of positive or negative comments respondents had heard about public school teachers. Instead, we used more specific quantifiers like “all negative, mostly negative, evenly positive and negative…”

Data

To examine the validity and reliability of the survey items, we used an online sample assembled by CloudResearch, a leading cloud survey research company. This online survey tool allowed us to collect data from diverse populations. Before fielding the survey, we conducted three rounds of pretesting to ensure the clarity of the survey items. The final survey was fielded in July 2023. A total of 588 US adults completed the study. While scholars disagree about the exact sample size necessary for scale development, they generally agree that a sample size of 300 is good and a sample size of 500 is very good (Boateng et al., 2018).

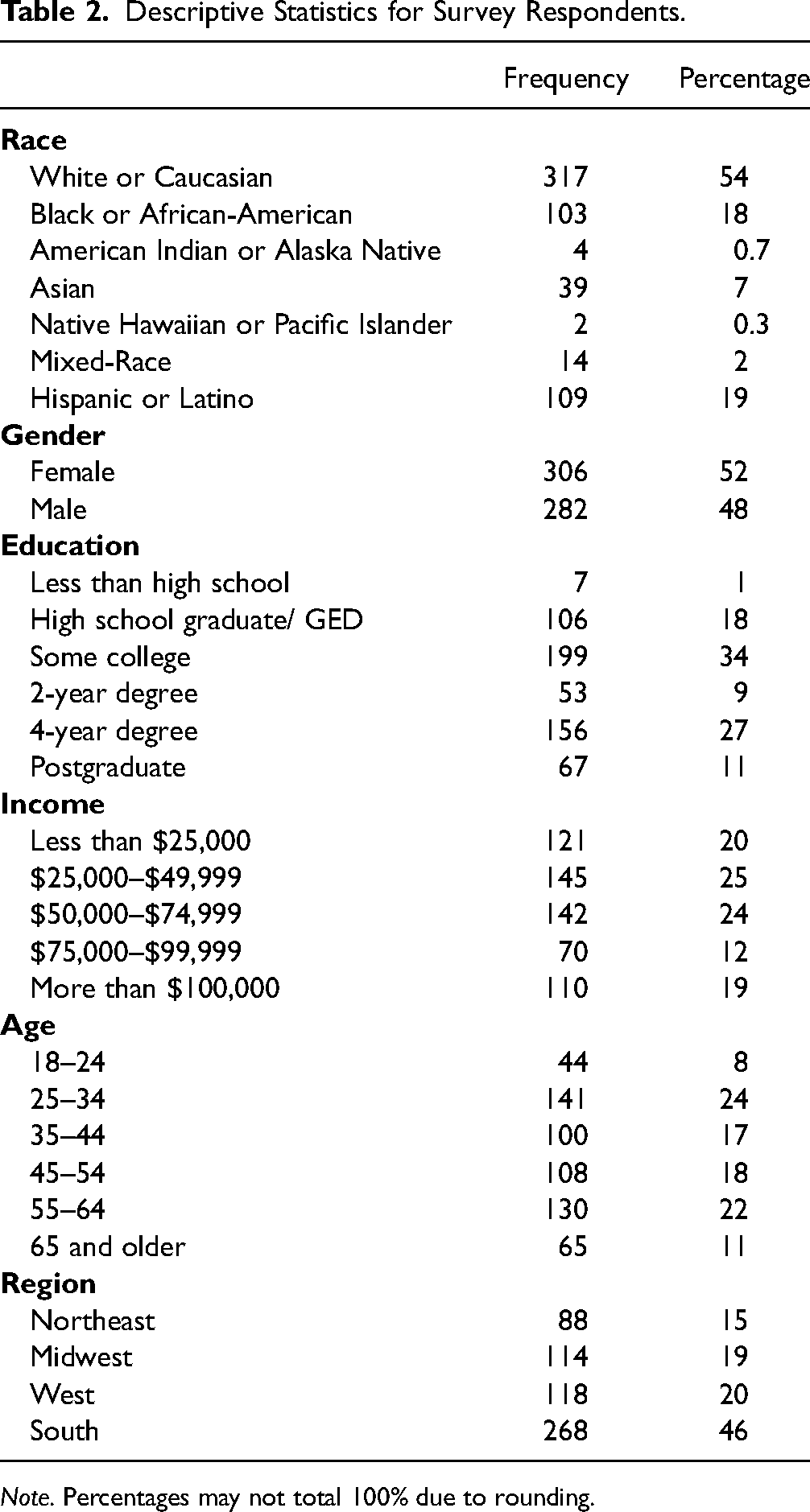

The descriptive statistics for the sample appear in Table 2, including the respondents’ race, gender, education, income, age, and region. About 46% of the respondents were racial minorities, 52% were female, 38% had 4-year college degrees or higher, 57% were between the ages of 35 and 64, 36% had incomes between $50,000 and $99,999, and 46% resided in the South. Racial minorities, college graduates, respondents aged 35–64, and Southerners were slightly overrepresented in our dataset relative to the U.S. population.

Table 2. Descriptive Statistics for Survey Respondents.

In gathering data, we aimed to assess reputational signals regarding public school teachers. Public school teachers are important for assessing reputational signals because they substantially impact society. Public school teachers represent the largest subcategory of public employees in the United States, with nearly 3.5 million full- and part-time public school teachers (National Center for Education Statistics, 2021). Additionally, elementary and secondary education represents the second-largest expenditure for state and local governments, with 21.5% of state and local general expenditures going to elementary and secondary education (Urban Institute, 2022). Public school teachers are compensated primarily based on education and experience, a distinctly public-sector approach (Podgursky & Springer, 2007). Lastly, public school teachers are a relevant group for assessing reputational signals because the degree to which teaching is a profession is the subject of much debate. For example, some argue that teaching lacks a scientific knowledge base and that teacher training programs and licensure requirements fail to enhance teachers’ competency, undermining the claim that teaching is a profession (Maranto & Wai, 2020; Mehta & Teles, 2014). Thus, reputational signals may be useful in providing a high-level view into the debate regarding the degree of professionalism teachers exhibit. It should be noted, however, that the scale could be applied to other categories of workers as long as they are a salient professional group.

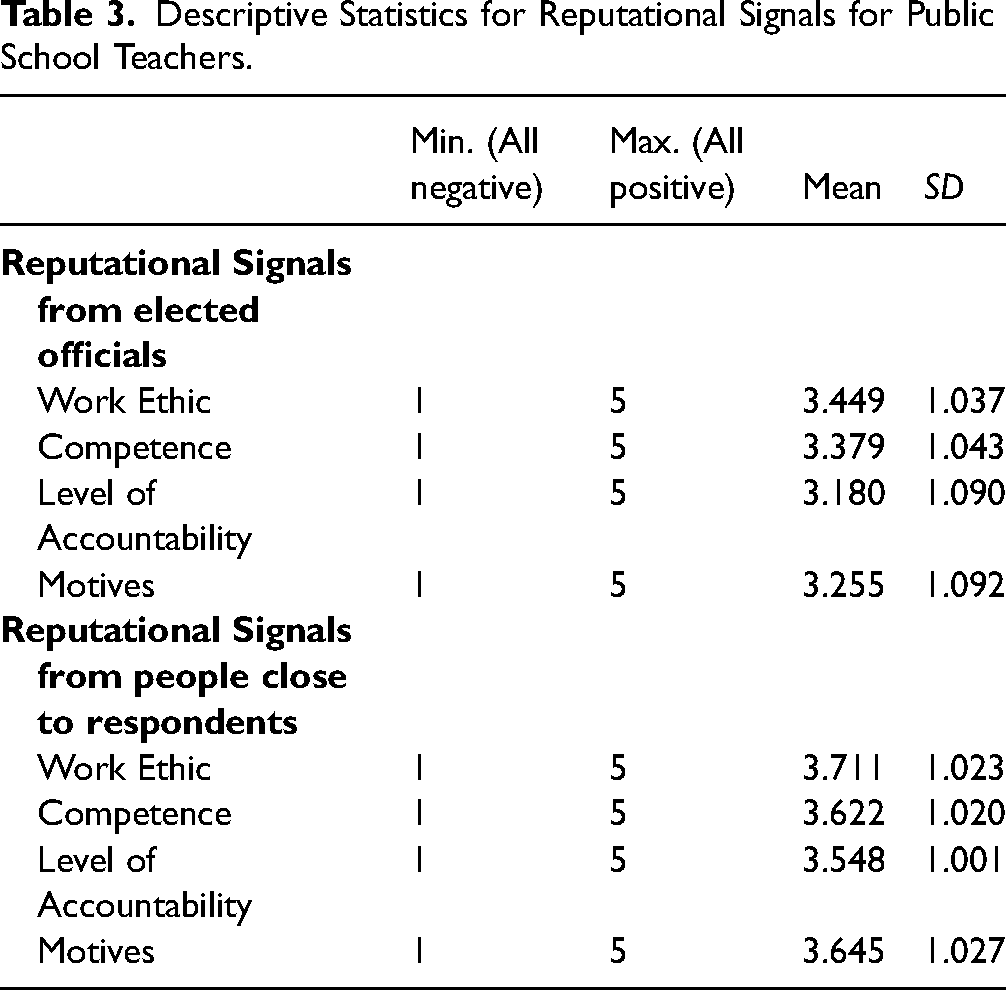

Descriptive statistics for the items measuring reputational signals regarding public school teachers from elected officials and people close to respondents are presented in Table 3. Respondents reported hearing more positive comments about public school teachers from people close to them than from elected officials across all four reputation dimensions. Another key finding from the descriptive statistics is that respondents reported hearing the most positive comments about public school teachers in relation to their work ethic and the most negative comments regarding their level of accountability from both sources of signals.

Table 3. Descriptive Statistics for Reputational Signals for Public School Teachers.

Results

The results are reported in six parts, corresponding to key questions related to the reliability and validity of the reputational signals scale. We begin with an exploratory factor analysis (EFA), which provides an initial assessment of the underlying dimensionality of the scale. Next, we turn to confirmatory factor analysis (CFA), which offers an opportunity to assess the scale according to theoretical expectations about its one- and two-factor structure. An analysis of convergent and discriminant validity, which examines how the scale is associated with other theoretically related and theoretically unrelated constructs, follows. We then compute the AVE, which is “a measure of the amount of variance that is captured by a construct in relation to the amount of variance due to measurement error” (dos Santos & Cirillo, 2023, p. 1639). Next, we present path analyses of relationships among reputational signals from the two sources, the perceived prestige of the profession, and support for teacher autonomy. We conclude by presenting results from two robustness checks.

Exploratory Factor Analysis

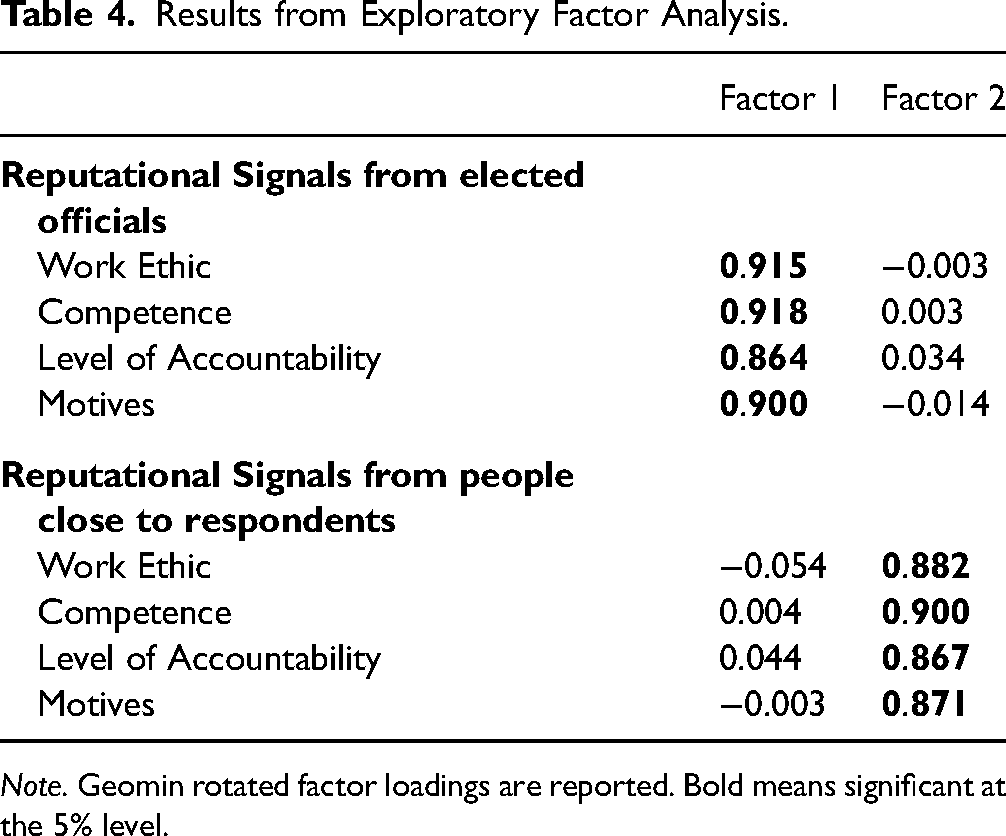

First, we performed an EFA to understand the underlying dimensionality of the items, using the Kaiser criterion with Geomin rotation. We randomly split the sample in half and used one half (

The Geomin rotated factor loadings are presented in Table 4. The results show that the first factor represents reputational signals from elected officials, and the second factor represents reputational signals from people close to respondents. All items had factor loadings greater than 0.8. As a robustness check, we conducted additional EFAs, splitting the sample in half multiple times. The sample was divided randomly five times, with a different split-half sample used each time. The results remained consistent across all split-half samples.

Table 4. Results from Exploratory Factor Analysis.
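As an illustration only, the Kaiser criterion and the random split-half step described above can be sketched in Python. The data here are simulated, and the loading of 0.9, the factor correlation of 0.3, and the sample size of 600 are arbitrary assumptions rather than values from the study. The helper names `simulate_two_factor_items` and `kaiser_factor_count` are hypothetical, and the sketch does not reproduce the Geomin rotation.

```python
import numpy as np

def simulate_two_factor_items(n, loading=0.9, factor_corr=0.3, seed=0):
    """Simulate eight standardized items, four per latent factor (assumed values)."""
    rng = np.random.default_rng(seed)
    cov = np.array([[1.0, factor_corr], [factor_corr, 1.0]])
    factors = rng.multivariate_normal([0.0, 0.0], cov, size=n)
    noise_sd = np.sqrt(1.0 - loading ** 2)
    # Items 0-3 load on factor 0, items 4-7 load on factor 1.
    items = [loading * factors[:, k // 4] + noise_sd * rng.standard_normal(n)
             for k in range(8)]
    return np.column_stack(items)

def kaiser_factor_count(data):
    """Count eigenvalues of the item correlation matrix that exceed 1."""
    corr = np.corrcoef(data, rowvar=False)
    eigenvalues = np.linalg.eigvalsh(corr)[::-1]  # sorted largest first
    return int(np.sum(eigenvalues > 1.0)), eigenvalues

data = simulate_two_factor_items(600)

# Random split-half: one half for exploration, the other held out.
order = np.random.default_rng(1).permutation(len(data))
half_a = data[order[: len(data) // 2]]
half_b = data[order[len(data) // 2:]]

count_a, eigen_a = kaiser_factor_count(half_a)
count_b, eigen_b = kaiser_factor_count(half_b)
print(count_a, count_b)
```

Repeating the split with different seeds mirrors the robustness check of re-running the EFA on several random halves.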

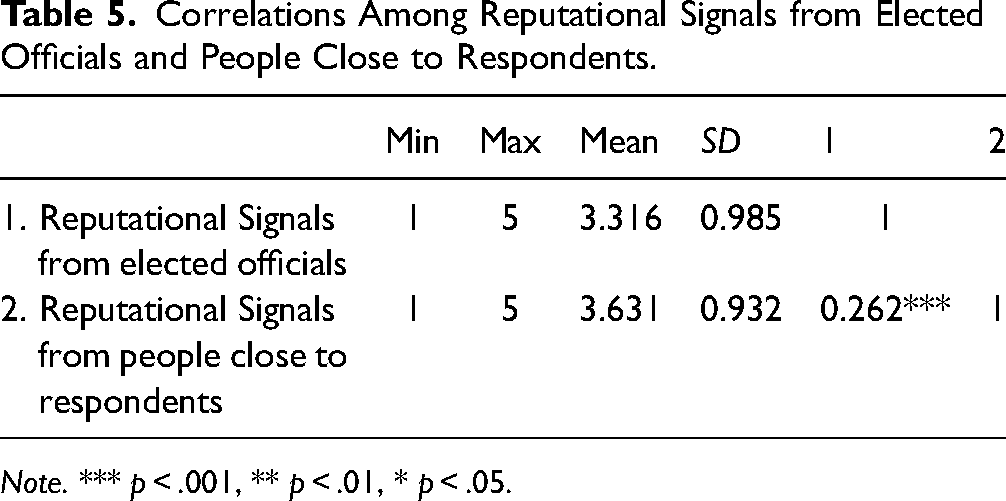

We then assessed the scale's internal consistency by measuring Cronbach's alpha for both signalers. Cronbach's alpha assesses the extent to which “all the items in a test measure the same concept or construct” (Tavakol & Dennick, 2011, p. 53). The Cronbach's alpha was 0.943 for reputational signals from elected officials and 0.936 for reputational signals from people close to respondents. Scholars generally recommend a minimum value of 0.7 for Cronbach's alpha (Tavakol & Dennick, 2011), and the scale met that standard for both signalers. Correlations among the reputational signals from the two signalers are presented in Table 5. The two reputational signals are positively and moderately correlated (

Table 5. Correlations Among Reputational Signals from Elected Officials and People Close to Respondents.
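For readers who want to see how the internal-consistency statistic reported above is computed, the following is a minimal sketch of Cronbach's alpha using its standard formula, alpha = k/(k-1) × (1 − sum of item variances / variance of the summed score). The response matrix is a made-up example of five respondents answering four 5-point items; it is not data from the study, and `cronbach_alpha` is a hypothetical helper name.

```python
import numpy as np

def cronbach_alpha(items):
    """Cronbach's alpha for a respondents-by-items matrix."""
    items = np.asarray(items, dtype=float)
    k = items.shape[1]                           # number of items
    item_vars = items.var(axis=0, ddof=1)        # sample variance of each item
    total_var = items.sum(axis=1).var(ddof=1)    # variance of the summed score
    return k / (k - 1) * (1.0 - item_vars.sum() / total_var)

# Hypothetical responses (rows = respondents, columns = the four dimensions).
responses = [
    [1, 2, 1, 2],
    [3, 3, 4, 3],
    [5, 4, 5, 5],
    [2, 2, 3, 2],
    [4, 5, 4, 4],
]
alpha = cronbach_alpha(responses)
print(round(alpha, 3))
```

Values at or above the conventional 0.7 threshold suggest that the items move together closely enough to be treated as one scale.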

Confirmatory Factor Analysis

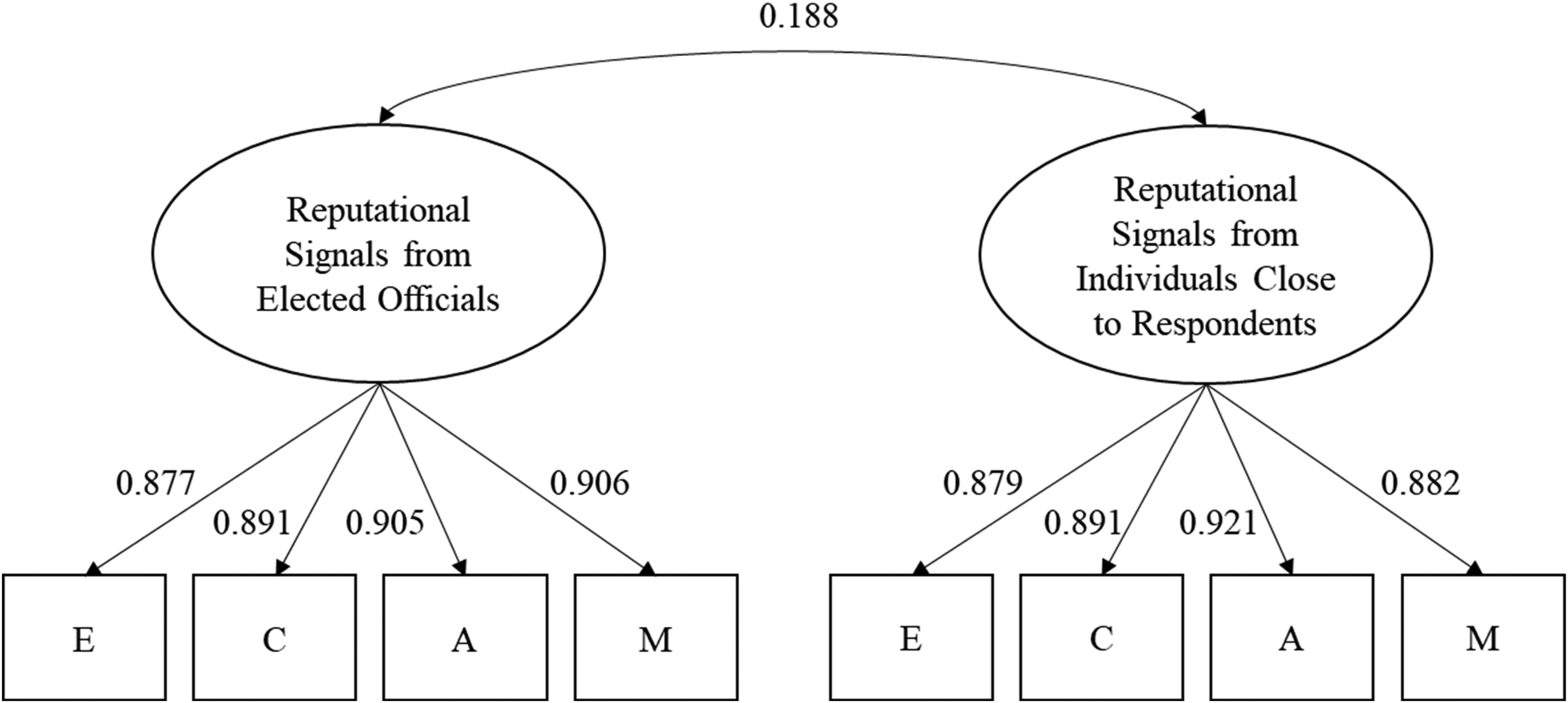

Next, we performed a CFA using the remaining split-half sample (

To evaluate model fit, we compared this model with a single-factor model. All eight items were loaded onto a single latent variable that combined reputational signals from elected officials and people close to respondents. The CFA results favored the two-factor model (χ2

The results from the two-factor CFA model are shown in Figure 1. All eight items, each loading on its respective latent variable, exhibited significant and substantial standardized factor loadings, ranging from 0.877 to 0.921. The two latent variables were also positively and significantly related. These results collectively suggest that reputational signals from elected officials and those from people close to respondents are distinct yet interrelated. We calculated factor-analytic weighted average scores for reputational signals from the two sources using factor loadings from the two-factor CFA model.

Figure 1. Results from confirmatory factor analysis.
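One plausible reading of a factor-analytic weighted average score is a mean of the four item responses weighted by their factor loadings. The sketch below assumes that reading; the exact weighting formula used in the study is not stated here, the loadings are hypothetical values chosen within the reported 0.877 to 0.921 range, and `loading_weighted_score` is a hypothetical helper name.

```python
import numpy as np

def loading_weighted_score(responses, loadings):
    """Weighted mean of item responses, with factor loadings as weights."""
    responses = np.asarray(responses, dtype=float)
    weights = np.asarray(loadings, dtype=float)
    # Dividing by the sum of weights keeps scores on the original 1-5 scale.
    return responses @ weights / weights.sum()

# Hypothetical loadings for one signaler's four items (assumed, not reported).
loadings = [0.88, 0.90, 0.92, 0.89]

# Hypothetical respondents: rows are people, columns are the four dimensions.
responses = [
    [5, 5, 5, 5],
    [1, 2, 3, 4],
    [3, 3, 3, 3],
]
scores = loading_weighted_score(responses, loadings)
print(scores)
```

Because the weights are normalized, a respondent who gives the same answer to every item receives exactly that answer as a score, and items with larger loadings pull the score slightly more than the others.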

Convergent and Discriminant Validity

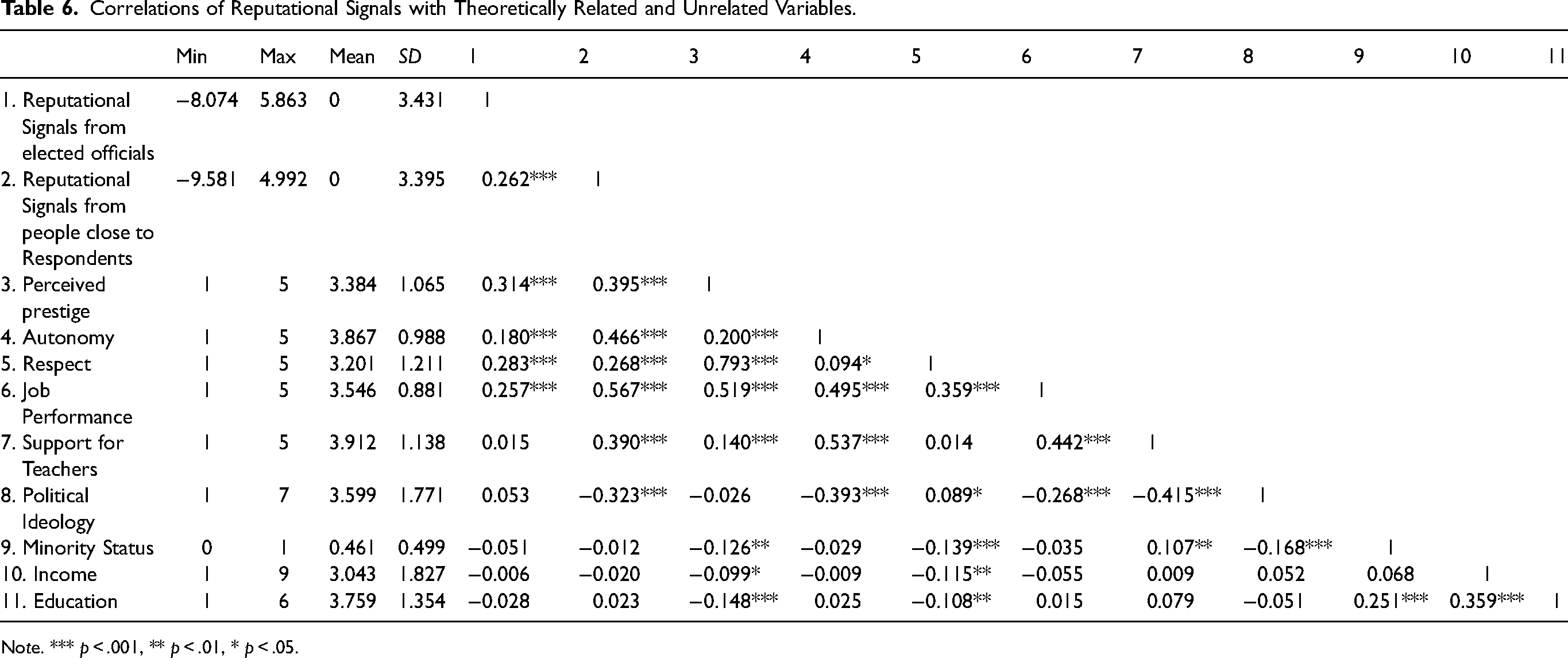

We assessed the construct validity of the reputational signals scale, focusing on convergent and discriminant validity. Convergent validity refers to the extent to which the scale is associated with other theoretically related constructs, and discriminant validity refers to the extent to which the scale is unrelated to other theoretically unrelated constructs (Campbell & Fiske, 1959; DeVellis, 2003). Specifically, we examined the associations of reputational signals from elected officials and reputational signals from people close to respondents with variables that are either theoretically related or unrelated to reputational signals. The correlations between reputational signals and each of these variables are presented in Table 6.

Table 6. Correlations of Reputational Signals with Theoretically Related and Unrelated Variables.

We selected the following variables, which we expected to be strongly related to reputational signals: the perceived prestige of the profession, autonomy, respect, job performance, and support for teachers. First, as the literature on bureaucratic reputation suggests (Carpenter, 2001; Carpenter & Krause, 2012), reputational signals formed by assessments from different signalers would be positively correlated with the perceived prestige of the profession. We measured the perceived prestige of public school teachers using the following two items: “Generally, people in this country think highly of public school teachers” and “Overall, public school teachers have a good reputation” (1 = “strongly disagree” to 5 = “strongly agree”). The responses to these two items were averaged, and the Cronbach's alpha was 0.878.

Also, reputational signals are likely positively correlated with autonomy (Carpenter, 2001; Lee & Van Ryzin, 2019; Maor, 2011; Roberts, 2006). Autonomy for teachers was measured using the following question with five specific items: “Do you support or oppose increasing the amount of freedom that public school teachers have to influence… (1) curriculum; (2) lesson plans; (3) teaching materials; (4) rules for classroom behavior; and (5) teaching methods?” (1 = “strongly oppose” to 5 = “strongly favor”). The responses to the five items were averaged, and the Cronbach's alpha was 0.923.

Respect is expected to be positively correlated with reputational signals. This variable was measured using the following item: “Public school teachers are well-respected in this country” (1 = “strongly disagree” to 5 = “strongly agree”). Reputational signals are presumed to be positively related to the assessment of job performance (Krause & Douglas, 2005; Maor & Sulitzeanu-Kenan, 2016). We measured job performance using the following item: “Students are often given the grades A, B, C, D, and Fail to denote the quality of their work. Suppose that public school teachers were graded in the same way. What grade would you give public school teachers in the nation as a whole—A, B, C, D, or Fail?” (1 = “F,” 2 = “D,” 3 = “C,” 4 = “B,” 5 = “A”).

Lastly, reputational signals are likely to lead to support for teachers. Support for teachers was measured using the following question with four specific items: “Would you support or oppose an increase to your local taxes if made on the following grounds? (1) to increase salaries for public school teachers; (2) to increase school supplies for teachers’ classrooms; (3) to increase peer support for public school teachers; and (4) to reduce class sizes” (1 = “strongly oppose” to 5 = “strongly favor”). The responses to the four items were averaged, and the Cronbach's alpha was 0.929.

Consistent with theoretical expectations, we observed predominantly positive correlations between reputational signals and each of these variables. Regarding reputational signals from elected officials, correlation coefficients ranged from 0.015 for support for teachers to 0.314 for perceived prestige. For reputational signals from people close to respondents, correlation coefficients ranged from 0.268 for respect to 0.567 for job performance. Except for the negligible correlation between reputational signals from elected officials and support for teachers (

To assess discriminant validity, we examined the associations between reputational signals and respondents’ minority status, income, and education, which are expected to be theoretically unrelated to reputational signals. Based on previous research on bureaucratic reputation (Lee & Van Ryzin, 2019), we anticipated that reputational signals would be relatively independent of demographic and socioeconomic characteristics. Minority status was measured using the following item: “What is your race/ethnicity?” (1 = “White/Caucasian,” 2 = “Black/African American,” 3 = “Hispanic/Latino,” 4 = “Asian,” 5 = “Other”). This variable was coded as 1 if White/Caucasian and 0 otherwise. Income was measured using the following item: “Which of the following best describes your annual income before taxes?” (1 = “less than $25,000,” 2 = “$25,000–$49,999,” 3 = “$50,000–$74,999,” 4 = “$75,000–$99,999,” 5 = “more than $100,000”). Education was measured using the following item: “What is the highest level of education you have completed?” (1 = “less than high school,” 2 = “high school graduate/GED,” 3 = “some college,” 4 = “2-year college,” 5 = “4-year college,” 6 = “postgraduate degree”). No significant correlations emerged between reputational signals and any of these variables. These results provide overall support for discriminant validity.

Average Variance Extracted

Average variance extracted refers to “the average amount of variation that a latent construct is able to explain in the observed variables to which it is theoretically related” (dos Santos & Cirillo, 2023, p. 1639). The cutoff for an acceptable AVE score is 0.50. If the AVE is less than 0.50, “the variance due to measurement error is larger than the variance captured by the construct, and the validity of the individual indicators, as well as the construct, is questionable” (Fornell & Larcker, 1981, p. 46). For both factors, the AVE was above 0.50, which indicates an acceptable level of convergent validity. Regarding reputational signals from elected officials, the AVE was 0.80. Regarding reputational signals from people close to respondents, the AVE was 0.77.
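To make the AVE criterion concrete, the sketch below computes AVE in two equivalent ways for standardized indicators. The loadings are hypothetical placeholders, not the loadings estimated in this study, and the function names are ours.

```python
def ave_from_standardized(loadings):
    """AVE as the mean of squared standardized factor loadings."""
    return sum(l * l for l in loadings) / len(loadings)


def ave_fornell_larcker(loadings, error_variances):
    """AVE as explained variance over explained plus error variance."""
    explained = sum(l * l for l in loadings)
    return explained / (explained + sum(error_variances))


# Hypothetical standardized loadings for a three-indicator factor.
loadings = [0.92, 0.88, 0.89]
# With standardized indicators, each error variance equals 1 - loading^2.
error_variances = [1 - l * l for l in loadings]

ave = ave_from_standardized(loadings)
ave_check = ave_fornell_larcker(loadings, error_variances)
meets_cutoff = ave >= 0.50
print(round(ave, 3), meets_cutoff)
```

Because the error variance of a standardized indicator is one minus its squared loading, the two functions return the same value; the second form makes the comparison between construct variance and measurement error explicit.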

Path Analysis

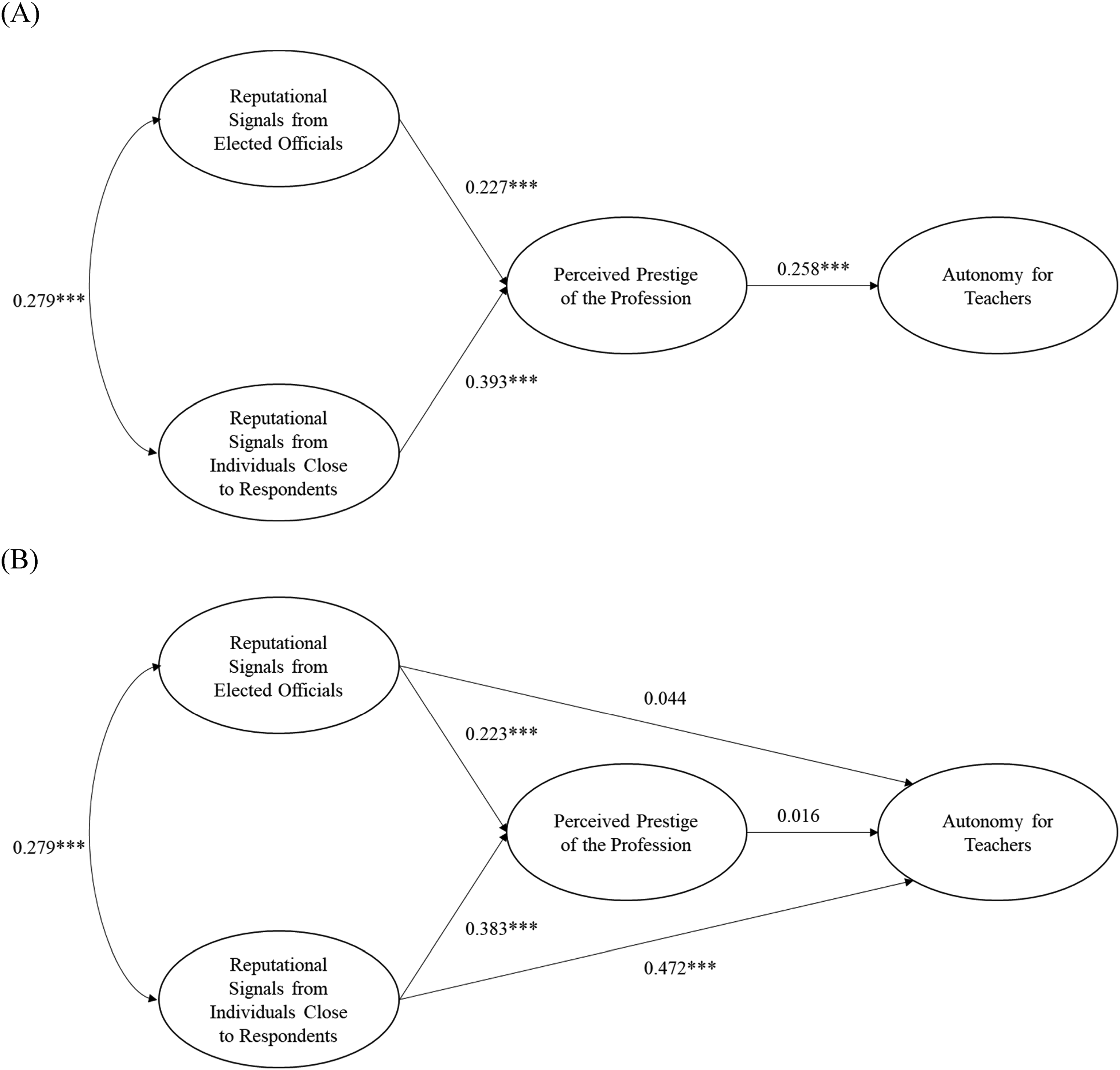

Lastly, we conducted a path analysis to examine the relationships among reputational signals, the perceived prestige of the teaching profession, and support for autonomy for public school teachers. Aligned with theoretical expectations grounded in the bureaucratic reputation literature, we expected that reputational signals would influence perceived prestige, which in turn would lead to increased support for autonomy for public school teachers. Specifically, we tested two alternative models—one incorporating and the other excluding direct paths from reputational signals to autonomy for teachers.
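The difference between the two specifications can be illustrated with a simplified sketch. It uses equation-by-equation ordinary least squares on simulated data rather than the structural equation estimation and fit statistics reported in this article, so it should be read as an approximation of the logic only. All variable names, the sample size, and the data-generating coefficients are assumptions chosen to mimic the qualitative pattern of a direct path from one signaler but not the other.

```python
import random


def ols(y, *predictors):
    """Return [intercept, b1, b2, ...] by solving the normal equations."""
    rows = [[1.0, *values] for values in zip(*predictors)]
    p = len(rows[0])
    xtx = [[sum(r[i] * r[j] for r in rows) for j in range(p)] for i in range(p)]
    xty = [sum(r[i] * yi for r, yi in zip(rows, y)) for i in range(p)]
    # Gauss-Jordan elimination with partial pivoting on the augmented matrix.
    aug = [row + [rhs] for row, rhs in zip(xtx, xty)]
    for col in range(p):
        pivot = max(range(col, p), key=lambda r: abs(aug[r][col]))
        aug[col], aug[pivot] = aug[pivot], aug[col]
        for r in range(p):
            if r != col:
                factor = aug[r][col] / aug[col][col]
                aug[r] = [a - factor * b for a, b in zip(aug[r], aug[col])]
    return [aug[i][p] / aug[i][i] for i in range(p)]


# Simulated data under ASSUMED coefficients (not the study's estimates).
rng = random.Random(42)
n = 2000
elected = [rng.gauss(0, 1) for _ in range(n)]
close = [rng.gauss(0, 1) for _ in range(n)]
prestige = [0.3 * e + 0.5 * c + rng.gauss(0, 1) for e, c in zip(elected, close)]
autonomy = [0.4 * p + 0.3 * c + rng.gauss(0, 1) for p, c in zip(prestige, close)]

# Both models: signals -> prestige.
_, a_elected, a_close = ols(prestige, elected, close)
# Full mediation: prestige is the only predictor of autonomy.
_, b_full = ols(autonomy, prestige)
# Partial mediation: direct paths from both signals are added.
_, b_partial, c_elected, c_close = ols(autonomy, prestige, elected, close)

# Indirect effects are the product of the two path coefficients.
indirect_elected = a_elected * b_partial
indirect_close = a_close * b_partial
print(round(c_elected, 2), round(c_close, 2), round(indirect_close, 2))
```

In this sketch the full mediation specification constrains the two direct paths to zero, which is why it is nested within the partial mediation specification and why the two can be compared on model fit.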

To reduce the risk of common method bias, we fielded two separate surveys: one that included questions regarding the reputational signals and respondents’ demographic information and another that included questions regarding the perceived prestige of the teaching profession and support for teacher autonomy. On average, respondents took the surveys approximately 9 hours apart. Additionally, we randomized the order of the questions regarding perceived prestige and teacher autonomy to circumvent potential question order effects (Van de Walle & Van Ryzin, 2011).

The full mediation model, which excluded the direct paths, yielded a χ2 value of 262.503 with a

Figure 2. Results from path analysis. (A) Full Mediation Model. (B) Partial Mediation Model.

As shown in Figure 2B, the effects of reputational signals on perceived prestige are positive and significant (

On the other hand, the direct effect of reputational signals from elected officials on autonomy for teachers is positive but not statistically significant (

In summary, it is noteworthy that reputational signals from both types of signalers are positively and significantly associated with the perceived prestige of the teaching profession. Furthermore, reputational signals from people close to respondents are directly and positively associated with support for teacher autonomy. In contrast, reputational signals from elected officials do not have a statistically significant association with support for teacher autonomy.

These results underscore the pivotal role of reputational signals from people close to respondents in shaping the public's support for teacher autonomy. Furthermore, these findings support Ostrom's (1998) theory of collective action, which posits that when people or institutions have positive reputations, they are viewed as more trustworthy. Our data do not allow us to draw firm conclusions regarding why reputational signals from people close to respondents have a statistically significant association with support for teacher autonomy and signals from elected officials do not. However, Ostrom's research suggests that signals from people close to us may be viewed as more credible than signals from people viewed as more distant from us due to an actual or perceived difference in norms.

Robustness Checks

We performed two sets of robustness checks. For the first set, we reran the full and partial mediation models, this time including a control for the political ideology of the respondent and a control for the grade that respondents gave public school teachers in the nation as a whole (A, B, C, D, or Fail). The aim was to control for respondents’ partisan biases and experiences with public school teachers. We tested several other control variables (e.g., age, gender, race, income, home ownership, and number of children), but they proved nonsignificant.

Our results again support the partial mediation model over the full mediation model. Furthermore, the directional relationships and their significance levels are the same as in the original model. The only difference between this model and the original one is that the coefficients are smaller. It is important to remember, however, that including controls such as respondents’ political ideology and the grade that respondents give teachers as a whole may partially measure what is also measured by reputational signals, prestige, and/or support for teacher autonomy, thus lowering, perhaps artificially, their coefficients. Please see Appendix A for additional details.

For the second set of robustness checks, we collected additional survey data in February 2024 via the online survey company CloudResearch. The survey included questions about reputational signals from elected officials and people close to respondents. We also included five additional questions regarding organizational reputation (Lee & Van Ryzin, 2019), which were not included in the first survey. The organizational reputation questions pertained to respondents’ local school district. The survey took approximately 5 minutes, and 300 respondents completed it.

We assessed whether all three concepts (i.e., the two measures of reputational signals and organizational reputation) represent distinct latent variables by conducting exploratory and confirmatory factor analyses. Model fit statistics support a three-factor model in both analyses. Organizational reputation is positively but only moderately correlated with the two signal measures: the correlation coefficient is 0.36 with signals from elected officials and 0.41 with signals from people close to respondents. We therefore conclude that reputational signals from elected officials, reputational signals from people close to respondents, and organizational reputation are distinct constructs. Please see Appendix B for additional details.

Discussion

We applied the instrument to assess reputational signals regarding public school teachers, given that they represent the largest subgroup of public employees and are thought to be subject to a “barrage of criticism” (Kearney, 2011; Weingarten, 2011). Although we applied the scale to the context of public school teachers, the scale is designed to assess reputational signals regarding any public sector profession as long as the profession is salient. Examples include police officers, social workers, and military personnel.

The survey instrument developed here provides another tool to extend research about reputation from its current focus on organizations and agencies to salient professional groups. Investigating the influence of professional groups is important for two reasons. First, the reputations of groups such as teachers and police officers may have an outsized impact on the reputation of some public service units because their reputations are intimately tied to the standing of professional groups. Second, even if organizational and bureaucratic reputation may be explained by other factors, it is helpful to know whether and how the reputations of professional groups embedded within an organization factor into overall organizational reputation.

Results from the path analytic models indicate that reputational signals from both elected officials and people close to respondents were significant in directly explaining perceived prestige. However, only reputational signals from persons close to respondents were significant in directly explaining support for teacher autonomy. It may be that elite signals affect mass public opinion but not for all issue areas and that signals from those closer to us are influential across a broader range of issue areas.

Conclusion

Scholars, practitioners, and good government proponents have made many claims about attacks on public sector professions. No systematic evidence is available to substantiate those claims due to scholars’ struggles to define and measure reputational signals. This study aimed to define reputational signals and develop and validate a scale that measures this construct. The test results show that the scale demonstrates high internal consistency and both convergent and discriminant validity.

This article responds to recent calls by public administration scholars to develop core field constructs and measurement scales (Perry, 2016). Perry (2016) notes that “public administration scholars have been slow to embrace developing core field constructs and measurement scales” despite their usefulness for advancing knowledge accumulation (p. 697).

Although we achieved encouraging results for the scale's reliability and both convergent and discriminant validity, the research is not without limitations. While the sample size used in this study is large enough for scale development and validation, it is not nationally representative. Thus, the estimates presented should not be interpreted as population parameters.

Additionally, we examined reputational signals regarding a single profession, public school teachers. We encourage scholars to examine reputational signals regarding other salient public sector professions, such as police officers, social workers, transportation security agents, and military personnel. The key is for respondents to have heard something from the signalers over the past six months about the profession.

Lastly, it is important to note that the reputational signals scale likely serves as just a starting point for identifying perceptions regarding the strengths and weaknesses of various public sector professions, just as the organizational reputation and bureaucratic reputation scales only serve as a starting point for identifying perceptions regarding the strengths and weaknesses of public sector organizations. As with any evaluation, a mixed-method research design (e.g., focus groups and robust surveys) would be crucial for comprehensively identifying and addressing weaknesses in public perceptions and building on strengths.

The scale developed here can be used to advance four broad streams of research. First, scholars can assess whether reputational signals affect organizational reputation. It may be the case that negative reputational signals regarding a profession (e.g., police officers) detract from the reputation of the organization that employs its members (e.g., a city police department). In such a case, improving the profession's reputation would be central to improving the organization's reputation. This could be an important line of research because administrative reform has received significant attention in the public management literature. However, reforming professions has received significantly less attention, even though the recent calls to reimagine policing point to the implicit importance of reputational signals.

Second, it may be the case that organizational reputation and credibility affect professional reputation. For example, the Centers for Disease Control and Prevention (CDC) in the United States has received significant criticism for its response to COVID-19. Critics have argued that the CDC “worked against quickly approving effective tests [for COVID-19] from commercial labs outside the government,” “insisted on developing its own test and then botched the job,” and that “there was vicious infighting within and between the White House, HHS, the CDC, and FDA about setting shared goals over testing and which nonpharmaceutical measures to prioritize” (Parker & Stern, 2022, p. 622). The CDC has also been criticized extensively regarding its communications during the COVID-19 pandemic (Lewis, 2021). Ultimately, the CDC's poor response to COVID-19 likely impacted its organizational reputation and credibility, which may affect the reputation of public health workers writ large. If that is the case, then organizational reputation or credibility must improve to improve professional reputation. Research suggests that agency credibility can be enhanced by aligning professionals’ incentives with the public good and limiting political interference in agency decision-making (Bertelli, 2021).

Third, this scale can be used to assess if, and potentially how, reputational signals affect intentions to enter a public sector profession. Through survey experiments, Abner, Perry and Fucilla (2020) explored this question. In their first survey experiment, they examined whether positive and negative perceptions of public school teachers affected the likelihood that members of the general public would recommend a career in teaching. In their second experiment, they examined whether negative perceptions of public school teachers affected the likelihood that teachers would recommend a career in teaching. Relatedly, reputational signals can affect the type of people who select a profession, not just the number (Linos, 2018). Thus, this scale can be combined with longitudinal data to explore the relationship between reputational signals and the volume and composition of people who enter a profession.

Lastly, scholars can assess whether reputational signals affect the morale of public employees, with a particular focus on their potential effects on career commitment and burnout. Negative reputational signals likely undermine career commitment and increase burnout as signalers communicate their displeasure with the profession. Professionals may disconnect themselves from their profession to maintain their self-image and well-being. Scholars should assess whether public service motivation moderates the impact of reputational signals on career commitment and burnout.

Supplemental Material

sj-docx-1-arp-10.1177_02750740241261070 - Supplemental material for Measuring Reputational Signals Regarding Public Sector Professions: Validation of a Scale and a Research Agenda

Supplemental material, sj-docx-1-arp-10.1177_02750740241261070 for Measuring Reputational Signals Regarding Public Sector Professions: Validation of a Scale and a Research Agenda by Gordon Abner, James L. Perry and Sun Young Kim in The American Review of Public Administration

Supplemental Material

sj-docx-2-arp-10.1177_02750740241261070 - Supplemental material for Measuring Reputational Signals Regarding Public Sector Professions: Validation of a Scale and a Research Agenda

Supplemental material, sj-docx-2-arp-10.1177_02750740241261070 for Measuring Reputational Signals Regarding Public Sector Professions: Validation of a Scale and a Research Agenda by Gordon Abner, James L. Perry and Sun Young Kim in The American Review of Public Administration

Footnotes

Acknowledgments

The authors would like to thank Ashley Clark, Lilian Yahng, and Garrett Hisler for their support and feedback with parts of the design and analysis of the survey.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Policy Research Institute at the LBJ School of Public Affairs at the University of Texas at Austin.

Supplemental Material

Supplemental material for this article is available online.
