Abstract

Background

Clinical trial designs are typically narrowly focused on error control in hypothesis testing, but this approach is inadequate in many contexts, particularly when a decision maker intends to, or must, consider multiple relevant clinical and health economic outcomes under uncertainty. Value-of-information (VoI) metrics can be used to estimate the monetary value of data collection to the decision maker. Adaptive trial designs apply prespecified decision rules to modify the ongoing trial as data are collected and analyzed. To date, VoI considerations have rarely been integrated into this approach, partly due to the computational burden.

Methods

We propose a value-driven adaptive design that refocuses trial design on VoI as a metric to direct trial adaptations. Specifically, a VoI analysis is performed at each interim analysis to determine whether or not the trial should proceed to the next analysis (i.e., determine whether further data collection is sufficiently valuable). We provide methods to compute the expected net benefit of perfect information, expected net benefit of sampling (ENBS) for the next analysis, and the ENBS for subsequent sequential analyses. Our approach is flexible to any statistical model, decision model, and research cost function and does not require distributional assumptions about the net benefit.

Results

We describe our method in detail and demonstrate its implementation via a case study comparing infant immunoprophylaxis and maternal vaccination to prevent respiratory syncytial virus–related medical attendances.

Conclusions

Our value-driven adaptive design aligns pragmatic clinical trial design with the requirements of decision makers. Designs with VoI-based adaptations have the potential to improve the cost-effectiveness of clinical trials.

Highlights

Our value-driven adaptive design is a new method that uses the expected net benefit of sampling to define stopping rules at interim analyses (i.e., to determine if further data collection is sufficiently valuable).

Our method orients trial designs to efficiently produce evidence to inform the decision maker.

Health care decision makers rely on high-quality evidence generated from clinical trials to guide their decision making. For example, in Australia, the Australian Technical Advisory Group on Immunisation makes clinical recommendations for the Australian Immunisation Handbook 1 based on the aggregation of clinical evidence. 2 Unfortunately, in some fields of research such as vaccines, despite aiming for high quality, evidence from clinical trials is often graded as being of low quality for translation to clinical practice recommendations, often due to a lack of precision in the estimated parameters for the reported outcomes. 3

Determining a sample size that balances trial operating characteristics, feasibility, and ethical considerations is a challenge that faces all clinical trials. In general, trials are designed to ensure a reasonable chance of detecting and concluding, with sufficient certainty, a treatment benefit if one exists while mitigating against the risk of a false detection if a treatment benefit does not exist. 4 Traditionally, a sample size that achieves acceptable trial operating characteristics with respect to a null hypothesis significance test relating to the primary outcome is computed using formulae or simulation. However, the error-driven approach to clinical trial design (i.e., the classical approach focused on type I and type II error control) is increasingly regarded as inadequate as it ignores the cost-effectiveness of the proposed research.5,6 Clinical trials could instead be designed and prioritized to optimize the net prospective monetary value of the evidence generated to clinical and policy decision makers (i.e., a value-driven approach). 6

Value of Information

Value-of-information (VoI) metrics such as the expected value of perfect information 7 (EVPI), expected value of partial perfect information 8 (EVPPI), and the expected value of sample information 9 (EVSI) have been proposed as a way to assist researchers and funders during the trial design stage by providing information on the monetary value of conducting the proposed trial.10,11 Recent advances in approximation methods12–20 to compute these metrics have had their performance benchmarked,21,22 and practical guidance has been provided for their implementation. 23 Specifically, these metrics allow researchers to quantify and compare the expected cost of conducting a particular study versus the expected monetary value gained from the study in order to inform a decision about whether the research should be funded and, if so, which design is the most cost-efficient.24,25
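As a minimal illustration of how such a metric can be estimated by Monte Carlo simulation, the following Python sketch computes the EVPI as the difference between the expected net benefit of deciding with perfect information and the expected net benefit of deciding now. The two decision options, their net benefit functions, the Beta distributions, and all monetary values are hypothetical and are not taken from this article.

```python
import random


def evpi(nb_draws):
    """Monte Carlo EVPI from net benefit draws.

    nb_draws[s][d] is the net benefit of decision option d under parameter draw s.
    """
    n_draws = len(nb_draws)
    n_options = len(nb_draws[0])
    # Deciding now: choose the option with the highest expected net benefit.
    expected_nb = [sum(row[d] for row in nb_draws) / n_draws for d in range(n_options)]
    value_current_information = max(expected_nb)
    # Perfect information: choose the best option for every draw, then average.
    value_perfect_information = sum(max(row) for row in nb_draws) / n_draws
    return value_perfect_information - value_current_information


# Hypothetical example: two options whose event risks are uncertain.
rng = random.Random(2024)
WILLINGNESS_TO_PAY = 1000.0  # assumed monetary value of preventing one event
EXTRA_COST_B = 20.0          # assumed additional cost per person of option B

draws = []
for _ in range(5000):
    risk_a = rng.betavariate(4, 20)
    risk_b = rng.betavariate(4, 20)
    nb_a = -WILLINGNESS_TO_PAY * risk_a
    nb_b = -WILLINGNESS_TO_PAY * risk_b - EXTRA_COST_B
    draws.append([nb_a, nb_b])

print(f"EVPI per person (hypothetical units): {evpi(draws):.2f}")
```

The estimate is non-negative by construction, because the average of per-draw maxima cannot be smaller than the maximum of the per-option averages.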

Typically, such analyses have been used to supplement, rather than replace, the sample size calculation.5,26 In principle, the sample size calculation seeks to determine the number of units required to identify a clinically meaningful effect under a given design, whereas the VoI analysis determines whether the proposed trial can be expected to be cost-effective (i.e., the monetary value of the evidence derived from the study outweighs the cost of research). However, the separation of these components might not be ideal. A proposed trial that is dismissed as “underpowered” according to convention might nonetheless be cost-effective from a VoI perspective.

Adaptive Trial Designs

Adaptive trial designs are a popular alternative to traditional fixed trial designs.27–29 Instead of recruiting to a fixed prespecified sample size, adaptive designs incorporate interim analyses that apply prespecified decision rules based on model parameter estimates. Interim analysis decision rules guide the ongoing design of the trial to allocate resources efficiently or to stop early if a trial conclusion can be declared.30,31

VoI analyses are rarely incorporated into adaptive designs in practice, 32 partly due to concerns 33 about the complexity of the analyses required, although there has been recent methodological progress. Vervaart et al. 34 developed methods to assess the value of collecting additional survival data by extending the follow-up period of currently enrolled trial participants to inform treatment adoption decisions within health economic evaluations. These methods are described with practical guidance in a tutorial 35 and may be implemented at interim analyses as value-based stopping rules. Flight et al. 36 adapted the EVSI calculation for group sequential designs with clinical effectiveness stopping rules and suggested that VoI methods could be used at interim analyses to inform the ongoing trial design but that computing the EVSI one analysis at a time “does not take account of all possible future interim analyses.” Griffin et al. 37 described how to compute the VoI for designs that resolve uncertainty in parameter sets sequentially using the EVPPI. However, computational complexity was identified as a potential barrier to the sequential EVSI extension of these methods. 37

Decision-theoretic models using dynamic programming methods have also been proposed for adaptive trial designs,38–41 for example, a Bayesian group sequential design based on a quadratic loss function, 41 and these methods have been subsequently extended.42–45 More recently, building on the work of Pertile et al., 44 a decision-theoretic model has been extended to account for delay in outcome ascertainment, 46 to accommodate multiple trial interventions, 47 and to select optimal recruitment rates, 48 with 2 retrospective case-study applications49,50 and a tutorial. 51 These models and their contemporaries estimate the distribution of the expected incremental net monetary benefit (INMB; the incremental monetary value of one decision over another) using trial-based economic evaluations (where the INMB is estimated using only trial data). Under certain assumptions, for example, a Gaussian prior distribution and likelihood, optimal stopping boundaries may be derived analytically and optimal allocation policies prescribed.

Aim

Trial-based economic evaluations may be inappropriate if all available treatment options considered by the decision maker are not included, the trial time horizon is truncated, or there is a failure to incorporate all relevant evidence.52,53 Sculpher et al. 52 suggested that trials should not be considered as the “vehicle for economic evaluation” but should instead generate evidence to be synthesized into the full, externally specified decision model (e.g., by reducing parameter uncertainty). To align with Sculpher et al., 52 we propose a new method that incorporates VoI methods into Bayesian adaptive trial designs based on a complete decision model. We aim to orient trial designs to efficiently produce evidence to inform the decision maker by conducting VoI analyses as part of the trial’s prespecified interim analyses. Specifically, a VoI analysis based on the expected net benefit of sampling (ENBS) will be performed at each interim analysis to determine if the trial should proceed through to the next analysis (i.e., determine if further data collection is sufficiently valuable).

Our method, termed the value-driven adaptive design, may be considered in the class of value-adaptive designs 51 and intends to offer an alternative pragmatic approach to the aforementioned decision-theoretic models and contemporaries. Our approach accommodates any Bayesian statistical model (e.g., a hierarchical model) and allows for the decision model and statistical model to be specified independently. Building on the work of Griffin et al., 37 and providing an alternative to Pertile et al., 44 our value-driven adaptive design is the first adaptive design using a VoI-based decision rule at interim analyses to determine the value of continuing recruitment to repeatedly reduce parameter uncertainty without restriction or distributional assumptions on the statistical model or net benefit. Our method is general purpose and not restricted to any specific health economic model structure (as required by Pertile et al. 44 ) or approximation algorithm (i.e., our method can use regression-based approximation algorithms 18 whose computational complexity is unaffected by the complexity of the health economic model). The article proceeds as follows: the second section describes our methodology in detail, the third section presents a case study as a motivating example, and the fourth section concludes with a discussion.

Methodology

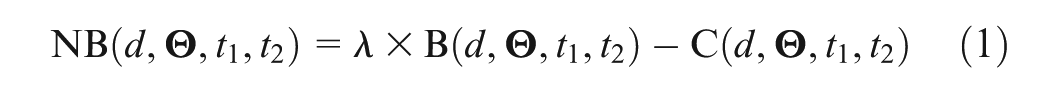

Suppose that a decision maker was choosing between an exhaustive choice set of

Here,

If the parameters

A Clinical Trial

Suppose we were to conduct a clinical trial with sequential analyses in which we accrue information on unit outcomes and progressively resolve epistemic uncertainty in the value of the net benefit function (1) (which could be exploited by the decision maker to make an improved decision). Our goal is to progress through the sequential analyses until continuing is no longer valuable. We denote all relevant outcome data collected for participant

The function

Expected Net Benefit of Sampling

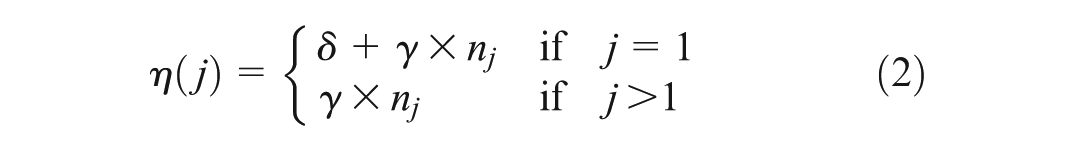

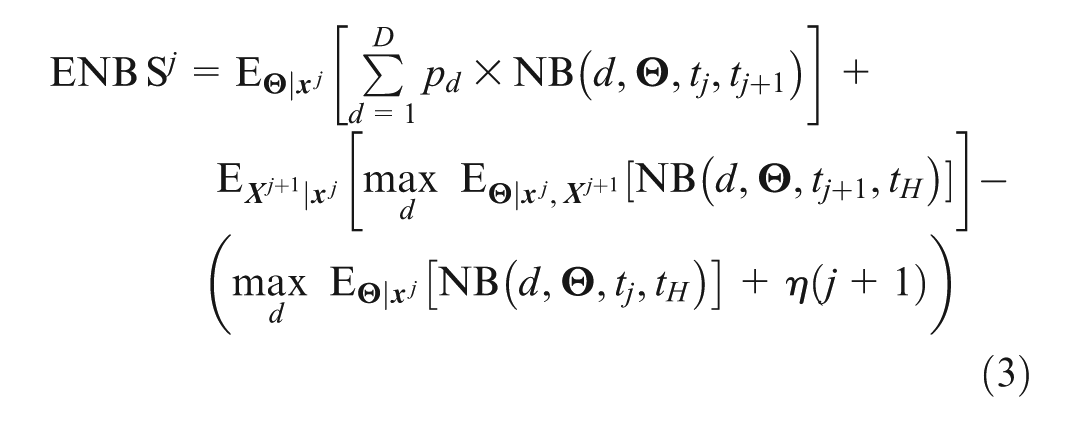

We denote the assumed implementation of decision options during the trial with

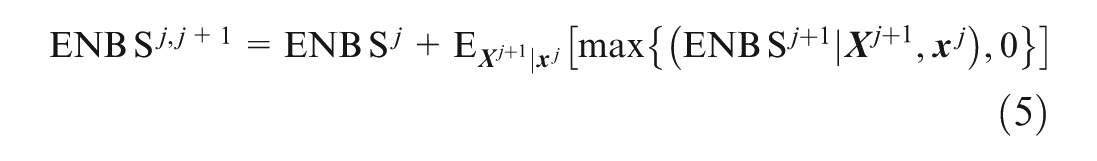

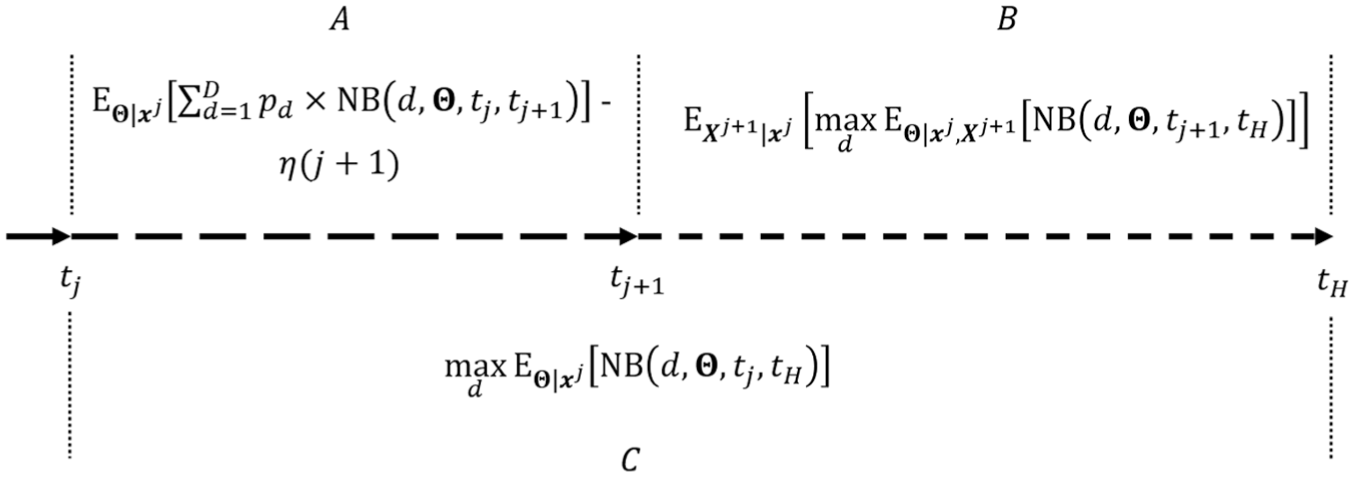

The ENBS equation (3) is visualized in Figure 1. If

Visual representation of the expected net benefit of sample information (

Expected Net Benefit of Perfect Information

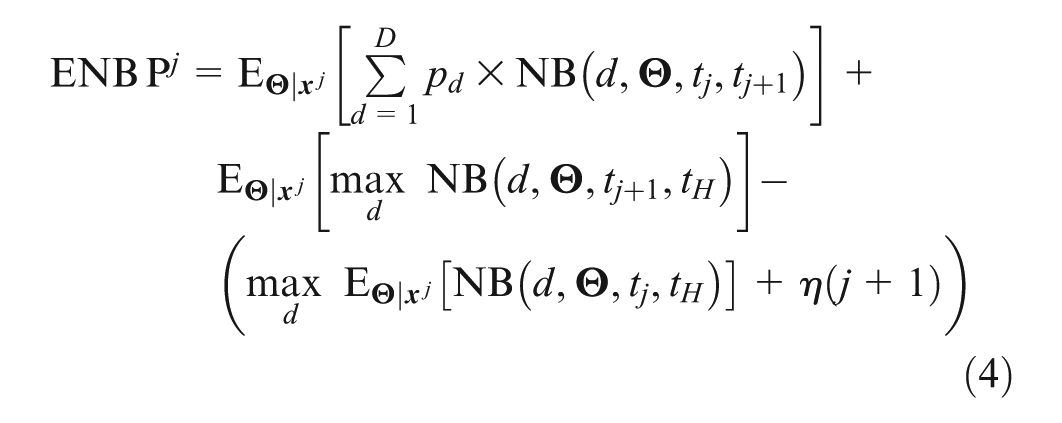

Analogous to the EVPI, we define the expected net benefit of perfect information (ENBP) as the expected value of proceeding through to the next analysis under the assumption that doing so will resolve all parameter uncertainty. The ENBP computed at analysis

The expression for

Expected Net Benefit of Continuing the Trial

The above expression for

A proof for equation (5) is provided in the Supplementary Materials. In (5), the inner part of the second term represents the decision on whether or not to proceed to analysis

Furthermore,

Choosing Interim Sample Sizes

Our value-driven adaptive design can be used irrespective of the method for selecting interim sample sizes. Many trials choose interim sample sizes based on practical constraints such as anticipated recruitment, availability of statistical resources and the length, cost, and feasibility of outcome ascertainment (e.g., laboratory-based analyses may require batched samples). In these cases, we advise choosing interim sample sizes pragmatically.

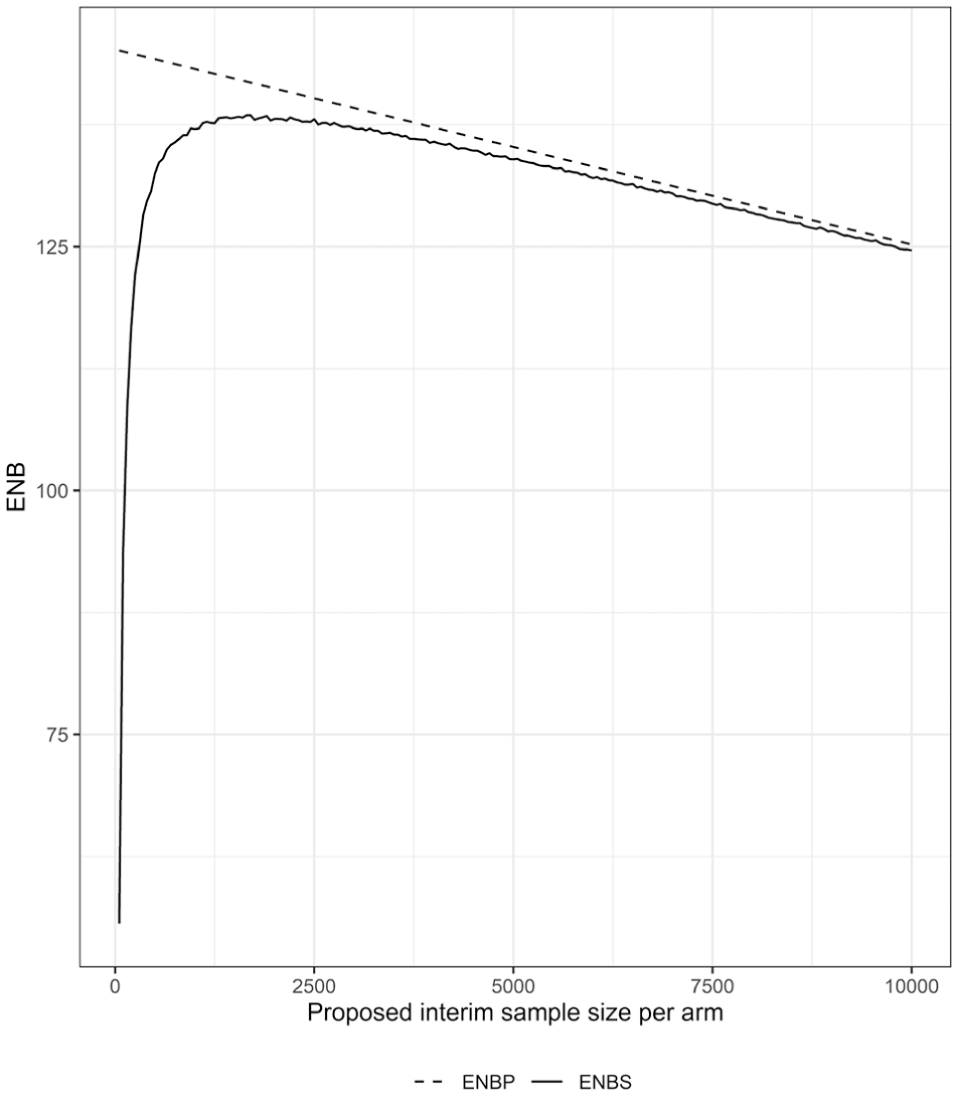

Alternatively, interim sample sizes may be chosen strategically at each interim analysis by choosing

Expected net benefit (ENB) of perfect information (ENBP) and sampling information (ENBS) by proposed interim trial sample size per arm. Proposed interim sample sizes range between 50 and 10,000 participants per arm.
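One way to picture the strategic choice of an interim sample size is a grid search over candidate sizes, keeping the size with the largest ENBS and proceeding only if that ENBS is positive. The Python sketch below is a simplified illustration under strong assumptions that are not taken from this article: two arms with independent Beta priors and binomial outcomes, a hypothetical INMB that is linear in the two event probabilities, a hypothetical linear research cost, and no discounting or allowance for delayed implementation. Because the assumed INMB is linear and the model is conjugate, the posterior expected INMB can be evaluated at the posterior means, which avoids a nested simulation; this shortcut is a substitute for, not an implementation of, the regression-based approximations discussed earlier.

```python
import random


def enbs_next_analysis(n_per_arm, prior=(4.0, 20.0), scale=1_000_000.0,
                       cost_diff=20_000.0, cost_fixed=10_000.0,
                       cost_per_participant=50.0, n_sims=500, seed=1):
    """Return (EVSI, ENBS) for recruiting n_per_arm participants to each of 2 arms.

    All default values are hypothetical and chosen only for illustration.
    """
    rng = random.Random(seed)
    a, b = prior
    prior_mean = a / (a + b)

    def inmb(p_first, p_second):
        # Assumed INMB of option 1 over option 2, linear in the event probabilities.
        return scale * (p_second - p_first) - cost_diff

    # Value of deciding now: adopt option 1 only if its expected INMB is positive.
    value_now = max(0.0, inmb(prior_mean, prior_mean))

    total = 0.0
    for _ in range(n_sims):
        post_means = []
        for _arm in range(2):
            p = rng.betavariate(a, b)  # draw a plausible true event probability
            events = sum(rng.random() < p for _ in range(n_per_arm))  # simulate the arm
            post_means.append((a + events) / (a + b + n_per_arm))  # conjugate update
        # Value of deciding after seeing this simulated data set.
        total += max(0.0, inmb(post_means[0], post_means[1]))

    evsi = total / n_sims - value_now
    research_cost = cost_fixed + cost_per_participant * 2 * n_per_arm
    return evsi, evsi - research_cost


# Grid search over hypothetical candidate interim sample sizes per arm.
candidates = [50, 100, 250, 500]
results = {n: enbs_next_analysis(n)[1] for n in candidates}
best_n = max(results, key=results.get)
print("ENBS by candidate size:", {n: round(v) for n, v in results.items()})
print("Proceed with", best_n, "per arm" if results[best_n] > 0 else "per arm only if ENBS were positive")
```

In this sketch the decision to proceed depends entirely on the assumed scale of the net benefit relative to the assumed research cost, so the numerical output should not be read as a result for the case study.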

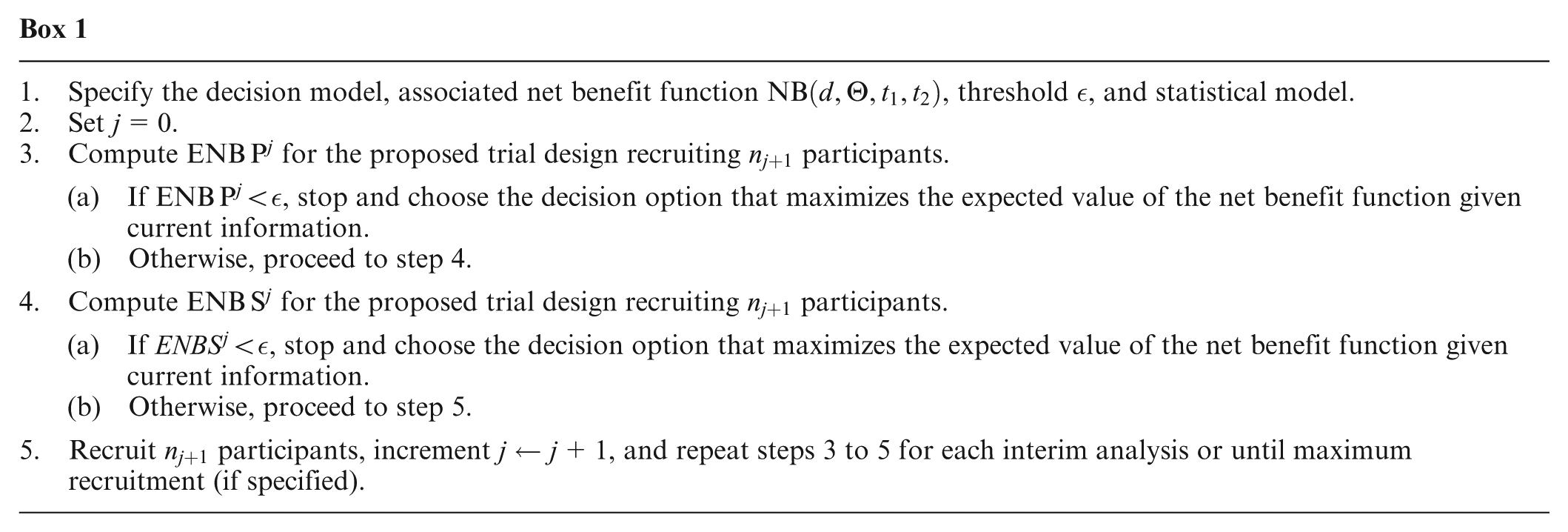

Summary

Having presented the key measures to define our value-driven adaptive design, we provide a summary of the steps in Box 1. We also provide generic code that implements our method by calculating

Case Study

To demonstrate our value-driven adaptive design, we consider an example trial evaluating infant immunoprophylaxis (II) compared with maternal vaccination (MV) to prevent respiratory syncytial virus (RSV). RSV infection accounts for approximately 3.6 million hospitalizations and more than 100,000 deaths each year, globally. 55

In Australia, the 2 potential alternative strategies to reduce the burden of severe RSV disease are II (

Net Benefit Function

Of interest to the policy maker is the tradeoff between the difference in effectiveness of II compared with MV with respect to preventing RSV-related medical attendances (MA-RSV) in the first 12 months of life and the respective costs of implementing each strategy. We denote the probability that infants belonging to mother–infant dyads who received II or MV experience an MA-RSV within the first 12 months with

We assume that in Australia, there are, on average, 300,000 births per year, the willingness to pay to prevent each MA-RSV is

Note that we use a discrete approximation for the discounting function here only for the purpose of exposition and accessibility. In practice, at least for this example, a continuous discounting function may be more accurate and computationally advantageous. We see here that MV will be more cost-effective than II if
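To illustrate the distinction between discrete and continuous discounting, the Python sketch below compares a yearly sum of discount factors with the corresponding integral of a continuously compounded discount function. The annual rate, the time horizon, and the convention that benefits are counted at the start of each year are assumptions made for this example and may differ from those used in the case study.

```python
import math


def discrete_discount_sum(annual_rate, years):
    """Sum of yearly discount factors, with benefits counted at the start of each year."""
    return sum((1.0 + annual_rate) ** (-t) for t in range(years))


def continuous_discount_integral(annual_rate, years):
    """Integral of exp(-rho * t) over [0, years], where rho = ln(1 + annual_rate)."""
    rho = math.log1p(annual_rate)
    if rho == 0.0:
        return float(years)  # no discounting: every year counts in full
    return (1.0 - math.exp(-rho * years)) / rho


# Hypothetical values for illustration only.
RATE = 0.05
HORIZON_YEARS = 10

print("discrete:  ", round(discrete_discount_sum(RATE, HORIZON_YEARS), 3))
print("continuous:", round(continuous_discount_integral(RATE, HORIZON_YEARS), 3))
```

With a positive rate, the start-of-year discrete sum exceeds the continuous integral because it treats each year's benefit as arriving at the earliest possible time; the continuous form has a closed-form expression, which is one reason it can be computationally convenient.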

The Trial

Suppose that a potential trial comparing the strategies head to head aims to recruit up to a maximum of 2,000 mother–infant dyads, denoted

We use regularizing Beta(4,20) priors for both unknown parameters
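For intuition about how a Beta(4,20) prior behaves as data accrue, the Python sketch below applies the standard conjugate Beta-binomial update for a single arm. It assumes that the outcome in each arm is a binomial count of events, which is an assumption of this example rather than a statement of the trial model, and the interim data shown are hypothetical.

```python
from math import sqrt

PRIOR_A, PRIOR_B = 4.0, 20.0  # Beta(4, 20) prior, as stated in the text


def update_beta(a, b, events, n):
    """Conjugate update of a Beta(a, b) prior after observing `events` out of `n`."""
    if not 0 <= events <= n:
        raise ValueError("events must lie between 0 and n")
    return a + events, b + (n - events)


def beta_mean(a, b):
    return a / (a + b)


def beta_sd(a, b):
    return sqrt(a * b / ((a + b) ** 2 * (a + b + 1.0)))


# Hypothetical interim data for one arm: 60 events among 500 infants.
post_a, post_b = update_beta(PRIOR_A, PRIOR_B, events=60, n=500)

print(f"prior:     mean={beta_mean(PRIOR_A, PRIOR_B):.3f}, sd={beta_sd(PRIOR_A, PRIOR_B):.3f}")
print(f"posterior: mean={beta_mean(post_a, post_b):.3f}, sd={beta_sd(post_a, post_b):.3f}")
```

The prior carries the weight of 24 pseudo-observations with a mean of 4/24, so it is quickly dominated by interim samples in the hundreds.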

Scenarios

To demonstrate the implementation of our method on the RSV case study, we consider the following 2 scenarios:

The incremental effectiveness of II compared with MV is large (i.e., II is preferred to MV). We set

The incremental effectiveness of II compared with MV is small (i.e., MV is preferred to II). We set

These parameters are fixed and known only for the purpose of data simulation. Prior to conducting a trial with an adaptive design, this method can be used within a simulation study to explore the trial’s potential course given current parameter uncertainty and does not require fixed truth scenarios such as those presented here.

Implementation

Prior to starting the trial, we conduct a cost-effectiveness analysis to determine whether conducting the trial up to the first analysis is cost-effective (i.e., compute

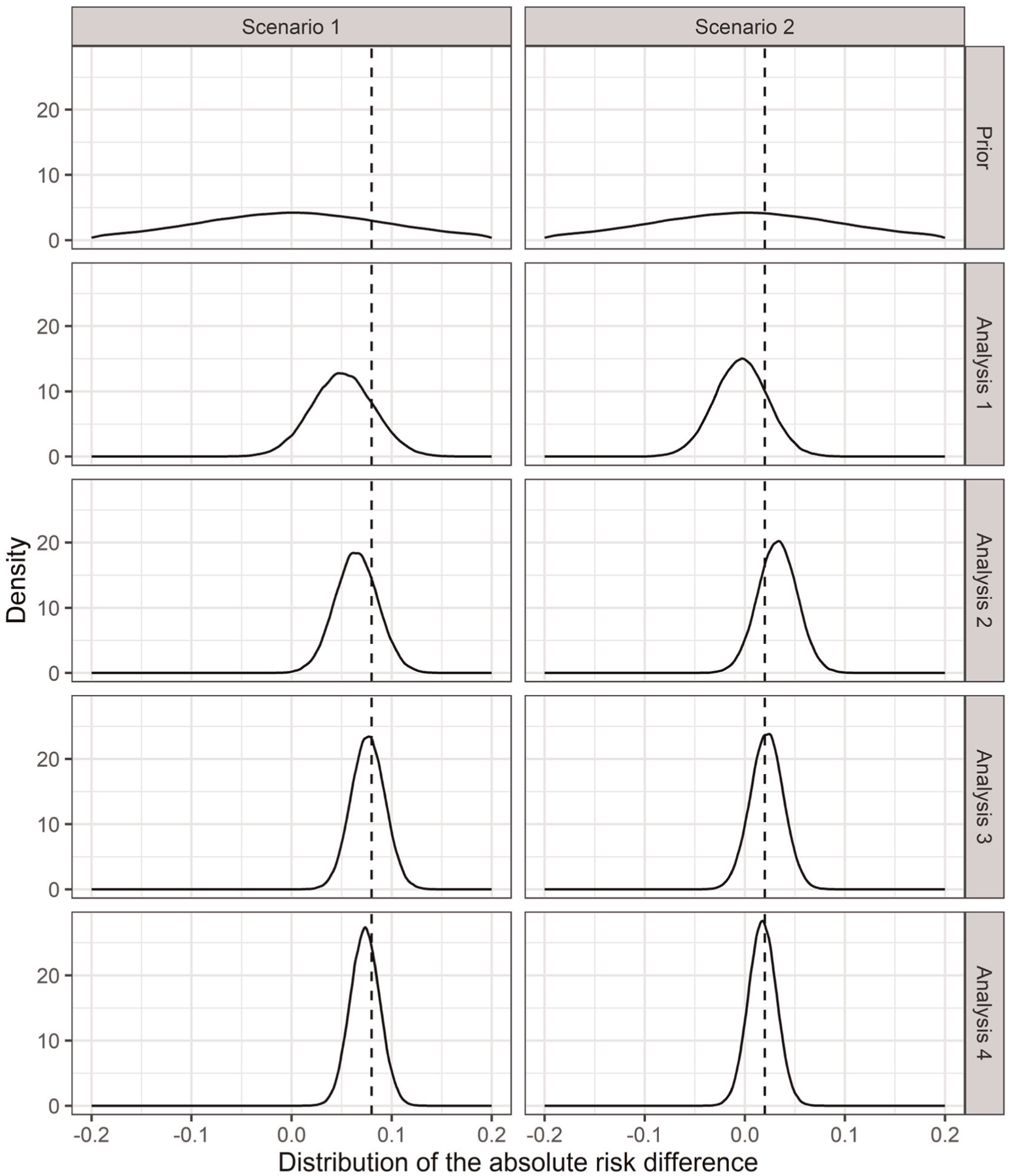

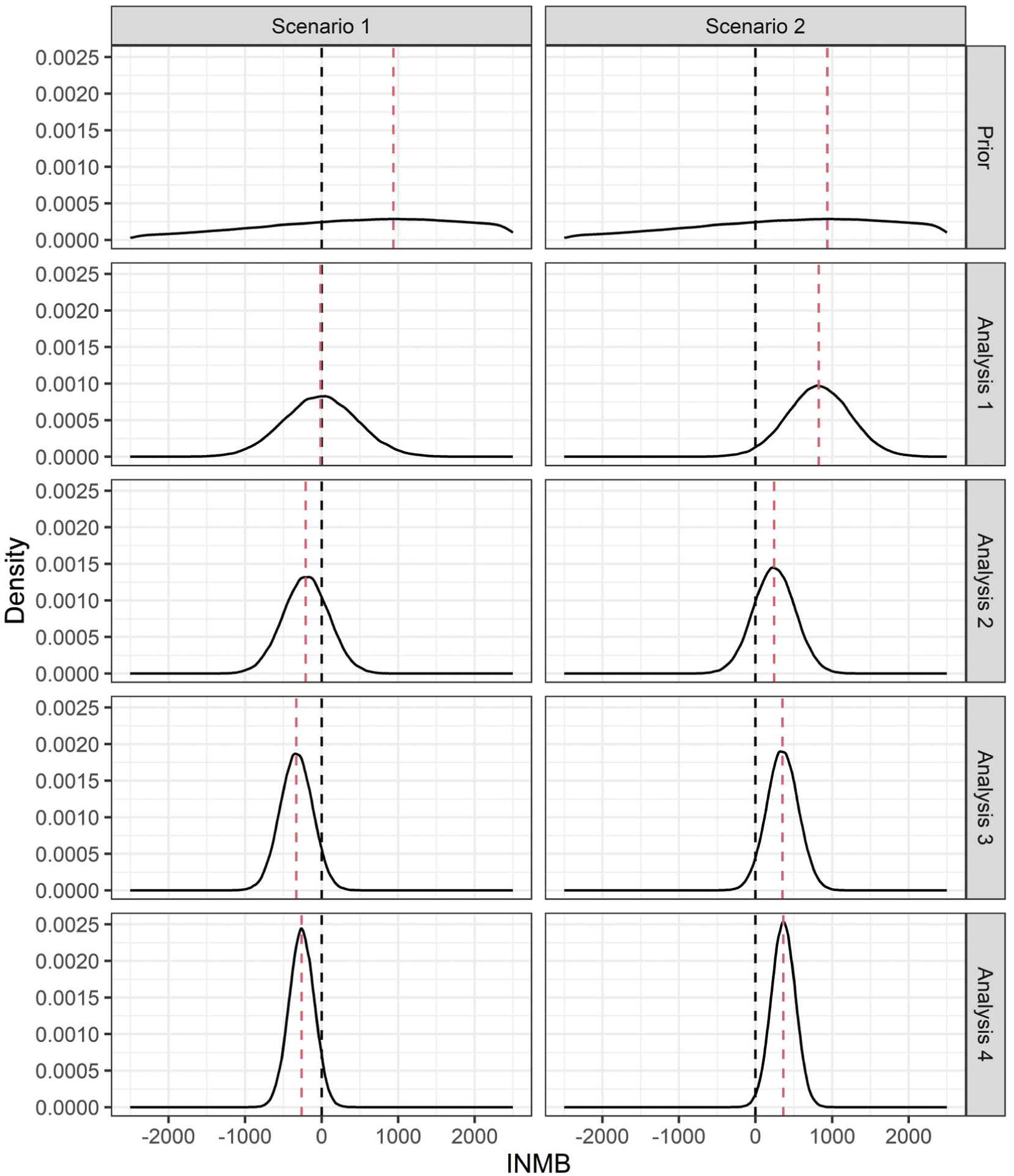

For each scenario, we generate data for each analysis (using equation [7]) and estimate the corresponding posterior distributions. As data accrue, posterior parameter uncertainty reduces, and consequently, uncertainty in the INMB (6) reduces too. Figures 3 and 4 show the uncertainty reduction in the parameters (as the absolute risk difference) and INMB, respectively, for each scenario and analysis for one set of simulated trials.

Distributions of the absolute risk difference of a medically attended respiratory syncytial virus event within 12 months between maternal vaccination and infant immunoprophylaxis (

Incremental net monetary benefit (INMB) distributions prior to the trial (prior distributions) and at each analysis (posterior distributions) for one simulated trial under each scenario of the respiratory syncytial virus case study. The black dashed line at zero represents the decision point between the infant immunoprophylaxis and maternal vaccination strategies. The red dashed lines represent the expected INMB.

We estimate the value of potentially proceeding through to analysis

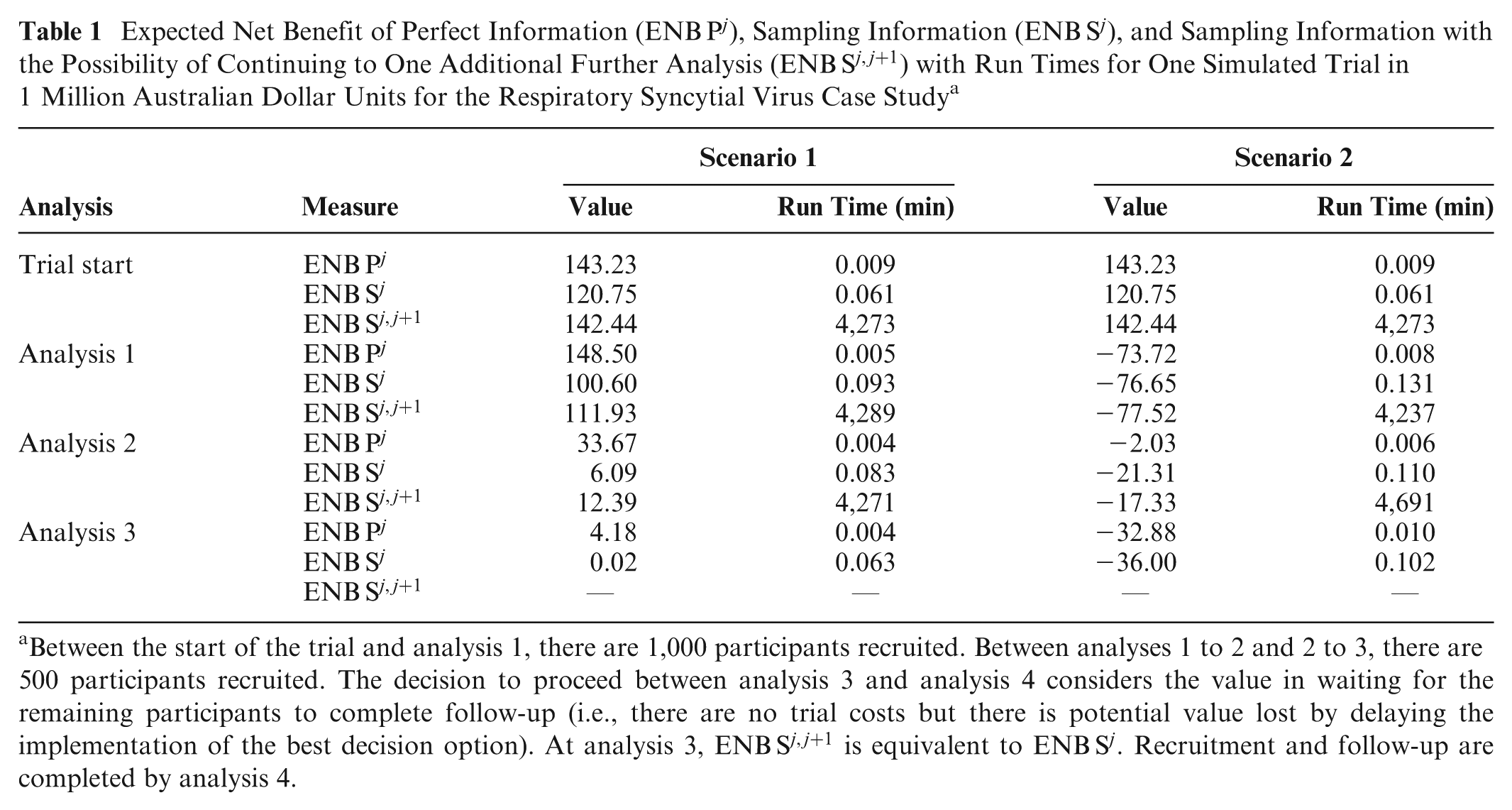

Expected Net Benefit of Perfect Information (

Between the start of the trial and analysis 1, 1,000 participants are recruited. A further 500 participants are recruited between analyses 1 and 2, and again between analyses 2 and 3. The decision to proceed between analysis 3 and analysis 4 considers the value in waiting for the remaining participants to complete follow-up (i.e., there are no trial costs but there is potential value lost by delaying the implementation of the best decision option). At analysis 3,

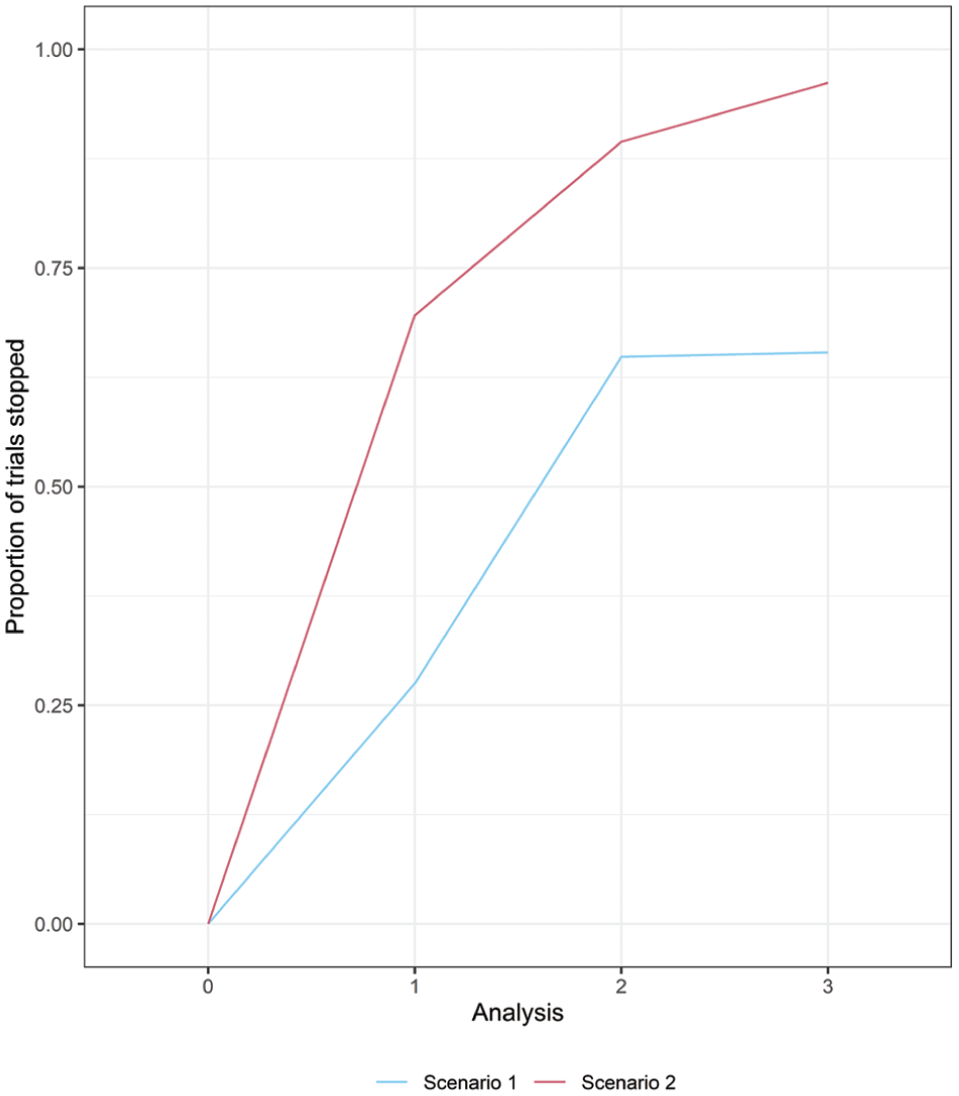

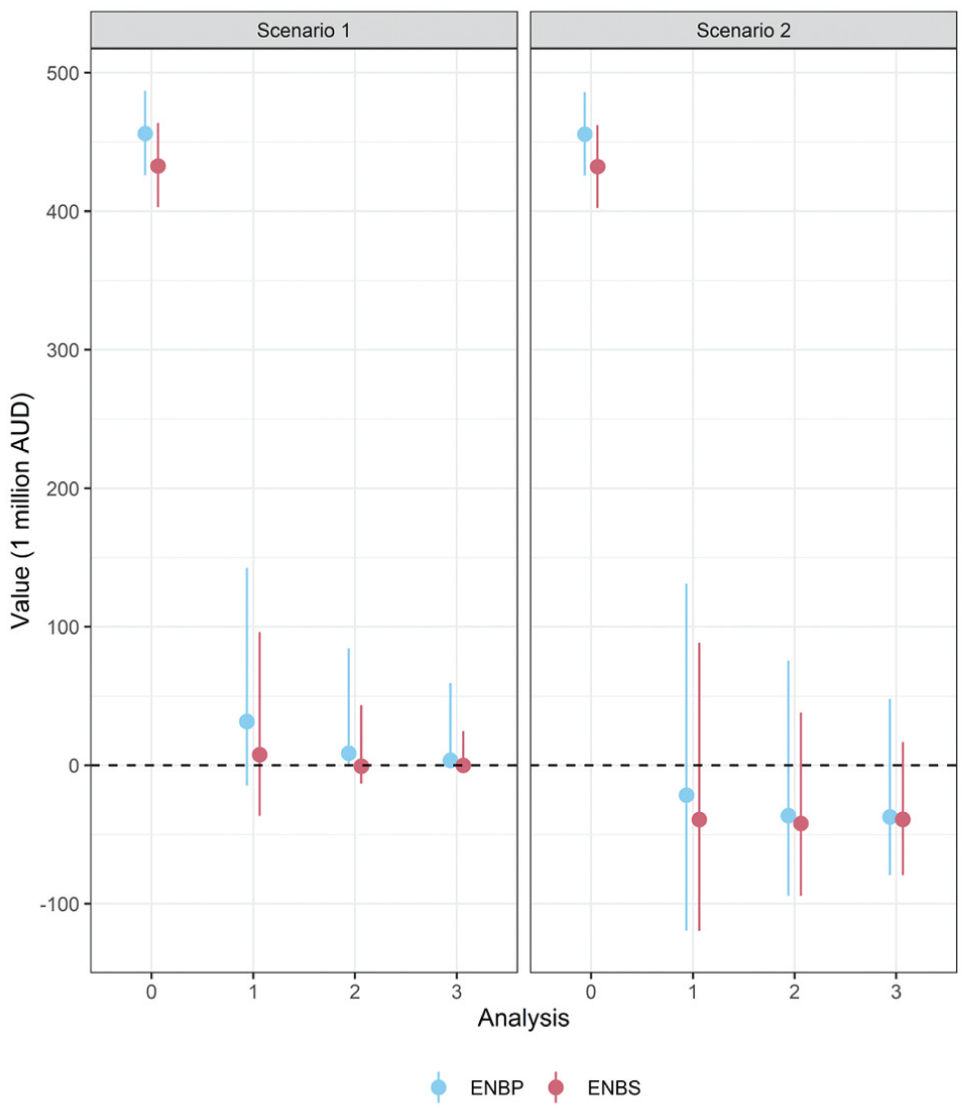

We conducted a simulation study investigating 10,000 simulated trials in each scenario. The proportion of simulated trials stopping at each analysis in each scenario is shown in Figure 5, and the distribution of

Proportion of 10,000 simulated trials stopping at each analysis in each scenario due to a negative expected net benefit of sampling (

Median and 95% central interval of the expected net benefit of perfect information (

Discussion

VoI methods enable researchers to determine the net monetary value of their proposed research and therefore allocate resources more efficiently. Moreover, public research funders can use VoI methods to determine, in advance, which studies are most likely to be cost-effective and thereby set future research priorities and allocate limited research budgets accordingly. 56 Recently, these methods have become accessible to researchers via a range of publicly available online tools, 57 with their popularity coinciding with an increase in the advocacy of health economic considerations in general.

The value-driven adaptive design is fundamentally different from a traditional design (adaptive or not) in its philosophy, and therefore, we have chosen not to compare the designs. At the core of a traditional design is a hypothesis test that aims to determine whether or not a parameter (or set of parameters) exists within a region of interest (e.g., whether or not a treatment effect is zero). A resulting claim about the parameters of interest incurs the risk of being wrong, and consequently, type I error and power become important. Unfortunately, many researchers believe that the value of a trial lies in its ability to navigate this hypothesis test (e.g., trials that do not declare a nonzero treatment effect are often deemed to have “failed” or else are “inconclusive”). Trials with traditional designs may continue long after we are confident of the economic decision or alternatively may stop when the economic decision is still unclear.

In contrast, the value-driven adaptive design is centered around the (monetary or health) value of data gathered to inform a decision with respect to a decision model. It is not subject to type I error and power simply because it does not make a binary truth claim and instead focuses on reducing parameter uncertainty to increase the probability that the decision maker makes an optimal decision. Of course, a suboptimal decision may still be made (akin to a type I or type II error), but by design, the trial stops at the point at which the incremental value of reducing the probability of making a suboptimal decision is outweighed by the cost of continuing the trial. Therefore, whether or not the optimal decision was chosen is irrelevant; the cost of collecting more data to inform the decision exceeds the expected benefit from making a better-informed decision, implicitly because those resources could be diverted to where the benefits returned are likely to be greater. Furthermore, not only will a clinical decision likely consider multiple outcomes that a hypothesis test avoids based on multiplicity concerns, but in most circumstances, a decision will need to be made whether or not a trial is conducted, and the “correctness” of the decision made may be impossible to determine, even in hindsight.

Decision-theoretic models have been developed in the past decade that optimally select stopping times given their model-specific assumptions.44,46–48 These models employ dynamic programming methods to optimize decision making while carefully managing potential pathological behavior in the VoI functions (e.g., paradoxical nonconcavity 58 ). However, approaches using data-driven trial-based cost-effectiveness analyses may be inappropriate, and using the trial to inform a subset of the parameters of the full decision model may be a preferable alternative. 52 In scenarios in which the trial does not contain all of the decision options in the economic model, the VoI calculation is still valid but will depend on only the relevant subset of parameters (e.g., if the parameters associated with the missing decision options are independent from the relevant subset of parameters, then their uncertainty will remain unchanged).

Our model-based method seeks to provide a pragmatic alternative to these data-driven methods and does not, by any definition, claim to select optimal stopping times. Clinical trials serve multiple stakeholders and are subject to ethical and practical constraints 33 that, consequently, may preclude the implementation of an “optimal” design. For example, a pragmatically designed trial appropriately informed by the relevant stakeholders, including consumers, may equally allocate participants to all available interventions even though it may counterintuitively be optimal to collect information on only a subset of the available interventions. 58 Further practical considerations that may preclude the implementation of an optimal model include the complexity of the statistical model, randomization, intercurrent events, and constraints on statistical and laboratory resources. Our value-driven adaptive design is not contingent on the absence of these considerations and is flexible in its implementation by design. The resulting trial will likely be of value to decision makers, even if its value is “suboptimal.” In fact, the capability to determine an optimal solution (e.g., via the methods of Pertile et al. 44 ) is explicitly traded off with the relaxation of unrealistic assumptions that may hinder the use of VoI methods in adaptive designs.

A limitation of our methodology is that it is constrained to designs in which the decision faced at interim analyses is only one related to increasing the sample size, where other design elements are fixed (e.g., length of follow-up time). Trial designs that allow for the follow-up time of currently enrolled trial participants to be increased may benefit from alternative methods previously developed in this space.34,35 However, if the decision is made to increase the sample size, including at the start of the trial, the randomization ratio still needs to be chosen. If feasible, our method can be used to select an advantageous randomization ratio that targets recruitment to a decision option associated with higher parameter uncertainty in order to maximize the VoI collected from the next interim sample. Another potential limitation is that it may be infeasible to compute

Adaptive designs, including the value-driven adaptive design, are not suitable for all trials. For example, if the length of follow-up to outcome ascertainment is sufficiently long compared with the speed of enrolment, then the potential benefits of an adaptive design are limited (i.e., if there are few participants yet to recruit at the time of an analysis, then the ongoing trial design has limited opportunity to “adapt”). In these situations, a fixed design that is still based on VoI is recommended.

The RSV case study demonstrated the implementation of a simple value-driven adaptive design. A real-world implementation of this design would need to address the costs of implementation for each strategy as well as their expected uptake. Furthermore, the policy maker will likely be interested in additional outcomes (e.g., hospitalization, mortality, and adverse events) and will be required to specify an appropriate willingness-to-pay parameter that considers the full decision model, potentially specified in terms of QALYs. Moreover, for the case study, we assumed a simple research cost function,

An important avenue for future work will be to explore the implementation of the value-driven adaptive design for a clinical trial, including the potentially challenging formulation and agreement of a decision model and specification of the research cost function. Arguably, this area of research, or at least its implementation, is still in its infancy, and further investigation and development are needed.

Supplemental Material

sj-pdf-1-mdm-10.1177_0272989X261423177 – Supplemental material for A Pragmatic Bayesian Adaptive Trial Design Based on the Value of Information: The Value-Driven Adaptive Design

Supplemental material, sj-pdf-1-mdm-10.1177_0272989X261423177 for A Pragmatic Bayesian Adaptive Trial Design Based on the Value of Information: The Value-Driven Adaptive Design by Michael Dymock, Julie A. Marsh, Mark Jones, Anna Heath, Kevin Murray and Thomas L. Snelling in Medical Decision Making

Footnotes

Acknowledgements

Not applicable.

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article. The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: Financial support for this study was provided in part by an NHMRC Postgraduate Research Award (APP2022557), an AusTriM Postgraduate Top-up Scholarship, a Stan and Jean Perron Top-up Scholarship, a Wesfarmers Centre of Vaccines and Infectious Diseases Top-up Scholarship, a Statistical Society of Australia PhD Top-up Scholarship, a grant from the Discovery Grant Program of the Natural Sciences and Engineering Research Council of Canada (RGPIN-2021-03366), a Canada Research Chair in Statistical Trial Design, and an MRFF Investigator Grant (MRF1195153). The funding agreement ensured the authors’ independence in designing the study, interpreting the data, writing, and publishing the report.

Ethical Considerations

Not applicable.

Consent to Participate

Not applicable.

Consent for Publication

Not applicable.

References
