Abstract

Background

Health care interactions may require patients to share with a physician information that they believe but that is incorrect. While a key piece of physicians’ work is educating their patients, people’s concerns about being seen as uninformed or incompetent by physicians may lead them to think that sharing incorrect health beliefs comes with a penalty. We tested people’s perceptions of patients who share incorrect information and how these perceptions vary by the reasonableness of the belief and its centrality to the patient’s disease.

Design

We recruited 399 United States Prolific.co workers (357 retained after exclusions), 200 Prolific.co workers who reported having diabetes (139 after exclusions), and 244 primary care physicians (207 after exclusions). Participants read vignettes describing patients with type 2 diabetes sharing health beliefs that were central or peripheral to the management of diabetes. Beliefs included true and incorrect statements that were reasonable or unreasonable to believe. Participants rated how a doctor would perceive the patient, the patient’s ability to manage their disease, and the patient’s trust in doctors.

Results

Participants rated patients who shared more unreasonable beliefs more negatively. There was an extra penalty for incorrect statements central to the patient’s diabetes management (sample 1). These results replicated for participants with type 2 diabetes (sample 2) and physician participants (sample 3).

Conclusions

Participants believed that patients who share incorrect information with their physicians will be penalized for their honesty. Physicians need to be educated on patients’ concerns so they can help patients disclose what may be most important for education.

Highlights

Understanding how people think they will be perceived in a health care setting can help us understand what they may be wary of sharing with their physicians.

People think that patients who share incorrect beliefs will be viewed negatively.

Helping patients share incorrect beliefs can improve care.


Vitamin C cures colds, eating sugar causes diabetes, and walking 10,000 steps is the threshold for healthy movement. These statements, while widely believed by the lay public, are either incorrect or overly simplified descriptions (e.g., the 10,000-step threshold was developed by a pedometer marketing company, not by health researchers). When patients interact with health care providers, private inaccurate health beliefs they hold can and should become public. Shared decision making (SDM), the gold standard for medical treatment planning, requires patients and providers to share information so they can collaboratively develop treatment plans.1 SDM can improve health outcomes, treatment adherence, and satisfaction as well as reduce treatment disparities.2–4 Significant resources have been invested in implementing SDM 5 and incorporating it into medical education.6,7 However, SDM is not widespread,8 and patients who want to engage in SDM often defer to physicians in actual decision making.9

We propose that the information sharing essential to SDM is itself a key barrier to it, as it requires patients to share information that may conflict with their provider’s beliefs. While patients possess unique knowledge of their own health,10,11 their knowledge may be incomplete or incorrect. People often misattribute the source of their individual symptoms12,13 or incorrectly deem medications effective.14 Further, health misinformation and conspiracy theories are pervasive.15–17 Surveys have found that 67% of people believe vitamin C cures colds, even though vitamin C only minimally shortens colds.18 Likewise, 82% of survey respondents believed eating sugar causes diabetes,19 even though sugar consumption alone does not cause diabetes. In addition, even before COVID-19, 49% of survey respondents believed in at least 1 health-related conspiracy theory.15 As these examples show, incorrect beliefs can vary in their relevance for future health, from taking a supplement that may be merely ineffective to avoiding a vaccination that could save lives. The prevalence and array of incorrect beliefs mean any given patient is likely to hold beliefs that differ from medical consensus or from their provider’s views.

A key question is whether patients believe that sharing health beliefs their providers may not agree with could harm their relationship with their physician. Laypeople do not have formal health training and should not be held to a standard of textbook health knowledge. If laypeople believe that providing education about incorrect health beliefs is core to physicians’ work, then laypeople may think physicians will be unfazed by incorrect disclosures. However, patients report concerns about seeming incompetent or uninformed to their physicians.20,21 US adults report withholding information from their health care providers to avoid judgment,22 such as not disclosing use of complementary and alternative medicine.23 From this previous work, we predict that people may think physicians will react negatively to patients sharing incorrect beliefs, as this could imply that a person is uninformed about their health and a “bad” patient.

Incorrect information is not created equal. For example, some beliefs may seem reasonable (e.g., taking vitamin C cures colds), whereas other beliefs may not (e.g., the social media–spread belief that putting potatoes in your socks cures colds). Further, people hold health beliefs that are not just unreasonable but also represent conspiratorial thinking. People may perceive that only extreme beliefs such as conspiracy theories will be penalized in the health care setting. In addition, an incorrect belief could be central to successful disease management (e.g., information about treatments) or peripheral to the disease (e.g., a fun disease factoid). A patient who holds an incorrect belief about the treatment of their illness could be seen as more problematic than one who believes something incorrect about how the disease was discovered.

While laypeople may view sharing incorrect beliefs as problematic, physicians may see this as just part of the health care interaction. Prior work has examined factors influencing how doctors perceive patients such as a patient’s race, gender, and socioeconomic status.24–27 However, it is not known how the information a patient actively shares influences perceptions.

Patient beliefs influence behavior,28 so identifying incorrect beliefs could enable interventions to foster healthier choices.29 In 3 samples, we presented participants with information varying in reasonableness and relevance to a patient’s health condition to test how the nature of incorrect information affects perceived penalties for sharing that information. We examined whether people were sensitive to 1) the degree of “wrongness” in judging an information sharer, or whether any incorrect information would be equally penalized, and 2) the relevance of a shared belief to a patient’s disease management. We focus on type 2 diabetes (T2D) because it is prevalent 30 and open communication between patients and providers has proven critical.31,32 First, we tested perceptions in a general online sample. Next, we tested perceptions in a sample of participants who reported having T2D (along with a comparison group of people who did not report having T2D) to understand whether personal experience with this chronic illness changes people’s views of how incorrect beliefs about the disease will be received. Finally, we tested a sample of primary care physicians to determine whether physician judgments match patient expectations.

Methods

Approval was granted through Stevens Institute of Technology’s Institutional Review Board. All data were collected online between August 2020 and January 2022.

Participants and Exclusion Criteria

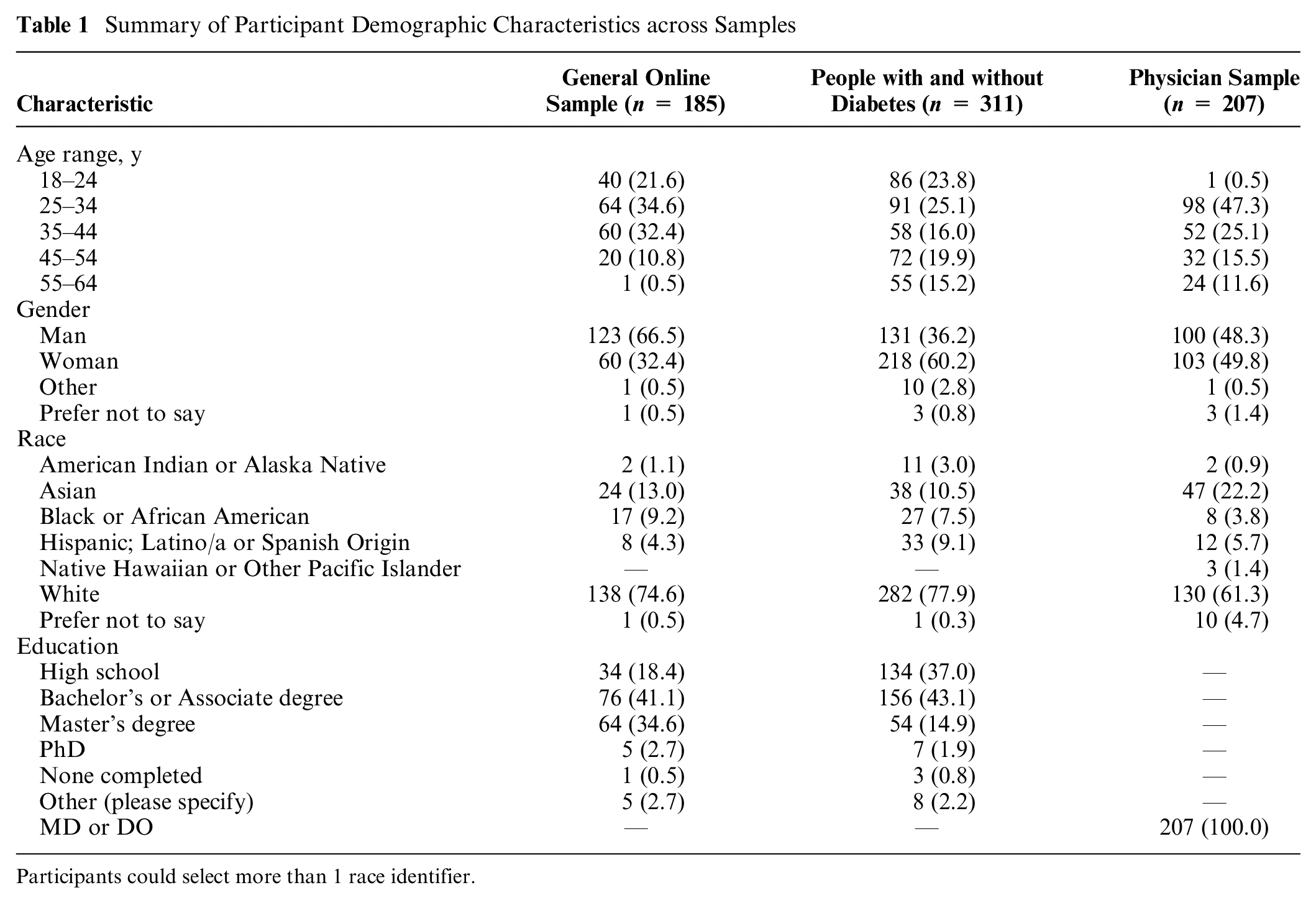

We recruited US participants aged 18 to 64 y. Table 1 presents participant demographics. To recruit lay participants (sample 1), we used Prolific.co and excluded participants working in health care and participants outside of our defined age range. We recruited 200 participants and excluded 4 duplicate responses and 11 individuals who reported working in health care, for a final total of 185 participants in our analysis.

Table 1. Summary of Participant Demographic Characteristics across Samples

Participants could select more than 1 race identifier.

We additionally recruited a sample of people with and without diabetes (sample 2). We used Prolific’s targeted recruiting to recruit 200 participants who reported having diabetes and 200 unrestricted, general participants, with 399 completing. Of these, 33 participants were excluded for working in health care and 4 were excluded for being outside our age range. We sorted the remaining 362 participants into experience groups by their responses to a demographic question about their diabetes status: yes, type 1 diabetes (n = 33); yes, type 2 diabetes (n = 139); no, but I am a caregiver for someone with diabetes (n = 11); no (n = 172); or other, with a space to describe why they chose this option (n = 7; e.g., having gestational diabetes). For the self-experience group, we used participants reporting T2D because our vignettes feature an adult recently diagnosed with diabetes. For the no-experience group, we used participants who had no personal diagnosis of diabetes and were not caregivers for anyone with diabetes (i.e., participants who answered “no” to our question). We had 139 participants who reported having T2D in the self-experience group and 172 participants who reported no experience with diabetes in the no-experience group.

We additionally recruited primary care physicians using convenience and snowball sampling methods.33,34 We e-mailed physicians working in primary care clinics associated with Departments of Internal or Family Medicine at ∼30 sites nationwide. We aimed to recruit 250 physicians and stopped data collection when recruitment slowed at 244. The inclusion criteria were an MD or DO degree, working in primary care in the United States, and being aged 18 to 64 y. We excluded 5 duplicate responses, 25 participants who did not report MD or DO as their highest degree obtained, and 7 participants who reported their age as 65 y or older. This resulted in 207 physicians in our analysis. Physicians received a $20 gift card for participation.
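The exclusion counts reported for the 3 samples can be cross-checked with simple arithmetic. The sketch below subtracts each exclusion group from the number of responses and confirms that the 5 diabetes-status groups in sample 2 sum to the retained participants; the helper name `retained` and the exclusion labels are our own illustrative choices, not part of the study materials.

```python
def retained(n_start: int, exclusions: dict) -> int:
    """Participants left after subtracting every exclusion group (hypothetical helper)."""
    return n_start - sum(exclusions.values())


# Sample 1: general online sample (Prolific), 200 recruited.
sample1 = retained(200, {"duplicate response": 4, "works in health care": 11})

# Sample 2: people with and without diabetes (Prolific), 399 completed.
sample2 = retained(399, {"works in health care": 33, "outside age range": 4})
sample2_groups = {
    "type 1 diabetes": 33,
    "type 2 diabetes (self-experience)": 139,
    "caregiver": 11,
    "no (no-experience)": 172,
    "other": 7,
}

# Sample 3: primary care physicians, 244 responses.
sample3 = retained(244, {"duplicate response": 5, "not MD or DO": 25, "aged 65 y or older": 7})

print(sample1, sample2, sum(sample2_groups.values()), sample3)  # prints: 185 362 362 207
```

Only the type 2 diabetes and “no” groups of sample 2 enter the reported analyses; the other groups appear here solely to check that the partition is complete.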

Materials

Participants read vignettes describing an individual with diabetes reporting a belief to their physician. The prompt for each hypothetical patient was as follows:

Imagine a person who just moved to a new town. Shortly before they moved to the new town, the person was diagnosed with diabetes. The person is now having their first visit with their new doctor. At this first visit, the person mentions that they think that [BELIEF STATEMENT].

We described the patients as new to town to avoid assumptions about why patients were seeing new physicians and to equate the length of the presented patient-physician relationships.

Through separate pilot testing, we created 16 statements representing different beliefs. In our pilot work, we asked participants to rate how reasonable each of a set of statements was to believe. We also asked participants to rate how much each statement sounded like a conspiracy theory (see Supplemental Materials, Appendix A for pilot test information and the selected items). From this pilot testing, we selected 4 true statements as a baseline for how patients were perceived when they shared correct information (e.g., “Diabetes is usually caused by a lack of insulin in the body.”). These true statements were rated as more reasonable than the 12 incorrect statements that we included. Based on pilot ratings, the incorrect statements fell into 3 categories: reasonable to believe (reasonably wrong; e.g., “CBD oil reduces blood sugar.”), unreasonable to believe (unreasonably wrong; e.g., “Drinking carrot juice will cure diabetes.”), and unreasonable to believe while also representing a conspiracy theory (conspiracy; e.g., “Medications intentionally cause diabetes as a side effect so people will have to buy insulin to treat it.”). The reasonably wrong and unreasonably wrong statements were rated as not representing conspiracy theories. Including both reasonably and unreasonably wrong statements allows us to measure whether people were sensitive to the degree of reasonableness of a statement. We included conspiracy statements to determine whether these more extreme beliefs are uniquely penalized. Overall, including multiple types of incorrect statements allows us to test whether all incorrect beliefs are treated as equal or whether people are sensitive to the acceptability of an espoused belief.

In addition, we manipulated statements’ relevance to diabetes management. We included statements that were either about causes of and treatments for diabetes (central) or about other elements of diabetes or unrelated health information (peripheral). If people are sensitive to the content of a statement, and not just to how wrong it seems to be, then statements that are central to the cause and treatment of diabetes may be more heavily penalized if they seem unreasonable to believe. That is, the very things that should be discussed with a provider may create the biggest penalty for patients. For analysis, we averaged across the 2 types of central statements and across the 2 types of peripheral statements.

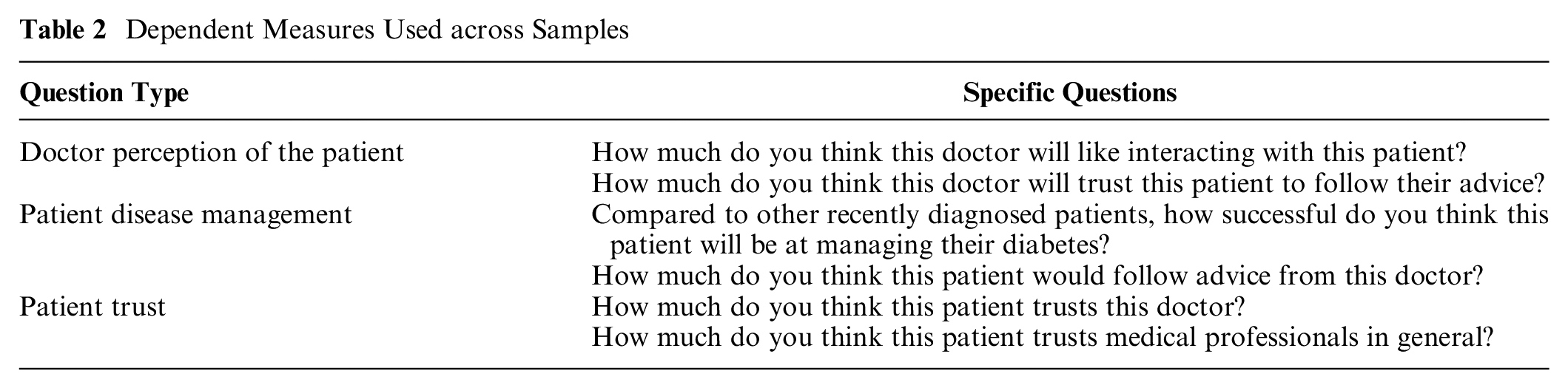

To test participants’ judgments, we measured 3 constructs: how people believed the patient’s doctor would perceive the patient, how people evaluated the patient’s ability to manage their disease, and how people perceived the patient’s trust in doctors. We used 2 questions for each construct (Table 2) and averaged each participant’s responses to the 2 questions to create 1 mean rating per construct, per participant. Our original intention was to analyze these 3 constructs separately. However, we assessed how consistently participants answered our 3 separate measures using McDonald’s ω for our first participant sample. We found high reliability within true (ω = 0.948), reasonably wrong (0.968), unreasonably wrong (0.976), and conspiracy (0.982) statements, suggesting that our 3 measures were answered in similar ways. Given this finding and for simplicity of presentation, we averaged across our 6 questions for each participant to calculate 1 composite impression score for each vignette. In the Supplemental Materials (Appendix B), we present analyses separated by our 3 original constructs.
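For 3 measures, McDonald’s ω can be illustrated in closed form. Under a one-factor model with standardized loadings λ_i and uniquenesses ψ_i = 1 - λ_i², ω = (Σλ_i)² / ((Σλ_i)² + Σψ_i); with exactly 3 indicators the model is just-identified, so each loading follows from the pairwise correlations. The sketch below assumes standardized scores, positive correlations, and loadings no greater than 1. It is not necessarily how SPSS estimates ω, and the function name `omega_three_measures` is hypothetical.

```python
import numpy as np


def omega_three_measures(r) -> float:
    """McDonald's omega (total) for exactly 3 measures under a one-factor model.

    r: 3 x 3 correlation matrix of the construct scores. With 3 indicators,
    r_ij = l_i * l_j, so l_i**2 = r_ij * r_ik / r_jk (just-identified model).
    Assumes all correlations are positive and each implied loading is <= 1.
    """
    r = np.asarray(r, dtype=float)
    r12, r13, r23 = r[0, 1], r[0, 2], r[1, 2]
    loadings = np.sqrt([r12 * r13 / r23,   # measure 1
                        r12 * r23 / r13,   # measure 2
                        r13 * r23 / r12])  # measure 3
    uniquenesses = 1.0 - loadings ** 2     # standardized residual variances
    common = loadings.sum() ** 2           # variance attributable to the common factor
    return float(common / (common + uniquenesses.sum()))


# Hypothetical data: rows are participants; columns are the 3 construct scores
# (doctor's perception, disease management, trust) for one reasonableness level.
rng = np.random.default_rng(0)
factor = rng.normal(size=(500, 1))
scores = 0.9 * factor + np.sqrt(0.19) * rng.normal(size=(500, 3))
print(omega_three_measures(np.corrcoef(scores, rowvar=False)))
```

With more than 3 measures the loadings would have to be estimated by factor analysis rather than solved from the correlations directly.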

Table 2. Dependent Measures Used across Samples

To summarize, we averaged across our 6 measures to calculate 2 mean ratings (central and peripheral) for each of the 4 levels of reasonableness (true, reasonably wrong, unreasonably wrong, conspiracy).
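The averaging steps above can be sketched for a single participant as follows. This is a minimal illustration assuming the ratings are stored as a 16 × 6 array (vignettes × questions) with one reasonableness label and one relevance label per vignette; the array layout, label strings, and function name `composite_cells` are our assumptions, not the authors’ code.

```python
import numpy as np

# Labels for the 4 x 2 within-subjects design (our own naming).
LEVELS = ["true", "reasonably_wrong", "unreasonably_wrong", "conspiracy"]
RELEVANCE = ["central", "peripheral"]


def composite_cells(ratings, level, relevance):
    """Collapse one participant's ratings into 8 cell means.

    ratings:   (16, 6) array of 0-100 ratings; rows are vignettes, columns are questions.
    level:     16 reasonableness labels, one per vignette.
    relevance: 16 relevance labels, one per vignette.
    Returns a (4, 2) array indexed [reasonableness level, relevance].
    """
    ratings = np.asarray(ratings, dtype=float)
    level = np.asarray(level)
    relevance = np.asarray(relevance)
    # Composite impression per vignette: the mean of the 6 questions. Because each
    # construct has 2 questions, this equals the mean of the 3 construct means.
    per_vignette = ratings.mean(axis=1)
    cells = np.empty((len(LEVELS), len(RELEVANCE)))
    for i, lv in enumerate(LEVELS):
        for j, rel in enumerate(RELEVANCE):
            # Average the vignettes that fall in this cell (2 per cell here).
            mask = (level == lv) & (relevance == rel)
            cells[i, j] = per_vignette[mask].mean()
    return cells


# Hypothetical usage: vignettes ordered by level, 2 central then 2 peripheral each.
vignette_levels = np.repeat(LEVELS, 4)
vignette_relevance = ["central", "central", "peripheral", "peripheral"] * 4
ratings = np.random.default_rng(0).integers(0, 101, size=(16, 6))
print(composite_cells(ratings, vignette_levels, vignette_relevance))
```

Stacking these 4 × 2 arrays across participants gives the input for the repeated-measures analysis.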

Procedure

Participants provided informed consent. They then read instructions that described the following vignettes as presenting individuals’ first encounter with a new doctor in a new town and that patients often share information such as “how they’re feeling, something they read about, or knowledge they have.” We instructed physicians in our third sample to “imagine an encounter with the average primary care doctor,” so that physicians, like lay participants, also made judgments about a hypothetical physician.

Participants then read 16 vignettes, each with a different statement, in a randomized order. For each vignette, participants rated our 6 questions from 0 (not at all) to 100 (completely). Physicians were randomly assigned to the same materials shown to laypeople (n = 46) or to a version in which the gender (woman or man) and race (Black or White) of each patient were included (n = 161).

After rating all vignettes, participants rated how reasonable and conspiratorial each statement was, allowing us to check that they viewed the reasonableness of the statements as intended. The posttest results replicated the pilot data (Supplemental Materials, Appendix C). Participants then provided demographics (gender, race, age range, country of birth, and education level). In addition, participants in both lay samples reported whether they worked in the medical field. In sample 2, we also asked whether participants had personal diabetes experience (e.g., type 1 or 2 diagnosis, caring for someone with diabetes, or other experience). In sample 3, we asked for participants’ duration of practicing medicine and current specialty. The experiment was administered online using the Gorilla survey software.

Statistical Analysis

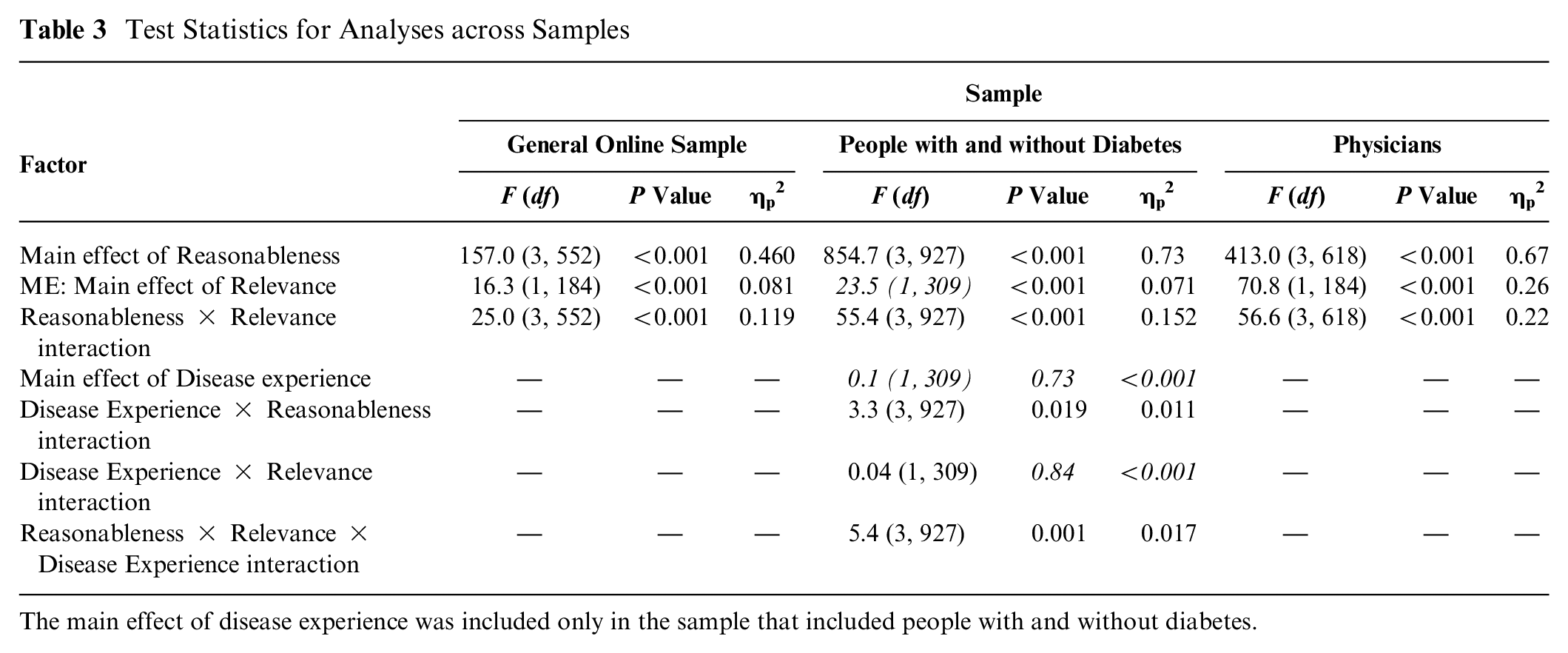

We conducted 4 (reasonableness: true, reasonably wrong, unreasonably wrong, conspiracy) × 2 (relevance: central, peripheral) repeated-measures analyses of variance (ANOVA). We explored significant interactions with follow-up Sidak-corrected t tests. For the sample in which we compared people with and without diabetes, we added diabetes experience (no experience v. self-experience) as a between-subjects factor. The test statistics for all analyses are presented in Table 3.
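To make the 4 × 2 within-subjects analysis concrete, the sketch below computes sphericity-assumed F ratios from a participants × reasonableness × relevance array of composite scores, along with a Sidak adjustment of the kind used for follow-up tests. It is our own from-scratch illustration, not the SPSS procedure the authors ran: it omits p-values, sphericity corrections, and the between-subjects diabetes-experience factor used in sample 2, and the function names are hypothetical.

```python
import numpy as np


def rm_anova_two_way(y):
    """Two-way within-subjects ANOVA with sphericity assumed (no epsilon correction).

    y: array of shape (n_subjects, a_levels, b_levels) holding one composite score
       per participant and cell, e.g. (n, 4 reasonableness levels, 2 relevance levels).
    Returns {effect: (F, df_effect, df_error)} for factor A, factor B, and A x B.
    """
    y = np.asarray(y, dtype=float)
    n, a, b = y.shape
    grand = y.mean()
    subj = y.mean(axis=(1, 2))    # subject means, shape (n,)
    a_m = y.mean(axis=(0, 2))     # factor A marginal means, shape (a,)
    b_m = y.mean(axis=(0, 1))     # factor B marginal means, shape (b,)
    ab_m = y.mean(axis=0)         # cell means, shape (a, b)
    sa = y.mean(axis=2)           # subject x A means, shape (n, a)
    sb = y.mean(axis=1)           # subject x B means, shape (n, b)

    # Effect sums of squares.
    ss_a = n * b * np.sum((a_m - grand) ** 2)
    ss_b = n * a * np.sum((b_m - grand) ** 2)
    ss_ab = n * np.sum((ab_m - a_m[:, None] - b_m[None, :] + grand) ** 2)
    # Error terms: each effect is tested against its own interaction with subjects.
    ss_as = b * np.sum((sa - subj[:, None] - a_m[None, :] + grand) ** 2)
    ss_bs = a * np.sum((sb - subj[:, None] - b_m[None, :] + grand) ** 2)
    resid = (y - sa[:, :, None] - sb[:, None, :] - ab_m[None, :, :]
             + subj[:, None, None] + a_m[None, :, None] + b_m[None, None, :] - grand)
    ss_abs = np.sum(resid ** 2)

    out = {}
    for name, ss, ss_err, df in (("A", ss_a, ss_as, a - 1),
                                 ("B", ss_b, ss_bs, b - 1),
                                 ("AxB", ss_ab, ss_abs, (a - 1) * (b - 1))):
        df_err = df * (n - 1)
        out[name] = (float((ss / df) / (ss_err / df_err)), df, df_err)
    return out


def sidak_adjust(p_values):
    """Sidak-adjusted p-values for a family of m comparisons: 1 - (1 - p)**m."""
    m = len(p_values)
    return [1.0 - (1.0 - p) ** m for p in p_values]


# Hypothetical usage on simulated data: 30 participants, 4 x 2 cells.
cells = np.random.default_rng(0).normal(50, 10, size=(30, 4, 2))
print(rm_anova_two_way(cells))
print(sidak_adjust([0.01, 0.04, 0.20]))
```

For an array of shape `(n, 4, 2)`, the F ratios have (3, 3(n - 1)), (1, n - 1), and (3, 3(n - 1)) degrees of freedom. Which follow-up comparisons make up a Sidak family is a choice this sketch leaves to the caller.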

Table 3. Test Statistics for Analyses across Samples

The main effect of disease experience was estimated only for the sample that included people with and without diabetes.

Data were analyzed with SPSS (version 29). Raw data are available at https://doi.org/10.17605/OSF.IO/RE56Q. 35

Results

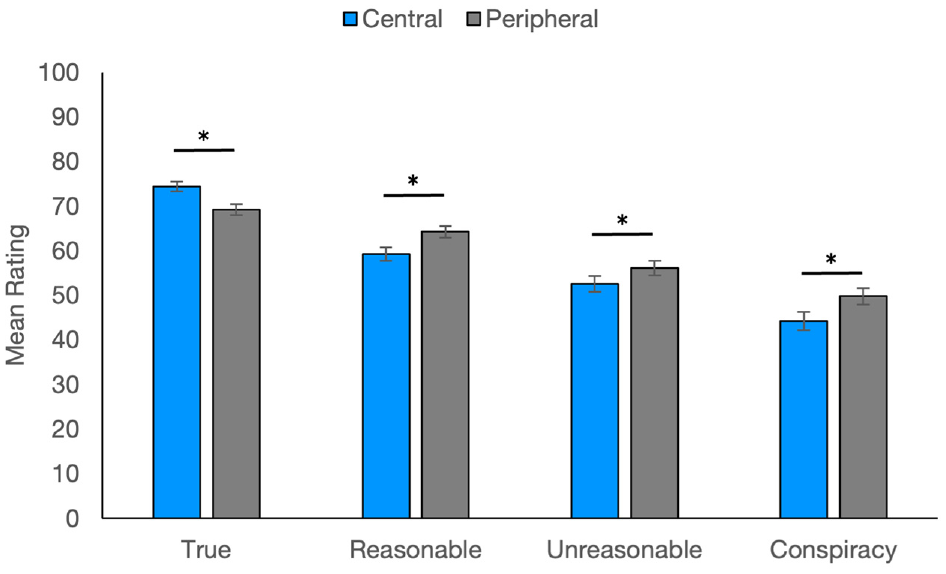

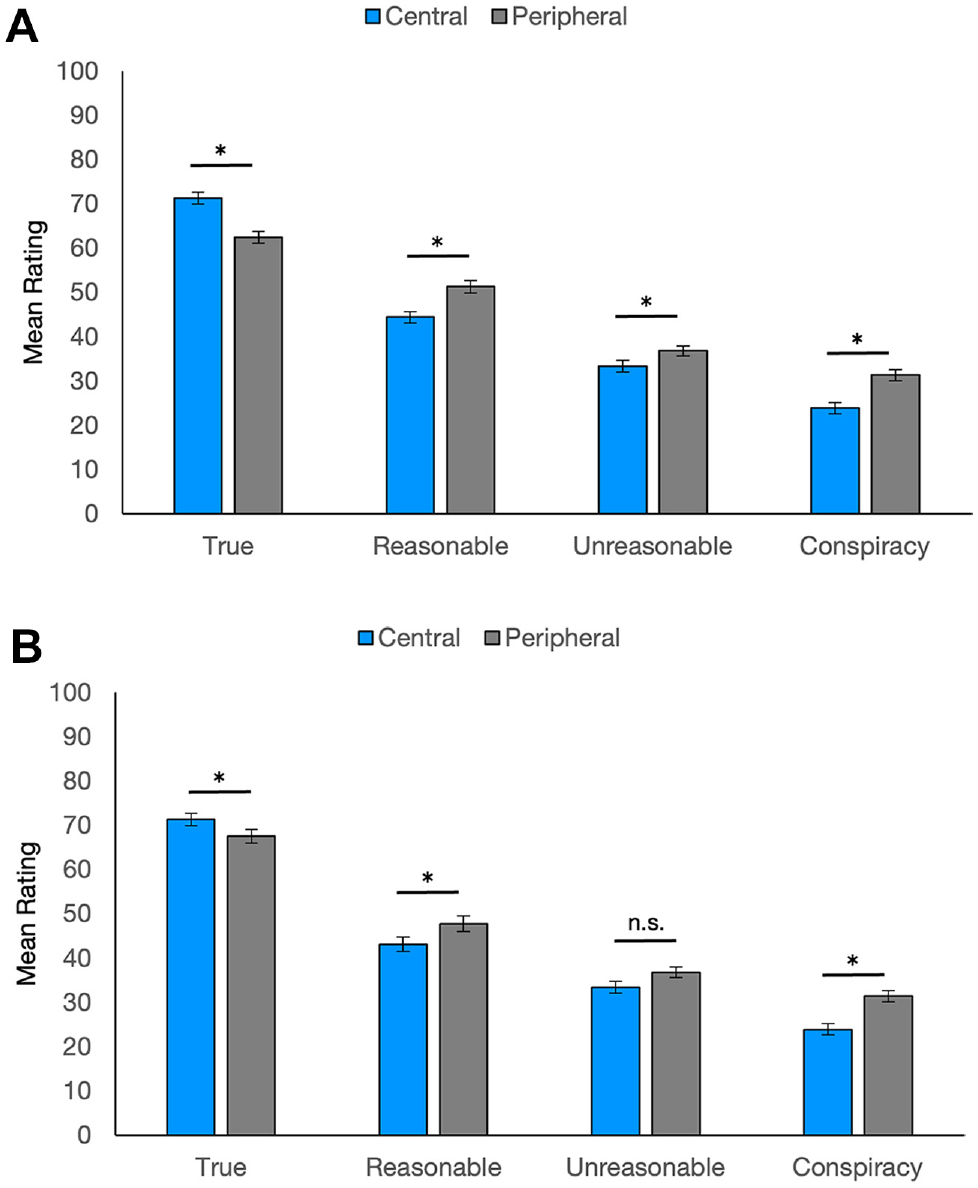

Sample 1: General Online Sample

We first tested perceptions of information sharing in a general sample. We hypothesized that judgments of patients would be more negative the more unreasonable the statement shared. We also hypothesized that patients sharing incorrect information central to their disease would be judged more harshly. Our results support our predictions. We found main effects of reasonableness and relevance as well as a significant interaction (see Table 3). Exploring the interaction, we found that people were sensitive to how “wrong” a belief seemed to be. Composite impression scores decreased from true to reasonably wrong, from reasonably wrong to unreasonably wrong, and again from unreasonably wrong to conspiracy statements for both central and peripheral statements, P values <0.001 (see Figure 1). In short, patients sharing incorrect statements were rated more negatively than patients sharing true statements, and the more unreasonable the incorrect belief, the more patients were penalized. In addition, participants formed more negative impressions of patients sharing incorrect statements central to diabetes care. While patients sharing true central (v. peripheral) statements were rated significantly higher, patients sharing incorrect central statements were rated lower than patients sharing incorrect peripheral statements, P values <0.002.

Figure 1. Ratings for the general online sample.

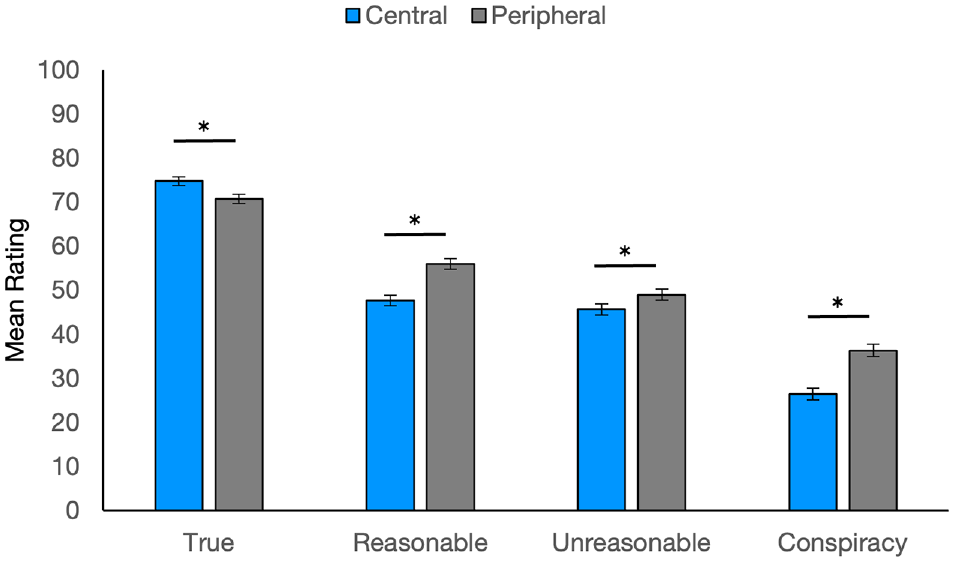

Sample 2: People Living with or without Diabetes

People living with diabetes may have more sympathy for people sharing incorrect information about the disease because they are used to wading through the vast amount of information and misinformation available about diabetes care. To see whether people with lived experience formed different impressions than people without diabetes did, we next tested participants living with and without diabetes. We replicated the overall pattern of results from sample 1 in both groups (see Table 3). Exploring the significant 3-way interaction, ratings in the no-experience group replicated the general online sample: ratings significantly decreased across levels of reasonableness, P values <0.001; patients presenting true central (versus peripheral) statements were rated more positively, P < 0.001; and patients presenting incorrect central (versus peripheral) statements were rated more negatively, P values <0.002. The self-experience group mostly resembled the no-experience group. Ratings decreased across levels of reasonableness, P values <0.001; patients were rated more positively when presenting true central (versus peripheral) statements, P < 0.006; and patients were rated more negatively when presenting central (versus peripheral) reasonably wrong and conspiracy statements, P values <0.002. However, the self-experience group’s ratings did not differ between central and peripheral unreasonably wrong statements, P = 0.75, departing from the findings of the no-experience group (see Figure 2).

Figure 2. Ratings by people with and without diabetes: (A) no-experience group and (B) self-experience group.

Sample 3: Physicians

Finally, we tested physicians’ beliefs. We replicated our previous findings (see Table 3 and Figure 3). The previous pattern of significantly decreasing ratings with decreasing reasonableness held for both peripheral and central statements, P values <0.001. Patients sharing true central (v. peripheral) statements were rated more positively, P < 0.001. Patients sharing incorrect central statements were penalized more than those sharing incorrect peripheral statements, P values <0.019.

Figure 3. Ratings for physician participants.

General Discussion

Across samples, we found perceived penalties for sharing incorrect health information. Participants rated patients more negatively the more unreasonable their shared beliefs, especially if the beliefs were centrally relevant to their disease. Laypeople’s perceptions were matched by physician participants, who also rated our hypothetical patients more negatively for more unreasonable beliefs.

We found similar patterns across our 3 constructs, justifying collapsing them into 1 composite impression measure. However, our 3 constructs could have tapped different elements of a patient impression. Our first construct asked people to rate how physicians would react to patients. Negative impressions here indicate that our participants did think physicians would penalize these patients. Our second construct asked participants to rate how the patients would manage their disease. Negative ratings here indicate that participants did not believe our hypothetical patients were competent patients. In this way, our participants formed the same negative impression of the patients that they assumed doctors would. Finally, our third construct measured patient trust, suggesting that our participants not only judge physicians and themselves as reacting negatively to these patients but also think the patients react negatively to the health care system. Our work builds on prior findings that patients often do not disclose relevant information in part due to fear of judgment,22 by showing that laypeople and physicians do form negative judgments based on the quality of information patients share.

We assert that patients should not be penalized for their incorrect beliefs. Laypeople are not supposed to have a medical school understanding of their health. Instead, laypeople defer to physicians for biomedical knowledge. Reliance on experts for decision making has been described as a division of cognitive labor. Effectively, the modern world is too complex for any one person to possess all the knowledge they need, so people outsource decisions to experts who have the appropriate knowledge.36–38 Medicine has developed a highly differentiated division of cognitive labor, with experts specializing down to the level of organ systems (e.g., cardiologists versus gastroenterologists) or in different issues within the same organ system (e.g., neurologists versus psychiatrists). The division of cognitive labor allows laypeople to hold incomplete or inaccurate knowledge because they can outsource their knowledge to experts in the form of health care professionals. Our results suggest that when seeking out the expertise of physicians, people should be careful about what they share so as not to create a negative impression of themselves. This suggests obvious barriers to using the division of cognitive labor that is so essential to modern life. Future research can explore whether people believe such a penalty exists in other domains where people outsource their decisions (e.g., car repair, home contracting).

Our experiment’s limitations suggest future research. We used sparse patient descriptions, which may have directed attention to the shared belief. Also, we tested information sharing by new patients who did not have a relationship built with the provider. In a richer interaction, such as real patient-provider dyads, shared information may be in competition with other information to sway perceptions of a patient and may work differently for established versus new patients. Future work is needed to see how perceived penalties balance with other characteristics of a patient to influence actual clinical interactions.

In addition, we did not test participants’ diabetes knowledge or whether they knew specific statements were actually incorrect. Based on our findings, we would expect that if participants believed a specific belief was true (e.g., CBD oil regulates blood sugar), then they would perceive no penalty for sharing that belief. Our pilot work and posttest ratings established that our lay participants agreed with our distinction between reasonably and unreasonably wrong beliefs. However, future research could test participants’ knowledge of diabetes to more finely tailor correct and incorrect beliefs to each participant. Similarly, future work could explore how certainty in a belief may influence information sharing. Believing a statement is definitely versus only probably true may influence perceptions of sharing that information in a health care setting. We would predict that patients may share only beliefs they feel extremely confident are true and reasonable to believe. This is worrisome because beliefs patients are uncertain about (e.g., whether a medical issue is a complication from a new medication) may be crucial information to share.

Our work also focuses on perceptions of patients rather than on patients’ actual behaviors or physicians’ patient-directed behaviors. We think it is critical to understand how people think they will be perceived for sharing information because these perceptions may be the limiting factor for what is actually shared. That is, the reality of how a physician would react may not matter if patients are not willing to share the information and find out. In this way, patients’ beliefs about how they will be perceived serve as a gateway to the shared decision-making process, one that needs further research. Once we better understand how patients view sharing information, we can explore what actually happens to the patient-provider relationship when those beliefs are shared.

Implications for Practice

Our findings have important implications for SDM implementation. Patients may not share information they are unsure about or believe a doctor will disagree with for fear of creating a negative impression. If a patient does share something they believe and the physician points out that the information is incorrect, our results suggest the patient will then believe their physician thinks poorly of them. Our physician findings justify these lay beliefs, in that physicians showed the same overall negative impression of patients who shared incorrect information. These findings bring us to an impasse: how can SDM be successfully implemented if patients are afraid to share information that is needed for education and decision making? We suggest that physicians need education regarding patient barriers to participating in the decision-making process. For example, physicians could receive training on the common health misconceptions circulated in popular media that their patients may believe. If physicians know what patients may be confused about related to their health conditions, they could offer correct information before their patients have to offer their own possibly incorrect beliefs. Further, educating physicians about our results could help providers better understand why their patients may not always share their health beliefs. This could help build trust between providers and their patients. It is an open question how much physicians think their patients share and what they think their patients hold back. Finally, improving physicians’ ability to elicit even the most inaccurate beliefs of their patients would improve the information flow needed for true SDM.

Conclusion

Making patients active participants in their health care decisions may improve outcomes. However, our results suggest roadblocks to sharing information because laypeople believe there is a penalty for incorrect beliefs, and physicians confirm this impression. More work is needed to help physicians understand the overwhelming amount of health information patients are exposed to and how little guidance patients have in how to assess such information. Helping physicians understand this point can emphasize the naturalness of patients believing incorrect information. Likewise, training physicians to be open and welcoming when patients are brave enough to share their beliefs can be a step toward lowering the barriers to more inclusive health care.

Supplemental Material

sj-docx-1-mdm-10.1177_0272989X241262241 – Supplemental material for Perceived Penalties for Sharing Patient Beliefs with Health Care Providers

Supplemental material, sj-docx-1-mdm-10.1177_0272989X241262241 for Perceived Penalties for Sharing Patient Beliefs with Health Care Providers by Jessecae K. Marsh, Onur Asan and Samantha Kleinberg in Medical Decision Making

Footnotes

Authors’ Note

Partial results were presented at the SARMAC Conference (July 2021), Annual Meeting of the Psychonomic Society (November 2021), and International Conference on Advanced Technologies & Treatments for Diabetes (April 2022).

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article. The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: Financial support for this study was provided in part by a grant from the National Science Foundation to JKM (1915210) and SK (1915182). The funding agreement ensured the authors’ independence in designing the study, interpreting the data, writing, and publishing the report.

Notes

References
