Abstract

Objectives

In the context of validating a patient-reported measure specific to diagnostic accuracy in emergency department and urgent care settings, this study investigates patients’ and care partners’ perceptions of their diagnoses as accurate and explores variations in how they reason when assessing accuracy.

Methods

In February 2022, we surveyed a national panel of adults who had an emergency department or urgent care visit in the past month to test a patient-reported measure. As part of the survey validation, we asked respondents to explain in free text why they agreed or disagreed with 2 statements comprising patient-reported diagnostic accuracy: 1) the explanation they received of the health problem was true and 2) the explanation described what to expect of the health problem. These paired free-text responses were analyzed qualitatively using inductively developed themes.

Results

A total of 1,116 patients and care partners provided 982 responses, which were coded into 10 themes and further grouped into 3 reasoning types. Almost one-third (32%) of respondents used only corroborative reasoning in assessing the accuracy of the health problem explanation (alignment of the explanation with test results, patients’ subsequent health trajectory, their medical knowledge, symptoms, or another doctor’s opinion), 26% used only perception-based reasoning (perceptions of the diagnostic process, uncertainty around the explanation received, or the clinical team’s attitudes), and 27% used both types of reasoning. The remaining 15% used general reasoning (general beliefs or nonexplicated logic, which appeared only in assessments of diagnoses as accurate), either alone or combined with perception-based or corroborative reasoning.

Conclusions

Patients and care partners used multifaceted reasoning in their assessment of diagnostic accuracy.

Implications

As health care shifts toward meaningful diagnostic co-production and shared decision making, an in-depth understanding of variations in patient reasoning and mental models can inform clinical practice.

Highlights

An analysis of 982 responses examined how patients and care partners reason about the accuracy of diagnoses they received in emergency or urgent care.

In their reasoning, people drew on their perceptions of the diagnostic process and on whether the diagnosis matched other factual information they had.

We introduce “patient reasoning” in the diagnostic measurement context as an area of further research to inform diagnostic shared decision making and co-production of health.

Diagnostic excellence encompasses an accurate and timely explanation of a health problem that is communicated well and arrived at through an optimal process.1 Assessing diagnostic excellence from the patient perspective is undeniably valuable,2–5 particularly in emergency and urgent care settings. Patient report of diagnostic excellence helps overcome the informational gaps characteristic of emergency and urgent care: one-time visits, no established patient-clinician relationships, and limited time and resources to triage patients and educate them on next steps.

A major goal of emergency medicine, in addition to relieving patient suffering, is to make a safe disposition (i.e., treat time-dependent conditions, hospitalize for further evaluation, or discharge).6,7 Understanding when a patient may have been discharged home with a missed condition that would have benefited from hospitalization or immediate further treatment, or when a patient was discharged with a new condition that requires management but without an understanding of that condition, is valuable for enabling a learning health system. However, systematic patient-reported feedback on diagnostic performance and on patients’ subsequent health trajectory is not routinely used in emergency or urgent care environments.8 Moreover, there is reluctance to implement patient report as an assessment of diagnostic performance, based on the prevalent assumption that patient report is subjective; is biased by factors unrelated to diagnostic quality (e.g., long wait times or general patient satisfaction) that are already captured or perceived as beyond control; and, for performance assessment purposes, requires validation via “objective” sources.9,10 Involving care partners (family members, friends, patient advocates, or others who partner with patients to co-manage their health) in reporting is equally important and valuable.11–13

Little is known about how patients form their assessments of an explanation of a health problem they receive. While an accurate diagnostic code documented in the medical record is helpful, it is the patient’s perception of the explanation they leave emergency or urgent care with that determines their next steps. Whether the patient follows up with a treatment, pursues further diagnostic steps, visits another emergency or urgent care facility, or disengages from seeking care for this and future health problems, each option depends on the patient’s assessment of the explanation they were given, which is mediated by trust.2,14,15 The patient’s perception of an explanation of a health problem is proximal to a co-produced, shared, meaningful diagnosis.16–18

There is a gap in the understanding of patients’ perceptions of the diagnostic care they receive, especially of the accuracy of the explanations of health problems they are given. As part of the cognitive validation of a new patient-reported measure of diagnostic excellence and its 2 patient-reported diagnostic accuracy items, we conducted this study to address this gap. Specifically, we aimed to 1) qualitatively analyze patients’ and care partners’ perceptions of the accuracy of explanations of health problems they received in emergency and urgent care settings and 2) explore variations in patient reasoning in assessing those explanations as accurate or inaccurate.

Methods

This study was conducted as part of the development and validation of the Patient-Report to Improve Diagnostic Excellence in Emergency Department (PRIME-ED) questionnaire. From February 4 to 15, 2022, PRIME-ED was administered via a Web-based platform to a nationally representative panel of adults (AmeriSpeak). Patients, or care partners of patients, who had an emergency department or urgent care visit within the past 30 d were eligible to respond. The questionnaire additionally asked respondents for 2 free-text responses (in English or Spanish) explaining why they chose their level of agreement (Likert scale 1–5, from strongly disagree to strongly agree).

Using our definition of diagnostic accuracy from patients’ and care partners’ perspectives, we paired the free-text responses to the 2 statements. We qualitatively analyzed these paired responses for the cognitive validity of the 2 PRIME-ED items and inductively created themes for further analysis of cognitive reasoning types.19 The first reviewer (V.D.) examined all the responses and developed the initial code book. Both reviewers (V.D. and N.G.) then applied the code book to a subset of responses and discussed modifications and reorganizations until it was finalized. After independently coding all the responses, the reviewers resolved their coding conflicts, and an arbitrator (K.T.G.) made final decisions on remaining discrepancies. Each response was coded with up to 3 themes. Because 46% of responses had 2 identified themes but only 6% had 3, we did not consider it practical to identify more than 3 themes.

The participants responded to items on key demographic and visit information. They also indicated whether 1) their visit in the past 30 d was to an emergency department or an urgent care facility; 2) their primary health concern was a new health problem, an accident/injury, or an existing/worsening health concern; and 3) the primary health concern they visited for was nonurgent, urgent, needed care within hours, or was potentially life threatening.

Given our large sample size, and following established methods, we quantitatively examined differences in the prevalence of themes in patients’ and care partners’ perceptions of diagnostic accuracy by their level of agreement with diagnostic accuracy and by key visit information.20–23 Statistical analyses were performed using Stata 17. We used chi-squared tests to assess differences in theme prevalence across levels of agreement. For each level of agreement, we dichotomized responses and compared that level with all other levels combined (e.g., those who strongly agreed v. those who did not strongly agree). We also examined differences by visit type and other visit characteristics (reported in the Appendix).
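The dichotomized comparison described above can be illustrated with a 2 × 2 table: one agreement level versus all other levels, by whether a given theme was mentioned. The Python sketch below is not the authors’ Stata code. It assumes, hypothetically, that each coded response is stored as a pair of (agreement level, set of theme labels), and the helper names `dichotomized_table` and `chi_squared_2x2` are illustrative. It computes the Pearson chi-squared statistic without continuity correction and its 1-degree-of-freedom p value using only the standard library.

```python
import math
from typing import Iterable, Set, Tuple

# Hypothetical data layout: (agreement level, set of theme labels) per coded response.
Response = Tuple[str, Set[str]]


def dichotomized_table(
    responses: Iterable[Response], level: str, theme: str
) -> Tuple[int, int, int, int]:
    """Count a 2x2 table: rows = `level` vs. all other levels, columns = theme present vs. absent."""
    a = b = c = d = 0
    for agreement, themes in responses:
        in_group = agreement == level
        has_theme = theme in themes
        if in_group and has_theme:
            a += 1
        elif in_group:
            b += 1
        elif has_theme:
            c += 1
        else:
            d += 1
    return a, b, c, d


def chi_squared_2x2(a: int, b: int, c: int, d: int) -> Tuple[float, float]:
    """Pearson chi-squared test (no continuity correction) for the table [[a, b], [c, d]]."""
    n = a + b + c + d
    row1, row2, col1, col2 = a + b, c + d, a + c, b + d
    if 0 in (row1, row2, col1, col2):
        raise ValueError("every row and column total must be positive")
    # Closed form of the Pearson statistic for a 2x2 table.
    stat = n * (a * d - b * c) ** 2 / (row1 * row2 * col1 * col2)
    # With 1 degree of freedom, P(X >= stat) = erfc(sqrt(stat / 2)).
    p_value = math.erfc(math.sqrt(stat / 2))
    return stat, p_value
```

For instance, with hypothetical counts in which 20 of 100 responses in one group and 40 of 100 in the comparison group mention a theme, `chi_squared_2x2(20, 80, 40, 60)` gives a statistic of about 9.52 and a p value of about 0.002.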

Results

Participant and Responses Characteristics

Our Web-based survey of a national panel attracted 1,116 respondents, of whom 59% were patients and 41% care partners. Among respondents, 65% were female, 63% were White non-Hispanic, 16% were Black non-Hispanic, and 14% were Hispanic. In terms of education, 16% had a graduate degree, 22% a bachelor’s degree, 38% some college or an associate degree, 18% a high school education or equivalent, and 5% less than a high school education. Age groups were similarly sized: 23% of respondents were 65 y and older, 28% were 50 to 64 y, 25% were 35 to 49 y, and 23% were 18 to 34 y of age. About one-fourth (26%) of the panel was from the Midwest, 37% from the South, 22% from the West, and 14% from the Northeast census regions.

Patients and care partners provided 1,005 responses, of which 982 were coded and 23 (2%) were excluded. Excluded responses indicated that the respondent did not have an emergency department or urgent care visit, were unrelated to the inquiry, or could not be definitively determined to be related. While most (88%) respondents provided free-text responses, those who strongly agreed that their diagnosis was accurate were more likely to provide one than those who strongly disagreed (94% v. 76%).

Themes Associated with Diagnostic Accuracy

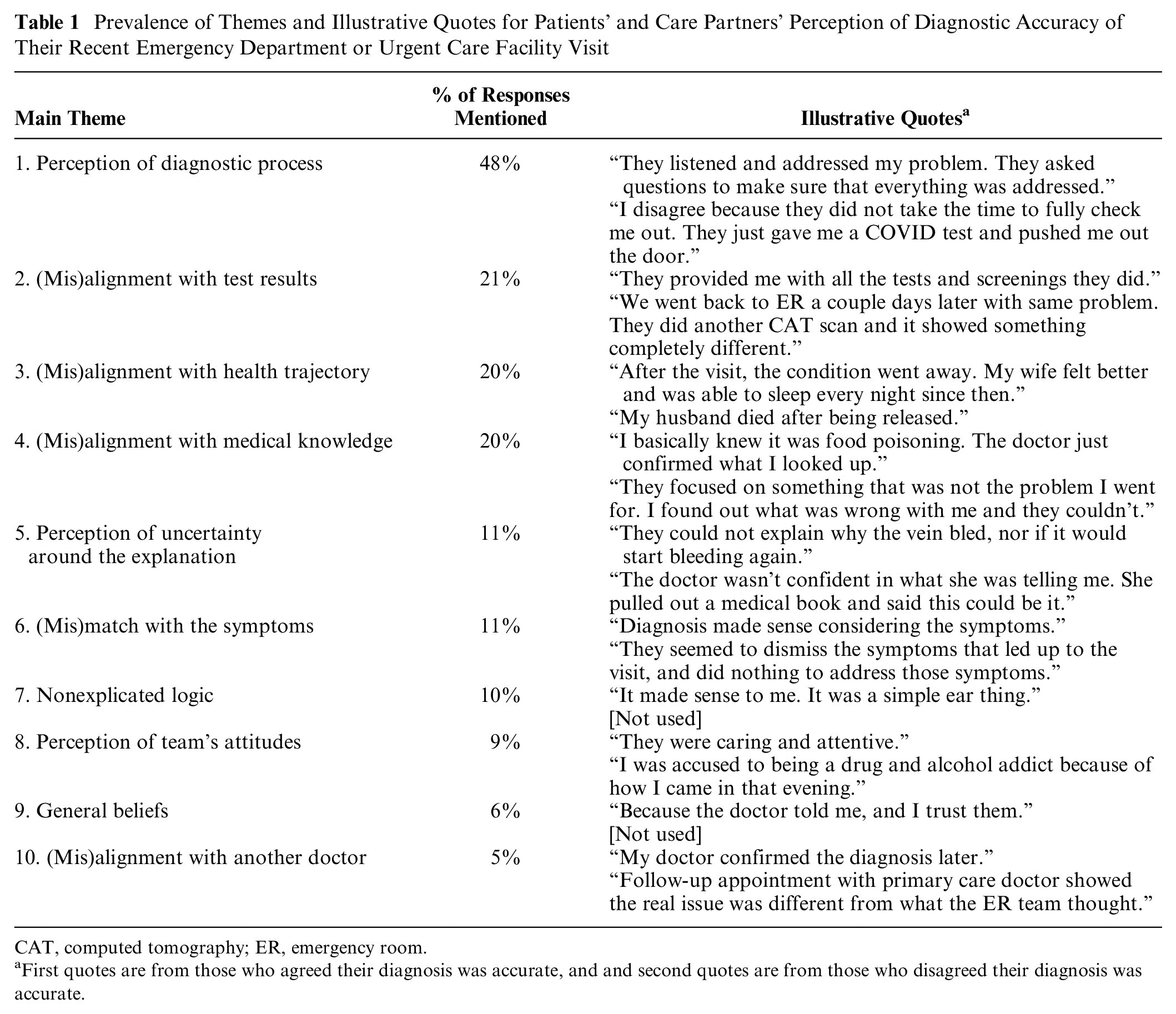

We identified an average of 1.6 themes per paired response, for a total of 1,560 commentary instances. We identified 10 main themes in patients’ and care partners’ responses (Table 1).
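As a rough consistency check, the reported average can be reconstructed from the Methods, where 46% of responses had 2 themes and 6% had 3. Assuming the remaining 48% had exactly 1 theme, the expected number of themes per response is close to the observed ratio of commentary instances to coded responses, with the small gap attributable to rounding:

```latex
\[
\bar{k} \approx 1(0.48) + 2(0.46) + 3(0.06) = 1.58,
\qquad
\frac{1{,}560}{982} \approx 1.59
\]
```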

Prevalence of Themes and Illustrative Quotes for Patients’ and Care Partners’ Perception of Diagnostic Accuracy of Their Recent Emergency Department or Urgent Care Facility Visit

CAT, computed tomography; ER, emergency room.

First quotes are from those who agreed their diagnosis was accurate, and second quotes are from those who disagreed their diagnosis was accurate.

Almost half (48%) of respondents’ assessments of diagnostic accuracy were related to their perception of the diagnostic process. Respondents listed several elements of the diagnostic process that affected their assessment of diagnostic accuracy, including information intake, the conduct of tests and examinations, explanation of the information, and discharge instructions. The presence or absence of these elements affected perceived accuracy. For example, “They thoroughly explained the procedure. All my questions and concerns were answered. I was thoroughly examined and confirmed with what I assumed was non-threatening. I was given instructions on what to do if my condition got worse” (respondent reporting their agreement with diagnostic accuracy) versus “They didn’t even check my vitals or anything. They sent me home without checking anything” (respondent reporting their disagreement with diagnostic accuracy).

Slightly more than one-fifth (21%) of respondents reasoned based on the alignment (or misalignment) of their diagnosis with the test results, for example, “Dr. automatically assumed patient had COVID-19 and was treated as such. Negative results given 4 d later. Had to go to another facility for treatment of bronchitis and pneumonia” (respondent reporting their disagreement with diagnostic accuracy).

One-fifth (20%) of respondents reasoned based on whether their postvisit health trajectory aligned or misaligned with their given diagnosis: “Upon coming home, I felt better. Within a few days, the prescribed medication helped relieve my symptoms as well” (respondent reporting their agreement with diagnostic accuracy). Another 20% of respondents reasoned based on alignment or misalignment of the diagnosis with their medical knowledge, for example, by noting that their health concern was a chronic condition they were familiar with, with or without describing the source of their knowledge: “They focused on something that was not the problem I went for. I found out what was wrong with me, and they couldn’t” (respondent reporting their disagreement with diagnostic accuracy).

A minority (11%) of respondents reasoned based on their perception of uncertainty around the diagnosis when it was provided to them:

The doctors didn’t seem to know exactly what was wrong at the beginning and once they had an idea they couldn’t explain why it happened. So I didn’t know if I could trust what they said with confidence. They didn’t go into an explanation but just discharged my husband and told us to make a follow-up appointment. So other than knowing that he was fine, we didn’t understand why it happened.

Another 11% of respondents reasoned based on alignment or misalignment of the diagnosis with their symptoms, for instance, “Overall because of my situation and what they told me, it basically matched the way I was feeling and what I was going through.” One-tenth of respondents reasoned using logic that was not explicated or detailed, such as, “It was obvious what was needed to be done and what could happen.”

Similarly, 9% of respondents reasoned based on their perception of the clinical team’s attitudes: “Cause they seem not to care all around, and said, and did whatever they needed to just to get me out of there. Cause it just seemed like something they made up, so I would leave.” Only 6% of respondents reasoned based on general beliefs about health care and providers overall: “Because they are professionals, and I think I can trust them,” while 5% reasoned based on alignment or misalignment of the diagnosis with another doctor’s opinion: “Had follow-up surgical procedures that confirmed the diagnosis.”
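Because each response could be coded with up to 3 themes, the theme prevalences above are not mutually exclusive. Writing p_k for the share of respondents whose response included theme k (notation introduced here for illustration), their sum equals the average number of themes per response and matches the reported 1.6 up to rounding of the individual percentages:

```latex
\[
\sum_{k=1}^{10} p_k = 0.48 + 0.21 + 0.20 + 0.20 + 0.11 + 0.11 + 0.10 + 0.09 + 0.06 + 0.05 = 1.61
\approx \frac{1{,}560}{982} \approx 1.59
\]
```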

Three Types of Patient Reasoning: Corroborative, Perception Based, and General

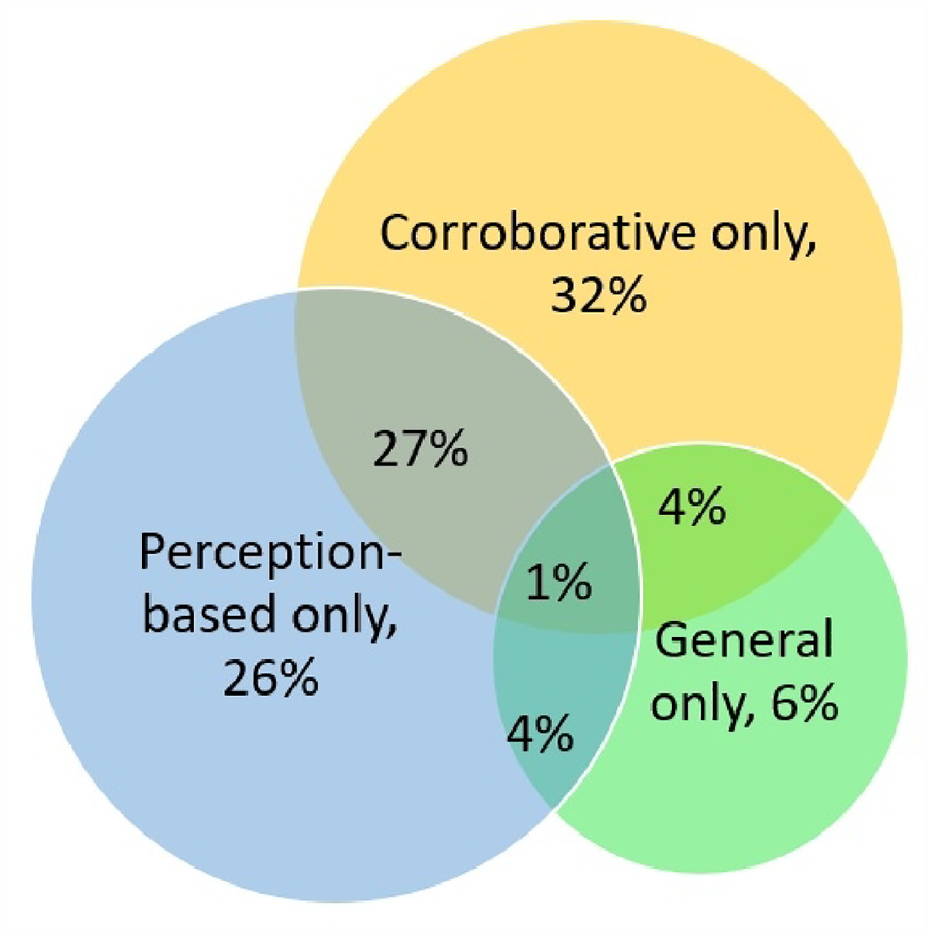

From the 10 main themes, 3 types of patient reasoning emerged in patients’ and care partners’ perceptions of the diagnostic accuracy of their recent emergency department or urgent care facility visit. Corroborative reasoning comprised 5 themes: (mis)alignment of the diagnosis with test results, patients’ subsequent health trajectory, patients’ medical knowledge, symptoms, or another doctor’s opinion. Perception-based reasoning comprised 3 themes: patients’ or care partners’ perception of the diagnostic process, of uncertainty around the diagnosis, or of the clinical team’s attitudes. General reasoning in assessing diagnostic accuracy was based on either nonexplicated logic or general beliefs.
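The grouping of the 10 themes into 3 reasoning types, together with the combination categories reported in Figure 1, can be expressed as a simple mapping. The sketch below assumes hypothetical short theme labels (not the study’s code book wording) and that each coded response is a set of up to 3 such labels. It classifies a response by the reasoning types its themes belong to and tallies the percentage of responses in each combination; it illustrates the grouping logic only and is not the authors’ analysis code.

```python
from collections import Counter
from typing import Dict, Iterable, Set

# Hypothetical short labels for the 10 themes, grouped as described in the text.
CORROBORATIVE = frozenset(
    {"test results", "health trajectory", "medical knowledge", "symptoms", "another doctor"}
)
PERCEPTION_BASED = frozenset({"diagnostic process", "uncertainty", "team attitudes"})
GENERAL = frozenset({"nonexplicated logic", "general beliefs"})
ALL_THEMES = CORROBORATIVE | PERCEPTION_BASED | GENERAL


def reasoning_type(themes: Iterable[str]) -> str:
    """Return the reasoning-type combination, e.g., 'perception-based + corroborative'."""
    theme_set = set(themes)
    unknown = theme_set - ALL_THEMES
    if unknown:
        raise ValueError(f"unrecognized themes: {sorted(unknown)}")
    # Keep a fixed order so each combination always gets the same label.
    used = [
        name
        for name, group in (
            ("general", GENERAL),
            ("perception-based", PERCEPTION_BASED),
            ("corroborative", CORROBORATIVE),
        )
        if theme_set & group
    ]
    if not used:
        raise ValueError("a coded response needs at least 1 theme")
    return " + ".join(used)


def reasoning_distribution(coded: Iterable[Set[str]]) -> Dict[str, float]:
    """Percentage of responses falling into each reasoning-type combination."""
    counts = Counter(reasoning_type(themes) for themes in coded)
    total = sum(counts.values())
    return {combo: 100 * n / total for combo, n in counts.items()}
```

For example, `reasoning_type({"diagnostic process", "symptoms"})` returns `"perception-based + corroborative"`, the category that, in the study data, accounted for 27% of respondents.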

In Figure 1, we illustrate the prevalence of the 3 types of patient reasoning in assessing diagnostic accuracy. Almost one-third (32%) of respondents used corroborative reasoning only, and 26% used perception-based reasoning only. Both perception-based and corroborative reasoning were used by 27% of respondents. The remaining 15% used general reasoning alone (6%) or in combination with perception-based reasoning (4%), corroborative reasoning (4%), or both (1%).

Types of reasoning used by patients and care partners to assess the diagnostic accuracy of their recent emergency department or urgent care facility visit (percentage of respondents).

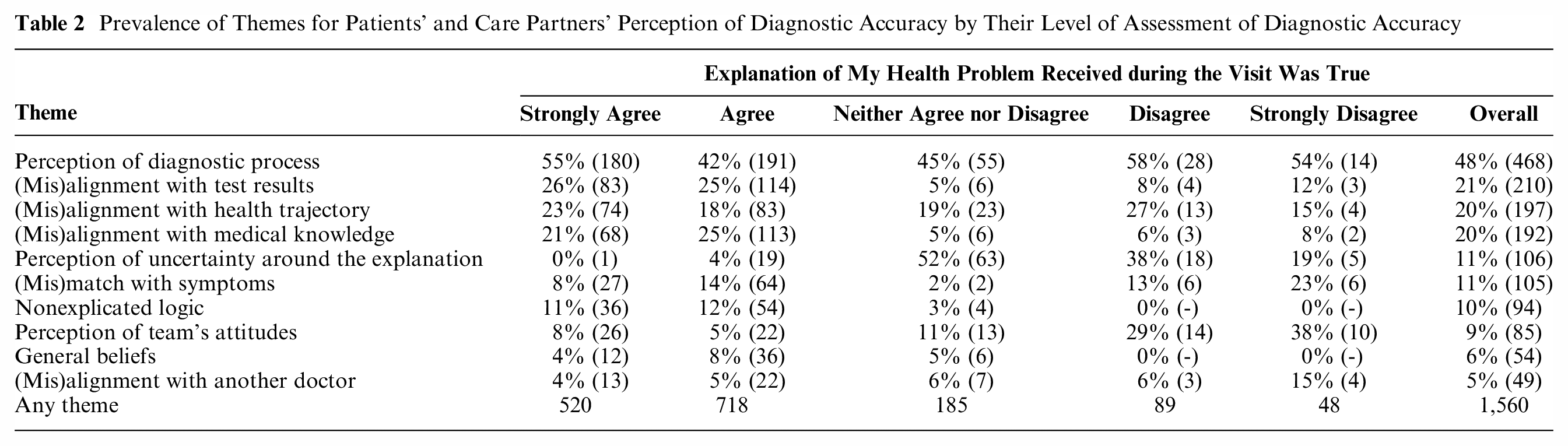

Those Who Assessed Their Diagnoses as Inaccurate Reasoned Differently

The distribution of themes differed among those who strongly disagreed or disagreed that their diagnosis was accurate (Table 2). For this analysis, we stratified by the level of agreement with only 1 statement (“the explanation of my health problem received during the visit was true”). Respondents who strongly disagreed or disagreed that their diagnosis was accurate relied on neither general beliefs nor nonexplicated logic. They more often relied on several themes, including their perception of the clinical team’s attitudes (38% of those who strongly disagreed v. 9% overall).

Prevalence of Themes for Patients’ and Care Partners’ Perception of Diagnostic Accuracy by Their Level of Assessment of Diagnostic Accuracy

Those who neither agreed nor disagreed that their diagnosis was true more often relied on their perception of a lack of explanation or of uncertainty of the diagnosis (52% v. 11% overall).

The distribution of reasoning types in assessing diagnostic accuracy by patients’ or care partners’ level of assessment of diagnostic accuracy of their visit is presented in Table A2 (see the Appendix).

Consistency of Patient Reasoning by Respondent Type and Health Concern

There was no statistically significant difference between patients and care partners in the patterns of themes in their perceptions of diagnostic accuracy. There were minor differences in the main themes by visit type (emergency department v. urgent care), primary health concern, and visit urgency (data are presented in the Appendix).

Discussion

In this nationally representative sample of patients and care partners who visited an emergency department or urgent care facility in the past month, we found that patients and care partners apply similar, complex, and multifaceted reasoning to assess the accuracy of the diagnostic explanation they received. In particular, components of the diagnostic process and whether the diagnosis aligned with other factual information were predominantly used to assess accuracy. Few patients and care partners relied on general beliefs or nonexplicated logic, and none used general reasoning when they assessed their diagnosis as inaccurate. The similarity in patient and care partner reasoning provides face validity for this measure, as care partners often complete questionnaires on behalf of patients.24 The patterns in patient and care partner reasoning that emerged in this measure validation context are relevant to broader research in other patient-centered areas such as patient satisfaction, patient narratives, patient engagement, and patient complaints.

Our analysis confirmed that patients’ and care partners’ assessments of diagnostic accuracy are inextricably connected with the diagnostic process in an emergency or urgent care setting. Based on our findings, the diagnostic process affects patients’ perceptions, rationalization, and potential future health care–seeking behavior. Achieving patient-reported diagnostic excellence might be aligned with involving patients and care partners in diagnostic co-production and a shared process.4,17,25 At the same time, the findings demonstrate that, in emergency department and urgent care visits, perceptions of a lack of explanation or of diagnostic uncertainty correspond to patients and care partners assessing their diagnoses as neither accurate nor inaccurate. This is further evidence that sharing diagnostic uncertainty with patients and care partners is an inherent component of diagnostic co-production, one that is especially sought in emergency care.26,27

To encourage extending our findings to broader contexts such as shared diagnostic decision making, we are coining a name for a diagnostic focal area: patient reasoning.

Patient reasoning is highly applicable to shared decision-making models and valuable as a focal point. By analogy, focusing on clinical reasoning in the shared decision-making context made it possible to consider how clinical cognition is distributed between patients and health professionals, drawing on the perspectives of both parties.34,35 This construct includes situations in which patients fail to develop a shared trajectory for clinical reasoning during an “inherently distributed task.”34 A recent study suggested a way to incorporate patient preferences in clinical decision making.36 Meanwhile, in the medical literature, “patient reasoning” as a term and research field has not attracted significant attention, as illustrated by an article in which the term appears only in a negative sense: physicians’ skepticism about patients, reflected in medical record statements that emphasize “poor patient reasoning” in connection with patients’ decision making and behaviors.37 Thus, we introduce patient reasoning as an important area for dedicated research, so that the patient-reasoning aspects of shared decision making, including during the diagnostic process, can more fully enter models of shared decision making and recognize the sociocultural elements of clinical reasoning.

Part of the challenge in focusing on patient reasoning is the counterargument that what matters most is its coherence with clinical reasoning. There is already evidence of misalignment between patients’ and clinicians’ mental models of patient-reported diagnostic accuracy and diagnostic errors.5,38 This context, together with the high prevalence and serious harms of diagnostic errors, leads us to advocate for valuing patient-reported diagnostic excellence in its own right, without contrasting patient reports with clinical or system-level interpretations, and for avoiding the natural human bias of unfairly shooting the messenger of bad news. At the same time, we should heed the legitimate concern that an unwanted diagnosis may be poorly received by a patient despite its accuracy. Nevertheless, the perspectives of patients, as those who co-produce their health, contain insights on diagnostic excellence that cannot be gathered otherwise.39,40 Patient perspectives on diagnostic excellence may or may not corroborate clinician or system perspectives, and future research is needed to assess their correlations with clinical outcomes and to interpret how to apply such findings to clinical practice.10

Future Directions and Implications

This is the first study to analyze a large data set of patients’ and care partners’ responses about how they reason regarding the explanations of health problems they received during diagnostic care. The thematic analysis and categorization of themes into 3 reasoning types provide a rich foundation for understanding patient reasoning and its process in decision-making contexts in which the value of patient reasoning may be overlooked. More studies are needed to examine patient reasoning in other circumstances and to establish whether the identified types and their prevalence reflect broader patterns of patient reasoning. Other studies could contribute to models and theories of patient reasoning and to comparative work with clinical reasoning, for example, using the diagnostic process framework developed by the National Academies of Sciences, Engineering, and Medicine.41 Future studies might also collect information on preferred patient-physician relationships (such as paternalism, consumerism, or partnership) to understand how these preferences might affect reasoning in assessing diagnostic accuracy. Although we did not identify any case in which a respondent received an unwanted diagnosis during their visit, further work might explore how patients perceive diagnostic accuracy after receiving a diagnosis that is more difficult to accept, such as miscarriage, cancer, or a stigmatized condition.

In addition, demonstrating the unique value of patient-reported diagnostic excellence will require developing new longitudinal measures that capture health care seeking across settings and identifying associations between those measures and clinical outcomes. For clinicians who wish to engage in meaningful diagnostic co-production and shared decision making, more in-depth communication around diagnosis could include asking patients how they assess the accuracy of the given diagnosis or planned next step (e.g., using the survey questions and the follow-up probes) to move toward greater shared understanding.

Limitations

This study has limitations. We received few responses in Spanish (less than 5% of comments) and none in other languages, which prevented us from examining language-related aspects of patient reasoning. Because respondents answered the open-ended questions about why they reasoned as they did right after rating the PRIME-ED statements, their comments might have been biased toward those statements and the domains that informed them. However, our thematic analysis did not follow a prespecified code book aligned with PRIME-ED domains; it was generated inductively from emerging themes. We had limited ability to understand temporal trends in patients’ and care partners’ reasoning about their emergency care visits (see Appendix, Table A1). We did not collect information on specific patient diagnoses; however, we conducted additional analyses by type of primary health concern and its urgency (see data in the Appendix). Lastly, our survey was distributed during a COVID-19 surge, which might have affected the pattern of emergency care visits. However, our analysis of the free-text responses showed that only 7% of respondents explicitly or implicitly indicated that their visit was related to COVID-19.

Conclusions

As co-production between patients (with care partners) and professionals becomes a more common goal in diagnostic care, shared decision making will need to incorporate evidence about how patients reason about elements of the diagnostic process and its outcomes. Our findings demonstrate that patients and their care partners provided multifaceted reasoning for their assessments of diagnostic accuracy. These assessments were related to perception-based aspects of the diagnostic process, management of uncertainty, and the attitudes of the clinical team. Patients and their care partners also relied on corroborative reasoning about the alignment or misalignment of the diagnosis with their health trajectory, test results, symptoms, medical knowledge, and another doctor’s opinion. Relying solely on general beliefs or nonexplicated logic was uncommon, and general reasoning was not used by those assessing their diagnosis as inaccurate. To support the shift toward diagnostic co-production and shared decision making in diagnosis, our paper sets the stage for exploring patient reasoning and its process, models, and theories. Understanding patients’ and care partners’ reasoning can drive targeted improvements aimed at achieving diagnostic excellence, particularly in the context of shared decision making and co-production of health.

Supplemental Material

sj-docx-1-mdm-10.1177_0272989X231207829 – Supplemental material for Patient Reasoning: Patients’ and Care Partners’ Perceptions of Diagnostic Accuracy in Emergency Care

Supplemental material, sj-docx-1-mdm-10.1177_0272989X231207829 for Patient Reasoning: Patients’ and Care Partners’ Perceptions of Diagnostic Accuracy in Emergency Care by Vadim Dukhanin, Kathryn M. McDonald, Natalia Gonzalez and Kelly T. Gleason in Medical Decision Making

Footnotes

Preliminary findings of this study were presented at the Society to Improve Diagnosis in Medicine (SIDM) 15th Annual Conference, Minneapolis, Minnesota, October 16–18, 2022. The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article. The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: Financial support for this study was provided entirely by a grant from the Gordon and Betty Moore Foundation. The funding agreement ensured the authors’ independence in designing the study, interpreting the data, writing, and publishing the report.

Ethical Approval

The study was approved (IRB00228732) by the Johns Hopkins Medicine Institutional Review Board.

Informed Consent

All survey respondents consented via prospective agreements.

Data Availability

Materials will be made available upon request to Vadim Dukhanin.

References
