Introduction

In shared decision making, patient decision aids are often used to support patients’ understanding of the process of care and the subsequent evidence-based outcomes. 1 Values clarification methods are used in decision aids to help patients evaluate the desirability of attributes or options, with the aim that choice of treatment reflects personal preferences and values. 2 A review by Witteman et al. (2016) showed that value clarification methods such as rating scales or providing the pros and cons are most commonly used to elicit individual preferences. Only 38% of the articles described a value clarification method explicitly or implicitly based on any theory, framework, model, or mechanism. 3

Based on its theoretical axioms, conjoint analysis (CA) may be an effective value clarification method. CA has a long history in marketing, and it has gained widespread use as a tool to elicit patient preferences for health care services.5,6 CA allows patients to think about complex treatment decisions by letting them evaluate scenarios through rating, ranking, or choice tasks, enabling a mathematical model to algorithmically derive the relative value of treatment characteristics and estimate preferences for available treatment options.7,8 CA is, however, mostly known for elicitation of preferences at the population level to support organizational and regulatory decision making. 9 As Kaltoft et al. (2015) argued, the results of these studies have limited clinical relevance to individual patients in decision making. 10 What is important to one patient may not be the same as what is important to others.

For individual patients to benefit from value elicitation exercises, CA needs to generate part-worth utilities at the individual patient level. In practice, there is considerable controversy about which experimental design is “best” for eliciting individual preferences using CA. Adhering to criteria such as level balance and orthogonality might lead to a large number of questions, whereas violating these criteria might compromise the reliability of the estimated preferences. Other issues on which we seek guidance are whether attributes are selected at the individual level, which statistical analyses are used to estimate individual part-worth utilities, and how and in which format the outcomes are presented to patients.
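The trade-off between design criteria and the number of questions can be made concrete with a small sketch. The code below is illustrative only: the function names and the 4-attribute example are assumptions, not taken from any of the reviewed studies. It enumerates a full factorial design, checks level balance and orthogonality in their simplest counting form, and shows how quickly the number of possible profiles grows.

```python
from collections import Counter
from itertools import combinations, product


def full_factorial(levels_per_attribute):
    """Return every possible profile as a tuple of level indices."""
    return list(product(*(range(n) for n in levels_per_attribute)))


def is_level_balanced(design, levels_per_attribute):
    """True if each level of each attribute appears equally often."""
    for a, n in enumerate(levels_per_attribute):
        counts = Counter(profile[a] for profile in design)
        if len(counts) != n or len(set(counts.values())) != 1:
            return False
    return True


def is_orthogonal(design, levels_per_attribute):
    """True if each level pair of any two attributes appears equally often."""
    for a, b in combinations(range(len(levels_per_attribute)), 2):
        counts = Counter((p[a], p[b]) for p in design)
        expected = levels_per_attribute[a] * levels_per_attribute[b]
        if len(counts) != expected or len(set(counts.values())) != 1:
            return False
    return True


# Hypothetical exercise: 4 attributes with 3 levels each.
levels = [3, 3, 3, 3]
design = full_factorial(levels)
print(len(design))                                   # number of profiles
print(is_level_balanced(design, levels), is_orthogonal(design, levels))

# An arbitrary small subset is easy to administer but loses balance.
subset = design[:9]
print(is_level_balanced(subset, levels))

# 8 attributes with 4 levels each: number of profiles in the full factorial.
print(4 ** 8)
```

A full factorial satisfies both criteria by construction but is far too large to present to a single patient, which is why fractional or adaptive designs are used in practice.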

Therefore, the aim of this study is to systematically review the extent to which CA is used to elicit individual preferences for clinical decision support. Second, we aim to learn from previous research by identifying the common practices in the selection of attributes and levels, the design of choice tasks, and the instrument used to clarify values. Finally, we present how the use of CA in clinical decision support was evaluated in these studies.

Methods

Search Strategy and Screening Process

We conducted a systematic literature search in Scopus, PubMed, PsycINFO, and Web of Science in May 2016 to identify studies that have described the use of CA to elicit individual preferences for clinical decision support. No publication date restrictions were imposed. Specific exclusion criteria were 1) use of other types of stated preference methods, and 2) non-English-language literature. Gray literature was searched using online search engines (Google Scholar) and in conference proceedings (Society for Medical Decision Making and International Society for Pharmacoeconomics and Outcomes Research). Studies were included if they contained sufficient information for data extraction on the relevant criteria detailed below. Reference lists of included articles were reviewed for additional relevant studies. Supplementary Appendix 1 contains a full description of the search strategy. Next, 2 authors (MW and JT) independently screened all articles. Primary exclusion was based on title, abstract, and keywords. Subsequently, potentially relevant articles were reviewed in full. Discrepancies were resolved by discussion between MW and JT until consensus was reached.

Extraction of Relevant Data

For each included study, 2 independent researchers (MW and JT) systematically extracted data using a standardized abstraction form. The extracted data included 5 categories of information: 1) general study information, 2) selection of attributes and levels, 3) choice task design, 4) instrument design, and 5) study outcomes and evaluation.

We derived

We reported design decisions regarding the

Next,

We then described the

Lastly, we examined the

Included studies had to present sufficient data on categories 1–3, but not necessarily on categories 4 and 5 (e.g., clinical trial protocols). We derived information about design choices from the main text, the appendix, or the decision tool itself if it was accessible on a Web site. If information about the design characteristics was lacking or insufficiently described, we asked the authors to send a copy of the tool used. Descriptive summaries and graphs were used for all categories except category 5, for which narrative synthesis methods were used. Results are reported in accordance with the PRISMA (Preferred Reporting Items for Systematic Reviews and Meta-Analyses) statement and checklist. 13

Results

Search Results

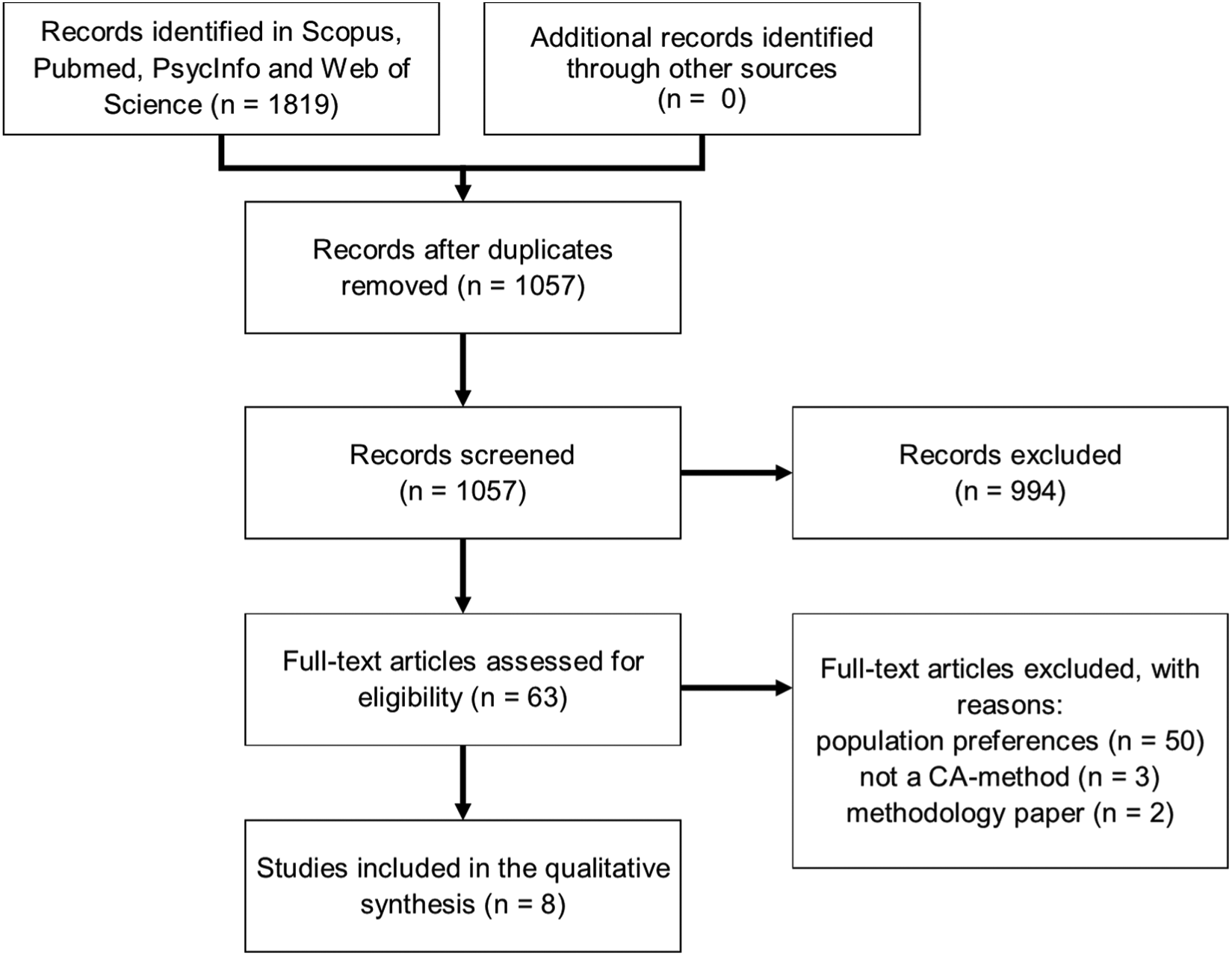

In total, 1057 articles were screened; 994 articles were excluded by title and abstract review. The remaining 63 articles were subjected to full-text review. This led to exclusion of an additional 55 articles, mainly because the focus of the article was elicitation of preferences at the population level. In total, 8 articles described the use of CA to support preference elicitation in clinical decisions in individual patients (Figure 1).

Figure 1. Selection of studies.

Characteristics of Included Studies

The included articles discussed the application of CA in curative treatment decisions (e.g., breast cancer and prostate cancer) and treatment decisions for chronic conditions (e.g., osteoporosis). The focus of all studies was to examine the use of CA to support preference elicitation in a clinical setting. One article discussed only the clinical trial protocol and not results. 14 More specifically, one study compared the additional value of an interactive CA exercise with a printed booklet and a video booklet in reducing decision conflict, 15 and Pieterse et al. (2010) 16 examined whether the mode of administration (Web-based or local computer) influenced importance scores. We concluded that the studies of Fraenkel (2010) 17 and Rochon et al. (2012) 18 described the development of the same value clarification exercise, and they are therefore discussed as one study. Supplementary Appendix 2 shows the complete evidence matrix for all 5 categories of extracted data.

Selection of Attributes and Levels

In our review, we found a range of practices to identify attributes, including literature review,16,19 consulting clinicians, 15 and more in-depth qualitative research techniques such as interviews and/or focus groups with patients and/or clinicians.14,20,21 In all studies, the final selection of attributes was made by the researchers or through a facilitated discussion with the project team. Only one study specifically stated that patients had to rank and rate the attributes, from which the top attributes were determined. 21 None of the studies allowed individual patients to add (or remove) attributes prior to the actual start of the exercise.

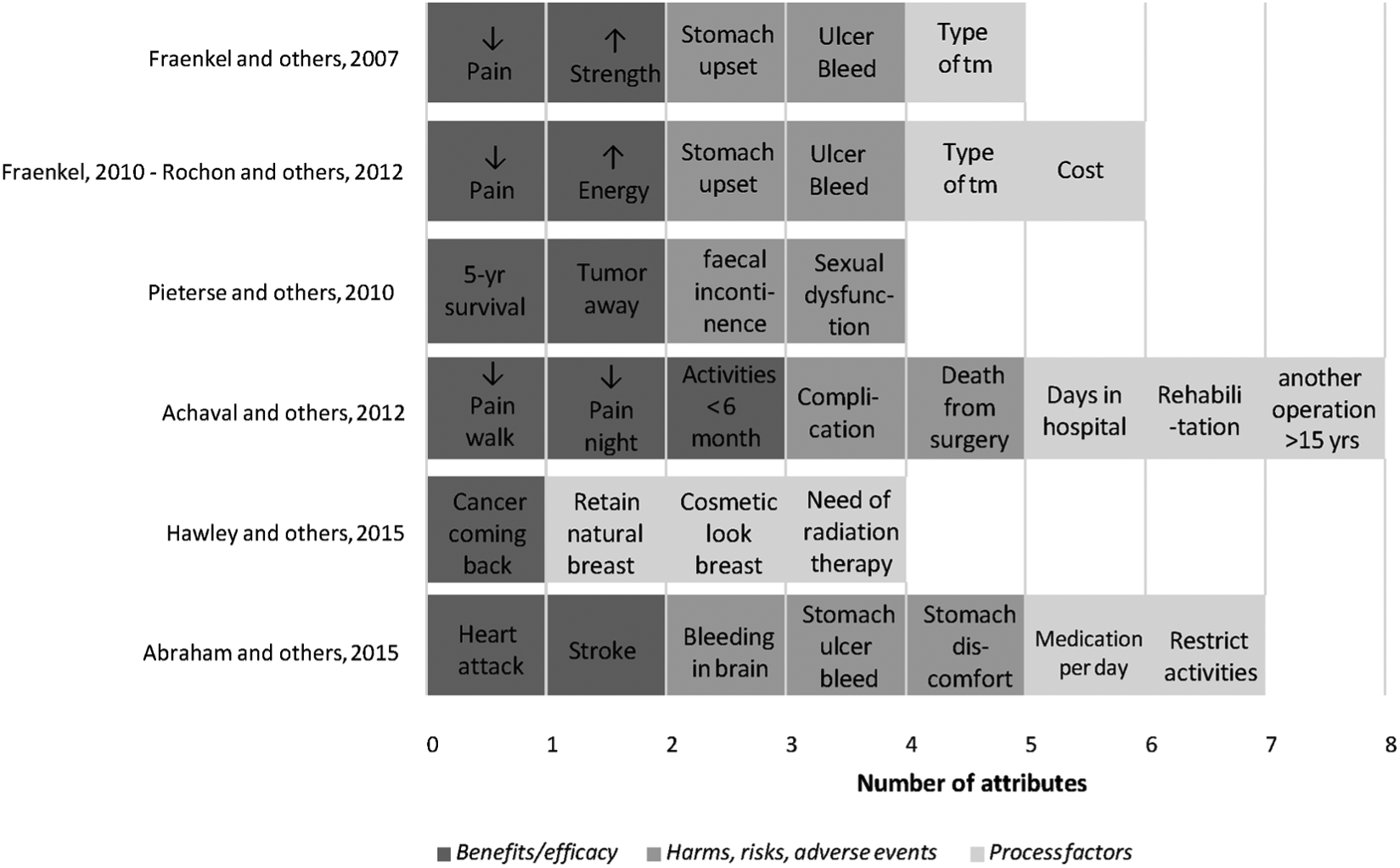

Each study included attributes concerning health outcomes such as benefits, harms, risks, or adverse events (Figure 2). Except for 1, all studies included a measure of process of care, for example treatment modality, 19 costs, 17 or days in hospital. 15 The studies selected between 4 and 8 attributes for inclusion in the CA exercise. Attribute levels reflect the range of actual variation within attributes. Among all studies, 7 out of 34 attributes were 2-level attributes, 18 were 3-level attributes, and 9 were 4-level attributes. Three studies had equal levels for all attributes,15,18,21 whereas 2 studies had a combination of 2- and 3-level attributes,16,20 and for 2 studies the number of levels for all attributes was unknown.

Figure 2. Type of attributes selected in the studies.

Three articles explicitly reported that pilot tests were conducted to test the feasibility of the exercise. Patient understanding was enhanced by using frequencies (graphs) to describe risks (e.g., 1 in 10 or 1 in 1000)15,16,18,20 or visual pictographs of attributes or icon arrays.19,20

Choice Task Design

All included studies used adaptive conjoint analysis (ACA). ACA is an interactive computerized way of using CA that enables patients to construct their preferences through self-explicated rating and ranking tasks followed by pairwise comparisons. ACA asks several sets of questions. In the first series of questions, patients are asked to rank the levels of the attributes that do not have a natural ranking. In the reviewed studies, these were primarily process attributes. In the second set of questions, patients are asked to rate the importance of moving from the most preferred level to the least preferred level of each attribute on a Likert-type scale. Three studies, however, used a modified (simplified) version of ACA that has been developed by Fraenkel (2010). It asks patients to first choose the attribute that is most important to them. Next, they rate the remaining attributes relative to the one they indicated as being the most important one. 17

Both the original ACA and modified ACA are followed by a series of pairwise comparisons in which patients have to use a 9-point Likert-type scale to indicate to which extent one scenario is preferred to the other. ACA is interactive, which means that after each pairwise comparison, the utility estimates are updated through regression analysis, and a new pair of scenarios is selected that are estimated to be of equal utility. The number of paired comparisons among the ACA studies ranged from 12 to 18 and is determined prior to the preference elicitation (when using Sawtooth Software). The formula that is used to determine the minimum number of paired comparisons is 3 × (

ACA allows patients to handle a large number of attributes: the highest number of attributes found in this review was a study with 8 attributes, each having 4 levels. 15 ACA may simplify the task by presenting fewer attributes in each pairwise comparison than traditional choice-based CA. ACA reduces the attributes to only the most important ones for each patient (tailored design). Whether ACA also reduces cognitive burden, however, should be empirically tested in studies comparing ACA to choice-based CA.
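The first two stages of ACA described above can be sketched in a few lines. This is a simplified illustration under stated assumptions, not the Sawtooth Software algorithm: the attributes, levels, importance ratings, and function names are hypothetical, prior part-worths are taken as importance multiplied by a 0 to 1 level score, and the regression update that follows each answer is left out.

```python
from itertools import combinations, product

# Hypothetical attributes; levels are ordered from least to most preferred
# by one patient (the result of the ranking questions).
ranked_levels = {
    "benefit": ["small", "moderate", "large"],
    "risk": ["1 in 10", "1 in 100", "1 in 1000"],
    "modality": ["infusion", "injection", "pill"],
}
# Hypothetical importance ratings from the second set of questions.
importance = {"benefit": 4, "risk": 3, "modality": 1}


def prior_part_worths(ranked_levels, importance):
    """Self-explicated priors: importance times a 0-1 score for each level."""
    worths = {}
    for attr, levels in ranked_levels.items():
        top = len(levels) - 1
        for rank, level in enumerate(levels):
            worths[(attr, level)] = importance[attr] * rank / top
    return worths


def utility(profile, worths):
    """Additive utility of a (partial) profile given current part-worths."""
    return sum(worths[(attr, level)] for attr, level in profile.items())


def next_pair(attrs, ranked_levels, worths):
    """Pick two profiles that differ on every attribute in attrs and whose
    estimated utilities are as close as possible."""
    profiles = [dict(zip(attrs, combo))
                for combo in product(*(ranked_levels[a] for a in attrs))]
    candidates = [(p, q) for p, q in combinations(profiles, 2)
                  if all(p[a] != q[a] for a in attrs)]
    return min(candidates,
               key=lambda pq: abs(utility(pq[0], worths) - utility(pq[1], worths)))


worths = prior_part_worths(ranked_levels, importance)
left, right = next_pair(["benefit", "risk"], ranked_levels, worths)
print(left, utility(left, worths))
print(right, utility(right, worths))
```

Showing only two attributes per comparison mirrors the idea that ACA presents partial rather than full profiles. In a complete implementation, the patient's rating of each pair would feed a regression that revises `worths` before the next pair is chosen.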

Instrument Design

Except for 1 study, 21 all studies used Sawtooth Software to collect and analyze the data. All ACA studies were conducted via a computer, because the pairwise comparisons had to be adapted to the patient’s previous answers. In 4 studies, it was explicitly specified that the tool was used by patients prior to consultation or prior to making their final treatment decision.14,18,19,21 Four studies used computer-assisted personal interviewing (CAPI)-based interviews (computers not connected to the Internet) to elicit patient preferences. These patients had to visit the hospital or the research facility to complete the exercise. In 1 case, patients received prior oral explanation, 20 and in another a brief training session. 15 In a few cases, a research assistant was present during the exercise to answer procedural questions.15,19,20 Three ACA studies were Web-based, and patients received a link to a Web site containing the ACA 21 or a direct link to the exercise.14,16 Patients could choose to complete the exercise at home or in the clinic.14,21 Pieterse et al. (2010) specifically compared CAPI-based and Internet-based ACA exercises, and found that the mode of administration did not influence importance scores. 16

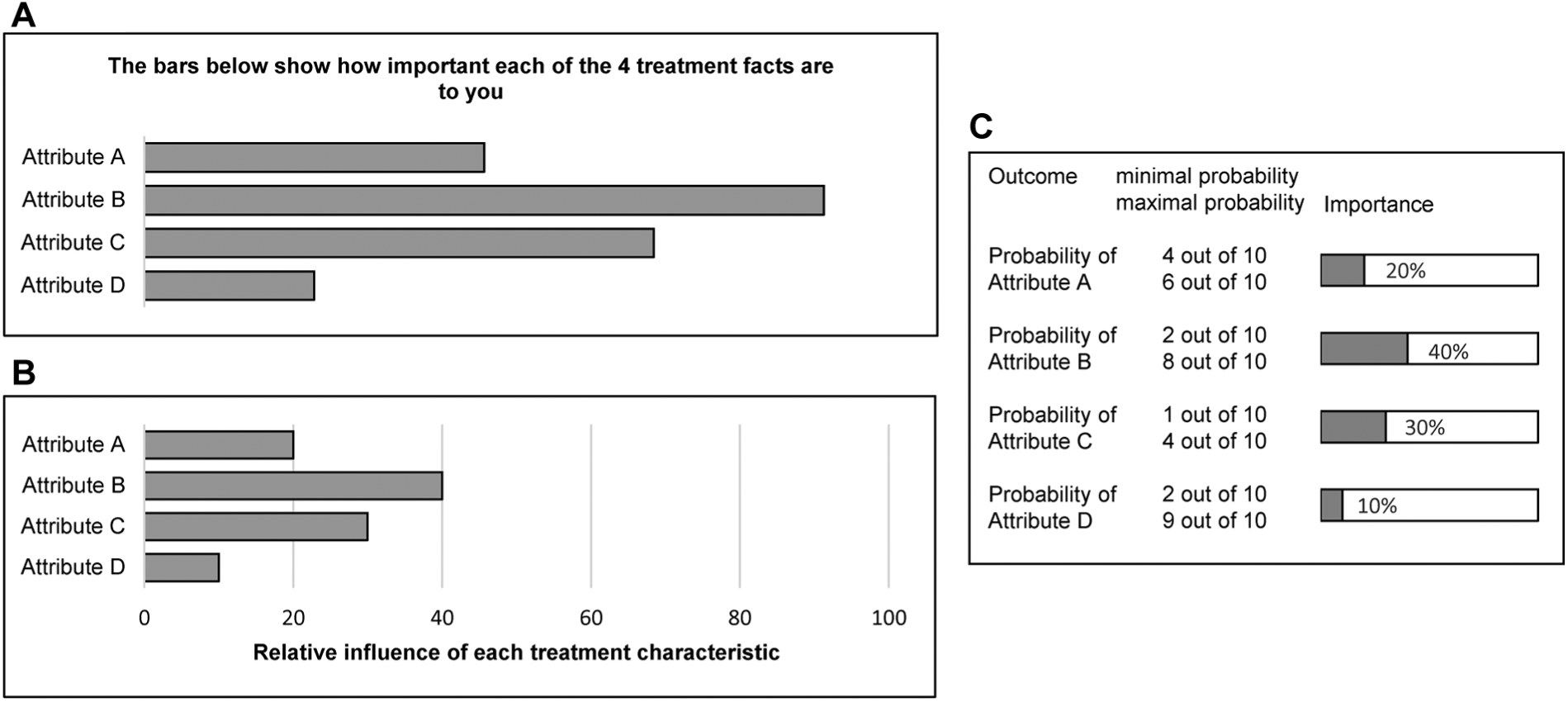

Based on the self-explicated rating and ranking tasks and the pairwise comparisons, a final set of part-worth utilities is estimated using ordinary least-square regression analysis. All studies provided patients with the output in real time, immediately after the exercise was performed. Three slightly different formats for feedback on attribute importance were found (Figure 3: reconstructions based on the information in the articles). Figure 3A and 3B are comparable in their format: a longer bar indicates higher importance of an attribute. The difference is in the description and the title of the

Figure 3. Result presentation to patients: attribute importance.

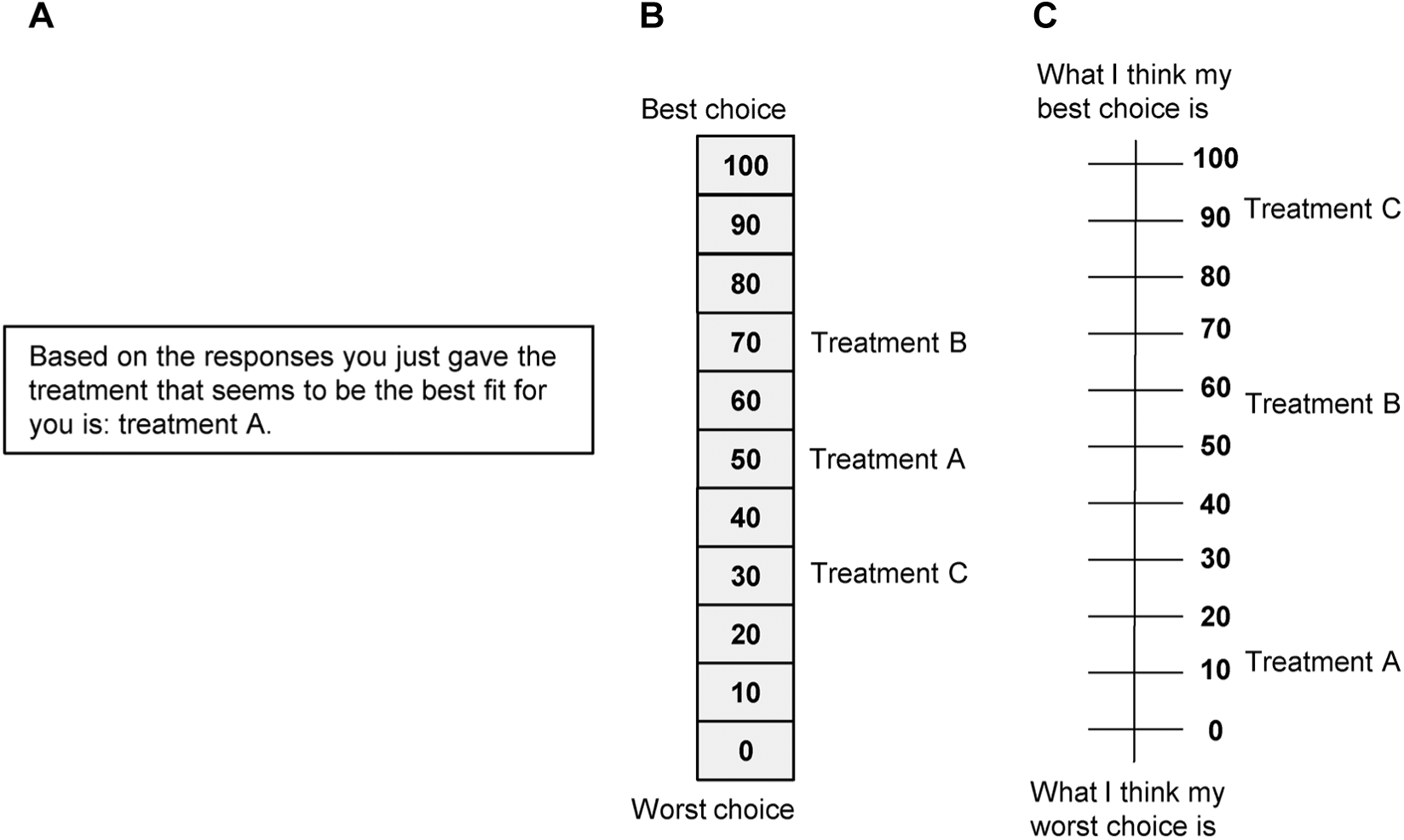

Figure 4. Result presentation to patients: overall preference scores.

In the studies of de Achaval et al. (2012) and Fraenkel et al. (2007), a research assistant explicitly explained the results to patients. In addition, 4 studies explicitly mentioned that patients received a handout or a printed sheet with their results to discuss them with their health care provider.14,15,19,21
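The two kinds of feedback shown to patients (attribute importance and overall preference scores) can both be derived from a set of individual part-worth utilities. The sketch below uses the conventional conjoint calculation, in which importance is the range of part-worths within an attribute expressed as a share of the summed ranges; whether each reviewed study computed its feedback exactly this way is an assumption. All part-worth values, treatment names, and function names are hypothetical.

```python
# Hypothetical part-worth utilities for one patient.
part_worths = {
    "benefit": {"small": -1.0, "moderate": 0.2, "large": 0.8},
    "risk": {"1 in 10": -0.6, "1 in 100": 0.1, "1 in 1000": 0.5},
    "modality": {"infusion": -0.2, "injection": 0.0, "pill": 0.2},
}

# Hypothetical treatment options described by one level per attribute.
options = {
    "Treatment A": {"benefit": "large", "risk": "1 in 10", "modality": "infusion"},
    "Treatment B": {"benefit": "moderate", "risk": "1 in 1000", "modality": "pill"},
}


def attribute_importance(part_worths):
    """Importance (%) = range of an attribute's part-worths / sum of ranges."""
    ranges = {attr: max(lv.values()) - min(lv.values())
              for attr, lv in part_worths.items()}
    total = sum(ranges.values())
    return {attr: 100 * r / total for attr, r in ranges.items()}


def option_score(option, part_worths):
    """Overall preference score: sum of part-worths of the option's levels."""
    return sum(part_worths[attr][level] for attr, level in option.items())


importances = attribute_importance(part_worths)
ranked_options = sorted(options,
                        key=lambda name: option_score(options[name], part_worths),
                        reverse=True)

for attr, pct in importances.items():
    print(f"{attr}: {pct:.1f}%")
print(ranked_options)
```

A bar chart of `importances` corresponds to the attribute importance format, and `ranked_options` corresponds to feedback that ranks treatment options for the patient.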

Evaluation of ACA in Individual Decision Support

In 5 studies, self-administered questionnaires or surveys were used to evaluate the ACA exercises, except for the studies of Rochon et al. (2012) and Abraham et al. (2015), which used qualitative in-depth interviews and/or focus groups. Most patients throughout studies reported that the ACA exercise was easy to do, clear, and interesting.16,19 The study of Pieterse et al. also showed that as educational level increases, the median score on self-reported difficulty of the task (measured on 5-point Likert-type scales) significantly decreases (

With regard to reliability of preferences, Pieterse et al. (2010) asked patients to do a retest after 7–10 days and found that preferences were unstable in 1/3 of the sample. Hawley et al. (2015) found a concordance of 96% between predicted and revealed preferences (treatment actually received or planning to receive). Rochon et al. (2012) found that 66% of patients agreed with their prediction of preferred treatment, and in the study of Fraenkel et al. (2007) 68% thought the feedback “very much” reflected their values. Pieterse et al. (2010) reported that patients were satisfied with the feedback received on attribute importance.
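Concordance figures such as those above are proportions of patients for whom two categorical outcomes agree. A minimal sketch follows, assuming concordance is defined as the share of patients whose predicted preferred treatment equals the treatment received; the data and the function name are hypothetical, and the exact definitions used in the individual studies may differ.

```python
def concordance(predicted, actual):
    """Share of patients whose predicted option matches the actual one."""
    if not predicted or len(predicted) != len(actual):
        raise ValueError("inputs must be non-empty and of equal length")
    matches = sum(p == a for p, a in zip(predicted, actual))
    return matches / len(predicted)


# Hypothetical data for five patients.
predicted = ["surgery", "surgery", "radiation", "surveillance", "surgery"]
actual = ["surgery", "radiation", "radiation", "surveillance", "surgery"]

rate = concordance(predicted, actual)
print(f"{rate:.0%}")
```

The same proportion could be applied to test and retest results, for example by comparing each patient's top-ranked attribute on both occasions, although the stability criterion used by Pieterse et al. is not specified here.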

In general, patients were positive with regard to the usefulness of results. Pieterse et al. (2010) reported that most patients would discuss results with their doctor and that 62% thought the ACA exercise would be helpful in deciding about treatment. 16 Fraenkel et al. (2007) and Abraham et al. (2015) also reported increased patient activation and increased patient engagement with clinicians after the use of the ACA exercise.19,20 In the study of Fraenkel et al. (2007), the ACA group reported having greater self-confidence, self-efficacy, and preparedness for decision making compared to the pamphlet-only control group (all statistically significant). 19 Hawley et al. (2015) found higher scores on several knowledge and decision appraisal statements for the ACA group, but only the score for the statement “I feel like I’ve made an informed choice” was significantly higher compared to the control group. 21 Abraham et al. (2015) studied the effect of the ACA exercise on adherence and found that patients who used the ACA exercise and were prescribed a therapy that was concordant with their preferences had a 15% increase in adherence to their antithrombotic therapy. 20 Lastly, de Achaval et al. (2012) showed that a video booklet decision aid provided the largest reduction in decision conflict compared to a group receiving an education booklet and a group receiving the video booklet + ACA exercise.

Discussion

The aim of this study was to systematically review the extent to which CA is used to elicit individual preferences for clinical decision support. We conclude that there is limited published use of CA exercises in shared decision making. Only 8 ACA studies met our inclusion criteria. Second, we have found that most studies resembled each other in the choices made regarding the design of the choice task and instrument. The principal findings will be discussed in light of future use of CA in clinical decision support.

Selection of Attributes and Levels

Each study included between 4 and 8 attributes, and found a balance between benefits (efficacy) and harms or process characteristics. All studies worked from a fixed set of attributes determined by focus groups, interviews, literature reviews, or expert consultations. None of the included studies allowed individual patients to determine the relevant attributes prior to the start of the ACA exercise (either from a predefined set or by coming up with attributes themselves). Predetermination of attributes and levels might omit characteristics of treatment that are important to individual patients. CA exercises cannot be easily adapted, however, to include additional attributes for each patient at the time of decision making.

Choice Task Design

In this review, all included studies used ACA as their preference elicitation method. Patients received between 12 and 18 paired comparisons, a number that is determined prior to the preference elicitation (based on the total number of attributes and levels). Other CA methods, such as discrete choice experiments and full profile conjoint, were not observed in our review. This may be because commercial software that generates reliable preference data from the responses of a single patient in real time is available only for ACA and maximum difference scaling (best-worst scaling). 22 Furthermore, ACA (and also maximum difference scaling) does not require presentation of all attributes in one choice task, which can be useful for complex medical decisions. Another plausible explanation might be the unstudied effect of using less efficient designs (not fully balanced and orthogonal) on the reliability of individual preference estimations. ACA designs (in hindsight), however, usually have good statistical efficiency, although they are often not strictly orthogonal. 23 Nevertheless, it might be interesting for researchers to have greater flexibility in CA methods to construct decision support tools in health care settings. Fraenkel (2013) already described some pilot-test work with the best-worst scaling method in patients with rheumatoid arthritis. 22 An article describing this work in detail has not yet been published, however (and could not be included in this review).

Instrument Design

All studies were conducted via a computer, of which 3 were also Web-based. Web-based ACA exercises offer flexibility to patients who have Internet access to use the ACA exercise at home, at their own pace, and thus they reduce time demands on clinicians. As study results have shown, however, people who are older or have lower levels of education may need additional support.16,18 All studies provided patients with their preference results in real time, although the type of outcome that was presented to patients differed. Only 3 studies presented patients with a ranking of treatment options or a suggestion of which treatment would be the best fit with their values. Physicians might feel hesitant if the treatment with the highest score is not the best treatment option according to the physician’s experience or the evidence available. 22 Shared decision making has historically shied away from making any type of treatment recommendations.24,25 Providing the patient with results on attribute importance leaves more room for interpretation and discussion during shared decision making; however, it is arguable that helping patients to better understand how each treatment option aligns or does not align with what matters to them is a critical, and too often overlooked, step in supporting evidence-informed, values-congruent decisions. 8 The question remains, however, whether this should be done by a computer or by the doctor.

Evaluation of ACA

Among studies, patients had a positive attitude about the need to actively think about the relevant trade-offs, and they reported ACA exercises to be useful and informative. One of the main barriers we came across, however, was the implementation of ACA exercises within the clinical workflow. For these tools to succeed, it is of paramount importance that thought is given to the appropriate time to elicit preferences, the amount of effort expected from physicians, and most importantly how the results of the CA exercise fit within the patient–physician dialogue.16,20,21 None of the included studies, however, went into depth about any of these issues. Only Pieterse et al. (2010) questioned whether patients’ values should be incorporated in the medical record to increase the likelihood that they are addressed during the clinical encounter. 16

Limitations of This Study

To the best of our knowledge, this is the first review regarding individual preference estimation based on CA methods. Only 8 ACA studies were, however, identified in this review, making generalization difficult. For the quantifiable criteria (e.g., mean duration), no meta-analysis could be performed due to heterogeneity of measurements. In addition, some studies lacked complete information on instrument design, and it was difficult to get an unambiguous picture. Authors were approached for clarification, but few responded and clarified our questions. Except for 1, all studies used Sawtooth Software, and thus our results are mainly generalizable to this software package. Other CA software packages are available (e.g., 1000minds Ltd.); however, to the best of our knowledge, no studies using such software have met our inclusion criteria.

Some studies discussed both the design and evaluation of an ACA exercise, which mostly resulted in less detail about the design phase. For reasons of transparency and to offer the possibility to learn from previous research, we recommend authors include more detailed information about the development phase in an appendix.

Furthermore, there might have been selection bias in our review. The studies of Cheung et al. (2010) and Imaeda et al. (2010) addressed the theme of individual preference estimation, but they were excluded because the experimental designs of these ranking conjoint and maximum difference scaling experiments were intended to elicit population preferences.26,27 Moreover, one study used a discrete choice experiment to improve patient knowledge and adjust high patient expectations of treatment outcomes. 28 The study was excluded because the discrete choice experiment was not analyzed, nor did patients receive results.

Conclusion

There is only limited published use of CA exercises in shared decision making. All included studies used ACA to estimate individual preferences. Studies resembled each other in design choices made, but patients received different feedback among studies. Furthermore, patients had a positive attitude about the need to actively think about the relevant trade-offs, and they reported ACA exercises to be useful and informative. For these tools to truly succeed, however, further research should first focus on a more flexible set of attributes and levels, the feedback patients want to and should receive, and how the results fit within the patient–physician dialogue. Furthermore, it might be interesting for researchers to have greater flexibility in the choice of CA methods for decision support tools, because the optimal approach will vary depending on the needs of the clinical setting and the capabilities of patients.

Supplemental Material

Appendix_1_online_supp – Supplemental material for Individual Value Clarification Methods Based on Conjoint Analysis: A Systematic Review of Common Practice in Task Design, Statistical Analysis, and Presentation of Results by Marieke G. M. Weernink, Janine A. van Til, Holly O. Witteman, Liana Fraenkel and Maarten J. IJzerman in Medical Decision Making

Appendix_2_online_supp – Supplemental material for Individual Value Clarification Methods Based on Conjoint Analysis: A Systematic Review of Common Practice in Task Design, Statistical Analysis, and Presentation of Results by Marieke G. M. Weernink, Janine A. van Til, Holly O. Witteman, Liana Fraenkel and Maarten J. IJzerman in Medical Decision Making

Footnotes

Work was performed at the following institutions: Department of Health Technology and Services Research, University of Twente; Department of Family and Emergency Medicine, Office of Education and Professional Development, Laval University; Population Health and Optimal Health Practices Research Unit, CHU de Québec; and School of Medicine, Yale University. This research received no specific grant from any funding agency in the public, commercial, or not-for-profit sectors. However, Liana Fraenkel is supported by the National Institute of Arthritis and Musculoskeletal and Skin Diseases, part of the National Institutes of Health, under award no. AR060231-01 (Fraenkel). The content is solely the responsibility of the authors and does not necessarily represent the official views of the National Institutes of Health. In addition, Holly Witteman is supported by a Research Scholar Junior 1 career award from the Fonds de recherche du Québec – Santé.

The funding agreement ensured the authors’ independence in designing the study, interpreting the data, writing, and publishing the report.

References
