Abstract

Discrete choice experiments (DCEs) are a stated preference method that uses a survey to systematically quantify individuals’ preferences. The method is used to understand which characteristics (termed attributes) are liked by consumers, how they balance these attributes, and the relative importance of each attribute in their decision to consume. 1 In a DCE, the respondents are asked to choose their preferred option from a series of hypothetical scenarios called choice-sets. DCEs are underpinned by 2 key economic theories: Random Utility Theory (RUT) and Lancaster’s Theory.2,3 The 2 theories combined suggest that DCE respondents choose the option from each choice-set which provides them with the most satisfaction or “utility.” The method has been used to understand people’s preferences in a variety of settings, often when it is challenging to observe consumers making choices in real markets.4,5
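The choice rule implied by these 2 theories can be written compactly. The block below is a minimal sketch in standard notation. The symbols and the linear-in-attributes form of $V_{nj}$ are illustrative assumptions, not a specification taken from any study in this review. The closed-form probability in the last line holds only under the additional, stated assumption about the error distribution.

```latex
\begin{align}
U_{nj} &= V_{nj} + \varepsilon_{nj}, \qquad V_{nj} = \beta^{\top} x_{nj} \\
P_{ni} &= \Pr\bigl(U_{ni} > U_{nj} \ \text{for all } j \neq i\bigr) \\
P_{ni} &= \frac{\exp(V_{ni})}{\sum_{j} \exp(V_{nj})}
  \quad \text{(if the } \varepsilon_{nj} \text{ are i.i.d. type I extreme value)}
\end{align}
```

Here $U_{nj}$ is the utility respondent $n$ derives from alternative $j$ in a choice-set, $x_{nj}$ is the vector of attribute levels describing that alternative (the Lancaster component), and $\varepsilon_{nj}$ is the unobserved random component (the RUT component). The respondent is assumed to choose the alternative with the highest $U_{nj}$.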

In healthcare, decision making may involve careful assessment of the health benefits of an intervention. 6 However, decision makers may wish to go beyond traditional clinical measures and incorporate “non-health” values such as those derived from the process of healthcare delivery. 7 DCEs allow for estimation of an individual’s preferences for both health benefits and non-health benefits and can explain the relative value of the different sources. 8

Systematic reviews of published health-related DCEs have identified that their designs are becoming increasingly complex, with an increase in the number of choice-sets presented and an increase in the number containing attributes that are difficult to present and interpret, such as time or risk.9–11 The increased complexity of DCE designs raises the potential for anomalous or inexplicable choices. 12 Any increases in the cognitive burden of the task could result in poorer quality data and should be considered carefully. 13 A number of studies have explored the implications for quantitative analyses of anomalous or inexplicable choice data, leading to, for example, the exclusion of respondents whose choices fail tests for monotonicity or transitivity or who exhibit sufficiently high levels of attribute nonattendance.14,15

Qualitative research is increasingly advocated in the field of health economics.16,17 The term qualitative research refers to a broad range of philosophies, approaches, and methods used to acquire an in-depth understanding or explanation of people’s perceptions.18–21 A key strength of qualitative research methods is the ability to collate important contextual data alongside quantitative preference data. This potential strength can be realized only if studies are conducted appropriately and reported with sufficient clarity that readers can understand the approach used and the interpretation of the findings.

There is some evidence that stated preference methods, other than DCEs, have benefited from the use of qualitative research methods in order to provide a deeper understanding of their results.22–24 General guidelines advising on best practice for healthcare DCEs state the importance of qualitative research methods in the design of the DCE survey.25,26 Some academics have made specific recommendations for the application of qualitative research methods alongside DCEs, paying particular attention to the identification of attributes and levels.27–29 However, there has been no explicit investigation of how well these recommendations have been translated into practice or the perceived usefulness of the qualitative methods in this context.

This study aimed to identify studies reporting the use of qualitative research methods to inform the design and/or interpretation of healthcare-related DCEs and explore the perceived usefulness of such methods. The objectives were to 1) identify and quantify the proportion of DCEs using qualitative research methods; 2) investigate the stages in the DCE at which qualitative research methods were used; 3) describe the methods and techniques currently used; 4) evaluate the quality of the reporting of qualitative research when possible; and 5) explore the views of authors of published DCEs about the usefulness of qualitative research methods.

Methods

This study used systematic review methods 30 combined with a structured online survey.

Systematic Review

The systematic review focused on identifying all published healthcare DCEs within a defined time period (2001 to June 2012). The focus was on DCEs rather than other stated preference methods such as conjoint analysis, which are grounded in different economic theories and are therefore not directly relevant to this review. 31

Inclusion and Exclusion Criteria

The primary inclusion criteria were that the empirical study was healthcare related and used a discrete choice (also known as choice-based conjoint analysis) experimental design with no adaptive elements. Other literatures, such as environment, transport, or food, were excluded. Examples of conjoint analysis where respondents were required to rate or rank alternatives were also excluded from the review. Non-English articles and reviews, guidelines, or protocols were not included.

Search Strategy

An electronic search of MEDLINE (Ovid, 1966 to date) was conducted in June 2012. The strategy exactly replicated that of a published systematic review of DCEs. 9 The search terms used were “discrete choice experiment(s),” “discrete choice model(l)ing,” “stated preference,” “part-worth utilities,” “functional measurement,” “paired comparisons,” “pairwise choices,” “conjoint analysis,” “conjoint measurement,” “conjoint studies,” and “conjoint choice experiment(s).”

Screening Process

Screening was conducted by an initial reviewer (C.V.) and duplicated by a second reviewer (K.P.). Following the initial screening, if an article could not be rejected with certainty on the basis of its abstract, the full text of the article was obtained for further evaluation. Papers were reviewed a second time to identify any articles relating to the same piece of research, thus limiting the problem of double counting a single study.

Data Extraction and Appraisal

In line with previous systematic reviews, 22 this review defined qualitative research methods as any exploration of people’s thoughts or feelings through the collection of verbal or textual data. The studies were initially sorted into 3 categories: 1) those that reported no qualitative research (none); 2) those that contained basic information by reporting the aims, methods, analysis, or results of the qualitative component (basic); and 3) those that indicated that an extensive qualitative component was conducted by reporting information on the aims, methods, analysis, and results (extensive). Studies in category 3, “extensive,” were deemed to contain sufficient detail for critical appraisal. The categorization of studies was initially conducted by C.V. and repeated by 2 other researchers (Martin Eden and Eleanor Heather).
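The 3-way categorization can be expressed as a simple rule over the 4 reporting elements. The following is a minimal sketch, assuming each study has been hand-coded with 4 yes/no flags; the `QualitativeReporting` class and `categorize` function are hypothetical names introduced for illustration and are not part of the review's actual tooling.

```python
from dataclasses import dataclass


@dataclass
class QualitativeReporting:
    """Hypothetical record of which elements of the qualitative work a study reports."""
    aims: bool = False
    methods: bool = False
    analysis: bool = False
    results: bool = False


def categorize(reporting: QualitativeReporting) -> str:
    """Return 'none', 'basic', or 'extensive' following the definitions in the text."""
    elements = [reporting.aims, reporting.methods, reporting.analysis, reporting.results]
    if all(elements):
        # Aims, methods, analysis, AND results are all reported.
        return "extensive"
    if any(elements):
        # At least one of aims, methods, analysis, OR results is reported.
        return "basic"
    return "none"


# Example: a study that only names its data collection method is rated "basic".
print(categorize(QualitativeReporting(methods=True)))
```

In practice the flags would need to reflect information reported either in the DCE paper itself or in a cited companion publication, because some studies were rated “extensive” on the basis of referenced qualitative work.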

Data were extracted from each study, including the country setting, publishing journal, and year of publication. From studies in the “basic” or “extensive” categories, data were extracted about the purpose of the qualitative component, the qualitative research methods used, the approach taken to analyze or synthesize the qualitative data, and any software used in the process.

One researcher (C.V.) extracted data from the studies that reported basic details about the qualitative component of their study. An iterative process of identifying, testing, and critiquing existing appraisal tools with experienced qualitative researchers (Stephen Campbell and Gavin Daker-White) was used to produce a bespoke checklist (Appendix A, available online) for use when reporting qualitative methods used alongside a stated preference study. A separate paper is in preparation that focuses on the development, validation, and suggested use of this bespoke checklist.

Data Synthesis

Microsoft Excel was used to tabulate the extracted data. The data were then summarized and collated into a narrative report describing the findings.

Survey to Authors

An online survey (Appendix C) was designed to determine authors’ experiences and opinions of the following: 1) using qualitative research methods alongside DCEs and 2) communicating the qualitative work they conducted in a journal article. Additional questions included self-assessment of their and coauthors’ expertise in qualitative research, the number of DCEs they had conducted, and whether they agreed with the key findings of the systematic review. A preliminary version of the survey was devised and piloted with researchers (n = 3) who were experienced with DCEs but whose work was not included in this review (because their DCEs were unpublished or in non-health subjects). All journal articles provided an e-mail address for the corresponding author. Therefore, the most feasible method of contacting authors and eliciting their views was an online survey. Authors were invited to participate via an e-mail (or electronic message) that explained the systematic review and included a brief abstract covering the background, methods, and results of the systematic review. The message also referenced the author’s study included in the systematic review (for authors with multiple articles, this was the one most recently published).

Analysis of the survey responses involved production of descriptive statistics for each of the questions. The authors’ free-text comments were not thematically analyzed because of the limited textual data available (some authors chose not to comment).

Results

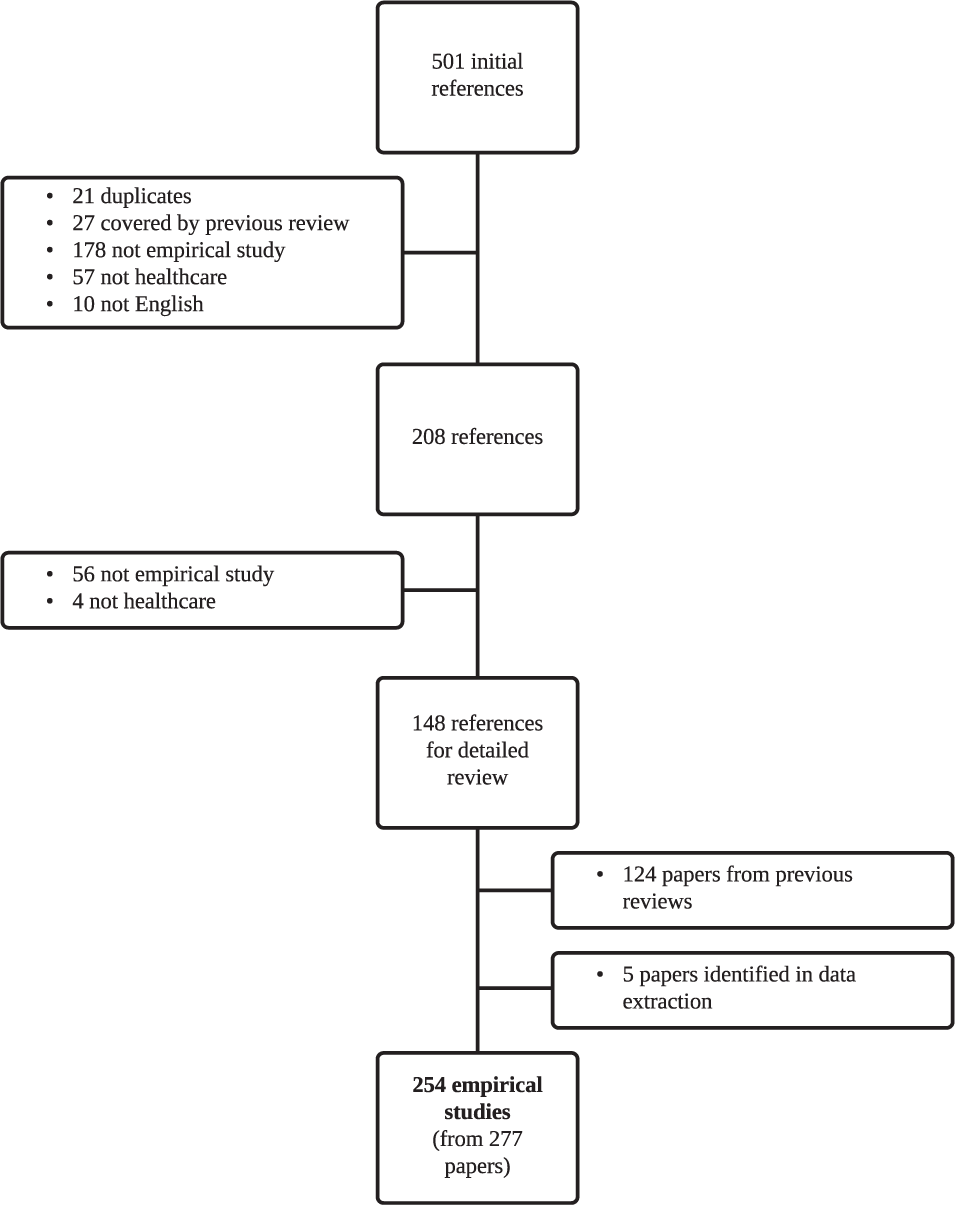

In total, 254 empirical studies published between 2001 and June 2012 were included in the final review (some studies were reported in more than one paper). A list of studies included in the review can be found in Appendix B. One hundred and twenty-nine studies had already been identified by previous systematic reviews.9,32 The updated search identified 501 titles and abstracts published since the previous review (2008 onward). Two hundred and eight full papers were retrieved for further assessment, and 148 papers met the inclusion criteria. Figure 1 shows the stages involved in screening and the reasons for rejection of the excluded papers.

Figure 1. Flow of studies through the systematic review.

Overview of Included DCEs

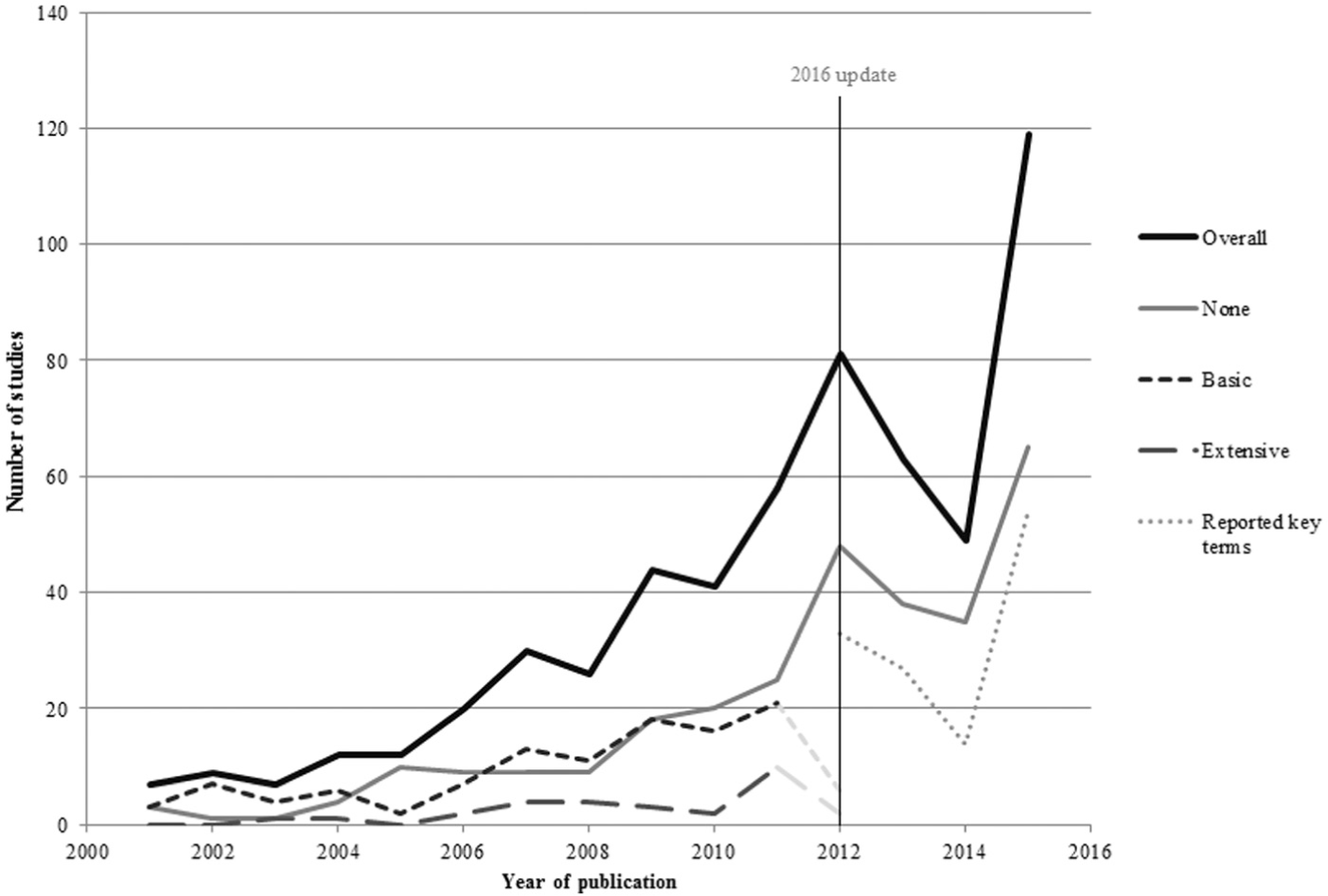

As shown in Figure 2, there was an increase in the number of DCEs published over time, with over half of the studies (n = 154, 56%) published since 2009. Half of the DCEs identified by this review were published in health services research journals (n = 139, 50%) and a third (n = 88) in specialized medical journals. Over half of the DCEs published were conducted in Europe (n = 186, 56%), and a quarter of the DCEs identified were carried out in the UK (n = 84, 25%). Other popular countries included the US (n = 49, 15%), the Netherlands (n = 38, 11%), Australia (n = 26, 8%), and Canada (n = 19, 6%).

Figure 2. Trends in DCE publishing over time. “Overall” includes papers rather than studies. 2012 is incomplete because the search was conducted during that year.

Overall, 111 studies (44%) did not report the use of any qualitative research methods; 114 studies (45%) were rated as “basic,” reporting minimal information on the use of qualitative methods; and 29 studies (11%) reported or explicitly cited extensive use of qualitative methods. A number of studies included in the review that reported no qualitative research went on to discuss the lack of qualitative data as a limitation of their study.33–35
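The reported percentages follow directly from the category counts. As a quick arithmetic check, the sketch below recomputes them from the counts given in the text; the variable names are illustrative only.

```python
# Category counts as reported in the text.
counts = {"none": 111, "basic": 114, "extensive": 29}

total = sum(counts.values())  # all studies included in the review

# Share of each category, rounded to the nearest whole percent.
shares = {category: round(100 * n / total) for category, n in counts.items()}

print(total)   # 254
print(shares)  # {'none': 44, 'basic': 45, 'extensive': 11}
```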

Journals relating to specific disease areas were least likely to contain the qualitative component of the research. In contrast, 70% of the DCE studies reporting the use of qualitative research were published in nonspecific medical journals. There were also noticeable geographic patterns: 90% of the 11 DCE studies conducted in Africa reported the details of the qualitative component of their research.

Basic Qualitative Research Reported

Almost all authors who reported using some qualitative research did so by stating in the methods section of the paper the nature of the qualitative component of their research (n = 113, 99%). Almost all (n = 113, 99%) of the studies that reported basic qualitative research reported using it before the DCE was implemented, in either the design or the piloting phase. Three studies (3%) reported using qualitative research at the end of the DCE to attain additional information on preferences.36–38

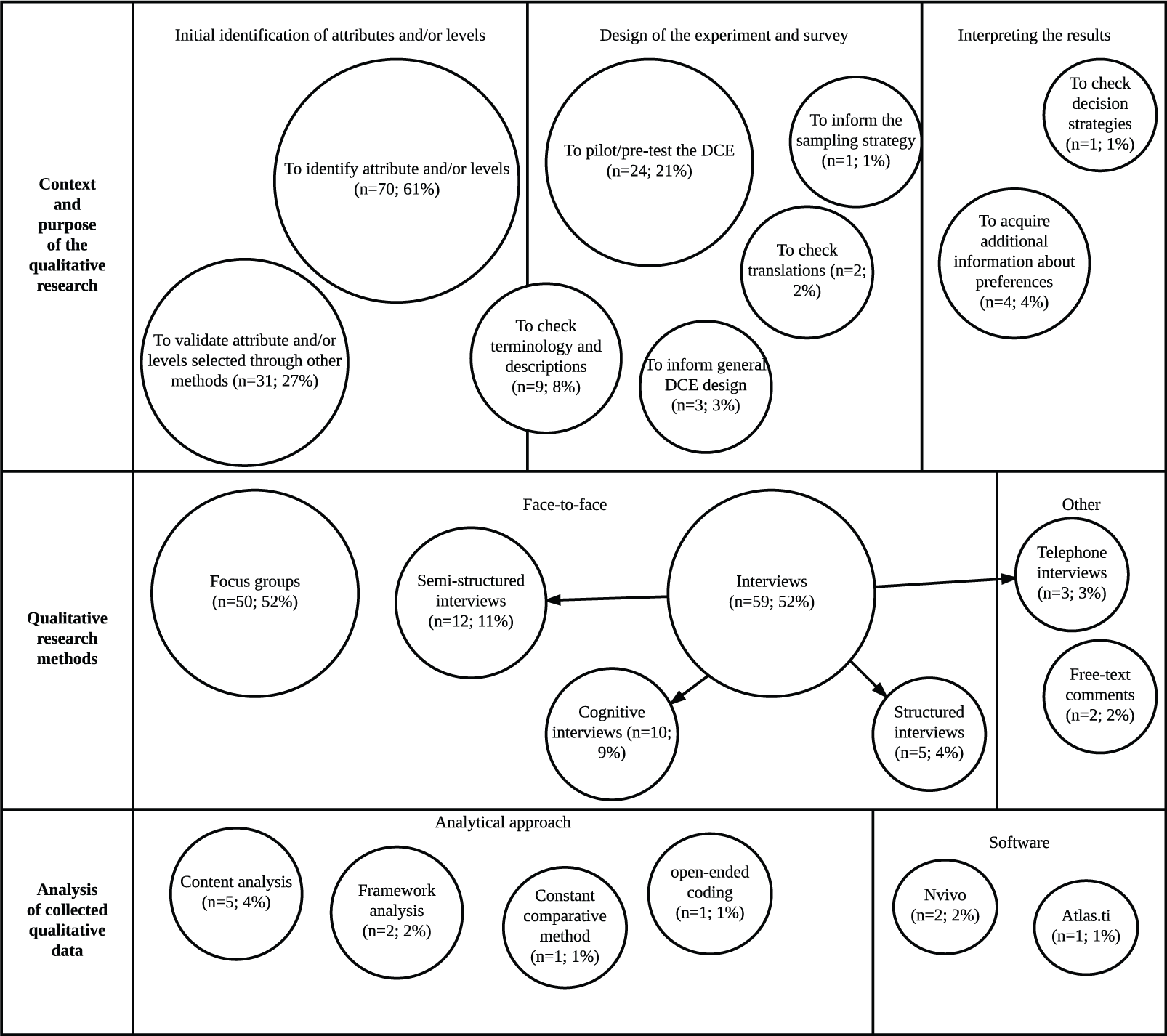

Figure 3 illustrates that a variety of applications of qualitative research methods were identified. In the design of the DCE, researchers were most commonly seeking to identify attributes and/or assign levels (n = 70, 61%) or validate attributes and/or levels identified through other methods (n = 31, 27%). Researchers also used qualitative research methods more specifically to check terminology, vignettes, and descriptions (n = 9, 8%) and to confirm translations (n = 2, 2%).

Figure 3. Summary of methods and context of the studies (n = 114) reporting basic details about the qualitative component.

After the design phase, some studies also reported using qualitative research methods in the piloting of the DCE (n = 24, 21%). In the pilot stage, the methods were specifically used to check for decision strategies and also to determine an appropriate sample for the final DCE. For example, one study 39 used interviews to determine an appropriate age range for the final DCE. Another study 40 used the qualitative data acquired in the piloting stage to estimate preference heterogeneity and thus predict an appropriate model for the choice data, and another study 41 used the qualitative research to predict the signs of the coefficients.

The most popular approach to qualitative data collection was interviews (n = 89, 78%), including structured and semistructured interviews. Ten studies (9%) also used cognitive interviews that included debriefing questions at the end of the task as well as a verbal protocol analytical technique called “think-aloud.” Focus groups were another popular approach to data collection (n = 50, 44%).

The most common populations used in the qualitative component were healthcare professionals (n = 21, 18%), patients (n = 46, 40%), and experts (n = 11, 10%), although some studies (n = 14, 13%) used a mixture of participants. Of the 114 studies, 71 (62%) conducted the qualitative research with the same population as the DCE study and 16 (14%) did not. In 23 studies (21%) it was unclear whether the populations for the DCE and the qualitative component were the same. In 4 studies (4%), the qualitative sample was the same sample of individuals who completed the DCE survey.

Although analysis is a crucial step in drawing reliable and valid results from the qualitative data, only a minority of studies described their analytical approach (n = 15, 7%). Of these 15 studies, 5 studies reported using content analysis36,42–45 and 2 studies (2%) reported using framework analysis.46,47 Other analytical approaches included the use of grounded theory methods such as the constant comparative method 48 and open-ended coding. 49 Three studies detailed the use of specialist qualitative software: 2 studies36,50 (2%) used NVivo, and 1 study 51 used Atlas.ti.

Extensive Qualitative Research Reported

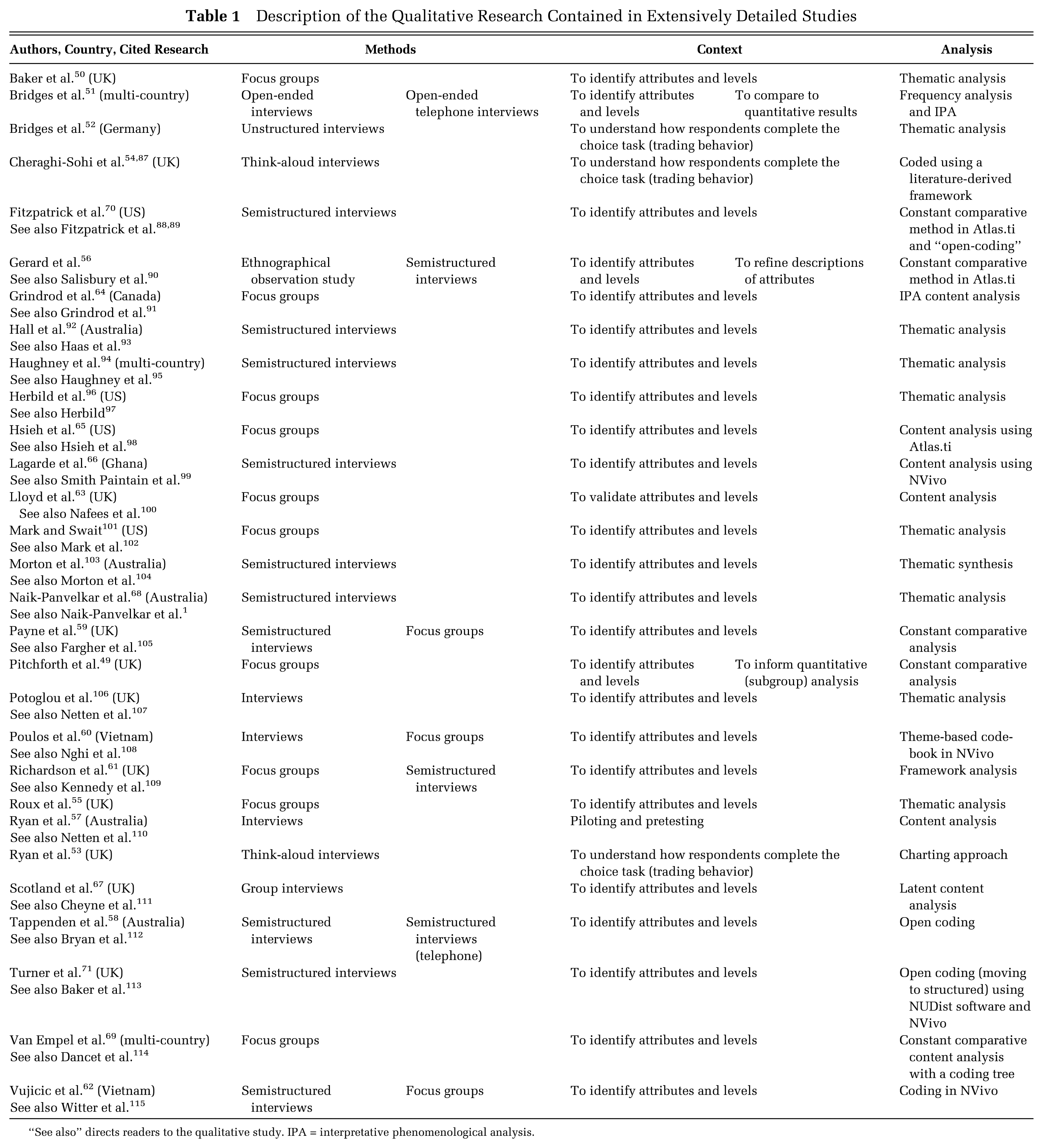

Seven DCE studies extensively described the use of qualitative research within the main text of the paper.52–58 Twenty-two further studies were identified as having conducted extensive qualitative research by checking the references to the qualitative component of the work. The details tended to be reported in other peer-reviewed journals (n = 17) and commissioned reports (n = 5). The citation of the qualitative research (either the main text of the DCE or a previous publication); the application; the methods used; and the analysis conducted are described in Table 1.

Table 1. Description of the Qualitative Research Contained in Extensively Detailed Studies

“See also” directs readers to the qualitative study. IPA = interpretative phenomenological analysis.

Most of the studies (n = 25, 86%) reported the use of qualitative research methods to identify or validate attributes and/or levels for use in the DCE. Three studies55–57 used qualitative research methods to understand more about how respondents completed the choice task presented. Two studies52,55 also used the qualitative research to complement the quantitative analysis. Other studies used qualitative research methods to pilot and refine the survey.59,60

The most common data collection approach was interviews (n = 20, 69%). These interviews were mostly semistructured (n = 12, 41%) and face-to-face, although 2 studies used telephone interviews. 54,61 Of the 3 studies55–57 using qualitative research to understand more about how people completed the DCE task, 2 of these56,57 used a think-aloud interview approach.

A number of studies also used focus groups (n = 13, 45%), and 4 studies used a combination of focus groups and interviews in their qualitative study.62–65 One study 59 used the results of an ethnographic observational study to identify attributes and levels for the DCE and used semistructured interviews to refine the training materials and descriptions.

Most studies simply stated in the paper that they used thematic analysis (n = 10, 34%) or content analysis (n = 5, 17%)60,66–69 to categorize the qualitative data collected. One study also reported the use of a “latent” content approach to discover underlying themes. 70 One study 71 reported using thematic synthesis, a type of thematic analysis that involves a more explicit refinement of themes (possibly from multiple studies) and is an approach in line with reducing the qualitative data to develop a few attributes and levels.

Other analytical approaches included framework analysis (n = 3, 10%)63,64,71 and a related analysis called charting. 56 Seven studies used some constant comparative analysis (n = 5, 17%)52,59,62,72,73 or open-coding (n = 3, 10%)61,73,74 at least in the initial stages. Two studies54,67 used interpretative phenomenological analysis (IPA), which often takes an open-coding approach rather than relying on preexisting themes or frameworks. The type of software used was not always reported, but the most commonly reported packages were NVivo59,63,65,69 (n = 4, 14%) and Atlas.ti59,68,73 (n = 3, 10%).

Survey Results

A total of 114 studies reported at least basic use of qualitative research methods, and all authors of these studies were invited to complete the survey. As some corresponding authors had multiple studies included in the review, 91 individual authors were sampled. After the first e-mail, sent on 1 May 2013, 38 authors completed the survey. Four authors declined to take part (for example, one author had not practiced in the field for a few years and so could not sufficiently recall his or her experiences; another was a statistician who had been involved only with the DCE analysis). The questionnaire closed on 30 June 2013, with a total of 53 complete or partially complete responses, resulting in an overall response rate of 58%.
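The overall response rate can be reproduced from the figures above. This is a small arithmetic sketch using the numbers reported in the text; the variable names are illustrative.

```python
# Figures reported in the text.
authors_sampled = 91      # individual corresponding authors invited
responses_received = 53   # complete or partially complete responses

response_rate = responses_received / authors_sampled

# Format as a whole-number percentage.
print(f"{response_rate:.0%}")  # 58%
```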

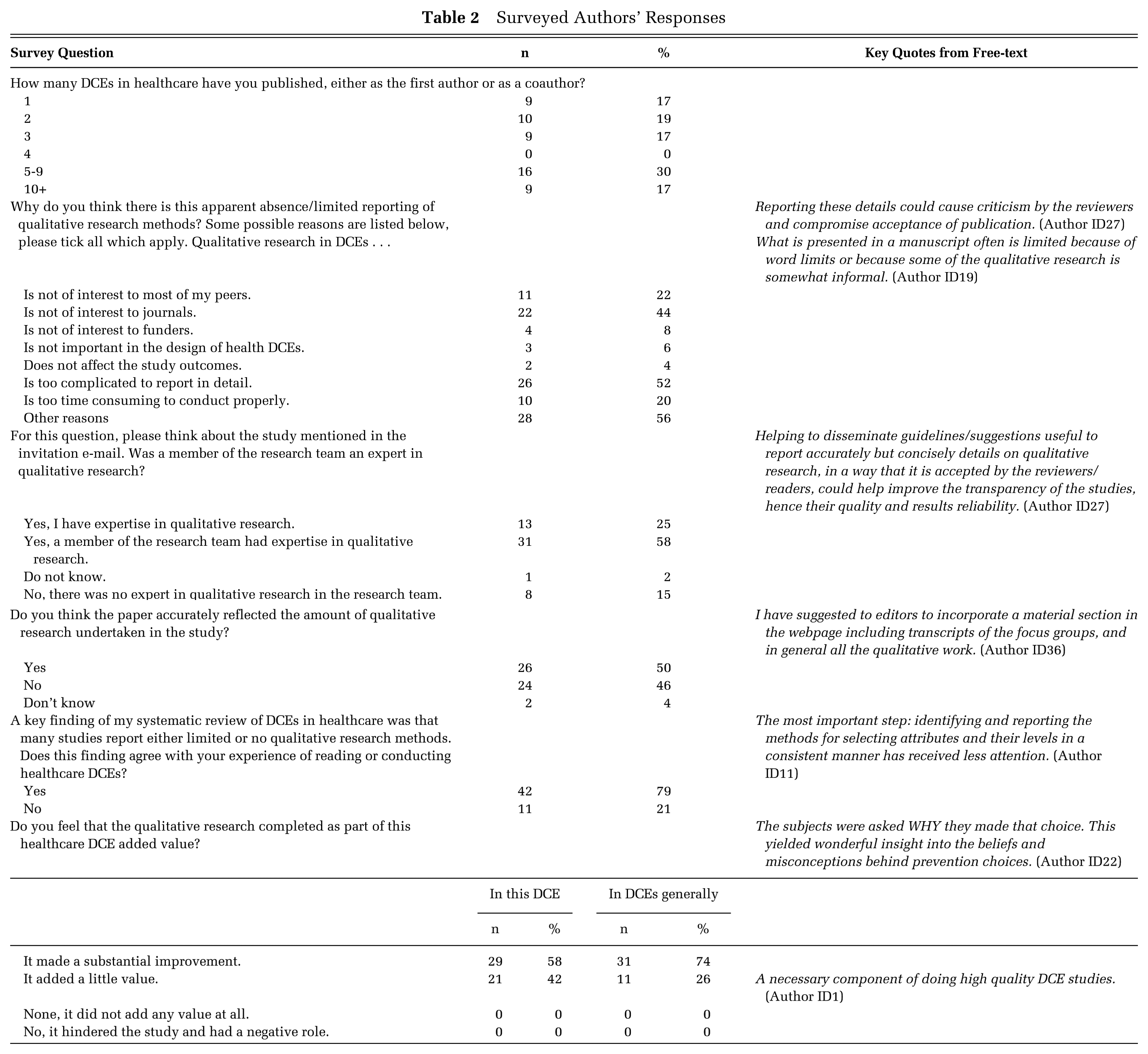

Table 2 provides a summary of the authors’ responses to each of the survey questions and examples of free-text comments provided by authors. These free-text comments are presented to illuminate the quantitative findings.

Table 2. Surveyed Authors’ Responses

Of the respondents who answered the question enquiring whether qualitative research methods added value to their DCE, all (n = 50, 100%) stated that it did. Authors also reported that the use of qualitative research methods added value to their experience of conducting DCEs in general, with 74% (n = 31) stating that it made a “substantial improvement” to the study. However, one respondent offered this comment, which suggests some antipathy toward the use of qualitative research methods:

Qualitative methods often require a subjective component that doesn’t fit well with economics or quantitative methods. I am not convinced that qualitative work is always needed. (Author ID40)

A key finding of the systematic review was poor reporting of qualitative research in journal articles. The majority of survey respondents (n = 42, 79%) agreed with this finding, stating that the qualitative component was only briefly described in their DCE paper. Some respondents (n = 11, 16%) stated that qualitative research would not be of interest to their peers, and 22 (44%) felt it was not of interest to journals. Other respondents (n = 4, 8%) reported that they did not believe qualitative research was important to funders.

Three-quarters of the respondents (n = 40, 75%) stated that they had no expertise in qualitative research methods. Some respondents (n = 31, 58%) did have a qualitative researcher as part of their team, but others (n = 8, 15%) did not. As described in Table 2, one author commented on the lack of guidance on reporting standards for the qualitative research conducted alongside a DCE.

Discussion

Existing systematic reviews of healthcare DCEs9,11,75 have not focused on the role of qualitative research, and there has been no direct contact with authors to determine whether the results detailed in their papers were subject to reporting bias. The review identified that 89% (n = 225) of identified DCEs did not report the qualitative component of the study in detail. A variety of reasons for the lack of detail in reporting, and complete omission in 44% (n = 111) of studies, were identified from the survey to authors. One potential reason for the paucity in reporting could be a lack of explicit reporting guidelines for qualitative research methods alongside DCEs.

Numerous guidelines exist for conducting and appraising qualitative research in general.76–82 However, in the context of DCEs, detailed guidelines on the use of qualitative research methods only exist for the identification of attributes and levels.27,28 It was difficult to ascertain the degree to which these guidelines were followed due to the lack of detail reported in published DCEs. The systematic review found that the most common application of qualitative research was to select attributes and levels for use in the DCE; other applications, for which guidelines do not exist, were also identified.

Qualitative research was frequently used for pretesting or piloting the DCE survey and for refining or checking terminology. The review also found some studies using qualitative research methods in other applications: for example, to predict preference heterogeneity, to select and specify a regression model, to identify the motives behind “irrational responses,” or to specifically test for breaks in the key axioms that support DCEs as a method.40,56,83 In light of these broad-ranging applications, it is apparent that qualitative research methods are being used in many ways. Best practice guidelines exist for many important steps in a DCE study, such as the experimental design 84 and analysis of choice data. 85 There is a need for similar guidelines covering the broad range of applications for qualitative research methods. Best practice guidelines may help improve both the quality and the reporting of qualitative research conducted alongside healthcare DCEs.

The author survey was sent to researchers who had regular experience in designing and/or analyzing DCEs, with most of the respondents publishing more than one DCE. This indicates that the sample was knowledgeable and was likely to include experienced researchers whose views are probably representative of the wider research area. This survey provided evidence that researchers designing and analyzing DCEs regarded using qualitative research methods as beneficial in a health DCE study. The lack of reporting of a beneficial and informative component to a research study could be rectified by clear and explicit reporting guidelines for all applications of qualitative research methods in the context of DCEs and the use of online appendices, particularly in word-restrictive journals. Maintained underreporting may otherwise erroneously imply that qualitative research is not useful in healthcare DCEs.

An updated rapid review was conducted that covered all healthcare DCEs published between 1990 and February 2016 identified through a systematic search of the MEDLINE database. The full text of the 626 healthcare DCEs was then searched for key terms relating to qualitative research identified from a review of qualitative research in contingent valuation studies. 22 Further details of the update to the review can be found in Appendix D. The results of the updated review were consistent with this systematic review: Few DCE studies report the qualitative component of their research, and few details are provided about the analytical approaches used to interpret the textual data.

Limitations

A limitation of the systematic review was the focus on papers recorded in one database, MEDLINE. This search strategy was chosen because it updated a previously published review by De Bekker-Grob and others 9 and replicated their study. The authors of that review chose MEDLINE because other databases such as PubMed or Embase identified duplicate papers rather than missing studies. Another limitation of the systematic review was the reliance on what was reported in the published paper; this was partially remedied with a survey to authors. However, the reliance on reporting was a particular challenge when assessing the analytical methods used by authors. For example, “content analysis” can refer to multiple approaches to the interpretation of qualitative data. 86 It was often unclear whether the authors had used numerical (summative) quantification of themes or had taken a more conventional approach of developing themes either from the text or from an initial framework.

Arguably, a more in-depth account of authors’ views and experiences could have been collected (possibly through one-to-one interviews), and thorough thematic analysis of the free-text comments could have provided more robust results. The survey sampled only authors who reported using qualitative research methods. Authors who did not report the qualitative component of their DCE study may have excluded details because the research did not add value, possibly creating bias in the survey results. However, the results of the questionnaire helped to explain the key findings of the systematic review, such as the drivers behind the lack of detail, and it is unlikely that further analysis or review would have highlighted anything that would significantly alter the findings.

An emerging type of DCE called a best-worst scaling (BWS) DCE is becoming an increasingly popular form of preference elicitation.87,88 In a BWS experiment, the respondents select their most and least preferred items, arguably revealing more about the respondents’ strength of preference in a survey containing fewer choice-sets. 89 BWS-DCE studies were not included in this systematic review because the search strategy, chosen to maintain methodological consistency with the previous DCE review, 9 was not designed to capture these types of choice studies.

Another limitation of this study was the original focus on papers published between 2001 and 2012. To remedy this, a rapid update to the review was conducted. The results of the updated rapid review matched the findings of the original systematic review, providing confirmatory evidence of its validity and supporting the conclusion that qualitative research methods are inadequately reported in healthcare DCEs.

Conclusion

The results of the systematic review and survey of authors identified that qualitative research methods were being used by DCE researchers to answer multiple research questions and that these methods add value to DCE studies. However, the review demonstrated there was a paucity of detail about the qualitative component of most DCE articles. This lack of reporting could cause researchers to infer that qualitative research is not an important component of a DCE study. Authors and journal editors should make provisions for reporting the details of the qualitative component of their research, perhaps through the use of online appendices. Further research is required to develop guidelines for the reporting of qualitative research methods in stated preference studies, particularly for uses other than the identification of attributes and levels, which are not covered by current guidelines.

Footnotes

Acknowledgements

The authors thank Martin Eden and Eleanor Heather for their help with screening papers included in this review. We also thank Dr. Gavin Daker-White and Professor Stephen Campbell for their assistance in developing the bespoke appraisal tool. We are grateful to Gene Sim, who assisted with an update to the review. We would like to acknowledge the discussions with colleagues who attended a seminar that presented preliminary results of the review and survey at the Health Economics Unit, University of Birmingham. In particular, we thank Dr. Emma Frew for her considered suggestions at this seminar.

Financial support for this study was provided entirely by a National Institute for Health Research (NIHR) School for Primary Care Research (SPCR) PhD Studentship for Caroline Vass. The funding agreement ensured the authors’ independence in designing the study, interpreting the data, and writing and publishing the report. The views expressed in this article are those of the authors and not of the funding body.

The authors have no financial conflicts of interests.

Under the explicit guidance of the University of Manchester’s Research Ethics Committee, no ethical approval was required for this study.

References
