Abstract

Background:

Cancer remains a leading global health burden. Artificial intelligence offers new opportunities to address complex physical and psychological symptoms in palliative and supportive cancer care. Despite rapid advances, including large language models, these technologies have not been consistently reviewed in this context, highlighting a gap in the synthesised literature.

Aim:

To map current evidence on how artificial intelligence is implemented in palliative and supportive care for people with cancer and their caregivers, and to identify associated challenges and future directions.

Design:

Scoping review guided by the Joanna Briggs Institute (JBI) framework.

Data sources:

We searched PubMed, CENTRAL, PsycINFO, and CINAHL databases, along with grey literature, up to 17 September 2024, and manually screened reference lists.

Results:

Of 2618 records screened, 199 studies were included. Most applications supported healthcare professionals, mainly for predictive purposes such as prognosis, symptom monitoring, and treatment-related adverse events. Machine learning was the predominant approach, reflecting reliance on structured clinical data. Publications increased markedly after 2020, with a sharp rise from 2022 onwards. Only 26 studies involved direct use by people with cancer or their caregivers, and large language model–based applications remained rare, indicating limited patient-facing use.

Conclusions:

Artificial intelligence is increasingly being applied in palliative and supportive care, yet applications designed for direct patient or caregiver use remain scarce. Further efforts should prioritise the development and validation of ethically sound, clinically integrated artificial intelligence tools to support person-centred palliative and supportive care.

Palliative and supportive care are essential to enhancing the quality of life for people with advanced cancer and their caregivers. However, access remains limited, particularly in low- and middle-income countries.

Since 2017, artificial intelligence (AI) technologies have seen rapid development and implementation in healthcare. Yet, their adoption in palliative and supportive care has remained minimal.

Existing reviews addressing AI in these contexts were conducted before the emergence of large language models and may not reflect current capabilities.

This review provides the first comprehensive global synthesis of AI implementation in palliative and supportive care, covering studies published up to 2024 and capturing developments before and after the emergence of large language models.

Most AI tools were developed for clinicians, with few designed for direct use by patients or caregivers.

Although patient-facing technologies such as chatbots and virtual reality platforms show considerable promise, clinical validation is currently insufficient.

Accessible, patient-facing AI tools may enhance symptom management and personalised support, especially in home and low-resource settings.

Integrating AI meaningfully into palliative and supportive care requires user-centred design, cross-cultural adaptability, and rigorous validation across populations, addressing ethical and digital inclusion challenges.

Policy frameworks must ensure robust ethical oversight, data protection, and equitable access for responsible implementation of AI technologies.

Background

People with cancer often experience persistent physical and psychological symptoms throughout the disease course. Despite advances in anticancer treatments, such as surgery, systemic therapy, and radiotherapy, treatment-related adverse events frequently impair quality of life. Psychological distress, including anxiety, depression, and fear of recurrence, is common during treatment and may persist afterwards.1 Palliative and supportive care are essential to addressing these multidimensional needs.2,3 However, only about 14% of eligible people with cancer worldwide receive such services, with marked disparities in low- and middle-income countries due to limited resources and workforce capacity.4–6

Advances in artificial intelligence (AI) offer novel opportunities to enhance access to and continuity of palliative and supportive care. AI has become increasingly embedded in healthcare through electronic health records, mobile applications, and wearable devices.7 Although AI research dates back to the 1950s, clinically meaningful applications have emerged only with progress in deep learning, computational power, and big data analytics.8–10 The introduction of transformer models in 2017 and the public release of large language models (LLMs) such as ChatGPT in late 2022 have further expanded the potential of AI-driven healthcare innovations.11,12 Nonetheless, the extent of AI use within palliative and supportive care remains unclear.

AI-based interventions for prognosis estimation and end-of-life decision-making have expanded substantially in recent years, particularly within home-based care, where interdisciplinary collaboration is fundamental.13–15 However, only two systematic reviews have examined AI use in this field, both conducted before the advent of LLMs, highlighting the need for updated synthesis reflecting recent technological advances.16,17

Given their natural language capabilities and emotional responsiveness, LLMs (e.g. the GPT series) may enhance communication and psychosocial care, especially in psycho-oncology. For people with advanced cancer and their caregivers, such tools may offer emotional support beyond conventional care settings.18 However, ethical concerns, data security, and algorithmic bias must be carefully addressed.10,19–21 As AI increasingly intersects with psychosocial care, clarifying its current clinical use is essential to guide ethical, safe, and person-centred integration into practice.

Objectives

In this scoping review, we examine how AI is currently implemented in palliative and supportive care to enhance the quality of life of people with cancer and their caregivers. Specifically, we map patterns of AI use across care settings and identify areas requiring further development in clinical translation, research, and ethics. AI designed to support or optimise active anticancer treatments (e.g. surgery, systemic therapy, or radiotherapy) was excluded, whereas applications aimed at predicting, preventing, or managing treatment-related adverse events or symptoms were included as part of supportive care.

Design

Study selection and synthesis followed the Joanna Briggs Institute (JBI) guidance for scoping reviews.22 Reporting of the review was guided by the Preferred Reporting Items for Systematic Reviews and Meta-Analyses extension for Scoping Reviews (PRISMA-ScR) checklist to enhance transparency and reproducibility.23 The review protocol was registered in the Open Science Framework on 18 October 2024 (https://osf.io/nq827).

Eligibility criteria

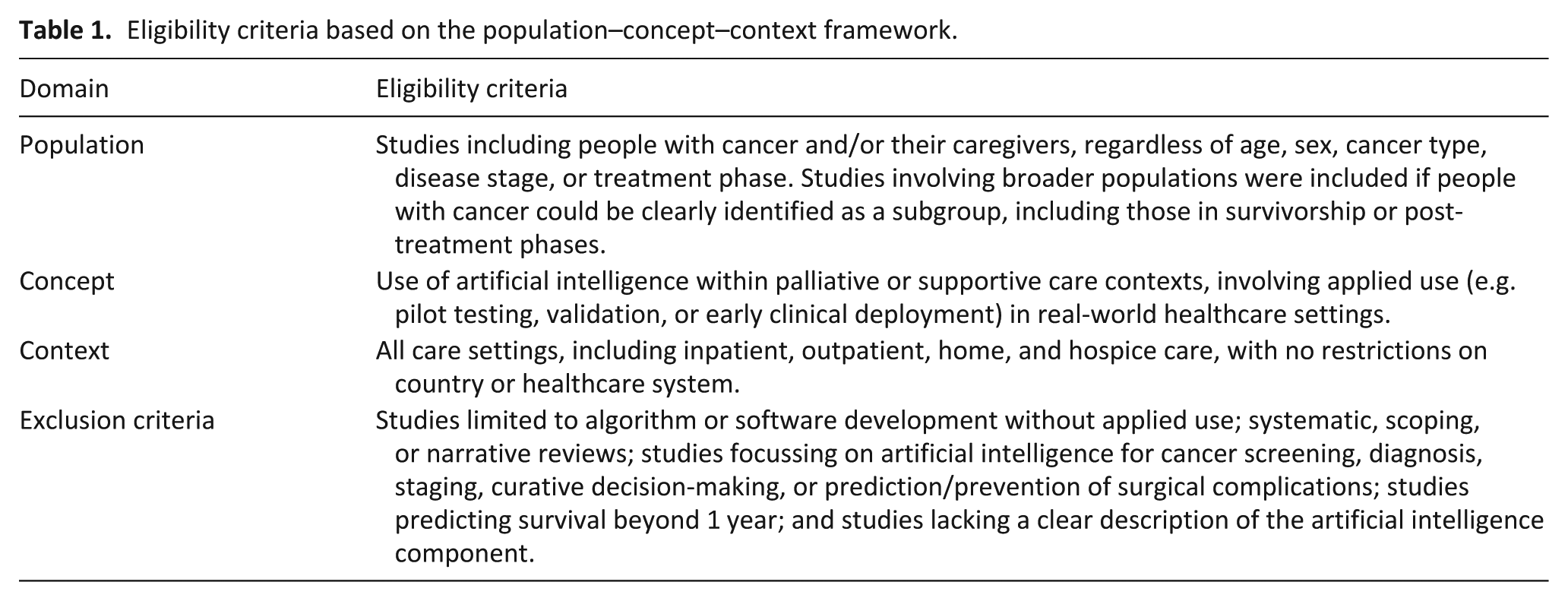

Eligibility criteria were based on the Population, Concept, and Context framework recommended by JBI.22 They are summarised in Table 1. No restrictions were applied to publication year, language, or care setting, and both peer-reviewed and grey literature were eligible for inclusion.

Table 1. Eligibility criteria based on the population–concept–context framework.

Concept definitions

In this review, AI encompassed machine learning, natural language processing, deep learning, LLMs, and related technologies.24 The term “AI implementation” referred to the applied contextual use of AI within palliative or supportive care, including pilot testing, validation, or early clinical deployment using real-world data. Studies describing only algorithm or software development without applied use were not considered implementations.

Care definitions

Palliative care was defined, in accordance with the World Health Organization, as an approach aimed at improving the quality of life of people with life-threatening illness and their caregivers.4 For the purposes of this review, supportive care was operationally defined as the prediction, prevention, and management of adverse events associated with systemic cancer therapy and radiotherapy.25 AI designed to support or optimise active anticancer treatments was excluded, whereas applications addressing treatment-related adverse events or symptom management were included as supportive care.

The term “palliative chemotherapy” was considered conceptually heterogeneous, encompassing intentions from life-prolonging to symptom-relieving, and was therefore classified under systemic cancer therapy for consistency.26,27 Non-curative interventions aimed at improving physical or psychological quality of life, such as cosmetic care, nutrition, speech therapy, and post-treatment rehabilitation, were also included.

Information sources

We conducted a comprehensive literature search across four major databases: PubMed, the Cochrane Central Register of Controlled Trials (CENTRAL) via the Cochrane Library, PsycINFO, and the Cumulative Index to Nursing and Allied Health Literature (CINAHL) via EBSCOhost, without language or date restrictions. Both controlled vocabulary (e.g. MeSH, CINAHL Headings) and free-text terms were combined using Boolean operators (“AND,” “OR”). The search strategy was structured around three core concepts: (1) cancer and life-threatening illness; (2) palliative and supportive care, including quality of life and end-of-life care; and (3) artificial intelligence–related technologies. Detailed search strategies for each database are provided in eTable 1 (Supplemental Appendix 1).
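The Boolean structure described above (synonyms joined with OR within each concept, and the three concept blocks joined with AND) can be sketched as follows. This is a minimal illustration only: the terms listed are placeholders chosen for the example and are not the registered search strategy, which is given in eTable 1, and the names `CONCEPTS`, `quote_term`, and `build_query` are hypothetical.

```python
# Illustrative sketch: placeholder terms, NOT the actual search strategy (see eTable 1).
CONCEPTS = {
    "cancer": ["neoplasms", "cancer", "life-threatening illness"],
    "care": ["palliative care", "supportive care", "quality of life", "end-of-life care"],
    "ai": ["artificial intelligence", "machine learning", "large language model"],
}


def quote_term(term: str) -> str:
    """Wrap multi-word terms in double quotes so they are searched as phrases."""
    return f'"{term}"' if " " in term else term


def build_query(concepts: dict[str, list[str]]) -> str:
    """OR the synonyms within each concept, then AND the concept blocks together."""
    blocks = [
        "(" + " OR ".join(quote_term(t) for t in terms) + ")"
        for terms in concepts.values()
    ]
    return " AND ".join(blocks)


query = build_query(CONCEPTS)
print(query)
```

In practice each database needs its own field tags and controlled-vocabulary syntax, so a string built this way would serve only as a starting template.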

Additional sources for grey literature included clinical guidelines, research protocols, professional-society websites, and ClinicalTrials.gov.22 Reference lists of excluded reviews and primary studies were manually examined to identify further eligible studies. The search strategy was designed, validated, and approved by a qualified medical librarian, and the final search was conducted from 17 to 25 September 2024.

Selection of sources of evidence

We used Rayyan (Rayyan Systems Inc.) and EndNote for study management.28,29 After removing duplicates in EndNote, two reviewers (S.K. and M.S.) independently screened titles and abstracts using Rayyan. Full texts of potentially eligible studies were then assessed independently by paired reviewers (S.K., M.S., T.H., S.W., S.F., M.M.). Discrepancies were resolved through consensus discussions or by consulting a third reviewer. Inter-rater reliability for full-text screening was assessed using Cohen’s kappa and overall agreement rate.
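For readers unfamiliar with the two reliability measures, the sketch below shows one common way to compute the overall agreement rate and Cohen’s kappa for two reviewers’ include/exclude decisions. It is a generic illustration and not the review team’s own calculation; the function name `cohens_kappa` and the ten example decisions are hypothetical.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Return (observed agreement, Cohen's kappa) for two equal-length label lists."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("Both raters must label the same, non-empty set of items.")
    n = len(rater_a)
    # Observed agreement: proportion of items given the same label by both raters.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Chance agreement: product of each rater's marginal proportions, summed over labels.
    freq_a, freq_b = Counter(rater_a), Counter(rater_b)
    expected = sum(freq_a[c] * freq_b[c] for c in freq_a.keys() | freq_b.keys()) / n**2
    if expected == 1.0:
        # Both raters used a single identical label throughout; kappa is undefined.
        return observed, float("nan")
    return observed, (observed - expected) / (1 - expected)


# Hypothetical full-text decisions for ten records (the reviewers disagree on one).
reviewer_1 = ["include"] * 4 + ["exclude"] * 6
reviewer_2 = ["include"] * 3 + ["exclude"] * 7
agreement, kappa = cohens_kappa(reviewer_1, reviewer_2)
print(f"agreement = {agreement:.1%}, kappa = {kappa:.2f}")
```

Kappa is lower than raw agreement because it discounts the agreement expected by chance, which is why both figures are usually reported together.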

Data extraction followed a structured, pilot-tested framework developed by the research team; the framework and decision rules are described in the “Data charting process” section and detailed in eTable 2 (Supplemental Appendix 1).

Data charting process

Data extraction employed a structured framework developed and pilot-tested on 10 randomly selected studies to ensure consistency among reviewers. The framework included predefined variables such as study characteristics, AI modality, care setting, user type, and data source. Each reviewer pair independently extracted information from eligible studies, utilising all available study information, including direct communication with authors when necessary. All extracted data were compiled into a shared spreadsheet to ensure transparency and consistency across reviewers. Representative variable definitions and data-handling procedures are described in the “Data items” section, with detailed definitions and coding rules provided in eTable 2 (Supplemental Appendix 1).

Data items

We extracted 15 core variables from each study to enable comparative analysis: (1) country of study, (2) study design, (3) publication language, (4) study objective, (5) cancer type, (6) therapeutic phase, (7) AI modality, (8) purpose of AI use, (9) AI user, (10) setting of AI usage, (11) source of input data, (12) sample size, (13) median age (range), (14) number and proportion of female participants, and (15) duration or frequency of AI use. All data were tabulated in Microsoft Excel (eTable 5, Supplemental Appendix 2).

AI modalities were classified as machine learning, natural language processing, deep learning, LLMs, or general AI. Machine learning encompassed methods such as random forests, decision trees, k-means clustering, and support vector machines.30 Neural networks, such as artificial neural networks, deep neural networks, and convolutional neural networks, were grouped under deep learning. Long short-term memory networks were grouped as deep learning or natural language processing according to their function.
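The grouping rules above could be expressed as a simple lookup, sketched below under stated assumptions. The mapping contains only the methods named in the text; the `processes_text` flag is a hypothetical stand-in for judging a long short-term memory network “according to its function”, and the fallback label for unlisted methods is an assumption for illustration, not a rule used in the review.

```python
# Methods named in the text, mapped to the modality they were grouped under.
MODALITY_BY_METHOD = {
    "random forest": "machine learning",
    "decision tree": "machine learning",
    "k-means clustering": "machine learning",
    "support vector machine": "machine learning",
    "artificial neural network": "deep learning",
    "deep neural network": "deep learning",
    "convolutional neural network": "deep learning",
}


def classify_modality(method: str, processes_text: bool = False) -> str:
    """Map a reported method to a modality label.

    `processes_text` is a hypothetical proxy for the function of a long
    short-term memory network (language task versus other sequence task).
    """
    key = method.strip().lower()
    if key == "long short-term memory":
        return "natural language processing" if processes_text else "deep learning"
    # Assumption: methods outside the lookup are flagged for a reviewer's judgement.
    return MODALITY_BY_METHOD.get(key, "needs manual review")


print(classify_modality("Random forest"))
print(classify_modality("Long short-term memory", processes_text=True))
```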

The purposes of AI use were identified through an iterative categorisation process. While the overarching approach of classifying purposes of use was defined a priori, the specific domains were not pre-specified due to the heterogeneity of the literature. Following full-text screening, two reviewers independently reviewed all included studies and inductively grouped similar applications into provisional categories. Through iterative discussion and consensus among the review team, these categories were refined and consolidated into 13 purpose domains:

(A) Adverse Events Prediction in Radiation Therapy

(B) Adverse Events Prediction in Systemic Cancer Treatments

(C) Aesthetic and/or Psychological Support

(D) Artificial Intelligence and Machine Learning Applications

(E) Data Extraction and/or Analysis

(F) Nutritional and/or Physical Support

(G) Patient Communication and Education

(H) Prognostic Prediction

(I) Quality of Life Assessment

(J) Symptom Monitoring and/or Prediction

(K) End-of-Life Care

(L) Social and Economic Factors

(M) Other

AI users were classified as medical personnel in hospitals, medical personnel at home, social workers, patients themselves, patients’ families or supporters, and others. If the user group was not explicitly stated, it was inferred from the study context. For example, models using electronic medical records to predict treatment-related toxicities were attributed to clinicians. When multiple user types were involved, all were recorded.

Care settings were classified as community welfare, general ward (inpatient), home, hospice (inpatient), hospice at home, outpatient (hospital or clinic), or other.

If the setting was not stated, it was inferred from context. For instance, AI use by patients or families was coded as “home,” whereas that by healthcare professionals in clinical environments was coded as “outpatient.”

Input data sources were classified as blog/social networking service/online forums, database/platform/registry, electronic or nursing records, imaging data (CT, MRI, X-ray, ultrasound), interview transcripts or qualitative data, patient-reported outcomes or questionnaires, research data, and other sources. AI systems without clear data descriptions (e.g. chatbots, assistive robots) were coded as “other.”

Missing data for variables such as sample size or sex ratio were coded as “N/A,” as shown in eTable 5 (Supplemental Appendix 2). Sample size, demographic information, and AI use duration are detailed in eTable 3 (Supplemental Appendix 1). Descriptive statistics (frequencies and percentages) were used to summarise the findings. In line with scoping-review methodology, no formal quality or bias assessment was conducted.23
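Because several variables allowed more than one value per study (for example, multiple user types), frequencies are counted per category against the total number of studies, so percentages can sum to more than 100%. The sketch below illustrates this kind of tabulation; the function `summarise`, the field name `region`, and the four example records are hypothetical and do not reproduce the review dataset.

```python
from collections import Counter


def summarise(records, field, n_total=None):
    """Return {category: (count, percentage)} for a possibly multi-valued field.

    Percentages use the number of studies as the denominator, so a study
    coded with two categories contributes to both.
    """
    n = n_total if n_total is not None else len(records)
    counts = Counter()
    for rec in records:
        values = rec.get(field) or ["N/A"]  # missing values are coded as "N/A"
        counts.update(set(values))  # count each study once per category
    return {cat: (k, round(100 * k / n, 1)) for cat, k in counts.most_common()}


# Hypothetical records; S2 is multinational and S4 has no region recorded.
studies = [
    {"id": "S1", "region": ["North America"]},
    {"id": "S2", "region": ["Europe", "Asia"]},
    {"id": "S3", "region": ["Europe"]},
    {"id": "S4", "region": []},
]
summary = summarise(studies, "region")
for category, (count, pct) in summary.items():
    print(f"{category}: {count} ({pct}%)")
```

Passing `n_total=199` would apply the fixed denominator used throughout the Results section.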

Results

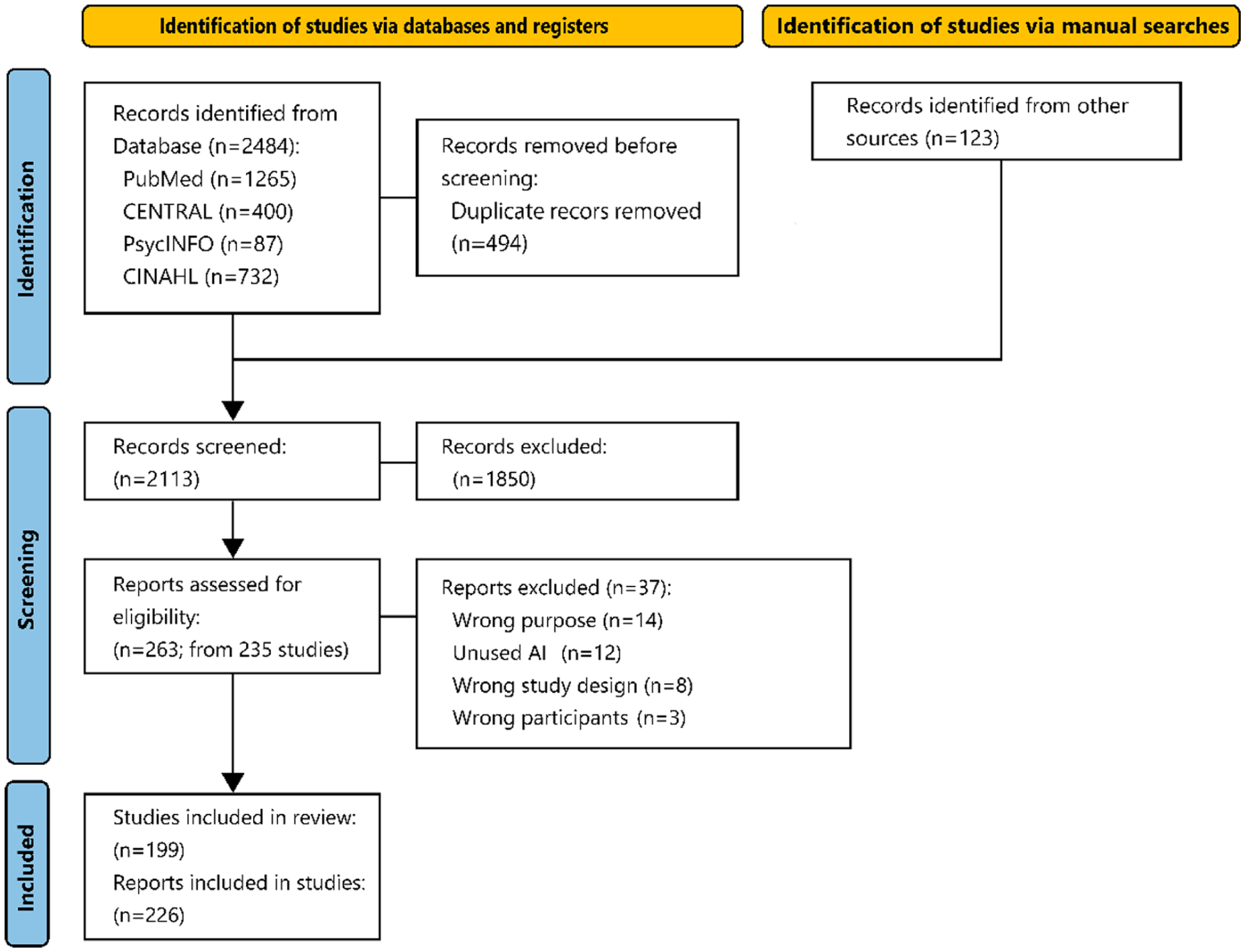

A total of 199 unique studies were included in the review. The selection process is summarised in Figure 1, following the PRISMA 2020 framework.23,31 Inter-rater reliability for full-text screening was substantial (κ = 0.69, 95% CI 0.54–0.84; agreement = 93.2%).32

Figure 1. PRISMA flowchart of study selection.

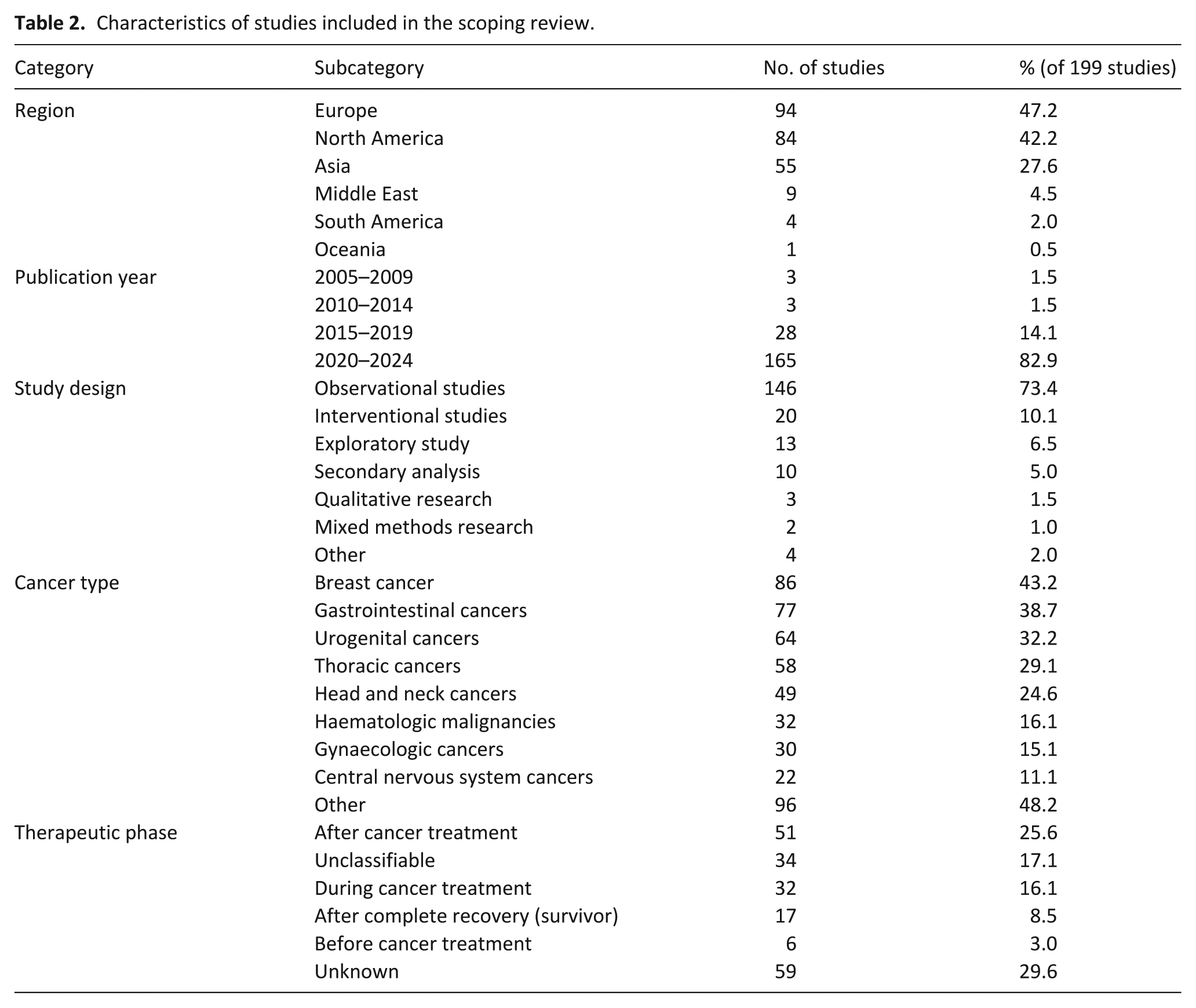

Study characteristics are summarised in Table 2, with more detailed descriptive data provided in eTable 3 (Supplemental Appendix 1). The complete dataset without statistical aggregation is presented in eTable 5 (Supplemental Appendix 2). A full list of included studies is available in Supplemental Appendix 1. Percentages in this section are calculated using the total number of included studies (N = 199), unless otherwise specified.

Table 2. Characteristics of studies included in the scoping review.

Geographic and temporal characteristics of included studies

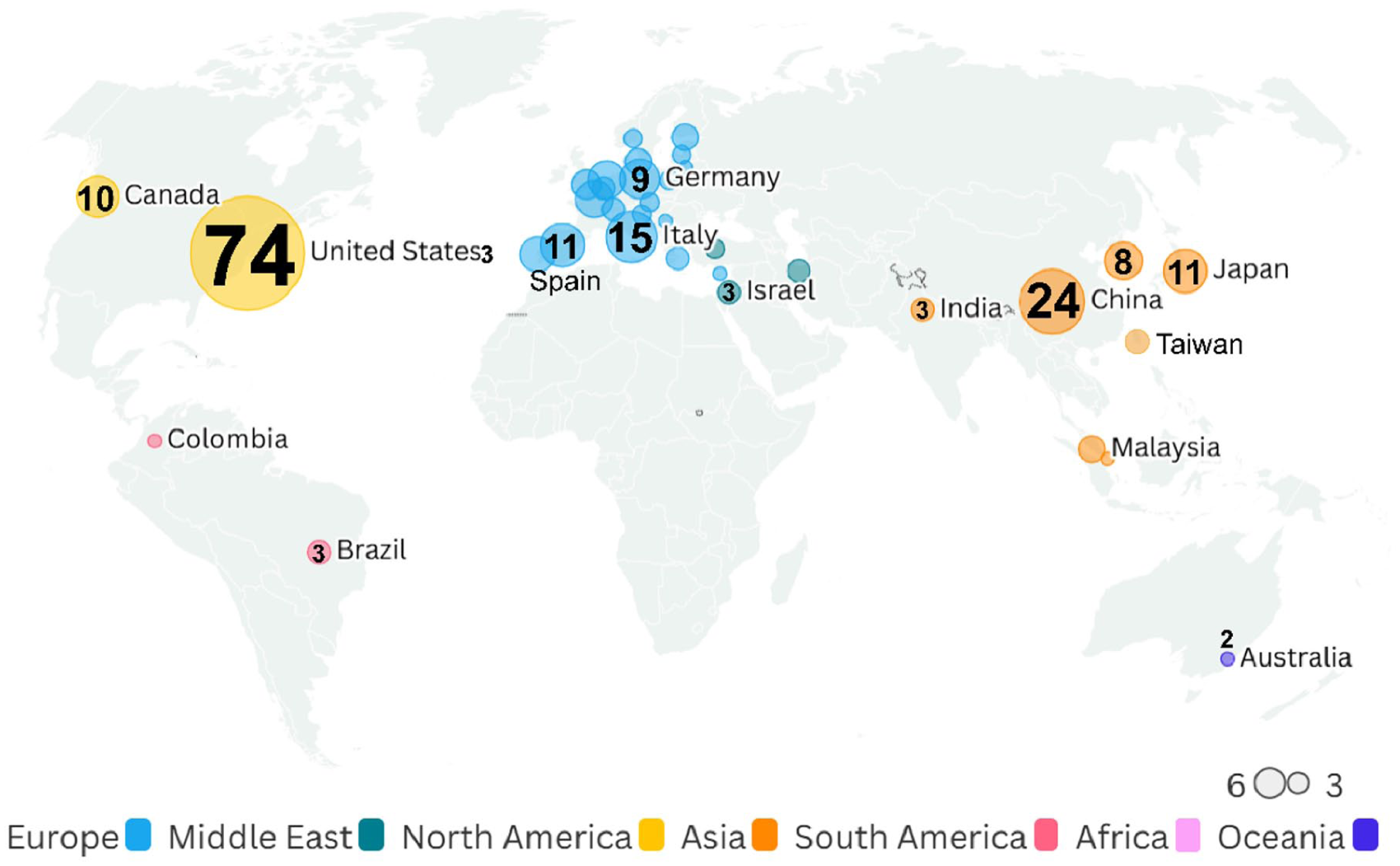

Of the 199 studies conducted across 36 countries, most originated from the United States (74 studies, 37.2%), followed by China (24, 12.1%), Italy (15, 7.5%), Japan and Spain (each 11, 5.5%), and Canada (10, 5.0%). By region, Europe contributed the largest share (94 studies, 47.2%), followed by North America (84, 42.2%) and Asia (55, 27.6%; Figure 2). Some studies were multinational and therefore contributed to more than one regional category. Nearly all publications were in English (196 studies, 98.5%), with one each in French, Japanese, and Chinese.

Figure 2. Geographic distribution of included studies in artificial intelligence (AI) research for palliative and supportive care.

The review includes studies published up to September 2024. Publication activity rose sharply after 2020, with 165 studies (82.9%) appearing between 2020 and 2024. Of these, 128 (64.3%) were published between 2022 and 2024, including 41 (20.6%) in 2024 alone despite the September endpoint. The publication-year distribution is detailed in eTable 3 (Supplemental Appendix 1), showing a marked surge from 2022 onwards (44 studies in 2022, 43 in 2023, and 41 in 2024).

Study designs

Observational studies predominated (146 studies, 73.4%), most commonly retrospective or prospective cohorts (124, 62.3%). Interventional studies, including randomised controlled trials, comprised 20 (10.1%). Exploratory analyses (13 studies, 6.5%) and secondary analyses (10, 5.0%) were reported separately, together accounting for 23 studies (11.6%). Qualitative (3 studies, 1.5%) and mixed-method (2, 1.0%) designs were also identified. Detailed classifications of study designs are shown in eTable 3 (Supplemental Appendix 1) and summarised in Table 2.

Cancer types

Breast cancer was the most frequently studied cancer (86 studies, 43.2%), followed by gastrointestinal cancers (77, 38.7%) and urogenital cancers (64, 32.2%). Other cancer types, including lung, head and neck, haematological, gynaecological, and brain cancers, were reported in proportions ranging from 11.1% to 29.1% of studies.

Therapeutic phase

Regarding therapeutic phases, 51 studies (25.6%) examined the post-treatment period, while 32 (16.1%) focussed on active treatment. Thirty-four (17.1%) spanned multiple or overlapping phases and were coded as “unclassified,” and in 59 (29.6%) the phase of care was not specified.

AI modality

Machine learning was the most common AI modality (118 studies, 59.3%), followed by natural language processing (37, 18.6%), deep learning (34, 17.1%), general AI (27, 13.6%), and LLMs (3, 1.5%).

Purpose of AI use

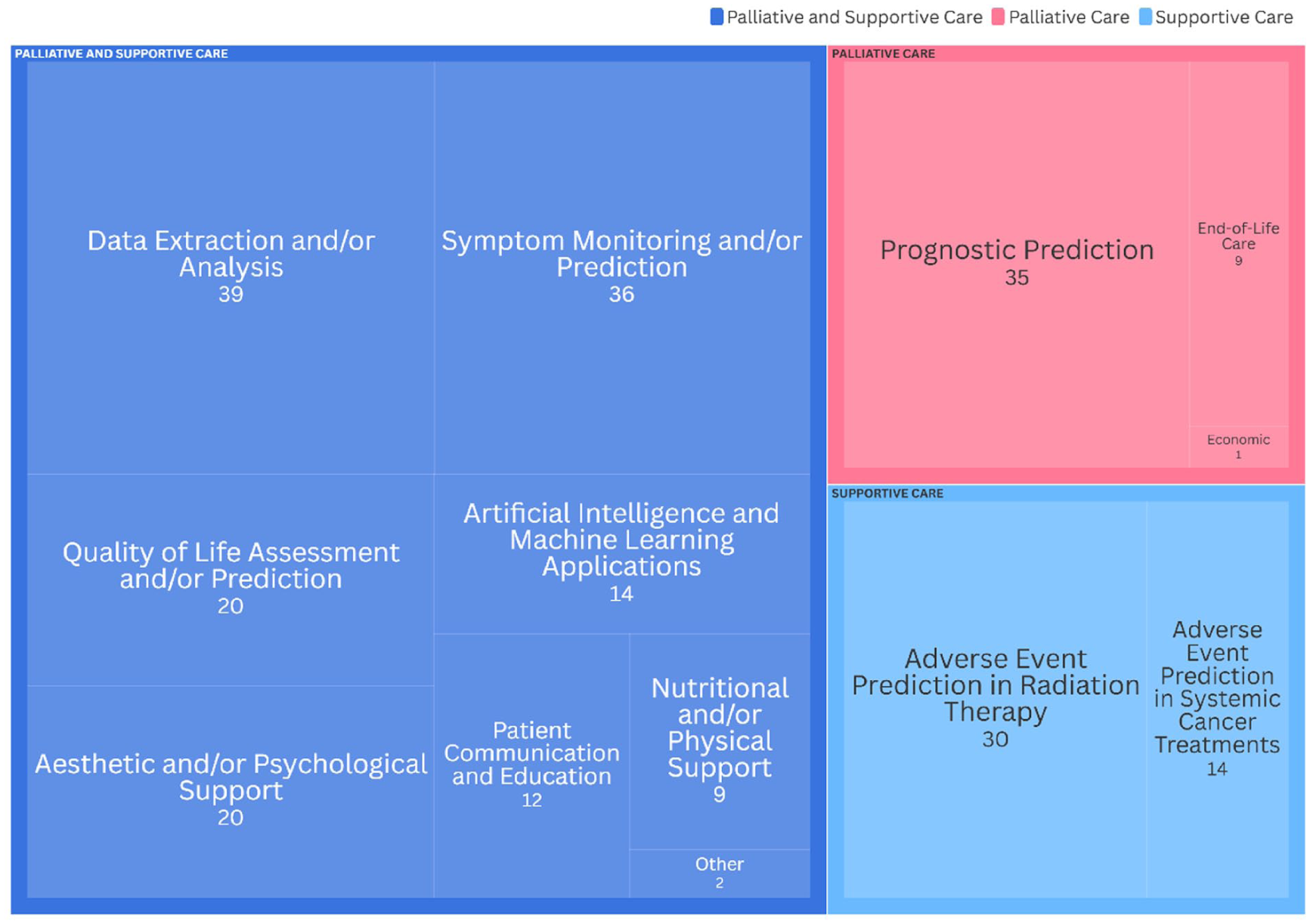

The most common purposes of AI use were data extraction and analysis (39 studies, 19.6%), followed by symptom monitoring and prediction (36, 18.1%), prognosis prediction (35, 17.6%), and prediction of radiation-related adverse events (30, 15.1%). Overall, 135 studies (67.8%) involved predictive applications of AI (Figure 3).

Figure 3. Purpose of AI use in palliative and supportive care.

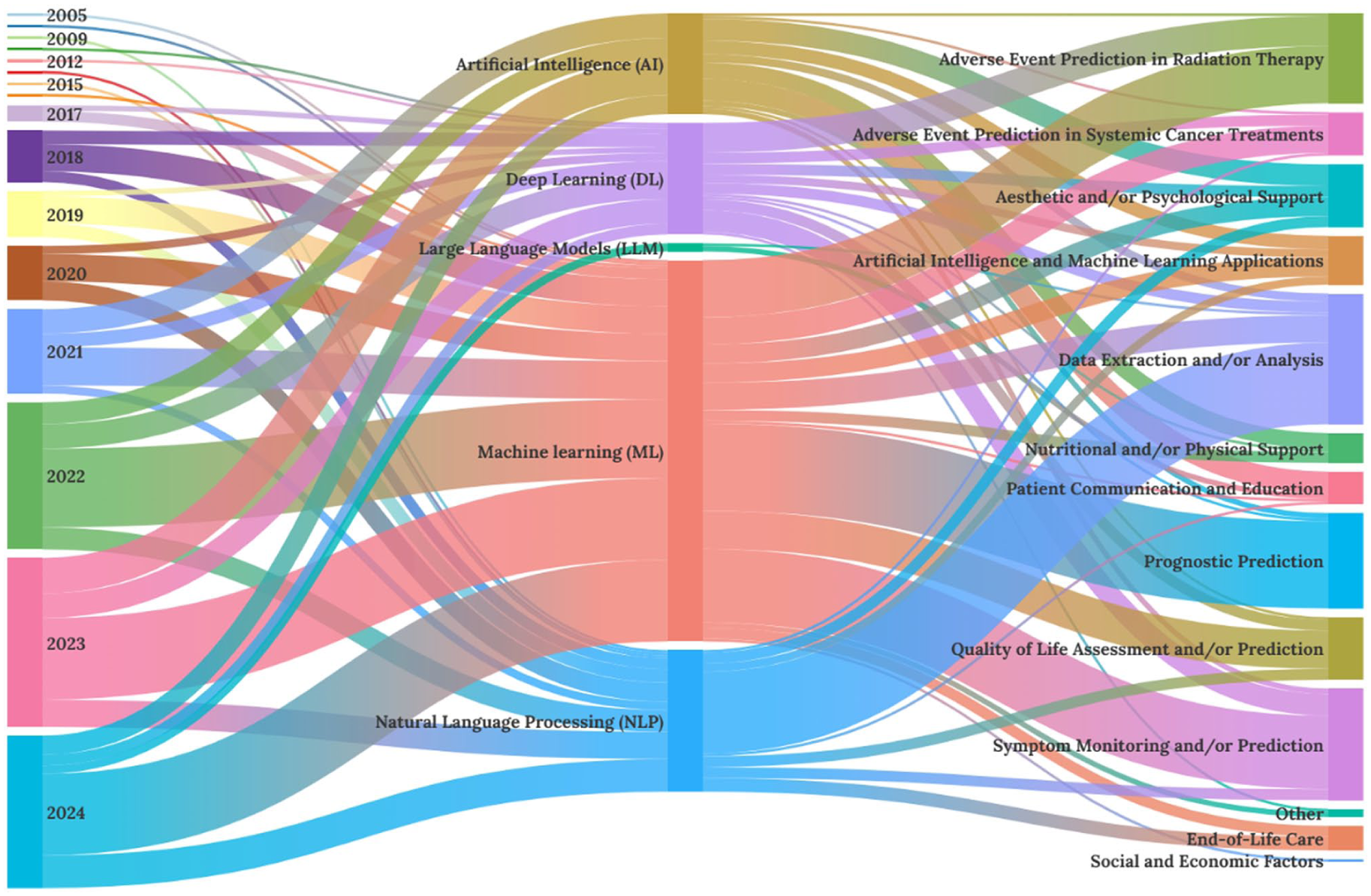

The Sankey diagram (Figure 4) visualises relationships among publication year, AI modality, and application purpose. Each rectangle (node) represents a category, with its size reflecting the number of studies. Connecting bands (arcs) indicate links between categories and their frequencies.33 The horizontal layout proceeds from publication year (left) to AI modality (centre) and purpose (right), illustrating chronological and thematic trends.

Figure 4. Sankey diagram depicting the relationships between publication year, AI modality, and the purpose of AI use in palliative and supportive care.
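The data behind a three-stage Sankey diagram of this kind are a set of weighted links between adjacent stages (year to modality, modality to purpose). The sketch below shows one way such links might be counted from study-level records. It is an assumption-laden illustration: the function `sankey_links`, the field names, and the three example records are hypothetical, and it does not describe how Figure 4 was actually produced.

```python
from collections import Counter
from itertools import product


def sankey_links(records, stages):
    """Count flows between each pair of adjacent stages.

    Each record maps a stage name to a list of categories; a study with two
    modalities therefore contributes to two bands on either side of that stage.
    """
    links = Counter()
    for rec in records:
        for left, right in zip(stages, stages[1:]):
            for src, dst in product(rec[left], rec[right]):
                # Prefix node names with the stage so identical labels never collide.
                links[(f"{left}:{src}", f"{right}:{dst}")] += 1
    return links


# Hypothetical study records, for illustration only.
records = [
    {"year": ["2022"], "modality": ["Machine learning"], "purpose": ["Prognostic prediction"]},
    {"year": ["2023"], "modality": ["Machine learning", "NLP"], "purpose": ["Data extraction"]},
    {"year": ["2023"], "modality": ["Machine learning"], "purpose": ["Prognostic prediction"]},
]
links = sankey_links(records, ["year", "modality", "purpose"])
for (source, target), value in sorted(links.items()):
    print(f"{source} -> {target}: {value}")
```

The resulting (source, target, value) triples are the input format expected by most Sankey plotting tools, with the value determining band width.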

As shown in Figure 4, AI studies in palliative and supportive care increased substantially after 2017, with a sharp rise from 2022 onwards. Machine learning remained the predominant approach, reflecting its compatibility with structured clinical data and predictive modelling. Deep learning and natural language processing showed steady growth, commonly applied to imaging analysis and text-based communication. The post-2022 surge coincided with the emergence of transformer-based architectures and LLMs, representing a new generation of natural language processing technologies that have broadened research interest towards generative and language-oriented applications. Nevertheless, their direct clinical use remains limited.

Only three studies (1.5%) employed LLMs, primarily for narrative data extraction, educational support, and patient queries.34–36 Although limited in number, these early studies indicate an emerging trend towards conversational and patient-facing AI, while most existing applications still focus on predictive modelling and risk mitigation.

Users and clinical settings of AI usage

AI was most frequently implemented by healthcare professionals (177 studies, 88.9%), increasing to 183 (92.0%) when home-based use was included. In contrast, AI was directly used by people with cancer in 26 of 199 studies (13.1%), by social workers in six (3.0%), and by caregivers or family members in four (2.0%).

To clarify how people with cancer use AI, we examined these 26 studies involving direct patient interaction. Four also included family members or informal caregivers. From each study, we extracted the country, cancer type, study design, AI modality, purpose, user, care setting, and sample size (eTable 4, Supplemental Appendix 1).

These 26 studies involving direct AI use by people with cancer or families were conducted across 18 countries, most often in the United States (10 studies) and China (4). Most were home-based, with fewer conducted in outpatient care. Nine were randomised controlled trials, five were non-randomised or exploratory, and a few were cohort or crossover designs. Chatbots were the most common modality (18 studies), followed by natural language processing (5), machine learning (4), deep learning (3), and large language models (2). The main purposes were communication and education (10 studies), psychological support (8), and nutritional or physical support (6). Other applications included symptom management, cosmetic support, and improved access to supportive care information.

Three studies described AI-enabled robots, used as therapy dog substitutes in sterile settings or as educational tools promoting sleep hygiene and nutrition for paediatric or adolescent and young adult (AYA) populations.37–39 Although paediatric evidence remains limited, these interventions enabled sustained engagement without dropout, suggesting good feasibility. Further details of these 26 studies are presented in eTable 4 (Supplemental Appendix 1).

User-centred evaluations were common across these studies but varied in outcome measures and methods. Most assessed satisfaction, acceptability, usability, or adherence using Likert-type scales, questionnaires, or interviews. Others included patient-reported outcomes relevant to intervention goals, such as improvements in symptoms, psychological wellbeing, quality of life, or knowledge. Overall, participants reported high satisfaction and perceived usefulness; however, the heterogeneity of outcome measures limits comparability across studies and makes quantitative synthesis, such as meta-analysis, challenging. These findings emphasise the need for standardised and validated outcome measures to evaluate patient-facing AI in palliative and supportive care.

Regarding clinical settings, AI was most often applied in outpatient care (147 studies, 73.9%), followed by inpatient (44, 22.1%) and home or hospice care (31, 15.6%). Hospice-specific AI use was identified in six studies (3.0%), and four (2.0%) described community-based implementations.

Input data sources for AI technologies

The sources of input data used for developing or applying AI technologies varied across the included studies. Electronic health records were most common (74 studies, 37.2%), followed by patient-reported outcomes (51, 25.6%), research databases (45, 22.6%), and imaging data (33, 16.6%). Several studies also utilised clinical trial datasets. Non-traditional sources, including social media posts, personal blogs, or audio recordings from people living with cancer, were used occasionally. Twenty-five studies (12.6%) relied on existing AI systems, such as chatbots, assistive robots, or commercial platforms. These were grouped as “other data sources” owing to unclear or unstructured inputs.

Other characteristics

Information on sample size, median age (range), and the number and proportion of female participants varied widely across studies. Additionally, among the 199 studies, only 23 reported data on the duration or frequency of AI use, which ranged from a few hours of single use to 9 months of continuous use. These data are presented in eTable 5 (Supplemental Appendix 2).

Discussion

Main findings of the study

This review synthesised global evidence on the implementation of AI in palliative and supportive care for people with cancer and their caregivers. We identified 199 studies from 36 countries, predominantly conducted in North America and Europe, with recent growth in Asia. Since 2017, AI adoption has accelerated, driven by transformer-based architectures and the emergence of LLMs since 2022.

Within palliative and supportive care, most AI systems supported healthcare professionals in data extraction, analysis, prognostic modelling, and prediction of treatment-related adverse events. Machine learning remained the predominant approach, reflecting the availability of structured data such as imaging and electronic health records. By contrast, natural language processing, deep learning, and LLMs were less common but were applied to communication, education, and interpretation of unstructured data.

Although feasibility was demonstrated across many studies, few reported concrete indicators of clinical integration, such as interoperability with hospital systems or routine workflow use. In most cases, it was unclear whether AI tools were implemented in daily clinical practice or confined to research contexts. This persistent gap between technological innovation and clinical translation reflects challenges including limited validation, data privacy concerns, and poor interoperability.40,41 Bridging this divide will require scalable and sustainable frameworks that enable safe, ethical integration of AI into routine care.

Previous reviews by Reddy et al. 16 and Pan et al. 17 highlighted the potential of AI in prognosis prediction, symptom monitoring, and treatment stratification. However, these reviews preceded the widespread use of LLMs. Building on this evidence, our review identified emerging user-focussed applications, including chatbots for psychological support, educational tools, robotic-assisted rehabilitation, and virtual-reality-based caregiver support. Twenty-six studies described AI tools used directly by people with cancer and caregivers, addressing nutrition, symptom management, psychological support, and education,37,39,42,43 with several focussing on paediatric and AYA populations.37,38

Cancer remains one of the leading global causes of disability-adjusted life years (DALYs), second only to cardiovascular disease.44,45 By 2050, incidence is expected to exceed 35 million cases, underscoring the need for scalable and sustainable innovations across the care continuum.3,46 However, most AI technologies remain at early stages of clinical adoption. Only 23 studies reported the duration of AI use, which typically ranged from a few weeks to several months, and few included long-term follow-up. Considerable heterogeneity in study aims, populations and data sources also precluded quantitative synthesis. This ongoing gap between research and real-world practice underscores the need for future studies with longer observation periods to assess sustained user engagement, long-term effectiveness and system performance over time.

Studies involving people with advanced or terminal cancer face ethical and logistical challenges, including difficulties in obtaining consent, sustaining participation and meeting review requirements.47–49 These barriers may limit the use of AI systems, especially LLM-based tools. Moreover, insufficient clinical validation, transparency and accountability in commercial AI systems further hinder clinical adoption.40,41

Conversely, AI shows promise in home-based and resource-limited palliative settings, providing continuous support, personalised guidance and psychosocial care. Evidence indicates that AI-driven chatbots can provide psychological support comparable to that of trained counsellors.50 They may be particularly valuable for informal caregivers, who often lack access to professional help and are at risk of anticipatory or prolonged grief.51

Most studies were conducted in high-income countries, reflecting disparities in digital infrastructure and access to palliative care. As human resources remain the most limited component of healthcare delivery, scalable AI tools such as decision-support algorithms and chatbots could help reduce these inequalities and extend specialist expertise to underserved areas in low- and middle-income countries.5,52 Few studies examined the financial impact of AI implementation. None reported deployment costs, including computing resources (e.g. power consumption for LLM inference), device maintenance, robotic rental, or system integration. Although a recent systematic review identified potential cost savings from AI in general healthcare, economic evidence in palliative and supportive care remains limited.53 Future studies should therefore include cost-effectiveness analyses alongside clinical outcomes to assess affordability and sustainability, particularly in resource-limited settings. With appropriate ethical governance and investment in digital infrastructure, AI could serve as an equalising technology, improving access, continuity and equity of care.

What this study adds

Although AI technologies, including LLMs, are now widespread, their application in clinical and palliative research remains limited by ethical, practical and economic constraints. This review highlights both the opportunities and challenges of AI-based support for people with cancer and caregivers. As healthcare becomes more patient-centred and home-based, LLMs and related tools may offer practical value, particularly in communication, education and psychological support. Ongoing research and clear ethical frameworks are needed to ensure the safe, equitable and sustainable integration of these technologies into clinical practice.

Strengths and limitations of the study

This review has several limitations. First, although both peer-reviewed and grey literature were comprehensively searched, the included studies varied widely in design, population, outcomes and quality. This heterogeneity prevented meta-analysis or risk-of-bias assessment; findings therefore require cautious interpretation and further validation before clinical application.

Second, variation in the definitions of palliative and supportive care may have introduced bias. Supportive care was defined as the prediction, prevention and management of adverse events related to systemic therapy or radiotherapy. Some studies, however, applied broader definitions encompassing general symptom management, which could have influenced study selection and scope.

Third, most studies originated from high-income countries, limiting generalisability to low- and middle-income settings where healthcare access and digital infrastructure differ. In addition, a large proportion focussed on breast cancer, reflecting prevailing research trends in oncology, which may limit insights into AI use in other cancer types. Future work should therefore include a broader range of malignancies to promote equitable innovation in palliative and supportive care.

A key strength is that this review is among the first to comprehensively map AI implementation within palliative and supportive care. It systematically included studies across diverse regions and care contexts and incorporated recent advances such as large language models, providing an up-to-date global overview. Furthermore, peer-reviewed and grey literature were searched using a structured, reproducible framework. These findings expand current evidence and may inform the responsible integration of AI into person-centred palliative and supportive care.

Implications

AI technologies have the potential to enhance the quality of care and quality of life for people with cancer and their caregivers by complementing existing services. Key benefits may include improved care coordination, more efficient symptom monitoring and greater emotional support, particularly in home-based and resource-limited settings. To move from research to routine care, integration strategies should address challenges of interoperability, user engagement and cultural adaptation, while ensuring robust data governance and equitable access.

Future research should prioritise the development and validation of AI tools suited to palliative and supportive care, especially for underserved populations and low- and middle-income settings. Interdisciplinary collaboration among clinicians, technologists, ethicists and policy-makers will be essential to guide transparent, ethical and sustainable implementation, ensuring respect for sociocultural values and support for person-centred care.

Conclusions

AI offers promising opportunities to enhance palliative and supportive care for people with cancer. This scoping review mapped current applications across diverse settings and purposes, identifying key trends in AI modalities, user groups and clinical contexts. Although interest in AI is increasing, clinical adoption remains limited, particularly for tools intended for direct use by patients and caregivers. Future work should focus on bridging the gap between technological innovation and sustainable, ethically grounded and person-centred implementation.

Supplemental Material

Supplemental material, sj-docx-1-pmj-10.1177_02692163261416261 for Implementation of artificial intelligence in palliative and supportive care for people with cancer: A scoping review by Shino Kikuchi, Masatsugu Sakata, Takaaki Hasegawa, Sakiko Wada, Satoshi Funada, Mari Makishi and Tatsuo Akechi in Palliative Medicine

Supplemental material, sj-xlsx-2-pmj-10.1177_02692163261416261 for Implementation of artificial intelligence in palliative and supportive care for people with cancer: A scoping review by Shino Kikuchi, Masatsugu Sakata, Takaaki Hasegawa, Sakiko Wada, Satoshi Funada, Mari Makishi and Tatsuo Akechi in Palliative Medicine

Footnotes

Acknowledgements

We sincerely appreciate Ms. Izumi Endo of Keio University Shinanomachi Media Centre for her invaluable assistance with the literature search. No content in this manuscript originated from ChatGPT. We employed ChatGPT to refine language and adhere to scholarly journal standards.

Ethical considerations and consent to participate

As this was a scoping review of publicly available data, ethical approval and informed consent were not required.

Author contributions

S.K. and T.A. contributed to the conceptualisation and design of the study, with S.K. developing the review protocol. S.K. and M.S. performed the initial screening of studies, while S.K. and M.M. were responsible for literature acquisition. S.K., M.S., T.H., S.W., S.F. and M.M. performed the full-text screening and extracted the data. All authors reviewed the manuscript, revised it and approved the final version.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of conflicting interests

The authors declared the following potential conflicts of interest with respect to the research, authorship, and/or publication of this article: T.A. has received lecture fees from AstraZeneca, Chugai, Daiichi-Sankyo, Eisai, Eli Lilly, Kowa, Lundbeck, MSD, Meiji-Seika Pharma, Merck, Otsuka, Pfizer, Shionogi, Sumitomo Pharma, Takeda, Tsumura, UCB, and Viatris, a grant from Shionogi, and royalties from Igaku-Shoin, outside the submitted work. T.A. serves as the representative director of the General Incorporated Association NCU CRESS and receives compensation as an advisor to Snom Inc. T.A. has pending patents (2020-135195, 2024-516288, 2025-128748) (Institute) and a patent (7313617) (Institute). M.S. is employed in the Department of Neurodevelopmental Medicine, Nagoya City University Graduate School of Medical Sciences, which is an endowment department supported by the City of Nagoya, and has received a personal fee from SONY outside the submitted work. The other authors declare no conflicts of interest.

Data availability statement

The review was conducted using data that were already available in the public domain, with appropriate references provided throughout. The full search strategy used in the present review can be found in the supplementary material.

Supplemental material

Supplemental material for this article is available online.
