Abstract

Generative artificial intelligence (Gen.AI) is capable of significantly improving the breadth and depth of structured literature reviews (SLRs). However, its inclusion raises essential questions regarding the review’s methodology, quality, and ethical implications. Previous research predominantly focused on the capabilities and limitations of Gen.AI to establish guidelines for research practices. However, the rapid evolution of Gen.AI often outpaces the publication of methodological papers. In response, our study adopts a criteria-centric approach, scrutinizing the scientific quality standards that Gen.AI must meet. In other words, instead of discussing

Keywords

Introduction

Generative artificial intelligence (Gen.AI) has seen rapid developments, garnering the attention of practitioners and researchers alike. Most famously, the introduction of ChatGPT in 2022 surpassed many people’s imagination, introducing groundbreaking new functionalities. For instance, the general public could use natural language to interact with large amounts of data, like “chatting with PDF files” (Hadi et al., 2023). These functionalities have proved similarly influential in research. Many scholars have started using Gen.AI tools for various parts of their knowledge creation processes, like identifying relevant literature (Dann et al., 2017), creating manuscript abstracts (Else, 2023), or even developing the entire manuscript (Dwivedi et al., 2023). This has resulted in published papers officially co-authored by Gen.AI tools (Stokel-Walker, 2023), prompting Scientific American to attest that Gen.AI has already “thoroughly infiltrated scientific publishing” (Stokel-Walker, 2024).

However, through these unique capabilities, Gen.AI has ambivalent effects on the scientific landscape. On the one hand, it has fueled the trend of rising research publications. Even before Gen.AI, researchers struggled with the exponential growth of scientific research, which increased the risk of missing valuable contributions in their field (Cropanzano, 2009). For instance, while approximately 650,000 academic papers (peer-reviewed papers in journals and conferences across all disciplines) were published globally in 1980, this number had surged to about 3 million by 2022 (Science.org, 2023). Although no current statistics on post-Gen.AI publishing exist, experts and scholars alike expect paper submission numbers to increase exponentially because of Gen.AI usage in manuscript development (Stokel-Walker, 2023).

On the other hand, Gen.AI can support scholars in managing these large data volumes (Antons et al., 2023; Mortenson and Vidgen, 2016; Rathje et al., 2024). For instance, Gen.AI tools can assist in streamlining the identification of relevant papers, ensuring comprehensive coverage of pertinent literature (Dann et al., 2017). Furthermore, Gen.AI excels in summarizing extensive scholarly articles (Glickman and Zhang, 2024) and has been employed in automating data extraction and analysis (Rathje et al., 2024). This drastically increases the efficiency and accuracy of synthesizing and analyzing large volumes of research data, thereby enhancing the overall quality of systematic reviews (Drori and Te’eni, 2024).

In business research, both the negative and positive effects of Gen.AI are particularly pronounced for structured literature reviews (SLRs) (Tingelhoff et al., 2024). First, the negative effects of Gen.AI are especially severe for SLRs, as they are meant to consolidate past research to generate new insights, methodologies, or theories (Webster and Watson, 2002). As Gen.AI amplifies the volume of “past research,” it also significantly increases the workload and complexity for authors. Simultaneously though, SLRs are particularly apt for Gen.AI augmentation. Algorithm-based Gen.AI tools favor clear context information (like processes, task descriptions, or output formats), which perfectly maps to the structured, transparent, and reproducible nature of SLRs (Tranfield et al., 2003).

Past research on Gen.AI-assisted SLRs mainly falls into one of two categories: investigating Gen.AI’s potential by analyzing its capabilities (e.g., Schryen et al., 2024; Wagner et al., 2022); or scrutinizing how Gen.AI’s capabilities might undermine research integrity. Given the critical importance of transparency and reproducibility in the SLR process, the black-box nature of Gen.AI tools raises significant concerns (Dwivedi et al., 2023). Scholars argue that relying on Gen.AI outputs may lead to authors losing control over their SLRs, given the technologies’ issues with plagiarism, biased information, or false claims (Alavi et al., 2024; Ngwenyama and Rowe, 2024). Combining both literature streams, past research can be summarized as a discussion on the influence of Gen.AI’s capabilities on SLR standards (Jarvenpaa and Klein, 2024).

However, the speed at which Gen.AI is evolving currently outpaces publication cycles (Nguyen et al., 2022). This means the bases for Gen.AI discussions are often outdated with the paper’s publication. Consequently, we propose to flip the discussion to how research criteria shape the use of Gen.AI capabilities. In other words, instead of discussing

To identify and analyze the established processes and guidelines for conducting state-of-the-art SLRs.

To assess how the established SLR process and guidelines influence the use of Gen.AI tools and to formulate optimal strategies for its integration.

We strive to contribute to the academic discourse in two distinct yet interconnected ways. First, our analysis of the established state-of-the-art processes and associated quality standards in SLRs culminates in the synthesis of a unified process and criterion set. This synthesis not only underpins a comprehensive understanding of the extant SLR methodologies but also serves as the foundational framework for integrating Gen.AI. The relevance of this integration extends beyond those researchers actively employing Gen.AI in their SLRs; it offers an insightful summary of established research methodologies and normative guidelines, benefiting the wider scholarly community. Second, we delineate the specific scenarios conducive to incorporating Gen.AI into this fundamental framework, as well as situations where its integration may not be suitable (Ngwenyama and Rowe, 2024). Our contribution is further solidified by providing a detailed, step-by-step guide—akin to a “cooking recipe”—to effectively integrate Gen.AI in SLRs, ensuring adherence to established quality criteria.

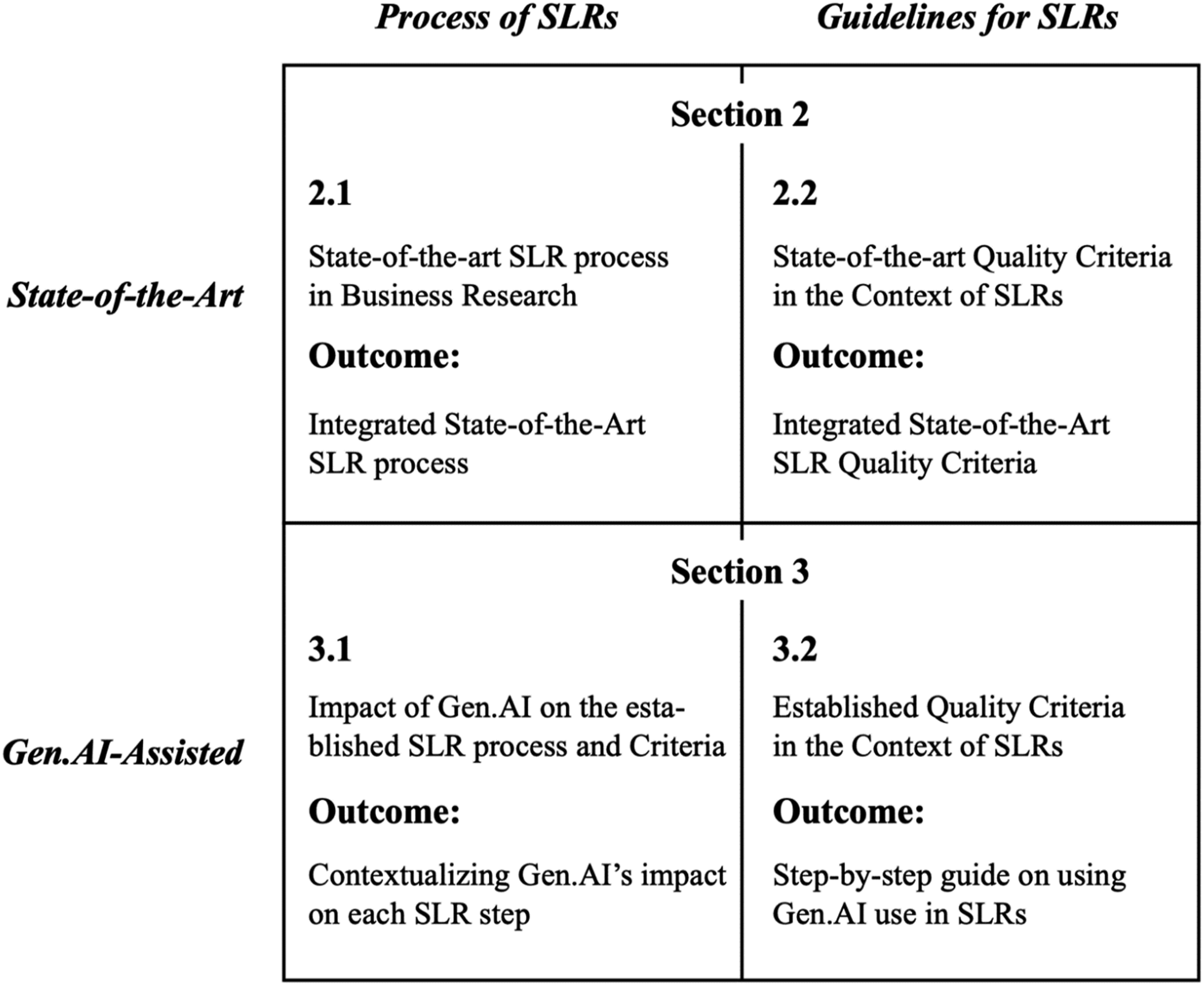

Using a two-by-two matrix, Figure 1 lays out the structure of this paper.

Figure 1. Structure of this paper.

The state-of-the-art of structured literature reviews

Traditionally, SLRs are a scientific tool consolidating past research to generate new insights, methodologies, or theories, thereby serving as a general cornerstone of research (Webster and Watson, 2002). SLRs play a critical role in collating and synthesizing existing knowledge, developing new research trajectories, and contributing to the dynamic evolution of various fields (Webster and Watson, 2002). They not only frame research agendas and promote methodological transparency (Wagner et al., 2022) but also play a crucial role in acknowledging and understanding the cultural nuances within research phenomena (Kummer et al., 2012). Given their substantial influence and significance, SLRs are subject to rigorous academic scrutiny. Scholars have extensively debated the proper execution of SLRs (e.g., vom Brocke et al., 2015), their adherence to quality criteria (e.g., Tranfield et al., 2003), and the effective presentation of findings (e.g., Webster and Watson, 2002). In this section, we first discuss the state-of-the-art SLR process and then the quality guidelines that govern how an SLR should be conducted.

The state-of-the-art process of structured literature reviews

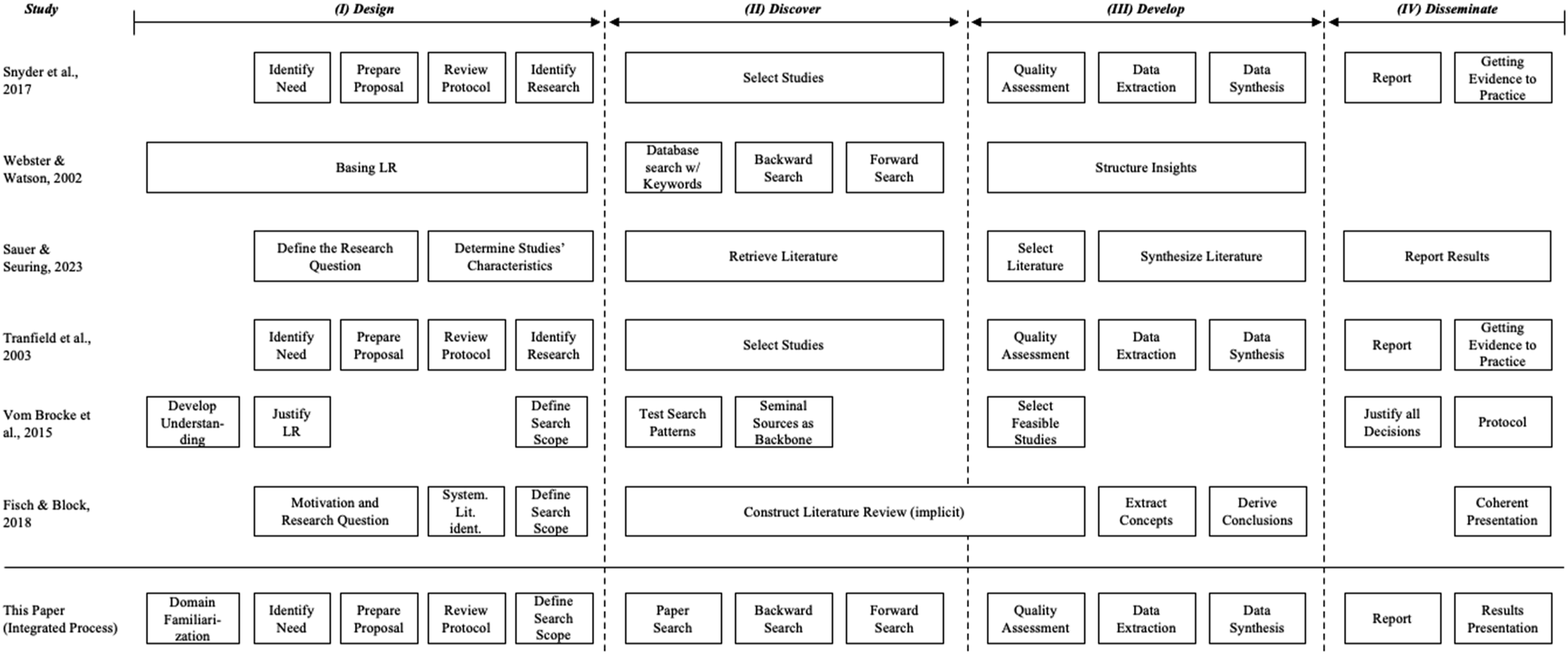

To conceptualize when and how to use Gen.AI in SLRs, it is imperative that we first understand the established process of conducting SLRs. We identified several influential method papers by examining published SLRs in business-related FT-50 journals and engaging with senior researchers across several countries and continents. We present and contrast these papers and their proposed processes in Figure 2. Noticing the commonalities in the processes described in these studies (e.g., see Snyder, 2019), we categorized their tasks into four phases: (1) designing the review; (2) discovering relevant research; (3) developing the outcome of the SLR; and (4) disseminating knowledge. We discuss each phase and associated steps in detail below.

Figure 2. Consolidation of literature review processes of established method papers.

Design

The initial phase of an SLR encompasses its foundational preparation. This stage involves a thorough domain familiarization to establish a solid base for the subsequent phases (vom Brocke et al., 2015). Typically, renowned scholars conduct SLRs, leveraging their extensive domain knowledge to bolster the validity of their literature selection and interpretation (Webster and Watson, 2002). Emerging scholars, especially those engaged in PhD research, may need to invest more initial effort to achieve domain knowledge similar to that of senior researchers (Ngwenyama and Rowe, 2024; vom Brocke et al., 2015).

Next, authors must identify the need for their SLR (Sauer and Seuring, 2023). An SLR becomes necessary, for example, when there is a need to consolidate existing knowledge on a topic (Webster and Watson, 2002), to stay abreast of developments and theoretical shifts (Levy and Ellis, 2006), or when exploring interdisciplinary connections (Okoli and Schabram, 2015).

Once the authors have developed a profound understanding of their research field and formulated the need for their SLR, they can begin its preparation. Preparing an SLR requires a systematic and clearly defined strategy (Fisch and Block, 2018). Essential steps include defining the review’s scope and objectives (Webster and Watson, 2002), choosing the right balance between breadth and depth (Fisch and Block, 2018), and documenting the process for its reproducibility in a review protocol (Okoli, 2015). A review protocol is “a plan prior to the review [that] states the criterion for including and excluding studies, the search strategy, description of the methods to be used, coding strategies and the statistical procedures to be employed” (Tranfield et al., 2003: 213).
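To make the protocol elements named in the quotation above more tangible, the following minimal Python sketch records them in a single structure and reports which elements are still undocumented. The class name `ReviewProtocol`, its field names, and the example values are illustrative assumptions; the cited method papers do not prescribe any particular format.

```python
from dataclasses import dataclass, field, asdict
import json


@dataclass
class ReviewProtocol:
    """Hypothetical container for the protocol elements listed in the quotation."""
    research_question: str
    inclusion_criteria: list = field(default_factory=list)
    exclusion_criteria: list = field(default_factory=list)
    search_strategy: str = ""
    methods: str = ""
    coding_strategy: str = ""
    statistical_procedures: str = ""

    def missing_elements(self):
        """Return the names of protocol elements that are still empty."""
        return [name for name, value in asdict(self).items() if not value]

    def to_json(self):
        """Serialize the protocol so it can be archived alongside the review."""
        return json.dumps(asdict(self), indent=2)


# Example values are placeholders, not taken from a real review.
protocol = ReviewProtocol(
    research_question="How do quality criteria shape Gen.AI use in SLRs?",
    inclusion_criteria=["the paper is written in English"],
    search_strategy="keyword search, then backward and forward search",
)
print(protocol.missing_elements())
```

Keeping the protocol in a plain, serializable structure is one possible way to document decisions before the search starts; a text document or spreadsheet serves the same purpose.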

Lastly, authors need to determine the scope of their SLR (Fisch and Block, 2018; vom Brocke et al., 2015). Usually, this refers to formulating a set of keywords and phrases that closely align with the research topic, ensuring they encompass both broad and specific aspects of the topic (Fink, 2019). Boolean operators (AND, OR, NOT) and pattern-matching operators or wildcards (LIKE, %, *) can refine the search (Rowley and Slack, 2004). Including synonyms and related terms can broaden the scope, as Levy and Ellis (2006) recommended. Furthermore, researchers must identify criteria for the inclusion and exclusion of literature to be reviewed (Sauer and Seuring, 2023), which can concern paper characteristics (e.g., “the paper is written in English” or “the full-text is open access”) or contents (e.g., “the paper uses one theory” or “the paper assumes one perspective”).
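As an illustration of how keywords, synonyms, and Boolean operators combine, the following Python sketch assembles a search string from manually chosen concept groups. The function names and the example terms are assumptions made for this sketch, and the exact operator and wildcard syntax differs between databases, so the output would need to be adapted to the database at hand.

```python
def quote(term):
    """Wrap multi-word phrases in double quotes so they are searched as a unit."""
    return f'"{term}"' if " " in term else term


def build_search_string(concept_groups, excluded=None):
    """Join synonyms with OR, concept groups with AND, and exclusions with NOT."""
    blocks = ["(" + " OR ".join(quote(t) for t in group) + ")" for group in concept_groups]
    query = " AND ".join(blocks)
    for term in excluded or []:
        query += f" NOT {quote(term)}"
    return query


# Hypothetical concept groups: each inner list holds synonyms for one concept.
query = build_search_string(
    [["generative AI", "LLM*"], ["literature review", "systematic review"]],
    excluded=["editorial"],
)
print(query)
# ("generative AI" OR LLM*) AND ("literature review" OR "systematic review") NOT editorial
```

The sketch only formats terms that the authors have already selected; it does not suggest keywords.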

Discover

Post-design, the discovery phase involves executing the search strategy to gather potential literature. First, researchers should conduct keyword searches within the identified databases. If unexpected or unintended results are obtained, authors can iteratively refine their decisions from the design phase (Snyder, 2019). This approach aids in retrieving a comprehensive set of articles that constitute the SLR’s foundation. A subsequent backward search allows researchers to review the references of the already identified articles to find additional sources. This method is critical for uncovering foundational works that might not surface in keyword-based searches (Webster and Watson, 2002). By examining the citations in key articles, researchers can trace the intellectual lineage of a topic, ensuring a deep and historical understanding of the research area (Okoli and Schabram, 2015). A forward search, conversely, involves looking at studies that have cited the already identified key articles. Resources such as Google Scholar and Scopus provide citation-tracking capabilities, which are essential for this task (Cronin et al., 1998). This method allows researchers to understand a topic’s evolution and current state as newer studies build upon or challenge the findings of earlier works. Forward searching is especially beneficial in rapidly evolving fields, where keeping up with the latest developments is critical (Bawden and Robinson, 2015). Together, the keyword, backward, and forward searches ensure a thorough and up-to-date SLR, capturing both the field’s historical roots and contemporary advancements.
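The relationship between the backward and forward searches described above can be sketched on a small citation map. The paper identifiers and the `references` mapping are invented for illustration; in practice, this information would come from the reference lists of the articles and from citation-tracking services.

```python
def backward_search(seed, references):
    """Return papers that appear in the reference lists of the seed papers."""
    found = set()
    for paper in seed:
        found.update(references.get(paper, []))
    return found - set(seed)


def forward_search(seed, references):
    """Return papers whose reference lists cite at least one seed paper."""
    seed_set = set(seed)
    return {p for p, refs in references.items() if seed_set & set(refs)} - seed_set


# Hypothetical citation data: each paper maps to the papers it cites.
references = {
    "P2015": ["P2002", "P2003"],
    "P2019": ["P2015", "P2002"],
    "P2023": ["P2019"],
}
seed = ["P2015"]  # result of the keyword search

older = backward_search(seed, references)
newer = forward_search(seed, references)
candidates = set(seed) | older | newer
print(sorted(older), sorted(newer), sorted(candidates))
```

Running both searches again on the enlarged candidate set would extend the pool further; when to stop is a judgment the authors document in their review protocol.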

Develop

In the development phase, after a comprehensive list of potential sources has been compiled, researchers rigorously evaluate each paper for its quality, relevance, and contribution to the research question to finalize the selection (Sauer and Seuring, 2023). Initially, researchers need to evaluate the quality of each paper, focusing on the publication source’s credibility, the research methodology employed, and the findings’ impact (Levy and Ellis, 2006; Snyder, 2019; Webster and Watson, 2002). This assessment helps to exclude studies that do not meet the scholarly standards necessary for a robust SLR. Moreover, researchers should eliminate studies that are inaccessible to the research community to ensure a transparent and replicable process (vom Brocke et al., 2015).
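A screening step of this kind can be sketched as a set of labeled checks applied to every candidate paper, with the failed checks recorded as the reason for exclusion. The `Paper` fields, the three criteria, and the sample records below are hypothetical; in particular, using peer review as a quality proxy is an assumption of this sketch rather than a rule taken from the cited method papers.

```python
from dataclasses import dataclass


@dataclass
class Paper:
    title: str
    language: str
    accessible: bool
    peer_reviewed: bool


# Each criterion pairs a label with a check; all of them are illustrative.
INCLUSION_CRITERIA = [
    ("written in English", lambda p: p.language == "en"),
    ("full text accessible", lambda p: p.accessible),
    ("peer reviewed", lambda p: p.peer_reviewed),
]


def screen(papers):
    """Split papers into included titles and excluded titles with failed criteria."""
    included, excluded = [], {}
    for paper in papers:
        failed = [label for label, check in INCLUSION_CRITERIA if not check(paper)]
        if failed:
            excluded[paper.title] = failed
        else:
            included.append(paper.title)
    return included, excluded


papers = [
    Paper("A", "en", True, True),
    Paper("B", "de", True, True),
    Paper("C", "en", False, False),
]
included, excluded = screen(papers)
print(included)
print(excluded)
```

Recording why each paper was excluded supports the transparent reporting of selection decisions.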

Data extraction and synthesis then involve categorizing key elements such as research questions, methodologies, findings, and theoretical frameworks (Rowley and Slack, 2004). Researchers should thoroughly synthesize and organize the data to uncover relevant themes and concepts (Fisch and Block, 2018), identifying patterns and gaps, and constructing a comprehensive narrative encapsulating the breadth and depth of the research field (Fink, 2019; Okoli and Schabram, 2015; Torraco, 2005). It is vital to ensure that the synthesis not only summarizes the findings of individual studies but also draws connections between them to offer a broader understanding of the field (Cronin et al., 1998).

Disseminate

The dissemination phase centers on presenting the SLR findings and associated decisions transparently and coherently. This includes justifying source selection, criteria for inclusion and exclusion, and the employed methodologies for analysis and synthesis of literature data (Levy and Ellis, 2006; Webster and Watson, 2002). Such transparency is crucial in establishing the review’s credibility and the possibility of replication, as Okoli and Schabram (2015) emphasized. In presenting results, effective techniques like visual aids and engaging narratives are vital for conveying complex information and facilitating comprehension (Snyder, 2019; Webster and Watson, 2002). Employing a concise and engaging writing style renders the SLR accessible and valuable to a broader audience, including practitioners. Finally, authors must decide on the appropriate publication outlet and embed their SLR theoretically in prior research (Sauer and Seuring, 2023).

The state-of-the-art guidelines for structured literature reviews

As with any research paradigm, SLRs must comply with quality criteria and guidelines established by ethics committees, scientific associations, and journals. Given their crucial role in research, these SLR criteria must be carefully considered and contextualized, as detailed in established method publications (Tranfield et al., 2003; Webster and Watson, 2002). Irrespective of whether researchers decide to integrate Gen.AI or do the entire SLR manually, these criteria are the foundation of rigorous and integrity-driven SLRs (Paré et al., 2023). Consequently, they are the constant against which new methods and tools must be evaluated. Thus, to comprehend how established guidelines influence the application (or non-application) of Gen.AI in SLRs, it is essential to first grasp the underlying criteria (Gregor, 2024).

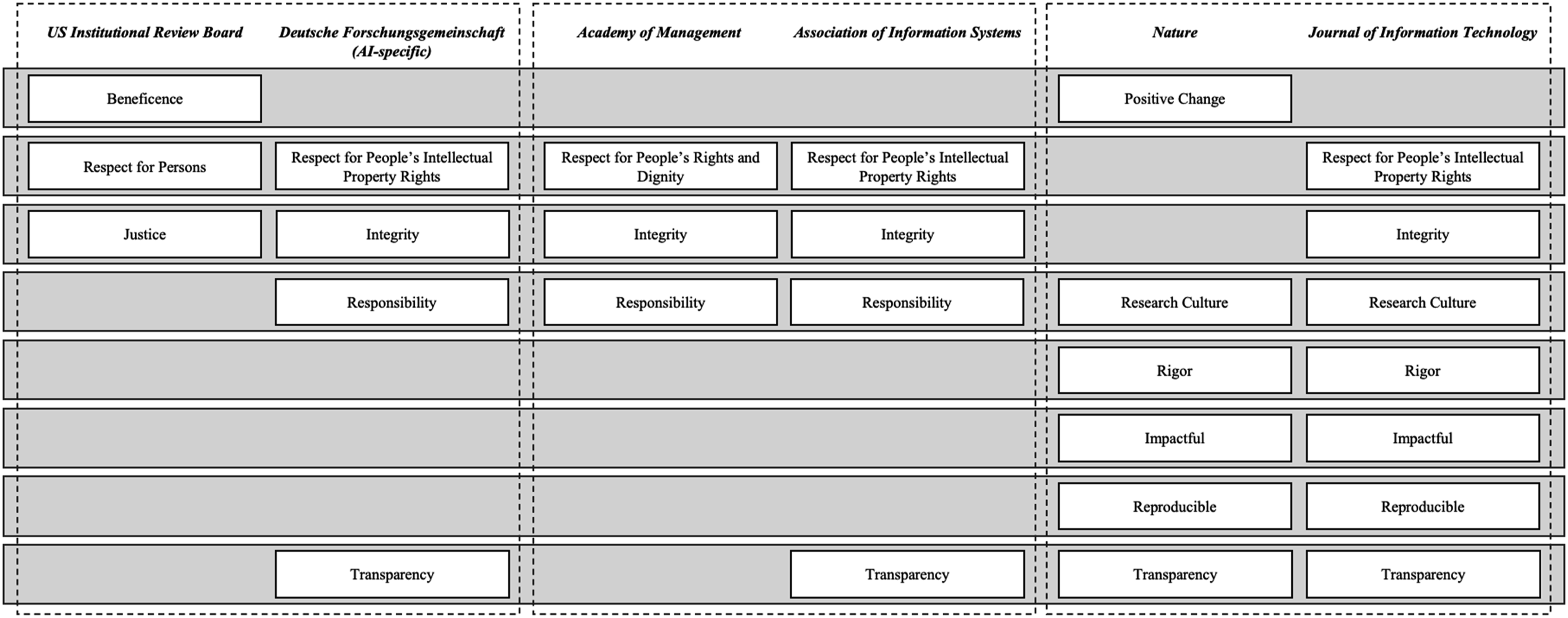

In this study, we specifically incorporated the quality criteria from the US Institutional Review Board and the Deutsche Forschungsgemeinschaft (DFG) (as examples of national ethics committees), the Academy of Management and the Association for Information Systems’ Code of Ethics (as research communities), and Nature and the Journal of Information Technology’s Value Statements (as exemplary journals), as these already incorporate guidelines about the responsible use of Gen.AI for their authors and reviewers (Gregor, 2024). Through our analysis, we found eight quality criteria, emphasizing high ethical standards and scientific integrity as foundational requirements for research: beneficence, respect for persons, integrity, responsibility, rigor, impact, reproducibility, and transparency. We provide an overview of these criteria in Figure 3. While our consolidated figure refers specifically to the guidelines of the six aforementioned organizations, our extended analysis of over 30 institutions and journals showed that the consolidated criteria align closely with, and can be considered representative of, the full set. In the following, we explain each criterion as interpreted by these bodies, contextualized by its relevance to SLRs as discussed in scholarly research.

Figure 3. Quality criteria for academic rigor and integrity.

Beneficence

In the context of SLRs, researchers must be acutely aware of the broader impact their synthesis of existing research has on societal welfare. This requires a meticulous selection and evaluation of studies that collectively offer insights beneficial to society (Macfarlane, 2010). Researchers should aim to highlight the positive societal outcomes identified in the literature, ensuring their review underscores contributions that advance societal good. For instance, if an SLR were to review the dark sides of technological change, it should highlight mitigation strategies to positively impact how society deals with the presented problems. Additionally, it is essential to address any ethical issues reported in the studies reviewed, providing a balanced perspective that considers both benefits and potential harms (Walsham, 2012). By doing so, SLRs can guide future research and policy-making in directions that foster societal well-being and mitigate harm.

Respect for persons

In research in general, the ethical treatment of data and research subjects is crucial (Mertens and Ginsberg, 2009). Because SLRs involve analyzing publicly available data rather than collecting new data, this criterion is less central to the review process. Still, when analyzing articles, authors should investigate how primary researchers obtained informed consent from their research subjects and ensured their privacy. By thoroughly examining and reporting on these ethical practices, SLR authors can highlight the ethical rigor of the studies they review, thus maintaining public trust and upholding the ethical standards of the research community. Addressing these considerations demonstrates a commitment to ethical scholarship and reinforces the importance of ethical research practices across all stages of research.

Integrity

Research integrity is paramount in SLRs, requiring researchers to be truthful in every aspect of the review process. This principle mandates the accurate representation of the papers included in the review, strictly avoiding any form of fabrication, falsification, or misrepresentation (Resnik, 2005). For instance, suppose an experienced author is conducting an SLR in a field where they have already published many articles. Giving one’s own articles more exposure in the review undermines the fair and unbiased representation of the sample, hence violating integrity. In SLRs, maintaining honesty is critical for several reasons. First, it ensures that the conclusions drawn from the review are based on objective and verifiable data, which is essential for the reliability and trustworthiness of the review. Second, accurate reporting and unbiased interpretation of the findings from the studies included in the review contribute meaningfully and authentically to the cumulative body of scientific knowledge (Comstock, 2012; Steneck, 2003). Adhering to integrity allows SLRs to provide a reliable synthesis of existing research, guiding future research directions and informing policy and practice based on solid and dependable evidence (Snyder, 2019).

Responsibility

Researchers must adhere to ethical standards and legal regulations, accepting the consequences of their decisions throughout the review process (Shamoo and Resnik, 2009). Responsibility in SLRs includes meticulously citing sources, acknowledging the work of other researchers, and attributing work fairly. For instance, authors are responsible for reading papers in their sample entirely and not just skimming over them to ensure they are not attributing wrongful information to another author (Anderson et al., 2010). Only by responsibly handling the diverse studies included in an SLR and analyzing them faithfully can researchers establish the trust and appreciation necessary for advancing a community’s knowledge (Resnik et al., 2015). However, the notion of attributing work “fairly” also entails subjective interpretation; individual researchers may understand and convey the same work differently based on their perspectives and analytical frameworks. Therefore, responsibility in SLRs demands both an accurate portrayal of existing literature and an awareness of the interpretive nuances that come with assessing and citing others’ work.

Rigor

An SLR demands a rigorous approach to systematically identify, select, and analyze published work. This rigor involves implementing a clear, transparent methodology and encompassing specific search strategies, selection criteria, and analytical methods (Snyder, 2019; Webster and Watson, 2002). Without assessing the quality of the included studies, the review’s conclusions might be based on unreliable or biased data, compromising the methodological soundness of the review. For instance, authors might use journal rankings or scientific indices as a proxy for publishing quality (Hahn, 2024). Rigor is crucial in SLRs for several reasons. Firstly, it ensures comprehensive coverage of relevant literature, which minimizes bias and enhances the review’s validity (Okoli and Schabram, 2015). Secondly, a rigorous review process allows other researchers to verify and build upon the findings, which is essential for advancing scholarly research (Okoli and Schabram, 2015; Sauer and Seuring, 2023). This level of thoroughness is particularly important in dynamic and interdisciplinary fields, where evolving methodologies and paradigms require continuous reassessment and validation of findings. By maintaining rigor, SLRs contribute reliable, high-quality insights that can guide future research and inform practice, ensuring that the synthesized knowledge is both credible and valuable.

Impact

In SLRs, impact refers to the review’s capacity to significantly influence future research, practice, policy, or theory (Fisch and Block, 2018). An impactful review tackles essential issues, presents new perspectives, or offers thorough frameworks that other researchers and practitioners in the field can utilize (Sauer and Seuring, 2023). It can result in developing new theories, enhancing existing systems, and advancing theoretical knowledge (Bawden and Robinson, 2015; Cronin et al., 1998). Additionally, an impactful SLR affects policy and decision-making in organizations, underscoring its importance beyond academia (Levy and Ellis, 2006; Webster and Watson, 2002). For example, authors who set the scope of their review too narrowly might limit the generalizability of their findings and, thus, the usefulness of their review, undermining its relevance and publishability.

Reproducibility

In SLRs, reproducibility means other researchers can replicate the review process and ideally reach similar conclusions using the same methods and data (Snyder, 2019). By adhering to reproducible methods, an SLR provides a transparent and systematic approach that other researchers can replicate to verify results, eliminating subjective bias and enhancing the robustness of the conclusions (Levy and Ellis, 2006; Webster and Watson, 2002). Scholars have long been advocating for systematicity and rigor in synthesizing existing knowledge, highlighting the necessity for researchers to adhere to established guidelines and best practices when conducting literature reviews (Cram et al., 2020).

However, a reproducible SLR does not require authors to justify every paper in their sample. For instance, vom Brocke et al. (2015: 216) emphasize that “there is no reason to exclude a relevant publication from a SLR if the researcher came across it by means other than the keyword search, even by chance.” This means that an SLR inherently involves some level of non-reproducibility. Still, it matters where reproducibility is situated in the SLR process. While the paper search might allow more freedom, researchers with the same paper sample and analysis framework should ideally reach the same conclusions. This is important, as reproducibility also allows for continual updates and advancements of SLRs: future researchers can build on the established methodology to incorporate new studies and insights, ensuring the SLR remains relevant and comprehensive over time (Fink, 2019; Okoli and Schabram, 2015).

Transparency

Transparency in SLRs means clearly and explicitly documenting the review process—from the initial formulation of research questions to the selection and evaluation of sources and data analysis and synthesis methods. Transparency provides a roadmap of the researcher’s intellectual journey, allowing readers to understand and evaluate the basis of the review’s conclusions (Snyder, 2019). Given research’s fast-paced and complex nature, clearly articulating the research scope, boundaries, and methodology is essential for the review’s relevance and rigor (Levy and Ellis, 2006; Webster and Watson, 2002). Transparency also helps establish the review’s and the researcher’s credibility, building trust in the findings, which is essential for advancing knowledge (Okoli and Schabram, 2015; Rowley and Slack, 2004). Furthermore, transparently reporting SLR processes helps future researchers grasp the context and limits of past reviews, contributing to the field’s cumulative development (Cronin et al., 1998; Torraco, 2005).

A criteria-centric perspective on Gen.AI-assisted literature reviews

In this study, we have comprehensively analyzed SLR processes and quality criteria, culminating in an integrated state-of-the-art SLR process and criteria set. Enhancing the understanding of current SLR methodologies is foundational to conducting impactful review articles (Paré et al., 2023) and establishing a baseline for integrating Gen.AI.

Gen.AI is an evolutionary step of machine learning (ML) and deep learning (DL) algorithms and has significantly transformed the landscape of academic support technologies. Initially, tools like Grammarly provided foundational aid, primarily focusing on grammar and spelling corrections to enhance the clarity and correctness of academic writing. To this day, these tools are crucial for researchers, especially non-native English speakers, to improve the clarity and understandability of their scholarly communication (Araújo et al., 2020; Mettler and Sunyaev, 2023). As computational and algorithmic capabilities advanced, the scope of these tools expanded beyond error correction. AI now offers new, unique functionalities for analyzing, interpreting, and increasingly generating natural language, as well as for improving argumentative structures (Wambsganss et al., 2024). The latest stage of these developments, culminating in more sophisticated models, is referred to as Generative AI. This is possible as the underlying algorithms learn from past prompts and data, thereby refining their output to progressively become more efficient and effective (Chowdhary, 2020; Gatt and Krahmer, 2018). Particularly through the development of neural network-based models, Gen.AI tools can analyze large blocks of text, learn from extensive databases of scholarly literature, and provide contextually and academically appropriate recommendations throughout the entire research process. For instance, as early as 2017, research by Dann et al. showed that Gen.AI could outperform humans in identifying potentially relevant literature through innovative DL algorithms. Ultimately, Gen.AI is especially suitable for tasks requiring deep comprehension of language and context (Brown et al., 2020; Zhong et al., 2024), significantly extending the range of tasks that AI tools can effectively undertake (Benbya et al., 2024; Wagner et al., 2022). Hence, Gen.AI is a major advancement in computational assistance in the context of SLRs.

While the adoption of Gen.AI-assisted SLRs has grown, as evidenced by publications highlighting the potential of integrating Gen.AI into this process (Noroozi et al., 2023; Schryen et al., 2020), it is imperative for researchers to recognize that the effective use of these tools necessitates a high level of skill and domain expertise. Researchers must be capable of independently performing the tasks they delegate to Gen.AI to critically evaluate and adapt the outputs generated. If a researcher lacks the necessary skills to carry out a task manually, it becomes challenging to assess the accuracy, relevance, and reliability of the Gen.AI output.

Criteria-based implementation of Gen.AI into systematic literature reviews

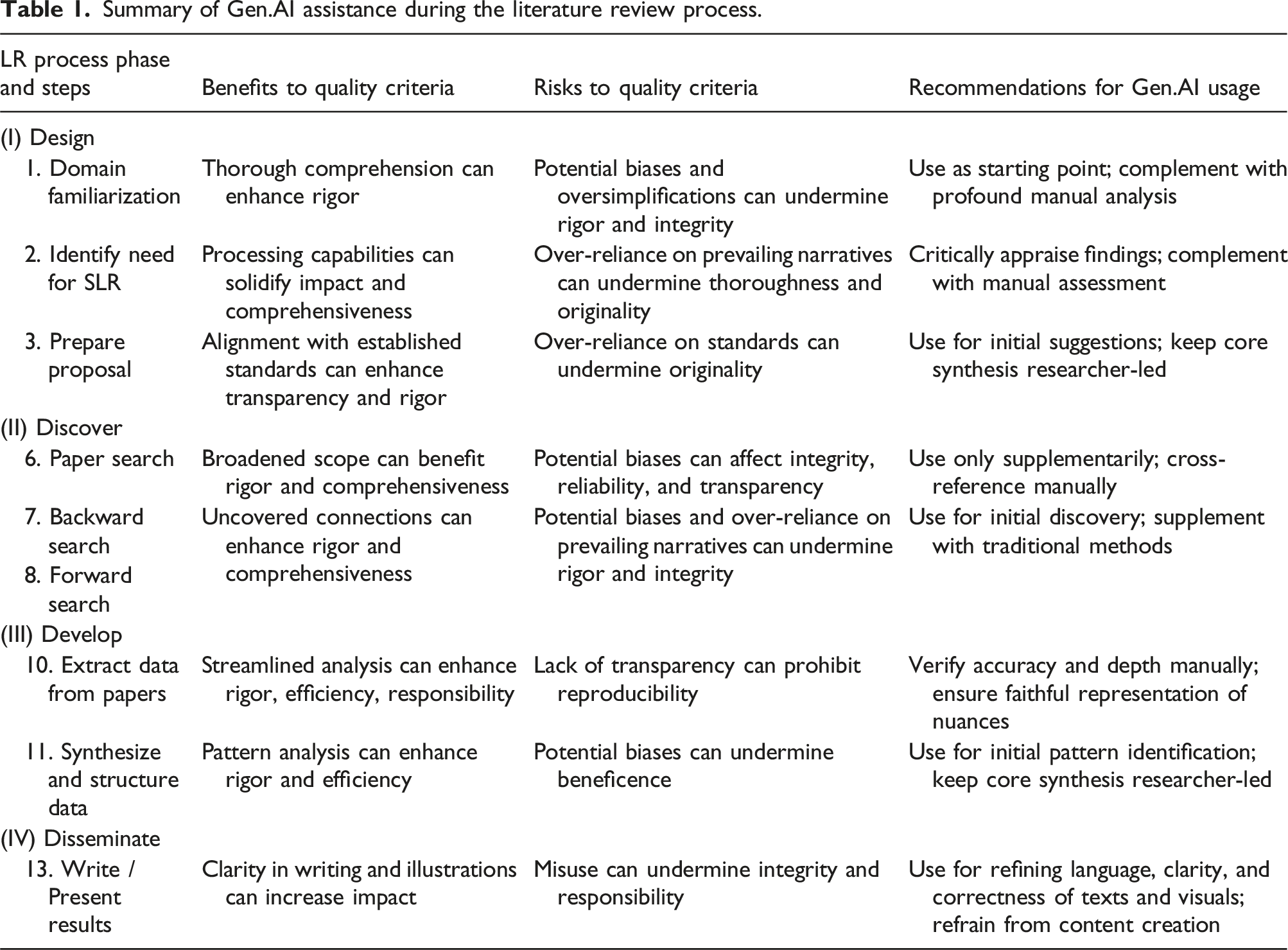

Summary of Gen.AI assistance during the literature review process.

Design

Domain familiarization

Developing a thorough understanding of the relevant literature can be supported through Gen.AI tools and services trained on extensive academic literature (Dann et al., 2017; Ngwenyama and Rowe, 2024). Through their summarization and clustering capabilities, tools like Scite Assistant or Consensus can generate high-level, tabular overviews tailored to specific research questions, allowing researchers to quickly scan vast amounts of literature (Alshami et al., 2023; Benbya et al., 2024; Lim et al., 2023). However, while these tools might offer fast answers and seemingly comprehensive high-level overviews, researchers should be mindful of their inherent lack of transparency and explainability (Ngwenyama and Rowe, 2024). When using Gen.AI tools in their search process, researchers should note that these tools primarily rely on Open-Access publications and include content only up to a certain date (Lund et al., 2023). This limitation is particularly significant in fast-evolving fields like IS, and Gen.AI research in particular; it may lead to situations where authors fail to include the newest findings if they solely rely on such tools for their search. Consequently, using these tools should be followed by an extensive manual examination of the literature, ensuring one’s understanding of its subtle and complex aspects to maintain the integrity and depth of the SLR (Lund et al., 2023; Ngwenyama and Rowe, 2024).

Identify need for SLR

Building on a comprehensive understanding of existing literature, recognizing unexplored areas and emerging trends is crucial for determining the SLR’s beneficence and potential impact. Although the author should decide on the specific research focus, Gen.AI can substantially support researchers. For instance, specialized tools like Powerdrill augment general models such as GPT-4, enabling the quick inclusion and synthesis of domain-specific literature (Dann et al., 2017). These tools also critically assess the relevance of identified research gaps by incorporating lesser-known or interdisciplinary studies and serve as a feedback mechanism to direct researchers toward meaningful areas of inquiry (Dwivedi et al., 2023). However, over-relying on Gen.AI, which primarily depends on existing literature, might lead to the perpetuation of established narratives, especially in well-researched fields (Schryen et al., 2024). This highlights the necessity for researchers to critically evaluate Gen.AI outputs to avoid redundant insights and ensure the originality and innovation of their work. By employing Gen.AI, researchers can increase their efficiency in working with large amounts of literature. However, assessing whether an SLR is needed should ultimately always be done by the author. This approach ensures that the research remains impactful, beneficent, and adherent to academic standards, thus contributing significantly to the field.

Prepare proposal

When crafting research proposals, authors must align their SLRs with existing research frameworks, ensuring systematic, rigorous, and original work. Gen.AI integration can significantly enhance the structuring and conceptualization of the review. These tools are adept at aligning the review’s framework with current quality standards, templates and recognized structures, thereby assisting in defining the scope and objectives of research endeavors. For example, researchers can use tools like ChatGPT-1o to generate tables or frameworks in text-based formats such as LaTeX or Mermaid. Historically, these more technical formats required specialized training. Today, Gen.AI tools allow authors to generate and adapt them using only natural language, reducing barriers and simplifying the creation of structured frameworks for their proposals. Furthermore, authors can use Gen.AI tools to rephrase or translate their notes into a first draft for their proposal, saving time and making it easier to share with colleagues.

Prepare a review protocol

Given its instrumental role in the academic discourse (Paré et al., 2023), we advise against using Gen.AI tools in preparing a review protocol. As the process requires high precision, individual judgment, and methodological rigor, authors cannot ensure that Gen.AI judges and weighs the characteristics to be reported fairly, precisely, and without bias (Dowling and Lucey, 2023). Keeping detailed records of each step increases accuracy and facilitates critical reflection on the review process. This manual approach aligns with the quality criteria of transparency, replicability, and methodological rigor, which are fundamental to the integrity of academic research.

Define search scope

Defining the search scope involves manually selecting keywords and databases based on a deep understanding of the field and its current trends, depending strongly on the author’s diligence, ethical responsibility, and transparency to ensure the reliability and trustworthiness of the SLR findings. It is a critical task in the SLR process, as it lays the foundation for the entire review. We recommend manually selecting keywords and databases instead of relying on Gen.AI assistance, as such tools at this stage might introduce incomplete literature representations (Alshater, 2022) or subtle biases, such as the anchoring effect—where initial suggestions unduly influence subsequent decisions (Tversky and Kahneman, 1974). A manual approach ensures control and transparency, upholding the quality criteria of integrity, responsibility, and rigor in SLR findings.

Discover

Paper search

Transparency and reproducibility are essential in the SLR process, especially during the paper search step. As such, structured approaches like Boolean search queries are crucial for refining results and maintaining systematic and transparent literature searches. This ensures that all researchers can access the same data, use the same search strategies, and achieve similar results, thereby upholding the integrity of the review process.

At their core, most Gen.AI tools can be described as a natural language interface to a black-box model. As such, they neither can nor should replace academic databases as the primary resource for building the review sample. However, Gen.AI tools can improve search efficiency by assisting in the initial creation and adjustment of keyword search queries, including tailoring these queries to the syntax requirements of different databases (Wang et al., 2023). This is particularly useful since different academic databases often have slightly varying search query syntaxes and specific requirements for formatting Boolean operators, wildcards, and other search parameters, making the adaptation of the search queries both tedious and time-consuming. Furthermore, Gen.AI can assist researchers in identifying synonyms, related terms, and variations in language usage that may affect search results (Badami et al., 2023). By suggesting alternative terms and refining search queries, these tools help overcome language barriers, terminology inconsistencies, and vocabulary variations, enabling more thorough and precise literature searches (Badami et al., 2023; Min et al., 2023).
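The query adaptation described above can be sketched in a few lines of code. The following is a minimal illustration, not a definitive implementation: it combines synonym groups into one Boolean query and wraps it in a database-specific field tag. The function names and the `FIELD_TEMPLATES` table are hypothetical, and the field tags shown are assumptions that should be checked against each database's current search documentation.

```python
from typing import Dict, List

# Assumed field-tag templates (illustrative only); verify each one against
# the database's own search help, since syntax differs and changes over time.
FIELD_TEMPLATES: Dict[str, str] = {
    "scopus": "TITLE-ABS-KEY({query})",
    "web_of_science": "TS=({query})",
    "generic": "{query}",
}


def quote(term: str) -> str:
    """Wrap multi-word terms in double quotes so they are searched as phrases."""
    return f'"{term}"' if " " in term else term


def build_query(concepts: List[List[str]]) -> str:
    """OR the synonyms within each concept group, then AND the groups."""
    groups = ["(" + " OR ".join(quote(t) for t in group) + ")" for group in concepts]
    return " AND ".join(groups)


def render(concepts: List[List[str]], database: str) -> str:
    """Wrap the core Boolean query in the template assumed for a database."""
    return FIELD_TEMPLATES[database].format(query=build_query(concepts))


if __name__ == "__main__":
    concepts = [["generative AI", "ChatGPT"], ["literature review", "SLR"]]
    for db in FIELD_TEMPLATES:
        print(db, "->", render(concepts, db))
```

Keeping the concept groups separate from the database templates means that synonyms suggested by a Gen.AI tool can be reviewed and added by the author in one place, while the documented search string for every database stays reproducible.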

Backward and forward search

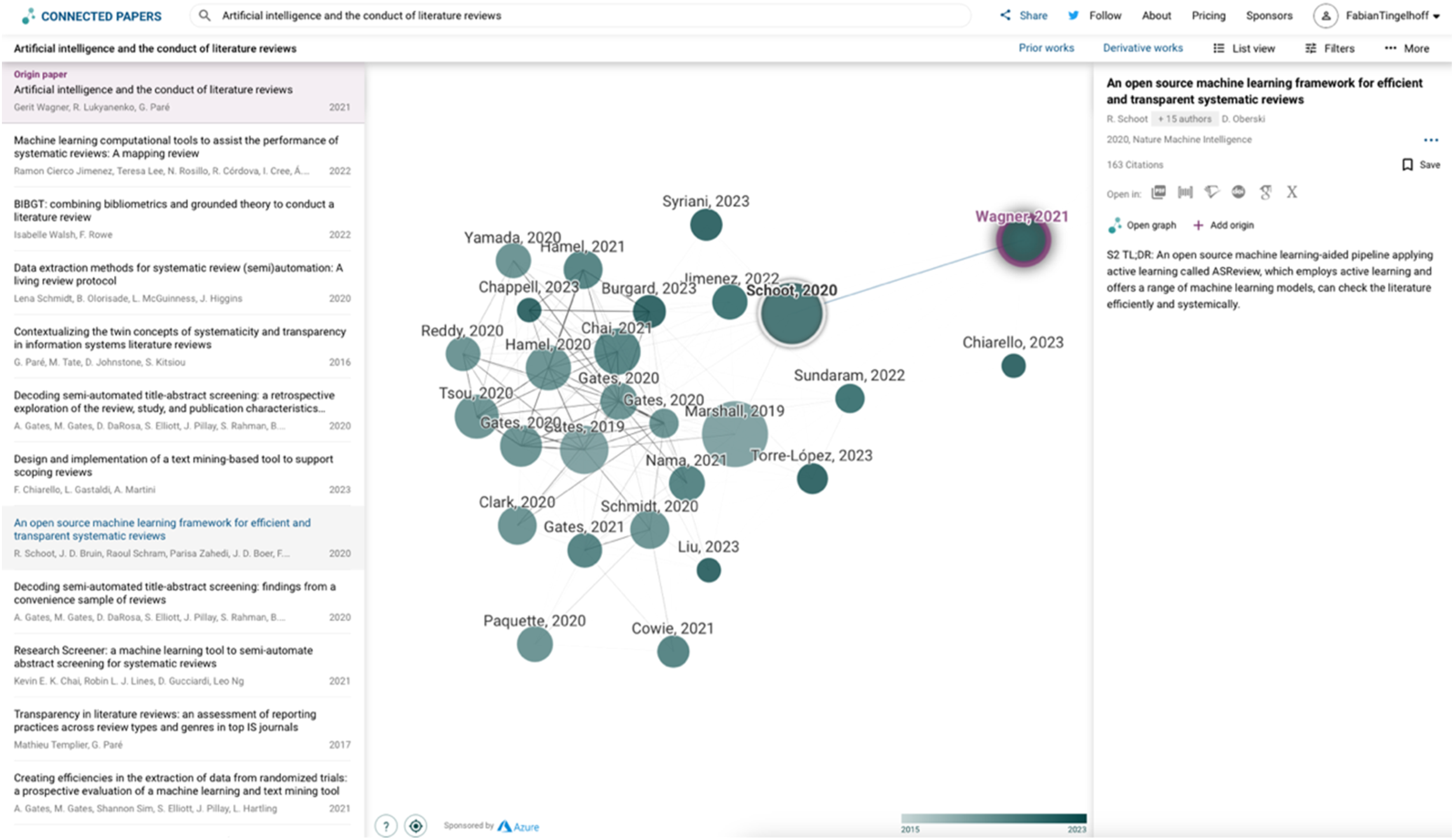

In backward and forward searches, authors need to understand and use connections between publications to find more relevant literature. Integrating visualization services like Semantic Scholar, Research Rabbit, or Connected Papers can add a new dimension to traditional citation tracking in SLRs. Based on a set of author-selected papers, these services can identify thematic and conceptual connections between papers beyond direct citation links (Wagner et al., 2022). This capability to pinpoint semantically related studies, along with providing visual maps of the literature landscape (Haddaway et al., 2022), can enable authors to gain a more comprehensive and nuanced understanding of the subject matter, enhancing the rigor of the SLR. To illustrate, Appendix 1 shows a “Connected Papers graph.”

Moreover, Gen.AI tools can assist in automating searches, identifying relevant studies more quickly, and uncovering connections that might be missed through manual searches alone. However, ensuring the quality and relevance of the identified literature requires critical human evaluation to maintain rigor and integrity and avoid biases that might emerge from the reliance on Gen.AI’s training data (Harshvardhan et al., 2020). This approach ensures a transparent and reproducible search process, meeting critical quality criteria of academic research. Researchers can conduct a more comprehensive SLR by judiciously integrating Gen.AI’s capabilities with established, manual research techniques (Dann et al., 2017; Wagner et al., 2022).

Develop

Quality assessment of paper

Quality assessment of scientific publications involves two key steps: determining whether an academic work meets scholarly standards, and assessing whether it contributes significantly to the research question. Whether Gen.AI can assist in achieving these goals is a much-debated question. While Wagner et al. (2022) reason that Gen.AI possesses only limited potential to assist in quality assessment, Drori and Te’eni (2024) conclude that Gen.AI can indeed assist researchers in evaluating the quality of research papers by analyzing dimensions such as contribution, soundness, and presentation. However, Gen.AI tools may inadvertently introduce biases due to limitations in their training data or algorithms. For instance, they might overemphasize certain types of research, overlook methodological nuances, or fail to capture the context-specific significance of a study. Given these limitations, we recommend not using Gen.AI tools when reviewing or assessing the quality of academic papers (Kankanhalli, 2024).

Extract data from papers

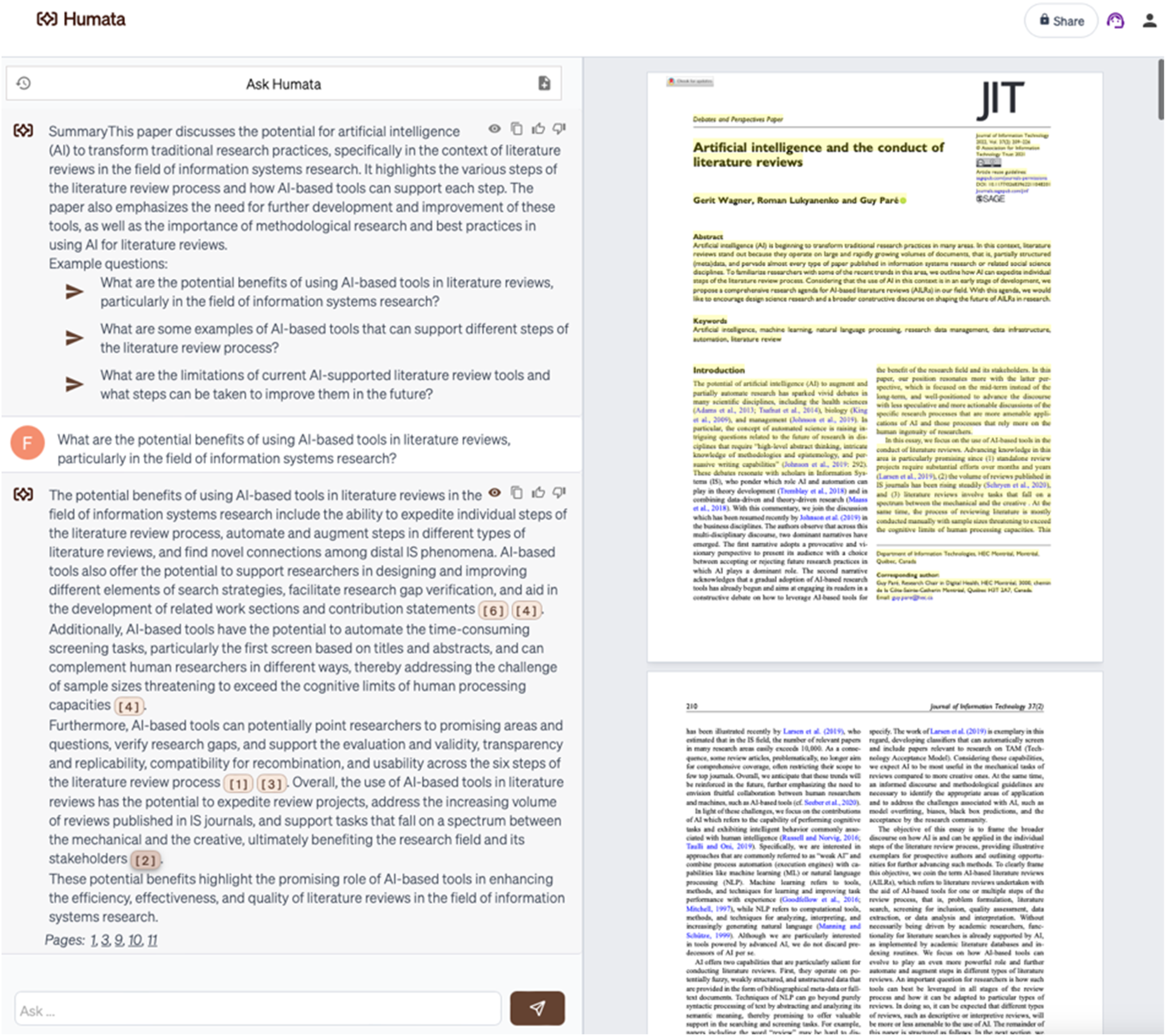

Gen.AI tools (like Humata) can support data extraction by visually connecting inferences to specific sentences and paragraphs in the sample paper. Furthermore, the structured nature of Gen.AI algorithms can ensure that data from all studies in the literature sample is extracted using the same analysis framework, limiting unequal representations between studies. To illustrate, Appendix 2 shows a conversation with the tool Humata about the paper by Wagner et al. (2022).

However, these tools also pose risks to these same quality criteria. Their simplicity and speed might lead to an oversimplification of complex data, potentially undermining the integrity of the analysis (Alshater, 2022). Moreover, biases in the analysis framework can lead to systematic data misrepresentations (Dowling and Lucey, 2023). Additionally, the often proprietary and, thus, ultimately non-transparent nature of Gen.AI’s underlying algorithms poses a significant challenge for other researchers aiming to replicate the study (Schryen et al., 2024). Consequently, while the advantages of Gen.AI tools are compelling, researchers must exercise caution. It is crucial to rigorously check the accuracy and depth of data extracted by these tools to ensure it accurately represents the subject’s detailed nuances. This careful approach balances the benefits of Gen.AI tools with the need to maintain the highest standards of transparency, reproducibility, integrity, and responsibility in academic research.

Synthesize and structure data

During the Data Synthesis phase of the SLR, researchers must critically analyze, integrate, and coherently present information from multiple sources. They are tasked with discerning key themes, interpreting data in context, and ensuring their synthesis is not only thorough but also adds original insights to the existing knowledge. Gen.AI tools with proficiency in analyzing extensive academic texts (like ResearchGPT and Consensus) provide a streamlined, efficient way to synthesize information in SLRs. Their ability to process and synthesize large data volumes far exceeds manual capacities, allowing for a more expansive and thorough analysis (Davison et al., 2023). This capability facilitates establishing extensive connections across studies, revealing patterns and insights that may be less apparent through conventional methods (Schryen et al., 2024). As a result, Gen.AI tools can significantly improve the rigor and efficiency of the analytical process. However, over-relying on Gen.AI can potentially undermine the SLR’s integrity and originality, both central quality criteria. Additionally, Gen.AI’s propensity to rely on existing narratives can hinder the creation of innovative contributions to the field, undermining beneficence. Therefore, we suggest using Gen.AI mainly for the initial identification of potential trends and patterns while the primary data synthesis remains under the researcher’s control.

Disseminate

Report all decisions

Reporting all decisions in an SLR demands integrity, responsibility, and rigor to ensure transparency and reproducibility. Throughout the entire SLR process, we have advocated for the supervised use of Gen.AI, with authors controlling its outcomes. Reporting Gen.AI use promotes trust in the SLR, as reviewers and readers can transparently understand the author’s oversight. Consequently, this reporting should be done manually, without Gen.AI assistance, to maintain the SLR’s integrity.

Write/present results

There is concern that authors might use Gen.AI to generate content not reflective of their own analysis or expertise (Alavi et al., 2024; Benbya et al., 2024; Else, 2023). For example, recent events at John Wiley and Sons, where over 11,300 fraudulent papers containing AI-generated content were retracted, highlight the dangers of misusing Gen.AI (Subbaraman, 2024). This cautionary tale underscores the importance of integrity and critical evaluation when incorporating AI tools in the academic writing process. Consequently, it is crucial for authors to understand that they bear the ultimate responsibility for their work, both overall and within each sub-step. Any contributions from third parties, including co-workers or Gen.AI tools, must be critically evaluated and appropriately disclosed. Still, when used properly, tools (like Quillbot) can play a pivotal role in refining the writing style, ensuring the text is clear and concise, and, thus, promoting its impact (Dwivedi et al., 2023). Furthermore, Gen.AI tools can support authors in adhering to structural requirements in writing, such as formatting, referencing, and styling (Kankanhalli, 2024). We encourage authors to use Gen.AI to enhance the language, clarity, and correctness of their texts and visual aids, yet to refrain from letting Gen.AI generate content.

Criteria-based usage of Gen.AI assistance in structured literature reviews: A guide and illustration

The integration of Gen.AI in conducting SLRs is permissible only when it adheres to established academic rigor and integrity standards. To understand the current status quo of Gen.AI assistance in SLRs, we initially surveyed 20 researchers of varying seniority levels about their current use of Gen.AI. Following this, we enhanced the existing process by incorporating best practices from other research disciplines. This effort resulted in the formulation of an eight-step process for employing Gen.AI assistance in SLRs.

All researchers we interviewed presented a similar status quo of how they used Gen.AI tools in SLRs. First, they identified the specific need for assistance, for instance, not finding relevant papers during their forward and backward searches. Then, all interviewed scholars would go to their preferred Gen.AI tool, which was most often ChatGPT, and execute the task (e.g., “Show me relevant papers for xy”). Most interviewees, though not all, then assessed the AI output and revised it if necessary. Subsequently, all researchers would integrate the revised output into their manuscripts.

While using Gen.AI can potentially enhance the quality of manuscripts, we identified three major challenges through interviews and our prior analysis. First, the Gen.AI tools currently available may not adequately meet researchers’ specific needs. For example, ChatGPT lacks integration with scientific databases, which limits its utility in assisting with literature searches. Second, because of their black-box nature, the training data and algorithms of Gen.AI tools are usually not publicly available. As such, researchers are often unable to understand why a tool produces specific content in response to queries about relevant publications. This lack of transparency makes it difficult for researchers to trust the relevance and accuracy of the output. Third, the generative nature of these tools means that they can produce different outputs from the same prompt. For instance, using Copilot to improve the same sentence repeatedly might yield varying results despite the identical input. This inconsistency can be problematic for researchers, undermining transparency, reproducibility, and third-party comprehensibility. To mitigate these three problems, we propose to change the status quo process in three ways:

Tool-task fit

To address the tool-task fit, we introduce a step where researchers familiarize themselves with the existing Gen.AI tools and their capabilities to make an informed decision on which tool fits their needs best.

Between-tool comparison

To mitigate the lack of transparency and the potential biases of Gen.AI tools, we propose using several Gen.AI tools to execute a given task and then comparing the outputs. This between-tool comparison is similar to data triangulation, which is a proven scientific method for addressing the lack of transparency and the potential biases of data sources (Creswell et al., 2003; Greene et al., 1989; Tashakkori and Teddlie, 2003; Venkatesh et al., 2013).

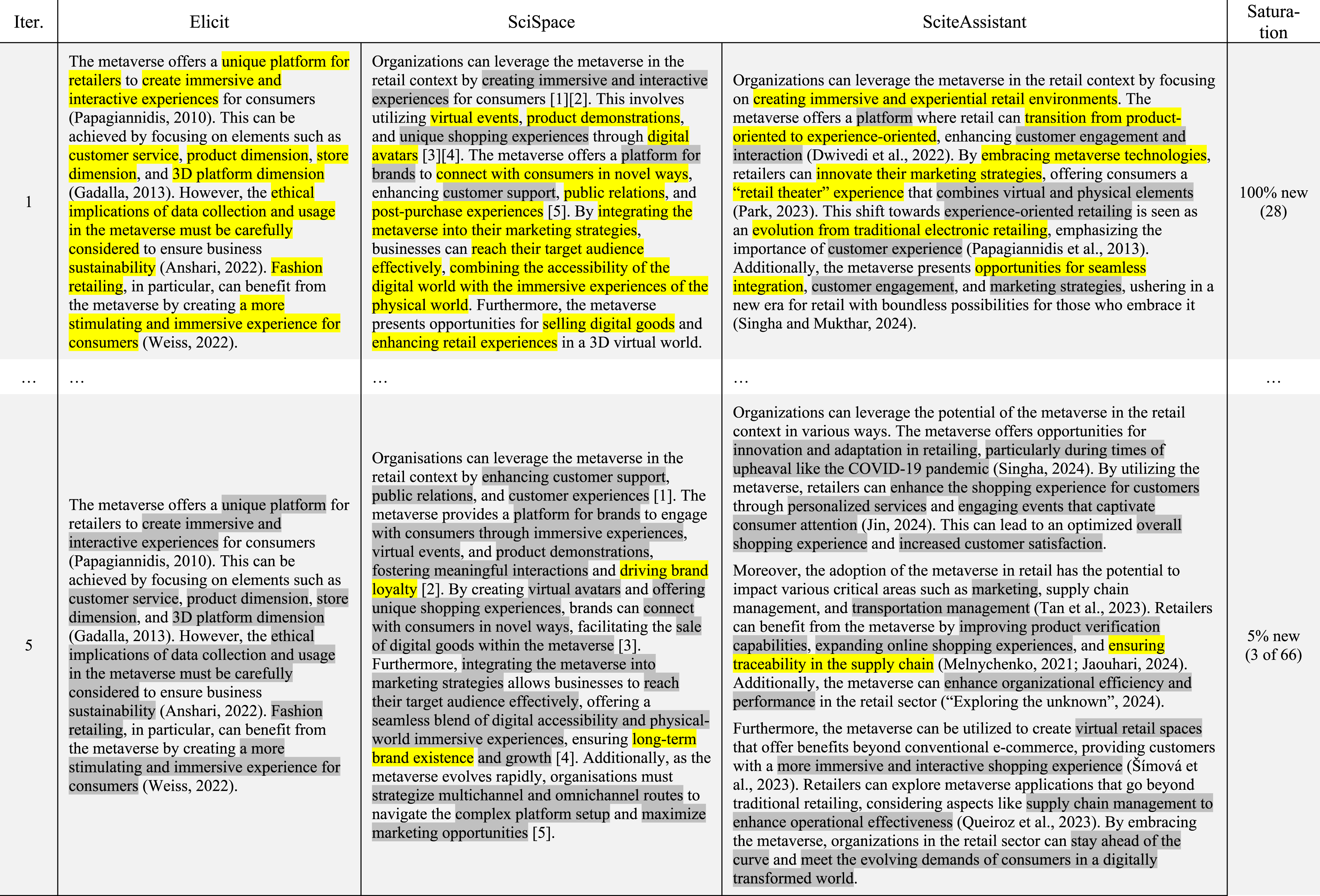
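One way to make such a between-tool comparison concrete is to treat each tool's output as a set of items (for example, suggested paper titles) and to measure how strongly the tools agree. The following is a minimal sketch under that assumption. The function names are hypothetical, and the Jaccard index is our choice of overlap measure for illustration; it is not prescribed by the triangulation literature cited above.

```python
from collections import Counter
from itertools import combinations
from typing import Dict, Set, Tuple


def normalize(item: str) -> str:
    """Lowercase and collapse whitespace so trivially different strings match."""
    return " ".join(item.lower().split())


def agreement(outputs: Dict[str, Set[str]]) -> Counter:
    """Count how many tools reported each (normalized) item."""
    counts: Counter = Counter()
    for items in outputs.values():
        counts.update({normalize(i) for i in items})
    return counts


def jaccard(a: Set[str], b: Set[str]) -> float:
    """Share of items two tools have in common (1.0 means identical sets)."""
    a, b = {normalize(i) for i in a}, {normalize(i) for i in b}
    return len(a & b) / len(a | b) if a | b else 1.0


def pairwise_overlap(outputs: Dict[str, Set[str]]) -> Dict[Tuple[str, str], float]:
    """Jaccard overlap for every pair of tools, keyed by sorted tool names."""
    return {
        (t1, t2): jaccard(outputs[t1], outputs[t2])
        for t1, t2 in combinations(sorted(outputs), 2)
    }
```

Items reported by several tools are candidates for closer reading, while items reported by only one tool deserve particular scrutiny before they enter the review sample. In both cases, the author still verifies every item manually.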

Within-tool comparison

To mitigate data quality concerns that stem from the generative nature of Gen.AI tools, we propose that Gen.AI tools should be used repeatedly with the same prompt. Using the same tool repeatedly allows for a within-tool comparison to ensure that the data extracted is all the relevant information the tool can produce (Glickman and Zhang, 2024). This approach is rooted in grounded theory (Glaser and Strauss, 1968), which introduced the concept of saturation—a point where further inquiry is unlikely to lead to the emergence of more relevant information (Guest et al., 2006).

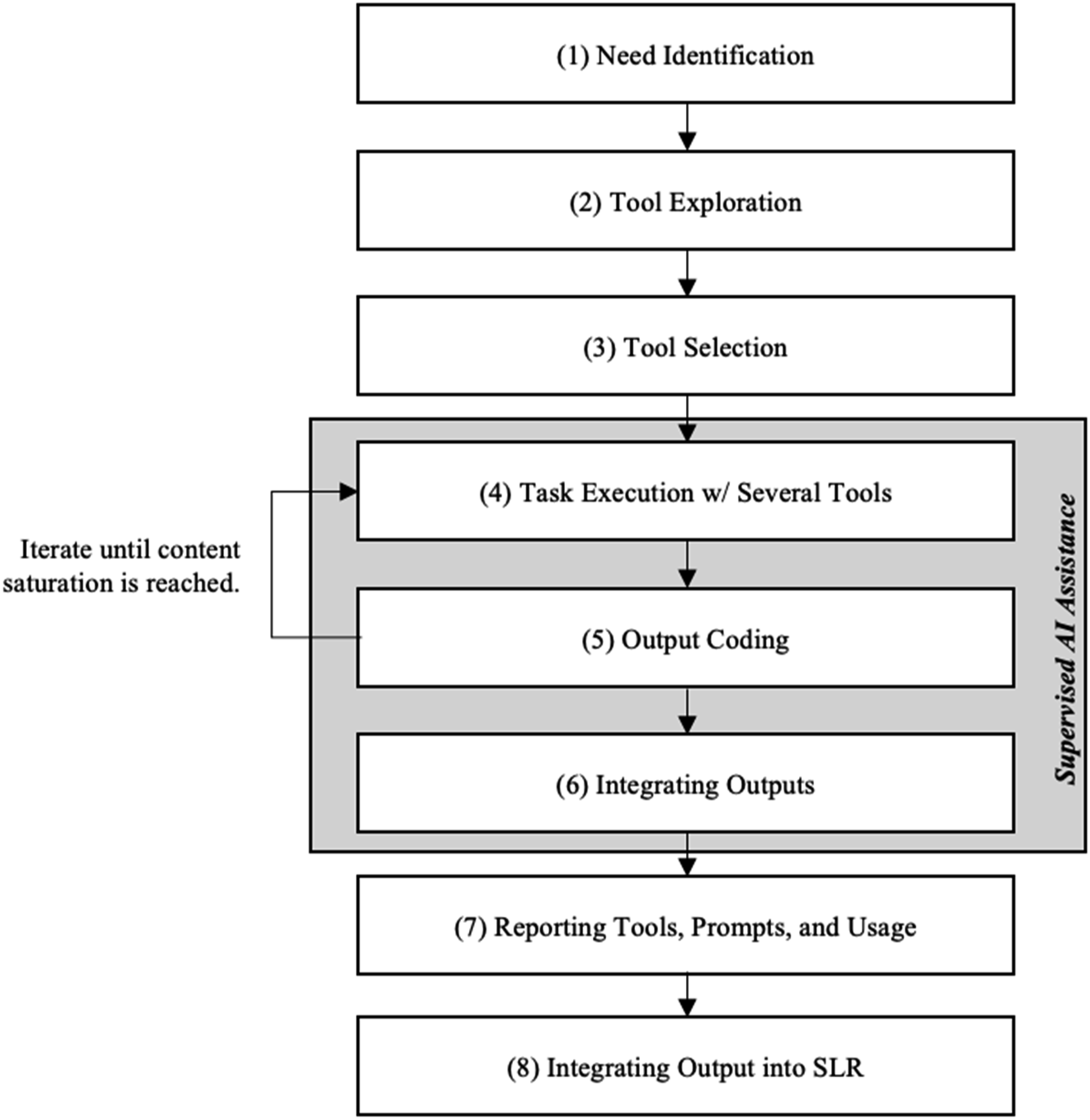
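The saturation logic behind the within-tool comparison can be expressed as a simple stopping rule: repeat the prompt until a run yields no new codes. The sketch below is illustrative only. `run_once` is a hypothetical callable standing in for "send the same prompt to the same tool and code the output", and the `patience` and `max_runs` parameters are assumptions that authors would set and report themselves.

```python
from typing import Callable, List, Set, Tuple


def run_until_saturation(
    run_once: Callable[[int], Set[str]],
    max_runs: int = 10,
    patience: int = 1,
) -> Tuple[Set[str], List[int]]:
    """Repeat a prompt until `patience` consecutive runs add no new codes.

    `run_once(i)` is a hypothetical callable: it should send the same prompt
    to the same tool and return the set of codes found in run number `i`.
    Returns all codes seen and the number of new codes contributed per run.
    """
    seen: Set[str] = set()
    new_per_run: List[int] = []
    runs_without_new = 0
    for i in range(max_runs):
        codes = run_once(i)
        new_codes = codes - seen
        seen |= new_codes
        new_per_run.append(len(new_codes))
        # Reset the counter whenever a run still surfaces something new.
        runs_without_new = runs_without_new + 1 if not new_codes else 0
        if runs_without_new >= patience:
            break
    return seen, new_per_run
```

Reporting the per-run counts alongside the prompt lets readers see how quickly the tool's output stabilized, which supports the transparency of the procedure.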

In summary, Gen.AI assistance in SLR poses problems already seen in different research contexts (e.g., grounded theory, data triangulation). Consequently, our proposed mitigative strategies come from transferring validated and proven approaches to tackle these problems to the context of Gen.AI-assisted SLRs. This leads us to the eight-step guide for using Gen.AI for scientific task assistance, outlined in Figure 4.

Figure 4. Eight-step guide for using Gen.AI for scientific task assistance.

In the following, we describe each step in detail. To increase the understandability and usability of our eight-step guide, we illustrate its application in an SLR example (Rathje et al., 2024). While we provide the comprehensive guide in a separate online appendix, we also want to briefly illustrate the guide’s application in this manuscript. Please note that we provide screenshots and further background information in the online appendix for every step.

Need identification

Researchers need to first clearly define the specific task for which Gen.AI assistance is required and identify the necessary tool functionalities (e.g., “tool must have an integrated scientific database,” specific filter criteria). Furthermore, researchers should determine tool characteristics that motivate choosing one tool over the other.

Tool exploration

Next, researchers should familiarize themselves with the available Gen.AI tools. This includes experimenting with the tools occasionally to understand their characteristics, like data cut-off dates, biases, or plug-ins. While researchers do not need to do this step daily, Gen.AI tools develop rapidly, which should prompt researchers to update their tool knowledge from time to time. As official benchmarking reports are often available only for the underlying algorithmic models rather than for the Gen.AI tools themselves, we found community discussions on public forums, such as Reddit or the OpenAI user forum, particularly useful for this purpose. Not only are they usually more up-to-date than official tool websites, but they also provide more in-depth information, as actual users contribute evaluations of particular subjects.

Tool selection

After identifying potential tools, authors should assess which of the identified tools fit their needs best. We propose comparing the tools in a table to select the most fitting tools. Authors should then move to the next steps with the top three to five tools that best fit their needs.
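The comparison table can be kept as simple structured data. As a minimal sketch, assuming that authors rate each tool per criterion and weight the criteria themselves, the following ranks tools and drops those that lack a required functionality. The criteria, weights, and the `rank_tools` function are hypothetical and serve only to illustrate the idea.

```python
from typing import Dict, List, Tuple


def rank_tools(
    ratings: Dict[str, Dict[str, float]],
    weights: Dict[str, float],
    must_have: List[str],
    top_n: int = 3,
) -> List[Tuple[str, float]]:
    """Drop tools missing a must-have criterion, then rank by weighted score."""
    ranked = []
    for tool, scores in ratings.items():
        if any(scores.get(c, 0) <= 0 for c in must_have):
            continue  # a required functionality is missing
        total = sum(weights[c] * scores.get(c, 0) for c in weights)
        ranked.append((tool, round(total, 2)))
    ranked.sort(key=lambda pair: pair[1], reverse=True)
    return ranked[:top_n]


if __name__ == "__main__":
    # Illustrative ratings only; not an evaluation of any real tool.
    ratings = {
        "tool_a": {"database": 1, "citations": 3, "cost": 2},
        "tool_b": {"database": 0, "citations": 5, "cost": 5},
        "tool_c": {"database": 1, "citations": 4, "cost": 1},
    }
    weights = {"database": 2.0, "citations": 1.0, "cost": 0.5}
    print(rank_tools(ratings, weights, must_have=["database"]))
```

The scores do not replace the author's judgment. Their main benefit is that the table, including ratings and weights, can be reported so that readers can follow why particular tools were chosen.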

Task execution

Next, researchers should develop a prompt to execute the task with each chosen tool. All tools should be used with the same prompt to enable a between-tool comparison (Glickman and Zhang, 2024). It is pivotal to understand that the quality of the Gen.AI output is a function of AI literacy and prompting skills of the researcher (Knoth et al., 2024). Hence, for this step, researchers might benefit from consulting the latest prompt engineering literature to further improve the tools’ output quality.

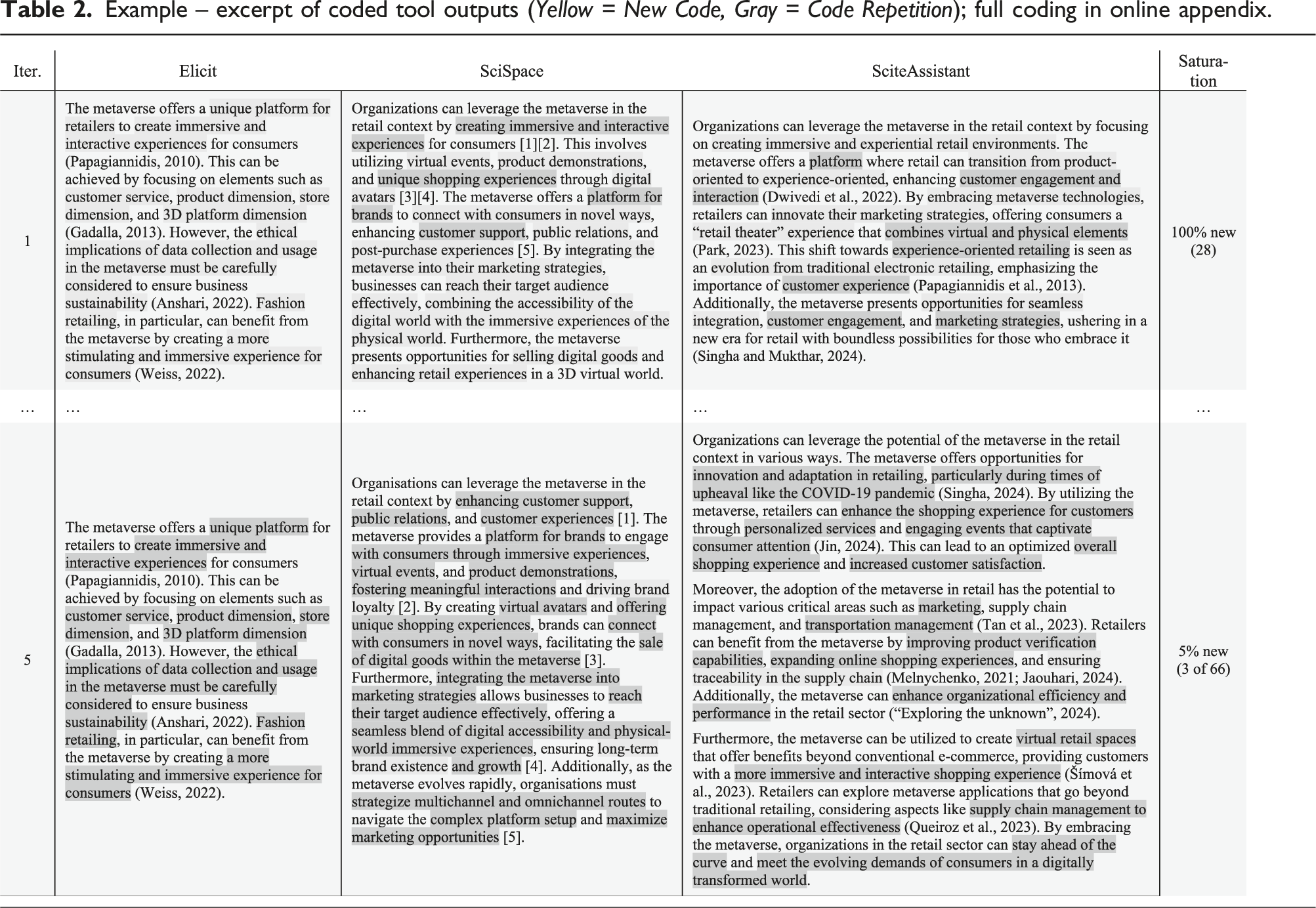

Output coding

After execution, the authors should analyze the outputs from all tools. To do so, we advocate for the grounded theory approach (Glaser and Strauss, 1968): researchers should inductively identify and code statements in the tools’ outputs, grouping similar statements, resulting in an exhaustive overview of newly acquired content.

Iterations

Example – excerpt of coded tool outputs (

Integrating outputs

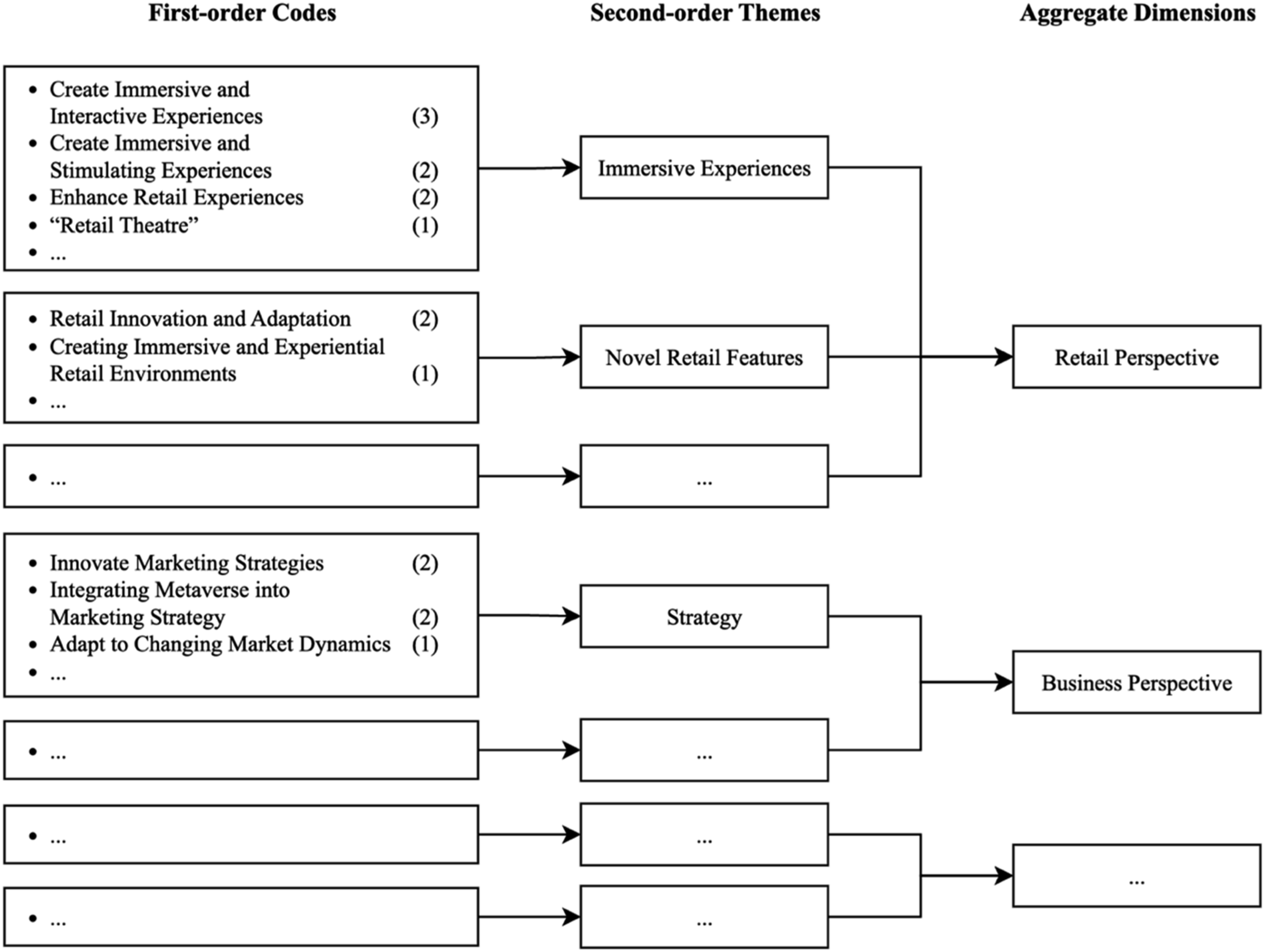

After this iterative process, authors are confronted with separate outputs from several tools (equal to the number of tools times the number of iterations). As this amount of dispersed knowledge might be overwhelming, we follow the grounded theory approach. As authors have already coded tool outputs in step 5, we suggest they should inductively combine similar codes across all outputs into higher-level, second-order themes. If the number of second-order themes is still unmanageably high, authors might want to combine them into aggregate dimensions. Ultimately, authors can then use these newly created knowledge graphs to structure the tool outputs based on content, generating one holistic, integrated overview of newly acquired knowledge.

After the initial round and four iterations of executing and prompting tool outputs, we are left with 66 unique codes. Then, to better group similar information, we categorize these codes into 13 second-order themes and four aggregate dimensions. While we could have used Gen.AI to synthesize and integrate codes (Benbya et al., 2024), we decided against it to avoid further complicating this illustrative application of the process. We provide an excerpt of the resulting coding framework in Figure 5, which integrates the information of the several tool outputs into one exhaustive overview of newly acquired content.

Figure 5. Example – excerpt of final coding framework from the integration of Gen.AI outputs; full figure in online appendix.
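The three-level structure used in this integration step (first-order codes, second-order themes, aggregate dimensions) maps naturally onto a nested data structure. The following is a minimal sketch, assuming the author has already assigned each code to a theme and each theme to a dimension manually. The function name and any labels passed to it are hypothetical and are not taken from our coding framework.

```python
from collections import defaultdict
from typing import Dict, List


def build_framework(
    code_to_theme: Dict[str, str],
    theme_to_dimension: Dict[str, str],
) -> Dict[str, Dict[str, List[str]]]:
    """Nest first-order codes under themes, and themes under dimensions."""
    framework: Dict[str, Dict[str, List[str]]] = defaultdict(lambda: defaultdict(list))
    for code, theme in sorted(code_to_theme.items()):
        dimension = theme_to_dimension[theme]  # KeyError flags an unassigned theme
        framework[dimension][theme].append(code)
    # Convert to plain dicts so the result is easy to print or serialize.
    return {dim: dict(themes) for dim, themes in framework.items()}


def summarize(framework: Dict[str, Dict[str, List[str]]]) -> Dict[str, int]:
    """Count dimensions, themes, and codes for reporting."""
    themes = sum(len(t) for t in framework.values())
    codes = sum(len(c) for t in framework.values() for c in t.values())
    return {"dimensions": len(framework), "themes": themes, "codes": codes}
```

The assignments themselves remain a manual, inductive step. The code only keeps the resulting hierarchy consistent and makes the counts reported in the manuscript easy to verify.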

Reporting tools, prompts, and their usage

After having generated the integrated output, authors are done working directly with the Gen.AI tools and their outputs. Then, authors must thoroughly and transparently report their AI usage, including details of the tools, prompts, and iterations, along with observations on tool performance.
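To keep this reporting complete, it can help to log every interaction in a structured form while working and to export the log as supplemental material. The sketch below shows one possible record layout. The field names are our assumptions about what such a record might contain and do not represent a reporting standard.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import List


@dataclass
class UsageRecord:
    """One Gen.AI interaction to be disclosed in the manuscript or appendix."""
    step: str               # SLR step the tool supported
    tool: str               # tool name
    version: str            # tool or model version as shown to the user
    date: str               # ISO date of the interaction
    prompt: str             # exact prompt text
    iteration: int          # 0 = initial run, 1 and up = repetitions
    observations: str = ""  # notes on tool performance


@dataclass
class UsageLog:
    """Collects usage records in the order they were created."""
    records: List[UsageRecord] = field(default_factory=list)

    def add(self, record: UsageRecord) -> None:
        self.records.append(record)

    def to_json(self) -> str:
        """Serialize the log so it can be shared as supplemental material."""
        return json.dumps([asdict(r) for r in self.records], indent=2, ensure_ascii=False)
```

Because the log is written by the author and only serialized by code, it remains consistent with the recommendation that reporting is done manually.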

Integrating output into SLR

Finally, the iteratively refined output, be it a list of relevant papers, a rephrased text, or something else, needs to be incorporated into the manuscript. This critical step must be carried out with utmost responsibility, ensuring the ethical and legal integrity of AI-generated content. Due to this step’s importance, authors should manually integrate this content. This method lets authors control the final content, ensuring it accurately represents their research intentions and findings.

Tough questions to ask: Control versus contribution

Throughout this study, we have operated under implicit assumptions regarding the fundamental nature of SLRs. We presumed that the primary purpose of SLRs is to contribute novel insights to the academic discourse (the

The academic community is presently engaged in vigorous debates about integrating Gen.AI into research methodologies (e.g., Banker et al., 2024a, 2024b; Berger, 2024; Hermida Carrillo et al., 2024), including SLRs (e.g., Schryen et al., 2024; Wagner et al., 2022). Traditionally, we have placed immense value on key aspects of SLRs: structuring existing research with transparency and reproducibility; integrating information with rigor and adherence to scholarly standards; and disseminating findings effectively to maximize impact and beneficence. However, as demonstrated in our study, Gen.AI systems are increasingly capable of mimicking—and potentially surpassing—these aspects. Simultaneously, the fraction of open-access articles is continuously growing (Björk, 2017; Laakso et al., 2011; Seo, 2023) and will likely become the default publication class (Piwowar et al., 2019). This prompts pressing questions: If SLRs become partially or fully automatable, what then distinguishes an exemplary SLR? Is the hallmark of a great SLR rooted in the researcher’s unique contribution, or can it be attributed to the efficiency and comprehensiveness of AI-generated outputs?

Extending this inquiry further, we confront a pivotal dilemma: If Gen.AI could perform SLRs faster, more accurately, and on a larger scale than human researchers, should we continue to entrust the SLR process to human oversight? Current Gen.AI models often operate as opaque “black boxes,” lacking transparency and interpretability, a characteristic that may persist or evolve unpredictably in the future (Drori and Te’eni, 2024; Kankanhalli, 2024). This opacity challenges our ability to ensure accountability, reproducibility, and ethical integrity in research. Consequently, the academic community must grapple with a fundamental question: Do we prioritize the contribution of knowledge, irrespective of its source, or do we value maintaining control over the process of knowledge generation?

These profound questions extend beyond the scope and intent of our current study. Our objective has been to propose a balanced approach that navigates the spectrum between complete reliance on Gen.AI and exclusive human control. We have introduced a framework that enables researchers to leverage the transformative potential of Gen.AI in conducting SLRs while retaining essential oversight over the Gen.AI’s outputs and their integration into the scholarly narrative.

It is crucial to acknowledge that SLRs represent just one facet of research methodology. Their structured nature makes them particularly susceptible to AI integration, positioning them as an ideal initial use case within the scientific domain. However, the fundamental challenges and ethical considerations posed by all types of AI will inevitably confront scholars across all methodological paradigms. While our guide specifically pertains to SLRs in business research, we believe it can potentially be applied in other research settings as well. For instance, researchers conducting a quantitative study could potentially refer to our eight-step guide to using Gen.AI assistance when developing an experimental design. Moreover, aspects of our paper, such as the domain familiarization or paper search, can be relevant to researchers writing related-work sections. However, since we created the eight-step guide in the context of SLRs, future research is necessary to show how it needs to be modified for other application contexts. Ultimately, SLRs serve as the initial battleground—a microcosm that will shape broader paradigms of research practice in an era increasingly influenced by AI technologies.

Supplemental Material

Supplemental Material for A guide for structured literature reviews in business research: The state-of-the-art and how to integrate generative artificial intelligence by Fabian Tingelhoff, Micha Brugger, and Jan Marco Leimeister in Journal of Information Technology.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Note

Appendix

Appendix 1. Research graph by Connected Papers based on Wagner et al. (2022)

Appendix 2. Humata references its answers to specific sections of the paper by Wagner et al. (2022)
