Abstract

Part 1 of the ‘conversation’ offered important insights into a groundbreaking era for computer development – adding further detail to existing writings by Frank Land, the work of the LEO group in general, and extended accounts such as those by Ferry, Hally and Harding. This should have whetted the appetite of readers keen to know more, and prompted others to offer their own accounts. Part 2 moves on to Frank Land’s subsequent activities as one of the founding figures of the Information Systems (IS) Academy, and his ‘Emeritus’ phase.

LSE and pioneering academic IS

FL – For various reasons, the relationship between the EE (English Electric) and the LEO (Lyons Electronic Office) people was always tense. As I said, although described as a merger, it was effectively a take-over of LEO by EE. The merger took place in 1963, and I worked there until 1968, by which time LEO III was in operation. At that time, my wife, Ailsa, was due a sabbatical from her post at LSE (London School of Economics) and had arranged a visiting post at Wisconsin. The people there were kind enough to offer me access to their computing facilities. I asked EELM 1 if they would grant me a sabbatical year, but they refused. Unfortunately, as a result of Ailsa’s mother’s poor health, the arrangements fell through. But the whole episode had loosened my ties to LEO and I was prepared to look outside. When the opportunity came, I joined LSE as a Research Fellow in Management, a 50% post with the other 50% of my time allocated to managing LSE computer services – in effect becoming LSE’s first, and at that time sole, computer services manager.

The way LSE came to offer me a job was as follows. By 1968, there was a growing realization in the United Kingdom that universities needed to teach and research the use of computers for business and administration, or systems analysis. The National Computing Centre (NCC), a government-sponsored private/public entity charged with promoting the use of IT in the private sector, offered a grant of £30,000 to each of two universities to establish teaching and research into this new topic. At 2019 values this would be around £300,000.

At that time, a limited number of UK universities had started offering computer courses, primarily to allow their own faculty to learn how to use computers for their own research. But computer science was becoming recognized as a discipline in its own right. In the United States, a few universities had recognized the importance of the data processing element of computer applications. One of the earliest textbooks was published by the University of Wisconsin Press, in 1951, as Computing Manual by Fred Gruenberger. In its preface, the book includes the words ‘. . . particularly in training personnel for work in (IBM) punched card computing’. In 1951 and 1952, Gruenberger taught a course at Wisconsin – Theory and Operation of Computing Machines.

AB – I looked on the Amazon website and the only copy of Gruenberger’s book for sale was priced at US$450. So it must be something of a rarity.

FL – Bob Glass, an American software pioneer and editor of In the Beginning (1998) – a book to which I contributed an account of my early years as a programmer on LEO – has donated a copy to the Cambridge Centre for Computing History. I have seen it, and it provides few, if any, insights into IS. It is more a manual on assembling plugboards.

AB – OK, I won’t rush out to buy it.

FL – In the event, two of the universities bidding for the NCC 2 grant were the LSE and Imperial College, London. The LSE had at that time, under the leadership of Professor Gordon Foster of the Statistics Department, begun to take an interest in computing and systems analysis. Gordon Foster had an interesting background. He had been a member of the code-breaking team at Bletchley Park, got to know Alan Turing and spent time at Manchester University. He joined the Statistics Department at the LSE in 1952 and was involved with the development of LSE’s Operational Research group, becoming the first LSE Professor of Computational Methods. Foster supervised a PhD student, Patrick Losty, on the topic of Computers and Management, completed in 1967 – probably the first doctoral thesis in the United Kingdom on the topic.

AB – Here is the entry for Losty’s thesis in the ISIG Abstracts:

LOSTY, Patrick Alfred (1967) This thesis examines the interaction of computers and management structures. Part I comprises definition and examination of the elements, management structures, information systems, and digital computers. Part II examines the observed practical consequences of combining the elements into a computer-based business system. Part III deals with the hierarchical nature of business systems in a manner calculated to facilitate the analysis and design of such systems. I also consider computers as a tool for exploring management structures, and then explore them using simulation and by the analysis of programmed systems. Finally, a scheme for the classification of business information systems is shown. This will permit the recording, comparison, and contrasting of such systems.

3

FL – The application from Imperial College was led by Professor Sam Eilon, head of the Operational Research group at Imperial. When Imperial was awarded one of the grants, Eilon decided that the best way to utilize the grant was to acquire computing facilities – an IBM 1401 – to provide the capability for researching business application development methods as well as providing for student experiments. Professor Gordon Foster, on the other hand, sought to use the LSE grant to acquire the experience and knowledge of an established practitioner to develop teaching curricula in systems analysis and to outline and lead research in the new discipline, and my CV ticked most of his boxes.

It is interesting to note the extent to which similar ideas about the need for teaching and research into the use of computers to support business and administration were gathering pace in most of the developed world. In 1968, the ACM published its first curriculum recommendations for teaching Computer Science, including a module on ‘Information Systems and Data Processing’. A few years later, it published the very influential 1972 ‘Curriculum on Information Systems’ (Ashenhurst, 1972; Couger, 1973). Even earlier, in 1963, Erwin Grochla and Norbert Szyperski founded BIFOA – Business Institute for Organization and Automation – at the University of Cologne in Germany. In Germany, too, the Federal Republic sponsored the National Research Centre for Information Technology, GMD, founded in 1968, to act as a centre for collaborative research with industry as well as promoting education in all aspects of computing.

The period from about 1965 to the early 1970s saw universities and technical colleges start to teach and research into data processing, first as an add-on to Computer Science and then evolving into a discipline in its own right. There were efforts to provide a clear theoretical underpinning, but essentially the main motivation was to serve a market for programmers and systems analysts. Principal pioneers in the new discipline included Börje Langefors at Stockholm University who published the first and highly influential theoretical analysis of the new discipline as early as 1966 (Langefors, 1966). Alex Verrijn-Stuart at Leiden University, who worked at Shell before playing an important role in defining the discipline, was instrumental (with others) in establishing Working Groups (WGs) 8.1 and 8.2 – the IFIP (International Federation for Information Processing) WGs devoted to the study of Information Systems. In Denmark, at the Copenhagen University Business School, Niels Bjorn-Andersen established a very successful school of information systems study, and in Norway, Kristen Nygaard, with a background in Computer Science and Operational Research, became head of the Norwegian NCC in 1960. Finland, too, became a centre for the study of Information Systems, producing a number of leading scholars in the discipline. The Scandinavian school was celebrated for its interest in the social aspects of information systems, and this is reflected in its publication – The Scandinavian Journal of Information Systems. A recent edition provides an excellent review of this very important Information Systems research tradition. 4 That same interest in social aspects was also part of the scholarly foundation set up by Adriano De Maio at the Polytechnic Institute in Milan. (He held the post of Professor of Corporate Management, Innovation Management and Management of Complex Projects from 1969.) Giuseppe Traversa, a little later, promoted similar ideas at the University of Pisa. I was invited to lecture there, together with Enid Mumford. Of course, there were many more active scholars than those referred to above, and at the same time the IS discipline was also being developed in France, Canada, Australia, New Zealand, South Africa and Japan.

AB – Frank, you are being too modest regarding your own contribution to the development of academic IS. When I started teaching at Leeds Polytechnic – mid-1980s – we used your discussion from

An information system is a social system, which has embedded in it information technology. The extent to which information technology plays a part is increasing rapidly. But this does not prevent the overall system from being a social system, and it is not possible to design a robust, effective information system, incorporating significant amounts of the technology without treating it as a social system. (Land, 1985: 215)

It is worth contrasting this definition with the one offered by Gordon Davis in 2006, reviewing the previous 30 years of the IS discipline:

In an organization of any size, there is an organization function responsible for the technology, activities and personnel to support its technology-enabled work systems and the information and communication needs of the organization. There is an academic discipline that teaches those who build, acquire, operate and maintain the systems and those who use the systems. Both the organizational function and the academic discipline have developed over a period of 55 years (but primarily in the last 40 years). (Davis, 2006: 11)

One reviewer directed our attention to this extract from Davis, remarking that it summarizes your career trajectory: ‘helping create a new technological/organizational system and then helping devise and implement an academic discipline to pass this knowledge on to others including future generations’. On the other hand, it does not specifically address the idea that an information system is a social system – something that continually needs to be stressed and re-stated, as you have consistently done throughout your academic career and in your writings and interviews. This is particularly important now as the associated technologies become increasingly ubiquitous and influential – something we will consider in our concluding section.

FL – The explosion in academic interest in Information Systems was a consequence of the exponential rise in the use of computers for data processing in the private sector by business organizations, and in the public sector by government departments, government agencies and municipalities. Perhaps, the highest growth rates in academia took place in the United States with the Business Schools leading the way. Leading scholars included Gordon Davis at the University of Minnesota who established, in the late 1960s, what became the best known programme for teaching and research in the MIS discipline. His and Margrethe Olson’s book on MIS became one of the most renowned texts, going through many editions (Davis and Olson, 1974). Today Davis’s PhD students – well over 100 – occupy many of the top posts both in academia and in consultancies. Other prominent pioneers from that era include Professor Richard Nolan, from the Harvard Business School, who provided an early model of how companies adopt information technology (Nolan, 1973); Professor James Emery, first chair of the Department of Decision Sciences at the Wharton School, who developed models for evaluating the economic value of Information Systems adoption (Emery, 1973); Professor Daniel Teichroew, sometimes called the parent of CASE (computer-aided software engineering), at the University of Michigan, who developed ISDOS (Information System Design and Optimisation System) for automating the process of building an information system (Darnton, 2010); and Professor Dan Couger, at the Colorado State University, who was prominent in the definition of MIS curricula (Couger, 1973).

The United Kingdom, too, featured a rapid expansion in teaching and research. The lead was taken by the polytechnics rather than the universities. At that time, the United Kingdom had a binary system of degree-awarding education. The polytechnics, separately funded, had to have their degree courses approved by the Council for National Academic Awards (CNAA), founded in 1964. The CNAA focussed on supplying the future practitioners required by industry and administrations, but with little involvement in research. Many of their undergraduate degrees included a so-called sandwich element involving students spending up to a year working with users in industry or administration. Don Conway, then at Stafford Polytechnic, was one of the prominent pioneers, later moving to Leicester Polytechnic, which subsequently became De Montfort University, and as Professor Conway headed the CNAA Computing subject board until its abolition in 1993.

I worked quite closely with the CNAA and the Polytechnics, first being co-opted on to their information systems and systems analysis committee, then later taking on the chair of that committee and acting as external examiner for a number of Polytechnics. A memorable experience was a 2-week visit to Hong Kong by a cross-disciplinary team selected by the CNAA. The Government of Hong Kong had asked the CNAA to review the courses given by the Hong Kong Polytechnics and assess their suitability for awarding CNAA degrees. These Polytechnics included the institutions which have become today’s high-class Hong Kong universities.

AB – Indeed, the polytechnics were for the most part teaching courses in computing, as opposed to computer science – as much by design as simply in order to offer something identifiably distinct from university courses. I was appointed to a lecturing post in Leeds Polytechnic in 1985, to teach systems analysis and design on their Computing and OR BSc course, also to develop their first master’s programme in IS. Although I had a PhD and 2 years of lecturing experience – all in social sciences – my main qualification for the job was a conversion Masters in Computing and several years’ experience working for a small software company. The computing course had evolved from an earlier course in Maths and OR, and had only recently been rebranded with Computing as the first-named – that is, primary – component. There were a couple of key characteristics about the undergraduate course. First it was a 4-year ‘sandwich’ programme – the third year being spent as an employee with an organization, monitored by one of the course tutors. I was fortunate to teach on the second year and the fourth year core courses and was impressed by the ways in which students were transformed between the end of their second year and the start of their final year – always a transformation for the better. The other interesting aspect was that the gender balance was around 50:50. It was only later, when computing began to be taught in schools, that the men largely outnumbered the women. I always think this was because computing was seen as a technical subject at school, and so a male preserve.

FL – In the earliest days at LSE, I divided my time equally between being a Research Fellow and running the computer system, but gradually I delegated more of the latter to Peter Wakeford. Essentially we were pioneering one of the earliest IT services for a UK university.

AB – Peter Wakeford was at LSE, 1970–1976. He was Head of Computer Services and wrote two programs that were widely used before the advent of SPSS: SDTAB and MUTOS. SDTAB was used for survey data tabulation, MUTOS for spreading out multi-punched data: ‘All data came punched on 80-column Hollerith cards. The LSE programs were Fortran-based and also used cards for input, but at least we got printed output back’ (Hall, 2010: 3). Wakeford is listed as one of the ‘Unsung heroes in support services’. 5

Here again you were involved from the beginning with what today is regarded as almost the ‘obvious’ starting point – an IT system as a service; something that has become commonplace, but in the earliest days of computing something that ran counter to the assumption that computer systems – software plus hardware – were products first and foremost. Perhaps, you can explain how you developed this orientation at LSE, building on the foresight of Simmons and others at Lyons, who were once again pioneers in this regard.

FL – When I first arrived at the LSE, the academic Statistics Department provided a rudimentary advice service on processing survey data for other departments. My brief was to establish a professional service with its own equipment to provide a service for the whole school. Initially, a number of the larger departments wanted to own and run their own services, arguing that the requisite expertise could only be found within their community. From the beginning we wanted to offer a two-faced service. One face was directed at providing computer advice, including which computer to use: the central London University service or our own local service based on our small IBM 1440, sufficient for many LSE needs. The other face was directed at the subject specialists, to understand their needs from a subject perspective. We gradually took on subject specialists as we grew. We had to demonstrate that our new set-up delivered the kind of service that computer users would not have been able to provide for themselves, and to show departments that had not thought of using computers that these could provide a new tool to enhance their research.

When Gordon Foster left the LSE to take up a post at Trinity College, Dublin, Sandy Douglas came to the Department of Statistics as Professor of Computational Methods, and so was my line manager. He came from a Scottish aristocratic clan, had been awarded a PhD at Cambridge in computer science and then worked at CEIR (Corporation for Economic and Industrial Research Inc. – a pioneer in computer services in the United States and the United Kingdom, from the 1950s onwards). Sandy was very much a member of the establishment and that, plus his background in maths, carried considerable weight, even at the LSE. I had previously applied for and been offered a job at CEIR. I declined the offer as I had already received a matching offer from LEO management. Douglas got the job instead, and later came to LSE. Earlier he had been a Professor at the University of Leeds, where he had set up their first computer in 1957. 6

AB – So having joined LSE as part Research Fellow and part Computer Services manager, how did your role change over the years to your eventually becoming Professor of Information Systems? I assume that Peter Wakeford took on more of the services role, and that the staff involved in this area grew significantly.

FL – In brief, in the early 1970s, I became a Senior Lecturer with responsibility to establish graduate courses and research in the area of Management Information Systems – all under the aegis of the Department of Statistics. Initially we offered a diploma course, then a master’s. We attracted a cohort from the Civil Service College for the first years of the programme and so were assured a sizeable number of students. By the 1970s, the market for computer-savvy personnel was expanding rapidly in the United Kingdom and abroad. Hence our new course proved popular with graduates from other disciplines, including computer science and mathematics, as well as practitioners returning to university to develop a more rigorous and informed approach to their everyday tasks and responsibilities. The course attracted students from abroad: Europe, Hong Kong and Malaysia, and even the United States. We soon added a PhD capability to our portfolio and this programme graduated some of the current leading lights in the IS domain, including Richard Baskerville, Rudy Hirschheim, Bob Galliers, Bay Arinze, Oscar Guiterrez, Chrisanthi Avgerou, as well as prominent people in the world of consultancy.

In the United Kingdom, two individuals had an important impact on my own development. The first, and most important, was Enid Mumford from the Manchester Business School. Enid, with a background in organizational studies, and extensive experience working with the Tavistock Institute, had developed a socio-technical approach to the construction of information systems, an approach that focussed attention on all the stakeholders in the system. Building on the ideas from the Tavistock, her approach attempted to ensure that any new system incorporating computer technology would enhance the job satisfaction and quality of working life of all participants. Her methodology was called ETHICS 7 and had its roots in studies carried out by Mumford in the late 1960s and early 1970s, but was first published, co-authored with her research associate Mary Weir, in 1979 (Mumford and Weir, 1979). An important feature of Mumford’s approach was her willingness to embrace the work of other scholars and, with their permission, to incorporate this into her work. In particular, this applied to the work of her colleague at the Manchester Business School, Stafford Beer, whose ideas about the ‘viable system’ were part of her ETHICS methodology.

I first met Enid in 1970. The NCC had set up a WG to study and report on the economic evaluation of information systems. The group was chaired by John Dorey, then head of computing at Pfizer, and included Enid Mumford; John Hawgood from Durham University; Mike Reddington, Treasurer of Liverpool Corporation; and Bill Morris, also from Durham University and an associate of John Hawgood (Morris et al., 1971). I was invited to join the group. Enid, John and I quickly found that we shared values and ideas. As a result, we were able to outline an approach to evaluation based on the notion that any organizational system had to meet a range of objectives (multi-objective), and that different stakeholders attached different values to the objectives (multi-criteria). We suggested a set of procedures to operationalize these ideas, and they were published as part of the report from the working party. We called our method BASYC (Hawgood and Land, 1977) and it was subsequently embedded in the ETHICS methodology.

The second academic influence was that of Peter Checkland, from Lancaster University. Peter rejected the purity of the mathematical approaches to systems design promulgated by those trained in operational research and replaced it with his ‘soft systems methodology’ which recognized the messy nature of real-world problems. His students at Lancaster worked on projects in industry deploying and testing that methodology. Peter’s work was very influential in the UK’s academic IS community and beyond.

If Mumford, Checkland and others brought new insights from academia, my experience from having worked 16 years with Lyons and LEO implementing information systems brought its own insights. One in particular is worth noting. In LEO, we recognized that new or changed information systems implied innovation – new and different work and management practices. And innovation implied uncertainty in many aspects of the potential outcomes. To resolve or minimize that uncertainty required an experimental approach to systems design. Perhaps, an example from my LEO days can illustrate the point.

In its efforts to minimize the high cost of data preparation by punching holes in cards or paper tape, the LEO engineers constructed a device called Lector, which could sense marks made by pencil or pen on a paper form. A system was devised whereby a bakery goods salesman (and at that time they were all men!) would carry a pre-printed order form listing the items which the customer to be visited had previously ordered. The order form had a number of columns, each column representing a different quantity. The salesman was expected to make a pencil mark for each item ordered by the customer in the column, or combination of columns, indicating the quantity ordered. Before the system could be implemented, many elements had to be explored and tested – experimented on. These included:

The size, weight and colour of the order form, which would be robust enough to withstand handling by the salesman in a variety of conditions, and minimize marking errors;

The number of columns, which again minimized marking errors. It turned out that fewer columns, which involved the odd marking of more than one column to represent a quantity, were on average more reliable than more columns requiring fewer combinations of markings;

The best shape for marking aids to ensure the salesman put the mark in the correct place for reading. It turned out a marking aid in the shape of a top hat yielded the most accurate marking outcomes;

How the salesman took to the very different system from that used previously. We were interested both in the physical skill required for accurate marking and the attitude to the change and willingness to embrace it. In practice, after a few practice sessions, the salesman liked the new system, as the pre-printed form provided him – always him – with the orders placed for each bakery item on the form, with the quantities ordered in his four previous visits.

This experimental approach was devised by the systems research office originally under Simmons and subsequently Caminer.

I was joined at the LSE by Sam Waters who had been a junior colleague at LEO. Sam had joined LEO as a school leaver with a background as a working class Londoner. He was engaged by LEO almost as an experiment in taking on an unqualified school leaver. In the event, Lyons helped Sam to take a degree in Mathematics part time, to which he later added a PhD in thermodynamics. Sam proved to be an excellent teacher and understood the more physical side of systems design. Soon after joining me at LSE, he prepared a text on Systems Design published by the NCC (Waters, 1974). After a few years, Sam left the LSE for a more senior post at what was then Bristol Polytechnic, and later was promoted to a full Professorship at the same institution, now known as the University of the West of England.

Sam Waters was followed by Ronald Stamper. Ronald had been teaching systems analysis at BISRA – the British Iron and Steel Research Association – the research arm of the British steel industry. He brought to the LSE relevant teaching, and both practical and research experience, with an enquiring mind, coupled with an original approach to defining systems. When he joined us, he had just published a textbook on the management of information systems which was, for its time, groundbreaking (Stamper, 1973).

The Wikipedia entry offers a succinct and accurate summary of his work:

The main thrust of Stamper’s published work is to find a theoretical foundation for the design and use of computer based information systems. He uses a framework provided by semiotics to discuss and prescribe practical and theoretical methods for the design and use of information systems, called the Semiotic Ladder. To the traditional division of semiotics into syntax, semantics and pragmatics, Stamper adds ‘empirics’. ‘Empirics’ for Stamper is concerned with the physical properties of sign or signal transmission and storage. He also adds a ‘social’ level for shared understanding above the level pragmatics. (Wikipedia)

8

Ronald continued to develop his ideas and made some headway in trying to define a language – LEGOL (LEGally Oriented Language) – for expressing rules. 9 He built a small team to assist him, and his work took him away from the mainstream of our research and teaching. Nevertheless, when he left the LSE to take up a chair at the University of Twente in Holland, we felt we had lost an original mind. Another ex-colleague from my LEO days comes to mind – Kit Grindley, a fellow graduate from the LSE. He had joined Lyons as a Management Trainee. After leaving LEO, he joined the prominent consultants Urwick Orr and later became a director of Urwick Diebold, subsequently working for Price Waterhouse Coopers and being funded by them for the PWC part-time chair in Systems Management at the LSE. Kit, while working at LEO, had developed the idea of automating the process of systems development by designing a special language and systems generator he called Systematics (Grindley, 1975). The ideas behind Systematics were excellent, but despite a number of publications and building up a team to construct the generator up to 2006, the time for Systematics had passed and it had to be abandoned as funding dried up.

AB – How did these developments equate with other, similar ones in the United States and elsewhere?

FL – Gordon Davis visited the LSE in the mid-1970s. His own background was not in computing, but rather in accounting, with a PhD in Business Administration. As I have already mentioned, by the 1970s, he was recognized as one of the leading figures in the formulation of a separate academic discipline – MIS – and had published, with his colleague, Margrethe Olson, at the University of Minnesota, a very influential text – Management Information Systems: Conceptual Foundations, Structure and Development (Davis and Olson, 1974). The list of his PhD students includes many of the most highly esteemed IS academics world-wide. At the time of his visit, his approach to IS tended to be largely positivist, but nevertheless he was prepared to engage with the more qualitative approach he met at the LSE.

I had a Visiting Professor post at Wharton, 1975–1976, in the Department of Decision Sciences, where I worked with James Emery and Howard Morgan among others. At the same time – the 1970s – European academic IS was developing with the work of Niels Bjorn-Andersen, Janis Bubenko, Henk Sol and Alex Verrijn-Stuart.

This period also witnessed the emergence and growth of IFIP (founded in 1960). IFIP was established by UNESCO and launched at an international conference in 1959. One of the first international collaborations under IFIP auspices was the agreement on the specification for ALGOL 60. IFIP organized itself into technical committees (TCs), each covering a specific IT domain; for instance, TC3 covered education. Further TCs were added to the initial ones over time. The TC with which I was most involved, TC8, was founded in 1976 to cover what is now known as Information Systems. TC8, like all TCs, comprised a number of WGs, each group concerned with a specific aspect of the TC’s domain of interest. Thus, WG 8.1 concerned itself with the definition and evaluation of Information Systems, whereas WG 8.2 studied the relationship of an organization and its stakeholders with its Information Systems. Apart from regular IFIP conferences involving all TCs, each WG held its own conferences and workshops. I became a member of WG 8.2 and acted as its chair in the early 1980s. But there were a number of earlier conferences chaired by A.B. Frielink, picking up on the concerns of users about evaluating the benefits of introducing business computers. The first of these was held as early as 1965. It was followed in 1974 by a symposium in Mainz, one of several conferences to which I contributed papers (Land, 1974).

The 1970s also witnessed the emergence of what much later became ECIS, the annual European Conference on Information Systems – which started in 1993 at Henley Management School. The initial concerns in the early 1970s were with system requirements, including predicting what value a new system would add to the organization, and then moved to analysis and design. Only later, in the late 1970s and 1980s, did people’s ideas develop to start to take account of the wider impact of the systems being developed and implemented.

AB – These changes are evident from the successive versions of the ACM curriculum that were produced, starting in the 1960s. The ACM website acknowledges this evolution and continuing demand for re-focusing the curriculum for five distinct aspects: Computer Engineering, Computer Science, Information Systems, Information Technology and Software Engineering:

In the decades since the 1960s, ACM, along with leading professional and scientific computing societies, has endeavored to tailor curriculum recommendations to the rapidly changing landscape of computer technology. As the computing field continues to evolve, and new computing-related disciplines emerge, existing curriculum reports will be updated, and additional reports for new computing disciplines will be drafted. 10

FL – I was a founder-member of the IFIP/TC8 WG 8.2, concerned with ‘The Interaction of Information Systems and the Organization’. It was perhaps one of the first instances in which the major American thinkers about Information Systems worked together with their European counterparts. Prominent among the American members were Gordon Davis and Hank Lucas. 11 The Europeans included Niels Bjorn-Andersen from Copenhagen University, Rudy Hirschheim, my colleague from the LSE, Enid Mumford, Trevor Wood-Harper, Guy Fitzgerald, David Avison, myself, as well as academics from Scandinavia and the Netherlands, Germany and Belgium. A feature of the WG was the intense discussions that took place among the members regarding IS research: how to approach and legitimate our investigations. It was clear that the American academic scene was dominated by an orientation which could be described as business school values, coupled with an approach that focussed on technological possibilities and positivist thinking about rigour in research. In contrast, the Europeans tended to focus primarily on perceived social values and were far more open to qualitative research that encompassed a pragmatic approach to development methods, as well as ideas and concepts associated with the work of Habermas and critical theory. This reflected the ways in which the impact of the technology and systems was gaining wider attention outside the purely technical realm (see Lucas et al., 1979).

One outcome of this was the WG8.2 Manchester Conference in 1984, now recognized as a landmark event (Mumford et al., 1985). The conference brought together many of the most active IS academics from all over the world and provided the academic IS community with an understanding of the issues that were being debated, and the varying perspectives on these issues. It did not, and could not, provide a single solution to the issues, but enabled most participants to recognize what each approach brought to an understanding of IS, and what role academia should play. Although the WG paid lip service to the need for practitioners to be involved in these deliberations, in practice their involvement was minimal. Indeed most of the latter would have been dismayed by the nature of the academic discourse, and in particular, what they would have seen as the highly esoteric and largely impenetrable language.

AB – I know this has been one of your enduring criticisms of academic IS, starkly illustrated by what you postulate as the wonderment experienced by any IS practitioner stumbling upon an academic IS discussion of actor–network theory! (We return to this issue below.)

FL – The 1970s also saw the start of the PhD programme at LSE. LSE began to grow its provision in IS, attracting more students – many of them international – and so bringing in more funding, which provided a basis for new posts. By the 1980s, our department had grown and developed, and so by the late 1980s, several of the people I had recruited – some from the PhD programme – were in line for promotion. It now became evident, if it had not been before, that many academics at LSE were suspicious of IS. I am not sure that they really understood what it involved as an academic study. In 1986, one of my colleagues applied for promotion/tenure and was turned down, bringing into question not only the merits of the application itself but also the entire academic value of IS. At that point I left LSE. Other colleagues also left, moving to other institutions both in the United Kingdom and elsewhere.

Soon after, I was offered a 5-year post at the London Business School (LBS). The contrast between LSE and LBS was stark. At LBS, the students’ primary interest was in their career prospects as consultants, preferably with one of the big consulting companies, whereas at LSE, although this may have been one concern, it was generally overshadowed by genuine academic interest, initially in learning more about the issues, and then in moving into research and teaching. I felt far more comfortable in the latter environment.

In 1991 I left LBS, at the end of my 5-year term, and was then appointed as a Visiting Professor at LSE, later becoming Emeritus Professor, a post I continue to hold. So although I had been a Professor at LSE, my appointment to emeritus status came as a move from a visiting post rather than an established one – which was and is highly unusual. I have also held visiting professorial posts at various universities in Australia and India.

The emeritus phase and the current state of IS

AB – We have covered your time working on LEO and the move to LSE, drawing to some extent on some of your published accounts, but also bringing out several key points:

- The development of computer technologies in the 1950s and early 1960s;

- Lyons’ forward thinking regarding organizational computing and information systems;

- The ways in which the UK IT industry lost its way and failed to build upon its leading position and early promise;

- Your move to LSE and your key role in developing academic IS, including confronting challenges from the academic establishment;

- The development of IS courses and curricula, and the important influence of the polytechnics in the United Kingdom.

You have already related the circumstances that led to your appointment, in 1991, as Emeritus Professor at LSE. In your emeritus period you have continued to be extremely active and visible. This has included being a key figure in many of the public campaigns centred on IT and related issues; making significant contributions to the project to publicize the history of early commercial computing – particularly the LEO project – and also to ensuring that the history and documentation are preserved and made accessible; collaborating with and encouraging younger colleagues in their work; and generally continuing to act as a guiding and influential figure across many different contexts associated with or related to Information Systems.

The preceding discussion has expanded upon some of your writings on LEO and the early days of commercial computing, as well as the development of IS as an academic area. In your introduction to my book Thinking Informatically (Bryant, 2006), you referred to information systems as a

domain of study (operating under) a variety of names. Its Journals and Conferences flourish. But it is a domain in crisis. Its legitimacy as a separate field of study has been questioned. Student recruitment in the developed world, after many years of sustained growth has fallen sharply. At the same time a divide has grown between practitioners and academic scholars. An ICT professional walking into a workshop on the role of the actant in Actor Network Theory or the importance of nomological nets would retreat in bewilderment. (Land in Bryant, 2006: ix)

I think the point you make should be taken as an enduring and valuable admonition, and not confined only to IS. But looking at recent conferences (e.g. ICIS, AMCIS, ECIS), and the range of IS journals, it is evident now that many of the issues of current concern – the ‘hot topics’ – do indeed emanate from practice and everyday experience. In part this is because digital technology in all its various forms has taken centre stage, and so provides a necessary starting point for research and discussion. On the other hand, the basis for this was already there in the early work you did with Mumford and others and was something you fostered through the choice of papers published in the Journal of Information Technology (JIT), which you founded with Igor Aleksander in 1986. Notable early examples include Somogyi and Galliers (1987), Clegg (1988), Land et al. (1989), Willcocks and Mark (1989), Hochstrasser (1990), Ward (1990), Fiedler et al. (1994), Clark et al. (1995) and Eason (1996).

Earlier I referred to how I used your definition of an Information System back in the 1980s and its impact on my students and my own ideas. We have also pointed out the fact that in the United States, the term MIS was coined both for the academic area and the topic of concern, also that the focus on Management Information Systems is far from neutral and comes with a good deal of what we might see as conceptual baggage that requires unpacking and close examination. In part this may have been a result of the ways in which the field developed in different countries. In the United States, departments of MIS usually emerged from business schools, whereas in the United Kingdom, the various departments of IS usually developed from within departments of computing or computer science. When I developed connections with like-minded colleagues in mainland Europe in the 1980s and 1990s, their departments were usually designated as Information Management, and mostly associated with faculties of Applied Social Science or something similar. Your own experience at LSE and then at LBS illustrates some important aspects of these differences.

Current developments have resulted in many IS departments or their equivalents altering course. At LSE, IS is now part of the Department of Management, and across Europe and North America many IS groups have, in similar fashion, been incorporated or re-incorporated into departments whose main focus is on business and management. Each department in each institution has its peculiarities and specialisms, but it often seems to be the case that IS in North America leans to the managerial and technically oriented, whereas in continental Europe, particularly Scandinavia and NW Europe, it is the social and political aspects that predominate. This was certainly the case in the 1980s and 1990s, with US-based journals favouring quantitative submissions, as opposed to European journals favouring interpretative and qualitative ones. Of course there are many important exceptions to this, particularly the case of ‘Europeans’ teaching and researching in American universities, working with and encouraging like-minded colleagues.

As someone who set up the first department of IS in the United Kingdom, you clearly had to contend with the problem of justifying the academic credentials of IS – explaining what the term actually means, and how and why it is distinct from IT or other terms. You have already referred to the specific problems at LSE that led to your departure and the dispersal of many of your colleagues in the 1980s. How do you assess the current status of IS, and its future as an academic subject?

FL – IT, or rather ICT (information and communication technology), now encompasses far more than business, administrative, engineering and scientific activities. It permeates a very wide range of human activities ranging from how politics is done to how we arrange to meet sexual partners. It plays a critical role in conflict and warfare, it has become the basis for interpersonal communication and it has made the companies that provide the technology the richest and most successful companies there have ever been. At the same time, it has provided unrivalled opportunities for what we have termed the ‘dark side’ to flourish and wreak mischief and mayhem on an unprecedented scale. My concern is that within academia, the old IS or MIS groups have lagged behind in understanding, or worse in seeking to understand, the implications of the way ICT is developing and its impact on all human activity. I hope I am wrong, but I do fear that IS academia is behind the curve.

AB – When I was appointed to a personal chair in the 1990s, I deliberately designated myself as Professor of Informatics, taking and slightly revising Donna Haraway’s (1985) characterization of the term. Hence the characterization of informatics as the ‘technologies of information (and communication) as well as the biological, social, linguistic and cultural changes that initiate, accompany and complicate their development’. In the meantime, the term itself has been ‘borrowed’ by computer scientists, for some reason feeling the need to re-brand themselves. In the United States, Rob Kling coined and popularized the term ‘Social Informatics’, but use and recognition of the term seems to have dissipated in recent years.

Mumford used the term ‘socio-technical’ in her work, which clearly signposted the way in which she wanted to relate technical and technological issues to social ones. In light of this work and other related studies, the field of STS – Science, Technology and Society – has developed. While generally seeing such developments as encouraging, I also see some problems in the ways in which such linkage of ‘society’ to ‘technology’ builds on the assumption that they are two distinct realms. Consequently, I prefer Raymond Williams’ view. He discounts both ‘technological determinism’ – where technology is seen as self-generating and operating in an independent sphere, with technological developments leading to new social conditions – and also what he terms ‘symptomatic technology’, which sees technology R&D as a distinct sphere, where the results are taken up and used by existing social processes. The two positions, although they appear contrary, share the assumption that technology is seen as an isolated facet of existence, outside society and beyond the realm of ‘intention’. Instead Williams argues that technological advances are ‘looked for and developed with certain purposes and practices already in mind’, these purposes and practices being ‘central, not marginal’ as the symptomatic view would hold. The history of LEO encapsulates this very clearly, and the ways in which digital technology was taken up and developed in the corporate world can be understood in a similar fashion, but on a far larger scale (see Williams, 1974: 11–12).

Williams was writing in the 1960s and 1970s, and his main focus was on TV rather than computers and digital technology. I think his overall position is important and should be borne in mind, even if people find aspects open to criticism. Manuel Castells, for instance, offers a succinct if unacknowledged account of Williams in his rejection of technological determinism: Of course technology does not determine society. Nor does society script the course of technological change, since many factors, including individual intuitiveness and entrepreneurialism, intervene in the process of scientific discovery, technological innovation, and social applications, so that the final outcome depends on a complex pattern of interaction. Indeed the dilemma of technological determinism is probably a false problem, since

Williams’ account, however, needs to be supplemented and enhanced to take account of the ways in which digital technology is qualitatively different from other forms of technology; something that Stafford Beer understood and encapsulated in his three questions alluded to earlier, and which we might now rephrase: The question which asks how to use (the computer)

FL – Yes indeed, and Geoff Walsham (2012) from Cambridge University raised the issues in a provocative paper in JIT, which led to a debate in subsequent issues of JIT including a contribution from ourselves (Bryant and Land, 2012). But returning to the academic mind-set, we might also distinguish between the scientific mind-set, which searches for all-encompassing theories to explain the way IS is deployed and its impact, and the engineering mind-set, which emphasizes solutions to problems even if the underlying causes remain undiscovered. The engineering mind-set is content with providing a ‘satisficing’ solution, and the scientific mind-set is not satisfied until an optimal solution can be proven. IS or MIS faculties tend to be dominated by one or the other of these mind-sets. The difference is exemplified by the debate raging around the meaning and significance of the concept of ‘design science’. A panel discussion in which I participated at the 2008 ECIS conference in Milan debated the significance of Design Science.

AB – You and your colleagues, such as Mumford, Beer and others, were already aware of this in the 1970s and 1980s. But you were the exceptions, and it is still the case that people’s understanding of the nature and impact of these technologies is highly imperfect and largely lacking in terms of the wider social and political perspectives. Critically, this is the case among those developing and establishing public policies, projects and regulations. Over the years there have been several notable instances where you have been invited or felt impelled to get involved, including the NHS, and various issues concerned with privacy and security.

FL – One of the features of an academic career is being called on as an expert to support official enquiries. The first time I was called was to join a small group made up of members of the Royal Statistical Society and the British Computer Society (BCS). The year was 1971 – the year of the UK Census. Jeremy Thorpe, leader of the Liberal Party, had raised the issue of the security of the Census and the threat to individual data privacy from the sale of data to third parties. Thorpe stood up in the House of Commons and declared he would rather pay the £50 fine 12 than complete his census form (in practice, like the vast majority of the population, he did fill in his census form). The government felt it had to respond to the alarm raised by Thorpe’s intervention and accepted the invitation of the BCS to investigate Census procedures. 13 The furore over the use of computers and sale of data to third parties is echoed by current concerns about the activities of Facebook and Cambridge Analytica.

Our group of experts visited the Census Office premises. From the start it was clear the notion of security threats was far from the minds of management and staff. We were allowed entry on the assumption, on the part of the security guards, that we were a bunch of ICL engineers – indeed such a visit was scheduled for later that day. The computer premises themselves, with data and magnetic tapes lying around, were visible and accessible from the wide-open windows (it was a hot day). However, there was no indication that any of the stuff lying around had been compromised.

Although the census files available for sale had been stripped of identifying information and aggregated, it would have been possible for a smart computer system to identify individuals from common characteristics. To obviate that possibility, data from individuals living in isolated areas were deliberately blurred. We saw the main threat to privacy as being possible misfeasance by enumeration staff, despite the attempt to avoid that risk by making them operate outside their own immediate neighbourhood.

Our conclusion was that despite the lack of concern about security at the census premises, there was no evidence of any large-scale failures. The main threats were on a small scale, from enumerator fraud and the possible identification of individuals by searching for common characteristics in areas where aggregation was not possible. Our report made a number of proposals for tightening procedures.

At the following census, in 1981, we were asked to see to what extent the operations had been made more secure and we were impressed by the way the census management had followed our recommendations.

Early in the 1980s, Sandy Douglas and I, working as his number two, were invited to act as specialist advisers to the House of Commons Select Committee on Science and Technology, investigating the UK computer industry. The committee was chaired by Airey Neave MP, later assassinated by the IRA. Airey was a shrewd and knowledgeable chair, but MP members of the committee varied from those who just came along for the ride, to those who had a genuine interest in the investigation and contributed effectively both to the investigation and to the preparation of the final report. But the unsung hero was the Clerk of the committee. He was a member of the House of Commons staff, highly intelligent, and although lacking in prior knowledge, he proved to be a quick learner. His responsibilities included all the scheduling of witnesses, briefing them on what the committee expected from them, and playing a key role in drafting the committee report.

Our role as advisers was to brief committee members on the issues, suggest the names of witnesses to call, and provide individual members with drafts of the questions they should put to the witnesses. The committee would meet before scheduled meetings and discuss the line of questioning, allocating particular themes to the individual MPs. This tended to involve long discussions on what direction the committee should take, and it was interesting to note the limited role played by political party differences in the debates, though it was never completely absent.

During meetings with witnesses, we as advisers would sit behind the MPs, and as the interrogation of witnesses took place, we would write suggested follow-up questions on slips of paper and pass them to the chair or sometimes selected MPs. Of course, MPs often took their own line and did not necessarily follow our advice. Nevertheless, when it came to writing the report, our influence was significant in nudging the committee in directions we felt to be critical.

One interesting lesson I learned was that many of the witnesses came to give evidence in good faith, with the feeling that they were there to help the committee – expecting to share their knowledge with the MPs in attendance. They were unaware that the Select Committee modus operandi was nurtured in the UK’s adversarial justice system, so witnesses were interrogated, often in a hostile manner. Some witnesses were outraged by this unexpected treatment by the MPs. Civil Servant witnesses, of course, knew how the committees operated and were prepared for such interrogations, and by and large defended their ground successfully.

My second experience occurred a few years later. This time I was the sole technical adviser to the Select Committee, chaired by Kenneth Warren MP, established to examine the UK computer industry and to report what the committee thought the role of government should be. The actual operation was very similar to that of the earlier committee I worked with. Kenneth Warren proved to be an excellent chairman, with to my mind a good grasp of the issues facing the UK computer industry. It was clear by that time that the UK industry was in decline and American companies dominated the market. The successive mergers of the UK industry had not halted the decline, and now much of the growing software industry too was dominated by US companies, and leading UK companies were being taken over by outsiders. The committee produced a report advocating a stronger role for the government in supporting British industry.

Internally, and completely unofficially, the report was labelled ‘The Land Report’, but that underplays the role of the Chair supported by most of the members, and the careful drafting of the Clerk. However, when the report was debated in the House of Commons, its somewhat un-Thatcherite message was rejected, with Conservative MP Emma Nicholson, an ex-Ferranti employee, leading the critics. A report in New Scientist commented on the interrogation of Lord Young by the Committee. 14

My next public engagement came much later and after my retirement. The Labour Government under Tony Blair had been persuaded that the salvation of the NHS required an intense use of information technology, and that the NHS had fallen behind in its deployment of computer technology for many aspects of its operations. Consulting companies, asked at considerable expense to review NHS use of ICT, supported the notion that a root and branch revision of technology deployment was required. In practice, the NHS had been using ICT for a long time, but there was little standardization in technology or systems design, and there was much fragmentation. Systems varied greatly in scope and in quality ranging from world class to failing systems.

The government launched a multi-billion-pound initiative, the NPfIT, National Programme for Information Technology, to reorganize the NHS ICT systems. To achieve this, they established a centralized directorate to oversee the building of the system, though the implementation of different aspects was outsourced to a number of consultancies and computer companies including, for example, Fujitsu. The timetable announced by Tony Blair for the completion of the project was less than 2 years. Existing systems and systems which were being revised were to be replaced by the rollout of the new system. The underlying notion was that the process could be streamlined by the newly designed systems, a one-size-fits-all philosophy: for instance, a system suitable for acute treatment units would also work for the diagnosis and treatment of mental problems.

It became clear to a number of observers, including me, that the project was seriously flawed on multiple levels. I was part of a group of 23 academics and medics who got together as a consortium to ask the authorities to review the whole project as a matter of urgency. At first, the Director of the project appeared to be sympathetic to the concerns we highlighted and agreed a review would be valuable for the health of NPfIT, but that soon changed, and our group was accused of asking for a review merely so that we could be appointed as well-paid consultants. As far as we knew, none of our concerns was addressed, despite the fact that the timetable for completion was slipping badly and costs were escalating. In time, sub-contractors, notably Fujitsu, withdrew from the project, and when the Labour Government was replaced by the coalition of Conservatives and Liberal Democrats (2010), the decision was taken to abandon the NPfIT in 2011. It is ironic that some of the systems built and implemented were first class. 15

AB – I used the term ‘palimpsest’ quite deliberately in the introduction, as it should evoke the realization that the current state of IS practices and research is built upon earlier stages – some of which endure, albeit often unrecognized or taken-for-granted, while others have been effaced and forgotten. The ideas that developed within and around the LEO group are a prime example of this, and I vividly recall the presentation given by Eric Schmidt, then CEO of Alphabet/Google, at the special event to celebrate LEO and those involved with it at the LSE a few years ago. He opened proceedings by explicitly drawing attention to the ways in which the LEO group had developed and pioneered an understanding of commercial and general purpose computing that has continued to provide the basis for innovations in digital technology for more than 50 years – at the same time lamenting how the origins of this foundation had been largely forgotten.

In recent years you have been particularly keen for people to devote time and energy to articulating the history of IS from its origins in the middle of the 20th century and have made key contributions that seek to re-discover many forgotten or ignored aspects. In some cases you have referred to these efforts as a ‘re-inventing’ of IS history, as well as a ‘re-discovering’.

The work you did with Mumford and Hawgood, and the work of Checkland, have already been referred to. I think Checkland’s work continues to be taught widely; I certainly cover his soft systems method (Checkland, 1981) in my teaching and have done so for 30+ years. But I am also keen to ask your opinion of Russell Ackoff, now largely forgotten, but someone whose work should be far more acknowledged in the IS canon. His paper ‘Management Misinformation Systems’ was published in 1967 and retains its relevance – perhaps even more so – after 50 years (Ackoff, 1967). The same applies to his work on systems theory, including ‘Towards a System of Systems Concepts’ (Ackoff, 1971). Any re-inventing-cum-re-discovering of IS/MIS needs to incorporate his work, particularly since it provides a link to other key writers such as C. West Churchman (1971), and Emery and Trist.

FL – Agreed: I also want to note in particular Ackoff’s (1988) The Future is Now. Ackoff’s work led directly to Jonathan Rosenhead’s (2001a, 2001b) work on Robustness Analysis, and in turn to my distinguishing between planning horizons and forecasting horizons in Designing Information Systems. My notion of ‘future analysis’ was adopted as part of Mumford’s ETHICS socio-technical methodology. Few, if any, of the then highly touted methodologies promoted by academia, and the growing band of consultants, paid attention to the problems of reconciling short forecasting horizons in a turbulent world with the far longer time to design, construct, test and implement an information system. Some noted the importance of risk analysis without providing much in the way of risk analysis process. In my view, the failure to assess the possibility of differences between the two horizons accounts for many IS failures. And that problem is still with us today – witness the 2007 banking crash and the recent failure of Carillion. 16 The popularity, today, of agile methods is an attempt to reduce the time taken to implement a system, but I am not convinced that they resolve the key problems we identified all those years ago.

AB – In the late 1990s we coined the term ‘The Dark Side of IS/ICT’, and it is now widely used in many conferences and papers. To some extent, MIS as a subset of IS might be seen as somewhere between the light and the dark sides, all too often taking the managerial and technicist perspectives as givens or as primary standpoints. To some extent, ICT and digital technologies have led to enormous benefits, which in many cases have been accessible to and welcomed by a significant proportion of the world population – encompassing different social strata, ethnic groups and geographical regions.

Yet, developments in recent years in areas such as Big Data, social networks, and AI and robotics are seen by many as having a range of sinister and ominous implications and ramifications. Some might view these as accidental or contingent, but if we adopt Williams’ view of technology, many of these developments and innovations might be better understood as ‘looked for and developed with certain purposes and practices already in mind’. IS researchers and academics have to be mindful of this characteristic and work to establish a critical distance on many of these key issues, as opposed to adopting something more akin to a ‘gee-whiz’ orientation to the latest technologies, or more accurately the latest claims evoked by supposed technological innovations.

The grand economists at LSE were shocked and literally rendered speechless when the Queen visited there in 2008 and asked why none of them had foreseen the meltdown. They did eventually respond, but only after several weeks of delay! 17

IS academics should take this lesson to heart, with all that it entails for IS curricula and research issues. A largely neglected writer, Günther Anders, writing in the immediate aftermath of WWII (World War II), discussed what he termed ‘Die Antiquiertheit des Menschen’ 18 – coining the term ‘Promethean shame’ (Anders, 1979). His argument, now being re-discovered and re-considered after a neglect of many decades, is that rather than feeling pride in our technologies and inventions – in a manner similar to that felt by Prometheus after he had created humans from clay and then defied the gods by stealing fire from them and giving it to humanity – we now feel shame. He proposed what he called a ‘philosophical anthropology in the epoch of technocracy’ (Anders, 1979: 1):

By ‘technocracy’ I am not referring to the supremacy of the technocrats (as if they were a group of specialists who dominate contemporary politics), but to the fact that the world in which we live and which surrounds us is a technological world, to such an extent that we are no longer permitted to say that, in our historical situation, technology is just one thing that exists among us like other things, but that instead we must say that now, history unfolds in the situation of the world known as the world of ‘technology’ and therefore technology has actually become the subject of history, alongside of which we are merely ‘co-historical’. (Anders, 1979: 1)

Anders terms this a ‘Copernican revolution’, which few, if any, had grasped in the 1950s – a failure that has been perpetuated and exacerbated to the present day, and on a much larger scale. I have added to what he terms his ‘specific thesis’, bringing it up to date, but underlining its prescience as follows:

My most specific thesis [is] that it is false to claim that the atomic bomb [and digital technologies] exist[s] in the framework of our political situation; to the contrary, it is clear that politics [and social interaction] take[s] place in the framework of the atomic [digital] reality. (based on Anders, 1979: 1 footnote)

Anders acknowledges that some might counter his argument, claiming that ‘we humans have made these machines ourselves. Our natural and legitimate attitude to these machines is therefore pride’. But this is to use the terms ‘we’ and ‘our’ as if they applied to all of us, rather than to a very small minority of ‘researchers, inventors and experts who truly master these mysterious realms’. For the rest of us, these achievements ‘strike us as strange and disconcerting’, and Anders singles out what he refers to as ‘the cybernetic ones’ – so he recognized that even in the 1950s these technologies were somehow distinctive.

I find an enormous and significant resonance between Anders’ writing and your trajectory. You began as virtually a complete novice, confronted by a strange technology and complex context, from which you derived an understanding that encompassed far more than the specific technology and context themselves. On the basis of that early experience, you then grew into a position of critical understanding and analysis that provided the basis for you to pass this on to colleagues and students, all the while continuing to develop your own understanding by working with key figures making equivalent significant contributions. Your emeritus period has been a continuation of this, albeit that your efforts to explain the ‘strange and disconcerting’ aspects to the ‘great and good’ have largely fallen on deaf ears – no fault of yours, and that is in no way to detract from your efforts and reputation. I leave it to you to add any final comment. But I will also add the opening words of Anders’ ‘Die Antiquiertheit des Menschen’ – volume II, as an apposite summary:

It is not enough to change the world. That is all we have ever done. That happens even without us. We also have to interpret this change. And precisely in order to change it. So that the world will not go on changing without us. And so that it is not changed in the end into a world without us.

FL – Yes, Anders provides valuable insights. If only we could ‘interpret this change’ without wearing blinkers. But our interpretations tend to be instrumental and biased; the unfolding of actuality is more often determined by serendipity than by design. And perhaps we need to remember historian E.H. Carr’s much quoted aphorism: ‘Study the historian before you study the facts’ (Carr, 1961). Is that a negative end to our conversation? I hope it is not. I have found my life in the shadow of the evolving information age constantly exciting and often enabling, even if at times that excitement turns to anger. And looking back I value the interchange and companionship of the IS community and am proud to have been recognized by the Association for Information Systems for life-time achievement with their top honour, the LEO Award.

Afterword

AB – The last section of this conversation, on Frank’s emeritus phase, moves from a chronicling of his crucial contributions into consideration of wider issues, including ‘the dark side of ICT’, in effect broaching the question ‘what has LEO [and what followed in its wake] wrought?’ Consequently, it has been suggested that we address the issue of the ethics of ICT/ICS (information and communication systems), and add a fourth question to Beer’s list – that is, ‘An even better version is the question asking what, given (computers) digital technologies, (the enterprise) society SHOULD BE! That is, how can we lighten the dark side.’

Taking up these concerns in any substantive manner would extend this discussion well beyond its already considerable length, but that is not to deny their importance. A number of key issues are, however, worth stressing. It is certainly crucial that we gather and engage with the testimonies of the founding figures of IS, particularly someone as important as Frank Land.

Frank’s career has been described as the pattern of experience of many who were early to a new technology. I was reminded of Joseph Campbell’s ‘The Hero With A Thousand Faces’. The hero starts out naively in the ordinary world, gets a call to adventure, and eventually crosses into a special world where the hero encounters and must endure several ordeals. After some time in the special world the hero then returns to the ordinary world, but in the process has acquired some form of elixir, in FL’s case new knowledge about computers and business systems. Usually the ordinary world has difficulty understanding and relating to the elixir when it is first presented to them and some form of education is required. In some cases, the elixir is rejected, even if it is the solution to the problems the people face. 19

In addition, however, it is critical that these experiences and insights are set against a context that avoids simple and simplistic explanations, such as those founded on technological determinism, or on the use/abuse of a technology that is itself seen as neutral. Hence our earlier quote from Weizenbaum that ‘the remaking of the world in the image of the computer started long before there were any electronic computers’ (Weizenbaum, 1984, p. ix), coupled with the discussion of Raymond Williams and Günther Anders, who provide an orientation that alerts us to the ways in which technological developments are always ‘looked for and developed with certain purposes and practices already in mind’ – where ‘technology has actually become the subject of history, alongside of which we are merely co-historical’, so that ‘politics [and social interaction] take[s] place in the framework of [digital] reality’.

The implications of this demand further elaboration and discussion, and ought to be central to the academic IS community in a manner that can support and influence the practices of IS/ICS – avoiding the shortcomings noted by Frank above. Again, one of our reviewers offered the important insight that, in effect, Frank and colleagues such as Mumford and those who founded and contributed to the STS (socio-technical systems) approach were ‘struggling to find a language and concepts to frame the interpenetration of technology with the human elements in society’.

Some readers might, at this point, feel dissatisfied that we have merely hinted at these important issues, referring to but not developing the ideas of, for instance, Anders, Weizenbaum and Williams. In our defence, we would argue that this conversation should be seen as offering critical insights, articulating the history of IS, and inevitably raising at least as many questions as it answers – but, we hope, indicating important and practical paths that can be followed and developed.

Recommendations for further reading

The scope of the conversation has encompassed several extensive aspects, and readers can be forgiven for some bewilderment at this point. Names of authors, organizations and sources have been used liberally, so we now offer a few suggestions for further reading on some of the issues that have been addressed (full details of each item are listed in the references).

LEO computers

- G. Ferry – A Computer Called Leo

- D. Caminer et al. – LEO: The Incredible Story of the World’s First Business Computer

- F. Land – Implementing IS at J. Lyons

- M. Hally – Electronic Brains: Stories from the Dawn of the Computer Age

- T. Harding – Legacy: One Family, a Cup of Tea, and the Company That Took on the World

Emergence and development of IS/MIS

- A. Bryant – Thinking Informatically

- Bryant et al. – What is history? What is IS history? What IS history?

- G. Davis – The Past and Future of Information Systems: 1976–2006 and Beyond

- E. Mumford et al. – Research Methods in Information Systems

Critiques of technology and ICT

- G. Anders – The Obsolescence of Man

- S. Beer – The Brain of the Firm

- D. Haraway – A Manifesto for Cyborgs

- R. Williams – Television: Technology and Cultural Form

Key figures

Lyons and LEO

George Booth – Lyons Company Secretary

John Simmons – Lyons Chief Comptroller

John Barnes, David Caminer, Mary Coombs (née Blood), Betty Cooper (née Newman), John Grover, Leo Fantl, Derek Hemy, Ernest Lenaerts, John Pinkerton, Anthony Salmon, Oliver Standingford, Thomas Raymond Thompson

Early computer pioneers and researchers

Howard Aiken (Harvard, USA), Douglas Hartree (Cambridge, UK), Herman Goldstine (Princeton, USA), Maurice Wilkes (Cambridge, UK)

Tavistock Clinic and associates

Ken Bamforth, Stafford Beer, Peter Checkland, Fred Emery, John Hawgood, Enid Mumford, Eric Trist, Mary Weir

LSE and early academics

Niels Bjorn-Andersen (Denmark), Don Conway (Stafford, UK), Dan Couger (USA), Gordon Davis (USA), Sandy Douglas (LSE), Sam Eilon (Imperial College, UK), James Emery (USA), Gordon Foster (LSE), Patrick Losty (LSE), Adriano De Maio (Milan, Italy), Richard Nolan (USA), Margaret Olson (USA), Ronald Stamper (LSE), Alex Verrijn-Stuart (Leiden), Daniel Teichroew (USA), Giuseppe Traversa (Pisa, Italy), Peter Wakeford (LSE), Sam Waters (LSE)

LSE PhD Alumni – students of FL

Bay Arinze, Chrisanthi Avgerou, Richard Baskerville, Bob Galliers, Oscar Gutierrez, Rudy Hirschheim

IFIP TC8 WG 8.2 and forerunners

David Avison, Niels Bjorn-Andersen, Gordon Davis, Guy Fitzgerald, A.B. Frielink, Rudy Hirschheim, Hank Lucas, Trevor Wood-Harper

Key intellectual figures (see bibliography for selected writings)

Russell Ackoff (USA; 1919–2009) – a key figure in the development of post-war systems thinking; many of his writings – some dating from the 1960s – have proved highly prescient and of continuing value.

Günther Anders (Germany/Poland, USA, Austria; 1902–1992) – philosopher in the phenomenological tradition, studied with Husserl and Heidegger; his essays on technology, the threat of nuclear destruction and other technologies were largely neglected until re-discovered in the 1990s.

Stafford Beer (UK; 1926–2002) – developed new ideas about organizational development and systems ideas, including VSM (Viable Systems Model).

Peter Checkland (UK; 1930–) – systems theorist who developed Soft Systems Thinking, an enhanced form of Action Research.

C. West Churchman (USA; 1913–2004) – notable contributions to ethical systems theory, and highly influential on systems thinkers such as Checkland and Mumford.

Enid Mumford (UK; 1924–2006) – key systems thinker, including her ETHICS approach; associated with the socio-technical orientation and development of work originating with the Tavistock.

Raymond Williams (UK; 1921–1988) – key figure in the development of cultural studies; key works include Culture and Society (1958) and The Long Revolution (1961). Politics and Letters: Interviews with New Left Review (1979) is essentially an extended ‘conversation’ with Williams, covering his biography and intellectual career.

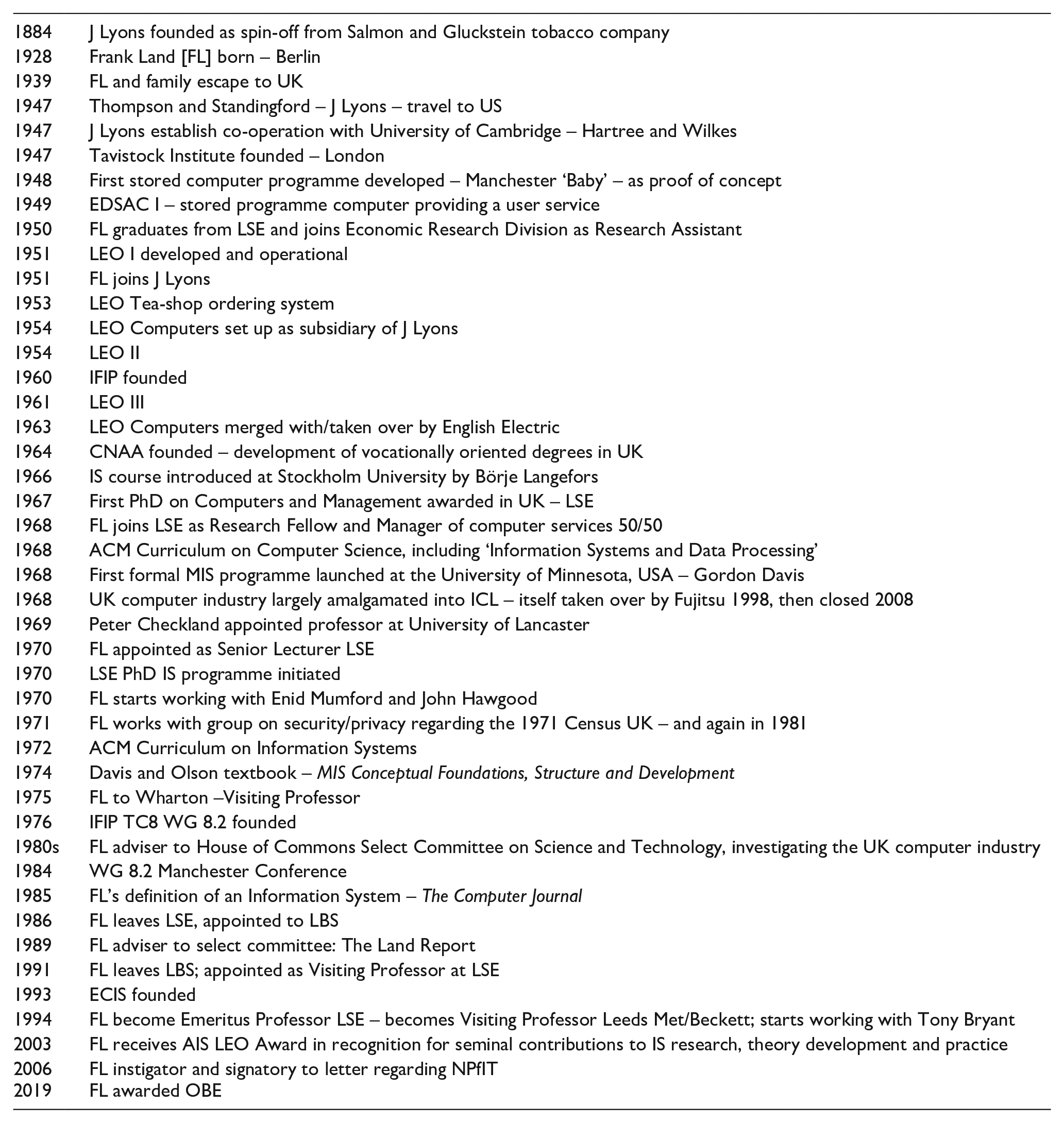

TIMELINE of key dates and events

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship and/or publication of this article.