Abstract

African public administrations are in an especially difficult position with respect to the adoption of artificial intelligence (AI), both in harnessing its benefits and in managing its potential harms. Through a narrative review of existing research, this paper synthesises findings from previous digital government implementations on the African continent, and considers the implications for AI use. The analysis is centred on the way public officials are bypassed or empowered to work with emerging technologies, and the influence of public service values on their adoption. Four concerns emerge as relevant: Integrity of recommendations provided by decision-support systems, including how they are influenced by local organisational practices and the reliability of underlying infrastructures; Inclusive decision-making that balances the (assumed) objectivity of data-driven algorithms and the influence of different stakeholder groups; Exception and accountability in how digital and AI platforms are funded, developed, implemented and used; and an expectation of Complete understanding of people and events through the integration of traditionally dispersed data sources and systems, and how policy actors seek to mitigate the risks associated with this aspiration.

Introduction

Recent technical progress around machine learning (ML), as a prominent sub-field of artificial intelligence (AI), has reignited interest in automated or algorithmic decision-making, including its potential to ‘transform’ government bureaucracy (Araya, 2019). Most automation in current public sector information technology (IT) systems involves making a decision or recommendation based on the application of relatively fixed rules to an analysis of a limited number of variables. For example, the algorithm in a welfare IT system may be configured to allocate a certain grant amount to a parent according to the household income and number of children.
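To make the welfare example concrete, the following is a minimal sketch of such fixed-rule automation. The income ceiling, per-child amount and cap on children are hypothetical values chosen only for illustration, and are not drawn from any actual grant scheme.

```python
from dataclasses import dataclass

# Hypothetical policy parameters, set by human officials or programmers.
INCOME_CEILING = 5000    # monthly household income above which no grant is paid
GRANT_PER_CHILD = 500    # flat amount paid per eligible child
MAX_CHILDREN = 6         # maximum number of children counted


@dataclass
class Household:
    monthly_income: float
    num_children: int


def grant_amount(household: Household) -> int:
    """Apply fixed, human-configured rules to two variables."""
    if household.num_children <= 0:
        return 0
    if household.monthly_income > INCOME_CEILING:
        return 0
    return GRANT_PER_CHILD * min(household.num_children, MAX_CHILDREN)


if __name__ == "__main__":
    print(grant_amount(Household(monthly_income=3000, num_children=2)))
```

Every rule in this sketch is visible and was written by a person, so an official can in principle trace why a given household received a given amount.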

Figure 1. Framework for exploring AI adoption and impact in the public service.

ML is different from this largely human-configured automation in that the set of rules defined in the algorithm is generated by a computer, which considers a much larger amount of data and variables than a human could interpret and programme. Moreover, the algorithm can automatically adjust as new data is received to improve accuracy. In this way ML introduces a new, meta-level of rule creation and automation into digital government which has important implications for transparency, fairness and accountability in public planning and administrative decision-making (Bullock et al., 2020; Kuziemski and Misuraca, 2020; Zuiderwijk et al., 2021).
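The contrast with human-configured rules can be sketched with a deliberately simple learner. The code below is an illustrative assumption rather than a description of any deployed system: it derives a single income threshold from past approval decisions (hypothetical data), whereas real ML models consider far more variables and use more sophisticated methods.

```python
def learn_income_threshold(records):
    """Learn the rule 'approve if income <= t' from past decisions.

    records: list of (income, approved) pairs, where approved is a bool.
    Returns the candidate threshold t that misclassifies the fewest
    records, or None if no records are given.
    """
    candidates = sorted({income for income, _ in records})
    best_threshold, best_errors = None, len(records) + 1
    for t in candidates:
        # Count records where the rule disagrees with the past decision.
        errors = sum((income <= t) != approved for income, approved in records)
        if errors < best_errors:
            best_threshold, best_errors = t, errors
    return best_threshold


# Hypothetical history of past decisions: (monthly income, approved?)
history = [(1000, True), (2000, True), (3000, True), (4000, False), (5000, False)]
initial_rule = learn_income_threshold(history)

# As new decisions arrive, the learned rule shifts without anyone rewriting it.
history += [(3500, True), (3800, True)]
updated_rule = learn_income_threshold(history)
```

Here the threshold is produced by the data rather than written by an official, and it changes as new records are added. This is the meta-level of rule creation referred to above, in miniature.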

With the shift in design, configuration and operation of digital government platforms to higher levels of automation and technical complexity, control and discretion are becoming concentrated in an increasingly elite community of platform companies and ‘system-level bureaucrats’ responsible for managing data inputs and designing the classification or prediction algorithms (Bovens and Zouridis, 2002). As a result, emerging data-driven applications are raising new questions about the conflation of policy formulation with systems design in these exclusive spaces; but also intensifying concerns about the epistemic dependence and policy alienation of public officials (Tummers et al., 2009; Van den Hoven, 1998). For the recipients of public services, especially those with limited ability or resources to influence system-level activities, these changes can have significant implications for their access to and experience of public services, and the potential for administrative justice (Eubanks, 2018; Raso, 2022; Razzano, 2020; Sutherland et al., 2019).

For African governments and users of public services, the potential risk from these shifts is especially severe for a number of reasons: First, many African states are unable to assemble sufficient policy and administrative capacity or influence to mitigate the challenges posed by emerging technologies and global platform companies (Heeks et al., 2021). Second, governments have often become dependent on external technical skills, due to high levels of contracting to the private sector for service delivery functions (Brunette et al., 2019) or because of a dependence on international donor funding and programming (Goldsmith, 2001). Finally, the largely imported information systems used on the continent are more likely to experience failure due to a mismatch with local infrastructure, capabilities and organisational environments (Berman and Tettey, 2001).

The likelihood, therefore, of public officials in African countries being able to meaningfully engage with the opportunities and risks of AI is low. Whilst this can contribute to an erosion of responsibility and accountability amongst officials, with the potential for longer term damage to the effectiveness of the public service, there is also the more immediate possibility of direct harm to marginalised populations using these technologies, such as through the misuse of personal data (for example, see Torkelson, 2017).

Nonetheless, governments continue to seek opportunities around the use of AI, to address service delivery challenges but also to position themselves (and their local ecosystem) as early adopters in the field. And so the policy response in many parts of the continent is aiming at a ‘responsible’ approach to the implementation of AI, often building on a platform of data protection legislation enacted in several countries (Gwagwa et al., 2021).

Whether and how these emerging initiatives translate into a public service that exercises agency on AI, and whether this is likely to result in public benefit, is a key concern. Importantly, though, the adoption of AI by civil servants is going to be shaped by decades of African experience with existing information and decision-support systems, often in niche applications – from the medical expert systems of the 1980s (Forster, 1992), to established algorithms for calculating unemployment benefits (Horn, 2021) and 30 years of digitalisation at Ghana’s customs authority (Addo, 2021) – as well as wider e-government and statistical or open data programmes.

Given this experience, the main research question is then: What lessons can be drawn from previous digital implementations in African public administrations that can inform the responsible and effective use of AI in similar contexts? In responding to this question, the analysis sought to achieve three main objectives:

1. Understand the emerging relationships between digital discretion, AI, public service values and administrative outcomes globally.
2. Synthesise findings related to IT governance, implementation and impact from previous research conducted in African government departments and agencies.
3. Consider the implications of these findings for AI adoption by Africa’s civil servants.

The next section provides a global perspective on digital discretion, public service outcomes and the emerging influence of AI.

Digital discretion, AI and public service outcomes

Proponents of digital, data and now AI-enabled decision-making see an opportunity for significant shifts in public administration; away from the biased allocation of public resources towards the fair and transparent application of rules, and away from procedure-focused indifference towards consumer-oriented personalisation and efficiency. In these scenarios, digital discretion replaces (or augments) the human discretion traditionally exercised by bureaucrats when implementing policies, by both limiting the amount of leeway they have when making decisions, and by providing rich, data-driven insights to automatically customise services for specific user circumstances.

In reality, the adoption of technology in the public sector is shaped by a more complex mix of potentially competing values – as well as technology, organisational and task characteristics – that affect the realisation of public service objectives (Bullock, 2019; Busch and Henriksen, 2018; Hood, 1991). Public officials who use or mediate access to digital tools are central to these outcomes as they ignore, defer to or appropriate decision-support systems (Snow, 2021). The global surveys and indices of e-government, ‘digital government reform’ and digital inclusion provide limited data on public officials’ use of and attitudes towards technology (for example, see OECD, 2020; UN DESA, 2020; UNESCO, n.d.). Nonetheless, as part of these reports’ broader commentary, as well as through more targeted country or agency-level studies, we are able to get a sense of what civil servants think of digital government and how it influences administrative decision-making (for example, see Antón et al., 2014; Baldwin et al., 2012; Tummers et al., 2009).

Two observations are prominent. First, public officials expect change to happen when using technology, but in a multitude of possible directions; some positive, such as stronger relationships with the public, and others negative, such as information overload (Antón et al., 2014; Baldwin et al., 2012; Tummers et al., 2009). Second, the motivation or intention of public officials to use digital services is influenced by a range of personal and organisational factors, including their experience with the technology (Antón et al., 2014), but also drivers in the wider institutional environment. As suggested in the OECD Digital Government Index (2020) commentary, the sustainable adoption of digital government reforms in member countries depends on the translation of higher-level policies into formal roles and responsibilities for coordinating the implementation of public sector IT and data initiatives. Similarly, the UN E-Government Survey, which tracks the presence of institutional factors for enabling e-governance practices, such as legal frameworks and guidelines for public officials, notes that the: “success of e-government initiatives is largely contingent upon the values that prevail in public administration as a whole and among distinct public entities and individual staff members. The ethos of a Government and the values promoted by individual institutions determine how interaction with the public is perceived and guide the way ICT is integrated to mediate that relationship” (UN DESA, 2020: 137).

This means that public officials are likely to resist the use of services that are in conflict with the prevailing values. In fact, as noted earlier, a ‘shift’ in bureaucratic values is seen to be a core enabler and goal of digitalisation initiatives, with implications for a range of related issues, such as the principles and rules by which government goes about the procurement of technology (Wilkening et al., 2021). But what are these prevailing values, how are they related to digital technology and what do they mean for service outcomes?

The anticipated outcomes from public sector use of information systems may be rooted in distinct and possibly contradictory value concepts: as examples, technology can help reveal the reasoning behind government actions and thereby enhance political legitimacy (a democratic public value), or it can enable faster decision-making and increased efficiency (a professional public service value), or it can be used to enforce adherence to rules and procedures for fair and uniform decision-making (an ethical public service value) (Busch and Henriksen, 2018).

Ultimately, these values form the basis on which citizens and public officials assess the adequacy of digitally-enabled decisions (Raso, 2022). In the case of AI and algorithmic decision-making generally, there are emerging findings from surveys which point to a more general sense of acceptability without providing details on what values are being considered: Data from Switzerland suggests that there are low levels of public trust in the recommendations made by online algorithms (Latzer et al., 2020). In the United States, public acceptance of computer algorithms making decisions related to job applications and personal finance is only about 31% (Smith, 2018). A nationally representative study in the Netherlands is more positive, with AI being seen as on par or better than human experts when it comes to decisions about public health and justice (Araujo et al., 2020). Limited information is available on how public officials perceive the value of AI, with most studies focusing on executive-level views related to priority application areas and organisational readiness (for example, see Criado et al., 2021).

Underlying these trends we should expect substantial nuance in how trust is constructed or evolves. For the public service, we have a particular interest in AI’s relationship to administrative justice; what form it takes and how it may be realised in different organisational settings (Heeks and Shekhar, 2019; Raso, 2022; Sutherland et al., 2019). The specific role expectations of public officials as well as their professional background and membership intersect with the digitally-delimited decision options designed into digital services by external architects, which can lead to value or ethics conflicts (Busch and Henriksen, 2018; Hood, 1991; Raso, 2022).

Of broader concern is when the digital ‘curtailment’ of frontline policy discretion (Buffat, 2015) leads to work alienation (Tummers et al., 2009) and even a descent into thoughtlessness (McQuillan, 2019). Understanding how this occurs, as well as possible sites of resistance and agency by public officials, requires a deeper exploration of the genealogy of digital technology use and the contingent stability around what currently works (Gray, 2014). Even where emerging data-driven technologies such as ML present opportunities for change, the potential for a shift in priorities and values of public officials is moderated by the acceptability of decisions in each context, but also the inertia of layers of decade-old data processing and code-driven decision-making tools on which AI and ML are built, supported by a network of frontline workers, programmers, infrastructure and databases (Raso, 2022).

Directing ‘digital transformation’ in the face of this inertia is the subject of various public sector guidelines and courses (for example, see Teaching Public Service in the Digital Age, n.d.). Ensuring broad buy-in to the transformation process is often argued to require participatory and user-centred design methods. For the OECD, involving end users and stakeholders, including government officials and civil society, in digital government reforms can enhance the legitimacy of decisions, but these practices are not widely adopted (2020: 7). In reality, most system development projects in developing countries fall far short of what many scholars feel is appropriate for public interest platforms, especially given the power imbalances and likelihood of further marginalisation of vulnerable groups (Avgerou, 2008). Therefore, whilst there are calls on designers and developers to embed ethical data and AI practices in their work (for examples, see DSCI, 2021; UK Government, 2021), these mainly localised attempts at encouraging the co-design of AI solutions – and preventing harm – do not take into account the wider socio-technical configuration in which system development takes place (Donia and Shaw, 2021).

There is also limited consideration of the unique position and responsibility that public officials occupy as both user and mediator of technology-enabled service delivery. As Van den Hoven argued in 1998, systems developers need to take into account three responsibilities when designing platforms for civil servants: task responsibility (as typically outlined in the contract), negative task responsibility (ensuring that no harm is brought about to employees and citizens in performing the task), and also meta-task responsibility (ensuring that the user can take up his or her responsibilities). In practice though, “…this will only be possible in a climate of open discussion about design and development issues with a seriousness that used to be associated with political deliberation about the common good in the pre-computer era” (1998: 107–108).

Here we see an argument for participation that goes beyond design-for-use, to recognise how epistemic empowerment and enslavement through technology are intertwined and need to be addressed as part of a politically sensitive design process.

Analytical framework and method

Of course, what the movement around AI and these considerations mean for African public administrations is not clear. Drawing on our experience with other digital and data-driven technologies, governments may look to develop public sector guidelines on the use of AI in specific sectors or for how AI should be procured (for example, see UK Government, 2020). However, we will also need to ask more fundamental questions about the way in which AI is embedded within the broader digital and data environment; the mechanisms by which it is likely to enable, curtail or alienate public officials in their delivery of public services; and the expected impact on administrative outcomes.

The following sections present findings from a narrative review of research on digital discretion, e-government and AI from African countries to better understand how AI is, or could be, adopted within existing data and digital environments, and how it may influence the dynamics of public service and its outcomes.

The recent growth in review-type analysis in the information systems field has been supported by calls since the early 2000s for more attention to evidence synthesis as a standalone research approach. Whilst the nature of synthesis has specialised across a range of methods – from narrative to systematic and umbrella reviews – the objectives are similar: to understand the breadth of research in a field, integrate evidence on the effectiveness of interventions, and to develop theories or conceptual background for subsequent research (Paré et al., 2015). As public sector use of AI is an emerging area, there is little guidance on where further study or policy interventions are needed, especially in developing countries. To address this need, the following sections aim to provide a landscape view of research related to technology-supported public sector decision-making on the African continent, similar to a scoping review, as an initial indication of the scale and type of research that is potentially relevant to AI adoption. It therefore focuses on the “breadth of coverage of the literature rather than the depth of coverage” (Paré et al., 2015: 186) by synthesising findings from research conducted around a diversity of information technologies across a range of African countries, rather than looking to provide an in-depth analysis of a single country or technology.

The review process involved four main steps: First, a starting framework was developed based on an initial set of widely-cited articles globally that highlighted key terms and conceptual categories related to digital discretion, many of which were discussed in the previous section. This framework helped with the development of inclusion and exclusion criteria: Geographically, consideration was given to research conducted by both African and non-African scholars as long as the research was concerned with at least one African country. In focusing on public administration, material covered a spectrum of public sector decision-making practices, from policy formulation to monitoring and evaluation, at national and subnational levels, across a variety of possible sectors, from public health to crime prevention (Shafritz et al., 2016). Although information and decision-support technologies have been used on the African continent for several decades, adoption has increased significantly in recent years, and so the focus of analysis was limited to the period between 2000 and 2021.

As the second step in the review process, a search string was developed using the inclusion criteria and identified terms, tested on different databases, adjusted and eventually run on the Scopus database. A total of 477 unique articles were identified. The search string is provided in Appendix A.

In the third step, these articles were screened by one researcher using title, abstract, year of publication and citation count, to select 46 articles which were considered most relevant to the research question.

For the final step, the full texts of the 46 selected articles, supplemented by a small number of relevant references, were downloaded and reviewed in detail. The review involved extracting key findings from articles which were then coded according to terms from the starting framework, whilst also allowing for new additions and modifications to categories to take into account themes identified in the extracted texts. This led to a fusion of the starting framework and emergent concepts (Hay, 2011).

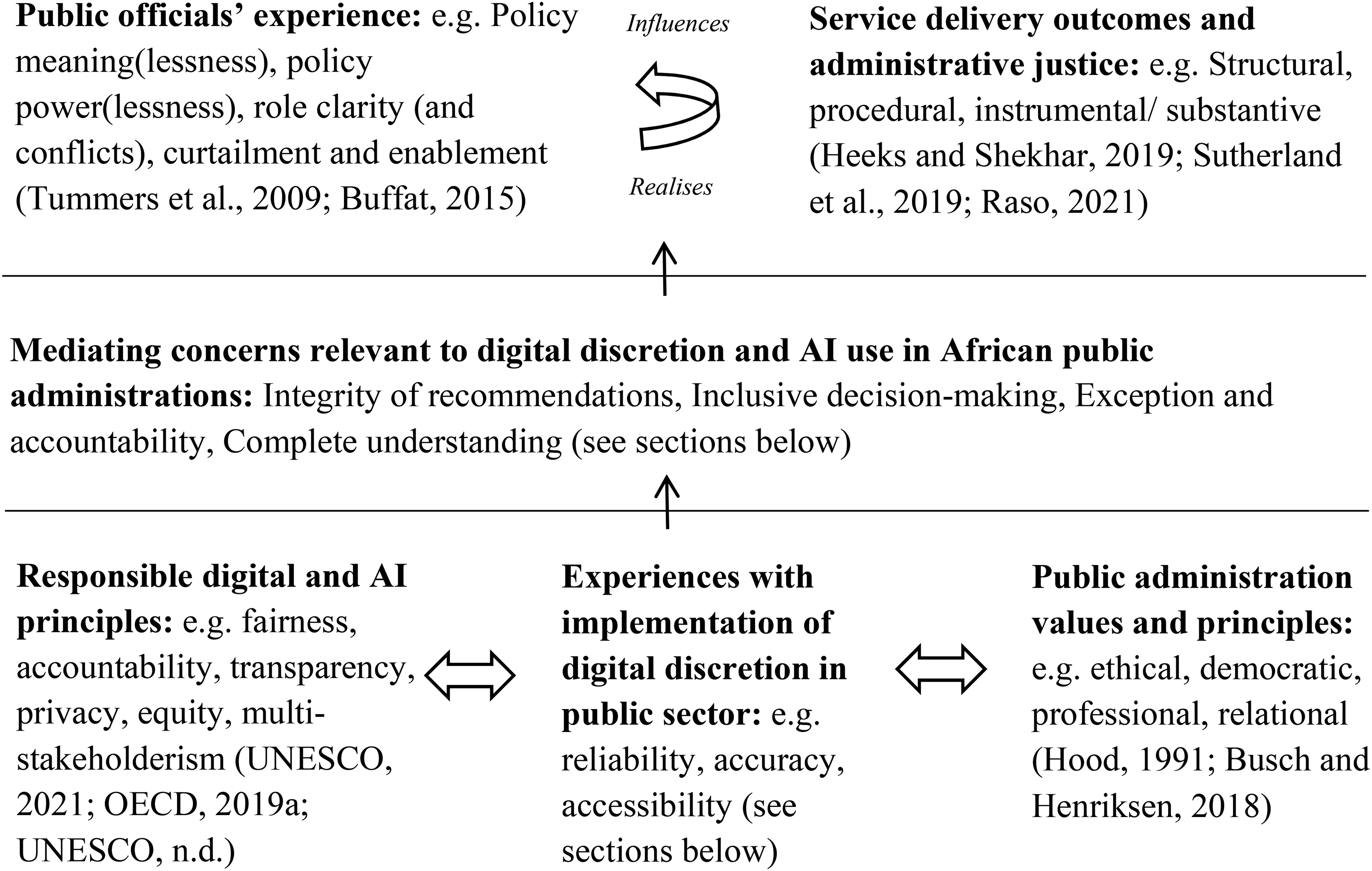

The final framework is outlined in Figure 1, and highlights the broad conceptual categories resulting from adjustments to the starting framework and integration of themes from the full text review. Most significant is the addition of a layer of mediating concerns or narratives emerging from the Africa-specific material. These include:

- Integrity of recommendations provided by decision-support systems, including how they are influenced by local organisational practices and the reliability of underlying infrastructures.
- Inclusive decision-making that balances the (assumed) objectivity of data-driven algorithms and the influence of different stakeholder groups.
- Exception and accountability in how digital and AI platforms are funded, developed, implemented and used.
- Complete understanding of people and events through the integration of traditionally dispersed data sources and systems, and how policy actors seek to mitigate the risks associated with this aspiration.

These mediating concerns are shaped by at least three dynamics: experience with previous digital or e-government implementations, emerging digital and AI-specific principles, and public administration values which may be particular to the region or country. They also seem to be relevant to public officials’ experience of policy meaning(lessness), policy power(lessness), role clarity (or conflict), curtailment or enablement and ultimately, the realisation of service outcomes and administrative justice in its different forms.

The framework is inevitably tentative as the main priority is to map out broad conceptual categories, and so the relationships and influences are only indicative of potential areas for further research. In the following sections these concepts are discussed in more detail, through the lens of the four mediating concerns introduced above.

Integrity of recommendations

The trustworthiness of administrative decision-making enabled by digital technology is central to ensuring its legitimacy as a tool that can be used in public service environments. If civil servants working with AI-based applications or the citizens affected by decisions do not believe recommendations are reliable and fair, or cannot understand how a decision is reached, then they are unlikely to trust these systems (OECD, 2019a; UNESCO, 2021).

In general, information technology is seen to help public administrations reveal the reasoning behind government actions (and thereby enhance political legitimacy, a democratic public value); but also enforce adherence to rules and procedures (enabling fair and uniform decision-making, an ethical public service value). However, research on this topic suggests outcomes are more variable, with the possibility that the use of technology exacerbates discrimination through uneven access and that it can obscure processes (Bannister and Connolly, 2014; Busch and Henriksen, 2018). Adding to this, the relatively opaque character of ML algorithms, along with well-documented cases of bias across the ML life cycle, have focused public and policy attention on the lack of transparency, explainability and fairness of many AI-based applications (OECD, 2019a; UNESCO, 2021). In African contexts, the integrity and trustworthiness of decision-making supported by digital technologies and AI is affected by a number of underlying factors discussed below.

Working practices

Perhaps most fundamental is the mismatch between values and decision-making models embedded in (mostly) imported technology platforms and those of the local context (Berman and Tettey, 2001; Mensah et al., 2021). Empirical findings from several studies highlight how e-government projects have not been supported by, as a Malawian study describes, appropriate “administrative structure, processes, decision-making structures, or procedures” and are therefore in conflict with “the way people work” leading to low levels of adoption and motivation to use (Ziba and Kang, 2020: 369–370). In response, much of the focus tends to be on top-down responses that can bring civil servants into line with the platform-designed processes; such as through executive mandates and strategies (Apleni and Smuts, 2020; Mensah et al., 2021; Ziba and Kang, 2020), as well as by enforcing standards and standard operating procedures (Kang’a et al., 2016: 680).

Unfortunately, the ‘way people work’ in many African public administrations tends to involve patronage and corruption of various forms, issues which affect the consistency and fairness of decision-making and which are not easily addressed by digital solutions. Deploying information technology to address corruption is often not successful because, in these environments, “systems are inefficient, administrative rationalities different, and enforcement structures generally weak” (Addo, 2021: 109). But it is also because information technology can amplify or create alternative opportunities for corruption, potentially of a much larger scale than the petty corruption prevalent in many African states (Addo, 2021; Donovan, 2015; Mutungi et al., 2019). In fact, the changes or disruption to roles associated with information technology implementation are themselves argued to lead to corruption (Elgohary and Abdelazyz, 2020). The integrity of digital systems is further eroded by regular security breaches; and the centralisation of biometric or identity data in national repositories increases the reward for external hackers, whilst creating almost ‘infinite’ opportunities for internal official misuse of personal data (Breckenridge, 2005: 281). Whilst digital fraud in public systems is also prevalent in most other countries (Anders, 2015; Busch and Henriksen, 2018), African states tend to lack the skills needed to effectively counter these threats (Barata and Cain, 2001).

Technical and human capabilities

The integrity of decision-support systems in African states is affected by a range of other well-known technical issues including, amongst others, uneven access to connectivity and the unreliability of information systems. Unreliability is often caused by unstable electricity supplies but is also the result of limited system support skills (Forster, 1992; Kang’a et al., 2016; Ziba and Kang, 2020). Public officials attempt to mitigate these issues through a variety of responses. In the case of a Kenyan electronic medical record (EMR) implementation, it became necessary to maintain a double-entry of information in both paper and digital forms so that services could be provided consistently, adding to the data management workload (Kang’a et al., 2016: 681). These issues have deeper implications for officials’ attitudes to computing systems, beyond just increased frustration and workloads. Recounting a Liberian example, Mensah et al. (2021) describe how system downtime leads to inconsistencies between physical forms and virtual databases, the outputs of which are then rejected by officials, eroding trust in the system.

Ultimately, to effectively use digital and data-driven tools in decision-making, sufficient capabilities need to exist amongst front-line officials for interpreting data and data-based recommendations, for being able to explain how decisions are arrived at, and to take responsibility for the impact of the decisions. In examining the deployment of medical expert systems in developing countries during the 1980s, and from an analysis of their use by public health officials, Forster argued that: “the user must understand the basic reasoning of the system and be given the ultimate responsibility to challenge its output … If the burden of accountability lies with the system builder, the user must be skilled enough to question the assumptions, logic and outcomes of the system” (1992: 22).

However, technology and data-related capacities, especially around more complex needs, are often lacking. For example, in a baseline analysis for an international donor’s ‘evidence for action’ programme in public health, the researchers found that the collection and use of data for day-to-day decision making by clinical, administrative and management officials across six African countries appears to be quite widely practised, but the wider, strategic use of data appears to be a challenge (Nove et al., 2014).

Adding new technologies to work practices can increase access to relevant data, but also leads to information overload for officials, affecting their perceived efficiency and effectiveness (Barata and Cain, 2001: 255; Elgohary and Abdelazyz, 2020). A study of health information system adoption in two tertiary healthcare facilities in South Africa found that the broad, non-targeted adoption of computerised activities adds to workload for nurses, taking time away from patients – and so the researchers’ recommendation is to more carefully target specific work flows for digitalisation (Cline and Luiz, 2013).

Transparency and evidence of what works

There has been some recognition that the opaque character of AI is making it difficult to understand how decisions are reached or to assess whether an application is operating as expected. At least since the early 1990s, scholars have argued against the deployment of black box expert systems for disease diagnosis in Africa and other developing countries, suggesting that, where technically feasible, “the steps between symptom history and therapy recommendation must be transparent and open to critique and examination by the user” (Forster, 1992: 20). A starting point is to ask whether extensive data collection and complex models are needed in the first place (Forster, 1992; Rudin, 2019). In addition, there is now an established network of researchers, practitioners and policy actors working on methods and tools to support fair, transparent and ‘explainable’ AI; such as for model and bias analysis across the ML pipeline (for example, Google’s What-If Tool and IBM’s AI Fairness 360). There are also process frameworks being developed for open benchmarking and evaluation of AI technologies (for example, the ITU-WHO Focus Group on Artificial Intelligence for Health), and calls for empirical evidence to demonstrate the safety and efficacy of these technologies - which is critical for their introduction into certain sectors (for example, see Seneviratne et al., 2019).

When all of the above considerations are applied to possible AI implementation - with expected changes to organisational decision-making and roles, additional demands on existing digital infrastructure, new types of sensitive data use and growing adoption of sophisticated data processing - it is clear that the integrity of decision-making supported by AI will depend on a substantial investment in individual and organisational processes and capabilities.

Inclusive decision-making

The effectiveness and legitimacy of digital government also depends on a broader set of institutional capabilities, one of which is ensuring meaningful involvement of diverse stakeholders and affected individuals in shaping the use of technology (Busch and Henriksen, 2018). As a more recent example, the values and principles espoused in the UNESCO recommendation on AI note that diversity and inclusion “should be ensured throughout the life cycle of AI systems … by promoting active participation of all individuals or groups” (2021: 7) and call for “inclusive public oversight” over the technology’s adoption (2021: 10).

Decentralisation

How participation takes place depends on the institutional arrangements within which technology implementation and technology-mediated decision making take place. The studies from the African continent reviewed in this paper describe quite durable hierarchies anchored in a strong central authority. Earlier commentary on the use of technology in public service organisations went as far as suggesting that there is a fundamental conflict between African states’ relatively centralised decision-making controlled by generalist administrators, and the computer-enabled algorithmic model of organisational control imported from Western contexts which presumes the “social acceptance of diverse forms of expert knowledge” (Berman and Tettey, 2001: 3). Endorsing this view, a study of front-line healthcare workers in a number of African countries found that “staff’s ability to say no to senior staff and colleagues for demands/decisions not supported by evidence was relatively low” (Nove et al., 2014: 105). An emphasis on hierarchy and central authority is evident in similar e-governance research where the focus tends to be on reducing variation between centrally defined policies and their implementation by street-level bureaucrats, and how high-level policies and standards may be incorporated into the design of systems (Barata and Cain, 2001; Reddick et al., 2011). Whilst the use of technology to enforce objectivity and consistency in front-line decision-making has demonstrated value in the delivery of critical welfare services, it does raise a broader question about whether there has simply been a redistribution of subjectivity (and corruption, discussed above) to central actors, and an increase in system fragility by creating a central point of failure (as discussed below) (Donovan, 2015).

The same dynamics are reflected at a macro level, between international, national and subnational spheres of governance, where technology is implicated in a tension between the benefits of decentralisation (especially around revenue raising and/or spending) and a risk that decentralisation creates multiple centres of power (Barata and Cain, 2001; Ochara, 2010). For central officials, digital government provides an opportunity to more effectively monitor and assert disciplinary power over local government, especially through data-driven performance management and fiscal allocation. This ‘managerial’ approach to inter-governmental relations and public administration supported by digital technologies seems to be at odds with a narrative supporting decentralisation as a way to enable more innovative, open and participatory local policy processes (Ochara, 2010). In the case of relatively powerful city governments, especially, their capture of local data flows and participation in global city networks and knowledge exchanges are an increasingly important influence on policy decision-making (Baud et al., 2014), but are likely to heighten conflicts with national actors.

Primacy of the model

Much of the ability and authority of central policy officials is derived from the technical complexity and ‘objective’ authority of the systems or algorithms they employ, which presents a conundrum for what are meant to be participatory planning processes. As a case study covering two South African cities shows, by using a novel GIS-based modelling approach – to prioritise the allocation of facilities for informal settlements – planning officials were able to “reduce political pressure on location choices” (Baud et al., 2014: 506). However, it seems the source and type of political pressure matters: the same study highlights how “input from poorer communities is limited” because of a lack of access to public media and dysfunctional ward representation. As a result, information and inputs to planning tools tend to favour more affluent groups. Yet the authors recognise that stronger engagement with all affected groups would help to address concerns about the assumed validity of data used in GIS modelling (Baud et al., 2014: 507).

Perhaps the most significant practice of data collection to support governance processes is the national identity register, and on the African continent this platform is increasingly enabled by biometric technology (Breckenridge, 2021). From a global perspective, AI-enabled technologies are transforming how biometric collection and processing are taking place, which has significant implications for:

The way in which data is collected – from an in-person exchange at a government office to the harvesting of camera, social media or mobile phone registration data;

The type of data collected – from the relatively simple structural representation of a fingerprint to complex profiling based on data related to voice, gait, face and behaviour;

How consent is obtained – from a public official facilitating understanding and consent, to continuous, technology-driven monitoring and notification (at most); and

Nature of identification – from a named person to an anonymous, behavioural signature or trace (Eaves and Rachid, 2020; Heller, 2013).

In Africa, documentary forms of government have, until recently, continued in many post-colonial states, and involve writing and/or interaction with civil servants in physical spaces. AI-enabled biometric registration and transacting, in contrast, is remote and involuntary, “answering questions about identification, location, and timing without the active participation, or the knowledge of, the subject” (Breckenridge, 2021). Through a “radically simplified claim about the presence and implied consent … [the technology] works to control the agency of governed subject and the bureaucratic agent by requiring live biometric data from both parties for every transaction” (Breckenridge, 2021). The increasingly instantaneous centralisation of data management in national systems tends to “disempower local officials, who have limited rights to edit records, or change the rules embedded in the database” (Breckenridge, 2005: 281). Moreover, the convergence of a broader set of technologies, including blockchain, around a biometric assemblage reinforces the immutable nature of envisaged identification systems (NOID, 2019).

The simplification and reduction of information associated with digital projects, in which contextual meta-data is often not created or is lost, means that data can become meaningless (Barata and Cain, 2001: 255). In reducing contextual information to a single classification or recommendation, intelligent systems are creating an ‘informational void’ (Breckenridge, 2021), erasing much of the opportunity and story that helps people to explain their case. As a result, any opportunity for consideration and empathy by local officials – key public service values in many contexts – is lost.

Re-intermediation

The remote data collection and control offered by digital government, biometric technology and AI enable quite extensive physical disintermediation, which is often central to reducing uncertainty around service delivery. For example, Addo (2021) notes that eliminating physical interaction in Ghana’s customs office ‘long room’ was key to reducing corruption. By digitalising customs activities, whilst outsourcing critical classification and valuation functions to private companies, the customs authority was able to diminish “levels of face-to-face interactions and disintermediated customs bureaucrats from key revenue-generation steps” that feed corrupt activity (Addo, 2021: 106). Similarly, in a study of Egyptian local government managers involved in e-government implementation, changes to administrative interaction and discretion – including a reduction in manual processes – are associated with lower variation in the implementation of policies by street-level bureaucrats, reduced contact by employees with citizens, and greater accuracy of decisions by employees (Reddick et al., 2011).

In contrast with these observations are studies highlighting the importance of relational processes for supporting the diffusion and use of e-government services (Twinomurinzi et al., 2012). Research describes various forms of re-intermediation in African countries involving group interaction with e-government applications, such as via telecentres, shared phones and assisted use of online services (Heeks, 2002). The same applies to how civil servants support each other with the use of new technology, such as through “forums where peers can engage and discuss issues” affecting use of an electronic health system in Kenya (Kang’a et al., 2016: 683). Group-mediated adoption of technology is especially important to local government and urban contexts, where physical proximity enables tacit knowledge exchange, more meaningful participation in policy processes and, even, the co-design or co-production of services (Biljohn and Lues, 2020).

This links with the idea of ubuntu and ‘spirit of community’ - interpreted as democratic governance enabled by a transparency of actors and roles – which support deliberative processes in ‘negotiated spaces’, and through which marginalised people can develop stronger forms of citizenship (Baud et al., 2014: 502). In South Africa, the setup of an open and community-based data initiative as an entry point for engagement and knowledge exchange helps to shift power relations related to planning activities. This type of initiative aims to be a counter to the extractive ‘datafication’ of cities which has often resulted in limited or unjust outcomes for already marginalised groups (Sutherland et al., 2019).

Exception and accountability

Through its central role in a ‘fourth industrial revolution’ (Caruso, 2018; Schwab, 2016) and because of its relative novelty and technological complexity, AI tends to have an exceptional character. This portrayal of AI has important implications for how policy actors engage with a technology that is already impacting daily life, but is also in many respects science fiction. Unfortunately for today’s public officials, digital advances and innovation generally are seen to be incompatible with government bureaucracy in its traditional form. As a result, globally and in many African states, there is pressure to establish alternative organisational forms, processes and roles so that public officials can harness emerging technological opportunities and respond to the complexity of current developmental challenges (OECD, 2019b; van der Wal, 2017; Vivona et al., 2020).

Outsourced expertise

African governments are already unusually dependent on external actors for the delivery of public services, whether international donors or the private sector (for example, see Brunette et al., 2019). Digital technology has somewhat naturally been transferred to recipient countries through these channels – consultants, IT vendors, donor agencies and Western-trained civil servants (Heeks, 2002) – often as a component or extension of wider new public management (NPM)-oriented reform programmes. Although this externally-led approach is often viewed as necessary for accelerating the pace and quality of service delivery, governments may also be outsourcing to separate themselves from the politics of planning processes or to provide an alternative, more trusted interface for accessing services (Addo, 2021).

The experience of African public officials is that (mainly international) donor representatives are very influential in policy decision-making (Ochara, 2010). As a commentary on financial management systems observes, a donor focus on outcomes rather than processes and practices can have negative consequences, including in the development of parallel systems (Barata and Cain, 2001: 254). In an analysis of e-government adoption in Malawi, there is awareness of an “over-reliance on donors” (Ziba and Kang, 2020: 370–371). Similarly, many of the e-government projects in Liberia have been funded by agencies such as USAID and implemented by consultants, with many senior IT officials concerned about sustainability of projects that do not have a committed government funding allocation (Mensah et al., 2021).

In urban applications, AI has been assimilated seamlessly into the smart city agenda, in which “the technical expertise of the private sector means that their power over local government is strong, and likely to grow, as the ‘smart city’ discourse requires technical expertise outside local government competencies” (Baud et al., 2014: 507).

Smart cities and AI build on a more established tradition of data-supported town planning in African urban contexts, and for which GIS-based modelling has often involved or depended on private consulting firms. Whilst additional stakeholders have been enrolled in the implementation and use of GIS, such as academic or university partners, residents are usually only engaged via consultation and formal feedback processes that tend to favour more affluent individuals and businesses (Baud et al., 2014; Odendaal, 2003).

Many African cities and states are not able to effectively design and oversee private sector and donor contracts, probably because of the capacity constraints that led to them outsourcing delivery in the first place. Inevitably this leads to exploitation of officials and, ultimately, the most vulnerable users of public digital systems. The conflation of data-gathering by state and business as part of civil registration, identification or social service delivery presents numerous risks (Breckenridge, 2005: 279), and the recent abuse of personal data by a private firm to siphon money from millions of social grant recipients in South Africa is now well documented (Gaffley, 2021). Given this situation, we need to (re)consider how administrative law may be applied to private sector digital service providers operating in the public sector or in areas of public interest (Razzano, 2020).

Independent digital units and agencies

In looking to replicate the agility and responsiveness of the private sector within public sector organisations, several governments globally have sought to establish independent digital service and innovation units (Clarke, 2020; Eaves and McGuire, 2018). These units have some freedom to define their own culture, brand and processes, and create space for policy experimentation. The specific structure and relationship to departments and the political sphere can vary considerably.

As an example from South Africa, whilst the State Information Technology Agency (SITA) has a mandate to provide digital services to national and provincial government departments, a portion of the more exploratory data-specific work has been taken up by the Council for Scientific and Industrial Research (CSIR), which has included development of a national patient registration system for the Department of Health (Chetty, 2015) and a National Policy Data Observatory (CSIR, 2021). In establishing these types of platform initiatives, the CSIR has to build a critical mass of technical talent. But it is the same concentration of skills that makes it challenging for less digitally-capable departments to take on the platforms or services that have been developed. In addition, lines of accountability for the operation of digital services become blurred beyond the user or sector department. Barata and Cain note that when implementing financial management information systems in sub-Saharan Africa, electronic records can be deleted without the responsible official being aware and so often only the technology developer can trace the authenticity of information. They argue that a “solution is needed that exploits the advantage of increased accessibility to information without sacrificing accountability” (2001: 254). As noted earlier, in Forster’s analysis of medical expert systems, users – not developers – must have sufficient understanding of the algorithm to take “ultimate responsibility” for a system output (1992: 22).

Sandboxes, pilot projects and private partnerships

The emergence of independent agencies or units reflects a broader approach to new technologies or policy areas in which funding is ring-fenced for specific activities, and a legal-organisational space is created for some degree of experimentation. One of the more visible instruments being promoted is the regulatory sandbox which, for AI, allows the technology to be tested in what the World Economic Forum (WEF) suggests is as “real an environment as possible before being released to the world” (WEF, 2021: 20). The sandbox approach has been implemented or considered in countries as diverse as Sierra Leone, Nigeria, Kenya, Rwanda and South Africa as a way for regulatory officials to engage more directly with the fast-growing fintech industry on the African continent. The Mauritius AI strategy sees the country’s Regulatory Sandbox License as a key action for enabling innovation in this field, by supporting activities for which there are “no adequate provisions in the existing regulatory framework” (WGoAI, 2018: 19). In African and other developing countries, fintech sandboxes have allowed for the relaxation of certain finance sector regulatory requirements – such as on know-your-client (KYC), cash balances and management experience – whilst providing more direct supervision and alternative forms of insurance or protection for customers (Wechsler et al., 2018). Analysis of sandbox implementations in these contexts has highlighted that, whilst they provide a useful opportunity for public officials to grow their understanding of emerging technologies, business models, and the likely impact of a specific product on consumers, they provide more limited insights into the systemic effects associated with the introduction of a growing number of (data-driven) digital services. Further, because sandbox interactions are generally quite intimate environments, unless they are carefully designed to incorporate a broader community of participants along with rules on transparency or disclosure, they are likely to cloud the already opaque character of technology-regulatory relationships (Wechsler et al., 2018).

The myriad incubators and pilot projects related to digital public services in African countries, many of them now incorporating AI, reflect the early stage of these technologies but also a shift towards a more experimental or iterative approach to adoption. Similar to sandboxes, by starting small and working in a lean or agile way, sponsors and users in government departments aim to de-risk their financial commitment and minimise negative outcomes for citizens. In piloting (and scaling), governments may also seek ‘investor’ partners to share the costs and risks, often using proprietary technologies or implemented under confidential arrangements and in the presence of immature or non-existent regulatory safeguards, all of which has significant implications for transparency and accountability – as is evident with the numerous facial recognition projects on the continent (Mudongo, 2021).

Complete understanding

At the centre of current AI classification and prediction is the desire to develop a more complete understanding of people’s behaviour or future events by collecting vast amounts of data on all possibly relevant attributes. Improving the accuracy of decision-making by combining ‘big’ data from several sources and then “utilizing intelligence algorithms” to generate more accurate recommendations is typical of a professional value set in digital government (Busch and Henriksen, 2018: 11). In response, many national AI strategies are underpinned by a plan to aggregate or integrate various previously disparate pools of data into, as South Korea suggests, a “Data Dam” made up of public and private data (OECD, 2021: 48). The need for this scale and scope of data centralisation is justified by both the opportunity for machine learning to find hidden patterns in apparently unrelated data, as well as the need to improve algorithm performance by training on large volumes of data.

Data integration to enable AI

From an African perspective, South Africa’s draft National Policy on Data and Cloud similarly aims to ‘consolidate’ public and private data on an ‘integrated data platform’, to enhance government planning and enable AI (DCDT, 2021). Egypt’s AI strategy notes that the “poor integration of databases” makes it difficult to “mine and extract useful knowledge” (NCAI, 2021: 21). Similarly, in Uganda’s decentralised statistical system “every department produces statistical information according to their individual organisational goals and needs” with varying motivation to produce data, as well as limited compatibility of standards and systems (Ouma, 2014: 3). Uganda’s government therefore “affirmed its support of data sharing arrangements between jurisdictions to enable a better understanding of issues and enhance policy development and evidence-based decision making” to help government agencies “deliver better public services” (Ouma, 2014: 2). A national technology centre was expected to support alignment and development of open data guidelines and data inventories for better communicating data availability (Ouma, 2014: 9).

Integration is associated with a range of benefits (and associated values), from reduced errors, to enhanced transparency, and better collaboration. Both Cape Town (Baud et al., 2014: 506) and eThekwini (Odendaal, 2003) in South Africa have implemented GIS decision-support tools which provided opportunities for officials to link or coordinate planning across line departments and between spheres of government. Meanwhile, the adoption of integrated financial management information systems in Sub-Saharan African countries is seen as critical – especially by international donors – both for improving prioritisation and efficiency, and for ensuring financial accountability, by enabling easier tracking of transactions across government (Barata and Cain, 2001: 251). In the Ghana customs office case study introduced earlier, the move to a data sharing, ‘networked’ approach enabled a greater level of automation and disintermediation of front-office officials. This contrasts with the “previous tightly linked sequential flows” of earlier IT systems, which were more rigid and less transparent, allowing or requiring officials to intervene at various points and create hold-ups or extract corrupt payments (Addo, 2021: 106).

From Liberia to Egypt and South Africa, government officials and researchers have called for greater levels of integration and collaboration to enable more flexible deployment and orchestration of digital services across agencies and departments, and to provide citizens with a more seamless and user-friendly experience (Apleni and Smuts, 2020; Mensah et al., 2021; Reddick et al., 2011). As an example from Lagos State in Nigeria, Olumoye and Govender (2018) aim to address the “separatism of public services” (2018: 2) in the issuing of building development permits – which normally involves endless backward and forward travel between multiple departments and agencies. They aspire to a fourth (and fifth) stage of e-government maturity based on full integration of government interaction “that provides services regardless of the organisational borders, via a single access point” (Olumoye and Govender, 2018: 2). This integration of digital public services goes beyond connecting virtual data and services to also make links with the physical environment, through both the ‘upward’ collection of biometric and IoT-sourced data and the ‘downward’ mechanical actuation of instructions via robotic components.

Integration governance

Previous experience with integration efforts on the African continent points to a dependence on wider organisational (re)alignment and integration, capacity building and the attitudes of officials (Baud et al., 2014; Olumoye and Govender, 2018).

For front-line public officials, the centralisation of data and control means that they have more limited ability to influence or query decisions. Biometric and other IoT data collection devices are designed to “resist the editorial or authorial interventions of their owners” (Breckenridge, 2021), and reduce the need for information or data capturing and editing that would normally be performed by local public officials. Whilst centralisation and automated data collection may be useful for reducing variation and corruption, for many civil service roles this can undermine accountability and the potential for establishing trusted relationships with users – a key requirement for the uptake of public health services (Habli et al., 2020).

Inevitably, there are both intra- and inter-organisational processes and challenges when it comes to integration, with the potential for both system failures and broader harms. These governance questions are probably most evident around biometric and identity data. African and various other developing countries are unique in their adoption of centralised national identity systems that increasingly form the basis for accessing public services, often with strong connections to private financial information and credit systems (Breckenridge, 2021). Biometric data and personal digital devices have become the ‘primary keys’ for linking a previously disparate array of private and state-managed data sources on individuals. For example, in seeking higher levels of assurance on the identity of a customer, the mobile industry body, GSMA, is aiming to connect financial sector Know Your Customer (KYC) processes with mobile phone SIM card registration requirements (Theodorou, 2019).

In addition, donors and aid agencies often establish their own identity platforms or layer them on top of national systems, whilst the outsourcing of various administrative functions to the private sector – such as payment of grants, issuing of passports and registration of businesses – has increased the integration and movement of potentially sensitive data between sectors. As introduced above, in South Africa, a lack of oversight around data sharing related to social grants has led to the large-scale exploitation of vulnerable people (Gaffley, 2021), whilst the adoption of an information system for health records sharing in the country’s hospitals elevated concerns amongst staff about increased risks to privacy and confidentiality of data (Cline and Luiz, 2013).

For Breckenridge, “there is almost no official and very little public concern for individual privacy, the probability of data-creep on a large scale, and almost open-ended possibilities for abuse” related to biometric identity systems (2005: 278). Moreover, the extreme parsimony of biometric identification in modern systems, and their almost instantaneous transfer, enables “centralization around a single-point of control (and of failure)” (Breckenridge, 2021, emphasis added). These shifts in data collection and sharing suggest that the governance of data integration is experiencing a form of de facto dispersion to a range of actors, very often without the institutionalised input, coordination or oversight that can ensure responsible use.

Implications for AI adoption in Africa’s public service

This paper has sought to understand the ways in which public official decision-making is likely to be influenced by the adoption of AI, and how this in turn may shape the delivery of public services. Of particular concern is how governments and officials on the African continent may navigate a more sustainable approach to using AI that draws on the lessons from previous digital and data implementations.

Much of the region’s research on digital platforms and decision-support systems has been concerned with e-government ‘readiness’ and technology adoption, with influential sector-specific clusters, such as in public health decision-making and urban planning. This body of work tends to focus on why citizens and public officials resist or embrace technology and the various socio-technical factors that influence the success of systems implementation – from a reliable power supply to training and change management.

Of broader significance are the organisational changes and value shifts in the public service that many proponents of digital technologies and innovation anticipate with the adoption of AI, and which may or may not occur. So, how do front-line public officials (but also system developers and central policy actors) in African governments interpret or understand their role, agency and accountability with respect to digital discretion, and what does this mean for their relationship with AI and realising public administration values?

It does seem that AI is likely to amplify a number of digital government dynamics, with potentially contradictory effects on public service practice and outcomes. First, the implications of AI for the integrity of decisions, and for the nature of trust between citizens and state, are ambiguous. For example, whilst AI may enable more accurate decision-making and thereby enhance the legitimacy of administration, its opaque character can undermine confidence in digitally-enabled recommendations and reduce officials’ ability to explain how decisions have come about. Moreover, AI implementation in Africa is being layered on top of what are often quite fragile information and data platforms, for which officials already have limited skills and which they see as a source of information overload. This could be mitigated by directing AI adoption at specific challenges or workflows. Yet, given the early stage of most AI implementations, there would still be a need for guidance on assessing the accuracy and safety of these applications, as well as some form of independent monitoring and evaluation to support the introduction and removal of products.

A second area of consideration is the influence of AI on democratic values, both in how the technology may support more inclusive public service decision-making but also in how its implementation is governed. AI, in its reductionist form, removes much of the meaning, context and “background of common social causes” which cannot be mathematised (McQuillan, 2020: 165). By seeking to describe (or ascribe) the innate attributes of individuals (McQuillan, 2020), there is limited space for context and contest by the subjects of automated decisions, whilst front-line officials are confronted with increased ‘policy powerlessness’ (Tummers et al., 2009). The remote forms of data collection and predictive decision-making supported by AI-enabled biometrics and modelling are therefore likely to disempower local officials by foreclosing alternative decisions and curtailing much of the engagement and empathy regarded as key values in many public administrations.

These trends dovetail with research from Africa’s public sector suggesting that digital technologies have mainly been enrolled into processes of centralisation, as a means for reducing variation in policy implementation across fragmented administrations, and for reducing petty corruption at a local level. However, the reality is that effective and inclusive use of these technologies often depends on some form of re-intermediation in which decentralised groups and networks of people (nationally or at local levels) share experiences, appropriate technologies and encourage adoption. This goes beyond encouraging a user or human-centred approach to AI design which has tended to reinforce an individualised approach and can lead to marginalisation of certain groups (Marcus, 2021). So we may expect – and look to harness – resistance from district and front-line officials contesting the ‘primacy’ of digital and AI-enabled decision-making and centralised implementation, as well as from cities and city-networks, as clusters of learning develop around the role and use of AI.

We have seen that, as a relatively new technology, AI tends to be deployed outside of core departmental processes or structures, often in the form of pilot projects with private sector partners. The exceptional character of AI, in its futuristic portrayal and in how it is being implemented, means that we should be especially sensitive to issues of accountability and responsibility for both immediate operational and longer-term societal impacts. In Africa, government dependence on the private sector for the introduction of new technologies means that there will be growing pressure on procurement officials to better assess data-oriented and AI services. To fill the gap, certain African states have looked to draw on public research or digital service units. In South Africa, the CSIR’s work on a national patient registration system is the type of ‘platform enablement’ activity that Eaves and McGuire (2018) regard as the ‘end-game’ to which digital service groups aspire globally. Getting to this point requires significant effort to, amongst others, build political capital and delivery capability. The apparent success of these entities in Africa but also other regions is largely driven by an ability to recruit talented technologists, by providing an opportunity to work on socially-impactful projects “in a unit that defies pejorative stereotypes of government bureaucracy” (Clarke, 2020: 368).

Because of their ability to attract talent and accelerate implementation, intra-governmental digital service units are becoming an important alternative vehicle for introducing new technologies (and processes) in government. In reality, however, the acceptance and use of technology by officials can decline once a developer team completes project delivery. As with all new information systems, there is a substantial gap between pilot-stage ‘adoption’ and broader implementation, which requires extensive process redesign, capacity building and sustainable funding (Molinari et al., 2021). Moreover, digital service units tend to operate outside of traditional hierarchies, meaning that accountability for digitally-enabled decision-making can become confused between the developer team and users.

This points to a broader concern that, via pilot projects, special units and private partnerships, AI is coming into use through a ‘state of exception’ with “the tendency to escape due process through preemption and justify actions based on correlation rather than causation” (McQuillan, 2015: 568). Underlying this way of working is an assumption that the insights from data (and now AI) are objective, that is: “with enough data, the numbers speak for themselves” (Anderson, 2008). Therefore, thinking about how AI implementation can be embedded in existing values and rights-based frameworks (e.g. country constitutions, public service charters, and international commitments) and legal safeguards (e.g. public procurement and data protection regulations) is critical for its ongoing legitimacy and sustainable adoption on the African continent.

One of the more significant areas in which safeguards are needed is the governance of the large-scale data collection, aggregation and integration that many African AI and data strategies envisage. This integration of data and systems echoes a call for the ‘platformisation’ of government (Eaves and McGuire, 2018; O’Reilly, 2011), as well as the notion of a single access point for services or ‘single view’ of citizens (for example, see Barbaschow, 2018; Wiseman, 2017). Whilst integration can simplify service access, policy actors in other regions, notably the European Union (EU), have acknowledged that the integration and centralisation of data can undermine citizens’ trust in digital services. This is due to, amongst other things, concerns that centralised databases become targets for hackers, and that the possibility of re-identification and discrimination is higher when databases are merged (cc:eGov, 2007; European Parliament, 2019). Civil society advocates in the EU and United States have therefore proposed similar responses to these issues, including giving data subjects the legal power to request that data be deleted from a database and pursuing regulations restricting the integration or merging of specific data sources and types (Lynch, 2019: 25). Technical measures to mitigate centralisation risk include the development of exchange protocols between the data silos of government entities, instead of consolidating data in a single database (cc:eGov, 2007: 13); distorting raw biometric data so that it is less reusable (Grijpink, 2008); and using AI-based methods themselves to reduce the need for large-scale data harvesting, such as data augmentation, transfer learning and synthetic data sets (Polonetsky and Renieris, 2020).

Conclusion

This paper argues that we may look to draw on Africa’s previous experiences with digital government and data-supported decision-making to pre-emptively guide the development and adoption of AI in the public service. In synthesising research from this field, we now have a better sense of some of the foundational experiences and principles that are likely to shape AI adoption: from the personal experiences of public officials with technology and data-supported decision-making, to the distinctive public administration values associated with a specific role or region, as well as emerging digital and AI principles which are being promoted as safeguards for public use.

These foundational factors appear to shape what this paper has called ‘mediating concerns’, in four broad categories. First, the integrity of recommendations provided by existing technology systems is affected by unreliable infrastructure and data management in many African contexts, with implications for trust in AI. Second, efforts at more inclusive decision-making have been undermined by IT-supported centralisation, and the technical nature and opaque character of AI may compound this issue. Third, the exceptional character of digital and now AI-based systems means that they tend to be implemented outside of traditional accountability structures and skills bases, which raises questions about safety and sustainability. Finally, AI adoption aligns with ‘big data’ activities which seek a comprehensive view (and potentially, control) of people and events, with implications for privacy and autonomy.

In all of these, it also becomes clear that a sustainable and effective approach to AI will need a more explicit recognition of the role of civil servants in mediating the use and impact of AI-enabled services, including the distinct values that they attach to decision-making, whether professional, ethical, democratic, relational or other.

As a way forward for researchers in the field, each of the mediating concerns will benefit from deeper investigation. More generally, there is a critical need for technology-, country-, sector- and user-specific research as AI comes into use, to build an evidence base that can inform the design of appropriate systems and public administration policies. As two examples, it seems that the adoption of AI in public administrations is related to task complexity and uncertainty (Bullock, 2019), and it is likely that public officials and citizens will have different views on AI, depending on their specific position, role or context (Huang et al., 2022). However, research on AI adoption in Africa’s public service may also look more broadly, at the fit between what are mainly internationally developed platforms and local institutional characteristics (Berman and Tettey, 2001). Ultimately, as much of this work acknowledges, these ‘technologies of administration’ have their origins in a global and regional political economy which can enable the recreation of a less tangible form of colonial power (Breckenridge, 2005; Breckenridge, 2021), and for which ‘structural’ responses may be necessary (Heeks and Shekhar, 2019).

Appendix A: Scopus search string

TITLE-ABS (((administrat*) PRE/1 (law* OR procedure* OR process* OR justice)) OR intelligen* OR "expert system*" OR "decision-maki*" OR "decision maki*" OR "decision support*" OR discretion* OR "policy form*" OR "policy maki*" OR "policy-maki*" OR "policy developm*" OR "planni*" OR "monitoring and eval*" OR "knowledge manag*" OR "knowledge based" OR "knowledge-based" OR "knowledge u*" OR recommender OR "recommendation system*" OR "computer assist*" OR "computer-assist*" OR adoption OR acceptance OR resistance OR professionali* OR modernis* OR moderniz* OR reform OR classif* OR identific* OR surveill* OR control) AND TITLE (digit* OR technolog* OR "artificial intelligence" OR "machine learning" OR "e-gov*" OR "e gov*" OR "m-gov*" OR "m gov*" OR algor* OR automat* OR electronic* OR information OR data* OR computer* OR ict OR biometric OR "facial recognition") AND TITLE (((administrat*) PRE/1 (law* OR procedure* OR process* OR justice)) OR govern* OR bureaucra* OR "public se*" OR "public off*" OR "public empl*" OR "public admin*" OR "civil se*" OR "politic*") AND TITLE-ABS (africa* OR nigeria* OR ethiopia* OR egypt* OR "republic off the congo" OR tanzania* OR "South Africa*" OR kenya* OR uganda* OR algeria* OR sudan* OR moroc* OR angola* OR mozambiq* OR ghana* OR madagasc* OR camero* OR "Cote d'Ivoir*" OR "Ivory Coast" OR niger* OR "Burkina Faso" OR mali OR malawi* OR zambia* OR senegal* OR chad* OR somalia* OR zimbabw* OR guinea* OR rwanda* OR benin* OR burundi* OR tunisia* OR "South Sudan*" OR togo* OR "Sierra Leone*" OR libya* OR congo* OR liberia* OR "Central African Republic*" OR mauritania* OR eritrea* OR namibia* OR gambia* OR botswan* OR batswan* OR gabon* OR lesotho OR "Guinea-Bissau*" OR "Equatorial Guinea*" OR mauriti* OR bestatin OR swaziland OR djibouti* OR reunion* OR comor* OR "Western Sahara*" OR "Cabo Verd*" OR "Cape Verd*" OR mayott* OR "Sao Tome and Principe" OR seychell* OR "Saint Helena*") AND PUBYEAR > 1999