Abstract

There is growing recognition of the need to end the debate regarding reading instruction in favor of an approach that provides a solid foundation in phonics and other underlying language skills to become expert readers. We advance this agenda by providing evidence of specific effects of instruction focused primarily on the written code or on developing knowledge. In a grade 1 program evaluation study, an inclusive and comprehensive program with a greater code-based focus called Reading for All (RfA) was compared to a knowledge-focused program involving Dialogic Reading. Phonological awareness, letter word recognition, nonsense word decoding, listening comprehension, reading comprehension, written expression and vocabulary were measured at the beginning and end of the school year, and one year after in one school only. Results revealed improvements in all measures except listening comprehension and vocabulary for the RfA program at the end of the first school year. These gains were maintained for all measures one year later with the exception of an improvement in written expression. The Dialogic Reading group was associated with a specific improvement in vocabulary in schools from lower socioeconomic contexts. Higher scores were observed for RfA than Dialogic Reading groups at the end of the first year on nonsense word decoding, phonological awareness and written expression, with the differences in the latter two remaining significant one year later. The results provide evidence of the need for interventions to support both word recognition and linguistic comprehension to better reading comprehension.

I Introduction

Years of debate regarding reading instruction have pitted the explicit teaching of letter-sound correspondences, or phonics, against a literacy-rich approach geared toward discovery learning known as whole language. Recently, however, Castles et al. (2018) have called for an end to the reading wars in recognition that reading instruction must involve teaching both a solid foundation in phonics and the skills to become expert readers. One approach is to provide evidence of the complementary effects of instruction focused primarily on the written code (e.g. phonics) or developing knowledge (e.g. rich vocabulary). Together, these components create a comprehensive approach to reading instruction. In the present study, we draw on data available from a school-based program evaluation project comparing two different grade 1 reading programs. By considering instructional elements and the socioeconomic regions of the participating schools, we show positive and specific effects for both code-focused and knowledge-focused interventions.

According to the Simple View of Reading (Gough and Tunmer, 1986; Hoover and Gough, 1990), reading comprehension is the product of word recognition and linguistic comprehension. In 2001, Scarborough described the many strands represented by each of these areas that are woven together to support skilled reading. Linguistic comprehension was considered to draw on background knowledge, vocabulary, language structures, verbal reasoning, and literacy knowledge. As such, linguistic comprehension as used here is supported by oral language skills but also draws on other knowledge resources. In recognition of the breadth of this concept, the terms background knowledge and knowledge-focused instruction will be adopted in this report. Although reading instruction must address both components of the Simple View of Reading, the concepts of word recognition and background knowledge are too broad and multifaceted to directly inform curriculum design. In recognition of this, Kim (2017) has suggested the component approach to reading instruction. In 2000, the National Reading Panel report identified five key components of effective reading instruction: phonological awareness (PA), phonics, fluency, vocabulary, and text comprehension. The first three of these skills are best described as code-focused skills, whereas vocabulary and text comprehension can be considered knowledge-focused skills. A comprehensive reading instruction program would address all five components.
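
The multiplicative relation at the heart of the Simple View of Reading can be written compactly. The symbols below are chosen here for illustration rather than taken from the cited sources, and each component is assumed, as is conventional for this model, to range from 0 (no skill) to 1 (perfect skill):

```latex
\[
  \mathit{RC} = \mathit{WR} \times \mathit{LC},
  \qquad 0 \le \mathit{WR},\ \mathit{LC} \le 1
\]
```

Here RC is reading comprehension, WR is word recognition, and LC is linguistic comprehension. Because the terms multiply rather than add, reading comprehension is zero whenever either component is zero, which is why instruction must address both.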

1 Code-focused instruction

Code-focused instruction teaches children the necessary skills to decode text, and includes explicit teaching of PA and phonics, morphological awareness, as well as instructional activities that foster word reading fluency (Al Otaiba et al., 2018; Lonigan et al., 2013). PA, the ability to identify and manipulate sound structures of a language, has been found to predict early reading abilities (Hogan et al., 2005; Nation and Hulme, 1997; Schuele and Boudreau, 2008). PA instruction was found to be effective at improving phonemic awareness, reading outcomes, and spelling with moderate to large effect sizes (NRP, 2000). Beyond this, explicit instruction must also systematically address phonics, the knowledge of the letter-sound relationships in a language, in the initial stages of learning to read (Castles et al., 2018; Hulme et al., 2012). Systematic phonics instruction uses a planned, sequential introduction to a set of phonic elements along with teaching and practice of those elements and has been found to make a bigger contribution to children’s growth in reading than unsystematic phonics instruction or no phonics instruction (NRP, 2000). Morphological awareness refers to conscious knowledge of the smallest units of meaning. A meta-analysis has shown that morphological awareness interventions support reading, spelling and vocabulary outcomes (Goodwin and Ahn, 2010). Morphological instruction supports code-focused abilities as it helps children to decode a word, and it also provides semantic, syntactic, and orthographic knowledge to support the child’s understanding (Kirk and Gillon, 2009). Indeed, morphological awareness intervention can be considered to target both code-focused and knowledge-focused skills. A later predictor of reading success is reading fluency, the ability to accurately read connected text at a conversational rate (Hudson et al., 2005). 
Reading fluency is a critical component of reading comprehension developed through reading practice with feedback and guidance (Fuchs et al., 2001; NRP, 2000). These code-focused skills support word recognition; however, word recognition alone is not sufficient for reading comprehension.

Interventions aimed at developing code-focused skills will focus on phonological awareness, letter-sound knowledge, and later reading skills such as reading fluency (Lonigan et al., 2013). Lonigan et al. provided code-focused intervention to small groups of 4.5-year-old children, with groups receiving intervention focused on PA, letter knowledge, or a combination of both. PA intervention progressed from developing an awareness of sound structure to identifying sounds in words, and letter knowledge intervention progressed from identifying what letters are to naming them and their sounds. Effects of small group intervention were significant for each intervention on respective targeted domains, and children who received intervention demonstrated more growth in these skills compared to children only receiving the school curriculum (Lonigan et al., 2013). Similar positive effects of code-focused intervention focusing on letter knowledge have been reported for small group interventions (Piasta and Wagner, 2010), and for whole classroom PA interventions delivered by classroom teachers (Blachman et al., 1999; Carson et al., 2013; but see also Schuele and Boudreau, 2008).

2 Knowledge-focused instruction

Two additional components key to reading success are text comprehension and vocabulary knowledge (NRP, 2000), both of which focus on knowledge development. Text comprehension, viewed as ‘the essence of reading’ (Durkin, 1993), requires the reader to engage in intentional interaction between the reader’s background knowledge and the text in order to construct meaning. Instruction in comprehension strategies has been found to improve text comprehension (Block and Duffy, 2008; Guthrie et al., 2004; NRP, 2000). Vocabulary knowledge refers to knowing a word’s meaning, sound and written forms, and linguistic structure. Effective vocabulary instruction involves explicit teaching of vocabulary items for specific texts, multiple exposures, and active engagement in learning tasks (NRP, 2000; Sedita, 2005). More recently, the importance of explicit instruction in oral language (Kendeou et al., 2009) and writing skills has been recognized (Graham and Herbert, 2011).

Dialogic reading is an example of an intervention aimed at developing oral vocabulary and listening comprehension (Whitehurst and Lonigan, 1998). In a Dialogic Reading approach, educators and children engage in a shared book experience with the educator prompting the child to say something about the book, expanding the child’s response, and providing multiple opportunities to learn the expansion. Educators might prompt the participation of the child by asking for completion of a given phrase, asking for a recall of a story event, inviting comments about the content generally, asking questions, or asking for recall of information related to what is in the book. In a systematic review, Dialogic Reading was found to explain significant growth in vocabulary, story comprehension and syntax (Mol et al., 2009).

3 Evidence for differential effects

One limitation of this literature is that studies examining code-focused and/or knowledge-focused instruction do not consistently examine effects in the alternate skill set. For example, studies involving Dialogic Reading interventions often determine the effect of the intervention using receptive and expressive vocabulary measures (Hargrave and Sénéchal, 2000). Similarly, code-focused interventions use outcome measures focused on PA, letter-word recognition, and nonsense word decoding (e.g. Larabee et al., 2014). This inconsistency makes predicting differential or overlapping effects as a result of specific interventions difficult. However, in one study where a code-focused and a knowledge-focused intervention were implemented, significant differences were not found across domains suggesting specific effects within domains (Lonigan et al., 2013).

4 Additional factors

This description of code- and knowledge-focused interventions highlights the complex nature of reading instruction and, of course, a number of other factors influence reading intervention beyond code- and knowledge-based skills. Take, for example, the duration of intervention. Suggate (2016) reported a meta-analysis of 16 studies examining duration, among other characteristics, involving children up to grade 7 and measuring long-term impacts (on average 11 months) after approximately 40 hours of intervention focused on phonemic awareness, phonics, fluency, or comprehension. Results revealed negligible effects in the long term of phonics, small effects of fluency, and moderate effects of phonemic awareness and comprehension interventions. The findings highlight the need for an integrated reading program addressing skills that build on one another over the long-term (Al Otaiba et al., 2018). Another important factor, reading ability status, influenced Suggate’s results: relative to typical readers, a greater retention of intervention effect at follow-up was observed for at-risk readers, or those with low ability or disabilities. Similarly, greater benefits have been reported for beginning rather than older at-risk readers who receive phonemic awareness training (NRP, 2000), and for those with language or learning disabilities compared to typical readers who receive morphological awareness intervention (Goodwin and Ahn, 2010, 2013). The National Reading Panel (NRP, 2000) also reported equivalent effects of phonics instruction for all reader groups including those with disability (see also Galuschka et al., 2014).

With regard to socioeconomic status, a meta-analysis of vocabulary interventions with kindergarten children found greater effects for at-risk children for middle- and upper- than lower-income groups (Marulis and Neuman, 2010). Indeed, Marulis and Neuman concluded that vocabulary interventions benefit oral language skills but are not sufficiently powerful to close the vocabulary gap between economic groups (see also Gilkerson et al., 2017; Sperry et al., 2019). The effects of Dialogic Reading, on the other hand, have not been found to be influenced by socioeconomic status (Noble et al., 2019). Given the number of factors that need to be considered when providing reading instruction, it is no surprise that professionals with complementary expertise, including classroom educators, special education teachers, and speech-language pathologists (SLPs), often collaborate in providing effective intervention.

5 SLP-educator collaboration

SLPs have expert knowledge in the area of individual phonemes and oral language, which corresponds well to both the word recognition and language comprehension components of the Simple View of Reading equation. SLPs can work with educators to improve educational access for students through consultation and collaboration (Schuele and Boudreau, 2008; Suleman et al., 2013). In consultation, the SLP assesses an identified problem and makes recommendations for changes to instruction through a combination of discussion, reports, and demonstration (Suleman et al., 2013). The aim of SLP-educator classroom-based collaboration is to provide intervention directly in the setting in which the developing skills are needed (Pershey and Rapkin, 2003; Prelock, 2000). There is a need for high quality universal instruction in the classroom that can be differentiated to meet the varying needs of individual learners (Tomlinson, 2000). By working together, an effective SLP-educator collaboration has the potential to support all students effectively in the classroom (Archibald, 2017).

6 The Reading for All program

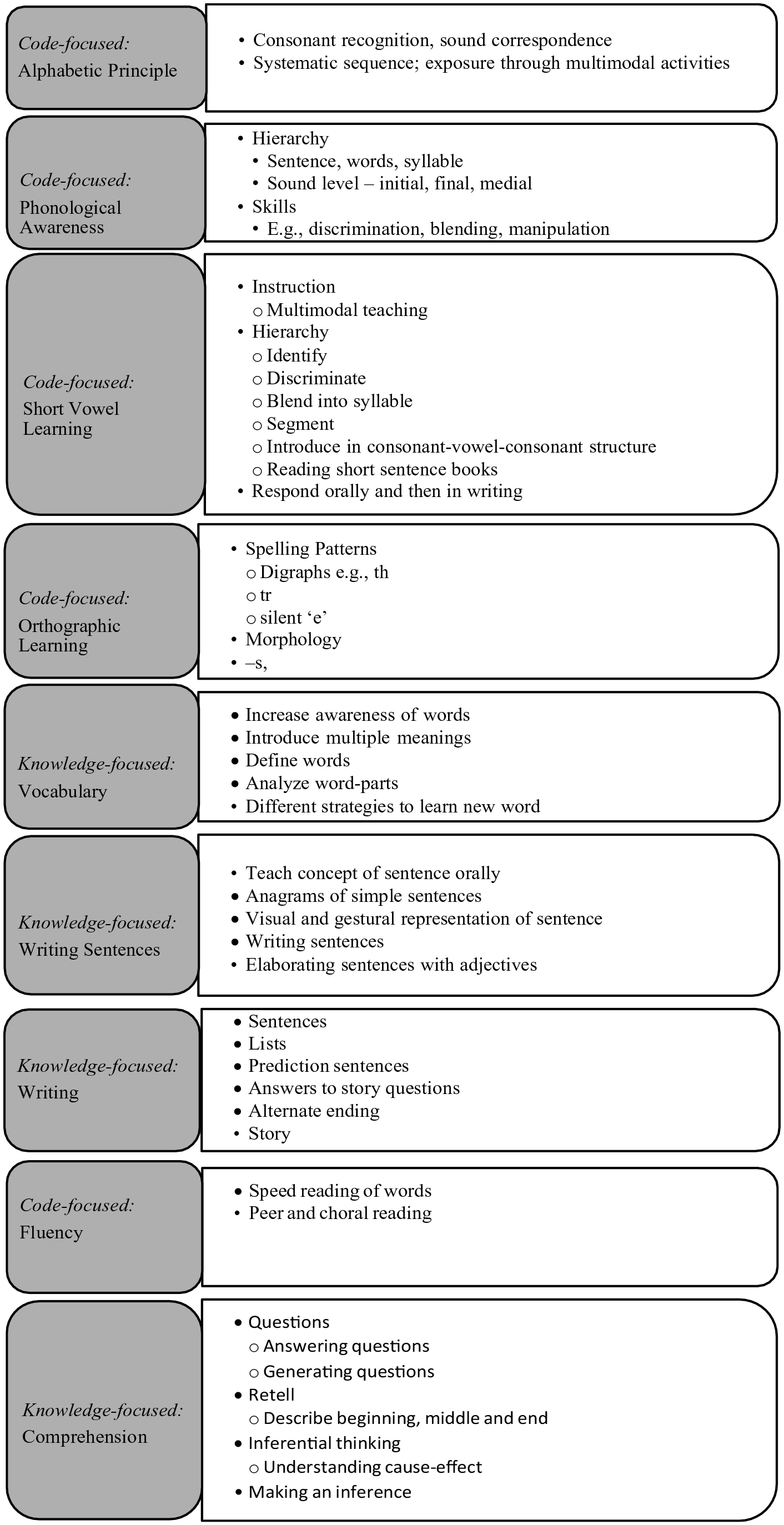

Authors SR and JL designed a comprehensive grade 1 language and literacy instruction program called Reading for All (RfA; Leggett and Raffalovitch, 2013), which was to be implemented jointly by one of the authors and a classroom educator and delivered to all students as a universal instructional program. The program was designed as a comprehensive reading intervention targeting nine goals organized developmentally and taught in the order outlined in Figure 1. Of these, three can be considered code-focused targets: (1) PA activities involving recognition and manipulation of phonemes, (2) explicit phonics instruction in the alphabetic principle, short vowel sounds, and common orthographic patterns, and (3) reading fluency tasks including timed word reading, and choral reading. The remaining three are knowledge-focused targets focused primarily on oral language: (1) vocabulary, (2) writing, and (3) comprehension. It should be noted that ‘writing’ was considered a knowledge-focused target because the writing skills addressed in RfA were more aligned with Scarborough’s (2001) strands of linguistic comprehension (e.g. writing sentences, predicting, answering questions; see Figure 1). Although writing clearly draws on code-focused skills that benefit from direct teaching (Hough et al., 2012; Lavoie et al., 2019), these skills were not specifically incorporated in the RfA program goals for writing. The materials for the RfA program included a set of storybooks (n = 12) and accompanying nonfiction texts (n = 6), which formed an overarching story arc. The books included decodable words systematically aligned with the goals of the program. Additional oral language activities explored higher level concepts and promoted comprehension through discussion, story retelling, etc. Instruction in writing began with a focus on simple sentences, and progressed to writing of lists, and stories. 
Importantly, the knowledge-focused instruction was orally based and emphasized understanding spoken language and providing oral responses. The RfA program was designed for whole class instruction taught through SLP-educator collaboration.

Figure 1. Program goals and skills targeted in the Reading for All (RfA) program.

7 Program evaluation

RfA was implemented in three schools in which the SLPs provided services. At the time of implementation, the reading curriculum used by the schools was based on leveled reading (Fountas and Pinnell, 2012). In order to inform decisions regarding implementation of RfA, a program evaluation was conducted by the program authors. Program evaluation refers to a systematic method for collecting, analysing, and using information to answer questions about projects (Administration for Children and Families, 2010). As distinguished from human participant research, the primary purpose of program evaluation projects is to benefit the specific program target audience. In this case the target audience included the SLPs and educators utilizing the manualized RfA intervention and common outcome measures. Reinking and Alvermann (2005) identified important determinants in assessing the merit for publication of a program evaluation as the inclusion of theoretical grounding, appropriate analyses, and data-based decisions. We argue that the RfA program evaluation project had a strong theoretical grounding as it includes both code-focused skills (word recognition), and knowledge-focused skills (listening comprehension) as important for reading comprehension success (Castles et al., 2018). We present here data-based interpretations based on appropriate analyses that inform current thinking regarding reading instruction.

In this project, the RfA program was compared to a knowledge-focused Dialogic Reading intervention program, which focused on vocabulary development and did not target code-focused instruction. Educators from six grade 1 classrooms in three different schools completed either the RfA program in collaboration with SLP authors SR or JL, or the Dialogic Reading program involving consultation with either of these same SLPs. The purpose of this report of the project is to investigate benefits of code-focused vs. knowledge-focused interventions as further evidence towards the complementary benefits of a comprehensive approach. One aim was to examine the extent to which outcome measures related to PA, phonics, and word reading captured changes. We anticipated greater increases for the RfA program than the Dialogic Reading program given the emphasis on code-focused skills in the former program. A second goal was to evaluate outcomes on knowledge-based measures of vocabulary, writing, and comprehension. Although both reading instruction programs were expected to result in measurable benefits on these measures, it was predicted that the primary focus of the Dialogic Reading on vocabulary development would lead to a greater relative impact on vocabulary for this program. Our third aim was to examine the influence of demographic factors on outcomes where available. We predicted that a longer duration after intervention, lower socioeconomic status, and lower reading ability might negatively impact outcome.

II Method

1 Participants

Participants were from three schools in the Greater Toronto Area (GTA, Canada) representing distinct socioeconomic strata. Relative to the annual GTA total household income of $102,000 based on the 2016 census (Statistics Canada, 2016), School 1’s annual income was higher ($216,000), School 2’s was equivalent ($99,000), and School 3’s was lower ($72,000). Two grade 1 classrooms in each school participated, with educators making their own decisions regarding who would teach the RfA vs. Dialogic Reading programs. All children in each class received the intervention (n = 90) as part of their regular curriculum. In all cases, parents consented to the use of their child’s data as part of the program evaluation (Reading for All: n = 46; Dialogic Reading: n = 44). Unexpectedly, groups differed in age due to a main effect of school such that those from School 3 (M = 86.2 months, SD = 0.6) were older than those from School 1 (M = 75.1, SD = 0.7) or School 2 (M = 75.0, SD = 0.6). As a result, age was entered as a covariate in all analyses. Classrooms were general education classrooms, which can include children with language-based learning disabilities, but no other demographic variables for individual participants/families were collected.

2 Procedures

The program occurred over one academic year for Schools 1 and 3, and over two academic years for School 2. Due to staffing constraints, the program could only be implemented for two years in School 2, and the same procedures were followed for the second year. The outcome measures were completed individually in a single session in a quiet room in the child’s school at the beginning and end of the first school year for all schools, and at the end of the second school year for School 2 (when participants were in grade 2). Outcome measures included code-focused tests (PA, nonsense word decoding, letter-word recognition) and knowledge-focused tests (listening comprehension, reading comprehension, written expression, receptive vocabulary). PA and written expression data were missing for School 1. Individual data were missing for receptive vocabulary for two children from School 1 and four children from School 3. Training and planning sessions with the program SLPs occurred during the month of September and included a joint half-day session for all educator participants focused on how oral language supports literacy and learning. A separate half-day session focused on the respective intervention program for educators implementing either the RfA or Dialogic Reading program. The interventions were administered from early October to early June. All testing was completed by the authors SR and JL.

a Outcome measures

Outcome measures were subtests from the Kaufman Test of Educational Achievement, 2nd edition (KTEA; Kaufman and Kaufman, 2014), except the receptive vocabulary measure, which was the Peabody Picture Vocabulary Test, 4th edition (PPVT; Dunn and Dunn, 2007). For both the KTEA and PPVT, corresponding published test version A was administered pre-intervention, B, post-intervention, and A, post-Year-2 intervention. In all cases, raw scores based on number of correct items in the subtest were converted to standard scores. Both outcome measures were standardized tests of language and have published reliability coefficients of 0.87–0.95 and 0.87–0.93, respectively.

Phonological awareness (PA): In the PA subtest, children were asked to give words that rhyme with a given word, identify words that do not rhyme, and match pictures based on the final sound of the stimulus picture. Children were also asked to blend word parts, segment words, repeat words, and delete given segments from words.

Nonsense-Word Decoding (NWD): For the NWD subtest, children were given a list of non-words and asked to read them aloud as quickly and as accurately as possible.

Letter and Word Recognition (LWR): Children were asked to identify letters and words in the LWR subtest.

Listening Comprehension (LC): In the LC subtest, children listened to recorded spoken passages. Children were told to remember the passage as they would be asked questions about the story after completion.

Reading Comprehension (RC): In the RC subtest, the child read a word or short passage silently or aloud. Items required matching words with pictures, following written directions, reading passages and answering questions, and putting sentences in the correct order to make a meaningful paragraph.

Written Expression (WE): In the WE subtest, children engaged with grade level storybooks to complete activities such as adding punctuation and capitalization, writing dialogue or captions, and editing text.

Receptive Vocabulary: In the PPVT, children were asked to choose an indicated picture from a choice of four pictures.

b Interventions

Both the RfA and Dialogic Reading interventions were designed to be delivered twice a week in 50-minute sessions with 30 minutes of active instructional time. Both interventions were implemented via whole classroom instruction, with some small group practice also provided in the RfA program. As a result of whole classroom instruction, children progressed through the programs at the same pace, and the only individual instruction took the form of differentiated feedback, which was provided in both intervention programs when needed. Participating classrooms were self-selected by the classroom’s teacher. Educators volunteered to deliver the RfA intervention and teachers were recruited to deliver the Dialogic Reading program. Each classroom had one educator, except during the RfA intervention time. In the RfA intervention, SLPs and educators jointly delivered the intervention in the classroom. In the Dialogic Reading group, the SLP provided consultative support to the educator approximately weekly during the first month of the program and then as needed thereafter. Participating educators had varying years of experience as a teacher, but no specific demographic information was collected.

Reading for All. The RfA program (Leggett and Raffalovitch, 2013) followed the description outlined in Figure 1 to target nine language and literacy goals. The RfA intervention was developed for practice rather than for research purposes by practicing clinicians and authors Leggett and Raffalovitch, and then trialed in a classroom the year prior to implementation. Modifications were made throughout this year based on SLP impressions, teacher feedback and student feedback. In this way, the program was designed in practice for a specific clinical context. Each of the 54 lessons was co-instructed by the educator and SLP and had four components: pre-planning (10 minutes), whole class instruction (10 minutes), small group instruction (20 minutes), and debriefing (10 minutes). Children received 30 minutes of instruction, and the pre-planning and debriefing time was used by the educator and SLP to review lessons and plan for future lessons. There were approximately six lessons per program target and each lesson focused on one component. The professional development session on the RfA program addressed integration of the program goals through collaborative teaching. Educators were provided with scripted lesson plans, weekly objectives, and weekly materials (for a sample lesson plan, see supplemental materials).

Dialogic Reading. The goals of the Dialogic Reading program were to develop vocabulary and oral language by encouraging child participation in the reading experience, adding information to responses using rephrasing and expansion techniques, and adapting the instructors’ style to reflect each child’s linguistic ability. The educator used planning and debriefing time (20 minutes) to select appropriate storybooks, and plan prompts to elicit more complex linguistic structures from the children. The educator then engaged in 30 minutes of instruction involving reading the selected text, elaborating on story vocabulary, and encouraging discussion using question prompts. The focus of the professional development session for the Dialogic Reading program addressed the importance of word and book selection, as well as classroom strategies to promote discussion, and use of the selected vocabulary throughout the day.

3 Data analysis

We planned a preliminary analysis to investigate potential baseline non-equivalence between groups and schools for each outcome measure. We completed a series of 2 (intervention group: Reading for All; Dialogic Reading) by 3 (school: 1, 2, 3) analyses of covariance (ANCOVAs) with age entered as a covariate on each pre-intervention outcome measure. In cases of baseline group differences on the outcome measures, we completed analyses parallel to our main analyses (described below) with the pre-intervention score entered as a covariate. In all cases, these parallel analyses did not change the results or interpretation regarding positive intervention effects, and only the main analyses are reported.

In order to evaluate the effects of the reading programs, we planned to complete a mixed ANCOVA on each outcome measure with intervention group (Reading for All; Dialogic Reading) and school (1, 2, 3) entered as between group factors, time of testing (pre-, post- intervention) as a within group factor, and age entered as a covariate. Crucially, an interaction between intervention group and time of testing would provide evidence of a greater effect of one intervention approach over the other. Lastly, we examined the post-year-2 data available for School 2 only by completing corresponding analyses on the pre-, post-, and post-year-2 intervention measures.
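
To make the logic of the group by time interaction concrete, the sketch below uses a deliberately simplified case: two groups, two time points, no school factor, and no age covariate. Under those assumptions (which are not the full model described above), the interaction F is identical to a one-way F comparing the groups on gain scores (post minus pre). The function name and the example scores are hypothetical and are not study data.

```python
from statistics import mean


def interaction_f_from_gains(pre_a, post_a, pre_b, post_b):
    """Group x time interaction F for a 2 x 2 mixed design.

    Simplifying assumption: no school factor and no age covariate, so the
    interaction reduces to a one-way ANOVA on gain scores (post - pre).
    Returns (F, (df_effect, df_error)).
    """
    gains_a = [post - pre for pre, post in zip(pre_a, post_a)]
    gains_b = [post - pre for pre, post in zip(pre_b, post_b)]
    n_a, n_b = len(gains_a), len(gains_b)
    m_a, m_b = mean(gains_a), mean(gains_b)
    grand = mean(gains_a + gains_b)

    # Between-group and within-group sums of squares on the gain scores
    ss_between = n_a * (m_a - grand) ** 2 + n_b * (m_b - grand) ** 2
    ss_within = (sum((g - m_a) ** 2 for g in gains_a)
                 + sum((g - m_b) ** 2 for g in gains_b))
    df_error = n_a + n_b - 2
    return ss_between / (ss_within / df_error), (1, df_error)


# Hypothetical standard scores for illustration only (not study data)
f_value, dfs = interaction_f_from_gains(
    pre_a=[90, 95, 100], post_a=[100, 104, 111],
    pre_b=[92, 96, 101], post_b=[93, 96, 103],
)
```

A large F here means the two groups changed by different amounts from pre- to post-intervention, which is the pattern the planned analyses were designed to detect. Adding school and age, as in the reported ANCOVAs, changes the error term and degrees of freedom but not this underlying idea.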

III Results

1 Baseline non-equivalence

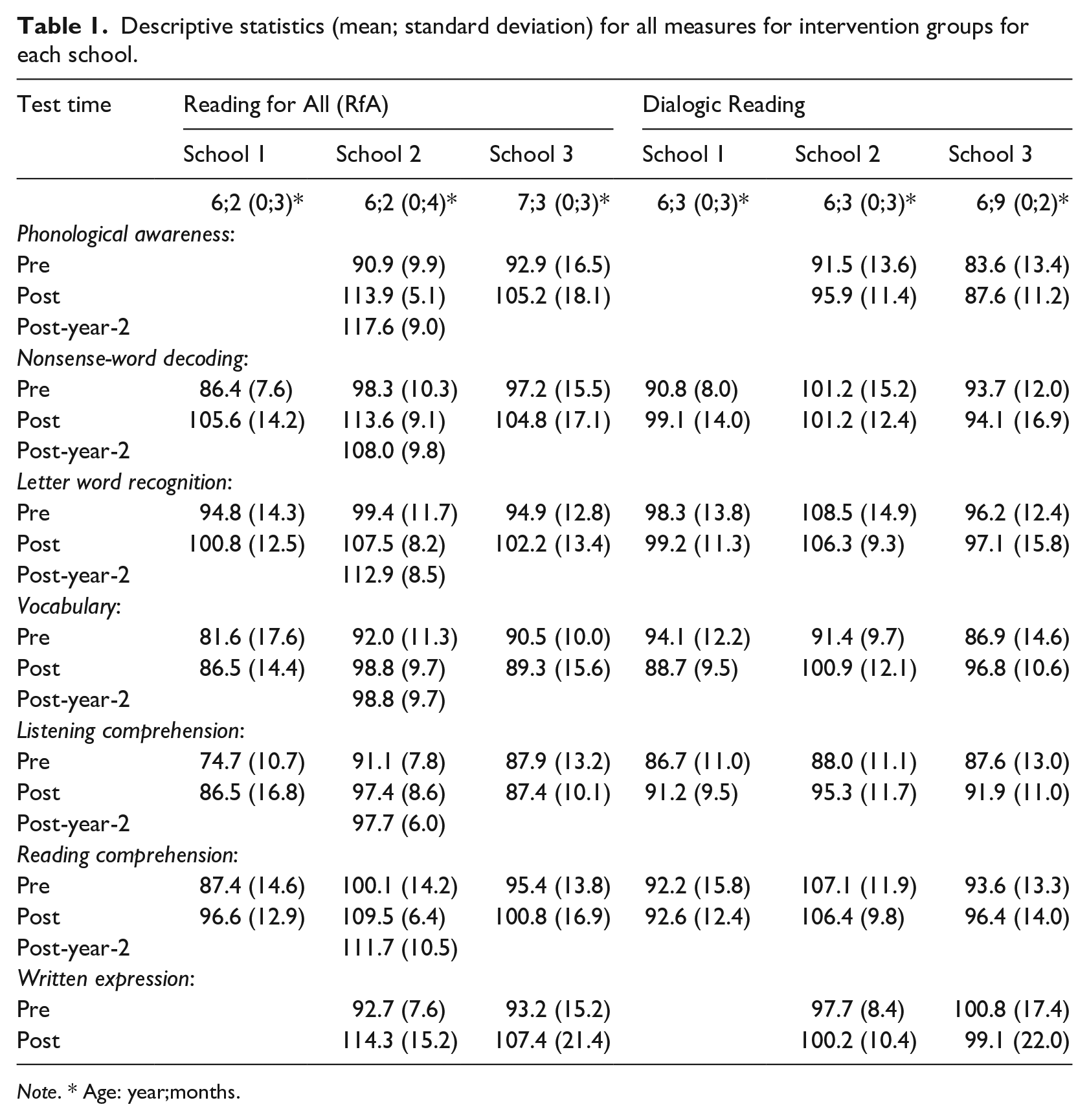

Table 1 provides descriptive statistics for Year 1 pre- and post-intervention outcome measures for intervention group and school. Baseline equivalence for investigating differences between intervention groups was assessed in corresponding 2 (intervention group: RfA; Dialogic Reading) by 3 (school: 1, 2, 3) ANCOVAs on pre-intervention scores with age entered as a covariate. Most importantly, no main effects of intervention group were observed, F < 2.8, p > .05, all cases. With regard to the main effects of school, baseline equivalence was not demonstrated for LWR, F(2,84) = 3.65, p = .03, NWD, F(2,84) = 5.95, p = .004, LC, F(2,84) = 4.69, p = .01, and RC, F(2,84) = 7.45, p = .001. Pairwise comparisons with Bonferroni corrections investigating these significant effects revealed the following differences, with all remaining comparisons being nonsignificant (p > .05): Those from the school with average household income, School 2 (M = 103.9, SE = 2.4), had significantly higher LWR than School 3 (M = 95.6, SE = 2.3). School 2 (M = 99.8, SE = 2.2) also had significantly higher NWD than School 1 (M = 88.6, SE = 2.4), and significantly higher RC (M = 103.6, SE = 2.5) than either School 1 (M = 89.8, SE = 2.7) or School 3 (M = 94.5, SE = 2.4). Similarly, LC scores were significantly higher for School 2 (M = 89.6, SE = 2.0) than School 1 (M = 80.7, SE = 2.2). Baseline equivalence between schools was observed for the remaining measures, PA, Receptive Vocabulary, and WE (F < 0.75, p > .05, all cases). It should also be noted that all interactions between intervention group and school in the respective ANCOVAs were not significant, F < 2.9, p > .05 (all cases), with the exception of LC for which no within school pairwise comparisons reached significance (p > .05, all relevant cases). These results indicate that the intervention groups within schools did not differ at baseline.

Table 1. Descriptive statistics (mean; standard deviation) for all measures for intervention groups for each school.

Note. * Age: year;months.

2 Effects of intervention group

We first consider evidence for an effect of one of the intervention approaches by examining interactions with intervention group. See Figures 2a–c and 3a–c for the code-focused (PA, NWD, LWR) and knowledge-focused outcome measures (RC, WE, vocabulary), respectively. A significant interaction involving intervention group and time of testing was observed in relevant ANCOVAs for all outcome measures except LC, F < 2.3, p > .05, ηp² < 0.06 (both cases), which will not be discussed further. The interaction between intervention group and time of testing was significant for PA, F(1,59) = 18.70, p < .001, ηp² = 0.24, NWD, F(1,83) = 22.38, p < .001, ηp² = 0.21, LWR, F(1,83) = 15.53, p < .001, ηp² = 0.16, RC, F(1,83) = 11.26, p < .001, ηp² = 0.12, and WE, F(1,58) = 26.00, p < .001, ηp² = 0.31. Although the interaction between intervention group and time of testing was not significant for Receptive Vocabulary, F(1,77) = 1.12, p > .05, the 3-way interaction between intervention group, school, and time of testing was, F(1,78) = 9.97, p < .001, ηp² = 0.03. No other significant 3-way interactions were observed in any of the analyses, F < 2.85, p > .05 (all cases). The following descriptions unpack these significant interactions by reporting significant pairwise comparisons with Bonferroni corrections.
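
For readers who want to relate the F statistics above to the accompanying partial eta squared effect sizes, the following minimal sketch applies the standard identity that recovers partial eta squared from F and its degrees of freedom. The function name is hypothetical and the snippet is an illustration, not the authors' analysis code; the inputs are the five two-way interaction statistics reported in the preceding paragraph.

```python
def partial_eta_squared(f_value: float, df_effect: int, df_error: int) -> float:
    """Recover partial eta squared from an F statistic and its degrees of freedom.

    Uses the identity: (F * df_effect) / (F * df_effect + df_error).
    """
    return (f_value * df_effect) / (f_value * df_effect + df_error)


# Group x time interactions as reported in the text: (F, df_effect, df_error)
reported = {
    "PA": (18.70, 1, 59),
    "NWD": (22.38, 1, 83),
    "LWR": (15.53, 1, 83),
    "RC": (11.26, 1, 83),
    "WE": (26.00, 1, 58),
}

# Effect sizes rounded to two decimals, as in the reported results
effect_sizes = {
    measure: round(partial_eta_squared(*stats), 2)
    for measure, stats in reported.items()
}
```

Under this identity, the five values round to 0.24, 0.21, 0.16, 0.12, and 0.31, matching the effect sizes reported for PA, NWD, LWR, RC, and WE.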

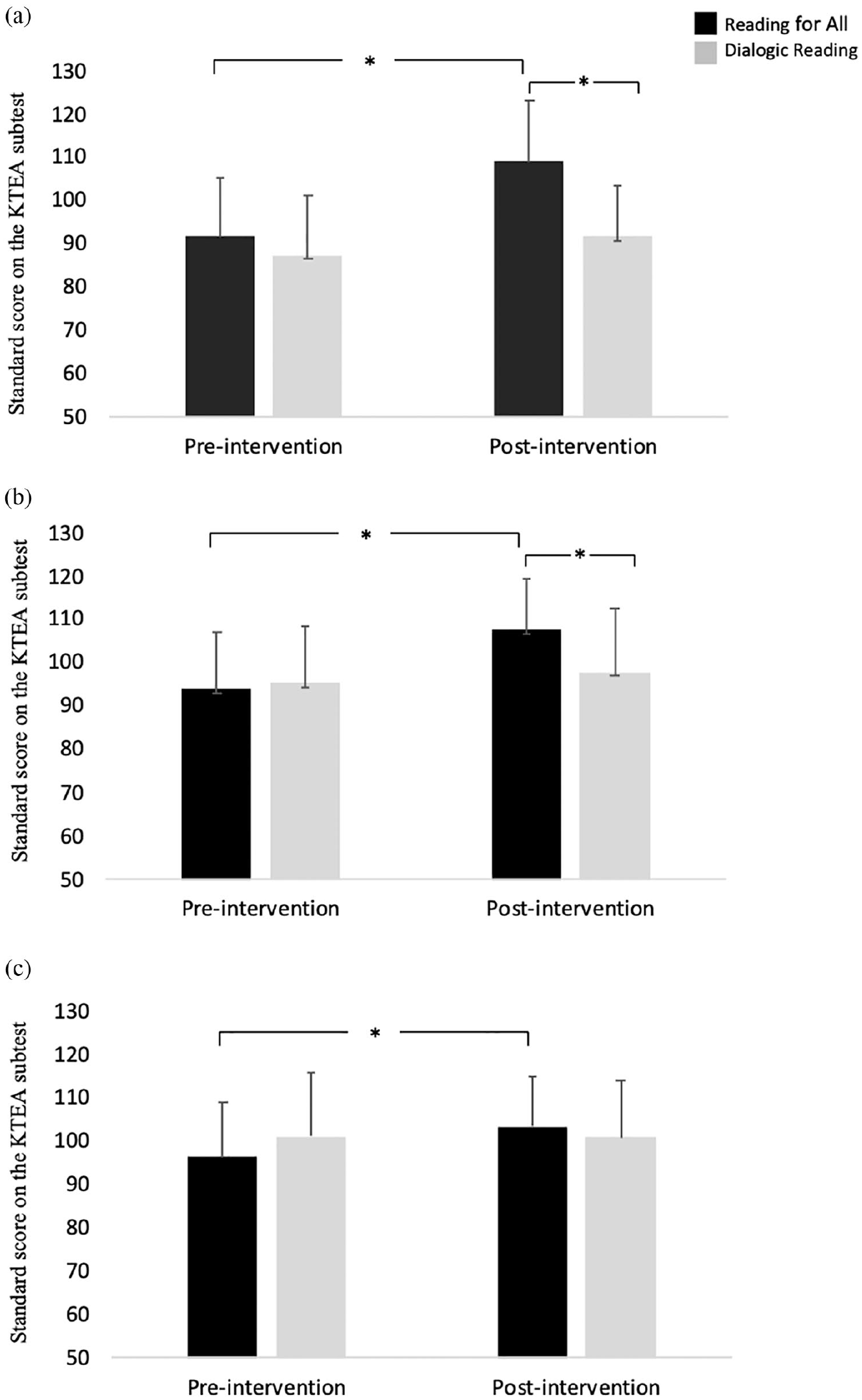

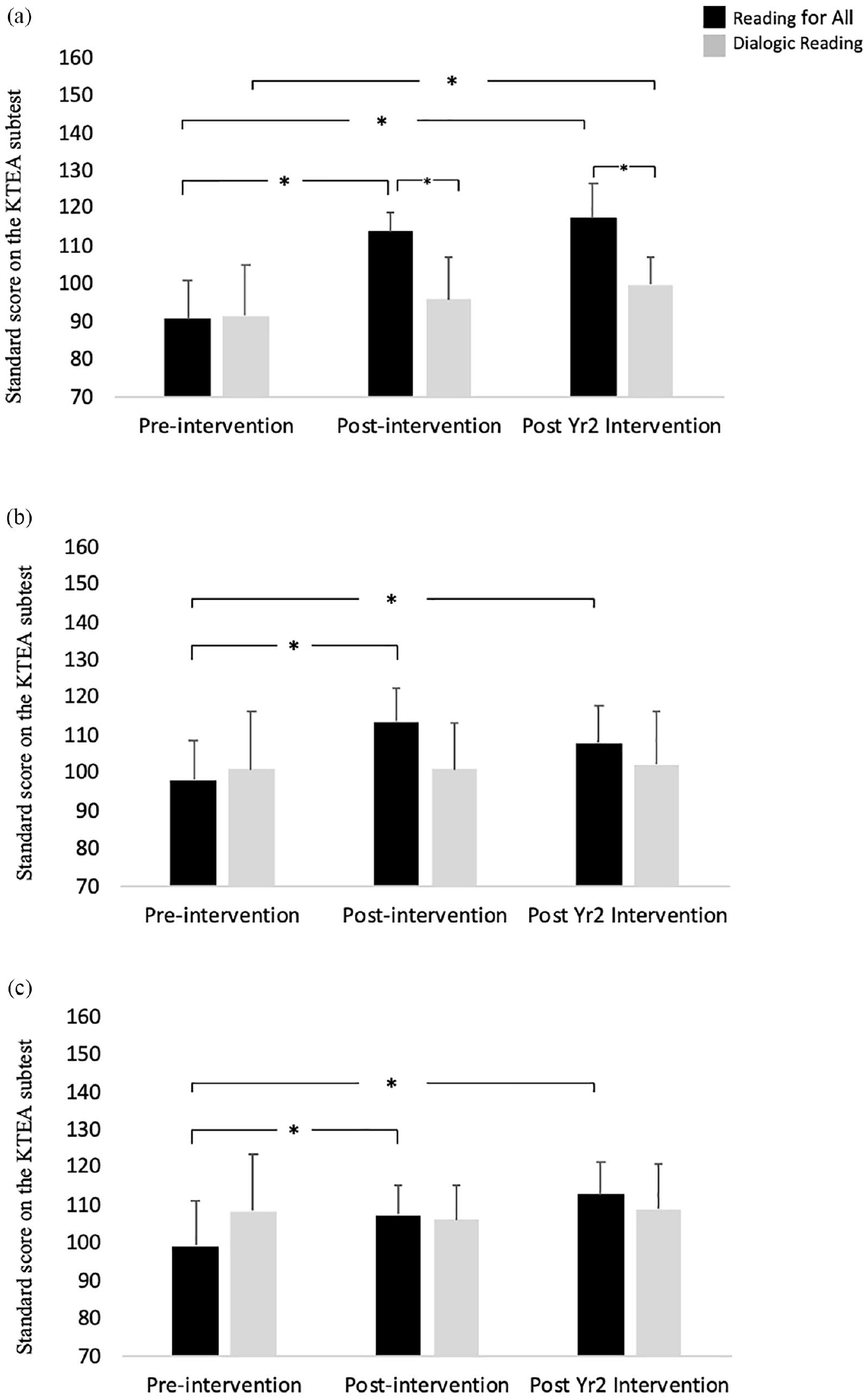

Figure 2. Mean standard scores (standard deviation) for the Reading for All (RfA) groups and Dialogic Reading groups before and after intervention. Significant increases in pre- to post-intervention scores for the RfA but not Dialogic Reading groups on (a) Phonological Awareness (PA), (b) Nonsense-Word Decoding (NWD), and (c) Letter and Word Recognition (LWR).

In the case of PA (Figure 2a), a significant difference in pre- to post-intervention scores was observed for the RfA (p < .001) but not Dialogic Reading groups (p > .05). As well, the post-intervention scores were significantly higher for the RfA than Dialogic Reading groups (p < .001). The same pattern occurred for NWD (Figure 2b), for which a significant difference in pre- to post-intervention scores was observed for the RfA (p < .001) but not Dialogic Reading groups (p > .05), and post-intervention scores were significantly higher for the RfA than Dialogic Reading groups (p = .004). Results for WE (Figure 3b) mirrored this pattern, with a significant difference in pre- to post-intervention scores for the RfA (p < .001) but not Dialogic Reading groups (p > .05), and significantly higher post-intervention scores for the RfA than Dialogic Reading groups (p = .03).

Figure 3. Mean standard scores (standard deviation) for the Reading for All (RfA) groups and the Dialogic Reading groups before and after intervention. Significant increases in pre- to post-intervention scores for the RfA but not Dialogic Reading groups on (a) Reading Comprehension (RC) and (b) Written Expression (WE), with between-group differences on the latter. Significant increases for the Dialogic Reading groups at Schools 2 and 3 only on (c) Vocabulary.

Similar findings occurred for LWR (Figure 2c), for which a significant difference in pre- to post-intervention scores was observed for the RfA (p < .001) but not Dialogic Reading groups (p > .05). However, the post-intervention scores did not differ for the RfA and Dialogic Reading groups (p > .05). Correspondingly, in the case of RC (Figure 3a), a significant pre- to post-intervention score increase was observed for the RfA (p < .001) but not Dialogic Reading groups (p > .05). Post-intervention scores, however, did not differ for the RfA and Dialogic Reading groups (p > .05).

In the case of the 3-way interaction for Receptive Vocabulary (Figure 3c), a significant difference in pre- to post-intervention scores was observed for the Dialogic Reading groups at the schools with average or lower household incomes, Schools 2 (p = .011) and 3 (p = .006), but not at the school with higher household income, School 1 (p > .05), and not for any of the RfA groups (p > .05, all cases). None of the remaining comparisons relevant to evaluating the interventions across time were significant (p > .05, all cases).

3 School 2 year 2 follow-up

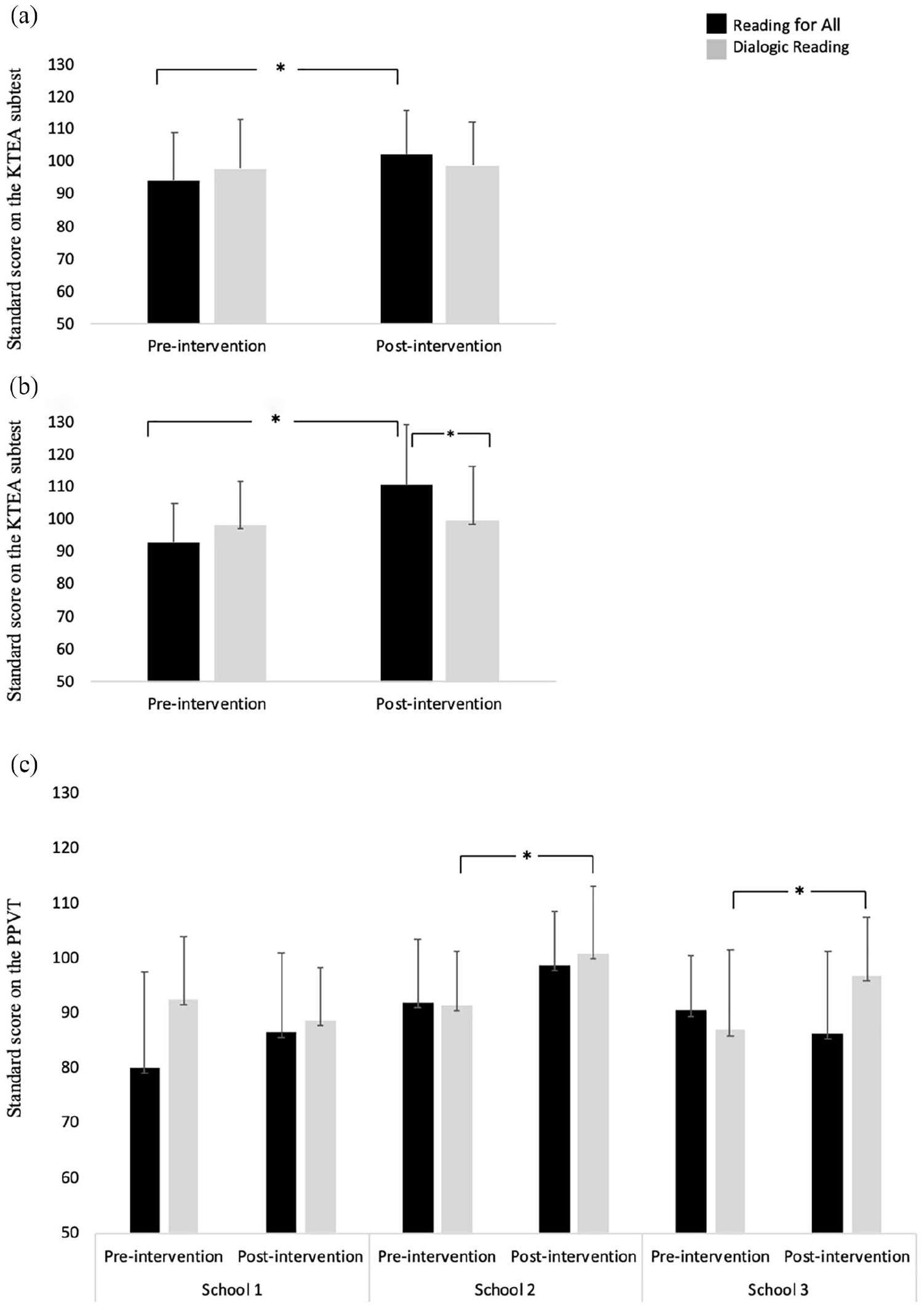

Differences between the intervention groups one year later were examined for the only school for which such data were available, School 2. See Figures 4a–c and 5a–b for the code-focused (PA, NWD, LWR) and knowledge-focused outcome measures (RC, WE), respectively. No significant effects of intervention group were observed for LC and receptive vocabulary, F(2,58) < 0.09, p > .05, both cases, which will not be discussed further. A significant interaction between intervention group (RfA; Dialogic Reading) and time was observed for all remaining measures: PA, F(2,58) = 15.27, p < .001, ηp² = 0.09, NWD, F(2,58) = 10.23, p < .001, ηp² = 0.06, LWR, F(2,58) = 8.88, p < .001, ηp² = 0.06, RC, F(2,58) = 4.75, p = .012, ηp² = 0.05, and WE, F(1,58) = 10.72, p < .001, ηp² = 0.10. The remaining descriptions unpack these significant interactions by reporting significant pairwise comparisons with Bonferroni corrections.

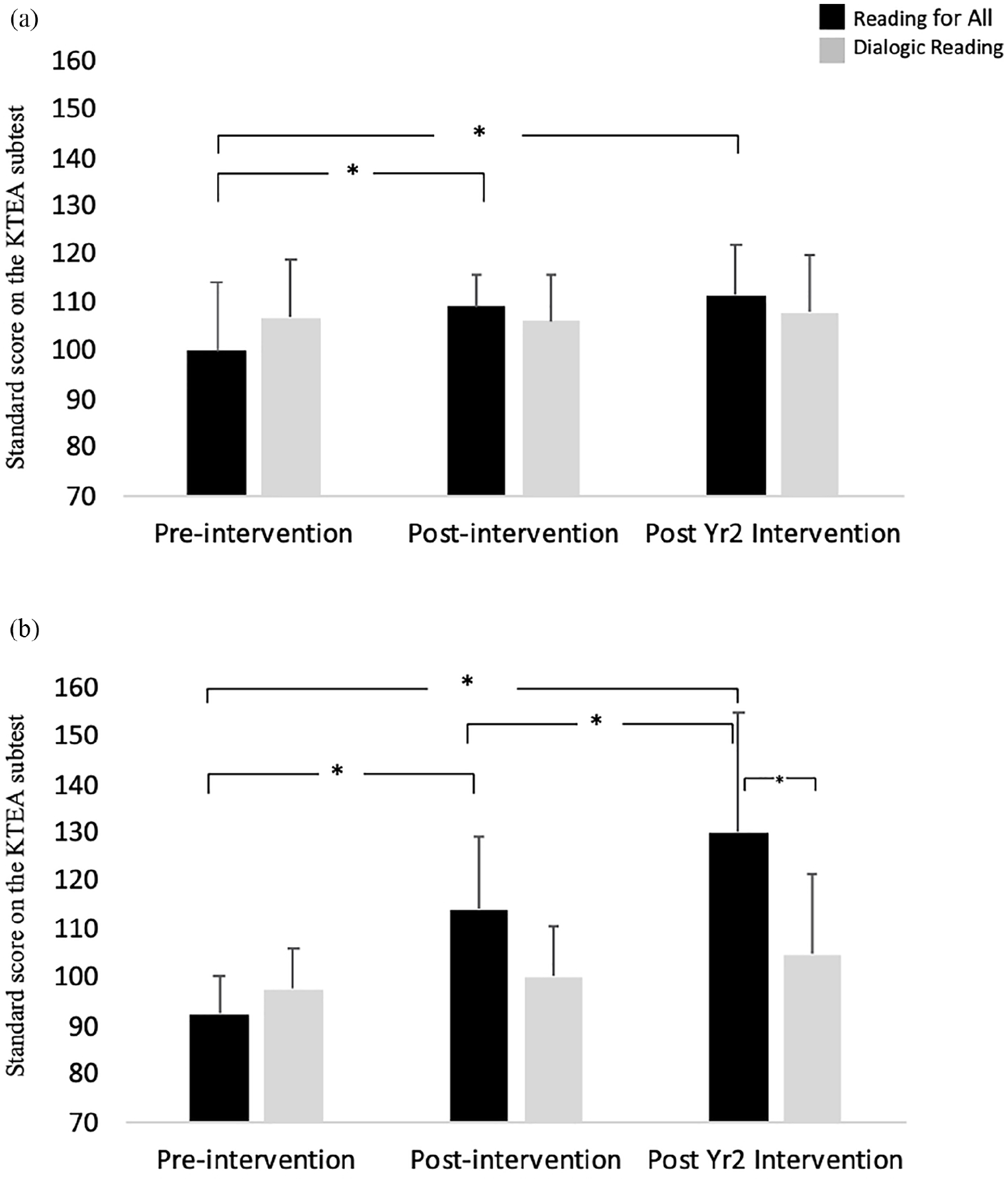

Figure 4. Mean standard scores (standard deviation) for the RfA group and the Dialogic Reading group before intervention, after intervention, and one year post-intervention. Significant gains from pre- to post-intervention were maintained at one year post-intervention for the RfA group for (a) Phonological Awareness (PA), (b) Nonsense-Word Decoding (NWD), and (c) Letter and Word Recognition (LWR). Significant gain from pre- to one year post-intervention on phonological awareness for the Dialogic Reading group.

A common pattern of results was observed for NWD, LWR, and RC. For NWD (Figure 4b), the RfA group showed a significant increase between time 1 and both time 2 (p < .001) and time 3 (p = .003), but not between time 2 and time 3 (p > .05), whereas no significant differences were observed across testing periods for the Dialogic Reading group (p > .05). The intervention groups, however, did not differ significantly at any time point (p > .05). Similarly, for LWR (Figure 4c), the RfA group scores increased significantly relative to time 1 at both time 2 (p = .015) and time 3 (p < .001), but scores at times 2 and 3 did not differ (p > .05), whereas no significant differences were observed across testing periods for the Dialogic Reading group (p > .05). There were also no significant between-group differences at any time point (p > .05, all cases). For RC (Figure 5a), the RfA group showed a significant increase between time 1 and both time 2 (p = .02) and time 3 (p = .002), but not between time 2 and time 3 (p > .05), whereas no significant differences were observed across testing periods for the Dialogic Reading group (p > .05). There were, however, no significant between-group differences at any time point (p > .05, all cases).

Figure 5. Mean standard scores (standard deviation) for the RfA group and the Dialogic Reading group before intervention, after intervention, and one year post-intervention. Significant increases in RfA group scores from pre- to post-intervention were maintained at one year post-intervention for (a) Reading Comprehension (RC) and increased further for (b) Written Expression (WE). No significant differences for the Dialogic Reading group on either measure.

In the case of PA (Figure 4a), the RfA group showed a significant increase between time 1 and both times 2 and 3 (p < .001, both cases), but not between time 2 and time 3 (p > .05), whereas the difference for the Dialogic Reading group was significant for time 1 to time 3 only (p < .05; p > .05, all remaining cases). As well, scores were significantly higher for the RfA than the Dialogic Reading group at times 2 and 3 (p < .001, both cases).

For WE (Figure 5b), the RfA group showed a significant increase between all time points (p < .05, all cases), whereas no significant differences were observed across testing periods for the Dialogic Reading group (p > .05, all cases). As well, the WE scores were significantly higher for the RfA than the Dialogic Reading group at time 3 (p < .001), but not at time 1 or 2 (p > .05, both cases).

IV Discussion

This program evaluation compared two reading programs provided as grade 1 whole-class interventions in three schools located in municipal districts with a higher, average, or lower total household income relative to the surrounding large urban area in a 2016 Canadian census. The reading programs involved either a comprehensive approach with a focus on code-based skills, Reading for All (RfA), or a Dialogic Reading approach with a focus on building knowledge-rich vocabulary. Results revealed significant improvements across the school year for those completing the RfA program on measures of phonological awareness (PA), nonsense word decoding (NWD), letter and word recognition (LWR), reading comprehension (RC), and written expression (WE). Scores were significantly higher for the RfA than Dialogic Reading groups at the end of the first school year (post-intervention) for the PA, NWD, and WE measures. In a follow-up at one school one year later, scores were maintained at end of year 1 levels in the RfA group for PA, NWD, LWR, and RC, and increases relative to the end of year 1 were observed for WE. Nevertheless, higher scores for the RfA than the Dialogic Reading group were observed at follow-up for PA and WE only. At the end of year 1 only, significant increases in Receptive Vocabulary were observed after Dialogic Reading for the schools from municipal districts with average and lower total household incomes (relative to the greater surrounding urban area). No change in LC scores related to the intervention groups was observed at any testing point.

Explicit instruction on code-focused skills in the RfA program resulted in improvements in measures of PA, LWR, and NWD. Gains made on these measures over the year of instruction were maintained at the end of the subsequent school year. Conversely, the Dialogic Reading groups, whose reading program did not include code-focused instruction, did not show changes on any of these measures by the end of the first school year. These findings replicate many previous studies showing the benefits of instruction in PA, phonics, and fluency (Castles et al., 2018; Hogan et al., 2005; NRP, 2000), and are in line with the strong scientific consensus around the importance of phonics instruction in the initial stages of learning to read (Castles et al., 2018). With regard to this latter observation, it is important to note that the current findings cannot speak to the relative merit of program components related to PA, phonics, or fluency, as all components were integrated in the RfA program.

Despite the positive outcomes related to the code-focused instruction of RfA, it must be noted that the effects were relatively modest. Differences between the RfA and Dialogic Reading groups were observed on the code-focused measures of PA and NWD at the end of the intervention period, with a lack of group differences one year later on all code-focused measures except PA. A decreasing measurable benefit of code-focused interventions is consistent with that reported in a meta-analysis of long-term effects of reading instruction (Suggate, 2010). There are several possible explanations for these findings. One possibility is that many young children are able to acquire code-based skills implicitly. Group effects in a sample composed mostly of typically developing children, such as the current sample, may disappear as those who do not receive explicit instruction acquire the skills implicitly. The finding of a significant increase in PA skills at the end of the second year of testing for the Dialogic Reading group would be in line with this suggestion. A second possibility is that these code-based skills are acquired through explicit instruction and then change little, but confer an early learning advantage in other areas. Indeed, code-focused instruction has been found to result in benefits to other aspects of reading, including reading comprehension (Block and Duffy, 2008; NRP, 2000).

The RfA program was also associated with improvements across the school year in RC and WE that were maintained one year later for RC and further increased for WE. Although one explanation for this finding could be knowledge-based improvements, the lack of changes in oral language measures related to LC and receptive vocabulary does not support such an explanation. The improvements in RC are indicative of successful reading acquisition but the pattern of findings suggests this change might be attributable to the improved word recognition afforded by improvements in code-focused skills. Such an explanation would be consistent with the Simple View of Reading (Gough and Tunmer, 1986), and also with findings for beginning readers in early grades (such as ours) of greater benefits of phonemic awareness training (NRP, 2000) and phonics instruction (Suggate, 2010). To some extent, WE can be expected to mirror RC skills, but WE is also associated with many additional demands related to text formation, idea generation, etc. (Piolat et al., 2005). Given the demands of writing, it could take longer for improvements in code-focused skills to have an impact on WE. This slower knock-on effect to writing could be one explanation for the continued increase in written expression through the second year of the study.
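For reference, the Simple View of Reading is commonly summarized as a product of two components. The symbols below are a conventional shorthand introduced here for illustration, not notation used in this article:

$$
\mathrm{RC} = \mathrm{D} \times \mathrm{LC}
$$

where RC is reading comprehension, D is decoding (word recognition), and LC is linguistic (listening) comprehension. Under this product formulation, a gain in D would be expected to raise RC even when LC is unchanged, which is the pattern suggested by the RfA results.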

Considering the third aim of examining the influence of demographic factors, including socioeconomic factors, the Dialogic Reading program had a positive effect on receptive vocabulary for schools with average and lower household incomes. This result is intriguing given past findings of greater vocabulary intervention benefits for those from higher socioeconomic groups (Schwab and Lew-Williams, 2016). Although no changes were observed for the other oral language measure, listening comprehension, the lack of a corresponding vocabulary benefit in the RfA groups suggests that a specific, intensive focus on vocabulary development is needed to see measurable differences in this area.

This program evaluation provides evidence for the direct benefits of code-focused and knowledge-focused interventions, lending additional support to the idea of complementary benefits from incorporating both aspects in reading instruction. Positive effects directly corresponded to the explicit instruction provided in each of these areas. The findings highlight the value of a components-based view of reading when designing a reading intervention to provide explicit instruction of the skills supporting word recognition and listening comprehension (Kim, 2015). The current findings speak to Castles et al.’s (2018) call to end the reading wars. As Castles et al. point out, there is a need for a comprehensive approach to provide both a solid foundation in phonics and the oral language skills and strategies to become an expert reader.

As a program evaluation, there are limitations to the current findings. The program evaluation was not conducted for the purpose of making theoretical discoveries about the interventions, and details regarding the Dialogic Reading intervention in particular were not captured. As well, the RfA program could have been more comprehensive by incorporating aspects of writing instruction more fully (Graham and Herbert, 2011). There was also no way to detect the impact of individual components of each of the intervention programs, or the impact of the educators’ teaching experience. There was also the potential for bias because the authors of the RfA program completed the assessments. Additionally, the authors of the RfA program co-instructed the RfA intervention with the classroom teachers. SLPs have knowledge to support teachers in providing scaffolding techniques and differentiated instruction to support children’s learning, and it is possible the collaborative nature of the RfA intervention delivery was a key component of its success. Certainly, further research exploring the utility of the RfA program should seek to understand the potential importance of the collaborative delivery of the program. The outcome measures did not fully assess all possible strands of word recognition or linguistic (knowledge) comprehension. A possible lack of sensitivity in the measure for listening comprehension, for example, could have accounted for the unexpected null findings given mounting evidence of the importance of listening comprehension to reading comprehension (Hogan et al., 2014). Nevertheless, the findings provide a glimpse into the creativity and complexity of solutions possible for SLPs in educational settings. The collaborative nature of RfA offers a framework for other school boards moving to SLP-educator collaborative models. Collecting data from educators and SLPs throughout the collaboration would provide insights to other schools looking to implement similar procedures.

These results contribute to the ongoing debate regarding the need for a comprehensive approach to reading instruction. Grade 1 classes in the present study completed either a comprehensive program (RfA) focusing on code- and knowledge-based skills or a Dialogic Reading program aimed at developing knowledge-rich vocabulary. Both programs resulted in measurable benefits specifically related to the explicit instruction targeted. The RfA program was associated with benefits in phonological awareness, letter-word recognition, nonsense word decoding, reading comprehension, and written expression, whereas the Dialogic Reading program resulted in vocabulary improvements at schools with average or lower household incomes. In keeping with the Simple View of Reading, the results provide evidence for the complementary effects of code- and knowledge-focused interventions to support word recognition and linguistic comprehension in the equation leading to better reading comprehension. Viewing reading instruction in terms of code- and knowledge-focused components adds clarity in understanding the necessary components of reading instruction. Based on these findings, a comprehensive approach that includes each of these components is needed to create an effective approach to reading instruction.

Supplemental Material

sj-png-1-clt-10.1177_02656590211014246 – Supplemental material for Evidence for complementary effects of code- and knowledge-focused reading instruction

Supplemental material, sj-png-1-clt-10.1177_02656590211014246 for Evidence for complementary effects of code- and knowledge-focused reading instruction by Meghan Vollebregt, Jana Leggett, Sherry Raffalovitch, Colin King, Deanna Friesen and Lisa MD Archibald in Child Language Teaching and Therapy

Supplemental Material

sj-png-2-clt-10.1177_02656590211014246 – Supplemental material for Evidence for complementary effects of code- and knowledge-focused reading instruction

Supplemental material, sj-png-2-clt-10.1177_02656590211014246 for Evidence for complementary effects of code- and knowledge-focused reading instruction by Meghan Vollebregt, Jana Leggett, Sherry Raffalovitch, Colin King, Deanna Friesen and Lisa MD Archibald in Child Language Teaching and Therapy

Footnotes

Acknowledgements

We would like to thank Jana Leggett and Sherry Raffalovitch for sharing their program with us.

Declaration of conflicting interests

The author(s) declared the following potential conflicts of interest with respect to the research, authorship, and/or publication of this article: Jana Leggett and Sherry Raffalovitch created and implemented the program Reading for All.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Supplemental material

Supplemental material for this article is available online.

References
