Abstract

This study provides a bibliometric analysis of International English Language Testing System (IELTS) research from 1989 to 2024, incorporating 641 research documents. Among these, 482 were obtained from five online indices (Web of Science, Scopus, ERIC, EBSCOhost, Google Scholar) and 159 were identified manually (including 146 studies sponsored by the IELTS co-owners). The analysis focuses on patterns and trends in research topics, methodological approaches, publications and disciplinary areas, author-affiliated institutions and countries, and co-authorship. The results show that topics which were well-covered by researchers extended beyond psychometrics and validation of the testing system to include consequential factors, such as teaching and learning, academic/professional contexts, test preparation, and non-linguistic constructs (e.g., stakeholders’ attitudes, beliefs). Quantitative studies constituted the most common approach but were exceeded by (often well-cited) mixed-methods inquiries sponsored by the co-owners. Research authorship diversified from Anglophone to English as a Foreign Language (EFL)/English as a Second Language (ESL) contexts, reflecting a broader view of IELTS’s validity, warranting further exploration. Implications of the findings are discussed both for IELTS and language assessment research more generally.

Keywords

Introduction

IELTS (International English Language Testing System) is an on-demand, high-stakes English language proficiency test developed and co-owned by Cambridge English, the British Council, and International Development Programme Australia. It provides a measure of a candidate’s speaking, listening, reading, and writing skills (along with a composite overall score) across a nine-band scale from band 1.0 (“non-user”) to 9.0 (“expert user”) that is widely recognised around the world (Merrifield & GBM & Associates, 2012; Taylor, 2009). The most important use of the test is its predictive capacity to facilitate test-users’ (notably higher education institutions, professional organisations, and government entities) assessment of applicants’ linguistic readiness for English-medium academic purposes and professional registration (Merrifield & GBM & Associates, 2012). In the United Kingdom, IELTS formally holds the status of SELT (Secure English Language Test), affording it the privilege of being accepted as visa evidence for workers, entrepreneurs, and students on sub-degree level programmes. Minimum linguistic requirements, as measured in IELTS band scores, are usually established by test-users in light of guidance from the IELTS co-owners (MacDonald, 2019). IELTS is not a pass/fail test per se. Rather, candidates may be perceived to have “failed” if the overall or sub-scores they achieve do not meet the requirements of their chosen test-user, often resulting in repeated test taking (Barkaoui, 2016; Estaji & Banitalebi, 2023) or an Enquiry on Result (the process by which a candidate may challenge their score).

IELTS has adapted to developments in language education research and international student recruitment, leading to its global candidature reaching more than 4 million per year in 2023 (IELTS, 2024a). The testing system has seen a number of major changes, including the 1995 replacement of disciplinary-specific areas with Academic and General Training Modules (Davies, 2008), the 2001 revision to speaking test format and scale (Brown, 2006), the 2008 expansion of the pronunciation scale (Isaacs et al., 2015), and the 2017 introduction of computer-based testing. While test-taker numbers were badly hit by the imposed hiatus caused by the COVID-19 global pandemic (Clark et al., 2021), they have since recovered, thanks in part to the co-owners’ initiatives to respond to changing market conditions through the IELTS Indicator Test (Isbell & Kremmel, 2020) and One Skill Retake (in selected countries). The latter is a relatively new process whereby a candidate can retake one of the four test components if there is a band score shortfall relative to their needs, affording greater flexibility and peace of mind to test-takers (IELTS, 2024b).

The IELTS co-owners have long emphasised the test’s research-based foundation to enhance its credibility with stakeholders, notably test-users. They have been keen to position research into IELTS as important for ensuring “an ongoing relationship with the broader linguistics and language testing community” (IELTS, 2019, p. 11), “continuous improvement of the test” (IELTS, 2019, p. 11), and “an up-to-date testing system” (IELTS, 2002, p. 24). An important way these commitments are realised is through what is termed Internal research (IELTS, 2019), that is, studies undertaken internally by IELTS’ Research and Validation teams that “bring together specialists in testing and assessment . . . and provides rigorous quality assurance for the IELTS test at every stage of development” (p. 10). This is a notable research strand as investigators may be able to access important internal datasets (e.g., samples of candidates’ speaking and writing responses, test-takers’ background characteristics). Owing to the sensitive nature of some data, not all such outputs can be conveyed to an external audience. Those that can are published in Cambridge Assessment English and Cambridge University Press’ Studies in Language Testing and Research Notes series, respectively, full volumes of which have recently been uploaded as open access documents.

Also at the forefront of IELTS’ commitment to research is test validation through External research (IELTS, 2019). As of 2025, this constitutes over 140 IELTS Research Reports (IRRs) undertaken by more than 350 researchers from a cross-section of countries globally and funded by the co-owners (with up to £45,000 available per successful proposal) (IELTS, 2025). Projects are typically co-researched in small teams and run for 1–2 years, resulting in a written report of no more than 20,000 words (significantly longer and more detailed than the outputs of articles published in most academic journals). Reports are provided open access on the IELTS website but are not currently listed on indices such as the Web of Science (WoS) or Scopus (unlike the ETS Research Report Series). As such, IRRs may not contribute to metrics used to measure authors’ and institutions’ productivity and impact, which may discourage some from undertaking research into IELTS via the IRR series or, as in some cases (e.g., Hyatt, 2013), cause them to repurpose their report into a journal article.

Meta-research

In recent years, there has been an increasing trend towards meta-research in applied linguistics (AL), manifested in the creation of new periodicals (e.g., Research Methods in Applied Linguistics, Research Synthesis in Applied Linguistics), special interest groups (e.g., BAAL Research Synthesis in Applied Linguistics), and an increasing number of empirical studies concerned with the study of research itself. The aim of many such papers is for research stakeholders, particularly readers and authors, “to understand and improve how we perform, communicate, verify, evaluate, and reward research” (Ioannidis, 2018, p. 1). Meta-research encompasses the synthesis of existing studies with a view to suggesting enhancements to how research is undertaken and reported, perhaps through the examination of research designs and methods, publication and peer review practices, and scientific standards (Ioannidis, 2018). Well-established meta-research publishing formats in the discipline, such as systematic and state-of-the-art reviews and meta-analyses, have been complemented by an array of newer types, including narrative reviews and qualitative research syntheses (Chong & Plonsky, 2023).

The meta-research turn is especially salient in language assessment, given the volume and diversity of studies (Bachman, 2000; Dong et al., 2022; Yang & Wang, 2025). The sheer amount of research, illustrated by Aryadoust et al.’s (2020) dataset comprising 4736 articles (now 6 years old), makes it increasingly difficult to keep pace with global trends across the literature. This has led scholars to conclude that further synthesis is needed to contextualise existing research, identify enduring concerns and emerging priorities, and highlight areas requiring additional scrutiny (Yang & Wang, 2025). Such synthesis has taken a range of forms, including systematic reviews (e.g., Chen et al., 2024), meta-analyses (e.g., Gagen & Faez, 2024), and scoping reviews (e.g., He et al., 2025). For high-stakes language tests, meta-research is crucial because it systematically synthesises existing evidence to evaluate test validity, fairness, and impact, thereby guiding policy decisions that affect large numbers of test-takers. While many studies have been undertaken into IELTS over its 36-year existence, including over 140 empirical studies funded by the IELTS co-owners and published as IRRs, to our knowledge, aside from two recent meta-analyses of IELTS predictive validity (Gagen & Faez, 2024; Ihlenfeldt & Rios, 2022), there have been few attempts to synthesise this research.

Bibliometric approaches

One approach to meta-research that has been increasingly taken up within applied linguistics is bibliometric analysis or bibliometrics (e.g., Aryadoust et al., 2020; Crosthwaite et al., 2022; Hyland & Jiang, 2021; Jing et al., 2024; Lei & Liu, 2019; Li, 2022; Pearson, 2024). This refers to “the application of mathematics and statistical methods” to analyse scientific publications (Pritchard, 1969, p. 348). Bibliometric researchers generate quantitative insights into publication trends, often examining the raw frequencies and distributions of research topics, research approaches, co-authorship, document citations, journals, and author-affiliated institutions by assembling and analysing datasets comprising bibliometric records (e.g., author names, document titles, abstracts, and keywords) from research databases, such as Web of Science (WoS), Scopus, and Google Scholar (GS) (Lei & Liu, 2019). The individual study constitutes the unit of analysis (Chong & Plonsky, 2023), with researcher(s) usually seeking to highlight patterns across large datasets to characterise the domain under investigation.

Of particular value in our view are bibliometric studies, in which authors guide the reader through the evolution of the domain, identifying salient/rising/falling topics of inquiry, research methodologies, the locations of research activity, and patterns of collaboration. Such information allows readers to gain a holistic view of the domain, feeding through to more informed agenda setting or decision-making about what is studied, how, and by whom (Barrot, 2024). At a higher level, the information generated may also help stakeholders understand and improve how authors communicate, verify, evaluate, and reward research (Ioannidis, 2018). This seems particularly relevant in the case of IELTS, where much research has been carried out over 30 years and where, to the best of our knowledge, no existing paper has attempted to synthesise the comprehensive body of research. Investigating the distribution of research topics is vital to ensure that any validity arguments are applied equitably across all test components. For example, if certain modules receive disproportionately less attention, the empirical basis for overall test scores becomes uneven. Likewise, if the field relies predominantly on, for example, statistical modelling while marginalising, say, qualitative approaches, research captures only a partial view of the test. As such, bibliometric research may act as a form of oversight, providing a robust, up-to-date evidence base that could lead to refinements in test constructs.

Despite being a novel form of inquiry in AL (Chong & Plonsky, 2023), bibliometric analyses have already addressed a range of subject areas within the discipline, including automated writing evaluation (Barrot, 2024), English for academic purposes (Hyland & Jiang, 2021), second language acquisition (Zhang, 2019), technology-supported learning environments (Jing et al., 2024), and written corrective feedback (Crosthwaite et al., 2022), to name but a few. A common finding across these studies is the (often burgeoning) growth in scholarship and widening of research participation (especially internationally) over the last decade (Barrot, 2024; Crosthwaite et al., 2022; Hyland & Jiang, 2021). Sometimes, individual authors most influential within a domain are highlighted (e.g., Crosthwaite et al., 2022; Lei & Liu, 2019). Such information can also be aggregated to the level of author-affiliated institution (e.g., Dong et al., 2022; Yan & Zhang, 2023) or country (e.g., Barrot, 2024; Hyland & Jiang, 2021), helping readers identify teams or departments in particular contexts that focus on specific research topics or adopt particular patterns of co-authorship (e.g., Amini Farsani et al., 2021). This is salient in the context of IELTS, where the co-owners implicitly encourage collaboration through the IELTS joint-funded research programme that emphasises the interdisciplinary nature of research (IELTS, 2025). Studies that document evolving disciplinary priorities through analyses of research topics reveal factors relevant to research into IELTS, including the impacts of technological developments (Crosthwaite et al., 2022), internationalisation (Hyland & Jiang, 2021), and growing methodological maturity (Barrot, 2024) on AL scholarship.

Of particular relevance to the present study are existing bibliometric studies that address language testing and assessment (see Aryadoust et al., 2020; Dong et al., 2022; Yang & Wang, 2025). Aryadoust et al.’s (2020) analysis of a dataset of 4736 documents focusing on measurement and validity published between 1918 and 2019 showed that language testers have long been pre-occupied with the measurement of reading, writing (also found by Yang & Wang, 2025), and oral production skills, potentially neglecting pertinent constructs such as language knowledge, feedback, and washback. That 67.03% of the dataset comprised documents from non-specialist journals indicates that much language assessment research is distributed across a wide array of publications. This is likely the case for IELTS, given the test’s impact (Hamid & Hoang, 2018; Pearson, 2019). In contrast, Dong et al. (2022) zeroed in on the journal Language Testing to analyse patterns across 759 documents published between 1984 and 2020. They found that authors typically gave greater attention to large-scale international tests (including IELTS), often addressing the staple topics of validity, reliability, and test development, albeit the methods researchers use have evolved within that timeframe (e.g., by incorporating eye-tracking technology). The enduring and significant role of IELTS in the international English language testing landscape and the large amount of research that has been generated about, on, and around the test, provide a convincing argument for a research synthesis in the form of a bibliometric analysis.

Research aims

This study reports on a bibliometric analysis of 641 research documents published across the existing lifespan of IELTS (up until 2024), retrieved from a combination of online indices, official databases of co-owner published research, and manual searching. The study examines the prevalence of particular research topics and approaches, as well as prevailing and impactful publications, affiliated author institutions, the countries of those institutions, and patterns of co-authorship. Guiding the design of the study are the following research questions (RQs):

RQ1. What topics in IELTS research have been most frequently explored?

RQ2. What are the most prevalent research approaches used in IELTS research and how have they changed over time?

RQ3. Which publications, authors, institutions, and countries have been the most productive and impactful (in terms of citations)? What is the prevalence of co-authorship?

Method

Data collection and retrieval

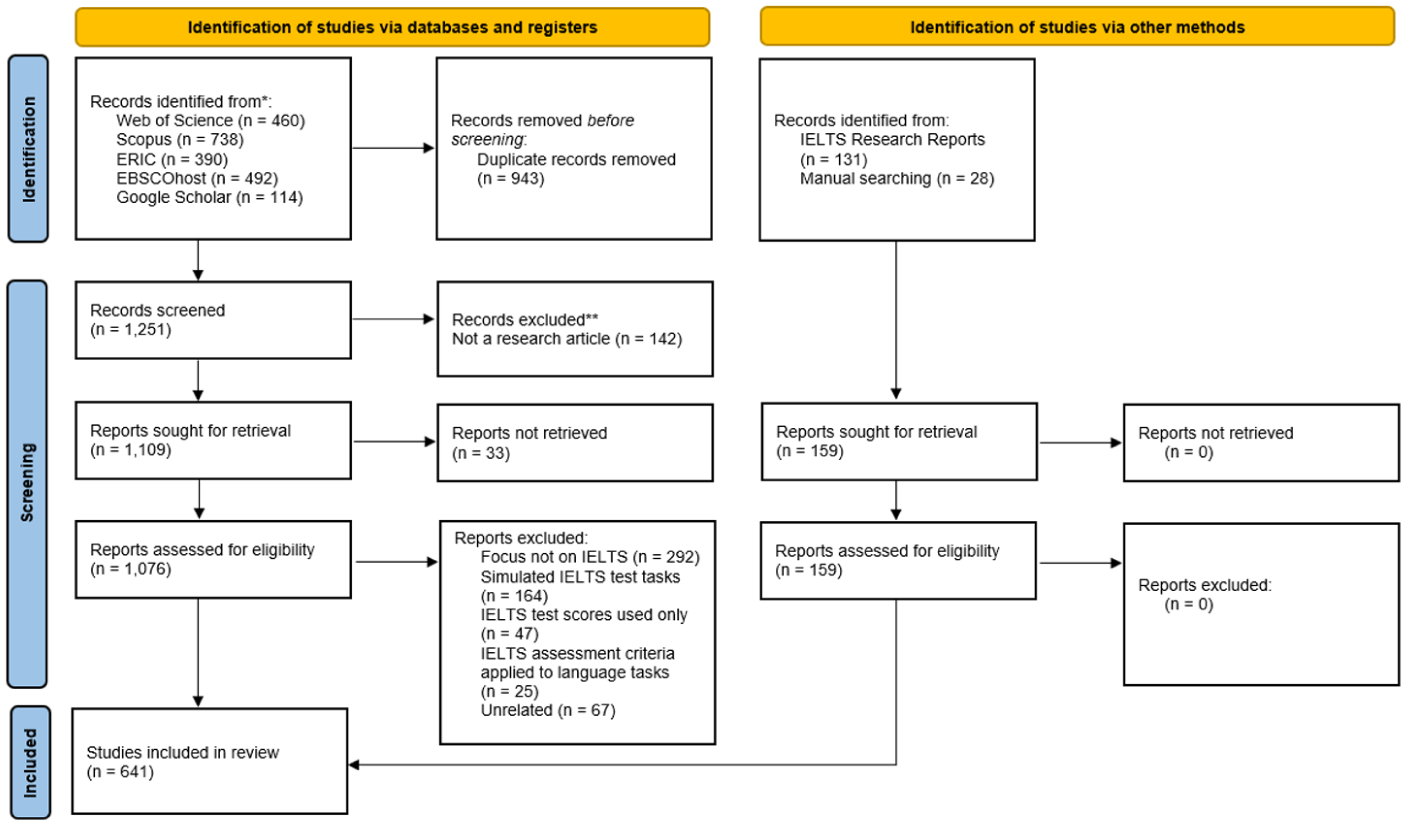

In this study, we sought to retrieve a complete body of IELTS research in the fields of social sciences and arts and humanities. As with other recent bibliometric analyses (e.g., Jing et al., 2024; Shi & Aryadoust, 2024), a Preferred Reporting Items for Systematic Reviews and Meta-Analyses (PRISMA) flow diagram (Page et al., 2021) was employed to improve the robustness of data identification, retrieval, and screening (see Figure 1). We began the process of identifying relevant bibliometric records using the keywords IELTS or International English Language Testing System in any bibliometric field (e.g., title, keywords, abstract) across five popular online indices of research: the Web of Science (WoS), Scopus, ERIC, EBSCOhost, and Google Scholar (GS). This yielded 2194 bibliometric records which, after duplicates were removed (n = 943), were subject to screening to determine their relevance. Data were extracted on April 15, 2025.

Figure 1. Processes for identifying, screening, and including documents.

Complementing these data were records manually identified and retrieved from other sources, the most notable of which were 131 IRRs, published on the IELTS website. We manually created bibliometric records using data (e.g., author names, institutions, countries, abstracts) by downloading and extracting the contents of the research reports themselves. These were complemented by a further 28 studies from publications not listed on the research indices, notably Studies in Language Testing (SiLT) and Studies in Language Assessment (SiLA, formerly Melbourne Papers in Language Testing). For the purposes of the analysis, following IELTS (2019), we grouped IRRs and SiLT papers together as sponsored research (n = 146) and differentiated these from external research (n = 495), which denotes studies not published by one of the co-owners (e.g., Cambridge English) or collectively under the IELTS banner. The rationale for this delineation is that the former entails co-owner provision of funding, endorsement, and potentially access to valuable internal datasets, whereas external research is more likely to address IELTS from the outside. This relates to fundamental bibliometric questions of what is researched, how, and by whom. We included 14 journal articles that seemed to provide a more condensed version of sponsored research as external studies (e.g., Hyatt, 2013; Moore & Morton, 2005), although we did not include Cambridge English Research Notes, since material from the reports could not be copied to create bibliometric records.

Data screening and eligibility

Inclusion/exclusion criteria were applied to the documents retrieved from the research indices (mainly their title, abstracts, and author-provided keywords, as it was not feasible to review complete research reports). We set the timeframe of included studies to commence from 1989, corresponding with the inception of the IELTS test (although the first document in our sample was from 1990), and to end in 2024. We limited included documents to those classified as journal articles (including “early access” on the WoS and Scopus), books, book chapters, proceeding papers, review articles, and excluded formats that do not typically constitute research (e.g., book reviews, letters to the editor, n = 142). Articles written in languages other than English (n = 6) were not removed, as each featured an English language abstract. An absence of metadata (usually because an article text could not be retrieved) meant that bibliometric records for 33 studies were largely incomplete and, hence, removed. As the focus was on characterising a comprehensive dataset of IELTS research and avoiding publication bias, no individual publication or study was screened out based on quality measures.

Examination of document titles, abstracts, and keywords resulted in the inductive creation of four further categories for excluding irrelevant studies. We omitted 290 reports which did not focus on IELTS (often because they contained a passing reference to the test in the abstract). We also excluded 164 studies where a simulated IELTS test was administered as a pre-/post-test for some pedagogical intervention that had little to no relevance to the test itself. Likewise, we removed 47 papers that referenced IELTS band scores as markers of research participants’ proficiency without focusing on the test itself. However, studies aiming to establish relationships between IELTS band scores and other variables where the focus was on the nature or suitability of the former were included. Finally, we removed a further 25 studies where the authors had utilised the IELTS Speaking or Writing band descriptors as a means of assessing learner speaking/writing without a specific focus on the test. Since other disciplinary areas also use the abbreviation IELTS, we additionally removed 67 wholly irrelevant results. Ultimately, bibliometric records for 482 indexed IELTS research documents were included, supplemented by 159 records manually identified from other sources, totalling 641 documents. See Supplementary Appendix A on the Open Science Framework (OSF) for the list of records alongside all other supplementary materials (Pearson & Zou, 2026). We did not scrutinise the methodological quality of the included publications because our goal was to map the overall body of literature, rather than to synthesise findings or assess the rigour of individual research papers, in line with existing applied linguistics bibliometric research.

Screening document eligibility was undertaken independently by both researchers, working on half of the bibliometric records, respectively. Each author checked 35 records screened by the other, resulting in an inter-rater reliability agreement figure of 95%, deemed acceptable. Most discrepancies (e.g., Tavakoli et al., 2014) related to whether a study was sufficiently focused on IELTS to be included, with uncertainties resolved via discussion between the authors.

Data analysis

In line with prior studies (e.g., Crosthwaite et al., 2022; Lei & Liu, 2019; Zhang, 2019), this bibliometric analysis investigates salient patterns and trends concerning the output and impact of publications, author-affiliated institutions, and the countries in which such institutions are located. In addition, it identifies prevalent research topics and approaches, and how these have changed over the timeframe. We present raw frequencies and proportional figures for research output, along with normalised frequency counts to account for changes in the prevalence of research topics and approaches within IELTS across three timespans, 1989–2009, 2010–2019, and 2020–2024. These are not equal in duration, since the number of research documents was not evenly distributed.

We operationalise the academic impact of research based on individual documents’ citation counts, which we acknowledge is a rudimentary measure. Given that we extracted bibliometric records from an assortment of indices and manually added records from other sources, we employed GS citations, manually downloading counts for each study (valid as of May 28, 2025). We present citation impact data in three ways: raw citations, citations normalised relative to the number of documents (to control for output size across factors such as research approaches, publications, publication types), and as an age-weighted citation rate (AWCR, calculated by dividing the number of citations by the age of the document in years), to account for the effects of time (i.e., a lower AWCR figure indicates that older documents are less impactful). AWCR figures are accumulated by aggregating values across discrete studies. While the AWCR provides a time-sensitive dimension of citation impact, it does not equate to a paper’s total influence, given that a few highly recent papers (which may be cited for recency alone or owing to the fast-moving nature of some topics) can outweigh moderately cited older ones.
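The three citation measures described above can be sketched as follows. This is a minimal illustration rather than the workflow actually used: the `Record` fields, the sample values, and the reference year of 2025 are assumptions, and a document's age is floored at one year so that items published in the reference year do not cause division by zero.

```python
from dataclasses import dataclass


@dataclass
class Record:
    year: int        # publication year
    citations: int   # Google Scholar citation count (hypothetical values below)


def awcr(record: Record, ref_year: int = 2025) -> float:
    """Age-weighted citation rate: citations divided by document age in years."""
    age = max(ref_year - record.year, 1)  # assumption: minimum age of one year
    return record.citations / age


def summarise(records: list[Record], ref_year: int = 2025) -> dict[str, float]:
    """Raw citations, citations per document, and AWCR accumulated over a group."""
    raw = sum(r.citations for r in records)
    return {
        "raw_citations": raw,
        "citations_per_document": raw / len(records) if records else 0.0,
        "accumulated_awcr": sum(awcr(r, ref_year) for r in records),
    }


# Hypothetical group of three documents (e.g., one research approach)
sample = [Record(2005, 200), Record(2015, 100), Record(2024, 5)]
stats = summarise(sample)
```

In this toy group, the 2024 document contributes as much to the accumulated AWCR per citation as possible, which illustrates why a handful of very recent papers can outweigh moderately cited older ones.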

We retrieved full texts of all included external research studies (along with IRRs) in order to provide a comparison of study length (in words) between sponsored and external research. Document PDFs were converted into text files, with an overall word count generated using AntConc (Anthony, 2018), which was then divided by the number of documents to obtain an average. The full texts of 37 external studies could not be retrieved (often conference proceedings or older papers) and were discounted from this calculation.

Research topics

We analysed topics within IELTS research cross-sectionally and longitudinally by drawing on both author-provided keywords and keywords from abstracts (Pearson, 2024). We began by extracting all 1117 author keywords from the external studies (and none from sponsored research, which featured no keywords). We excluded 725 keywords that appeared only once or twice, since we did not consider these to be common topics in the dataset. We removed a further 94 for being too general (e.g., strategies), 29 for being methodological (e.g., correlation), nine country names because these were attended to in a separate analysis, five because we did not consider them topics (e.g., boosting), and two where an abbreviation of the concept was provided in parentheses (e.g., artificial intelligence [AI]). This final list of 253 keyword-from-author topics was supplemented with a further 487 candidate keywords from abstracts. To delineate the latter, we applied the topic patterns based on word class combinations (e.g., noun + noun, noun + preposition + noun) generated by Pearson (2024) using AntConc’s n-gram function (Anthony, 2018). Among these keywords, we removed 153 that were not research topics (often chunks that did not stand alone as something meaningful, e.g., based approach), 114 that were duplicated author-provided keywords (e.g., cognitive processes), 38 that were methodological, 30 that appeared too infrequently, three that we felt were too general, and two author countries. After manual screening, we were left with 400 keywords that, in accordance with prior bibliometric studies, we regard as topics.
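The frequency threshold and manual exclusions applied to author keywords can be sketched as below. This is an illustrative sketch only: the keyword list is invented, case-folding before counting is an assumption, and the `excluded` set stands in for the manual judgements (too general, methodological, country names, and so on) that the procedure above describes.

```python
from collections import Counter


def filter_keywords(keywords, min_count=3, excluded=frozenset()):
    """Keep keywords that occur at least `min_count` times and were not manually excluded."""
    # Assumption: keywords are compared case-insensitively after trimming whitespace
    counts = Counter(k.strip().lower() for k in keywords)
    return {k: n for k, n in counts.items() if n >= min_count and k not in excluded}


# Hypothetical author-provided keywords pooled across documents
author_keywords = [
    "validity", "Validity", "validity",
    "washback", "washback", "washback",
    "strategies", "strategies", "strategies",
    "boosting",
]

# "strategies" is dropped as too general; "boosting" falls below the threshold
kept = filter_keywords(author_keywords, excluded={"strategies"})
```

A threshold of three mirrors the decision to discard keywords appearing only once or twice.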

Topic frequency counts were generated by cross-referencing the uncovered keywords with document titles and abstracts. As in other bibliometric studies (e.g., Crosthwaite et al., 2022; Hyland & Jiang, 2021; Lei & Liu, 2019), we operated on the assumption that research articles may feature or address multiple topics (particularly since IRRs constituted comprehensive, wide-ranging investigations). Along with identifying increasing, decreasing, and stagnating topic trends over time, we also synthesised the 400 identified topics into 15 broader topic themes (see Supplementary Appendix C; Pearson & Zou, 2026). We did this manually by assigning each to a topic theme (e.g., preparation course, practice tests, and use of exemplars were synthesised along with 32 other topics into Test-takers and test preparation) through an inductive and iterative process that involved revising, merging, and collapsing topic themes and resolving differences through discussion.
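Cross-referencing a topic keyword against titles and abstracts, and normalising the result within each timespan, might look like the following sketch. The document fields, the sample records, the optional plural "s" in the pattern, and the choice of "per 100 documents" as the normalisation base are all assumptions made for illustration.

```python
import re

# The three timespans used in the analysis
PERIODS = [(1989, 2009), (2010, 2019), (2020, 2024)]


def mentions(topic: str, text: str) -> bool:
    """Case-insensitive whole-phrase match, allowing an optional plural 's' (assumption)."""
    pattern = r"\b" + re.escape(topic) + r"s?\b"
    return re.search(pattern, text, flags=re.IGNORECASE) is not None


def topic_counts_by_period(docs, topic):
    """Return {period: (raw document count, count per 100 documents)} for one topic."""
    result = {}
    for start, end in PERIODS:
        in_period = [d for d in docs if start <= d["year"] <= end]
        hits = sum(mentions(topic, d["title"] + " " + d["abstract"]) for d in in_period)
        rate = 100 * hits / len(in_period) if in_period else 0.0
        result[(start, end)] = (hits, rate)
    return result


# Hypothetical bibliometric records
docs = [
    {"year": 2006, "title": "Validity of band scores", "abstract": "A rater study."},
    {"year": 2008, "title": "Speaking", "abstract": "Examiner behaviour."},
    {"year": 2015, "title": "Washback", "abstract": "Band score requirements."},
    {"year": 2022, "title": "Test preparation", "abstract": "Learner attitudes."},
]
band_score = topic_counts_by_period(docs, "band score")
```

Because each document is tested against every topic independently, a single study can count towards several topics, consistent with the assumption stated above.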

Research approaches

AL bibliometric analyses have addressed methodological features of research in different ways. While acknowledged as not constituting research topics per se, methodological keywords identified from abstracts have been incorporated into prior analyses (e.g., Hyland & Jiang, 2021; Lei & Liu, 2019; Pearson, 2024), since such items say something about the disciplinary area. In the present study, methodological keywords were addressed separately to topic ones, to investigate phenomena including methodological change over time, the citation impact of varying approaches, and the intersection with external versus sponsored research. The study drew upon the first author’s unpublished list of methodological keywords that were manually identified from a dataset of 2416 language assessment studies (see Supplementary Appendix B; Pearson & Zou, 2026). We took the position that authors are best placed to characterise the methodological approach(es) of their research, adopting the approach of tallying occurrences of these keywords across document titles and abstracts. We did not calculate their frequency across a document’s keywords since these were absent in sponsored studies.

To obtain global perspectives on research approaches and because many keywords registered zero hits, we created combinations of Excel formulae that looked up methodological keywords and assigned the labels quantitative, qualitative, or mixed methods (MMs) depending upon whether one or more of the keywords listed were present in the title or abstract. Studies were labelled as MM if they featured this phrase (or variation) or if keywords indicating both qualitative and quantitative approaches were found in the title and/or abstract with the absence of the phrase MMs (albeit such entries were manually checked). Formulae featuring synonyms of the term proficiency interview (e.g., oral interview, speaking interview) were applied in order to distinguish interview as a research method from the test feature. We removed 63 records from the methodological analysis, either because they were non-empirical (n = 44, e.g., Pearson, 2019) or did not feature an abstract (n = 19), usually in the case of book chapters.
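The labelling logic described above was implemented in Excel; a rough Python equivalent is sketched here for readers who prefer a scripted version. The keyword tuples are short, hypothetical stand-ins for the full list in Supplementary Appendix B, and assigning any given keyword to the quantitative or qualitative group is an illustrative assumption.

```python
# Hypothetical, abbreviated keyword lists (the real list is far longer)
QUANT = ("correlation", "regression", "questionnaire", "rasch")
QUAL = ("interview", "focus group", "case study", "thematic analysis")
MIXED = ("mixed method", "mixed-method")
# Phrases where "interview" denotes the test feature, not a research method
TEST_FEATURE = ("oral interview", "speaking interview", "proficiency interview")


def label_approach(title: str, abstract: str) -> str:
    """Label a record as quantitative, qualitative, mixed methods, or unlabelled."""
    text = f"{title} {abstract}".lower()
    if any(phrase in text for phrase in MIXED):
        return "mixed methods"
    # Remove test-feature phrases so they do not trigger the qualitative label
    for phrase in TEST_FEATURE:
        text = text.replace(phrase, " ")
    has_quant = any(k in text for k in QUANT)
    has_qual = any(k in text for k in QUAL)
    if has_quant and has_qual:
        return "mixed methods"  # such cases were manually checked in the study
    if has_quant:
        return "quantitative"
    if has_qual:
        return "qualitative"
    return "unlabelled"


labels = [
    label_approach("Rasch analysis of rater severity",
                   "Scores from the oral interview were modelled."),
    label_approach("Test-taker perceptions",
                   "Semi-structured interviews were conducted."),
    label_approach("Score gains", "A regression model and focus group data."),
]
```

Simple substring matching of this kind will produce false positives, which is one reason the study's manual checking of inferred mixed-methods labels matters.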

Publication disciplinary areas and types

The most prevalent academic journals publishing IELTS research were identified from the retrieved bibliometric records. As we found that studies were disparately situated across a large array of venues and to enable more meaningful comparison, we synthesised the relevant publications into four disciplinary areas, language assessment (e.g., Language Testing), applied linguistics/Teaching English to Speakers of Other Languages (TESOL, e.g., ELT Journal), education (e.g., Cogent Education), and other journals (e.g., Australian Journal of Social Issues). In a few instances where we could not identify a disciplinary area from the name of the publication (e.g., Alabe-Revista de Investigacion Sobre Lectura Y Escritura), we reviewed the stated aims and scope on the journal/report’s website.

Institutions, countries, and (co-)authorship

Candidate entries for the 10 most productive and impactful author-affiliated institutions and countries were initially identified using WoS and Scopus functionality (as these constituted by far the most prevalent indices that list IELTS research) and by manually reviewing the bibliometric records that we had created for sponsored research. Unlike previous studies that examined only first-author institutional affiliations (e.g., Lei & Liu, 2019; Yan & Zhang, 2023), we incorporated those of every listed author for a more comprehensive perspective. Excel formulae were created to obtain precise document and citation counts (e.g., for authors affiliated with Japanese institutions, counting the occurrences of Japan in the author affiliation field). Since our results show much IELTS research has been published over the last 10 years, we did not examine trends in the countries of author-affiliated institutions over time. We analysed the extent of author collaboration by manually labelling each study as no collaboration, domestic collaboration (which we took to mean authors either from the same or different institutions in one country), or international collaboration. In the event an author had multiple affiliations, we adopted that which was listed first. While we identified prevalent authors in the dataset, because few published widely, we opted not to present these findings.
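The country counts and collaboration labels can be expressed compactly in code. The sketch below is hypothetical: the data structure (each document as a list of authors, each author as a list of `(institution, country)` affiliations) and the sample records are assumptions, as is counting a country at most once per document. Only the first-listed affiliation of each author is used, following the rule stated above.

```python
from collections import Counter


def first_countries(authors):
    """Set of countries, taking each author's first-listed affiliation only."""
    return {affiliations[0][1] for affiliations in authors}


def collaboration_type(authors) -> str:
    """Classify a document by the extent of author collaboration."""
    if len(authors) < 2:
        return "no collaboration"
    if len(first_countries(authors)) == 1:
        return "domestic collaboration"  # same or different institutions, one country
    return "international collaboration"


def documents_by_country(docs) -> Counter:
    """Number of documents with at least one author affiliated to each country."""
    counts = Counter()
    for authors in docs:
        counts.update(first_countries(authors))  # assumption: once per document
    return counts


# Hypothetical documents
docs = [
    [[("Univ A", "UK")]],
    [[("Univ A", "UK")], [("Univ B", "UK")]],
    [[("Univ C", "Japan"), ("Univ A", "UK")], [("Univ D", "Australia")]],
]
types = [collaboration_type(d) for d in docs]
by_country = documents_by_country(docs)
```

In the third document, the first author's secondary UK affiliation is ignored, so the paper counts towards Japan and Australia only.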

Results and discussion

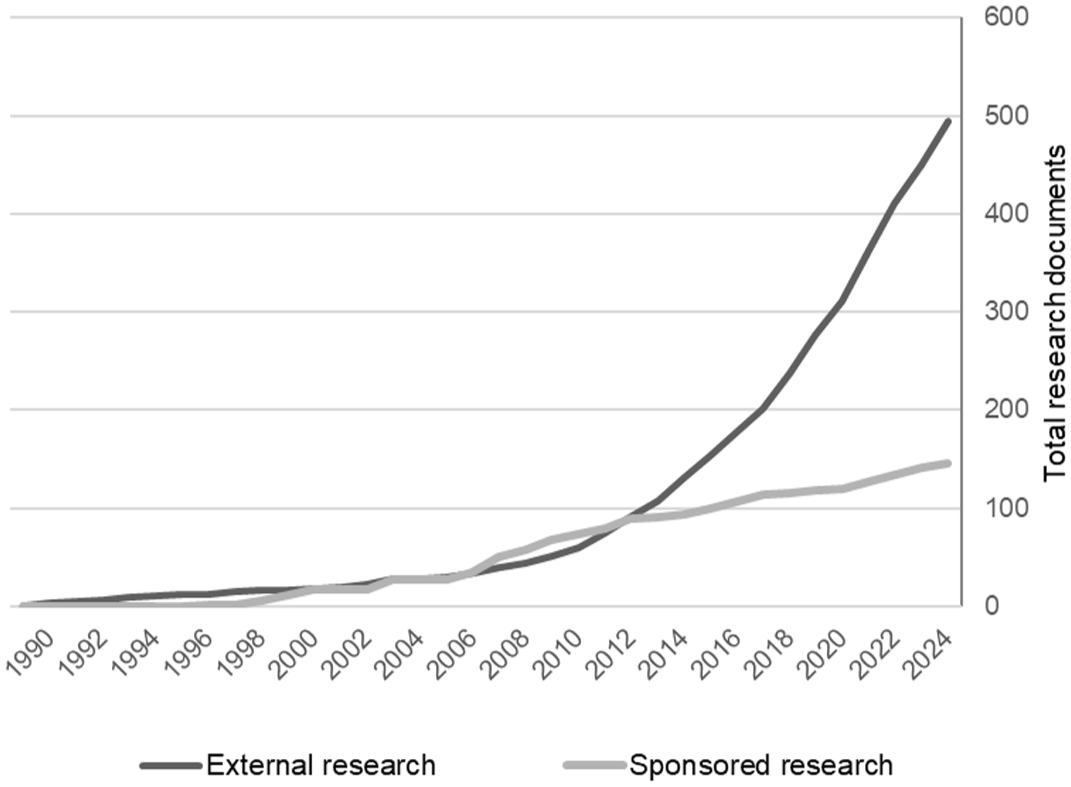

Figure 2 demonstrates trends in published IELTS research from the test’s inception in 1989 through to 2024. Research interest in IELTS was rather modest for the first 20 years of the test’s existence, only exceeding a cumulative total of 101 papers in 2008. This reflects the relatively small-scale nature of the testing operation in the 1990s (Davies, 2008) in light of the very different context of overseas student participation in Anglophone tertiary education (see Lomer, 2017). As the figure shows, the literature body prior to 2012 comprised similar degrees of both external and sponsored research (via IRRs and SiLT), although in instances of the latter, works were released in volumes (e.g., IELTS Collected Papers; Speaking and Writing) affecting their distribution in the figure. Two notable changes in research productivity were exhibited after 2012. First, there has been a marked increase in research interest overall, mirroring the growing size of the test-taking cohort. A second noteworthy trend is the clear divergence between external and sponsored research, such that by 2024, the latter comprised just 22.78% of all documents. This suggests that, as the IELTS test has grown in candidature, so too has its wider impact (Alsagoafi, 2018; Hamid & Hoang, 2018; Sinclair et al., 2019), engaging a growing body of researchers outside of the testing system itself.

Figure 2. Cumulative trends in IELTS research document prevalence.

In addition to trends in the breadth of research as outlined in Figure 2, it is important to note tangible differences across one measure of research depth, a study’s length in words. Our analysis found that sponsored research averaged 19,376 words, significantly exceeding the length of documents classified as external research (7868 words). Free(er) from the restrictions imposed by academic journals, IRR and SiLT authors were able to undertake and report on significantly more comprehensive investigations (see section “Research approaches”).

Research topics

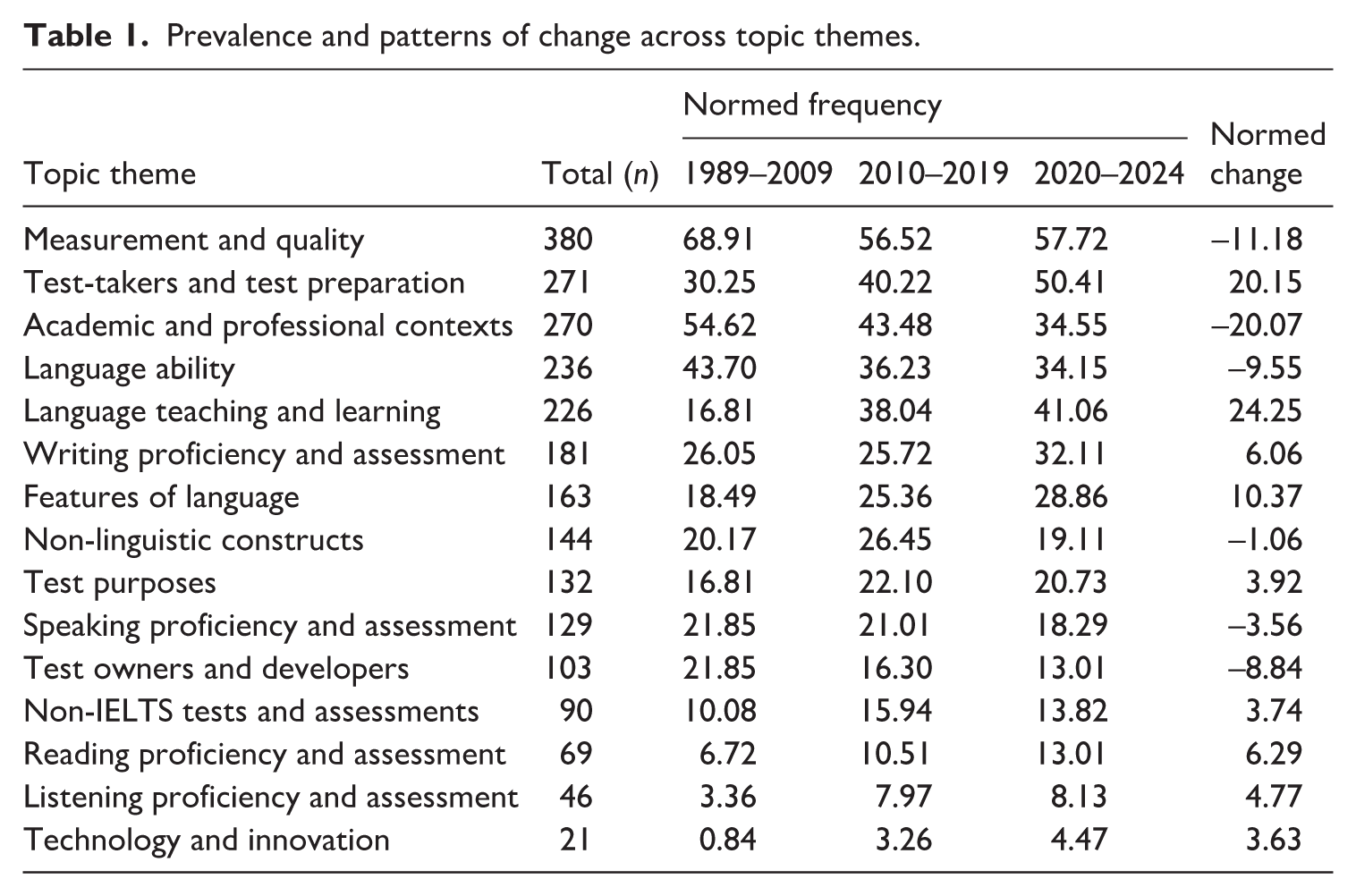

Table 1 shows the 15 topic themes in order of prevalence across document titles and abstracts. It can be seen that by far the most common theme is Measurement and quality (n = 380). This is unsurprising given that it incorporates topics related to the interpretations and intended use(s) of test scores that are central issues in language testing more generally (Fulcher & Davidson, 2007), including validity (n = 126), test scores (n = 78), reliability (n = 45), and raters (n = 41). In addition to these topics common to language assessment more broadly were terms more idiosyncratic to IELTS, including band score(s) (n = 71), IELTS band(s) (n = 28), and IELTS examiner(s) (n = 26), which all characterise the scoring process. This theme was followed by Test-takers and test preparation (n = 271) and Academic and professional contexts (n = 270), which is also unremarkable given the high-stakes nature and impactful gatekeeping role of the test in academic contexts (Pearson, 2019). The prevalence of Language ability (n = 236) reflects the testing purpose and the importance of language ability within contexts of test score use, while also showing that questions surrounding the theoretical and operational definitions of ability from a global perspective have engaged researchers.

Table 1. Prevalence and patterns of change across topic themes.

With the exception of Test-takers and test preparation, these topics showed the largest normalised decreases in prevalence over the timeframe. For Measurement and quality, this may reflect broader moves in the field away from a predominantly psychometric orientation to addressing the social and ethical consequences of language testing (Hamp-Lyons, 2000; McNamara & Roever, 2006). In the case of IELTS, researchers may have expanded their focus because there were no notable revisions to the testing system between 2008 and 2020, such revisions having been the focus of prior studies (e.g., Brown’s [2006] Candidate discourse in the revised IELTS Speaking Test and Yates et al.’s [2011] The assessment of pronunciation and the new IELTS Pronunciation scale). The 2023 revisions to the Writing band descriptors and the introduction of the One Skill Retake seem fruitful areas for further investigation of measurement and quality issues, including the test’s validity. Alternatively, investigating the test’s usefulness, particularly its reliability and absence of bias, requires navigating access to data held by the co-owners (and probably publishing via the 18-month IELTS joint-funded research programme) and may thus be off-putting in the current publishing context, which prizes rapid research output.

Less common topic themes included Technology and innovation (n = 21), assessing both receptive skills (Listening proficiency and assessment [n = 46], Reading proficiency and assessment [n = 69]), and perhaps unsurprisingly, Non-IELTS tests and assessments (n = 90). Researchers may favour investigating the productive skills over the receptive ones because the former generate reified artefacts (particularly in live tests) and involve a larger number of facets that require validation (i.e., tasks, rubrics, raters). It could also be that, as candidates report that the receptive skills are easier (Lloyd-Jones & Binch, 2012), less important (Merrifield & GBM & Associates, 2012), or more conducive to score gains (O’Loughlin & Arkoudis, 2009), they are less likely to constitute a problem or issue that typically triggers research. The low (albeit modestly rising) frequency of studies examining the mediating role of technology in IELTS is somewhat surprising given that the co-owners have instituted greater digitalisation of the testing system (although not to the degree of competitor tests such as the Duolingo English Test and the Pearson Test of English Academic), including computer-based testing, computer-mediated rating and examiner training, and the at-home IELTS Indicator Test that served to facilitate university admissions during the COVID-19 pandemic (Isbell & Kremmel, 2020). It is also the case that online networks and apps supporting candidates’ test preparation practices (both official and unofficial) have proliferated, constituting a potentially ripe area for future investigation.

The most sizeable increases in the proportion of research output over the time period were seen in Language teaching and learning (+24.25%), outstripping the related topic theme of Test-takers and test preparation (+20.15%) and reflecting the natural embedding of (notably, classroom-based) IELTS preparation within English language teaching more broadly. This is visible in the settings within which more pedagogically orientated research is situated, most commonly at private language teaching organisations (e.g., Allen, 2017; Brown, 1998; Green, 2007), where IELTS constitutes a “natural” progression route for many English learners (Ahern, 2009), and occasionally as a component of English language enhancement programmes at the tertiary level (e.g., Gan, 2009). The rising interest in Test-takers and test preparation demonstrates that stakeholder engagement with IELTS (especially by test-takers and teachers) extends well beyond test-taking itself. On the one hand, this may be considered encouraging, since it evinces responses to calls to position test candidates at the forefront of research, which is salient given that they have the most to lose and gain through testing (Hamp-Lyons, 2000). On the other, its prominence highlights the impact of the test on candidates, which has been criticised in several empirical works (e.g., Ahern, 2009; Alsagoafi, 2018; Hamid & Hoang, 2018; Sinclair et al., 2019).

Topic themes were cross-referenced with a document’s status as external or sponsored research, along with the overall methodological approach (quantitative, qualitative, or MM) to gain finer insights into publication patterns. There was a sharp divide in the occurrences of Non-IELTS tests and assessments, which were very low among sponsored studies (10.00%) in contrast to external studies (90.00%). This may stem from the co-owners’ funding priorities, which naturally lie with IELTS. However, the result is that research addressing one or more external tests lacks the comprehensive treatment typically provided in an IRR. In addition, funded research’s tendency to position IELTS in isolation rather than within the wider international language testing milieu may result in certain phenomena going under-explored in this format. These include candidates switching between standardised proficiency tests (perhaps because they are struggling to achieve the desired outcome by taking IELTS) and comparative insights across like-for-like tests (notably, other SELTs). It is also notable that external studies featured a more pronounced focus on Language teaching and learning (85.84%), with researchers outside of IELTS perhaps taking a broader view and investigating the test as a prominent feature of the broader English as a Foreign Language (EFL)/English as a Second Language (ESL) landscape.

For topic themes that intersected with research approaches, we found that qualitative methods were infrequent across all themes vis-à-vis quantitative and MM approaches. The highest proportion of qualitative studies was within Speaking proficiency and assessment (22.58%), indicative of investigations of candidate performance in the IELTS Speaking test that used observation, post-hoc analysis of test-takers’ performance, and/or their oral reports (e.g., Seedhouse & Harris, 2011; Vincheh et al., 2024). Quantitative studies were common in investigations of Listening proficiency and assessment (56.52%) and Reading proficiency and assessment (38.24%), often to investigate the relationship between listening/reading performance and a non-linguistic construct (e.g., stakeholder attitudes, beliefs, or emotions, a theme in which quantitative studies were also common at 39.57%), operationalised quantitatively (e.g., Ha & Nguyen, 2023). When including all studies that featured an abstract, Non-IELTS tests and assessments (38.20%), Test purposes (31.06%), and Language ability (28.33%) constituted especially high proportions of studies with no identifiable research approach, often because such papers constituted review or opinion articles (e.g., Chalhoub-Deville & Turner, 2000; Hall, 2009; Pearson, 2019).

Research approaches

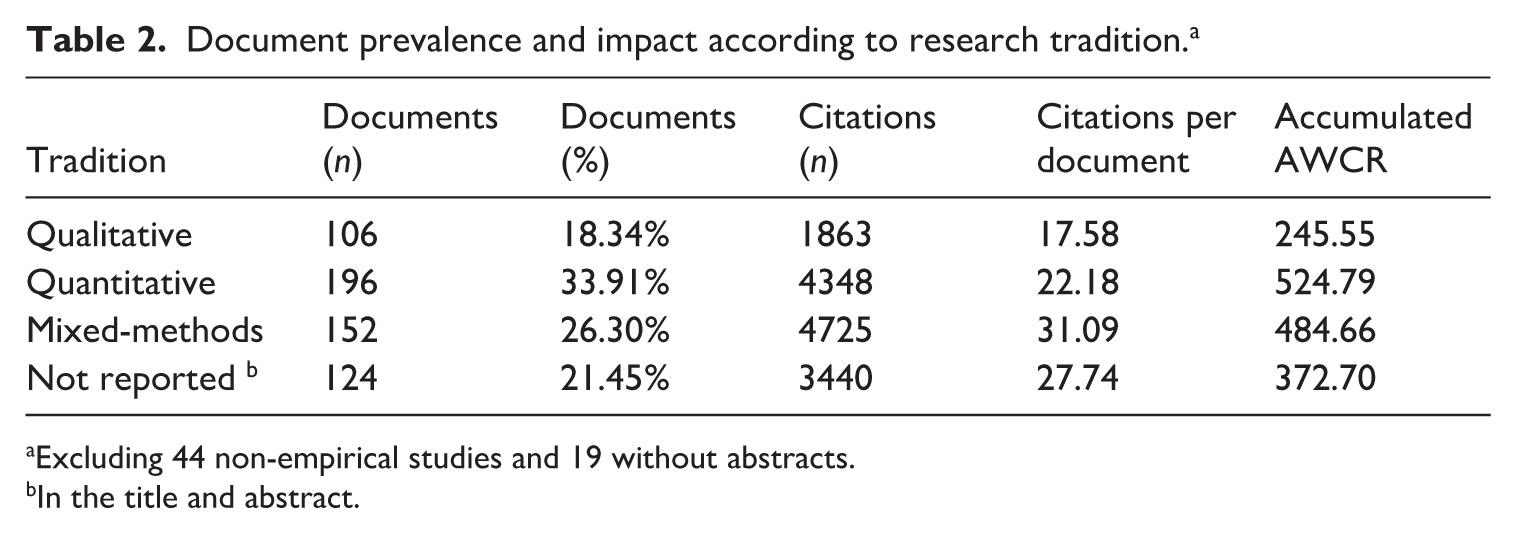

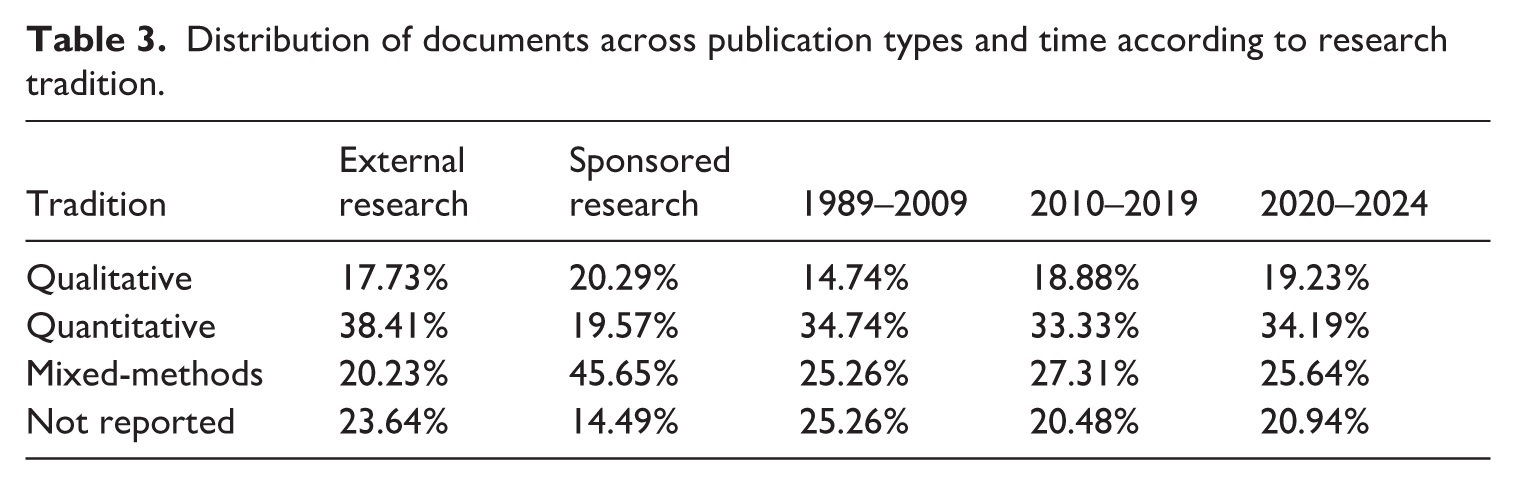

Tables 2 and 3 demonstrate the prevalence and impact of documents drawing upon the three research traditions and those where an approach could not be identified. Studies were most commonly underpinned by quantitative approaches (33.91%), reflecting the strong epistemological tradition of positivism that underpins language testing (Fulcher, 2014; McNamara & Roever, 2006). Within quantitative approaches (and covering a range of features between design and technique), experimental designs (7.18%), t-tests (7.02%), and regression analyses (4.52%) were notable. Quantitative approaches were well-represented in external research (38.41%), which may reflect the prominent influence of psychometrics and measurement (Hamp-Lyons, 2000; McNamara & Roever, 2006), perpetuated in initial training programmes, which may leave a lasting impression on practitioners (Yan & Fan, 2021). Citations per document in quantitative research (22.18) were notably lower than in MM papers (31.09). However, the accumulated AWCR value for quantitative works (524.79) exceeded that of MM research (484.66), indicating that more recent works within this tradition are well-cited.
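The contrast between citations per document and accumulated AWCR can be illustrated with a small sketch. It assumes the commonly used definition of the age-weighted citation rate, in which each document's citations are divided by its age in years and the results are summed. The age convention (the publication year counts as year one), the reference year, and the two toy record sets are illustrative assumptions, not values from the dataset.

```python
REF_YEAR = 2024  # assumed reference year for computing document age

def document_age(pub_year, ref_year=REF_YEAR):
    """Age in years, counting the publication year as year one (assumption)."""
    return max(ref_year - pub_year + 1, 1)

def accumulated_awcr(records, ref_year=REF_YEAR):
    """Sum of citations divided by age over (pub_year, citations) records."""
    return sum(cites / document_age(year, ref_year) for year, cites in records)

def citations_per_document(records):
    """Plain average of citation counts, ignoring document age."""
    return sum(cites for _, cites in records) / len(records)

# Hypothetical sets: one dominated by an older, heavily cited document,
# the other made up of recent, moderately cited documents.
older_set = [(2005, 200), (2020, 50)]
recent_set = [(2022, 30), (2023, 30)]

older_cpd = citations_per_document(older_set)
recent_cpd = citations_per_document(recent_set)
older_awcr = accumulated_awcr(older_set)
recent_awcr = accumulated_awcr(recent_set)
```

Here the recent set has far fewer citations per document than the older set, yet a higher accumulated AWCR, which is the kind of pattern that would be consistent with recent work in a tradition being well-cited.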

Table 2. Document prevalence and impact according to research tradition.

Note a. Excluding 44 non-empirical studies and 19 without abstracts.

Note b. In the title and abstract.

Table 3. Distribution of documents across publication types and time according to research tradition.

MM research was also common (26.30%), especially in sponsored studies (45.65%), probably because such studies tend to be more complex or comprehensive in scope than external research (which included many shorter conference papers). A notable MM approach investigated IELTS examiners’ practices and perspectives, for example, in the context of the 2008 revised Pronunciation scale (e.g., Isaacs et al., 2015). Another was students’ performance and perspectives, for instance, in IELTS Writing tasks (e.g., Phakiti, 2024). It was also the case that additional methods were integrated for their particular affordances, such as observations in classroom-based studies (e.g., of IELTS preparation, degree programmes) and for the rich data that they contribute (e.g., Lloyd-Jones & Binch, 2012). Authors also drew on MM approaches to enhance study quality, for example, determining whether large-scale survey data corroborates qualitative interview data (e.g., Dao et al., 2024), or to gain a comprehensive picture by triangulating evidence from different methods or data sources (e.g., Ma & Chong, 2022). MM studies were also notably more impactful when weighted against article frequency (31.09 citations per document) for reasons that require greater exploration (e.g., whether the triangulation of findings and completeness typically afforded by MM research translates to greater perceived study quality or utility).

The least prevalent tradition was qualitative research (18.34%), although it also showed the largest increase in prevalence (+4.49%), and interviews were found to be the second-most popular research method (25.09%). Other qualitative methods were far less frequent, including observation (5.30%) and focus group discussions (2.96%). Qualitative reports tended to focus on querying the perspectives of stakeholders, notably those of test-takers (e.g., Clark & Yu, 2020; Yang & Badger, 2015), but also included content analyses of test materials (e.g., Noori & Mirhosseini, 2021) and candidate responses (e.g., Estaji & Hashemi, 2022). It is also apparent that qualitative IELTS research is not as well cited (17.58 citations per document, AWCR = 245.55, with 34 studies having an AWCR value less than 1.00). However, it is our interpretation that the lower take-up of qualitative approaches independently of quantitative ones is not due to a perceived lack of value in the tradition itself (given the prevalence of MM approaches). Rather, because IELTS is a high-stakes test concerned with reifying language proficiency, quantitative data in the form of test results (e.g., Allen, 2017), text-analytic descriptions of student speaking or writing (e.g., Riazi & Knox, 2013), and/or evidence of learners’/test-takers’ behaviours as measured through eye-tracking or keystroke logging (e.g., Bax, 2013) may be seen as integral or complementary to research. Such data may be available on a large scale from the co-owners, whereas qualitative data may need to be generated anew, requiring time and resources. In addition, systematic training in qualitative methods is less established in language testing programmes, and as such, practitioners may be more concerned with assessment theories and the psychometric qualities of tests (Yan & Fan, 2021), which are firmly grounded in the quantitative tradition.

The notable prevalence of studies with no explicitly stated methodological approach (21.45%) is due to authors omitting methodological information or characterising their studies in ways not captured by our keywords. We noticed a tendency for IELTS predictive validity research to fall within the latter category, with authors using terminology with additional meanings (e.g., relationship, outcomes) or approaches couched in less precise terms (“Scores from IELTS and the university’s in-house English Proficiency Test are analysed to determine the predictive ability . . .”). As such, it should not be interpreted that providing fewer methodological details correlates with higher impact or is considered desirable by readers of research. Indeed, we feel that there are opportunities for authors to enhance study visibility by employing conventional methodological keywords (e.g., qualitative, quasi-experimental study, factor analysis) in author-provided keywords and/or abstracts, particularly as a study’s overall approach or methods may constitute the reason for its retrieval.

Publications, authors, and countries

Consistent with the broader disciplinary area of language assessment (Aryadoust et al., 2020), a notable finding that became apparent during the screening process was the diffuse array of venues that publish IELTS research. This suggests that IELTS research, as in language assessment more widely, addresses a diffuse range of topics (Yang & Wang, 2025). While a large portion of research was concentrated within the IRR format (20.09%), the 495 documents that constituted external research were situated across 270 discrete publications, averaging a mere 1.83 documents per venue. Among these were both international (e.g., TESOL Quarterly) and domestic journals (e.g., Kasetsart Journal of Social Sciences). Many publications, particularly among the latter category, appear less visible, credible, and influential by virtue of not being indexed on Clarivate’s Core Collection. Indeed, only 46 were found to be indexed on the SSCI (Social Sciences Citation Index), 51 on the ESCI (Emerging Sources Citation Index), and 28 on the CPCI (Conference Proceedings Citation Index). This suggests that IELTS attracts early-career researchers, teacher researchers, or non-linguists, the latter group perhaps impacted by a need to engage with the test themselves. Diffusion across publications of varying robustness is also reflected in the notably uneven distribution of citations. The median number of GS citations was 10.00, with 498 being the maximum. At the same time, 96 studies were found to have zero citations, perhaps because the study was new (24 of these were published in 2024), or for reasons of lower visibility, relevance, contribution, and/or perceived quality (including the publication).
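The dispersion figure reported above is a simple ratio of documents to venues, which can be checked directly. The helper below additionally sketches how the same ratio could be derived from a list of venue names; the function name and the toy venue list are hypothetical.

```python
from collections import Counter

def mean_documents_per_venue(venue_per_document):
    """Average number of documents per distinct venue."""
    venue_counts = Counter(venue_per_document)
    return len(venue_per_document) / len(venue_counts)

# Figures reported in the text: 495 external documents across 270 venues.
reported_mean = round(495 / 270, 2)

# Hypothetical toy list: four documents spread over three venues.
toy_venues = ["Journal A", "Journal A", "Journal B", "Journal C"]
toy_mean = mean_documents_per_venue(toy_venues)
```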

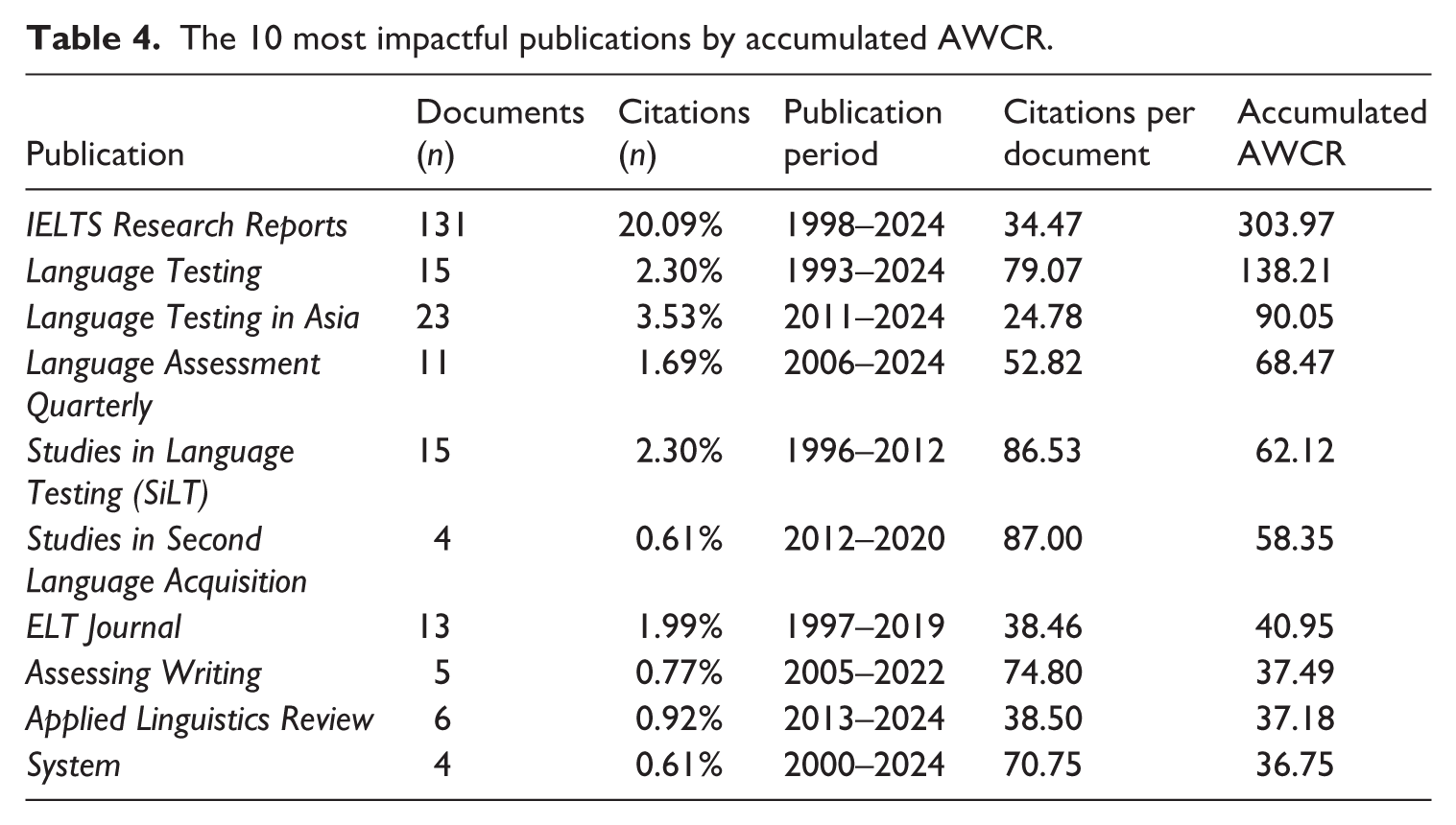

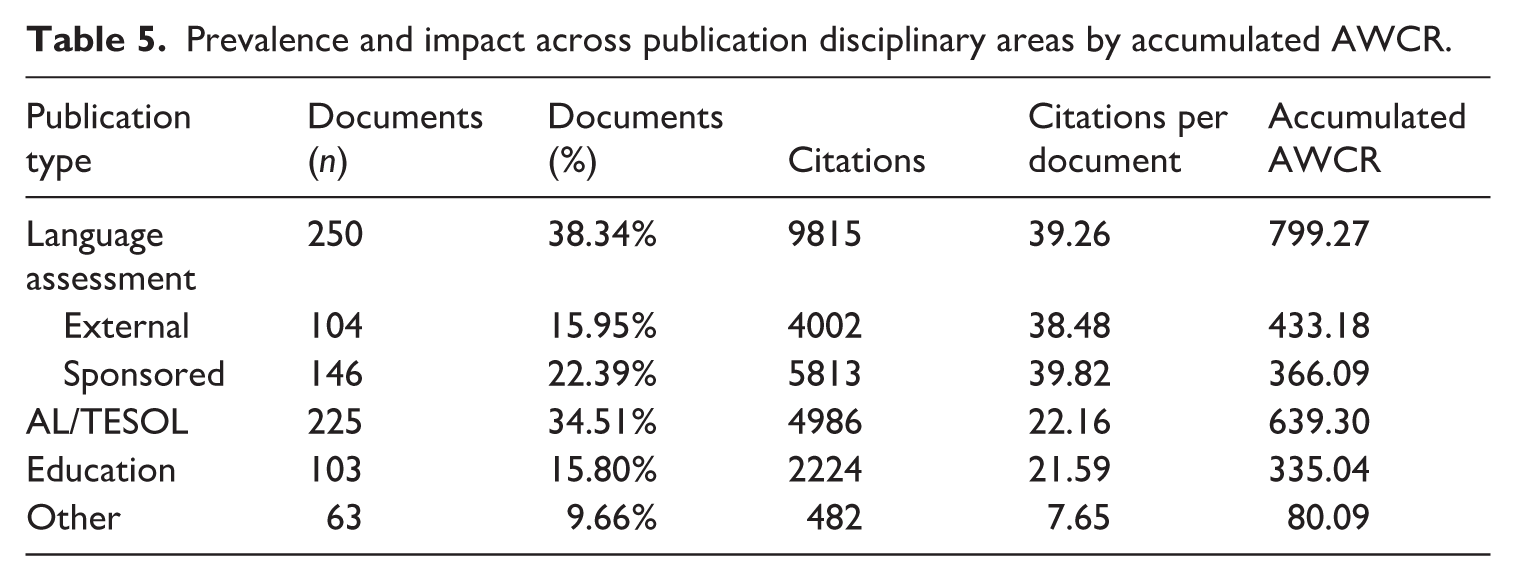

Tables 4 and 5 show trends in prevalence and citations across the 10 most impactful publications and disciplinary areas (by accumulated AWCR). Academic journals specialising in language assessment were, understandably, the most common individual venues for IELTS-related research, notably Language Testing in Asia (LTiA, n = 23), Language Testing (LT, n = 15), and Language Assessment Quarterly (LAQ, n = 11). One notable exception was Assessing Writing (AW), which published just five studies. When compared with the total age of the journal, it was found that three of the four language assessment journals averaged fewer than one article per year that focused on IELTS (LAQ = 0.60, AW = 0.17, and LT = 0.40, the last being the only language assessment journal in existence when IELTS was created). In fact, after Hamilton et al.’s (1993) analysis of L1 user performance in the 1989 version of the test, it was not until 2001 that the next article focused on IELTS appeared in LT. The multitude of venues for external IELTS research may pose challenges for stakeholders, particularly novice researchers, those new to the topic, and non-academics, all of whom may need to (further) develop expertise in utilising online research indices to better ensure document retrieval.

Table 4. The 10 most impactful publications by accumulated AWCR.

Table 5. Prevalence and impact across publication disciplinary areas by accumulated AWCR.

Table 4 also shows that language assessment journals were among the most impactful when adjusted for the effects of time, especially LT and LTiA (accumulated AWCR = 138.21 and 90.05, respectively), and are thus desirable venues for authors to increase the exposure of IELTS research. Other noteworthy periodicals, both cross-sectionally as citations per document and longitudinally as AWCR, are impactful AL/TESOL publications, namely, Studies in Second Language Acquisition (SSLA, 87 citations per document/AWCR = 58.35), ELT Journal (38.46/40.95), and System (70.75/36.75). IELTS research studies in both SSLA and System were rare (n = 4) but well-cited, suggesting a boost provided by the journals’ high impact factors (2-year IF values of 4.2 and 4.9, respectively).

As shown in Table 5, IELTS does not only engage readers of language assessment periodicals but also of AL/TESOL journals, which constituted by far the most common type of academic periodical (n = 225). This reflects widespread interest in IELTS that stretches beyond scientific, technical, and inward concerns of what to test and how, which are of particular interest in language assessment (Green, 2014), encompassing a wider range of practitioner issues, such as washback to the teacher and learner and the social and ethical implications of the test. Alternatively, such journals may constitute a home for manuscripts that do not meet the more stringent requirements of LT, LAQ, and AW. AL/TESOL journals also offer a reasonable compromise for authors between article (22.16 citations per document) and time-weighted impact (accumulated AWCR = 639.30), especially when compared with sponsored language assessment research and education journals, where more impactful studies tend to be older (AWCR = 366.09 and 335.04, respectively).

The data also suggest that authors should avoid publications outside of language education, unless there is clear relevance to a readership in a disciplinary area (e.g., The consequences of English language testing for international health professionals and students: An Australian case study with 44 citations in the International Journal of Nursing Studies). However, it should be acknowledged that selecting an appropriate journal is not only a matter of its impact or article visibility. Authors likely opted for education journals (e.g., Higher Education) in articles relating to uses of IELTS for the purposes of student enrolment or chose general open access education publications (e.g., Cogent Education) owing to perceptions of lower entry requirements or increased article retrievability. Regardless, readers of IELTS research need to look beyond language assessment journals and employ varied indices of research to ensure they are not unwittingly missing out.

Countries of author-affiliated institutions

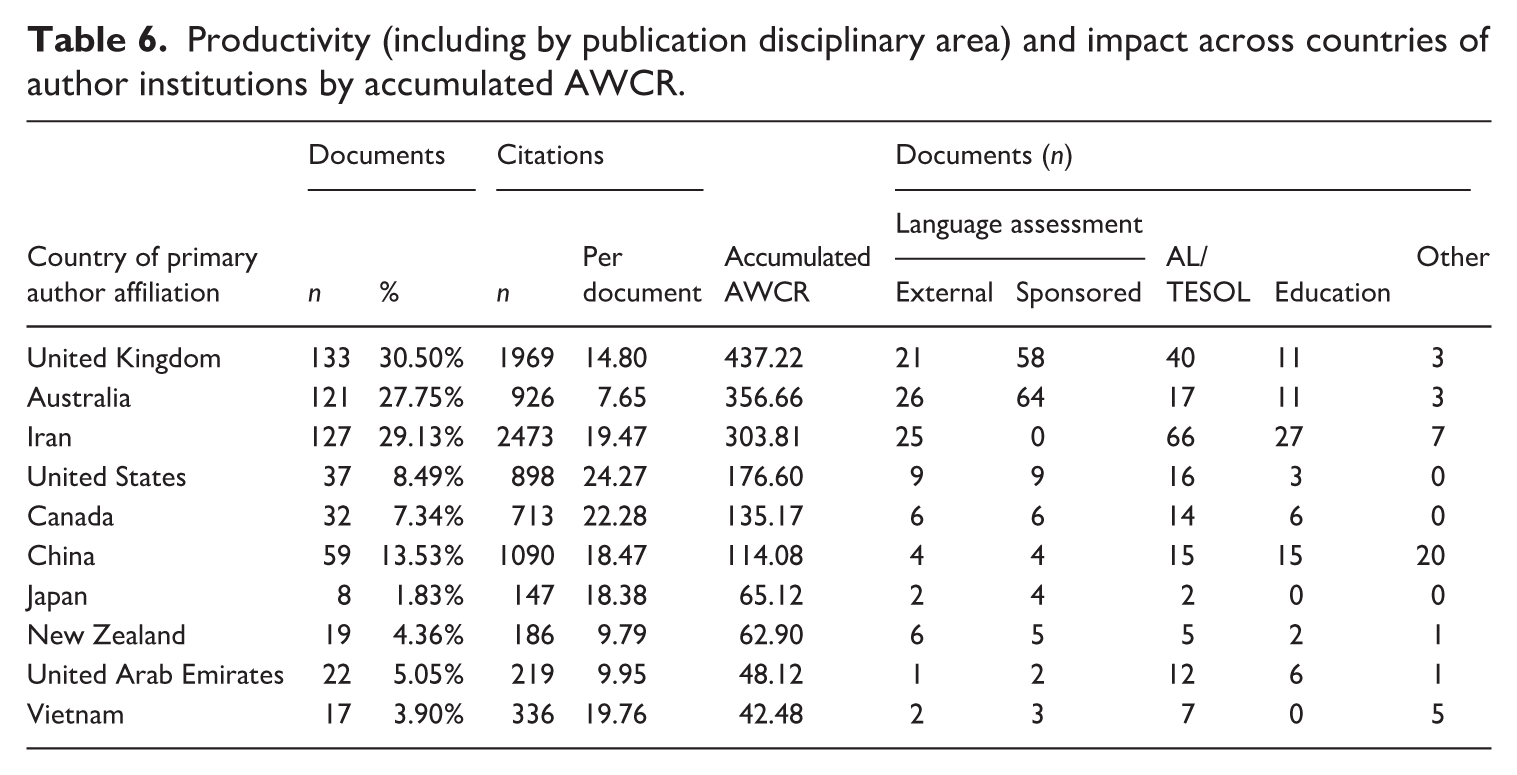

Given that the British Council, Cambridge English, and IDP are UK and Australian organisations, respectively, it is perhaps unsurprising, as shown in Table 6, that authors based at institutions in these countries contributed the first and third largest shares of research output (30.50% and 27.75% of documents, respectively). Such authors also produced the most impactful research when citation counts were age-weighted (437.22 and 356.66, respectively), indicating much relevant work in these country contexts has been done more recently. It is also noteworthy that authors in these two contexts contributed prominently to the IRR format, which may further explain the high citation counts, as such works are generally more impactful. Canada and New Zealand, where IELTS is also widely accepted as evidence of English language proficiency (Merrifield & GBM & Associates, 2012) and where many item writers are based (Read, 2022), were less well-represented in the dataset (7.34% and 4.36%), perhaps because the co-owners are not based in these countries. Authors in the United States unexpectedly contributed to 8.49% of documents, indicating that IELTS has a notable footprint there (e.g., Heitner et al., 2014) despite competition from domestic tests such as the Test of English as a Foreign Language (TOEFL), the Duolingo English Test, and others.

Table 6. Productivity (including by publication disciplinary area) and impact across countries of author institutions by accumulated AWCR.

Moving into EFL contexts, a cohort of researchers who have contributed a notable amount of work is based at Iranian institutions (29.13%). This is likely explained by the popularity of IELTS for the purposes of academic study (e.g., Erfani, 2012; Estaji & Banitalebi, 2023), and partly by the unavailability of US-owned in-person competitor tests (e.g., TOEFL) (Saif et al., 2021). As with their UK and Australian counterparts, Iran-based authors were well-cited across the dataset, both in terms of citations per document (19.47) and accumulated AWCR (303.81). Nevertheless, such authors presented a contrasting profile to their UK and Australian peers in that they did not participate in co-owner-funded research. Instead, they approached IELTS from the outside (probably owing to local restrictions placed upon foreign funding), particularly through investigations of washback and impact published in language assessment journals (notably, the International Journal of Language Testing, based in Iran) and higher impact AL/TESOL periodicals (nearly all of which appeared to be international from the journal’s name or description). As is the case for authors affiliated with Chinese institutions, promotion, hiring, and funding for Iran-based academics are strongly tied to academic publication (especially in SSCI/ESCI, English-medium, international journals). IELTS, as a globally recognised and well-documented test, provides a legitimate and internationally relevant object of study that allows Iranian researchers to achieve greater visibility and meet professional benchmarks, even when direct access to proprietary data is limited.

Reflecting the importance of the Chinese test-taking candidature by virtue of high international student numbers at institutions based in Anglophone countries (Cambridge Assessment English, n.d.), authors at Chinese institutions (including those in Hong Kong and Macao) featured prominently (13.53%). As with Iran, such authors tended to publish in impactful international AL/TESOL periodicals, along with education and “other” publications (typically humanities and social science-themed conference proceedings). The latter finding may reflect authors’ attempts to build research networks and to develop a formative profile as a stepping stone to more impactful outlets. It was apparent that China-based researchers were not always concerned with the large overseas Chinese student contingent. Such scholars also investigated samples of test-takers within domestic institutions in China, Hong Kong, and Macao (e.g., Gan, 2009), including transnational education partnerships (e.g., Ma & Chong, 2022). This may also explain the interest of United Arab Emirates (UAE)-based researchers, where IELTS has long been used to screen nationals for enrolment onto tertiary programmes at domestic institutions (Garinger & Schoepp, 2013).

Author-affiliated institutions

Over the past three decades, researchers affiliated with a wide array of organisations have contributed research into IELTS. Reflecting its primary role as a measure of English language proficiency for tertiary-level study, it was found that 94.07% of documents were authored by at least one individual affiliated with a university. A small proportion of studies featured authors from private educational companies (e.g., Cambridge English, the British Council, and research centres, 5.30%) or government bodies (0.94%). Despite the incorporation of internal IELTS research via SiLT, authors directly affiliated with the IELTS co-owners featured infrequently. This reflects a dynamic whereby research into IELTS for public consumption is primarily undertaken by academics, with potentially insightful insider voices restricted to confidential internal publications. This contrasts with comparable testing organisations (e.g., LanguageCert), who provide much empirical data from internal studies to an external audience.

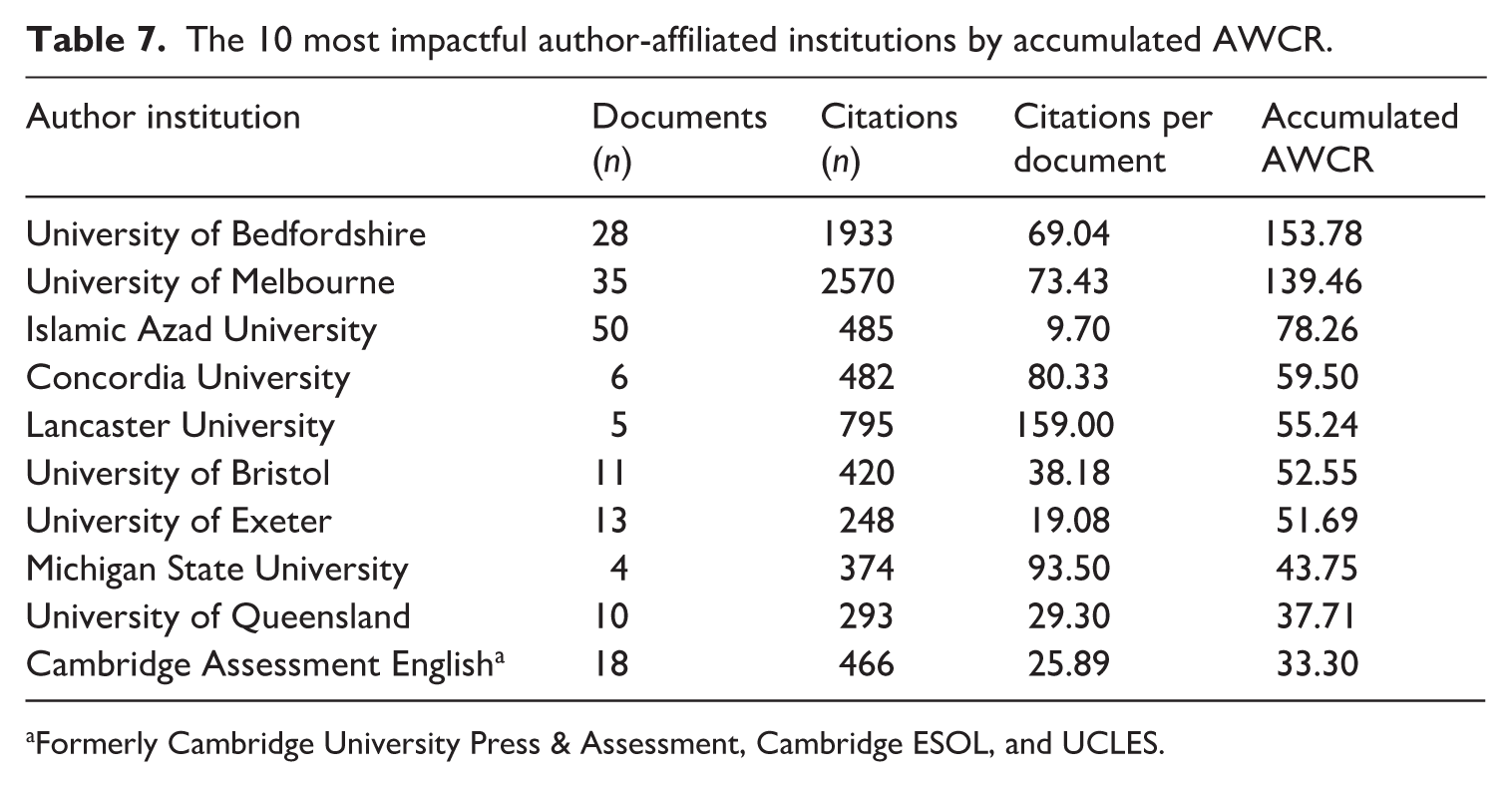

Table 7 highlights the 10 most impactful organisations that authors of IELTS research were affiliated with (by accumulated AWCR). Three institutions, the Islamic Azad University (IAU, n = 50), the University of Melbourne (n = 35), and the University of Bedfordshire (n = 28), accounted for a notable body of IELTS research outputs. The latter two host specialist language testing and assessment centres, while IAU contains a large English language department. That the University of Melbourne publishes SiLA (formerly Melbourne Papers in Language Testing) contributed little to the institution’s ranking, given that only five studies were retrieved, most of which were older papers. Since IELTS originates in Australia and the United Kingdom, it is not surprising that the works of authors at other Australian (i.e., Macquarie, Monash, Queensland, Sydney, Adelaide) and UK institutions (Lancaster, Bristol, Exeter, Roehampton) comprise 50% of the most prevalent in IELTS research. Also of note is the small number of well-cited contributions from academics based at a select few institutions, including Lancaster University, UK (itself home to a renowned language assessment centre), Concordia University, Canada, and Michigan State University.

Table 7. The 10 most impactful author-affiliated institutions by accumulated AWCR.

Formerly Cambridge University Press & Assessment, Cambridge ESOL, and UCLES.

Co-authorship

We found that IELTS research was a highly collaborative endeavour (63.65% of all documents), which may be explained by the interdisciplinary nature of such research (IELTS, 2025). A team approach allows researchers to draw upon a range of expertise, such as in psychometric testing, educational policy, language acquisition and learning, language teaching, and technology. Co-authorship was particularly prevalent in the case of sponsored research (74.66%). This reflects the reality where small teams of researchers band together to apply for an IELTS research grant to conduct and report on an in-depth study of the test. A notable majority of sponsored research documents (60.96% vs. 50.91% for external research) constituted domestically co-authored reports, for example, Chappell et al. (2015) and Rao et al. (2003). These often featured authors working within the same institution, likely due to institutional imperatives to widen the range of research activities that contribute to global rankings. Rates of international collaboration were comparatively low, standing at 13.70% for sponsored research and 9.49% for external studies, represented in Sinclair et al. (2019) and Saif et al. (2021). There seems to be untapped potential for further collaboration in research, particularly as many of the issues and concerns faced by test users and takers (e.g., minimum language proficiency requirements, the effectiveness of IELTS preparation, test-taker anxiety) cut across national borders. Moreover, further cross-contextual international collaborations may serve to reveal how the test and context interact to influence washback, which may help shed further light on the complexity and potentially contradictory findings of research (e.g., Saif et al., 2021).
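The reported collaboration rates fit together arithmetically, as the short check below suggests. It uses only figures stated in the paper (the domestic and international shares, the 495 external documents, and the 146 sponsored documents noted in the abstract); treating the domestic and international shares as percentages of all documents within each category is an assumption about how they were computed.

```python
SPONSORED_DOCS = 146  # sponsored documents, as stated in the abstract
EXTERNAL_DOCS = 495   # external documents

# Reported shares of documents (%) by collaboration type.
sponsored_shares = {"domestic": 60.96, "international": 13.70}
external_shares = {"domestic": 50.91, "international": 9.49}

def coauthored_share(shares):
    """Total co-authored share: domestic plus international collaboration."""
    return sum(shares.values())

sponsored_rate = coauthored_share(sponsored_shares)
external_rate = coauthored_share(external_shares)

# Document-weighted average of the two category rates.
overall_rate = (
    SPONSORED_DOCS * sponsored_rate + EXTERNAL_DOCS * external_rate
) / (SPONSORED_DOCS + EXTERNAL_DOCS)
```

Under that assumption, the two sponsored shares sum to the 74.66% co-authorship rate reported for sponsored research, and the weighted average across both categories rounds to the overall 63.65%.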

Conclusion

This bibliometric analysis examined 641 studies of IELTS, the majority of which constituted external research situated in 270 discrete publications located at the international, national, and regional levels, many of which were not listed on an index, such as the SSCI, ESCI, and CPCI. This dispersion may pose location and retrieval difficulties for readers of IELTS research, along with raising the level of challenge in identifying high-quality research. The study revealed an explosion of interest in the test, which took off in a wide array of academic publications external to the IELTS co-owners after 2012, quickly surpassing sponsored research in the form of IRRs and SiLT, the traditional venues of research for public consumption since the 1990s.

The topic analysis revealed a wide range of concerns that both included and went beyond language testing principles (validity, reliability, etc.) as properties of a test. Indeed, the prevalence of topic themes such as Test-takers and test preparation, Academic and professional contexts, and Language teaching and learning indicates a conception of IELTS testing with both evidential and consequential bases (Messick, 1989). The most prevalent and impactful research approach, and that which addressed a wide variety of topics, was MM research, thanks in part to sponsored research, which, owing to its complexity and comprehensiveness, was often well-cited. In contrast, quantitative research featured impact measures (citations per document and AWCR) that fell short of MM studies (especially in older works) but were far in excess of qualitative approaches. The rarity and poor impact of qualitative approaches likely reflect the perceived necessity of incorporating quantitative data into investigations of IELTS, rather than weaknesses in qualitative methods per se (since interviews were among the most common research methods featured). It could also be because many language assessment researchers may have received training using quantitative methods, owing to the strong positivist tradition in the field (Fulcher, 2014; McNamara & Roever, 2006).

We found that IELTS is a research concern that goes beyond features of the testing system and “inward” matters of measurement and quality. This is borne out by the fact that language assessment journals were neither the most frequent nor always the most well-cited venues for IELTS research (with the exception of the citations per document measure). The findings showed that the profile of those who undertake IELTS research has changed (while remaining strongly collaborative), with a diversification away from authors in Anglophone contexts (particularly where the co-owners and others involved in test development are based) to EFL/ESL settings where test-takers and users are situated, further embodying a wider view of the test’s validity.

Implications for language testing

The sharp divide in research depth and access to testing data between sponsored and external research highlights a significant structural imbalance in how language testing knowledge is produced and disseminated, with implications for other large-scale, high-stakes testing organisations (at both national and international levels). This disparity produces a systemic asymmetry of information, where insiders hold access to large proprietary datasets and internal validation metrics that independent outsiders cannot replicate with small, local samples. Thus, the field risks a bifurcated knowledge base, where the technical truth of a test remains a closed-circuit conversation among insiders, with external researchers relegated to investigating the consequences of the test. To address this, the IELTS co-owners (and other organisations that publish sponsored investigations, e.g., ETS, LanguageCert) could move beyond existing selective funding models towards open-data initiatives. Releasing comprehensive anonymised datasets to the wider academic community would allow researchers to bridge this divide, ensuring that validation is not just a privileged internal exercise but a robust, peer-verified public discourse.

While this study’s findings are anchored in the specific trajectory of IELTS, they offer insights that, we believe, generalise to other large-scale, high-stakes language tests. Notably, the rising prevalence of research into the social dimensions of IELTS is indicative of a broader epistemological shift in the field, where validity is seen not merely from a psychometric orientation but as a moral and ethical obligation to ensure fair and just outcomes for all stakeholders (Kunnan, 2018). Alternatively, this can be seen as evidence that, as a test grows in candidature and stakes, its research base will likely expand beyond psychometrics to address the complex, lived realities of its test-takers and users. Furthermore, the trade-off between stability and innovation observed in the testing system (where the lack of major test revisions over a 12-year period catalysed a richer exploration of social consequences) is a phenomenon likely applicable to other established testing systems. By contrast, the slow pace of research into the technological developments of IELTS does not generalise to competitor tests like the Pearson Test of English Academic or the Duolingo English Test because these systems rely foundationally on AI, automated scoring, and remote proctoring. Hence, for these tests, the mediating role of technology is treated as an inseparable and primary focus of validation rather than as the digitalisation of a legacy paper-based system.

Limitations

We are cognisant of a number of methodological limitations to bibliometric analyses and our own approach. First, we emphasise that citation data are a blunt instrument and should not be fully equated to the quality of the works concerned. Given that we drew on data from a range of indices and incorporated 159 documents that were not listed on the five queried indices, we used GS citation data, the only impact measure common to all documents. GS citation counts incorporate higher frequencies of citations from non-journal sources as well as citations not listed on more robust indices (e.g., WoS, Scopus), which should be considered when interpreting the results. Relatedly, as we did not undertake a methodological appraisal of the included studies, we acknowledge that their quality is uncertain, especially for those papers from the 145 publications not listed on the SSCI, ESCI, or CPCI.

The absence of author-provided keywords in sponsored research meant that we had to rely on titles and abstracts to identify how authors characterise their research, which is a partial measure (Pearson, 2024). Likewise, as in other bibliometric studies (e.g., Hyland & Jiang, 2021; Lei & Liu, 2019), the identification of research approaches, topics, and in our case, topic themes was limited by being derived from cross-referencing keywords with document titles and abstracts. Since these fields are typically short (with abstracts limited to 200–250 words), they cannot comprehensively characterise the respective study, potentially leading to an underrepresentation of approaches and themes. This issue is less pronounced in IRRs, whose abstracts were often 50% longer (albeit this variation in relation to external research is acknowledged as a further limitation). Such a constraint could be addressed through manual analysis of full article texts, although this is not typical in bibliometric analyses owing to the size of the retrieved literature bodies.

While we acknowledge the epistemic limitations associated with bibliometric analyses, we also argue that the present study’s findings constitute an important foundation for further meta-research into IELTS and/or language assessment research more generally. For instance, the identified topic themes could be taken up by future researchers seeking to undertake systematic or state-of-the-art reviews into, among other areas, IELTS’s validity, test-takers’ preparation practices, and test uses in academic contexts. Furthermore, future researchers could incorporate the identified topic or methodological keywords (perhaps combined with other approaches, especially manual analysis) in order to generate a robust list of terms to deductively or abductively characterise the literature body through a comprehensive methodological review (perhaps retrieving full article texts). We are also of the view that the uncovered patterns of author-affiliations and co-authorship serve as a call to continue to diversify and innovate in research, perhaps with a greater focus on comparative studies that shed light on the nature of particular topic themes across contexts (e.g., Saif et al., 2021). A further strand of research could qualitatively explore some of the salient highlighted trends, addressing important “why” and “how” questions. One starting point is exploring and explaining the increasing interest in IELTS after 2012: what motivates scholars to focus on the test, and what dimensions of the testing system are particularly engaging to both authors and readers (to see if these align with the patterns identified in the topic themes)?

We believe that this study, the first to synthesise over 30 years of research into IELTS, will also be of practical value to students and academics of varying levels of experience. The findings emphasise the need for those seeking to identify relevant IELTS research to consult a wide range of indices and publications. By implication, the variety of publications that incorporate IELTS research and the frequent absence of SSCI, ESCI, or CPCI indexing of these indicate that readers need to adopt sufficiently critical perspectives (i.e., since quality papers may be published in lower-ranked publications and vice versa). Finally, we urge readers to stay abreast of developments in the area, given that the analysis showed that much new IELTS research has been published in recent years.

Supplemental Material

sj-pdf-1-ltj-10.1177_02655322261420501 – Supplemental material for A bibliometric analysis of research into the International English Language Testing System (IELTS)

Supplemental material, sj-pdf-1-ltj-10.1177_02655322261420501 for A bibliometric analysis of research into the International English Language Testing System (IELTS) by William S. Pearson and Minlin Minny Zou in Language Testing

Footnotes

Declaration of conflicting interests

The authors declared the following potential conflicts of interest with respect to the research, authorship, and/or publication of this article: The first author has received funding through his university to run the Hornby Scholarship programme, which is administered by the British Council. The British Council is a co-owner of IELTS. In addition, the first author served as a language assessment specialist in the area of writing for a company contracted by the British Council from 2018 up to and including the period in which this study was undertaken.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

References
