Abstract

While several test concordance tables have been published, the research underpinning such tables has rarely been examined in detail. This study aimed to survey the publicly available studies or documentation underpinning the test concordance tables of the providers of four major international language tests, all accepted by the Australian Department of Home Affairs for Australian visa purposes. To evaluate the concordance studies, we first identified the good practice principles in concordance research through a review of both the relevant literature and leading professional standards in the field of educational measurement and language assessment. Next, we reviewed the concordance studies against the identified good practice principles. Our findings revealed that the information supplied by test providers varied, with some making the full research papers available, whereas others provided little information about their underpinning research. None of the concordance studies fulfilled all the good practice principles. Based on the findings of this study, we offer recommendations for future concordance research in the field of language testing as well as suggestions for practice.

Background

In language assessment, “linking” broadly refers to the practice of relating the scores or levels on two tests or aligning them to a language proficiency framework such as the Common European Framework of Reference (CEFR). When it comes to the former definition, linking can be categorized into three types: equating, concordance, and prediction. Equating, which has been the most discussed of the three types, involves relating scores on parallel or alternate forms of a test. Concordance, which is the focus of this paper, applies when linking scores from two different tests that measure related but different constructs (Kolen, 2004). Prediction, the third type of linking, involves relating the scores on two tests regardless of whether they measure related or different constructs, typically using regression techniques.

Language test providers are generally expected to link the scores or levels on their tests to those on other language tests because test users such as admissions officers or employers commonly accept results from multiple language tests as proof of an applicant’s language proficiency. Additionally, test takers may choose or be required to take different language tests due to various personal and situational reasons (Taylor, 2004) and may need to understand how the scores across tests are related. In consequence, there is a practical need to compare scores on different language tests.

While several concordance tables have been published to facilitate test score comparability (e.g., Clesham & Hughes, 2020; Educational Testing Service, 2010), the research underpinning these tables has rarely been examined by independent, qualified language testing experts and/or psychometricians. This, in consequence, has raised concerns over the rigor and usefulness of these concordance tables and, by extension, whether test users and policy-makers can rely on these concordance results for informed decision-making or policy formulation in areas such as admission into higher education, professional registration, or immigration. As noted by Cardwell et al. (2024), creating a concordance table between two language tests is a complicated process as different data sets and methodological choices might lead to substantially different concordance results. Therefore, it is essential to evaluate various aspects of the concordance process to ensure that the concordance results are justifiable, stable, and generalizable. Furthermore, it is also important to explore the information published by test providers for score users (e.g., test takers, admissions officers, and policy-makers), who should be provided not only with the concordance tables but also with (a) information about the methodology underpinning the concordance studies, (b) instructions on how to interpret the concordance results, and (c) any caution they need to exercise when using these results.

As a case study for exploring the quality of the concordance studies underpinning concordance tables as well as the information published for test users on test provider websites, we selected four tests (described further in the “Methodology” section) accepted for Australian visa purposes by the Australian Department of Home Affairs (n.d.). This scenario was selected to exemplify a policy context where several language tests are used for the same purpose. Our study followed three steps. First, we identified a set of good practice principles in conducting a concordance study through a literature review. Next, we applied these principles to evaluate the concordance practices of the test providers based on the studies and/or documentation on their websites. Finally, we offered recommendations for future concordance research in the field of language testing as well as suggestions for practice.

Good practice in conducting concordance studies

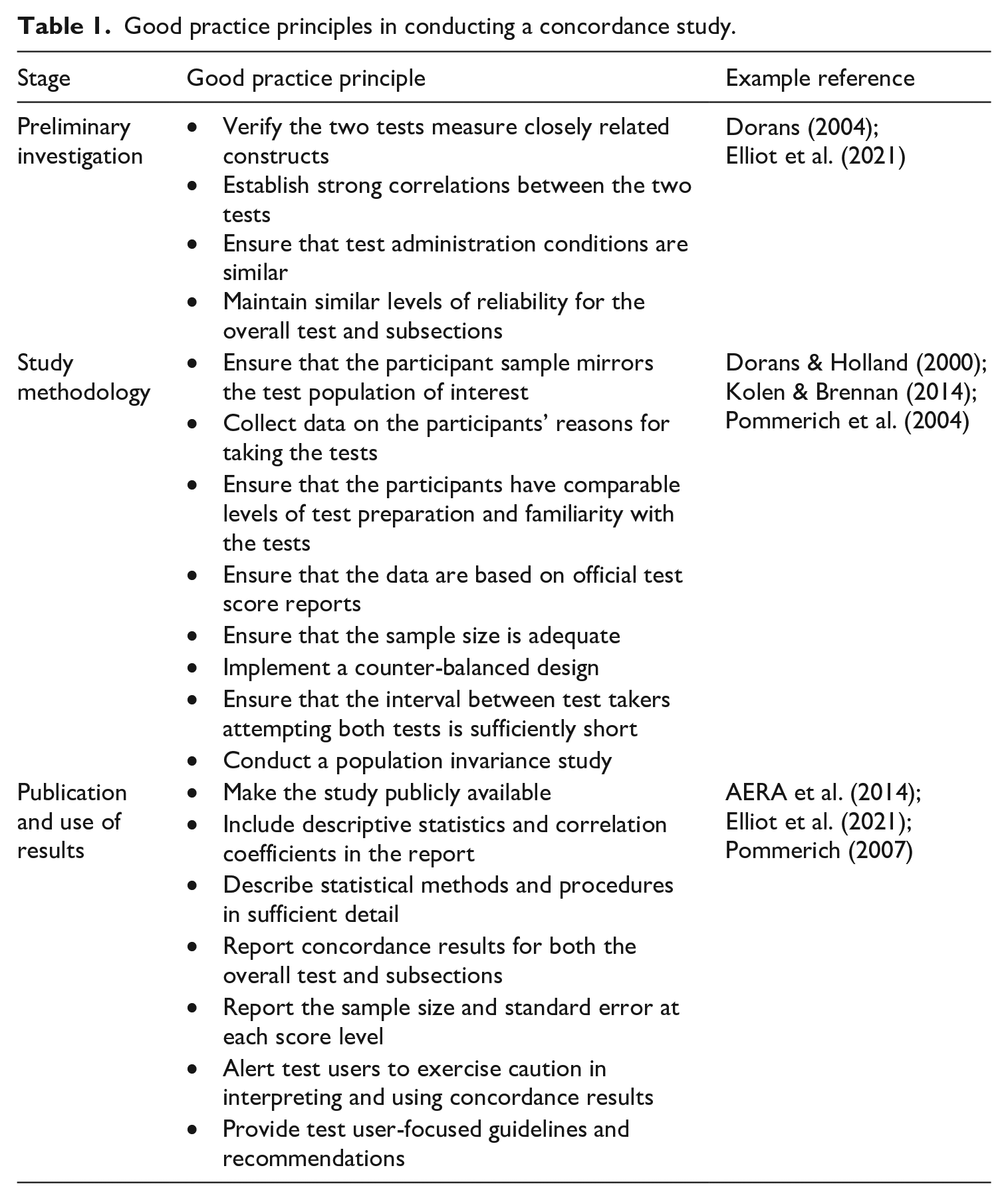

To identify good practice in concordance studies, we reviewed relevant research literature in the fields of educational measurement and language assessment (e.g., Dorans, 2004; Elliot et al., 2021; Pommerich, 2007) and consulted relevant guidelines in leading professional standards in the two fields (e.g., American Educational Research Association [AERA] et al., 2014; International Language Testing Association [ILTA], 2020). The good practice principles that we identified through the literature review span three stages of a concordance study: (a) preliminary investigation, (b) study methodology, and (c) publication and use of concordance results. Table 1 lists the good practice principles at each stage of a concordance study.

Table 1. Good practice principles in conducting a concordance study.

As Table 1 indicates, before initiating a concordance study, a systematic comparison of the two tests in terms of test content, method, and constructs is necessary to ascertain whether concordance is the appropriate linking method. Concordance is deemed appropriate only when the tests measure related constructs, their content is judged similar, and a strong correlation exists between their test scores. Additionally, an evaluation of the administration conditions and reliability of the tests is required. Both tests should be properly administered and exhibit similar reliability indices at both the overall test and subsection level (e.g., listening and reading) to be suitable for a concordance study.
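The preliminary correlation check described above can be illustrated with a minimal sketch. The score lists are hypothetical, and the source does not state a numeric cut-off for a "strong correlation". The default `min_r=0.866` is an assumption: it corresponds to a 50% reduction in uncertainty, a rule of thumb sometimes used in the linking literature.

```python
from math import sqrt
from statistics import mean

def pearson_r(x, y):
    """Pearson correlation between two equal-length lists of paired scores."""
    mx, my = mean(x), mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / sqrt(sxx * syy)

def reduction_in_uncertainty(r):
    """Proportional reduction in prediction error gained from the other score."""
    return 1 - sqrt(max(0.0, 1 - r ** 2))

def concordance_precheck(x, y, min_r=0.866):
    """Summarize whether the correlation meets the (assumed) threshold."""
    r = pearson_r(x, y)
    return {
        "r": r,
        "reduction": reduction_in_uncertainty(r),
        "proceed": r >= min_r,  # threshold is an assumption, not from the source
    }

# Hypothetical paired overall scores for the same test takers on two tests.
test_x = [5.5, 6.0, 6.5, 7.0, 7.5, 8.0]
test_y = [46, 50, 58, 65, 73, 79]
print(concordance_precheck(test_x, test_y))
```

The same check would be repeated for each subsection pair (e.g., listening with listening), since the principles above apply at both the overall and subsection level.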

During a concordance study, it is crucial to ensure that the participant sample mirrors the test population of interest, thus making it possible to generalize the concordance results. Using a truncated sample, for example, by focusing only on test takers within a particular score range, may undermine the claims made using concordance results. It is also necessary for researchers to collect data on the participants’ reasons for taking the tests, as well as their level of test preparation and familiarity, as these factors can significantly influence their test results. Ensuring that the data are based on official test score reports rather than self-reported data is also important. The size of the sample needs to be sufficient to create robust and stable score equivalences at different score levels. If achieving a sufficient participant number is challenging, a cumulative approach can be considered where an initial equivalence is determined and subject to continuous monitoring through ongoing data collection. A counter-balanced design is required to mitigate the potential order effect on test scores, and the interval between test takers attempting both tests needs to be sufficiently short (e.g., less than 3 months). Once the concordance table has been established, a population invariance study needs to be implemented to ascertain that the concordance results are invariant across different subpopulations defined by key attributes such as gender, proficiency level, and ethnic background relevant to the testing contexts (Pommerich, 2007).
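As a rough illustration of what a population invariance check involves, the sketch below compares a link estimated within each subgroup against the link estimated on the total group. For brevity it uses linear (mean and standard deviation) linking rather than the equipercentile method reported in the reviewed studies. The records, the `group` field, and the score points are all hypothetical.

```python
from statistics import mean, pstdev

def linear_link(x_scores, y_scores):
    """Return a function mapping X scores to the Y scale by matching means and SDs."""
    mx, my = mean(x_scores), mean(y_scores)
    sx, sy = pstdev(x_scores), pstdev(y_scores)
    return lambda x: my + (sy / sx) * (x - mx)

def invariance_check(records, group_key, score_points):
    """Largest absolute gap, per subgroup, between subgroup and total-group links."""
    total = linear_link([r["x"] for r in records], [r["y"] for r in records])
    gaps = {}
    for g in sorted({r[group_key] for r in records}):
        sub = [r for r in records if r[group_key] == g]
        link = linear_link([r["x"] for r in sub], [r["y"] for r in sub])
        gaps[g] = max(abs(link(x) - total(x)) for x in score_points)
    return gaps

# Hypothetical paired scores with a subgroup label for each test taker.
records = [
    {"x": 5.5, "y": 45, "group": "A"}, {"x": 6.5, "y": 57, "group": "A"},
    {"x": 7.5, "y": 70, "group": "A"}, {"x": 6.0, "y": 54, "group": "B"},
    {"x": 7.0, "y": 66, "group": "B"}, {"x": 8.0, "y": 80, "group": "B"},
]
print(invariance_check(records, "group", [5.5, 6.0, 6.5, 7.0, 7.5, 8.0]))
```

Gaps that are large relative to the reporting scale of the target test would suggest that the concordance does not hold equally for all subgroups. What counts as "large" is a judgment that the sketch does not settle.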

When publishing and using concordance results, test agencies should ensure that the concordance report is easily available to the public. Descriptive statistics of test scores for both the overall test and subsections, including mean and standard deviation, need to be included in the concordance report. Where possible, these statistics should be compared with the population of interest. The report should also include the correlation coefficients between the two tests. In addition, the statistical methods and procedures (e.g., equipercentile equating, with pre- or post-smoothing) employed for creating the concordance table need to be described in sufficient detail to allow for replication. The concordance results should be presented for the overall test and each subsection. It is essential to detail the number of observations and standard error at each score point or level, as a limited number of observations can lead to unstable concordance results (AERA et al., 2014; Pommerich, 2007). Testing agencies also have the responsibility to alert test users to exercise caution when interpreting the results at the score points or levels with a small number of observations. Finally, to assist test users in interpreting the concordance results accurately and using them responsibly, it is incumbent on testing agencies to publish the studies underpinning the concordance tables along with clear, test user-focused guidelines and recommendations.
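To make the reporting requirements above concrete, the following is a minimal sketch of an unsmoothed equipercentile link that also records the number of observations at each score point. It is an illustration under simplifying assumptions, not the procedure used in any of the reviewed studies. The score lists are hypothetical, no pre- or post-smoothing is applied, and standard errors are not estimated; they would require additional work such as resampling.

```python
from collections import Counter

def percentile_ranks(scores):
    """Mid-percentile rank (0-100) for each distinct score, plus score counts."""
    n = len(scores)
    counts = Counter(scores)
    ranks, below = {}, 0
    for s in sorted(counts):
        ranks[s] = 100.0 * (below + 0.5 * counts[s]) / n
        below += counts[s]
    return ranks, counts

def equipercentile_table(x_scores, y_scores):
    """For each observed X score, find the Y score with the same percentile rank.

    Uses linear interpolation between observed Y scores and no smoothing.
    """
    x_ranks, x_counts = percentile_ranks(x_scores)
    y_ranks, _ = percentile_ranks(y_scores)
    y_points = sorted(y_ranks.items())  # (score, rank), both increasing
    table = []
    for x, p in sorted(x_ranks.items()):
        if p <= y_points[0][1]:
            y_eq = y_points[0][0]       # below the lowest observed Y rank
        elif p >= y_points[-1][1]:
            y_eq = y_points[-1][0]      # above the highest observed Y rank
        else:
            for (y0, p0), (y1, p1) in zip(y_points, y_points[1:]):
                if p0 <= p <= p1:
                    y_eq = y0 + (y1 - y0) * (p - p0) / (p1 - p0)
                    break
        table.append({"x": x, "y_equivalent": round(y_eq, 1), "n_at_x": x_counts[x]})
    return table

# Hypothetical scores on two tests for the same group of test takers.
x_scores = [5.5, 6.0, 6.0, 6.5, 6.5, 6.5, 7.0, 7.0, 7.5, 8.0]
y_scores = [42, 50, 50, 58, 58, 58, 65, 65, 73, 79]
for row in equipercentile_table(x_scores, y_scores):
    print(row)
```

Reporting `n_at_x` alongside each equivalence lets readers see where the table rests on very few observations, typically at the extremes of the score range.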

Our study aimed to explore the following research questions:

RQ1. What information do the four test providers publish on their websites for test users interested in score comparisons? Are the concordance studies underpinning the concordance tables mentioned, and if so, are they publicly available?

RQ2. How well do the concordance studies align with the good practice principles suggested by the relevant literature?

Methodology

Test selection

Four large-scale English language tests were included in the study: Cambridge C1 Advanced (C1), International English Language Testing System (IELTS), Pearson Test of English Academic (PTE-A), and Test of English as a Foreign Language Internet-Based Test (TOEFL iBT). The tests were chosen because they are all accepted by the Australian Department of Home Affairs for Australian visa purposes, including shorter-term visas (e.g., for study purposes) and permanent visas (i.e., migration to Australia; see Australian Department of Home Affairs, n.d.). Because these tests are generally well known globally and space in this brief report is limited, we do not provide detailed information about the design of each test.

Procedures

We first canvassed the websites of the four test providers to ascertain the information they provide regarding concordance tables, including (a) which tests have available concordance tables with other tests and (b) whether a concordance study or other information about the concordance table (e.g., user-friendly advice on how to use the table and cautions around use) is available to test users. We then carefully read the concordance studies provided or mentioned on the websites and coded the relevant sections based on the good practice principles (see Table 1). Our coding process involved identifying whether each good practice principle was fulfilled or not (indicated by a tick or cross, see Appendix 1). Both authors independently coded the research studies, with an inter-coder reliability above .90.
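The report does not specify which inter-coder reliability statistic was used. As an illustration only, the sketch below computes two common options, simple percent agreement and Cohen's kappa, on hypothetical tick/cross codes from two coders.

```python
from collections import Counter

def percent_agreement(a, b):
    """Proportion of items on which the two coders assigned the same code."""
    return sum(x == y for x, y in zip(a, b)) / len(a)

def cohens_kappa(a, b):
    """Cohen's kappa: agreement corrected for chance agreement."""
    n = len(a)
    po = percent_agreement(a, b)
    ca, cb = Counter(a), Counter(b)
    # Chance agreement from each coder's marginal proportions per category.
    pe = sum(ca[k] * cb[k] for k in set(a) | set(b)) / (n * n)
    return (po - pe) / (1 - pe)

# Hypothetical codes for ten good practice principles in one study.
coder_1 = ["tick", "cross", "cross", "tick", "cross",
           "cross", "tick", "cross", "cross", "cross"]
coder_2 = ["tick", "cross", "cross", "tick", "cross",
           "cross", "tick", "cross", "tick", "cross"]

print(round(percent_agreement(coder_1, coder_2), 3))
print(round(cohens_kappa(coder_1, coder_2), 3))
```

Because most principles were coded as unfulfilled, the two statistics can diverge noticeably: kappa discounts the agreement that would be expected by chance when one category dominates.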

Results

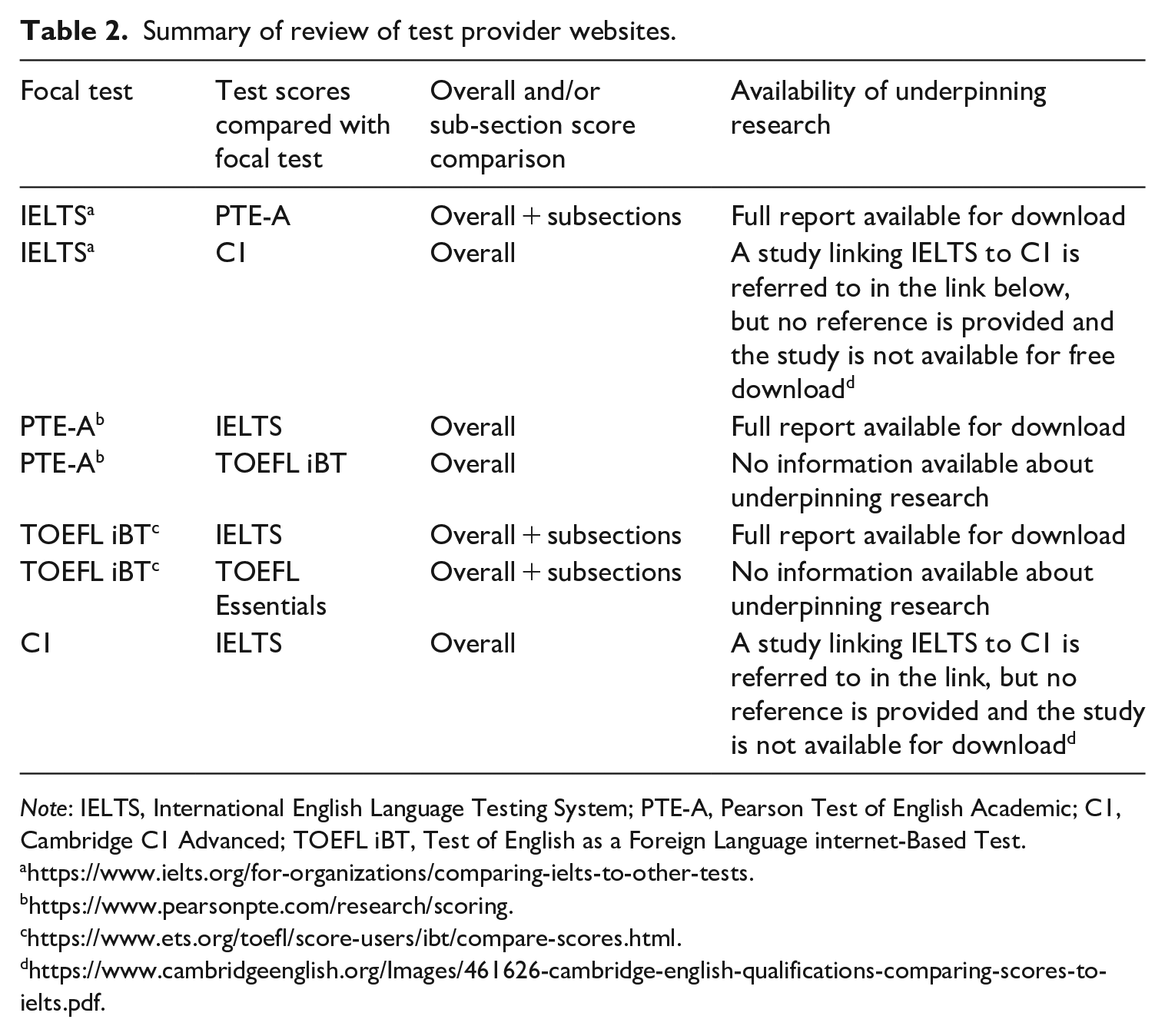

To investigate research question (RQ) one, we summarized the information that the four test providers include on their respective websites (see Table 2). The first column denotes the test provider websites that we examined (referred to as the focal test), followed by the names of the tests for which concordance tables are presented on the focal test website (second column). The third column indicates the level of detail that is provided for score comparisons (i.e., whether only overall scores are compared or whether subsection comparisons are available). The final column provides information about what underpinning research supporting the concordance tables is provided on the websites. As Table 2 indicates, the information provided to test users about test concordance differs across providers. While some provide comparisons between overall scores only, others include comparisons between overall scores and subsection scores. Three of the test providers provide concordance tables to two other tests, while C1 only provides score comparisons with IELTS. Some providers publish the full reports detailing the research underpinning concordance tables, while others provide either no information or only fairly vague information.

Table 2. Summary of review of test provider websites.

Note: IELTS, International English Language Testing System; PTE-A, Pearson Test of English Academic; C1, Cambridge C1 Advanced; TOEFL iBT, Test of English as a Foreign Language Internet-Based Test.

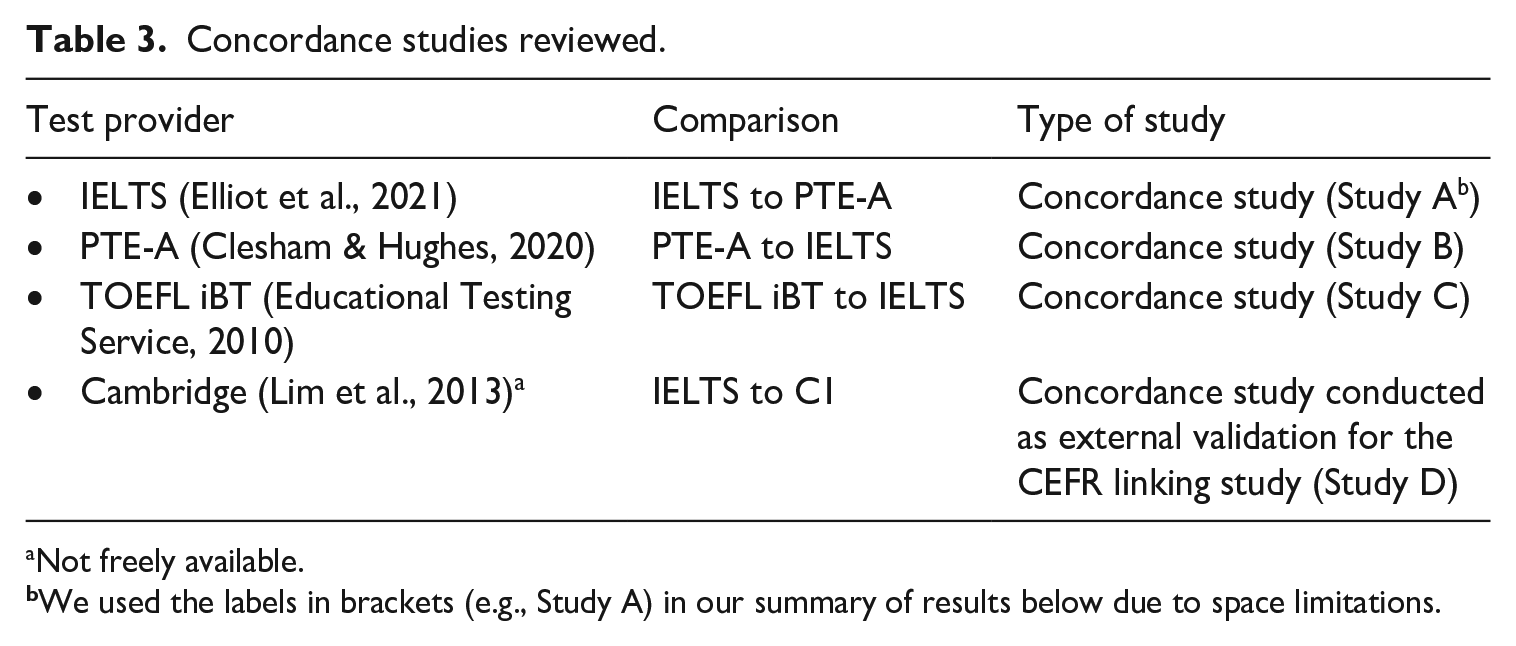

To investigate RQ2, we carefully reviewed the research reports available on the test provider websites (see Table 3) to determine whether these met the good practice principles for concordance research. We included all freely available concordance studies as well as the study linking IELTS to C1, despite this only being available in a peer-reviewed journal behind a paywall.

Table 3. Concordance studies reviewed.

Note: The study linking IELTS to C1 was not freely available.

We used the labels in brackets (e.g., Study A) in our summary of results below due to space limitations.

The full evaluation of the studies against the good practice principles is detailed in Appendix 1, which is broken down into tables for each of the three stages of a concordance study: preliminary investigation, study methodology, and publication and use of results (see Table 1). The results in the tables in Appendix 1 clearly show that, based on the information available in the reports, few of the good practice principles were fulfilled by the four studies under investigation.

In terms of the preliminary investigation, only Study A reported a comparison of constructs (published separately). The correlations for most test pairs were low, particularly for some of the subsections, arguably too low to even proceed with the concordance study. Although correlations were reported as part of the main study, none of the reviewed studies considered them as a preliminary investigation to ascertain whether concordance was the appropriate linking method. Furthermore, no test providers compared the test reliability statistics before proceeding to concordance, except for Study B, which noted that both tests seemed sufficiently reliable.

The study methodologies we examined also varied greatly, and most good practice principles were either not fulfilled or only partially fulfilled. All studies, apart from Study C, had relatively small sample sizes and, where mentioned, these samples rarely fully represented the test taker population of interest and typically lacked a sufficient number of low-scoring students for meaningful comparisons. Only Study B claimed to have captured a representative sample of the overall test taker population, although its sample size fell short of the good practice principle. None of the studies reported collecting data on the participants’ reasons for taking the tests. They either did not mention whether the scores used in the analysis were drawn from official score reports (Studies A, C, and D) or noted that only some scores were verified (Study B). The interval between the participants attempting the two tests was mostly within 3 months; however, two studies (Studies C and D) did not mention this. Additionally, only Study D reported full counter-balancing of the order of testing. Study B controlled this for half the sample, while the other two studies (Studies A and C) did not address this aspect. Three out of the four reports did not mention participants’ test preparation or familiarity with the tests (Studies A, C, and D), while Study B included this information for half of its sample.

The reporting of descriptive statistics and correlations across both overall and subsection scores varied across studies. For example, Studies A and D provided correlations, but no descriptive statistics, for both overall and subsection scores, while Study B reported descriptive statistics for overall scores but not for subsection scores. Study C was the only one that reported descriptive statistics and correlations for both overall and subsection scores. While all studies reported the statistical method used for concordance (i.e., equipercentile equating), the details of the concordance procedures were not fully transparent. For example, it was unclear whether Study C implemented any pre- or post-smoothing procedures in its concordance process. None of the studies included a population invariance study, possibly due to the small sample sizes in their main studies.

The quality of the reporting and use of the concordance results also varied. Study B reported the concordance results for overall scores only, while Studies A and C also reported the results for subsection scores. Study B included the number of observations at score levels without reporting standard errors. Conversely, Study A reported standard errors without mentioning the number of observations. Studies C and D did not provide either. Among the reviewed studies, only Study C advised caution around the use of concordance results in their research reports and on their websites, highlighting that the concordance results at the outer levels (e.g., at IELTS Levels 5 and 8) should be used with caution. This was also visually indicated by the shaded sections in the concordance table. Notably, none of the four studies presented their findings in accessible ways for non-specialist test users, such as policy-makers.

Discussion and conclusion

In this study, we evaluated the current concordance practices of four major providers of English tests against the good practice principles in concordance research. Our findings indicate that the information provided on the test provider websites about concordance tables is often vague or insufficient. Test users are not always provided with the research underpinning these concordance tables. When such research is provided, it tends not to fulfill the good practice principles and is usually presented in formats not easily accessible to non-specialist test users.

Our review of the concordance studies suggests that preliminary investigations are often insufficient, and the methodologies for data collection tend not to adhere to the good practice principles. For example, the sample sizes are generally too small to provide robust score comparisons. Basic information is often not provided, such as concordance results for subsection scores (which are crucial for Australian migration requirements and for other policy-makers), the number of observations at different score levels, and their standard errors. Test users are not usually informed about the potential limitations of using published concordance tables. The findings are concerning, as these results may be used to inform high-stakes decisions that significantly impact test takers.

One possible reason for the lack of information and rigor in this area is that these studies and the creation of the concordance tables are essentially driven by the test providers themselves rather than by an independent body. It is therefore likely that test providers draw on existing data from their test taker databases rather than collecting new data specifically for this purpose, as this is costly and time-consuming. Hence, many analyses are based on convenience samples. At the moment, there is little motivation to invest in more robust concordance studies due to the absence of regulatory oversight and minimal demand for high-quality work from test users. It is also important to note that concordance tables are one site in which competition between test providers manifests, as providers may have a commercial interest in lowering their test scores to make it easier for applicants to achieve certain test score requirements. Test score users also encounter the challenge of having to reconcile different concordance tables, a situation exemplified by the recent IELTS–PTE-A comparison studies (i.e., Studies A and B in our study). The resulting concordance tables showed significant discrepancies at certain score levels, likely causing confusion for test users.

Based on our findings, we make the following recommendations for future concordance research in language testing. First, testing agencies should make the complete results of their concordance studies openly available. This includes the technical details required for a comprehensive evaluation by experts (e.g., language testers and psychometricians), and an accessible summary for test users (e.g., policy-makers). Such information should be easily available on the test provider websites, eliminating the need for users to navigate through multiple additional links or to purchase materials behind paywalls.

When planning a concordance study, preliminary investigations should determine whether concordance is the appropriate linking method. Test providers should alert test users to possible cautions around the use of concordance results. The concordance study methodologies should adhere to the good practice principles set out in this paper. It is important for the test provider to include concordance tables for both overall and subsection scores in the concordance report, and provide clear guidelines to test users around the level of confidence in the concordance results at different score levels. Finally, it is important for language testing researchers to consider how to develop resources and activities to enhance policy-makers’ understanding and ability to make better-informed decisions when using test concordance results.

Appendix 1

Author contributions

Declaration of conflicting interests

The authors declared the following potential conflicts of interest with respect to the research, authorship, and/or publication of this article: During the last five years, the first author, Ute Knoch, conducted assessment-related research or consultancy work for the following organisations: Educational Testing Service (ETS), IELTS, Pearson, Cambridge Boxhill Language Assessments, Australian Department of Defense, Australian Civil Aviation Safety Authority, Australian Health Practitioner Regulation Authority, Benesse Corporation, Australian Department of Home Affairs. She served, until 2021, on the Pearson Technical Advisory Board and is the current test review editor of Language Testing. The second author, Jason Fan, conducted assessment-related research, advisory, or consultancy work for the following organisations: British Council, Pearson Education, PeopleCert, Cambridge Boxhill Language Assessment, Educational Testing Service, and Language Training and Testing Centre (LTTC).

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.