Abstract

We describe the development and initial validation of the “ASL Fingerspelling and Number Comprehension Test” (ASL FaN-CT), a test of recognition proficiency for fingerspelled words in American Sign Language (ASL). Despite the relative frequency of fingerspelling in ASL discourse, learners commonly struggle to produce and perceive fingerspelling more than they do other facets of ASL. However, assessments of fingerspelling knowledge are highly underrepresented in the testing literature for signed languages. After first describing the construct, we describe test development, piloting, and revisions, and then evaluate the strength of the test’s validity argument vis-à-vis its intended interpretation and use as a screening instrument for current and future employees. The results of a pilot with 79 ASL learners provide strong evidence that the revised test is performing as intended and can be used to make accurate decisions about ASL learners’ proficiency in fingerspelling recognition. We conclude by describing the item properties observed in our current test, and our plans for continued validation and analysis with respect to a battery of tests of ASL proficiency currently in development.

Introduction

As the first and largest technological college for students who are deaf and hard-of-hearing, the National Technical Institute for the Deaf (NTID) uses American Sign Language (ASL) as its primary language of instruction, as do many deaf schools across the United States and parts of Canada. Since ASL is the language of instruction, it is necessary for staff, faculty, and interpreters to be able to communicate effectively in the language. However, many staff and faculty members have varying levels of ASL proficiency when first hired. If needed, ASL-English interpreters are provided for an initial period after hiring, but for the institute to function smoothly, long-term employees must be able to communicate independently. This unique situation gives rise to the need for assessment instruments that can attest to candidates’ proficiency levels. In this college-level environment, the communicative stakes are high. The lack of necessary proficiency to function in a professional capacity could easily result in harm to deaf students and staff. Assessing the working proficiency of second language learners of ASL helps raise the professional standards for working in a primarily ASL environment.

ASL has its own grammatical constructions, phonotactic constraints, and modality-specific articulatory and perceptual features, different from those of English (Klima & Bellugi, 1979; Stokoe, 1960). These facts necessitate the view that people who know and use ASL and English are bilinguals who must learn to master two separate languages in two different modalities. To test receptive proficiency, acquisitional trajectories, and expressive competency, ASL requires its own set of assessments, developed with the features of ASL in mind. Few such assessments exist (cf. Smith et al., 2019; Von Pein & Altarriba, 2011), and many that do are designed for assessing child language proficiency (e.g., the ASL-PA; Maller et al., 1999; Mann et al., 2016), not adult proficiency. Therefore, NTID set out to develop a set of assessment instruments with the intended use of making high-stakes decisions about employee retention based on ASL proficiency within the context of higher education. In addition to the Sign Language Proficiency Interview (SLPI) (Caccamise & Newell, 1995; Caccamise & Samar, 2009) which assesses interpersonal communicative ability, NTID is developing separate tests to measure academic vocabulary knowledge, discourse-level comprehension, and the ability to understand fingerspelled words and phrases. This paper focuses specifically on the development of the “Fingerspelling and Number—Comprehension Test” (FaN-CT), and our evaluation of the test’s suitability for its intended interpretation and use as a screening instrument for current and future employees. After first describing the construct, we will proceed to document test development including the application of Item Response Theory (IRT) models and the evaluation of the validity argument of the test.

ASL L2 acquisition

For many second language (L2) ASL learners, the acquisition of ASL also necessitates the acquisition of a second modality of language use. Learning to process linguistic information in the visual modality requires attention to the specific visuospatial properties of the grammar. Rhythmic timing, prosodic cues, and phonological contrasts do not necessarily have directly transferable properties from a spoken first language (L1) (Jacobs, 1996; Ortega & Morgan, 2010). One feature of ASL that is particularly difficult for second modality-second language (M2L2) learners is the acquisition of fingerspelling (Geer & Keane, 2018). Fingerspelling is a system of signs used to render orthographic features of spoken languages pronounceable in signed languages (Padden, 1991; Padden & Gunsauls, 2003). Depending on the spoken language’s writing system, fingerspelling can use individual handshapes to encode letters, characters, symbols, syllabic units, or phonetic features. ASL uses a one-handed system, meaning that fingerspelled handshapes are typically produced (primarily) on the dominant hand, and they are used to represent the orthographic features of American English in ASL.

Types, functions, and frequency of fingerspelling in ASL

In this section, we will introduce the construct of fingerspelling. If fingerspelling were as easy as recognizing 26 individually articulated letters, then measuring learners’ receptive skills would not be very challenging. However, fingerspelling is more than just the interpretation of individual letters. The ability to decode fingerspelling also draws on phonotactic rules, coarticulated features, and conventionalized processing of multiword units, described in the section below.

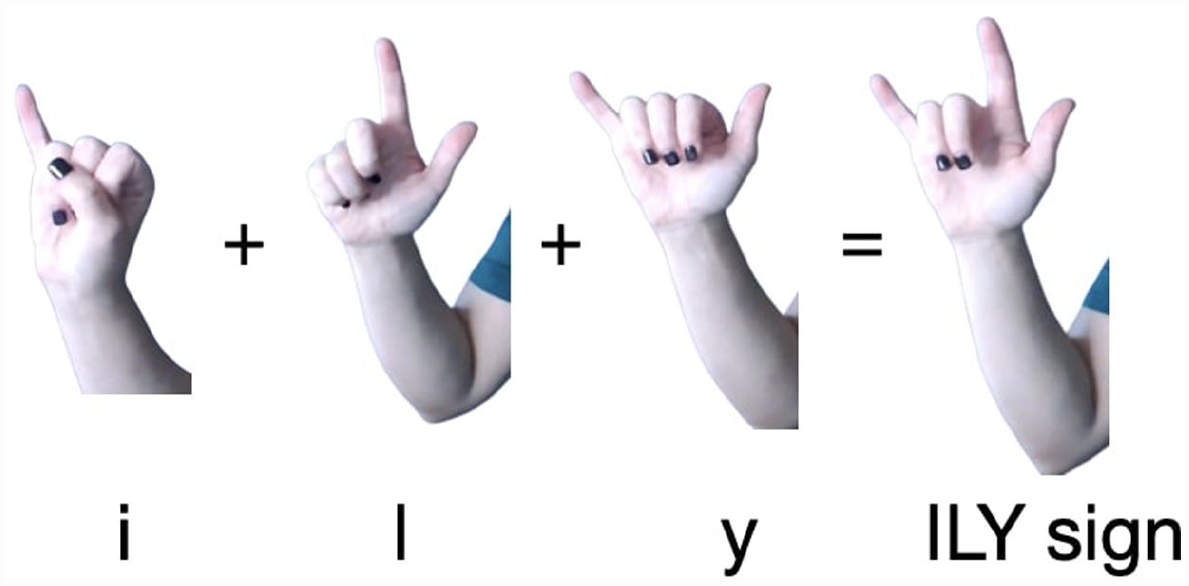

As a linguistic mechanism that allows for borrowing specific words and phrases into the visual modality, fingerspelling is multifunctional. While fingerspelled words are sometimes formed by stringing together sequences of individual letters for confirming or presenting new information, this careful fingerspelling represents only one fingerspelling function. Wilcox (1992) suggested that the more common functions of fingerspelling are to borrow words into fluent discourse or make spoken language pronounceable in a signed language. Rapid fingerspelling represents most of the fingerspelling used in discourse and is most often used with second and subsequent mentions of fingerspelled words (Patrie & Johnson, 2011). 1 Zakia and Haber (1971) suggested that skilled signers need not attend to individual letters in a fingerspelled word, and instead pay attention to the pattern of the finger configuration. Since coarticulation is common in rapid fingerspelling, individual handshapes undergo many allophonic changes as well as major phonological mergers of canonically individual letter handshapes. Thumann (2012) showed that frequently co-occurring letter combinations such as i-l-y are often articulated as a single unit, a simultaneous articulation of the letters, in this case, a handshape referred to as ILY (Figure 1).

Individual ASL handshapes I, L, and Y as well as the simultaneous articulation of the unit ILY.

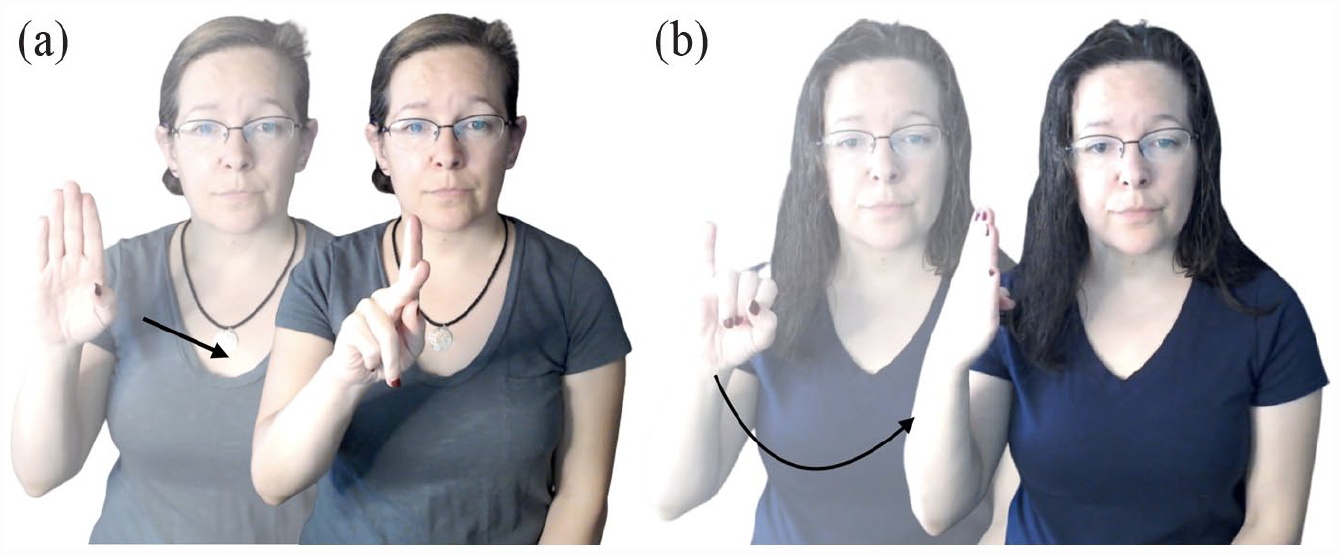

In addition to the careful and rapid varieties, there is also conventionalized fingerspelling, also referred to as lexicalized fingerspelling (Battison, 1978) or fingerspelled loan words (Brentari & Padden, 2001; Lepic, 2021). For continuity, we will refer to these signs as “conventionalized” (but see Lepic, 2019, for why these signs are more aptly named “highly restructured signs”). Conventionalized fingerspelling refers to frequently fingerspelled words whose phonologically regularized or reduced forms become recognizable lexical items in ASL. Examples of conventionalized fingerspelling in ASL include the signs #BACK and #JOB (Figure 2(a) and (b)), both of which exhibit the weakening or deletion of the second handshape and changes to the movement of the fingerspelled handshapes. 2

(a) ASL conventionalized fingerspelling sign #BACK “to return to.” (b) ASL conventionalized fingerspelling sign #JOB “job.”

So-called conventionalized fingerspellings are signs that have undergone phonological reduction or deletion, changes in movement or orientation of articulatory units, and assimilation of handshapes into intermediate allophonic variants. These highly reduced forms can also take on more varied grammatical functions becoming multifunctional, unlike the more restricted functions of rapid and careful fingerspelling. Padden (2005) has suggested, for example, that careful and rapid fingerspelled terms can exist alongside conventionalized fingerspelling as pairs of functionally different forms. She gives the example of F-A-X, which refers to the noun “fax” and the “nativized” or conventionalized form #FAX, which functions as a verb. The verbal form of #FAX can grammatically encode information about the sender and receiver of the message, through the use of grammatical location of the signs’ beginning and ending points of movement (Padden, 2005, p. 190).

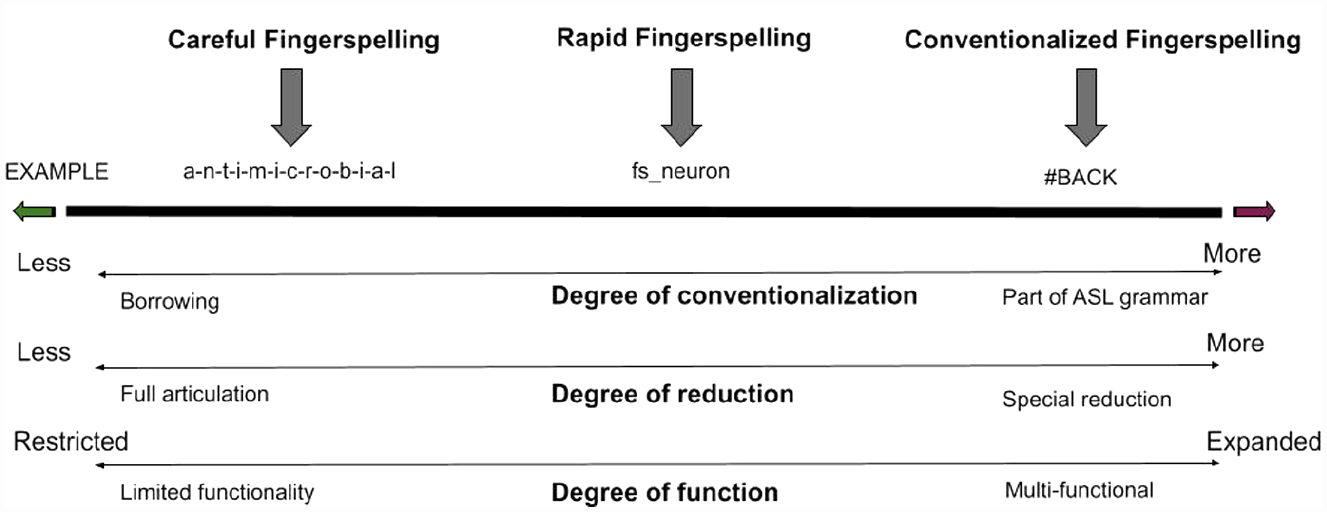

It should be noted that while we have labeled these examples (careful, rapid, and conventionalized fingerspelling) as different types, they are not strict categories of signs which exhibit necessary and sufficient conditions for category status. These labels are simply a way to describe a constellation of phonetic properties that co-occur, including, for example, duration of articulation, handshape assimilation, or orientation changes. Conventionalized fingerspelling, by definition, occurs most frequently. These signs have more opportunity to occur in more constructions and, thus, have more opportunity to reduce. The phonological features that define these categories are gradient and depend on several social, functional, linguistic, and communicative pressures in a given discourse context. Some fingerspelled tokens may have some but not all of the characteristics of a given variety of forms (careful, rapid, and conventionalized). Figure 3 models this continuum of phonetic reduction using examples from the FaN-CT test bank to mark points on the fingerspelling continuum. On the left side of the figure is an example of careful fingerspelling. The word a-n-t-i-m-i-c-r-o-b-i-a-l has no articulatory reduction; that is, the full articulation of each handshape representing each letter in the English word “antimicrobial” is present. At the center of the figure, the fingerspelled sign fs_neuron represents an intermediate degree of phonetic reduction. This form has undergone handshape and movement assimilations characteristic of rapidly fingerspelled words. On the right side of the figure, the word #BACK, meaning “to return to,” represents a word which, due to frequent use, has become highly restructured. This is evidenced by reduced articulation, including the elision of multiple handshapes and changes in movement. #BACK has also become integrated into the grammar of ASL, having become part of the directional verb construction which uses space to encode participants or locations (see Figure 2(a)). These distinctions will become important in the discussion of scoring and analysis of the test later in the paper.

Fingerspelling cline shows points along the continuum of articulatory reduction, conventionalization, and function.

Like fingerspelling, number signs in ASL often include linear sequences of individually articulated handshapes, which, when articulated rapidly, undergo lenition and assimilation. Number signs also undergo the same processes of conventionalization, routinization, and entrenchment, such that numbers are not always analyzable as simple sequences of individual handshapes. Indeed, some signed languages consider numbers to be part of their fingerspelling system. In ASL, the numbers 1–9 each have an individual handshape, much in the same way that the letters A–Z have individual dedicated handshapes. 3 Pedagogical evidence for numbers being part of the fingerspelling system in ASL exists inasmuch as “Fingerspelling and Numbers” is often singled out and taught as its own course to provide students with additional instruction focused on these difficult-to-develop language features, outside of the regular exposure they receive in a basic language course sequence. Due to the increased relative sequentiality of both fingerspelling and number signs in ASL, the authors consider decoding of number sequences to be on par with comprehension of fingerspelling in terms of task dynamics, cognitive demand, and importance for effective communication. Figure 4 demonstrates the sequential nature of many numbers in ASL with the sign for the year “1976.” The interaction of individual components means that, for example, the sequence 1976 may not be of equivalent difficulty to the sequence 7619 or similar, because the coarticulatory features will differ. Therefore, in order to create parallel forms of the test, we had to empirically test the difficulty level of candidate items.

The ASL number sign for the year “1976.”

In terms of quantifying the importance of fingerspelling within the grammar of ASL, estimates of discourse frequency range from 10% to 15% of an annotated corpus (Padden & Gunsauls, 2003) to a more conservative 3.3% to 8.7% of discourse, depending on genre and register (Morford & MacFarlane, 2003). But even as estimates of the frequency of fingerspelled words vary by corpus, fingerspelled words are always frequent enough to be necessary for any level of communication in ASL discourse.

Challenges to successful L2 acquisition of fingerspelling

Despite the relative frequency of fingerspelling occurrence in ASL discourse, M2L2 learners still struggle to learn to produce and perceive fingerspelling more than they do other facets of ASL (Quinto-Pozos, 2011; Wilcox, 1992). Hanson (1981) demonstrated that fingerspelling is challenging even for skilled L2 signers and that English spelling is sometimes challenging for deaf participants. In fact, deaf participants correctly identified fingerspelled words 92.9% of the time, but only correctly spelled their English equivalents in 62.9% of cases (Hanson, 1981). This observation will become important later when we discuss scoring and analysis of the test.

Several factors contribute to the difficulty of fingerspelling comprehension. First, many frequent letter combinations are encoded using a single handshape, as in the case of ILY for the English suffix -ily (Figure 1 above). Additionally, fingerspelled units must be understood dynamically according to their phonetic environment. For example, some letters employ the same handshape and are distinguished only by palm orientation, as in the letter pairs -g- and -q-, -h- and -u-, and -k- and -p- (see Figure 5). However, within a fingerspelled word, the palm orientation of -g- or -q- may assimilate to the letters before and after such that the sign for -g- within one word might be nearly identical to -q- in another.

The ASL handshapes -g- and -q- vary only in their orientation.

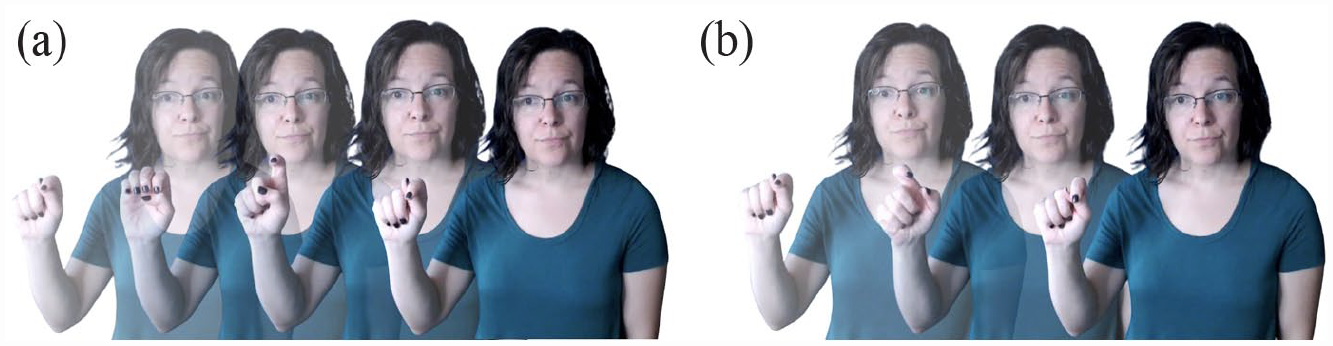

Finally, the articulatory targets of fluid fingerspelling arise from assimilation between letters as signers adapt the word to the phonotactic constraints of ASL. For example, certain features of letter signs may be altered or elided because the sequence of certain handshapes is phonotactically illicit, meaning that the underlying form must be recovered from the transitions between them. But M2L2 signers are generally unable to make use of these important phonetic cues without explicit instruction (Geer, 2020). Figure 6(a) shows how each letter of the word “text” is faithfully represented by a single handshape articulation. Figure 6(b) shows the rapid fingerspelling of the same word fs_TEXT, in which the English letters “e” and “x” are produced with a coarticulation resulting in a single articulatory unit that has features of both but is not individually recognizable as either.

(a) ASL “T-E-X-T” in careful fingerspelling form. Note that each individual letter of “text” is represented by a single handshape. (b) ASL “fs_TEXT” in rapid fingerspelling form. Note the reduction in form with -e- and -x- handshapes being coarticulated.

Overall, the task of decoding fingerspelling requires the mastery of several different but related skills. As Geer (2016) suggested, “successful fingerspelling comprehension hinges on the ability of the visual system to quickly reconcile sometimes subtle and highly variable changes in handshape and/or orientation” (p. 14). Both spoken and signed language L2 users have overall less exposure, fewer contact hours and language models, and less opportunity to glean these important phonetic cues from iterated usage contexts. However, the ability to comprehend fingerspelling in ASL is central to successful communication. As such, an instrument that tests this comprehension is a much-needed and highly desired assessment tool.

Development and validity argument

The ASL FaN-CT is the first test of its kind intended to measure the comprehension of ASL fingerspelling and numbers in L1 and L2 deaf and hearing populations. 4 In constructing the FaN-CT, we followed an argument-based approach to validity (Chapelle, 2012), in which we first explicate the intended interpretations and uses of test scores, and then evaluate the plausibility of those intended interpretations and uses, keeping in mind possible challenges to them (Davies, 2012). Importantly, the FaN-CT is not intended to be used in isolation, but rather as one part of a battery of assessments, each focusing on different aspects of ASL proficiency. The FaN-CT specifically focuses on whether university employees have reached a level of proficiency necessary to function as independent members of the ASL community at this institution with respect to understanding fingerspelled words and numerals. In the target language use (TLU) domain of classes, meetings, and other interactions at the institution, 5 fingerspelled terms span the continuum from careful citation to conventionalized units. While asking for clarification is still common, repeated failure to understand would render fluent conversation impossible, and since many important distinctions rely on precise decoding, successful communication requires that ASL users make accurate judgments quickly. Thus, a minimally proficient test-taker would be able to correctly identify both carefully fingerspelled and conventionalized words and phrases usually without external help or the benefit of multiple choices upon seeing it for the first time. Information on how close test-takers are to that level is potentially important for diagnostic purposes, but the primary use of the FaN-CT is as a criterion-referenced assessment: endorsing candidates who meet the institution’s criteria for proficiency, and correctly identifying those who need further study.

Drawing on Chapelle (2012) and others, we have drawn up a validity argument as a chain of inferences, where the strength of the validity argument is dependent on the supporting evidence for each inference in the chain. Working backward, there is one primary intended use of the test: certifying those and only those candidates who are able to understand fingerspelled ASL. FaN-CT pass/fail decisions are intended to bring about the outcome of the institution retaining all and only those employees who can communicate in ASL, thereby promoting its use within the community and promoting the interests of stakeholders, especially deaf students. To bring about that outcome, the cut score must be set at an appropriate level to minimize classification errors. The test scores must also be fair with respect to groups, meaning that expected scores for ability-matched test takers do not differ as a function of construct-irrelevant test-taker characteristics such as sex, religion, or place of origin. Variation in the ability estimates obtained from the IRT model must, therefore, reflect variation in fingerspelling ability. For this to be so, the IRT model used to analyze test scores must be appropriate for the test and exhibit sufficient model fit. Those scores in turn must be derived using reliable and appropriate methods for scoring test taker responses to items. Finally, the items test takers respond to must be selected, created, and administered such that the test task represents the breadth and difficulty of the TLU domain task.

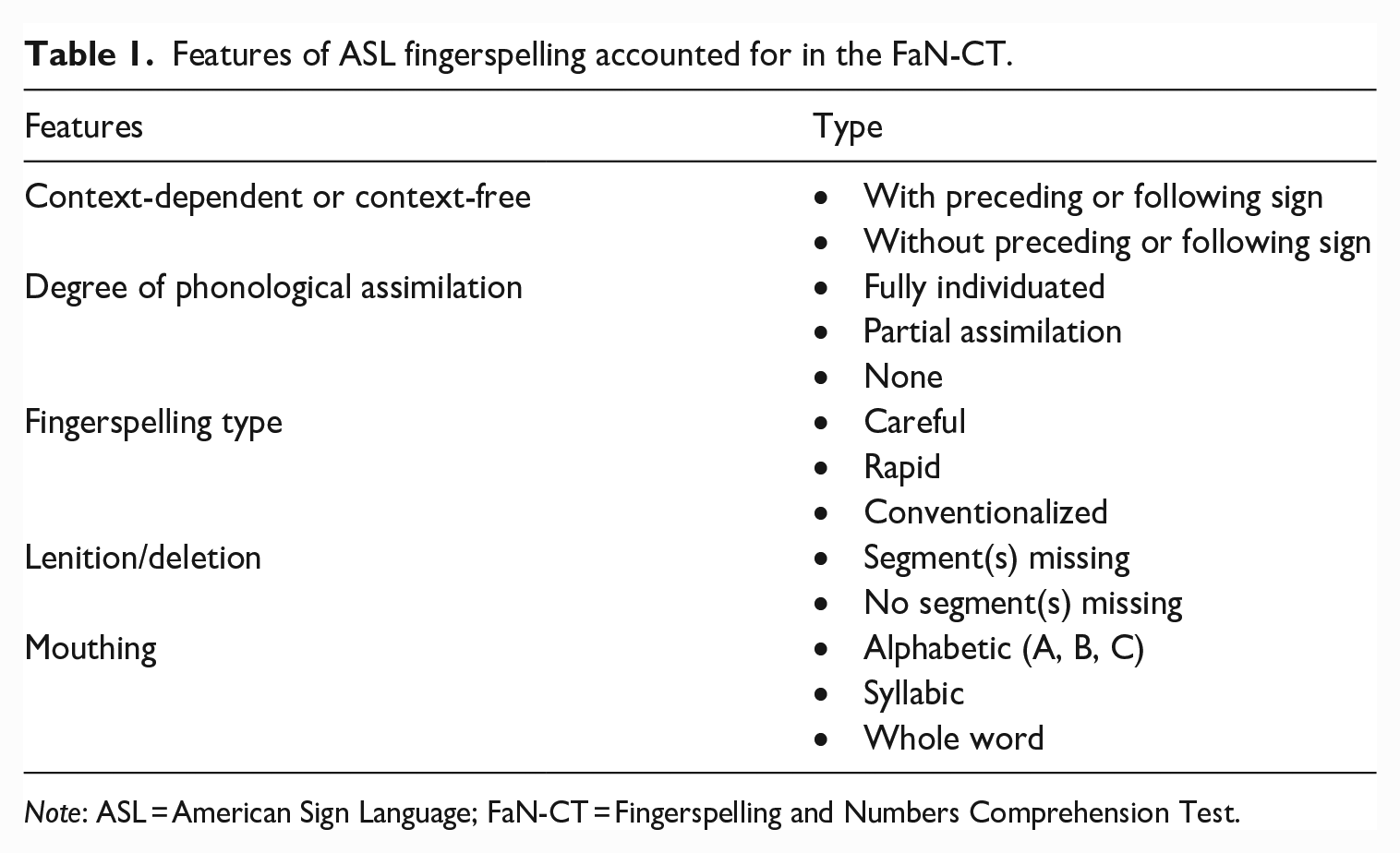

Domain selection

In the development of this test, we considered several features which may contribute to the ease or difficulty of ASL fingerspelling comprehension including word length, fingerspelling type (careful, rapid, conventionalized), degree of phonological reduction, signer type, and the presence or absence of mouthing. For instance, careful fingerspelling might not discriminate between novice and advanced signers and therefore would not be informative. Similarly, longer items with more segmental content might be more difficult, but also not discriminatory, becoming an attention task rather than a comprehension task. Additionally, we included items that have an immediate context, with a sign either preceding or following the fingerspelled or numerical sequence. It is possible each of these features contributes to the ability to comprehend fluent fingerspelling and number articulation, or it may be that some of these fingerspelling features are more informative than others in indicating proficiency in fingerspelled items and, by proxy, ASL more generally. Table 1 outlines the features which were coded for in the development and analysis of FaN-CT. This table can be read in the following way: For each feature on the left, there are between one and three values indicated by the feature types on the right. For instance, the “Degree of phonological assimilation” can be “fully individuated,” “partial assimilation,” or “none.”

Features of ASL fingerspelling accounted for in the FaN-CT.

Note: ASL = American Sign Language; FaN-CT = Fingerspelling and Numbers Comprehension Test.

Item selection

While theoretically any English word could be rendered into ASL fingerspelling, there are many words that are much more likely to be used. To ensure the test items were as realistic as possible, all items were extracted from existing examples of fingerspelling in the real world, from three types of resources:

1. Real fingerspelled signs extracted from NTID’s DeafTEC STEM Sign Video Dictionaries (videos of definitions and entry words in context sentences) (2018) 6
   - DeafTEC BIO = DeafTEC STEM Sign Video Dictionary (Lab Sciences)
   - DeafTEC IT = DeafTEC STEM Sign Video Dictionary (Information Technology)
   - DeafTEC Math = DeafTEC STEM Sign Video Dictionary (Math)
2. Real fingerspelled words extracted from ASL newscasts
   - Brick City News
   - Daily Moth
   - Sign1News
3. Real fingerspelled signs extracted from ASL corpora/databases
   - Morford and MacFarlane (2003) ASL corpus
   - Lepic (2018) Database of Fingerspelled Signs

In total, 683 fingerspelled or number signs and multi-word expressions containing fingerspelled items were extracted. Number signs included number + sign sequences as in the example “5 CATS” and sequences of numbers such as “1976” (see Figure 4). Fingerspelled items were then grouped into categories based on whether they were bare units or part of multi-word expressions, whether they exhibited assimilation (one piece of evidence of conventionalization), whether the multi-word expressions contained the fingerspelled or numerical element as the first or second part of the expression, whether the fingerspelling was an abbreviation (as in the case of state names KS, KY), and according to the length of the target word (less than or equal to five letters or more than five letters). A list of pilot items that contained a balanced mix of item types for recording was created (see Supplementary materials).
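The grouping step described above can be illustrated with a short sketch. It is not the authors’ procedure: the class name `CandidateItem`, its field names, and the helper functions are hypothetical, and the only threshold taken from the text is the five-letter length boundary.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class CandidateItem:
    """One extracted item; all field names are hypothetical."""
    target: str                 # English target of the fingerspelled element
    is_multiword: bool          # bare unit vs. part of a multi-word expression
    shows_assimilation: bool    # one piece of evidence of conventionalization
    fs_position: Optional[int]  # 1 or 2 within a multi-word expression, else None
    is_abbreviation: bool       # e.g., state names such as KS, KY


def length_band(target: str) -> str:
    """Band by letter count: five or fewer letters vs. more than five."""
    n_letters = sum(ch.isalpha() for ch in target)
    return "<=5 letters" if n_letters <= 5 else ">5 letters"


def category_key(item: CandidateItem) -> tuple:
    """Combine the coded features into a single grouping key."""
    return (
        item.is_multiword,
        item.shows_assimilation,
        item.fs_position,
        item.is_abbreviation,
        length_band(item.target),
    )


def group_items(items):
    """Group items by category so a balanced mix can be drawn from each group."""
    groups = defaultdict(list)
    for item in items:
        groups[category_key(item)].append(item)
    return dict(groups)
```

Grouping on a composite key of this kind makes it straightforward to check that each combination of features is represented before items are sent for recording.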

Stimulus recording

Six university students were recruited as language models to produce stimulus videos. Students responded to an advertisement asking, “Are you a great fingerspeller?” All sign models self-identified as deaf or hard-of-hearing. In total, five ASL models were selected to represent the diversity of the student body, including a mix of L1 and L2 signers from different racial, ethnic, and regional backgrounds, with different gender identities, representative of the student population at large.

Before filming, the ASL models were sent the lists of fingerspelled words in advance so they could ask any questions if they were unsure of a word. Models were instructed to not wear excessive make-up or jewelry which might be visually distracting and were instructed to wear comfortable clothes and shoes for long recording sessions. Upon arrival at the studio, models were given blue t-shirts to reduce visual distractions of patterned tops and to maintain consistency across videos. All models were recorded using the same gray-brown background in a professional video studio.

Before recording began, each ASL model reviewed consent procedures, which were presented on paper in English and discussed in ASL. Models were filmed over two sessions lasting approximately 2 hours and were compensated for 4 hours in total, including both preparation time and studio time, at a rate of $25/h, for a total of $100 per individual. Language models stood in front of the backdrop under professional lighting and looked into a teleprompter. They were instructed to “sign naturally” using their most comfortable fingerspelling style. They were allowed to use whatever mouthings felt comfortable to them. Models were allowed to self-pace the filming and cued the assistant director to feed them English prompts through the teleprompter in the camera lens. The ASL models were asked to do a minimum of two takes, but sometimes they required more takes due to “finger-flubs,” blinks, spontaneous giggles, looking away from the camera too soon, or other visual distractions. The models started in a neutral position, signed the target, and then returned to the neutral position. All signs from the pilot item set were divided equally into three lists, with each list assigned to two signers so that we would have duplicate videos of each fingerspelled word to select from. Stimulus types were presented using different visual cues to elicit the intended sign, number, and fingerspelling combinations.

- Numbers were represented numerically, e.g., “23”
- Signs were represented in all caps, as is the glossing convention in the signed language literature, e.g., “LOCAL news,” where the word “local” was to be rendered as a lexical sign and “news” as fingerspelling
- Fingerspellings were represented in lowercase, 7 e.g., “LOCAL news”
- Conventionalized fingerspellings were represented using a hashtag, e.g., “#busy”
- Abbreviations were represented using an at sign, e.g., “@html” and “@veg”
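The prompt conventions listed above amount to a small notation that can be read token by token. The following is a minimal sketch of such a reader, not a tool used in the study; the function names and category labels are hypothetical, and treating whitespace as the only token separator is an assumption.

```python
import re


def classify_token(token: str) -> str:
    """Map one teleprompter token to the stimulus type its notation signals."""
    if token.startswith("#"):
        return "conventionalized fingerspelling"
    if token.startswith("@"):
        return "abbreviation"
    if re.fullmatch(r"[0-9][0-9\-]*", token):
        return "number"
    if token.isupper():
        return "sign"
    if token.islower():
        return "fingerspelling"
    return "unknown"  # mixed case or other notation not covered by the conventions


def parse_prompt(prompt: str):
    """Split a prompt on whitespace (an assumption) and classify each token."""
    return [(token, classify_token(token)) for token in prompt.split()]
```

Under these assumptions, `parse_prompt("LOCAL news")` labels `LOCAL` as a sign and `news` as fingerspelling, matching the example given in the list.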

Post-production editing

In total, 600 acceptable video stimuli were produced from 250 unique prompt items. Additional post-production video editing was done to lighten or darken the appearance of the background to further enhance contrast between the signer and the background screen. Videos that still included blinks, body shifts, eye shifts, or other visual distractions were discarded. After culling videos with visual distractions, some test items only had one good version of the stimulus which was selected by default to represent the item. From the remaining recorded video stimuli, two proficient L2 ASL signers independently selected videos to represent the stimulus target. When there was disagreement between the videos selected, both signers watched the videos together and discussed the benefits or drawbacks of each, then decided on which video to use. To ensure items were articulated by models as designed, two research assistants coded mouthing, coarticulation, and degree of conventionalization for each stimulus video.

Test pilot methods

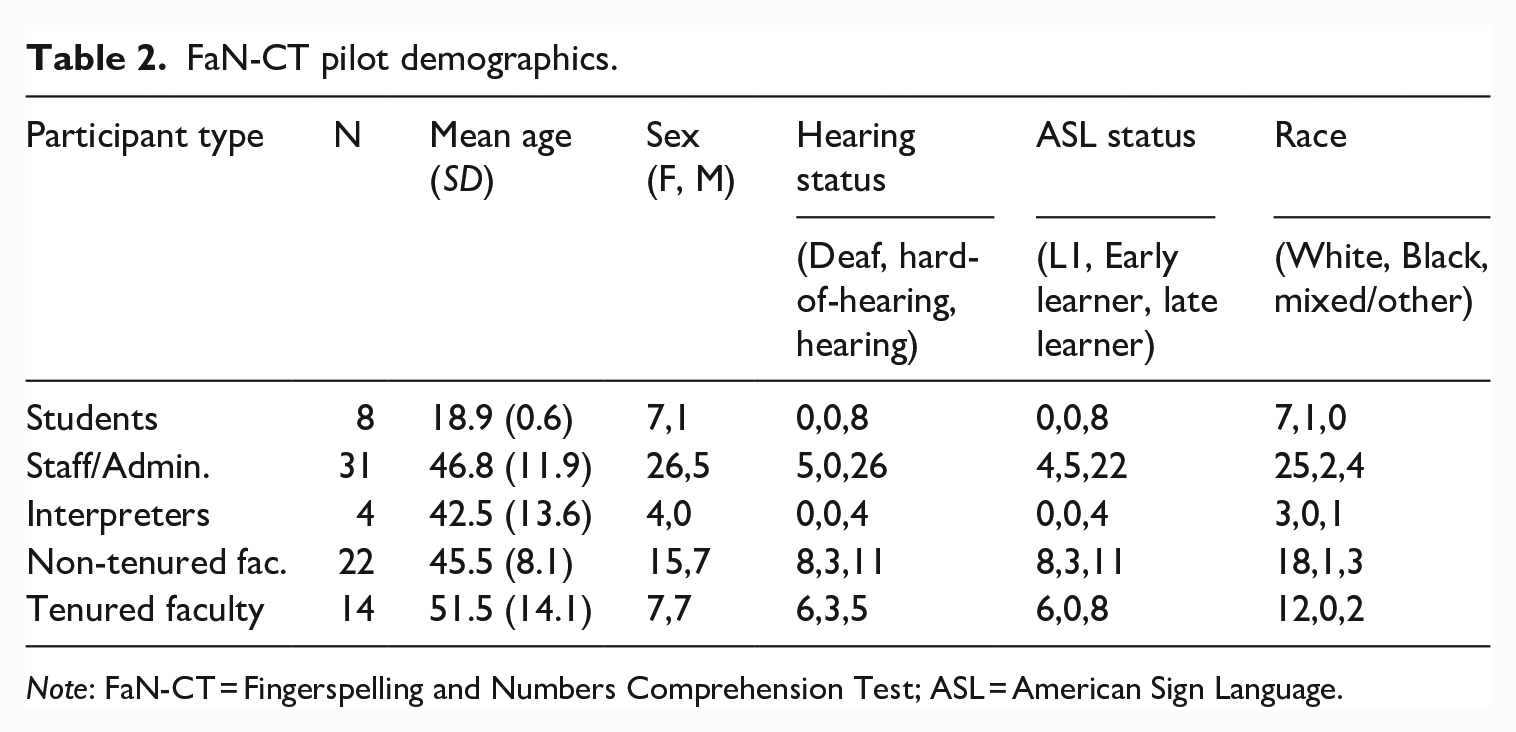

Participants

For this study, 79 adults from Rochester Institute of Technology were recruited to be as representative as possible of the range of possible test-takers, from students to staff members, program administrators, ASL interpreters, non-tenured faculty members who must demonstrate ASL proficiency for tenure requirements, and tenured faculty who have already met those requirements according to current procedures. Their demographic characteristics are summarized in Table 2. 8 In total, 18 of the participants (all deaf, and all either staff or faculty) reported that ASL was their primary language before beginning school at age six; for our purposes, we categorized these participants as first language (L1) signers. Those who reported first using ASL at age 17 or earlier were categorized as “Early learners,” while those who started learning after graduating high school were categorized as “Late learners.” All participants reported normal or corrected-to-normal vision.
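The three-way grouping by age of acquisition can be written as a simple rule. This is an illustrative sketch only: the function and parameter names are hypothetical, and it assumes that “after graduating high school” corresponds to first use at age 18 or later, which the text does not state explicitly.

```python
def acquisition_group(asl_primary_before_school: bool, age_first_used_asl: int) -> str:
    """Assign a participant to an acquisition group.

    asl_primary_before_school: ASL reported as primary language before
        beginning school at age six.
    age_first_used_asl: self-reported age of first ASL use, in years.
    """
    if asl_primary_before_school:
        return "L1"
    if age_first_used_asl <= 17:
        return "Early learner"
    # Assumption: any first use after age 17 counts as post-high-school.
    return "Late learner"
```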

FaN-CT pilot demographics.

Note: FaN-CT = Fingerspelling and Numbers Comprehension Test; ASL = American Sign Language.

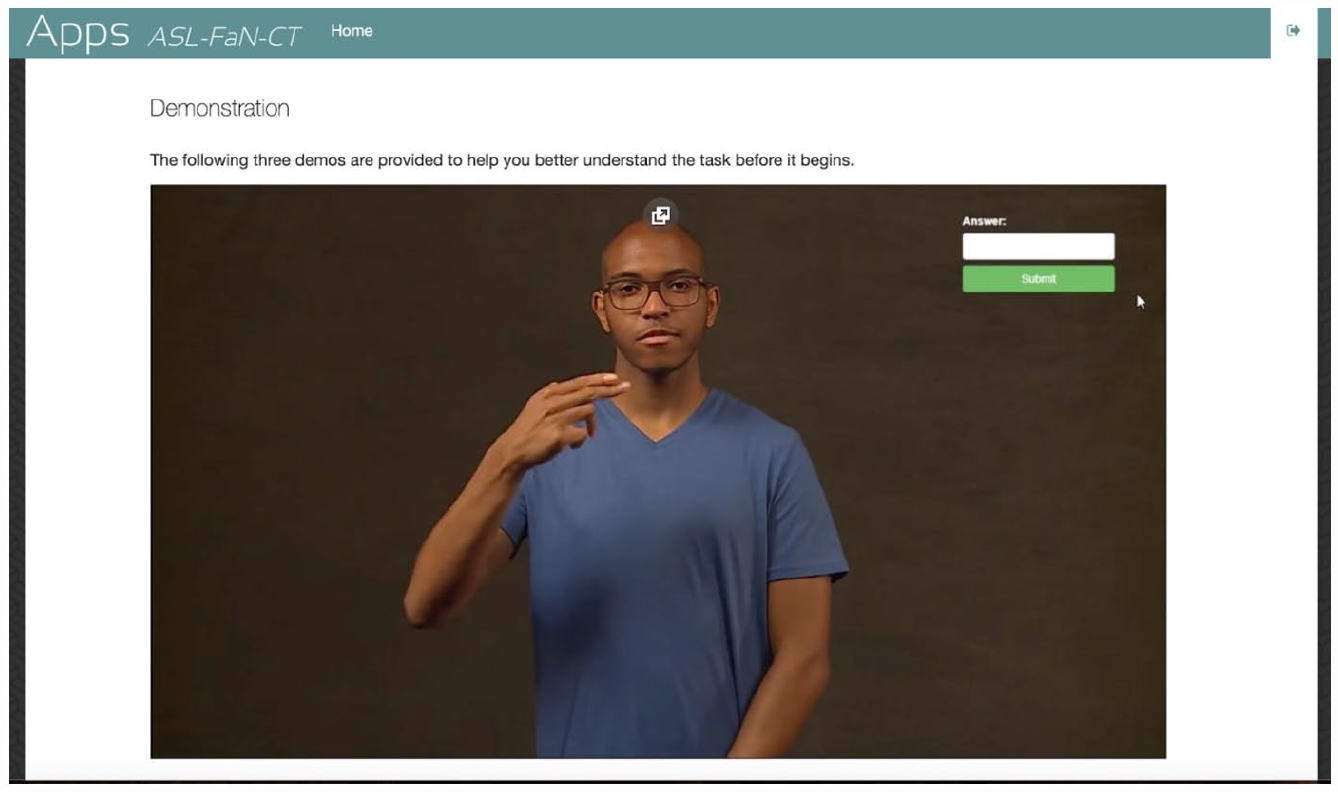

Materials and procedure

Participants were tested in a private room with a test administrator using a MacBook Pro laptop with a 15-inch screen. All video files (MP4) were scaled to 480 × 480 pixels, which rendered stimuli large enough to discern individual handshapes on the 15-inch screen regardless of browser size. After signing an electronic informed consent form, participants completed an online background questionnaire concerning their general demographics and experience with ASL (see Supplementary materials). Participants were asked whether they preferred ASL or English for the duration of the session, and test administrators accommodated their preferences. Including breaks, participants typically took between 1.5 and 2.5 hours to complete all 250 items. Students were paid $20; faculty and staff were paid $40 for their time.

Participants first read an explanation of the purpose of the test and instructions on how to type in their responses. Three example items were presented to familiarize them with the task. The example items were presented within the test as a screen-recording which showed participants a model signing a fingerspelled item, followed by the cursor moving to the response box, and the response box being filled in with a typed answer. For each item, the participants would see a video of a single signer against a plain background; a blank text box was presented beneath the video where participants would type their answers and press the enter key to move on to the next item. Participants could not pause the video, and the video was played only once. Participants were instructed to type the word verbatim, and to use number keys to respond to numeric stimuli. Participants had a maximum of 30 seconds to type in their answers and press the enter key or the next item would begin automatically after recording a null response. Item order was randomized for each participant. Figure 7 shows a screenshot of the test demonstration item, showing the signer mid-fingerspelling.

Live screen-shot of ASL FaN-CT Demo item, showing the full-screen video item and the item response box.

Scoring

Responses were coded dichotomously as either matching one of the acceptable key responses (1) or not (0). Several items had more than one possible correct option; for example, on the item fs_FRAMEWORK, responses were marked as correct if participants typed <framework>, <frame-work>, or <frame work>. In addition, any non-blank responses that deviated from the expected key were examined by a human rater, then confirmed in discussion with a researcher. Spelling mistakes—for example, <repitle> instead of <reptile>—were recoded as 1, but in the event that spelling differences created a different word, such as <tend> or <tred> instead of the target fs_TREND, the response was coded as incorrect. For items where only one element of the stimulus was fingerspelled, different semantically plausible translations of the non-fingerspelled element were accepted as correct, regardless of collocative status in English. For example, one term a test-taker entered was <nerve destruction> instead of the intended target, <nerve damage> where only “nerve” was fingerspelled and “damage” was represented as a separate sign which, in other contexts, can be translated as “destruction.” This was coded as correct, whereas if the fingerspelled portion of the target was substituted, this was coded as incorrect. For the item “IP address,” the “IP” portion was fingerspelled, so the response <email address> was coded as incorrect.
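The first, automatic pass of the scoring procedure can be sketched as follows. This is a simplified illustration under stated assumptions, not the scoring script used in the study: the function names are hypothetical, and ignoring letter case and extra whitespace when matching is an assumption. The sketch only assigns a provisional score and flags non-blank mismatches; the decision to recode a misspelling as correct remains with the human rater.

```python
import re


def normalize(response: str) -> str:
    """Lowercase and collapse whitespace (an assumed, not documented, step)."""
    return re.sub(r"\s+", " ", response.strip().lower())


def score_response(response: str, acceptable_keys) -> tuple:
    """Return (provisional_score, needs_human_review).

    A response matching any acceptable key scores 1. A non-blank mismatch
    scores a provisional 0 and is flagged for a human rater, who may recode
    it as 1 (e.g., a spelling mistake that does not create a different word).
    A blank response scores 0 without review.
    """
    normalized = normalize(response)
    keys = {normalize(key) for key in acceptable_keys}
    if normalized in keys:
        return 1, False
    return 0, normalized != ""
```

For the item fs_FRAMEWORK, the key set would contain `framework`, `frame-work`, and `frame work`, so any of the three typed forms would score 1 without review.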

Before obtaining item parameter estimates, we used several criteria to decide whether to retain items for inclusion in the IRT model described in more detail in the following section. First, if a prompt elicited the same incorrect response in more than 5% of instances, the videos were re-examined by two researchers to ensure that the prompt itself was unambiguous. For example, fs_SOON “soon” elicited responses of <son> (6 test-takers) and <on> (21), among other sporadic incorrect responses that were not repeated, such as <zoom>. In this case, the re-examination of the video revealed that the onset was clearly articulated, and there was clear horizontal movement on the held <o> indicating the double letter, so incorrect responses were deemed to be construct-relevant, and the item was retained. Second, items that did not elicit relatively homogeneous correct responses were deemed undesirable and were removed if they elicited three or more types of correct responses that were not simple spelling variations. For example, fs_MO elicited not only the intended response of <Missouri> but also other states such as <Montana>, <mo> and <m.o.>, <modus operandi> (with various other spellings), and <money order>, and was thus removed from the analysis. This criterion resulted in the removal of all eight items of the format “sign + number” or “fingerspelling + number,” since response patterns did not converge. For example, the target “ages 17-22” elicited seven different types of correct responses, with test-takers sometimes including words such as <from>, <to>, or <through>, writing <age> or <aged>, and other patterns such as <17 to 22 years old>, which could make consistent scoring more difficult. Finally, the item fs_KNESSET was removed because the response pattern indicated that many test-takers were unfamiliar with the word for the Israeli parliament. One participant wrote <kn. . .ess. .et?>, indicating that the fingerspelled letters had been correctly processed, but lack of world knowledge could affect the probability of a correct response. All the remaining 235 items passed these retention checks.
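The first retention check lends itself to a simple screening routine. The sketch below is an illustration only: `flag_repeated_errors` is a hypothetical helper, and it assumes that the 5% threshold is computed over all responses to an item, which the text does not specify.

```python
from collections import Counter

def flag_repeated_errors(responses, accepted, threshold=0.05):
    """Return incorrect responses given by more than `threshold` of all responses.

    responses: list of normalized response strings for one item ("" for a null).
    accepted:  set of responses coded as correct for that item.
    """
    n = len(responses)
    # Count every non-blank response that is not in the accepted set.
    wrong = Counter(r for r in responses if r and r not in accepted)
    return {resp: count for resp, count in wrong.items() if count / n > threshold}
```

An item whose flagged dictionary is non-empty would then be sent back for video re-examination, as was done for fs_SOON; the routine itself makes no judgment about whether the errors are construct-relevant.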

For the retained items, we first examined the descriptive statistics for raw scores, which covered nearly the full range of possible scores from 3% to 96% correct response rates, confirming that our test takers spanned a large range of ability levels. The score distribution was positively skewed with a mode at approximately 30%, indicating that the FaN-CT was difficult for most test takers. Approximately 16% of responses were nonresponses, which was more than initially expected. Some of these may be partially attributable to the test administration method. Some participants who were less proficient at typing took longer than 30 seconds to complete their response; one participant entered simply “slow down!!” as their response to one item. It therefore seems plausible that the item delivery method depressed observed scores, but null responses were also expected when lower-level participants simply could not decode the prompt, making it impossible to dismiss null responses as construct-irrelevant. Looking further into the data, the number of null responses per participant was strongly negatively correlated with total test score (r = −.74), and the number of null responses per item was strongly negatively correlated with percent correct responses after removing nulls (r = −.62). Furthermore, all six tenured faculty members for whom ASL was their L1 scored above 95%, and no L1 signer scored below 72%, providing preliminary evidence that the test was mostly functioning as intended. Still, this investigation revealed that differences in typing ability and content familiarity with the items contributed to noise in the data. To the best of our ability, these issues were resolved for future iterations of the test.

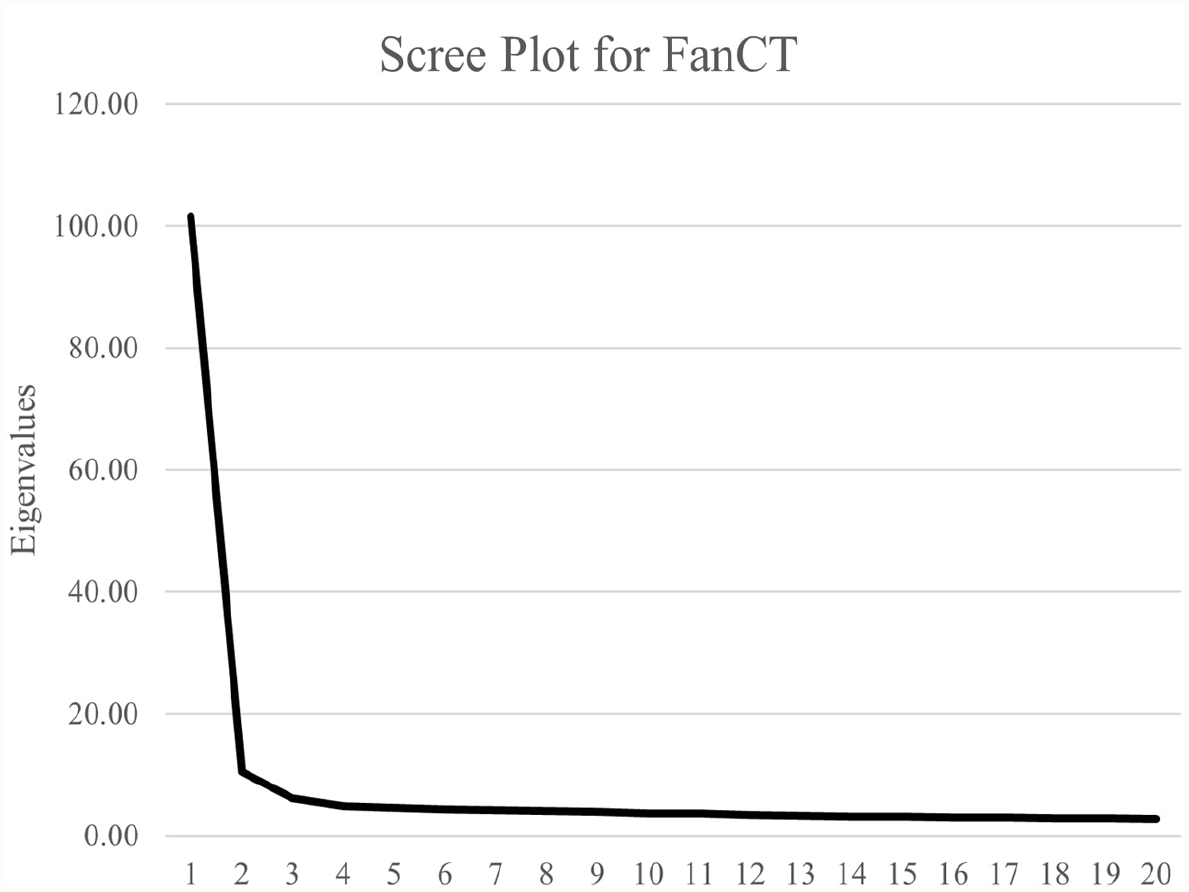

Generalization and estimation of IRT parameters

We assessed dimensionality and model fit for IRT models as well as possible given the limited sample size. Due to the relatively large number of items, the results of some dimensionality tests would be unstable, so we present preliminary analyses here with plans for more robust dimensionality analysis as more responses are obtained. We first examined the eigenvalues of the inter-item correlation matrix, finding that the first eigenvalue (105.15) was more than 10 times the magnitude of the second (10.49), and the scree plot had a clear elbow at the second eigenvalue, indicating preliminary support for unidimensionality (Figure 8). As for internal consistency, Cronbach’s α for the entire test was calculated using the R package ltm (Rizopoulos, 2006) to be 0.994, but this number is likely artificially inflated by the large number of items. Therefore, Cronbach’s α was calculated separately for 10 randomly selected, partially overlapping subsets of 50 items in which all 235 items were included at least once, yielding α > 0.85 in each case, which we took as evidence of high internal consistency.
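For readers unfamiliar with the statistic, Cronbach’s α can be computed directly from a matrix of dichotomous scores. The sketch below is a plain-Python illustration of the standard formula, not the ltm routine used in the analysis; `cronbach_alpha` and the layout of `score_matrix` are assumptions made for the example.

```python
from statistics import pvariance

def cronbach_alpha(score_matrix):
    """Cronbach's alpha for a complete score matrix.

    score_matrix: list of rows, one per test taker, each a list of 0/1 item scores.
    """
    k = len(score_matrix[0])  # number of items
    # Variance of each item's scores across test takers.
    item_vars = [pvariance([row[j] for row in score_matrix]) for j in range(k)]
    # Variance of the total scores across test takers.
    total_var = pvariance([sum(row) for row in score_matrix])
    return (k / (k - 1)) * (1 - sum(item_vars) / total_var)
```

Because the factor k / (k − 1) and the ratio of summed item variances to total variance both depend on the number of items, α tends to rise as items are added, which is why the subset-based check described above is informative.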

Scree plot for ASL FaN-CT.

We then again used ltm (Rizopoulos, 2006) to fit a one-parameter logistic (1PL) and two-parameter logistic (2PL) model to the data. A lower Akaike Information Criterion and a statistically significant −2 log-likelihood ratio test via the anova command (LR = 759, df = 234, p < .001) showed that the 2PL model fit the data better than the 1PL (Kang & Cohen, 2007), so estimates from the 2PL model were used for subsequent item analyses. We examined the χ² item fit indices, finding that 11 items had values higher than the critical value for p = .05. On further inspection, those items had discrimination parameter estimates of between 0.242 and 0.622, indicating weak discrimination. Those items were, therefore, removed from the test. To maximize test information, we removed an additional 17 items with a discrimination of less than 0.70.
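For reference, the 2PL model and the likelihood ratio comparison can be written out as below. The symbols are notational assumptions introduced here (a_i for the discrimination and b_i for the difficulty of item i, θ_j for the ability of test taker j, ℓ for a maximized log-likelihood), and the account of the degrees of freedom assumes that the 1PL model was fit with a single discrimination parameter shared by all items, which is consistent with the reported df = 234 for n = 235 items.

```latex
\begin{align}
P(X_{ij} = 1 \mid \theta_j) &= \frac{1}{1 + \exp\left[-a_i(\theta_j - b_i)\right]} \\
\mathrm{LR} &= -2\left(\ell_{\mathrm{1PL}} - \ell_{\mathrm{2PL}}\right), \qquad
\mathit{df} = 2n - (n + 1) = n - 1 = 234 \quad (n = 235)
\end{align}
```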

As discussed earlier, the primary intended use of FaN-CT scores is criterion-referenced, so ideal item difficulty estimates would be clustered around the criterion to maximize item information. However, our item difficulty estimates were widely dispersed. We decided to retain all items that exhibited high discrimination except for those with truly extreme item difficulty (θ < -3.75 or θ > 6.00), resulting in another nine items being removed. Ultimately, 198 items which exhibited adequate fit statistics, high discrimination, and appropriate difficulty were selected for use in the operationalized versions of the FaN-CT.
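The link between item difficulty, discrimination, and information at the criterion can be illustrated with the standard 2PL item information function. This is a minimal sketch under assumed notation: `a` is discrimination, `b` is difficulty, `theta` is the ability level at which information is evaluated, and the function names are hypothetical.

```python
import math

def p_correct(theta, a, b):
    """2PL probability of a correct response at ability theta."""
    return 1.0 / (1.0 + math.exp(-a * (theta - b)))

def item_information(theta, a, b):
    """2PL item information: a^2 * P * (1 - P), maximal where theta equals b."""
    p = p_correct(theta, a, b)
    return a * a * p * (1.0 - p)
```

An item with a = 1 contributes 0.25 units of information at an ability equal to its own difficulty and progressively less as the two move apart, which is why items with extreme difficulty add little to decisions made near a criterion, and why higher discrimination raises the information an item can provide.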

Test score interpretation

Before piloting, one concern was whether interpretation of numerals and non-numeric fingerspelled words could be placed on a single continuum for scoring. As mentioned earlier, preliminary dimensionality analysis supports a unidimensional interpretation of test scores. While factor analysis on the whole test would require a larger sample, we ran a Confirmatory Factor Analysis (CFA) on a subset of items comparing a unitary factor model to a two-factor model with only numeric items loaded onto one factor, and short words with four or fewer segments on a second factor using JASP (JASP Team, 2020). In the two-factor model, estimated factor covariance was r = .968, and the unitary factor CFA on the same items had nearly the same estimates of model fit (e.g., comparative fit index [CFI]: 0.804 vs 0.801; root mean square error of approximation [RMSEA]: 0.088 vs 0.089; two-factor estimates presented first). Similarly, while multiword items and longer words were, on average, more difficult than short words, we did not find evidence that they behave differently with respect to our test takers. The unitary factor model exhibited similar fit statistics to the two-factor model, which again revealed high interfactor correlations (r = .971). With a larger sample size, some differences may be detectable, but any potential impact of using composite scores rather than a single factor score appears to be minimal.

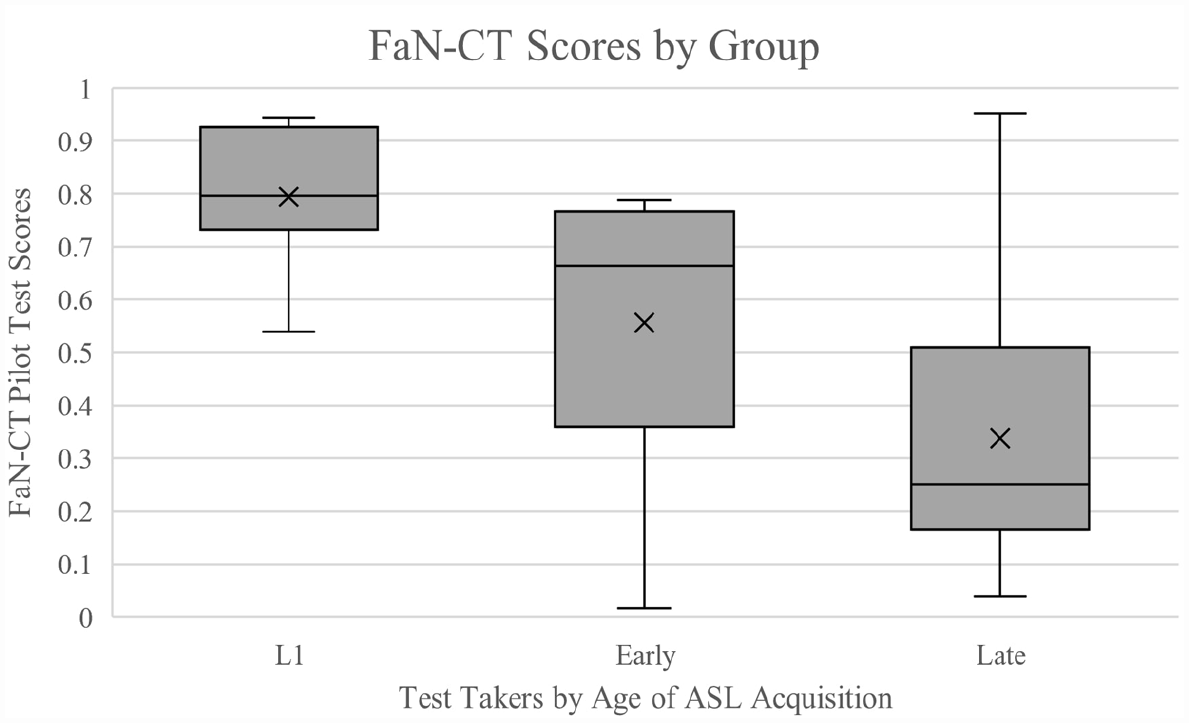

As for test takers themselves, some intragroup variation was expected, but given our knowledge of real-world usage, L1 ASL users should generally perform better than those who first began studying ASL as an adult (Hauser et al., 2008; Newport, 1988; Paludnevičience et al., 2012). There were examples of early and even late learners of ASL who scored among the highest on the test, but as shown in Figure 9, L1 test takers outperformed other groups. A one-way analysis of variance (ANOVA) on test scores by age of onset for ASL, using Brown-Forsythe corrections for heterogeneity of variance, was significant (F = 29.86, p < .001, η² = 0.446), and Bonferroni-corrected post hoc tests showed that L1 signers outperformed early learners (p = .035), who in turn outperformed late learners (p = .029). The lowest performing L1 signer had the largest number of null responses of anyone on the test and happened to be the participant who wrote “slow down!!”, indicating that typing may have been an issue. Separate ANOVAs revealed that neither sex (p = .495) nor ethnicity (p = .721) of the test takers significantly predicted test scores.

ASL Fingerspelling and Number Comprehension Test (FaN-CT) scores by age of ASL acquisition.

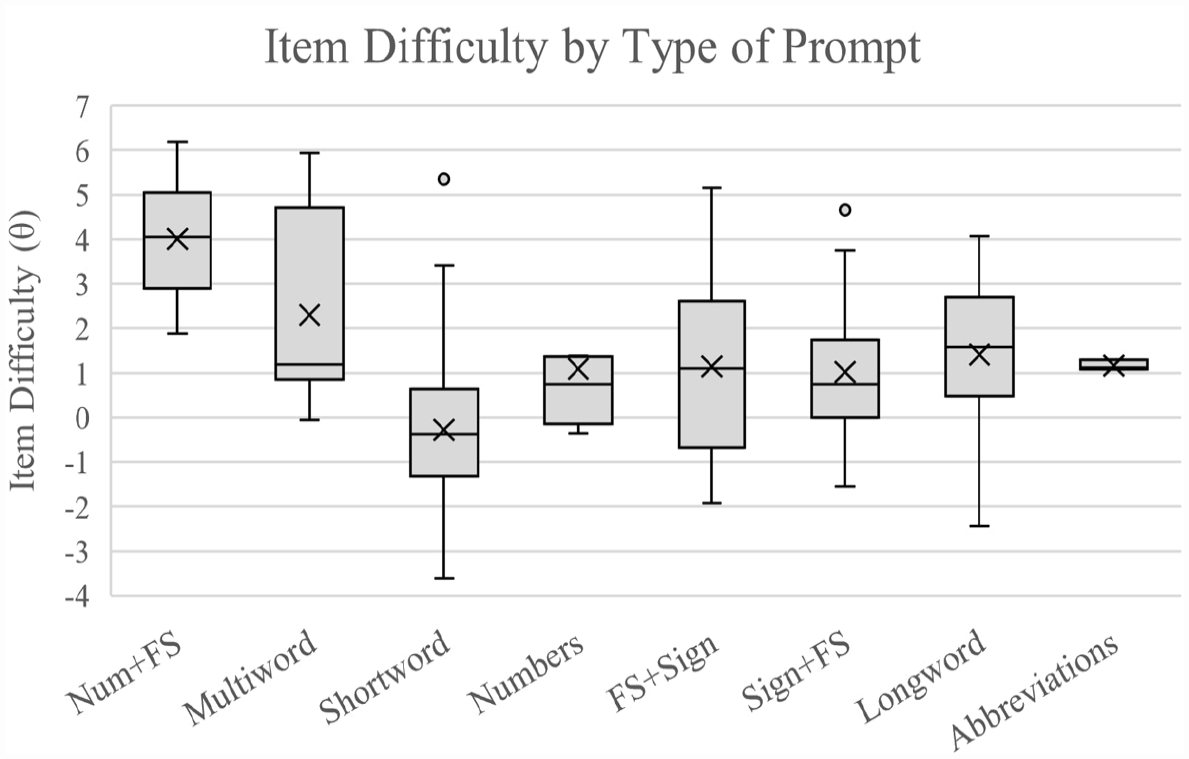

While our item design and scoring system contribute to the plausibility of the interpretative argument of the FaN-CT (namely, that it measures test-takers’ ability to understand fingerspelling), it is also necessary to examine whether differences in observed item difficulty reflect differences in the difficulty of interpreting fingerspelled words. Unfortunately, since the FaN-CT is one of the first tests of fingerspelling ability, the factors that should contribute to item difficulty are currently unknown. For example, longer words may place a greater demand on automatized processing skills, but for very long words, even partial decoding results in more information that could make it easier to infer the word from the surrounding context. We examined whether there was an effect of item category in general by breaking down items into bare numerals, numbers + fingerspelling (e.g., “5 adults”), short words of less than five letters, long words of at least six letters, multiword units (e.g., “super moon”), combinations of lexical signs followed by fingerspelling, the reverse order combination, and acronyms or abbreviations. The observed difficulty levels are displayed in Figure 10. A one-way ANOVA with Brown-Forsythe corrections for heterogeneity of variances showed that item category was a significant predictor of item difficulty (F = 12.065, p < .001, η² = 0.297). Post hoc comparisons with Bonferroni corrections for multiple comparisons showed that items of the form number + fingerspelling (e.g., “5 adults”) were more difficult than all other item types except for multiword units and abbreviations (p < .001 in all cases). On those items, participants were more often accurate with the number portion, but struggled to transition to the fingerspelled word, with common response patterns including <5 volts>, <5 dolls>, and <50 pounds>. On the opposite end, short words (e.g., fs_ROPE) were the easiest category and were significantly easier than number + fingerspelling items, long words (p < .001), multiword units (p < .001), and combinations of fingerspelling + lexical sign (p = .041).

ASL Fingerspelling and Number Comprehension Test (FaN-CT) item difficulty by type of prompt.

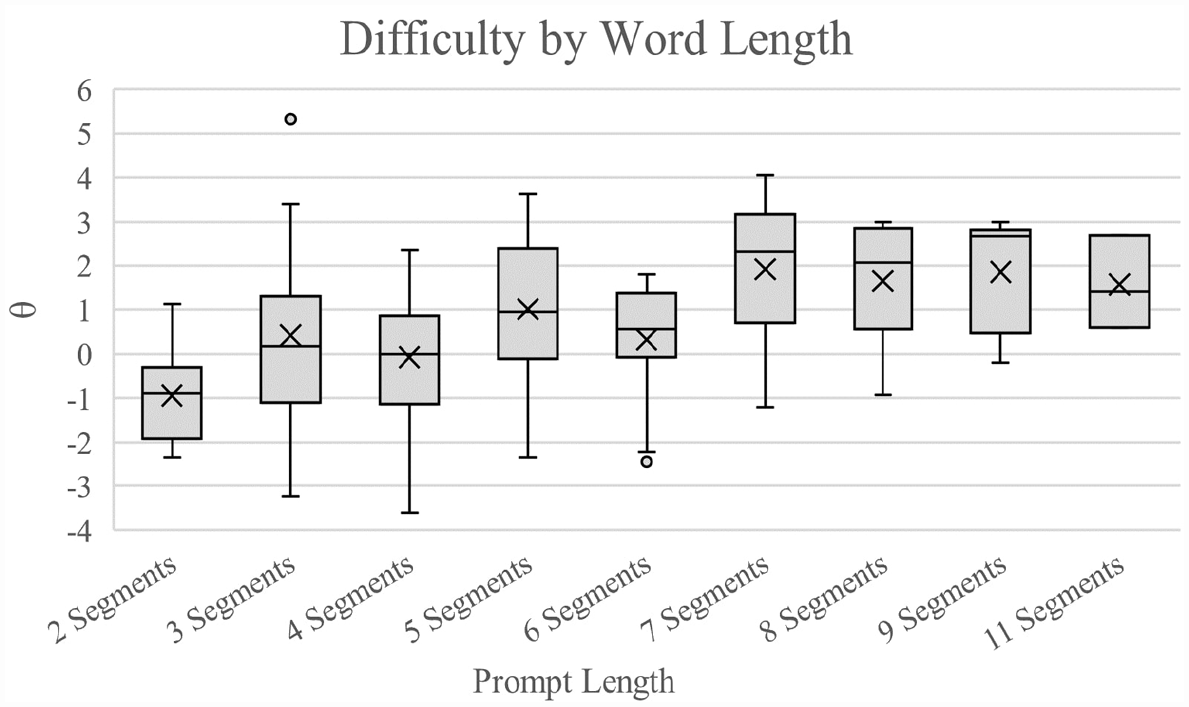

Item difficulty did not linearly increase with prompt length in general. For example, within longer words (and within shorter words), as shown below in Figure 11, increasing the length of the word had no appreciable impact on difficulty. A linear regression of prompt length on item difficulty was non-significant (β(length) = −0.005, R² = .001, p = .943), and this was also true even when examining only the range from two to seven segments (β(length 2-7) = 0.059, R² = .001, p = .682).

Fingerspelling and Number Comprehension Test (FaN-CT) observed difficulty of target words by word length.

Implementation and use

Since we judged that the test exhibited sufficient evidence of being able to measure differences in the ability to understand fingerspelled ASL, we proceeded to make operational versions of the test. Given that the intended use of the FaN-CT is as a criterion-referenced instrument, we needed to set cut scores. Because part of our sample was specifically recruited to vet the test, we chose to use the Contrasting Groups method (Livingston & Zieky, 1989) by comparing the scores of 15 test takers whose real-world use of ASL and previous assessment scores indicated that they should pass the test as judged by key stakeholders at the institution, and 15 others who were deemed, through previous assessment scores and self-reports of ASL usage, as not yet sufficiently proficient. The ability estimates of those 30 participants revealed a clear gap between the highest of the “low” group (θ = −0.26) and the lowest of the “high” group (θ = 0.51). Setting a cut score at θ = 0.25 would place all 30 test takers in accordance with external judgments, while also erring on the conservative side, since false negatives can be relatively easily corrected, for example, by having candidates retake the test, whereas false-positive errors are harder to correct and therefore more consequential for test use. Such a cut score also requires participants to meet our operational definition of a minimally proficient user by correctly decoding the majority of fingerspelled tokens after one presentation.
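The logic of locating a cut score between two non-overlapping groups can be sketched as follows. This illustrates the general idea only and is not the procedure the authors ran: `separating_gap` and `classify` are hypothetical helpers, and treating an ability estimate exactly equal to the cut as a pass is an assumption.

```python
def separating_gap(low_thetas, high_thetas):
    """Return (highest low-group theta, lowest high-group theta), or None on overlap."""
    top_low, bottom_high = max(low_thetas), min(high_thetas)
    if top_low >= bottom_high:
        return None  # groups overlap: no single cut separates them perfectly
    return top_low, bottom_high

def classify(theta, cut):
    """Assumed decision rule: pass at or above the cut score."""
    return "pass" if theta >= cut else "not yet"
```

With the boundary values reported above, the gap runs from −0.26 to 0.51 and its midpoint is 0.125, so a cut at θ = 0.25 sits in the upper half of the gap. That placement trades a slightly higher risk of false negatives for a lower risk of false positives, in line with the stated rationale.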

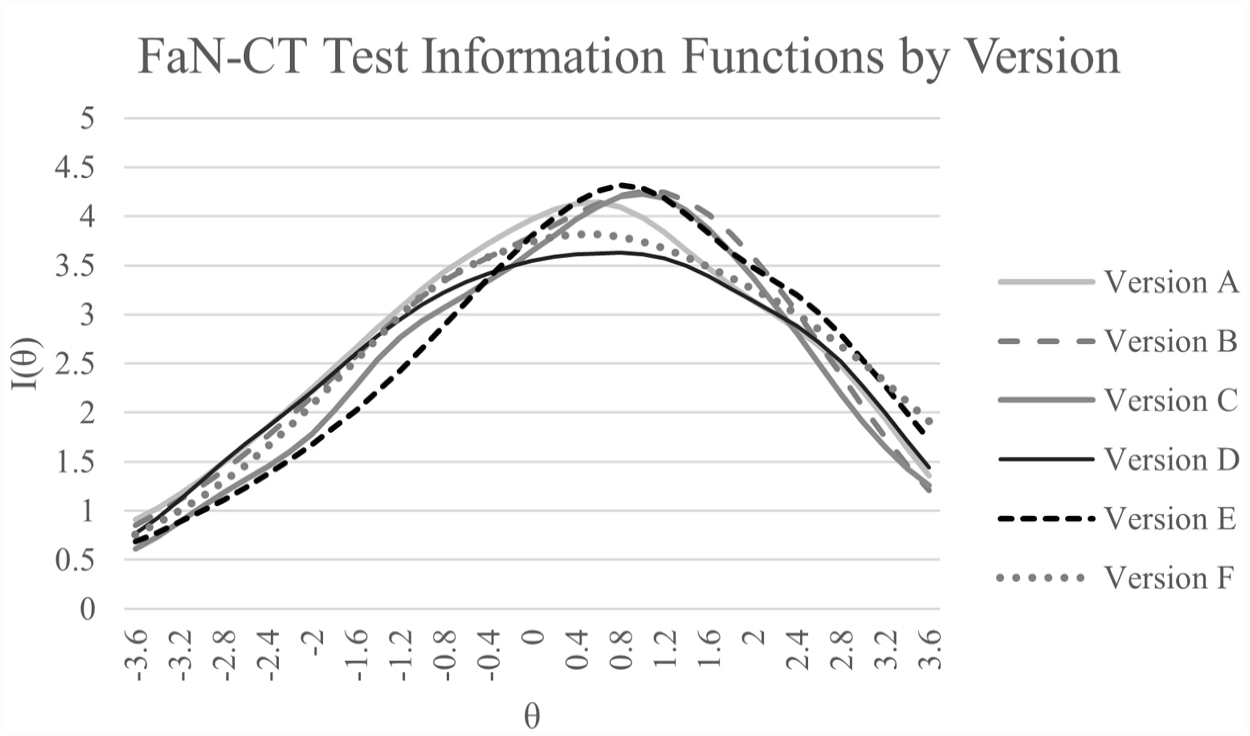

It is not logistically feasible to make employees take a nearly 200-item test as only one part of a battery of assessments, so instead, 30-item versions of the test were created by selecting an equal number of items of each type (e.g., numerals, long words, multiword units), and then finding sets of items that matched as best as possible in terms of their difficulty and discrimination. This resulted in five operational test versions and one additional version for use in planned equating studies and future item vetting, all of which have comparable information functions as shown in Figure 12.
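One simple way to build forms that are balanced by item type and roughly matched in difficulty is to rank items within each type and deal them out in a serpentine order. The sketch below shows that generic technique; it is not the authors’ assembly procedure, it ignores discrimination for brevity, and `deal_into_forms` and the tuple layout are hypothetical.

```python
from collections import defaultdict

def deal_into_forms(items, n_forms):
    """Distribute items across forms, balancing type and difficulty.

    items: list of (item_id, item_type, difficulty) tuples.
    Returns a list of n_forms lists of item_ids.
    """
    by_type = defaultdict(list)
    for item_id, item_type, difficulty in items:
        by_type[item_type].append((difficulty, item_id))
    forms = [[] for _ in range(n_forms)]
    for item_type in sorted(by_type):
        ranked = sorted(by_type[item_type])  # easiest to hardest within the type
        for rank, (_, item_id) in enumerate(ranked):
            block, pos = divmod(rank, n_forms)
            # Reverse direction on every other pass so no form always gets the easiest item.
            form = pos if block % 2 == 0 else n_forms - 1 - pos
            forms[form].append(item_id)
    return forms
```

In practice the resulting forms would still need to be checked against each other, for example by comparing their test information functions, before being treated as interchangeable.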

Fingerspelling and Number Comprehension Test (FaN-CT) test information functions by version.

Candidates will be able to take a version of the FaN-CT once per semester, with the version rotating each semester. This schedule balances the need to reduce item exposure with the ethical responsibility to allow people to take the test multiple times before final tenure review, and it allows candidates who do not pass initially to assess their need for further study.

Discussion

Since ASL is the primary language of instruction and interaction at the National Technical Institute for the Deaf (NTID), it is necessary to assess whether staff, interpreters, and faculty have sufficient ASL proficiency to communicate independently, but the lack of existing instruments necessitated the creation and validation of our own assessment. The FaN-CT is designed with the goal of assessing test takers’ ability to understand fingerspelled words, phrases, and numerals, an area of ASL known to be challenging for L2 learners but also of vital importance for effective communication. After initially selecting a pool of more than 600 potential items from real-world usage of fingerspelling in ASL and carefully recording and editing videos, we piloted 250 items for use in a high-stakes assessment of fingerspelling comprehension.

The dichotomous scoring method reflects the TLU domain in that partial understanding is not indicative of communicative success. While initial decisions on which misspellings or other interpretations should be counted as correct open some room for subjectivity, the criteria for coding decisions are simple and transparent, and only those responses that have not already been assigned (1) or (0) will be left to the judgment of future raters, presumably leading to fewer non-automatic decisions over time.

Scored items showed evidence of high internal consistency and tentative evidence of unidimensionality, enabling the use of a 2PL IRT model that provided sample-independent estimates of item parameters. After removing items that exhibited misfit or undesirable qualities such as poor discrimination, heterogeneous correct responses, or evidence of potential bias based on cultural knowledge, we ultimately arrived at a set of 198 items that appear to exhibit the traits specified in the test’s validity argument. Those items were then distributed among five operational versions of the test and one additional version reserved for future item vetting and continued parameter modeling for equating purposes. These versions have balanced sets of different types of items that span the range of uses of fingerspelling in the TLU domain and that provide sufficient information to make credible decisions about test takers.

Notwithstanding this evidence in support of the test’s validity argument, continued revision and analysis is still necessary. The sample size for this pilot, while large for ASL communities, is still small compared to high-stakes assessments in other language testing contexts, and therefore estimates that we have obtained include some uncertainty and a need for further evaluation. Beyond simply gathering more data, we also see four areas of attention.

First, the non-negligible number of null responses indicates that, despite our attempts to provide ample time by giving 30 seconds per item, some candidates may not have recorded a response due to difficulty typing, which is construct-irrelevant. To address this concern, the test has already been revised so that it moves on to the next item only once the test taker presses ENTER. If 15 minutes elapse without a response, the administration is treated as interrupted and the session ends.

Second, a more detailed item analysis may help to elucidate which features of the item affect difficulty, which could be of interest to sign language research in general. Since features such as articulation rate, mouthing, coarticulation, and so on may interact with each other and with the proficiency of the test taker, this analysis will require a larger sample and dedicated effort. Coding for these item characteristics has already begun, but preliminary investigations have revealed that some features will necessitate detailed coding schemes and potentially complex analyses. For example, mouthing was not a binary feature: Signers sometimes mouthed only part of a word or used a mouth shape that differs from the one commonly used by hearing individuals, and it is unclear what impact that would have on intelligibility. In our test, each target was presented with only one video, so it may ultimately be necessary to conduct a separate study using counterbalanced lists where the same target is presented with different videos to examine mouthing or signer effects rigorously.

Third, it is unclear whether, or to what degree, test takers’ ability to interpret fingerspelled ASL as measured by the FaN-CT relates to other aspects of ASL proficiency. Once other tests are operational, examining the relationship between these measures will help inform test development and language research on ASL. For example, whether the FaN-CT’s apparent focus on segment-level processing will correlate with discourse-level comprehension is of key interest to models of ASL perception, analogous to the relationship between phoneme identification and listening comprehension in spoken languages. Fourth, as with any high-stakes assessment, especially a new one, the consequences of test use must be carefully monitored after pass/fail decisions have been made to detect any trends suggesting false positive or negative errors and make changes accordingly.

Finally, the FaN-CT was developed to be part of a battery of ASL tests used to make decisions on whether or not to grant tenure and promotion to faculty working at a college for the deaf. The faculty who will take the test will be both deaf and hearing L1 and L2 signers, so the FaN-CT is not a “pure” L2 test. It is worth noting that deaf L1 and hearing L1 ASL signers perform differently on ASL tests, where hearing L1 signers have been shown to perform more similarly to deaf L2 signers (Hauser et al., 2008). One might not expect native signers to differ based on their hearing status because ASL is a visual language, but evidence of deaf signers performing better than hearing native signers on ASL tests is what Paludnevičience et al. (2012) referred to as the “24/7 Effect.” They explained that deaf signers use ASL all day, every day for all language interactions, whereas hearing children of deaf parents (sometimes referred to as CODAs) might often use ASL only to communicate with their parents but not in any other context. Given the heterogeneity of ASL signers at the institution and the need to test ASL proficiency across these varied groups, this mixed use could be considered a limitation from the perspective of traditional approaches to L1 and L2 test development.

Future directions

Fingerspelling tests are massively underrepresented in the realm of ASL assessment. They are not included in a recent, comprehensive review of testing tools for various signed languages (Enns et al., 2016) and are not listed on Tobias Haug’s (2022) Sign Language Assessment website, which provides an overview of existing tests for various sign languages. The need for validated assessments of ASL is great and extends far beyond fingerspelling or any single institution. One clearly related problem is the certification of L2 adult learners in ASL Interpreter Training Programs (ITPs). ITPs often need to make tough decisions regarding program admission, placement in particular class levels, or passing requirements for degree completion. As ASL interpreters often work in high-stakes environments where lack of necessary proficiency to function in a professional capacity could result in harm to deaf clients, assessing the working proficiency of these individuals could help raise the standards of professionals working in the field. A version of the FaN-CT could potentially be useful to ITPs in this regard.

As the FaN-CT was primarily developed for use among adult L2 signers, it remains to be seen whether we can extend this test to other populations. But because tests that probe typical L1 development in Deaf populations are greatly lacking, such an adaptation would be beneficial. Development of fingerspelling awareness in L1 populations has been linked to increased English proficiency (Ramsey & Padden, 1998; Stone et al., 2015); however, research on literacy development and fingerspelling development has also shown that the two do not necessarily develop at the same time, as many children learn to memorize fingerspelled words as lexical chunks before learning about the compositional parts which map to English orthography (Haptonstall-Nykaza & Schick, 2007; Padden, 1991, 2005). The development of an L1 version of the FaN-CT may be useful in kindergarten through 12th-grade settings (K–12 settings) for predicting literacy development in deaf and hard-of-hearing children. Items targeting academic vocabulary were specifically chosen for the FaN-CT but would be less appropriate for K–12 populations, especially those who are L1 learners of ASL. Additional item development and piloting will help to determine what item properties contribute to difficulty and discrimination in an L1 population, and whether items function differently by population. Importantly, using this test in a K–12 setting moves us away from a criterion-referenced test to a more norm-referenced usage. The current test peaks in test information at a considerably higher proficiency level than would be expected of most young children, so we would need to create and evaluate more items at a range of abilities to make the test usable for formative purposes at lower levels. Despite these potential limitations, the need for L1 ASL tests suggests an extension of some version of the FaN-CT would be potentially beneficial to the community.

Finally, we want to examine the development of ASL more generally, comparing this test to other tests which measure ASL development. Concurrent validity and examination of the nomothetic span of the test construct were not analyzed here due to sparse data on participants’ prior test scores. However, understanding the relationship between fingerspelling, general vocabulary knowledge, and the ability to produce fluent sentences (as measured by the SLPI) is of key interest to researchers and teachers.

Conclusion

Fingerspelling offers unique challenges to the development and mastery of ASL in that the perceptual mechanisms necessary for decoding fingerspelled words differ from other lexical and grammatical constructions, and neologisms and proper nouns that the interlocutor would be expected to encounter for the first time are frequent. FaN-CT contributes to the existing test batteries as another way in which to measure the fluency of L2 signers. Evaluation of adult L2 sign language skills is important for the education, health, and quality of life of deaf signers.

FaN-CT pass/fail decisions are intended to enable the National Technical Institute for the Deaf to make decisions regarding tenure and promotion for those employees who can communicate in ASL, thereby promoting its use within the community and promoting the interests of stakeholders, especially deaf students. FaN-CT is intended to be used as part of a larger battery of ASL assessments developed at NTID, and in this context, our data so far support the statement that this test measures differences in receptive knowledge of fingerspelling. While it is our eventual goal to extend this to other settings and populations, doing so will require additional piloting and validation. The analysis of these results will, however, help inform that development process by providing insight into the factors that contribute to difficulty in recognizing fingerspelled words, and the item features that enable testers to discriminate between different ability levels.

Supplemental Material

sj-docx-1-ltj-10.1177_02655322231179494 – Supplemental material for Development of the American Sign Language Fingerspelling and Numbers Comprehension Test (ASL FaN-CT)

Supplemental material, sj-docx-1-ltj-10.1177_02655322231179494 for Development of the American Sign Language Fingerspelling and Numbers Comprehension Test (ASL FaN-CT) by Corrine Occhino, Ryan Lidster, Leah C. Geer, Jason Listman and Peter C. Hauser in Language Testing

Footnotes

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Supplemental material

Supplemental material for this article is available online.
