Abstract

Placement tests are used to support a particular need in a local context—to determine the best starting place for a student entering a specific programme of language study. This brief report will focus on the development of an innovative placement test with self-directed elements for our local needs at a university in Canada for students studying English or French as a second language. Our goals are to produce a more efficient assessment instrument while allowing students more agency through the process. We hope that sharing these details will encourage others to consider the potential of incorporating self-directed elements in low-stakes placement decision-making.

Introduction

Placement assessment refers to assessment activities designed to place students into the appropriate course in a programme of study for their abilities and needs (Green, 2018). In this brief report, we describe the development of an innovative placement assessment for English and French credit programmes in a bilingual university in Canada, which incorporates objective and self-directed elements. Our goals were to improve the placement process and provide learners with more agency in determining their language learning pathway. Given the constraints of this brief report format, we focus here on describing our motivation for creating this tool, and details of its creation as well as adjustments following a pilot administration of the tool. We hope it will be informative for those interested in attempting to develop or revise placement tests in their own contexts. Readers seeking further details about this pilot administration can consult the supplement to this report or contact the authors.

Context and problem to be addressed

Our bilingual university offers a large range of academic English (ESL) and French (FLS) credit courses to support students’ language development—from true beginner courses to advanced language arts. Students taking our placement tests are generally enrolled in English medium programmes taking French as elective courses (or vice versa). In other words, their programme is delivered in one language, but they may choose to take optional courses in the other language for their own interest and are generally motivated to find the best course for their needs.

As with many higher education language programmes, we test a relatively large number of people (in our case, approximately 1,500 per year), with a short turnaround to accommodate course registration deadlines. Therefore, our testing needs to be efficient as well as accurate in placement. Unfortunately, there are several issues with the current tests. For example, no exercise has been done to align content in the test to that of the language courses, seen as best practice in placement assessment (Alderson et al., 1995). Most importantly, too many students need to be moved in the first weeks of the semester.

It is important to minimise the number of misplaced students, or else the placement test serves little practical purpose. In addition, students play a passive role in this assessment process, in which they are not expected or allowed to contribute any views about where they belong. Given the inherent motivation of the students, we saw the potential of including students in a more active way. We therefore decided to explore options to incorporate a self-directed element into a revised placement assessment instrument, as described below.

Directed Self-Placement (DSP)

Directed self-placement (DSP; Ferris et al., 2017; Moos & Van Zanen, 2019; Toth & Aull, 2014; Wang, 2020) refers to a set of approaches for providing more autonomy to students to have a say in the placement decision. DSP is a type of self-assessment practice, albeit one where rather than asking students to grade or evaluate their own performances, they are engaged in a metacognitive reflection of their capacities (Andrade & Du, 2007).

Research and practice related to DSP has been largely limited to the placement of students into first year college writing courses in the United States (Aull, 2021; Royer & Gilles, 1998). DSP has encompassed a wide variety of practices; there seems to be a consensus that there is no “one size fits all” approach (see Ferris et al., 2017; Kenner, 2016). Benefits of DSP have been well documented, both in terms of student empowerment and placement efficacy. Crusan (2011) noted that DSP can be faster and cheaper, teachers appreciate the procedure, and placement decisions can be as appropriate or even more appropriate than with standardised testing (see also Crusan, 2006; Royer & Gilles, 1998). The Conference on College Composition and Communication’s (2020) Statement on Second Language Writing and Multilingual Writers included a recommendation for directed self-placement or other methods of placement of second language learners that combine direct assessment with student choice, to privilege student agency and balance against inherent biases against certain student groups (Moos & Van Zanen, 2019; Toth, 2018; Wang, 2020).

However, DSP is not without its drawbacks. For example, Crusan (2006) wrote that there may be a cultural effect on a student’s decision. Some students from one assessment culture may consider self-placement a fair and just instrument, while students from other assessment cultures may consider teachers as assessment authorities who make these decisions (see Summers et al., 2019).

DSP and L2 placement

There have been a few attempts to make use of DSP in L2 programmes. When experimenting with several self-assessment formats for placement into an Italian language programme, Fratter and Marigo (2018) found that it was necessary to provide students with a “guided syllabus” with specific and concrete information on functional and grammatical content of each course of the programme. One challenge they found was that students sometimes answered “yes, can do” to descriptions of content that they remember having studied, not what they were necessarily still able to do. Summers et al. (2019) examined the possibility of using student responses to ACTFL can-do statements for placement into college-level intensive English courses. Like Fratter and Marigo (2018), Summers et al. (2019) noted that can-do statements represent a level of abstraction away from the specific tasks found in the courses of the programme of instruction, so learners may find it a challenge to imagine themselves in the situation being described.

Fratter and Marigo (2018) dealt with this challenge by supplementing self-reports with performance information—responses to grammatical exercises as a check that they can do what they are reporting. Ferris et al. (2017) and Engelhardt and Pfingsthorn (2013) also suggested supplementing DSP tasks with an objective language assessment for triangulation purposes. Strong-Krause (2000) found that items that included descriptions of the tasks, or actual tasks from the programme itself, were the best predictors of the placement test scores.

Design of our revised placement instrument

Basic design considerations

Keeping the discoveries and challenges of this previous work in mind, we drew the following conclusions when embarking on the development of our own placement assessment:

We appreciated the need to create tasks that include detailed descriptions of class activities and samples of real student work to give a very clear picture of each level for students;

We saw the benefit of supplementing students’ self-judgements with objectively scored grammar, vocabulary and usage items aligned to each of the levels of the instructional programme.

Our orientation is not towards a self-assessment activity, where students are asked to produce and score their own performances. Instead, within a progression of learning framework (Kane, 2018), students are asked to examine specific task descriptions and samples of work representing each level within the instructional programme, and judge which ones best represent their current abilities. A progression of learning framework is preferable to a continuous scale-based interpretation of assessment scores (the current model for our placement testing), as it envisions learning as a sequence of levels that learners will gradually master as their language proficiency develops. In other words, “[s]tudents’ performances are thought of in terms of their levels in the progression and not in terms of points on the scale” (Kane, 2018, p. 241). Applying a progression of learning model means providing detailed descriptions of learning progressions at each level for students to consult.

Creation of draft tasks

The three tasks of our first draft of this “progression of learning” instrument are summarised below together with examples of each task. This assessment needs to distinguish five separate levels (or entry points) for ESL placement and six levels of FLS (which includes an absolute beginner level not necessary in English). Design decisions were made at the same time for both languages, but here we report on details of the ESL instrument. Our task development team consisted of four item writers and reviewers (three of whom were ESL instructors familiar with all levels of the programme). As described below, for the second task, we also solicited expert judgements from other current ESL course instructors.

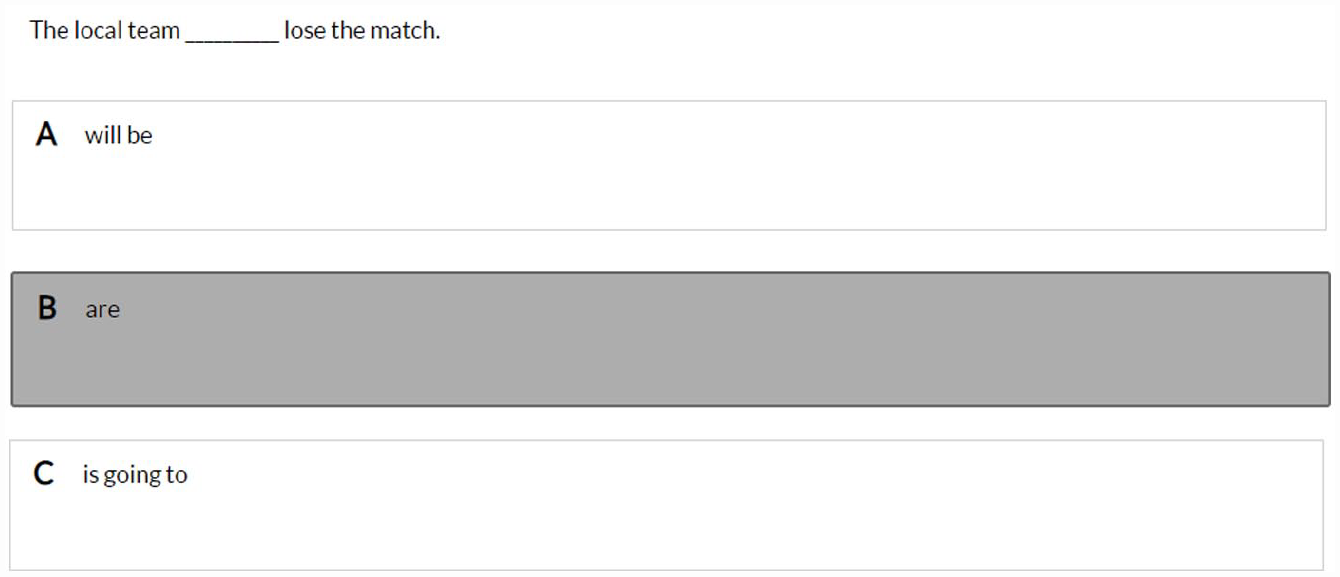

Objectively scored grammar, vocabulary and mechanics task

To create these multiple-choice items, team members analysed the grammatical and lexical content objectives of each course plan, as well as samples of exercises provided by instructors. Twelve to 15 items were drafted by one team member for each level, then went through the following review process: first, one team member conducted a technical review of each item, then the ESL instructors on the team confirmed the extent to which each item represented the level with which it was associated. Adjustments were made and the 10 items deemed to be the strongest were chosen for each level. An example item is found below in Figure 1, with an incorrect answer highlighted.

Example of an objective grammar/vocab/mechanics task.

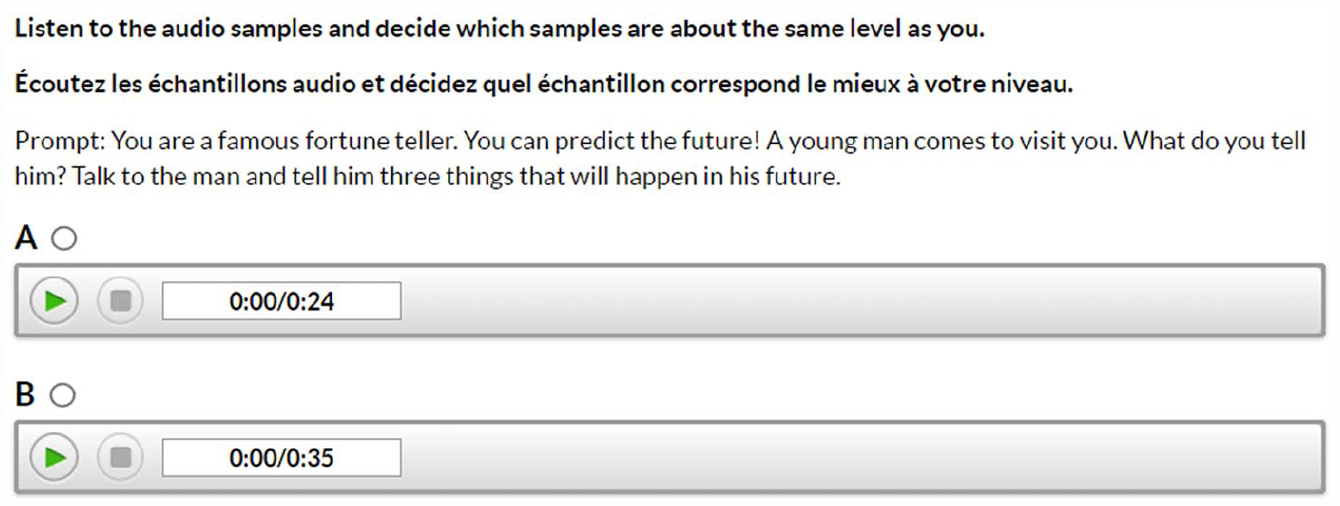

DSP Task 1—Binary judgement student samples task

In this task, students see pairs of authentic written or oral student samples judged to be representative of adjacent levels in the programme. When providing the samples, the contributing instructors gave their expert opinion of the level represented by each sample. These level judgements were then verified by the ESL instructors on the development team. Figure 2 provides an example of the interface for the oral binary judgement task. Student writing samples can be found in the Supplement.

DSP Task 1: Binary judgement task with paired student samples.

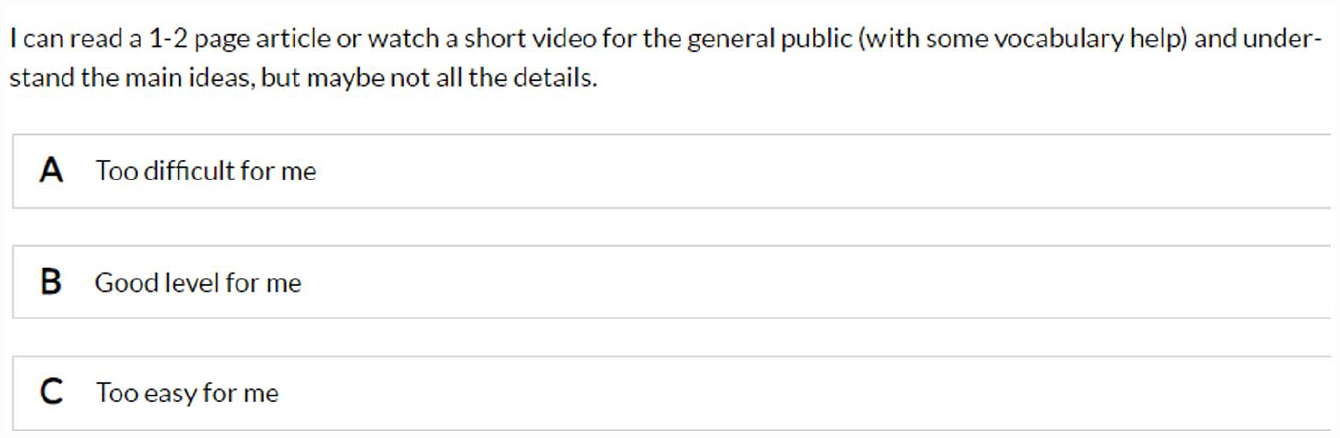

DSP Task 2—Course descriptions judgement task

This modified “can-do” task involved eliciting students’ judgements of their ability to do class activities at each level. While “can-do” statements are usually binary choice (see examples in the CEFR, Council of Europe, 2020), they may also involve longer rating scales to collect more nuanced information (Ma & Winke, 2019), and we decided on a three-level decision (“too difficult for me,” “a good level for me,” and “too easy for me”). The objectives of the course plans were too general to distinguish effectively among levels. For example, courses at several levels listed an objective as “being able to use a variety of verb tenses.” Instead, we examined actual class activities from each level and described them in detail, including what the student is expected to do, for which audience, and with what support (see Figure 3 for an example).

DSP Task 2: Course descriptions judgement task.

Test delivery decisions

This assessment was designed to be delivered online through the university’s language assessment testing platform. While a fully adaptive assessment is well beyond our resources, the tasks are adaptive to a limited extent within the platform’s functionality. Specifically, for each task, if an agreed-upon cut-off to a subsequent level is not reached, the rest of the task will be skipped, and students will not be shown items at higher levels. This skipping function is designed to make the assessment more efficient and less frustrating for lower-level students. However, for the pilot administration of the test, this skipping option was not employed, to allow students to complete all items. That way, we could both examine the extent to which the items follow a progression of difficulty, and collect data to help determine the appropriate cut-off score to trigger the skipping function.
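
The skipping logic described above can be illustrated with a minimal Python sketch. It is not the platform's actual implementation: the function name `run_task`, the data layout, and the cut-off of 7 correct out of 10 items are all hypothetical and chosen only for illustration. The `skipping_enabled` flag mirrors the pilot administration, in which skipping was switched off so that every student saw every item.

```python
from typing import Dict, List, Tuple


def run_task(
    responses_by_level: Dict[int, List[bool]],
    cutoff: int,
    skipping_enabled: bool = True,
) -> Tuple[List[int], int]:
    """Walk through the levels of one task in ascending order.

    Returns (levels_shown, highest_level_passed). A level counts as passed
    when the number of correct responses reaches the cut-off and every lower
    level was also passed. highest_level_passed is 0 if no level was passed.
    """
    levels_shown: List[int] = []
    highest_passed = 0
    still_passing = True  # becomes False at the first level below the cut-off

    for level in sorted(responses_by_level):
        if skipping_enabled and not still_passing:
            break  # skip the remaining higher-level items
        levels_shown.append(level)
        score = sum(responses_by_level[level])
        if still_passing and score >= cutoff:
            highest_passed = level
        else:
            still_passing = False
    return levels_shown, highest_passed


# Hypothetical student: strong at level 1, below the cut-off at level 2.
responses = {
    1: [True] * 9 + [False],
    2: [True] * 5 + [False] * 5,
    3: [True] * 8 + [False] * 2,
}
operational = run_task(responses, cutoff=7)                     # skipping on
pilot = run_task(responses, cutoff=7, skipping_enabled=False)   # skipping off
```

With skipping on, this hypothetical student is never shown the level 3 items; with skipping off, all three levels are administered, which is what makes it possible to gather the data needed to choose a cut-off.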

Pilot administration

Details of pilot

After initial development, we piloted the tool in December 2021 with 103 registered ESL students. In addition to verifying the functionality of the multiple-choice questions, the clarity of instructions, and other elements of the tool, we wanted to examine (a) the extent to which the new tool captured a progression of learning, (b) whether the new tool was effective for placement, and (c) if the students perceived a benefit in having their own judgements play into the placement decision.

We conducted a concurrent mixed-methods study during the pilot administration to address these questions (see details of this study in the supplement to this report). Here, we provide an overview of our method and the key findings that allowed us to modify the tool.

Evidence for progression of learning

We used Rasch analysis to examine whether we achieved an item difficulty hierarchy in the objective task, representing a progression of learning aligned to levels of the programme. Results suggested that the empirical item difficulties did not match the expected item difficulty hierarchy for a number of items. In response to these results, we replaced or revised 15 objective items that were either misfitting, or did not conform to our progression of difficulty. Although we hypothesised item difficulty levels through our programme experts’ judgements, these results demonstrate the benefits of combining human judgements with statistical validation. We will continue to monitor item quality and difficulty over future administrations, according to best practice in placement assessment (Green, 2018).
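
To make the idea of an item difficulty hierarchy concrete, the following Python sketch shows the standard dichotomous Rasch model and one simple way of flagging items whose estimated difficulty does not sit with their intended level. The flagging rule (an item is flagged when its difficulty is closer to another level's mean difficulty than to its own level's mean) is an illustrative assumption, not the procedure used in the pilot, and the item labels and logit values are invented. In practice the difficulty estimates would come from dedicated Rasch software.

```python
import math
from statistics import mean
from typing import Dict, List, Tuple


def rasch_probability(ability: float, difficulty: float) -> float:
    """Rasch model: probability of a correct response (both values in logits)."""
    return 1.0 / (1.0 + math.exp(-(ability - difficulty)))


def flag_out_of_order(items: List[Tuple[str, int, float]]) -> List[str]:
    """items: (item_id, intended_level, estimated_difficulty_in_logits).

    Flags items whose estimated difficulty lies closer to the mean difficulty
    of another level than to the mean of their own intended level.
    """
    by_level: Dict[int, List[float]] = {}
    for _, level, difficulty in items:
        by_level.setdefault(level, []).append(difficulty)
    level_means = {level: mean(values) for level, values in by_level.items()}

    flagged = []
    for item_id, level, difficulty in items:
        nearest = min(level_means, key=lambda lv: abs(level_means[lv] - difficulty))
        if nearest != level:
            flagged.append(item_id)
    return flagged


# Invented estimates: A3 is harder than intended, B3 is easier than intended.
estimates = [
    ("A1", 1, -2.0), ("A2", 1, -1.6), ("A3", 1, 0.9),
    ("B1", 2, 0.0), ("B2", 2, 0.4), ("B3", 2, -1.9),
]
candidates_for_revision = flag_out_of_order(estimates)
```

A check of this kind only suggests candidates for review; fit statistics and expert judgement would still be needed before an item is replaced or revised.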

There are challenges in attempting to capture the nuances of progression across a communicative language programme with an objective test of grammar, vocabulary, and usage. Yet another possible complication is that course objectives and activities provided by the instructors were found to be associated with multiple levels. If a language programme has not been established with a coherent progression of learning, how can we hope to successfully fit a placement test to it? We intend to follow up with the institutional programme committee regarding potential issues with progression of difficulty within the programme itself.

Effectiveness of the assessment

To investigate effectiveness, we contacted the students’ current instructors and requested their judgement on the level where they felt each of these students currently belonged (the instructors have the final say in the placement decision). We then ran a multinomial regression to examine the extent to which the level recommended by the pilot assessment (all three tasks) predicted the instructor level judgements.

Of the predictor variables examined, the objective decision variable was the only variable that predicted instructor judgement, but only at higher proficiency levels. The DSP decision recommendation did not predict instructor judgements. Given the lack of progression of difficulty revealed by the Rasch analysis, this is understandable and is already being addressed through item revision.

This demonstrates the need to collect further information about the effectiveness of the new tool. We have already planned to examine whether there is a reduction in the re-placement of students compared to the previous assessment, but collecting these data will take time. Despite these results, we plan to continue to use the binary judgements DSP task in our final test, first and foremost because of the positive responses of the students to this task and the possibilities of having their judgements included. Our field has been increasingly recognising the inherent value of including test taker voices (Jin, 2023). In addition, having students observe typical course performances and reflect on how their own language abilities compare may represent an active learning process, a modest opportunity for assessment as learning (AaL). According to Yang and Xin (2022), “AaL cultivates students’ metacognitive ability and literacy, encourages students to actively participate in the evaluation process, narrows their learning gaps through self-assessment, self-monitoring, and self-regulation, and determines the next learning goal” (p. 55).

Following the pilot we decided to eliminate the second DSP task—the course descriptions judgement task. Our initial review of the pilot data revealed that student responses were inconsistent; most students indicated that multiple levels were the appropriate level for them. Fratter and Marigo (2018) reported something similar—that students had difficulty establishing their language level using “can-do” statements because they had difficulty associating them with concrete examples of language use. Our findings represent further evidence of the limitations of can-do statements in language self-assessment. Despite our efforts during development, this task did not appear to describe the levels concretely enough to allow students to identify the level that best described their abilities.

Students’ perceptions of the assessment

We asked students directly about their experience by adding several short open-ended reflective questions immediately following the pilot assessment. We asked them, “Do you think this type of assessment would place you in the course that best meets your needs? Why or why not?” We also asked them whether they preferred to be one of the strongest in a language class, or had a preference for being challenged. We were interested in exploring if this was a useful DSP element to include—if students varied in their stated preferences. If they do vary, and if a level decision is borderline, this preference could figure into the final decision.

Many students reported positively about the “fresh experience” of the test and its novel approach, and many also reported that the test helped them gain a clearer understanding of their strengths and weaknesses. When asked whether they thought the test would place them in the course that best meets their needs, almost all students replied in the affirmative. They also shared their support for including student judgements during the testing process. Two students expressed their doubts at being able to judge their own performance. This sentiment was also reported in the literature, regarding differing assessment cultures, where students may consider teachers as assessment authorities (Summers et al., 2019). In addition, about half stated that they preferred to be challenged and half preferred to be the strongest in their class, demonstrating that students hold clear, and varied, preferences on this question.

Conclusions and future work

This report has described the development of a new type of placement tool for ESL courses, and the lessons we have learned through this process are being applied as we currently develop the French version of the tool. We drew on previous DSP research to support our decisions, including making use of actual student work samples to represent each level. In addition, we took the advice of previous scholars to supplement DSP tasks with an objective language task for purposes of triangulation. The result is a much shorter test compared to the original, and a test that has so far been well received by instructors and students.

One reason why instructors are positively oriented to the new test may be that many of them contributed their professional judgements through every step of the development process as partners with language testing researchers. Given the close relationship of placement tests to the relevant language courses (Green, 2018), the test could not have been designed without this partnership.

Our pilot process provided us with valuable information to support the revision of our assessment tool, beginning with responses to support subsequent standard-setting exercises to combine these scores into a final level recommendation decision, and to decide which cutoffs to apply to enable test takers to skip through tasks that were too difficult. We were interested in prioritising students’ judgements in the final scoring matrix. For example, we decided to not recommend more than one level higher or lower than that which is recommended from the DSP task.
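
The constraint described above, that the final recommendation never falls more than one level above or below the DSP recommendation, can be sketched in a few lines of Python. The function name and the assumption that the objective task supplies the starting level are hypothetical; the actual scoring matrix is not specified here. The default of five levels follows the ESL programme.

```python
def final_recommendation(
    objective_level: int,
    dsp_level: int,
    lowest: int = 1,
    highest: int = 5,
) -> int:
    """Keep the objective-task level within one level of the DSP level.

    Assumes (for illustration only) that the objective task provides the
    starting recommendation and the DSP task bounds it.
    """
    lower = max(lowest, dsp_level - 1)
    upper = min(highest, dsp_level + 1)
    return max(lower, min(objective_level, upper))


# A student whose objective score suggests level 5 but who self-placed at
# level 2 would be recommended level 3 under this rule.
example = final_recommendation(objective_level=5, dsp_level=2)
```

A rule of this form gives the student's judgement priority without letting it fully override the objective evidence.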

It is not yet clear if this new placement tool will be more accurate, but we remain convinced that a progression of learning model for placement is preferable to our previous scale-based decision-making. In addition, including student preferences and judgement has too many potential benefits to not consider further, so we will continue to include these elements. We believe that this work provides a realistic portrayal of the often-messy process of assessment development in a local context. Future work includes collecting data on the numbers of course changes that take place now that the new test has been introduced, to enable comparisons to the previous test and provide an additional evaluation of placement effectiveness. With small-scale local tests such as these, it can take years to accumulate sufficient data to examine certain test groups of interest. However, we are committed to working within our limited resources to improve our service as well as the test taking experience of our language learners.

Supplemental Material

sj-pdf-1-ltj-10.1177_02655322231179128 – Supplemental material for Rethinking student placement to enhance efficiency and student agency

Supplemental material, sj-pdf-1-ltj-10.1177_02655322231179128 for Rethinking student placement to enhance efficiency and student agency by Beverly Baker, Angel Arias, Louis-David Bibeau, Yiwei (Coral) Qin, Margret Norenberg and Jennifer St-John in Language Testing

Footnotes

Acknowledgements

We would like to sincerely thank the English instructors and students at the Official Languages and Bilingualism Institute at the University of Ottawa for their dedication to this project.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Supplemental material

Supplemental material for this article is available online.
