Abstract

As integrated writing tasks in large-scale and classroom-based writing assessments have risen in popularity, research studies have increasingly concentrated on providing validity evidence. Because most of these studies focus on adult second language learners rather than younger ones, this study examined the relationship between written discourse features, vocabulary support, and integrated listening-to-write scores for adolescent English learners. The participants were 198 Taiwanese high school students who completed two integrated listening-to-write tasks. Prior to each writing task, a list of key vocabulary was provided to aid the students’ comprehension of the listening passage. Their written products were coded and analyzed for measures of discourse features and vocabulary use, including complexity, accuracy, fluency, organization, vocabulary use ratio, and vocabulary use accuracy. We then used descriptive statistics and hierarchical linear regression analyses to investigate the extent to which these measures predicted integrated listening-to-write test scores. The results showed that fluency, organization, grammatical accuracy, and vocabulary use accuracy were significant predictors of the writing test scores. Moreover, the results suggested that providing vocabulary support may not necessarily jeopardize the validity of integrated listening-to-write tasks. Implications for research and test development are also discussed.

Introduction

Integrated skill tasks are commonly employed in language assessments of varying stakes and scales to evaluate test-takers’ language production based on information they receive from source texts. This information may take the form of reading materials, lectures, class discussions, or other methods that are used to deliver content-specific information (Cumming, 2013; Cumming et al., 2016; Plakans, 2015; Plakans, Gebril, & Bilki, 2019; Weigle & Parker, 2012). Research has shown that integrated skill assessments tap into multiple contributing skills of interest and general language proficiency (Sawaki et al., 2013). The provision of source texts in an integrated assessment allows for a more holistic assessment of a test-taker’s language proficiency, ranging from language use to source use based on the accurate comprehension of a given text, than is possible with independent writing tasks that solely measure writing skills (Plakans, 2008).

In an authentic academic setting, almost all writing assignments require learners to engage with reading or listening materials and may include writing a research synthesis on a scientific phenomenon or reflecting upon a lecture topic (Gebril & Plakans, 2009a, 2009b; Leki & Carson, 1997; Ohta et al., 2018; Plakans et al., 2018; Plakans, Gebril, & Bilki, 2019; Plakans, Liao, & Wang, 2019; Sawaki et al., 2013; Weigle & Parker, 2012). Test-takers are also frequently required to interpret the source materials in their own words, producing what Leki and Carson (1997) classified as “content responsible” writing (p. 41). In second language (L2) integrated writing assessments, scholars have identified an interplay between receptive skills (e.g., reading and listening) and writing skills within both task-completion processes and source integration strategies (Barkaoui, 2015; Cumming et al., 1989; Delaney, 2008; Hirvela, 2016; Plakans, 2009b; Rukthong & Brunfaut, 2020; Shin & Ewert, 2015; Watanabe, 2001; Weigle & Parker, 2012). These studies have also found that discourse synthesis represents a crucial cognitive mechanism underlying integrated writing, which itself includes skills that are essential to (1) organizing source information and summarizing points, (2) selecting specific parts of the source and using them in essays, and (3) making connections between source information, the topic, and personal experiences (Barkaoui, 2015; Cumming et al., 2005, 2006; Gebril & Plakans, 2009; Plakans, 2008, 2009a, 2009b; Plakans et al., 2018; Plakans, Liao, & Wang, 2019).

Research on integrated assessment has been carried out to provide reliability and validity evidence for using scores derived from a test task that integrates different language modalities. While gathering and presenting reliability evidence can be relatively straightforward (see Yan & Fan, 2021, for current methods in reliability and dependability estimation), validity evidence can be quite multifaceted and may be gathered rather broadly (Chapelle, 2020). A common type of validity evidence that has been the focus of integrated assessment research can be best characterized as evidence based on internal structure (Johnson & Christensen, 2020). Researchers have focused heavily on how discourse features vary at different score levels in integrated writing assessments to identify the specific features that predict the resulting writing scores (e.g., Cumming et al., 2005, 2006; Gebril & Plakans, 2013; Guo et al., 2013; Knoch et al., 2014; Plakans & Gebril, 2017; Plakans, Gebril, & Bilki, 2019). In a study of two distinct types of integrated task, Cumming et al. (2005, 2006) argued that, when compared with their lower scoring counterparts, higher scoring test-takers tend to accurately write longer clauses with more complicated structures and include higher quality arguments when summarizing source evidence. Gebril and Plakans (2013) also found systematic differences in grammatical accuracy and source text use between the lowest and the upper two score levels. Such studies provide evidence for the systematic differences in essay quality across score levels, thereby contributing to the validation of integrated writing scores. This evidence is critical for proper score interpretation as well as for verifying the rating scale that is used to score integrated writing tasks.

However, these earlier studies of written discourse features primarily focused on adult L2 learners, and, as Martínez (2018) and Ortega and Carson (2010) indicated, little is known about the relationship between adolescent English as a foreign language (EFL) learners’ writing performance and written discourse features. Research focusing on adolescent learners’ writing performance in integrated writing tasks is even scarcer, potentially because of the complexity of such tasks, which involve other language modalities in addition to writing skills. Such tasks have been exclusively used to assess adult learners’ L2 writing proficiency (Knoch & Sitajalabhorn, 2013). Integrated writing tasks are generally more challenging because they require test-takers to sufficiently comprehend source materials before accurately and coherently expressing their ideas in their own writing (Cumming et al., 2005; Plakans & Gebril, 2017). After conducting factor analysis for the Test of English as a Foreign Language (TOEFL) iBT integrated writing task, Sawaki et al. (2013) attested, “Examinees whose academic English ability has not yet reached the [college] admission level may be having difficulty (a) comprehending the source materials . . . (b) selecting and organizing the appropriate information from source materials, or (c) both” (p. 93). Considering the purported connections between integrated writing tasks and the academic writing required in higher education, college-bound high school students need to prepare for writing tasks that involve multiple language modalities, such as integrated writing tasks. Integrated writing tasks can also be helpful for non-college-bound high school students to the extent that they may help students develop important academic and professional skills of reasoning, argumentation, and critical thinking (Deane et al., 2008). Thus, we argue that the scarcity of research on the use of integrated writing tasks for adolescent L2 learners is problematic and should be addressed.

With limited information about whether integrated writing tasks can be used to assess the writing performance level of adolescent L2 learners, we are left to wonder if language learners and teachers should wholly avoid administering integrated writing assessments to adolescent learners despite the importance of using multiple language skills simultaneously in academic contexts. There are prominent differences between these adolescent learners and their adult counterparts, and it is not possible to merely transfer research findings on adult learners to this under-researched population of learners. One salient difference is vocabulary knowledge. Older learners tend to possess a larger L2 vocabulary size than younger learners (Puimège & Peters, 2019). Adult learners’ larger vocabulary knowledge in their L1 may help them realize cross-linguistic transfer between L1 and L2 (i.e., words that share morphological, phonological, or semantic similarities), so they are more likely to efficiently acquire L2 vocabulary than secondary school learners (Ke & Xiao, 2015). It is also our understanding that enabling skills, including vocabulary, grammar, and pronunciation, affect performance in all four language skills (i.e., reading, listening, speaking, and writing), and adolescent learners are still in the process of developing these skills (Cameron, 2001; Coxhead, 2006). In addition, compared with adult learners who may have already tackled a variety of writing tasks in academic contexts, adolescent L2 learners usually lack experience and training in academic writing (Maamuujav et al., 2021). With these differences in mind, it is clear that some scaffolding is needed for adolescent learners when administering integrated writing tasks. Given that comprehension of input materials is critical to tackling integrated writing assessment tasks (Barkaoui, 2015; Plakans & Gebril, 2013), we are curious as to what scaffolding, if any, can be provided to adolescent learners to aid their comprehension. One possible answer is vocabulary support, which is crucial for both comprehension and language production.

The purpose of this research was to investigate the relationship between integrated listening-to-write scores and written discourse features with the understanding that this relationship informs the extent to which these features are predictive of adolescent EFL learners’ integrated listening-to-write scores. Discourse features in this study included complexity, accuracy, fluency, and organization. Moreover, this study aimed to understand the relationship between vocabulary support and the resulting scores. Measures of vocabulary support impact included vocabulary use ratio and the accurate use of provided words. The present study thus contributes to the literature by describing variations in discourse features and the use of vocabulary support across different score levels for the purpose of providing validity evidence for adolescent EFL learners’ integrated writing scores.

Literature review

In explorations of the trajectory of L2 development, second language acquisition research has focused on three major components of the multidimensional construct of L2 proficiency, namely complexity, accuracy, and fluency (CAF; e.g., Housen et al., 2012; Larsen-Freeman, 1978, 2009; Norris & Ortega, 2009; Skehan, 1996, 1998, 2009). Complexity and accuracy represent L2 learners’ current stage of language knowledge, as they reveal the degree to which learners are able to accurately use language in a sophisticated way. Conversely, fluency connotes how quickly L2 learners can access their language knowledge to produce a certain amount of language in a limited amount of time (Wolfe-Quintero et al., 1998). Research studies in second language acquisition and language testing tend to examine these three components in combination when attempting to understand the impact of such conditions as instruction or planning, rather than in isolation (Ellis & Yuan, 2004; Foster & Skehan, 1996). These measures serve as crucial evidence of a learner’s development in L2 speaking or writing.

The validity argument for proper score interpretation in L2 testing requires the investigation of differences in CAF across varying score levels, and it is expected that higher scoring test-takers exhibit more complex, accurate, and fluent language production than their lower scoring counterparts. While a number of studies have been carried out to provide validity evidence for utilizing CAF and relevant measures for written discourse features (e.g., organization and vocabulary knowledge) when assessing L2 learners’ integrated writing proficiency (e.g., Baba, 2009; Cumming et al., 2006; Gebril & Plakans, 2013; Plakans & Gebril, 2017; Plakans et al., 2019), the scope of interpretation is bounded by research design, task type, and task condition. In particular, the lack of studies examining written discourse feature measures in relation to adolescent EFL learners prevents us from understanding the characteristics of the products of integrated writing composed by learners of this profile. For this reason, investigating adolescent EFL learners’ integrated writing with regard to written discourse features would add to the validity argument of integrated writing tasks.

Complexity

In its most comprehensible explanation, the term complexity refers to “the use of more challenging and difficult language” (Ellis, 2003, p. 343). Housen et al. (2012) provided a more detailed definition of this term as being “the ability to use a wide and varied range of sophisticated structures and vocabulary in the L2” (p. 2). In practice, complexity is the most problematic construct in the CAF triad (Pallotti, 2009), subsuming many different aspects under this concept. In the literature, complexity is categorized as syntactic complexity and lexical complexity (Housen et al., 2012).

Syntactic complexity

Syntactic complexity refers to, as defined by Ortega (2015), “the range and the sophistication of grammatical resources exhibited in language production” (p. 82). Lu (2011) wrote that syntactic complexity can be measured by quantifying one or more of the following aspects: “length of production unit, amount of subordination or coordination, range of syntactic structures, and degree of sophistication of certain syntactic structures” (pp. 36–37). Syntactic complexity is commonly employed as a developmental measure of L2 speaking or writing. Researchers interested in measuring the complexity of L2 production must first determine which aspects of syntactic complexity they will focus upon; such a decision often considers the contextual characteristics of the given study, such as the current language proficiency of L2 learners.

In assessing the writing of L2 learners who have yet to fully develop in terms of language capacity, the T-unit serves as a viable index of syntactic complexity. Previous studies have used the T-unit, or the minimal terminable unit, to analyze L1 writing by children and adolescents (e.g., Hunt, 1965; Scott, 1988). Plakans, Gebril, and Bilki (2019) operationalized complexity as the mean length of T-unit to identify its contributions to the variance in integrated writing scores, while Cumming et al. (2006) reported that the length of clauses varied significantly among learners at different score levels.

Lexical complexity

While researchers agree that lexical complexity is an important indicator of L2 proficiency, there is as yet no uniform definition for it. The consensus is that lexical complexity is a multidimensional construct that consists of several different aspects, as with the constructs in the CAF triad. Jarvis (2013) suggested that lexical sophistication and lexical diversity are important dimensions of lexical complexity. Conversely, Read’s (2000) conceptualization of lexical complexity included lexical density, lexical sophistication, lexical variation, and the number of errors in vocabulary use. Among these traits, lexical sophistication denotes the extent to which a learner is able to use low-frequency, advanced lexical choices (Laufer & Nation, 1995). In language assessment, more convenient measures are commonly adopted to denote the maturity of a test-taker’s lexical sophistication. For example, in a comparison study of the discourse features between independent and integrated writing tasks, Cumming et al. (2006) gauged lexical sophistication by utilizing average word length and type–token ratio. However, recent research has indicated that the type–token ratio approach measures lexical cohesion, rather than lexical sophistication (Crossley et al., 2015).

Baba (2009) conducted a study in which she ran correlation and regression procedures to identify the influence of three lexical complexity indices—vocabulary size, word definition ability, and lexical diversity—on summary writing performance. While both vocabulary size and the ability to define words correlated moderately with summary writing performance, lexical diversity had a negative, statistically nonsignificant correlation with the dependent variable. According to the regression analyses, however, the effects of lexical complexity were not comparable to those of basic language abilities, including English reading proficiency, summary length, English proficiency, knowledge of Japanese vocabulary, and Japanese writing proficiency.

Grammatical accuracy

Accuracy refers to the extent to which language production is free of grammatical errors (Housen & Kuiken, 2009; Wolfe-Quintero et al., 1998). Since language production is compared against a presumed norm, accuracy is arguably “the most straightforward and internally consistent construct” (Housen et al., 2012, p. 4). In L2 testing and assessment, the number or rate of errors in a given essay is considered to be a common proxy of writing accuracy. As with other constructs in the framework, accuracy holds a positive correlational relationship with proficiency score; that is, higher scoring learners tend to produce essays with higher accuracy (e.g., Arnaud, 1992; Scott & Tucker, 1974). A recent study by Peng et al. (2020) provided a more nuanced understanding about the construct, demonstrating that writing accuracy can differ as a function of the linguistic complexity of the source text. After administering a continuation task (a type of integrated writing that requires reading an incomplete story and completing it) to the participants, the researchers found that providing a simplified text that better reflected the learner’s production capability was more conducive to eliciting better writing accuracy than giving them an unsimplified text. In contrast, in another study by Shi et al. (2020), which explored the effect of prompt type in the continuation task, writing accuracy was comparable across all four different types of prompts. Taken together, mixed evidence exists as to the level of grammatical accuracy that an L2 learner exhibits in an essay. Also, it is important to note the potentially inverse relationship between grammatical accuracy and syntactic complexity, in the sense that an L2 learner might tend to construct sentences in a test task in a way that increases grammatical accuracy at the price of reduced syntactic complexity (Jagaiah et al., 2020).

Fluency

Fluency is understood to be the ease and rapidity with which a test-taker accesses his or her language system to retrieve the necessary language forms in response to a task requirement. L2 writing assessment research has mostly utilized length as a proxy for fluency development. As such, fluency in L2 writing assessment contexts indicates the rate and amount of written production generated within a given time. Study results have consistently shown that fluency correlates with performance level; that is, lower scoring test-takers tend to produce briefer essays than higher scoring test-takers (Cumming et al., 2005, 2006; Jiang et al., 2019; Plakans et al., 2019). As an illustration, when investigating the discourse features of L2 test-takers’ integrated writing, Cumming et al. (2005, 2006) and Plakans, Gebril, and Bilki (2019) tallied the total number of words written in a composition as a measure of fluency. Plakans, Gebril, and Bilki (2019) reported that fluency was the biggest contributor to the variance in integrated writing scores, accounting for approximately 25% of variance. It is also worth noting similar findings in Gebril and Plakans (2013) and Shi et al. (2020). In particular, Shi et al. indicated that a source text whose complexity is comparable with the production ability of the test-takers can provide more significant gains in fluency than can an unsimplified source text.

Organization

In assessing the potential relationship between organization and score in a writing task integrated with listening and reading, Plakans and Gebril (2017) observed that the five-point rubrics used for grading essay organization showed statistically significant differences in the main effect of integrated score. Coherence, which was operationalized as the logical flow of written production, elicited significantly different means across score levels, whereas the results of a multivariate analysis of variance (MANOVA) test suggested that cohesion (e.g., repetition and use of connection words) did not yield significant differences in the integrated writing scores. Similarly, a study by Crossley and McNamara (2012) revealed that essays written by L2 learners categorized as highly proficient were characterized by effective lexical sophistication, rather than cohesion. The purported non-significance of cohesion can be explained by Graesser et al. (2004), who suggested that higher level texts usually contain more implicit cohesiveness, requiring readers to make inferences. Taken together, these studies demonstrate that organization can be a complex construct for L2 learners to effectively learn and display in an evaluative context.

Vocabulary support

As Cumming (2013) and Sawaki et al. (2013) noted, among the constraints that test-takers of an integrated writing task face is the threshold proficiency level that makes writing for integrated tasks possible. That is, learners who are not equipped with a minimum level of language proficiency at the time of assessment are unlikely to produce an effective essay when faced with a prompt that requires the integration of different language skills. While a number of cognitive and affective capabilities are necessary for effective performance in an integrated writing task, vocabulary knowledge is considered one of the most foundational factors in comprehending input materials (e.g., Beglar & Hunt, 1999; Laufer, 1992; Qian, 1999; Stæhr, 2008, 2009; Zhang, 2012). For instance, in a study on the composing processes of L2 writers completing reading-to-write tasks, Plakans (2009a) found that writers of all levels experienced challenges related to vocabulary. These results showed that the limited vocabulary knowledge of lower scoring writers affected how well they were able to understand the source materials. Similarly, Rukthong and Brunfaut (2020) indicated, in an integrated task that involves listening and writing, that high-quality integrated task performance is mediated crucially by a complex interaction between low- and high-level text processing and strategy use. Importantly, activation of high-level text processing (e.g., semantic and pragmatic processing) is impossible without text processing at a low level, such as acoustic–phonetic processing, word recognition, and parsing.

Although studies focusing on the impact of vocabulary support on integrated listening-to-write test performance are lacking, research has shown that providing vocabulary support in advance of a listening task aids test-takers’ listening comprehension. Chung (2002) suggested that the post-test scores of their experimental group, which had been exposed to a treatment of vocabulary pre-teaching, were significantly higher than those of the group given no treatment. Comparing four types of pre-listening support, Babaei and Izadpanah (2019) found that using multimedia annotations and pre-teaching key vocabulary were associated with significantly positive gains in listening comprehension scores for elementary-level EFL learners. In a similar study carried out by Madani and Kheirzadeh (2022), vocabulary preparation and pre-reading questions facilitated elementary-level EFL learners’ listening comprehension.

Studies have shown that analyzing the textual features of integrated writing tasks helps us differentiate test-takers at varying English performance levels. However, it is worth noting that these studies focused solely on one particular population of learners (specifically, adult, university-level English as a second language [ESL]/EFL learners). It is therefore unknown if textual features can be used to differentiate the performance levels of other populations, such as adolescent or young English-learning students. Moreover, the integrated writing tasks that were used in these studies were predominantly reading-to-write or reading–listening–writing, and thus the impact of listening-to-write tasks on textual features is unclear. In addition to the textual features of integrated writing, studies have highlighted the influence of vocabulary on both reading and listening comprehension and writing performance. Furthermore, although previous studies have suggested that pre-learning the vocabulary of source materials may benefit learners’ comprehension, the tasks that were used in these studies were not integrated. Since studies of the effects of pre-learning vocabulary remain scarce, more studies are needed to more fully understand the role of pre-learning vocabulary in integrated writing assessments.

The purpose of the present study was to investigate the textual features that are produced in listening-to-write tasks as well as the contributions of vocabulary support to adolescent L2 learners’ integrated writing performance. With this objective in mind, the current study intends to address the following questions:

Do textual features and vocabulary support predict students’ writing performance in integrated listening-to-write assessments?

If yes, how are the measures of textual features and vocabulary support characterized by score level?

Methods

Participants

The participants in this study consisted of 198 Taiwanese high school students. In Taiwan, high school education lasts 3 years (Grades 10 through 12 in U.S. education) and is part of compulsory formal education, with a national curriculum set forth by the Ministry of Education (MOE). The graduation requirements specify that students take five English class periods per week for 3 years. Each classroom has mixed proficiency levels.

The participants included 128 males and 70 females, all of whom were in their second year of high school (equivalent to Grade 11 in the U.S. school system). All the participants were 17 years of age, and none had studied English abroad or lived outside their country at the time of data collection. Their native language was Mandarin Chinese, although some also spoke Taiwanese or another indigenous language at home or in their community. Based on information from their English teachers, learning materials, and class assignments, the participants’ overall English proficiency ranged from A2 (low-intermediate level) to B1 (intermediate level) on the Common European Framework of Reference for Languages (Council of Europe, 2001) proficiency scale. It is important to note that the MOE launched in 2021 the

Of the 198 students, 130 completed two integrated writing tasks, and the remaining 68 completed only one integrated writing task. Although we collected 328 essays in total, 60 of these were excluded from the data analysis, since these essays (1) did not include any written responses, meaning that there were no texts to analyze; (2) only expressed the students’ inability to compose written responses (e.g.,

Listening-to-write tasks

Language tasks that require both listening and writing skills are appropriate and useful for adolescent L2 learners. Research has shown that younger language learners tend to comprehend source materials through audio input better than through visual input (Miller & Smith, 1989; Price et al., 2016; Prior & Welling, 2001). Moreover, performing a writing task (e.g., writing a reflection or summary) based on aurally acquired information (e.g., from a lecture or discussion) is a typical task that students encounter in secondary and higher education. In addition, the integration of listening and writing skills is often seen in standardized English proficiency tests, such as the TOEFL. We contend that compared with other types of integrated writing tasks, such as reading-to-write or reading–listening–writing, listening-to-write tasks were more appropriate to administer to our participants, considering their age and the prevalence of such tasks in a real-life academic context.

Two listening-to-write tasks were provided as part of an extracurricular activity, and the data collection took place during summer, when a special summer curriculum was in place. The school principal and the English teachers at the data collection site considered these tasks to be beneficial for their students, as they would usually learn English in an integrated manner whereby multiple language skills were developed in a single class. Both integrated writing tasks were adopted from a standardized test preparation book (Uehara, 2015). Each task required the students to listen to an academic lecture while taking notes and then write a paragraph describing the lecture based on their listening comprehension. Both writing topics were related to science: one concerned the two types of mountains, and the other concerned the life cycle of a hurricane. All students completed the two listening-to-write tasks on the same day, except for those who only completed one task. The 130 students who participated in the two listening-to-write tasks took a 10-minute break after completing the first task. The order of the two tasks was randomized; 134 students completed the mountain topic first, and the rest completed the hurricane topic first. Those who completed one listening-to-write task performed the first topic only.

Before the students listened to each lecture, a list of key vocabulary words was provided to aid their understanding of the listening material. When students received the vocabulary list, we explained the meaning of the words in students’ L1 and taught their pronunciation. We also gave example sentences for words that were abstract in meaning. We encouraged students to ask questions while they were processing these given words. Students were also allowed to take notes before they listened to the lecture. This vocabulary support was considered a necessary scaffolding for students who may not have been familiar with some vocabulary words included in the lecture, as research has shown that integrated writing tasks require a threshold level of comprehension of source materials (e.g., Cumming, 2013; Sawaki et al., 2013). To create the vocabulary list, we began by selecting words based on the curriculum guidelines developed by the Taiwan MOE for K–12 English education. For its national high school curriculum, the MOE has selected 4500 English words for students to learn by the time they graduate from high school (Ministry of Education Republic of China, 2018). To ensure that there were no other words that might be challenging for the students, we asked English teachers to look at the listening scripts and identify words that needed glossing based on their professional judgment. We then added those selected words to the glossary. The vocabulary list included the Chinese equivalents. Since the purpose of providing the glossary was to aid their listening comprehension, the students were neither instructed nor encouraged to use the provided words in their writing.

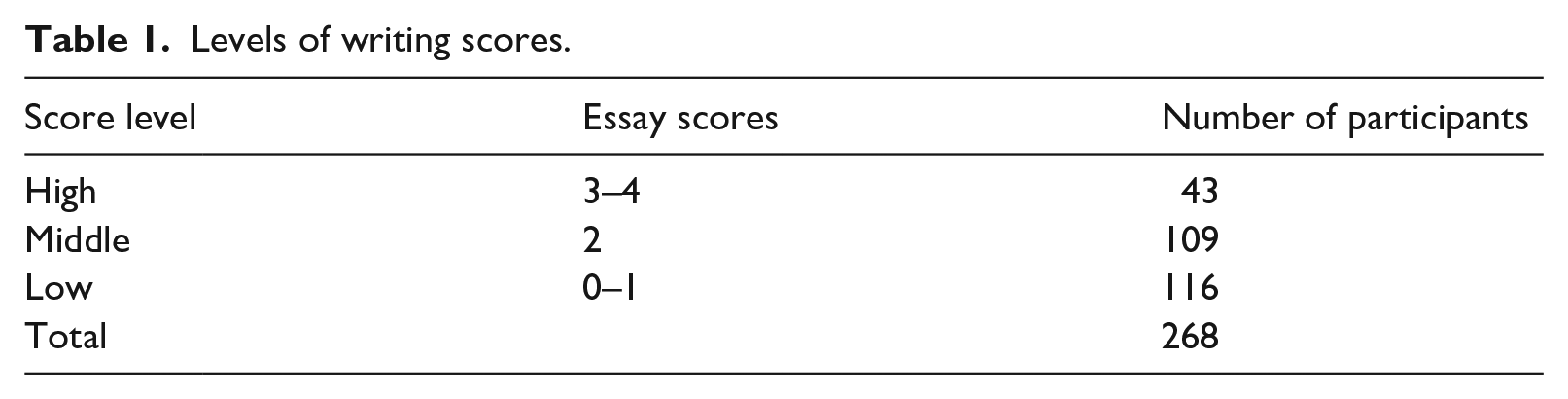

The two raters (both of whom were experienced English teachers) holistically scored the written products according to the TOEFL Junior Writing Scoring Guide: Listen–Write (Educational Testing Service, 2012). The interrater reliability between the two raters was

Levels of writing scores.

Selection of textual features

When analyzing the textual features of integrated writing performance, Cumming et al. (2005, 2006) focused on the following areas: lexical sophistication, syntactic complexity, rhetoric, and pragmatics. Based on Cumming et al. (2005, 2006), Gebril and Plakans (2009), and Plakans, Gebril, and Bilki (2019), we chose several textual features as the focus of this study to further investigate the relationship between textual features and integrated writing performance. These chosen textual features are described in the following sections.

Lexical sophistication

We chose to measure lexical sophistication by examining the average word length (Balota et al., 2007; Cumming et al., 2005, 2006; Grant & Ginther, 2000; Kramer & McLean, 2019; Maamuujav et al., 2021; Sawaki et al., 2013; Yoon, 2017). Microsoft Word was used to calculate the average word length, in accordance with the formula that was proposed by Cumming et al. (2005), namely: “the number of characters divided by the number of words per composition” (p. 9).
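As an illustration only, the average word length formula quoted above can be sketched in a few lines of Python. The sketch assumes that "characters" means non-space characters (punctuation included) and that words are whitespace-delimited, which approximates but may not exactly match the counts reported by Microsoft Word; the function name and the sample sentence are hypothetical.

```python
def average_word_length(text: str) -> float:
    """Number of characters divided by number of words in a composition.

    Assumption: characters are counted without spaces, and a word is any
    whitespace-delimited token (so attached punctuation counts as characters).
    """
    words = text.split()
    if not words:
        raise ValueError("composition contains no words")
    characters = sum(len(word) for word in words)
    return characters / len(words)


# Hypothetical one-sentence composition, not taken from the study data.
sample = "Mountains form when plates collide."
print(round(average_word_length(sample), 2))
```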

Syntactic complexity

Based on Ortega (2003) and prior studies, syntactic complexity was measured by calculating the average number of T-units in each sentence as well as the mean T-unit length (Casal & Lee, 2019; Cumming et al., 2005; Henry, 1996; Jiang et al., 2019; Jin et al., 2020). Previous research has suggested that mean T-unit length is capable of differentiating writing levels among learners for a writing task based on source material (Casal & Lee, 2019), lending support for its use as a measure of syntactic complexity.
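The two syntactic complexity measures reduce to simple ratios once an essay has been segmented. The following sketch assumes that sentence and T-unit boundaries have already been identified by human coders, so it takes counts as input rather than attempting automatic segmentation; the class name, field names, and example counts are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class EssayCounts:
    """Hand-coded counts for one essay (hypothetical structure)."""
    words: int
    sentences: int
    t_units: int


def t_units_per_sentence(counts: EssayCounts) -> float:
    """Mean number of T-units in each sentence."""
    return counts.t_units / counts.sentences


def mean_t_unit_length(counts: EssayCounts) -> float:
    """Mean T-unit length, measured in words per T-unit."""
    return counts.words / counts.t_units


# Hypothetical essay: 96 words, 8 sentences, 12 T-units.
essay = EssayCounts(words=96, sentences=8, t_units=12)
print(t_units_per_sentence(essay), mean_t_unit_length(essay))
```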

Fluency

Studies have shown that word count can be an effective approach for distinguishing writing fluency across score levels (Cumming et al., 2005, 2006; Johnson et al., 2012; Kim et al., 2018; Plakans, Gebril, & Bilki, 2019; Shi et al., 2020). As Johnson et al. (2012) suggested, using the total number of words in a given essay is a feasible approach for measuring fluency, since in an evaluative context, timing is a variable held constant for all test-takers. Also, as mentioned above, Cumming et al. (2005) and Plakans, Gebril, and Bilki (2019) found that in their integrated assessment, the fluency measure operationalized by the total word count accounted for the largest score variance, reflecting ability difference among test-takers.

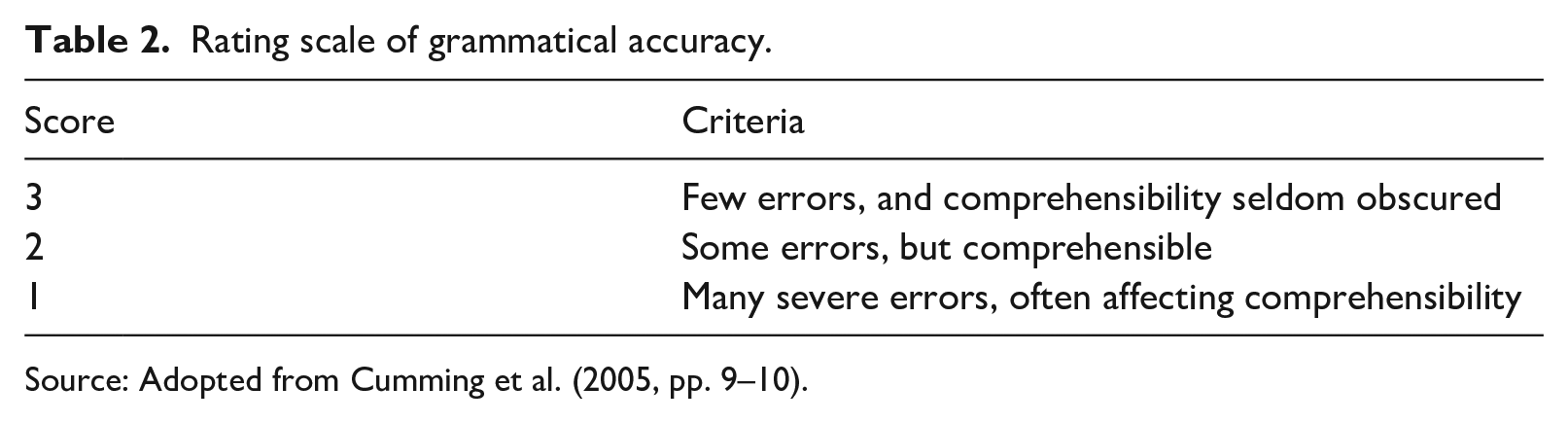

Grammatical accuracy

The grammatical accuracy of the students’ essays was judged based on the holistic scoring rubric used by Cumming et al. (2005), which was developed based on Hamp-Lyons and Henning (1991). The scores ranged from 1 to 3, with 3 being the highest score. The interrater reliability between the two raters was .84 with

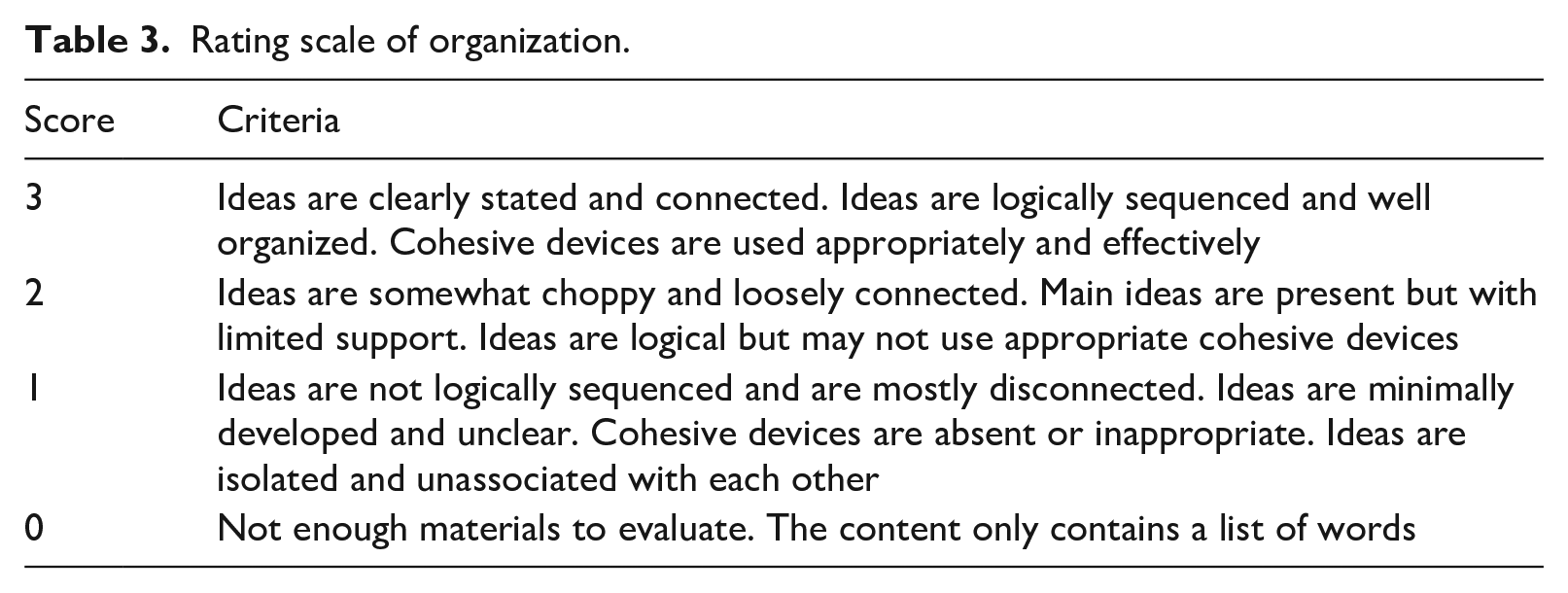

Organization

Drawing on previous studies that focused on evaluating organization in L2 writing (Carr, 2000; Jacob et al., 1981; Li & He, 2015; Plakans & Gebril, 2017), we developed a rating scale and piloted it for revision. During piloting, anchor essays at each score level were selected and rated for the appropriateness of their organization using the rubric. We then used the rating scale to rate all the essays, and the interrater reliability between the two raters was .96 with

Rating scale of organization.

Vocabulary support

Although we did not encourage the students to use the provided vocabulary, most of the written products included the given words. Thus, we calculated the vocabulary ratio to explore patterns of using the provided vocabulary among students. To calculate the vocabulary ratio, we began by counting the number of glossed words in the students’ essays and then divided these identified glossed words by the total number of words written.
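A minimal sketch of the vocabulary ratio calculation is given below. It assumes that every occurrence of a glossed word is counted (tokens rather than types), that matching is case-insensitive on exact word forms without lemmatization, and that glossary entries are single words; these choices, along with the function name and the sample glossary, are illustrative assumptions rather than the coding procedure used in the study.

```python
import re


def vocabulary_ratio(essay: str, glossed_words: set[str]) -> float:
    """Glossed-word tokens in the essay divided by total words written.

    Assumption: exact, case-insensitive matching of single-word entries;
    inflected forms (e.g., plurals) are not matched.
    """
    tokens = re.findall(r"[a-z']+", essay.lower())
    if not tokens:
        raise ValueError("essay contains no words")
    glossary = {word.lower() for word in glossed_words}
    hits = sum(1 for token in tokens if token in glossary)
    return hits / len(tokens)


# Hypothetical glossary and essay fragment, not taken from the study data.
glossary = {"hurricane", "energy"}
essay = ("A hurricane starts over warm ocean water. "
         "The hurricane loses energy over land.")
print(round(vocabulary_ratio(essay, glossary), 3))
```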

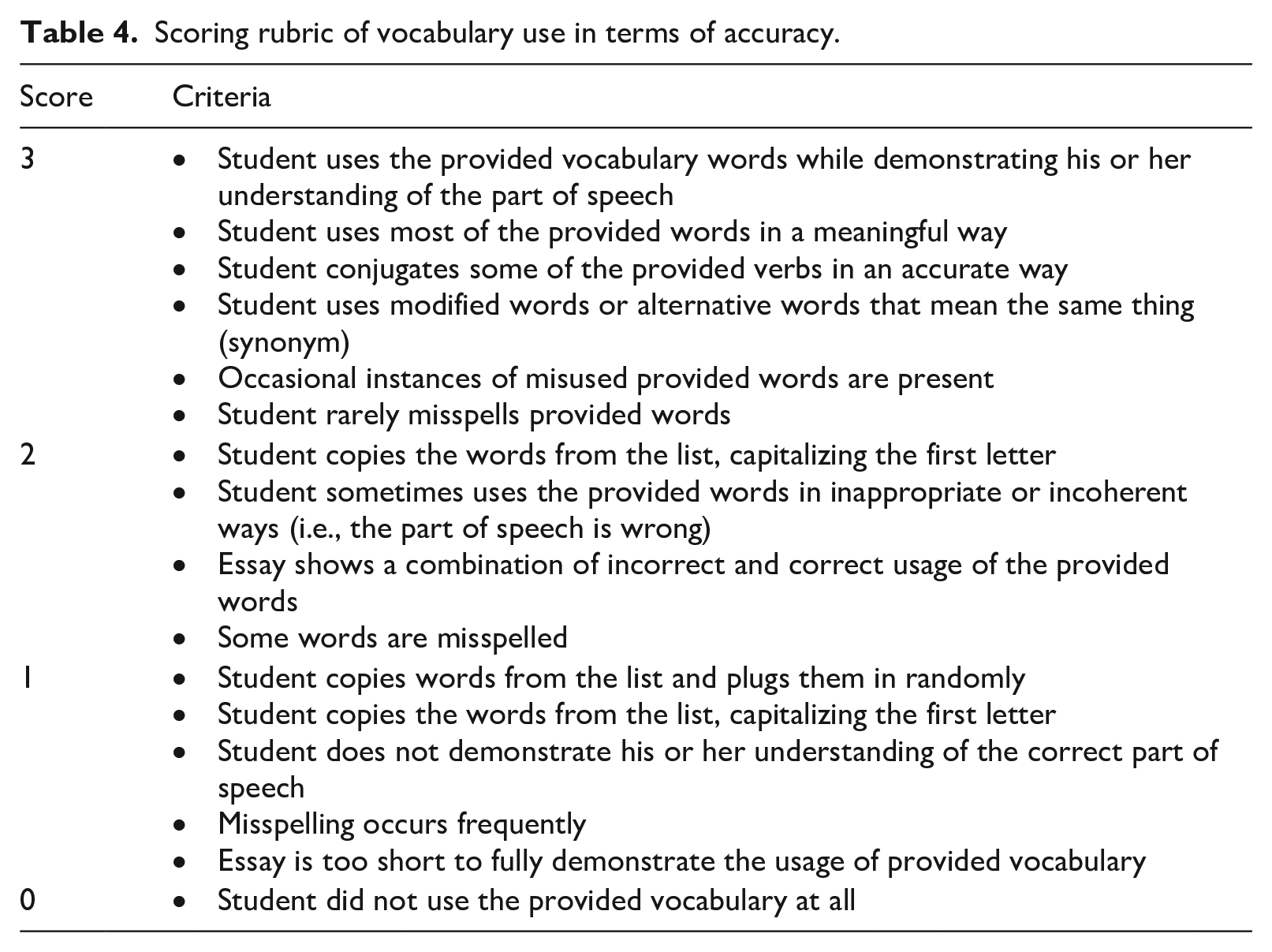

Moreover, to investigate how vocabulary support affected the students’ writing performance, we focused on the accurate use of the provided words (i.e., whether the students used the provided words appropriately and meaningfully). To create a scoring rubric for vocabulary use, we first reviewed the literature relevant to the assessment of vocabulary usage. Then we piloted the scoring rubric with anchor essays at each score level and revised the rubric for appropriateness, clarity, and task relevance. The interrater reliability between the two raters was .94 with

Scoring rubric of vocabulary use in terms of accuracy.

Analysis

We employed descriptive statistics and hierarchical multiple regression (HMR) analyses (i.e., stepwise) in our attempts to answer the research questions. To avoid interplay between the variables of textual features and vocabulary support, the stepwise regression analyses were carried out separately, thereby allowing us to examine the impact of textual features and vocabulary support on students’ writing performance. For the first regression model, the criterion variable was the students’ essay scores, and the predictor variables were fluency, organization, grammatical accuracy, mean number of T-units in each sentence, mean T-unit length, and lexical sophistication. For the second regression model, the criterion variable was the students’ essay scores, and the predictor variables were vocabulary use ratio and accurate use of the provided vocabulary words.

We checked the assumptions for the HMR analyses in accordance with Thorndike and Thorndike-Christ (2009). First, there was no multicollinearity, as the tolerance scores for the variables of interest were all above .1 (organization = .28; fluency = .28; grammatical accuracy = .68; mean number of T-units in each sentence = .92; mean T-unit length = .80; lexical sophistication = .78; vocabulary use ratio = .96; vocabulary use accuracy = .96). Second, the histogram and normal probability plots showed that the residuals were normally distributed. Third, no outliers were identified, as all Cook’s distance values were below 1. Fourth, homogeneity of variance was checked by reviewing the scatterplots of standardized predicted values by standardized residuals.
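For readers unfamiliar with the tolerance statistic, the sketch below shows one way it can be computed: each predictor is regressed on the remaining predictors, and tolerance is 1 minus the resulting R². The code uses only the Python standard library and toy data; it is not the software or the data used in this study, and all function names and variable values are hypothetical.

```python
def solve(a, b):
    """Solve the linear system a x = b by Gaussian elimination with pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for i in reversed(range(n)):
        tail = sum(m[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (m[i][n] - tail) / m[i][i]
    return x


def r_squared(xs, y):
    """R^2 of an ordinary least squares fit of y on columns xs plus an intercept."""
    n = len(y)
    cols = [[1.0] * n] + [list(col) for col in xs]
    k = len(cols)
    xtx = [[sum(cols[i][t] * cols[j][t] for t in range(n)) for j in range(k)]
           for i in range(k)]
    xty = [sum(cols[i][t] * y[t] for t in range(n)) for i in range(k)]
    beta = solve(xtx, xty)
    fitted = [sum(beta[i] * cols[i][t] for i in range(k)) for t in range(n)]
    mean_y = sum(y) / n
    ss_res = sum((y[t] - fitted[t]) ** 2 for t in range(n))
    ss_tot = sum((value - mean_y) ** 2 for value in y)
    return 1.0 - ss_res / ss_tot


def tolerances(predictors: dict[str, list[float]]) -> dict[str, float]:
    """Tolerance of each predictor: 1 - R^2 from regressing it on the others."""
    result = {}
    for name, values in predictors.items():
        others = [v for other, v in predictors.items() if other != name]
        result[name] = 1.0 - r_squared(others, values)
    return result


# Toy scores for five hypothetical essays (not study data).
toy = {"fluency": [1.0, 2.0, 3.0, 4.0, 5.0],
       "organization": [2.0, 1.0, 4.0, 3.0, 5.0]}
print({name: round(value, 2) for name, value in tolerances(toy).items()})
```

Values close to 0 indicate that a predictor is almost fully explained by the others; the threshold of .1 reported above is the criterion against which each tolerance value was compared.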

Results

Descriptive statistics of textual features and vocabulary support by writing score level

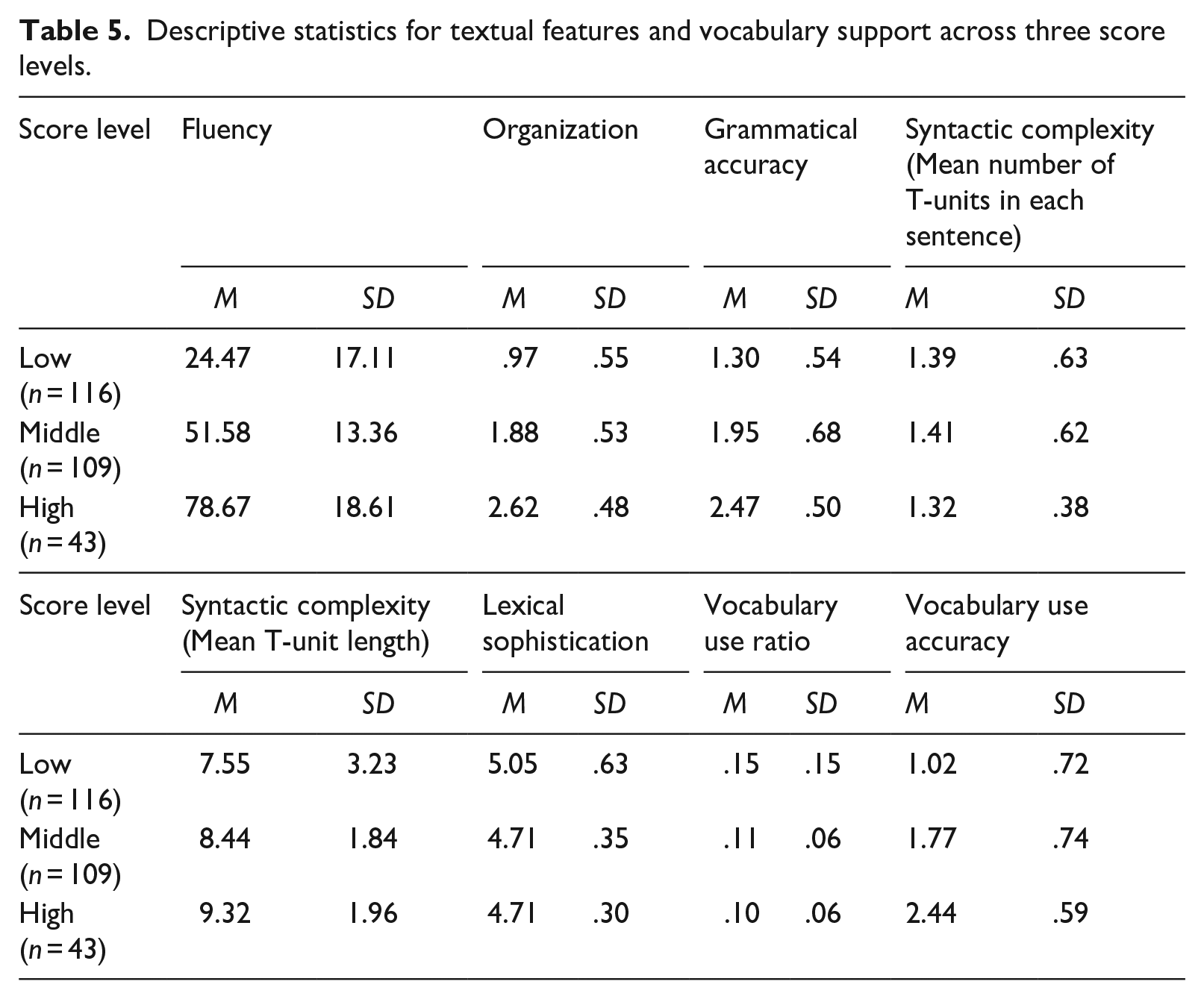

Table 5 presents the descriptive statistics for textual features and vocabulary support across different score level groups. In terms of textual features, some features (including fluency, organization, grammatical accuracy, and mean T-unit length) generally increased as the essay scores rose. However, other features (i.e., mean number of T-units in each sentence and lexical sophistication) did not follow this linear pattern. Regarding vocabulary support, vocabulary use accuracy increased as the essay scores increased, while vocabulary use ratio showed the opposite pattern.

Descriptive statistics for textual features and vocabulary support across three score levels.

The prediction of textual features and vocabulary support on students’ writing performance in integrated listening-to-write assessments

In the first regression model, fluency was entered in the first step, followed by organization, grammatical accuracy, mean number of T-units in each sentence, mean T-unit length, and lexical sophistication. The second regression model included accurate use of provided words in the first step, followed by vocabulary use ratio.
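The logic of entering predictors in a fixed order can be sketched as follows: at each step one more predictor is added, the model is refitted, and the change in R² (ΔR²) is recorded as that predictor's incremental contribution. The sketch uses only the Python standard library and toy data, omits the significance tests for each ΔR², and is not the software or the data used in this study; all function names and values are hypothetical.

```python
def solve(a, b):
    """Solve the linear system a x = b by Gaussian elimination with pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for i in reversed(range(n)):
        tail = sum(m[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (m[i][n] - tail) / m[i][i]
    return x


def r_squared(xs, y):
    """R^2 of an ordinary least squares fit of y on columns xs plus an intercept."""
    n = len(y)
    cols = [[1.0] * n] + [list(col) for col in xs]
    k = len(cols)
    xtx = [[sum(cols[i][t] * cols[j][t] for t in range(n)) for j in range(k)]
           for i in range(k)]
    xty = [sum(cols[i][t] * y[t] for t in range(n)) for i in range(k)]
    beta = solve(xtx, xty)
    fitted = [sum(beta[i] * cols[i][t] for i in range(k)) for t in range(n)]
    mean_y = sum(y) / n
    ss_res = sum((y[t] - fitted[t]) ** 2 for t in range(n))
    ss_tot = sum((value - mean_y) ** 2 for value in y)
    return 1.0 - ss_res / ss_tot


def hierarchical_steps(y, ordered_predictors):
    """Return (name, R^2, delta R^2) for each step of a fixed entry order."""
    steps, entered, previous = [], [], 0.0
    for name, values in ordered_predictors:
        entered.append(values)
        current = r_squared(entered, y)
        steps.append((name, current, current - previous))
        previous = current
    return steps


# Toy data for five hypothetical essays (not study data).
scores = [2.0, 0.0, 5.0, 2.0, 6.0]
order = [("fluency", [1.0, 2.0, 3.0, 4.0, 5.0]),
         ("organization", [1.0, -2.0, 2.0, -2.0, 1.0])]
for name, r2, delta in hierarchical_steps(scores, order):
    print(f"{name}: R2 = {r2:.3f}, delta R2 = {delta:.3f}")
```

Because ΔR² depends on what has already been entered, a predictor that overlaps with earlier ones receives credit only for the variance it explains beyond them, which is why the order of entry matters when interpreting each step.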

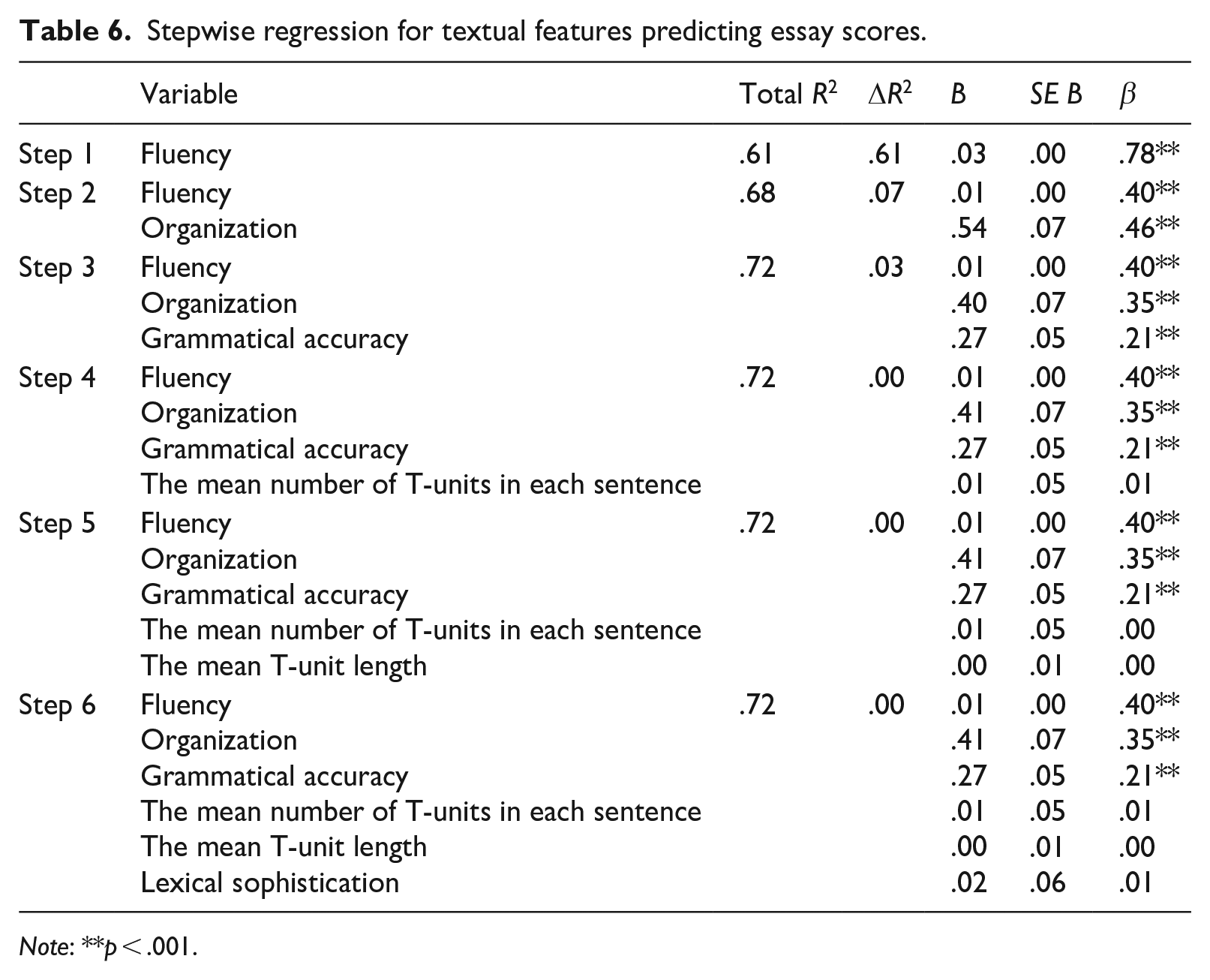

As Table 6 demonstrates, the textual features (i.e., fluency, organization, and grammatical accuracy) accounted for 72% of the variance in essay scores, with sole contributions from fluency (Model 1, Δ

Stepwise regression for textual features predicting essay scores.

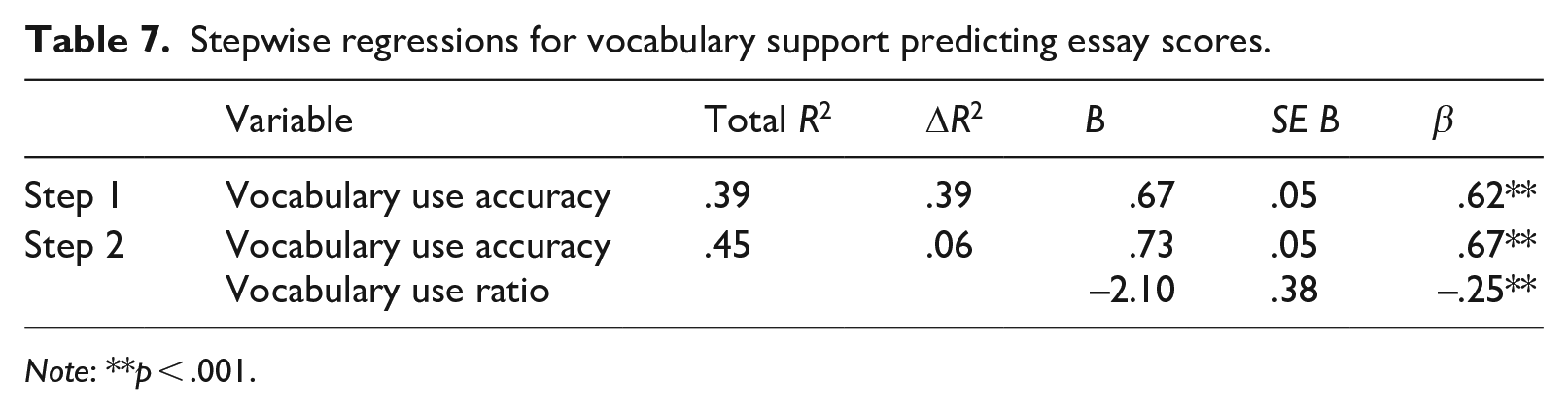

Table 7 shows that the vocabulary use of scaffolding materials accounted for 45% of the essay score variance, with the major contribution stemming from vocabulary use accuracy (Model 1, Δ

Stepwise regressions for vocabulary support predicting essay scores.

In summary, the results of the HMR analyses indicate that written discourse features had varying degrees of impact on the essay score variance. The first regression model revealed that fluency was the most predictive variable of the essay scores, followed by organization and grammatical accuracy. Regarding the influence of vocabulary scaffolding materials, the second regression model indicated that vocabulary use accuracy had more predictive power for the essay scores than vocabulary use ratio.

Discussion and implications

The results of this study address the construct validity of integrated listening-to-write assessments by investigating the relationship between written discourse features, vocabulary support, and the resulting scores. Validation research involves collecting five different types of empirical evidence to support proper interpretations and uses of test scores, one of which is examining the internal structure of test-taker responses (Chapelle, 2020). This research is critical for supporting proper score interpretations and uses that warrant the implementation of an innovative integrated writing task for adolescent EFL learners. According to the HMR analyses, several textual features significantly predicted students’ writing performance in the integrated listening-to-write assessments. That is, the regression model showed that fluency (i.e., text length), organization, and grammatical accuracy were significant predictors of the current study’s integrated listening-to-write scores. Successful performance in listening-to-write assessments depends on students’ ability to (1) comprehend the lecture content by identifying key ideas and supporting details and (2) construct a piece of writing with minimal grammatical errors and proper organization. Students who do both successfully will naturally produce longer texts.

The results of our study coincide with those of studies focusing on adolescent L2 learners’ independent writing tasks. Uccelli et al. (2013) and Wolf et al. (2018) indicated that text length, organization, and grammatical accuracy were significant predictors of independent writing scores. Among these significant predictors, text length was the major contributor to determining essay quality, as our study also demonstrated. Our findings also correspond with Cumming et al. (2006), whose investigation of discourse features included a listening-to-write task for adult L2 learners, finding that higher scoring writers tended to write longer compositions and clauses with greater lexical variations and grammatical accuracy. This coincides with another finding from Cumming et al.’s (2005) study, which suggested that lower scoring students tend to write shorter noun phrases and compositions and repeatedly use words provided from source texts with a lack of grammatical accuracy.

Looking more broadly, fluency, organization, and grammatical accuracy have been found to be important for successful performance in adult L2 learners’ integrated writing assessment (e.g., Cumming et al., 2005, 2006; Gebril & Plakans, 2013; Plakans, 2009a; Plakans & Gebril, 2017; Plakans, Gebril, & Bilki, 2019) regardless of the type of integration, including reading–listening–writing and reading-to-write. These studies emphasized that higher scoring writers are more likely to produce longer texts with appropriate organization and grammatical accuracy than their lower scoring counterparts. The current study confirms that listening-to-write tasks for adolescent L2 learners are no exception in assessing these three important writing features as target constructs. Although adolescent L2 learners differ in many ways from adult learners, the findings of this study coincide with previous research focusing on adult L2 learners. That is, adolescent learners must strive to develop skills to transfer their own understanding of the source material into a coherent piece of writing. It is critical for them to practice composing a sentence, organizing a few sentences based on idea units, and then identifying and correcting language errors during the course of writing.

The present study also investigated how the use of provided vocabulary words predicted integrated listening-to-write performance. The regression model demonstrated that students who used the provided words accurately and meaningfully in their written products tended to receive higher listening-to-write scores than those who frequently copied the words and used them randomly in their paragraphs. The lower scoring students in this study used the provided words in their writing more often than did their higher scoring counterparts (see Table 5 for the vocabulary ratio). On the other hand, higher scoring students exhibited incidental use of the provided words in an attempt to express their understanding of the lecture content in their own words. These results correspond with the findings of Kyle (2020) and Weigle and Parker (2012) in that, compared with higher scoring writers, their lower scoring counterparts mainly copied words, phrases, or content from the source texts when performing integrated writing tasks. In the present study, the vocabulary support was provided to aid listening comprehension, but this scaffolding did not necessarily bring about students’ improved performance in the listening-to-write tasks, nor did it disproportionately benefit a particular group of students. Those who chose not to use the provided words in their essays were not systematically penalized. Thus, we argue that vocabulary support does not jeopardize the interpretation of listening-to-write scores.

All in all, vocabulary support should be considered a useful scaffolding tool when administering integrated listening-to-write assessments to adolescent learners. Research in the field of L2 listening has shown that pre-listening activities (e.g., providing key vocabulary) can bolster L2 learners’ vocabulary knowledge, which is required for listening comprehension (Babaei & Izadpanah, 2019; Chung, 2002; Jafari & Hashim, 2012; Madani & Kheirzadeh, 2022). Yet in our study, we did not have empirical data to claim that vocabulary support actually helped students’ listening comprehension, except for positive anecdotal comments that we received from the English teachers and participating students. Clearly, more research is needed to investigate the efficacy of vocabulary support in integrated writing assessments that involve listening skills, such as including comprehension questions before the writing stage.

Conclusion

While the findings of this study make an important contribution to the literature of L2 integrated writing assessment for adolescent learners, the study has limitations that should be noted. First, as the content of the lectures was related to science alone, including lectures related to more diverse fields (e.g., art, history, or politics) may minimize the topic effect. Second, in accordance with previous research (e.g., Cumming et al., 2005; Gebril & Plakans, 2009; Sawaki et al., 2013; Shi et al., 2020), we chose measures of textual features to understand how these features are related to integrated listening-to-write performance; however, using other measures of discourse characteristics may provide different findings. For example, studies have suggested the use of corpus data, such as average reference corpus word range (i.e., the frequency of a word in reference to a corpus of texts), the average reference corpus bigram and trigram (i.e., combinations of two and three words, such as

Third, while the multicollinearity of the predictor variables was checked, it is possible that the vocabulary ratio may have been influenced by the number of words the students wrote in their essays (i.e., the vocabulary ratio decreases when students write lengthy essays using their own vocabulary bank). Fourth, this study did not have a control group, so we could not conduct group comparisons. This limits our understanding of the impact of vocabulary support on students’ integrated writing performance. In future studies, researchers should consider dividing students into experimental and control groups to examine whether vocabulary support affects their understanding of the listening materials as well as their integrated writing performance. Since the integrated listening-to-write tasks in this study were given to the students as extracurricular activities, which were not linked to their school performance, their motivation levels might have varied. Consequently, some students might not have made the same effort as they would have if these tasks were given as regular classroom assessments. Another limitation is that since the participants in this study were all Taiwanese EFL learners, it may be difficult to generalize the findings to EFL learners in other parts of the world. Indeed, our findings may have varied had our study included English learners from diverse cultural and language backgrounds. Last, it should be noted that the researchers had only limited information about the students’ overall English proficiency prior to the study; as such, we have a limited understanding of how the students’ English proficiency levels may interplay with the current integrated writing tasks.

Overall, our findings demonstrate how textual features and vocabulary support predict adolescent English learners’ integrated writing performance. The present study sheds light on the L2 writing performance of an under-researched population of learners. To successfully perform a listening-to-write assessment, adolescent learners must write a text of adequate length, appropriately organize their understanding of the listening materials, and make as few grammatical errors that interfere with meaning as possible. We found that vocabulary support did not pose a threat to the validity of integrated listening-to-write tasks. Thus, practitioners, such as teachers and test developers, can consider providing adolescent learners with vocabulary support as a pre-listening activity, given the importance of vocabulary knowledge in listening comprehension.

Footnotes

Acknowledgements

Our special thanks go to Warren Merkel for his professional feedback. We would also like to thank the four anonymous reviewers for their assistance in revisions of this article.

Declaration of conflicting interests

The authors declared the following potential conflicts of interest with respect to the research, authorship, and/or publication of this article: This study was conducted when Dr. Ohta was affiliated with the University of Iowa.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.