Abstract

This paper addresses the intersection of testing and policy, situating test-driven impact and validation within the context of policy-led educational reform in Korea. I will briefly review the existing validation models. Then, arguing for an expansion of the conventional conceptualization of consequential validity research, I use Fairclough’s dialectic–relational approach in critical discourse analysis (CDA), positioned in the critical and poststructuralist research tradition, to evaluate social realities, such as the intended and actual impact of policy-led testing. I take, as an example, the context of the development of the National English Ability Test (NEAT) in Korea, which had been used as a means of implementing government policies. Combining Messick’s validity framework for consequential evidence, Bachman and Palmer’s argument-based approach to validation (assessment use argument, AUA), and Fairclough’s dialectic–relational approach, I will illustrate how the impact of policy-led testing is performed and interpreted as a sociopolitical and discursive phenomenon, constituted and enacted in and through “discourse.” By revisiting the previous Faircloughian research works on NEAT’s impact, I postulate that the discourses arguing for and against social impact acquire their meanings from dialectical standpoints.

Keywords

The field of language testing has demonstrated a notable critical turn, moving “beyond the text” to embrace the sociopolitical dimension. Shohamy (2008) pointed out that the meaningfulness of language testing should be judged “in relation to [its] impact, ethicality, fairness, values, and consequences” (p. 363). Within this view, meaningfulness is intrinsically connected with a test’s uses and its effects on wider concerns such as language policy. Recent interactions between language policy and testing are hoped “to broaden our thinking about measurement [in order] to consider the policy context of assessment” (Hill & McNamara, 2015, p. 42).

While a collaborative intersection between language policy and testing has been acknowledged as important (Im et al., 2022), its feasibility has been called into question (Chalhoub-Deville, 2016). In current practice, testing standards are set, designed, and administered via policy initiatives, such as in the Common European Framework of Reference for Languages (CEFR) (McNamara, 2010), and in No Child Left Behind (NCLB) and Race to the Top (RTTT) in the United States (Chalhoub-Deville, 2016). These initiatives drive the development, use, or in some cases, suspension of tests. As Young (2012) wrote, these frameworks are often grounded in the vested interests of testing regime consortia, to meet collectivist policy goals.

The goal of the language policies described above is to improve aggregate and system performance, such as teacher education, economic growth, social cohesion, and national competitiveness (Chalhoub-Deville, 2016). These indicators, which reflect the values of powerful stakeholders, pre-authorize testing constructs, which in turn have impacts on item types and rating rubrics through reform procedures. Such reform-based testing aims at bringing about a “positive impact” within the sociopolitical context.

Scholars have differentiated the “impact” of a test on society from its “washback” on teaching and learning (see McNamara, 2010). Specifically, “test impact” has been discussed in Bachman and Palmer’s (1996) framework of test usefulness, in terms of the ways in which test use involves, and affects, individuals, educational systems, and societies. This impact of tests can be investigated “at the macro and micro level . . . in terms of direct and indirect influences on test takers, the educational community, or society at large” (Chalhoub-Deville, 2009, p. 119). In this paper, I employ the term social impact to refer primarily to the macro level of test use and the effects of tests on educational communities and society (following Chalhoub-Deville). However, I also broaden this definition to include another important agent in the ecology of test impact: the media.

If policy-driven language testing is inextricable from the mechanisms relating to people’s lives, educational systems, and societies, it is inherently ideological and socially constituted (McNamara & Roever, 2006; Shohamy, 2001). However, few attempts have been explicitly made to validate claims of social impact in policy-driven language testing. In this paper, I will briefly review existing validation models with a view to understanding how they treat matters of social impact. Then, arguing for a conceptual expansion of consequential validity research, I propose using critical discursive approaches, such as critical discourse analysis (CDA; Fairclough, 1992, 2001; Weiss & Wodak, 2003; Wodak & Meyer, 2015), to collect validity evidence for investigating claims of social impact in the use of policy-led language tests. Specifically, combining Bachman and Palmer’s (2010) argument-based approach to validation (assessment use argument [AUA]), and Fairclough’s dialectic-relational approach in CDA (Wodak & Meyer, 2015), I will illustrate how the impact of policy-led testing can be interpreted as a sociopolitical phenomenon, constituted and enacted in and through “discourse.” The politicization of assessment (e.g., political imposition of test constructs) is often “understood most fruitfully in terms of the discourses within which language tests have their meaning” (McNamara & Roever, 2006, p. 199). Although many researchers (e.g., Harding et al., 2020; Khan, 2017; Macqueen & Ryan, 2019; McNamara et al., 2014) have explored discursive approaches in critically examining policy-related discourses surrounding test use, as well as within test responses, Fairclough’s approach has not been used to weigh social impacts (Shin, 2021).

I use Fairclough’s dialectic–relational approach, positioned in the critical and poststructuralist research tradition, to evaluate the social impact of policy-led testing. Texts, beliefs, and knowledge about testing and impact, which are often rationalized into “regimes of truth” (Foucault, 1975), are constructed by the possibility of multiple positions within and across different and competing discourses. As social impact is open to change through the struggle over its “true” meaning, its validation is also a continuous process. I take, as an example, the context of the development of the National English Ability Test (NEAT) in Korea, which had been used as a means of implementing government policies (Im et al., 2020; Shin, 2019; Shin & Cho, 2020, 2021). Through analysis of this example, I contribute to the understanding of validation as itself a critical and discursive practice bound up with social impact.

This paper thus addresses the intersection of testing and policy, situating test-driven impact and validation in a critical and discursive research tradition, within the context of policy-led educational reform in Korea. The claim of social impact will be discussed in the validation procedure: how the planning and implementation of a newly developed policy-led test are perceived in (1) government documents and related research reports (developers’ evidence) and (2) the media (diverse and embattled stakeholders’ voices). This study will examine the discursive practice of the macro-level impact for the intended test use by focusing on the complicated battles in the policy document and media discourse.

An argument-based approach to validation: Issues in social impact

Policy-driven language testing needs to be understood sociopolitically, especially when manifestations of its power engage with high-stakes policies (Shohamy, 2006). Validity theories tend to elide the sociopolitical dimensions of language testing, especially in social gatekeeping around citizenship or professional advancement, even though tests and their constructs are inevitably politicized in the context of test implementation (McNamara & Roever, 2006). Conceptual and operational frameworks to ward off negative impact have been called for from the beginning of test development, as test developers are required to “look into the future, to picture the effect” (Fulcher & Davidson, 2007, p. 144). Messick’s (1989) framework, which includes the social dimensions of testing, opened the door to overcoming institutionalized beliefs in ostensibly psychometric properties of validity. His model highlighted the role of consequences in validity (Kane, 2013; McNamara & Roever, 2006), and many scholars have extended this socio-humanities discourse to maintain public discussion on the responsibilities of language testers, accountability toward test-takers (Norton, 1997), democratic principles (Shohamy, 2001), value-intervened justice (McNamara & Ryan, 2011), and consequences (Cheng, 2014).

However, Messick’s construct-focused tradition tends to downplay the policy-embedded characteristics of testing, making sociopolitical issues relevant only insofar as they pertain to construct validity. Even when inappropriate use of testing cannot be empirically traced to construct underrepresentation or construct-irrelevant variance, undesirable impacts should be critically discussed. Adverse consequences of test development appear unpredictably; for example, they may emerge from media intervention, and thus may not be directly related to construct issues in testing (Shin & Cho, 2020). Chalhoub-Deville (2016) emphasized that “separating consequences from validity suggests to test developers and other professionals that such research is not integral to the technical quality of the assessment program and can be pushed more easily aside or allocated to some stakeholders” (p. 459). Shohamy (2001) and Lynch (2001) supported critical investigation of test use and consequences in validation, while Bachman (2005) described such efforts as “impractical” or as matters to be entrusted to ethical and professional perspectives, a position treated with skepticism by critical researchers (e.g., McNamara & Roever, 2006).

Scholars have continued to explore models for reconceptualizing validation, recognizing the usefulness of argument-based approaches where different forms of validity evidence are integrated, to support inference interpretations and test use. A test is now considered “valid” when its validity claims are defensible with the collected evidence in specific contexts of testing. Validation is not an all-or-nothing decision, but an ongoing process of playing out arguments about inferences and uses (Bachman, 2005; Chapelle et al., 2008; Fulcher & Davidson, 2007). Validity arguments are constantly vying with each other. They are open to contestation, resistance, or negotiation based on newly collected evidence or differently evolving testing situations. In short, validation is viewed as “a generative process that promotes continued inquiry into assessment practice” (Koch & DeLuca, 2012, p. 100).

Current approaches to validation encompass systematic procedures for evaluating arguments about inferences and uses associated with tests-in-use or -development. Bachman and Palmer’s (2010) AUA, for instance, based on Toulmin’s (2003) argument structure, has been praised as a useful model (Pardo-Ballester, 2010) for including “greater detail and structure in its consideration of test utilization and impact, including issues such as sufficiency, equitability, values, and consequences” (Johnson & Riazi, 2015, p. 35). However, operational problems are often discussed. Kane (2013) criticized Messick’s validity as “conceptually rich and suggestive . . . but not easy to implement effectively, because it does not provide a place to start, guidance on how to proceed, or criteria for gauging progress and deciding when to stop” (p. 8); Kane’s framework has also been criticized for the same reason (Chalhoub-Deville, 2016; Im et al., 2019). As for Chapelle et al.’s (2008) framework, separate steps for investigating test use and consequences were merged into one (Im et al., 2019). Bachman and Palmer’s (2010) AUA framework comprehensively conceptualized test use and consequence in connection with actual test design and implementation. However, there have been few attempts to provide explicit procedures for validating the viability of social impact in policy-driven testing. There is still, ultimately, reluctance among validation researchers to operationalize the complicated nature of social impact.

Language testing as discursive practice

I argue that the analysis of the conflicting discourses of policy-led testing can lead language testers to better understand the nature of social impact, revealing the ways in which the interests of diverse stakeholders are shaped, and helping language testers to optimally plan, execute, and revise assessment instruments within surrounding social systems (McNamara, 2010). “Discourse,” in the critical discursive tradition, does not simply refer to the level of language above the sentence, nor the structuralistic result of an already given reality. Instead, it is text and talk in the related social context, or as social action (Wodak & Meyer, 2015), offering different (positive and negative) versions of realities (e.g., about political viability, administrative practicality, or financial feasibility) and serving conflicting power relations. A range of institutional discourses (e.g., in the media or government documents) provides the network by which a dominant version of social impact is produced, resisted, and contested in the implementation-in-context of policy initiatives, from the framing of policy problems to the negotiation and revision essential to policymaking, the resulting products, and their dissemination and appropriation. Discourse is thus both constitutive and constructive of policy(-led testing).

Discussions within the scope of validity models therefore need to be expanded to examine social impact, through “the sort of cultural critique that is the subject matter of contemporary social theory” (McNamara & Roever, 2006, p. 244). CDA is one such approach, with decades of use in interpreting discursive conflicts, historical events, and sociopolitical realities. A CDA-based validation has the potential to anticipate a policy’s possible impacts before and after the policy is implemented. This section presents the tenets of CDA and clarifies their relevance to language testing. I illustrate how Fairclough’s dialectic–relational approach, one of a wide spectrum of CDA approaches, can be applied to argument-based validation. At this early stage of developing the critical discursive approach to validation, I hope to refine the ideas by responding to critical feedback and engaging in dialogue with other researchers across different testing systems.

A critical discursive approach to investigate social impact

This section presents CDA guidelines, focusing on Fairclough’s dialectical–relational approach (Wodak & Meyer, 2015), that can be utilized to support or challenge the claim of social impact in the validation of policy-driven testing. While early and capitalized “CDA” is known to be informed by structuralist and/or Marxist theories, the “critical discursive” approach in this study is not necessarily linked to “big C(ritical)” (emancipatory) research orientations endemic to structuralist approaches to conceptualizing impact as the product of a clearly defined social system. Following a poststructuralist perspective, discourse is interpreted enigmatically as fluid, contradictory, and changing constantly, and the distinction between texts and social realities is blurry (Baxter, 2010). For that reason, Fairclough’s dialectical–relational approach should be considered as a method for test validation in situations where social phenomena pertaining to high-stakes testing need to be dialectically perceived not only as constructed through texts, but also construed as ideologically reflected.

Fairclough (1992, 2001, 2002) viewed discourse as socially mediated action, integrated linguistic analysis with poststructuralist categories (e.g., intertextuality and discourse), and attempted intertextual analysis as a way of reciprocally linking texts and ideologies, all of which can align with critical and poststructuralist tenets. Nevertheless, his approach focused on the dialectical relationship between discourse and materiality, social actions and structures, and different dimensions of discursive practice. Micro-level analysis of attested data was emphasized. Accepting the notion of complicated networks of shifting power relations, he also held on to the inflexible nature of power (linking to ideology) and separated it from the (analytic stage of) textual and intertextual practice. His approach seems to bridge the (early) critical and (poststructuralistic) discursive gap, arguing that social realities impinge upon discursive construction. In accepting that the social and textual world is changing, I try to be conscious of the need for Fairclough’s approach to locate “social impact” grounded in the conceptualizations of validity in the critical and discursive research tradition.

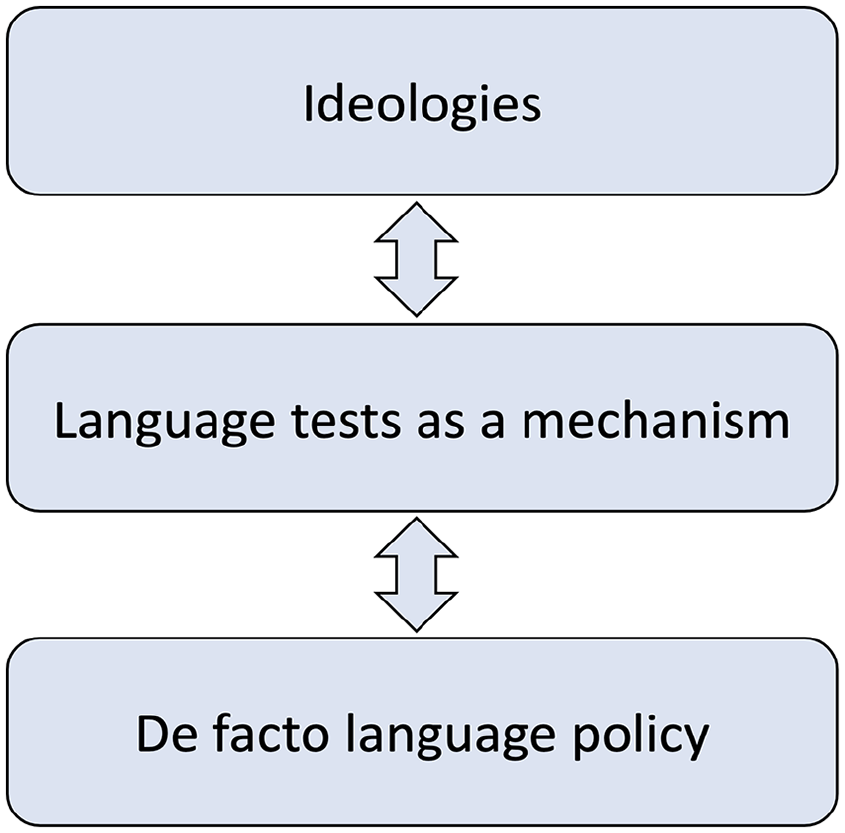

Before further considering Fairclough’s approach in argument-based validation, I briefly present Shohamy’s (2006) “critical approach to policy-driven testing” and test-based policy implementation in Figure 1.

Figure 1. Language tests as mediating mechanisms affecting language policy (based on Shohamy, 2007, p. 121).

Figure 1 illustrates the specific mechanism by which language tests can be used to transform ideologies into de facto policy (and vice versa). According to Shohamy (2006, 2007, 2008), the connection between language policy and testing works in two directions: one involves language testing as policy per se, and the other, de facto language policy that is brought about by the use of the testing system. Shohamy asserts that a “set of mechanisms” (rules and regulations, language education policies, language tests, language in public space, ideology/myths/propaganda/coercion) mediates between ideologies and practice, activating de facto language policies. Language testing is among these mechanisms.

The ideologies, mechanisms, and practices in Shohamy’s framework, however, can be considered to be constituted in and through discourse, where discourse provides the network by which discoursal meaning is produced, disseminated, and inculcated. As such, Shohamy’s framework in Figure 1 can be reconceptualized in Figure 2 to illustrate a discursive framework for understanding policy-driven language testing.

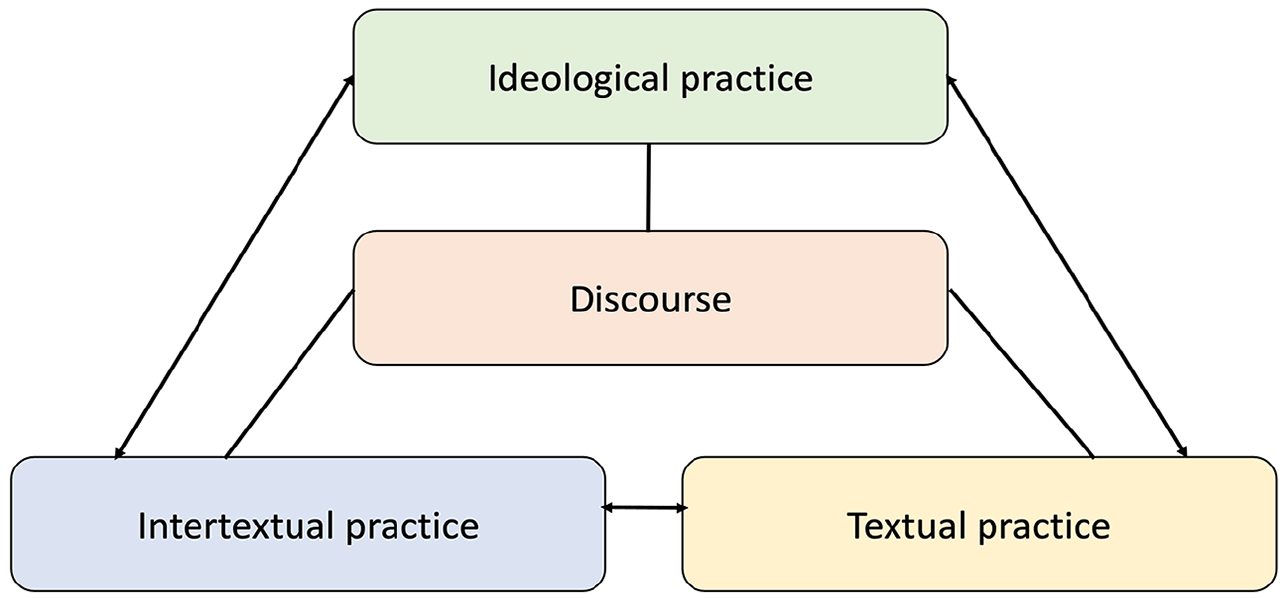

Figure 2. Discursive framework for understanding policy-driven language testing (based on Fairclough, 2001, p. 21).

The conceptual framework in Figure 2 prioritizes “discourse” as an omnipresent component of policy-driven language testing, which is simultaneously recognized as textual, intertextual, and ideological practice. The iterative analysis (moving from texts to ideologies) broadens the conventional scope beyond plain interpretation of texts by framing linguistic analyses within situational and sociopolitical contexts. Discourse is conceptualized as a three-dimensional process of social interaction; a discursive event is identified simultaneously as a piece of text, an instance of intertextual interaction involving processes of producing and interpreting texts, and an instance of ideological action linked to power relations. The conceptualization helps to synthesize linguistic and sociopolitical analysis.

When the social impact of policy-led testing is perceived as dialectical–relational between textual and non-textual, discourse and structure, or micro- and macro-dimensions, the discursive approach in Figure 2 can be conceived and operationalized by oscillating between textual, intertextual, and ideological practice. The social phenomenon (e.g., positive and negative impacts) cannot be analyzed as a monolithic entity that predisposes ideologies, mechanisms, and practices. The relationship, for example, between textual and ideological practice, mediated by discourse, needs to be understood as dialogical: “discourse carries but also generates ideologies through social actors’ behaviours” (Barakos, 2016, p. 38).

Barakos (2016) suggested an interdisciplinary framework for language policy and critical discourse studies, expanding Shohamy’s (2006) framework to a discourse-historical approach (Reisigl & Wodak, 2009). The framework in Figure 2 resembles Barakos’ discursive approach to language policy. However, it is more directly reflective of Fairclough’s (2001) dialectical–relational approach with a three-dimensional (textual, discursive, social practice) analysis, accompanied by a shunting back and forth in description of text disposition, interpretation of intertextuality strategies, and explanation of ideological orientations. Fairclough’s approach brings linguistic analysis at the micro level of social action together with analysis at the macro level of social structure in understanding not only the workings of individual texts, but also the ways in which they traverse different genres and styles and enter into networks of other discourses in relation to the larger discursive formation such as social structure.

Fairclough’s three-dimensional analysis can be elaborated as follows: First, textual analysis is conducted by using Halliday’s systemic functional grammar, examining the content and formal features of texts. For example, what aspects of social phenomena are reworded, overworded, or metaphorically expressed? What patterns of transitivity are found? Who (Agent) is empowered over whom (Affected)? Is the Agent inanimate or deleted (in a passive sentence)? Is nominalization frequently used? All these textual features relate to intertextual and ideological functions.

In Korea, for example, there was media pressure on the development of NEAT to resolve the problems in English education. Chosun Ilbo—a Korean newspaper—accentuated government involvement in a newly developed English test. It ran an article on 31 July 2007, titled, “Any problems? Concerns on increasing private education . . . .” in which overwording with similar meanings was found, with such words and phrases as “impossible,” “unrealistic,” “insufficient,” “failed attempt,” “parents are worried,” “only good for private institutes.” The article was preoccupied with “problem” texts (Shin, 2019), as it later alluded to a new test as a “solution” to the “crisis” phenomenon and aligned the selected texts to the position for the “anticipatory and positive impact” pertaining to a government-led English test.

Second, intertextual analysis is conducted to understand production, dissemination, and consumption of the target discourses, such as how texts are circulated and transformed within the process of distribution. Intertextuality refers to heterogeneity of texts constituted by combinations of diverse genres and styles. Related texts influence, assimilate, reflect, contradict, or differ from each other. Referring again to the context of NEAT development, for example, Shin (2019) identified interconnected texts of “problems” and “solutions” in the media, among editorials, statistical reports, reader contributions, or interview quotes, in terms of “demand and supply” (market-determined) or “profit and cost” (utilitarian) values. Attempts can be made to trace the chain of prior-posterior texts among different genres, styles, or discourse types.

Third, ideological analysis can be conducted to examine how connected texts are shaped by, or aligned with, related ideologies. Macro-analytically, sociopolitical realities behind discourse (e.g., secondary school students’ limited fluency in speaking, wasteful spending on private education, and NEAT development as a solution) can be explained by examining the power relations and ideological conflicts about English-language education, government-led testing, or test-driven education reform. The social (ideological) effects depend upon the target stakeholders, who evaluate, comprehend, or resist the accessible discourses. Ethnographic data can also help to understand the voices (un)heard.
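As a rough illustration only, the first (textual) step described above could be supported by a simple count of “problem” wording before close reading begins. The minimal Python sketch below reuses the words and phrases quoted from the 2007 article as its lexicon; the sample sentence, the function names, and the per-100-words density measure are hypothetical and are not part of the analyses in Shin (2019). Such a count can only flag candidate passages for overwording; it does not replace the interpretive work of intertextual and ideological analysis.

```python
import re
from collections import Counter

# Lexicon taken from the words and phrases quoted in the text above.
PROBLEM_LEXICON = [
    "impossible", "unrealistic", "insufficient",
    "failed attempt", "parents are worried",
    "only good for private institutes",
]


def count_overwording(text, lexicon=PROBLEM_LEXICON):
    """Count case-insensitive, whole-phrase occurrences of each lexicon item."""
    lowered = text.lower()
    counts = Counter()
    for phrase in lexicon:
        pattern = r"\b" + re.escape(phrase) + r"\b"
        hits = len(re.findall(pattern, lowered))
        if hits:
            counts[phrase] = hits
    return counts


def density_per_100_words(text, counts):
    """Lexicon hits per 100 running words (a hypothetical summary measure)."""
    n_words = len(re.findall(r"\w+", text))
    return 100.0 * sum(counts.values()) / n_words if n_words else 0.0


# Hypothetical sample text, not a quotation from any newspaper.
SAMPLE = (
    "Critics call the plan unrealistic and the budget insufficient. "
    "Parents are worried that this failed attempt is only good for "
    "private institutes, and some call it unrealistic again."
)

counts = count_overwording(SAMPLE)
print(counts.most_common())
print(round(density_per_100_words(SAMPLE, counts), 1))
```

A high density in one outlet or period, relative to another, would be a prompt to return to the texts themselves and ask whose “problems” are being foregrounded and to what end.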

Critiques of CDA have also been reported (Barakos, 2016). It should be noted that there is no single dominant model of CDA that can be consistently applied to test validation. As Blackledge (2005) emphasized, CDA approaches are political in their orientation to understanding sociopolitically constituted realities, are interdisciplinary in their scholarship and approaches, and diverse and eclectic in their focus and topics.

Example in the context of Korea: NEAT

I now present an example of evaluating social impact through a critical discursive form of inquiry to delineate a complicated phenomenon constructed in the process of policy-led test development. By bounding social impact inquiries within a Faircloughian approach, I will assert that key features in critical discursive approaches can contribute to the generative process of developing argument-based approaches to policy-driven test validation.

NEAT was developed, implemented, hailed, criticized, and abolished in a period of discursive conflicts mostly between 2007 and 2014 (see Kim & Isaacs, 2018). Adverse representations of NEAT could have been negotiated, amended, or lessened if validity evidence for its social impact had been seriously collected and brought to bear on the discursive battles. The negative view of NEAT development and implementation could have been predicted and evaluated with empirical data; test providers could have entered the site of discursive struggle and highlighted plans for mitigating adverse impacts at the time of policy formulation. Fairclough’s approach is applied here in a “retrospective” format to the period before and while NEAT was mandated to become operational.

NEAT-driven policies were formulated to shape and improve educational practices and outcomes in Korea, mostly in the context of secondary schools. NEAT was a test of English proficiency, fervently developed and implemented with the intent of neoliberal technocratic education reform, but was suddenly invalidated and abolished (Im et al., 2020; Shin & Cho, 2020). Sociopolitical impetus toward the development of a new homegrown test to supplant imported tests (TOEIC, TOEFL), measuring four commonly recognized skills of English, and ensuring global competence, was rooted in the belief that it could solve English-language-related problems in Korean education and society (e.g., limited fluency in English speaking, private spending on English education).

The discourses of homegrown English tests were to be communicated loud and clear to the public, when the media discourse highlighted the so-called “TOEFL crisis” in 2007 (Shin, 2019), where demand for TOEFL-iBT registration exceeded the available spaces in Korea. The media reported that Koreans spent more than 10 million dollars annually on TOEFL and TOEIC application fees, ran features on frustrated test-takers, and compared the usefulness of TOEFL and other English tests in Korea. The chronic problems of “English fever” (the Korean term yeongeo yeolpung), along with the “TOEFL crisis” (Shin, 2012), were thought to be resolvable through the implementation of a locally developed English test. An innovative agenda for English education, including the development of a new government-led English proficiency test, tentatively called “Korean TOEFL” and later named “NEAT,” was put forward in the new government’s policy documents (Shin & Cho, 2021). The test was developed for three levels, with Levels 2 and 3 targeted at secondary school students. It was planned that NEAT, at these levels, would be used to screen college entrance applicants and replace the English subject in the College Scholastic Ability Test (CSAT). NEAT was greeted with loud fanfare as an effective substitute. An article in Chosun Ilbo dated 20 August 2010, for example, announced “the Korean TOEFL, NEAT, as an alternative.”

However, the media also reported on problematic situations during the test development and trial stage (Shin & Cho, 2020), such as in Hankyoreh (a Korean newspaper) articles titled “Allowing middle and high school students to take level 1 [of NEAT], Instigating private education” (19 December 2008), or “Replacement of CSAT English . . . .test-takers caught in ‘double whammy’” (27 May 2011). After discursive battles in the media, the public backlash, which was often sparked by such issues as excessive private spending on NEAT preparation, scoring credibility, and technological instability in the pilot administration, led to government officials’ mistrust and accelerated the subsequent government’s decision to terminate the full administration of the test in 2014–2015. The goal to make NEAT scores mandatory in public school contexts was suspended. The development and use of the NEAT ceased after 7 years (2008–2015).

Until NEAT’s termination, education reform goals such as quality control in English-language learning, teacher education, or competitiveness-building through English, affirmed a pivotal role for NEAT in government-led efforts. Given its powerful status in Korean society, NEAT played a significant role in the establishment of English-language (education) policies, such as gatekeeping for college admission and teacher qualification, and ensuring accountability in management of school and classroom culture. It served as a mediator in the political controversies that raged between ideologies about English-language education and de facto language policies.

The reform-led testing system of NEAT was intended to go beyond measuring English learners’ individual performance and differences and to situate test development in a context of intervention for social impact. Its policy-driven impact, which often leads to sociopolitical discourses beyond the psychometric properties of testing, had been widely discussed, particularly in the media (e.g., in an editorial of JoongAng Ilbo published on 12 April 2007, titled “TOEFL crisis, let’s solve it with a domestic test”), focusing on the extent to which it helped achieve intended reform outcomes in educational contexts. Discourses either supporting or opposing the implementation of NEAT were created, disseminated, and inculcated in state-led policy documents (Shin & Cho, 2021), and reproduced in the media (Shin, 2019; Shin & Cho, 2020).

Following are the research findings of Shin (2019) and Shin and Cho (2020, 2021), all of which drew on Fairclough’s dialectic–relational approach. Shin (2019) analyzed newspaper articles regarding NEAT, published between 2007 and 2012. The discursive representation of NEAT was analyzed from three dimensions of textual, discursive, and social practice. NEAT-related media discourse was formulated in terms of technology-focused, economic (private education expenditure), or utilitarian (the benefits of a domestic “Korean” test) practice. These discursive events were implicitly connected to the ideologies of “technopoly” and “teach-to-the-test.”

Relatedly, Shin and Cho (2020) investigated the discursive conflicts over whether NEAT would be legitimized in two leading Korean newspapers of different political orientations—Chosun Ilbo and Hankyoreh—from 2006 to 2016. Both proponents and opponents of NEAT appeared in three periods of discursive battles: creation (of NEAT-related discourses with “TOEFL crisis”), expansion (on the implementation of NEAT), and extinction. There were competing discursive strategies and underlying ideologies (e.g., neoliberal and evaluative state) for developing, expanding, or abolishing NEAT.

Government and policy documents also serve as discursive constructs that legitimize test-driven educational reforms. Shin and Cho (2021) examined Korean Ministry of Education (MOE) policy documents strongly supporting the development of NEAT and consolidating a testing regime. They focused on how representational (discourse), identificational (style), and actional (genre) meanings of policy texts are socially constituted but also constitute the NEAT-driven social realities. The NEAT development was discursively legitimized in the policy documents, which acted as an agent of social change (e.g., for agenda-setting) in terms of sociopolitical discourses.

In contemporary Korean society, the functions of language-test-as-policy, and their role in education, employment, immigration, and citizenship, are prominent. Particularly in the context of globalization, the need to examine the role of English proficiency testing and its sociopolitical aspect has become significant. As seen in the NEAT-related literature, the Korean government’s decision to lead education reform through NEAT had sociopolitical effects on how the intended test was developed, used, and perceived in educational communities and broader society. Testing issues in NEAT were embedded in a context of policy-led social impact, in which the construct of newly emphasized skills could be discursively legitimized by powerful stakeholders.

Validation framework for social impact

I now turn to developing a validation framework for social impact that draws together Bachman and Palmer’s (2010) AUA and the Faircloughian approach to CDA described above. I will explore this framework through a consideration of the NEAT mandate. The AUA approach can be applied to provide a systematic procedure for evaluating the claims of decisions and their consequences. A claim of NEAT’s social impact can be provided from the layers of the AUA framework for evaluation; for example, Claim 1 of intended consequences: “The consequences of using an assessment and the decisions that are made are beneficial to all stakeholders” (Bachman and Palmer, 2010, p. 158). As discussed above, the AUA is not easy to operationalize, because it asks for many inferences to be examined and does not provide multifaceted guidelines in the layer of Claim 1 (intended consequences). Potential threats and social controversies involving political viability, administrative operability, financial situation, and reform possibility should be duly weighed and verified, where the analysis of social impact needs to be explicitly performed and disentangled from the other steps of the validation process.

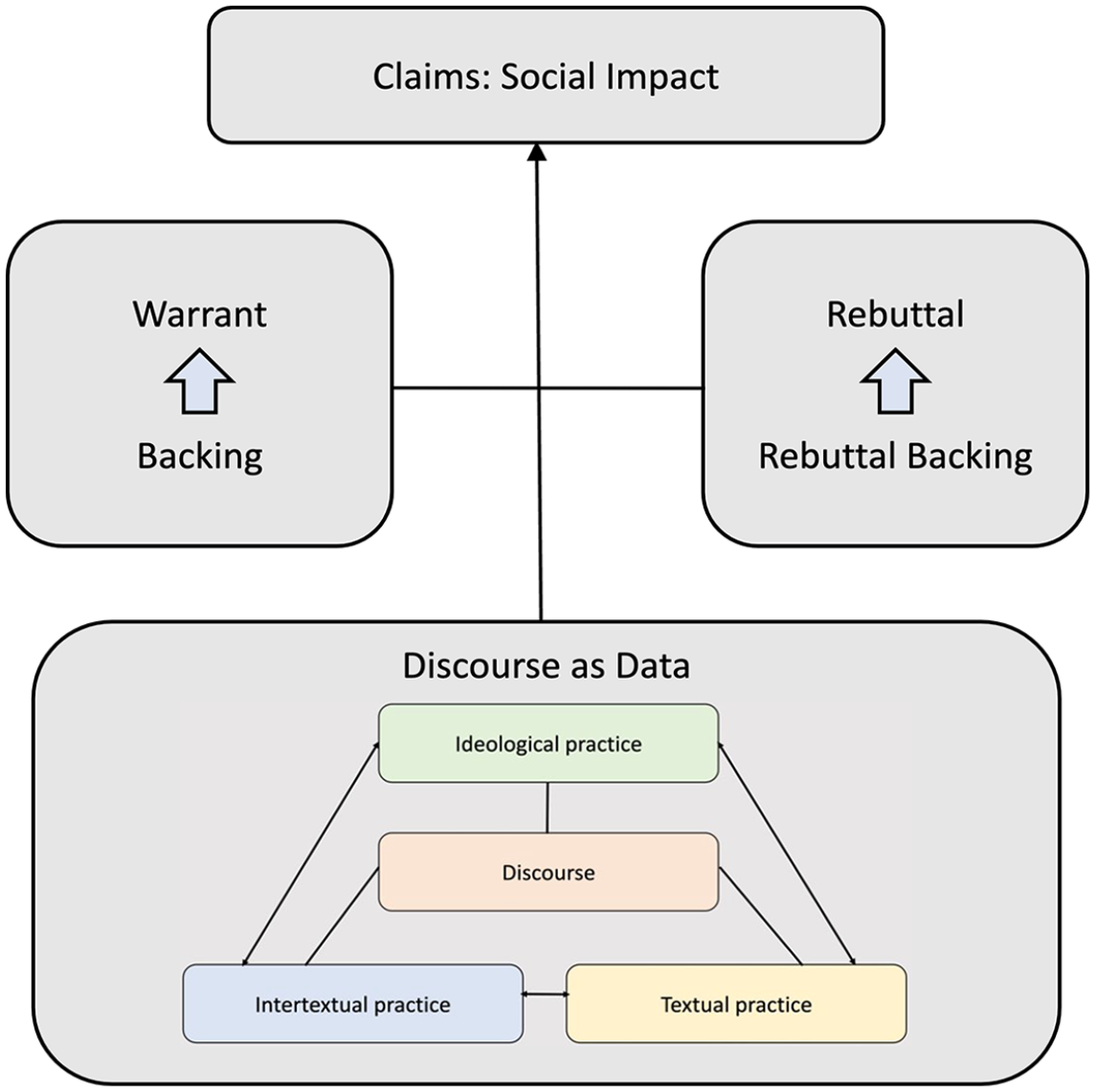

Fairclough’s three-dimensional framework seen in Figure 2 is placed within Bachman and Palmer’s AUA Claim 1 (of intended consequences), in Figure 3, with “warrants” and “rebuttals” of the validity argument for social impact. The claim is argued as follows: The use of NEAT, such as to inform the intended high-stakes decisions, results in positive social impact (e.g., educational competitiveness, changes in school and class culture, and less expenditure on private education) for all stakeholders involved. The balanced framework in Figure 3 allows for the collection and consideration of “warrants” (on the left) and “rebuttals” (on the right), and thus of both positive and negative impacts. Validity evidence for positive impact can be collected with related “backing” in different forms of data. The claim of positive impact can be challenged and disproved by presenting “rebuttals” based on the evidence of “rebuttal backing.” Arguments in warrants and rebuttals may come into conflict. Moving from data to claim, Fairclough’s dialectic–relational approach, embedded in the AUA, serves to engage anticipated and actual “discursive conflicts” during the collection and evaluation of validity evidence. The discourse as “data” in Figure 3 can be conceptualized and analyzed in three dimensions, which are elaborated in Figure 2.

Figure 3. Inferences for the claim of social impact with the Faircloughian CDA.

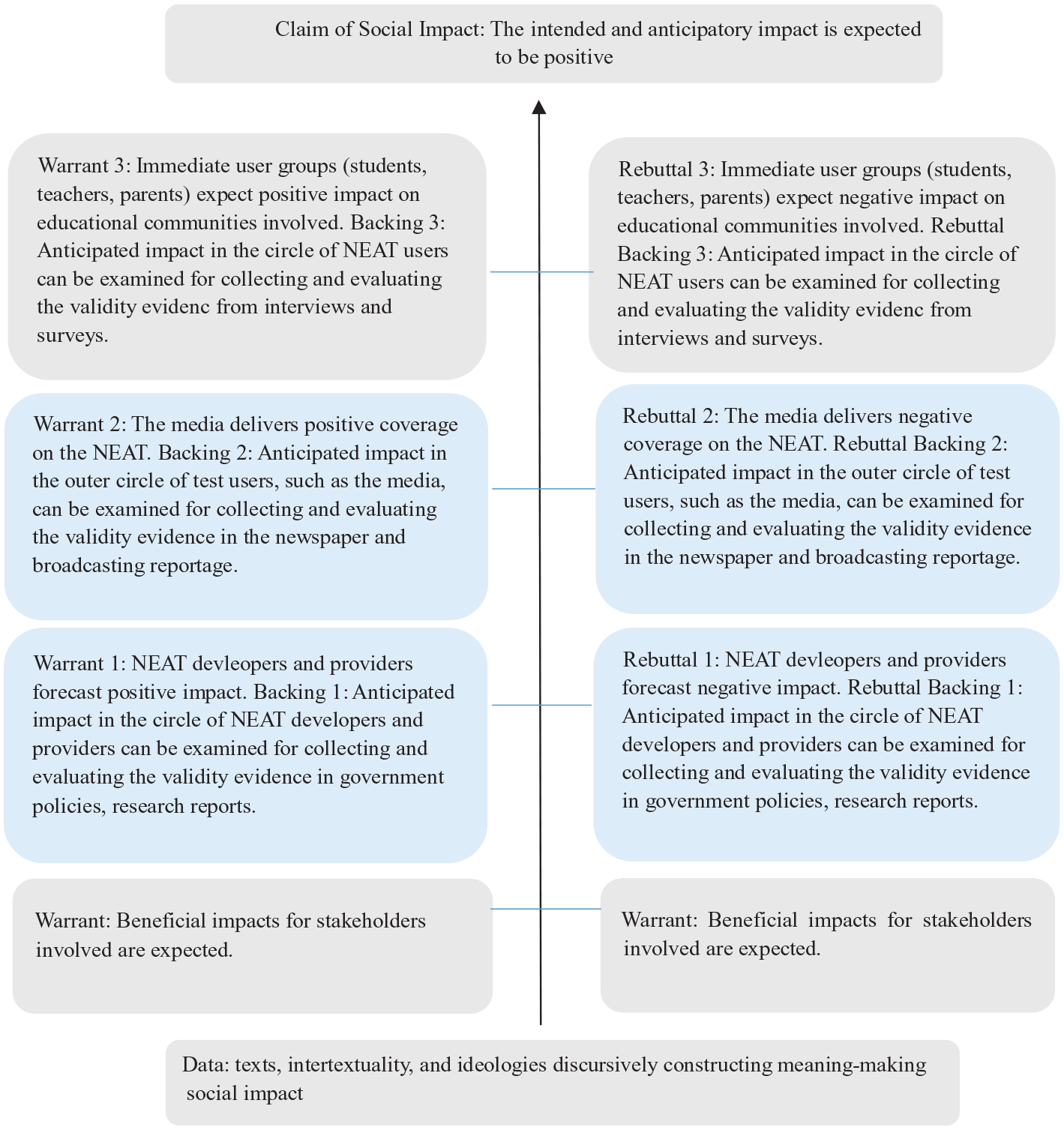

The validity arguments supporting and refuting the positive impact of NEAT are illustrated in Figure 4. In this case, the discourse data referred to in Figure 3 were gathered from policy documents (for Warrant/Rebuttal 1), media reportage (for Warrant/Rebuttal 2), or survey/interview results (for Warrant/Rebuttal 3) touching on social impact. “Warrants,” with the help of “backing,” support the link between the intended and actual impact regarding the implementation of NEAT, whereas “rebuttals” and “rebuttal backing” highlight the negative impact. Empirical evidence following a Faircloughian analysis can be collected for backing (rebuttal backing) to legitimize (reject) the warrants (rebuttals) of the test impact, where the policy goals of NEAT are intended to influence the English education systems in Korea. Three types of warrants and rebuttals can retrospectively come from (1) the circle of NEAT developers and providers, (2) the media, and (3) the immediate user groups (teachers, students, parents). The related backing (and rebuttal backing) also takes several forms, such as the review of (1) policy documents and research reports, (2) media coverage, and (3) surveys and interviews. The discourse data collected in (1), (2), and (3) may then be analyzed through the three-dimensional framework shown in Figure 2.

Figure 4. Validity argument for NEAT’s social impact (retrospective format).

Beginning with Warrant 1 and Rebuttal 1, Shin and Cho’s (2021) analysis of MOE policy documents can illustrate how a CDA approach can be employed to examine whether beneficial impact for NEAT stakeholders is legitimized. As the impact of policy-driven testing can be either positive or negative, meaning-making policy documents can be interpreted to argue for or against the necessity of NEAT in reforming English education. The Korean government pushed NEAT through from the top down, and the policy documents were the discursive site where the goals and intents of NEAT were disseminated. Shin and Cho (2021) found that test-driven policy reforms were explicitly articulated in the policy documents, which could serve as Warrant 1’s backing in Figure 4 as evidence of setting an agenda for NEAT’s success. The reform goals (e.g., improving students’ English proficiency and the English educational system) were discursively textured with neutral and reductionistic information, in which all the documents, for example, “share positivistic features of generic structure, through which the need for test development is intertextually interwound to reinforce an underlying belief in efficiency, standardization, measurement, and progress” (Shin and Cho, 2021, p. 548).

However, the critical review of the MOE documents serves as rebuttal evidence, too, in that the way NEAT was planned and documented was perceived as inappropriate. It was argued that the policy documents embodied a positivistic genre structure, and as such they did not give credence to the voices of diverse stakeholders or provide room for alternative discourses of testing. When all the values (in conflict) were subordinated to the dominant discourse of policy-led testing, NEAT’s impact seemed to be evaluated too optimistically. The policy documents upheld a belief system in which the newly developed standardized test would successfully define and solve educational problems, and extirpated any other views and sociopolitical debates concerning the use of NEAT.

Evidence for Warrant 2 and Rebuttal 2 in Figure 4 can be retrospectively collected and evaluated from the previous research of Shin and Cho (2020, 2021). From 2007 to 2016, discursive conflict between proponents and opponents of NEAT flourished in the Korean media; for example, Shin and Cho (2020) explored different positions and discursive strategies patterned in 84 NEAT-related newspaper articles. The media enthusiastically disseminated positive messages on “state-led accountability,” “practical English,” “technological efficiency,” and so on, and related coverage can be utilized in Warrant Backing 2. The media pressured teachers, parents, and students to quickly accept the newly developed high-stakes test, which administrators dubbed “positive impact.”

In the early stages of NEAT implementation, the media often pushed the public to accept and behave in keeping with the urgency of reform goals. However, the media also heralded the social issues of “expenditure on teach-to-the-test,” “severe competition among young learners,” and “limited use of test scores.” These issues led the public to adopt anti-NEAT attitudes, which in turn pushed media coverage toward more negative representations of NEAT. Shin (2019) found that the media, with threefold coverage of “technology-focused, economic (private education expenditure), or utilitarian (the benefits of a domestic ‘Korean’ test) practice” (p. 1), documented the negative impact for the establishment of a new knowledge system. The discursive evidence for NEAT’s accretion to social ends of social technology can be placed in Rebuttal 2 to show the anticipated negative impact, regardless of NEAT’s intended goals.

The validation efforts, supplemented by the evaluation of social impact, could contribute to the successful implementation or deter the sudden abolishment of policy-led testing. If we could go back in time to the decision-making positions at the point when NEAT was being developed and implemented, we would weigh several options by examining the warrants and rebuttals in the policy and media discourses. Negative impact could have been anticipated from Rebuttal Backing 1 and 2 discussed above: teach-to-the-test practices, competitiveness in public schools, and expenditure on test preparation, all discursively represented in the media, pointed toward the negative impact of NEAT on educational communities.

A possible scenario could then have been imagined as follows: The validity argument for social impact would be discussed in the circle of test developers and test providers. That the positive impact could not be warranted, based on the evidence of media discourse, would be taken seriously. Test implementation would be put on hold. New validation efforts would be planned. Empirical research representing positive impact would be proposed. Test schedules would be rearranged, test content reformed, and test use negotiated (the power of high-stakes testing weighed), though counter-discourses assuming a negative impact would also be deployed. NEAT would then finally pass through the discursive conflicts, and the 60 million dollars invested in NEAT before it was abruptly abolished in 2015 would not be wasted. NEAT would, arguably, survive.

Building up inferences of positive impact requires an ongoing process of validation. It is often tempting for test developers to lament sociopolitical intervention in their work. However, validation efforts should embrace the challenge, negotiating discursive conflicts and surviving even in the embattled contexts of high-stakes testing. Validity arguments must be discussed and documented in the sociopolitical arena. No policy-driven test can be claimed valid when its positive impact cannot be warranted; decision-makers for NEAT implementation should have asked: To what extent is the intended impact seen as discursively “more-or-less plausible” (Kane, 1992, p. 533) in government documents, media reportage, and user groups’ perceptions? Validity is not an all-or-nothing state; it is open to change in argument and negotiation. If the target stakeholders were invited, contexts examined, and counter-evidence built on, to the extent possible, within the process of test implementation and validation, NEAT could have been redefined to better represent the sociopolitical phenomenon at hand, supporting its further revision and survival.

Conclusion

This article illustrated how the social impact of policy-led testing may be performed, negotiated, and evaluated in and through discourse, and demonstrated one way in which Fairclough’s dialectic–relational approach can be employed to collect validity evidence of social impact, in association with Bachman and Palmer’s AUA framework, within the context of NEAT development and implementation. This approach, taken in the critical and poststructuralist research tradition, builds on existing validation models. By revisiting the previous Faircloughian research on NEAT’s impact, I postulate that the discourses arguing for and against social impact acquire their meanings from dialectical standpoints. Validation efforts require manipulating and negotiating conflicting discourses. In re-examining the discursive conflicts (positive vs. negative impact) and addressing different warrants and rebuttals in the process of validation, the result of policy-led testing does not have to entail the declaration of virtue and vice. The claim of positive impact could be better warranted, and NEAT might have survived in the sociopolitical context of testing-as-policy-tool.

No stakeholder declares “the truth”; all are embroiled in changing, contradictory, unabashedly clashing discourses. Social impact is constructed and deconstructed through the social process. NEAT collapsed, not because the construct was not empirically proven, but because the decision to terminate it was void of critical discursive assertions, especially for social impact. Researchers should have reflexive understandings of their own positionings and attempt different readings of texts (again, in and through discourse). The drawing together of critical and discursive research traditions can facilitate the potential for different descriptions, interpretations, and explanations of the (discourse as) research data.

As testing professionals, it behooves us to actively engage in impact research when any test-driven reforms are being planned for the future. Over 60 million dollars was invested in NEAT. However, it did not survive the discursive trial by fire and was sociopolitically asphyxiated by powerful stakeholders. What if test providers had documented the claim of social impact, seriously addressed the validity arguments with both warrants and rebuttals, empirically explored counter-evidence to the negative impact disseminated in the media, and persuaded the public, colleagues, and policymakers of NEAT’s feasibility? In my experience as a testing professional, working mostly in Korean contexts, including during the early stage of NEAT development, policy-driven test development often rushes to the discussion of test constructs, item types, or scoring technology without explicitly discussing the validity of the intended impact. NEAT was such a case. Policymakers, as well as test developers, rushed to point out the high-stakes use of the test. However, research on its social impact was not reported, even after NEAT’s termination.

A discursive turn appears as a natural extension of a series of “critical turns” (e.g., Shohamy, 2001, 2006) and “social turns” (McNamara & Roever, 2006) in language testing. It is also an instantiation of the discursive turns in the social sciences. Discursive approaches to critical language testing stand as “one manifestation of modern reflexivity” (Fairclough et al., 2011). The critical discursive approaches to validation certainly need to be supplemented by the critiques from ongoing dialogues across different academic disciplines. Moreover, analytic methodologies cannot be restricted to Fairclough’s (Mulderrig et al., 2019). The claims made for social impact can be inflated and, without a rich dataset, such as ethnographic explorations of context, cannot be strongly warranted. It should be noted that CDA itself can prove neither test developers’ intentions nor evaluators’ interpretations.

A range of social and linguistic theories can be brought into dialogue depending on the specific needs of the research questions and contexts; all should proceed from recognizing discursive characteristics, leading to an anti-positivist, interpretative, interdisciplinary orientation. Critical discursive approaches have necessarily sought to collaborate with neighboring theories and methods within transdisciplinary critical social research. More researchers are invited to examine the extent to which CDA studies may characterize both critical and discursive approaches to test validation at the intersection of language (testing) policy, discourse analysis, social–critical analysis, and sociolinguistics (Harding et al., 2020). Very little research with effective empirical support exists in this field as yet, and future research directions must involve generating productive conversations among interdisciplinary researchers.

Acknowledgements

The author thanks Dr. Luke Harding and the anonymous reviewers for their valuable comments and feedback along the way.

Declaration of conflicting interests

The author declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author received no financial support for the research, authorship, and/or publication of this article.