Abstract

The COVID-19 pandemic has changed the university admissions and proficiency testing landscape. One change has been the meteoric rise in the use of the fully automated Duolingo English Test (DET) for university entrance purposes, offering test-takers a cheaper, shorter, and more accessible alternative. This rapid response study is the first to investigate the predictive value of DET test scores in relation to university students’ academic attainment, taking into account students’ degree level, academic discipline, and nationality. We also compared DET test-takers’ academic performance with that of students admitted using traditional proficiency tests. Credit-weighted first-year academic grades of 1881 DET test-takers (1389 postgraduate, 492 undergraduate) enrolled at a large, research-intensive London university in Autumn 2020 were positively associated with DET Overall scores for postgraduate students (adj. r = .195) but not for undergraduate students (adj. r = −.112). This result was mirrored in correlational patterns for students admitted through IELTS (n = 2651) and TOEFL iBT (n = 436), contributing to criterion-related validity evidence. Students admitted with DET achieved lower academic success than the IELTS and TOEFL iBT test-takers, although sample characteristics may have shaped this finding. We discuss implications for establishing cut scores and for fostering test-takers’ academic language development through pre-sessional and in-sessional support.

Keywords

Introduction

The COVID-19 pandemic has changed the university admissions and English language proficiency (ELP) testing landscape (Ockey, 2021). One tangible change has been the meteoric rise in the use of the Duolingo English Test (DET) for high-stakes university entrance purposes, with rapid and widespread uptake of the test following test centre closures in 2020 (Isbell & Kremmel, 2020). During that year, popular international student destinations that rely heavily on student tuition fees to offset budget shortfalls (e.g., the United Kingdom, the setting for the current study; Bolton & Hubble, 2021) were under pressure to offer an alternative to in-person testing so that students could continue to provide proof of ELP under lockdown or restricted movement conditions. Traditional ELP testing companies with longstanding inroads in the higher education market moved quickly to adapt to the changing circumstances by launching home versions of their established tests (Clark et al., 2021; Papageorgiou & Manna, 2021). In contrast, DET, a relative newcomer to the ELP testing market, was designed from its inception to be administered at any time and location, given access to a computer and an Internet connection (LaFlair et al., 2022). It therefore already had the necessary online infrastructure and test security measures in place for home administration (i.e., remote proctoring), helping it fill a vacuum, particularly before industry competitors’ at-home versions of existing tests had been brought to market. DET also offered university applicants a cheaper, shorter, and more accessible option than pre-COVID-19 standardised ELP tests (e.g., IELTS, TOEFL iBT), making it an attractive alternative.

As a computer-adaptive test, DET offers fast, reliable results using proprietary machine scoring algorithms (LaFlair et al., 2022). Since its rapid and widespread uptake early in the pandemic, competing testing organisations, possibly in an effort to remain competitive, have shortened their existing tests (Pearson PTE, n.d.) or introduced a new, cheaper test with more varied item types, some of which parallel DET’s (Papageorgiou et al., 2021). In sum, DET’s prominence during the pandemic has changed the language testing world irrevocably, and the test is likely to remain an attractive option for test-takers and their target higher education destinations in the years ahead.

DET is a fully automated (i.e., machine administered and scored) Internet-based test. It follows that the way DET operationalises the ELP construct (see Cardwell et al., 2022) differs markedly from the approach of traditional, human-scored ELP tests. This includes using mostly controlled item types to elicit predictable test-taker output that the machine can easily recognise and automatically score. That is, on fully automated high-stakes tests, including DET, test-takers’ outputs tend to be more predictable than in integrated tasks requiring information synthesis from multiple sources (e.g., Frost et al., 2012) or in dialogic tasks with an interlocutor, including those that simulate non-test language use situations. As Khabbazbashi et al. (2021) note, concerns about a limited communicative orientation and a narrowing of the ELP construct are common to contemporary high-stakes fully automated tests due to the state of the technology. For example, when considering a broad construct of L2 speaking (Lim, 2018), aspects such as pragmatic appropriateness, pitch-related aspects of intonation, and interactional features tend to be difficult for machine algorithms to capture (Isaacs, 2018). Although there will always be limitations in what machine scoring alone can do (e.g., Nakatsuhara & Berry, 2021), technological advances open up new possibilities, allowing fully automated tests to evolve. For example, DET introduced constructed-response item types for speaking and writing in its current version (Isbell & Kremmel, 2020), reflecting a stronger emphasis on complementing receptive skills assessment with more spontaneous but contained spoken and written production. Interactive reading, which involves test-taker engagement with written text, is the most recent item type to have been incorporated into the test at the time of writing (Attali et al., 2022).
The introduction of these new item types has signalled movement towards a more communicative orientation of the test within the limits of technology. It should be noted that existing reviews of the test (Wagner, 2020; Wagner & Kunnan, 2015) were published before these new DET item types had been introduced, meaning that some points of critique do not reflect the latest version of the test. For example, discourse-level productive tasks were not available at the time that these critiques were published but are now. These item types were also not operational for test-takers in the current study.

The DET technical manual describes DET “as a measure of English language proficiency for communication and use in English-medium settings” while claiming to assess both general and academic English, because both are crucial for academic and professional success (Cardwell et al., 2022, p. 3). The manual also designates “post-secondary admission decisions” as one of several test uses in the description of test purpose (p. 3). Wagner’s (2020) test review underscored the pressing need for external validation evidence to support test score use and interpretation given the widespread uptake of DET as a gatekeeping measure at English-medium universities. Put differently, perhaps the most prominent source of critique for the test has been the dearth of robust external validation research needed to support test score interpretation, which can, in turn, be used to inform university admissions decisions. To our knowledge, the only DET predictive validity study that has, as yet, been published examined correlations between DET Overall scores and English for Academic Purposes teachers’ ratings of student essays and spoken comprehensibility (Ishikawa et al., 2016). However, this study did not provide measures of students’ actual academic success through grades. In addition, because the study involved the retired version of DET and substantial changes to the test have been made since then, an updated study is necessary.

The DET has an established internal research programme and has introduced external validation funding streams to build the evidence base to support test score use for its intended purposes. In 2022, Burstein and her DET colleagues published a “theoretical assessment ecosystem” comprising a set of frameworks that underlie test design and development to guide future validation efforts. Using an argument-based approach (e.g., Chapelle, 2021), they presented a digital chain of inferences that considers how DET’s artificial intelligence capabilities contribute to test scores, following the validation tradition established most notably for TOEFL iBT. In terms of concurrent validity, in-house research reports moderate correlations between DET Overall scores and IELTS (r = .65; n = 1643) and strong correlations with test centre–administered TOEFL iBT scores (r = .82; n = 183; Cardwell et al., 2022).

Given that DET is a relatively new high-stakes test and that widespread uptake has been recent, large-scale data sets of test performance have only recently become available. The current study contributes to empirical validation of DET through secondary analysis of international students’ DET Overall scores and academic grades shortly after DET was accepted for admissions at a major UK university, taking into account degree level, subject area, and nationality. It also examines how well students perform academically depending on which ELP test they used for admissions. In the next section, we overview main findings from previous predictive validity research on other ELP tests to foreground the current study.

Predictive validity: Some considerations from previous research

Proficiency in the language of instruction is critical for success in every academic subject and at all levels of education (e.g., Prevoo et al., 2016; Trenkic & Warmington, 2019; Whiteside et al., 2017). Limited proficiency constrains not only how knowledge can be demonstrated in assessment, but crucially what can be learnt. Despite this, ELP test scores used for university entrance purposes are often only weakly correlated with academic outcomes (Ihlenfeldt & Rios, 2022), and sometimes no relationship is detected at all (Arcuino, 2013; Krausz et al., 2005). Some studies with null results might also not have been disseminated due to publication bias (Franco et al., 2014).

One reason for these inconsistent findings is the plethora of factors that can play a part in academic progress and success, with variables possibly interacting with or cancelling each other out (e.g., area of study, educational background, test-taker attitudes, study skills, support networks; Oliver et al., 2012; see Pearson, 2020, for a discussion of methodological considerations). That is, heterogeneous participant samples can obscure the predictive validity of ELP tests, thereby attenuating the relationship with ELP test scores. Bridgeman et al. (2016) illustrated the importance of conducting subgroup analyses to investigate whether a correlation involving all participants conceals patterns that could differ across meaningful subgroups. Variables such as degree level, nationality, and subject area, among others, have been shown to be important to disaggregate in data analysis (Harsch et al., 2017). In a pandemic, with greater levels of unpredictability than in normal times, there are yet other variables that could differentially affect test-takers and how they perform for reasons unrelated to ELP or academic ability (e.g., illness, travel restrictions, resource access, programme mitigations), compounding the uncontrolled factors that could come into play in shaping students’ academic outcomes.

There is also the issue of range restriction, a statistical artefact in predictive validity studies that occurs when the performance range of the sample is constrained compared to that of the target population, resulting in a truncated distribution (Schneider, 2014). As in other predictive validity studies, we could only examine the academic performance of test-takers who had enrolled at the university, not test-takers who chose not to apply or were not admitted due to test scores below the admissions thresholds. Therefore, only a restricted sample of prospective applicants who took the test (i.e., high scorers) were admitted and received academic grades and, hence, are reported on, reflecting a narrower range of performance than the population of interest (i.e., all prospective applicants who took DET). To summarise, although well-developed proficiency in the language of instruction is crucial for success in every academic subject, proficiency scores are often only weakly correlated with academic outcomes, if any relationship is detected at all. This is because (a) many factors are predictive of academic outcomes; (b) in underpowered heterogeneous samples, these variables can statistically cancel each other out; and (c) the sample likely has a restricted proficiency range. That is why even small correlational values between ELP test scores and academic outcomes can be considered meaningful (Bridgeman et al., 2016).

Finally, test preparation (also known as coaching), a well-researched washback artefact that is common in societies where learning is largely driven by exam-oriented pedagogy (e.g., Clark & Yu, 2021; Trenkic & Hu, 2021), can undermine the validity of a test. Research has demonstrated that the higher the stakes, the more likely test-takers are to engage in intensive test preparation practices in a bid to boost test scores in a short time period (Cheng et al., 2015). Such practices may be captured in test performance and scores, potentially jeopardising construct and criterion-related validity. Because DET was not predominantly used for university admission purposes prior to the pandemic and was still relatively unknown at the time of the current study (Cushing & Ren, 2022), it is unlikely that intensive test preparation targeted DET. We thus hypothesise that DET scores obtained at this timepoint are likely unadulterated by test preparation and, therefore, could be more valid measures of students’ ELP and potentially more predictive of their subsequent academic attainment.

The current study

This rapid response research study is the first, to our knowledge, to investigate the predictive value of DET in relation to university students’ academic attainment for the version of the test in use during the first few months of the pandemic. It is also the first comparative study of DET with well-established ELP tests. Our first research question investigates the relationship between DET Overall scores and full-time university students’ first-year academic grades by degree level, academic subject classification, and nationality. The absence of a relationship between test scores and academic outcomes could suggest that the test is not a valid measure of ELP. However, such a result could also be an artefact caused by properties of the test-taker sample. One way to rule out the first option is to examine how other, more established tests behave in the same context. This led to our second research question, which examined the relationship between Overall scores on three ELP tests (DET compared to IELTS and TOEFL iBT) and students’ first-year grades by degree level.

DET in-house research has recently established points of correspondence with IELTS and TOEFL iBT and benchmarked DET performance against Common European Framework of Reference for Languages (CEFR) levels, although they note that correspondence values may change to take account of findings from future alignment studies (Duolingo English Test, n.d.). Nonetheless, with correspondence values published, it is now possible to investigate the practical question of whether students who met the ELP entry requirements for DET achieve a similar level of academic success as those who had their ELP certified using more established ELP measures. This motivated our third research question, which investigated whether students who took DET to meet ELP admissions requirements enjoy the same level of academic success as those admitted with IELTS or TOEFL iBT scores.

Methods

Research context and data

The setting for this study is University College London (UCL), a large, research-intensive (Russell Group) university in central London with 40% international students. The study, which uses university admissions and attainment data for the 2020–2021 student intake, captures the unique circumstances of the COVID-19 pandemic in the months after the university and many other English-medium institutions in the United Kingdom and elsewhere adopted DET. The university Admissions Requirements Panel accepted DET as proof of ELP for university entrance purposes in early March 2020. The decision was made before the start of the first UK lockdown, but when virus containment efforts were already affecting China and other parts of the world, and before IELTS and TOEFL iBT had launched home editions of their tests. Against this backdrop, nearly all teaching for this cohort was conducted remotely due to UK government restrictions, including, at some junctures, stay-at-home orders and social distancing guidelines that precluded face-to-face meetings. This led to adapted instruction and, in some cases, adapted assessments. Due to the substantial disruption and unpredictability of the situation, the university implemented a “no-detriment policy” to mitigate students being unduly penalised. Compared to a non-pandemic year, there were higher numbers of extensions, interruptions of study, marking delays, and delayed exam boards. It is important to acknowledge these and other pandemic-related irregularities inherent in the data set.

We conducted secondary analysis of the first-year academic grades of 1881 DET test-takers enrolled full-time in a new programme at the university in Autumn 2020: 1389 postgraduate taught (PGT) students (429 male, 959 female, 1 other; Mage = 24.67 years, SD = 3.59) and 492 undergraduate (UG) students (239 male, 252 female, 1 other; Mage = 19.03, SD = 1.05). The PGT students were from 243 distinct degree programmes and represented 74 different nationalities, with Chinese students (1073; nearly 80%) forming by far the largest subgroup, followed by Italian (31), German (24), and Indian (24) students. Among UG students, who represented 48 nationalities, Chinese students again constituted by far the largest group (305; over 60%), followed by Malaysian (21), Polish (20), and Spanish (16) students.

When the university adopted DET, Duolingo routinely reported only overall test scores. Thus, only DET Overall scores were taken into account in admissions decisions, with cut scores applied according to the linguistic demands of each programme, as specified at programme level (Standard: 115; Good: 125; Advanced: 135). DET subscores were routinely available only for test-takers who had applied from 7 July 2020 onwards (LaFlair & Tousignant, 2020), limiting the data set that we could explore in relation to subscore performance. Therefore, in this paper, we report only DET Overall scores.
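The programme-level thresholds above amount to a simple lookup from programme level to minimum DET Overall score. The following minimal sketch assumes that a score equal to the cut score meets the requirement (an inclusive threshold); the dictionary and function names are hypothetical and are not meant to describe the university's actual admissions system.

```python
# Programme-level DET Overall cut scores as stated in the text.
DET_CUT_SCORES = {"Standard": 115, "Good": 125, "Advanced": 135}

def meets_det_requirement(overall_score: int, programme_level: str) -> bool:
    """Return True if a DET Overall score meets the programme's ELP threshold.

    Assumption: thresholds are inclusive (score >= cut score).
    """
    try:
        cut = DET_CUT_SCORES[programme_level]
    except KeyError:
        raise ValueError(f"unknown programme level: {programme_level!r}") from None
    return overall_score >= cut

print(meets_det_requirement(120, "Standard"))  # True
print(meets_det_requirement(120, "Good"))      # False
```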

To investigate DET test-takers’ academic grades compared to those of students admitted using established ELP tests, we analysed the data of an additional 3087 full-time students who had commenced their studies at the same (Autumn 2020) entry point with scores for either IELTS (2650) or TOEFL iBT (430). The admissions team does not routinely enter scores from more than one ELP test per applicant into the admissions portal, precluding analysis of individuals’ performance across multiple ELP tests for any students who had taken more than one. Admissions staff also did not log whether students had taken traditional test centre versions of these tests or the remote versions introduced during the pandemic. Proportionally, 54.6% of IELTS and 52.6% of TOEFL iBT test-takers were Chinese, compared with 73.3% of DET test-takers. This discrepancy could be accounted for by test centre closures in China and the unavailability of at-home versions of the competitor tests there (Isbell & Kremmel, 2020).

Data preparation and coding

We organised and coded the DET data set using the following variables pertaining to test-takers:

Degree level: PGT or UG.

Nationality: With Chinese students constituting the largest nationality and due to small sample sizes of other nationalities, we compared Chinese students, including those from Hong Kong, Macau, and Taiwan, with students from all other nationalities (hereafter Chinese or non-Chinese).

Subject classification for programme of study: To categorise 341 distinct programmes represented in the DET data set into broader subject areas, we adopted the European Research Council’s (ERC) disciplinary typology of (a) Life Sciences (LS), (b) Physical Sciences and Engineering (PSE), and (c) Social Sciences and Humanities (SSH; ERC, 2021). These broad categories also accord with three of Durrant’s (2017) corpus-informed disciplinary groupings. Detailed discipline codes under each ERC category facilitated coding decisions.

DET scores: DET Overall score and, where available, subscores (not reported on in this paper).

First-year credit-weighted academic grades at first attempt: These grades included coursework and exams for taught subjects but excluded theses or dissertations. We focused on enrolled students’ first-year grades rather than termly grades because course structures differ across subject areas, with some courses spanning multiple terms, which blurs termly performance distinctions. In addition, at UK universities, yearly (not termly) grades are consequential for progression and degree classification decisions. We analysed initial (not final) course grades. All faculties use percent grading except for the Education faculty, which uses letter grades (A–F) for PGT students. We therefore aligned these students’ letter grades to the percent scale using the median of each band (e.g., A = 85, B = 65, C = 55).
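As a minimal sketch of how a credit-weighted first-year grade can be derived, the function below averages module marks weighted by module credits, converting letter grades with the three equivalents given above (A = 85, B = 65, C = 55). The module data and function names are hypothetical, and grades D to F are deliberately left unmapped because their percent equivalents are not reported here.

```python
# Letter-grade equivalents on the percent scale, as given in the text.
# D-F are intentionally absent: their equivalents are not reported.
LETTER_TO_PERCENT = {"A": 85.0, "B": 65.0, "C": 55.0}

def to_percent(mark):
    """Return a numeric percent mark, converting supported letter grades."""
    if isinstance(mark, str):
        if mark not in LETTER_TO_PERCENT:
            raise ValueError(f"no percent equivalent defined for grade {mark!r}")
        return LETTER_TO_PERCENT[mark]
    return float(mark)

def credit_weighted_average(modules):
    """modules: iterable of (first-attempt mark, credits) pairs for taught modules.

    Dissertations/theses are assumed to be excluded before calling this function.
    """
    modules = list(modules)
    total_credits = sum(credits for _, credits in modules)
    if total_credits == 0:
        raise ValueError("no credits to weight by")
    return sum(to_percent(mark) * credits for mark, credits in modules) / total_credits

# Hypothetical student: two 15-credit modules and one 30-credit module
print(credit_weighted_average([(72, 15), (58, 15), ("B", 30)]))  # 65.0
```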

Data analysis

We used the open-source statistical environment R for the statistical analyses and the tidyverse package for data wrangling. To explore the relationship between DET Overall scores and academic grades (Research Question 1), we ran two-tailed Pearson correlations for the full DET test-taker sample and separately for PGT and UG students, followed by subgroup analyses by subject classification and nationality. We applied the Thorndike Case 2 formula (Sackett & Yang, 2000) to correct for range restriction, using unpublished auxiliary data supplied by Duolingo for tests administered between 31 July 2020 and 13 July 2021, with indices available by degree level only (not by subject area or nationality). This statistical adjustment accounts for the fact that enrolled students, who sit at the higher end of the DET performance distribution, are not representative of all prospective applicants who take DET.
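Thorndike's Case 2 correction for direct range restriction on the predictor can be written as r_c = r·u / √(1 + r²(u² − 1)), where r is the observed correlation in the restricted (enrolled) sample and u is the ratio of the unrestricted to the restricted predictor standard deviation. The sketch below is a standard-library illustration of that formula, not the authors' R code; the standard deviations in the usage line are invented, since the Duolingo auxiliary indices are unpublished.

```python
import math

def thorndike_case2(r_restricted: float, sd_unrestricted: float, sd_restricted: float) -> float:
    """Correct an observed correlation for direct range restriction on the predictor."""
    if not -1 < r_restricted < 1:
        raise ValueError("r must lie strictly between -1 and 1")
    if sd_restricted <= 0 or sd_unrestricted <= 0:
        raise ValueError("standard deviations must be positive")
    # u > 1 when the admitted sample is less variable than the applicant population
    u = sd_unrestricted / sd_restricted
    return r_restricted * u / math.sqrt(1 + r_restricted ** 2 * (u ** 2 - 1))

# Hypothetical SDs (population twice as variable as the enrolled sample)
print(round(thorndike_case2(0.089, sd_unrestricted=20, sd_restricted=10), 3))  # 0.176
```

Because the correction multiplies r by a positive factor, it preserves the sign of the coefficient and increases its magnitude whenever u > 1, which is why both positive and negative correlations become stronger after adjustment.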

To address Research Question 2, we then computed correlations for distinct groups of PGT and UG students admitted to the university using IELTS or TOEFL iBT scores at the same entry point as the DET test-takers. We were unable to correct these correlations for range restriction because comparable auxiliary data were not available across tests. To compare the academic grades of students admitted using DET versus IELTS and TOEFL iBT (Research Question 3), we performed a one-way analysis of variance (ANOVA) to examine whether PGT and UG students’ academic grades differed depending on whether they were admitted with DET or one of the other tests. Finally, we performed ANOVAs or t-tests, depending on sample size, to examine whether test-takers considered by their test providers to have achieved a particular CEFR level (B2–C2) performed differently academically as a function of ELP test.
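For readers less familiar with the ANOVA quantities reported below, the following sketch computes a one-way ANOVA F statistic, its degrees of freedom, and η² (between-group sum of squares divided by total sum of squares) with the Python standard library. It is an illustration with invented grades, not the authors' R analysis, and it deliberately omits p-values and Tukey HSD post hoc tests, which require the F and studentized range distributions.

```python
from statistics import fmean

def one_way_anova(*groups):
    """One-way ANOVA on independent groups.

    Returns (F, df_between, df_within, eta_squared). No p-value is computed.
    """
    if len(groups) < 2 or any(len(g) < 1 for g in groups):
        raise ValueError("need at least two non-empty groups")
    group_means = [fmean(g) for g in groups]
    n_total = sum(len(g) for g in groups)
    grand_mean = sum(sum(g) for g in groups) / n_total
    # Between-group sum of squares: spread of group means around the grand mean
    ss_between = sum(len(g) * (m - grand_mean) ** 2 for g, m in zip(groups, group_means))
    # Within-group sum of squares: spread of observations around their own group mean
    ss_within = sum((x - m) ** 2 for g, m in zip(groups, group_means) for x in g)
    df_between = len(groups) - 1
    df_within = n_total - len(groups)
    f_stat = (ss_between / df_between) / (ss_within / df_within)
    eta_squared = ss_between / (ss_between + ss_within)
    return f_stat, df_between, df_within, eta_squared

# Hypothetical grades for three admission-test groups (not study data)
f_stat, df_b, df_w, eta2 = one_way_anova([60, 62, 64], [65, 67, 69], [55, 57, 59])
print(f"F({df_b}, {df_w}) = {f_stat:.2f}, eta^2 = {eta2:.3f}")  # F(2, 6) = 18.75, eta^2 = 0.862
```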

Results

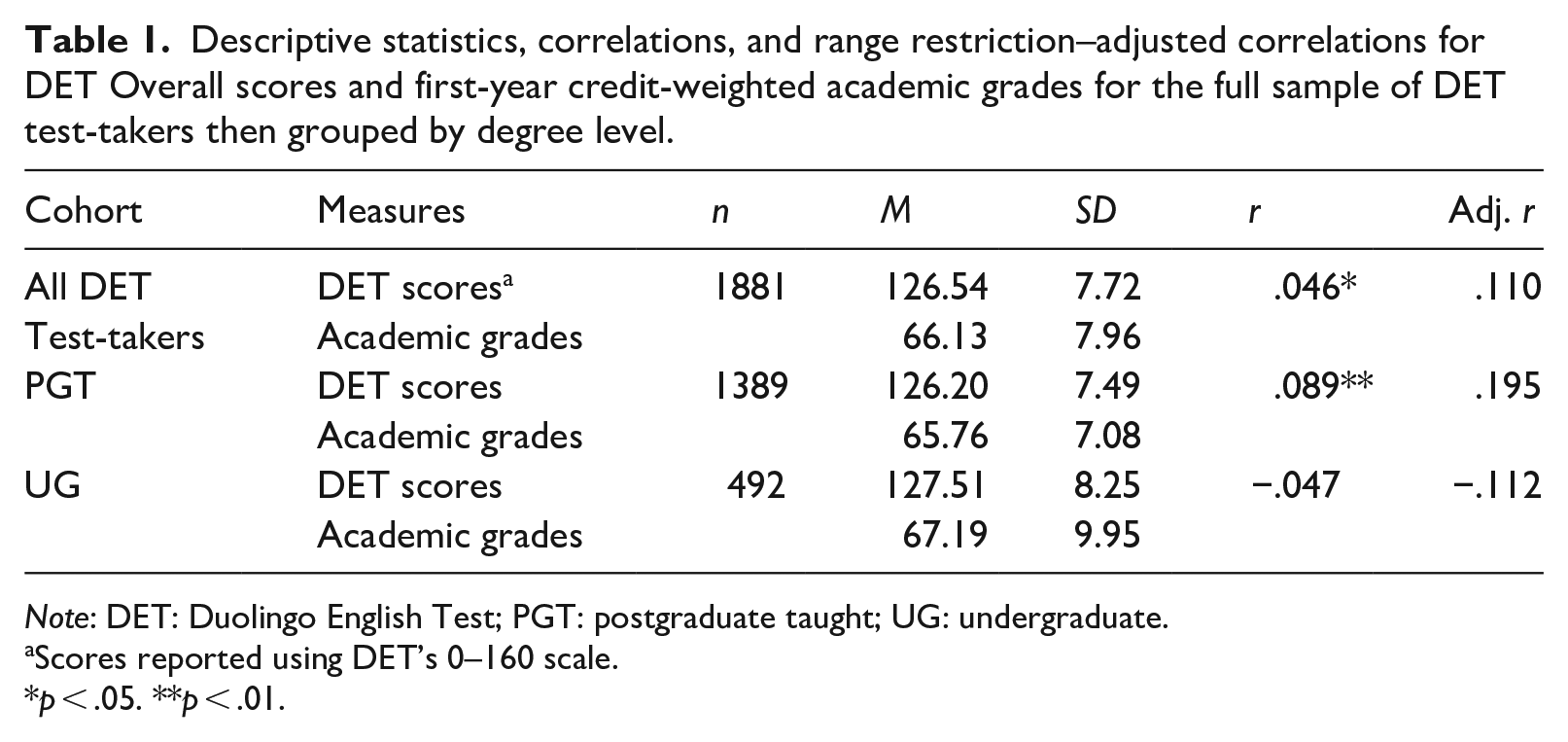

The first research question examined correlations between DET Overall scores and first-year academic attainment. Table 1 shows descriptive statistics and both Pearson correlations and range restriction–adjusted correlations between DET Overall scores and weighted average first-year academic grades. DET scores correlated positively with grades for the full sample of DET test-takers (r = .046). Breaking down the result by degree level shows that the relationship is driven by the PGT cohort. The UG result should be interpreted with caution, however, as the negative correlations suggest that dissimilar groups were likely aggregated. All coefficients, whether positive or negative, increased in magnitude after correction for range restriction.

Descriptive statistics, correlations, and range restriction–adjusted correlations for DET Overall scores and first-year credit-weighted academic grades for the full sample of DET test-takers then grouped by degree level.

Note: DET: Duolingo English Test; PGT: postgraduate taught; UG: undergraduate.

Scores reported using DET’s 10–160 scale.

*p < .05. **p < .01.

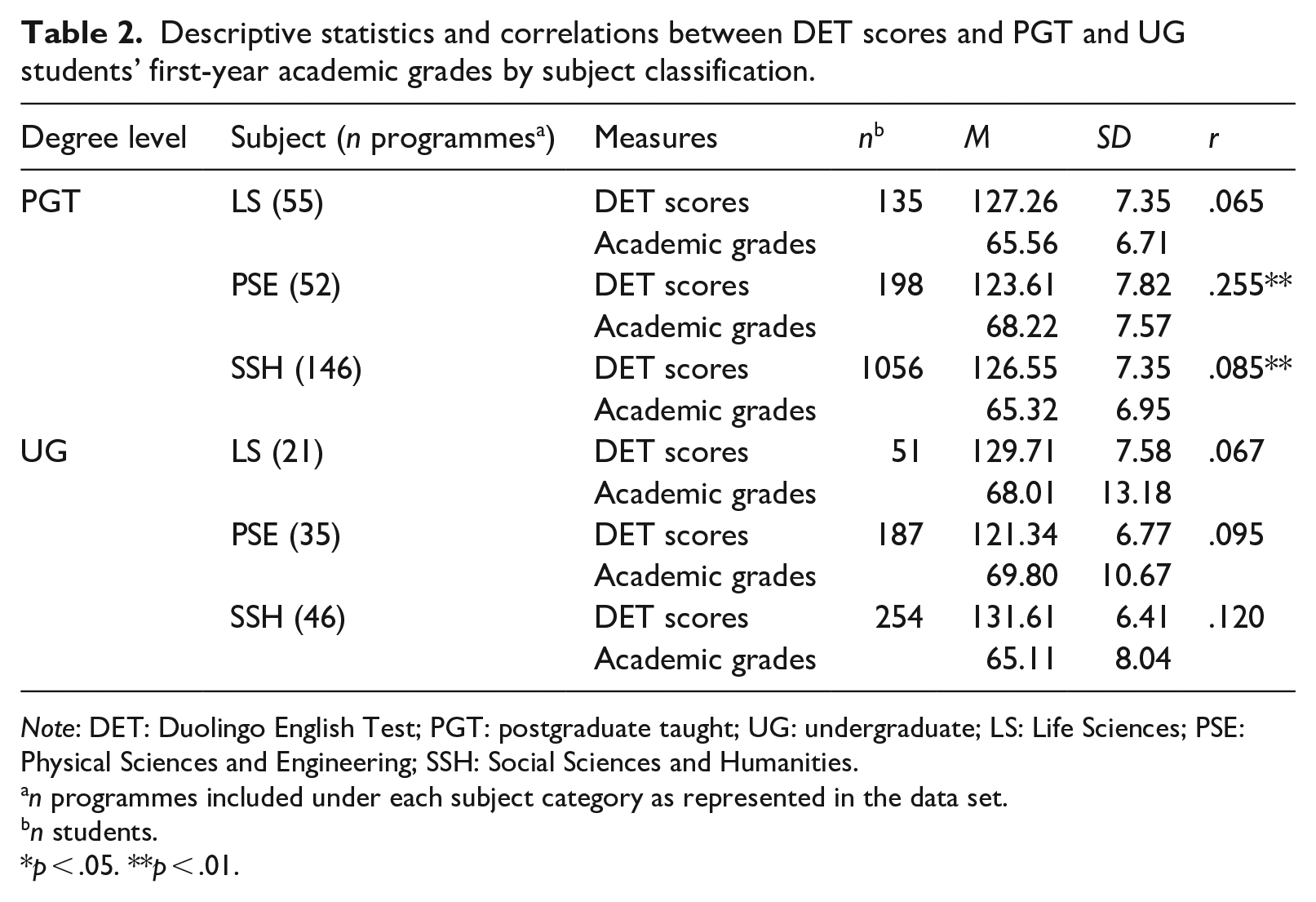

Table 2 shows descriptive statistics and correlations between DET scores and academic grades for PGT and UG students disaggregated by subject classification: LS, PSE, and SSH. For PGT students, DET scores correlated positively with academic attainment for both PSE students (r = .255) and SSH students (r = .085); however, we could not adjust these subgroup correlations for range restriction because the necessary parameters were unavailable. For the UG cohort, which had a smaller overall sample size, the relationship between DET scores and academic grades was stronger for SSH students (r = .120) than for PSE students (r = .095).

Descriptive statistics and correlations between DET scores and PGT and UG students’ first-year academic grades by subject classification.

Note: DET: Duolingo English Test; PGT: postgraduate taught; UG: undergraduate; LS: Life Sciences; PSE: Physical Sciences and Engineering; SSH: Social Sciences and Humanities.

n programmes included under each subject category as represented in the data set.

n students.

*p < .05. **p < .01.

We can observe that PSE students arrived with relatively lower DET scores but finished the year with higher average grades than the other two groups. Hu and Trenkic (2021) observed a similar trend using IELTS scores. A one-way ANOVA with academic discipline as the independent variable and DET Overall score as the dependent variable confirmed a statistically significant between-group difference in the PGT cohort, F(2, 1386) = 14.618, p < .001, η² = .021. Pairwise comparisons using Tukey’s Honest Significant Difference (HSD) test (α = .05) revealed that PSE students were admitted with significantly lower Overall DET scores than both LS students, p < .001, 95% confidence interval (CI) = [1.704, 5.591], and SSH students, p < .001, 95% CI = [−4.290, −1.594]. The groups also differed significantly in achieved academic grades, F(2, 1386) = 14.265, p < .001, η² = .020. Pairwise differences confirmed that PSE students, despite arriving with lower DET scores, finished the year with higher academic grades on average than both LS students, p < .001, 95% CI = [−4.491, −0.816], and SSH peers, p < .001, 95% CI = [1.621, 4.171]. University-wide attainment data for the 2020–2021 academic year likewise showed that students in PSE subjects obtained higher grades on average than SSH students. For example, 57.7% of PGT students from the two largest PSE faculties were awarded distinctions for their degrees, compared with just 18.5% of PGT students from the two largest SSH faculties.
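For a one-way ANOVA, η² can be recovered from a reported F statistic and its degrees of freedom, because η² = SS_between / SS_total = F·df_between / (F·df_between + df_within). The short sketch below applies this identity to two of the F tests reported in this section as a quick consistency check on the effect sizes; the function name is our own.

```python
def eta_squared_from_f(f_stat: float, df_between: int, df_within: int) -> float:
    """Eta squared for a one-way ANOVA, recovered from F and its degrees of freedom."""
    return f_stat * df_between / (f_stat * df_between + df_within)

print(round(eta_squared_from_f(14.265, 2, 1386), 3))  # PGT grades by subject: 0.02
print(round(eta_squared_from_f(130.819, 2, 489), 3))  # UG DET scores by subject: 0.349
```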

In the UG cohort, subject classification also had significant main effects on DET scores, F(2, 489) = 130.819, p < .001, η² = .349, and on academic grades, F(2, 489) = 12.745, p < .001, η² = .050. Pairwise comparisons confirmed that UG students studying PSE were admitted with lower Overall DET scores than LS students, p < .001, 95% CI = [5.891, 10.847], and SSH students, p < .001, 95% CI = [−11.789, −8.766], but finished the year with higher grades than SSH students, p < .001, 95% CI = [−6.895, −2.450]. Such discrepancies between subgroups suppress the strength of the correlation between observed ELP test scores and academic attainment in the aggregated samples.

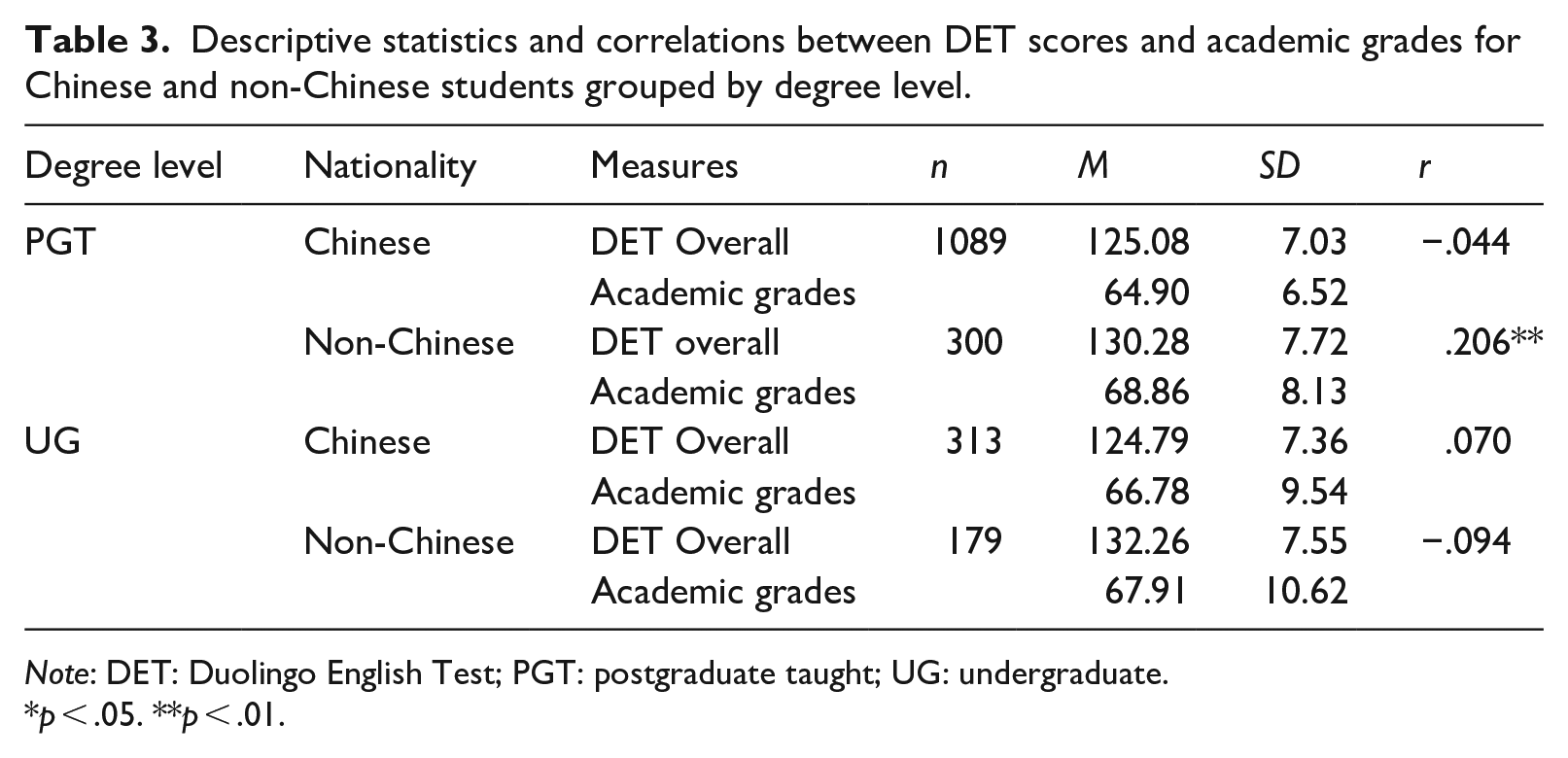

Table 3 shows descriptive statistics and correlations between DET scores and academic grades for PGT and UG students disaggregated by nationality. The strongest positive association was detected between DET scores and academic grades for non-Chinese PGT students, r = .206. In all other groups, the observed association was weak (positive for UG Chinese, but negative for PGT Chinese and UG non-Chinese students).

Descriptive statistics and correlations between DET scores and academic grades for Chinese and non-Chinese students grouped by degree level.

Note: DET: Duolingo English Test; PGT: postgraduate taught; UG: undergraduate.

*p < .05. **p < .01.

Independent samples t tests showed that at both degree levels, Chinese students achieved significantly lower DET scores than non-Chinese students, tPGT(444.989) = 10.541, p < .001; tUG(490) = 10.725, p < .001. Chinese students also received lower academic grades than their non-Chinese peers; however, this difference was only significant for PGT students, t = 7.779, p < .001. No positive relationship was detected between DET scores and grades for Chinese UG students, r = −.094.

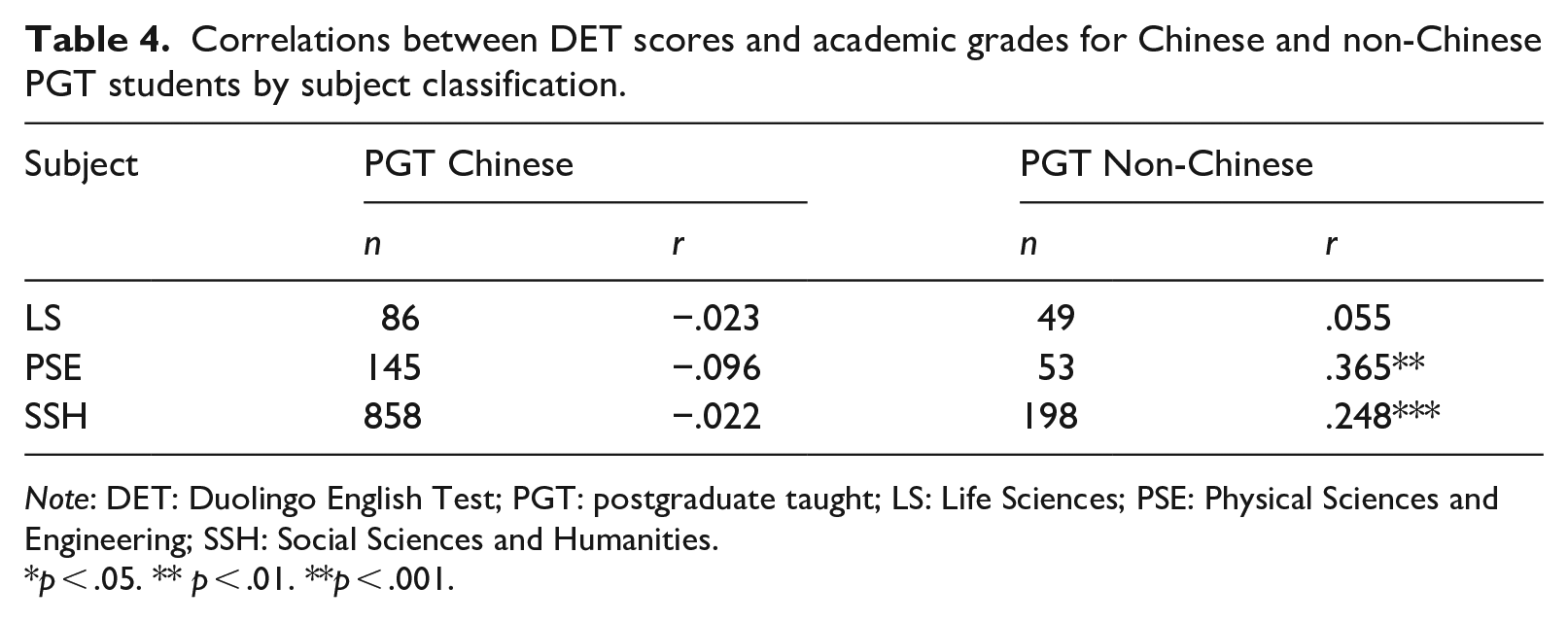

To probe whether different patterns might emerge for Chinese and non-Chinese students depending on their academic field, we further disaggregated PGT student data by nationality and subject classification. Table 4 shows that the most robust associations by subject classification were for non-Chinese PGT students in PSE and SSH (rPSE = .365, rSSH = .248), despite smaller sample sizes than for their Chinese counterparts. The main insight is confirmation that the correlation between DET scores and academic grades strengthens in more homogeneous samples, as shown by the increased r values for both PSE and SSH when Chinese students are excluded from the sample.

Correlations between DET scores and academic grades for Chinese and non-Chinese PGT students by subject classification.

Note: DET: Duolingo English Test; PGT: postgraduate taught; LS: Life Sciences; PSE: Physical Sciences and Engineering; SSH: Social Sciences and Humanities.

*p < .05. **p < .01. ***p < .001.

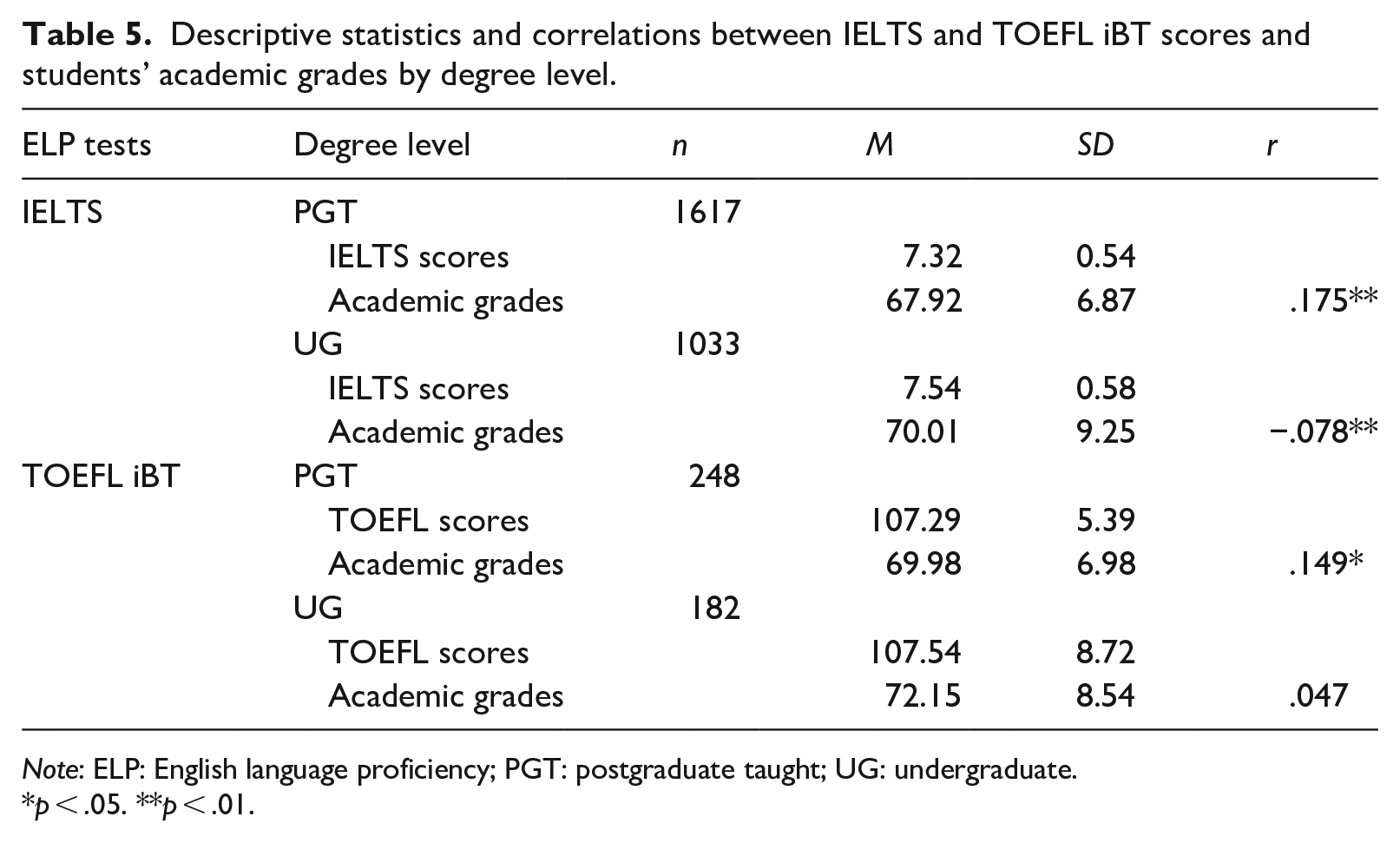

The second research question examined the association between DET scores and academic grades for enrolled DET test-takers compared to those admitted using another high-stakes ELP test. Table 5 shows descriptive statistics and correlations for PGT and UG students’ overall scores on IELTS and TOEFL iBT and first-year credit-weighted academic grades.

Descriptive statistics and correlations between IELTS and TOEFL iBT scores and students’ academic grades by degree level.

Note: ELP: English language proficiency; PGT: postgraduate taught; UG: undergraduate.

*p < .05. **p < .01.

At PGT level, following the trend for DET (Table 1), IELTS and TOEFL iBT scores correlated positively with academic grades. Thus, PGT students arriving with higher scores on each of the three ELP tests performed better academically than peers with lower scores on the same test. The correlation was stronger for both IELTS (r = .175) and TOEFL iBT (r = .149) than for DET (r = .089). However, differences in sample size and test-taker background characteristics across cohorts (e.g., a higher proportion of Chinese students for DET than for the other two tests) mean that only overall trends should be interpreted. At UG level, a weak positive correlation for TOEFL iBT (r = .047) and weak negative correlations for IELTS (r = −.078) and DET (r = −.047) suggest high heterogeneity in the UG sample, which can mask meaningful patterns within subgroups. Indeed, we observed disciplinary differences in the UG cohort for DET test-takers (Table 2) but did not have discipline-related information for IELTS and TOEFL iBT test-takers, so we could not examine group differences by subject classification for these tests.

To explore whether students who demonstrated readiness to study in English via DET experienced similar levels of academic success to those admitted using IELTS or TOEFL iBT (Research Question 3), we first performed a one-way ANOVA comparing academic grades across the test-taker groups at each degree level. For PGT students, there were significant between-group differences in academic grades, F(2, 3251) = 58.080, p < .001, η² = .034. Tukey’s HSD post hoc test confirmed significant differences between PGT students accepted with IELTS versus DET, p < .001, 95% CI = [1.564, 2.759], and between TOEFL iBT and DET students, p < .001, 95% CI = [3.100, 5.353]. In both cases, DET students performed less well academically. Similar patterns were observed in the UG subsample, where a one-way ANOVA confirmed a significant between-group difference, F(2, 1704) = 23.561, p < .001, η² = .027. Tukey’s HSD pairwise comparisons confirmed that UG students who took IELTS received higher academic grades than those who took DET, p < .001, 95% CI = [1.608, 4.020]. TOEFL iBT students also performed more strongly academically than DET students, p < .001, 95% CI = [3.045, 6.865].
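For readers who want to check the effect sizes, the sketch below does two things. It computes a one-way ANOVA F ratio and eta squared (η² = SS_between / SS_total) from raw group scores. It also gives the equivalent identity η² = F·df1 / (F·df1 + df2), which recovers η² from a reported F ratio and its degrees of freedom. This is a minimal standard-library illustration, not the analysis code used in the study. The function names are hypothetical and the toy grades are invented.

```python
from statistics import fmean

def one_way_anova(groups):
    """Return (F, df_between, df_within, eta_squared) for a list of score groups."""
    all_scores = [x for g in groups for x in g]
    grand_mean = fmean(all_scores)
    # Between-group sum of squares: group size times squared deviation of the group mean
    ss_between = sum(len(g) * (fmean(g) - grand_mean) ** 2 for g in groups)
    # Within-group sum of squares: deviation of each score from its own group mean
    ss_within = sum((x - fmean(g)) ** 2 for g in groups for x in g)
    df_between = len(groups) - 1
    df_within = len(all_scores) - len(groups)
    f_ratio = (ss_between / df_between) / (ss_within / df_within)
    eta_squared = ss_between / (ss_between + ss_within)
    return f_ratio, df_between, df_within, eta_squared

def eta_squared_from_f(f_ratio, df_between, df_within):
    """Recover eta squared from a reported F ratio and its degrees of freedom."""
    return f_ratio * df_between / (f_ratio * df_between + df_within)

# Toy data (invented) for three hypothetical test-taker groups
f, df1, df2, eta2 = one_way_anova([[1, 2, 3], [4, 5, 6], [7, 8, 9]])
print(f, df1, df2, round(eta2, 3))

# PGT comparison reported above: F(2, 3251) = 58.080
print(round(eta_squared_from_f(58.080, 2, 3251), 3))
```

Applying the same identity to the other F ratios reported in this section reproduces their η² values to three decimal places.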

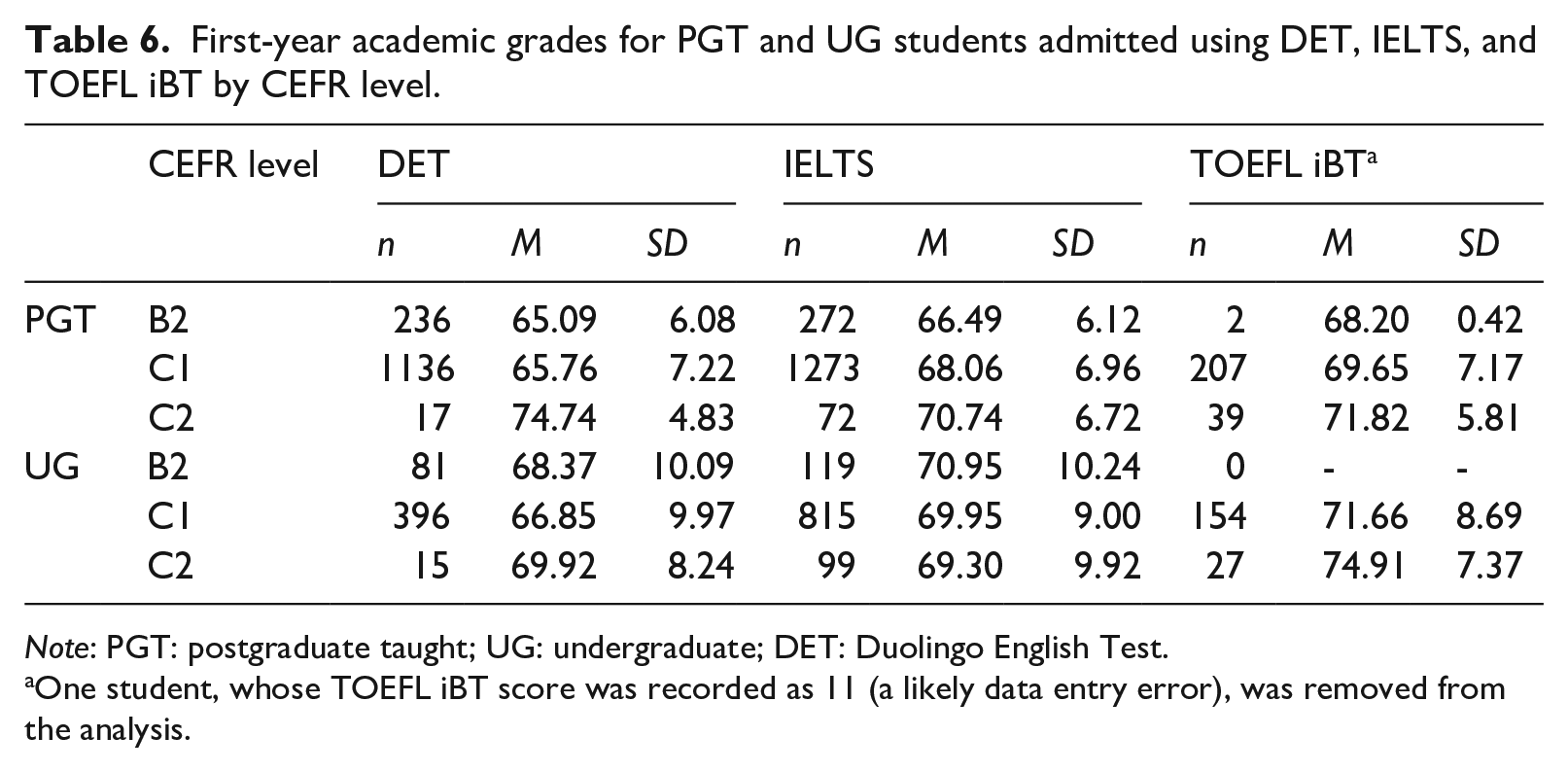

The test-taker groups may have been admitted with different average levels of ELP, which could have contributed to the observed differences in grades. To account for this, we mapped each student’s English proficiency score onto a CEFR level. We used the test providers’ own published correspondences between scores on their test and CEFR equivalents (Duolingo English Test, n.d.; ETS, 2022; IELTS, 2022). Notably, each test uses a different scale, and test providers may have used different equating, standard-setting, or other procedures to establish these correspondences.
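Operationally, this mapping amounts to looking up each score in a test-specific table of lower bounds. The sketch below illustrates only the lookup. Both tables are hypothetical placeholders with invented cut points. They are not the published DET, IELTS, or TOEFL iBT correspondences, which would have to be taken from each provider’s own documentation.

```python
from bisect import bisect_right
from collections import Counter

# HYPOTHETICAL tables: lower bound of each CEFR band per test.
# Illustrative values only, NOT the providers' published correspondences.
CEFR_TABLES = {
    "hypothetical_test_a": ([50, 65, 80, 95], ["B1", "B2", "C1", "C2"]),
    "hypothetical_test_b": ([4.0, 5.5, 7.0, 8.5], ["B1", "B2", "C1", "C2"]),
}

def to_cefr(test, score):
    """Map a score to the highest CEFR band whose lower bound it meets."""
    cuts, levels = CEFR_TABLES[test]
    i = bisect_right(cuts, score)  # number of lower bounds <= score
    return "below " + levels[0] if i == 0 else levels[i - 1]

# Invented admissions records: (test, overall score)
records = [("hypothetical_test_a", 82), ("hypothetical_test_a", 64),
           ("hypothetical_test_b", 7.0), ("hypothetical_test_b", 6.5)]
print(Counter(to_cefr(test, score) for test, score in records))
```

Each table is set by a different provider using its own procedures. Students placed in the same band by two different tables therefore need not have equivalent proficiency, which is the caveat noted above.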

Table 6 summarises the data by degree level, grouped by the CEFR levels generally relevant for university admissions (B2, C1, and C2). For PGT students at C1 level (the largest subgroup), a one-way ANOVA revealed a significant main effect of ELP test, F(2, 2613) = 45.264, p < .001, η² = .033. Tukey’s HSD pairwise comparisons confirmed that PGT students accepted with IELTS, p < .001, 95% CI = [1.625, 2.982], and TOEFL iBT, p < .001, 95% CI = [2.637, 5.151], obtained significantly higher grades than DET test-takers. A significant result was also found for UG students at C1 level, F(2, 1362) = 20.869, p < .001, η² = .030. Pairwise comparisons revealed that UG students accepted with DET received lower grades on average than students accepted with IELTS, p < .001, 95% CI = [1.775, 4.438], and with TOEFL iBT, p < .001, 95% CI = [2.746, 6.874]. At B2 level, we used Welch’s t-tests because of small and unbalanced subgroup sizes. These revealed that PGT students accepted with DET received significantly lower grades on average than students accepted with IELTS, t(497.03) = −2.572, p < .05. This difference was nonsignificant at UG level. At C2 level, PGT DET test-takers obtained higher mean grades than C2-level PGT students admitted using the more established tests.
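The fractional degrees of freedom reported for the Welch’s t-tests (e.g., t(497.03)) come from the Welch–Satterthwaite approximation, which does not assume equal group variances. The standard-library sketch below computes the statistic and its degrees of freedom. The function name and toy data are hypothetical. A p-value additionally requires the t distribution, for example via SciPy’s `scipy.stats.ttest_ind(a, b, equal_var=False)`.

```python
import math
from statistics import mean, variance

def welch_t(a, b):
    """Return (t, df) for Welch's unequal-variances t-test of mean(a) - mean(b)."""
    # Squared standard-error contribution of each group (sample variance / n)
    va = variance(a) / len(a)
    vb = variance(b) / len(b)
    t = (mean(a) - mean(b)) / math.sqrt(va + vb)
    # Welch-Satterthwaite approximation to the degrees of freedom
    df = (va + vb) ** 2 / (va ** 2 / (len(a) - 1) + vb ** 2 / (len(b) - 1))
    return t, df

# Invented grades for two hypothetical groups with unequal spread
t, df = welch_t([1, 2, 3, 4, 5], [2, 4, 6, 8, 10])
print(round(t, 3), round(df, 2))
```

When the two groups have equal sizes and equal variances, df reduces to n1 + n2 − 2, the value used by Student’s t-test. With unequal spread, as here, df is smaller and non-integer.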

First-year academic grades for PGT and UG students admitted using DET, IELTS, and TOEFL iBT by CEFR level.

Note: PGT: postgraduate taught; UG: undergraduate; DET: Duolingo English Test.

One student, whose TOEFL iBT score was recorded as 11 (a likely data entry error), was removed from the analysis.

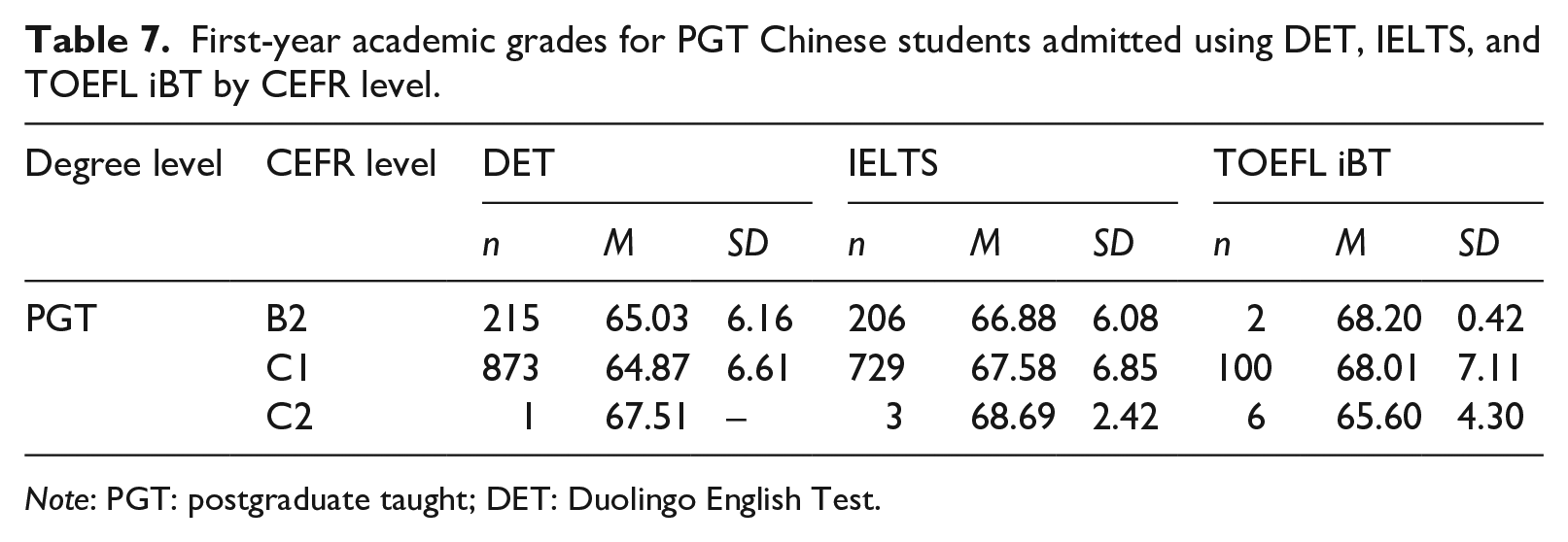

Finally, we checked whether the pattern was driven by the higher proportion of Chinese students among DET test-takers. To do so, we disaggregated the ELP data by CEFR level for PGT Chinese test-takers only (see Table 7). Significance testing mirroring that reported above revealed the same trend. At B2 and C1 levels, PGT Chinese DET test-takers received significantly lower grades on average than their compatriots admitted using the other ELP tests. A one-way ANOVA at C1 level revealed a significant main effect of ELP test, F(2, 1699) = 36.003, p < .001, η² = .041. Tukey’s HSD confirmed that PGT Chinese students at C1 who were accepted with IELTS, p < .001, 95% CI = [1.922, 3.509], and TOEFL iBT, p < .001, 95% CI = [1.477, 4.816], obtained significantly higher grades than did DET test-takers. At B2 level, a Welch’s t-test revealed that PGT students accepted with IELTS received significantly higher grades than did students admitted using DET scores, t(418.63) = −3.115, p < .05.

First-year academic grades for PGT Chinese students admitted using DET, IELTS, and TOEFL iBT by CEFR level.

Note: PGT: postgraduate taught; DET: Duolingo English Test.

Discussion

This study is the first, to our knowledge, to examine the predictive validity of the revised (non-retired) version of DET in relation to academic attainment and to offer comparisons with established ELP tests. Because DET is a new test, only recently have data sets of DET test-takers become large enough to support such analyses. Validating the test through a programme of rigorous research is pressing, given its high-stakes and widespread use. Situated in the early stages of the pandemic, shortly after DET had been adopted at a major UK university, this study contributes criterion-related validity evidence. The headline predictive validity finding is that university students who arrive with higher DET Overall scores go on to achieve higher first-year academic grades than do lower DET performers. This finding is in line with the small but significant positive results reported for established ELP tests in most previous predictive validity studies (Ihlenfeldt & Rios, 2022), although that literature may be subject to publication bias.

After conducting subgroup analysis by degree level, we found that the correlation between DET scores and academic grades was positive for PGT but not UG students. IELTS and TOEFL iBT showed similar patterns to DET, with a stronger result for PGT than for UG students on both competitor tests. It would be wrong to conclude from these results either that ELP is inconsequential in UG studies or that all three tests are poor measures of ELP. There are at least three plausible reasons why none of the three tests detected a relationship between ELP and grades at UG level. First, in contrast to nearly all PGT programmes, first-year UG grades do not count towards final degree classifications. UG students may therefore invest less effort in first-year summative assessments, which could weaken the predictive value of ELP test scores. Second, PGT courses tend to involve essays. Essays may be more linguistically demanding and require more language production than the exams that predominate in UG assessment (Woodrow, 2006), and this difference could attenuate correlations between ELP scores and grades for UG students. Finally, as in all predictive validity studies, factors other than ELP come into play (Oliver et al., 2012). In highly heterogeneous samples, such factors can mask the predictive power of ELP scores, and this is likely to be the case here too.

To “peel . . . the onion” and examine whether different patterns exist across subgroups for the variables identified in the research questions (Bridgeman et al., 2016, p. 310), we first disaggregated the PGT and UG results by subject classification. For the PGT cohort, the overall association between DET scores and academic grades was driven by PSE and SSH but not LS students. For UG students, a very weak association was found only for SSH students, with no relationship detected for PSE or LS students.
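A toy example shows the masking effect that motivates this disaggregation. Suppose one subgroup scores lower on the ELP test but tends to receive higher grades, as with PSE relative to SSH students here. Pooling the subgroups can then yield a negative overall correlation even though the correlation is positive within each subgroup. The data below are invented for illustration and are not drawn from the study.

```python
import math

def pearson_r(x, y):
    """Pearson correlation coefficient for two equal-length sequences."""
    mx, my = sum(x) / len(x), sum(y) / len(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)

# Invented (score, grade) data for two hypothetical disciplines
group_a = {"score": [100, 105, 110], "grade": [70, 73, 74]}  # lower scores, higher grades
group_b = {"score": [125, 130, 135], "grade": [58, 59, 62]}  # higher scores, lower grades

for name, g in [("A", group_a), ("B", group_b)]:
    print(name, round(pearson_r(g["score"], g["grade"]), 3))

pooled_scores = group_a["score"] + group_b["score"]
pooled_grades = group_a["grade"] + group_b["grade"]
print("pooled", round(pearson_r(pooled_scores, pooled_grades), 3))
```

Both within-group correlations are positive, whereas the pooled correlation is strongly negative. This is why a null or negative overall coefficient in a heterogeneous sample says little about the relationship within its subgroups.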

Within the PGT cohort, the stronger positive correlation for PSE than for SSH students may appear counterintuitive. That PSE students should arrive with lower mean DET scores is unsurprising, since cut scores on ELP tests are typically lower for less linguistically demanding disciplines. Why they go on to achieve higher academic grades is less clear. Previous predictive validity studies have shown that ELP tends to be more consequential for SSH subjects than for PSE disciplines (e.g., Harsch et al., 2017). SSH subjects tend to be more English-intensive (e.g., more reading, extended writing), whereas PSE disciplines focus on numeracy skills. Although SSH students attain higher ELP test scores on average, this advantage is offset by higher linguistic demands. Therefore, SSH students’ academic grades may be lower than their ELP test scores predict. In PSE disciplines, by comparison, math- or coding-oriented academic demands may place less emphasis on ELP skills. The nature of assessment and/or grading practices likely also contributed to the different grade ranges for PSE and SSH students. University data on average degree classifications confirmed that students from PSE disciplines receive higher grades than do SSH students. This suggests that assessment and marking practices may be driving the effect. With the large number of programmes under the umbrella SSH classification, there may also be more diversity of practice and variability in linguistic demands within this cohort than in the other subject areas. Taken together, the results highlight the need either to control for subject classification or to disaggregate analyses by it when investigating the role of ELP in academic outcomes.

Chinese students, who constituted the largest subgroup at both degree levels in our sample, arrived with significantly lower overall DET scores than non-Chinese students. This finding is consistent with Harsch et al.’s (2017) and Bridgeman et al.’s (2016) predictive validity studies, which found that Chinese students received lower TOEFL iBT Overall scores and subscores than non-Chinese students. In our study, Chinese students also achieved lower academic grades than non-Chinese students, and this difference was significant in the PGT cohort. However, DET was predictive for the (smaller) non-Chinese student group but not for the (larger) Chinese student group. The Chinese group would seem to be more homogeneous than the group consisting of all other nationalities, notwithstanding the considerable linguistic and other diversity within China (Gong et al., 2011). We might therefore have expected a stronger correlation for Chinese students than for their non-Chinese counterparts, consistent with what Bridgeman et al. (2016) and Harsch et al. (2017) found for TOEFL iBT and IELTS, respectively. One possible reason for the opposite result is that Chinese students outnumbered all other students in our study. They were therefore likely spread across a wider range of programmes and disciplines, making them more heterogeneous in this respect than non-Chinese students.

When we conducted subgroup analysis by discipline, we again found weak negative correlations for PGT Chinese students in all three subject classifications. By comparison, non-Chinese students showed larger positive correlations for two of the disciplinary groups (PSE and SSH). One possible explanation is that Chinese test-takers who took DET had no alternative test with which to demonstrate their ELP level, since IELTS Indicator and TOEFL iBT Home Edition were not offered in China after they were introduced (Isbell & Kremmel, 2020). By contrast, test-takers in almost all other countries could choose among these tests as alternatives to DET once the university had accepted them as proof of ELP. Thus, non-Chinese students who opted to take DET are more likely to have preferred and deliberately chosen it over the competitor tests. Consequently, they may have been more familiar with the test format than their Chinese counterparts. Some Chinese test-takers may, in turn, have performed more poorly than they would have done had they been more accustomed to the test. Whatever the reason, future research is needed to confirm or disconfirm the patterns revealed in this study.

Students accepted with DET experienced lower academic success than those accepted with IELTS and TOEFL iBT. It is possible that, had DET’s latest concordance data (Cardwell et al., 2022) been available at the time of the study, some lower-scoring students who gained entry to the university through DET would not have been admitted. As described above, correlational patterns between DET scores and academic grades were mirrored in the results for IELTS and TOEFL iBT. Neither IELTS nor TOEFL iBT scores were related to first-year academic outcomes in the UG cohort, pointing to the underlying complexity and heterogeneity of this sample. However, all three tests had positive relationships with grades for PGT students, with the correlation for DET somewhat lower than for IELTS and TOEFL iBT. DET Overall scores relate to grades in roughly the same way as IELTS and TOEFL iBT scores do, which supports the predictive validity of DET (i.e., higher DET scores tend to mean higher grades). In particular, it indicates that the negative correlation between UG students’ DET scores and grades most likely stems from properties of the sample rather than of the test itself.

This pattern remained when the students were grouped by CEFR level. At C1 level, both PGT and UG students who had been admitted with DET achieved lower grades than their peers whose test scores had been certified by DET’s more established industry competitors. C1 was the most common CEFR level in our data set, so this is the most statistically robust result. At B2 level, IELTS test-takers received significantly higher grades than DET test-takers among PGT but not UG students. Conversely, at C2 level, DET test-takers received higher mean academic grades than the other test-takers. In sum, students arriving with DET experienced lower academic success than students arriving with IELTS and TOEFL iBT scores, with the possible exception of the few students at the top CEFR level. Finally, we detected the same pattern in the subgroup of Chinese students. This ruled out the possibility that DET test-takers’ lower academic grades were an artefact of the higher proportion of Chinese students in the DET sample compared to the IELTS and TOEFL iBT samples. Chinese students arriving with DET still went on to achieve lower academic grades than Chinese students admitted with IELTS or TOEFL iBT.

This analysis relies on test providers’ own published equivalences between test scores and CEFR levels. However, these equivalences were not necessarily derived using the same methods, and there is no consensus on which CEFR level corresponds to each test score. Correspondence points may not have been set appropriately and may not be directly comparable across tests. Concordances have now been published between DET and other ELP tests and between DET scores and CEFR levels (Duolingo English Test, n.d.). However, Cushing and Ren (2022) highlighted that it is unclear how these concordances were established. This calls for closer scrutiny of the correspondence points between tests. It also suggests that higher DET cut scores, potentially applied to Overall scores in conjunction with subscores, might be necessary to ensure academic performance comparable to that of students certified through the traditional ELP tests. Most students in the current study were admitted on the basis of Overall DET scores alone, as subscores were not routinely reported to universities until the first week of July 2020 (LaFlair & Tousignant, 2020).

It is possible that DET test-takers’ comparatively lower academic performance has to do with the test itself. Alternatively, their performance may have nothing to do with the test at all and could instead be attributable to sample characteristics and external factors. In relation to the first possibility, the fully automated DET measures a vastly different ELP construct from that of human-scored traditional ELP tests (Khabbazbashi et al., 2021). Cushing and Ren (2022) compared IELTS Academic and DET using publicly available information. Their analysis highlighted differences between the two tests in terms of cognitive and context validity (e.g., cognitive skills elicited, academic orientation). Regarding the second possibility, academically weaker students may have applied in the latter part of the university’s admission cycle, when DET was being accepted, which could explain the lower academic grades. One of the key benefits of DET is its greater accessibility and affordability compared with market competitors (e.g., Wagner, 2020). Students with lower socioeconomic status, who also tend to have lower academic achievement (Muttaqin et al., 2022), may have opted to take DET rather than more expensive alternatives. In sum, test-taker characteristics and other factors extraneous to the test could have shaped the findings.

Clearly, further research is needed to establish whether the patterns observed in this study persist in other contexts and, if so, why. On a practical level, however, the findings suggest that, for whatever reason, students arriving with DET scores may need a higher cut-off point to perform comparably to students arriving with other ELP tests. Alternatively, they may need additional support to fulfil their academic potential. It should be noted that few DET test-takers in our study benefitted from pre-sessional support. Future research could compare the performance of DET students who attended pre-sessional courses with that of test-takers admitted outright (e.g., Trenkic & Warmington, 2019). Most students in our DET cohort were admitted on the basis of DET Overall scores alone, because DET introduced routine subscore reporting only in the latter part of the admissions cycle for the 2020–2021 intake. Future research could examine three further questions. The first is the effect of different DET score profiles on academic grades (Ginther & Yan, 2018). The second is how such effects can be interpreted in light of DET’s move towards reporting composite skills through subscores rather than the traditional speaking/listening/reading/writing demarcation (Cardwell et al., 2022). The third is the appropriateness of cut scores for different disciplines.

Limitations

This study contributes to pressing criterion-related validity research on DET in the first full academic year after the onset of the pandemic at the United Kingdom’s largest on-campus university. The results reflect this snapshot in time. In line with government measures to curb the spread of the virus, most programmes moved to fully online provision. Students had little or no access to on-campus resources and less exposure to university life than in a non-pandemic academic year, and some conducted their studies from abroad in entirely non-English-medium environments. Changes in teaching mode and, in some cases, adjustments to assessments may have affected students’ academic performance and grades. For example, to account for pandemic-related disruption, PGT students were allowed up to three resubmissions of failed assignments with no grade cap, compared with only one capped resubmission pre-COVID. This policy inadvertently disadvantaged students who had submitted their assignments on time and passed, and who therefore could not resubmit later to improve their grades. It may also have encouraged some students to game the system. More students were also granted deferrals and interruptions of study than in a normal year. Some markers may have scored differently, and potentially more generously, because of perceived challenges associated with remote delivery (Karadag, 2021). The academic attainment data in this study were thus coloured by these factors, limiting the generalisability of the findings.

Because widespread uptake of DET for admissions purposes was recent at the time of the study, DET test-takers may have been unfamiliar with DET item types (Cushing & Ren, 2022). Thus, the resulting scores may have underestimated their ELP. However, the DET test preparation industry was not yet well established at the time, so intensive coaching was also limited. This reduced tailored test-taking strategies as a potential source of construct-irrelevant variance. In this sense, the DET scores in this data set may be a purer measure of test-takers’ ELP than they will be in the future. By then, test-takers will be more rehearsed, test-wiseness strategies will be explicitly taught, and test-takers may have access to more resources to help them game the system and optimise their scores. This may especially be the case in exam-oriented societies with established test preparation industries and cram school traditions (e.g., Clark & Yu, 2021; Tsang & Isaacs, 2022). However, Cushing and Ren (2022) analysed postings on Chinese online discussion fora (2020–2021). At least one poster felt that strategies apparently coached for IELTS and TOEFL iBT, such as rigorous drilling, question pattern analysis, and the use of contextual cues, may not be useful for DET, which requires knowledge of English structures under response time pressure. Future research should investigate how resilient DET is to coaching across its different item types.

There are several other limitations, mostly due to sample characteristics and data availability. First, we were able to correct for range restriction only for the overall PGT and UG DET samples. Because auxiliary data were unavailable, we could not apply the correction to analyses involving subject classification, nationality, or competitor ELP tests. Second, students who met language entry requirements through DET, IELTS, and TOEFL iBT represented independent samples in our study. Therefore, we could not statistically compare the tests’ predictive utility. Nor could we explore whether lower academic success for DET test-takers was due to the nature of the test, score calibration, or external factors, such as test-takers’ socioeconomic status or academic ability. Furthermore, the results were likely shaped by students with different background characteristics applying at different time points in the admissions cycle. Sample size differences across the three ELP tests are also notable, so the test comparison results should be interpreted with caution.
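Range restriction arises because grades exist only for admitted students, all of whom met a minimum score. This shrinks the observed score variance and attenuates correlations. The text does not state which correction formula was applied. One widely used option for direct selection on the predictor is Thorndike’s Case II, sketched below. Here u is the ratio of the unrestricted (e.g., applicant-pool) score standard deviation to the restricted (admitted-student) one. The function name and example values are hypothetical.

```python
import math

def correct_case_ii(r, sd_unrestricted, sd_restricted):
    """Thorndike Case II correction for direct range restriction on the predictor."""
    u = sd_unrestricted / sd_restricted  # > 1 when admitted scores are less variable
    return r * u / math.sqrt(1 - r ** 2 + (r * u) ** 2)

# Hypothetical values: observed r among admitted students and two score SDs
print(round(correct_case_ii(0.30, sd_unrestricted=20.0, sd_restricted=10.0), 3))
```

In this formulation, the auxiliary information is the unrestricted standard deviation. Without a score SD for an unselected reference population, the correction cannot be computed for a given subgroup. When u = 1 (no restriction), the formula returns r unchanged.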

Unbalanced sample sizes were also a limiting factor. A high proportion of DET test-takers came from China, and few came from European Union (EU) countries in the 2019–2020 academic year, potentially exacerbated by Brexit. As a result, we could only compare Chinese students with students of all other nationalities combined. We had also wanted to use programmes’ ELP thresholds for admission (Advanced, Good, or Standard) as an indicator of linguistic demands. However, most programmes required a Good level, limiting our ability to use this as a grouping variable. Now that Duolingo routinely reports subscores, future research could examine subscore profiles. In our sample, these data were available only for a small group of test-takers who had taken the test late in the application cycle.

A further limitation is the use of first-year course grades as the sole criterion measure. There is no placement test tradition at UK universities (e.g., Purpura et al., 2021), precluding the use of in-house ELP test scores as an additional criterion measure. Furthermore, we only tracked students’ academic attainment over their first year of study, with most PGT students in the data set undertaking 1-year master’s programmes. For multiyear degrees, it would be useful to investigate whether DET is more predictive of academic grades in subsequent years.

Despite these limitations, this study provides much-needed preliminary predictive validity evidence for DET in relation to academic attainment. However, criterion-related validity is not sufficient for construct validity (American Educational Research Association et al., 2014). DET remains in high-stakes use at many English-medium universities. Different but complementary sources of evidence are therefore necessary to build a robust evidence base supporting the intended uses and interpretations of test scores (Chapelle, 2021). Duolingo has shown its commitment to this in two ways. The first is work conducted in-house (e.g., Burstein et al., 2022), in some cases with newly hired personnel from the assessment and psychometric communities. The second is funding validation projects such as this one.

Footnotes

Acknowledgements

We are grateful to Jill Burstein, Antony Kunnan, Geoff LaFlair, J. R. Lockwood, and Alina von Davier from the Duolingo Assessment Research team for their input on previous versions of this manuscript. We also thank Paula Winke and the Language Testing reviewers for their valuable comments on previous versions of our article.

Declaration of conflicting interests

The authors declared the following potential conflicts of interest with respect to the research, authorship, and/or publication of this article: During the period of undertaking and disseminating this Duolingo-funded project, the first author, Talia Isaacs, conducted assessment-related research, advisory, or consultancy work for the following organisations: British Council, Cambridge Assessment English, Educational Testing Service (ETS), National Centre for Excellence for Language Pedagogy (NCELP), and Organisation for Economic Co-operation and Development (OECD). Talia became the Co-Editor of Language Testing on January 1, 2023. This co-authored paper was submitted and accepted before Talia assumed her editorial role for the journal. Co-Editor Paula Winke managed the peer review and editorial process for this paper.

Ethical Approval

The study received ethics approval from the UCL IOE Research Ethics Committee (REC 1475). The project was jointly conceived by the first three authors. The fourth author provided access to admissions and academic attainment data, the second author conducted data analyses, and the first and second authors drafted the manuscript with reframing and editing from the third author. All authors approved this manuscript.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This article reports on a Duolingo-funded commissioned study.