Abstract

This research investigated the sensory preferences and experiences of individuals with visual impairment and blindness when interacting with a novel multisensory device for braille learning. The device comprised an enlarged braille cell in which each button could elicit a sound, a haptic vibration, or an auditory-haptic stimulus. Children, adolescents, and adults with blindness or visual impairment placed their fingertips on the device to perceive braille letters. Parents rated their children’s auditory and tactile hyper- and hyposensitivity. All participants reported enjoyment, competence, and confidence during device interaction. Participants with blindness favoured the auditory-haptic and auditory modalities, whereas participants with visual impairment also liked the haptic-only modality. Children with blindness who scored high on hyposensitivity also showed higher hypersensitivity scores within the auditory and tactile modalities, whereas children with visual impairment showed cross-modal correlations between tactile and auditory hyper- and hyposensitivity. Multisensory enrichment of braille learning, further applications, and diagnostic considerations are discussed to outline future research.

Introduction

Braille letters consist of small raised dots (height ~0.5 mm, diameter ~1.5 mm) arranged in a 2 × 3 cell (UK Association for Accessible Formats, 2024). Learning to read braille requires sensory, motor, and cognitive abilities to perceive the dots, understand their spatial configuration, and transform the perceived spatial layout into letters (Martiniello & Wittich, 2022). Studies have indicated that individuals with blindness and visual impairment who use braille develop stronger spelling skills than those who predominantly use auditory methods (Papadopoulos et al., 2009). An earlier start and a longer duration of braille learning are also associated with higher braille reading speeds (Lang et al., 2021). Previous research highlights significant advantages of braille literacy, including higher employment rates and educational attainment (Hoskin et al., 2024; see also McDonnall et al., 2024; Ryles, 1996). However, reduced tactile sensitivity in some learners, the length of braille books, and auditory alternatives that depend less on practice have raised concerns about braille usage (Tobin & Hill, 2015).

Braille readers typically identify letters with their fingertips, using the right hand, the left hand, or both (Papadimitriou & Argyropoulos, 2017). While braille beginners often place both index fingers side by side, a more efficient strategy uses the two hands for separate but complementary functions. A range of braille reading strategies exists, differing in the placement and movement of the hands and fingers across and between lines; which strategy a reader uses depends on factors such as individual preference, braille reading proficiency, and physical ability (Bertelson et al., 1985; Davidson et al., 1980; Hughes et al., 2011; Kusajima, 1974; Mangold, 1977; Mousty & Bertelson, 1992; Nonaka et al., 2021; Olson & Mangold, 1981; Wormsley & D’Andrea, 1997; Wright et al., 2009).

Research indicates that braille beginners perceive letters as ‘textural’ rather than ‘spatial’ configurations (Millar, 1997). According to Millar (1997), dot density is crucial for braille reading: instead of coding braille patterns by their outer shape, beginners learn to distinguish single dots, because dot density cues are easier to interpret than global shape cues (Millar, 1986, 1997; for further research on tactile perception and braille, see Baciero et al., 2023; Bertelson et al., 1992; Boven et al., 2000; Grant et al., 2000; Knowlton & Wetzel, 1996; Legge et al., 1999; Nolan & Kederis, 1969; Stevens et al., 1996; Wong et al., 2011). Given that variations in braille size might interfere with the textural characteristics of the letters, it has been recommended to present braille letters in their original size (Millar, 1997; see Barlow-Brown et al., 2019 for further discussion). However, other research suggests that enlarged braille letters presented on a pegboard led to more effective learning than standard-size braille letters in sighted children with no prior experience of reading braille (Barlow-Brown et al., 2019; Heller & Mitchell, 1985; Newman et al., 1982; Newman et al., 1985).

During braille learning, learners may use a variety of sensory modalities to associate tactile representations of letters with their meanings (Millar, 1997). For learners with intact auditory perception, the sound of each letter needs to be associated with the haptic pattern of the corresponding braille letter. Theories suggest that impaired multisensory processing could lead to overreliance on one sensory modality, which might result in hypersensitivity, and to reduced reliance on others, which might lead to hyposensitivity (Hill et al., 2012; Siemann et al., 2020). Hyper- and hyposensitivity might be explained by the interaction between neurological thresholds and self-regulation (Dunn & Daniels, 2002). Here, the neurological threshold is the amount of sensory stimulation required to detect a stimulus, while self-regulation refers to how a person regulates their responses to sensory input. For example, a child might react to sensory information passively, without modifying or controlling it, or might actively manage sensory input to meet their needs.

To understand the impact of alterations in the onset of multisensory experience, Scheller et al. (2021) investigated multisensory integration processes, such as auditory-tactile integration, in children with congenital and late blindness and in adults with late-onset blindness (after the age of 8 years), together with a sighted control group. Participants indicated whether two objects, presented in a haptic, auditory, or auditory-haptic condition, differed in size. Because children with congenital or late blindness rely mainly on auditory and haptic information to identify objects, it has been suggested that they would integrate auditory and haptic information at an earlier age than sighted individuals. Nine-year-old children with congenital blindness benefited more from integrating auditory-haptic information than sighted children did. Furthermore, optimal auditory-haptic integration emerged at a younger age in individuals with early blindness than in the other groups, whereas optimal auditory-haptic integration was impaired in individuals with late blindness. The authors suggest that cross-modal plastic changes, such as the recruitment of visual brain areas by the remaining sensory modalities, might be one principle underlying optimal auditory-haptic integration in children with blindness (for visual cortex activity in blindness, see, for example, Amedi et al., 2005; Burton et al., 2002; Liu et al., 2023; Merabet et al., 2005; Pascual-Leone et al., 1993; Sadato et al., 1998).
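To make ‘optimal’ integration concrete: optimal audio-haptic integration is commonly benchmarked against the maximum-likelihood estimation (MLE) cue-combination model, in which each cue is weighted by its reliability (inverse variance) and the combined estimate is at least as precise as the better single cue. The sketch below computes these predictions from unimodal noise levels. It assumes the standard MLE model as the benchmark; the function name `mle_prediction` and the example noise values are hypothetical and are not Scheller et al.’s (2021) data or analysis code.

```python
import math


def mle_prediction(sigma_a: float, sigma_h: float) -> tuple[float, float, float]:
    """Predicted cue weights and bimodal noise under optimal (MLE) integration.

    Assumes the standard maximum-likelihood cue-combination model:
    sigma_a and sigma_h are the standard deviations of auditory-only and
    haptic-only size estimates (e.g., derived from discrimination thresholds).
    Returns (auditory weight, haptic weight, predicted audio-haptic SD).
    """
    if sigma_a <= 0 or sigma_h <= 0:
        raise ValueError("standard deviations must be positive")
    rel_a = 1.0 / sigma_a**2  # reliability = inverse variance
    rel_h = 1.0 / sigma_h**2
    w_a = rel_a / (rel_a + rel_h)  # each cue weighted by its relative reliability
    w_h = rel_h / (rel_a + rel_h)
    sigma_ah = math.sqrt(1.0 / (rel_a + rel_h))  # never exceeds the smaller unimodal SD
    return w_a, w_h, sigma_ah


# Hypothetical noise levels (not study data): hearing half as precise as touch.
w_a, w_h, sigma_ah = mle_prediction(sigma_a=2.0, sigma_h=1.0)
print(f"w_A = {w_a:.2f}, w_H = {w_h:.2f}, predicted sigma_AH = {sigma_ah:.3f}")
# prints: w_A = 0.20, w_H = 0.80, predicted sigma_AH = 0.894
```

In this framework, integration is typically called optimal when the measured audio-haptic precision matches the predicted sigma_AH; a bimodal SD no better than the best unimodal SD instead suggests that a single cue dominates.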

On the other hand, several findings suggest that sound localisation and motor responses can be delayed in children with visual impairment and blindness, because visual information contributes to the sensory-motor feedback that supports efficient exploration of the environment (Cappagli et al., 2017, 2019). Auditory-motor training with the Audio Bracelet for Blind Interaction (ABBI), a bracelet that connects to a smartphone and emits a sound in response to movement, improved spatial accuracy in children with visual impairment and blindness (Cappagli et al., 2017, 2019).

This finding aligns with research demonstrating improved learning outcomes after multisensory training (Mathias & von Kriegstein, 2023), given that multisensory experiences activate more memory traces than unisensory experiences and thus allow more fine-tuned predictions about upcoming items (such as words). Based on this concept, the current experiment enriched the presentation of braille letters with auditory and haptic sensory information.

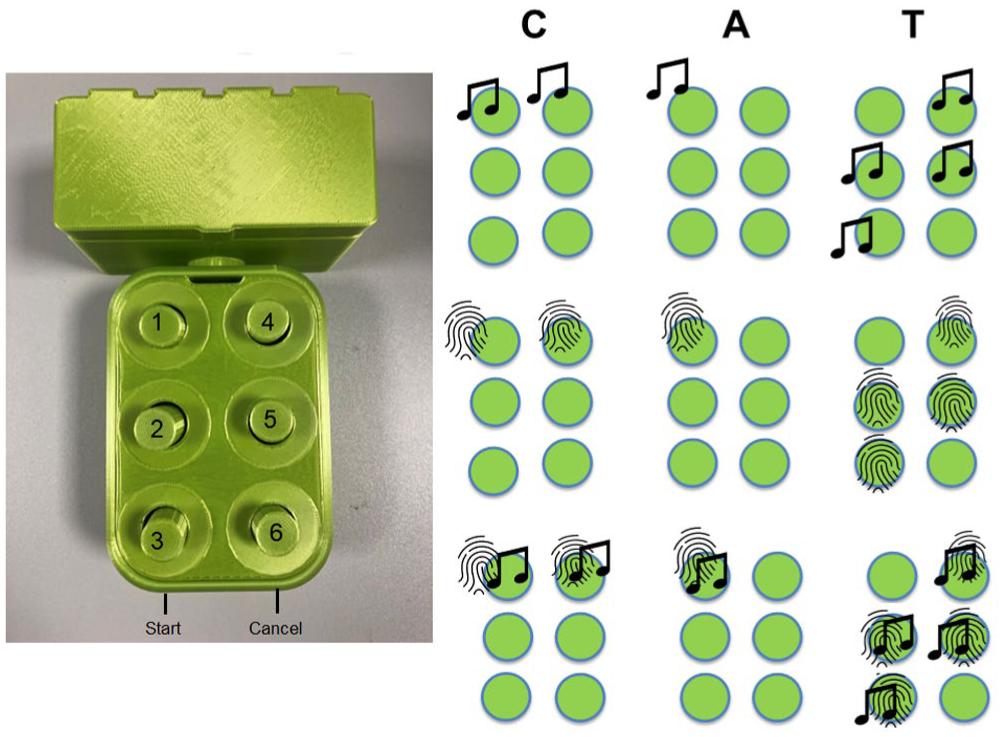

This article reports findings from participatory design sessions held to develop the multisensory device, focusing on participant enjoyment and usability. The device consists of a six-button box whose buttons are arranged as an enlarged 2 × 3 braille cell (see Figure 1). This emulates the approach of some teachers who use egg cartons to teach the spatial concept of a braille cell (Farrand et al., 2024). Each button could initiate a sound (increasing in pitch from button one to six), a haptic vibration, or a combination of sound and vibration. Participants could feel the haptic vibrations and hear the sounds either by resting their fingertips on the buttons or by pressing a button, which initiated its sensory response (see Figure 1(b)). Children were interviewed about their enjoyment, their perceived competence, and their nervousness (Deci & Ryan, 2000). They were also asked which modality they enjoyed most during device interaction. Parents completed the Glasgow Sensory Questionnaire (GSQ; Robertson & Simmons, 2019) on behalf of their children to assess their sensory sensitivity profiles.

Multisensory device. Left: a six-button box connected to a box containing the Arduino, with start and cancel buttons on the front. Right: serial presentation of the letters of CAT in three different modalities (auditory, haptic, and auditory-haptic).
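The serial CAT presentation shown in the figure can be sketched as a mapping from letters to the buttons that should respond. This minimal sketch assumes that button n corresponds to standard braille dot n (dots 1–3 down the left column, 4–6 down the right); the text does not state the button numbering, nor whether a letter's buttons fire together or one after another, so the hypothetical `presentation_plan` function only lists which buttons are active for each letter in the chosen modality.

```python
# Standard six-dot braille (uncontracted letters a-z). Dots 1-3 run down the
# left column and dots 4-6 down the right column of the cell.
BRAILLE_DOTS = {
    "a": {1}, "b": {1, 2}, "c": {1, 4}, "d": {1, 4, 5}, "e": {1, 5},
    "f": {1, 2, 4}, "g": {1, 2, 4, 5}, "h": {1, 2, 5}, "i": {2, 4}, "j": {2, 4, 5},
    "k": {1, 3}, "l": {1, 2, 3}, "m": {1, 3, 4}, "n": {1, 3, 4, 5}, "o": {1, 3, 5},
    "p": {1, 2, 3, 4}, "q": {1, 2, 3, 4, 5}, "r": {1, 2, 3, 5}, "s": {2, 3, 4},
    "t": {2, 3, 4, 5}, "u": {1, 3, 6}, "v": {1, 2, 3, 6}, "w": {2, 4, 5, 6},
    "x": {1, 3, 4, 6}, "y": {1, 3, 4, 5, 6}, "z": {1, 3, 5, 6},
}

MODALITIES = ("auditory", "haptic", "auditory-haptic")


def presentation_plan(word: str, modality: str) -> list[tuple[str, list[int], str]]:
    """List, letter by letter, which buttons respond and in which modality.

    Assumes button n corresponds to braille dot n (hypothetical mapping).
    """
    if modality not in MODALITIES:
        raise ValueError(f"unknown modality: {modality!r}")
    return [(ch, sorted(BRAILLE_DOTS[ch]), modality) for ch in word.lower()]


for modality in MODALITIES:
    print(presentation_plan("CAT", modality))
```

Under this mapping, A activates only button 1, which fits the description of the letter A as involving a single haptic vibration.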

Materials and methods

Participants

Twelve children, adolescents, and young adults with blindness (

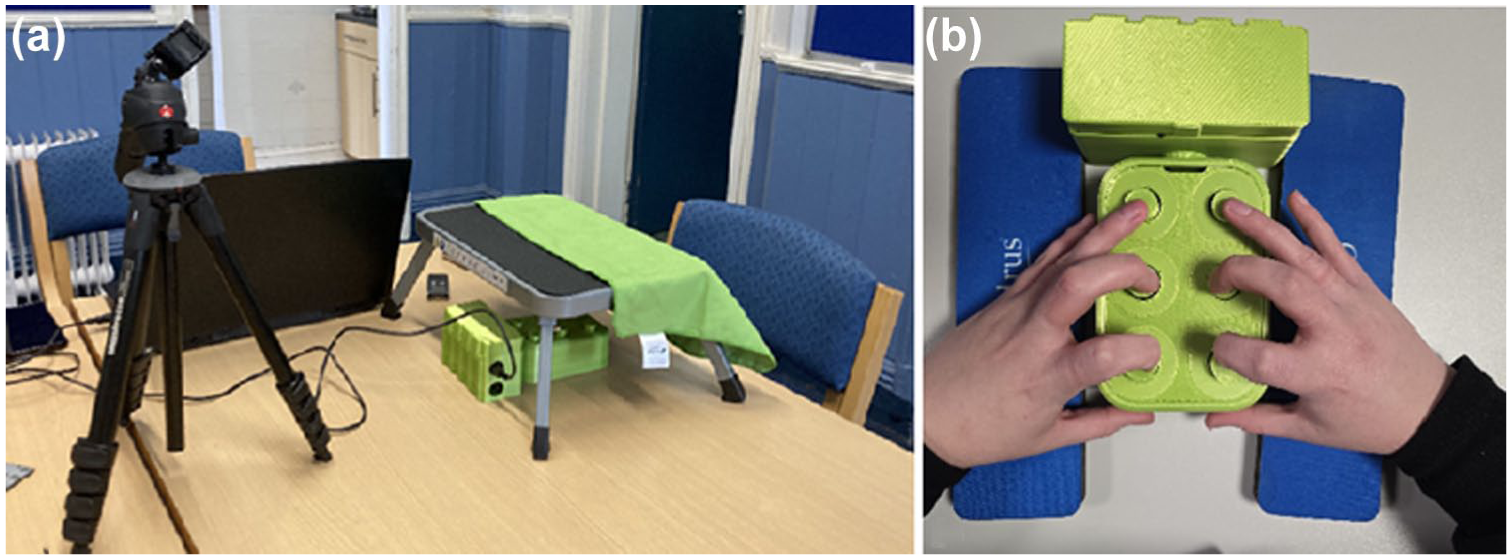

Diagnoses and demographic information for each participant are listed in Table 1.¹

Demographics of individuals with blindness and visual impairment.

The diagnosis of one participant was unknown. Among the 13 children with visual impairment, two were also diagnosed with autism and another two with ADHD. In addition, one participant was suspected to have both autism and ADHD. None of the children had been diagnosed with Cerebral Visual Impairment (CVI), although the absence of a diagnosis does not necessarily mean that a child has no CVI (Williams et al., 2021). Three participants had a dual diagnosis of blindness and hearing impairment; they wore hearing aids that allowed them to hear typical speech and music within 1 m. The decision to include individuals with additional impairments reflects the fact that many individuals with visual impairment and blindness also have co-occurring impairments and diagnoses (see Absoud et al., 2011; DeCarlo et al., 2014; Fazzi et al., 2019; M. Stevenson & Tedone, 2025; Teoh et al., 2021 for further information).

Participants were recruited through conversations with the braille teacher and the Qualified Teacher of Children and Young People with Vision Impairment (QTVI) who recommended specific students for whom the device would be most appropriate or relevant. These were mostly students who were either currently learning braille or who were being considered for braille instruction due to changes in their vision. In the current experiment, all students who received braille instruction – Grade 1 (uncontracted braille) and/or Grade 2 (contracted braille) – including those students who knew a few braille letters, were considered braille readers.

Twenty-three children and adolescents were braille readers, and two children with visual impairment had no braille reading experience. The mean age at onset of braille learning was 6 years (range: 2–11 years,

The primary goal of the device interaction was to enhance motivation to learn braille, even among children who had not yet started formal braille learning. Including participants at different stages of braille literacy (e.g., those in the early, pre-braille phase) allows for a more representative sample of the broader population of children and adolescents with visual impairments. This diversity enriches the dataset by offering a wider range of perspectives on the feasibility and usability of the device across different educational stages.

The multisensory device

The outer casing of the multisensory device was made of polylactide (PLA), a biodegradable, renewable thermoplastic polymer typically used as a filament for 3D-printed prototypes. The device shell (height: 78 mm, width: 215 mm, depth: 50 mm) and buttons (height: 60 mm, width: 11 mm) were fabricated on an Original Prusa i3 MK3S+ 3D printer. Object designs were drawn in Autodesk Fusion 360 and Autodesk Tinkercad and prepared for printing with PrusaSlicer (version 2.7.1). Six Grove haptic motor modules with integrated DRV2605L drivers produced the haptic vibrations of the buttons, and six Grove buttons with pull-down resistors recorded button presses. An Arduino Mega 2560 Rev3 served as the microcontroller board, with a Grove–Mega Shield v1.2 attached. The control code was written in the Arduino IDE (version 2.3.2) and uploaded to the board. A Grove speaker played the sounds.

The haptic vibrations, driven by the Arduino through the haptic motor modules, used waveform 14/123 (Strong Buzz – 100%; duration: 500 ms). Two tones served as feedback sounds (349 Hz and 1261 Hz), and the tone associated with each button increased in pitch from button 1 to button 6: button 1: 293 Hz, button 2: 329 Hz, button 3: 349 Hz, button 4: 391 Hz, button 5: 440 Hz, and button 6: 493 Hz. The device was equipped with two additional buttons, a start button to initiate letter presentation and a cancel button, positioned on the front of the button box, facing the participant (see Figure 1).
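The button tones listed above each lie within about 1 Hz of the equal-tempered pitches D4, E4, F4, G4, A4, and B4 (A4 = 440 Hz), forming an ascending scale. The short check below is an offline Python sketch, not the device firmware (which ran on the Arduino); it verifies that pitch rises monotonically across buttons and names the nearest equal-tempered note for each frequency. The helper `nearest_note` is hypothetical.

```python
import math

# Button-to-tone mapping as reported in the text (Hz).
BUTTON_HZ = {1: 293, 2: 329, 3: 349, 4: 391, 5: 440, 6: 493}
NOTE_NAMES = ["C", "C#", "D", "D#", "E", "F", "F#", "G", "G#", "A", "A#", "B"]


def nearest_note(freq_hz: float) -> str:
    """Nearest 12-tone equal-tempered note name, using A4 = 440 Hz = MIDI note 69."""
    midi = round(69 + 12 * math.log2(freq_hz / 440.0))
    return f"{NOTE_NAMES[midi % 12]}{midi // 12 - 1}"


# The pitch should rise monotonically from button 1 to button 6.
assert all(BUTTON_HZ[b] < BUTTON_HZ[b + 1] for b in range(1, 6))

for button, hz in BUTTON_HZ.items():
    print(f"button {button}: {hz} Hz ~ {nearest_note(hz)}")
```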

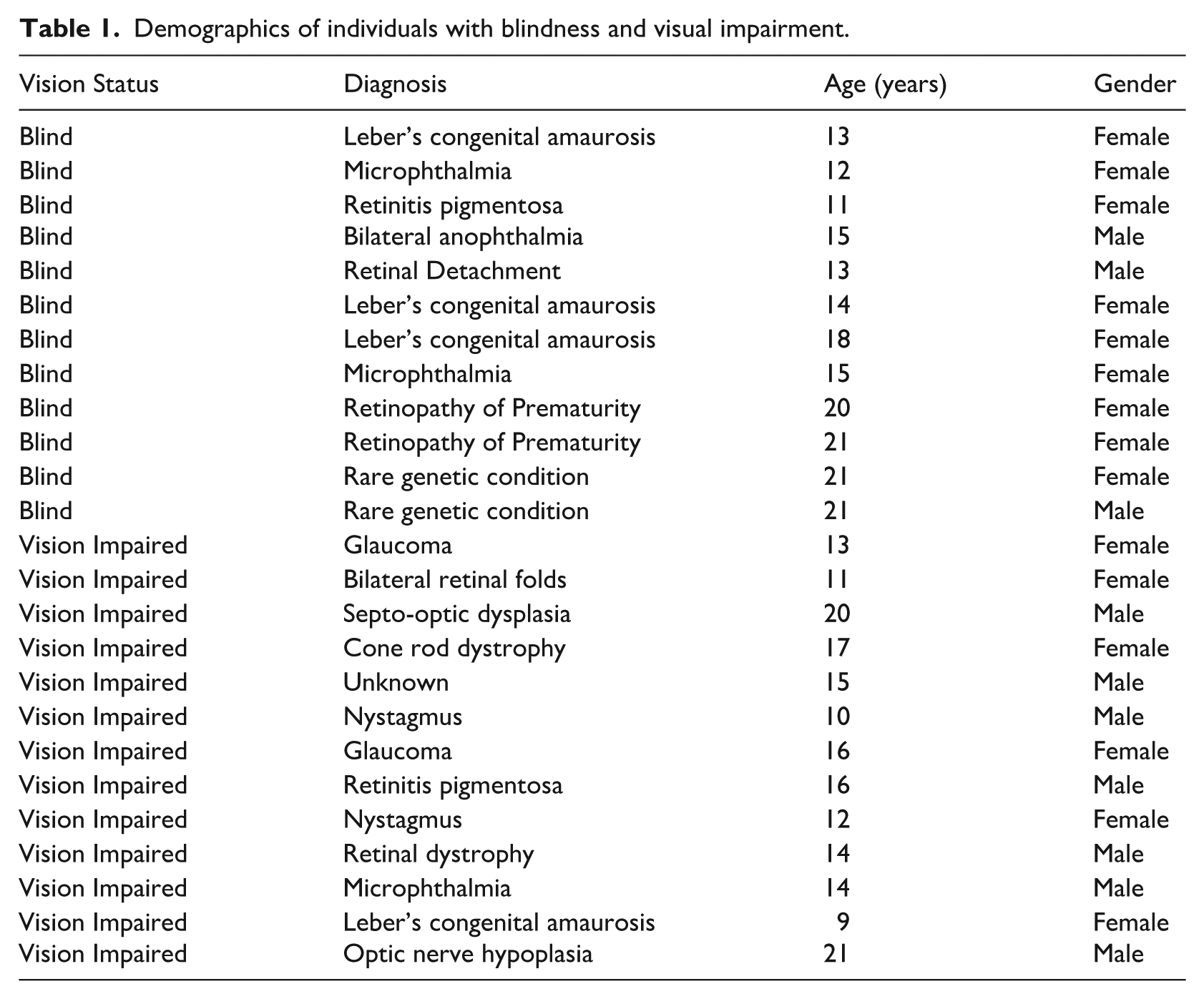

Experimental setting. (a) The multisensory device was placed underneath a small table. (b) Children were asked to place their fingers on the buttons so that they could feel the haptic vibrations; their thumbs rested on the start and cancel buttons.

a, b, g, i, k, l (level 1)

c, d, h, o, … (level 2)

e, m, n, p, s (level 3)

f, r, u, x, y (level 4)

j, q, v, w, … (level 5)

According to the Hands-On reading scheme, letters that are more tactually distinct and that allow simple consonant-vowel-consonant words (e.g., bag) to be formed early on were introduced first. Less common letters were introduced at a later stage.

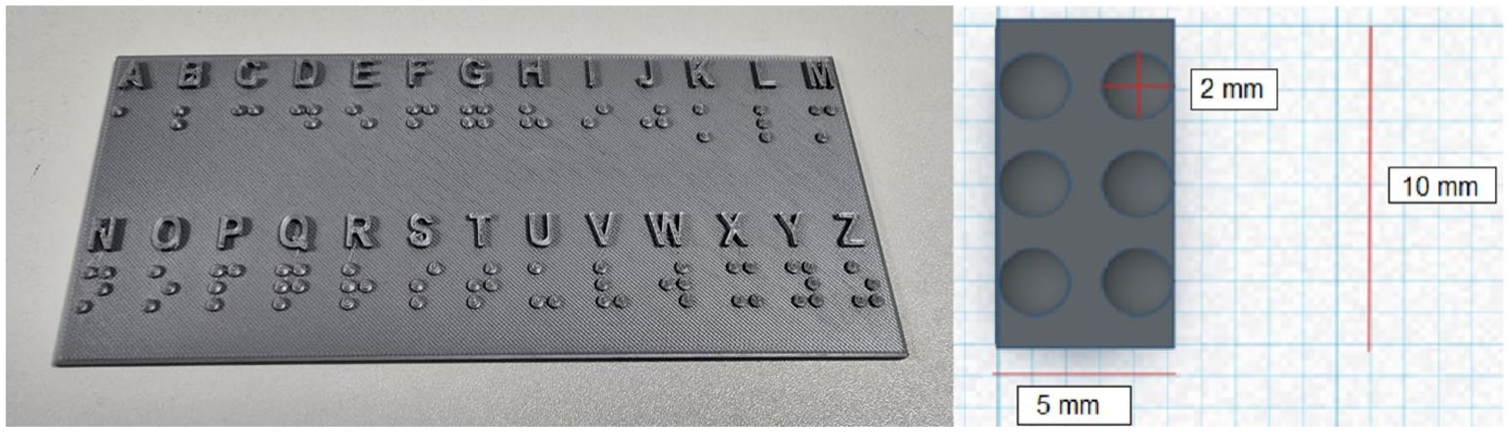

Letter presentation began with the letter A, which involves only one haptic vibration, and the letters were grouped into five levels (1–5). A tune was played as feedback when the participant reached the next level. After each letter presentation, children were asked to identify the letter, and they were given the opportunity to identify it on a 3D-printed chart designed with smaller braille letters (dot height: 2 mm, dot diameter: 2 mm; see Figure 3). This size was chosen because a smaller 3D-printed version was difficult to read. Correct and incorrect letter responses were coded from transcriptions of the video recordings.

Left: 3D-printed braille chart with smaller braille letters. Right: dot diameter: 2 mm; dot height: 2 mm; cell width: 5 mm; cell length: 10 mm.

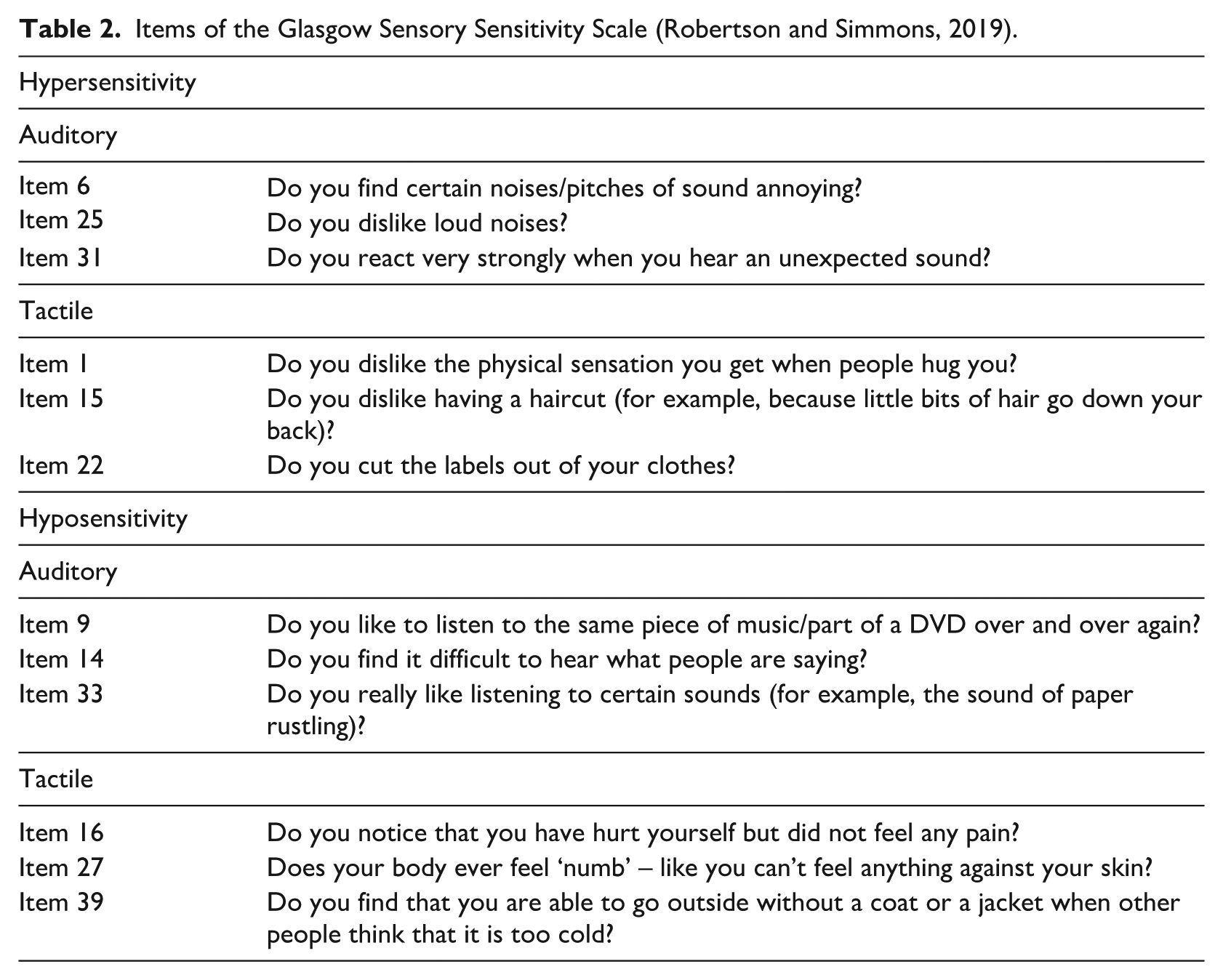

The following items were included in the analysis:

Items of the Glasgow Sensory Sensitivity Scale (Robertson & Simmons, 2019).

Results

Hand placement and letter identification

Participants’ hand movements were video-recorded to analyse their interactions with the device and to identify typical hand movement patterns (Gentilucci et al., 1997; Lederman & Klatzky, 1987; Polechoński & Olex-Zarychta, 2012; Yokosaka et al., 2016). Seventeen of the eighteen participants began interacting with the multisensory device using both hands, moving their fingertips across the buttons. One child without any braille experience used only their right hand. Sixteen of the eighteen users started using the device independently, for example, pressing the start and cancel buttons without any additional support.

After an initial exploration phase, all users were asked to place their fingertips on the buttons to sense the haptic vibrations. Of the 25 children, 15 identified the letters correctly in most cases (>70%), four had difficulty identifying the letters (e.g., because they found the haptic vibrations too strong or had difficulty placing their fingertips on the device), and two gave both correct and incorrect responses. Four data sets were missing. Although all children had the opportunity to identify each letter on the braille chart, children experienced with braille simply named the letter aloud.
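The >70% criterion amounts to a simple proportion-correct rule. The sketch below assumes each child's coded responses are available as a list of correct/incorrect outcomes (a hypothetical format) and applies only this quantitative criterion; the other two categories (difficulties, and mixed responses) are described qualitatively in the text, so the sketch does not attempt to reproduce them. Both function names are hypothetical.

```python
def proportion_correct(responses: list[bool]) -> float:
    """Share of letters identified correctly (True = correct)."""
    if not responses:
        raise ValueError("no coded responses")
    return sum(responses) / len(responses)


def mostly_correct(responses: list[bool], threshold: float = 0.70) -> bool:
    """Apply the '>70% correct' criterion; exactly 70% does not qualify."""
    return proportion_correct(responses) > threshold


# Hypothetical coded responses for one child (not study data): 8 of 10 correct.
example = [True] * 8 + [False] * 2
print(proportion_correct(example), mostly_correct(example))

# The reported groups (15 + 4 + 2, plus 4 missing) account for all 25 children.
assert 15 + 4 + 2 + 4 == 25
```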

Modality preferences

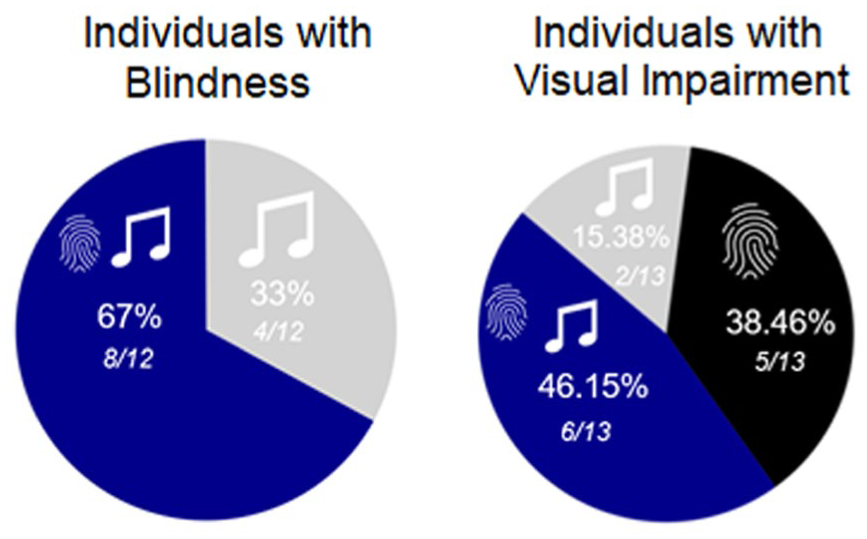

After interacting with the device, each participant was asked to indicate their preferred modality for engaging with the multisensory device. To investigate which modality was chosen most often, a chi-square test was applied to compare the distribution of preferred modalities (auditory-haptic, haptic, and auditory) across all participants. This distribution was also compared between individuals with blindness and those with visual impairment. Results indicated that there was no significant difference in modality preferences, χ²(2, N = 25) = 5.84,

Modality preferences in individuals with blindness and visual impairment. Dark blue = auditory-haptic modality, grey = auditory modality, and black = haptic modality.
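The reported statistic can be reproduced under two assumptions: that the test was a goodness-of-fit test against equal expected frequencies (the text does not state the expected distribution), and that the modality counts are those implied by the percentages in the Discussion, namely 14 auditory-haptic (stated directly), 6 auditory (4 of 12 participants with blindness plus 2 of 13 with visual impairment), and 5 haptic (0 plus 5). With 2 degrees of freedom the chi-square survival function has the closed form exp(-x/2), so no statistics library is needed. The function name `chi_square_uniform` is hypothetical.

```python
import math

# Counts reconstructed from the percentages reported in the Discussion
# (assumption: 12 participants with blindness, 13 with visual impairment).
observed = {"auditory-haptic": 14, "auditory": 6, "haptic": 5}


def chi_square_uniform(counts: list[int]) -> tuple[float, int]:
    """Chi-square goodness of fit against equal expected frequencies."""
    n, k = sum(counts), len(counts)
    expected = n / k
    chi2 = sum((o - expected) ** 2 / expected for o in counts)
    return chi2, k - 1


chi2, df = chi_square_uniform(list(observed.values()))
assert df == 2  # the closed-form p-value below is valid only for df = 2
p = math.exp(-chi2 / 2)  # survival function of chi-square with 2 df
print(f"chi2({df}, N = {sum(observed.values())}) = {chi2:.2f}, p = {p:.3f}")
# prints: chi2(2, N = 25) = 5.84, p = 0.054
```

A p of about .054 is consistent with the reported non-significant result.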

Enjoyment, nervousness and perceived competence (adapted from IMI)

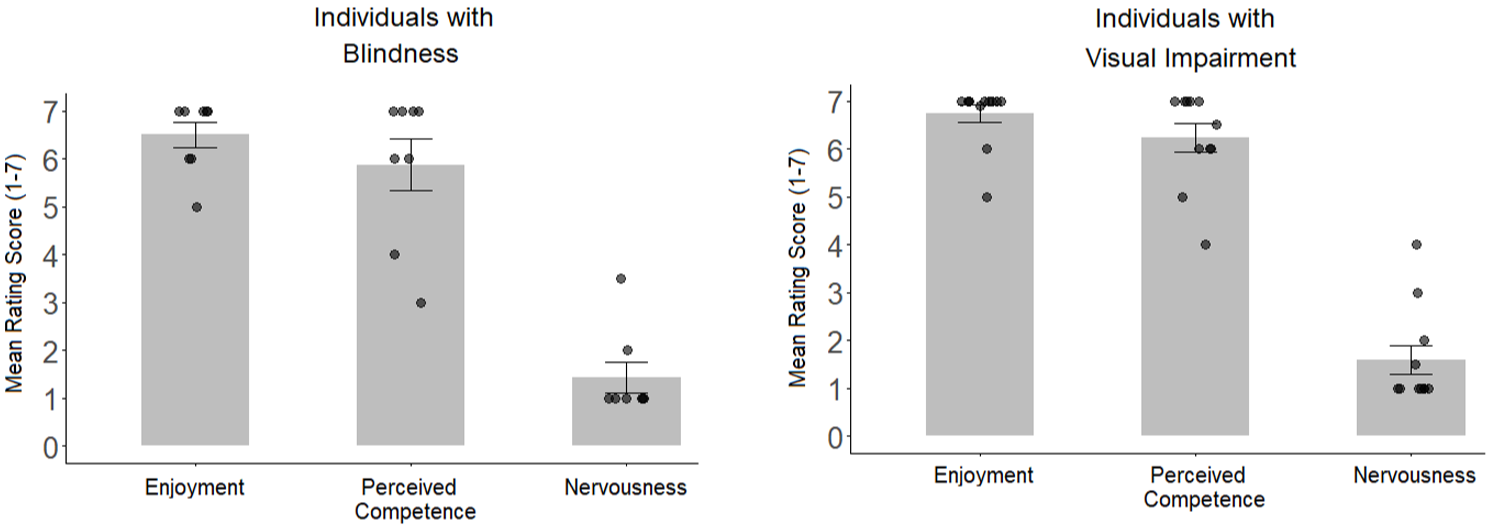

Following device interaction, participants were asked to rate their enjoyment of the task, their nervousness, and their perceived competence on a scale from 1 to 7 (Deci & Ryan, 2000; see Figure 5).

Reported enjoyment, perceived competence, and nervousness in participants with blindness, and visual impairment. Single black dots indicate individual rating scores.

Because the data were not normally distributed, a Kruskal–Wallis test was applied to investigate whether the groups differed significantly in
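For reference, the Kruskal–Wallis H statistic compares groups on the ranks of their scores rather than the raw scores, which suits ordinal 1–7 ratings. The sketch below is a plain-Python implementation with the usual correction for tied ranks (frequent on a 7-point scale) and, for the two-group case (blindness vs visual impairment), a p-value from the chi-square approximation with 1 degree of freedom. The function names and example ratings are hypothetical; they are not the study's data or analysis code.

```python
import math
from collections import Counter


def average_ranks(values: list[float]) -> list[float]:
    """Rank values 1..N; tied values share the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        for pos in range(i, j + 1):
            ranks[order[pos]] = (i + j) / 2 + 1  # mean of ranks i+1..j+1
        i = j + 1
    return ranks


def kruskal_wallis(*groups: list[float]) -> float:
    """Tie-corrected Kruskal-Wallis H statistic."""
    if len(groups) < 2 or any(len(g) == 0 for g in groups):
        raise ValueError("need at least two non-empty groups")
    pooled = [x for g in groups for x in g]
    n = len(pooled)
    ranks = average_ranks(pooled)
    total, start = 0.0, 0
    for g in groups:
        rank_sum = sum(ranks[start:start + len(g)])
        total += rank_sum**2 / len(g)
        start += len(g)
    h = 12.0 / (n * (n + 1)) * total - 3 * (n + 1)
    tie_term = sum(t**3 - t for t in Counter(pooled).values())
    correction = 1 - tie_term / (n**3 - n)
    return h / correction if correction > 0 else 0.0  # all values tied


def two_group_p(h: float) -> float:
    """Chi-square (1 df) approximation: P(X > h) = erfc(sqrt(h / 2))."""
    return math.erfc(math.sqrt(max(h, 0.0) / 2))


# Hypothetical 1-7 enjoyment ratings (not study data).
blind = [7, 6, 7, 5, 7, 6]
vi = [6, 7, 4, 7, 5, 6]
h = kruskal_wallis(blind, vi)
print(f"H = {h:.3f}, p = {two_group_p(h):.3f}")
```

With samples as small as in this study the chi-square approximation is rough, so exact or permutation p-values may be preferred.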

Glasgow sensory sensitivity scale

Next, the participants’ sensory sensitivity scores were analysed, and their pattern was compared between participants with visual impairment and those with blindness (see Figures 6 and 7). Parents (

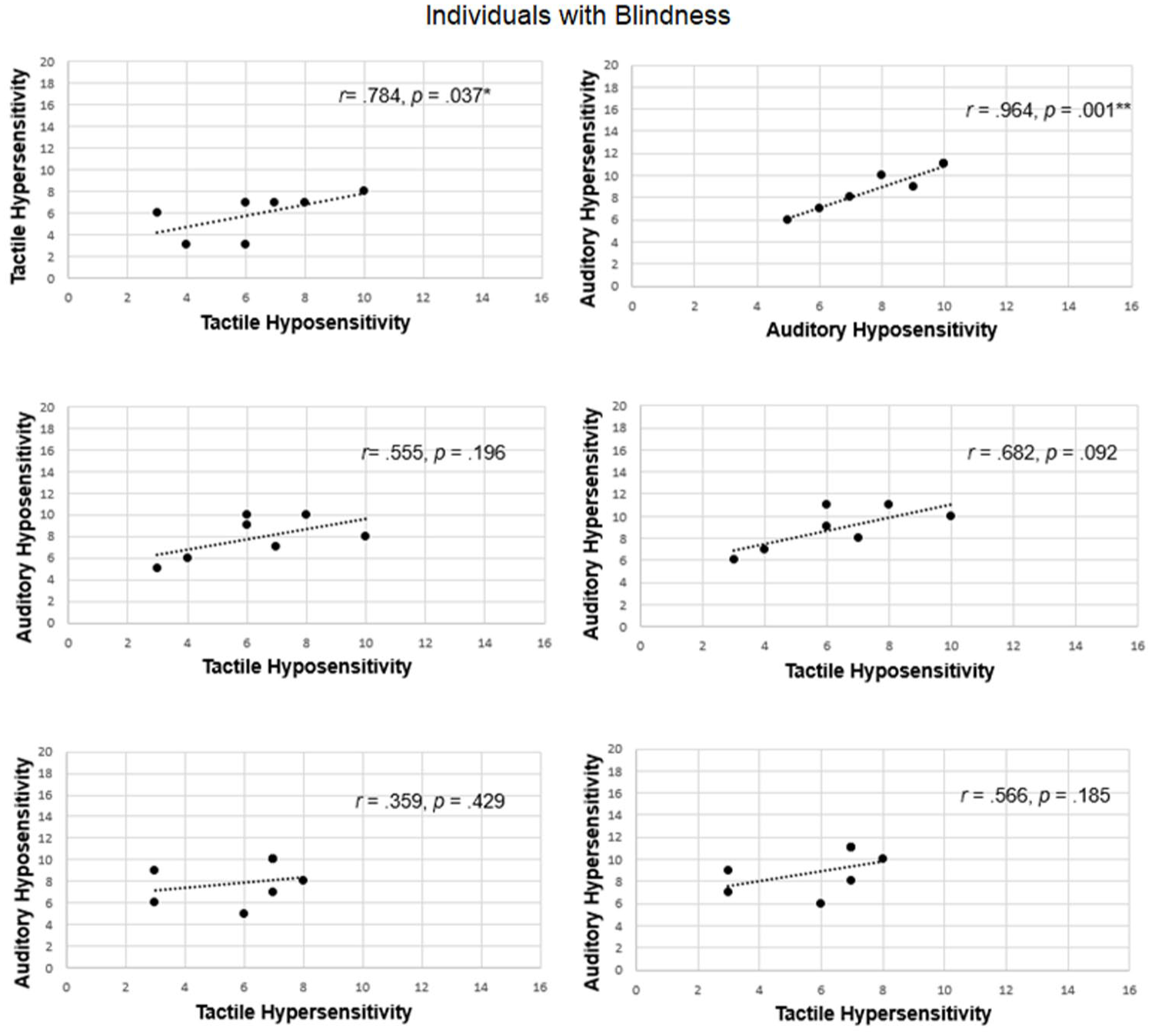

Correlation between auditory and tactile hyposensitivity and hypersensitivity scores in individuals with blindness.

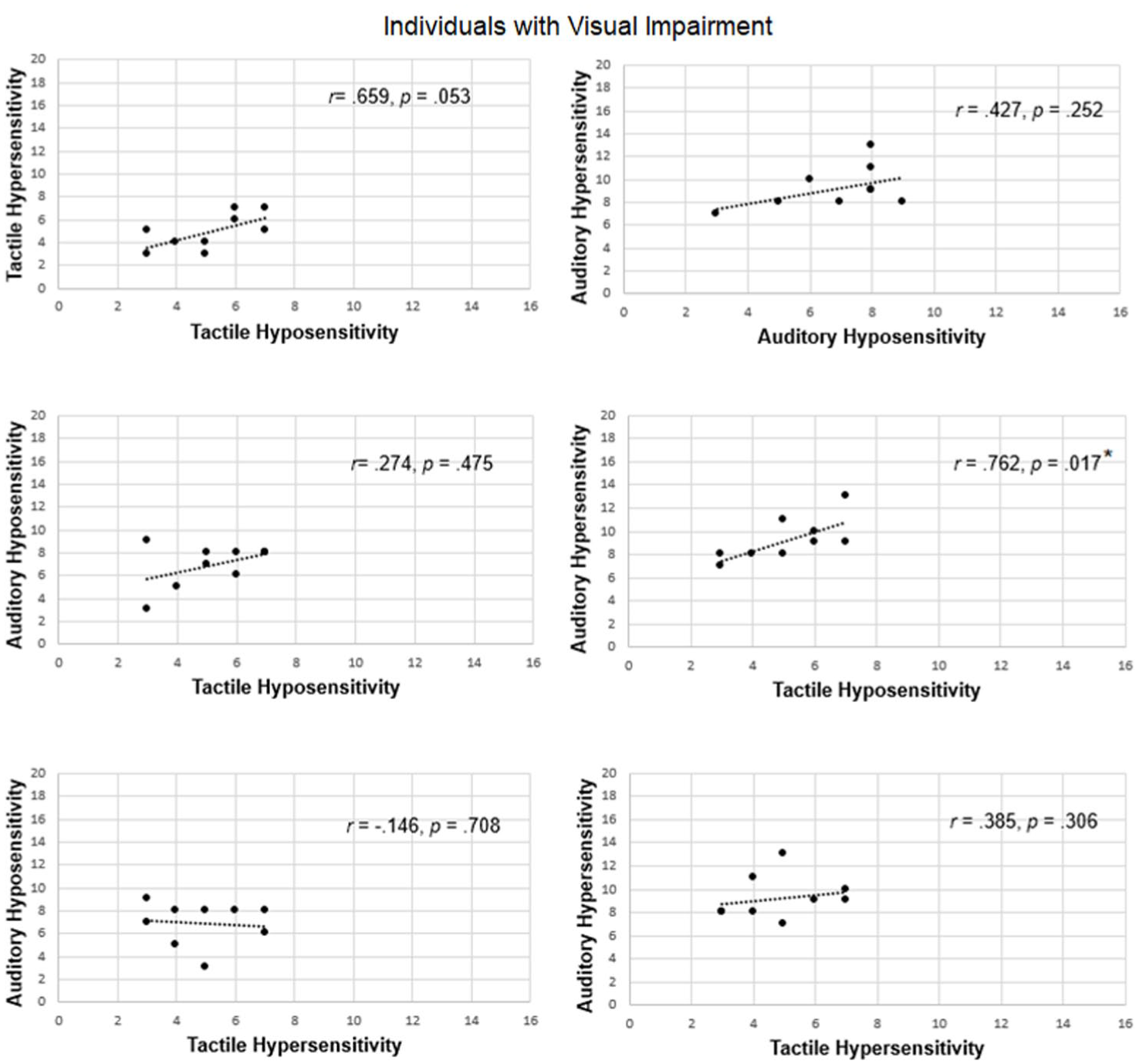

Correlation between auditory and tactile hyposensitivity and hypersensitivity scores in individuals with visual impairment.

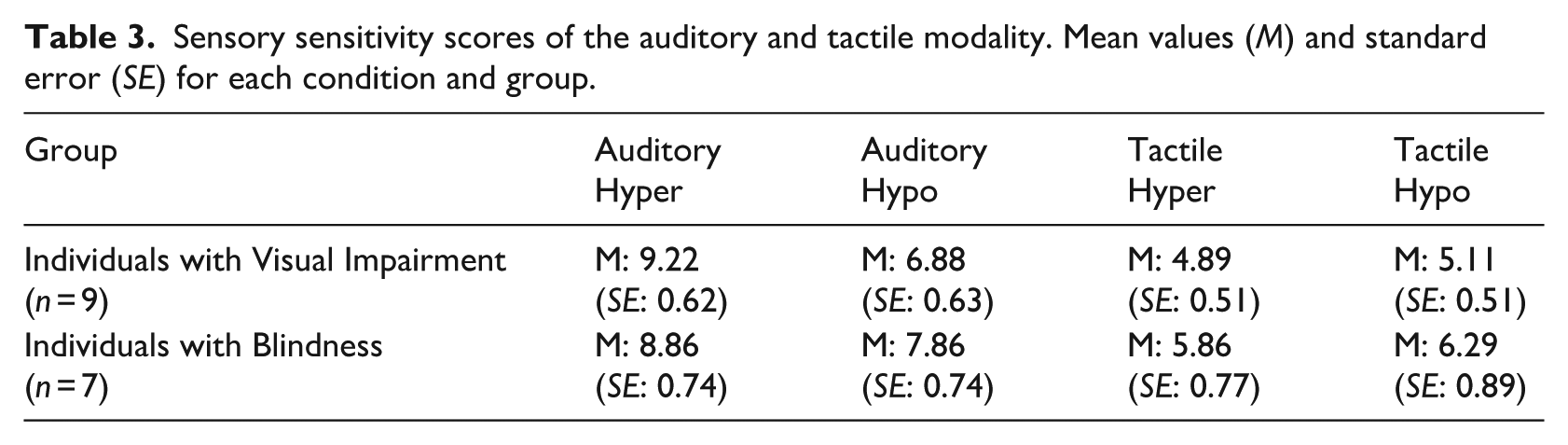

Sensory sensitivity scores of the auditory and tactile modality. Mean values (

To further understand how sensory sensitivity relates within and between modalities, Spearman correlations were used to analyse whether hyper- and hyposensitivity ratings correlated within and across modalities, separately for children with blindness and those with visual impairment (see Figures 6 and 7). For example, children with higher sensory sensitivity in one modality might also show higher sensitivity in another modality. As Figures 6 and 7 show, the pattern differed between individuals with blindness and individuals with visual impairment. In individuals with blindness, higher hypersensitivity scores were correlated with higher hyposensitivity scores, particularly within the auditory and tactile modalities. In contrast, the pattern was more mixed in children with visual impairment, possibly reflecting greater variability in modality preferences within this group; for example, auditory hypersensitivity scores correlated with tactile hyposensitivity scores.
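Spearman's rho is the Pearson correlation computed on ranks, with tied scores given their average rank. The sketch below implements it in plain Python; within-modality and cross-modal analyses differ only in which pair of subscale scores is passed in (e.g., auditory hypersensitivity with auditory hyposensitivity, or auditory hypersensitivity with tactile hyposensitivity), computed separately for each group. The function names and example scores are hypothetical and are not the study's data.

```python
import math


def average_ranks(values: list[float]) -> list[float]:
    """Rank values 1..N; tied values share the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        for pos in range(i, j + 1):
            ranks[order[pos]] = (i + j) / 2 + 1  # mean of ranks i+1..j+1
        i = j + 1
    return ranks


def spearman_rho(x: list[float], y: list[float]) -> float:
    """Spearman rank correlation (Pearson r on average ranks)."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two equally long samples with n >= 2")
    rx, ry = average_ranks(x), average_ranks(y)
    mx, my = sum(rx) / len(rx), sum(ry) / len(ry)
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    sx = math.sqrt(sum((a - mx) ** 2 for a in rx))
    sy = math.sqrt(sum((b - my) ** 2 for b in ry))
    if sx == 0 or sy == 0:
        return float("nan")  # undefined when one variable is constant
    return cov / (sx * sy)


# Hypothetical subscale sums for five children in one group (not study data).
auditory_hyper = [12, 18, 9, 15, 20]
auditory_hypo = [10, 16, 8, 11, 19]
tactile_hypo = [14, 9, 12, 7, 10]
print("within-modality:", round(spearman_rho(auditory_hyper, auditory_hypo), 2))
print("cross-modal:", round(spearman_rho(auditory_hyper, tactile_hypo), 2))
```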

Discussion

The primary aim of this research project was to investigate participants’ enjoyment and the usability of a newly created multisensory device in participatory design sessions.

Even though modality preferences did not differ significantly, 14 of 25 participants selected the auditory-haptic modality, a trend that might reach significance with a larger sample. This initial finding supports our aim to enrich braille learning with combined auditory-haptic presentation in future research. According to the intersensory redundancy hypothesis, temporally synchronous presentation of information across different modalities can facilitate stimulus detection and attention to stimulus properties (Bahrick & Lickliter, 2000; Bahrick et al., 2004). Previous research has also shown that multisensory cues can improve learning relative to unisensory cues in sighted children (Broadbent et al., 2017; Broadbent et al., 2020). Multisensory-enriched instruction has been shown to improve literacy gains (Scheffel et al., 2008; Warnick & Caldarella, 2016) and to support language and mathematics learning through gesture-based approaches in sighted individuals (Andrä et al., 2020; Mathias et al., 2022; Morett & Chang, 2015; Zhen et al., 2019).

Incorporating auditory-haptic information into learning devices also fits with efforts to enrich complex mathematical graphs with auditory-tactile features. Users are usually ‘haptically overloaded’, as these graphs often contain complex patterns that require detailed haptic exploration, and complex sequences of graphs need to be memorised (see Ramôa et al., 2023). One alternative solution is the use of Tactom readers, which are 2D graphics readers and braille pin-matrix displays (see Ramôa et al., 2023). More recently, researchers have added acoustic feedback alongside spoken explanations and showed that users were able to find more details with the new auditory features (Ramôa et al., 2023). Thus, auditory-haptic information might be useful not only in braille learning but also in other applications, such as identifying tactile graphics and maps.

The preferred modality pattern differed between children with blindness and those with visual impairment: individuals with blindness preferred the auditory-haptic (67%) and auditory (33%) modalities, whereas children with visual impairment indicated preferences for the auditory-haptic (46.15%), auditory (15.38%), and haptic (38.46%) modalities. Although a larger sample is required to confirm these findings, one factor that might explain these group differences is the presence of autism diagnoses among some children with visual impairment. Children with autism show different sensory patterns from children without autism, and research has indicated ‘diminished’ multisensory integration abilities (Stevenson et al., 2014). Even though CVI was not diagnosed in the current sample, previous research indicates that individuals with CVI might have difficulties integrating different sensory modalities and may have specific sensitivity profiles (Lueck et al., 2021).

To further understand sensory sensitivity patterns in children with visual impairment and blindness, we also asked parents to rate their children’s sensory sensitivity. Although sensory sensitivity ratings did not differ between groups, in children with visual impairment the ratings correlated both within the tactile and auditory modalities and across modalities, whereas in individuals with blindness the ratings correlated only within the same modality. This finding is in line with previous results obtained with the Glasgow Sensory Questionnaire in children who scored either high or low on the Autism-Spectrum Quotient (AQ; Sapey-Triomphe et al., 2018): that study showed positive correlations between auditory hyper- and hyposensitivity scores in individuals with low AQ scores, but these correlations were absent in the tactile domain. On the other hand, individuals with high AQ scores showed correlations between hyper- and hyposensitivity scores within both the auditory and the tactile modalities. The present findings indicate that such correlations also occur across modalities in children with visual impairment.

Limitations and outlook

While this study included various exploratory approaches to understand interaction with the device, future research with a larger sample (including braille beginners) is required to investigate whether the device is effective for braille learning. A larger sample would also allow a more detailed investigation of how different subgroups interact with the device, for example, children with visual impairment with and without co-occurring diagnoses. Future studies should also include cognitive measures, such as IQ, to better understand the cognitive requirements of interacting with the device, as well as precise measures of visual acuity and visual function.

In addition, we aim to investigate whether multisensory braille letter presentation might facilitate braille learning compared with haptic-only braille learning.

Conclusion

To conclude, participants enjoyed interacting with the newly created multisensory device, which highlights its potential as an engaging tool for braille learning. Differences in sensory sensitivity profiles might be linked to modality preferences, underlining the importance of customising braille presentation to individual needs and sensory preferences. Future research should further investigate the connection between sensory sensitivity profiles and modality preferences for braille letter presentation in individuals with visual impairment and blindness.

Supplemental Material

sj-docx-1-jvi-10.1177_02646196251369664 – Supplemental material for SENSE-braille: Children’s multisensory experiences with auditory-haptic activities

Supplemental material, sj-docx-1-jvi-10.1177_02646196251369664 for SENSE-braille: Children’s multisensory experiences with auditory-haptic activities by Julia Föcker, Polly Atkins, Jonathan Waddington, Kieran Hicks, Emma Hawes, Mollie Baker, Caitlin Williams, Timothy Hodgson, Deepak Jowel, Andrew Irvine, John Patterson, Craig Green and Patrick Dickinson in British Journal of Visual Impairment

Footnotes

Acknowledgements

We would like to thank the following schools and charities: Rainford High School, Merton Bank Primary, Thatto Heath Community Primary, St. Helen’s; St Vincent’s, Liverpool, InFocus, Exeter; New College Worcester; and Inclusive Education Service SEND and Vulnerable Pupils Education Division, Nottingham City Council. We would like to thank Jacqueline Bennison, Susan Potter, and Cheryl Gray for supporting the organisation of this study. We would also like to thank the Braillists Foundation and the RNIB for distributing our study advertisement.

Author contributions

JF: Writing original draft, review and editing, Conceptualisation, Investigation, Software, Methodology, Supervision, Formal Analysis, Project administration, Funding acquisition, Visualisation

PA: Writing original draft, review and editing, Conceptualisation, Investigation, Software, Data curation, Methodology, Project Administration, Visualisation

JW: Writing original draft, review and editing, Investigation, Methodology, Formal Analysis

KH: Writing original draft, review and editing, Methodology, Software

EH: Writing original draft, review and editing, Project administration, Formal Analysis, Data curation

MB: Writing original draft, review and editing, Project administration, Data curation, Formal Analysis

CW: Writing original draft, review and editing, Project administration, Data curation, Formal Analysis

TH: Writing original draft, review and editing, Methodology, Formal Analysis

DJ: Writing original draft, review and editing, Methodology, Software

AI: Writing original draft, review and editing, Methodology, Software

JP: Writing original draft, review and editing, Project administration

CG: Writing original draft, review and editing, Methodology, Software

PD: Writing original draft, review and editing, Methodology, Software

Declaration of conflicting interest

All authors declare no conflict of interest.

Funding

This work was funded by the Impact Accelerator fund (ref: 0006680-1052), the Commercial Impact Accelerator Fund (ref: 0007731-1052) and the Discovery Commercialisation fund (ref: 0008513-1052) at the University of Lincoln.

Ethical approval and informed consent statements

Each child who took part gave assent before the experiment and had written informed consent provided by a parent or caregiver. Each adult agreed to participate in the study by signing the consent form. The study was approved by the University of Lincoln’s Ethics Committee (ref: PSY12410).

All participants provided consent for publication of the data.

The hand shown in the hand-placement figure (Figure 2) belongs to one of the authors.

Data availability statement

Supplemental material

Supplemental material for this article is available online.

Notes

References
