Abstract

Scientific knowledge is intrinsically uncertain; hence, it can only provide a tentative orientation for political decisions. One illustrative example is the discussion about introducing mandatory mask-wearing to contain the coronavirus. In this context, this study investigates how communicating uncertainty about the effectiveness of mandatory mask-wearing affects the perceived trustworthiness of communicators. Participants (N = 398) read a fictitious but evidence-based text supporting mandatory mask-wearing. First, epistemic uncertainty was communicated by including a high (vs. low) number of lexical hedges (LHs; e.g., “maybe”) in the text. Second, we varied whether the source of information was a scientist or a politician. Participants then rated the source's trustworthiness. Results show that the scientist was perceived as more competent and as having more integrity, but not as more benevolent, than the politician. The use of LHs did not affect trustworthiness ratings.

At its very core, scientific knowledge is tentative and provisional, as scientific claims can only be warranted by the best currently available knowledge (Friedman et al., 1999). In the traditional view, scientific claims can be refuted by future evidence or theories at any time, so that scientific knowledge has been described as “tentative forever” (Popper, 1961, p. 280). However, adopting this epistemological stance does not necessarily make explicit that epistemic uncertainty, which in its simplest form is a lack of knowledge or a disagreement over knowledge (Friedman et al., 1999), technically occurs at every stage of the empirical research process (Walker, 1991). Epistemic uncertainty derives from a wide range of sources, such as a mere lack of knowledge, the flawed use of underlying research assumptions, measurement inadequacies, or emerging expert disagreement about observed results (van der Bles et al., 2019). Therefore, in trying to understand fundamental processes, scientific research not only reduces uncertainty but inevitably also produces it (Zehr, 1999): While science works to answer open research questions, it also reveals unresolved matters that motivate further research. Thus, uncertainty can be seen as the driving force of science (Peters & Dunwoody, 2016). Extending this viewpoint, scientific knowledge can only meet the requirements for aiding personal and political decision-making if uncertainties are thoroughly discussed within the inner-scientific discourse, with the aim of providing the most reliable knowledge possible (Douglas, 2009).

Public discussions that have evolved around the coronavirus pandemic serve as a “fertile ground for perceptions of uncertainty” (Dunwoody, 2020, p. 471). For example, in spring 2020, different stakeholders such as scientists and politicians publicly discussed introducing mandatory mask-wearing to contain the COVID-19 pandemic. To the broader public, it became apparent that while scientific findings were directly integrated into political decision-making processes, the available evidence on the effectiveness of mandatory mask-wearing was clearly characterized by epistemic uncertainty. That is, not only was relevant evidence on the effectiveness of mandatory mask-wearing lacking, but experts also disagreed publicly on the issue. At the same time, this state of emergency created a strong need for reliable decision-making; hence, citizens were presumably highly interested in the underlying uncertainties, not least because they were immediately affected by such policies. However, lacking expert knowledge, citizens were unable to make first-hand evaluations (“What is true?”). Instead, they had to rely on second-hand evaluations (“Whom to trust?”); namely, they had to assess a source's trustworthiness (Bromme & Goldman, 2014).

This context thus raises the question of how the public communication of epistemic uncertainty affects the perceived trustworthiness of sources communicating science-related information. The present study was conducted in May 2020, two weeks after mandatory mask-wearing had been enforced politically in Germany. First, we investigated how the use of lexical hedges (LHs) regarding the effectiveness of mandatory mask-wearing affects the perceived trustworthiness of scientists and politicians. Second, we assessed whether scientists are perceived as a more trustworthy source of science-related information than politicians. Third, we examined whether the communication of uncertainty affects the trustworthiness ratings of scientists and politicians differently. Moreover, we tested how topic-specific prior attitudes and topic-specific epistemic certainty beliefs regarding mandatory mask-wearing are related to participants’ trustworthiness ratings.

Hedges as Markers of Epistemic Uncertainty

Making a scientific claim inherently involves uncertainty. The most fundamental source of scientific uncertainty is the inductive risk of falsely rejecting or falsely accepting hypotheses (Douglas, 2000). In addition, people also face internal epistemic uncertainty (Peters & Dunwoody, 2016): Because scientific knowledge is not only inherently uncertain but also highly complex and specialized, laypeople cannot feasibly strive for a full understanding of scientific issues themselves; they can only possess a bounded understanding of science (Bromme & Goldman, 2014). Lacking the ability to fully understand or assess scientific knowledge themselves, laypeople must instead depend on experts’ knowledge. Such vulnerability is a core characteristic of trust (Mayer et al., 1995); trust entails a “leap of faith” (Engdahl & Lidskog, 2014, p. 708). However, trust is not blind: People are epistemically vigilant against being misinformed (Sperber et al., 2010), for example, by heuristically and/or systematically assessing the trustworthiness of an information provider. Recipients take three dimensions into account when evaluating the trustworthiness of a source (Hendriks et al., 2015): They assess whether a source is knowledgeable and pertinent (expertise) and whether a source of information (SI) has “the right attitude towards what they are doing” (Wilholt, 2013, p. 248); that is, they determine whether a source is honest and transparent (integrity) and whether it shows goodwill toward others (benevolence).

Some have argued that publicly revealing uncertainties harms such trustworthiness judgments (in fact, it increases the vulnerability of not obtaining the “truth”) because science, as a culturally acknowledged epistemic authority, is expected to provide definitive answers (Bromme & Goldman, 2014; Douglas, 2009). Furthermore, uncertainties can be politicized within public debates in order to cast doubt on political issues (Bolsen & Druckman, 2015; Oreskes & Conway, 2011). Consequently, scientists fear that communicating scientific uncertainty might be misinterpreted by the public and exploited by interest groups (Post, 2016), or might even intensify public criticism of their field of research (Post & Maier, 2016).

Conversely, some have argued that disclosing uncertainty in public debates might promote trust in science and scientists as long as it is not overemphasized (Druckman, 2015; Zehr, 2017). Although recipients may generally expect science to provide clear answers to problems, many acknowledge that scientific uncertainty exists and is inevitable (Maxim & Mansier, 2014). Hendriks et al. (2016a, 2016b) argued that when scientists communicate uncertainties or other qualifiers of scientific results, this may signal honest and good intentions. In fact, readers’ ascriptions of integrity and benevolence were higher when a scientist himself disclosed overstatements (Hendriks et al., 2016a) or ethical concerns (Hendriks et al., 2016b) in a blog entry on his research than when these were raised by an unaffiliated expert. Such a positive effect of self-disclosing uncertainty might be even more relevant when scientific results are communicated with a direct link to political questions: Scientific limitations that are not disclosed immediately might nonetheless become apparent to the public later, which at that point might lead to a decrease in trust (Stocking, 2010).

However, reviews of the effects of communicating uncertainty point out that several parameters need to be taken into account when investigating how it affects trustworthiness perceptions (van der Bles et al., 2019). Besides considering different styles of uncertainty (Gustafson & Rice, 2020), one must also attend to individual differences, communication formats (van der Bles et al., 2020), the scientific issue at hand (Gustafson & Rice, 2019; Jensen & Hurley, 2012), and the respective research field (Broomell & Kane, 2017).

One common way to communicate uncertainty is so-called hedging (Crismore & Farnsworth, 1990). Hedging involves either LHs, including single words such as adverbs (e.g., generally, probably), verbs (e.g., imply, suggest), and modal verbs (e.g., may, should), or discourse-based hedges, which are clauses that express tentativeness, limitation, and possibility (Horn, 2001; Hyland, 1996a). Some studies have investigated how hedges affect people's perception and processing of arguments. For example, arguments containing LHs that expressed tentativeness were processed in more depth and rated as more complicated than arguments without hedges (Mayweg-Paus & Jucks, 2015; Thiebach et al., 2015).

As the expression of uncertainty is an essential norm within the inner-scientific discourse and, consequently, characterizes “what it means to speak as a scientist” (Zehr, 2017, p. 5), hedges can be viewed as a textual feature of scientificness. First, the use of hedges does not simply enable sources to communicate uncertainty; it also allows them to express claims accurately while speculating about further possibilities and limiting potential damage to their credibility from unproven overstatements or absolute categorical commitments. Second, hedges open up room for discussion by offering readers the opportunity to refute and negotiate the claims made. This is essential, as the construction of scientific knowledge involves a collective negotiation of consensus, that is, the collaborative ratification of knowledge (Hyland, 1996a, 1996b).

Jensen (2008) further argues that hedging, understood as uncertain language, acts as a signifier of objectivity and thereby increases scientists’ credibility. By contrast, certain and assertive language has often been found to be more effective than uncertain and tentative language, leading to higher perceived credibility of communicators (Blankenship & Holtgraves, 2005; Burrell & Koper, 1998; for a review, see Hosman, 2002). However, not all of these studies were conducted in the context of scientific information (e.g., Hosman & Siltanen, 2011).

Jensen (2008) investigated, in a science communication context, how including discourse-based hedges in news coverage of cancer research (vs. not including hedges), and attributing them to the responsible researcher or to an unaffiliated researcher, affects source credibility (which in that study comprised the two dimensions of expertise and trustworthiness). When hedges were included, the primary scientist and the reporting journalist were perceived as more trustworthy than when no hedges were included; however, this was not the case when the hedging was attributed to an unaffiliated researcher. Importantly, neither the scientist's nor the journalist's expertise ratings were affected by the inclusion of hedging. Ratcliff et al. (2018) were able to replicate these results only for the ratings of the journalist. A more recent study by Butterfuss et al. (2020) found no effect of hedged (vs. nonhedged) language on trust in information sources (including scientific information sources as well as liberal and conservative media sources). However, the authors concluded that their manipulation of lexical hedging might have been too subtle.

Considering the above-mentioned argumentation, we investigated how the use of LHs (high vs. low) affects the three dimensions of epistemic trustworthiness (expertise, integrity, benevolence; Hendriks et al., 2015) and formulated the following hypothesis regarding integrity and benevolence ratings:

However, considering that uncertainty is a feature of scientific information, it remains an open research question with regard to expertise whether an SI is also perceived as more competent when acknowledging scientific uncertainty through high (vs. low) use of LHs.

Scientists and Politicians as Sources of Information

Within public debates, such as those during the coronavirus pandemic, both scientists and politicians regularly refer to scientific information. Hence, both can be viewed as publicly relevant communicators. However, in the context of policy discussions, politicians and scientists might not be trusted equally as sources of science-related information (Fiske & Dupree, 2014). Nationally representative surveys also indicate that scientists are perceived as more trustworthy SIs than politicians (National Science Board, 2018; Wissenschaft im Dialog, 2020). For instance, the German Wissenschaftsbarometer (Wissenschaft im Dialog, 2020) found that in November 2020, 73% of respondents fully or mostly trusted statements by scientists concerning the coronavirus, whereas only 32% fully or mostly trusted statements by politicians concerning the coronavirus.

There are several possible explanations for this discrepancy. Presumably, scientists are seen as more competent owing to technical knowledge that politicians might lack. Furthermore, the intentions ascribed to an SI also affect trustworthiness evaluations (König & Jucks, 2019): Whereas scientists are generally perceived to have benevolent intentions (National Science Board, 2018), politicians are usually associated with partisan representation (Bøggild, 2020). In line with these theoretical considerations and empirical results, we expected the following:

On another note, it remains an open question whether the communication of uncertainty affects politicians and scientists differently, as different communicative motives are ascribed to them (Rabinovich et al., 2012). Specifically, scientists are presumably perceived as motivated to inform audiences impartially, which entails communicating uncertainties. The communicative motive ascribed to politicians, however, might be to persuade audiences of their decisions, which excludes communicating uncertainties. On the one hand, it can be argued that recipients place more trust in an SI who is willing to give unbiased information (Eagly et al., 1978). For example, when politicians, who are expected to communicate persuasively, use uncertain language (a behavior inconsistent with their assumed communicative intentions), they might be perceived as less biased and more trustworthy than when they confirm persuasive motives.

On the other hand, Rabinovich et al. (2012) found that while scientists were generally perceived as more trustworthy when intending to inform their audience, congruence between communicative intentions (informative vs. persuasive) and message style (informative vs. persuasive) resulted in higher trust in climate scientists and higher willingness to engage with climate change issues. In line with this evidence, scientists (with presumed informative communicative intentions) might be perceived as more trustworthy when they communicate uncertainty (indicating an informative message style) than when they do not. Conversely, politicians might be perceived as more trustworthy when they do not communicate uncertainty (indicating a persuasive message style), as this is consistent with their presumed persuasive communicative motive, than when they do.

As an interaction effect could plausibly go in either direction and there is little evidence supporting either, we formulated an exploratory research question.

However, these theoretical assumptions rest on the premise that recipients actually ascribe the communicative motives stated above to scientists and politicians. Thus, we measured which communicative motives participants ascribed to scientists and politicians, respectively, by asking them to rate the extent to which they perceived the text's goal as persuasive or informative, and the text as shaped by the communicator's evaluation of scientific evidence or by his political attitudes (Kotcher et al., 2017). We also explored how these ascriptions were affected by the communication of uncertainty.

Effects of Prior Attitudes and Epistemic Beliefs on Trustworthiness Assessments

Individuals tend to process information in a biased manner so that it aligns with their personal values, worldviews, and attitudes toward specific topics (Kienhues et al., 2020; Sinatra et al., 2014); this phenomenon is called motivated reasoning (Kunda, 1990). For example, individuals tend to value messages that confirm their personal attitudes and devalue messages that contradict their beliefs. This is generally described as the self-confirmation heuristic (Metzger & Flanagin, 2015). Following from this mechanism, preexisting beliefs also affect source evaluations: Individuals rate news sources that are consistent with their prior attitudes, as well as neutral news sources, as more credible than sources that contradict their attitudes (Metzger & Flanagin, 2015; Metzger et al., 2020). Furthermore, prior attitudes may affect ratings of source credibility (Landrum et al., 2017).

Moreover, individuals’ views on the nature of knowledge and on processes of knowing (epistemic beliefs) may affect their perception of scientific claims (cf. Hendriks & Kienhues, 2019). Given science's inherent uncertainty, epistemic beliefs concerning the certainty of knowledge in particular (i.e., individuals’ expectations regarding the occurrence of uncertainty) may affect how recipients assess uncertainty within scientific claims (Sinatra et al., 2014). For instance, Rabinovich and Morton (2012) found that messages including uncertainties were more persuasive for participants who viewed science generally as a debate (i.e., scientific knowledge is generated by negotiating several possible answers) than for participants who saw science as a search for absolute truth.

Furthermore, recipients’ epistemic certainty beliefs influence how they process scientific information (Bråten & Strømsø, 2009; Kimmerle et al., 2015; Strømsø et al., 2008). Epistemic beliefs, and more precisely epistemic certainty beliefs, may vary between domains (Bråten & Strømsø, 2009; Muis et al., 2006; Stahl & Bromme, 2007) and even between topics (Kienhues et al., 2011). In particular, topic-specific epistemic beliefs are likely to be activated when individuals possess relatively high content knowledge and are aware of the epistemology of the topic at hand, for example, when they are familiar with the uncertainties surrounding it (Sinatra et al., 2014).

This was the case for the topic of mandatory mask-wearing: For several weeks, it had not only been publicly discussed whether mask-wearing is an effective policy to contain the coronavirus, but it had also been made explicit that experts were unsure about their recommendations, for example, due to a lack of evidence. Hence, it is plausible that participants had formed relatively stable attitudes and beliefs about the certainty of scientific knowledge on the topic. Going beyond our preregistration, we included both prior attitudes and epistemic certainty beliefs as covariates and formulated the following research questions:

The Present Study

The study presented in this paper was preregistered (osf.io/h6v85). A complete overview of the materials, items, data, and statistical analysis scripts is available on the Open Science Framework (osf.io/9axd3). Further information on data acquisition, statistical procedures, and results can be found in the Supplementary Materials online.

Methods

Data Acquisition and Sample Description

Data were collected from 11 to 13 May 2020, shortly after mandatory mask-wearing had been introduced throughout Germany on 27 April 2020. Participants were recruited via an online recruiting service (testingtime.com). Residents of Germany aged 18 or older were eligible to take part in the German-language online survey. Each participant received 5 euros for participating. A total of 444 participants took part in the survey. After applying the exclusion criteria, the final sample comprised 398 participants (55% female, 44% male, one diverse, one did not disclose their gender). Participants were on average 42.40 years old (SD = 14.82; range 18–78). Regarding formal education, 40% held a university degree, 30% had a higher education entrance qualification, and 30% held a secondary school qualification or had completed vocational training. Only one participant did not hold an educational degree. Most participants were native German speakers (89%). Based on the answers to the open-ended question, we were confident that all participants were proficient German speakers. Ten percent of participants worked in a medical profession and 14% had a family member in a medical profession, whereas most (76%) had no proximity to a medical profession.

Design and Materials

We used a 2 (SI: scientist vs. politician) × 2 (use of LHs: high vs. low) between-subjects experimental design, resulting in four experimental conditions. Group sizes were roughly equal, ranging from 95 to 104 participants per condition. Each participant was asked to read a short informational text arguing for the introduction of mandatory mask-wearing. To allow for a tightly controlled randomized experiment, the informational text was written by the experimenters. However, its arguments were based on a statement by the Robert-Koch-Institute (the German government's central scientific institution in the field of biomedicine; Robert-Koch-Institut, 2020), which reviewed various studies on the effectiveness of mandatory mask-wearing. We manipulated whether the text was authored by a scientist or by a politician explaining why he was in favor of mandatory mask-wearing (SI: scientist vs. politician). Across conditions, we also varied whether the use of LHs expressing epistemic uncertainty (e.g., “probably”, “possibly”; Hyland, 1996a) was high or low (use of LHs: high vs. low). In the high-hedged conditions, the text contained a total of 27 LHs: 13 adverbs (probably, rather, possibly, perhaps, presumably, maybe), 10 modal verbs (might, could), and four verbs (suggest, seem to, think). This corresponds to an average of 1.35 hedges per sentence, with 85% of all sentences containing at least one LH. In the low-hedged conditions, the text contained only five LHs in total: four instances of the modal verb “can” (instead of “could” in the high-hedged conditions) and one instance of the verb “think” (identical to the high-hedged conditions). This corresponds to 0.25 hedges per sentence, with 20% of all sentences containing at least one LH. Materials can be found in Appendix A.
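The hedge densities above are simple ratios. The sketch below shows one way such figures could be computed for an English text; the hedge lexicon (taken from the examples named above), the naive sentence splitter, and the example sentences are illustrative assumptions, not the study's coding procedure, and the original materials were in German.

```python
import re

# Hypothetical English hedge lexicon assembled from the examples named in the
# Methods; the study's German materials and coding rules are not reproduced here.
HEDGES = {
    "probably", "rather", "possibly", "perhaps", "presumably", "maybe",  # adverbs
    "might", "could",                                                     # modal verbs
    "suggest", "suggests", "seem", "seems", "think",                      # verbs
}


def hedge_density(text: str) -> tuple[int, float, float]:
    """Return (total hedges, hedges per sentence, share of sentences with >= 1 hedge)."""
    # Naive sentence split: break after '.', '!' or '?' followed by whitespace.
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    if not sentences:
        return 0, 0.0, 0.0
    # Count lexicon words in each sentence (case-insensitive, letters only).
    counts = [sum(w in HEDGES for w in re.findall(r"[a-z]+", s.lower()))
              for s in sentences]
    total = sum(counts)
    n = len(sentences)
    return total, total / n, sum(c > 0 for c in counts) / n


example = "Masks might reduce transmission. They probably protect others. Wearing them is required."
print(hedge_density(example))  # (2, 0.6666666666666666, 0.6666666666666666)
```

Applied to the reported totals, the stated ratios are consistent with a text of 20 sentences (27 / 20 = 1.35 and 17 / 20 = 0.85 in the high-hedged version; 5 / 20 = 0.25 and 4 / 20 = 0.20 in the low-hedged version). The sentence count is our inference from these ratios and is not stated in the text.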

Procedure

The online survey was created with the software EFS Unipark. After receiving information on the study's purpose and procedure, participants gave initial ratings of their prior attitudes and their epistemic certainty beliefs about the topic. Next, participants read the short informational text on mandatory mask-wearing. Subsequently, they rated the dependent and descriptive variables and provided demographic information. Measures were administered in the order in which they are described below. At the end, we also measured perceived responsibilities of scientists and of politicians as well as participants’ topic-specific intellectual humility (Hoyle et al., 2016) regarding mandatory mask-wearing; these two variables are not reported further in this paper. At the very end of the survey, participants had the option to comment on the study. They were then debriefed and asked to consent to the use of their data. In total, the experiment took about ten minutes to complete.

Measures

Topic-Specific Prior Attitudes

Participants’ topic-specific prior attitudes regarding the effectiveness of mandatory mask-wearing were measured with three items (Cronbach's α = 0.92; scales from 1 = do not agree at all to 7 = agree very much) and one open question.
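The internal-consistency values reported in this section are Cronbach's α, defined as α = k / (k − 1) · (1 − Σ s²ᵢ / s²ₓ), where k is the number of items, s²ᵢ is the variance of item i, and s²ₓ is the variance of the summed scale score. A minimal sketch, assuming complete data in a participants × items layout; the toy ratings are invented, and this is not the authors' analysis script.

```python
from statistics import variance


def cronbach_alpha(rows: list[list[float]]) -> float:
    """Cronbach's alpha for a participants x items matrix without missing values."""
    k = len(rows[0])  # number of items
    if k < 2:
        raise ValueError("alpha requires at least two items")
    items = list(zip(*rows))  # transpose: one tuple of responses per item
    item_var_sum = sum(variance(col) for col in items)
    total_var = variance([sum(r) for r in rows])  # variance of the sum scores
    return k / (k - 1) * (1 - item_var_sum / total_var)


# Invented example: three participants answering two items.
print(cronbach_alpha([[1, 2], [2, 1], [3, 3]]))
```

Sample variances are used for both the items and the sum score; because α depends only on their ratio, using population variances consistently would give the same result.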

Topic-Specific Epistemic Certainty Beliefs

To measure participants’ topic-specific epistemic certainty beliefs about mandatory mask-wearing, we used the Topic-Specific Epistemic Belief Questionnaire (Bråten & Strømsø, 2009). Specifically, we included six items of the certainty beliefs scale, translated into German (Cronbach's α = 0.84; scales from 1 = do not agree at all to 7 = agree very much; e.g., “What is considered to be certain knowledge about the effectiveness of mandatory mask-wearing, may be considered to be false tomorrow”). High scores indicate that participants view knowledge about the effectiveness of mandatory mask-wearing as rather tentative, whereas low scores indicate that they view it as rather certain.

Epistemic Trustworthiness

To measure the perceived epistemic trustworthiness of the SI, we used the Muenster Epistemic Trustworthiness Inventory (METI; Hendriks et al., 2015). The METI consists of 14 adjective pairs rated on 7-point semantic differentials and comprises three subscales: expertise (6 items; Cronbach's α = 0.95; e.g., competent–incompetent), integrity (4 items; Cronbach's α = 0.92; e.g., fair–unfair), and benevolence (4 items; Cronbach's α = 0.90; e.g., responsible–irresponsible). We computed the arithmetic mean of each subscale and treated each of the three dimensions as a separate dependent variable.
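Scoring the METI as described above amounts to averaging the items of each subscale. A minimal sketch, assuming responses are already coded so that higher values indicate greater trustworthiness. The item keys (exp1–exp6, int1–int4, ben1–ben4) are hypothetical placeholders, not the inventory's actual item labels or order.

```python
from statistics import mean

# Hypothetical item keys; the real METI item wording, order, and any recoding
# rules are documented by Hendriks et al. (2015) and are NOT reproduced here.
METI_SUBSCALES = {
    "expertise": [f"exp{i}" for i in range(1, 7)],    # 6 items
    "integrity": [f"int{i}" for i in range(1, 5)],    # 4 items
    "benevolence": [f"ben{i}" for i in range(1, 5)],  # 4 items
}


def score_meti(responses: dict[str, int]) -> dict[str, float]:
    """Return the arithmetic mean of each subscale for one participant (1-7 ratings)."""
    return {name: mean(responses[key] for key in keys)
            for name, keys in METI_SUBSCALES.items()}


# Invented ratings for a single participant.
participant = {f"exp{i}": 6 for i in range(1, 7)}
participant.update({f"int{i}": 4 for i in range(1, 5)})
participant.update({f"ben{i}": v for i, v in zip(range(1, 5), [3, 4, 5, 6])})
print(score_meti(participant))
```

Keeping the three subscale means separate, rather than averaging all 14 items, mirrors the decision above to analyze expertise, integrity, and benevolence as distinct dependent variables.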

Perceived Goals of the Text

To evaluate the perceived aims of the text, participants rated two single items (scales from 1 = do not agree at all to 7 = agree very much) assessing the text's goal to persuade (“The goal of the text by the [source of information] was to persuade its readers that mandatory mask-wearing is appropriate and effective”) and its goal to inform (“The goal of the text by the [source of information] was to provide impartial information that mandatory mask-wearing is appropriate and effective to contain the coronavirus”). Both items were adapted from Kotcher et al. (2017) and translated into German. Each item was analyzed as a separate dependent variable.

Attribution to Political Views and to Scientific Evidence

Participants further rated the extent to which they thought the informational text was influenced by the source's evaluation of scientific evidence (“The text by the [source of information] was mainly shaped by his evaluation of scientific evidence”) and by his political attitudes (“The text by the [source of information] was mainly shaped by his political views”). These items were also adapted from Kotcher et al. (2017) and rated on a scale from 1 = do not agree at all to 7 = agree very much. Again, each item was analyzed as a separate dependent variable.

Results

Statistical Analyses

Using the Statistical Package for the Social Sciences (SPSS) 26, we conducted a series of analyses of covariance (ANCOVAs). The statistical model included two independent factors, namely (1) use of LHs and (2) SI, and two covariates, namely (1) topic-specific prior attitudes and (2) topic-specific epistemic certainty beliefs. All tests were two-tailed, with the alpha level set at .05. We used jamovi 1.2.27 to compute the effect size omega squared, interpreting 0.01 as a small, 0.06 as a medium, and 0.14 as a large effect, following Kirk (1996).
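For reference, a common textbook definition of omega squared for an effect in a between-subjects design is ω² = (SS_effect − df_effect · MS_error) / (SS_total + MS_error). The sketch below implements that formula together with the Kirk (1996) benchmarks stated above. Whether jamovi 1.2.27 reports exactly this non-partial variant for ANCOVA models is an assumption we have not verified, the "below small" label is our own, and the sums of squares in the example are invented.

```python
def omega_squared(ss_effect: float, df_effect: int, ms_error: float, ss_total: float) -> float:
    """Omega squared: (SS_effect - df_effect * MS_error) / (SS_total + MS_error)."""
    return (ss_effect - df_effect * ms_error) / (ss_total + ms_error)


def kirk_label(w2: float) -> str:
    """Classify an effect size using the Kirk (1996) benchmarks (0.01, 0.06, 0.14)."""
    if w2 >= 0.14:
        return "large"
    if w2 >= 0.06:
        return "medium"
    if w2 >= 0.01:
        return "small"
    return "below small"


# Invented sums of squares, not taken from the study.
w2 = omega_squared(ss_effect=10.0, df_effect=1, ms_error=2.0, ss_total=100.0)
print(round(w2, 3), kirk_label(w2))  # 0.078 medium
```

Because the error term is subtracted in the numerator, ω² becomes negative whenever the effect's F ratio is below 1. This is one reason it is a less biased estimate than eta squared for small samples.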

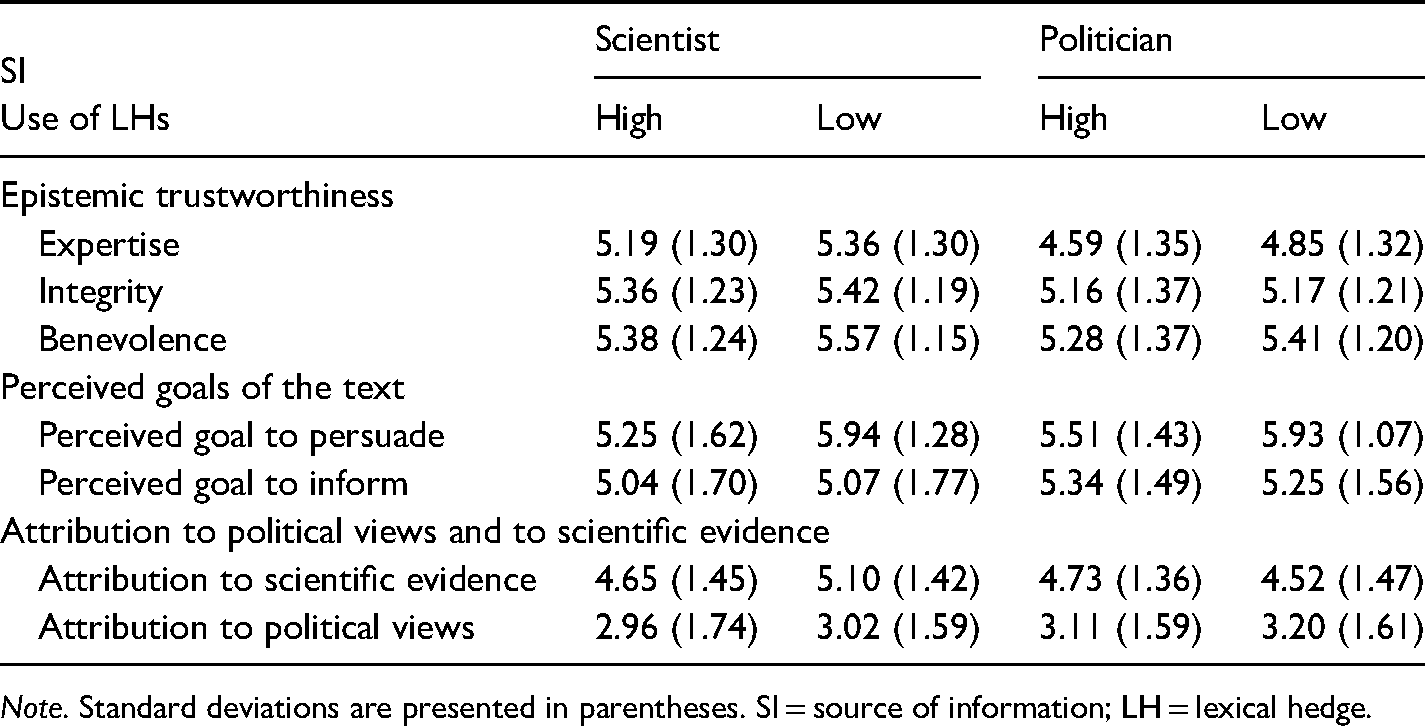

To investigate the direction of the effects of the covariates on the dependent variables, we additionally report the correlation between covariate and dependent variable using Pearson's correlation coefficient “r” (Field, 2013). Further, Table 1 shows the means and standard deviations of the dependent measures; see Supplementary Materials online for a detailed report of statistical analyses and results.

Table 1. Means and Standard Deviations for the Dependent Variables by SI and Use of LHs (High vs. Low).

Note. Standard deviations are presented in parentheses. SI = source of information; LH = lexical hedge.

Descriptive Results and Equivalence of Groups

Regarding topic-specific prior attitudes, participants saw mandatory mask-wearing as a rather effective intervention to control the coronavirus and its consequences (M = 5.34, SD = 1.52). This was also reflected in participants’ open answers (ranging from 1 to 585 characters) concerning their attitude toward mandatory mask-wearing (M = 5.09, SD = 1.71). The certainty of knowledge concerning the effectiveness of mandatory mask-wearing was rated 4.25 on average (SD = 1.21), close to the scale midpoint, indicating no clear tendency toward viewing this knowledge as either certain or tentative. The four experimental groups differed significantly neither in prior attitudes (F(3, 394) = 1.09, p = .351) nor in epistemic certainty beliefs (F(3, 394) = 1.83, p = .141) about the effectiveness of mandatory mask-wearing. These two variables correlated negatively (r = −.39): The more participants favored mandatory mask-wearing, the less uncertain they considered the knowledge about it.
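The reported F(3, 394) statistics are consistent with one-way ANOVAs across the four conditions (4 − 1 = 3 and 398 − 4 = 394 degrees of freedom); we assume that this was the test used. A minimal standard-library sketch of the F statistic with invented data follows. Obtaining the p value additionally requires the survival function of the F distribution (e.g., `scipy.stats.f.sf(F, df_between, df_within)`), which is not computed here.

```python
from statistics import mean


def one_way_f(groups: list[list[float]]) -> tuple[float, int, int]:
    """Return (F, df_between, df_within) for a one-way between-subjects ANOVA."""
    all_values = [x for g in groups for x in g]
    grand = mean(all_values)
    means = [mean(g) for g in groups]
    # Between-groups sum of squares: group size times squared deviation of group mean.
    ss_between = sum(len(g) * (m - grand) ** 2 for g, m in zip(groups, means))
    # Within-groups sum of squares: deviations of each value from its own group mean.
    ss_within = sum((x - m) ** 2 for g, m in zip(groups, means) for x in g)
    df_b, df_w = len(groups) - 1, len(all_values) - len(groups)
    return (ss_between / df_b) / (ss_within / df_w), df_b, df_w


# Invented two-group example.
print(one_way_f([[1, 2, 3], [4, 5, 6]]))  # (13.5, 1, 4)
```

With four groups totaling 398 participants, the function would return df_between = 3 and df_within = 394, matching the degrees of freedom reported above.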

Ratings of Epistemic Trustworthiness

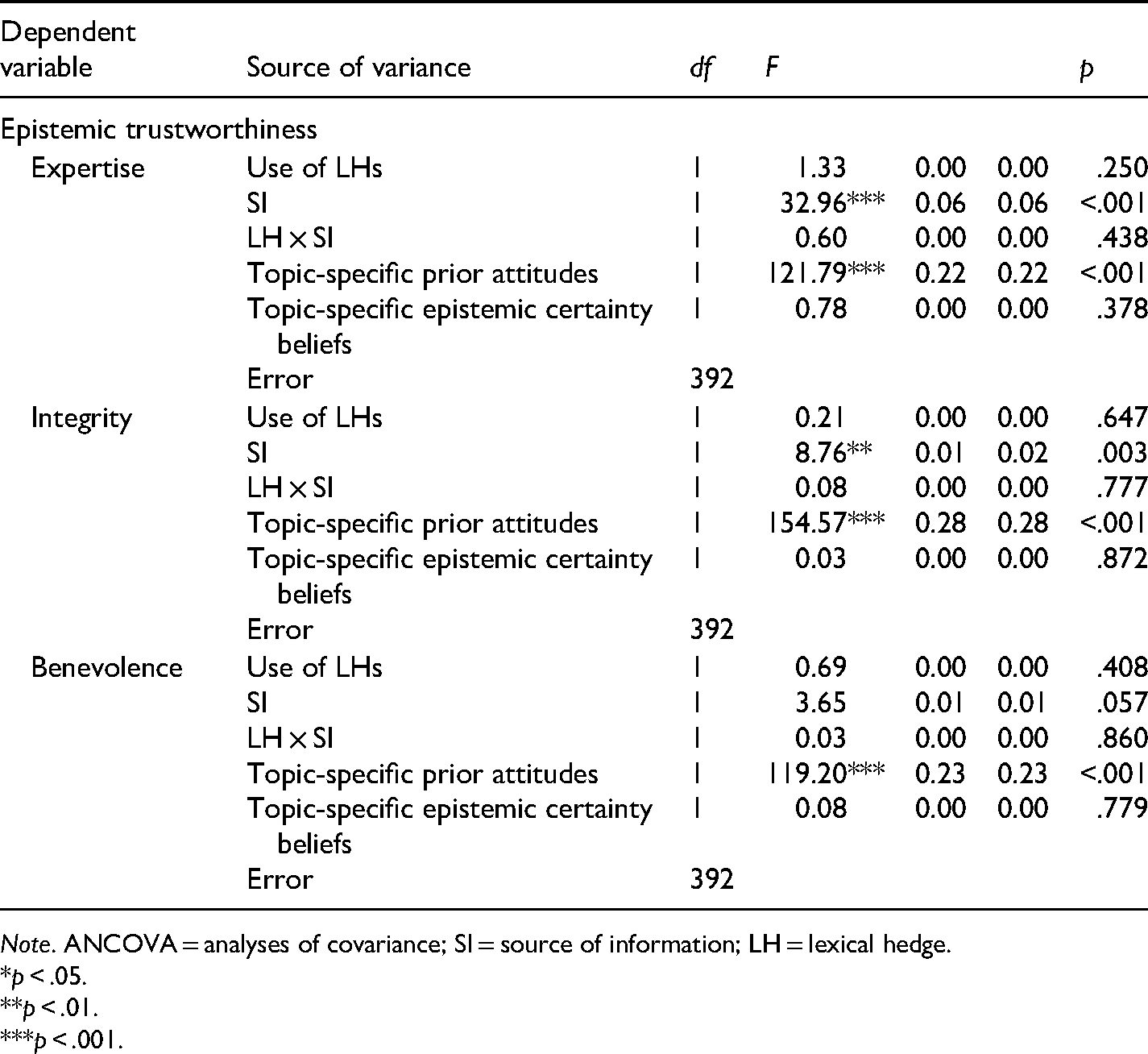

First, we assessed differences in epistemic trustworthiness on the three dimensions: expertise, integrity, and benevolence. For the full report of the ANCOVAs, see Table 2.

Table 2. Results of the ANCOVAs for the Dependent Variables Expertise, Integrity, and Benevolence.

Note. ANCOVA = analysis of covariance; SI = source of information; LH = lexical hedge.

*p < .05.

**p < .01.

***p < .001.

Expertise

Regarding expertise ratings, there was no significant main effect of the use of LHs (p = .250). There was, however, a significant main effect of the SI (p < .001, ω2 = 0.06), indicating that the scientist was seen as more competent than the politician. There was no significant interaction effect (p = .438). The covariate Topic-specific epistemic certainty beliefs did not reach significance (p = .378), but the covariate Topic-specific prior attitudes did (p < .001, ω2 = 0.22). This indicates that more favorable attitudes corresponded with higher ratings of expertise (r = .51).

Integrity

Similar results were found for integrity ratings. There was no significant main effect of the use of LHs (p = .647), but there was a significant main effect of the SI (p = .003, ω2 = 0.01); that is, the scientist was judged as having more integrity than the politician.¹ Again, there was no significant interaction effect (p = .777). The covariate Topic-specific epistemic certainty beliefs was not significantly related to integrity ratings (p = .872). The covariate Topic-specific prior attitudes reached significance (p < .001, ω2 = 0.28), indicating that more favorable attitudes corresponded with higher ratings of integrity (r = .56).

Benevolence

Regarding benevolence ratings, there were neither significant main effects (use of LHs: p = .408; SI: p = .057) nor a significant interaction effect (p = .860). Again, Topic-specific epistemic certainty beliefs did not reach significance as a covariate (p = .779), whereas Topic-specific prior attitudes did (p < .001, ω² = 0.23): more favorable attitudes were associated with higher benevolence ratings (r = .51).

Additional Analyses

Furthermore, we conducted exploratory ANCOVAs on four single items assessing the text's perceived goals (to persuade and to inform) and the attribution of the text to the source's political views and to scientific evidence. For the full statistical results, please see the Supplementary Materials online.

Text's Perceived Goal to Persuade

When the use of LHs was high, participants perceived the text as less aimed at persuasion than when the use of LHs was low (p < .001, ω² = 0.03). There were no further effects (SI: p = .571; interaction effect: p = .362). Topic-specific epistemic certainty beliefs did not reach significance as a covariate (p = .292), whereas Topic-specific prior attitudes did (p < .001, ω² = 0.03): more favorable attitudes were associated with a stronger perceived goal to persuade (r = .23).

Text's Perceived Goal to Inform

Regarding the text's perceived goal to inform, there were no significant effects of the experimental manipulations (use of LHs: p = .479; SI: p = .286; interaction: p = .929). Topic-specific epistemic certainty beliefs were not included in the statistical model as a covariate, as statistical assumptions would have been violated (see Supplementary Materials online for further information). The covariate Topic-specific prior attitudes was significant (p < .001, ω² = 0.14): more favorable attitudes were associated with a stronger perceived goal to inform (r = .39).

Attribution to Scientific Evidence

The use of LHs did not affect the perception that the text was shaped by the source's evaluation of scientific evidence (p = .572). There was, however, a significant interaction effect (p = .039, ω² = 0.01). Simple-effects analyses showed that when the use of LHs was low, this perception differed by SI (F(1, 392) = 8.45, p = .004, ω² = 0.02): when the SI was a scientist, participants perceived the text as more strongly influenced by the source's evaluation of scientific evidence than when the SI was a politician. When the use of LHs was high, this perception did not differ (F(1, 392) = 0.004, p = .948). There was also a significant main effect of the SI (p = .049, ω² = 0.01). The covariate Topic-specific epistemic certainty beliefs did not yield a significant effect (p = .142), whereas Topic-specific prior attitudes did (p < .001, ω² = 0.06): more favorable attitudes were associated with a stronger perception that the SI was influenced by his interpretation of scientific evidence (r = .25).
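The simple-effects tests above are the only ones for which the full F statistic is reported, so they allow a quick check of how an ω² value relates to F. The Python sketch below assumes the common formula for partial ω² computed from F and its degrees of freedom; which ω² variant the reported ANCOVAs used is not stated here, so this is an illustration rather than a reproduction of the analysis. For F(1, 392) = 8.45 it gives about .02, consistent with the reported value.

```python
def partial_omega_squared(f_value: float, df_effect: int, df_error: int) -> float:
    """Estimate partial omega squared from an F statistic (assumed formula).

    omega_p^2 = df_effect * (F - 1) / (df_effect * (F - 1) + df_effect + df_error + 1)

    Negative estimates (F < 1) are truncated to 0.
    """
    numerator = df_effect * (f_value - 1.0)
    denominator = numerator + df_effect + df_error + 1
    return max(0.0, numerator / denominator)


# Simple effects of the source of information (low vs. high use of LHs).
low_hedges = partial_omega_squared(8.45, df_effect=1, df_error=392)
high_hedges = partial_omega_squared(0.004, df_effect=1, df_error=392)
print(round(low_hedges, 2))   # 0.02
print(high_hedges)            # 0.0
```

Truncating negative estimates at 0 is a reporting convention; some authors report the negative value instead.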

Attribution to Political Views

Regarding whether participants thought the text was shaped by the source's political views about mandatory mask-wearing, none of the experimental manipulations yielded a significant effect (use of LHs: p = .243; SI: p = .055; interaction effect: p = .884). The covariate Topic-specific epistemic certainty beliefs was significant (p = .042, ω² = 0.01): the more participants viewed the topic-specific knowledge as uncertain, the more they perceived the text as influenced by the source's political attitudes (r = .26). Furthermore, the covariate Topic-specific prior attitudes reached significance (p < .001, ω² = 0.17): more favorable attitudes were associated with a weaker perception that the text was influenced by the source's political attitudes (r = −.47).

Discussion

This study investigated how the use of LHs (high vs. low), that is, the communication of uncertainty, affects the perceived trustworthiness of a scientist versus a politician as a source of scientific information. Contrary to our expectations, a high use of LHs did not increase the communicator's perceived benevolence or integrity (H1). Similarly, the use of LHs (high vs. low) did not affect the communicator's perceived expertise (RQ1).

As other scholars have noted, LHs can be considered rather subtle compared to other expressions of epistemic uncertainty (Butterfuss et al., 2020; Winter et al., 2015). Consequently, participants may not have recognized the LHs as expressions of uncertainty. Unlike Jensen (2008), who used part-sentences (discourse-based hedges; e.g., “too early to make definitive claims”) to convey uncertainty, the current study only inserted or omitted single words (LHs) in the informational text that participants read. This suggests that LHs alone may not have clearly signaled the underlying epistemic uncertainty.
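The difference between single-word LHs and discourse-based hedges can be made concrete by counting hedge density, that is, single-word hedges per 100 words. The Python sketch below is an illustration under stated assumptions: the hedge list and the two example sentences are hypothetical and are not the study's materials, and only single-word hedges are counted.

```python
import re

# Hypothetical hedge list for illustration only; not the word list used in the study.
LEXICAL_HEDGES = frozenset({"maybe", "perhaps", "possibly", "presumably", "probably", "might"})


def hedge_density(text: str, hedges: frozenset[str] = LEXICAL_HEDGES) -> float:
    """Return the number of single-word hedges per 100 words.

    Text is lowercased and split into letter-only tokens, so multi-word
    (discourse-based) hedges such as "too early to make definitive claims"
    are not counted as hedges.
    """
    words = re.findall(r"[a-z]+", text.lower())
    if not words:
        return 0.0
    n_hedges = sum(1 for word in words if word in hedges)
    return 100.0 * n_hedges / len(words)


# Hypothetical low- and high-hedge versions of the same claim.
low = "Face masks protect you from COVID-19."
high = "Face masks might possibly protect you from COVID-19."
print(hedge_density(low))   # 0.0
print(hedge_density(high))  # 25.0
```

Such a count only describes the texts themselves; whether readers notice the hedges would still require a manipulation check with participants.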

Importantly, linguistic resources and rhetorical figures must always be considered within the context in which they appear (e.g., text type, communicator, intention, situation, and topic; Simmerling & Janich, 2016). During the coronavirus pandemic, recipients may frequently have been confronted with explicit forms of uncertainty communication (e.g., lack of consensus) and may therefore have been less sensitive to single-word hedges. In addition, single words like “presumably” or “possibly” are common in everyday language, which could also explain why this form of hedging hardly affected participants’ perception of the informational text. To test this assumption, future studies should include a manipulation check.

As the experimental conditions in the present study differed only in “high” versus “low” use of LHs, future experiments could test different types of hedges. Future work should consider, first, which type of LH (e.g., colloquial or professional) is used and, second, to which information the LHs refer. Durik et al. (2008) observed that colloquial and professional hedges negatively affected the perception of a message and its source (both unrelated to science) when the hedges referred to data statements; this effect did not occur when the hedges were included in interpretative statements. It might be particularly important that communicators use LHs with reference to their own studies (Howell, 2020), as this conveys epistemic uncertainty about their own knowledge. Such a self-critical style of communication might increase communicators’ perceived trustworthiness. In the present study, however, the sources of information used LHs to express uncertainty about scientific studies they had not conducted themselves. This might also explain why the use of LHs did not significantly affect perceived trustworthiness.

Furthermore, future studies could include an experimental condition in which an expert makes overly certain claims (fully excluding hedging from scientific information might seem implausible). Explicit expressions of certainty or uncertainty might have a larger impact on trustworthiness perceptions (for an overview, see van der Bles et al., 2019). For example, Mayweg-Paus and Jucks (2018) found that experts were perceived as more trustworthy when providing pro and contra arguments in an expert discussion (vs. taking a one-sided stance).

On another note, the communication of uncertainty can be viewed as one of many features that characterize a scientific discourse style. Future studies should take these other features into account to study the extent to which different markers of “scientificness,” such as the use of citations (Thomm & Bromme, 2012), jargon (Shulman et al., 2020), or technical language (Thon & Jucks, 2017), affect the perceived trustworthiness of an SI. As uncertainty is not usually communicated in isolation from other information, future research could also investigate how additional information, such as an explanation of the relevance or cause of uncertainty, affects source trustworthiness (cf. Hendriks & Jucks, 2020).

A high use of hedges did, however, make the text appear less aimed at persuasion than a low use of hedges. This means that even a relatively small linguistic variation affected a text's perceived intentions, irrespective of the communicating source. This effect, even though small, could be investigated further in two ways. First, future studies should investigate whether recipients are also less likely to attribute communicator bias (Eagly et al., 1978) when sources communicate epistemic uncertainty. For example, Steijaert et al. (2020) found that when a communicator discloses uncertainty, recipients are less likely to ascribe communicator bias to the SI, which in turn increases trust (compared to when no uncertainty is disclosed). However, instead of single items, whose use is a limitation of this study, it would be reasonable to develop a reliable scale to measure ascribed communicative motives. Second, the finding that hedges made the text's goal appear less persuasive suggests that the use of LHs could counteract motivated reasoning processes or psychological reactance. Similarly, Winter et al. (2015) found that although hedging a science blog entry did not affect readers’ attitudes toward the topic (effects of computer games on children), a two-sided blog entry led to more moderate attitudes toward the issue than a one-sided version. Hence, participants might also be more likely to change their attitude, or at least to lower their confidence, after reading hedged (vs. nonhedged) information.

As expected, participants viewed the scientist as more competent and as having more integrity than the politician but, contrary to our expectations, not as more benevolent (H2). The SI had no significant main effect on participants’ perceived goals of the informational text or on their judgment of the degree to which the text was influenced by the source's political attitudes. These findings partly confirm prior evidence and support recent survey results (Pew Research Center, 2020) by adding evidence from a randomized controlled study. As assumed, scientists were judged to be more competent than politicians. On the one hand, this is understandable because the text presented scientific evidence (on which a politician is not a pertinent expert); on the other hand, a scientist might not be considered pertinent for advocating specific policies.

Unexpectedly, however, scientists were not rated as more benevolent than politicians, and the SI had only a small significant effect on integrity ratings. There are two possible explanations. First, data were collected at a time when most parties in Germany agreed on taking measures to contain the novel coronavirus. Given the extraordinary societal challenges, political interests might not have been very salient with regard to supporting the decision to make mask-wearing mandatory. Second, both sources of information were depicted abstractly, using generic designations (“scientist” and “politician”) without any additional information about the respective communicator. Hence, nothing prompted participants to assume, for example, partisan interests of the politician or dependence on industry interests (cf. Besley et al., 2017). However, this allowed a straightforward experimental manipulation that controlled for confounding variables such as the perceived familiarity or the assumed party affiliation of the SI. Future studies should take a more nuanced approach to investigate which ascribed characteristics of a communicator decisively affect how a source is perceived. For example, there is evidence that recipients engage in partisan motivated reasoning (i.e., party commitment decisively shapes the interpretation of information): Participants were more supportive of a policy proposal when it was introduced by in-group partisan elites and less supportive when it was brought up by out-group partisan elites (Bolsen et al., 2014). Future studies could test whether a scientist as an SI is still perceived as more competent and as having more integrity than a politician who reflects participants’ party preferences.

Our findings do not indicate that the communication of uncertainty affects the perceived trustworthiness of scientists and politicians differently (RQ2). We had assumed that participants would ascribe different communicative motives (e.g., informing vs. persuading) to scientists and politicians. However, the SI had no significant effect on the text's perceived goal to persuade or on its perceived goal to inform. Yet, there was a small interaction effect between the use of LHs and the SI on participants’ perception that the text was shaped by the source's evaluation of scientific evidence. When the use of hedges was low, participants perceived the text as more strongly influenced by the source's evaluation of scientific evidence when the communicator was a scientist than when the communicator was a politician. This result is unsurprising, as it simply indicates that, in the absence of hedges, recipients view scientists as more likely than politicians to be influenced by scientific evidence. When the use of LHs was high, however, this perception did not differ by SI. This might be because a high use of LHs signals scientificness.

Participants’ prior attitudes toward the effectiveness of mandatory mask-wearing in containing the coronavirus had a large effect on trustworthiness ratings (RQ3a): The more participants favored mandatory mask-wearing, the more they rated the SI as competent, benevolent, and having integrity. Furthermore, participants’ topic-specific prior attitudes affected how the text's goals were perceived: The more participants supported mandatory mask-wearing, the more they perceived the text as aiming both to persuade and to inform. Lastly, the more participants supported mandatory mask-wearing, the more they perceived the SI as influenced by his interpretation of scientific evidence and the less they perceived him as influenced by his political attitudes.

These results stress the important role of prior attitudes in source trustworthiness ratings (cf. Gustafson & Rice, 2020). This overarching impact of prior attitudes can be argued to provide fertile ground for motivated reasoning processes (Sinatra et al., 2014), which might be a manifestation of the self-confirmation heuristic (Metzger & Flanagin, 2015). To test this assumption, future studies should investigate whether prior attitudes affect source trustworthiness ratings differently when participants read an informational text that is consistent versus inconsistent with their personal opinions. According to the self-confirmation heuristic, individuals should perceive the source as more trustworthy when it expresses views consistent with their own prior beliefs than when it expresses views inconsistent with them. An experimental condition in which the source argues against mandatory mask-wearing was not included in the present study for ethical reasons (e.g., sharing misinformation in an online study without being able to properly correct it).

Participants’ epistemic certainty beliefs regarding the effectiveness of mandatory mask-wearing in containing the coronavirus did not affect the ascribed trustworthiness of scientists and politicians (RQ3b), nor did they affect the perceived intentions of the text or participants’ judgment of how much an SI was influenced by scientific evidence. However, topic-specific epistemic certainty beliefs did affect the judgment of how much the communicators were influenced by their political views: The more participants rated the knowledge as uncertain, the more they judged the SI to be guided by his political attitudes. Although this effect was small, it suggests that individuals who view knowledge as more uncertain might regard decisions on issues that rest on still-uncertain scientific evidence as a matter of opinion. To gain greater insight into the impact of individuals’ epistemic certainty beliefs on their ratings of source trustworthiness, future studies should measure participants’ epistemic certainty beliefs more broadly (e.g., domain-specifically) to achieve greater error reduction (Pituch & Stevens, 2016). Furthermore, future work could examine possible moderating effects of participants’ epistemic certainty beliefs to scrutinize the interplay between the use of LHs and trustworthiness judgments.²

Importantly, the present study has to be discussed in the context of the coronavirus pandemic. On the one hand, the public debate over whether mask-wearing should be mandatory served as a useful occasion to investigate the relationship between uncertainty communication and trustworthiness judgments. It became apparent to the broader public that scientific evidence was directly interlocked with political decision-making, and recipients could have been sensitive to the communication of uncertainty, as there was a strong desire for certainty in this state of emergency. On the other hand, as argued above, participants might have been well aware of scientific uncertainty about the topic from media reports and might therefore have rated different levels of uncertainty as “acceptable” (Gustafson & Rice, 2019). Furthermore, although it would have been worthwhile to investigate attitude change in this study, at the time of data collection (a political decision on mandatory mask-wearing had just been made), participants had probably developed a rather stable attitude toward the topic (Betsch et al., 2020).

Zehr (2017) stresses that it may not be sufficient to portray uncertainty as an objective feature of science that scientists are morally obliged to communicate. Instead, he argues, one should also take into account how uncertainty is constructed within public debates (e.g., how it is shaped by different stakeholders and communicators). Although the results of this study do not indicate that communicators benefit from communicating uncertainty when presenting scientific information publicly, a high amount of LHs also had no negative effects on trustworthiness ascriptions, as some scientists fear (Post, 2016; Post & Maier, 2016). Thus, our findings are in line with those of others who found that communicating uncertainty does not necessarily harm communicators’ perceived trustworthiness (Gustafson & Rice, 2020; van der Bles et al., 2019).

Supplemental Material

sj-docx-1-jlsp-10.1177_0261927X211044512 - Supplemental material for Face Masks Might Protect You From COVID-19: The Communication of Scientific Uncertainty by Scientists Versus Politicians in the Context of Policy in the Making

Supplemental material, sj-docx-1-jlsp-10.1177_0261927X211044512 for Face Masks Might Protect You From COVID-19: The Communication of Scientific Uncertainty by Scientists Versus Politicians in the Context of Policy in the Making by Inse Janssen, Friederike Hendriks and Regina Jucks in Journal of Language and Social Psychology

Footnotes

Acknowledgments

We are thankful to three anonymous reviewers for providing relevant and challenging comments on prior versions of this paper. We also thank Howie Giles for additional comments and help with the process as well as Celeste Brennecka for language editing. Noemi Kumpmann, Mona Diedrich, and Jolina Bilstein assisted this research and receive a very warm thank you, too.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Deutsche Forschungsgemeinschaft (grant number GRK 1712/2).

Supplemental Material

Supplemental material for this article is available online.

Notes

Author Biographies

Appendix A

References
