Abstract

Recent global initiatives are accelerating the shift toward human-centric approaches, reducing reliance on animal models in preclinical research and other domains. In this changing landscape, objectively evaluating the scientific relevance and merit of research involving animal models, and assessing their translational relevance is increasingly critical. Over the past decade, several tools have been developed to assess translational relevance, accuracy/appropriateness and efficacy of preclinical animal models, evaluate risk-of-bias in preclinical research, support harm–benefit analyses, and facilitate the adoption of non-animal replacement strategies. However, the uptake of such tools remains limited. To address this, a Biomedical Research for the 21st Century (BioMed21) Collaboration workshop on ‘Evaluating translational value of animal models in preclinical research — Tools, challenges, and strategies’, was convened by Humane World for Animals (30 June–1 July 2025). The event brought together tool developers and diverse global interest-holders to review current assessment tools, discuss their strengths, complementarity, limitations and feasibility, and explore opportunities for cross-sector collaboration. This paper summarises key outcomes of these presentations and discussions, highlighting knowledge gaps and barriers to the adoption of these tools and frameworks by researchers, funders and regulators. Strategies to raise awareness and promote the use of the tools and frameworks, to better inform funding decisions, regulatory approval and the appraisal of preclinical research, are also proposed.

Keywords

Introduction

Drug discovery and development remain notoriously failure prone, with approximately 90% of drug candidates that enter clinical testing ultimately failing to reach the market. 1 Although the cause is multifactorial, one important reason for this attrition rate lies in the poor translatability of preclinical findings to human outcomes. Despite decades of investment and refinement, traditional preclinical models — largely based on non-human biology — often fail to capture the complexity of human diseases and heterogeneity at populational level. Animal models often cannot fully reproduce the human relevant pathophysiological mechanisms underlying disease development and progression necessary for accurate prediction of clinical outcomes. This mismatch leads to the advancement of drug candidates with limited efficacy or unforeseen toxicity when tested in humans, resulting in costly late-stage failures and significant delays in bringing safe and effective therapies to patients. 2

It has been reported that the approval rate for animal research proposals is extremely high, approaching 100% in some countries,3,4 which suggests that current ethical and scientific review processes may not always apply sufficiently rigorous scrutiny. While these approvals cover a broad range of research purposes — not only preclinical studies — such permissive systems can indirectly influence the overall quality and reliability of preclinical evidence, thereby affecting research translatability. These challenges in translation have scientific, economic, ethical and societal implications. From a scientific perspective, it highlights the need to improve the predictive value of preclinical research by prioritising models that better mimic human biology and disease mechanisms.5,6 Economically, the high cost of failed drug development, often exceeding billions of dollars per approved drug, 7 places a heavy burden on healthcare systems. Ultimately, the foremost ethical concern is opportunity cost for patients and harm for animals. Countless people still die without viable treatments, while ongoing dependence on poorly predictive models both wastes resources and inflicts avoidable animal suffering.

These factors are driving a shift toward the integration of more advanced human-relevant methods in both basic and applied research, such as microphysiological systems, organoids and computational modelling, to bridge the translational gap and enhance the efficiency of drug discovery. 8

The transition toward a human biology-based paradigm is already happening in toxicology/safety assessment. In response to the Save Cruelty Free Cosmetics European Citizens’ Initiative (ECI), 9 the European Commission (EC) committed to developing a comprehensive plan, expected by the first quarter of 2026, to eliminate animal testing for chemical safety assessments in 15 legislative domains, and facilitate the uptake of NAMs (which can mean, interchangeably, either non-animal methods or new approach methodologies) across legislations. 10

In 2023, the European Medicines Agency issued its concept paper on revising the 2017 guidelines 11 for the regulatory acceptance of Three Rs (replacement, reduction and refinement) testing approaches, 12 to foster the development and use of NAMs. In addition, the EC has proposed an action under the European Research Area, to promote innovative, human-relevant methodologies, underscoring its commitment to more predictive science. 13

Similarly, the US National Institutes of Health has announced plans to intensify efforts to “expand innovative, human-based science while reducing animal use in research”, 14 and the US Food and Drug Administration (FDA) has put in place a roadmap to reduce animal testing in preclinical safety assessment studies, making NAMs the default instead of animal studies in 3–5 years’ time.15,16 This is part of a series of bold legislative initiatives in support to non-animal methods for drug development, building on the FDA Modernization Acts 2.0 and 3.0.17,18 Along the same lines, in 2023, India amended its New Drugs and Clinical Trial Rules to authorise researchers to use non-animal testing methods for new pharmaceuticals. 19

Altogether, these initiatives call for a shift toward human-relevant technologies, in an effort to promote better safety assessment of novel candidate drugs and in line with the Three Rs principles. In this shifting legislative and funding landscape, the ability to also objectively evaluate the scientific relevance and merit of preclinical research, particularly preclinical efficacy studies involving animals, is becoming critically important.

Several tools and frameworks have been developed over the last decade to assess the translational relevance of preclinical animal approaches. These tools include, for example: the Clinical Relevance Assessment of Animal Preclinical Research (RAA) tool; 20 the PATH approach; 21 the Framework to Identify Models of Disease (FIMD) for efficacy assessment in drug development; 22 the Animal Model Quality Assessment (AMQA) framework; 23 the Animal Model Framework (AMF); 24 the GRADE4Animals framework; 25 SYRCLE’s Risk of Bias tool for animal studies; 26 the Translatability Score; 27 and the Replacement Checklist. 28 However, in practice, these tools are rarely used in preclinical research, either by researchers, funding agencies, animal ethics committees or scientific review boards. This is likely due to a combination of limited awareness and insufficient systemic support, as well as unclear and contested validity/applicability of the tools.

To address these issues, Humane World for Animals, on behalf of the Biomedical Research for the 21st Century (BioMed21) Collaboration, hosted a workshop (30 June–1 July 2025) on Evaluating translational value of animal models in preclinical research — Tools, challenges, and strategies. The event brought together tool and framework developers, as well as key interest-holders, including researchers, members of ethics committees, representatives from government institutions and industry professionals, to: (i) present and review some of the currently available assessment tools; (ii) discuss their strengths, complementarity, limitations and feasibility of use; (iii) facilitate open discussions around real-world challenges, including barriers to uptake in funding and research evaluation processes; (iv) explore opportunities for cross-sector collaboration; and (v) suggest recommendations.

This report summarises the presentations and discussion points of this workshop.

Workshop format

The workshop took place in Ardoch, Loch Lomond, Scotland, on 30 June–1 July 2025. It was presented in a hybrid format to enable the attendance of remote participants, and the entire meeting was recorded and transcribed for reporting purposes. This event brought together 24 participants from 18 governmental, industry, scientific institutions and non-governmental organisations (NGOs), including 10 online attendees, in a closed-door setting. The full workshop agenda is reported in the Appendix and online Supplemental Material.

Introductory session

Helder Constantino (Humane World for Animals) kicked off the meeting by introducing the rationale and objectives of the workshop. Francesca Pistollato (Humane World for Animals) summarised the preliminary results of a survey distributed to key interest-holders working, or otherwise involved, in preclinical research. The aim of this survey was to understand the level of awareness of, and gather feedback and opinions on, the currently available tools and frameworks to assess the translational relevance of animal models used in preclinical research. The survey was created using the EU Survey Platform and remained open until 30 November 2025. 29 Marco Straccia (FRESCI by SCIENCE&STRATEGY SL) moderated a roundtable discussion, inviting participants to introduce themselves and share their perspectives on the importance of assessing the translational value of preclinical research.

Day 1

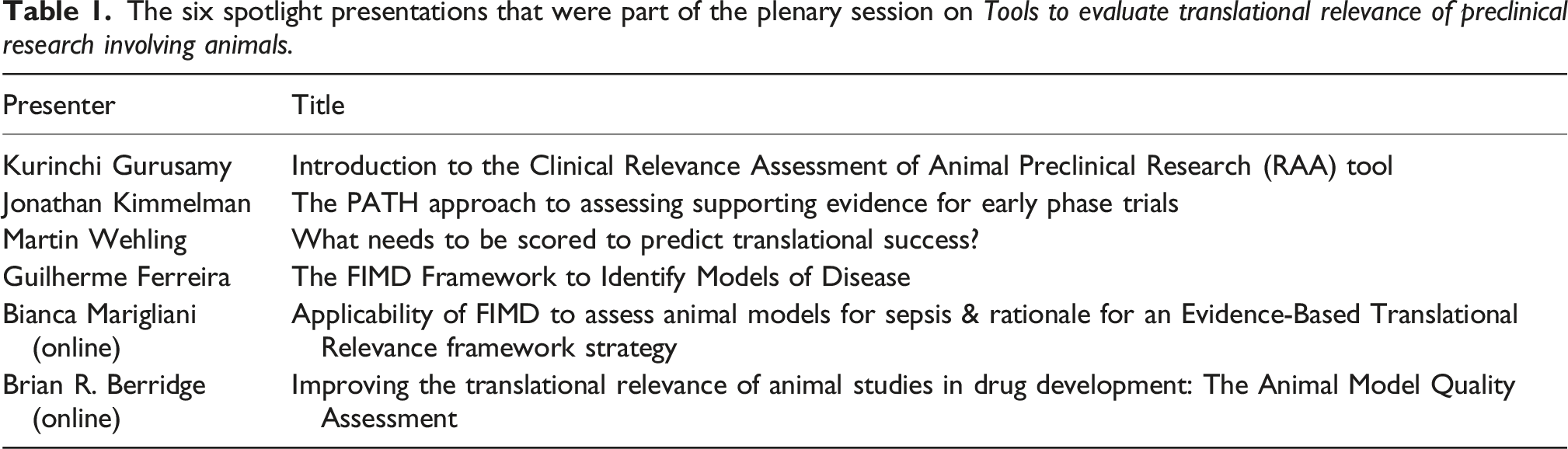

The six spotlight presentations that were part of the plenary session on Tools to evaluate translational relevance of preclinical research involving animals.

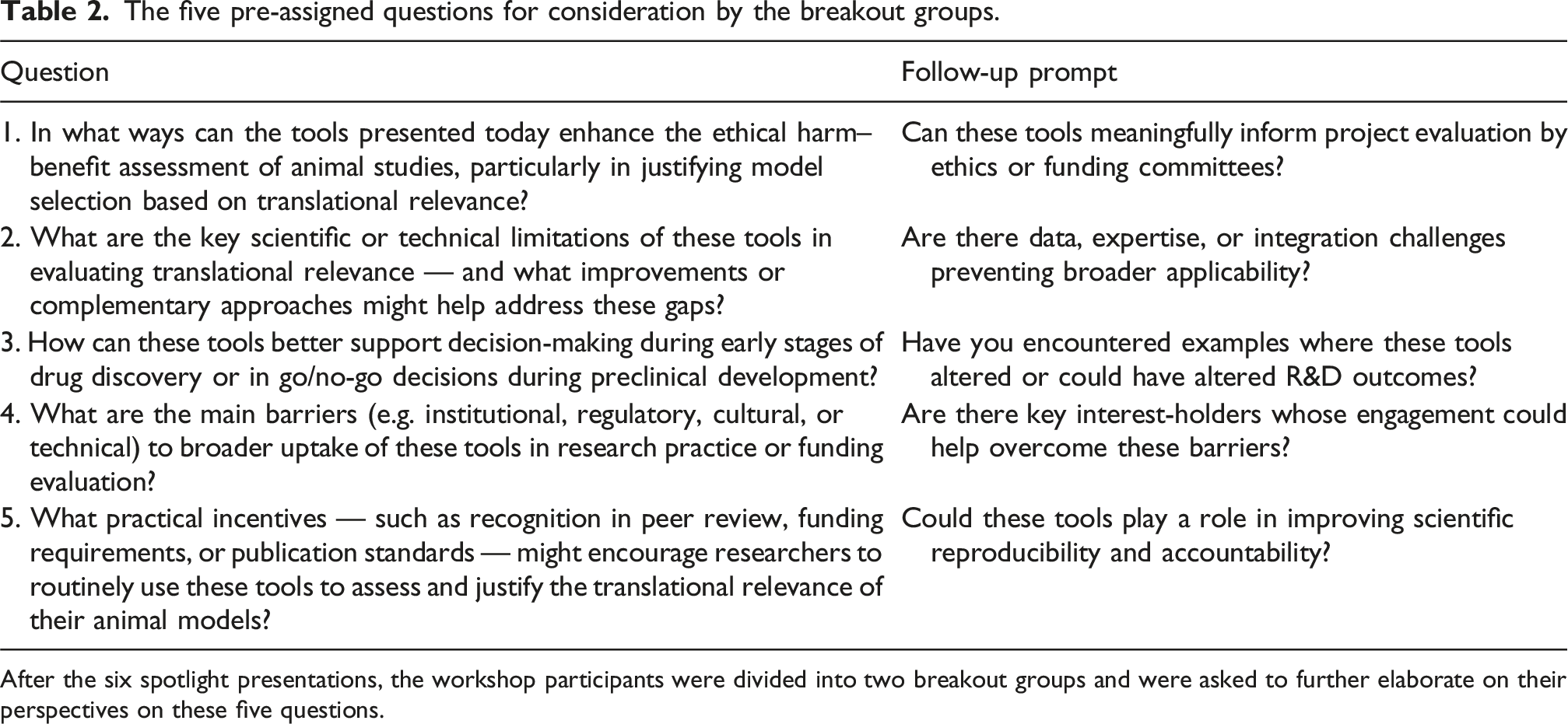

The five pre-assigned questions for consideration by the breakout groups.

After the six spotlight presentations, the workshop participants were divided into two breakout groups and were asked to further elaborate on their perspectives on these five questions.

Day 2

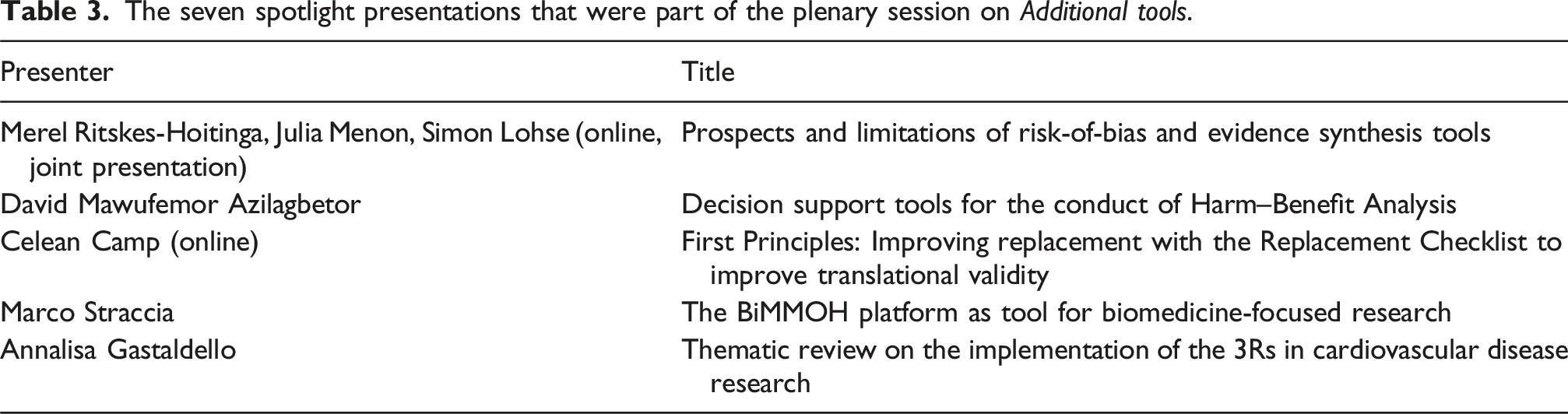

The seven spotlight presentations that were part of the plenary session on Additional tools.

The afternoon plenary discussion focused on identifying potential next steps in the context of the scientific merit of peer-review, ethical review (considering HBA), and the use of these tools to support decision-making in drug discovery and translation. The event ended with a final ‘Way Forward’ plenary discussion, moderated by Constantino, highlighting converging themes, areas of tension and opportunities for collaboration.

Summaries of these ‘spotlight’ presentations and the subsequent discussions are given in the appropriate sections below.

Day 1: Tools to evaluate translational relevance of preclinical research involving animals

The Clinical Relevance Assessment of Animal Preclinical Research (RAA) tool

Kurinchi Gurusamy (UCL) introduced the Clinical Relevance Assessment of Animal Preclinical Research (RAA) tool, as described in a 2021 publication. 20 This tool was designed to evaluate the clinical relevance of animal-based preclinical studies, and was developed using a Delphi process with 20 experts, 17 of whom completed all three rounds of consultation. The process resulted in consensus on eight key domains to guide assessment. These domains include: (i) the construct validity of the study (how well its findings translate to human disease); (ii) the quality of experimental design and data analysis; (iii) the risk of bias affecting internal validity; (iv) the reproducibility of results across clinically relevant conditions (external validity); (v) the replicability of methods within the same model; (vi) the strength and implications of the study’s conclusions; (vii) research integrity; and (viii) transparency.

Each domain is assessed using structured signalling questions and graded as ‘low’, ‘moderate’ or ‘high concern’. An overall judgement of ‘high’ or ‘uncertain’ clinical relevance is derived from domain-level assessments. The RAA tool is designed to prospectively and retrospectively assess the clinical translatability of in vivo therapeutic studies, while excluding other preclinical models, such as in vitro, in silico, veterinary and exploratory models. A major challenge in the RAA tool lies in the lack of empirical validation — currently, there is no direct evidence showing that studies deemed as ‘highly relevant’ by the RAA tool actually led to clinical benefit. Gurusamy proposed that fine-tuned large language models (LLM) might be leveraged both to operationalise the tool and to generate such empirical evidence at scale.

Systematic review of guidelines for in vivo experiments and the PATH approach

Jonathan Kimmelman (McGill University), began by challenging prevailing assumptions in the evaluation of preclinical evidence, arguing that early-phase preclinical decisions (e.g. whether to initiate a first-in-human trial) are often made on the basis of weak and heterogeneous data. These data sources may include animal studies, mechanistic experiments, in vitro results or indirect clinical observations, none of which are standardised or easily synthesised with each other.

To address this, Kimmelman presented the Preclinical Assessment for Translation to Humans (PATH) framework, as described in Kimmelman et al. 21 This tool was developed to improve how supporting evidence for early-phase trials is assessed and incorporated into risk–benefit judgements. PATH emphasises four key criteria for a valid evaluative method: transparency, comprehensiveness, reproducibility and accuracy. Unlike many existing tools that focus narrowly on the quality of specific experiments (such as internal validity in animal studies), PATH is designed to offer a broader and more structured view of the total evidentiary landscape that supports early clinical testing.

At the heart of PATH lies a mechanistic model that maps how an experimental intervention is expected to work in humans. The framework deconstructs the drug’s causal pathway into nine steps, organised into two main categories (direct and model) and three levels. PATH begins with direct clinical evidence, where researchers look for any direct evidence that the intervention engages the target, or triggers physiological or clinical responses in target patients. This evidence is then supported by studies in model systems suggesting that the drug engages its intended molecular target, this engagement triggers the pathophysiological responses, and those responses meaningfully contribute to the desired clinical outcome. The translatability of evidence from such models is assessed by five key considerations, including judgements about whether the disease mechanism in the model reflects human biology, whether model conditions sufficiently resemble the clinical setting, and whether potential barriers present in humans but not in models (e.g. poor drug delivery or compensatory biological pathways) might hinder the translatability of model effects.

Each step can be supported by different types of data, and not all steps need to be filled in every case. However, the strength of the overall evidence base depends on how many links in this chain are supported and how robust the supporting data are. The framework encourages structured discussion and documentation of uncertainties, disagreements or gaps in the evidence, thus increasing transparency and accountability in preclinical-to-clinical transitions.

The Translatability Score

Martin Wehling (University of Heidelberg) presented the Translatability Score, outlined in earlier work.27,30 This structured scoring system was developed to assess the likelihood that a compound will successfully transition from preclinical research to clinical benefit. The model evaluates projects on a 1-to-5 scale across criteria such as: (i) biomarker quality; (ii) availability of human evidence; (iii) relevance of preclinical models; and (iv) prior drug class experience.

Wehling stressed the need for validated biomarkers, presenting a quantitative scoring system that ranks their clinical utility. He illustrated how the translatability of certain drugs (e.g. statins, gefitinib) improved markedly after biomarker validation in humans.31,32 He also highlighted the importance of smart, early non-regulatory human studies, possibly attached to regulatory trials, adaptive trial designs and early human proof-of-concept studies, as tools to accelerate development and reduce reliance on animal models.

To demonstrate real-world utility, Wehling discussed a prospective validation of his scoring system by using six COVID-19 drug/vaccine candidates. Scores were locked before phase III results and predicted clinical success with an R 2 value of 0.86, which provides strong evidence for the model’s predictive value. 33

The Framework to Identify Models of Disease (FIMD)

Guilherme Ferreira (Utrecht University) presented the Framework to Identify Models of Disease (FIMD), which is described in a 2019 article. 22 FIMD is a structured and evidence-based tool designed to systematically assess the external validity of animal models in biomedical research, and evaluate how accurately animal models reflect key aspects of human disease. The framework is organised into eight domains: (i) epidemiology; (ii) symptomatology and natural history; (iii) genetics; (iv) biochemistry; (v) aetiology; (vi) histology; (vii) pharmacology; and (viii) endpoints. The scoring of each domain is based on the extent to which the model replicates human disease features (i.e. ‘fully’, ‘partially’ or ‘not at all’), and is supported by transparent documentation of evidence and reasoning.

A distinguishing feature of FIMD is its focus on pharmacological validation — models are critically assessed based on their ability to replicate both the efficacy of approved treatments and the lack of efficacy of failed drugs. The framework also incorporates an evaluation of reporting quality and risk of bias, ensuring that the reliability of underlying evidence is factored into the model’s overall assessment. Visual tools such as radar plots are used to represent the performance of models across domains, as well as to quantify model similarity and uncertainty, providing a clear, comparative overview of different models’ strengths and limitations.

Ferreira illustrated the application of FIMD by presenting case studies, including Duchenne muscular dystrophy and Type 2 diabetes. 22 He also acknowledged practical challenges, such as the substantial workload involved in curating literature and the limited access to proprietary preclinical data, especially from industry.

A FIMD case study and rationale for an evidence-based translational relevance framework strategy

Bianca Marigliani (Humane World for Animals) (online) initially focused on the applicability of the FIMD framework developed by Ferreira in assessing the translational relevance of animal models for sepsis, comparing the LPS model (commonly used but scientifically discredited) and the caecal ligation and puncture (CLP) model (considered the ‘gold standard’). This case study (unpublished) revealed that, while FIMD proved effective for comparing models based on drug efficacy, its applicability is hampered by methodological and practical challenges, including the high workload, the need for systematic review expertise, and the time-intensive nature of scoring pharmacological validation.

Marigliani continued, outlining a proposal for a broader evidence-based translational relevance framework. This conceptual model (under development) applies an evidence pyramid approach, placing systematic reviews at the top, followed by expert consensus, standardised strategies (such as FIMD), and assessment of animal model validity at the base. Finally, she emphasised the need to address the gap between tool development and real-world implementation.

The Animal Model Quality Assessment (AMQA)

Brian R. Berridge (B2 Pathology Solutions) presented the Animal Model Quality Assessment (AMQA), which was originally reported in Storey et al. 23 This is a qualitative framework — based on structured discussion rather than numerical scoring — that is intended to guide the critical appraisal of animal models used in drug development. The tool’s primary aims are to enhance the scientific justification for model selection, improve cross-disciplinary collaboration, identify weaknesses that could be mitigated, and promote transparency regarding the strengths and limitations of preclinical models. The AMQA is particularly valuable in supporting less experienced investigators, many of whom are more familiar with molecular techniques than animal science, aligning pharmacological investigations with clinically relevant endpoints, and providing insights into the quality of the translational evidence derived from the model.

The AMQA framework is organised around five key domains: (i) understanding of the human disease being modelled; (ii) comparative anatomy and physiology; (iii) historical translatability; (iv) biological reproducibility; and (v) contextual relevance of the model to the intended clinical application. Within these categories, investigators are prompted to address specific sub-questions designed to stimulate informed judgement and multidisciplinary dialogue. Responses to the questions are given a red, yellow or green ‘stoplight’ score, representing the strengths or weaknesses of the model relative to the intended patient population.

AMQA supports the refinement of existing models, informs study design, and enables interpretation of model-derived data within the broader drug development process. Practical applications have included evaluating models with discordant preclinical and clinical findings, such as in vivo studies targeting lipoprotein-associated phospholipase A2, where pharmacodynamic biomarkers aligned between species, 34 yet therapeutic outcomes diverged.35,36

Berridge commented that limited fundamental understanding of complex human diseases, lack of established metrics for model evaluation, cultural inertia favouring throughput over relevance, and systemic barriers, such as fragmented review processes and incentive structures that prioritise pipeline progression over long-term success, represent concrete implementation challenges.

Summary of the breakout discussions on Day 1

Following these spotlight presentations, breakout discussions addressed key challenges and perspectives surrounding the implementation of these tools in the context of translational research. These discussions were structured around several guiding questions addressing: (i) the potential role of the tools in supporting decision-making in drug discovery and translational research; (ii) existing limitations and strategies to address them; (iii) the contribution of the tools to the ethical harm–benefit analysis (HBA) of animal studies; (iv) barriers to broader uptake; and (v) possible incentives for researchers to adopt these tools to demonstrate the human relevance of their models.

While participants agreed that the applicability of these tools varies, they also emphasised the transformative potential of structured, evidence-based tools to improve the assessment of translatability and the justification of animal studies, supporting the Three Rs principles. Systematic reviews, standardised evaluation frameworks and publicly accessible justifications were seen as essential for increasing scientific rigour, ethical accountability and trustworthiness.

However, significant barriers were identified. These include technical limitations, insufficient training for researchers, animal facilities managers, ethics committees, reviewers and regulators, and institutional and cultural resistance to changing established practices. Many ethics committees lack the expertise or resources to critically appraise model validity, and funding bodies and scientific journals do not consistently require or reward thorough translational assessments. Lack of standardised criteria, decision-making frameworks and clear regulatory expectations further hinder adoption. In addition, researchers may perceive such evaluations as burdensome, unless linked to tangible incentives or competitive advantage. Participants highlighted multiple strategies to address these challenges, including: — Policy and governance: Establish independent advisory boards, improve alignment between ethics review and funding decisions, and create clear, binding criteria for model evaluation. — Capacity building: Provide mandatory training on translational relevance and integrate evidence-based tools into grant applications and review processes. — Digital solutions: Develop standardised, user-friendly platforms (potentially LLM-enabled) to support translatability scoring, pre-registration of animal experiments, and integration of complementary assessment tools. — Culture and incentive shifts: Reward researchers for demonstrating human applicability, promote data sharing of ‘negative’ results to reduce duplication, and consider phasing-out repeatedly non-translatable models from future approvals.

Day 2: Other tools and processes

The second day of the workshop focused on exploring additional tools and frameworks to support decision-making in planning and reviewing preclinical research, while facilitating the integration of the Three Rs with emphasis on replacement strategies.

Risk-of-bias and evidence synthesis tools

Systematic reviews and SYRCLE

Merel Ritskes-Hoitinga (Utrecht University) started a joint online presentation highlighting the central role of evidence synthesis, particularly systematic reviews, in improving the reliability and translational relevance of preclinical animal studies. She emphasised that systematic reviews employ formal, explicit and rigorous methodologies to consolidate existing findings, clarify knowledge gaps and guide evidence-based decision-making. While such approaches are routine in clinical medicine and the social sciences, they remain underutilised in the preclinical domain, despite their potential to prevent unnecessary or poorly justified animal use.

To address this gap, SYRCLE developed accessible tools and free e-learning resources aimed at educating researchers in the methodology of preclinical systematic reviews. 37 With support from ZonMw and the Dutch Ministry of Health, this initiative provided training, guidance and even salary support for conducting reviews. Although SYRCLE’s institutional support has since ceased, the network’s infrastructure, including a cadre of trained ambassadors, continues to function and promote best practices. A scoping review found that translational concordance rates between animal and human studies varied widely (from 0% to 100%) and failed to yield reliable predictors of success. 38 These findings, compounded by consistently poor reporting quality in preclinical publications, underscore the urgent need for systematic synthesis to inform study design, reporting and policy.

Despite its benefits, uptake of systematic review methodology in animal research remains limited due to key barriers such as time, cost and a lack of training. Ritskes-Hoitinga argued that these challenges pale in comparison to the costs of conducting redundant or low-impact animal experiments. She also highlighted the promise of initiatives like the Evidence Synthesis Infrastructure Collaborative (ESIC), 39 supported by the Wellcome Trust, as a global effort to harmonise and scale such approaches across disciplines.

The SYRCLE risk of bias tool

In the second part of the joint presentation, Julia Menon (PreclinicalTrials.eu) focused on the SYRCLE Risk of Bias (RoB) tool described in a 2014 work, 26 which is an instrument specifically developed to evaluate the internal validity of animal studies. The SYRCLE RoB tool comprises 10 items corresponding to five bias domains: (i) selection bias (e.g. unconcealed allocation, inadequate randomisation); (ii) performance bias (e.g. differential treatment of groups due to lack of blinding); (iii) detection bias (e.g. unblinded outcome assessments); (iv) attrition bias (e.g. unequal loss of subjects); and (v) reporting bias (e.g. selective outcome reporting). Each of these is scored as ‘low’, ‘high’ or ‘unclear’ risk. Menon emphasised that scoring requires both an assessment of the text and informed judgement, highlighting the importance of expertise and duplicate assessments to ensure consistency. She emphasised the importance of randomisation and blinding as central strategies for mitigating such biases.

The SYRCLE RoB tool is a critical component within the broader framework of systematic reviews, as it helps determine the trustworthiness of primary research before engaging in quantitative synthesis. Unlike general notions of study quality, which may encompass aspects such as reproducibility or statistical power, the tool targets internal validity by retrospectively assessing the extent to which observed effects in an animal study can be attributed to the intervention rather than confounding factors or methodological flaws. A key limitation of the tool is its reliance on reporting quality, which can rate well-conducted but poorly reported preclinical studies as ‘unclear’. In contrast, it can also rate studies that transparently disclose their methodological limitations (e.g. noting that blinding was not feasible due to the severity of a disease model) as ‘high risk’, even though such transparency reflects accountability. These limitations prompt further efforts to improve reporting quality, while also developing a novel ‘high justified’ category to distinguish transparent but methodologically limited studies.

The GRADE4Animals framework

In the final segment of the joint presentation, Simon Lohse (Radboud University) introduced and critically assessed the GRADE4Animals framework, 25 a recently developed methodological adaptation of the GRADE (Grading of Recommendations, Assessment, Development and Evaluation) approach, 40 tailored specifically for preclinical animal studies. The central aim of GRADE4Animals is to offer a systematic and transparent approach for assessing evidence from animal studies, with the ultimate goal of improving decision-making in clinical research and patient care by aligning preclinical evidence more closely with clinical needs.

The tool mirrors the five-step GRADE framework traditionally applied in clinical settings, but incorporates adaptations to account for the distinct characteristics of animal research. The process begins by defining a clinically relevant PICO (Patients/People, Intervention, Comparator, Outcome) question, followed by scoping the evidence to determine whether animal data are needed due to insufficient or low-quality human data. From there, a corresponding preclinical PICO question is formulated, which requires thoughtful translation of clinical parameters into appropriate animal models, interventions, comparators and outcome measures. Evidence from animal studies is then collected and synthesised, ideally via systematic review, to develop outcome-specific effect estimates. This is followed by a structured appraisal of the evidence quality, which involves assessing multiple domains: risk of bias, imprecision, inconsistency, publication bias and indirectness.

Lohse acknowledged that assessing indirectness represents a severe challenge for the tool, as well as lack of robust criteria for model relevance and clear evidence grading thresholds, among other things, leaving many judgements dependent on expert interpretation, potentially reducing the reproducibility of GRADE4Animals-based assessments.

Harm–benefit analysis (HBA) support tools

David Mawufemor Azilagbetor (University of Basel) outlined a comprehensive scoping review investigating the tools and frameworks designed to aid HBA in the ethical review of animal experimentation. The research, embedded within the 79th National Research Programme (NRP 79) of the Swiss National Science Foundation (SNSF) on advancing the Three Rs and the ethics of animal research in Switzerland, aims to evaluate the methodological landscape and practical utility of decision-support systems that assist ethics committees in assessing whether proposed animal experiments are ethically justifiable.

Azilagbetor traced the conceptual roots of HBA to utilitarian ethics and the Three Rs, emphasising the evolution of the field from the 1986 Bateson cube model, 41 to contemporary tools incorporating algorithms, categorical checklists, mathematical models and graphical visualisations. Seventeen such tools were identified and reviewed. 42 These tools vary significantly in structure and application: some rely on structured discourse within ethics committees, while others utilise scoring systems or algorithmic pathways to guide approval decisions. Several models, such as the refined Bateson cube 43 and the harm–benefit algorithm by Liguori et al., 44 offer visual and stepwise formats for evaluating scientific benefit versus animal suffering. Others, such as the Porter’s scoring approach, 45 yield quantitative outcomes for precise assessments, though not without criticism for resolving ethical issues through mathematical formulae. Azilagbetor’s analysis underscored that, while decision-support tools can enhance standardisation and transparency, ethical decision-making in animal research ultimately remains value-laden and context-specific. In addition, the translational relevance of animal experiments, which is an important aspect of the HBA, is not considered and addressed in most of the tools, highlighting a need to address this limitation when developing future tools.

A 2024 review of approval rates revealed that almost all (and, in fact, in some EU countries, all) animal research proposals are accepted,3,4 raising concerns about the rigour and public credibility of current ethical review processes. He argued that this phenomenon suggests that existing HBA mechanisms may function more as harm assessments than true harm–benefit evaluations, and there is a need for public transparency regarding how projects are evaluated leading to their approval.

The Replacement Checklist

In her online presentation, Celean Camp (Replacing Animal Research) introduced the Replacement Checklist, 28 a pragmatic tool designed to strengthen the implementation of replacement within the framework of the Three Rs, with a particular focus on enhancing the relevance of the models employed in biomedical research. The tool was developed in consultation with researchers, members of UK Animal Welfare and Ethical Review Bodies (AWERBs) and other interest-holders, and was subsequently piloted and refined based on user feedback. Its central aim is to address persistent gaps in the consideration of non-animal methods during project planning and regulatory review, thereby improving the rigour and transparency of ethical justifications for animal use.

The checklist comprises six non-prescriptive prompts, designed to guide researchers and reviewers through a systematic exploration of human-relevant alternatives. These include: identifying information sources; clarifying search strategies and subject areas; engaging with relevant expertise; documenting search timeframes; and critically justifying the rejection of identified alternatives. This approach reframes replacement as a proactive process to be initiated at the earliest stages of research design — well before licence applications or ethical review — rather than a retrospective requirement for regulatory compliance. The tool is intentionally adaptable to a wide range of scientific contexts, offering both procedural clarity and practical guidance without imposing rigid scoring systems.

The Replacement Checklist has demonstrated utility, both as a training and review instrument, enabling non-specialist AWERB members to engage more confidently with replacement discussions, and fostering a culture of transparency around model choice. Its uptake across over 60 institutions in the UK and Europe suggests strong demand for usable, context-sensitive tools that bridge the gap between policy and practice. Notably, the Checklist also supports broader institutional efforts to embed replacement into research governance frameworks, aligning ethical responsibility with scientific quality, while enabling regulatory accountability.

The Biomedical Model Hub (BimmoH) platform

Marco Straccia (FRESCI by SCIENCE & STRATEGY SL) introduced the Biomedical Model Hub (BimmoH), the first and largest public, regularly updated, highly curated knowledge database of human biology-based experimental models, powered by interpretable Artificial Intelligence (AI) models and designed to structure and consolidate information about models that support biomedical research. BimmoH was funded through a pilot project of the European Parliament and implemented by the EC Joint Research Centre (JRC), with support from a private consortium led by FRESCI and including Ready2Use, TenWise, Altertox, and Ecomole (https://www.bimmoh.eu). BimmoH addresses a critical bottleneck in the ethical and translational advancement of preclinical science — namely, the difficulty of efficiently locating suitable human-relevant models amidst an exponentially growing scientific literature. The tool aims to streamline the replacement process by enabling researchers, regulators, funders and other interest-holders to rapidly access structured, filtered, and context-specific information on available in vitro, in silico and ex vivo models.

The platform utilises AI-supported data processing to mine over 10 million PubMed references, and categorise and present data from over one million biomedical publications, focusing primarily on abstracts and titles due to legal constraints on full-text mining. This enables rapid query execution and high-level filtering, based on organ system, disease, cell type, model format (e.g. organoids, microfluidics, cell lines), omics technologies and other relevant metadata.

Key innovations of BimmoH include its intuitive search interface, the ability to distinguish between purely human-based and mixed human–animal studies, and a robust filtering architecture that allows users to refine their search further. The platform also accommodates secondary use cases, such as disease area searches and trends visualisations, thereby serving not only biomedical researchers but also regulatory authorities, ethical review bodies, funding agencies, startups, and industry interest-holders engaged in translational model development and assessment.

Although BimmoH improves on traditional databases with its domain-specific features, its inability to mine full texts and the continued need for expert review, limit comprehensive model evaluation and assessment of translational potential. The broader vision for BimmoH includes integration into an ecosystem of interoperable tools supporting ethical pre-assessment, HBA, model validation and regulatory compliance workflows.

Thematic review on the implementation of the Three Rs in cardiovascular research

Annalisa Gastaldello (EC, JRC) introduced an initiative led by the EC and the European Union Reference Laboratory for Alternatives to Animal Testing (EURL ECVAM) to conduct the first thematic review mandated by Directive 2010/63/EU. 46 This review focuses on cardiovascular research and aims to assess the implementation of the Three Rs by identifying existing NAMs, evaluating their potential to replace animal models, and uncovering challenges limiting their broader adoption. The broader goal is to provide actionable recommendations for researchers, ethical review bodies and project evaluators, to promote the use of NAMs.

The review adopts a structured, question-led approach involving a panel of experts in different fields of cardiovascular research, with experience in both animal-based and non-animal-based approaches. This panel will evaluate whether NAMs can replace, reduce or refine animal use in specific areas of cardiovascular research. Additional questions seek to further our understanding of why certain subfields within cardiovascular science rely more heavily on animal models, and to help identify instances in which animal-based methods persist, despite poor translational outcomes. The review also aims to identify NAMs that show promise but remain underutilised, as well as areas where alternatives are currently lacking and need to be developed.

Methodologically, the review combines two complementary data sources. Firstly, the ALURES database 47 — containing over 50,000 non-technical summaries of animal research projects approved across the EU — which is being mined to characterise the scope and severity of animal use in cardiovascular research. Gastaldello’s team has conducted a focused analysis of over 3000 summaries pertaining to cardiovascular research, in order to map the research fields, species used and severity classifications. Secondly, the review will draw on updated resources from the BimmoH platform to facilitate access to non-animal models. These datasets will be supplemented by qualitative inputs from expert case studies and interest-holder consultations.

Challenges acknowledged include the legal and linguistic constraints of the ALURES database (each summary is submitted in the language of the Member State), and the rapid obsolescence of existing literature reviews (which BimmoH aims to overcome through dynamic, AI-supported updates). The project is also serving as a test case for the broader implementation of thematic reviews under Directive 2010/63/EU, with the expectation that subsequent reviews may refine or adapt the methodology.

The expected outcomes include evidence-based recommendations for researchers, regulators and ethics committees, to strengthen the application of the Three Rs, improve scientific validity and boost the translational impact of cardiovascular research.

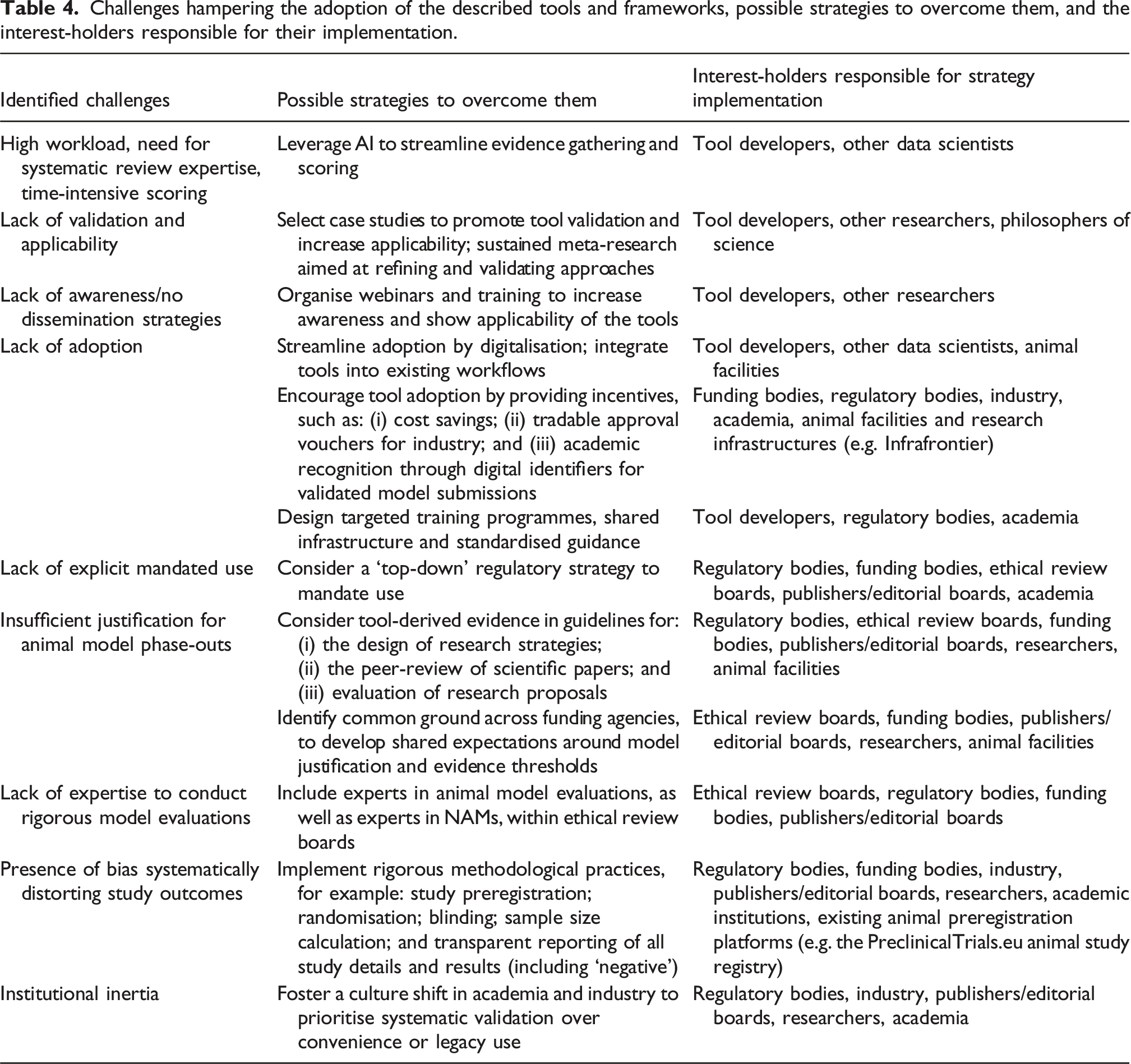

Summary of the plenary discussions on Day 2

The spotlight presentations were followed by a number of plenary discussions, aimed at considering the strengths, limitations, efficiency and feasibility of the tools presented over the two days of the workshop, their complementarity, opportunities for multi-stakeholder collaboration, the potential barriers to uptake, and possible workarounds. Potential next steps and the ‘Way Forward’ were also considered. Participants were prompted with a series of questions, as detailed in the Appendix and online Supplemental Material. The discussion points and main takeaways of these discussions are summarised below.

Considerations about the current tools

The diversity of available tools and frameworks was considered a strength, as each addresses distinct needs and contexts of use, ranging from model identification to quality assessment and regulatory alignment. Participants argued that a modular approach that integrates these complementary resources could provide a more robust decision-support system. In particular, combining tools that assess internal validity (e.g. bias) with those evaluating external validity or predictive performance offers strong potential to enhance confidence in model selection. In addition, despite advances in automation, expert interpretation remains indispensable, particularly for assessing model relevance and performance in a translational context.

Challenges and barriers to uptake

Participants agreed that, despite their potential, adoption of these tools faces significant hurdles, including: lack of regulatory mandates; insufficient financial or professional incentives; and limited awareness or training among researchers, funders and evaluators. Cultural resistance and institutional inertia further slow uptake, with adoption often depending on individual initiative rather than systemic support. Additionally, the usefulness of existing tools to improve translational assessment remains unverified due to limited large-scale implementation. This may reduce their perceived usefulness for funding and research prioritisation.

Regulatory and policy considerations

Participants emphasised the need to align tool development and use with existing regulatory frameworks. However, the absence of formal recognition or regulatory requirements for using these tools diminishes their potential influence on project design and funding decisions. A ‘top-down’ regulatory strategy was generally recommended to complement grassroots efforts and accelerate adoption.

Role of systematic reviews, risk of bias and transparent data sharing

Systematic reviews were highlighted as powerful mechanisms for discouraging unnecessary animal experiments, promoting the Three Rs and, ultimately, enhancing translatability.

Biases can systematically distort study outcomes, possibly leading to either over-estimation or under-estimation of treatment effects, thereby compromising the reliability of preclinical evidence. To address this, rigorous methodological practices should be systematically implemented in preclinical research, including preregistration of study protocols, randomisation, blinding, appropriate sample size calculation, and transparent reporting of both ‘positive’ and ‘negative’ (or inconclusive) results. In addition, reporting should mention all details of a study, adhere to the ARRIVE guidelines 48 in full and in all detail. The use of structured risk-of-bias assessment tools can help identify and mitigate sources of bias early in the research process.

Participants advocated for access to clinical trial data, and the mandatory sharing of structured, machine-readable data to strengthen tool development and systematic review efficiency. The use of AI tools (e.g. Undermind) could dramatically accelerate evidence synthesis, provided that comprehensive and harmonised data access is secured. In this context, initiatives such as the European Health Data Space, 49 aiming to establish shared standards, infrastructures and governance frameworks, to facilitate secure and trustworthy secondary use of health data, remain crucial.

Recommendations for increasing adoption and impact

Challenges hampering the adoption of the described tools and frameworks, possible strategies to overcome them, and the interest-holders responsible for their implementation.

Conclusions, recommendations and future outlook

This BioMed21 workshop served as a platform for knowledge exchange among tool developers and interest-holders who could benefit from the implementation of such tools to improve the relevance and translational power of research.

Participants called for a paradigm shift in how biomedical research success is measured, emphasising the need to prioritise human-centric solutions and innovation, and maximise moral efficiencies — i.e. minimise (or avoid) burdens and harms for animals, and achieve advances in medicine and public health. Achieving this vision will require regulatory clarity, supportive funding structures, digital infrastructure, interest-holder training, as well as public engagement, to ensure consistent and effective implementation of these tools and frameworks.

While these tools and frameworks could improve the rigour and transparency of preclinical research evaluation, significant epistemological and practical challenges must be confronted to enable their wider adoption. In particular, when appraising the benefit of using animals in preclinical research and evaluating translational impact, insights from philosophy of science could be incorporated. These could enable epistemological reflections, and improve understanding of both the rationale behind the choice of animal models and the extent to which methodological shortcomings (such as under-reporting or lack of blinding) are influenced by broader scientific and institutional practices. 50

Institutional and cultural barriers can hamper the successful implementation of the Three Rs, and replacement strategies in particular. These include inadequate training in literature search strategies, limited inter-disciplinary collaboration, and the continued perception of replacement as the most challenging of the Three Rs. A culture change within the research community is dearly needed, encouraging greater openness to human-relevant methods, while rejecting the binary framing of animal versus non-animal approaches.

Ultimately, to enable the integration and adoption of these tools in a systematic manner, participants agreed that one possible way forward could be their incorporation into a single interoperable system (manuscript in preparation), enabling a tiered decision-making approach that includes: 1. Implementation of replacement strategies (e.g. via the Replacement Checklist), informed by the evaluation of available NAMs for a given research question or context of use (e.g. through BimmoH). 2. Prospective assessment of the translational relevance of selected NAM-based strategies. 3. If NAMs are not immediately available, then a prospective evaluation of the translational relevance of a selected animal model. 4. Retrospective assessment of the impact of the selected preclinical strategy. 5. Harm–benefit analysis. 6. Early, smart human trials.

Such an integrated and iterative framework could not only improve the rigour and transparency of model selection, but also increase awareness and promote relevant training provisions in animal facilities in academia and industry. This will serve to accelerate the transition toward human-relevant research, fostering better science, enhanced ethical compliance, and the more efficient translation of findings into clinical benefits for patients.

Supplemental Material

Supplemental Material - Evaluating the translational value of preclinical models: Available tools and frameworks, challenges and strategies

Supplemental Material for Evaluating the translational value of preclinical models: Available tools and frameworks, challenges and strategies by Francesca Pistollato, Fabia Furtmann, Marco Straccia, Marc Avey, David Mawufemor Azilagbetor, Celean Camp, Conor Delaney, Guilherme S. Ferreira, Maria Laura Garcia-Bermejo, Annalisa Gastaldello, Kurinchi Gurusamy, Laura Holden, Jonathan Kimmelman, Simon Lohse, Bianca Marigliani, Julia M. L. Menon, Merel RitskesHoitinga, Shaarika Sarasija, Danilo Tagle, Ignacio J. Tripodi, Jan Turner, Martin Wehling and Helder Costantino in Alternatives to Laboratory Animals.

Footnotes

Acknowledgements

The authors would like to thank Troy Seidle, Humane World for Animals, for his helpful input into the workshop planning and reviewing of the Report, and Brian R. Berridge (B2 Pathology Solutions) for his participation in the workshop and constructive comments on the Report.

ORCID iDs

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This BioMed21 Workshop was supported by Lush Ltd, through a grant to Humane World for Animals.

Declaration of conflicting interests

DA, CC, GF, KG, JK, MRH and MW are developers of tools and frameworks mentioned in this Report. The other authors declared no potential conflicts of interest with respect to the research, authorship and/or publication of this article.

Supplemental Material

Supplemental material for this article is available online.

Agenda for the Workshop on ‘Evaluating translational value of animal models in preclinical research — Tools,challenges,and strategies’

09:00–09:15 | Welcome & opening remarks • Workshop goals and structure (Constantino) (5 min) • Framing the issue: Presenting the results of the survey launched before the workshop (Pistollato) (10 min)

09:15–10:00 | Tour de table and exchange of personal perspectives (moderated by Straccia)

10:00–10:15 | Coffee break

10:15–12:15 | Spotlight presentations: Tools to evaluate translational relevance of preclinical research involving animals (moderated by Pistollato)

Time

Title

Presenter

10:15–10:30

Introduction to the Clinical Relevance Assessment of Animal Preclinical Research (RAA) tool

Kurinchi Gurusamy

10:35–10:50

The PATH approach to assessing supporting evidence for early phase trials

Jonathan Kimmelman

10:55–11:10

What needs to be scored to predict translational success?

Martin Wehling

11:15–11:30

The FIMD Framework to Identify Models of Disease

Guilherme Ferreira

11:35–11:50

Applicability of FIMD to assess animal models for sepsis & rationale for an Evidence-Based Translational Relevance framework strategy

Bianca Marigliani (online)

11:55–12:10

Improving the translational relevance of animal studies in drug development: The Animal Model Quality Assessment

Brian R. Berridge (online)

12:15–13:10 | Lunch

13:10–14:30 | Breakout discussions of pain points surfaced during tour de table in the context of: • Contribution of these tools to harm–benefit assessment • Possible limitations of these tools and possible strategies to fill remaining gaps • Contribution of these tools to decision-making in drug discovery and translation • Possible barriers to uptake of these tools • Possible incentives for researchers to use these tools to demonstrate the translational relevance of their animal methods/models in research design

14:30–15:00 | Break

15:00–16:30 | Plenary: Do we agree there is a problem? • Group reporting • Moderated synthesis to identify shared concerns

16:30–17:00 | Reflections & close of day

09:00–09:15 | Welcome back & recap of Day 1 (Constantino)

09:15–10:45 | Spotlight presentations: Additional Tools (moderated by Pistollato)

Time

Title

Presenter

9:15–9:45

Prospects and limitations of risk-of-bias and evidence synthesis tools

Merel Ritskes-Hoitinga, Julia Menon, Simon Lohse (online, joint presentation)

9:50–10:05

Decision support tools for the conduct of Harm–Benefit Analysis

David Mawufemor Azilagbetor

10:10–10:25

First Principles: Improving replacement with the Replacement Checklist to improve translational validity

Celean Camp (online)

10:30–10:45

The BiMMOH platform as tool for biomedicine-focused research

Marco Straccia

10:50–11:00

Thematic review on the implementation of the 3Rs in cardiovascular disease research

Annalisa Gastaldello

11:05–11:20 | Coffee break

11:20–12:15 | Plenary discussion on the approaches presented (moderated by Straccia and Pistollato) • Strengths, limitations, efficiency and feasibility of tools • Complementarity of the different tools and opportunities for collaboration • Potential barriers to uptake (by funders, scientific review boards, ethical committees) and workarounds • Possible incentives for researchers to use these tools to identify replacement strategies or demonstrate the translational relevance of their models in research design

12:15–13:15 | Lunch

13:15–14:30 | Plenary discussion (moderated by Straccia and Pistollato) to explore potential next steps in the context of: • Scientific merit (peer) review • Ethical review (harm–benefit assessment) • Use of these tools to support decision-making in drug discovery and translation • Integration of complementary tools to create an integrated tool ecosystem (presentation by Straccia and Pistollato)

14:30–15:00 | Break

15:00–16:45 | Plenary discussion on ‘The Way Forward’ (Constantino) • Identify converging themes, tensions, and openings for collaboration • Possibility of ongoing working group or workshop series

16:45–17:00 | Workshop close & acknowledgements

Marco Straccia, FRESCI by SCIENCE&STRATEGY SL

Fabia Furtmann, Humane World for Animals | Europe

Francesca Pistollato, Humane World for Animals | International

Marc Avey, Canadian Council on Animal Care (CCAC)

David Azilagbetor, University of Basel, Switzerland

Brian R. Berridge, B2 Pathology Solutions (online)

Celean Camp, Replacing Animal Research, United Kingdom (online)

Conor Delaney, Health Products Regulatory Authority (HPRA), Dublin, Ireland

Guilherme S. Ferreira, GlaxoSmithKline Vaccines, Amsterdam, Netherlands

Maria Laura Garcia-Bermejo, European infrastructure for translational medicine (EATRIS), Ramon & Cajal Health Research Institute (IRYCIS), Madrid, Spain (online)

Annalisa Gastaldello, European Commission, Joint Research Centre (JRC), Ispra, Italy

Kurinchi Gurusamy, University College London (UCL), London, United Kingdom

Laura Holden, University of Birmingham, Birmingham, United Kingdom (online)

Jonathan Kimmelman, McGill University, Montréal, Canada

Simon Lohse, Radboud University, Nijmegen, Netherlands (online)

Bianca Marigliani, Humane World for Animals | Brazil (online)

Julia M.L. Menon, PreclinicalTrials.eu, Netherlands Heart Institute, Utrecht, Netherlands (online)

Merel J. Ritskes-Hoitinga, University of Utrecht, Utrecht, Netherlands (online)

Shaarika Sarasija, Humane World for Animals | Canada (online)

Danilo Tagle, Office of Special Initiatives, NIH, National Center for Advancing Translational Sciences (NCATS), Bethesda, MD, USA

Ignacio Tripodi, Independent Scholar, Barcelona, Spain

Jan Turner, Humane World for Animals | International (online)

Martin Wehling, University of Heidelberg, Heidelberg, Germany

Helder Costantino, Humane World for Animals | International

1. In what ways can the tools presented today enhance the ethical harm–benefit assessment of animal studies, particularly in justifying model selection based on translational relevance?

→ Follow-up prompt: Can these tools meaningfully inform project evaluation by ethics or funding committees?

2. What are the key scientific or technical limitations of these tools in evaluating translational relevance — and what improvements or complementary approaches might help address these gaps?

→ Follow-up prompt: Are there data, expertise, or integration challenges preventing broader applicability?

3. How can these tools better support decision-making during early stages of drug discovery or in go/no-go decisions during preclinical development?

→ Follow-up prompt: Have you encountered examples where these tools altered or could have altered R&D outcomes?

4. What are the main barriers (e.g. institutional, regulatory, cultural, or technical) to broader uptake of these tools in research practice or funding evaluation?

→ Follow-up prompt: Are there key interest-holders whose engagement could help overcome these barriers?

5. What practical incentives — such as recognition in peer review, funding requirements, or publication standards — might encourage researchers to routinely use these tools to assess and justify the translational relevance of their animal models?

→ Follow-up prompt: Could these tools play a role in improving scientific reproducibility and accountability?

1. Considering the tools presented (e.g. for risk of bias assessment, systematic review, or prediction of translational success), what do you see as their main strengths and limitations in supporting evidence-based replacement strategies?

→ Follow-up prompt: Are some tools more feasible or efficient to implement at different stages of research planning or evaluation?

2. How well do the different tools complement one another? Could an integrated or collaborative approach strengthen their utility in assessing and improving translational relevance?

→ Follow-up prompt: Are there synergies between tools focused on quality assessment (e.g. bias) and those focused on predictive validity or model justification?

3. What are the biggest practical barriers — technical, institutional, or cultural — to the uptake of these tools by researchers, ethics committees, or funding bodies?

→ Follow-up prompt: Have you seen successful workarounds or adoption strategies in your field or institution?

4. What role can these tools play in defining key elements of a future ‘replacement checklist' to guide the selection of models and methodologies in preclinical research?

→ Follow-up prompt: Which checklist elements would be most critical to ensure both scientific robustness and ethical justification?

5. How can the wider research community (e.g. tool developers, users, funders, regulators) work together to improve the accessibility, user-friendliness and impact of these tools?

→ Follow-up prompt: Are there gaps in training, infrastructure, or guidance that need to be filled to enable broader use?

1. How can we ensure interoperability between existing tools?

— standards, ontologies, Application Programming Interfaces (APIs), data formats, platform compatibility.

2. What additional functionalities are needed to enable comprehensive decision-making in preclinical research?

— e.g. scoring systems, confidence indicators, recommendations, model comparison dashboards.

3. Who needs to be involved in developing and validating an integrated tool ecosystem?

— researchers, tool developers, ethicists, regulators, funders, AI/data experts.

4. What opportunities exist for cross-project or institutional collaboration?

— joint grant applications to fund the development of an integrated tool ecosystem (e.g. EIC Pathfinder? NIH/FNIH?), open-source platforms, international consortia.

5. How can we align with regulatory and funding body expectations to increase uptake and legitimacy?

6. What kinds of case studies or pilot projects could demonstrate the integrated tool ecosystem utility?

7. How should we validate the predictive value or utility of an integrated tool ecosystem in real-world research workflows?

8. What training resources or guidance documents are needed to promote widespread adoption?

9. What incentives (e.g. funding eligibility, publication, ethics review, reproducibility) could drive the use of a comprehensive decision-making framework in preclinical research?

10. What business model or governance structure could ensure the integrated tool ecosystem’s long-term maintenance and evolution?

— Open science? Public–private partnership? EU digital infrastructure? Others?

11. How do we ensure continuous user feedback and community-driven refinement of the framework?

1. What recurring themes or shared concerns have emerged across the sessions that could serve as a foundation for joint action?

→ Are there areas where there seems to be strong consensus or alignment among different stakeholders?

2. Which aspects of model assessment, tool integration, or translational decision-making appear most ready for collective development or harmonisation?

3. Were there any tensions or diverging views (e.g. regarding feasibility, priorities, regulatory expectations) that should be acknowledged and constructively addressed in follow-up work?

→ What might be needed to bridge these differences?

4. Which specific areas or tools would most benefit from interdisciplinary or cross-sector collaboration moving forward?

→ Could shared pilot projects or common data frameworks accelerate this?

5. What would be needed to establish a collaborative working group or community of practice to continue advancing the work initiated here?

→ Are there existing platforms or networks that could host such a group, or should something new be created?

6. Would you see value in setting up a recurring workshop or forum to continue sharing insights, results and developments in this space? If so, what format and frequency would work best?

→ What would you expect from such a space to make participation meaningful and actionable?

7. How can we ensure that this momentum is not lost — what are the next concrete steps we can take together in the short and medium term?

References

Supplementary Material

Please find the following supplemental material available below.

For Open Access articles published under a Creative Commons License, all supplemental material carries the same license as the article it is associated with.

For non-Open Access articles published, all supplemental material carries a non-exclusive license, and permission requests for re-use of supplemental material or any part of supplemental material shall be sent directly to the copyright owner as specified in the copyright notice associated with the article.