Abstract

This article explores whether susceptibility to misinformation is context-dependent. For this purpose, a survey experiment was conducted in which subjects from Germany rated the reliability of several statements in the fields of climate change, COVID-19 and artificial intelligence. These contexts differed with respect to the frequency of media coverage, population activity in the form of demonstrations, daily number of deaths, and scientific knowledge. We find some similarities (for example, trust in social networks is positively associated with falling for misinformation in all three contexts) but also substantial differences (for example, risk perception as well as the extent to which people consider evidence to adjust their beliefs seem to matter for climate change and COVID-19 but not for artificial intelligence). More systematic work on context-related differences and narratives is required to design adequate measures against misinformation.

Introduction

Starting with the 2016 US election campaign, economists and psychologists have been systematically studying the influence of false information (Allcott & Gentzkow, 2017; Bovet & Makse, 2019). Early experimental studies in the realm of US politics find that the propensity to engage in analytical reasoning and actively open-minded thinking helps people to distinguish between fabricated and factual information (Bronstein et al., 2019; Pennycook & Rand, 2019; Ross et al., 2019). Pennycook and Rand (2020) argue that overclaiming one’s own level of knowledge is associated with judging false news stories as accurate. In early 2020, the SARS-CoV-2 virus began to spread and caused a worldwide pandemic. The WHO (2020) labelled the information pollution during this crisis an infodemic. Uncertainty and anxiety about the future fuelled conspiracy theories, which on the one hand offered a simple way of coping with a difficult period but on the other hand had serious adverse health effects. Doubts about scientific evidence as well as mistrust of politicians and other authorities made some people believe that, for example, social distancing was unnecessary, face masks did not help to reduce the spread of the virus and vaccination was part of a strategic plan by Bill Gates. During the COVID-19 crisis, trust in facts and evidence seems to play a crucial role in reducing transaction costs, which in turn helps to generate actions to combat the pandemic (Grüner & Krüger, 2020). Taking into account the many warnings and information campaigns by the WHO and numerous scientists, Pennycook et al. (2020) argue that COVID-19 is a scientific issue. In their multi-country comparison (analysing Ireland, Mexico, Spain, the UK and the US), Roozenbeek et al. (2020) identify trust in science and numeracy skills as protective factors against falling for false information. Moreover, they find evidence for a monological belief system: the belief in one false news story is correlated with the belief in others. The latter has also been found by Georgiou et al. (2020) and Miller (2020) within the context of COVID-19.

There are some studies (mostly in the realm of electoral politics and COVID-19) that address the behavioural consequences of falling for false information. Their findings are mixed, ranging from strong effects to no effect, while other studies conclude that the consequences of distorted information perceptions remain ‘unclear’. In general, false information can reduce trust in traditional media as well as cause misallocations of resources and social damage (Waldman, 2018). One striking example of harmful individual-level consequences caused by false information is the conspiracy theory that Hillary Clinton operated a child sex ring in a Washington pizzeria (Persily, 2017). As a consequence, a man carrying a gun entered the restaurant and opened fire, believing that it was harbouring young children as sex slaves. Another example of individual-level harm resulting from false information has been reported from Iran, where apparently hundreds of people lost their lives because they trusted in methanol to combat COVID-19. Ill-guided choices do not necessarily manifest themselves on the aggregate level. For example, Allcott and Gentzkow (2017) argue that false information was probably not decisive in the 2016 US election.

This article tackles false information by comparing three contexts: climate change, COVID-19 and artificial intelligence. The variation of the contexts serves primarily as a robustness check of the findings. When this study was carried out (December 2020), these contexts differed with respect to the frequency of media coverage, population activity in the form of demonstrations, daily number of deaths and scientific knowledge. COVID-19 and climate change constitute scientific issues. In both contexts, scientists communicate findings and frequently provide behavioural recommendations. Almost all scientists agree that humans are causing global warming, which has multiple consequences, such as droughts, species extinction, and forest dieback (Maertens et al., 2020). In Germany, politicians announced plans to ban combustion engine cars. Fridays for Future initiatives were widespread but were thwarted by the COVID-19 pandemic. Media coverage in Germany was dominated by the COVID-19 pandemic while the study was carried out. After the Northern Hemisphere had entered winter, the reported number of COVID-19 cases and deaths per day increased significantly. Political measures, such as social distancing and mandatory face-mask rules, were in part subject to controversial public discussions and demonstrations. It should also be mentioned that no vaccine had been approved in Germany when the study was underway (but there were some promising candidates). Artificial intelligence seems to be subordinate to the other contexts in terms of urgency and media coverage. While the public discourse on COVID-19 and climate change is more or less about avoiding harm, people in Germany see some potential in artificial intelligence. In a nationwide survey conducted by the statistics portal Statista in September 2020, 65% of the individuals stated that artificial intelligence will lead to job losses but 45% indicated that it helps to avoid mistakes (Statista, 2020).

This article tackles the relevance of false information with the help of the following questions (with an emphasis on the first one):

(1) What psychological and socio-demographic determinants can explain the susceptibility to false information in the respective contexts? (2) Is there evidence for monological belief systems in the respective contexts? (3) Do individuals who are more susceptible to false information behave differently (for example, in their intention to vote and willingness to demonstrate)?

To address these questions, a survey experiment was carried out among inhabitants of the Federal Republic of Germany. Subjects were asked to evaluate the reliability of news items that in fact contain false information.

Study Design

Variable of Interest

The basic design of this study is most closely related to Roozenbeek et al. (2020). The variable of interest is the extent to which subjects are susceptible to misinformation. For this purpose, subjects had to rate the reliability of short news items on a 7-point scale, ranging from very unreliable (=1) to very reliable (=7). Within each of the three contexts (climate change, COVID-19 and artificial intelligence), they were shown a total of 11 news items. Seven of them contained false news information (that is, misinformation) and the other four were factual information. The latter were included to prevent the subjects from seeing a pattern in the study and, therefore, to conceal the research objective. This article deals with the evaluation of false news information: the less reliable the subjects rate these items, the less susceptible they are to misinformation. As planned ex ante, the factual news items were not subject to any analysis.

For example, within the context of climate change subjects were told: ‘There is no consensus in science about the causes of climate change.’ This statement is not true because the vast majority of scientists agree that global warming is man-made. Another fabricated news story is: ‘Microsoft co-founder Bill Gates urges mandatory vaccination of all people.’ This is one example of the many wrong stories about Bill Gates that went viral during the COVID-19 pandemic. A third example is the following one: ‘In Germany, artificial intelligence already makes diagnoses in medicine and independently takes decisions that affect people.’ This might be the case in the future but not nowadays. Currently, artificial intelligence does not make decisions in medicine on its own.

Determinants

Subjective Knowledge

Subjective knowledge captures what individuals think they know about a topic (for example, overconfidence; Ortoleva & Snowberg, 2015). We used the 5-item scale developed by Flynn and Goldsmith (1999). Agreement with several statements such as ‘I know pretty much about …’ was measured on a 7-point Likert scale (1 = Strongly disagree, …, 7 = Strongly agree) for all three contexts (climate change / COVID-19 / artificial intelligence).

Information Avoidance

Standard economics predicts that people incorporate all available information into their decision calculus. Since rational agents process information perfectly, having more information leads to better decisions in expectation. In reality, however, there are many situations in which people consciously forego information even when it is free (Golman et al., 2017). Reasons include strategic motives and the anticipation of negative news (for example, the diagnosis of a medical test). Following Howell and Sheppard’s (2016) 8-item scale, subjects were asked, for example, to indicate on a 7-point Likert scale (1 = Strongly disagree, …, 7 = Strongly agree) to what extent they agree with the statement, ‘When it comes to (climate change / COVID-19 / artificial intelligence), sometimes ignorance is bliss.’

Risk Perception

Risk perception is about the evaluation of hazardous activities and technologies (Slovic, 1987). As a result of experiences and individual attitudes, risks are perceived differently from person to person. The theoretical background for incorporating risk perception is confirmation bias, according to which information is selected and interpreted to be consistent with one’s own expectations and beliefs (Lord et al., 1979). Individuals may be more likely to perceive rather gloomy news items as reliable if they are in line with their prior beliefs. Risk perception was measured on a 4-item scale. Subjects were asked, for example: ‘(climate change / COVID-19 / artificial intelligence) represents an issue where I am personally worried’ using a 7-point scale (1 = Strongly disagree, …, 7 = Strongly agree).

Willingness to Think Deliberately

The dual-process theory assumes that the human brain can be hypothetically divided into two systems: System 1, the intuitive/emotional system, and System 2, the reflective/analytical system (Kahneman, 2003; Stanovich & West, 2000). The cognitive reflection test (CRT; originally introduced by Frederick, 2005) aims at measuring whether people are more intuitive or reflective thinkers. The questions of the test are designed in such a manner that a quick (that is, intuitive) answer is often wrong but deliberate thinking is likely to lead to the correct answer. High test scores are associated with reflective thinking, whereas lower scores are associated with more intuitive thinking. Our CRT consisted of the three items from Pennycook et al. (2020) (a re-worded version of Frederick, 2005) and three items from a non-numeric CRT (Thomson & Oppenheimer, 2016). A sample item reads: ‘If you’re running a race and you pass the person in second place, what place are you in?’ An intuitive (but incorrect) answer is ‘first’; the correct answer is ‘second’.

Actively Open-minded Thinking

Human behaviour often exhibits path dependency (Lord et al., 1979). It takes time and effort to process new information. Actively open-minded thinking (AOT) is about the extent to which people consider evidence to adjust their beliefs and actively seek alternative explanations. To measure AOT, we used the 7-item scale of Haran et al. (2013). Here, individuals indicate their agreement on a 7-point Likert scale (1 = Completely disagree to 7 = Completely agree) with statements such as, ‘People should take into consideration evidence that goes against their beliefs.’

Belief in Conspiracy Theories

A conspiracy theory is ‘the idea that a group of people secretly worked together to cause a particular event’ (Macmillan Online Dictionary, 2020). Bruder et al. (2013) argue that conspiracy theories can meaningfully explain political events and societal phenomena. We adopted their 5-item scale, where people are asked to indicate how likely they think various events are on a scale from 0 (0%–certainly not) to 10 (100%–certain). One sample item is: ‘I think that many very important things happen in the world, which the public is never informed about.’

Statistical Numeracy and Risk Literacy

People are exposed to a lot of quantitative information in everyday life. Communicating quantitative information about benefits and risks is only meaningful if people are capable of processing basic mathematical concepts (Gigerenzer et al., 2005). An individual’s statistical numeracy and risk literacy were elicited using the eight items of the following tests: the 4-item Berlin numeracy test (Cokely et al., 2012), the 3-item Schwartz test (Schwartz et al., 1997) and one modified item from Wright et al. (2009). For example, subjects were asked the following question: ‘Out of 1,000 people in a small town 500 are members of a choir. Out of these 500 members in the choir 100 are men. Out of the 500 inhabitants that are not in the choir 300 are men. What is the probability that a randomly drawn man is a member of the choir? Please indicate the probability in percent.’ (correct answer is 25).
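The choir item is a conditional-probability question. The arithmetic behind the stated answer can be checked with a short sketch; the variable names are illustrative and not part of the questionnaire.

```python
# Counts taken from the sample item; variable names are illustrative.
men_in_choir = 100        # men among the 500 choir members
men_not_in_choir = 300    # men among the 500 inhabitants outside the choir

# P(choir | man) = men in the choir / all men in the town
all_men = men_in_choir + men_not_in_choir
p_choir_given_man = men_in_choir / all_men

print(f"{p_choir_given_man:.0%}")  # 25%
```

The intuitive mistake is to divide by the 500 choir members (giving 20%) instead of by the 400 men in the town.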

Trust

Trust is important in social and economic transactions (Fehr, 2010). It helps to establish friendships and reduces transaction costs within companies and organisations (Bromiley & Harris, 2006; Frank, 1988). To elicit trust, individuals were asked how much they trust in democracy, science, mass media and social networks using a 7-point Likert scale, ranging from cannot be trusted at all (=1) to can be trusted a lot (=7).

Political Orientation: Right

The general political attitude reflects, at least in part, the individuals’ perspective toward political measures. In Germany, for example, the right-wing populist political party AfD (Alternative for Germany) stands for criticism (that is, mistrust) of measures taken by the German government. Individuals were asked to state their political attitude on the following scale: ‘In politics people often talk about “left” and “right” to distinguish different attitudes. If you think about your own political views: Where would you place them? Please answer using the following scale.’ (0 ‘entirely left’ to 10 ‘entirely right’).

Other Variables

For further analysis, we asked the subjects about their willingness to vote in the next federal election (1 = No, …, 5 = Yes) and whether they have ever joined demonstrations addressing climate change, COVID-19 or artificial intelligence (0 = Never done or no option, 1 = Either done or can imagine doing so). We also collected data on life satisfaction (0 = Completely dissatisfied, …, 10 = Completely satisfied), age, education (1 = No degree, …, 7 = PhD), and gender (1 = Female, 2 = Male, 3 = Other).

Sampling Procedure and Analytical Approach

Sampling Procedure

Recruitment was carried out online between 2 December 2020 and 8 December 2020 using the online access panel of a professional survey company. This facilitated the generation of a quota-representative sample of the German population with respect to age, gender, and education. It was ensured that the subjects answered anonymously (that is, no link could be drawn between the decision behaviour in the survey experiment and personal data). Subjects were paid between €1.00 and €1.25 for taking part in the survey. A total of 2,039 individuals were invited to take part and 554 of them completed the study. On average, participation lasted 27.5 minutes.

Analytical Approach

Determinants to Explain Susceptibility to Misinformation

Our primary research goal was to find out who is susceptible to misinformation. The subjects were asked to rate the reliability of several statements on a 7-point Likert scale, ranging from very unreliable (1) to very reliable (7). Within each of the three contexts climate change, COVID-19 and artificial intelligence, we presented subjects with 7 statements containing misinformation. To measure susceptibility to misinformation, we calculated an index by aggregating the scores of the answers to the respective items. The higher the score, the more prone the subjects are to misinformation.
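A minimal sketch of how such an index can be built, assuming that "aggregating" means a simple sum of the seven ratings (which is what the stated range of 7 to 49 implies). The function name and the example ratings are hypothetical.

```python
# Hypothetical ratings of one subject for the seven misinformation items
# of a single context (1 = very unreliable, ..., 7 = very reliable).
ratings = [2, 1, 4, 3, 2, 5, 1]

def susceptibility_index(item_ratings):
    """Sum the 7 item ratings; the result lies between 7 and 49."""
    if len(item_ratings) != 7:
        raise ValueError("expected exactly 7 misinformation items")
    if any(r < 1 or r > 7 for r in item_ratings):
        raise ValueError("ratings must lie on the 1-7 scale")
    return sum(item_ratings)

index = susceptibility_index(ratings)
print(index)  # 18
```

A subject who rates every false item as very unreliable scores 7; one who rates every false item as very reliable scores 49.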

Since the dependent variables can take values from 7 to 49, we run ordinary least squares (OLS) linear regressions. In the regression analysis, we use scales with different numbers of items. As a consequence, we provide standardised beta coefficients to make the constructs comparable. A Breusch-Pagan test rejects the null hypothesis of homoscedasticity in the three OLS linear regressions to explain the susceptibility to misinformation in the contexts of climate change (p-value = .0004), COVID-19 (p-value < .0001) and artificial intelligence (p-value = .0027). Therefore, heteroscedasticity consistent standard errors are used in the linear regression model.
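To illustrate what a standardised beta coefficient is, the following sketch rescales an unstandardised OLS slope by the ratio of the standard deviations of predictor and outcome. It is deliberately simplified to a single predictor and uses hypothetical data; the article's regressions contain many determinants, and the same rescaling would apply to each coefficient there.

```python
from math import sqrt

def mean(xs):
    return sum(xs) / len(xs)

def sd(xs):
    """Sample standard deviation (n - 1 in the denominator)."""
    m = mean(xs)
    return sqrt(sum((x - m) ** 2 for x in xs) / (len(xs) - 1))

def ols_slope(x, y):
    """Unstandardised OLS slope of y on a single predictor x."""
    mx, my = mean(x), mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    return sxy / sxx

# Hypothetical data: a trust score (1-7) and the misinformation index (7-49).
trust = [1, 2, 3, 4, 5, 6, 7, 4]
index = [12, 15, 14, 20, 24, 22, 30, 19]

b_raw = ols_slope(trust, index)
beta_std = b_raw * sd(trust) / sd(index)  # slope expressed in SD units
print(round(b_raw, 3), round(beta_std, 3))
```

Because the standardised coefficient is expressed in standard-deviation units, scales with different numbers of items become comparable. In the single-predictor case it coincides with the Pearson correlation.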

As a robustness check, we provide zero-order correlations between the susceptibility to misinformation and determinants resorting to Pearson correlation coefficients. Moreover, we show the results of count data models (cf., Appendix 4, Supplemental material). Since there is considerable overdispersion, we do not interpret Poisson regression models but depict the marginal effects of negative binomial regression models (Cameron & Trivedi, 2009; Long & Freese, 2014).
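A rough way to see why a Poisson model may be a poor fit is to compare variance and mean, since a Poisson variable has a variance equal to its mean. The sketch below uses the means and standard deviations of the index reported in the Empirical Findings section. It is only a marginal check under that simplification and not the model-based assessment of overdispersion used for the count data models.

```python
# Means and standard deviations of the index as reported in the article.
reported = {
    "climate change": (22.28, 8.52),
    "COVID-19": (19.02, 8.99),
    "artificial intelligence": (22.83, 6.93),
}

def dispersion_ratio(mean, sd):
    """Variance-to-mean ratio; a Poisson variable has a ratio of 1."""
    return sd ** 2 / mean

for context, (m, s) in reported.items():
    print(f"{context}: {dispersion_ratio(m, s):.2f}")
```

In all three contexts the variance is a multiple of the mean, which is in line with the decision to report negative binomial rather than Poisson results.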

Monological Belief System

We calculate zero-order correlations (Pearson correlation coefficients) of the statements that contain misinformation within all contexts. To deal with multiple comparisons, we provide Bonferroni adjusted p-values. We also show the 95% confidence intervals for Pearson’s product-moment correlation. In addition, we conduct a between context analysis: the correlations of the aggregated susceptibility to misinformation between the three contexts are depicted. Here, we also correct for multiple comparisons (Bonferroni) and display confidence intervals.
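The Bonferroni adjustment can be sketched as follows. The article does not state how many comparisons enter each family, so the pair count below only shows how many pairwise correlations seven statements produce; the p-values are hypothetical.

```python
from math import comb

def bonferroni(p_values):
    """Multiply each p-value by the number of tests and cap at 1."""
    m = len(p_values)
    return [min(1.0, p * m) for p in p_values]

# Seven statements per context give "7 choose 2" pairwise correlations.
n_pairs = comb(7, 2)
print(n_pairs)  # 21

# Hypothetical unadjusted p-values, for illustration only.
raw = [0.0004, 0.01, 0.03]
print(bonferroni(raw))
```

The adjustment is conservative: with 21 comparisons, an unadjusted p-value has to fall below roughly .0024 to stay below .05 after correction.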

Implications of the Susceptibility to Misinformation

The zero-order correlations (Pearson correlation coefficients) between the susceptibility to misinformation and the individuals’ willingness to vote are calculated for each context. P-values are Bonferroni corrected. The correlations between the willingness to demonstrate (yes = 1, no = 0) and the susceptibility to misinformation within each context are calculated with the point biserial correlation coefficient (which is a true Pearson product-moment correlation). P-values are also Bonferroni corrected.
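The remark that the point biserial coefficient is a true Pearson correlation can be checked numerically. The sketch below computes both on hypothetical data, using the textbook point biserial formula on one side and the ordinary Pearson formula on the other.

```python
from math import sqrt

def pearson(x, y):
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / sqrt(sxx * syy)

def point_biserial(binary, y):
    """Textbook formula: (M1 - M0) / s_n * sqrt(p * q)."""
    n = len(y)
    g1 = [v for b, v in zip(binary, y) if b == 1]
    g0 = [v for b, v in zip(binary, y) if b == 0]
    m1, m0 = sum(g1) / len(g1), sum(g0) / len(g0)
    my = sum(y) / n
    s_n = sqrt(sum((v - my) ** 2 for v in y) / n)  # population SD
    p = len(g1) / n
    return (m1 - m0) / s_n * sqrt(p * (1 - p))

# Hypothetical data: willingness to demonstrate (1/0) and index (7-49).
demo = [1, 0, 0, 1, 1, 0, 0, 1]
index = [14, 25, 30, 12, 18, 27, 22, 16]

print(round(pearson(demo, index), 6), round(point_biserial(demo, index), 6))
```

Both functions return the same value, so the binary demonstration variable can be handled with the same correlation routine as the other variables.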

Description of the Subjects

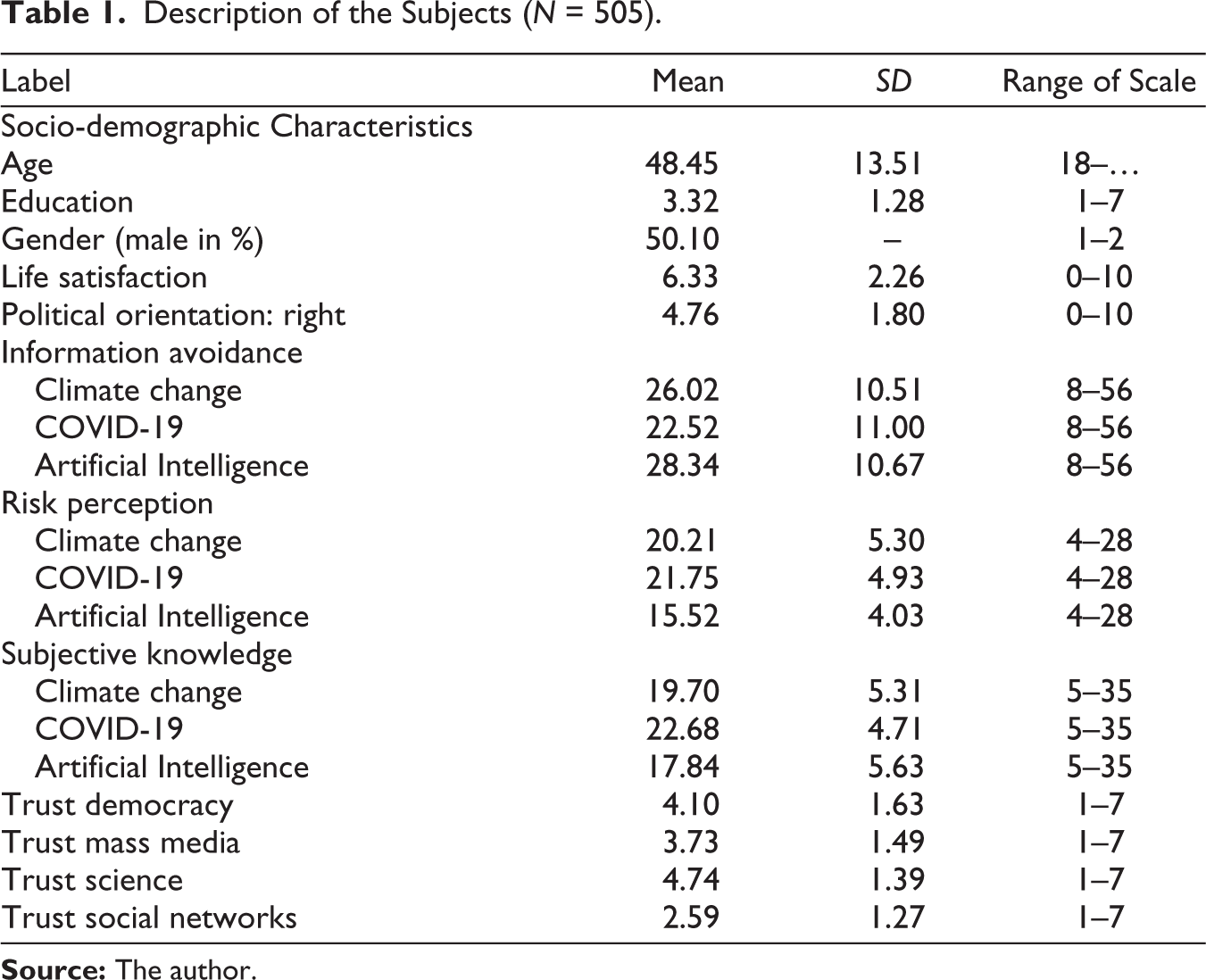

After the survey company had dropped speeders and individuals who gave implausible answers, a total of 507 subjects (252 women, 253 men, 2 other) remained for data analysis (cf., Table 1). Since only two subjects identified themselves as ‘other’, we dropped them from the sample to make the gender variable dichotomous, which simplifies the analysis and leaves 505 subjects. On average, the subjects are 48.45 years old and roughly one-third of them have at least a university entrance qualification (‘Abitur’). On an 11-point scale (0 = Completely dissatisfied, …, 10 = Completely satisfied), subjects indicated an average life satisfaction of 6.33; their average political attitude was 4.76 (0 = Entirely left, …, 10 = Entirely right). The tendency to avoid information is most pronounced for the contexts of artificial intelligence and climate change but relatively low for COVID-19. On average, the subjects indicate a high level of risk perception in the contexts of COVID-19 and climate change. In contrast, artificial intelligence is not perceived as a major concern. Subjects rated their subjective knowledge highest for COVID-19. The perceived knowledge is lower in the area of climate change and lowest in artificial intelligence. In addition, subjects indicated a relatively high level of trust in science and democracy but less trust in the mass media and social networks.

Table 1. Description of the Subjects (N = 505)

Empirical Findings

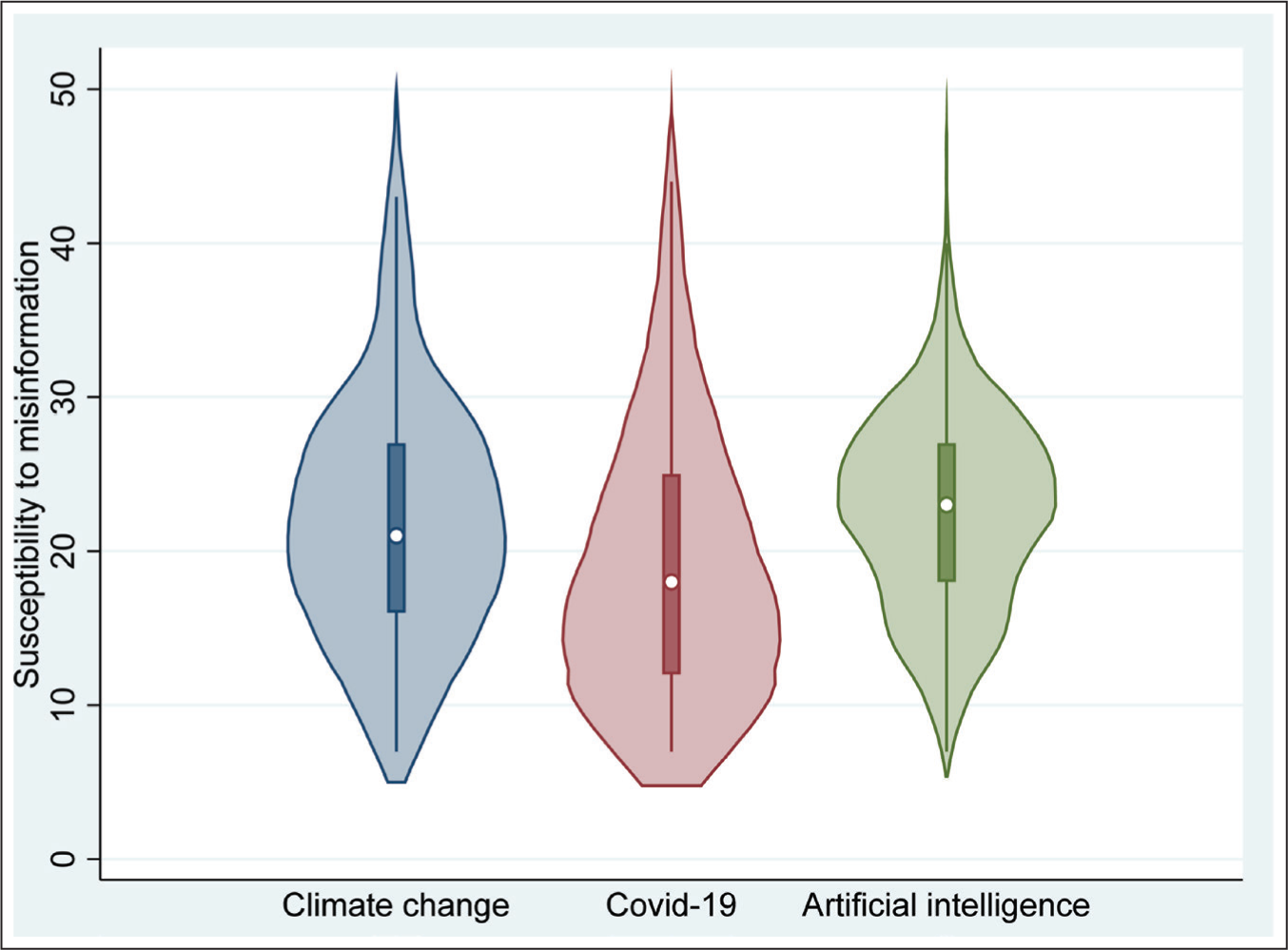

The index ‘susceptibility to misinformation’ can take values from 7 to 49, with a higher value indicating more susceptibility to false information. On average, subjects were slightly more susceptible to misinformation in the fields of artificial intelligence (M = 22.83, SD = 6.93) and climate change (M = 22.28, SD = 8.52) than in COVID-19 (M = 19.02, SD = 8.99). Figure 1 illustrates the distribution of the index for each context with the help of violin plots, which can broadly be considered a combination of a box plot and a kernel density plot. The median is indicated in the respective violin plots by a white dot: it is 21 (climate change), 18 (COVID-19) and 23 (artificial intelligence). The bar in the centre of each violin plot depicts the interquartile range (IQR), a measure of variability defined as the difference between the upper and lower quartiles (that is, the spread of the middle 50% of the data). We get the following differences in the respective contexts: climate change (27 − 16 = 11), COVID-19 (25 − 12 = 13) and artificial intelligence (27 − 18 = 9). The lines stretching from the bar end at the lower and upper adjacent values, that is, the most extreme observations that still lie within first quartile − 1.5 × IQR and third quartile + 1.5 × IQR: climate change (7; 43), COVID-19 (7; 44) and artificial intelligence (7; 40).
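The IQR and the 1.5 × IQR limits can be recomputed from the reported quartiles. This is a sketch under the usual box-plot convention that adjacent values are the most extreme observations inside those limits; the function name is illustrative.

```python
def tukey_fences(q1, q3):
    """Return the IQR and the 1.5 * IQR fences around the quartiles."""
    iqr = q3 - q1
    return iqr, q1 - 1.5 * iqr, q3 + 1.5 * iqr

# Quartiles as reported in the text.
quartiles = {
    "climate change": (16, 27),
    "COVID-19": (12, 25),
    "artificial intelligence": (18, 27),
}

for context, (q1, q3) in quartiles.items():
    iqr, lower, upper = tukey_fences(q1, q3)
    # No score can fall below 7, so the lower whisker cannot extend past 7.
    print(context, iqr, max(lower, 7), upper)
```

The upper fences are 43.5, 44.5 and 40.5. Since the index only takes whole numbers, the largest scores that can lie inside them are 43, 44 and 40, which matches the reported upper adjacent values.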

Determinants to Explain Susceptibility to Misinformation

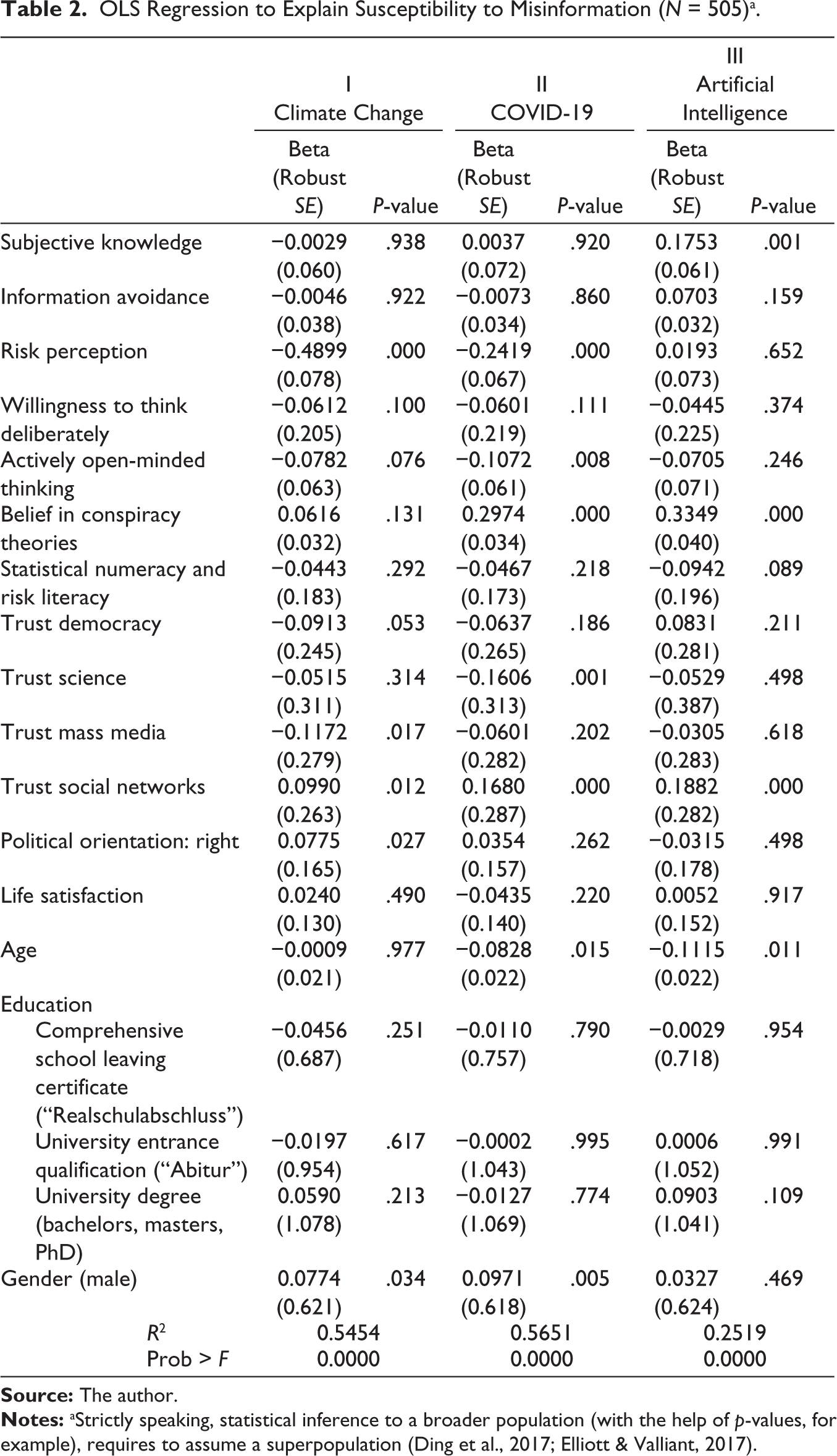

The econometric regressions to explain the association between susceptibility to misinformation and various determinants are depicted in Table 2.

Table 2. OLS Regression to Explain Susceptibility to Misinformation (N = 505)

Subjective knowledge does not seem to matter in the contexts of climate change (b = −0.0029, p-value = .938) and COVID-19 (b = 0.0037, p-value = .920). While the study was being carried out, both contexts dominated the media’s coverage. It is likely that people have already thought about them and formed beliefs. For artificial intelligence, we find a positive association between subjective knowledge and falling for misinformation (b = 0.1753, p-value < .01). Artificial intelligence is rarely mentioned in everyday life, but it is sometimes part of science fiction: insofar as individuals mix up the source where they have read or heard something, less reliable sources can become important (that is, source confusion).

Relatively low standardised beta coefficients and high p-values (panel I and panel II > .8; panel III > .1) indicate that information avoidance appears to be negligible when it comes to explaining susceptibility to misinformation. People might avoid information related to the contexts of climate change and COVID-19 since both are about negative consequences. However, facts about climate change are relatively robust, so ignoring some information does not necessarily lead to a loss of knowledge. In addition, during the COVID-19 pandemic, people were frequently exposed to new information, and news coverage was so extensive and repetitive that it was hard to run out of information on the topic. Artificial intelligence was not a big topic in the media when this study was carried out. Thus, it seems plausible that information avoidance cannot explain much with regard to our research focus.

There is a substantial association between risk perception and susceptibility to misinformation in the contexts of climate change (b = −0.4899, p-value < .001) and COVID-19 (b = −0.2419, p-value < .001). However, there does not seem to be such an effect for artificial intelligence (b = 0.0193, p-value = .652). These findings may reflect, in part, structural differences across the contexts. The public discourse on climate change and COVID-19 was foremost about averting negative consequences (for example, risks and hazards) for society. For example, if people do not consider COVID-19 to be a problem, then they might regard policy measures as a waste of resources and an unwarranted restriction of civil liberties. In contrast, the field of artificial intelligence is not that familiar to people. Both negative and positive consequences are possible and it is hard to predict how things will develop in the future.

Subjects’ willingness to think deliberately and actively open-minded thinking are to some extent negatively related to falling for misinformation. The standardised beta coefficients of actively open-minded thinking are larger in magnitude than those for the willingness to think deliberately within the contexts of climate change (b = −0.0782, p-value = .076 vs b = −0.0612, p-value = .100) and COVID-19 (b = −0.1072, p-value = .008 vs b = −0.061, p-value = .111). The manner of thinking seems to play a subordinate role in explaining susceptibility to misinformation in the context of artificial intelligence (p-values > .2).

Belief in conspiracy theories seems to be an important determinant of susceptibility to misinformation in the realm of COVID-19 (b = 0.2974, p-value < .001) and artificial intelligence (b = 0.3349, p-value < .001). The coefficient for climate change is positive but smaller (b = 0.0616) and the p-value is relatively high (p-value = .131). Conspiracy theories might play a more important role in contexts where things are uncertain. In December 2020, the future course of COVID-19 could only be anticipated with a great deal of uncertainty, which fuels conspiracy theories. With artificial intelligence, future developments are also difficult to predict. Facts and action plans related to climate change were relatively stable.

Somewhat surprisingly, statistical numeracy and risk literacy seem to protect against misinformation only to a limited extent. In all three regressions, the p-values are quite large (climate change: .292; COVID-19: .218 and artificial intelligence: .089). People often do not have to interpret probabilities and other numbers themselves but are given interpretations by institutions and politicians, which raises the question of the relevance of trust.

Let us take a look at trust in democracy, science and mass media. These variables do not seem to protect much against the susceptibility to misinformation in the field of artificial intelligence (p-values > .2). Trust in democracy (b = −0.0913, p-value = .053) and trust in mass media (b = −0.1172, p-value = .017) are negatively associated with falling for misinformation in the realm of climate change. Trust in science (b = −0.1606, p-value = .001) seems to be an important protective factor against COVID-19-related misinformation. A possible explanation for this is that the content of climate change-related news is quite robust over time (that is, knowledge is to some extent given). In contrast, the COVID-19 pandemic is associated with developing vaccines and other measures to protect against the virus, which were often suggested by scientists. In all three contexts, trust in social networks is positively associated with falling for misinformation (b ≥ 0.099, p-value < .05). On social networks, virtually anyone can create and share content according to their own preferences, partly because posts are not verified (that is, there is no gatekeeper). Individuals who trust social networks may be less critical of information.

Subjects who tend to be politically right-oriented are more susceptible to misinformation in the context of climate change (b = 0.0775, p-value = .027). The weak association between political orientation (right) and falling for misinformation in the context of COVID-19 (b = 0.0354, p-value = .262) is somewhat surprising. However, if we run an alternative regression without controlling for the three trust variables democracy, science and mass media, the association of political orientation becomes larger (b = 0.567; p-value = .099), indicating that there is some correlation between trust and political orientation. In Germany, the right-wing populist political party Alternative for Germany stands for mistrust of measures taken by the German government. Political orientation does not seem to be relevant in the context of artificial intelligence (b = −0.0315, p-value = .498).

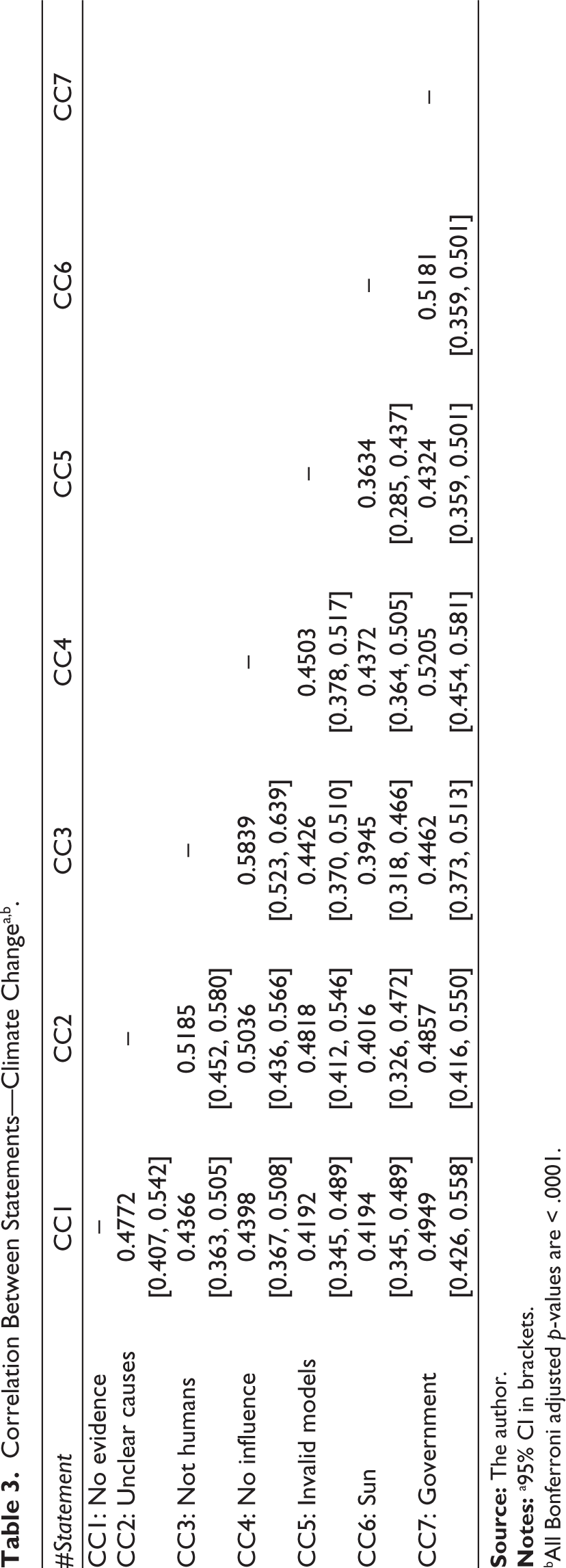

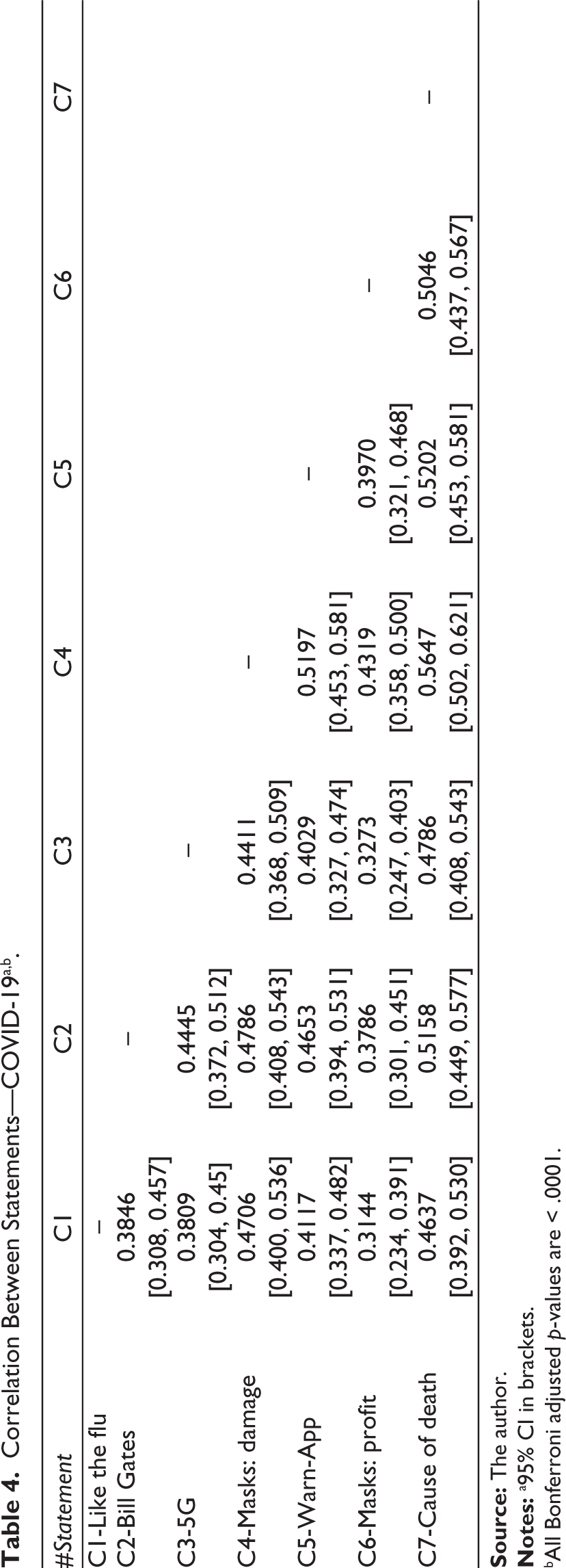

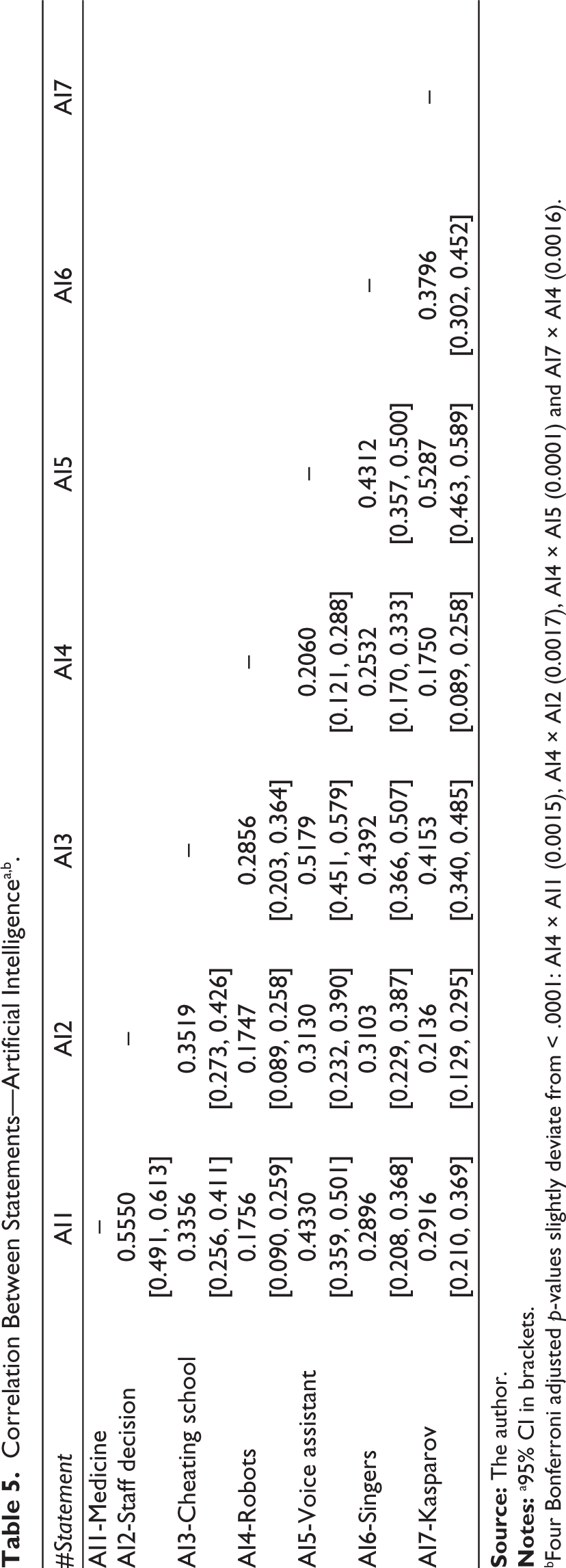

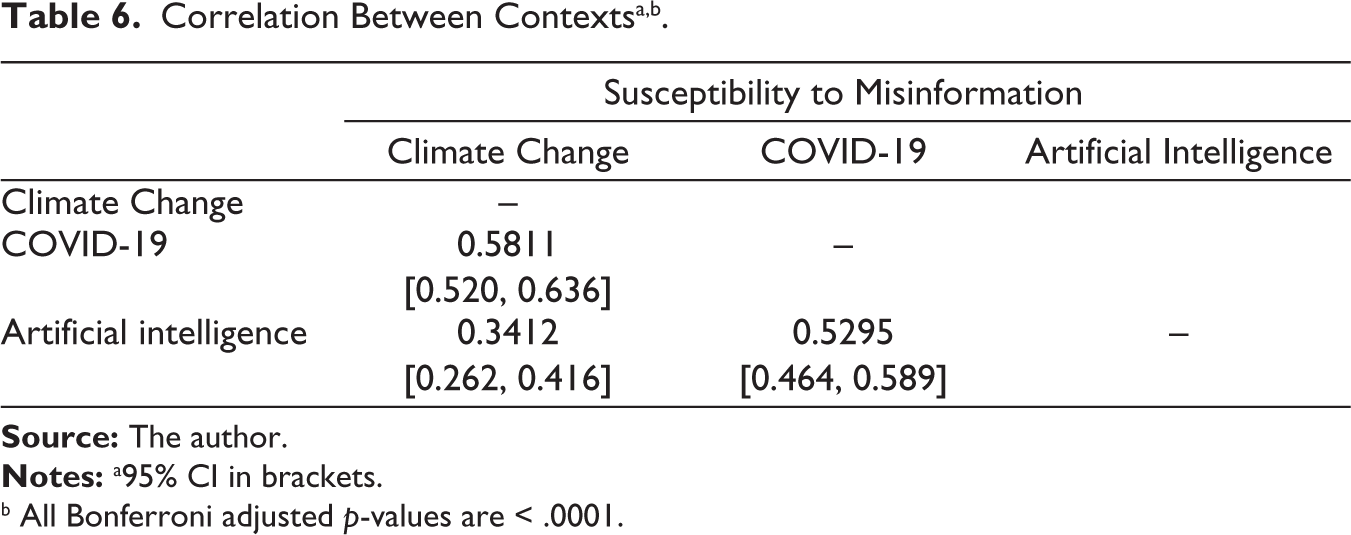

Monological Belief System

The magnitude of the correlations between the statements within the respective contexts can be described as moderate. In other words, there is evidence for monological belief systems (that is, being susceptible to one false news statement has predictive power for the other false news statements), which seems, however, more pronounced for climate change and COVID-19 than for artificial intelligence (cf., Tables 3–5). Individuals may differ in their general ability to identify false news. As a consequence, they do not fall for just one but for several false news statements (that is, general susceptibility to misinformation). This argument is supported by the considerable correlation of the susceptibility to misinformation between contexts (cf., Table 6). However, this explanation does not account for context-specific differences.

Table 3. Correlation Between Statements: Climate Change

Note: All Bonferroni adjusted p-values are < .0001.

Table 4. Correlation Between Statements: COVID-19

Note: All Bonferroni adjusted p-values are < .0001.

Table 5. Correlation Between Statements: Artificial Intelligence

Note: Four Bonferroni adjusted p-values deviate slightly from < .0001: AI4 × AI1 (0.0015), AI4 × AI2 (0.0017), AI4 × AI5 (0.0001) and AI7 × AI4 (0.0016).

Table 6. Correlation Between Contexts

Note: All Bonferroni adjusted p-values are < .0001.

Context-specific differences could be explained by the concept of narratives (Shiller, 2019). In contrast to what rational choice theory assumes, individuals often do not think in terms of equations but in stories. Let us presume, for illustrative purposes, that COVID-19 is not perceived to be a problem. As a consequence, policy measures to mitigate COVID-19 may be viewed critically, including the use of masks and social distancing. Similar to a spider’s web, many aspects are interconnected with each other. In other words, stories are bigger than simple statements. Therefore, it is no surprise that the susceptibility to misinformation in one statement is considerably related to the susceptibility to misinformation in other statements. It should be noted that confirmation bias seems to have relevance here, that is, people trust statements as long as they are in line with prior beliefs. Artificial intelligence receives relatively little public attention (compared to climate change and COVID-19). It is conceivable that many people have not formed a clear opinion on the subject (no prior beliefs), which may cause context differences. In addition, context differences may arise because artificial intelligence is structurally different: both benefits and drawbacks are possible, whereas climate change and COVID-19 are about avoiding harm.

Implications of the Susceptibility to Misinformation

Intention to Vote

There is a small, negative association between being susceptible to misinformation and the willingness to vote across all contexts: climate change (r = −0.1661, p-value = .0011, 95% CI = −0.250 to −0.080), COVID-19 (r = −0.2638, p-value < .0001, 95% CI = −0.343 to −0.181) and artificial intelligence (r = −0.1658, p-value = .0011, 95% CI = −0.249 to −0.080). This finding has economic consequences: people who fall for false news stories seem to be less likely to spread biased beliefs via voting.
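To make the reported confidence intervals easier to interpret, the following sketch computes a 95% interval for a Pearson correlation with the Fisher z-transformation, a standard approach. It is not confirmed that the intervals above were obtained this way. The sample size is not stated in this section; the value `ASSUMED_N = 505` is an assumption, chosen only because it yields intervals consistent with the reported ones.

```python
import math
from statistics import NormalDist


def pearson_ci(r, n, level=0.95):
    """Confidence interval for a Pearson correlation via Fisher's z."""
    z = math.atanh(r)                    # Fisher z-transformation of r
    se = 1.0 / math.sqrt(n - 3)          # approximate standard error of z
    crit = NormalDist().inv_cdf(0.5 + level / 2.0)  # about 1.96 for 95%
    # Back-transform the interval limits to the correlation scale.
    return math.tanh(z - crit * se), math.tanh(z + crit * se)


ASSUMED_N = 505  # assumption: not stated in the text of this section

# Correlations as reported above (susceptibility vs. intention to vote).
reported_r = {
    "climate change": -0.1661,
    "COVID-19": -0.2638,
    "artificial intelligence": -0.1658,
}

for context, r in reported_r.items():
    lo, hi = pearson_ci(r, ASSUMED_N)
    print(f"{context}: r = {r:.4f}, 95% CI = {lo:.3f} to {hi:.3f}")
```

Because the interval is built on the z scale and then transformed back, it is not exactly symmetric around r, which matches the slightly asymmetric limits reported above.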

Activity in Demonstrations

There is a negative association between being prone to climate change-related misinformation and the tendency to attend demonstrations on climate change (r = −0.2878, t = −6.7392, p-value < .0001). The association is positive in the contexts of COVID-19 (r = 0.2615, t = 6.0766, p-value < .0001) and artificial intelligence (r = 0.1311, t = 2.9657, p-value = .0096). In other words, people who believe in misinformation tend to stay at home when the topic is climate change and are more active when it comes to COVID-19.
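The relation between the reported r and t values can be sketched as follows, assuming that t is the usual test statistic for a Pearson correlation, t = r·sqrt(n − 2)/sqrt(1 − r²), with n − 2 degrees of freedom. The function names are hypothetical. Solving the same formula for the degrees of freedom shows which sample size the reported pairs imply; this is a back-calculation under the stated assumption, not a figure taken from the article.

```python
import math


def t_from_r(r, n):
    """t statistic for testing a Pearson correlation against zero."""
    return r * math.sqrt(n - 2) / math.sqrt(1.0 - r * r)


def implied_df(r, t):
    """Degrees of freedom (n - 2) implied by a reported (r, t) pair."""
    return t * t * (1.0 - r * r) / (r * r)


# (r, t) pairs as reported above for attendance at demonstrations.
reported = {
    "climate change": (-0.2878, -6.7392),
    "COVID-19": (0.2615, 6.0766),
    "artificial intelligence": (0.1311, 2.9657),
}

for context, (r, t) in reported.items():
    df = implied_df(r, t)
    print(f"{context}: implied df = {df:.1f}, implied n = {round(df) + 2}")
```

If all three pairs imply roughly the same degrees of freedom, the reported statistics are internally consistent with a single sample size under this assumption.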

Conclusion

Competition of ideas (for example, about politics or products) constitutes a foundation of democratic processes. To adequately evaluate courses of action, it is crucial that people do not fall for misinformation. For example, if people are susceptible to corona-related misinformation, they may refuse to wear masks or to practise social distancing. This has immediate health implications for people but also consequences for the economy if lockdowns become necessary because rules have not been followed. Recent studies have found evidence for a monological belief system (that is, people who fall for one false news item are also prone to fall for other false news stories). Analysing misinformation in the realm of climate change, COVID-19 and artificial intelligence, we can replicate such an effect.

Accounting for the mechanisms by which people obtain and evaluate information is the precondition for better understanding their susceptibility to false information and the resulting ill-guided choices. This, in turn, is the prerequisite for meaningful normative deliberations on how to mitigate people's susceptibility to false information and its potentially harmful consequences. To contribute to this debate, the main focus of this article was on the question of whether falling for misinformation is context-dependent. Context-dependency means that different determinants might be important in different situations. While this study was being carried out, our contexts of interest differed in many aspects. For example, the public discourse on COVID-19 and climate change was about avoiding harm, whereas people in Germany also saw some potential in artificial intelligence. The threats of climate change were well known, but media coverage was dominated by COVID-19 and the uncertainties about its future development. Artificial intelligence received little public attention. Our analysis has shown that there are some similarities among the contexts (for example, the relevance of social networks) but that the vast majority of determinants are somewhat context-dependent. Further research is necessary to analyse similarities and differences between contexts (and their associated narratives) more systematically.

Supplemental Material

Supplemental material for this article is available online.

Footnotes

Acknowledgements

I declare that there are no relevant or material financial interests that relate to the research described in this article. I further acknowledge the valuable comments and suggestions of the anonymous referees and Prabir Bhattacharya, the editor of the Journal of Interdisciplinary Economics.

Declaration of Conflicting Interests

The author declared no potential conflicts of interest with respect to the research, authorship and/or publication of this article.

Funding

The author disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: The financial support of the Deutsche Forschungsgemeinschaft (DFG, German Research Foundation—388911356) is gratefully acknowledged.