Abstract

In a world beset with crises, everyone from the professional manager to the layperson is keen to understand how to make quick decisions when disruptive situations emerge. Employing self-reflective analysis of critical incidents, this article describes the author's personal experiences of high-risk, critical decisions involving split-second choices in adventure sports, namely skydiving and flying, to trace the cognitive processes at work from the decision maker's perspective. These experiences are used to discuss and establish an operational framework for practitioners facing high-risk, critical decision scenarios, drawing on established theories and frameworks from cognitive, social and psychological decision research.


The world today is beset with crises, which have become the norm rather than the exception. Consider how events since 2020 have disrupted our lives. Managers have long spoken of VUCA, short for volatility, uncertainty, complexity, and ambiguity, a term that treats crises as an ongoing feature of business. The pertinent question managers dwell upon, then, is how to face these crises. In looking for solutions, many people are curious to know how certain classes of professionals, especially experts, make split-second, critical decisions on which lives may sometimes depend. While split-second choices are made all around us, for example in hospitals, on roads and in our daily routines, there is a special lure to how high-risk adventure sportspeople face the critical situations they confront. This paper reflects on such split-second choices in a specific critical context from a practitioner's perspective. It seeks to draw out lessons that may be of interest and use in the wider professional context in today's world.

Influential organizational scholar Karl Weick and colleagues write:

Sport also evokes images, and the reality, of living at the edge… Sport performance tests the edge. Although there are exceptions (e.g., emergency medicine, firefighting, turnarounds forestalling bankruptcy), working at the edge is relatively rare in nonsport organizations, though common within sport. What can we learn about organizational success by studying organizations that work at the edge? Dutton (2003) argued that we need to breathe life into organizational studies. The imagery that is evoked in a sport context may facilitate achievement of this goal.

1

(Wolfe et al., 2005, p. 205)

BACKGROUND AND PAST RESEARCH

To set the context, I briefly outline the key research streams that inform this article's arguments. The study of judgement and decision-making, as we know it today, can be traced back to work from the late 1930s onwards (Barnard, 1938; March & Simon, 1958; Neumann & Morgenstern, 1944; Simon, 1955), through seminal advances made in the 1970s and later (Kahneman & Tversky, 1979, 1984; March, 1997; Mintzberg, 1973; Tversky & Kahneman, 1973; Weick, 1979/1969; Weick et al., 1999). Currently, decision-making is studied mainly within cognitive and social psychology, in terms of human information processing (Koehler & Harvey, 2004, as cited in Bar-Eli & Raab, 2006). Behavioural decision theory (Kahneman, 2011; Sunstein & Thaler, 2008; Tversky & Kahneman, 1981) examines how human decisions depart from normative expectations, identifying flaws in human decision-making, their possible causes and the key cognitive limitations behind them. Awareness of these biases is expected to lead to better decisions (Kahneman, 2011; Milkman et al., 2009). However, this school offers little direct, usable guidance for mitigating crises. Instead, we can find useful indicators in the work of Weick and colleagues on the social psychology of organizing (Weick, 1979/1969) and on sensemaking and mindfulness (Weick, 1993; Weick et al., 1999). Over the years, Weick's work has examined many crises and disasters, seeking to unravel what happened and why, and thereby offering novel perspectives on these failures and what to do about them (Weick, 1987, 1988, 1993, 1996; Weick et al., 1999). Researchers have applied similar frames to gain insights into other seemingly inexplicable accidents, for example, the 1994 incident

during peace-keeping operations after the Gulf War on April 14, 1994, when two US Air Force F-15 fighters shot down two US Army helicopters as a crew of 19 AWACS (Airborne Warning and Control System) air traffic controllers in charge of those four aircraft looked on. (Weick’s (2001) review of Snook (2000))

We also draw on two contrasting schools of thought, normal accident theory (NAT) and high-reliability theory (HRT), whose key concerns show both convergence and divergence (Perrow, 1984; Rijpma, 1997; Weick, 1979/1969, 1993; Weick et al., 1999).

One line of thinking that holds promise for understanding this context is fast and frugal heuristics (Gigerenzer et al., 1999), which have been used to explain, among other things, some routinely performed but computationally complex activities on the sports field, such as catching a ball, avoiding a collision course and predicting the winner (Bennis & Pachur, 2006; Marewski et al., 2010). Since this class of heuristics emerges from experience in the domain, such heuristics are more likely to apply to situations normally encountered, that is, within the envelope of one's experience. There is a need to investigate whether and how this concept applies to certain high-risk decision scenarios.
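To make the idea of a fast and frugal heuristic concrete, the sketch below implements take-the-best, one of the heuristics studied by Gigerenzer and colleagues, for the "predicting the winner" task mentioned above. It is a minimal illustration, not a model used in this article: the cue names, their validity ordering and the player data are hypothetical. The heuristic checks cues one at a time in order of validity and stops at the first cue that tells the two options apart, ignoring all remaining information.

```python
from typing import Dict, List, Optional

# Hypothetical binary cues, listed from most to least valid (illustrative ordering only).
CUES: List[str] = ["recognized", "seeded", "home_advantage"]


def take_the_best(a: Dict[str, bool], b: Dict[str, bool], cues: List[str]) -> Optional[str]:
    """Predict which of two options scores higher using take-the-best.

    Cues are searched in order of validity; the first cue on which the two
    options differ decides, and no further cues are consulted.
    Returns "a" or "b", or None if no cue discriminates (the heuristic
    would then fall back to guessing).
    """
    for cue in cues:
        if a[cue] != b[cue]:
            # Stopping rule: one discriminating cue is enough to decide.
            return "a" if a[cue] else "b"
    return None


# Usage with made-up players: both are recognized, so "seeded" decides.
player_a = {"recognized": True, "seeded": False, "home_advantage": True}
player_b = {"recognized": True, "seeded": True, "home_advantage": False}
print(take_the_best(player_a, player_b, CUES))  # prints: b
```

Player A's home advantage is never consulted: take-the-best is non-compensatory, which is what makes it fast and frugal. One reading is that this also ties its reliability to the experience that produced the cue ordering, in line with the envelope-of-experience point above.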

The context of sports has been of interest to management and organizational scholars. Wolfe et al. (2005, p. 183) investigate

how studying within the context of sport can contribute to an understanding of management and of organizations with a focus on how such contribution can be achieved with creative and innovative research approaches… We attempt to push the envelope by suggesting how organizational research might benefit by using sport as a context in ways not yet evident in the literature.

In their editorial review of the sport psychology literature for the special issue 'Judgment and decision-making in sport and exercise: Rediscovery and new visions', Bar-Eli and Raab (2006) list the initial publications on cognitive sport psychology, namely Straub and Williams' (1984) collection of theoretical and applied book chapters; a special issue of the International Journal of Sport Psychology on information processing and decision-making in sport, edited by Ripoll (1991); and Singer et al.'s (1993) handbook of research on sport psychology. Tenenbaum and Bar-Eli's (1993) review of the cognitive processes in judgement and decision-making (JDM) summarizes the state of research in sports to support the view that decision-making comprises perceptual-cognitive, emotional, social and developmental properties, and calls for designs that account for their integrative-interactive impact on the nature of decision-making in competitive situations. Recent research on sport-specific decision-making has focused more on individual cognitive processes, viewed within their ecological context (Araujo et al., 2006; Johnson, 2006). The general view, however, seems to be that individuals' understanding of, and responses to, a dynamic context with multiple variables can produce complex decision-making behaviours that need to be studied accordingly (Araujo et al., 2006; Johnson, 2006).

With regard to parachute jumping and skydiving, the context investigated in this article, a few studies by practitioners from the field report on these adventure pursuits, namely Hart and Griffith (2003), Laurendeau (2006) and Vidovic and Rugai (2007). These papers examine skydiving accidents that occurred while performing highly skilled manoeuvres, in other words, while operating at the edge. With the advent of high-performance parachutes, the risk of accidents appears to stem from more dangerous manoeuvres performed at higher speeds and closer to the ground, and from loss of control while operating at the so-called edge of performance (Hart & Griffith, 2003; Laurendeau, 2006; Vidovic & Rugai, 2007). Burke (2013a, 2013b) stresses the need for 'appropriate quality and level of experience' before attempting more sophisticated manoeuvres, asks who is to judge how much exposure is enough before attempting something difficult, and calls on professional bodies and mentors to exercise judgement and control in this critical sphere.

OBJECTIVE AND METHOD

This article aims to analyse the critical experiences of an adventure sportsperson, to draw out some parallels and to learn from the split-second choices that may need to be made during such pursuits. To move from the personal to wider generalization, I apply reflective analysis shaped by the culture and norms of the wider adventure community, absorbed through my own experiences and personal interactions within that community (the operational environment of the military services) over a decade and a half. Functional social and organizational norms prevailing within specialized professional communities, studied through autoethnographic accounts of incidents and experiences within these communities, can provide useful insights into the larger context (Rowe et al., 2011). Rowe et al. (2011) discuss the advantages and challenges of autoethnography as a robust research method, stating, 'The autoethnographic story is not, therefore, merely a tale. It provides access to data otherwise not available and represents a "provocative weave of story and theory" (Cohen et al., 2009, p. 233) that potentially can be generalised to others' experiences and to theory.'

I first describe certain critical incidents (Flanagan, 1954; Lipshitz et al., 2001) that I encountered in my operational adventure experience while engaged in these extreme sports, and then apply self-reflective analysis to draw out my learning, mediated by the sensemaking process (Weick, 1979/1969, 1993; Weick et al., 1999), across these experiences. I conclude with some takeaways from these high-risk, critical decision moments for working professionals in other domains. In the discussion section, I also relate these experiences and this learning to currently prevalent theories, deconstructing those theories for practitioners and sketching an example of how some of these novel, abstract but important concepts can be applied at the practitioners' level.

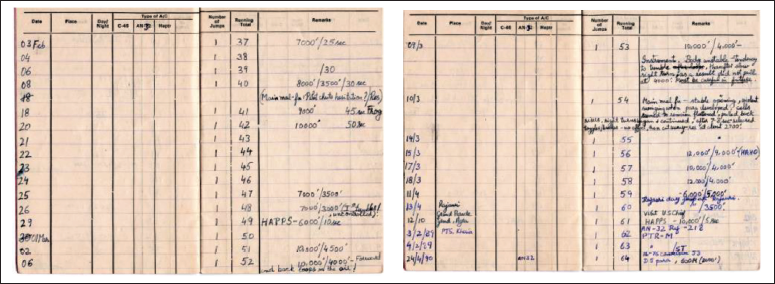

I was introduced to adventure while serving in the Army's Parachute Regiment. As a young, enthusiastic person, one wants to try out all the exciting things in the world and, at that stage, is not scared of the risks involved. Yet alongside the adrenalin rush, there was also some hard thinking on my part during the adventure pursuits I describe here (see, e.g., Figure 1 for the brief notes I took while participating in these activities).

Figure 1. Extracts from Jump Log-book, with Learning Experiences Noted Alongside.

One obvious challenge and limitation of such autoethnographic accounts of specialized incidents and experiences is the testability of the propositions the narrator draws from them. In support of some of the key learning I draw from the experiences narrated, I propose the last incident, Parachute Jump after Recovery, as akin to a validation trial (or 'experiment'). The inherent risks were very much present in this controlled experiment, so it can be viewed, in a sense, as support for the thought processes underlying the described experiences and my reflection on them.

PARACHUTE JUMPS, SKYDIVING AND CANOPY MALFUNCTION EMERGENCIES

In this section, I briefly describe five critical incidents (as mentioned above), including key related aspects, on which I base my further reflection and analysis.

Initiation and Overview (A) 2

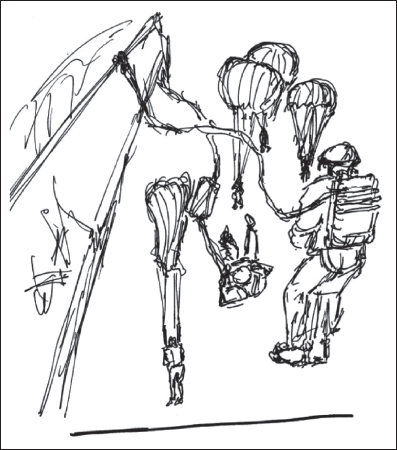

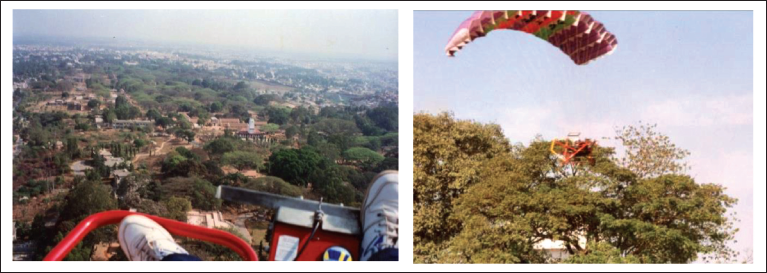

I did my first para jump with a static line parachute from a helicopter at about 1,400 feet. In this case, an anchored static line triggers canopy deployment, and it is a matter of four to five seconds of falling through the air before you find yourself strung below a parachute, suspended in the air and on your way down in full glory (see Figure 2 for the parachute opening sequence). There is little time to relish the experience, though, as reality comes knocking: one needs to prepare for the landing, with all the instructions for various contingencies racing through the mind.

Gradually, a jumper begins to understand how exits happen in a static line jump. One starts to think more deeply and, most likely, prepares a checklist of points for a safe exit and descent. For me, this mental checklist, regularly updated over time, has flashed through my mind every time I have prepared for a para jump since then. There are no heroics in this; adventure sports call only for caution, preparedness and control.

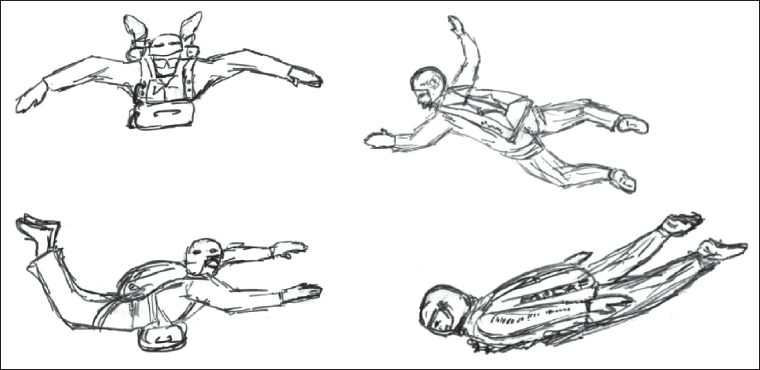

Some years later, I got an opportunity to do the military free-fall course. Here one graduates from static line to the more advanced free-fall jumps, which means you are more in control of your destiny: you decide when to open the parachute, and until then you keep falling freely, accelerating until you reach 'terminal velocity', which brings school physics to life. In the early skydives, one pulls the ripcord to deploy the chute after a short interval, usually five seconds. This allows one to experience free fall without it turning dangerous. I remember how my body began to tumble even in this brief period, much as I tried to counter the tumble and stabilize myself by keeping my arms and legs outstretched in a 'spread-eagle' position (see Figure 3). Familiarity with a new domain must be built through the doing–thinking–doing cycle, over and over again, so it helps to understand the science that governs it.

One deploys the main parachute at 3,000 feet or above, thereby keeping a safety margin for a safe landing. During deployment, it is important to be in a stable position, lest the parachute wrap itself around a part of the body during a tumble, leading to a serious situation. Deploying at this height leaves enough altitude and time to deploy the reserve parachute if the main parachute fails. Main parachute failures or malfunctions are rare (I would put them at less than 0.1 per cent), but they do happen; hence the risk, which certainly rises with a careless attitude. Let me recount my experiences with situations involving this one-tenth of a per cent probability.
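The rough per-jump figure of under 0.1 per cent can be turned into a cumulative risk. As a worked illustration only, assume a per-jump malfunction probability p = 0.001 and treat jumps as independent (both are simplifying assumptions, not data from this article). Writing P_n for the probability of at least one malfunction in n jumps:

```latex
\begin{aligned}
P_n &= 1 - (1 - p)^{n} \\
p = 0.001,\ n = 100: \quad P_{100} &= 1 - 0.999^{100} \approx 0.095 \\
p = 0.001,\ n = 1000: \quad P_{1000} &= 1 - 0.999^{1000} \approx 0.63
\end{aligned}
```

Under these assumptions, an event that is rare on any single jump becomes a realistic prospect over many jumps, roughly one chance in ten over a hundred jumps.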

Main-chute Full Malfunction During Skydiving (B)

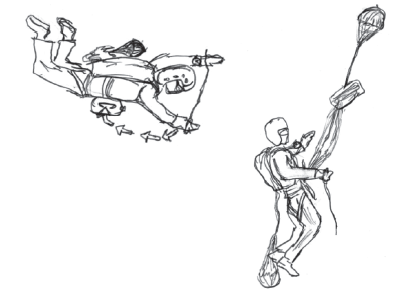

I was on my 12th skydive and growing a little more secure in my control over the skydive sequence. The initial skydives were made using the simpler round canopies, on which direction is controlled with steering toggles whose lines run up along the rigging lines to two small openings on either side at the rear of the canopy; pulling on these turns the parachute right or left. I exited the aircraft, fell free, stabilized myself in the spread-eagle position and looked around to check that all was clear. While glancing at the altimeter on my wrist to note my height, I was also relating my position to the other skydivers staggered below me, as one must not depend solely on the altimeter, which could malfunction at any time. So I was mentally judging my altitude against the other skydivers, who were at different altitudes depending on our exit sequence. It is important to maintain situational awareness of those around you, especially those below. At about 3,500 feet, I pulled my ripcord to deploy the chute. However, I kept falling, with no opening shock on my torso and shoulders. Somewhat disoriented, I looked at my hands to confirm that I had actually pulled the ripcord; yes, it was there in my right hand (see Figure 4). So what was happening? Why had the main parachute not deployed? That is when the realization that something has gone wrong hits you.

Even as urgency strikes, you try to recall what needs to be done. You have only a few seconds now. I still could not feel any slowing down or see a malfunctioning main parachute anywhere above me. I briefly double-checked again that the ripcord handle was in my hand. It was. So I still did not know why the main parachute had not sprung up and deployed. I then simply discarded that line of inquiry, as it was getting me nowhere (my repertoire being rather limited then), and focused on what needed to be done under the circumstances, that is, deploy the reserve. As I tugged and pulled away, sideways and outwards, I remember this thought going through my mind: 'Well, that's all I can do; now, let's see!' With an almost crunching pull to the small of my back, the reserve parachute opened and yanked me upwards out of my free fall (see Figure 4). What a relief it was! I then saw that the main parachute's canopy had come out of the bag on my back and was dangling alongside and below me, half-fluttering in the air. I immediately remembered what to do and quickly reeled it in between my legs, lest it now partly inflate and collapse the reserve by interfering with its slipstream, leading to another disaster, which I surely did not want!

On the ground, talking with the instructors, I reiterated that I had felt absolutely no shock or pull from the main parachute when I pulled the ripcord. The only remaining explanation was that the pilot-chute spring had weakened over time, so the pilot-chute may simply have collapsed above my pack as I fell, without pulling out the main parachute. My unusually stable spread-eagle position may also have prevented the slipstream from filling the pilot-chute enough to pull out the main chute. Then, once the reserve deployed, the main would simply have fallen out alongside me. This was something new I learned that day, which had not come up in training until then.

Main-chute Partial Malfunction During Skydiving (C)

Later in the course, as we became more experienced in skydiving, we graduated to the more advanced rectangular ram-air canopy. This design permits greater manoeuvrability but also increases the forward landing speed, which needs finer control for a safe touchdown. The reserve release system is also slightly different. With the round canopy, one deploys the reserve while the main remains attached in whatever state it has (or has not) deployed; in this advanced system, the deployment sequence first releases the main parachute up and away from the skydiver and, as it does so, pulls out the reserve parachute. In effect, you let go of the malfunctioning main parachute as you deploy the reserve. All this is fine in theory; execution, however, holds some challenges. Several jumps later, I found myself in a tricky situation. After I had deployed the main parachute at about 4,000 feet, I felt some violent swinging as the canopy developed overhead. As I looked up at the canopy, something did not seem right: the cells were not fully inflated. The steering toggles, whose lines run from behind my shoulder straps to the parachute wing, did not appear to be clear and so were not providing directional control.

I soon realized that I was turning slowly to the right. I tried what I could with the steering toggles, pulling them down sharply and releasing them several times to free them, but to no avail. The reality was beginning to hit me: I had an emergency. I was continually turning right, albeit slowly, as it seemed to me then, but I could not control or stop the turn. This effectively meant that I had no control over the canopy: I would be carried wherever it took me, and I would most likely still be turning, ever faster, as I landed, which could break my legs or worse, depending on the turning speed 3. As I had some margin of time to decide my next move, I wondered whether the situation called for deploying the reserve. As my mind raced ahead, I realized this also meant releasing the main canopy above me before the reserve deployed. I faced a hard choice about something that had until then seemed abstract. Was it advisable to let go of the hope I was holding onto, in the expectation that the reserve parachute would deploy correctly? Seconds ticked away, and I needed to decide. I kept looking at my altimeter, monitoring my descent. Although I did not know it then, ground-based observers later told me that my right turns were speeding up and they feared I could go into a spiral, which would be far more dangerous. I finally decided that I needed to take the chance now rather than risk injury on landing. With a sinking feeling in my stomach that this simply had to be done, I pulled the ripcord of my reserve at about 2,700 feet (see Figure 5). I could sense the expected operating sequence unfolding above me. Finally, the reserve deployed correctly and I was safely on my way down.

From subsequent discussions, it was surmised that the rigging lines of the main canopy may have got entangled with the canopy and with one another, resulting in a loss of control and a continual right turn. Ground observers felt that the turns were beginning to increase in speed and they were concerned that I was taking too long to decide.

Powerchute Flying in High Altitude: A Flight Emergency (D)

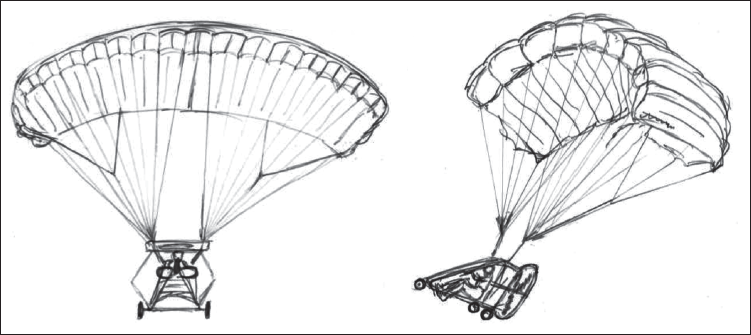

Some years later, I had an opportunity to take up paragliding. From there, I progressed to flying a single-engine, powered, collapsible-wing glider called the Powerchute. This machine had a rectangular ram-air canopy (like the skydiving chute described above) and could fly at altitudes of up to about 4,000 feet above sea level (see Figure 6). I had some doubts about the manufacturer's specifications, as the machine appeared to carry a comparatively heavy engine, and we were not too optimistic about its likely glide ratio in the event of an engine failure, so we always kept a safety margin while flying. I was later to fly this machine on an expedition to Ladakh (altitude of about 10,600 feet), where we planned to test the Powerchutes' performance at those altitudes and explore the possibilities of employing them there. We flew in on an air force transport aircraft piloted by a course-mate from our training days at the academy (National Defence Academy, Khadakwasla). I remember him telling me that even they sometimes felt buffeted by strong winds when coming in to land at the Leh airfield. Early mornings were generally the best time to fly; afternoons were mostly rough owing to high winds.

Once we had acclimatized and checked out our machines, we were ready to attempt some flights near the airfield. We had two machines, and one of them took off on the very first attempt. I was on the other machine, which could not get off the ground despite my gunning the engine as hard as I could. After a few attempts, I realized that what I had feared was true: for some reason, this machine was underpowered compared with the other, as I had noted earlier during trials and preparation at lower altitudes. So, once the other pilots had finished flying the first machine, I queued up for it, saying that I would try my hand at it just once, flying one or two quick circuits to understand why the machine I had attempted earlier could not take off.

By now, the sun was higher and temperatures were rising, but conditions still seemed all right to me for a quick flight. I lined up on the tarmac and revved the engine. The machine began moving forward and then took off. I was off the ground, planning to gain height and fly a few quick circuits. At this stage, it seems, the machine did not gain height as quickly as it should have, or at all beyond the initial burst; and, focused as I was on controlling direction and watching my clearance off the ground, I lost track of time. My posture on the machine may also have hampered my forward view and sense of where I was headed, as I was leaning back into the seat with my legs and the front wheel raised in front of me (see Figure 7). It seems I became absorbed in other, minor issues and lost my sense of where I was headed; there was also no radio communication with the machine or the pilot. I do not recall what was going through my mind, or when the situation turned dangerous. I just remember the barbed-wire fencing atop the airfield's boundary wall looming right in front of me. I knew in that instant that I probably could not clear it, but it was so close that there was nothing better I could do, so I kept going, foot pressed fully on the pedal, hoping to clear the wire fencing 4. I ran out of luck that day!

Parachute Jump after Recovery (E)

A long period of recovery followed, and it took me some time to regain a modicum of fitness, to the extent feasible. About 11 years after my accident, I was again toying with the idea of doing a parachute jump. I do not think I had any heroics in mind, nor was I going to receive any award or incentive for it; it was just that, having worked on myself over the previous few years and overcome difficulties along the way, I was beginning to regain confidence in what my body and I could do (as well as knowing what I could not). It seemed to me that it would be a perfectly safe proposition under controlled circumstances, during a Parachute Veterans' Reunion jump. I mentally reviewed the sequence and its implications many times, just to be sure my thinking was right. I also tested my body's ability to take the impact of a landing by practising jumping up and down, and its ability to cushion the fall by rolling immediately on landing, using the 5-point roll sequence. Once I was sure of this, I went ahead as soon as an opportunity presented itself. It was the same feeling of sweat, cramps and aches as the parachute was strapped tight to my body! I loosened up and kept my body warmed up to remain agile. Mentally, I was busy too, going over each step in my mind and rehearsing what was to come next. Everything went off as it normally does on a good day. As I moved forward in the exit queue and the light turned green, I continued my deliberate shuffle towards the exit ramp, maintaining my balance, until it was time to step out, taking a controlled leap and tucking myself into a compact crouch. Then came the familiar jerk on the shoulders and the upward pull, and soon I was floating down serenely. I looked around, made sure all was OK, and pulled the steering toggles to turn into the wind and steer towards the centre of the drop zone. Then it was time for the landing impact and roll, which went the way it normally does: not as in practice, but all right nevertheless! A few months later, I got yet another opportunity to test my beliefs and preparation technique in one more parachute jump, which also went off successfully, thereby, in some measure, validating the crux of my self-reflection and learning.

LESSONS FOR DECISION-MAKING FROM HIGH-RISK ADVENTURE

Although this narration of a few critical incidents involving split-second choices is by no means extensive in scope, we can glean some helpful lessons from the deeper processes and linkages that appear to be at work behind them. The structure and prevailing culture of an organization's processes shape the training for this high-risk activity, which gives rise to the specific techniques and methods at play here (Weick, 1987; Weick et al., 1999). Organizations for which these activities are a core speciality sustain a professional environment around them. Finally, the individual mind prepares to partake in these activities and readies itself, both psychologically and technically, for the inherent challenges of the task. Hence, the critical incidents and responses described above may not be unique to one individual; they could well be the experiences of many others operating in this professional environment, experiences that cumulatively drive the development of learning and expertise and allow us to make some generic observations here.

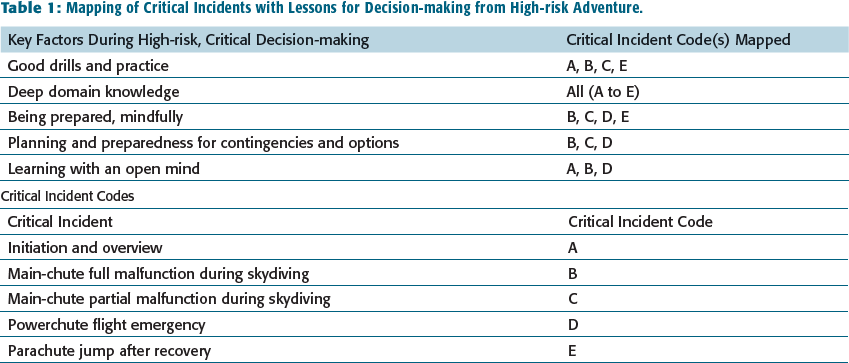

Drawing on these critical incidents, I propose useful indicators for others likely to face similar situations in their own domains, based on the key factors that influence high-risk, critical decision-making under time pressure. I have already shared some insights into the decision maker's cognitive process by articulating my thoughts as I faced these situations in real time. I now attempt to present a more coherent operational framework for practitioners that is, in my view, relevant and critical, and that will hopefully be useful to others too. Table 1 maps the learning highlights from these critical incidents to the key factors identified for decision-making during high-risk adventure, which are discussed below.

Table 1. Mapping of Critical Incidents with Lessons for Decision-making from High-risk Adventure.

Relevance and Context

At the outset, I would like to make the case that this learning about high-risk, critical decisions applies to others in similar situations, with a similar approach to training, practice and emotional mindset. I am not an expert by any measure in any of these adventure activities, merely an enthusiast keen to try these exciting things and, importantly, to learn while engaged in them. I argue that this very lack of expertise makes my experiences more broadly relatable and relevant, because these are the kinds of situations that anyone just beginning in adventure sports, or facing similar risks at work and elsewhere, could encounter. Beginners in any similar field of endeavour need guidance and a greater awareness of the need for 'mindfulness' in such situations (Weick et al., 1999; Weick & Sutcliffe, 2006), so that they are better prepared to handle emergencies, or perceived emergencies, for which they may at times have no ready answer. The learning herein, if effectively transferred to these individuals, would be most useful to them when they need it. As they gain experience and their own learning deepens, they will become proficient enough to develop their own safe routines, which is essential and an underlying theme of this article. This process of self-discovery, and of establishing safe individual routines within an organizational culture focused on a deeper understanding of key linkages, is important (Weick, 1987, 1988; Weick et al., 1999).

Key Factors During High-risk, Critical Decision-making

First, let us identify the general principles that significantly influence how one reacts to such critical situations. These emerge from the incidents and subsequent reflection as (a) training, especially in the preparatory stage and continuing thereafter; (b) depth of exposure to, and understanding of, the domain, that is, domain expertise; and finally (c) deep emotional involvement with the domain, that is, the specific adventure activity. This points to the need for well-grounded, safety-oriented training procedures and an open-minded, passionate learning environment to acquire meaningful expertise. I elaborate on the related key factors below, deconstructing them into more comprehensible action points for those wanting to apply the learning from high-risk adventure sports to their own critical decision-making.

Good Drills and Practice

Good drills, based on collective learning and accumulated expertise, are a must for anyone just beginning to train. They provide a framework to think around and grapple with in an unfolding situation. They also help throw up instant options or responses to developing scenarios that are not commonly experienced. Participants must also appreciate why these drills make sense, so that they stick with them. This understanding comes about through constant communication and interaction, as happens during a basic parachuting or skydiving course. Good drills remain effective even in an emergency, because one does not need to think too much. They are powerful ‘If so …, then this …’ rules for a beginner confronted with a plausible emergency scenario. Needless to add, they must be reviewed periodically and updated to reflect new learning and technological change. Flight checklists are a close analogy. Failing to keep them continually updated can have disastrous consequences, as in the Boeing 737 Max crashes of 2018 and 2019 (Campbell, 2019).

Deep Domain Knowledge

Along with drills and practice, deep domain knowledge is almost a given for anyone wanting to make correct decisions in any crisis. It is essential for resolving new problems. Decision-making research indicates that what we call gut feeling or intuition is most likely backed by deep domain knowledge, years of experience and practice (Kahneman & Klein, 2009; Simon, 1987). Chess grandmasters draw on a similar capacity, which comes from accumulated knowledge, pattern recognition and problem-solving techniques developed over time (Chase & Simon, 1973; Simon, 1987). Dhoni, at the top of his game in all formats of cricket, explained his decision-making as follows: ‘I don’t plan a lot and believe in my gut feel. But what many people don’t understand is that to have that gut feel, you have to have experienced that thing before’ (The Times of India, 2014).

This leads us to an interesting question: how much experience is enough? It is difficult to answer. Put simply, (a) it is never enough, as technology, processes and human cognition continually change, and (b) it depends on the difficulty of the situational demands, which vary in the same way. Conditions in the operating environment can grow progressively more complex as one advances to more stringent operations. One should therefore already have gained learning experience close to, if not beyond, the level of complexity likely to be encountered. Setting training standards and prerequisites thus involves a judgement call. One example is deciding what experience level a skydiver needs before being considered ready for close formation flying and complex tracking manoeuvres (Burke, 2013a, 2013b).

As discussed earlier, good drills also involve a lot of interaction and communication. During a basic parachute jump course, rookie participants spend many days together, mostly talking about their upcoming jumps. Their instructors and peers, who have been through the same experience, are close at hand to share their knowledge, experiences and learning. This is an important interactive process. I expect it triggers a train of thought in most participants, so that each is better prepared to think through any situation they face. As Weick (1987) puts it, ‘Stories are important’, and they ‘hold the potential to enhance requisite variety among human actors’. Sustained inquiry and finding meaning in one’s experiences are vital steps in this process of learning (Weick, 1993, 1996; Weick & Sutcliffe, 2006).

Being Prepared, Mindfully

One of the key facets of a thinking adventure seeker and risk-taker is being prepared for what one is getting into. As discussed earlier, this means being prepared for what is to come and staying conscious, while so immersed, of what is happening around oneself at all times. It allows an individual to make better-informed choices if a critical situation emerges. This is akin to mindfulness, which has ancient roots in Buddhism. Western thinkers and psychologists took it up more recently (albeit somewhat differently) and applied it to the modern organizational context (Weick & Putnam, 2006; Weick & Sutcliffe, 2006). Mindfulness is ‘best understood as the process of drawing novel distinctions’ (Langer & Moldoveanu, 2000) and is said to ‘[entail] a unique way of looking at the world’ (Shrivastava et al., 2009). It is exercised through the active refinement and continuous updating of existing categories, and through a more nuanced appreciation of the context and how to deal with it (Langer, 1989; Langer & Moldoveanu, 2000). Weick et al. (1999) extend this model of individual mindfulness to the group level to explain the bases of high reliability in organizations. They identify five cognitive processes that lead to it and use them to develop their model of mindful organizations (see also Weick & Sutcliffe, 2006).

Recounting the two canopy malfunctions (incidents B and C), I think this aspect of mindfulness left me better prepared for what was to come. Some of the thinking had already been done on the ground, through my interactions and my efforts until then to understand the domain’s technical intricacies. All I then needed to do was initiate a quick mental-checklist countdown of what was happening to work out what I needed to do, as recommended in such an instance. Being mindful of what one is going through helps one recognize the limits of the freedom available. Uppermost on my mind in both situations was the altitude and, consequently, the time available to make a decision in response to the unfolding crisis. Being mindful involves many things in parallel. For example, alongside these concerns, I was subconsciously double-checking my height against the altimeter on my wrist and visually against the other skydivers and the ground looming below. I did this instinctively, but the practice comes close to how high-reliability organizations operate (Shrivastava et al., 2009; Weick & Sutcliffe, 2006; Weick et al., 1999). Continuously updating one’s picture of current reality, and matching it against one’s repertoire of experience, understanding and capability, helps throw up better-suited options that could mitigate an unfolding crisis.

Novice adventurers must watch carefully for changes in context or environment relative to what they have been accustomed to. A powerful example is what I faced flying the Powerchute in the rarefied atmosphere of Leh, which was beyond the envelope of my expertise. My well-meaning friend gave me a cautionary note about the treacherous weather there as he flew me into Leh. Despite this, the clear skies we encountered during our short stay leading up to the accident lulled us into thinking all was well. Other psychological factors were at work in tandem. These included overconfidence bias (‘I have done this many times before; what’s the big deal?’) and anxiety-fuelled achievement-orientation bias (‘How can I be left behind the others at this?’). Together, these caused me to neglect the critical differences between my expertise and the environmental reality, which unfortunately proved disastrous this time.

Being mindful, then, is about being aware of how the situation at hand differs from what one expects. At the least, it means asking oneself how things may not be what one assumes them to be. It also means looking out for small contrarian signals that should not be there according to one’s understanding of the context.

Planning and Preparedness for Contingencies and Options

In preparing for critical contingencies in a high-risk environment, no amount of thinking and preparation is ever enough. A systematic process for identifying likely eventualities (things that could go wrong) and the required responses, followed by realistic practice and simulation, is the only way forward. This matters because of two widely noted human tendencies. The first is overconfidence bias among most professionals (Kahneman, 2011; Kahneman & Klein, 2009; Meehl, 1954). The second is a general lack of preparedness for what can go wrong in any situation (Kahneman & Klein, 2009). In some ways, the first feeds the second.

Psychologists have widely noted overconfidence bias across individuals, including professionals. It is so ubiquitous that the psychologist and Nobel laureate Kahneman put it at the top of his list when asked what one thing he would wish away if he could (Shariatmadari, 2015). The bias is very much evident in adventure sports, where many novices just starting out seem to exhibit it. Instructors may make things appear simpler than they are to boost their trainees’ confidence. Many novices are taken in by this. After a little experience gained under tightly controlled conditions, they begin to consider themselves fully in control. This may hold under appropriate conditions, perhaps close to ideal ones, but we cannot know how the world may change in seconds (Weick, 1993, 1996). Moreover, ideal conditions are themselves rare, since they require many things to come together just right (imagine the Swiss Cheese Model argument in reverse) (Reason, 1998, as cited in Shrivastava et al., 2009). The NAT and HRT literatures show how fragile the operating consistency that humans and machines achieve over extended periods can be. The two fields differ conceptually on how long the resulting accidents can be avoided (Perrow, 1984; Rijpma, 1997; Shrivastava et al., 2009; Weick, 1979/1969, 1993; Weick et al., 1999). In the air, during a skydive or a powered flight, for example, many things that are difficult to predict and control can happen. A nuanced awareness of these two theoretical frameworks can help guide our efforts to achieve a safer operating environment.

Similarly, many professionals and organizations fail to plan for contingencies such as accidents and worse (Kahneman & Klein, 2009; Perrow, 1984; Shrivastava et al., 2009; Weick et al., 1999). Here, we can learn from the military. Every military commander, even while planning and hoping for the best-case scenario, also thinks through and plans for a wide range of ‘what if’ scenarios. At the individual level, I have described this to some extent in recollecting my preparation for a parachute jump, that is, being conscious of all that could go wrong and how to be prepared for it. Those starting on any such task would benefit from thinking through, in advance, the contingencies relevant to the scenario and the options available. Read, discuss and inform yourself beforehand so that you are better prepared.

Learning with an Open Mind

As we have seen above, learning is a key facet. There is much to learn in order to understand deeply the environment one is stepping into. One therefore needs to be open to interaction, to learn from others’ experiences and from the accumulated wisdom around us. Additionally, one must question everything in this process, because one’s life is at stake and things must make good sense. Practise drills and rehearse contingencies in your mind and on the ground. Acknowledge the mistakes you make and learn from them. Ask: Could you have done better? How would that have been possible? While diagnosing a crisis situation, do not get fixated on a line of inquiry or troubleshooting that is evidently not working. Try new lines of inquiry. Think back and begin again. Conversely, those who stop learning with an open mind, resist it or adopt a casual attitude towards it are more likely to be set up for disaster, sooner rather than later. The same attitude is associated with dysfunctional cultures, as opposed to those of more mindful organizations (Weick, 1993; Weick et al., 1999). Anything that does not make sense, has changed over time or does not fit with one’s experience or knowledge must be investigated further. It must then be reconciled with one’s overall understanding, if need be through testing, experimentation or dialogue.

The history of accidents, in both the NAT and HRT literatures, presents another perspective: one of innumerable near-misses from which, unfortunately, the correct lessons were not drawn. Even at cutting-edge organizations such as NASA, many of the lessons of the Challenger disaster (Starbuck & Milliken, 1988; Vaughan, 1997) had been ignored by the time the Columbia disaster occurred (Starbuck & Farjoun, 2005). Near-misses have also gone unreported. Examples are those that preceded the friendly fire incident of 14 April 1994, in which two US F-15 fighters misidentified two US helicopters as Iraqi Mi-24 ‘Hind’ helicopters and shot both down (Snook, 2000). In our organizational and professional circles, there is a dire need for suitable avenues to disclose such near-misses in an environment of psychological safety. This would lead to more conversations and interactions that could, in turn, warn others about what to watch out for, so that something deemed impossible comes to be recognized as possible (Snook, 2000). In this way, past mistakes and near-misses would become opportunities for forward learning, as the HRT school advocates (Snook, 2000; Weick, 2010; Weick et al., 1999).

Role of Intuition in the Above Experiences

Decision-makers often describe intuition as the mysterious guide behind their decisions. However, as Dhoni’s explanation of his intuition indicates, we need to delve further into its underlying bases (The Times of India, 2014). I have covered this aspect in the earlier sections and will only briefly reiterate that intuition rests on deep domain knowledge developed over a long time through constant learning. I would therefore not claim that intuition played a meaningful part in my accounts above. If it did at all, it would have been half-baked and likely to mislead when needed most. It could be different for more experienced skydivers with a few thousand skydives under their belt, provided they were operating in an environment similar to the one in which their experience was acquired. For professionals still acquiring this expertise, mindfulness and sensemaking are thus more relevant than intuition. As Kahneman and Klein (2009) caution, even experts should not be misled by their supposed intuition, especially when operating beyond the envelope of their experience and expertise. They should also consider that they may simply have been lucky on the odd occasion in the past.

THEORETICAL CONTRIBUTIONS AND LIMITATIONS

This article is a novel attempt to identify what we can learn from human endeavours that take place at the edge, such as extreme adventure sports, as called for by Weick and colleagues (Wolfe et al., 2005). Studies such as this can bring fresh, riveting perspectives to organizational studies and breathe life into them, generating further curiosity and interest (Dutton, 2003). They also contribute to our understanding by offering robust, time-tested techniques for discerning and dealing with emerging crises and disruptive situations.

This self-reflective analysis identifies the key factors at work during high-risk, critical decision-making and the learning from split-second choices made in extreme adventure sports. Both can be suitably adapted and applied in other, similar environments. The analysis adopts a multidisciplinary approach. It draws on rational decision models, cognitive and psychological decision-making research, behavioural decision theory and elements of the social psychology of organizing, among others, to propose a practitioners’ operational framework for high-risk, critical decision scenarios. The article also attempts to deconstruct terms and concepts from these research streams, such as behavioural biases, intuition, sensemaking and mindfulness. It does so for assimilation at the practitioners’ level, by identifying action points for them in the organizational context.

One limitation of this initial perspective on high-risk, critical decision-making is that individuals may have other coping mechanisms and cognitive-psychological responses to the situations they face. Individuals with different levels of expertise and experience would certainly handle these scenarios differently. This variation needs to be modelled to discern the most effective critical decision-making techniques. These techniques could vary across operational environments, risk levels, organizations and other relevant dimensions. Hence, a broader range of follow-up studies is needed to build more comprehensive analyses and more robust findings.

These findings need to be substantiated through future research in other high-risk environments. Such research should test both their replicability there and their applicability to different organizational environments. Wider multidisciplinary research incorporating emerging ideas from neuroscience or neuroeconomics would bring newer insights to this critical domain. The uncertainty and turbulence in the world around us are all too evident, and we need to be prepared for emerging crises, which could be just around the corner.

CONCLUSION

In this self-reflective analysis, I have described some personal experiences from the field of adventure sports that fall in the category of high-risk, critical decisions involving split-second choices. I have tried to trace the cognitive processes at work through these critical incidents from a decision-maker’s perspective. The vivid descriptions aimed to present a ringside view of the decisive moments. I analysed these descriptions and built upon them to establish a practitioners’ operational framework for high-risk, critical decision scenarios, drawing on established theories and frameworks from social, cognitive and psychological decision research. In doing so, I have also given these theories a practical slant for practitioners in the field by deconstructing key concepts that may otherwise seem somewhat abstract. I hope this offers guidance and fresh insights to professionals in other domains who may be called upon to make similar decisions in crisis scenarios.

This study thus presents a practitioner’s perspective on high-risk, critical decision-making, based on experiences of, and learning from, split-second choices made while skydiving and flying. I hope this article comes across as an effort to mainstream human endeavours at the edge and to transfer learning from them to our organizational environments (Wolfe et al., 2005, p. 205).

ACKNOWLEDGEMENTS

This work is a humble tribute to the Parachute Regiment personnel at all levels who toil selflessly to uphold the security of the nation. It is based on a small aspect of their range of endeavours, with the hope that this will provide impetus to further research into their professional context to discover deeper insights and help improve overall effectiveness.

DECLARATION OF CONFLICTING INTERESTS

The author declares that they have no known competing financial interests or personal relationships that could have appeared to influence the work reported in this article.

FUNDING

The author received no financial support for the research, authorship and/or publication of this article.
