Abstract

This study investigated the impact of Personal Learning Environments (PLEs) on the development of self-regulated learning (SRL) skills among postgraduate learners in China. A total of 213 participants took a course on the International English Language Testing System (IELTS): an experimental group (n = 113) used a researcher-designed PLE platform, and a control group (n = 100) received face-to-face instruction. A mixed-methods approach was adopted, using the Online Self-Regulated Learning Questionnaire (OSLQ), an open-ended questionnaire and weekly goal-achievement reports to explore SRL experiences. Results showed improvement in SRL skills in both groups, with the experimental group exhibiting significantly greater gains. Quantitative and qualitative results revealed that participants excelled in goal fulfilment but struggled with time management, help-seeking and self-evaluation. The study confirmed the positive influence of the PLE on postgraduates’ SRL skills, with implications for online learning design and for the potential of PLEs to promote SRL skills in online learning contexts.

The COVID-19 pandemic drove a digital transformation and forced educational institutions at all levels to adopt online learning. Previous studies have indicated that learners find it difficult to study efficiently in computer-based learning environments (Duffy & Azevedo, 2015; Kizilcec et al., 2017), and that self-regulated learning (SRL) is key to successful participation in online courses (Ejubovic, 2019). SRL is characterized as a complex, evolving process in which individuals proactively set learning goals and then strive to manage, calibrate and direct their cognitive, motivational and behavioural capacities (Pintrich, 2000). This process is both guided and constrained by the individual’s goals and by the features of the learning environment. In online learning environments, substantial correlations have been identified between academic outcomes and comprehensive SRL (Cicchinelli et al., 2018; Greene et al., 2014).

PLEs refer to the sociomaterial entanglements involved in people’s learning and offer a way of formulating contemporary ideas about how people learn (Dabbagh & Castañeda, 2020). PLEs are typically described as learner-designed learning environments that involve finding, selecting and using tools and resources to pursue learning goals (Dabbagh & Kitsantas, 2012). They are widely used in educational settings (Tuah et al., 2015) to cultivate learners’ autonomy in organizing their academic pursuits through the practice of SRL skills.

SRL skills such as self-reflection, thinking skills and other learner competencies have all been described as important in the conceptualization and development of PLEs (Attwell et al., 2013; Dabbagh & Castañeda, 2020; Ramírez Mera & Tur, 2021). In a recent study, Tur et al. (2022) traced the influence of SRL on the evolution of the PLE concept across several dimensions: laying the groundwork, providing frameworks for interconnected activities, broadening digital platforms by incorporating social networks, and expanding learning activities through e-portfolios and other pedagogical elements.

Although abundant research has demonstrated the effectiveness of online platforms in enhancing learners’ SRL abilities (Broadbent et al., 2020; Segaran & Hasim, 2021), research on the impact of PLEs on learners’ SRL skills remains strikingly limited (e.g., Ellili-Cherif & Hadba, 2016). Furthermore, the extant literature on SRL and PLEs has mainly targeted vocational training and undergraduate education, as well as primary and secondary education. Few studies have specifically examined the postgraduate context (e.g., Blaschke, 2014), even though regulatory processes are critical for graduate students’ computer-supported collaborative learning (Järvelä et al., 2016). We therefore set out to explore the impact of PLEs on postgraduates’ SRL skills, using preparation for the International English Language Testing System (IELTS) as the subject matter. The following research questions were raised:

Q1: Can the PLE-IELTS platform better improve postgraduates’ online self-regulated skills compared with traditional face-to-face teaching?

Q2: What were the postgraduates’ perceptions of using the PLE-IELTS platform?

To answer these questions, we developed and implemented a PLE platform for IELTS learning at a comprehensive university in China. A quasi-experiment was used to compare SRL skill development between the experimental group and the control group, and an open-ended questionnaire was used to explore the experimental group’s perceptions.

Literature review

Personal Learning Environments

Over the past decades, researchers have defined Personal Learning Environments (PLEs) from three perspectives. First, PLEs have been viewed as platforms, that is, as a type of e-learning system structured on a model of e-learning itself rather than on the model of an institution (Downes, 2010); such PLEs enable learners to adjust, select, integrate and use various software, services and options according to their needs and circumstances (McLoughlin & Lee, 2010). Second, PLEs have been viewed as resource aggregations, such as a self-defined collection of resources, services, tools and devices that help teachers and students shape their personal learning and knowledge networks (Fiedler & Pata, 2010). Third, PLEs have been viewed as a new educational methodology, such as a social pedagogic approach to using technology for teaching and learning (Attwell, 2021). In this research, we define a PLE as an e-platform:

A PLE is a knowledge-based, learner-centred, resource-guaranteed platform with learning networks as the pathway and teaching as facilitation and complement.

Personalization and self-control of PLEs can benefit learners, and the Web 2.0 or 3.0 facilities and tools used in PLEs may facilitate knowledge-sharing among learners (Gaytan, 2013). Students can take on new roles because they have more control over what they learn and how they learn in two-dimensional contexts, regardless of where they are (Carrasco-Sáez et al., 2019).

Self-regulated learning

Over recent decades, with the spread of online learning, research on SRL has proliferated. Self-regulation is the ability students need in order to learn actively and direct their own way through knowledge construction. Sitzmann and Ely (2011) defined SRL as ‘the modulation of affective, cognitive, and behavioural processes throughout a learning experience to achieve a desired level of achievement’ (p. 421). Self-regulated learning occurs when students think and act systematically to achieve their goals; such students are motivated, active participants who attend to the learning process (Zimmerman, 1989).

In online learning environments, the capacity for SRL is regarded as a critical factor for successful learning outcomes (Chiu et al., 2016). Students are expected to be highly self-directed amid multiple, dynamic, interactive and non-linear sources of information. However, studies have reported that undergraduates have difficulty self-regulating their learning (Barrot et al., 2021; Kapasia et al., 2020).

Recent studies and meta-analyses indicate that SRL is strongly associated with environment structuring, task strategies, feedback, time management, help-seeking and self-evaluation (Gambo & Shakir, 2021; Singh & Miah, 2020). Despite these findings, instruments for measuring SRL in online learning environments remain limited. Two recent reviews of SRL measurement tools for mobile learning environments, covering 2008–2018 (Araka et al., 2020) and 2007–2019 (Palalas & Wark, 2020), found that the Online Self-Regulated Learning Questionnaire (OSLQ) developed by Barnard et al. (2009) was one of the most popular instruments (Martinez-Lopez et al., 2017; Vilkova & Shcheglova, 2020).

The OSLQ is mainly used to assess students’ use of SRL strategies in online or blended learning environments. It contains 24 items measuring six SRL strategies: goal-setting, help-seeking, time management, task strategies, environment structuring and self-evaluation. The reviews also indicated that little research has addressed postgraduates’ SRL, and that most existing studies have focused on science, technology and medicine.

The relationship between PLEs and SRL

Research on PLEs and SRL grew alongside significant technological advancement even before the global spread of COVID-19 (Ceron et al., 2020). We found a total of 271 articles on ‘online learning environment self-regulation’ in the Web of Science, 62 of which, dating from 2006 to 2022, were in the field of education. Among these 62 articles, we located 24 on PLEs and self-regulation. Şahin and Uluyol (2016) identified a correlation between the degree to which a PLE improves students’ autonomy and their decision-making capacity. J. W. Lin and Tsai (2016) concluded that PLEs play a significant role in cultivating group or community awareness, which strongly supports and sustains high SRL. Perera and Gardner (2018) reported that SRL skills were supported by PLEs. In turn, Yen et al. (2021) found that five SRL skills (e.g., goal-setting, environment structuring and time management) predict the sense of control, level of initiative and self-reflection in PLE management, leading to better learning.

Recently, Nan Cenka et al. (2022) reviewed research conducted between 2010 and 2020 on how PLEs support SRL. They concluded that PLEs are suitable for supporting SRL strategies because the two share the ideas of personalization and ownership, because a student-centric platform is needed to support the LMS, and because a platform is needed to promote continuous learning. However, they also found that PLEs are not suitable for students with low SRL skills.

Method

Context

The IELTS course being investigated is a compulsory nine-week language course offered to postgraduate students of various disciplines at Wenzhou University in China. The course is part of a decade-long PLE project, which began in September. The course is offered by the School of Foreign Studies, and upon completion, participants receive one academic credit. The primary aim of the course is to improve learners’ academic English proficiency.

Participants

Altogether, 213 first-year postgraduate students who had enrolled in the IELTS course were recruited through convenience sampling. Participants were aged 23 to 27 years (male : female = 68 : 145; liberal arts : social sciences : natural sciences = 148 : 27 : 38). They were divided into an experimental group (i.e., PLE) and a control group (i.e., face-to-face only).

For practical and ethical reasons, the researchers did not randomly assign participants to the two groups. Instead, following school convention, the classes were split by enrolment time: the first 113 students were placed in the experimental group and the remaining 100 in the control group. It can therefore be argued that participants had an equal chance of joining either class, making the allocation semi-random from the outset.

However, ensuring that the groups have similar attributes is essential (McMillan & Schumacher, 2010). To confirm group similarity, the researchers therefore referred to participants’ Oxford Quick Placement Test results (Oxford University Press, 2001), taken at the start of their programme. Both groups were at borderline B2 level (experimental: M = 40.21, SD = 4.22; control: M = 40.54, SD = 4.05), and an independent-samples t-test indicated no significant between-group difference (t(211) = 0.576, p = .57).
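As a check on the reported group comparison, the t statistic can be recomputed from the summary statistics alone. The sketch below assumes a Student's (pooled-variance) independent-samples t-test, which matches the reported df of 113 + 100 − 2 = 211. The function name is hypothetical, and the two-sided p-value uses a normal approximation rather than the exact t distribution, a reasonable shortcut at this many degrees of freedom.

```python
import math

def pooled_t_from_summary(m1, sd1, n1, m2, sd2, n2):
    """Student's independent-samples t-test (equal variances assumed) from summary statistics."""
    df = n1 + n2 - 2
    # Pooled variance: each group's variance weighted by its degrees of freedom
    pooled_var = ((n1 - 1) * sd1 ** 2 + (n2 - 1) * sd2 ** 2) / df
    se = math.sqrt(pooled_var * (1 / n1 + 1 / n2))
    t = (m1 - m2) / se
    # Two-sided p-value from the normal approximation to the t distribution
    p_approx = math.erfc(abs(t) / math.sqrt(2))
    return t, df, p_approx

# Control group first so that t is positive, as in the reported result
t, df, p = pooled_t_from_summary(40.54, 4.05, 100, 40.21, 4.22, 113)
print(f"t({df}) = {t:.3f}, two-sided p ~ {p:.2f}")
```

This gives t(211) of about 0.58 and p of about .56, close to the reported t(211) = 0.576, p = .57; the small gap is consistent with rounding of the published means and SDs. Where SciPy is available, `scipy.stats.ttest_ind_from_stats` returns the exact p-value from the same six inputs.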

The two groups were taught by two different teachers who followed the same syllabus. Both teachers were female and held master’s degrees; the experimental group teacher had five years of teaching experience and the control group teacher six. Both had been trained to deliver the instructional intervention and met regularly with the lead researcher, a procedure intended to minimize bias arising from instructional differences.

Materials

Course syllabus

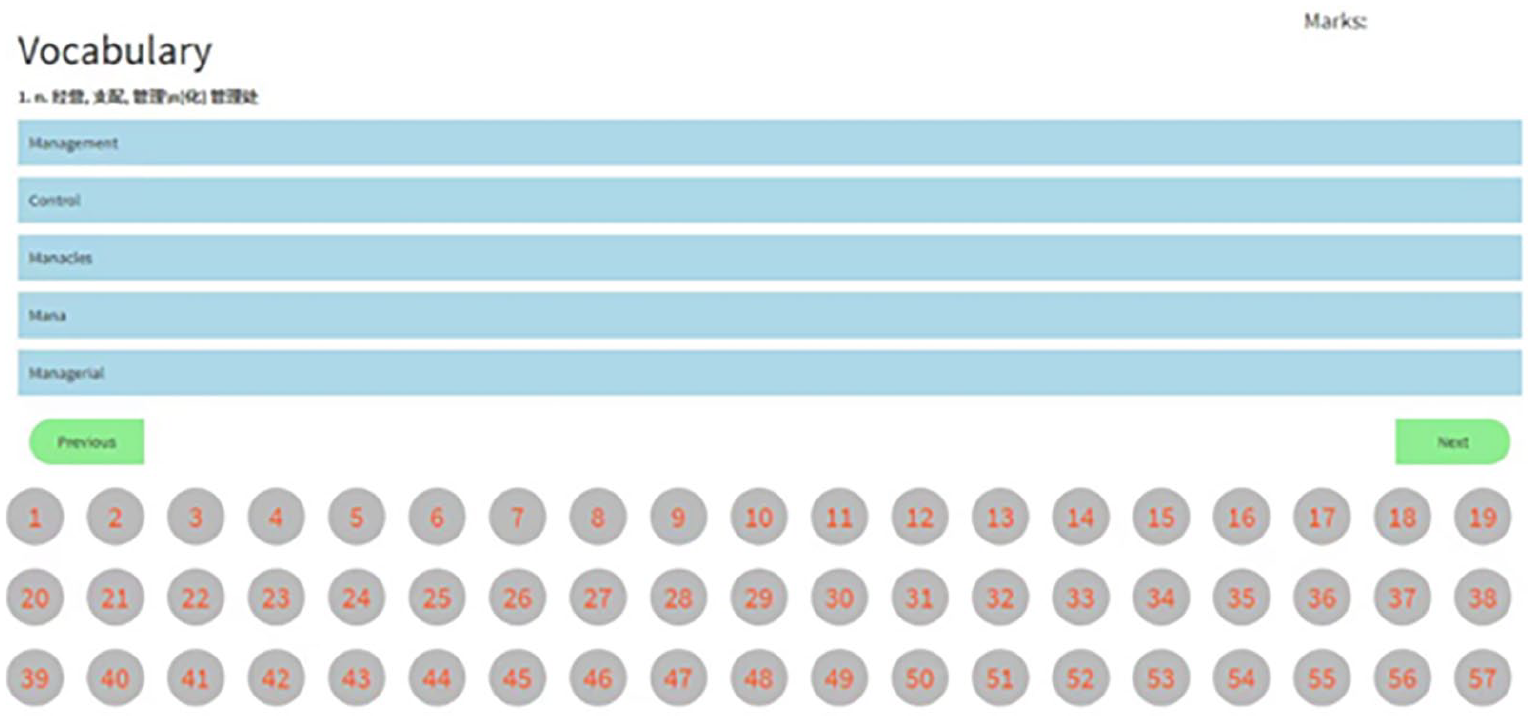

Various materials catering to different proficiency levels and task formats for the four IELTS skills (speaking, writing, reading and listening) were designed and uploaded to both the PLE-IELTS platform and the cloud drive. Learners were free to choose the sequence and timing of sub-components within tasks. For instance, the researchers created 50 online test papers covering 5,040 IELTS core vocabulary items, each test containing approximately 100 words. Learners in the control group could complete these exercises on their phones or computers, while the experimental group could log in to the PLE platform to complete the same exercises (see Figure 1).

IELTS core vocabulary (5,040 items).
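The vocabulary counts do not divide evenly: 50 tests of exactly 100 words would cover 5,000 words, not 5,040. The study does not say how the words were distributed across tests, so the following is only a hypothetical illustration of one balanced split; the function name and the placeholder words are assumptions. Test sizes differ by at most one word.

```python
def split_into_tests(words, n_tests=50):
    """Split a word list into n_tests consecutive chunks whose sizes differ by at most one."""
    base, extra = divmod(len(words), n_tests)
    tests, start = [], 0
    for i in range(n_tests):
        size = base + (1 if i < extra else 0)  # the first `extra` tests get one extra word
        tests.append(words[start:start + size])
        start += size
    return tests

# Hypothetical placeholder vocabulary standing in for the 5,040 core words
vocabulary = [f"word_{i}" for i in range(5040)]
tests = split_into_tests(vocabulary)
print(len(tests), sorted({len(chunk) for chunk in tests}))
```

Under this scheme, 40 tests contain 101 words and 10 contain 100.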

The PLE-IELTS platform

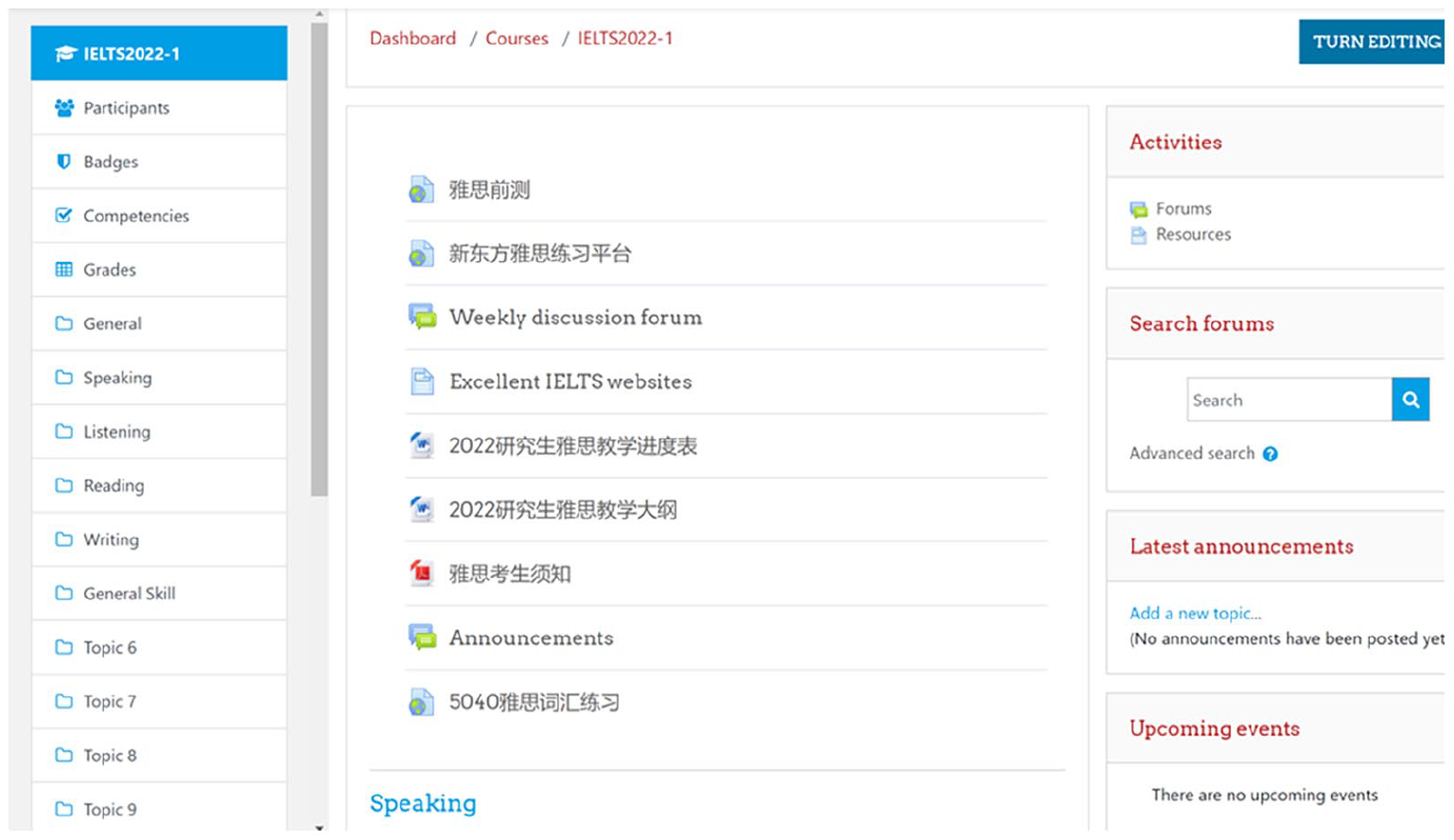

The PLE-IELTS platform was built predominantly on the open-source learning management system Moodle. To increase personalization, a diverse range of internet applications and resources, such as Dropbox, Google Docs, Quizlet, TED-Ed, Vimeo, YouTube, Turnitin, BigBlueButton, Zoom, Panopto, Trello and Slack, were also integrated.

Learners were encouraged to engage actively in the learning process, explore the content, reflect on their learning and work towards their personal learning goals. The resource materials used for this course are presented in Figure 2.

PLE-IELTS platform.

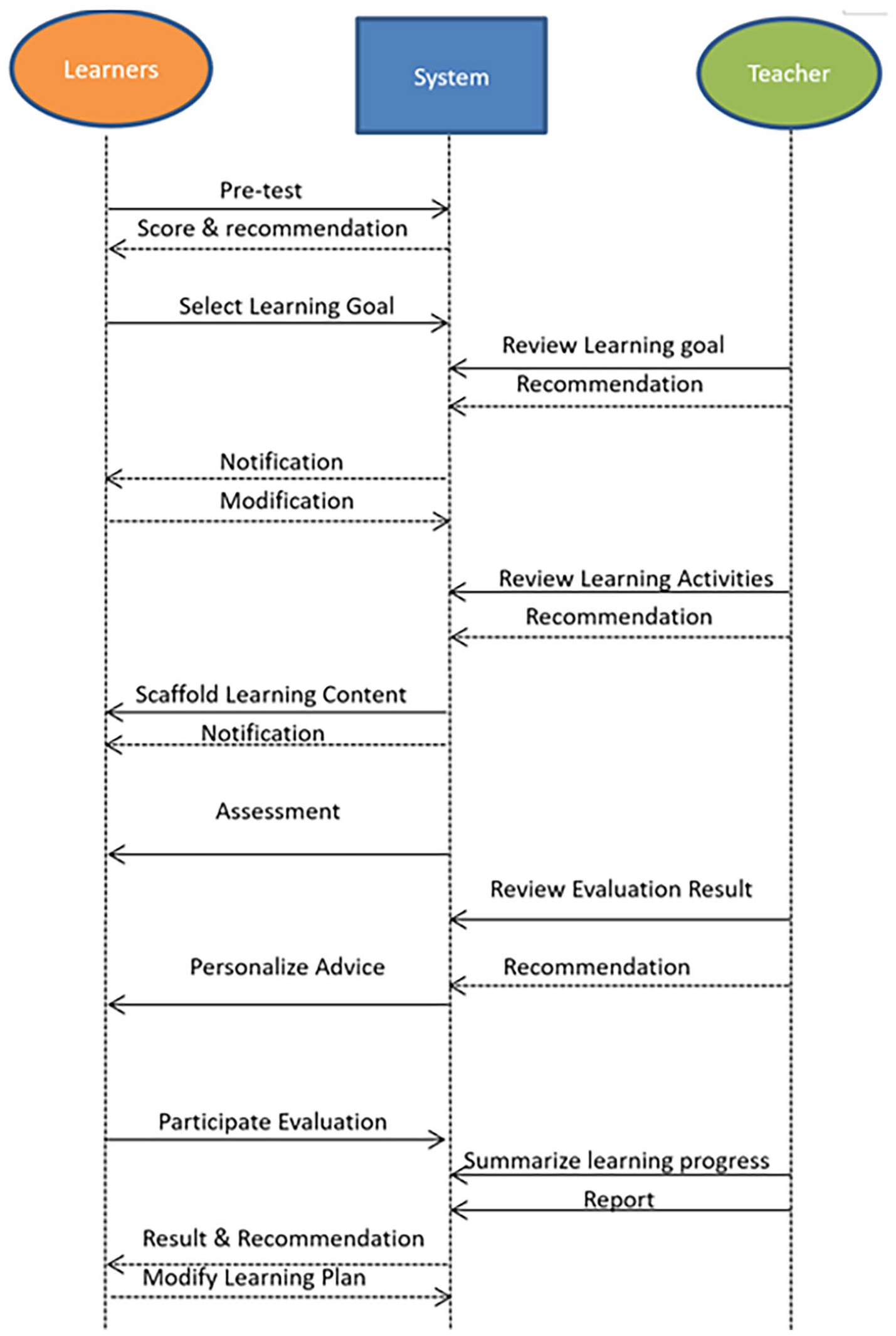

Following the learning-path design proposed by Xu et al. (2023), the experimental group learners were provided with scaffolding from both the system and their teachers. These scaffolds were adapted to the learners’ individual needs and to the characteristics of the task at hand. In system-based scaffolding, the PLE platform used measures such as learning time and scores to analyse learner performance and behaviour, and on that basis provided feedback and selected subsequent learning content. Teacher interventions (feedback on task level, learning pace, goal-setting, etc.) were given individually and promptly on the platform. Furthermore, the teachers involved in the project were trained to use the platform to deliver interventions and instructions based on the learning analytics generated by the system (see Figure 3).

The intervention designed in the PLE platform (Xu et al., 2023).
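The study does not publish the rules the platform used, so the following is a minimal, hypothetical sketch of what rule-based system scaffolding driven by learning time and scores might look like. The `TaskRecord` fields, the thresholds `PASS_SCORE` and `EXPECTED_MINUTES`, and the feedback messages are all illustrative assumptions, not the PLE-IELTS implementation.

```python
from dataclasses import dataclass

@dataclass
class TaskRecord:
    learner_id: str
    minutes_spent: float  # learning time logged on the task
    score: float          # task score as a percentage (0-100)

# Hypothetical thresholds; the study does not report the platform's actual rules
PASS_SCORE = 70.0
EXPECTED_MINUTES = 30.0

def scaffold(record: TaskRecord) -> tuple[str, str]:
    """Return (feedback message, next-content decision) for one task attempt."""
    if record.score >= PASS_SCORE and record.minutes_spent <= EXPECTED_MINUTES:
        return "Target met efficiently: try a more demanding task.", "advance"
    if record.score >= PASS_SCORE:
        return "Target met: check your time plan for this task type.", "advance"
    if record.minutes_spent < EXPECTED_MINUTES / 2:
        return "Below target after little practice: spend more time on this task.", "repeat"
    return "Below target despite effort: review reference materials or ask your teacher.", "remediate"

for rec in [TaskRecord("s01", 25, 85), TaskRecord("s02", 10, 55), TaskRecord("s03", 45, 50)]:
    message, next_step = scaffold(rec)
    print(rec.learner_id, next_step, "-", message)
```

A real system would likely combine many more signals, with teacher interventions layered on top of any automated rules; a small rule table is shown here only because it makes the time-plus-score logic easy to inspect.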

The face-to-face class

The control group attended face-to-face classes and had access to all the same learning materials as the experimental group on a cloud drive, but not to the PLE. Face-to-face instruction was similar to that of the PLE group, except that most feedback was given in class.

Instruments

The OSLQ

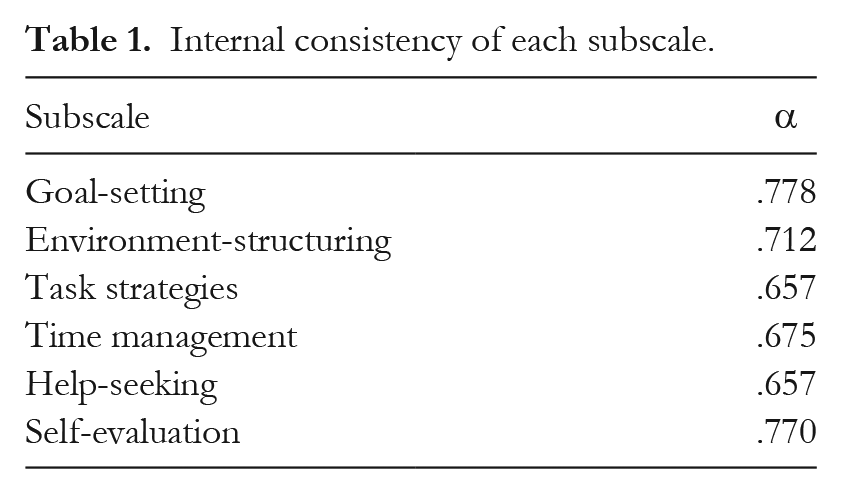

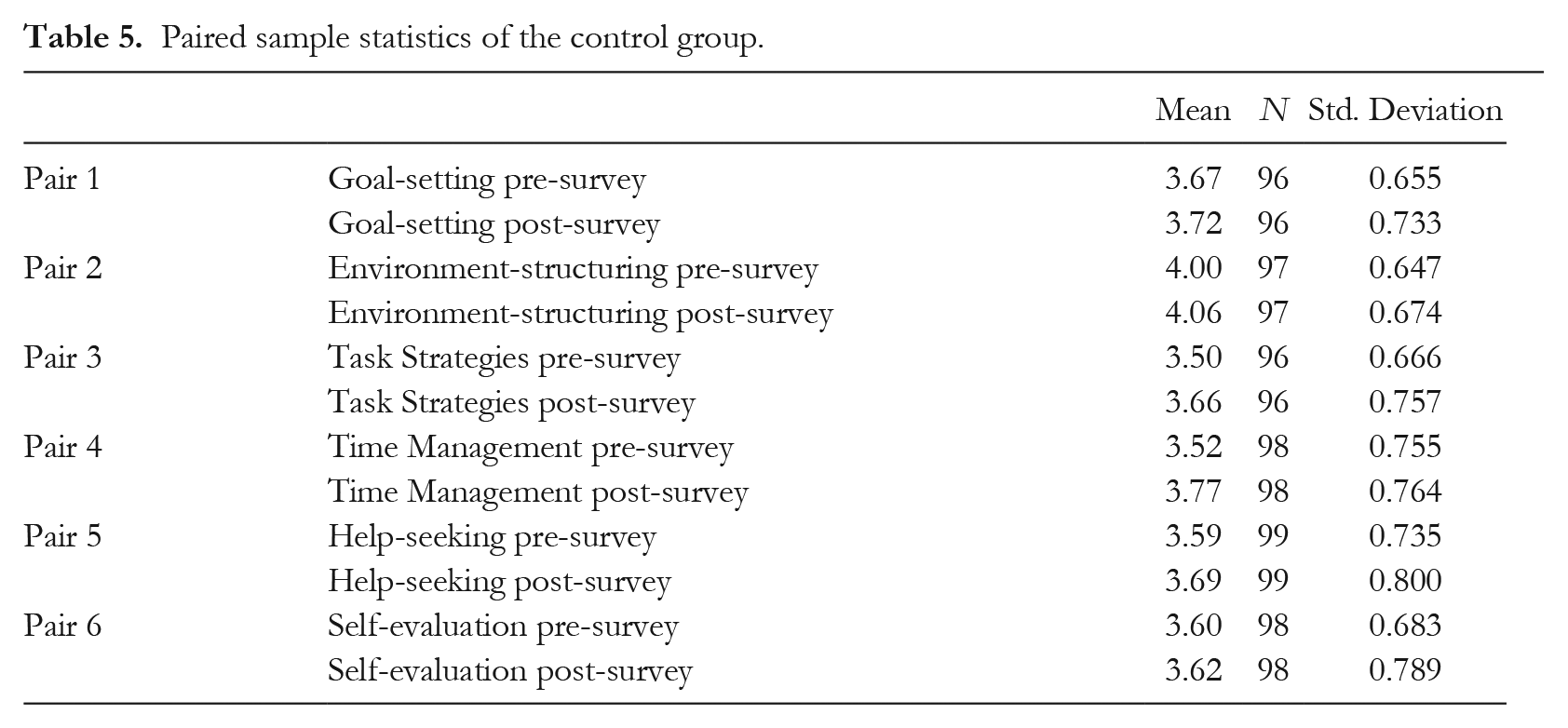

The OSLQ contains 24 Likert-scale items (1 = ‘strongly disagree’ to 5 = ‘strongly agree’), grouped into six subscales: goal-setting, environment structuring, task strategies, time management, help-seeking and self-evaluation (see Barnard et al., 2009). Because the OSLQ was being applied to a new population, its validity was checked by means of exploratory factor analysis (EFA) (Svensson, 2011), and its reliability with Cronbach’s alpha coefficients, as in Barnard et al. (2009). The questionnaire was administered as a pre-survey at the start of the course and as a post-survey at the end.

First, the internal consistency of the raw OSLQ pre-survey data (N = 213) was checked with Cronbach’s alpha and found to be high (α = .903). The alphas of the six subscales ranged from .657 to .778, indicating moderate to high consistency (see Table 1).

Internal consistency of each subscale.

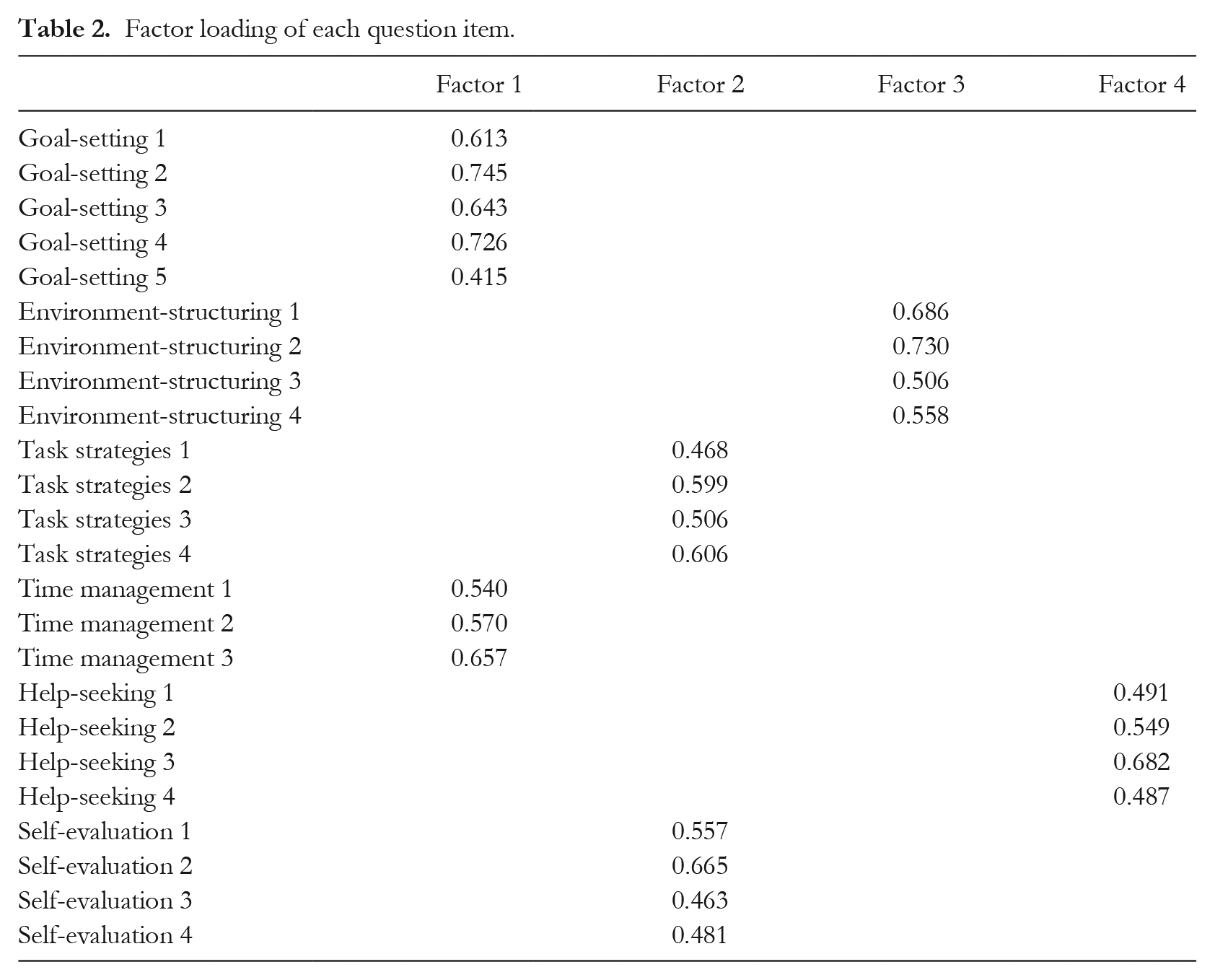
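For reference, Cronbach's alpha for a scale of k items is alpha = k / (k − 1) × (1 − sum of item variances / variance of total scores). The sketch below implements this standard formula in plain Python; the function name and the five-respondent example data are hypothetical and are not drawn from the study.

```python
import statistics

def cronbach_alpha(responses):
    """responses: one list of k item scores per respondent."""
    k = len(responses[0])
    items = list(zip(*responses))  # transpose to one column per item
    item_vars = [statistics.variance(col) for col in items]
    totals = [sum(row) for row in responses]  # each respondent's total score
    total_var = statistics.variance(totals)
    return k / (k - 1) * (1 - sum(item_vars) / total_var)

# Hypothetical 5-point Likert answers: 5 respondents x 4 items
example_responses = [
    [4, 5, 4, 4],
    [3, 3, 4, 3],
    [5, 5, 5, 4],
    [2, 3, 2, 2],
    [4, 4, 3, 4],
]
print(round(cronbach_alpha(example_responses), 3))
```

Applied to each subscale's items separately, the same function would yield subscale alphas of the kind reported in Table 1. Using sample variances throughout is harmless here, because the n − 1 factor cancels in the ratio.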

Next, responses to the 24 items of the OSLQ pre-survey (N = 213) were submitted to an EFA using principal component analysis (eigenvalues > 1) with Varimax rotation. The Kaiser-Meyer-Olkin measure was .893, indicating that the data were well suited to factor analysis (Cerny & Kaiser, 1977). The rotation converged after nine iterations, and four factors were extracted, together accounting for 52.23% of the total variance: 32.62% for the first factor, 8.14% for the second, 6.24% for the third and 5.23% for the fourth. Goal-setting items loaded together with time-management items, both of which concern setting targets for oneself. Meanwhile, task-strategy items loaded together with self-evaluation items; both are self-conscious strategies to facilitate learning (see Barnard et al., 2009). These two pairings are conceptually plausible (see Table 2), suggesting adequate scale validity.

Factor loadings of each question item.
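The reported percentages can be checked against the Kaiser criterion (eigenvalues > 1). For principal component analysis of a correlation matrix, total variance equals the number of items (24 here), so a factor's share of variance multiplied by 24 gives its eigenvalue. The sketch below assumes that the reported percentages are the initial (unrotated) values; after Varimax rotation the variance is redistributed among the four factors, although the 52.23% total is unchanged.

```python
N_ITEMS = 24
# Percentages of variance reported for the four extracted factors
explained = [32.62, 8.14, 6.24, 5.23]

# PCA on a correlation matrix: total variance = number of items,
# so eigenvalue = (percent / 100) * number of items
eigenvalues = [p / 100 * N_ITEMS for p in explained]
cumulative = round(sum(explained), 2)

for i, (p, ev) in enumerate(zip(explained, eigenvalues), start=1):
    print(f"Factor {i}: {p:.2f}% of variance -> eigenvalue {ev:.2f}")
print("Cumulative:", cumulative, "| all eigenvalues > 1:", all(ev > 1 for ev in eigenvalues))
```

The implied eigenvalues are about 7.83, 1.95, 1.50 and 1.26, all above 1, and the four percentages sum to the reported 52.23%.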

The open-ended questionnaire

The open-ended questionnaire was built on the OSLQ’s six categories, with 13 open-ended questions designed to investigate the experimental group’s perceptions of the PLE-IELTS platform and of their SRL skills (see Supplemental Appendix 1). Two PLE experts evaluated the questions for relevance, clarity, simplicity and understandability. The responses were analysed by two expert coders using content analysis.

Ethical consideration

All participants were informed of the study’s purpose and of their right to withdraw at any time, and their responses were kept confidential. The first page of the questionnaire explained the purpose of the research and how participants’ information would be used in this study. Participants had to press the ‘Consent to proceed’ button to move on to the questions. At the end of the questionnaire, participants were informed that by clicking the ‘Submit’ button they were consenting to participate in this study.

Results

Research question 1: Can the PLE-IELTS platform better improve postgraduates’ online self-regulated skills compared with traditional face-to-face teaching?

To answer the first research question, the Statistical Package for the Social Sciences (SPSS) version 24 was used to analyse the quantitative OSLQ data.

Within-group comparisons

The item responses were averaged within each subscale, yielding 1,278 subscale means for the pre-survey (213 participants × 6 subscales) and 1,188 for the post-survey. These were then screened for outliers and normality. For outlier detection, the SPSS default of 1.5 × the interquartile range (IQR) was not used, because it tends to flag marginally acceptable data as outliers (Hoaglin & Iglewicz, 1987). Instead, the authors manually applied a 2.2 × IQR criterion, which led to the removal of 22 data points. A Shapiro-Wilk normality test was then conducted, followed by visual inspection of Q-Q plots. Skewness and kurtosis values all fell within the strict −1 to +1 range (Mishra et al., 2019), indicating that the subscale data of both the pre-survey and the post-survey were normally distributed.

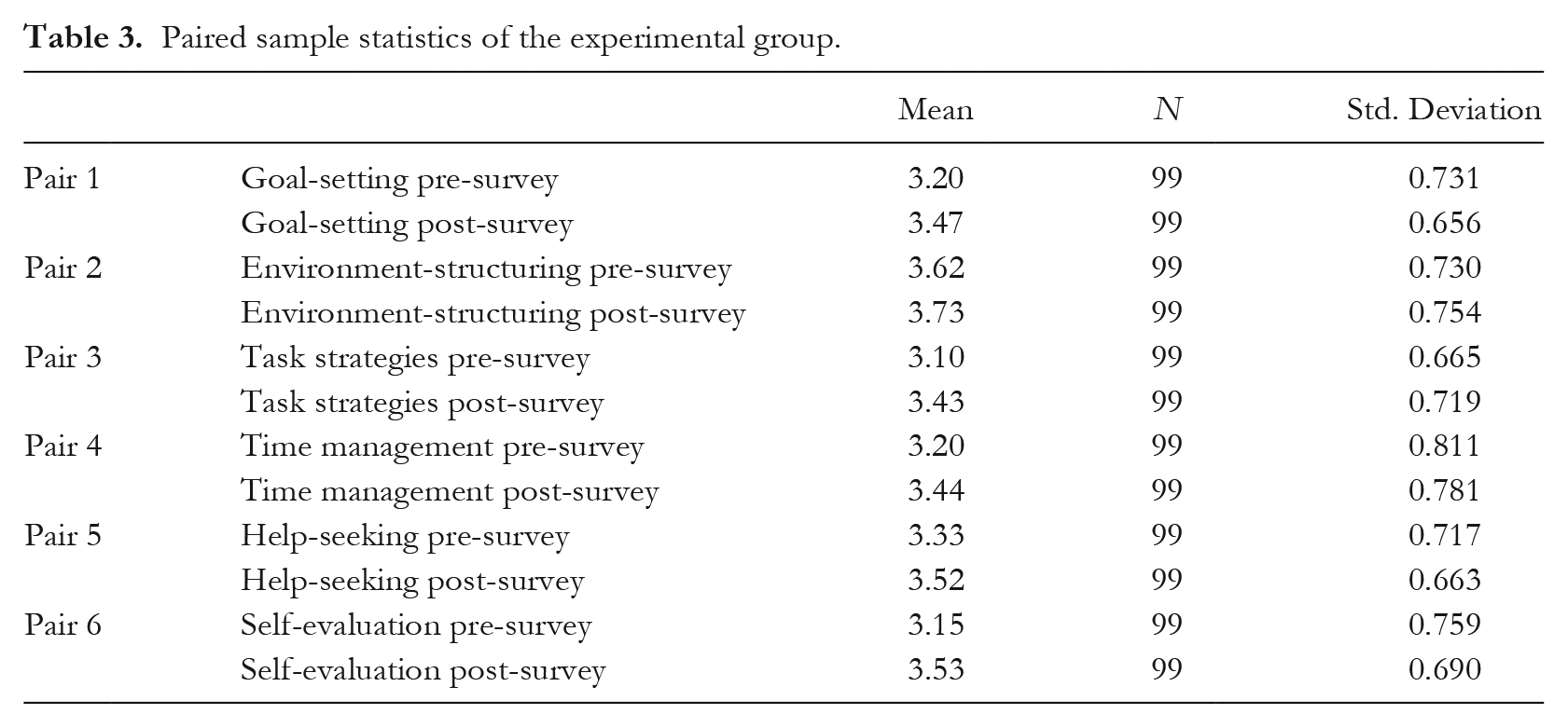
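The screening steps described above can be sketched as follows: a k × IQR outlier fence with k = 2.2 (Hoaglin & Iglewicz, 1987) instead of the 1.5 default, and the −1/+1 rule of thumb for skewness and kurtosis. This is a minimal sketch under stated assumptions: it uses Python's `statistics.quantiles` with the "inclusive" method, whose quartiles may differ slightly from those SPSS computes, and it uses moment-based skewness and excess kurtosis, whereas SPSS reports bias-adjusted versions. Function names and example values are hypothetical.

```python
import statistics

def iqr_fences(values, k=2.2):
    """Lower/upper fences placed k * IQR beyond the first and third quartiles."""
    q1, _, q3 = statistics.quantiles(values, n=4, method="inclusive")
    iqr = q3 - q1
    return q1 - k * iqr, q3 + k * iqr

def remove_outliers(values, k=2.2):
    """Keep only values lying within the k * IQR fences."""
    low, high = iqr_fences(values, k)
    return [v for v in values if low <= v <= high]

def skewness(values):
    """Moment-based skewness g1 (SPSS reports a bias-adjusted variant)."""
    m = statistics.fmean(values)
    s = statistics.pstdev(values)
    return sum((v - m) ** 3 for v in values) / (len(values) * s ** 3)

def excess_kurtosis(values):
    """Moment-based excess kurtosis g2 (0 for a normal distribution)."""
    m = statistics.fmean(values)
    s = statistics.pstdev(values)
    return sum((v - m) ** 4 for v in values) / (len(values) * s ** 4) - 3

def within_unit_bounds(values):
    """Apply the -1/+1 rule of thumb to both skewness and excess kurtosis."""
    return abs(skewness(values)) <= 1 and abs(excess_kurtosis(values)) <= 1

# Hypothetical subscale means; 2.25 is a marginally low value
subscale = [2.25, 3.0, 3.25, 3.5, 3.5, 3.75, 3.75, 4.0, 4.0, 4.25]
print(len(remove_outliers(subscale, k=1.5)))  # the 1.5 x IQR fence drops 2.25
print(len(remove_outliers(subscale, k=2.2)))  # the 2.2 x IQR fence keeps it
```

In this hypothetical example, the marginally low value 2.25 falls outside the 1.5 × IQR fence but inside the 2.2 × IQR fence, which illustrates why the default can flag marginally acceptable data.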

The experimental group

To evaluate the differences between the pre-survey and the post-survey in the experimental group, a paired-samples t-test was conducted for each pair of pre- and post-survey subscales. Descriptive statistics showed that the post-survey mean of every subscale was higher than the corresponding pre-survey mean (see Table 3), and the paired-samples t-tests confirmed that all differences were significant (see Table 4).

Paired sample statistics of the experimental group.

T-test results comparing the pre-survey and post-survey scales in the experimental group.

Note: *p < .05, **p < .01, ***p < .001 (two-tailed).
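A paired-samples t-test works on each participant's pre/post difference. The sketch below (hypothetical function name and data) computes the differences as pre minus post, the order SPSS uses when the pre-survey variable is listed first in a pair, which is why improvements appear as negative t values, as with the control group's t(97) = −2.33. It returns only t and df; the p-value would come from the t distribution with n − 1 degrees of freedom, for example via `scipy.stats.ttest_rel` where SciPy is available.

```python
import math
import statistics

def paired_t(pre, post):
    """Paired-samples t statistic on (pre - post) differences; returns (t, df)."""
    if len(pre) != len(post):
        raise ValueError("pre and post must come from the same participants")
    diffs = [a - b for a, b in zip(pre, post)]
    n = len(diffs)
    mean_d = statistics.fmean(diffs)
    se_d = statistics.stdev(diffs) / math.sqrt(n)  # standard error of the mean difference
    return mean_d / se_d, n - 1

# Hypothetical subscale means for five participants
pre = [3.0, 3.25, 3.5, 2.75, 3.0]
post = [3.5, 3.5, 4.0, 3.25, 3.25]
t, df = paired_t(pre, post)
print(f"t({df}) = {t:.2f}")
```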

The above analysis revealed that the experimental group’s SRL skills improved significantly with the use of the PLE platform, particularly their goal-setting, task strategies, time management and self-evaluation abilities. The gain in goal-setting can be partly attributed to the design of the PLE-IELTS platform, which provided reminders and feedback throughout the learning experience; feedback is essential in self-regulated learning because it supports learners’ self-reflection and regulation (Theobald, 2021). The analysis of the weekly goal-attainment reports also showed that the students excelled at carrying out their plans and goals.

The improvement in participants’ task strategies can be credited to the PLE platform’s extensive resources and reference recordings, which allowed participants to practise in their own space. In addition, self-reflection on the appropriate use of learning strategies may help learners to develop task strategies (Dignath & Büttner, 2008; Donker et al., 2014).

For time management skills, although the participants made significant improvements after using the PLE platform, their scores for scheduling or distributing time uniformly for online study remained comparatively low. These findings are consistent with those reported by Bylieva et al. (2021) and Syahri (2021).

Regarding self-evaluation, the participants improved significantly in discussing with classmates what to learn and how well they had learned, as well as in questioning themselves and summarizing what they had learned.

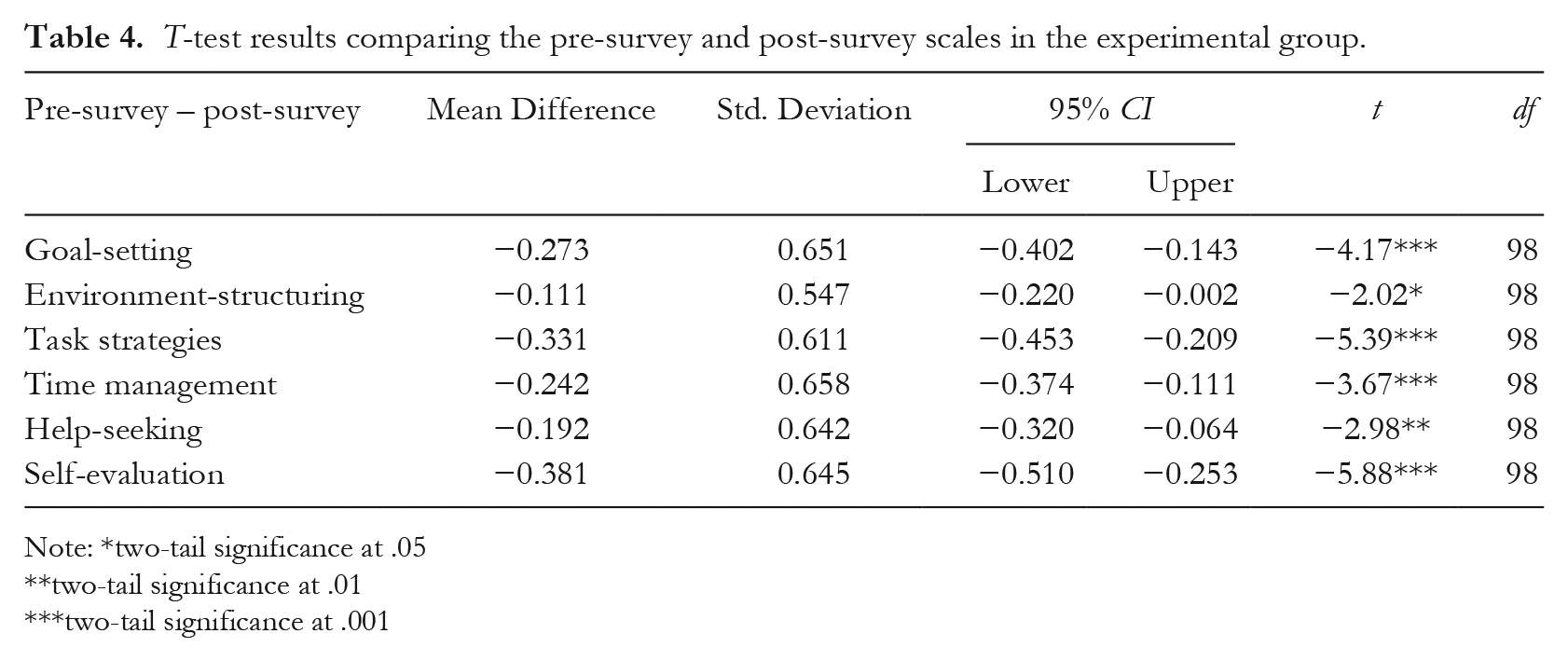

The control group

The control group’s post-survey means were also higher than its pre-survey means, but the differences were generally very small (see Table 5). Paired-samples t-tests found only the difference in time management to be significant (t(97) = −2.33, p < .05); all other differences were non-significant (p > .05).

Paired sample statistics of the control group.

Multiple studies indicate that learners’ time management skills may be improved through face-to-face instruction (Chen & Jang, 2010; Hafner et al., 2014). One potential explanation for this enhancement is that learners become more cognizant of the benefits of proper time utilization and the importance of prioritizing objectives and tasks, which can lead to the development of stronger time management skills (Alvarez Sainz et al., 2019).

Research question 2: What were the postgraduates’ perceptions of using the PLE-IELTS platform?

To answer the second research question, the open-ended questionnaire administered to the experimental group was analysed qualitatively, following the steps of the content analysis process (Elo & Kyngäs, 2008). The researchers read through the data repeatedly to identify emerging patterns, then performed open coding and grouped the codes into categories.

Q1: Goal-setting

Q1-1: How well did you meet your objectives?

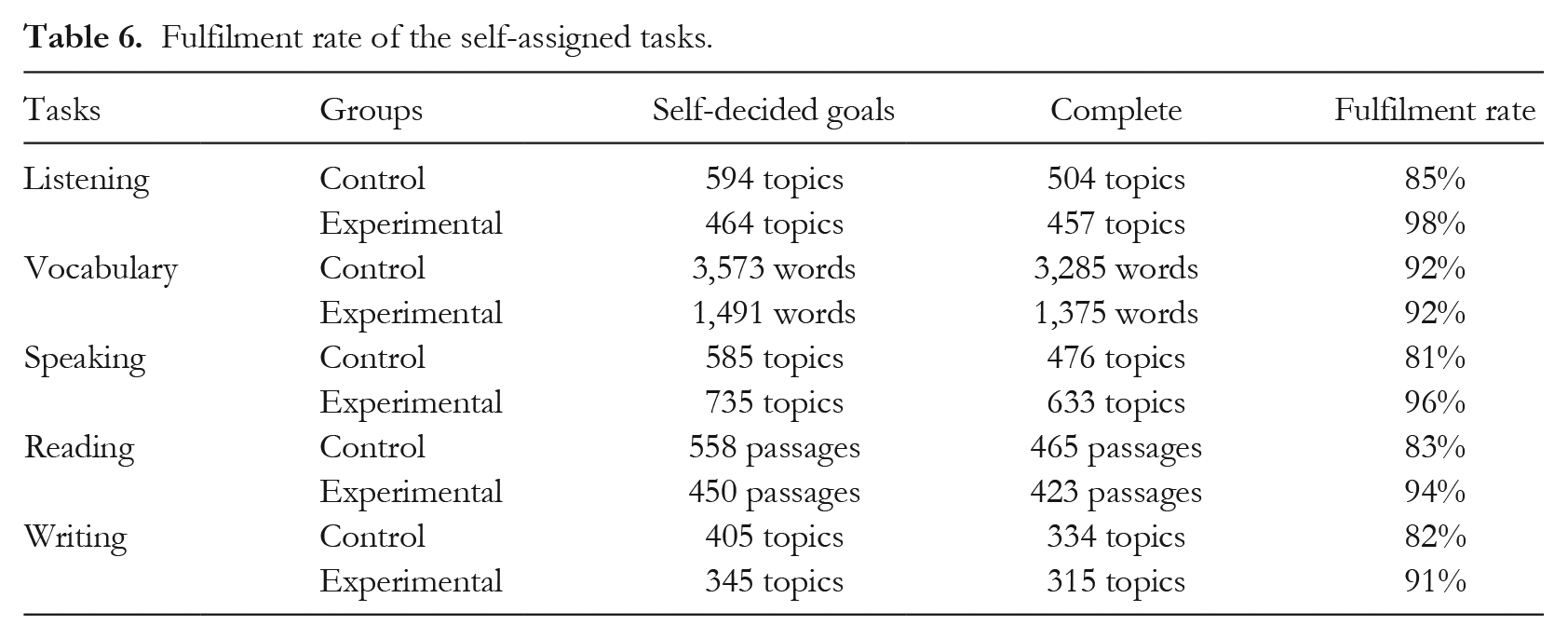

This item concerns participants’ goal execution. Both the experimental and control groups set weekly learning objectives for IELTS vocabulary, listening, speaking, reading and writing tasks. The tasks were test-based, and participants used apps or notebooks to draw up their plans. A weekly goal-setting Excel form was distributed via WeChat. Table 6 summarizes the experimental group’s self-set goals and fulfilment rates.

Fulfilment rate of the self-assigned tasks.

As the table shows, the experimental group completed more than 90% of their self-assigned tasks, whereas the control group completed more than 80%. This suggests better goal-management skills in the experimental group, while the control group set more tasks than they could handle. The experimental group excelled in setting specific and achievable goals, possibly owing to the system scaffolding and the instructors’ intervention in and supervision of SRL skills in the PLE (Kizilcec et al., 2017; Littlejohn et al., 2016). However, it remains unclear to what extent the PLE platform and the instructors’ interventions, respectively, contributed to the experimental group’s goal-setting.
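A fulfilment rate is completed tasks divided by self-assigned tasks. The sketch below uses hypothetical weekly records for a single learner: the task types come from the study, but the counts are invented for illustration. It also exposes a design choice the study does not specify: pooling tasks across types can give a different figure from averaging the per-type rates.

```python
def fulfilment_rate(completed, assigned):
    """Percentage of self-assigned tasks that were completed."""
    if assigned <= 0:
        raise ValueError("at least one task must be assigned")
    return 100 * completed / assigned

# Hypothetical week for one learner: (task type, tasks set, tasks completed)
week = [
    ("vocabulary", 20, 20),
    ("listening", 4, 4),
    ("speaking", 5, 3),
    ("reading", 4, 4),
    ("writing", 2, 2),
]

per_type = {task: fulfilment_rate(done, n_set) for task, n_set, done in week}
pooled = fulfilment_rate(sum(d for _, _, d in week), sum(s for _, s, _ in week))
mean_of_types = sum(per_type.values()) / len(per_type)
print(per_type)
print(f"pooled: {pooled:.1f}%, mean of per-type rates: {mean_of_types:.1f}%")
```

Here the pooled rate (about 94.3%) is higher than the mean of per-type rates (92.0%) because the fully completed vocabulary tasks dominate the pooled count.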

Q1-2: What issues did you face while developing and implementing your plan?

This item was designed to examine participants’ goal-setting abilities. Of the 47 responses, 78.7% said it was difficult to complete tasks on time, with some attributing the difficulty to a heavy load of homework or other tasks. The heavy workload left them short of time and unable to complete the plans they had made.
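Percentages such as these are tallies of coded responses over the number of valid responses. The sketch below is a hypothetical illustration: the category labels are invented, and the count of 37 is inferred from the reported figure (37 of 47 rounds to 78.7%), since raw counts are not reported. Each list entry stands for one response assigned to one category, so a response coded into several categories contributes one entry per category.

```python
from collections import Counter

def category_percentages(codes, n_valid):
    """Percentage of valid responses per category, rounded to one decimal place."""
    counts = Counter(codes)
    return {category: round(100 * count / n_valid, 1) for category, count in counts.items()}

# Hypothetical coding of the 47 responses to Q1-2
codes = ["difficulty_completing_on_time"] * 37 + ["other"] * 10
print(category_percentages(codes, n_valid=47))
```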

Q2: Environment-structuring

Q2-1: If you were a platform designer, what would you change? (For example, functions, interface settings, interactive communication and so on.)

This item examined the environment structuring of the PLE-IELTS platform and its impact on learners’ SRL skills. Among 111 responses, 41.4% suggested making the platform more interactive, for example by adding random roll calls, a reward system and interactive games; 30.6% wanted additional functions such as homework reminders, performance evaluation and online monitoring; and 20.7% recommended a more user-friendly interface for students and teachers. Students thus prioritized interactive communication over functions and interface design, expecting learner-oriented features to facilitate self-regulation and goal attainment (Yen et al., 2021).

Q2-2: How could the platform be designed to your specific requirements?

This item explored how the platform environment could be structured around individual requirements. Excluding the 19.8% of answers that were invalid, 35.9% of the students were concerned with the platform’s functions, 14.1% with their needs for interactive communication and user-friendliness, and 11.3% preferred a clean and tidy interface that would be easier to use.

Q3: Task strategies

Q3-1: Did you use other students’ speaking test recordings when practising IELTS on the PLE platform?

This question was designed to determine whether students sought assistance during practice. Of 110 valid responses, 81.8% answered ‘yes’.

Q3-2: When did you refer to other students’ speaking test recordings?

The question on referencing strategies received 107 valid responses, which mainly described two situations: encountering difficulties in answering the questions (28%) and being dissatisfied with their own response in the mock test (36.55%). A further 15.9% reported referring to the recordings after completing their own recording tasks.

Q3-3: How frequently did you refer to other students’ speaking test recordings?

This follow-up question concerned how often students used referencing strategies. Of the 108 responses, eight reported never referring to other students’ speaking test recordings. Among the remaining responses, 41.3% referred to them frequently, partly because of their own poor oral proficiency or lack of exam experience and partly to evaluate their own performance against that of other students; 34% reported referring to them occasionally. In general, students favoured using other students’ speaking test recordings as references.

Q3-4: How did you manage the high-level speaking test recordings?

Students responded differently to reference materials of different levels. For the high-level speaking test recordings, approximately half of the 108 valid responses said they learned from and imitated the speakers’ ways of answering, their pronunciation and their use of vocabulary, while approximately 24.1% said they would note down the strengths for future reference or memorization.

Q3-5: How did you handle the poor-quality speaking test recordings?

For the poor-quality speaking test recordings, approximately 49.1% thought it worthwhile to reflect on their own potential mistakes or weaknesses and to estimate whether they would make similar mistakes or perform even worse. Around 33.3% said they would skip the low-level responses and listen to others instead.

It is evident from Q3-4 and Q3-5 that participants engaged in self-reflection on their use of learning strategies when presented with varying levels of recordings of speaking tasks. This reflective practice facilitated the participants’ development of task strategies, which corroborates the quantitative research findings.

Q3-6: How did you believe the speaking test recordings of other students assisted you?

This question explored how useful students found other students’ recordings and what they preferred to gain from them. Excluding two invalid responses, the remaining 108 responses were mainly concerned with improving language competence: for example, 35.2% mentioned improving pronunciation and vocabulary, and 39.8% mentioned broadening their ideas to give comprehensive and logical answers in speaking practice.

Q4: Time management: What difficulties did you have in time management?

This item investigated learners’ self-evaluation of their time management skills. Most respondents (85.7%) felt constrained by a lack of free time, which they attributed to a heavy workload at school or in the institute. Some participants reported needing to develop time management skills because of the shift to online learning during the pandemic, as online learning brought more unstructured time and removed the usual time norms for students (Tabvuma et al., 2021). However, only two participants reported that they lacked time management skills and were bad at making detailed plans. This was consistent with the results of the quantitative analysis.

Q5: Help-seeking: Did you contact the instructor or your peers if you had a problem? If so, how frequently? If not, why not?

This item assessed participants’ help-seeking; 73% of the postgraduates said they never or rarely sought assistance while studying on the PLE-IELTS platform. Furthermore, 52% said they were not used to seeking help to improve their SRL skills or to solve problems they encountered. Most of them (62%) also stated that they would seek assistance with assignment due dates and task requirements, or when they encountered difficulties such as not knowing how to answer a question or being unsure of their own pronunciation.

These results indicate that students lacked initiative in seeking help and had limited options for doing so, in line with the questionnaire analysis. Asynchronicity and information overload in online forums hinder effective connections between help-seekers and help-providers (Yang & Stefaniak, 2023). Artificial intelligence tools, such as peer recommenders, could be employed to address this problem and facilitate help-seeking in online learning (Li et al., 2022).

Q6: Self-evaluation: How did you deal with self-management and learning motivation management?

This item examined students’ self-evaluation of their self-management and learning motivation. A lack of learning initiative (46.5%) and of self-discipline (51.2%) were reported as the major factors hindering self-regulation. Whereas the quantitative survey showed improved self-evaluation abilities, these responses point to persistent difficulties; the discrepancy may arise because the questionnaire emphasizes metacognitive awareness, whereas the reflective diary focused on self-management and motivation. Despite the improved self-evaluation scores, the diary thus provided valuable insights into learners’ challenges and more accurate feedback on the learning process.

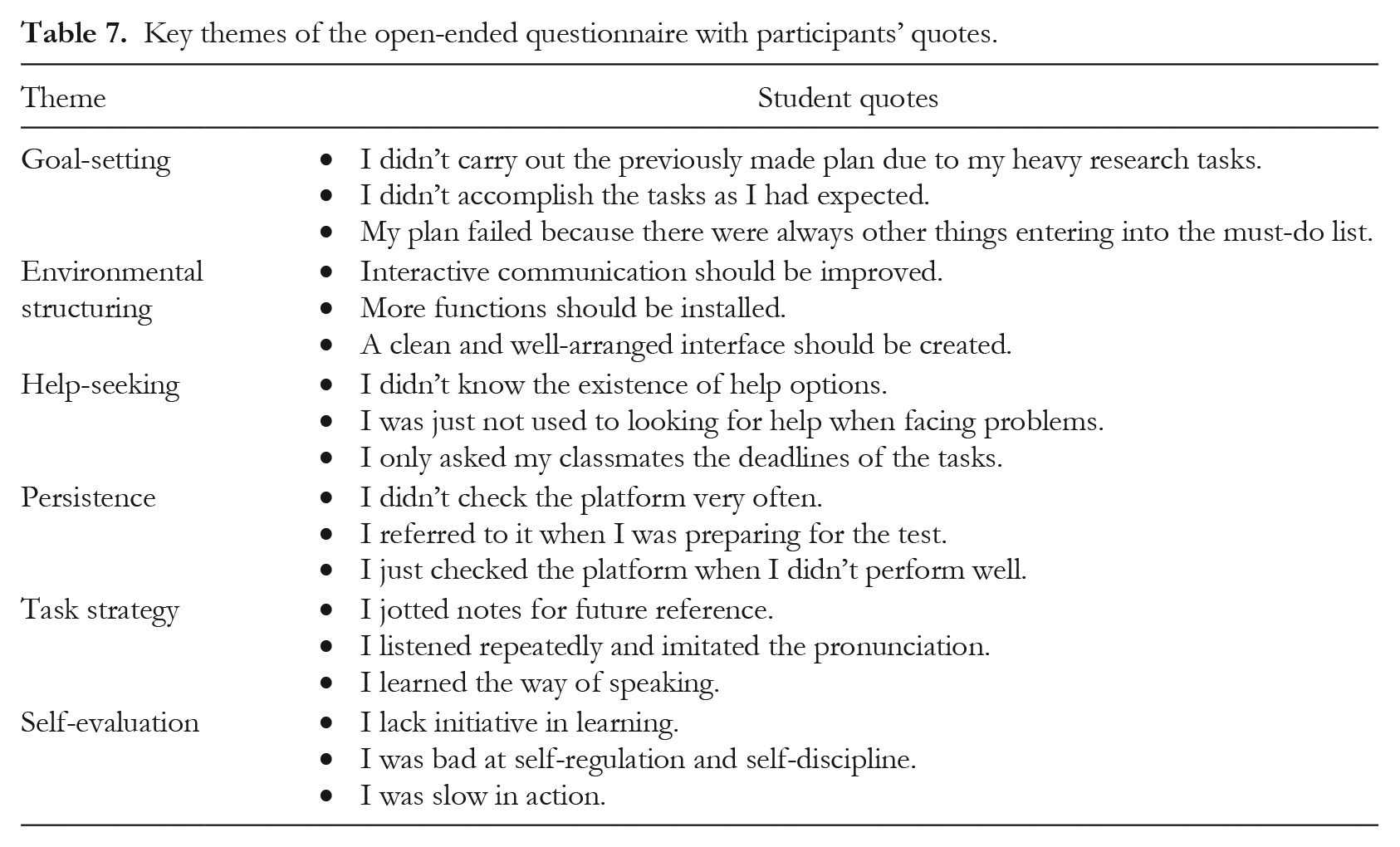

The key themes of the open-ended questionnaire along with some typical quotes from participants are displayed in Table 7.

Key themes of the open-ended questionnaire with participants’ quotes.

In sum, results indicated that time management, help-seeking and self-evaluation skills were areas where learners struggled. PLEs utilizing a system-based learning path and timely feedback (Xu et al., 2023) might address these challenges. PLEs were confirmed to be a promising approach for promoting SRL skills in online learning contexts. To this end, this study contributes to the theoretical understanding of the impact of PLEs on SRL skills in mature students and provides practical guidance to learning platform designers seeking to improve learners’ SRL skills.

Conclusion

During the pandemic, the prevalence of online learning required learners to be equipped with SRL skills in order to engage fully in adaptive learning environments. This study extended SRL and PLE theory by investigating how postgraduates’ SRL skills changed following the design and implementation of a PLE platform. Pre- and post-survey SRL scores were compared using paired-sample t-tests, which revealed significant differences largely confined to the experimental group. This suggests that the PLE-IELTS platform significantly improved the experimental group’s online SRL skills, echoing Nan Cenka et al.’s (2022) study.
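As a reminder of what a paired-sample comparison involves, the sketch below computes the paired t statistic from each learner’s pre- and post-survey scores: the differences d_i = post_i − pre_i are averaged, and the mean difference is divided by its standard error s_d/√n, with n − 1 degrees of freedom. The function name `paired_t` and the scores in the usage lines are hypothetical illustrations, not the study’s data or analysis script. Obtaining a p-value would additionally require the t distribution (for example, `scipy.stats.ttest_rel`).

```python
import math
import statistics


def paired_t(pre, post):
    """Paired-sample t statistic for post - pre differences on the same learners.

    Returns (t, degrees_of_freedom, mean_difference).
    """
    if len(pre) != len(post):
        raise ValueError("pre and post must contain the same learners")
    n = len(pre)
    if n < 2:
        raise ValueError("need at least two paired observations")
    # One difference score per learner: post-survey minus pre-survey.
    diffs = [b - a for a, b in zip(pre, post)]
    mean_diff = statistics.mean(diffs)
    sd_diff = statistics.stdev(diffs)  # sample SD with an n - 1 denominator
    if sd_diff == 0:
        raise ValueError("differences have zero variance; t is undefined")
    t = mean_diff / (sd_diff / math.sqrt(n))  # mean difference / standard error
    return t, n - 1, mean_diff


# Hypothetical SRL scores for four learners (not data from this study).
pre = [1, 2, 3, 4]
post = [2, 4, 5, 7]
t, df, mean_diff = paired_t(pre, post)
print(f"t({df}) = {t:.3f}, mean gain = {mean_diff:.2f}")
```

Pairing each learner with themselves removes between-learner variation from the error term, which is why this test, rather than an independent-samples test, suits a pre/post design within one group.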

Further inferential statistics likewise indicated significant improvements in SRL skills after the experimental group’s prolonged exposure to the researcher-designed PLE platform; no such improvement was found in the control group, which received only traditional face-to-face teaching. The results of the open-ended questionnaire corroborated the quantitative data: the experimental group demonstrated stronger intention and ability in goal-setting than the control group. However, participants reported difficulty with time management owing to their tight schedules and heavy workloads, and they proposed a more personalized and interactive design of the PLE platform to support their self-regulated learning. The participants also showed good SRL skills in task strategies such as self-reflection, referencing and note-taking, but they needed to improve aspects of self-evaluation such as learning initiative and self-discipline. Learners likewise needed to strengthen their help-seeking strategies, including their awareness of and willingness to ask for help and their skill in requesting it. Previous research (Barrot et al., 2021) has shown that SRL skills need to be improved in all online settings, including PLEs. Future designers and instructors should therefore offer more personalized options, materials and procedures to help learners improve their SRL skills.

Limitations and future research

The study had four main limitations. First, the experimental and control groups were not randomized because of university policies and ethical considerations, although allocating classes by enrolment time may have reduced biases arising from non-randomization. Second, the research design could not determine to what extent the PLE platform and/or the instructors’ intervention influenced the experimental group’s goal-setting. Third, the study lasted only nine weeks, so it could not detect the long-term effects of PLE implementation on learners’ SRL skills. Fourth, the exploratory factor analysis (EFA) of the OSLQ did not extract the expected six factors, although the subscales did show high item correlations. Future longitudinal research could investigate the long-term effectiveness of PLEs in improving learners’ SRL skills. PLEs could also serve as an SRL measurement tool that reports each learner’s SRL skill level, and they could be used to model learners according to their SRL strategies.
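The suggestion that a PLE could report each learner’s SRL skill level can be sketched as follows, assuming the platform stores each learner’s OSLQ item responses on a 1-5 Likert scale. Each subscale score is taken as the mean of its items, and subscales below a cut-off are flagged for support. The item IDs, the reduced three-subscale mapping, the `srl_report` function and the 3.0 threshold are all hypothetical; they are not drawn from this study or from the OSLQ’s published scoring.

```python
from statistics import mean

# HYPOTHETICAL item-to-subscale mapping for illustration only; a real report
# would use the OSLQ's published item assignment for all six subscales.
SUBSCALES = {
    "goal_setting": ["q1", "q2"],
    "time_management": ["q3", "q4"],
    "help_seeking": ["q5", "q6"],
}

# HYPOTHETICAL cut-off on a 1-5 Likert scale below which a subscale is flagged.
THRESHOLD = 3.0


def srl_report(responses, subscales=SUBSCALES, threshold=THRESHOLD):
    """Average one learner's item responses per subscale and flag low scores."""
    report = {}
    for name, items in subscales.items():
        score = mean(responses[item] for item in items)
        status = "needs support" if score < threshold else "adequate"
        report[name] = (score, status)
    return report


# One hypothetical learner's responses (not data from this study).
learner = {"q1": 5, "q2": 4, "q3": 2, "q4": 2, "q5": 1, "q6": 3}
for subscale, (score, status) in srl_report(learner).items():
    print(f"{subscale}: {score:.2f} ({status})")
```

Keeping the mapping and threshold as parameters lets a platform substitute the validated item structure, or cut-offs derived from its own data, without changing the reporting logic.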
