Abstract

Non-compliance is a challenge for practitioners serving children with and without disabilities, and many interventions have been developed to increase compliance. The high-probability (high-p) request sequence (HPRS), an antecedent-based intervention grounded in behavioral momentum theory, is one way to increase compliant behavior. HPRS involves presenting two to five easy or known tasks with a high probability of compliance immediately before requesting a task with a low probability of compliance. The purpose of the current meta-analysis was to review the literature from the last 40 years on HPRS as an intervention to improve compliance in children with autism spectrum disorder (ASD). Specifically, we examined the methodological rigor of the high-p single-case research for students with autism, identified the descriptive characteristics of these studies, and estimated treatment effects with Tau-U to determine whether HPRS is an evidence-based practice (EBP) for increasing compliance in children with ASD. Our results showed that HPRS is a very effective practice for increasing compliance in children with ASD (Tau-U = .87) and a promising EBP for this purpose. Implications for future research and practice are discussed.

Keywords

The term behavioral disorders refers to a pattern of disruptive or non-compliant behavior that results in environmental disruptions (Sallese et al., 2023). Children with ASD often demonstrate behaviors that adversely affect their ability to develop quality relationships with peers and adults and that can impair their performance in school and non-school settings (Sesso et al., 2020). To many school professionals and other adults, students with ASD may appear uncooperative or defiant when they do not follow directions, leading to strained interactions and increased use of punitive discipline methods (de Swart et al., 2023). Responding to children with ASD with corrective and reactionary methods often increases rates of challenging behavior, reduces opportunities for prosocial interactions, and places students at risk for adverse long-term mental health outcomes (Common et al., 2019). As such, practitioners need strategies to increase compliance, which refers to following instructions within a specified time after a request (Pitts & Dymond, 2012). Because non-compliance may prevent, delay, or interfere with the acquisition of prosocial behaviors and hinder the development of positive social interactions (Rosales et al., 2020), various interventions have been used to increase compliance in children with ASD. Some of these interventions are consequence-based, such as differential reinforcement (e.g., Cuvo et al., 2010; Lillie et al., 2021), time-out (e.g., Olmi et al., 1997), and extinction (e.g., Cuvo et al., 2010); others are antecedent-based, such as noncontingent reinforcement (e.g., Ingvarsson et al., 2008; Richling et al., 2011), self-management (e.g., Imasaka et al., 2020), reduction in response effort (e.g., Fischetti et al., 2012), and HPRS (e.g., Planer et al., 2018; Rosales et al., 2020).

A high-probability (high-p) request sequence, also called interspersed requests, pre-task requests, or behavioral momentum, is an antecedent-based intervention in which two to five easy or known tasks are presented in quick succession (i.e., high-p requests) immediately before the difficult or target task is requested (i.e., a low-p request; Cooper et al., 2014). This procedure has been shown to affect a broad range of outcomes for children with ASD, including decreasing escape-maintained challenging behavior (Mace & Belfiore, 1990), increasing compliance (e.g., Planer et al., 2018; Rosales et al., 2020), and increasing food acceptance (e.g., Meier et al., 2012; Penrod et al., 2012). Moreover, HPRS has been the subject of several systematic reviews and meta-analyses in the last decade, each demonstrating the effectiveness of the intervention (Brosh et al., 2018; Common et al., 2019; Cowan et al., 2017; Hume et al., 2021; Maag, 2019; Wong et al., 2015). However, the conclusions drawn from the research on HPRS and the compliance of individuals with ASD are not unanimous. In their comprehensive literature review to identify EBPs for individuals with ASD, Wong and colleagues (2015) identified 27 interventions as EBPs. Despite research indicating its effectiveness, behavioral momentum did not meet the EBP criteria because fewer than 20 participants were included across the available single-case research studies, a key criterion.

Given the findings of previous meta-analyses and systematic reviews, the evidence base for HPRS needs to be clarified. Although HPRS has been identified as a promising EBP in some reviews (Common et al., 2019; Maag, 2019; Wong et al., 2015), it has been identified as an EBP in others (Brosh et al., 2018; Steinbrenner et al., 2020). These different determinations likely stem from several issues. First, the use of different design standards across reviews may lead to disparate ratings because of differences in criteria and scoring (Council for Exceptional Children [CEC], 2014; Maggin et al., 2014; Wong et al., 2014). Second, while some reviews examined studies on only a specific group of students (Brosh et al., 2018; Cowan et al., 2017; Steinbrenner et al., 2020), others reviewed studies across a broader range of students (Common et al., 2019; Maag, 2019). Third, some research teams reviewed only studies that used single-case research designs (SCD; Brosh et al., 2018; Cowan et al., 2017; Maag, 2019), while others also included group experimental designs (Common et al., 2019; Wong et al., 2015). Fourth, some reviews focused on challenging and appropriate behaviors rather than on a common dependent variable. Fifth, inclusion criteria varied: some reviews examined research across longer periods, while others included studies from a more condensed timeframe.

The current review aims to address these limitations by (a) including only studies that used SCD, (b) deploying an established and comprehensive rubric for evaluating SCD standards, (c) focusing on a clearly defined dependent variable, compliance, and its relation to a well-operationalized intervention, and (d) sampling literature from a broad period that corresponds with the introduction of new diagnostic criteria for identifying persons with ASD. The purpose of the current meta-analysis, therefore, was to review 40 years of research on high-p request sequences to improve the compliance of children with ASD and to estimate the magnitude of effect across these studies. The following questions were addressed in this meta-analysis:

Method

Study Selection

We identified the studies in two steps. First, in March 2022, we conducted an electronic database search through Academic Search Complete, which allowed us to search multiple databases, including EbscoHost, ProQuest, Sage, Scopus, and Web of Knowledge. We limited the languages to English, German, French, and Turkish to match the languages of the author team. We included only manuscripts published in peer-reviewed journals over a 40-year timeframe (January 1982–December 2021). We excluded gray literature because its systematic identification poses a considerable challenge, as highlighted by Adams and colleagues (2016). Although web-based databases contain master’s theses, dissertations, and certain conference proceedings, other forms of gray literature, such as unpublished manuscripts or project reports, are difficult to locate (Godin et al., 2015). Because research with substantial effect sizes and statistically significant results is more likely to be published in academic journals, this decision creates a potential bias against studies with smaller effects or negative findings (Cook & Therrien, 2017; Gage et al., 2017). Thus, publication bias may have influenced the effect sizes reported in our study.

We started the search from 1982 because that was the first year that ASD was treated as a separate category in the Diagnostic and Statistical Manual of Mental Disorders (3rd ed.; DSM-III; American Psychiatric Association, 1980), which led to an increase in the number of studies focused on persons with ASD. We used the following keywords, alone and in combination: (autis* OR autism OR ASD OR pervasive developmental disorder OR PDD* OR HFA) AND (behavioral momentum OR high-probability OR high probability OR high-p* OR low-p OR task inters*). The database search yielded 2,003 studies.

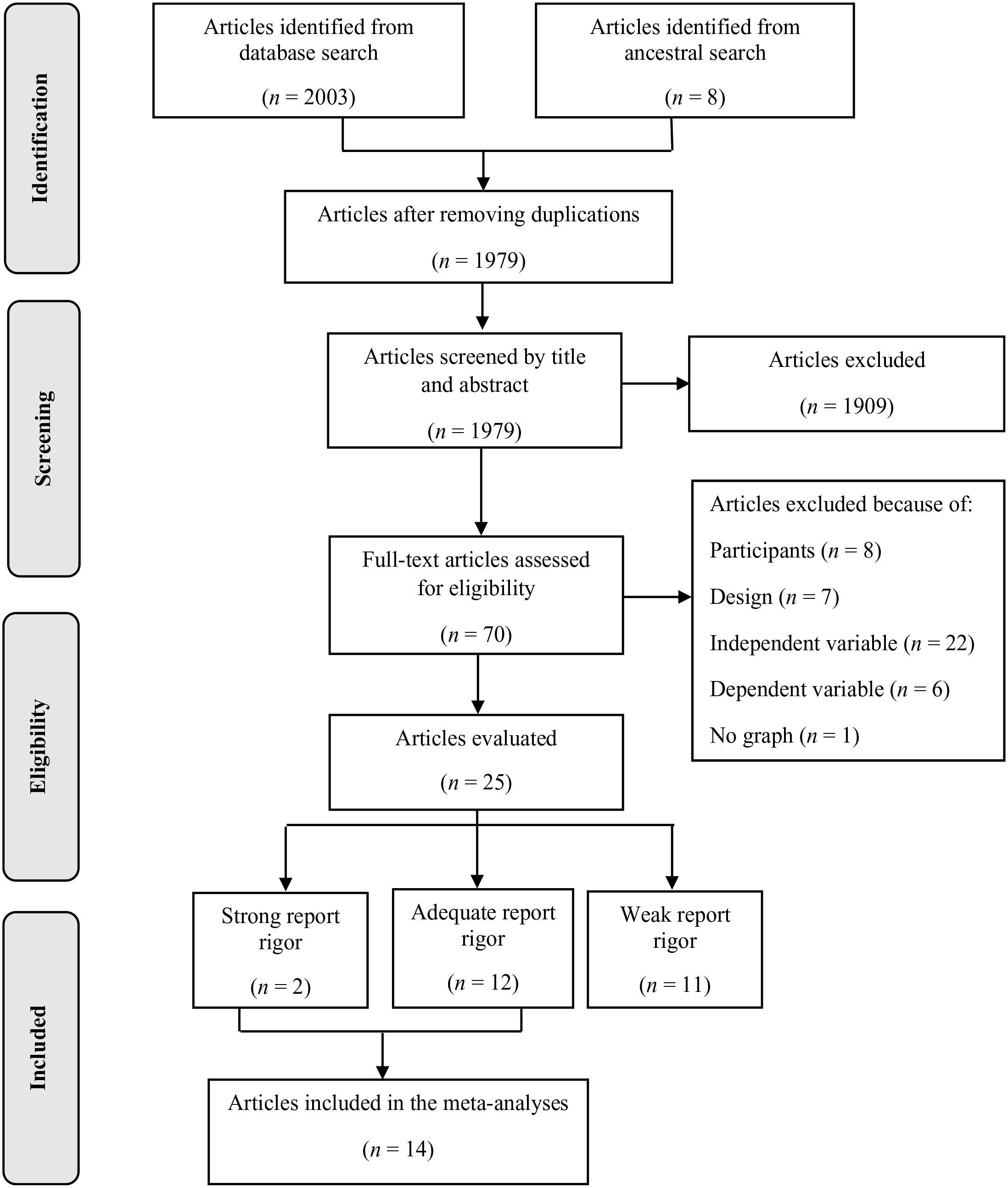

The second step was an ancestral search of the reference lists of published meta-analyses (Cowan et al., 2017), systematic reviews (Brosh et al., 2018; Common et al., 2019; Maag, 2019), and reports on HPRS, which yielded eight additional studies. After removing duplicates (n = 32), 1,979 studies remained for title and abstract screening. Two authors carried out both the electronic database search and the ancestral search simultaneously but independently.

Inclusion Criteria and Screening Process

The inclusion criteria for the current review were as follows: (a) at least one participant had an ASD diagnosis, (b) participants were between 0 and 22 years of age, (c) an experimental SCD was used, (d) the primary independent variable was either behavioral momentum or HPRS, and (e) the primary dependent variable was compliance. We excluded studies that (a) had participants without disabilities or with a diagnosis other than ASD, (b) had participants older than 22 years, (c) used a changing criterion design or a design that does not allow for experimental control (e.g., AB, ABA, BAB), (d) did not contain graphs or data that allow calculation of effect size, or (e) used behavioral momentum or HPRS as part of an intervention package. Of the 1,979 studies identified during the search process, 1,909 were ineligible; we therefore passed 70 studies to the full-text review, where we applied the inclusion criteria in greater detail.

The first and last authors completed each screening step independently and involved the third author when mediation was needed. During the full-text review, we removed 45 studies because they did not include at least one participant with ASD (n = 7), included participants older than 22 years (n = 1), used an SCD that did not allow for experimental control (n = 7), used HPRS as part of an intervention package rather than as an isolated independent variable (n = 22), did not target compliance (n = 6), or did not contain a graph allowing the calculation of effect size (n = 2). Following the full-text review, 25 studies remained for further analysis.

Coding Process

Following the identification process, we coded each of the 25 remaining studies to describe key study characteristics related to student demographics, qualities of the intervention, and details related to the study. We also examined methodological aspects of the studies to determine the overall strength of the inferences drawn from this body of research. We describe the coding process in the following sections.

Quality Appraisal

To assess the methodological quality of the 25 studies in this review, we employed the evaluative method developed specifically for individuals with ASD by Reichow and colleagues (2008). This method includes three instruments: (a) a rubric for evaluating research rigor, (b) guidelines for evaluating research report strength, and (c) criteria for determining whether an intervention is an EBP. We selected this method because it was developed specifically for individuals with ASD and addresses the strength of reporting as well as methodological quality. The method considers both primary and secondary quality indicators. Primary quality indicators (participant characteristics, independent variable, baseline condition, dependent variable, visual analysis, experimental control) are critical to the validity of the research and are rated on a trichotomous scale (high, acceptable, or unacceptable quality). In contrast, secondary quality indicators (inter-observer agreement [IOA], kappa, blind coding, fidelity, generalization/maintenance, social validity) are important but not considered necessary to ensure the validity of the research; they are rated on a dichotomous scale (evidence/no evidence). Reichow (2011) and Reichow and colleagues (2008) provide detailed descriptions and operational definitions for each quality indicator.

Reichow and colleagues’ (2008) guidelines for evaluating research report strength synthesize the aforementioned ratings into an overall assessment of the research report’s strength, which is rated at three levels: strong, adequate, and weak. A single-case study is rated strong when all primary quality indicators are rated “high quality” and three or more secondary quality indicators are met. It is rated adequate when at least four primary quality indicators are rated “high quality,” none is rated “unacceptable,” and at least two secondary quality indicators are met. It is rated weak when fewer than four primary quality indicators are rated “high quality” or fewer than two secondary indicators are met. The first and second authors rated all included studies on the quality indicators simultaneously but independently.
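The report-strength rules above can be expressed as a short decision procedure. The following is a minimal sketch, assuming each study is coded as a list of six primary ratings ("high", "acceptable", or "unacceptable") plus a count of secondary indicators met; the function name and input format are hypothetical. The text does not name a category for a study with four or more "high" ratings that also has an "unacceptable" rating, so the sketch treats that case as weak (an assumption).

```python
from typing import Sequence


def report_strength(primary_ratings: Sequence[str], secondary_met: int) -> str:
    """Classify a single-case study as 'strong', 'adequate', or 'weak'.

    Hypothetical helper: primary_ratings holds one rating per primary quality
    indicator; secondary_met is the number of secondary indicators met.
    """
    n_high = sum(rating == "high" for rating in primary_ratings)
    any_unacceptable = "unacceptable" in primary_ratings

    # Strong: every primary indicator rated high and 3+ secondary indicators met.
    if n_high == len(primary_ratings) and secondary_met >= 3:
        return "strong"
    # Adequate: 4+ high ratings, no unacceptable rating, 2+ secondary indicators met.
    if n_high >= 4 and not any_unacceptable and secondary_met >= 2:
        return "adequate"
    # Everything else is treated as weak (assumption for uncovered cases).
    return "weak"
```

For example, `report_strength(["high"] * 6, 2)` returns `"adequate"`: all primary indicators are high, but only two secondary indicators are met, which falls short of the three required for a strong rating.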

Descriptive Data Extraction

We developed a coding form for extracting descriptive data from the studies. The coding form included variables related to participant characteristics (disability status, age, gender, and intervention agent), intervention features (setting, dependent and independent variables, acquisition, maintenance, generalization, and social validity), and study characteristics (geographic location and journal of publication). The first and last authors independently extracted descriptive data using this coding form and resolved disagreements through mediation.

Treatment Effect Calculation

In single-case research, effect sizes are calculated to determine the magnitude of change associated with an intervention (Vannest & Ninci, 2015). In the current study, we used Tau for nonoverlap with baseline trend control (Tau-U; Parker et al., 2011) to estimate effect sizes for methodologically sound studies. Although there is disagreement about which effect size calculation method is most valid, emerging evidence indicates that Tau-U is widely used and addresses the often-overlooked issue of baseline trend (Fingerhut et al., 2021).

The first and second authors independently calculated effect size estimates for the studies. First, they digitized the data using WebPlotDigitizer 4.5 (Rohatgi, 2021), an open-source digitizing program. To do so, we converted the graphs into image files (.png), uploaded them into the software, assigned values to the x- and y-axes, and marked each data point represented on the graphs. We then transferred the raw data into Microsoft Excel™, generated new graphs from the extracted data, and compared them with the original graphs using Pixlr-X to detect any errors made during digitization. Specifically, we overlaid the reproduced graphs on the originals, made translucent with Pixlr-X, to check whether all data points overlapped, and compared all digitized raw data and reproduced graphs against the originals.
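Assigning values to the x- and y-axes amounts to calibrating each axis from two reference points and then converting every marked pixel to a data value. The following is a hypothetical sketch of that idea for linear, image-aligned axes; it is not WebPlotDigitizer's own implementation, which supports additional axis types, and the pixel positions below are made up for illustration.

```python
def make_axis(pixel_a: float, value_a: float, pixel_b: float, value_b: float):
    """Return a function mapping a pixel coordinate to a data value.

    Assumes a linear axis calibrated from two reference points, e.g., the
    pixel positions of the 0% and 100% tick marks.
    """
    def to_value(pixel: float) -> float:
        # Linear interpolation between the two calibration points.
        return value_a + (pixel - pixel_a) * (value_b - value_a) / (pixel_b - pixel_a)
    return to_value


# Made-up calibration: y pixel 400 is 0% compliance, y pixel 100 is 100%;
# x pixel 50 is session 1, x pixel 550 is session 11.
to_percent = make_axis(400, 0, 100, 100)
to_session = make_axis(50, 1, 550, 11)

# Pixel positions of three marked data points as (x_pixel, y_pixel).
clicked_points = [(50, 400), (300, 250), (550, 100)]
digitized = [(to_session(x), to_percent(y)) for x, y in clicked_points]
```

Here `digitized` holds (session, percentage) pairs, `(1.0, 0.0)`, `(6.0, 50.0)`, and `(11.0, 100.0)`, which could then be regraphed and overlaid on the original figure as a check.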

In the next step, we entered the raw data into a free web-based Tau-U calculator (http://singlecaseresearch.org/calculators/tau-u) and calculated effect sizes for each graph. The Tau-U formula is described in previous publications (Parker et al., 2011; Rakap et al., 2020). We used the guidelines provided by Parker and colleagues (2011) to interpret the treatment effect estimates. Scores can range from −1.0 to 1.0. According to these guidelines, scores higher than .80 indicate a considerable change, scores between .70 and .79 a significant change, scores between .20 and .60 a moderate change, and scores below .20 a small change.
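The core of the Tau-U computation can be sketched in a few lines. This minimal sketch assumes the commonly cited "A vs. B minus trend A" form: every baseline point is compared with every treatment point (S_AB), the within-baseline Kendall S (S_A) is subtracted to control for baseline trend, and the result is divided by the number of A-B pairs. It is illustrative only; the online calculator used in this study may handle details such as variance estimation and aggregation across phases differently, and the function names are hypothetical.

```python
from itertools import combinations
from typing import Sequence, Tuple


def sign(x: float) -> int:
    """Return 1, 0, or -1 according to the sign of x (ties score 0)."""
    return (x > 0) - (x < 0)


def kendall_s(phase: Sequence[float]) -> int:
    """Kendall's S for monotonic trend within one phase (later minus earlier points)."""
    return sum(sign(later - earlier) for earlier, later in combinations(phase, 2))


def tau_u(baseline: Sequence[float], treatment: Sequence[float],
          control_trend: bool = True) -> Tuple[float, int]:
    """Return (Tau-U, number of A-B pairs) for one AB comparison."""
    n_pairs = len(baseline) * len(treatment)
    # Nonoverlap: compare every treatment point with every baseline point.
    s_ab = sum(sign(b - a) for a in baseline for b in treatment)
    # Baseline trend control: subtract the monotonic trend already present in A.
    s = s_ab - kendall_s(baseline) if control_trend else s_ab
    return s / n_pairs, n_pairs
```

For a baseline of 10%, 20%, 15% and a treatment phase of 60%, 80%, 90%, 85%, all 12 A-B pairs show improvement (S_AB = 12) and the baseline has a slight upward trend (S_A = 1), so `tau_u` returns 11/12 ≈ .92 with trend control and 1.0 without it. In this simple form, the trend-corrected value can fall outside ±1 when the baseline trend runs opposite to the treatment effect.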

EBP Status Determination

Using the evaluative method (Reichow et al., 2008), we assessed the status of HPRS as an EBP. The evaluative method includes two levels of EBP: established EBPs and promising EBPs. These levels can be determined from the ratings of single-case and group experimental studies, alone or in combination, by calculating a Z score, which provides an overall measure of the evidence for the intervention. Because we included only single-case research, we calculated the Z score using the formula Z = (SCD_S × 4) + (SCD_A × 2), where SCD_S is the number of participants for whom the intervention was effective in studies with a strong rating and SCD_A is the number of participants for whom the intervention was effective in studies with an adequate rating. An established EBP is an intervention with a Z score of 60 or more whose effectiveness has been demonstrated across at least five studies with sound methodology. Moreover, to categorize an EBP as established, the research must have been carried out with at least 15 participants by three or more independent research groups at three different locations. Alternatively, an established EBP may be supported by 10 studies with adequate ratings, conducted with at least 30 participants by three research groups at three sites. A promising EBP is an intervention with a Z score between 31 and 59 that has shown effectiveness across five studies involving 16 participants, conducted by two research groups at two locations.
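The Z score itself is simple arithmetic, sketched below. This covers only the Z-score component of the criteria; the additional requirements on the number of studies, participants, research groups, and locations are not encoded and would need to be checked separately. The function names and the "not yet an EBP" label are hypothetical choices for this sketch.

```python
def z_score(strong_participants: int, adequate_participants: int) -> int:
    """Z = (SCD_S x 4) + (SCD_A x 2) for single-case studies only.

    strong_participants: participants for whom the intervention was effective
    in studies with a strong rating (SCD_S).
    adequate_participants: the same count for studies with an adequate rating (SCD_A).
    """
    return strong_participants * 4 + adequate_participants * 2


def level_from_z(z: int) -> str:
    """Map a Z score to an EBP level using only the Z thresholds."""
    if z >= 60:
        return "established"
    if 31 <= z <= 59:
        return "promising"
    return "not yet an EBP"  # hypothetical label for scores below 31
```

With the participant counts reported in the Evidence-Base Status results (2 from strong studies, 15 from adequate studies), `z_score(2, 15)` gives 38, and `level_from_z(38)` returns `"promising"`.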

Inter-Rater Reliability

All phases of this meta-analysis were carried out independently by at least two authors. After each step, the authors met to discuss disagreements; when consensus could not be reached, they sought the input of the third author. They did not proceed to the next step until agreement was reached. Disagreements that were later resolved by consensus were still counted as disagreements when calculating inter-rater reliability.

During the database search, two authors conducted the electronic search simultaneously, took screenshots of the results, and each recorded the number of articles retrieved. They then calculated the reliability coefficient using the formula (Smaller number/Larger number) × 100 (Ledford et al., 2019), obtaining a reliability coefficient of 100%. Two authors independently completed the screening (title and abstract), full-text review, quality appraisal, descriptive data extraction, and effect size calculation steps. For these steps, we calculated inter-rater reliability using the formula [Agreements/(Agreements + Disagreements)] × 100 (Ledford et al., 2019). The inter-rater reliability coefficients were 99.3% for screening, 89.9% for full-text review, 98.2% for quality appraisal, 99.8% for descriptive data extraction, and 100% for effect size calculation.
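The two reliability formulas can be sketched as follows. This is a minimal illustration, assuming that each rater's item-level decisions (e.g., include/exclude) are stored in parallel lists; the function names are hypothetical. As described above, a disagreement later resolved by consensus would still be recorded as a disagreement in these lists.

```python
from typing import Sequence


def total_count_agreement(count_1: int, count_2: int) -> float:
    """(Smaller number / Larger number) x 100, e.g., for two raters' search totals."""
    small, large = sorted((count_1, count_2))
    # Two zero counts are treated as perfect agreement.
    return 100.0 if large == 0 else small / large * 100


def point_by_point_agreement(codes_1: Sequence[str], codes_2: Sequence[str]) -> float:
    """[Agreements / (Agreements + Disagreements)] x 100 over item-by-item codes."""
    if len(codes_1) != len(codes_2) or not codes_1:
        raise ValueError("Both raters must code the same, non-empty set of items")
    agreements = sum(a == b for a, b in zip(codes_1, codes_2))
    # Agreements + disagreements equals the total number of coded items.
    return agreements / len(codes_1) * 100
```

For example, if both authors record 2,003 database hits, `total_count_agreement(2003, 2003)` returns 100.0; if two raters agree on three of four screening decisions, `point_by_point_agreement` returns 75.0.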

Results

Figure 1 presents the search procedure, screening, and eligibility flowchart. We identified a total of 2,011 studies in the electronic and ancestral search. After removing duplications and title and abstract screening, 70 studies remained for full-text review, of which 25 met the inclusion criteria.

Meta-Analysis Flowchart.

Quality Appraisal

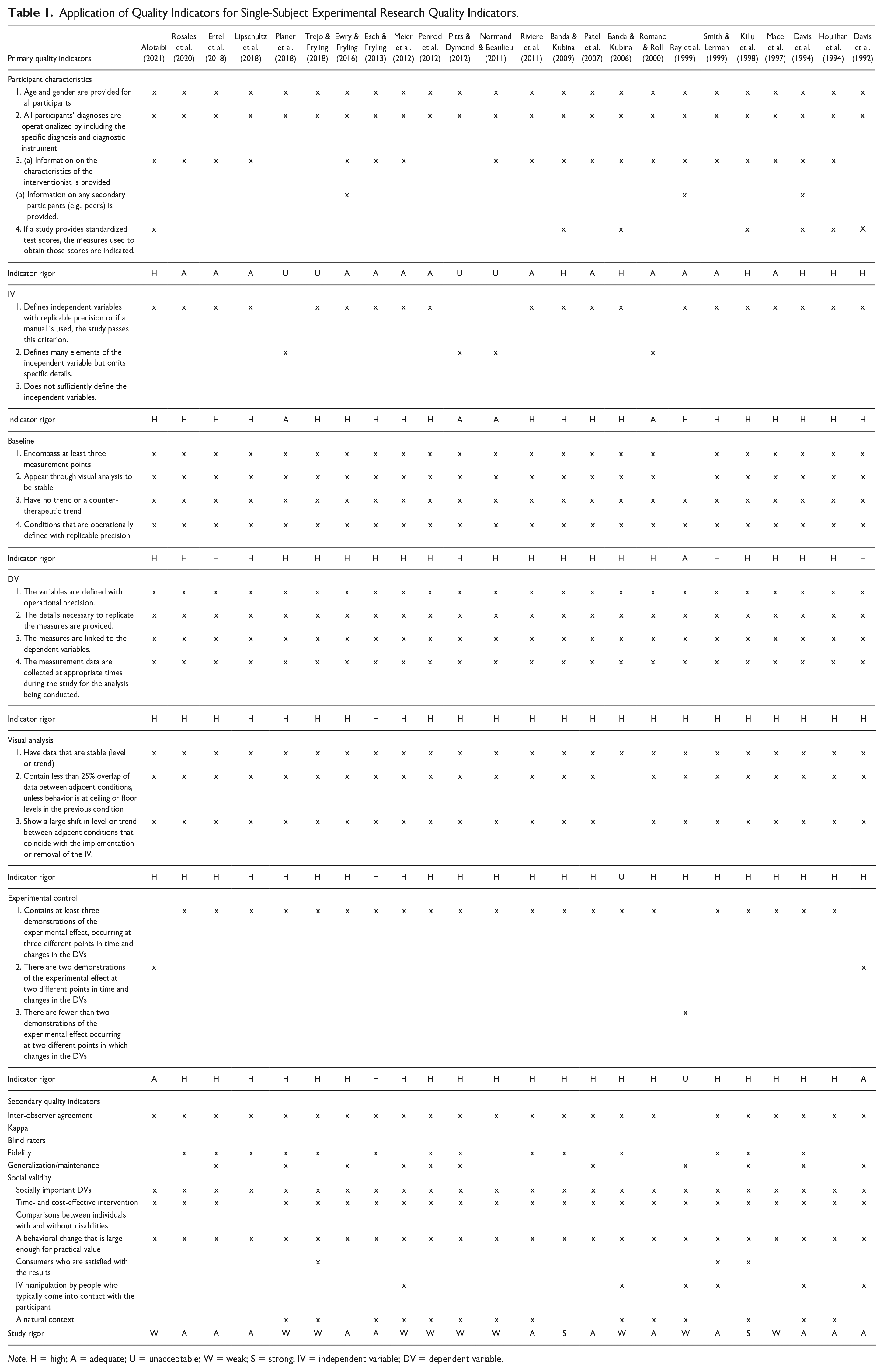

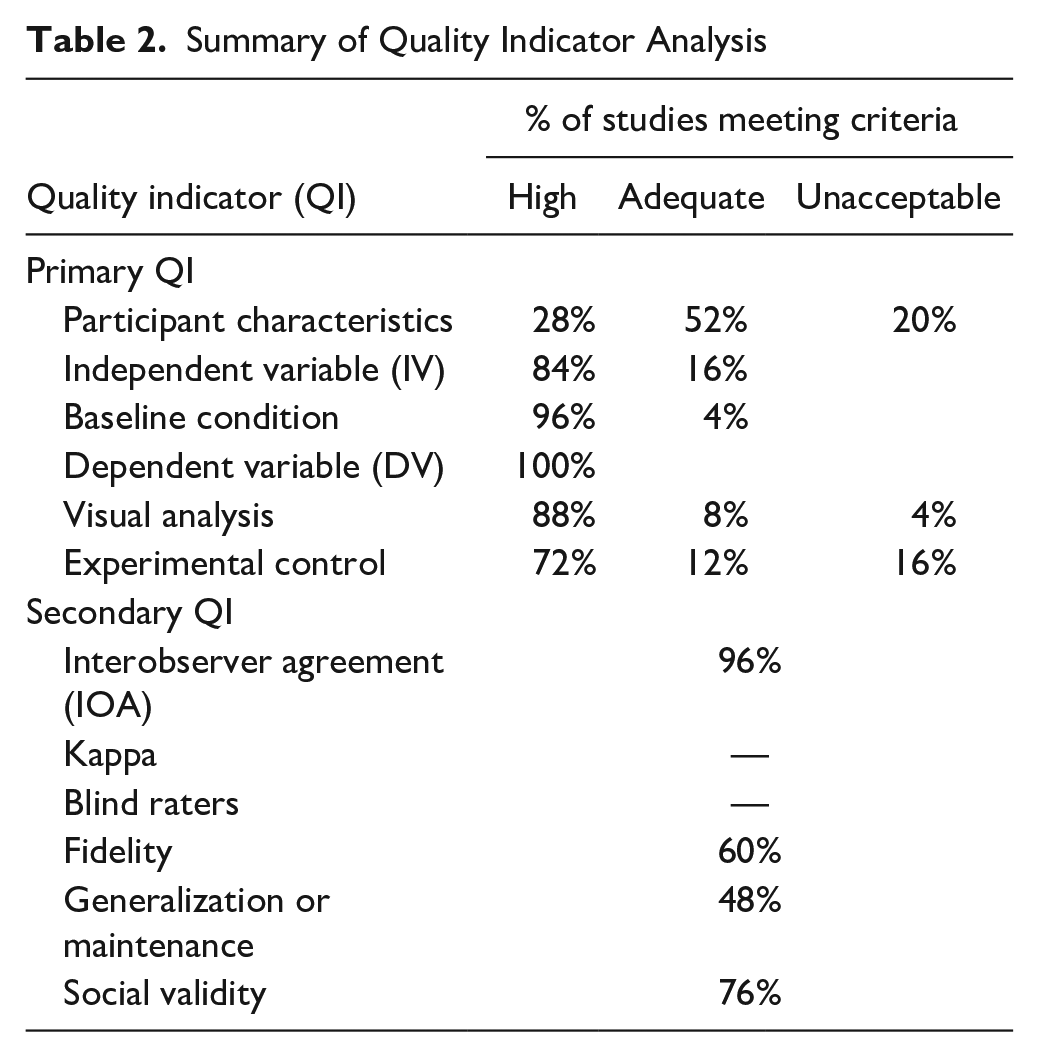

We evaluated the 25 studies using the evaluative method for determining evidence-based practices in autism (Reichow et al., 2008). We rated two studies as strong, 12 as adequate, and 11 as weak in research report strength. Detailed information about each study’s research rigor is presented in Table 1, and Table 2 summarizes the evaluation results.

Application of Quality Indicators for Single-Subject Experimental Research Quality Indicators.

Note. H = high; A = adequate; U = unacceptable; W = weak; S = strong; IV = independent variable; DV = dependent variable.

Summary of Quality Indicator Analysis

Regarding the primary indicators, approximately one quarter of the studies (n = 7, 28.00%) received a high rating on the quality indicator associated with participant characteristics, and just over half (n = 13, 52.00%) were rated acceptable. Five studies (Kelly & Holloway, 2015; Normand & Beaulieu, 2011; Pitts & Dymond, 2012; Planer et al., 2018; Trejo & Fryling, 2018) did not provide one or more of the following: diagnostic information about the participants, behavioral characteristics of the participants, standard test scores, and intervention agent. Most studies provided detailed (n = 21; 84.00%) or sufficient (n = 4; 16.00%) information about the independent variable; no study received an unacceptable rating on this quality indicator. Almost all studies received a high rating on the quality indicator associated with the baseline condition (n = 24; 96.00%), whereas only one study (Ray et al., 1999) was rated acceptable (n = 1, 4.00%). All studies received high ratings on the dependent variable quality indicator. For visual analysis, a large majority of the studies received a high rating (n = 22, 88.00%), while two studies (Lipschultz et al., 2018; Ray et al., 1999) were rated acceptable and one study (Banda & Kubina, 2006) was rated unacceptable because more than 25% of data points overlapped between baseline and intervention conditions. On the last primary indicator, experimental control, most studies received either a high (n = 18, 72.00%) or acceptable (n = 3, 12.00%) rating. A few studies received an unacceptable rating (n = 4, 16.00%) for using an AB design (Ray et al., 1999) or a non-concurrent baseline design (Meier et al., 2012; Trejo & Fryling, 2018), or because the experimental procedure was implemented under non-standard conditions (Mace et al., 1997; Penrod et al., 2012).

Regarding the secondary quality indicators, nearly all studies (n = 24, 96.00%) collected IOA data; one did not (Ray et al., 1999). No study calculated kappa or reported the use of blind raters (it is unclear whether these procedures were not implemented or simply not reported). While most studies (n = 14, 56.00%) collected procedural or treatment fidelity data, fewer than half (n = 12, 48.00%) conducted generalization or maintenance sessions. Evidence of social validity was found in approximately three quarters of the studies (n = 19, 76.00%).

Descriptive Characteristics

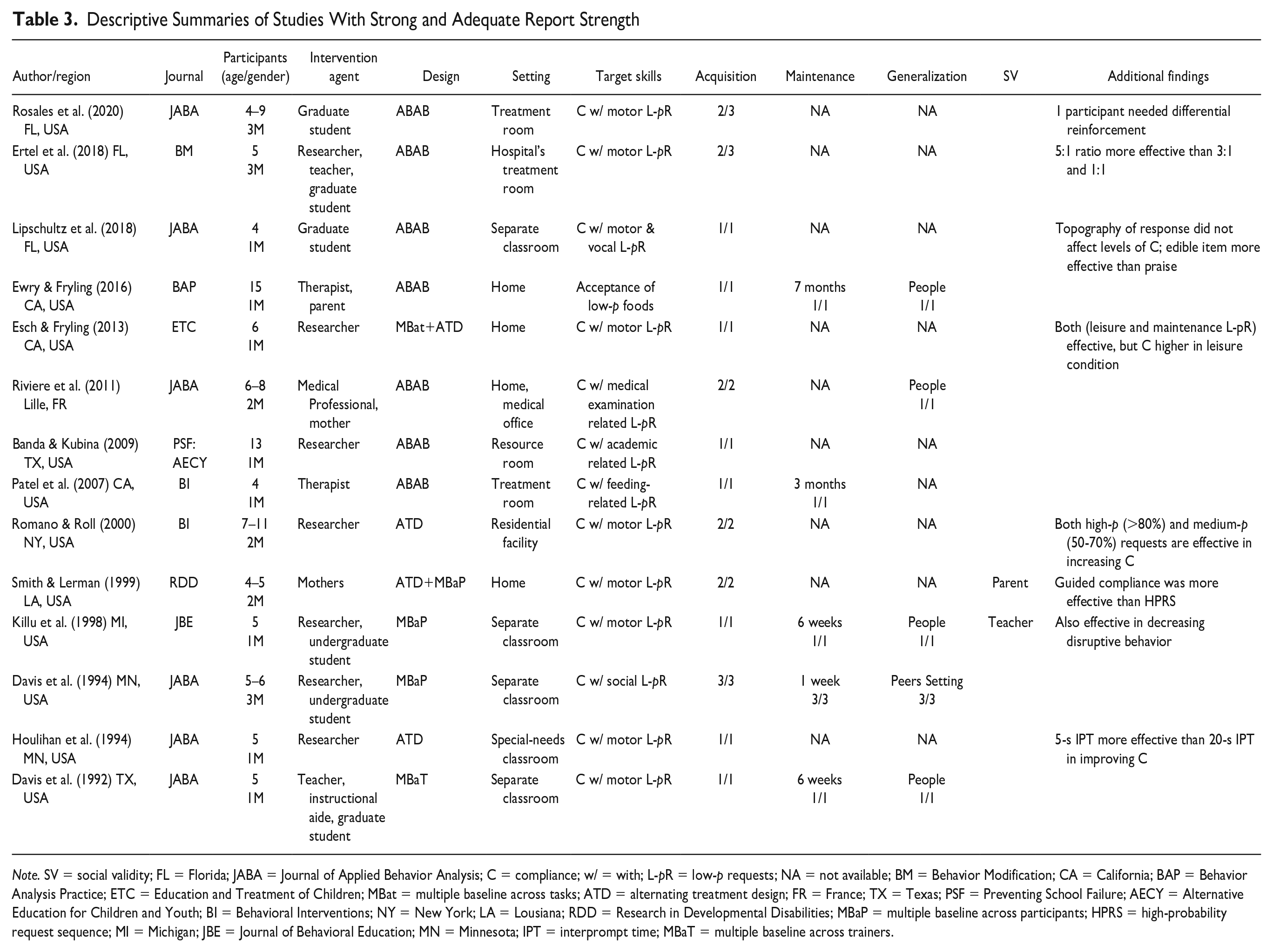

Descriptive characteristics include the main features of the studies, participants, and interventions. To describe the studies that allow for the strongest causal inferences, we extracted descriptive data only from studies rated by the evaluative method as having strong or adequate report strength. In total, 14 studies, representing 19 participants, were rated strong or adequate. As such, the remainder of the results are based on these 14 studies and 19 participants. Table 3 presents the descriptive information for these studies.

Descriptive Summaries of Studies With Strong and Adequate Report Strength

Note. SV = social validity; FL = Florida; JABA = Journal of Applied Behavior Analysis; C = compliance; w/ = with; L-pR = low-p requests; NA = not available; BM = Behavior Modification; CA = California; BAP = Behavior Analysis in Practice; ETC = Education and Treatment of Children; MBat = multiple baseline across tasks; ATD = alternating treatment design; FR = France; TX = Texas; PSF = Preventing School Failure; AECY = Alternative Education for Children and Youth; BI = Behavioral Interventions; NY = New York; LA = Louisiana; RDD = Research in Developmental Disabilities; MBaP = multiple baseline across participants; HPRS = high-probability request sequence; MI = Michigan; JBE = Journal of Behavioral Education; MN = Minnesota; IPT = interprompt time; MBaT = multiple baseline across trainers.

Study Characteristics

All studies except for one (Riviere et al., 2011) originated in the United States (n = 13, 92.86%). Those 13 studies were conducted in the states of California (n = 4), Florida (n = 3), Texas (n = 2), Minnesota (n = 2), Michigan (n = 1), and New York (n = 1). The most common outlet for these studies was the Journal of Applied Behavior Analysis (n = 6, 42.86%), while the next most common journal was Research in Developmental Disabilities (n = 2, 14.29%). Other journals included Behavior Modification, Education and Treatment of Children, Preventing School Failure, and the Journal of Behavioral Education.

Participant Characteristics

ASD Participants

Nineteen participants ages 4 to 15 (mean age = 6.5) were included across the studies used for this review. Most of the participants (n = 10, 52.63%) were preschool-age (3–5 years), approximately one-third were primary school-age (6–10 years; n = 6, 31.58%), and the remaining were secondary school-age (11–15 years; n = 3, 15.79%) children. All 19 participants were males and approximately half were diagnosed with ASD as the sole disability (n = 10, 52.63%). The remaining nine students were identified with comorbidity, including intellectual disability (n = 5, 26.32%), intellectual disability and speech and language disorder (n = 3, 15.79%), and developmental delay (n = 1, 5.26%).

Intervention Agent

In eight studies (57.14%), a research team member served as the primary intervention agent. The implementers of these eight studies were researchers (n = 4, 28.57%), graduate students (n = 2, 14.29%), and undergraduate students (n = 2, 14.29%). In the remaining studies, the interventionists were the teacher and instructional aide (n = 2, 14.29%), a therapist (n = 1, 7.14%), a mother (n = 1, 7.14%), a medical professional (n = 1, 7.14%), and a parent-therapist pair (n = 1, 7.14%).

Intervention Characteristics

Settings

Nearly all the studies were conducted in separate settings that allowed greater control over implementation. The most common setting was a resource room (n = 6, 42.86%), and approximately one fifth of the studies were conducted in the participants’ homes (n = 3, 21.43%). Other settings included treatment rooms in a children’s hospital (n = 1; 7.14%), specialized treatment rooms (n = 1; 7.14%), a special needs preschool classroom (n = 1; 7.14%), and a residential facility (n = 1; 7.14%). One study was implemented in medical offices or participants’ homes (n = 1; 7.14%).

Research Design

In half of the studies, the research team used a withdrawal design (n = 7, 50.00%) to determine whether a functional relation was present, with multiple baseline as the next most common design (n = 3, 21.43%). In addition, the research included instances of an alternating treatment design (n = 2, 14.29%) and a nested design with multiple baseline and alternating treatment designs used concurrently (n = 2, 14.29%).

Independent Variable

Different labels were used for the independent variable. Half of the studies used the label “high-p instructional sequence” (n = 7, 50.00%), while in about a third of studies, the label “high-p request sequence” (n = 5, 35.71%) was used. “High-p intervention” and “high-p request intervention” were other labels used in the studies. Some studies compared variations of the independent variable. One study compared three ratios of high-p with low-p instructions (5:1, 3:1, 1:1; Ertel et al., 2018). Esch and Fryling (2013) conducted a study comparing two variations of high-p sequence (maintenance and leisure high-p tasks) on compliance. In another study, the effects of different inter-prompt times (5 s and 20 s) on compliance were assessed (Houlihan et al., 1994). Romano and Roll (2000) set two levels, high-p (>80%) and medium-p (50–70%) requests. In another study, the effectiveness of guided compliance and HPRS was compared (Smith & Lerman, 1999).

Dependent Variable

Across the included studies, the primary dependent variables included the percentage of compliance with low-p instructions/requests (n = 9, 64.29%) and latency to initiate a mathematics problem (n = 1, 7.14%). Most studies targeted low-p motor requests, such as “Stand up,” “Give me the phone,” or “Put the toy in the box” (n = 9, 64.29%). Some studies assessed the effects of HPRS on two or more variables. For example, Ewry and Fryling (2016) evaluated the effects of HPRS on the percentage of bites accepted and the percentage of steps correctly performed by parents. Killu et al. (1998) assessed disruptive behavior in addition to the number of responses to low-p requests. Davis et al. (1994) evaluated the effects of HPRS on the percentage of low-p requests performed, the percentage of time interacting, and prompted initiations of social behavior per minute. Smith and Lerman (1999) examined the effects of HPRS on the rate of compliance with low-p requests and correctly implemented treatment components.

Outcomes

For all participants with ASD except for two, HPRS effectively increased compliance (n = 17, 89.47%). The authors of five studies (35.71%) collected maintenance data for a total of seven participants (36.84%). Results of these studies demonstrated that the acquired skills were maintained between 1 week and nearly 7 months after completion of the intervention. Similarly, the authors of five studies (35.71%) examined the generalization of target skills across either participants or settings. Four participants across four studies demonstrated the ability to generalize the target skills to new or familiar people (Davis et al., 1992; Ewry & Fryling, 2016; Killu et al., 1998; Riviere et al., 2011). In one study, three participants generalized the target skills across peers and settings (Davis et al., 1994). A small proportion of studies collected social validity data (n = 2, 14.29%). These studies collected social validity data from teachers (Killu et al., 1998) or parents (Smith & Lerman, 1999). In one study, parents reported satisfaction and positive feedback for HPRS (Smith & Lerman, 1999). In the second study, teachers and teaching assistants indicated that the HPRS effectively increased compliant responses to requests, decreased disruptive behavior, and improved positive student response to requests (Killu et al., 1998).

Several studies yielded notable findings, which we summarize narratively here. In a study evaluating three ratios of high-p to low-p instructions (5:1, 3:1, 1:1), the 5:1 ratio (i.e., one low-p request after five high-p requests) was the most effective (Ertel et al., 2018). In another study, HPRS was effective with both leisure (e.g., “Drive your monster truck down the ramp,” “Fly your airplane,” and “Turn on the movie”) and maintenance (e.g., “Sit down,” “Clap your hands,” and “Give Mom a hug”) high-p requests, although compliance was higher in the leisure condition (Esch & Fryling, 2013). Houlihan and colleagues (1994) compared the effects of different inter-prompt times (5 s and 20 s) on compliance and found the 5-s inter-prompt time more effective. Another study found that delivering an edible item contingent on compliance with each high-p request was more effective than praise in improving compliance with low-p requests; this study also showed that the topography of the response required by the high-p requests did not affect compliance levels (Lipschultz et al., 2018). Romano and Roll (2000) found that both high-p (>80%) and medium-p (50–70%) requests were effective in increasing compliance. In another study, HPRS was not effective for one participant, for whom differential reinforcement was needed to improve compliance (Rosales et al., 2020). Finally, Smith and Lerman (1999) compared HPRS with guided compliance and found that both interventions were effective, although guided compliance was more effective.

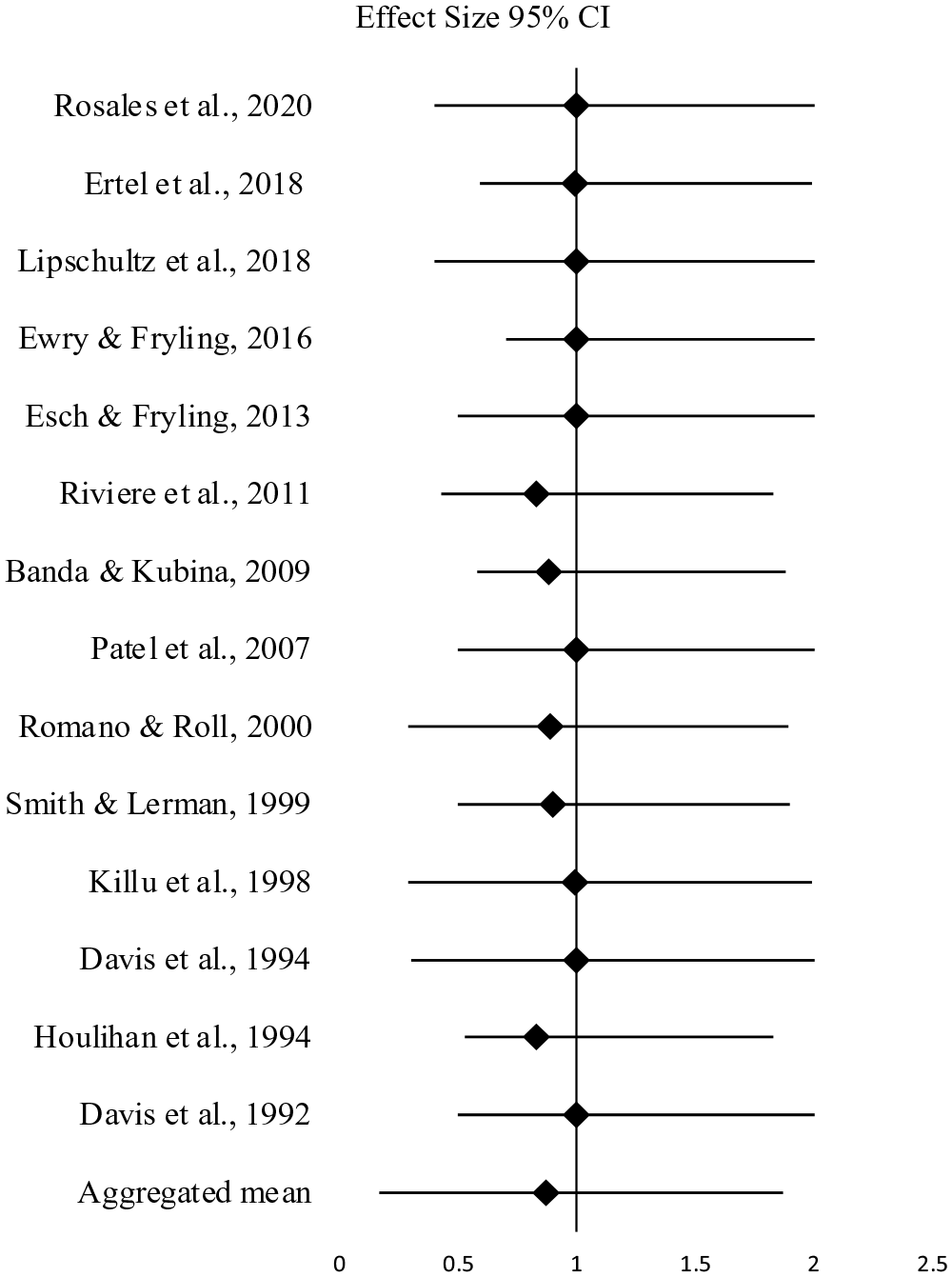

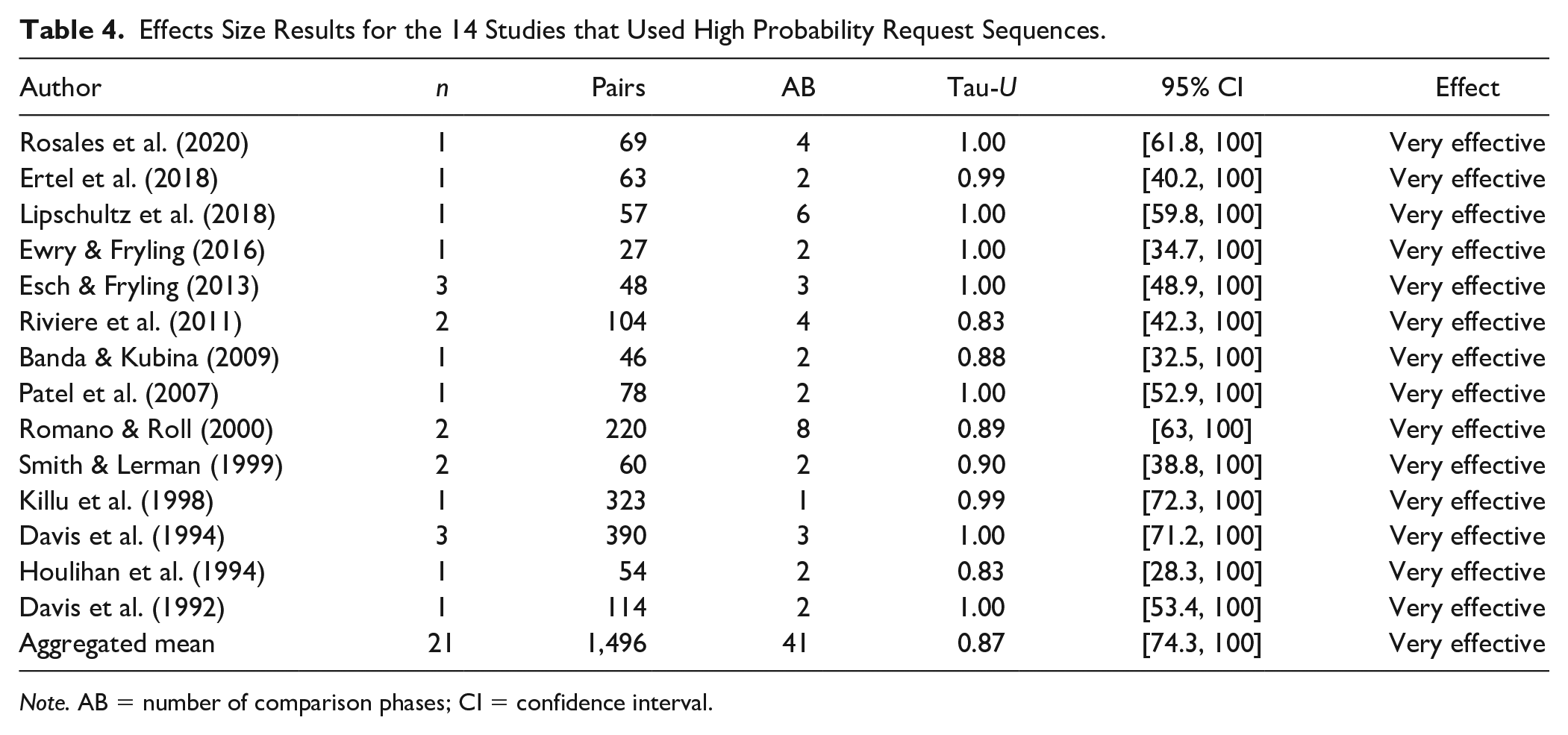

Effect Size

No study included in this meta-analysis reported an effect size estimate. Therefore, we calculated a Tau-U effect size for each study and an aggregated effect size across all studies. Treatment effect estimates indicated that HPRS was very effective across studies. Figure 2 plots the effect size for each study rated as providing strong or adequate evidence and shows a consistent, strong effect across studies. As shown in Table 4, the aggregated mean Tau-U estimate for the 14 studies, based on 1,496 comparisons across 41 AB phases, was .87 (range = .83–1.00), which indicates a very effective treatment. By implementer, the mean effect size was .91 for parent-implemented interventions (e.g., Ewry & Fryling, 2016; Riviere et al., 2011; Smith & Lerman, 1999), .99 for teacher-implemented interventions (e.g., Davis et al., 1992; Ertel et al., 2018), .90 for researcher-implemented interventions (e.g., Banda & Kubina, 2009; Esch & Fryling, 2013; Houlihan et al., 1994; Romano & Roll, 2000), and .99 for student-implemented interventions (e.g., Davis et al., 1994; Killu et al., 1998; Lipschultz et al., 2018; Rosales et al., 2020).
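To make the Tau-U computation concrete, the Python sketch below shows the A-versus-B nonoverlap calculation as it is commonly described: every baseline (A) data point is compared with every intervention (B) data point, an improvement scores +1, a deterioration scores -1, and the net score S is divided by the number of comparisons. The optional Tau-U correction subtracts the monotonic trend within baseline. The pooling function simply sums S and comparisons across AB contrasts; this pair-weighted pooling is an assumption for illustration and may differ from the weighting used in this meta-analysis (some calculators weight contrasts by inverse variance). The function names and data points are hypothetical.

```python
from itertools import combinations


def net_score(pairs):
    """Kendall-style S: +1 when the second value exceeds the first, -1 when lower, 0 on ties."""
    return sum((b > a) - (b < a) for a, b in pairs)


def tau_u(baseline, treatment, correct_baseline_trend=False):
    """Return (tau, S, comparisons) for one AB contrast."""
    ab_pairs = [(a, b) for a in baseline for b in treatment]
    s = net_score(ab_pairs)
    if correct_baseline_trend:
        # Subtract baseline trend: each later baseline point vs. each earlier one.
        s -= net_score(combinations(baseline, 2))
    return s / len(ab_pairs), s, len(ab_pairs)


def pooled_tau(contrasts):
    """Pair-weighted pooling (an assumption): total S divided by total comparisons."""
    results = [tau_u(a, b) for a, b in contrasts]
    total_s = sum(s for _, s, _ in results)
    total_pairs = sum(n for _, _, n in results)
    return total_s / total_pairs, total_pairs


# Hypothetical percentage-of-compliance data for two AB contrasts.
contrasts = [
    ([20, 30, 25], [80, 90, 85, 95]),
    ([40, 60], [50, 70, 80]),
]
print(tau_u(*contrasts[0]))   # (1.0, 12, 12)
tau, s, n = tau_u(*contrasts[0], correct_baseline_trend=True)
print(round(tau, 3), s, n)    # 0.917 11 12
tau, n = pooled_tau(contrasts)
print(round(tau, 3), n)       # 0.889 18
```

With these hypothetical data, the first contrast shows complete nonoverlap (all 12 comparisons favor the intervention phase). The baseline-trend correction lowers the estimate slightly because the baseline rises between its first two points.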

Figure 2. Forest Plot of Effect Size Estimates of Included Studies in the Meta-Analysis.

Table 4. Effect Size Results for the 14 Studies that Used High-Probability Request Sequences.

Note. AB = number of comparison phases; CI = confidence interval.

Evidence-Base Status

After applying the quality indicators, we determined that two studies provided strong evidence and 12 provided adequate evidence. We used these 14 studies to assess whether HPRS is a promising or established intervention for increasing compliance in children with ASD. To determine the evidence-based status of the intervention, we calculated a Z score. The studies with a strong rating included two participants for whom the intervention was effective; weighting each of these participants by four yields an SCD_S score of 8. In the studies with an adequate rating, HPRS was effective for 15 participants; weighting each by two yields an SCD_A score of 30. Using the formula described in the method section, the Z score is therefore 8 + 30 = 38, which classifies HPRS as a promising EBP for increasing compliance in children with ASD.
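The Z-score arithmetic in the preceding paragraph can be reproduced with a short Python sketch. The per-participant weights of four (strong rating) and two (adequate rating) are inferred from the reported values (2 participants yielding 8; 15 participants yielding 30). The function name is hypothetical, and the cut-offs that map a Z score to "promising" or "established" status are given in the method section and are not reproduced here.

```python
def reichow_z(strong_participants, adequate_participants):
    """Weighted participant count (weights inferred from the reported values):
    each participant with a positive outcome in a strong-rated study counts 4 points,
    each in an adequate-rated study counts 2 points."""
    return strong_participants * 4 + adequate_participants * 2


print(reichow_z(2, 0))   # 8  (SCD_S contribution)
print(reichow_z(0, 15))  # 30 (SCD_A contribution)
print(reichow_z(2, 15))  # 38
```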

Discussion

The purpose of this study was to examine the methodological rigor of single-case research on HPRS for increasing compliance in children with ASD. As part of this investigation, we identified descriptive characteristics of the included studies, calculated treatment effects for participant outcomes, and determined whether HPRS is an EBP for increasing compliance in children with ASD. We reviewed a total of 2,011 studies, of which 25 met our inclusion criteria. We evaluated the included studies against quality indicators using the evaluative method for determining evidence-based practices in autism (Reichow et al., 2008). Of the 25 studies, we rated two as strong, 12 as adequate, and 11 as weak research reports. Using the studies with at least adequate methodological rigor, we found that HPRS is a promising practice for improving compliance in children with ASD. In the following sections, we address some issues and provide context for these results.

Research Quality

Applying the quality indicators revealed that just over half of the HPRS studies included in this review (14 of 25) had strong or adequate research rigor. This is not the desired ratio, but the data suggest methodological improvement since the publication of Horner and colleagues (2005), a seminal piece outlining key characteristics of rigorous single-case research. Although some methodologically sound studies were conducted before 2005 (e.g., Killu et al., 1998), most of the studies not meeting the criteria of the evaluative method were published before Horner and colleagues’ paper. Even with this improvement in rigor over time, certain methodological areas need more attention in future research. For instance, the studies rated as methodologically inadequate reported insufficient information about participant selection procedures and characteristics, a problem also noted in prior reviews (Common et al., 2019; Cowan et al., 2017; Maag, 2019). This is concerning because, in single-case research, operationally defining participant characteristics is important to support replication and generalization beyond the study (Horner et al., 2005; Wolery & Ezell, 1993). In addition, some of the methodologically inadequate studies did not include three demonstrations of the treatment effect; in other words, experimental control was not achieved. This is consistent with Maag (2019), who reported that nearly half of the studies included in his meta-analysis failed to establish experimental control. Experimental control is essential because it demonstrates that changes in the dependent variable are attributable to the independent variable rather than to extraneous factors. Finally, treatment integrity data were collected in only half of the studies, and in those studies the treatment integrity coefficient was low. Without quality treatment integrity data, it is difficult to conclude whether the intervention was implemented correctly (Horner et al., 2005) and, consequently, to claim that the independent variable is effective.

Descriptive Overview

Regarding descriptive characteristics, most of the studies originated in the United States, were published in the Journal of Applied Behavior Analysis, and were conducted with only male participants. Moreover, most participants were in preschool or primary school, and most interventions were delivered by professionals in segregated settings. The predominance of male participants is unsurprising given the well-documented higher prevalence of ASD diagnoses among males (Fombonne, 2003; Maenner et al., 2023; Worley et al., 2011). The focus on preschool students also aligns with previous meta-analyses (e.g., Cowan et al., 2017). Notably, our sample included three secondary-age participants, an age group not represented in prior reviews. Extending HPRS to older students is an important advancement because preventive methods are needed at this age as well; as in early childhood, increasing appropriate behavior and decreasing challenging behavior may prevent later problems. Although challenging behaviors are not part of the ASD diagnostic criteria, children with ASD may be more likely than their typically developing peers to exhibit challenging behaviors because of communication and social interaction difficulties (Brereton et al., 2006; McClintock et al., 2003). Problem behaviors can cause injury and property damage and interfere with learning and social interaction, which may limit children’s participation in school and social settings (Kestner & St. Peter, 2018; Lindgren et al., 2020).

Another important finding is that researchers were the most common implementers, with more than half of the studies deploying a member of the research team. In a smaller number of studies, parents or teachers implemented the intervention (Davis et al., 1992, 1994; Ertel et al., 2018; Ewry & Fryling, 2016; Killu et al., 1998; Smith & Lerman, 1999). These studies showed that teachers and parents can successfully serve as intervention agents for HPRS, which suggests that they can be coached or mentored to use such behavioral interventions in classrooms or at home. We recommend further research that prepares non-research personnel to implement HPRS, to better understand effective training protocols and the capacity of practitioners and families to implement the strategy.

During the meta-analysis, we found no consensus on the term used for the independent variable. Half of the studies used “high-p instructional sequence,” and one third used “high-p request sequence.” Two studies used other labels, such as “high-p intervention” and “high-p request intervention.” Cowan and colleagues (2017) used the term “high-probability command sequence” in their meta-analysis. Inconsistent terminology for the same intervention can make it difficult to identify relevant studies and to compare and combine their results; therefore, in the current meta-analysis we considered each study’s definition of the independent variable and its implementation steps rather than the label it used.

Research Design Issues

Maintenance and generalization were assessed infrequently: the authors of only five of the 14 studies with strong or adequate ratings collected maintenance data, and the authors of five examined generalization. Examination of maintenance and generalization effects in autism research is limited (Gunning et al., 2019; Pokorski & LeJeune, 2022); however, when prosocial behaviors are targeted, it is essential that participants maintain these behaviors over time. Moreover, children with ASD may have difficulty generalizing acquired behaviors (Gunning et al., 2019). It is widely agreed that intervention plans should include provisions for generalization and maintenance. Kennedy (2005) proposed that maintenance is one component of an intervention’s social validity. If the acquired skills and strategies are not maintained over time and generalized to different settings, one may conclude that the aims, procedures, and outcomes were not socially important. For these reasons, future research should examine the effectiveness of HPRS in promoting the maintenance and generalization of target behaviors.

Three fourths of the studies met the quality indicators on social validity; however, no included study provided social comparison data. Only two studies obtained parents’ (Smith & Lerman, 1999) or teachers’ (Killu et al., 1998) opinions on the social appropriateness of the procedure and the significance of treatment outcomes, while one fifth of the studies obtained the views of people who typically interact with the participants (e.g., Davis et al., 1992, 1994; Smith & Lerman, 1999). In all studies, however, the research team targeted a dependent variable it deemed socially important, and this variable was often manipulated in natural settings. In the review by Common and colleagues (2019), all included studies targeted socially important behaviors, but the review did not report whether questionnaires were used to examine the social validity of the intervention. Another review found that fewer than half of the studies provided sufficient information on social validity (Cowan et al., 2017). Future research should report social validity more consistently, because these data help ensure that interventions are clinically meaningful.

The aggregated Tau-U effect size was similar to results from prior research; for instance, Maag (2019) reported a Tau-U of .80. Notably, teacher- and student-implemented interventions had the highest effect size (mean Tau-U = .99), followed by parent-implemented interventions (mean Tau-U = .91), whereas researcher-implemented interventions had the lowest (mean Tau-U = .90). Within the included studies, these results suggest that interventions delivered by natural intervention agents might be more effective in improving child outcomes, although the differences between groups are small. The literature emphasizes the importance of implementing effective interventions such as HPRS with high implementation fidelity to obtain positive student outcomes (Fixsen et al., 2013). The large effect sizes for parent- and teacher-implemented interventions suggest that these agents can implement HPRS effectively, although effect sizes alone do not confirm implementation fidelity. Therefore, researchers may consider conducting studies with parents, teachers, and peers as intervention agents to increase children’s social interactions and support their learning in natural settings.

Applying the EBP status formula and criteria recommended by Reichow and colleagues (2008), we conclude that HPRS is a promising EBP for increasing compliance in children with ASD. In their meta-analysis, Cowan and colleagues (2017) investigated the effectiveness of antecedent strategies grounded in behavioral momentum (i.e., high-p command sequence and task interspersal) on the compliance and on-task behavior of students with ASD. They concluded that HPRS could not be considered an EBP because the included studies did not meet the “5-3-20” threshold (Horner et al., 2005; Kratochwill et al., 2013). Brosh and colleagues (2018) combined their results with the report by Wong and colleagues (2014) and determined that HPRS can be considered an EBP for children with ASD. Another systematic review classified HPRS as a potential EBP, although the researchers noted that categorizing HPRS would be more challenging under the Council for Exceptional Children’s (CEC) absolute coding (Common et al., 2019). In the report of the National Clearinghouse on Autism Evidence and Practice (NCAEP), behavioral momentum (HPRS) was included in the EBP category for the first time (Steinbrenner et al., 2020). These mixed findings may reflect differences in how the reviews were conducted (e.g., different dependent variables, disability groups, and coding systems). The current study focused only on compliance in children with ASD and used a systematic rating system developed specifically for individuals with ASD (Reichow et al., 2008). By focusing on a single dependent variable, we aimed to provide a more targeted evaluation of the evidence for HPRS within this domain.
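Because the reviews disagree partly over the “5-3-20” threshold, it may help to see the criterion expressed as a simple check. The Python sketch below assumes the commonly cited form of the criterion (at least five studies meeting design standards, conducted by at least three research teams in at least three locations, with at least 20 participants in total). The `Study` record, its field names, and the example studies are hypothetical and are not drawn from any of the reviews discussed here.

```python
from dataclasses import dataclass


@dataclass
class Study:
    # Hypothetical record of one single-case study.
    meets_design_standards: bool
    research_team: str
    location: str
    participants: int


def meets_5_3_20(studies):
    """Return True if the studies meeting design standards satisfy the 5-3-20 threshold."""
    qualifying = [s for s in studies if s.meets_design_standards]
    return (
        len(qualifying) >= 5
        and len({s.research_team for s in qualifying}) >= 3
        and len({s.location for s in qualifying}) >= 3
        and sum(s.participants for s in qualifying) >= 20
    )


# Hypothetical evidence base: five qualifying studies, three teams, three sites, 20 participants.
studies = [
    Study(True, "Team A", "Site 1", 4),
    Study(True, "Team A", "Site 1", 4),
    Study(True, "Team B", "Site 2", 4),
    Study(True, "Team C", "Site 3", 4),
    Study(True, "Team C", "Site 3", 4),
    Study(False, "Team D", "Site 4", 6),  # excluded: does not meet design standards
]
print(meets_5_3_20(studies))      # True
print(meets_5_3_20(studies[1:]))  # False (only four qualifying studies remain)
```

Because every condition must hold at once, an evidence base can fail the threshold on study count alone even when the participant total is ample.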

Review Limitations

There are several limitations and delimitations to consider when interpreting the results. First, we included only studies in which low-p requests were presented after one to five high-p requests, and because terminology varies (e.g., HPRS, behavioral momentum, high-p command sequence), we may have excluded relevant studies. Second, we included only studies conducted with children with ASD; studies with children from other disability groups could provide additional support for the effectiveness of HPRS. Third, we limited this meta-analysis to compliance behavior. Fourth, we reviewed only single-case research studies. Lastly, we included only articles published in peer-reviewed journals and excluded gray literature (e.g., theses, dissertations, and project reports), which may introduce publication bias (Rosenthal, 1979).

Footnotes

Acknowledgements

The authors thank the reviewers for their feedback and Dr. Daniel Maggin for providing helpful suggestions on this article.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.