Abstract

This study investigates how students engage with GenAI during a business data analysis assessment, drawing on Social Constructivist Theory and the human–AI co-agency model. Within the assessment, students used GenAI tools to support their data analysis and reflected on their experiences by comparing AI-generated and manually derived results. Thematic analysis of 258 students’ reflections, triangulated with academic performance data, revealed four key themes: epistemic beliefs, functional cognitive engagement, reflective metacognitive learning, and Human–GenAI co-agency for strategic foresight. Students demonstrated distinct patterns of engagement across performance groups. Higher-performing students approached GenAI as a collaborative partner, engaging in iterative prompt refinement, demonstrating critical evaluation of outputs, and exhibiting strong ethical awareness. In contrast, lower-performing students often showed polarised epistemic beliefs, limited critical reflection, and minimal iteration – accepting or rejecting GenAI outputs prematurely. These findings highlight the role of scaffolded reflection and prompt engineering in enabling students to develop deeper analytical and evaluative capacities. By reconceptualising GenAI as an active co-learner rather than a passive tool, this study extends Social Constructivist perspectives to accommodate emerging forms of human–GenAI interaction. Drawing on rich, in-situ qualitative evidence embedded within an authentic learning context, it offers new insight into how students’ beliefs and strategies shape their engagement with GenAI. The study also emphasises the need for differentiated pedagogical designs that cultivate AI literacy, narrow the digital divide, and support ethical, adaptive use of GenAI in higher education.

Introduction

Generative Artificial Intelligence (GenAI) is reshaping higher education by introducing new dimensions of human–AI collaboration and redefining traditional learning interactions (Padovano & Cardamone, 2024; Zhou & Schofield, 2024). Increasingly sophisticated large language models serve not merely as passive tools but as interactive, agentive partners capable of engaging in dialogue, co-constructing knowledge, and fostering deeper cognitive reflection (Clegg & Sarkar, 2024; Dang et al., 2025). These technologies differ fundamentally from earlier digital learning tools by enabling reciprocal interaction, iterative problem-solving, and personalised scaffolding, thus closely resembling human learning partners in educational processes (Chiu & Rospigliosi, 2025; Nguyen et al., 2024).

This evolution necessitates a reconsideration of established pedagogical frameworks, particularly Social Constructivist Theory (SCT), to adequately capture the complexities of contemporary human–AI co-agency in education. SCT emphasises the social and interactive dimensions of learning, wherein knowledge is actively constructed through meaningful collaboration and dialogue with knowledgeable peers or educators (Bandura, 1986; Thoutenhoofd & Pirrie, 2015; Vygotsky, 1978). SCT foregrounds critical concepts such as scaffolding, self-regulated learning, reciprocal determinism, and agency as core to effective learning interactions (Lave & Wenger, 1991; Palincsar, 1998). Traditionally, these interactions have been conceptualised as exclusively human-mediated, with technological tools treated as supportive resources rather than active participants in knowledge creation. However, as AI increasingly assumes roles conventionally reserved for human agents – such as providing nuanced feedback, engaging in iterative dialogue, and prompting reflective thinking (Lee & Palmer, 2025) – there is a pressing need to reconceptualise SCT to integrate GenAI as an active co-learner or agent (Zhou & Schofield, 2024).

Within the management education context, business schools increasingly emphasise data-driven decision-making, critical evaluation of information, and ethical discernment – skills now frequently supported or augmented by GenAI (Essien et al., 2024; Narang et al., 2025). Yet, this rapid integration of AI poses significant challenges, including the risk of superficial engagement, over-reliance on AI-generated outputs, ethical dilemmas around data use, and uneven development of critical competencies among students (Hazari, 2025; Zhou et al., 2025). Although conceptual frameworks and survey-based investigations of student attitudes towards AI have received considerable scholarly attention (Guha et al., 2024; Oliveira et al., 2025), such work captures self-reported perceptions rather than direct evidence of how students reflect on their GenAI use in authentic learning contexts. Moreover, the majority of empirical studies have been situated in STEM disciplines (e.g. Tong et al., 2025), with limited attention to Management and Business education, particularly in Business Analytics where GenAI is increasingly applied in professional practice. The relationship between students’ reflections on GenAI use and their actual performance therefore remains largely under-explored.

Addressing these critical gaps, we pose two research questions: (1) What forms of cognitive engagement do students demonstrate when interacting with GenAI in subject learning? (2) To what extent does a scaffolded and reflective learning approach influence the development of students’ cognitive skills across performance groups? Here, a scaffolded and reflective learning approach combines structured instructional support (scaffolding) with repeated cycles of refinement and reflection. These research questions are critical for understanding the pedagogical implications of GenAI integration from a Social Constructivist perspective, particularly given the limited empirical evidence from direct observation of students’ cognitive and reflective engagement with GenAI tools (with the exception of Pavone, 2025). Moreover, exploring variations across different student groups addresses an under-explored dimension of SCT, revealing how learner characteristics mediate their capacity for meaningful engagement with technological agents (Hazari, 2025).

To answer the research questions, we examine 258 Year 2 undergraduate students’ written reflections in an assessment in which they used GenAI tools to assist their business data analysis. Through thematic analysis of students’ reflections, triangulated with quantitative performance data, we uncover how learners construct meaning and develop critical awareness in AI-supported environments – highlighting distinct patterns of engagement across performance bands. This study offers four contributions. First, it extends SCT by reconceptualising GenAI as an active, agentive co-learner beyond traditional human–human interaction. Second, it provides empirical insights grounded in rich, assessment-embedded qualitative data, capturing students’ experiences more directly than surveys or conceptual work. Third, it shows how learner characteristics influence engagement with GenAI, informing pedagogical strategies to mitigate digital inequalities (Fang & Zhou, 2025; Zhou et al., 2025). Fourth, it presents a replicable, hands-on teaching practice where students critically reflect on GenAI-assisted analysis in authentic assessment, modelling meaningful and ethical AI integration in higher education.

The remainder of this paper proceeds as follows. We first review the literature on Social Constructivism, human–AI collaboration, and recent GenAI applications in management education, and identify gaps. We then outline the methodological framework, detailing data collection and thematic analysis procedures. Next, we report the empirical findings, focussing on identified themes and variations across learner groups. We subsequently discuss theoretical contributions and implications for pedagogical practice. Finally, we conclude and propose future research directions.

Literature Review

Social Constructivist Theory and the Emergence of Human–GenAI Co-agency

SCT foregrounds the reciprocal interplay between learners, their teachers, and their environments, emphasising that knowledge is actively constructed through mediated social interactions (Bandura, 1986; Duan et al., 2023; Vygotsky, 1978). Historically, these mediators have primarily been human – teachers, peers, and mentors – serving as agents within the learner’s Zone of Proximal Development (ZPD) (Thompson, 2013). However, the increasing sophistication of GenAI tools has challenged this traditional framing.

Recent conceptual models propose human–AI collaboration frameworks that position GenAI as a cognitive partner, capable of offering real-time feedback, adaptive support, and even dialogic engagement (Edwards et al., 2025; Hutson & Plate, 2023). These models echo the SCT principle of reciprocal determinism by suggesting that learners and AI tools co-regulate one another’s contributions in a learning episode (Mishra, 2023). Notably, Clegg and Sarkar (2024) argue that such co-agency can extend to complex tasks, with GenAI complementing human cognition in theory-building and decision-making.

In higher education, the role of GenAI in supporting self-regulated learning has been explored through frameworks of shared cognitive control. Dang et al. (2025), for example, propose a model of collaborative regulation with embodied AI agents, integrating both cognitive and socio-emotional elements – again echoing the SCT notion that meaningful learning arises from interaction with capable agents (here, GenAI rather than humans). Similarly, Tong et al. (2025) experimentally compare human–human versus human–AI collaboration in physics problem-solving, demonstrating that well-structured human–AI interactions can promote comparable, if not superior, learning outcomes under certain conditions.

It is worth noting that in this study, we distinguish between two conceptual roles of GenAI in learning. When viewed as a tool, GenAI serves a primarily instrumental function – automating, generating, or summarising information to support task completion without influencing learners’ underlying cognitive processes. In contrast, positioning GenAI as a co-learner frames it as an interactive agent that engages in dialogue, provides feedback, and co-constructs understanding with the learner. The distinction lies in reciprocity: the tool role reflects unidirectional use (learner → AI), while the co-learner role entails bidirectional knowledge construction (learner ↔ AI).

SCT and Human–AI Co-agency in Management Education

While much of the work on human–GenAI collaboration has focused on STEM or general education contexts, there is a rising corpus addressing management education specifically. For instance, Valcea et al. (2024) introduce the ‘Expertise Paradox’ to describe how GenAI’s automation of analytical tasks may paradoxically erode students’ long-term ability to develop deep expertise unless scaffolded appropriately. Guha et al. (2024) similarly note a disconnect between existing marketing curricula and the dynamic capabilities of GenAI, urging curriculum reform that builds AI literacy and critical reasoning in tandem. Padovano and Cardamone (2024) apply human–AI collaboration to redesign curricula in industrial and management education, integrating AI-powered semantic analysis and stakeholder-informed validation. Pavone (2025), in the context of marketing education, further highlights that individual differences such as self-esteem and academic anxiety act as antecedents shaping students’ engagement with GenAI, emphasising the need for emotionally responsive AI-integrated learning design.

Recent studies in management education have focused on how human–AI collaboration affects students’ critical thinking and evaluative skills. Essien et al. (2024), Gonsalves (2024), Larson et al. (2024), and Zhou et al. (2024) explore how GenAI impacts critical thinking, warning that while AI can assist with idea generation and facilitate performance on lower-order tasks, excessive reliance may hinder deep analytical engagement and the development of independent evaluative skills. Oliveira et al. (2025) propose a scale to measure how students perceive AI’s critical thinking dispositions, revealing gaps in students’ understanding of AI’s truth-seeking limitations. Hazari (2025) finds divergent student perceptions – some view ChatGPT as a creativity enabler, while others see it as a threat to originality and skill growth – reinforcing the SCT view that beliefs about learning tools shape their usage and impact.

Embedding GenAI meaningfully in management education requires attention not only to performance but also to students’ ethical and epistemological development. Aure (2024) advocates for integrating ethical training into business curricula, warning that students often lack the metacognitive strategies necessary to evaluate AI-generated outputs critically. This aligns with recent calls for ‘reflective AI literacy’ – a framework that synthesises ethical reasoning, epistemic awareness, and strategic decision-making (Almatrafi et al., 2024). Such models resonate with SCT’s emphasis on agentic learning, suggesting that students must not only interact with GenAI but also regulate, evaluate, and strategically adapt these interactions over time (Zhou et al., 2024).

Gap and Our Contributions – Towards a Socially Constructivist Pedagogy for GenAI

Although the potential of Human–GenAI co-agency in education has been widely acknowledged, much of the existing literature remains predominantly conceptual, lacking empirical grounding (e.g. Clegg & Sarkar, 2024; Chiu & Rospigliosi, 2025). Even among empirical contributions, most rely on surveys or interviews (e.g. Hazari, 2025; Karakose et al., 2023; Oliveira et al., 2025), capturing perceptions rather than observing learner–AI interactions. Direct observational studies of how students actually engage with GenAI during learning tasks remain scarce, particularly those that adopt process-oriented or behavioural lenses (e.g. Nguyen et al., 2024).

Furthermore, the majority of empirical work on human–GenAI collaboration has emerged from STEM or general education contexts (e.g. Tong et al., 2025), with relatively few studies situated within management education (e.g. Gonsalves, 2024; Pavone, 2025). Even fewer focus on business data analysis as a subject domain, despite its increasing reliance on AI technologies in professional practice. This leaves a critical gap in understanding how business students – who often have limited quantitative confidence – engage with GenAI as both a computational aid and a cognitive partner.

Our study addresses these gaps by empirically analysing students’ written reflections on their engagement with GenAI tools, within an applied business data analysis module. Unlike prior studies, our approach draws on direct observation in the form of rich, task-embedded qualitative data. This allows us to capture nuanced, in-situ evidence of how learners navigate AI-supported learning, exercise agency, and respond to ethical dilemmas. By doing so, we extend the theoretical scope of SCT, which traditionally positions humans – such as peers or instructors – as the primary agents in learning. We reconceptualise this framework to include GenAI as an active agent and co-learner, rather than a passive tool.

Moreover, triangulated with quantitative performance data, we examine variation in GenAI engagement across different performance bands – high-, mid-, and low-performing students – highlighting how learner characteristics shape the development of co-agency. By exploring these differential engagement patterns, we contribute to an underexamined area of SCT: how learners’ cognitive and metacognitive profiles influence their capacity to interact meaningfully with AI as a learning partner – an insight reinforced by Hazari’s (2025) findings that divergent student perceptions of ChatGPT, as either a creativity enabler or a threat to skill development, shape their patterns of use and learning impact.

Data and Methodology

The data for this study were collected from an assessment in the undergraduate module Working with Business Data, undertaken by 258 students. All students provided consent to participate, so the entire cohort was included in the analysis. Ethical approval for the study was obtained through the university’s research ethics procedures.

The assessment was designed to function within an asynchronous learning environment, where students independently applied GenAI tools to analyse a business scenario based on a hypothetical IT company, TechGlobal Corp., which significantly increased its investment in AI technologies after 2021. Students were tasked with analysing seasonal business performance data to investigate the impact of AI investment on key business outcomes, including sales, profits, staff satisfaction, customer satisfaction, and employee turnover.

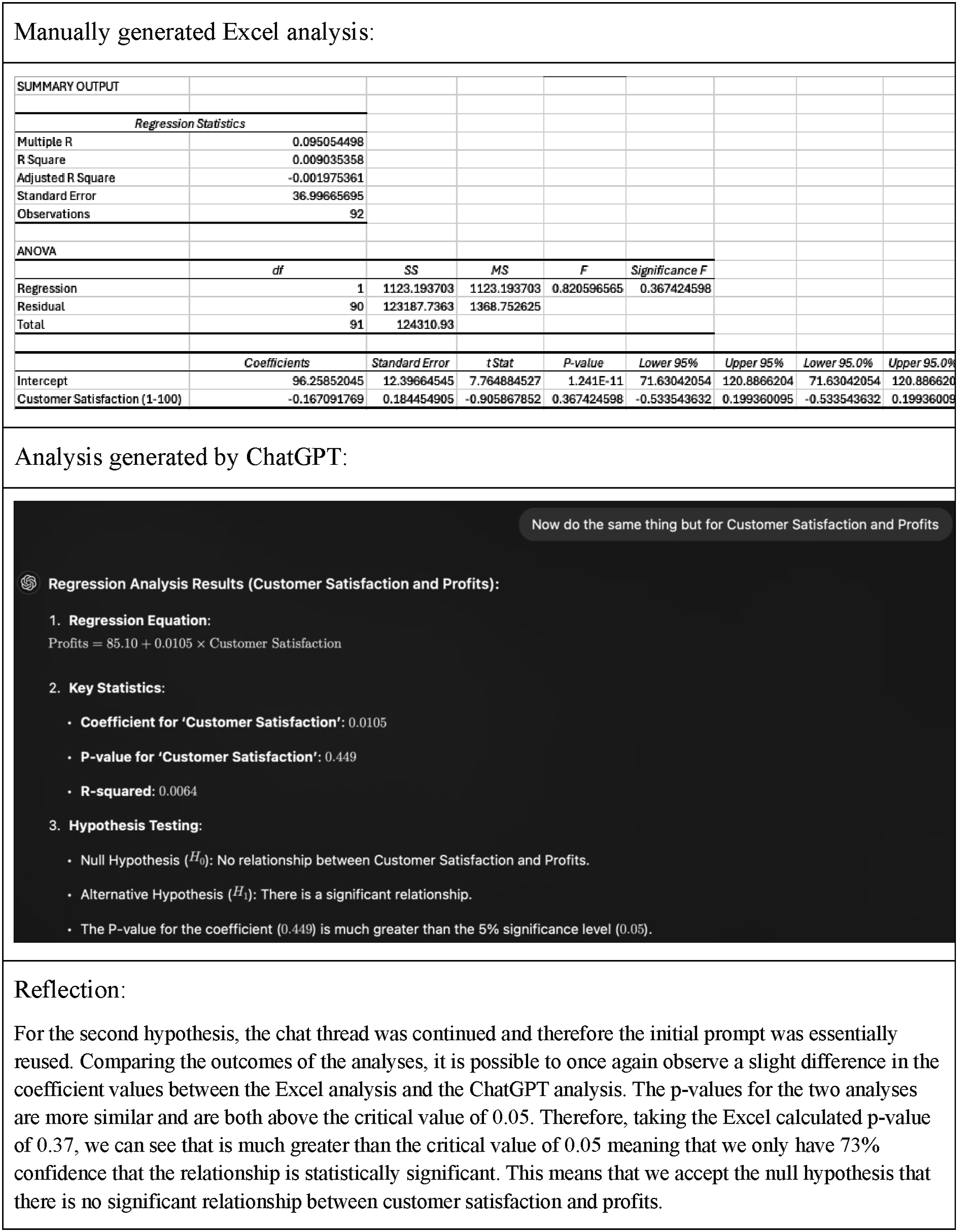

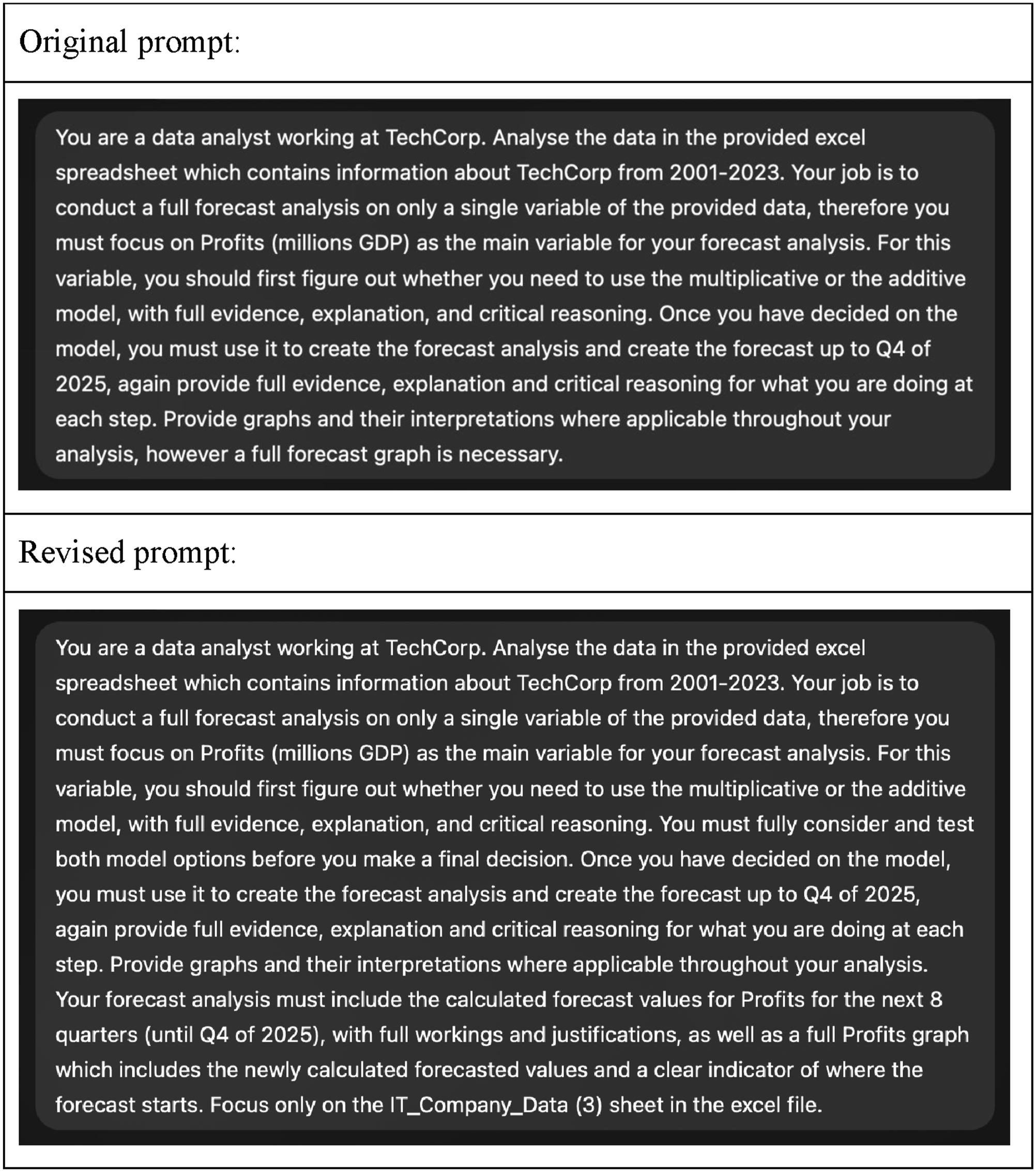

As part of the assessment, students were asked to manually conduct forecasting and hypothesis testing in Excel and then use GenAI tools (e.g. ChatGPT or Microsoft Copilot) to replicate the same tasks. All students had free access to the web-based version of Microsoft Copilot provided by the university. They were instructed to compare the results and reflect critically on their experiences using GenAI in the context of business data analysis (an example of such comparison is shown in Figure 1). In addition to the written reflections, students’ overall academic performance in the module was recorded. This score reflected their comprehensive ability in business data analysis, not limited to this exercise. Learner groups, categorised by overall module marks (0–49, 50–69, and 70–100), include 99 lower-performing, 94 middle-performing, and 65 higher-performing students.

Figure 1. A sample student’s comparison of a manually generated analysis in Excel with a ChatGPT-generated analysis, including a reflection.
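The banding by overall module mark can be sketched in a few lines of Python. This is an illustration only, not the authors' procedure: the band labels, the function names, the assumption of integer marks, and the example marks are all hypothetical; only the mark boundaries come from the text.

```python
from collections import Counter

# Band boundaries as reported in the text; the labels are illustrative.
BANDS = [(0, 49, "lower"), (50, 69, "middle"), (70, 100, "higher")]


def performance_band(mark: int) -> str:
    """Return the performance band for an integer module mark (0-100)."""
    for low, high, label in BANDS:
        if low <= mark <= high:
            return label
    raise ValueError(f"mark out of range: {mark}")


def band_counts(marks):
    """Count how many students fall into each band."""
    return Counter(performance_band(m) for m in marks)


# Hypothetical marks, not data from the study.
example_marks = [35, 49, 50, 62, 69, 70, 88]
print(band_counts(example_marks))
```

Applied to the full cohort, the same counting step would be expected to reproduce the reported group sizes of 99, 94, and 65 students.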

A thematic analysis was conducted on the students’ written reflections to explore patterns of learning and engagement with GenAI. The coding followed a structured analytical framework focussing on cognitive, metacognitive, and strategic foresight dimensions of engagement. The two authors independently coded the data, and then compared their coding results, discussed discrepancies, and reached consensus on the final coding through iterative refinement.

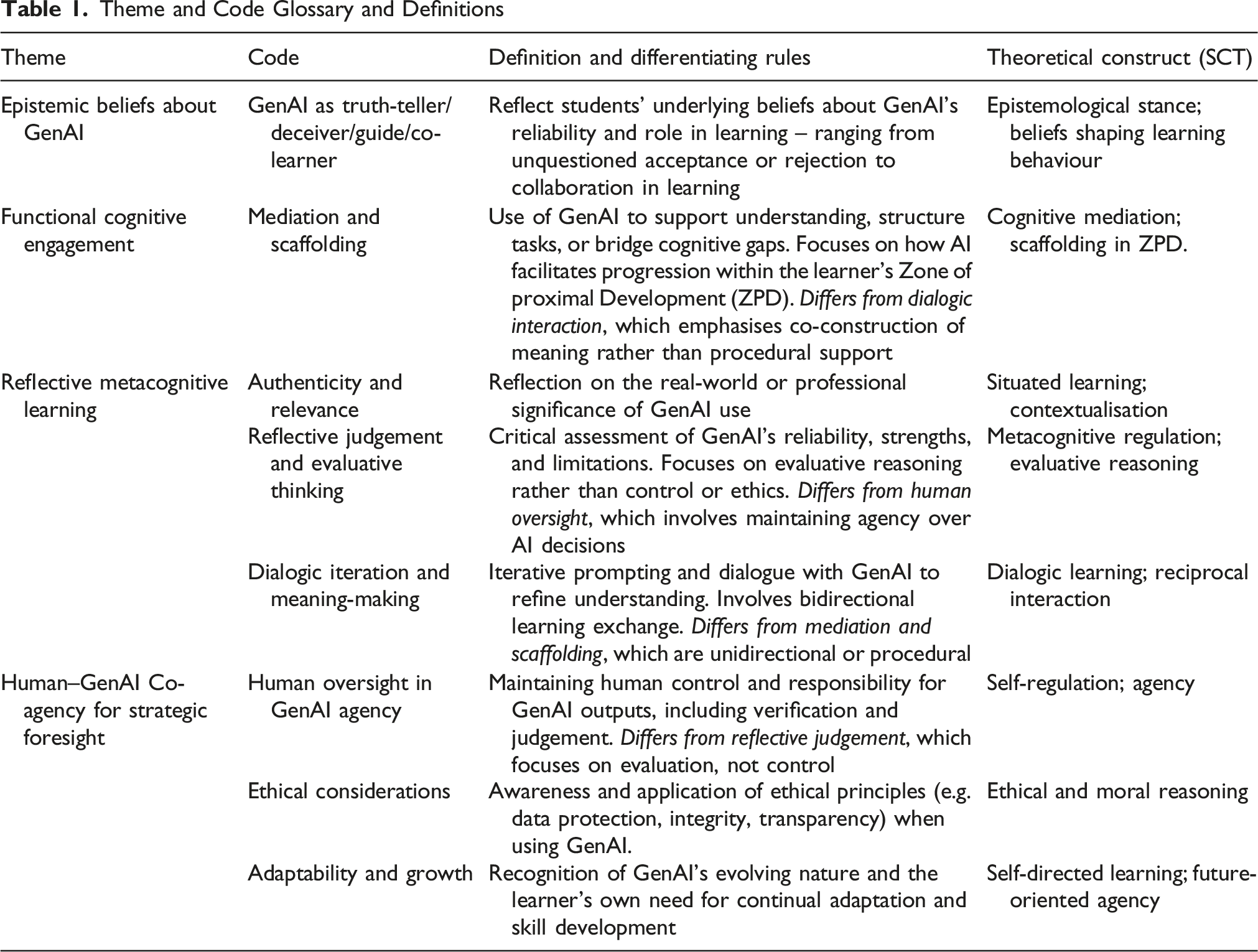

Theme and Code Glossary and Definitions

Results of Thematic Analysis

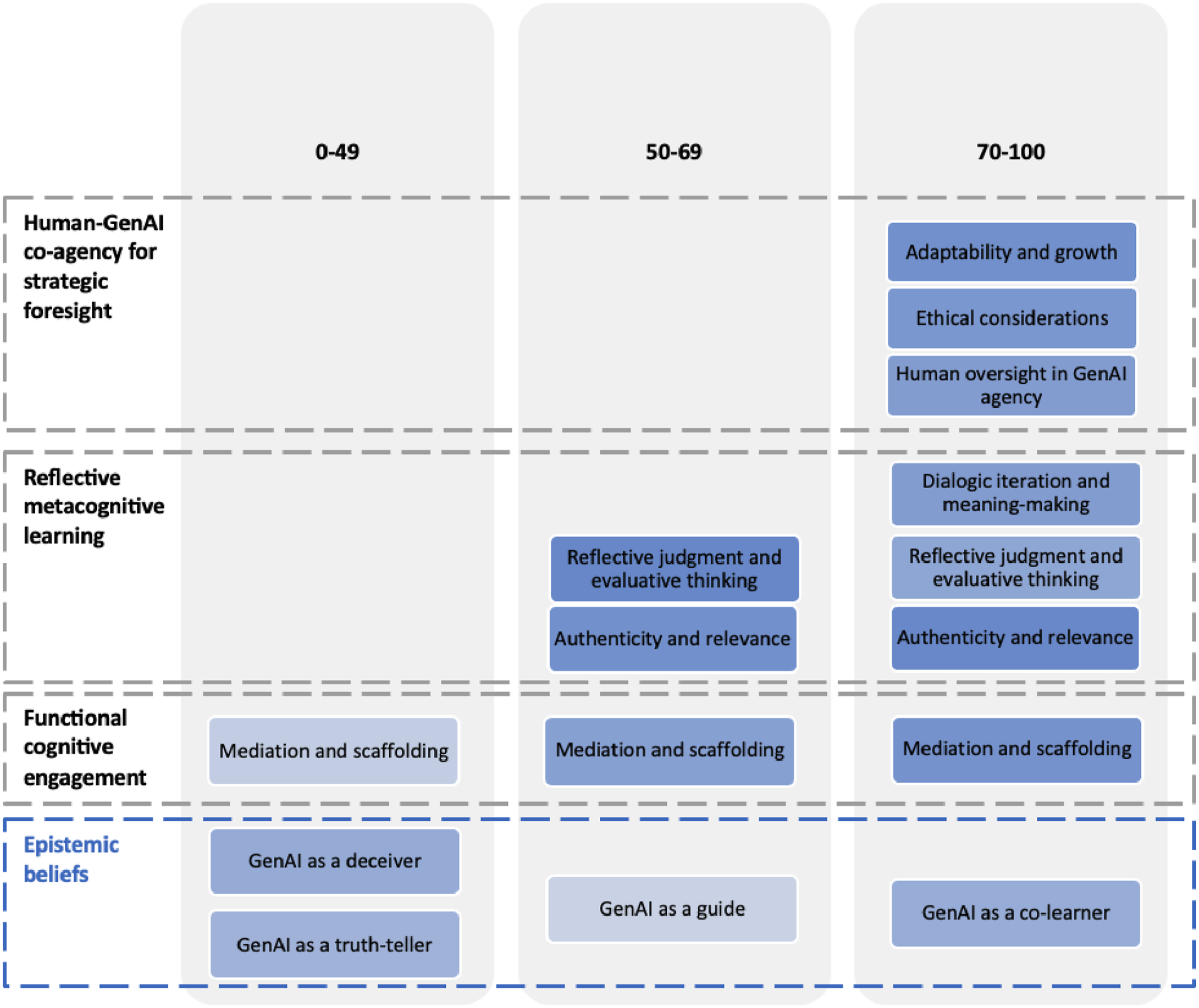

This section presents the key findings from a thematic analysis of students’ written reflections on their use of GenAI tools in business data analysis tasks. Across all reflections, four core themes emerged: epistemic beliefs about GenAI, functional cognitive engagement, reflective metacognitive learning, and human–GenAI co-agency for strategic foresight (see Figure 2). Each theme consists of several codes. These themes were applied across three learner groups, categorised based on overall module marks, which reflect comprehensive business data analytics skills beyond the reflection on GenAI usage. Figure 2 summarises the distribution of codes by group. Within each group, a darker shade indicates a higher percentage of students mentioning the corresponding code.

Figure 2. Thematic analysis summary across three learner groups. Each bubble represents a code, while themes are grouped within dashed boxes. Learner groups (categorised by overall module marks: 0–49 marks, 50–69 marks, and 70–100 marks) are shown in columns. Darker shades indicate a higher frequency of code mention within each group. If a code was mentioned by fewer than 10% of students in a group, it is not shown as a bubble for that group.
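The within-group percentages and the 10% display rule described for Figure 2 can be illustrated with a short Python sketch. It assumes each coded reflection is stored as a pair of performance band and set of codes; that data layout, the function name, and the example records are hypothetical and are not taken from the study.

```python
from collections import Counter, defaultdict


def code_shares(records, threshold=0.10):
    """Share of students in each band who mention each code.

    records: list of (band, codes) pairs, one pair per student.
    Codes mentioned by fewer than `threshold` of a band are dropped,
    mirroring the display rule stated for Figure 2.
    """
    group_sizes = Counter(band for band, _ in records)
    mentions = defaultdict(Counter)
    for band, codes in records:
        # A student counts at most once per code.
        mentions[band].update(set(codes))
    return {
        band: {
            code: n / group_sizes[band]
            for code, n in mentions[band].items()
            if n / group_sizes[band] >= threshold
        }
        for band in group_sizes
    }


# Hypothetical records: 20 lower-band students, not data from the study.
records = (
    [("lower", {"truth-teller"})] * 9
    + [("lower", {"co-learner"})] * 1
    + [("lower", set())] * 10
)
print(code_shares(records))
```

In this invented example the code mentioned by one student in twenty falls below the threshold and is omitted, while the code mentioned by nine in twenty is retained.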

RQ1: What Forms of Cognitive Engagement do Students Demonstrate when Interacting with GenAI in Subject Learning?

Epistemic Beliefs About GenAI

Learners’ epistemic beliefs – how they perceive the reliability and role of GenAI in knowledge construction – emerged as a critical theme. Four epistemic beliefs were identified: GenAI as a truth-teller, GenAI as a deceiver, GenAI as a guide, and GenAI as a co-learner, reflecting divergent assumptions about GenAI’s role in learners’ knowledge construction.

Among students in the lower performance band (0–49 marks), epistemic beliefs about GenAI were polarised. Within this group, 45% of students positioned GenAI as a truth-teller. For example, one student shared: ‘GenAI tools showed exceptional efficacy and accuracy as they offered practical insights, like calculating the financial return on AI investments’ (W1). Another remarked: ‘Statistical tests using GenAI provided mostly reliable results’ (W33). These students demonstrated a tendency to accept GenAI’s authority with minimal critical interrogation.

At the opposite extreme, 51% of students in the lower performance band framed GenAI as a deceiver, voicing frustration over errors, inconsistencies, and unmet expectations. This perspective reflects a perception of GenAI as unreliable or even misleading. For instance, one student noted: ‘ChatGPT has provided many errors when asked to do certain tasks. It kept saying that it's going to solve the problem but it never actually did it’ (W5). Another commented: ‘ChatGPT produced inconsistent results for forecasting and regression analysis’ (W32). Instead of attempting to resolve these issues, these students abandoned further engagement, concluding that GenAI was ineffective and expressing reluctance to use it.

In contrast, 22% of the mid-performing students (50–69 marks) viewed GenAI as a guide, describing it as useful for structuring their work and supporting analytical reasoning while recognising both its limitations and the situations in which it performed well. For example: ‘GenAI tools were helpful in outlining and guiding the analysis process while more specific and nuanced observations were gathered’ (M4); and ‘ChatGPT assisted in generating insights, interpreting data, and guiding hypothesis formulation’ (M5).

Among the highest-performing students (70–100 marks), 49% conceptualised GenAI as a co-learner. These learners approached GenAI not just as a tool but as an interactive agent that could enhance their own learning processes. As one student explained: ‘ChatGPT was instrumental in helping me organise my datasets, understand complex statistical concepts, and clarify the steps for conducting regression analysis’ (S5). Others highlighted GenAI’s role in extending understanding through iteration and refinement: ‘GenAI also offered to discuss the results further, providing even more detailed interpretation’ (S14); and ‘I aim to improve my ability to craft precise prompts and prepare cleaner data inputs, which will further boost the effectiveness of these tools’ (S64). This co-learner perspective was evident in how students engaged GenAI as an active partner in their learning process: ‘AI tools greatly enhanced my productivity by automating tedious calculations... enabling me to focus on interpretation and application of the results’ (S28), and ‘AI tools helped swiftly analyse large volumes of data, drawing valuable trends quickly. I will critically analyse the results and complement them with my own analysis’ (S51), signalling a dynamic partnership in which the student actively directed the learning while GenAI extended their capabilities.

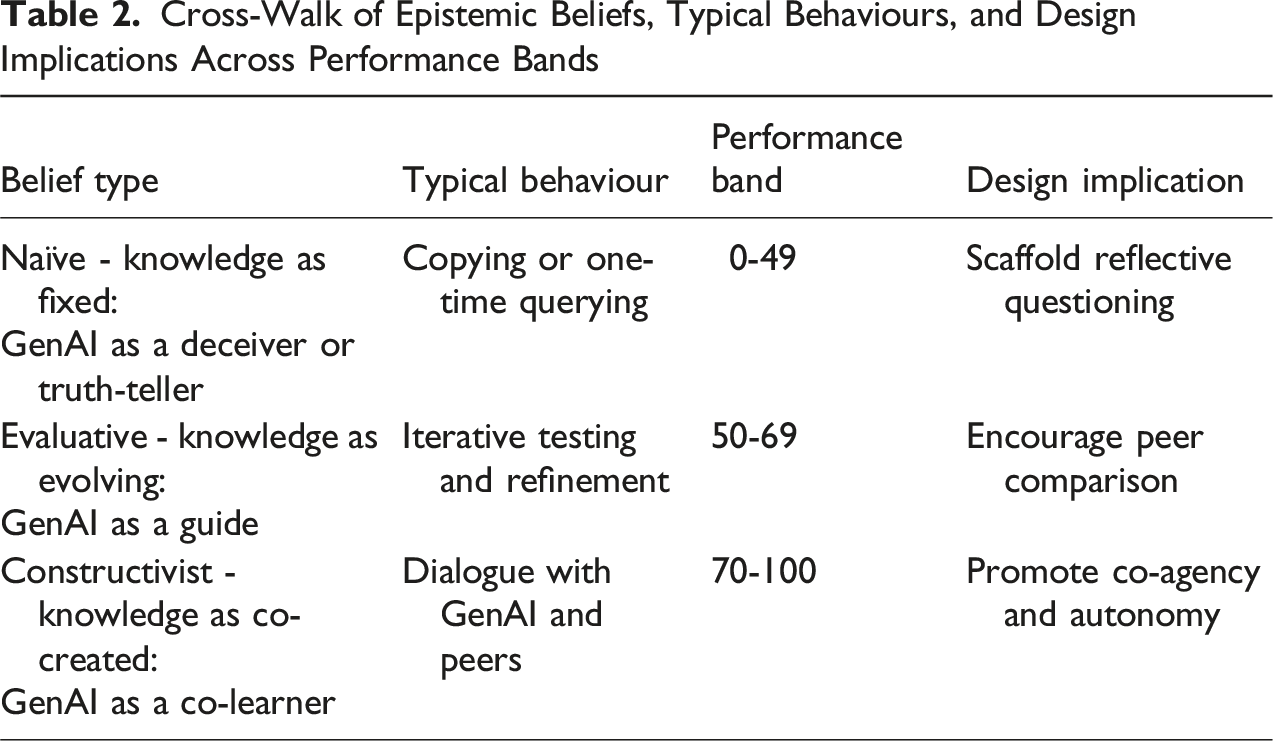

Cross-Walk of Epistemic Beliefs, Typical Behaviours, and Design Implications Across Performance Bands

Functional Cognitive Engagement

Across all learner groups, mediation and scaffolding emerged as a prominent theme, with increasing frequency aligned with higher academic performance: 51% in the lower band, 72% in the middle, and 78% in the upper band.

In the lower performance group, students largely relied on GenAI for basic structuring and output interpretation. One student explained: ‘ChatGPT improved efficiency by streamlining the structuring of the reports and interpreting outputs’ (W98), reflecting a reliance on GenAI to mediate between raw outputs and presentable conclusions. Another discussed: ‘ChatGPT was more useful to provide qualitative outputs and to highlight the results, and provide general conclusions about the findings’ (W61). Here, GenAI scaffolded student understanding by breaking down results into digestible qualitative components and offering summarised takeaways.

In the mid-performing group, the use of GenAI expanded to include procedural support in areas such as data formatting and hypothesis development. As one reflected: ‘Tools like ChatGPT were instrumental in structuring data, generating Excel templates, and summarizing findings efficiently’ (M12). Similarly, others noted that GenAI operated as a scaffold, enabling learners to gradually take on more analytical responsibility with its support: ‘ChatGPT 4 helped me with data preprocessing, hypothesis development, and contextual analysis’ (M32).

Among the highest-performing learners, GenAI scaffolding became more strategic and sophisticated. Students described using GenAI to refine their methodological approach: ‘It had suggested the use of error metrics (MAE, MAPE, RMSE) to assess accuracy’ (S12) and ‘ChatGPT was pivotal in this analysis … ensuring the allegiance of methodologies used throughout this study with academic standards’ (S12). Students further credited GenAI for enabling them to carry out rapid, structured data analysis: ‘ChatGPT enabled quick analysis and forecasting, offering insights that would have otherwise required significant time’ (S52), and ‘By automating … anomaly detection, AI tools streamline data analysis’ (S33). These examples illustrate how GenAI mediated the learning process by providing timely and expert-like suggestions, while also scaffolding students’ workflow – offering structure, reducing cognitive load so that humans can focus on higher-level tasks, and supporting the gradual development of methodological rigour.
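For readers unfamiliar with the error metrics named in the student quote above (MAE, MAPE, RMSE), the following Python sketch shows their standard definitions. It is illustrative only: the function name and the example figures are hypothetical, and it does not represent any student's or GenAI tool's actual calculation.

```python
import math


def forecast_errors(actual, forecast):
    """Return MAE, RMSE and MAPE (in percent) for paired observations.

    MAPE divides by the actual values, so it is undefined when any
    actual value is zero; this sketch raises an error in that case.
    """
    if len(actual) != len(forecast) or not actual:
        raise ValueError("inputs must be non-empty and of equal length")
    if any(a == 0 for a in actual):
        raise ValueError("MAPE is undefined when an actual value is zero")
    errors = [a - f for a, f in zip(actual, forecast)]
    n = len(errors)
    mae = sum(abs(e) for e in errors) / n
    rmse = math.sqrt(sum(e * e for e in errors) / n)
    mape = 100 * sum(abs(e / a) for e, a in zip(errors, actual)) / n
    return {"MAE": mae, "RMSE": rmse, "MAPE": mape}


# Hypothetical quarterly sales figures, not data from the assessment.
actual_sales = [100.0, 200.0]
forecast_sales = [110.0, 190.0]
print(forecast_errors(actual_sales, forecast_sales))
```

Computing all three metrics for both the Excel forecast and the GenAI forecast against the same actual values is one way a manual and an AI-generated result could be compared on equal terms.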

Reflective Metacognitive Learning

The theme of reflective metacognitive learning was evidenced through three distinct but interrelated dimensions: authenticity and relevance, reflective judgement and evaluative thinking, and dialogic iteration and meaning-making. This theme captured the extent to which students reflected critically on the use of GenAI, situated its application in real-world contexts, and engaged in iterative refinement of their understanding and approach.

Authenticity and Relevance

Among students in the middle and high performance bands, authenticity and relevance was among the most frequently observed metacognitive codes (80% and 83% respectively). These learners frequently contextualised GenAI use in terms of industry applications and their future professional practice. For instance, one student noted: ‘By embracing these tools in a responsible and strategic manner, TechGlobal Corp can persist in innovating, optimizing operations, and providing exceptional value to its stakeholders’ (M18). Another highlighted that ‘Generative AI tools enhanced the analysis by providing intuitive explanations of statistical methods, contextual insights into data trends, and strategic recommendations to TechGlobal Corp…’ (M34), emphasising the dual role of GenAI in both computation and interpretation.

High-performing students articulated their engagement with GenAI tools in a way that revealed a notably more advanced and strategic orientation than that of their mid-performing peers. While many mid-performing students reflected on how GenAI helped them understand company-level insights, high-performing students went further by contextualising the GenAI outputs within broader industry practices. For example, one student noted: ‘TechGlobal Corp is suggested not to replace employees with AI, but to teach them how to collaborate with it… human and AI collaborative work improves task performance and satisfaction as well as increases humans’ self-efficacy’ (S29). Although the quote references TechGlobal Corp, the student positions themselves as a real consultant, using it as a springboard to reflect on the broader industry shift toward human–AI collaboration – implicitly shaping how they envision their evolving role in a data-driven job market.

Reflective Judgement and Evaluative Thinking

The code reflective judgement and evaluative thinking was present in 84% of mid-performing and 69% of high-performing students, reflecting their capacity to assess GenAI’s reliability and limitations. One mid-performer wrote: ‘After understanding the limitation of AI... I will ensure that it supports my analysis as well as use it to help me understand concepts better…’ (M13), indicating an intention to integrate GenAI without over-reliance.

High-performing students demonstrated critical awareness of GenAI’s variable performance across tasks, showing nuanced judgement by recognising where the tools added value rather than viewing them as wholly reliable or flawed. One student wrote: ‘Forecasting results differed from Excel, but hypothesis testing was accurate, showing AI tools are effective in structured tasks’ (S6). Similarly, another reflection noted: ‘Excel and AI agreed on hypothesis testing, not on forecasting’ (S19), highlighting the student’s capacity to compare tools and selectively trust outputs. The evaluative mindset extended beyond numerical accuracy. Others focused on interpretive and visual limitations: ‘Copilot was useful in analysing the data set but fell short in its interpretation of said data and creating graphs’ (S65), and ‘AI tools could be used to aid in understanding content, but due to current limitations it is not wholly reliable or accurate especially in tasks such as drawing complex graphs or visualisations’ (S57). These students did not reject GenAI because of its limitations; rather, they framed it as a support tool best suited for certain components of analysis while emphasising human input for areas requiring judgement, presentation, or nuanced interpretation.

Dialogic Iteration and Meaning-Making

High performers demonstrated an active, reciprocal interaction with the tool, treating GenAI not as a static resource but as a responsive interlocutor. They recognised that meaningful outcomes emerged through iterative dialogue: ‘When using the AI for forecasting, it required several iterations before giving meaningful results’ (S28) and ‘I had to guide ChatGPT through multiple steps to generate the correct forecast’ (S44). This reflects metacognitive awareness of the learning process, where language played a mediational role in shaping both inputs and outputs. By revising, clarifying, and rephrasing prompts, students co-constructed understanding through sustained engagement (e.g. Figure 3), as echoed by one who noted that: ‘ChatGPT offered to discuss the results further, providing even more detailed interpretation’ (S14).

Figure 3. An example of iterating the process by coaching GenAI to improve its responses through updated prompts and additional instructions.

Human–GenAI Co-Agency for Strategic Foresight

The theme human–GenAI co-agency for strategic foresight was rarely evident among low- and mid-performing students (under 10%) but was present in high-performing learners: 74% reflected on human oversight in GenAI agency, 77% on ethical considerations, and 80% on adaptability and growth.

Human Oversight in GenAI Agency

High-performing students emphasised the importance of maintaining human agency and critical control over GenAI outputs. They described processes of validation, cross-checking, and human intervention to ensure accuracy and relevance: ‘ChatGPT requires structured prompts and human oversight to ensure outputs are accurate and relevant’ (S1), highlighting that GenAI alone cannot guarantee dependable results. Another remarked that ‘AI may struggle to fully grasp complex problems and risk repeating errors… ensuring critical analysis and validation are maintained through human oversight’ (S7).

Others also recognised the analyst’s role in ensuring meaningful outcomes in the industrial context. For example, one stated, ‘To use AI tools effectively, analysts must ensure data quality, complement insights with human judgment…’ (S15), while another reflected that ‘I will critically analyse the results and complement them with my own analysis’ (S51). These comments reflect active co-agency instead of passive tool use, where learners take responsibility for the quality and credibility of AI-assisted work.

Ethical Considerations

High performers did not treat ethical concerns as an afterthought but integrated them into their practical use of GenAI, showing awareness of data protection, intellectual honesty, and responsible representation of AI-generated content. One student mentioned, ‘In terms of ensuring AI is always ethically used, it is important to be aware of and mitigate any biases, ensure data protection and use AI outputs as a complement to human judgement rather than a replacement’ (S11).

Other comments showed attention to academic integrity and institutional norms. For instance, one student stated, ‘I would only use AI tools where allowed by the university, and I would reference it in accordance with the guidelines, without taking its ideas and results as my own’ (S63). Similarly, another noted, ‘I will prioritise transparency, respect privacy by anonymising sensitive data, and adhere to ethical standards to responsibly leverage AI for efficient and accurate analysis’ (S43).

Adaptability and Growth

High-performing students demonstrated an aspirational, growth-oriented mindset in their engagement with GenAI. Rather than viewing the technology as static or purely transactional, they recognised its evolving nature and aligned their own development accordingly. One student observed, ‘As for 2024, none of the versions of Chat GPT is ready yet to provide a superior calculation and high-quality proofs… However, the quality of answers delivered by ChatGPT 4o is positively surprising… its reasoning capabilities and response speed have advanced significantly’ (S29), reflecting awareness of GenAI’s rapid progression.

Their reflections often extended beyond the immediate assignment, signalling plans to apply these tools in future modules and professional scenarios. One student noted, ‘I will use AI as a medium of support and testing… to create a structure for essays, reports, and case studies or by giving detailed summaries from lecture presentations to study for exams’ (S27), illustrating a broad, practical vision for GenAI across academic tasks. This mindset also translated into strategic planning for academic and professional application. As one student put it, ‘For future assignments, I plan to integrate AI tools more strategically… I also aim to explore complementary AI tools that may better handle domain-specific challenges, such as market trend analysis or sentiment mapping’ (S28). Another shared, ‘In future assignments, to use AI tools effectively and ethically it is crucial to ensure data quality… whilst always developing and updating one’s knowledge about how best to utilise artificial intelligence’ (S11), showing a clear intention to integrate GenAI into ongoing learning.

Others expressed a specific desire to enhance their prompting techniques and data preparation skills. One remarked, ‘Moving forward, I plan to use AI tools alongside traditional methods to verify results and ensure reliability. I aim to improve my ability to craft precise prompts and prepare cleaner data inputs, which will further boost the effectiveness of these tools’ (S64).

RQ2: To What Extent Does a Scaffolded and Reflective Learning Approach Influence the Development of Students’ Cognitive Skills Across Performance Groups?

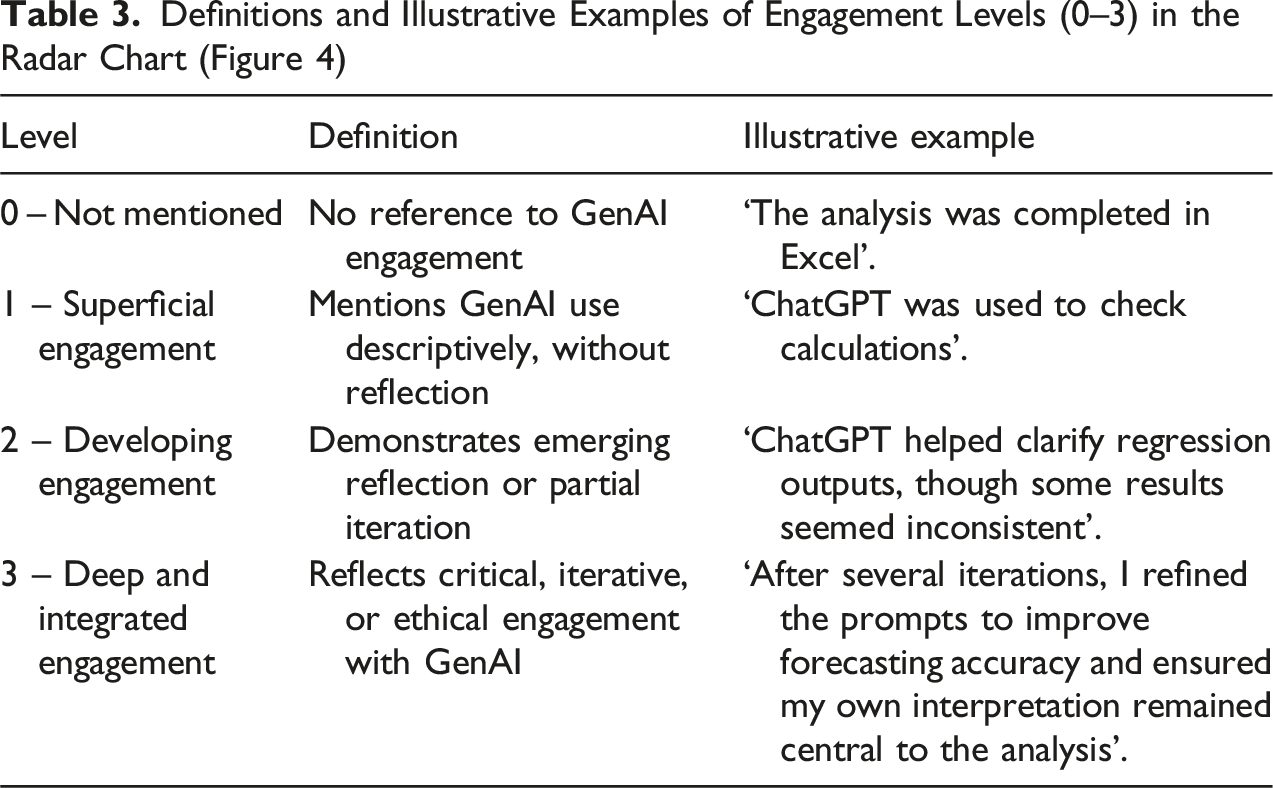

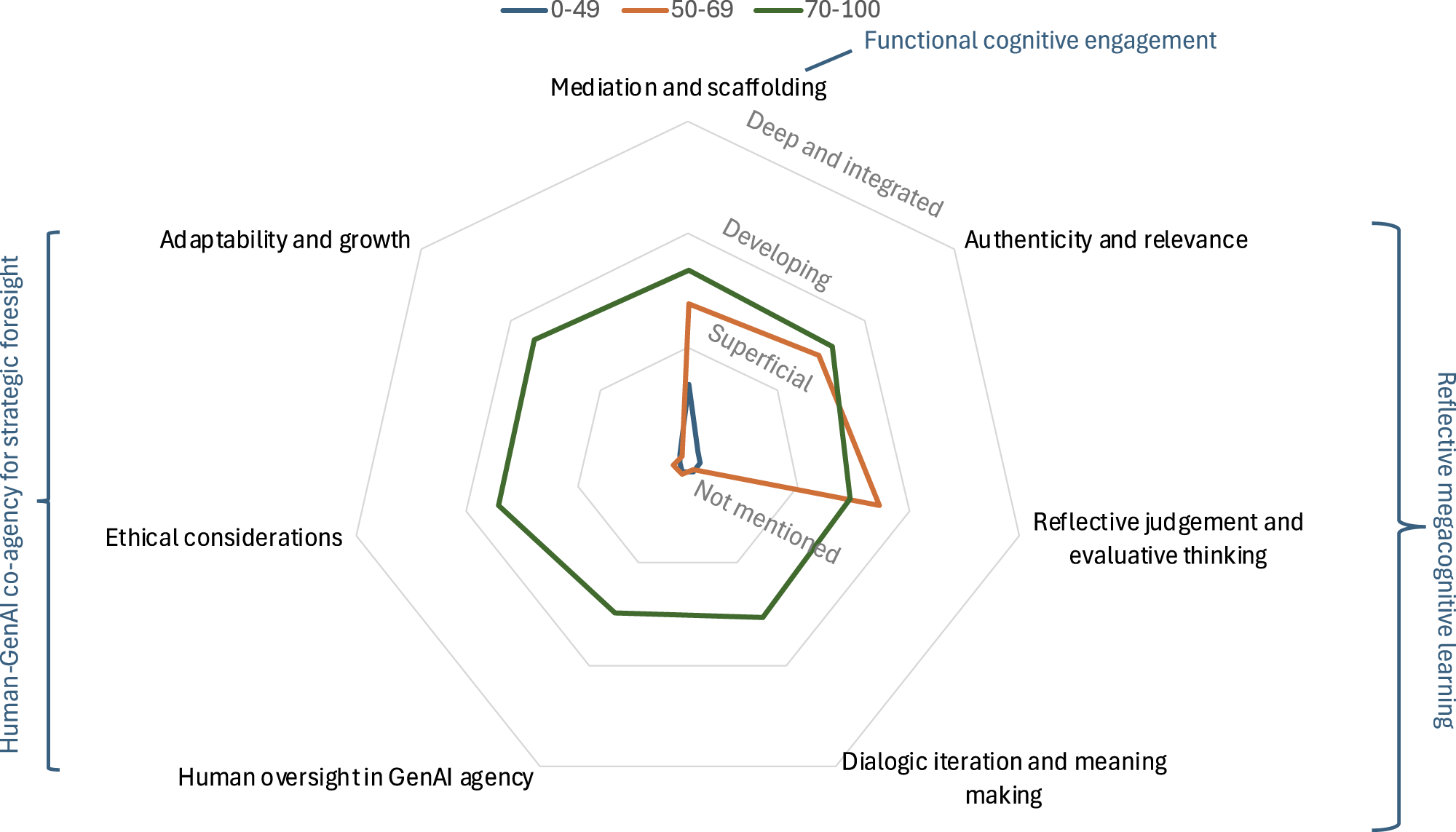

Definitions and Illustrative Examples of Engagement Levels (0–3) in the Radar Chart (Figure 4)

The coded scores represent average levels of engagement within each group and are visualised using descriptive labels in the radar chart (Figure 4). Low-performing students primarily demonstrated engagement in the theme of functional cognitive engagement, particularly under the code mediation and scaffolding, though even here their level remained between superficial and developing. Mid-performing students showed stronger engagement in the theme of reflective metacognitive learning, especially in authenticity and relevance and reflective judgement and evaluative thinking, suggesting growing critical awareness. However, most of them overlooked the theme human–GenAI co-agency for strategic foresight. High-performing students demonstrated the broadest and most consistent engagement, covering all three themes. While they reached developing or even deep and integrated levels in some areas, their average scores still fell below the top of the scale – pointing to an important finding: even the best performers have not yet reached a high level of GenAI competence.

Figure 4. Comparison of thematic engagement with GenAI tools across student performance groups. This radar chart illustrates the quality and depth of students’ engagement with GenAI across key themes and codes. Engagement was manually coded on a 0–3 scale (0 = Not Mentioned, 1 = Superficial Engagement, 2 = Developing Engagement, 3 = Deep and Integrated Engagement), which is further clarified with examples in Table 2. All themes and their associated codes are included. Students were categorised into three groups based on their overall academic performance in the module.
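As a reading aid, the group-level averaging behind the radar chart can be written out explicitly. The notation below is introduced here for illustration only and is an assumption about how the reported averages were formed; it is not taken from the study’s own materials. Let $S_g$ denote the set of students in performance group $g$, and let $e_{s,c}$ denote the engagement level assigned to student $s$’s reflection for code $c$. The plotted value for group $g$ on code $c$ would then be the arithmetic mean:

$$
\bar{e}_{g,c} \;=\; \frac{1}{|S_g|} \sum_{s \in S_g} e_{s,c}, \qquad e_{s,c} \in \{0, 1, 2, 3\}, \qquad 0 \le \bar{e}_{g,c} \le 3 .
$$

Under this reading, a group mean of 1.5 on a given code would sit midway between Superficial Engagement (1) and Developing Engagement (2), which is consistent with describing a group’s level as falling between two adjacent labels.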

Discussion

Our study aimed to explore students’ engagement with GenAI tools through the lens of Social Constructivism (Palincsar, 1998; Vygotsky, 1978), focussing on how interactions with GenAI shape learners’ knowledge construction processes. Specifically, our analysis indicates that students’ epistemic beliefs, metacognitive awareness, and sense of agency significantly shaped their engagement patterns. This aligns well with SCT, which posits that individual beliefs and behaviours are reciprocally influenced by their environment, suggesting learners’ perceptions about GenAI fundamentally influence their learning interactions (Qureshi et al., 2023). Consequently, we argue that effective educational use of GenAI must extend beyond technical skills training (Mishra et al., 2023) and explicitly develop learners’ reflective capacities, ethical awareness, and epistemological sophistication (Yan et al., 2024). Our findings extend existing theories by highlighting these critical dimensions in AI-supported learning environments (Essien et al., 2024; Salinas-Navarro et al., 2024), particularly demonstrating how epistemic beliefs influence learner interactions with emerging technologies.

Importantly, our study addresses a critical gap in the existing literature, which has predominantly conceptualised social constructivist learning as occurring through interaction with human peers, teachers, or mentors (Bruner, 1974; Sklar & Richards, 2010). We extend this by examining human–GenAI interaction, positioning GenAI not merely as a passive tool but as an active, responsive agent in the learning environment (Nguyen et al., 2024). By treating GenAI as a mediating partner in the co-construction of knowledge, our work contributes to a growing body of scholarship that reconceptualises the role of non-human agents in education (Zhou & Schofield, 2024).

In our study, four overarching themes emerged. Collectively, these themes demonstrate how students actively shape and are shaped by their interactions with AI, reinforcing the reciprocal determinism central to SCT.

Epistemic Beliefs and Learning Philosophy

Our analysis indicates that students adopted diverse epistemological positions, ranging from viewing GenAI as authoritative and reliable (truth-teller), inherently misleading (deceiver), supportive yet fallible (guide), to treating it as a collaborative partner in learning (co-learner). These varied stances align with existing research underscoring the importance of epistemological beliefs in shaping how learners interact with AI-enabled environments (Hazari, 2025).

Table 2 illustrates the belief–behaviour relationship across performance bands, showing how epistemic orientations shape learners’ engagement with GenAI and the corresponding pedagogical implications. Notably, students who viewed GenAI as a co-learner demonstrated more advanced engagement, characterised by critical dialogue, iterative prompting, and strategic use of outputs. This pattern was more common among academically stronger students, suggesting a relationship between epistemic sophistication and effective GenAI integration. Our findings extend prior work by highlighting the need to explicitly foster nuanced epistemic beliefs – not to encourage uncritical acceptance or wholesale rejection of GenAI, but to cultivate students’ intention and capacity to use it thoughtfully and responsibly (Chan & Hu, 2023). Such development is essential to mitigating the emerging digital divide driven not by access to GenAI itself, but by disparities in how learners understand, evaluate, and apply its outputs (Fang & Zhou, 2025; Zhou et al., 2025). Quantitatively, higher-performing students achieved mean engagement scores between 1.46 and 1.72 across all themes, compared with 0.08 to 0.68 for lower-performing students. Iteration counts extracted from reflections further illustrated this disparity: high performers typically described two to four rounds of GenAI refinement, while most lower performers mentioned no iteration. These measurable contrasts reinforce that the divide lies not in access to AI tools, but in the quality and depth of engagement with them.

Functional Cognitive Engagement: Mediation and Scaffolding

Our findings reveal GenAI’s effectiveness in supporting students’ cognitive processes through mediation and scaffolding, reflecting Vygotsky’s concept of the Zone of Proximal Development (Thompson, 2013). Most students used GenAI tools to bridge cognitive gaps, facilitating the transition from basic to more advanced analytical tasks. For example, several students who struggled to interpret complex statistical outputs used GenAI to explain difficult statistical concepts – such as regression, hypothesis testing, and forecasting – in an intuitive, narrative style. This approach helped them make sense of technical content by connecting it to familiar language and real-world business logic, allowing them to gradually build conceptual understanding and confidence in quantitative reasoning (Inoferio et al., 2024).

This is especially salient in the context of this module, which focuses on business data analysis. Many business students enter the course with limited prior exposure to quantitative methods and lack confidence in applying statistical techniques (Domingo et al., 2024; Leppink et al., 2012). For these learners, GenAI functions not only as a computational tool but as a conceptual mediator – helping them translate unfamiliar technical language into accessible insights (Tang, 2024). Without such support, students risk remaining dependent on surface-level outputs, missing the opportunity to develop the analytical fluency increasingly expected in business contexts.

However, substantial variations emerged in how students perceived and utilised this scaffolding. Higher-performing students employed GenAI strategically to enhance their analytical thinking and methodological sophistication. In contrast, mid- and lower-performing students predominantly viewed these tools as mere aids for task completion. This finding echoes prior research underscoring that the pedagogical value of scaffolding tools depends significantly on students’ active cognitive engagement and metacognitive capabilities (Gonslaves, 2024). Our analysis extends this insight by reinforcing the importance of explicitly teaching students how to engage reflectively and strategically with GenAI-mediated scaffolding, transforming functional interactions into meaningful cognitive development.

In practice, scaffolding strategies can be differentiated to support learners across performance groups. For lower-performing students, structured prompt-refinement workshops and guided exemplars can model how to iteratively question and verify GenAI outputs rather than accepting them at face value. Embedding reflective checklists that ask students to justify their trust in GenAI results, compare outputs across iterations, and articulate the human reasoning behind their final conclusions can help build metacognitive and evaluative skills. For middle-performing students, targeted feedback on reflection tasks and opportunities for collaborative prompt design can deepen their emerging analytical judgement and ethical awareness. For higher-performing students, scaffolding should shift toward extension activities – such as critical evaluation of multiple GenAI tools, peer mentoring, or designing their own AI-integrated case studies – to foster strategic foresight and transferable AI literacy.

Reflective Metacognitive Learning

Our analysis indicates that reflective metacognitive learning was most evident among students who perceived GenAI-supported tasks as authentic and contextually meaningful, demonstrated evaluative judgement, and engaged in iterative, dialogic interaction with the tool. Authenticity and relevance were particularly salient for higher-performing students, who consistently connected their use of GenAI to broader industry practices and future professional contexts. This enhanced their perception of the task’s value and encouraged deeper cognitive engagement – echoing Lave and Wenger’s (1991) assertion that situated, context-rich learning environments promote meaningful participation.

Reflective judgement and evaluative thinking further differentiated learners. Higher-performing students showed critical awareness of GenAI’s strengths and limitations, recognising its utility in structured tasks while also identifying areas – such as contextual interpretation or ethical reasoning – where human input remained essential. This reinforces findings by Zhou et al. (2024), who observed that limited evaluative capacity constrains meaningful AI engagement.

Dialogic iteration and meaning-making emerged as a further marker of metacognitive sophistication. Higher-performing students frequently engaged GenAI through iterative prompting, using feedback loops to clarify, refine, and extend their understanding. This interactive process reflects the principles of dialogic pedagogy, where knowledge is co-constructed through sustained dialogue (Siegle, 2025).

Human–GenAI Co-Agency for Strategic Foresight

Our findings reveal that only the highest-performing students engaged meaningfully with the theme of human–GenAI co-agency, reflecting an advanced capacity to interact with GenAI not merely as a tool but as a dynamic partner in learning. Their reflections spanned three interrelated dimensions: human oversight, ethical responsibility, and adaptability – each closely aligned with Social Constructivist Theory’s (SCT) emphasis on self-regulated learning, future-oriented thinking, and reciprocal determinism (Kritt & Budwig, 2022). This behaviour aligns with SCT’s conceptualisation of agency, whereby learners exercise intentional control over their cognitive and behavioural processes in interaction with their environment. Echoing this, students’ ability to evaluate the trustworthiness, accuracy, and applicability of GenAI outputs demonstrates self-reflective monitoring and an internalised locus of control – both critical to agentic learning (Zimmerman, 2002). In extending this principle to AI-enhanced learning contexts, our findings suggest that meaningful learning occurs when students recognise and manage the boundaries of GenAI’s capabilities, thereby preserving human judgement at the centre of decision-making.

Ethical considerations were similarly articulated only by the strongest students, who engaged with issues such as data privacy, bias, and academic integrity. This ethical awareness aligns with recent calls to embed responsible AI literacy within educational practice (Walter, 2024), and extends this discourse by demonstrating its co-dependence on metacognitive regulation and epistemic awareness (Fan et al., 2025). These students did not treat ethical use as an add-on but integrated it into their learning philosophy – highlighting the importance of cultivating critical reflection about how AI is used, interpreted, and communicated in real-world settings.

A distinctive marker of high-performing students was their adaptive and forward-thinking orientation. They demonstrated a growth mindset by acknowledging both the rapid evolution of GenAI and their own responsibility to evolve alongside it. This adaptive co-agency, which aligns with SCT’s emphasis on future planning and self-directed learning, was reflected in their strategic intentions to apply GenAI in other academic modules, real-world business scenarios, and future careers (Wut et al., 2025). Their reflections frequently connected AI engagement in the current module to broader professional goals, indicating a sophisticated understanding of lifelong learning and workplace preparedness (Poquet & De Laat, 2021). This future orientation echoes recent research that underscores the importance of developing AI literacy not as a static competency but as a continually evolving practice aligned with career trajectories (Su et al., 2021).

Importantly, these students did not position themselves as sole agents acting on GenAI; rather, they embraced a co-agency model in which GenAI served as an active, if limited, collaborator in the knowledge construction process. Our findings therefore contribute to emerging discussions on how GenAI can function as a socio-cognitive partner (Zhou & Schofield, 2024), mediating not only task completion but also the learner’s reflective orientation toward knowledge, ethics, and professional identity.

Conclusion

This study explored how undergraduate students in a business data analysis module engaged with GenAI tools, particularly in terms of reflection, ethical reasoning, and evaluative thinking. By analysing students’ written reflections through thematic analysis, we uncovered notable differences across performance bands: high-performing students used GenAI tools not merely for task completion but as collaborative partners in learning. In contrast, mid- and lower-performing students engaged more superficially, showing limited critical reflection and a more passive approach to AI-supported tasks.

Theoretical Implications

This study broadens the scope of SCT by conceptualising GenAI not as a passive support tool, but as an agentive participant in the co-construction of knowledge. Traditionally centred on human-to-human interactions (Bandura, 1986; Vygotsky, 1978), SCT can be reinterpreted to accommodate human–AI collaboration, where GenAI engages in responsive, feedback-driven exchanges with learners. This reconfiguration aligns with recent theoretical developments that view AI as a co-learner – contributing computational insight while being shaped by human judgement and ethical framing (Chiu & Rospigliosi, 2025; Edwards et al., 2025). Such human–AI collaboration invites a reconceptualisation of key SCT constructs: scaffolding now includes AI-generated support; agency is distributed between human learners and GenAI; and the ZPD extends to interactions where learners co-navigate tasks with GenAI as a responsive, though non-human, partner (Zhou & Schofield, 2024).

By illustrating how GenAI can support iterative dialogue and metacognitive reflection, our study affirms SCT’s emphasis on scaffolded, feedback-rich learning. The recursive interaction between learner and GenAI resonates with Vygotsky’s (1978) Zone of Proximal Development and Bandura’s (1986) reciprocal determinism, suggesting these dynamics can extend to human–AI contexts when learners engage critically. Moreover, this work refines understandings of learner agency in SCT by showing how students’ epistemic beliefs and ethical dispositions shape their co-agency with GenAI, offering a foundation for rethinking agency, scaffolding, and reflection in AI-integrated education.

The variation in how students engaged with GenAI highlights that co-agency is a developmental construct – emerging through learners’ capacity for reflection, ethical judgement, and strategic use of AI as an interactive partner. This reinforces SCT’s emphasis on the socially mediated nature of learning (Asamoah & Oheneba-Sakyi, 2017), while extending it to include non-human agents as contingent collaborators whose effectiveness depends on the learner’s readiness to engage critically and iteratively (Hazari, 2025).

Practical Implications

Our findings carry significant implications for higher education pedagogy, especially in contexts where GenAI tools are embedded into learning and assessment tasks. The structured nature of our assignment – asking students to use GenAI for data analysis and compare its outputs with manually generated Excel results – offers a replicable model for promoting reflective and evaluative thinking through authentic, task-embedded use of AI, aligned with existing educational practices (e.g. Gonslaves, 2024; Pavone, 2025).

The observed variation in student engagement and reflection – particularly the gap between high- and low-performing students – serves as an early warning of a new kind of digital divide emerging from GenAI (O’Dea, 2024). High-performing students engaged in up to four rounds of prompt refinement, using each iteration to clarify, improve, and challenge GenAI outputs. In contrast, most low-performing students engaged in only a single round and either hastily concluded that ‘AI is unreliable and I will not use it’, or conversely, that ‘the results are the same so AI can replace human’. To address the divide, educators must incorporate differentiated support strategies, particularly for students with less-developed metacognitive skills (Hadar Shoval, 2025). Scaffolding activities that guide students through prompt engineering can help nurture more strategic and reflective engagement (Lee & Palmer, 2025).

Embedding structured opportunities for ethical discussion, prompt iteration, and critical interrogation of GenAI outputs is essential to developing responsible AI literacy. It is not enough to integrate GenAI into the curriculum as a technical tool; instead, educators must frame GenAI as a cognitive and collaborative partner whose outputs must be critically examined, refined, and contextualised (Yan et al., 2024). Ultimately, meaningful GenAI integration in teaching must be accompanied by pedagogical practices that cultivate students’ evaluative judgement, agency, and ethical sensitivity – preparing them not just to use AI, but to engage with it as thoughtful, discerning co-learners (Salinas-Navarro et al., 2024).

Limitations and Future Research Directions

While this study offers valuable insights into student engagement with GenAI tools through the lens of SCT, several limitations warrant consideration. First, the findings are derived from a single institutional context within a Year 2 undergraduate business data analysis module, which may limit the generalisability of the results to other educational levels, disciplines, or institutional cultures. Future studies could replicate this approach across diverse contexts, including postgraduate programmes, other disciplines or interdisciplinary courses, or international settings, to explore potential variations in human–AI co-agency.

Second, although our analysis highlights performance-based differences in epistemic beliefs, ethical reasoning, and co-agency, we did not systematically examine the longitudinal development of these dispositions. Given the rapid advancement of GenAI capabilities, understanding how students’ engagement evolves over time becomes increasingly important. Future research should explore how learners adapt to evolving AI tools, how their epistemological stances and reflective practices shift with experience, and whether early engagement with GenAI fosters sustainable, productive habits of human–AI collaboration in education.

Finally, while our study primarily focused on GenAI as a text-based assistant, future research could explore multimodal GenAI tools that include visualisation, speech, and simulation capabilities. As AI technology becomes increasingly integrated and embodied in educational practice, research must continue to interrogate not only what AI can do, but how it reshapes the roles, identities, and learning capacities of students and educators alike.

Footnotes

Ethical Considerations

This study received ethical approval from the Queen Mary University of London Research Ethics Committee.

Consent to Participate

All participants were fully informed about the study and provided consent to participate.

Consent for Publication

Written informed consent for publication was obtained from all participants as well.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data Availability Statement

An anonymised version of the data in this study is available from the corresponding author upon reasonable request.