Abstract

Rapid approaches are essential when resources are limited and when findings are required in real time to inform decisions. However, limitations in their design and implementation can reduce trust in their findings. This review sought to map the methods used across rapid evaluations and research to facilitate timeliness and support the rigour of studies. Four scientific databases and one search engine were searched between 11 and 16 August 2022. Screening led to the inclusion of 169 articles, which provide a much-needed repository of methods that can be used during the design and implementation of rapid studies to improve their trustworthiness. No reporting guidelines specific to rapid research or evaluation were identified in the literature; we therefore suggest that this repository of methods inform the development of transparent reporting standards for future rapid research and evaluation.

Introduction

There is often a need to conduct evaluations and research rapidly so that findings are delivered in time to inform decision-making processes (Beebe, 1995; Johnson & Vindrola-Padros, 2017; McNall & Foster-Fishman, 2007). While prolonged in-depth approaches are most appropriate on some occasions, rapid approaches are vital in contexts where time and resources are limited, such as humanitarian crises, complex health emergencies, and the real-time evaluation of changing programmes and services (Anker et al., 1993; Beebe, 1995; Gawaya et al., 2022; Holdsworth et al., 2020; Johnson & Vindrola-Padros, 2017; McNall & Foster-Fishman, 2007).

There is a wealth of research on the different types of studies that can be classified as rapid approaches (McNall & Foster-Fishman, 2007; Norman et al., 2022; Vindrola-Padros, 2021; Vindrola-Padros et al., 2020). Rapid research commonly refers to findings that are delivered in the context of time and resource constraints, and rapid evaluation commonly refers to the delivery of timely evidence to inform decision-making and service delivery (Vindrola-Padros, 2021). Concerns have previously been raised that the rapid approach to research and evaluation can be seen as a ‘quick and dirty’ exercise that may compromise the quality of the data being collected and analysed (Pink & Morgan, 2013). Similarly, there have been concerns about the transparency of such studies, with many failing to report on the methods used (Vindrola-Padros & Vindrola-Padros, 2018).

Lincoln and Guba's evaluative criteria have been used to assess qualitative research studies and to establish the trustworthiness of research findings (Enworo, 2023; Forero et al., 2018; Lincoln & Guba, 1985). The criteria comprise four key domains: for research to be considered trustworthy, it should harness approaches that establish credibility, transferability, dependability, and confirmability (Lincoln & Guba, 1985). Authors have previously conducted reviews to identify some of the approaches used in rapid studies, and many of the cited approaches can fit within Lincoln and Guba’s criteria (Beebe, 1995; Fitch et al., 2004; Johnson & Vindrola-Padros, 2017; McNall & Foster-Fishman, 2007; Norman et al., 2022; Vindrola-Padros & Vindrola-Padros, 2018). Beebe’s work focussed on the techniques used in rapid appraisals based on literature in and prior to 1995; Fitch et al. reviewed the techniques used in rapid assessments in the substance use field in 2004; and McNall and Foster-Fishman identified the approaches used in rapid evaluation, assessment and appraisal research based on literature in and prior to 2007. These reviews identified key features shared across rapid evaluation and appraisal methods, such as community participation, a systems perspective, triangulation using different methods and sources of data, and iterative processes for data collection and analysis. However, all three reviews were published in or before 2007, limiting our understanding of new developments in this field.

More recent reviews of rapid approaches have examined the rapid qualitative methods used during complex health emergencies (Johnson & Vindrola-Padros, 2017), the approaches used in rapid ethnographies in healthcare organisations (Vindrola-Padros & Vindrola-Padros, 2018), and the approaches used across rapid evaluation studies in healthcare in high-income countries (Norman et al., 2022). These reviews highlighted the aspects of rapid research and evaluation that might come under scrutiny due to time pressures, such as sampling procedures, approaches for ethical approval, maintaining consistency across members of the research team, and having little time to cross-check data with other sources to reduce bias. However, these reviews were limited to publications on either complex health emergencies or healthcare. We therefore sought to create an updated review that includes publications from health emergencies, healthcare and any other context, as a means to create a larger repository of approaches used across a broader range of rapid research and evaluation.

The purpose of this systematic review was to build on this existing literature to produce an updated analysis of the rapid approaches that have been used across multiple sectors; the main challenges faced by researchers and evaluators; and the strategies used to overcome these challenges. The research questions guiding this review were:

• What approaches have been used during rapid evaluation and rapid research to facilitate rigour and timeliness?
• What are the main barriers experienced within these studies, and have any strategies been used to address them?

Methods

The systematic review was guided by the Preferred Reporting Items for Systematic Reviews and Meta-Analyses (PRISMA) 2020 statement (Page et al., 2021). A protocol outlining the project steps was accepted by PROSPERO prior to conducting the research in August 2022 (CRD42022341825).

Search Strategy

The search strategy for the review included both keyword and subject heading searches across four scientific literature databases (MEDLINE, Embase, Healthcare Management Information Consortium (HMIC), and Cumulative Index of Nursing and Allied Health Literature (CINAHL) Plus) and the open search engine Google Scholar. Search words were related to ‘rapid evaluation’ or ‘rapid research’. The searches were conducted between 11 and 16 August 2022. A detailed outline of the search strategy can be found in Online Appendix 1.

Eligibility Criteria

Studies were included if (1) the study was referred to as a rapid approach; (2) the study had been completed within 6 months (in keeping with rapid approaches) (Vindrola-Padros, 2021); and (3) the article included sufficient information on the methods used to ensure rapidity and support rigour. We define rigour as using approaches that may enhance the trustworthiness of the research (Lincoln & Guba, 1985). There were no restrictions on publication date or language, and qualitative, quantitative and mixed methods study designs were all eligible.
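The three criteria can be read as a single screening rule that an article must pass in full. The sketch below is illustrative only: the `Article` record and its field names are hypothetical and are not taken from the review's screening spreadsheet. It treats a missing timeline as a failed criterion, in line with the exclusion reasons reported for this review.

```python
from dataclasses import dataclass
from typing import Optional

MAX_DURATION_MONTHS = 6  # threshold stated in the eligibility criteria


@dataclass
class Article:
    # Hypothetical screening record; field names are illustrative assumptions.
    described_as_rapid: bool
    duration_months: Optional[float]  # None when no study timeline is reported
    reports_methods_in_detail: bool


def is_eligible(article: Article) -> bool:
    """Return True only when all three inclusion criteria are met."""
    if not article.described_as_rapid:
        return False
    if article.duration_months is None:
        # Without a reported timeline the duration criterion cannot be verified.
        return False
    if article.duration_months > MAX_DURATION_MONTHS:
        return False
    return article.reports_methods_in_detail
```

For example, `is_eligible(Article(True, 4, True))` passes, whereas an otherwise identical article with `duration_months=None` does not.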

Selection Process

The search results were imported into EndNote for de-duplication (Gotschall, 2021), and then into Rayyan for further de-duplication and screening (Ouzzani et al., 2016). Because of the large number of search results, title and abstract screening against the eligibility criteria was split between three researchers. The three researchers then cross-checked 10% of each other’s exclusions and discussed any disagreements about exclusion decisions until consensus was reached. Following this, one researcher progressed the included articles to full text screening against the eligibility criteria, using Microsoft Excel to record the screening decisions. Any articles identified in non-English languages were translated using Google Translate. The principal investigator then cross-checked 10% of the excluded articles.
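One way to draw a 10% cross-check sample reproducibly is sketched below. The review does not state how the 10% was selected, so the random draw, the fixed seed and the function name `cross_check_sample` are all assumptions made for illustration.

```python
import math
import random


def cross_check_sample(excluded_ids, fraction=0.10, seed=0):
    """Draw a reproducible random sample of excluded records for a second reviewer.

    Assumption: records are sampled uniformly at random; the review itself
    only reports that 10% of exclusions were cross-checked.
    """
    ids = list(excluded_ids)
    if not ids:
        return []
    # Round up so that small batches still yield at least one record.
    k = max(1, math.ceil(len(ids) * fraction))
    rng = random.Random(seed)
    return sorted(rng.sample(ids, k))
```

Fixing the seed means the same records are selected each time the function is run on the same list, which makes the cross-check itself auditable.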

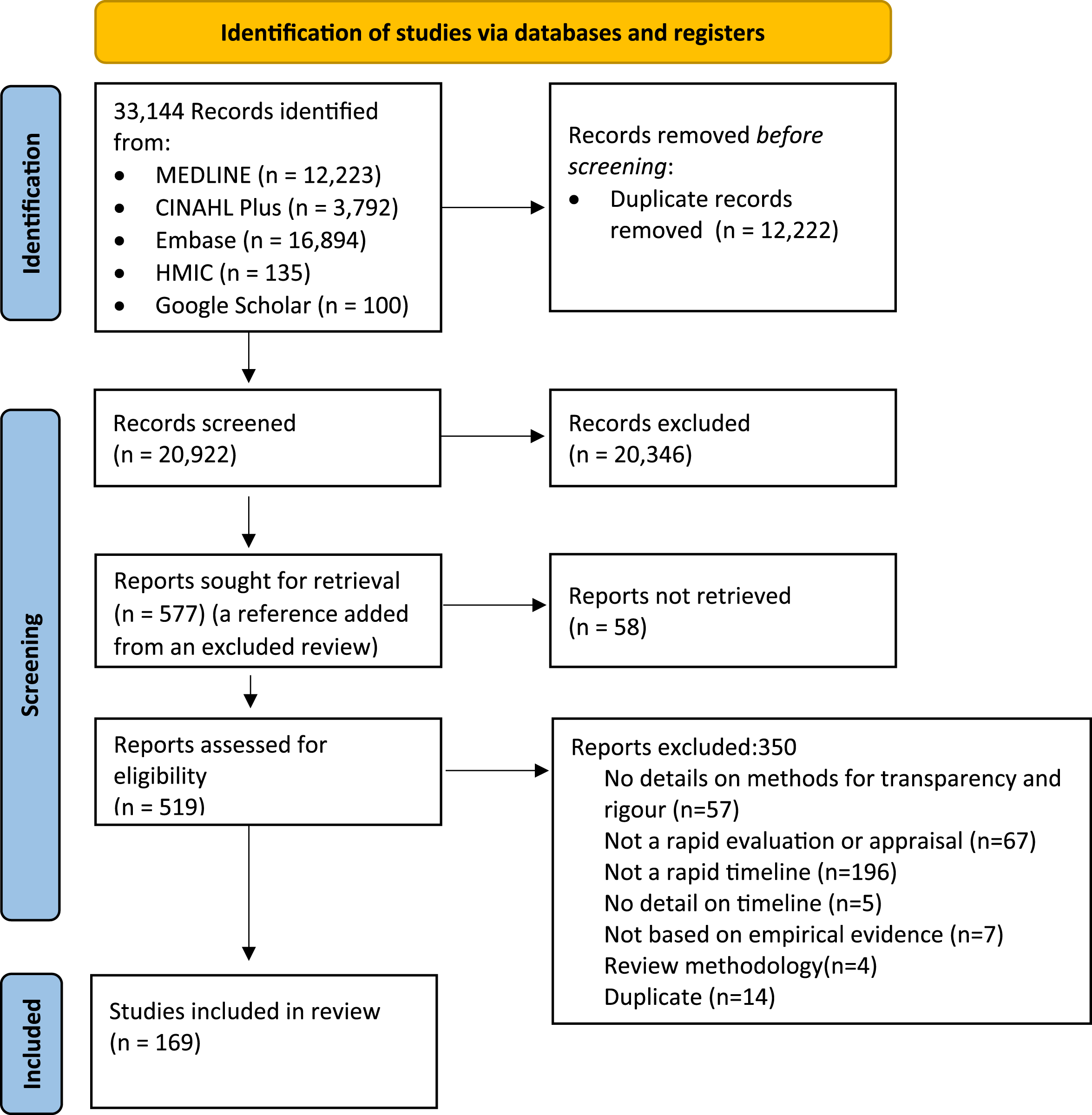

Figure 1. PRISMA 2020 flow diagram of the selection process.

The search returned 33,144 results, of which 20,922 articles remained following duplicate removal. Of these, 577 articles were identified as potentially relevant and screened at full text. Overall, 169 articles were included in the review. Figure 1 shows the flow of studies through the review process based on PRISMA guidelines (Page et al., 2021).
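The counts removed at each stage follow from the reported totals by subtraction. The short calculation below assumes that each stage follows directly from the previous one with no other losses; the variable names are illustrative.

```python
# Totals reported in the text.
records_identified = 33_144
after_deduplication = 20_922
screened_full_text = 577
included = 169

# Derived counts (assuming no other losses between stages).
duplicates_removed = records_identified - after_deduplication
excluded_title_abstract = after_deduplication - screened_full_text
excluded_full_text = screened_full_text - included

print(duplicates_removed)       # 12222
print(excluded_title_abstract)  # 20345
print(excluded_full_text)       # 408
```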

The reasons for excluding articles were: the article not sharing enough detail on the methods used to ensure rapidity and rigour; the study not fitting under a variation of rapid evaluation or research; the study not being conducted to a rapid timeline (within 6 months); the article giving no detail on the study timeline; the study not being based on empirical evidence; the study using review methodology; or the article being a duplicate of a previously screened item.

Data Extraction and Quality Assessment

A comprehensive list of data extraction categories was developed based on the research questions and pre-specified outcomes of interest. Following screening, these categories were further refined to ensure information was captured appropriately. Data extraction, quality assessment and full text screening were carried out in parallel by one researcher, who recorded the information in Microsoft Excel. The final data extraction categories included study details (location of study; study design; study duration; general methods used throughout) and the methods used across the different stages of the rapid studies (as shown in Online Appendix 2): study design, data collection, data analysis, result interpretation, and dissemination. We also extracted any method limitations outlined in the articles.

The Mixed Methods Appraisal Tool (MMAT) (Hong et al., 2018) was used to assess the quality of the included articles. The MMAT allows the quality of heterogeneous study designs to be assessed based on the methodology used. The types of study that can be assessed with this tool are qualitative studies, quantitative randomised controlled trials, quantitative non-randomised studies, quantitative descriptive studies, and mixed methods studies. No articles were excluded based on their MMAT scores.

The MMAT scores of each study can be found in Online Appendix 2. The studies ranged from low quality with a score of 0/5 (n = 3) and 1/5 (n = 32) to medium quality with a score of 2/5 (n = 38) and 3/5 (n = 25), to high quality with a score of 4/5 (n = 61) and 5/5 (n = 10).
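As a quick consistency check, the reported score counts can be tallied into the three quality bands. The sketch below uses only the counts stated above; the band labels follow the text, and the dictionary layout is an illustrative choice.

```python
# Number of studies at each MMAT score (out of 5), as reported in the text.
score_counts = {0: 3, 1: 32, 2: 38, 3: 25, 4: 61, 5: 10}

# Quality bands as described in the text.
bands = {"low": (0, 1), "medium": (2, 3), "high": (4, 5)}

total = sum(score_counts.values())
band_totals = {
    name: sum(score_counts[score] for score in scores)
    for name, scores in bands.items()
}

print(total)        # 169
print(band_totals)  # {'low': 35, 'medium': 63, 'high': 71}
```

The total matches the 169 included articles, so every included study is accounted for in the score distribution.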

Data Synthesis

Textual narrative synthesis and a summary of content were conducted to summarise the study characteristics and key findings across the literature (Lucas et al., 2007). We then used the rigorous and accelerated data reduction technique (RADaR) to reduce the data within our findings (Watkins, 2017). The RADaR technique consists of using a sequence of tables to chart findings with the aim of reducing the volume of data with each subsequent table.
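The idea of charting findings in a sequence of progressively smaller tables can be sketched as follows. This is not the procedure or the data used in this review: the rows, the `relevant` flag and the two reduction rules are hypothetical, chosen only to show each table being derived from the one before it.

```python
def reduce_table(rows, keep):
    """Produce the next, smaller table by keeping only rows that satisfy `keep`."""
    return [row for row in rows if keep(row)]


# Table 1: hypothetical all-inclusive extraction table (not data from the review).
phase_1 = [
    {"study": "A", "text": "team meetings held daily", "relevant": True},
    {"study": "A", "text": "programme history", "relevant": False},
    {"study": "B", "text": "findings triangulated with documents", "relevant": True},
    {"study": "B", "text": "findings triangulated with documents", "relevant": True},
]

# Table 2: drop rows that do not address the research questions.
phase_2 = reduce_table(phase_1, lambda row: row["relevant"])

# Table 3: drop verbatim repeats within the same study.
seen = set()


def first_occurrence(row):
    key = (row["study"], row["text"])
    if key in seen:
        return False
    seen.add(key)
    return True


phase_3 = reduce_table(phase_2, first_occurrence)

print(len(phase_1), len(phase_2), len(phase_3))  # 4 3 2
```

Keeping every intermediate table, rather than overwriting one, leaves a record of what was removed at each step.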

Results

Study Characteristics

A detailed summary of the characteristics and themes of all the studies included in the review can be found in Online Appendix 2, and the full list of included references can be found in Online Appendix 3.

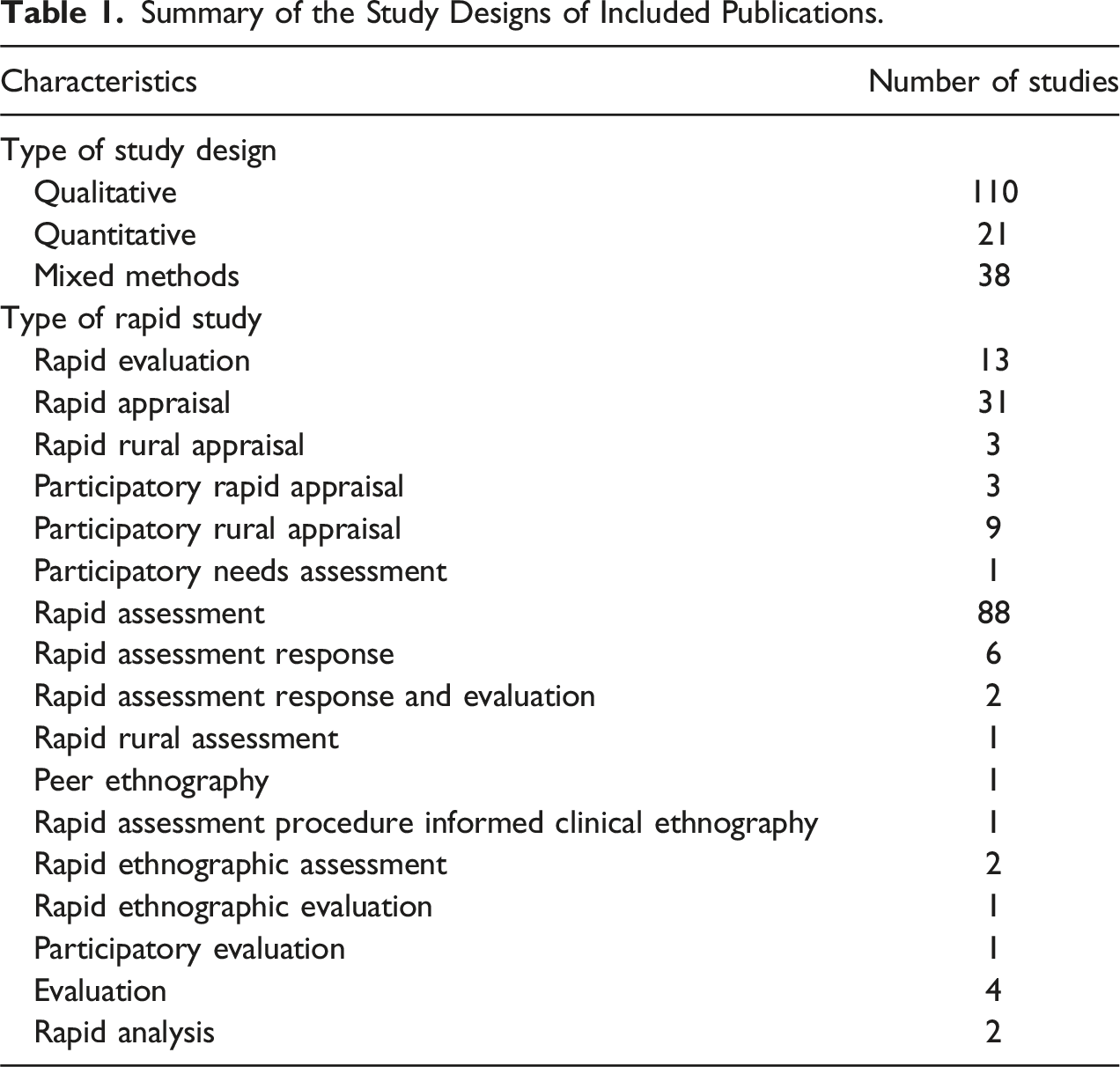

Summary of the Study Designs of Included Publications.

Methods, Challenges and Mitigation Strategies Reported Across the Rapid Studies

Summarised below are the methods used across the included publications that enabled rapidity and supported rigour, along with some of the challenges associated with these methods and any mitigation strategies as reported by the literature. A detailed summary of the methods used across the included literature can be found in Online Appendix 2.

Reporting Guidelines

A prominent gap across the literature was that only four studies discussed the use of specific guidelines for the conduct and reporting of the studies, and all four used the Consolidated Criteria for Reporting Qualitative Research (CORE-Q) guidelines.

Theoretical frameworks and approaches were cited more commonly (n = 30 studies) to guide study conduct. The most commonly cited were the Consolidated Framework for Implementation Research (n = 3), the Health Belief Model (n = 3), the International Rapid Assessment Response and Evaluation (I-RARE)/RARE methodology (n = 4), and the Rapid Assessment Procedure-Informed Clinical Ethnography (RAPICE) methodology (n = 3).

Study Design

A key area discussed among the publications was the use of sampling methods to increase rapidity or to improve the relevance of sample characteristics. Non-probability sampling was used across 84 studies, in the form of convenience sampling, purposive sampling or variations of snowball sampling. Some studies (n = 14) based their sample size on when data saturation was reached. Small sample sizes and reliance on non-probability sampling were recognised as limitations across some publications, as they led to limited generalisability, limited representativeness of findings and the potential to introduce selection bias (Ahoua et al., 2006; Akello et al., 2007; Anastasaki et al., 2022; Brittain et al., 2019; Butler et al., 2021; Ezard et al., 2011; Hammond et al., 2022; Johnson & Vindrola-Padros, 2017; Morojele et al., 2006; Negandhi et al., 2017; Nemser et al., 2018; Sy et al., 2020; Theiss-Nyland et al., 2016). Three articles, however, used methods to minimise these limitations: searching for alternative or disconfirming cases, deviating from arranged locations to try to reach unsensitized groups, or using active, sequential recruitment to reduce the risk of excluding participants with underrepresented characteristics (Grant et al., 2011; Kahle et al., 2009; Kamineni et al., 2011).

Other approaches used during study design included following a study protocol or proposal, gaining relevant ethical and regulatory approvals, and obtaining informed consent from participants ahead of data collection in the form of verbal, written or assumed consent (the latter based on voluntary participation in online surveys and observations). Approaches to recruitment were also reported. Some studies (n = 22) were supported by local key informants, local researchers/evaluators, local networks, and organisations to build trust with potential study participants and to advertise the study. Other studies (n = 16) relied on emails or social media platforms to recruit participants. Some studies offered incentives to improve recruitment: monetary incentives (n = 23), food and drink incentives (n = 6), and health incentives (n = 4).

Data Collection and Analysis

Methods of data collection and analysis that enabled rapidity included splitting data collection and analysis between team members. However, inconsistency in data collection and analysis methods was identified as a challenge in the rapid studies, arising from a lack of time to train team members or for supervisors to attend all data collection to ensure alignment across the team (Ash et al., 2016; Bayleyegn et al., 2006). Team meetings during data collection and analysis were frequently used to address this challenge (n = 42), as a way for team members to share feedback with each other, ensure consistency in the methods used, reach consensus on approaches, and modify any processes or materials (Abir et al., 2021; Albert et al., 2021; Sy et al., 2020).

Data collection tools were piloted in 26 studies to support rigour, and in some of these studies piloting was done to ensure cultural and face validity. Several authors, however, reflected that short timelines often limited the piloting of data collection instruments and prevented team members from exploring certain questions in depth and immersing themselves in data collection (Ezard et al., 2011; Seidel et al., 2018; Tindana et al., 2012). Strategies to overcome these challenges included member checking (cited across 27 studies), in which preliminary findings were shared with the participants themselves or with members of their community to corroborate the analysis and interpretations (Belford et al., 2017; Chilanga et al., 2022; Chung et al., 2022). Additionally, triangulating findings with other methods of data collection or existing documents was often cited across the literature (n = 82) as a way to verify findings and interpretations (Balogh et al., 2008; Doherty et al., 2017; Loko et al., 2019).

Iterative approaches to data collection and data analysis were used across 32 studies. These studies analysed data whilst data collection was ongoing, allowing teams to identify when data saturation had been met, to re-shape data collection tools based on emerging findings, and to develop initial codebooks for subsequent in-depth analysis (Albert et al., 2021; Aral et al., 2005; Jumbe et al., 2021). Many of the studies (n = 66) conducted data collection and analysis in local languages, working with translators, local researchers, and researchers with lived experience. Nine studies discussed how this helped to facilitate trust and strong validity of the cultural and traditional knowledge (Anastasaki et al., 2022; Brown et al., 2008; Hanvoravongchai et al., 2010).

Other approaches used during data collection and analysis included reporting the composition and experience of the evaluation or research team (n = 87), using participatory methods for data collection (n = 14), relying on technology as a medium to collect data from participants (n = 23), and recording and transcribing interviews or using field notes (n = 84). Many studies detailed their analysis approaches, predominantly qualitative content analysis (n = 65) such as thematic analysis and the framework approach. Rapid analysis techniques, such as the use of rapid assessment procedure sheets (n = 11), were also discussed. Some studies (n = 23) also used quality assurance approaches and audit trails to assess consistency in analysis across team members and to assess the quality of the analysed data.

Result Interpretation

Limitations can arise when team members do not recognise the effect that their characteristics and experiences may have on their interpretations of findings. However, a few studies reported that their teams were able to reflect on their practice throughout the study and on how their personal experiences may have affected their interpretations (Dasgupta et al., 2008; Laisser et al., 2021; Mital et al., 2016). Some studies (n = 10) had a separate peer-review team or advisory group that reviewed the research/evaluation team’s interpretations and the draft reports to identify any potential biases.

Dissemination

Iterative dissemination of findings whilst studies were ongoing was used in three studies to rapidly share emerging findings with study participants, research team members, commissioners, and implementers of programs being evaluated (Burgess-Allen & Owen-Smith, 2010; Cohn et al., 2021; Vindrola-Padros et al., 2020). This formed feedback loops between stakeholders implementing findings and the evaluation/research team, enabling evaluation of studies or programs as they are being implemented.

Discussion

The aim of this review was to identify the methods that have been used by evaluators and researchers to carry out studies in the context of time pressures. The review is a response to current debates in the literature on the rigour and transparency of rapid studies, where short timeframes are often associated with ‘quick and dirty’ exercises, and limited reporting of the study methodology (Cupit et al., 2018; Vindrola-Padros, 2021; Vindrola-Padros & Vindrola-Padros, 2018). The findings from our review demonstrate that many of the rapid studies use approaches to improve their rigour, opposing the opinion that rapid approaches are ‘quick and dirty’. Many of the approaches identified in this review could be grouped into the areas of Lincoln and Guba’s criteria to facilitate trustworthiness of research findings (Lincoln & Guba, 1985). Approaches ensuring credibility included triangulation and member checking; establishing transferability had been facilitated by relying on local key informants and local researchers to support with understanding the local cultural and social contexts; establishing dependability had been achieved through auditing and peer-reviewing the research or evaluation; and establishing confirmability took place through approaches of reflexivity.

We found similarities in our review with the previous literature in terms of the approaches used by researchers and evaluators. This included relying on approaches to facilitate timely recruitment such as non-probability sampling (Beebe, 1995; Norman et al., 2022; Vindrola-Padros & Vindrola-Padros, 2018) and using existing networks to support with recruitment (Vindrola-Padros & Vindrola-Padros, 2018). Approaches to rapidly collect and analyse data were also discussed such as team-based approaches for data collection and analysis (Beebe, 1995); technology to support with data collection (Norman et al., 2022); rapid analysis techniques (Norman et al., 2022; Rankl et al., 2021); and an iterative process of data collection, analysis, and dissemination (Beebe, 1995; Norman et al., 2022; Rankl et al., 2021).

A key finding from our review that has not been highlighted in the previous reviews was that very few publications cited the use of guidelines to enable rigour within the conduct and reporting of studies. The CORE-Q checklist (Tong et al., 2007) was the only reported guideline in our systematic review. It primarily shares guidance on research team dynamics, study design, data collection, analysis, and synthesis in qualitative research only, and does not specifically consider the design of rapid studies. We therefore recognise this as a gap in the field where reporting guidelines for rapid research and evaluation would be helpful. The approaches summarised in this review and previous reviews could serve as a starting point for the components to include in reporting guidelines for rapid studies. Further work is needed to generate consensus among rapid researchers and evaluators regarding the components that need to be included in guidelines for rapid evaluation and research. Another finding from our review that links to previous literature (Vindrola-Padros & Vindrola-Padros, 2018) is that some rapid studies are still failing to transparently report on the methods and approaches used. In our review, 57 rapid studies (14% of the full text exclusions) were excluded at full text screening because they failed to report on the methodologies used. We hope future rapid researchers and evaluators will consider this and transparently share the methods used when designing, implementing and reporting on rapid studies.
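The 14% figure can be reproduced from the screening totals reported in the Methods, assuming that the full text exclusions are the 577 articles screened at full text minus the 169 included. The variable names below are illustrative.

```python
full_text_screened = 577
included = 169
excluded_for_unreported_methods = 57

# Assumption: every article screened at full text was either included or excluded.
full_text_exclusions = full_text_screened - included
share = excluded_for_unreported_methods / full_text_exclusions

print(full_text_exclusions)  # 408
print(f"{share:.0%}")        # 14%
```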

The strengths of this review include the wealth of incorporated studies (n = 169), which were published over a relatively long period of time (1993–2022). One of the aims of this review was to create a repository of approaches used across studies published at a global scale, but we found that there is an overwhelming majority of publications from high-income countries (USA (n = 35) and UK (n = 12)). This limits the transferability of the review findings to the context of Low and Middle Income Countries (LMICs) and highlights an area that future research should address by delivering and publishing rapid studies set in LMICs. Other limitations are that the review focused on articles published in peer-reviewed journals, excluding rapid evaluations and research in the grey literature, and that a study duration of six months or less was used as a component of the inclusion criteria, so articles that did not report study duration had to be excluded.

Conclusion

This systematic review has collated evidence that goes against the opinion that rapid studies are ‘quick and dirty’, as approaches are being used in rapid studies that fit within Lincoln and Guba’s framework for ensuring trustworthiness of research (Lincoln & Guba, 1985). We identified a gap in this field: no reporting guidelines specific to rapid studies exist or are being used. The development of reporting guidelines could ensure rapid studies continue to be designed and delivered to produce trustworthy findings, and could help to address the finding from our review that some rapid studies are still failing to clearly report on the methods used. Our research team plans to develop reporting standards based on the approaches identified in this review and in consultation with key stakeholders in the field, to facilitate the future transparent reporting and uptake of methods that enable speed while maintaining the rigour of rapid evaluation and research.

Supplemental Material


Supplemental Material for A systematic review of the methods used in rapid approaches to research and evaluation by Sigrún Eyrúnardóttir Clark, Norha Vera San Juan, Thomas Moniz, Rebecca Appleton, Phoebe Barnett and Cecilia Vindrola-Padros in Evaluation Review.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This study is supported by the Medical Research Council (MR/W020769/1).

Supplemental Material

Supplemental material for this article is available online.

References
