Abstract

Mixed methods research, that is, research that integrates qualitative and quantitative methods, has become increasingly popular in program evaluation because of its potential for understanding complex interventions. Despite recent constructive and fruitful developments that have led to the consolidation of mixed methods as a distinctive methodology, fundamental methodological issues such as generalization have received little attention. The purpose of this paper is to provide a critical reflection on how the concept of generalization has been used in mixed methods research. The paper is structured into four main parts. First, we discuss the relevance of external validity and mixed methods research in impact evaluation. Second, we summarize how generalization is conceptualized in mixed methods research. Third, we present the results of a literature review on generalization practices in mixed methods research. Finally, we conclude with a discussion of threats to and strategies for enhancing generalization in mixed methods research.

Introduction

Mixed methods research, that is, research that integrates qualitative and quantitative methods, has grown in popularity in program evaluation because of its potential for understanding complex interventions (Palinkas et al., 2019). Mixed methods research can be defined as research that satisfies three criteria: (a) it combines at least one qualitative method and one quantitative method; (b) each method is conducted rigorously; and (c) the two methods are integrated at the data collection, data analysis, and/or interpretation stages to yield added value compared with single-method designs (Creswell & Plano Clark, 2018; Johnson et al., 2007). Complexity can arise from multiple dimensions, such as a large number of intervention components, interactions between these components and the context, and the system in which the intervention is nested (Thomas et al., 2019). Such complexity cannot be fully understood using quantitative or qualitative approaches alone, so other methodological approaches are needed to study the multilevel and multidimensional nature of complex interventions (Sturmberg, 2019). Using mixed methods in program evaluation can help researchers understand this complexity and better inform decision-making. For example, quantitative approaches such as randomized controlled trials (RCTs) focus on clinical effectiveness questions (e.g., “Does an intervention work?”) but do not provide the information needed to determine why a particular intervention was or was not effective (O'Cathain, 2018). Adding a qualitative component to a quantitative evaluation can help address complementary questions about views and processes, such as “Why does an intervention work or not?”, “How does the intervention work?”, “What factors led some people to participate while others did not?”, and “What are the views of the users and providers of the intervention?”
Answering these complementary questions can provide a more complete and precise understanding of intervention complexity and can integrate evidence on effectiveness, intervention context, acceptability, and feasibility (Shaw et al., 2014). In addition, mixed methods research can help bridge the gap between researchers and practitioners by developing context-specific knowledge that can be more easily transferred to practice (Dattilio et al., 2010). For instance, by considering the unique complexity of each participant, mixed methods research can help practitioners understand why an intervention worked for some participants and not for others, thereby helping to assess the generalizability of results from the quantitative strand (Fàbregues et al., 2022).

Over the past two decades, there have been several conceptual and methodological developments in mixed methods research focusing on, among other topics, the rationale for using this approach (Bryman, 2006), types of research designs (Fetters, 2022), integration strategies (Guetterman et al., 2021), and quality assessment (Fàbregues & Molina-Azorín, 2017). Advances in mixed methods seen in primary empirical research can also be observed in mixed methods reviews (also called mixed studies reviews), in which qualitative, quantitative, and mixed methods studies are combined in a systematic literature review (Hong et al., 2020; Hong & Pluye, 2019). However, despite these constructive and fruitful developments, which have consolidated mixed methods as a distinctive methodology, fundamental methodological issues such as generalization have received little attention. This gap in the literature is surprising, given that generalization can improve the applicability of findings by helping researchers assess their relevance to other settings, populations, and contexts (Ferguson, 2004). This issue is particularly relevant in program evaluation, as reflected in a special issue of Evaluation Review entitled “External Validity and Generalizability in Program Evaluation,” published in 2024 (Besharov, 2024). Moreover, the program evaluation literature has emphasized the role of mixed methods in enhancing generalization, for instance, by making it possible to generalize qualitative findings to a larger population (Barnow et al., 2024; Pluye & Hong, 2014). Mixed methods can also enhance the transferability of evaluation findings to other settings by providing a better understanding of both the context in which the program was implemented and evaluated (i.e., the sending context) and the context in which the findings are intended to be applied (i.e., the receiving context).

The purpose of this paper is to provide a critical reflection on how the concept of generalization has been used in mixed methods research. First, we discuss the relevance of external validity and mixed methods research in impact evaluation. Second, we summarize how generalization has been conceptualized in mixed methods research. Third, we present the results of a literature review of generalization practices in mixed methods research. Finally, we discuss threats to generalizability in mixed methods research and strategies for enhancing it.

External Validity in Impact Evaluation

Shadish et al. (2002) define external validity as the degree to which the relationship between cause and effect in an experimental evaluation “holds across variations in people, settings, treatments, and measurement variables” (p. 38). Thus, when evaluating a program, external validity must be assessed to ensure that the effects of the program can be generalized beyond the specific conditions of the study (Fredericks et al., 2019). Traditionally, research on program effectiveness has prioritized internal validity over external validity, but several authors have emphasized the importance of external validity, particularly with respect to the relevance and applicability of impact evaluation findings (Cronbach, 1982; Leviton & Trujillo, 2017; Williams, 2020).

A substantial body of literature has argued that, in certain situations, an impact evaluation may involve a trade-off between internal and external validity, such that external validity decreases as internal validity increases, and vice versa (Cartwright, 2007; Leapley, 1987; Prowse & Camfield, 2013). Internal validity is critical for confidently establishing the causal relationship between the program and its outcomes, that is, for determining whether the program is effective. However, from the perspective of these authors, the control and artificiality required to increase internal validity (e.g., when conducting an RCT) may limit the extrapolation of findings to wider populations. According to Prowse and Camfield (2013), such control and artificiality “puts severe constraints on the assumptions a target population must meet to justify extrapolating a conclusion outwards from the treatment group” (p. 54). For instance, in the evaluation of a program using a teaching method to enhance science learning, the internal validity of the results could be improved by implementing the program in a controlled environment, with schools in the same district and students from similar socioeconomic backgrounds, and by controlling for confounding variables. However, this gain in internal validity would reduce external validity because of the program's limited geographic scope and the homogeneity of the participant sample. Conversely, if researchers attempt to increase the external validity of the findings, they reduce their control over confounding variables.
As argued by Leviton and Trujillo (2017), if impact evaluation findings show little or no evidence of external validity, researchers and other stakeholders will find it difficult to determine whether the causal relationships identified in experimental evaluations of programs can be generalized to other populations, which limits the potential to use these findings to develop evidence-based and evidence-informed programs.

Williams (2020) distinguishes between two types of external validity in impact evaluation. The first type, external validity for scale-up decisions, refers to the extent to which findings on the effects of a program in a particular study sample can be extrapolated to the larger target population. Two potential obstacles to achieving this type of external validity are the difficulty of constituting a study sample that is representative of the target population and the possibility that the program's effects differ when it is implemented on a larger scale. The second type, external validity for policy transportation, concerns whether the effects of the program will hold when it is applied to a population different from the original sample. Williams argues that both types of external validity involve the problem of determining how the contextual characteristics of a new implementation setting might interact with the program and affect the causal relationship identified in the original evaluation, which may limit the possibility of generalizing that relationship to the new setting. In other words, causal effects observed in one setting are unlikely to be replicated exactly across diverse settings (Leviton, 2015; Leviton & Trujillo, 2017).

Accounting for context variations in real-world settings is a key challenge when assessing the external validity of a program (Leviton, 2015, 2017; Leviton & Trujillo, 2017; Moore et al., 2015; Williams, 2020). Huebschmann et al. (2019) and Williams (2020) have described several contextual attributes of implementation settings that may interact not only with each other but also with the program. These include the following: the characteristics of the target population of the program and other stakeholders, the sociocultural and political characteristics of the setting, the time frame of the program, the existence of previously implemented programs, the human and economic resources available for implementation, the decisions about the treatments to be implemented, and the outcomes measured. External validity has often been viewed as a matter of selecting a representative sample of the broader target population in order to ensure the generalizability of the cause–effect relationship (Gertler et al., 2016). However, since populations are interactively embedded in these contextual attributes, assessing external validity cannot be merely a “problem of sampling or statistical adjustment” (Leviton & Trujillo, 2017, p. 439) but also requires ongoing monitoring of context. Since the number of combinations of context attributes is potentially limitless, Leviton and Trujillo (2017) argue that, “in the absence of formal sampling and effectiveness tests” (p. 441), external validity becomes an inductive process in which five principles outlined by Shadish et al. (2002) should be considered. 
These principles, which describe the instances in which researchers and users of research can generalize with greater confidence, include (1) surface similarity (i.e., assessing the similarities between the findings of a study and the broader context to which we are trying to generalize); (2) ruling out irrelevancies (i.e., identifying the attributes of people, settings, treatments, times, and outcomes that are not relevant because they do not alter a causal generalization); (3) making discriminations (i.e., identifying the attributes that constrain a generalization); (4) interpolation and extrapolation (i.e., making “interpolations to unsampled values within the range of the sampled instances” and exploring “extrapolations beyond the sampled range” [Shadish et al., 2002]); and (5) causal explanation (i.e., developing and testing explanatory models about the target to which we are trying to generalize). Applying these principles requires a thorough understanding of the context, which, in turn, argues for the use of mixed methods (Leviton, 2017).

In the literature on impact evaluation, mixed methods has been described as a useful approach for conducting well-contextualized evaluations of programs, one that can strengthen the external validity of findings and increase their relevance to policy (Bamberger, 2015; Bamberger et al., 2016; Burrows & Read, 2015; Huebschmann et al., 2019; Leviton, 2017; Onwuegbuzie & Hitchcock, 2017; White, 2008). Bamberger (2015) noted that the effects of a program and how it is implemented are shaped by contextual factors that should be assessed using a mixed methods approach. Leviton (2017) argued that a mixed methods approach can be key to understanding the influence of contextual attributes on the implementation of programs, thereby increasing the likelihood of obtaining evaluation findings that can be generalized. Building on these premises, Onwuegbuzie and Hitchcock (2017) developed a comprehensive meta-framework for conducting mixed methods impact evaluations. These authors point out that the field of impact evaluation has traditionally privileged quantitative methods while underutilizing qualitative and mixed methods approaches. Onwuegbuzie and Hitchcock (2017) identified six reasons for mixing quantitative and qualitative methods in impact evaluations, including generalization and transferability. They also asserted that mixed methods can help researchers present the evidence in a way that supports generalizing the findings to other contexts, and provide a detailed account of the findings that allows third parties to assess their relevance and applicability in other contexts. Besides highlighting the advantages of using mixed methods for generalization, Onwuegbuzie and Hitchcock (2017) also emphasized the need for impact evaluators to “determine the type and level of generalizability and transferability” (p. 61) of evaluation findings obtained from mixed methods impact evaluation studies.

Lastly, in process evaluation, mixed methods research can help identify and understand the mechanisms of impact of the intervention, that is, how an intervention works and what aspects of the intervention contribute to its effectiveness (Maher & Neale, 2019; O'Cathain, 2018). O'Cathain (2018) provides an example of an evaluation of a telehealth intervention in which a qualitative component, added to two RCTs, provided valuable insights into the mechanisms of impact of the intervention. In particular, interviews with staff and patients allowed the researchers to understand that the motivation of the staff delivering the intervention increased patient engagement with the intervention. Patients felt that the staff cared about their well-being, and this increased their willingness to take actions to improve their health. These findings may be particularly useful for practitioners and policy makers to understand aspects of intervention implementation associated with impact (i.e., mechanisms of impact) that they should consider when implementing similar interventions in other contexts.

External Validity, Transferability, and Generalizability in Mixed Methods Research

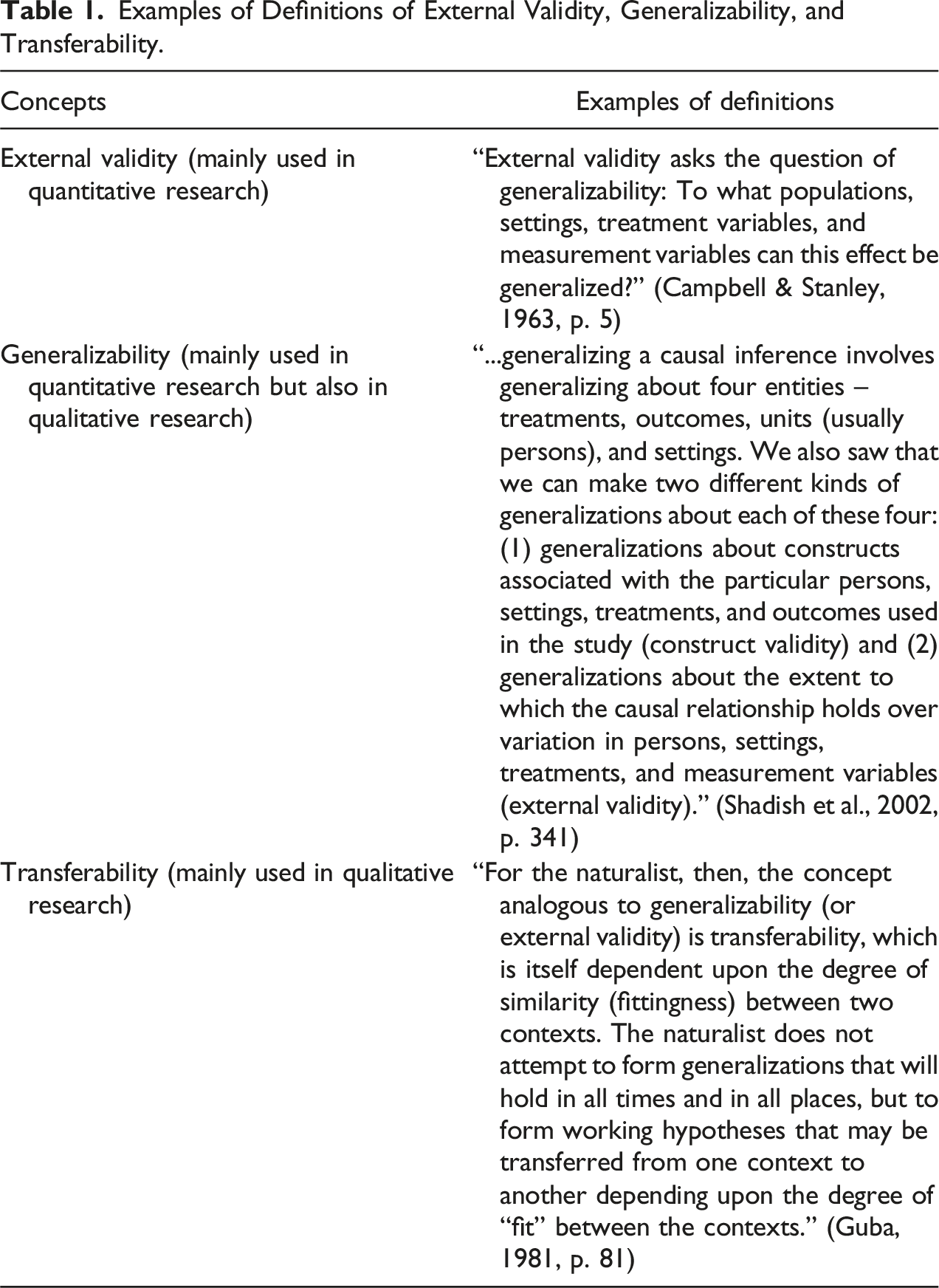

Examples of Definitions of External Validity, Generalizability, and Transferability.

In quantitative research, external validity refers to the extent to which quantitative findings can be generalized to broader contexts (Johnson & Christensen, 2019; Onwuegbuzie, 2003). In this kind of research, the sampling strategy largely determines the level of external validity achieved. Probability sampling, which allows researchers to obtain a representative sample of the population, is more likely to yield a high level of external validity, whereas a non-representative sample makes external validity much less likely.

In qualitative research, the term transferability is often used as an equivalent of the quantitative term external validity. Transferability refers to the extent to which qualitative findings can be extrapolated from case to case (Maxwell & Chmiel, 2014). To support judgments of transferability, researchers must provide a detailed and thick description of the context so that readers can judge whether the qualitative findings are applicable to other contexts or settings (Younas et al., 2023). Transferability is high when the sending and receiving contexts are similar and low when they are markedly different. While transferability is the most common approach to generalizability in qualitative research, some authors have suggested additional strategies for generalization in this type of research. These include maximizing variation in the qualitative sample to capture the range of variation within the phenomenon, which increases generalizability (Larsson, 2009); categorizing participants’ views and experiences within an overarching conceptual framework that can be generalized to a broader population (Lewis et al., 2014); and developing an understanding of causal processes that can later be applied to other cases (Maxwell & Chmiel, 2014).

In mixed methods research, external validity has rarely been discussed (Tashakkori et al., 2021; Younas & Durante, 2023). Although interest in the quality of mixed methods research has grown considerably over the past two decades, published frameworks for the critical appraisal of mixed methods research have rarely addressed the conceptualization, operationalization, and assessment of external validity (Fàbregues & Molina-Azorín, 2017; Heyvaert et al., 2013). This gap is concerning considering the potential of external validity to strengthen the quality of inferences in mixed methods research (Tashakkori & Teddlie, 2008). Furthermore, guidance on how to assess external validity is particularly needed because ensuring quality is more complex in mixed methods research than in monomethod research. Tashakkori and Teddlie (2008) argue that, as with other components of research quality, mixed methods researchers must consider how external validity manifests itself in all the components of the study (i.e., quantitative, qualitative, and mixed methods), which makes achieving this type of validity even more challenging. Onwuegbuzie and Johnson (2006) describe this condition as the challenge of legitimation in mixed methods research.

The first explicit reference to external validity in mixed methods research appears in the first edition of the Handbook of Mixed Methods in Social and Behavioral Research (Teddlie & Tashakkori, 2003). In this publication, Teddlie and Tashakkori introduced the concept of inference transferability, an umbrella term specific to mixed methods research that is analogous to external validity, construct validity, and generalizability in quantitative research and to transferability in qualitative research. Inference transferability is defined as “the degree to which research conclusions can be applied to other similar settings, people, time periods, contexts, and theoretical representations of the constructs” (Tashakkori et al., 2021, p. 297). This concept applies both to the mixed methods meta-inferences and to the inferences made within each component. According to Tashakkori et al. (2021), inference transferability is a key component of the quality control process in a mixed methods study, and it should be considered only after researchers have ensured a suitable degree of confidence in the robustness and credibility of the quantitative, qualitative, and mixed methods inferences. These authors also emphasize that inference transferability is an ongoing process that involves other perspectives, including those of the users of the research, who must evaluate whether the mixed methods results are applicable to their own real-world situations. While researchers are responsible for maximizing the transferability of findings, it is ultimately the users of the research who should evaluate the degree of transferability of such findings (Tashakkori et al., 2021). To enable such an evaluation, researchers should provide a detailed description of the study context and findings.
Heap and Waters (2019) made an important point about inference transferability, arguing that it is likely to be higher when quantitative inferences show a high degree of external validity and qualitative inferences show a substantial degree of transferability. When the degrees of external validity (quantitative component) and transferability (qualitative component) differ, however, researchers must carefully weigh the importance of each component when assessing the transferability of the overall mixed methods findings.

Onwuegbuzie and colleagues discussed the concept of generalization in several methodological works on sampling and analysis procedures in mixed methods research (Corrigan & Onwuegbuzie, 2020; Onwuegbuzie, 2003; Onwuegbuzie & Collins, 2014). Building on this earlier work, Corrigan and Onwuegbuzie (2020) delineated six types of generalization that can occur in any qualitative, quantitative, or mixed methods study: (1) external statistical generalization, (2) internal statistical generalization, (3) analytic generalization, (4) case-to-case transfer, (5) naturalistic generalization, and (6) moderatum generalization. In monomethod studies, researchers typically consider only one of these types of generalization when making inferences, whereas in mixed methods studies, multiple types of generalization may be used, which makes it more difficult to determine the generalizability of meta-inferences (Onwuegbuzie & Collins, 2014). For example, the quantitative and qualitative findings in a particular study may each support a different type of generalization (e.g., statistical generalization for quantitative findings and case-to-case transfer for qualitative findings), requiring researchers to make more complex generalizations. As Onwuegbuzie and Collins (2014) point out, a key challenge for researchers at this stage is to avoid interpretive inconsistency, that is, a mismatch between the sampling design and the type of generalization made. In a review of mixed methods sampling designs used in social and health sciences research, Collins et al. (2007, as cited in Onwuegbuzie & Collins, 2014) found that of 54 studies with a quantitative and/or qualitative component based on a sample of 30 or fewer participants, 53.7% reported meta-inferences based on incorrect statistical generalizations.

Younas and Durante (2023) provided the most recent contribution to the discussion of generalization in mixed methods research. In a review of the methodological literature on generalization, they proposed a framework for determining the selection of generalization practices in mixed methods studies. This framework is based on a combination of the following four elements: (1) the type of mixed methods design, (2) Shadish et al.’s (2002) five principles of logical generalization discussed above, (3) Firestone’s (1993) three arguments for generalizing from data, and (4) Onwuegbuzie et al.’s (2009) classification of types of generalization. In contrast to the two previous approaches to generalization in mixed methods research, Younas and Durante’s (2023) framework specifically links the principles of logical generalization and the types of generalization to the types of mixed methods designs. This approach is consistent with the contingent nature of generalization, as some types of generalization may apply to certain types of designs and yet be entirely irrelevant to others.

Review of Generalization Practices in Mixed Methods Research

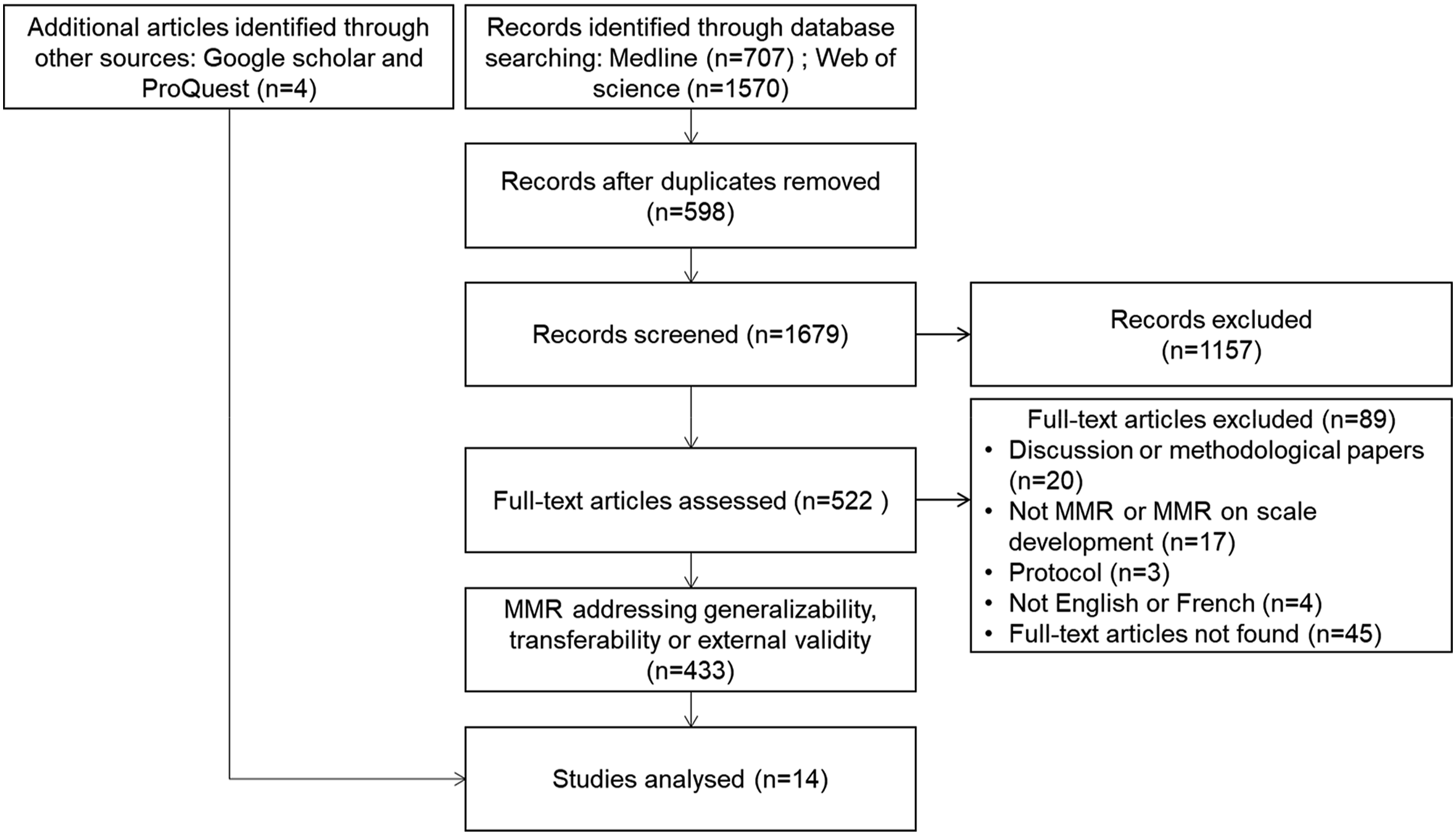

In this section, we present the results of a literature review on generalization practices in mixed methods research. We conducted an exploratory literature review to understand how generalizability is addressed in empirical mixed methods studies. To accomplish this, we searched two bibliographic databases on July 17, 2023: Medline (OVID) and Web of Science (Clarivate). The search strategy combined free-text terms related to the concepts of mixed methods and external validity: (mixed method* OR multi method* OR multimethod* or multiple method* OR mixed research OR multiple research method* OR mixed study OR mixed approach).ti,ab,kf AND (external valid* OR generali?a* OR transferab*).ti,ab,kf. All records identified in these databases were imported into Covidence, a software platform for managing systematic reviews. In addition, we searched ProQuest (ERIC and Social Science Premium Collection) and Google Scholar for studies that mentioned the concept of inference transferability and cited Tashakkori and Teddlie (2003), Teddlie and Tashakkori (2009), Tashakkori et al. (2021), or any other work in which these authors discussed this concept.
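To make the logic of this Boolean search concrete, the following Python sketch approximates how the title, abstract, and keyword text of a record (.ti,ab,kf) would be tested against the two concept blocks: OR within each block, AND between them. It is an illustration under stated assumptions, not a reproduction of the databases' matching engines: the function names are hypothetical, and translating truncation (*) and the optional-character wildcard (?) into regular expressions, including letting a space between words also match a hyphen, is our simplification.

```python
import re

# Concept blocks copied from the search strategy. "*" is read as unlimited
# truncation and "?" as an optional single character (s/z spelling variants).
MIXED_METHODS_TERMS = [
    "mixed method*", "multi method*", "multimethod*", "multiple method*",
    "mixed research", "multiple research method*", "mixed study",
    "mixed approach",
]
GENERALIZATION_TERMS = ["external valid*", "generali?a*", "transferab*"]


def term_to_regex(term: str) -> re.Pattern:
    """Translate one truncated search term into a case-insensitive regex.

    Hypothetical helper. Assumption (not documented database behavior):
    a space between words also matches a hyphen, so "mixed method*"
    matches "mixed-methods".
    """
    pieces = []
    for ch in term:
        if ch == "*":
            pieces.append(r"\w*")      # unlimited truncation
        elif ch == "?":
            pieces.append(r"\w?")      # zero or one character
        elif ch == " ":
            pieces.append(r"[\s-]+")   # word gap or hyphen
        else:
            pieces.append(re.escape(ch))
    return re.compile(r"\b" + "".join(pieces) + r"\b", re.IGNORECASE)


def matches_block(terms: list[str], text: str) -> bool:
    """OR within a concept block: any single term is enough."""
    return any(term_to_regex(t).search(text) for t in terms)


def passes_search(ti_ab_kf_text: str) -> bool:
    """AND between the two concept blocks, as in the search strategy."""
    return (matches_block(MIXED_METHODS_TERMS, ti_ab_kf_text)
            and matches_block(GENERALIZATION_TERMS, ti_ab_kf_text))


if __name__ == "__main__":
    # Invented example titles, used only to exercise the matching logic.
    records = [
        "A mixed-methods evaluation of a school program: transferability of findings",
        "Generalisation in multimethod research on energy use",
        "A randomized trial with generalizable outcomes",
    ]
    for record in records:
        print(passes_search(record), "|", record)
```

Under these assumptions, the first two example titles pass the search and the third does not, because it contains a generalization term but no mixed methods term. Note also that "generali?a*" matches "generalization", "generalisation", and "generalizability" but not "generalized", which follows directly from the query as written.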

The selection process consisted of two steps. First, we screened titles and abstracts to identify articles that presented an empirical mixed methods study and addressed external validity, transferability, or generalizability. Second, we read the full texts to identify how these concepts were addressed. To expedite selection, each record was screened by a single reviewer: the two authors divided the records between them and consulted each other as needed. We did not limit the selection to studies focusing on program evaluation, because few mixed methods studies in this field address generalizability. In particular, Barnow et al. (2024) found that mixed methods research is rarely used in impact evaluation and identified only two mixed methods studies that discussed external validity and generalization. However, we excluded mixed methods studies related to the development of measurement instruments (e.g., those referring to multitrait-multimethod analysis or generalizability theory), as well as studies limited to surveys with closed- and open-ended questions. Literature reviews, protocols, and methodological or discussion papers were not considered for inclusion, nor were studies written in languages other than English or French. Finally, among the mixed methods studies that addressed external validity, transferability, or generalizability, only those that discussed these concepts in some detail were retained for further analysis.

In the included studies, we examined how the authors discussed the types of generalization and inference transferability described in the mixed methods literature (Onwuegbuzie & Collins, 2014; Tashakkori & Teddlie, 2003). We extracted information on the research field; the mixed methods design used, classified according to the typology of Creswell and Plano Clark (2018) (e.g., convergent, explanatory sequential, and exploratory sequential designs); the methods used; and the justification and description of external validity, transferability, and generalizability.

A total of 1679 records were screened after removal of duplicates, and 14 papers were analyzed (Figure 1). Although we found additional mixed methods studies that mentioned the terms generalizability, transferability, or external validity, most of them (n = 423) provided little description of these concepts, so we excluded them from further analysis. In these studies, generalization was mainly mentioned as a study limitation (e.g., a small sample size limiting the generalizability of the results; Sin et al., 2022) or a strength (e.g., consistent results across different methods being more likely to be reliable and generalizable; Wood et al., 1999) (n = 190). Generalizability was also mentioned in the conclusion and future research sections (e.g., more studies being needed to improve the generalizability of findings; Jacoby et al., 2021) (n = 107). Other studies referred to generalization in the introduction to justify the topic or the use of mixed methods research, or in the objectives or results of the study (e.g., to develop a generalizable framework [Borek et al., 2019] or to explore the generalizability of the qualitative findings [Swindle et al., 2021]) (n = 121). Lastly, some studies discussed how the methods used could be applied in other studies (n = 5).

Flow Diagram.

Among the 14 included studies, 10 provided a discussion of generalizability, transferability, or external validity (Basurto et al., 2016; Brawner et al., 2012; Cleverley et al., 2017; Csutora et al., 2021; Hamm et al., 2019; Jeevan et al., 2019; Kohnke et al., 2017; Lerback et al., 2022; Roberts et al., 2020; Rosenberg et al., 2020), and four referred to inference transferability (Fox & Connolly, 2018; Huck-Fries et al., 2023; Roots & MacDonald, 2014; Tejay & Mohammed, 2023). Eleven studies were conducted in one of eight countries: Canada (n = 1), England (n = 1), Germany (n = 1), India (n = 1), Malaysia (n = 1), Mexico (n = 2), New Zealand (n = 1), and the USA (n = 3); the remaining three were conducted across several countries. The studies addressed a range of fields, including computer and information sciences (n = 3), education (n = 1), environment and energy (n = 5), and health (n = 5). All studies employed one of the core mixed methods designs: exploratory sequential design (n = 6), explanatory sequential or multistage design (n = 4), and convergent design (n = 4). The common characteristics of each design with respect to generalization are presented in the following paragraphs.

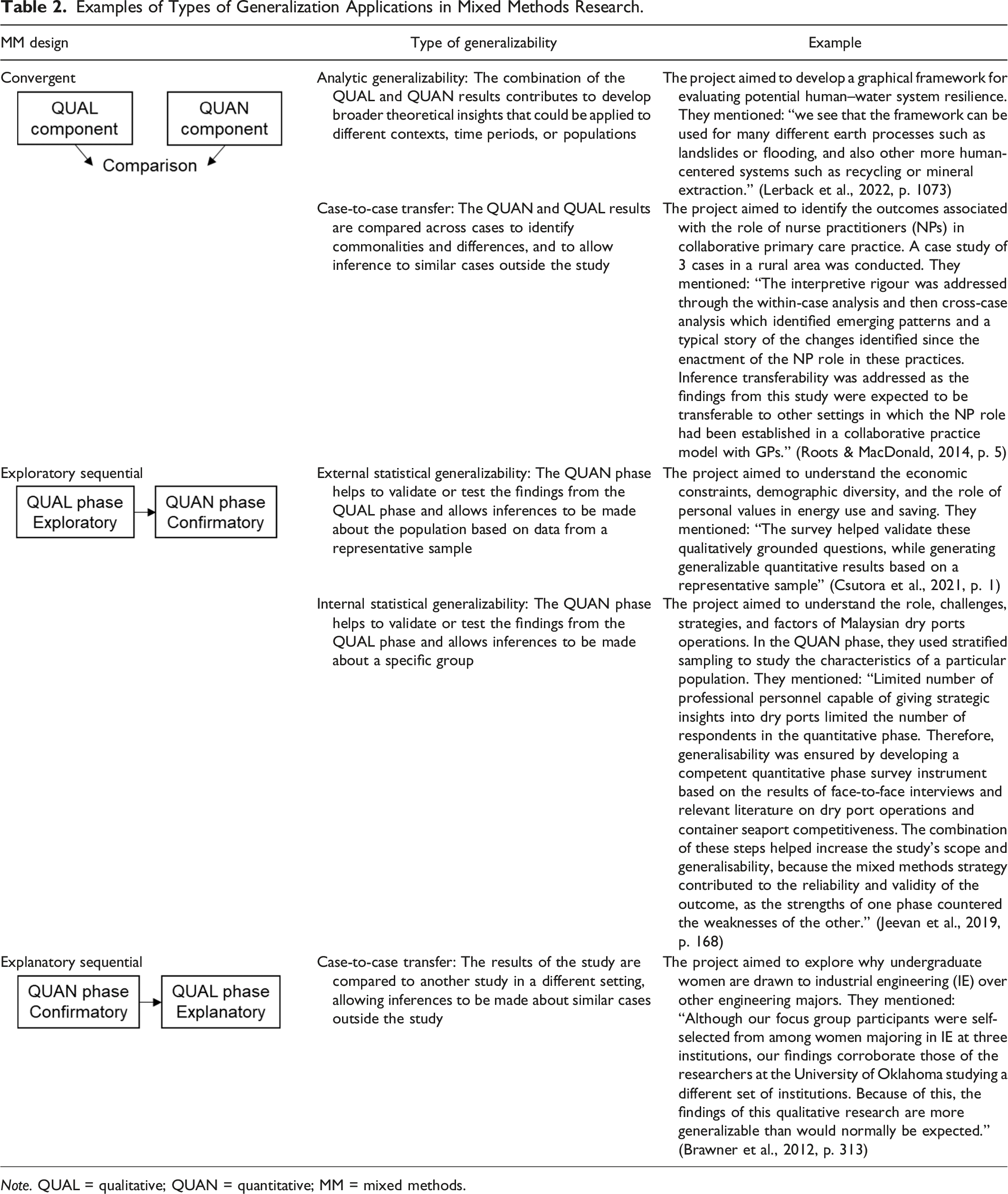

An exploratory sequential design was used in six studies (Csutora et al., 2021; Huck-Fries et al., 2023; Jeevan et al., 2019; Kohnke et al., 2017; Rosenberg et al., 2020; Tejay & Mohammed, 2023). In this design, a qualitative phase is conducted first to explore a phenomenon, followed by a quantitative phase to generalize the findings. All six studies started with a qualitative phase consisting of interviews or focus groups to identify factors or patterns or to develop a theoretical model and testable hypotheses. The quantitative phase consisted of a survey of a representative sample of participants to test or validate the hypotheses, model, theory, factors, or patterns identified in the qualitative phase. The exploratory sequential design lends itself well to generalization because the quantitative component usually aims to generalize the qualitative findings, which corresponds to external statistical generalization. In the included studies, generalization was supported by using a large, representative sample and by selecting participants from various fields and professions, either within one organization or across different organizations or countries.

Two studies used an explanatory sequential design (Fox & Connolly, 2018; Roberts et al., 2020) and two used multistage designs (Basurto et al., 2016; Brawner et al., 2012). Basurto et al. (2016) used a more complex design, starting with quantitative experiments, followed by a survey to verify the findings and a qualitative study to develop an explanatory mechanism. Brawner et al. (2012) performed a quantitative analysis of a large dataset, followed by focus groups with a subgroup and an analysis of websites. We classified these two multistage studies in the explanatory sequential design category because they started with a quantitative phase, and the subsequent phases were used to explain or expand the results of the first phase. These four studies mainly commented on issues related to the external validity, transferability, or generalizability of one of the phases, especially the qualitative phase, such as a small sample size or the omission of certain perspectives. However, they argued that these limitations were offset by the similarity of the results obtained in the qualitative and quantitative phases. They also compared their results with those of similar studies in different settings, which could be considered a form of case-to-case transfer.

Four studies used a convergent design: two aimed to develop a theoretical framework (Cleverley et al., 2017; Lerback et al., 2022), one evaluated the performance and use of a software application (Hamm et al., 2019), and one identified outcomes (Roots & MacDonald, 2014). In all of these studies, the quantitative and qualitative results complemented each other to provide a more complete picture of the phenomenon under study. These studies were primarily concerned with analytic generalizability and case-to-case transfer.

Examples of Types of Generalization Applications in Mixed Methods Research.

Note. QUAL = qualitative; QUAN = quantitative; MM = mixed methods.

Inference transferability did not differ across mixed methods designs. All four studies that explicitly mentioned inference transferability referred to ecological transferability (e.g., “Meta-inference can be transferred to other IT contexts, such as the private and public sector” (Huck-Fries et al., 2023, p. 6)). One study also addressed population transferability (“Inferences are pertinent to older citizens in 2 countries, and can be extended in further research” (Fox & Connolly, 2018, p. 1002)). These papers did not explain how the use of mixed methods facilitated transferability. Three other studies discussed inference transferability without explicitly naming it (Hamm et al., 2019; Kohnke et al., 2017; Lerback et al., 2022). All three referred to ecological transferability, and one also discussed other types of transferability. Hamm et al. (2019) provide a detailed discussion of generalizability and transferability, explaining how their findings can be applied outside the UK and Europe (ecological transferability) and to a sample population of older adults (population transferability), and how their project contributed to the advancement of methods, as well as to the interoperability and technical implementation of the application (operational transferability).

Several limitations of this review should be noted. First, to expedite the process, each paper was screened and analyzed by a single reviewer, with the two reviewers dividing the tasks between them. We consulted each other when necessary and agreed on the final list of papers to be included. Second, we did not appraise the quality of the included studies because the aim was to explore how generalization is addressed in empirical mixed methods research. Although all included studies had a section on generalizability, the level of detail and transparency in reporting this aspect differed considerably, and some studies provided no information on how generalization was or could be achieved. Third, we may have missed published mixed methods studies that addressed this topic because the search was limited to two bibliographic databases. Likewise, we did not have access to the full text of some articles (n = 45). Fourth, because we only included studies with a section or subsection specifically devoted to generalization, we may have missed empirical mixed methods studies that discussed generalization less explicitly. Nevertheless, the papers found allowed us to meet the objectives of this review. We identified a large number of mixed methods studies that mentioned external validity, transferability, or generalizability, but very few elaborated on the generalizability of their findings. The included studies covered different mixed methods designs and addressed several types of generalization.

Threats to Generalization and Mitigation Strategies in Mixed Methods Research

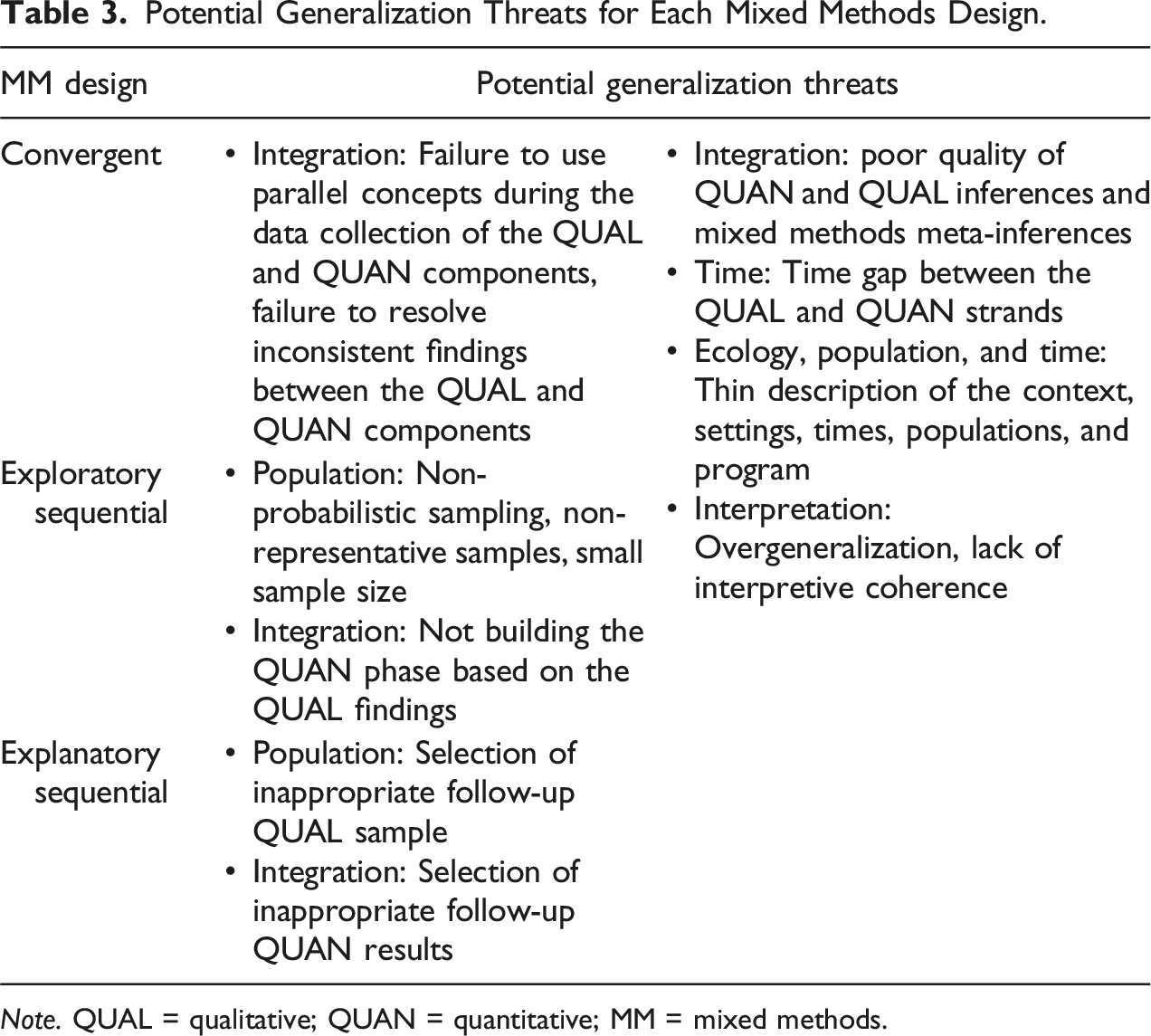

Potential Generalization Threats for Each Mixed Methods Design.

Note. QUAL = qualitative; QUAN = quantitative; MM = mixed methods.

Population Threats

Population threats are factors that affect the extent to which inferences drawn from a study can be applied to individuals or entities other than those in the study. In an exploratory sequential design, specific issues need to be taken into account if the aim of the quantitative phase is to generalize the findings of the qualitative phase, that is, to make inferences about external statistical generalization (see example in Table 2). In this case, non-probabilistic sampling should be avoided in the quantitative phase because it does not allow the selection of a representative sample of the target population. A small sample size can also limit generalizability: it reduces the statistical power to detect the true effects of a program and may not capture the diversity and variability present in the larger population, thus limiting the representativeness of the sample (Curry & Nunez-Smith, 2015). Therefore, in the quantitative phase of an exploratory sequential design, probabilistic sampling with a large sample size is preferred so that the sample represents the population to which the findings are intended to be generalized.
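To illustrate why sample size matters for external statistical generalization, the short sketch below computes the approximate 95% margin of error for a survey proportion and the sample size needed to reach a target precision. It is an illustration under stated assumptions, not a procedure used in the reviewed studies: it assumes simple random (probabilistic) sampling from a large population, the normal approximation, and the conservative value p = 0.5. The function names are hypothetical. Note that a larger sample only improves precision; it cannot correct the bias introduced by non-probabilistic sampling.

```python
import math


def margin_of_error(n: int, p: float = 0.5, z: float = 1.96) -> float:
    """Half-width of an approximate 95% confidence interval for a proportion.

    Assumes simple random sampling from a large population and the
    normal approximation; p = 0.5 gives the most conservative (widest) value.
    """
    if n <= 0:
        raise ValueError("sample size must be positive")
    return z * math.sqrt(p * (1 - p) / n)


def required_sample_size(e: float, p: float = 0.5, z: float = 1.96) -> int:
    """Smallest n whose margin of error does not exceed e (same assumptions)."""
    if e <= 0:
        raise ValueError("target margin of error must be positive")
    return math.ceil(z**2 * p * (1 - p) / e**2)


# Precision improves with the square root of n, so quadrupling n halves the margin.
for n in (30, 100, 400, 1000):
    print(f"n = {n:5d}: margin of error = ±{margin_of_error(n):.1%}")

print("n needed for ±5 points:", required_sample_size(0.05))
```

Under these assumptions, a survey of 100 respondents yields a margin of about ±9.8 percentage points, whereas roughly 385 respondents are needed to reach ±5 points.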

In an explanatory sequential design, other population threats can affect the validity of the study and, thus, its generalizability (Ivankova, 2013). For example, threats may arise from selecting inappropriate individuals or sample sizes for the quantitative and qualitative phases, or from selecting the wrong individuals for follow-up, which can limit the inferences that can be drawn from the findings. Careful consideration should therefore be given to which participants are sampled for the qualitative follow-up of the quantitative results.

Temporal Threats

Temporal threats are factors that can affect the extent to which research findings can be applied across different time periods or to contexts that change over time. In some cases, the results may be heavily influenced by the time at which the data were collected, making them less applicable to other periods (e.g., some results from studies conducted during the COVID-19 pandemic may not be relevant today). Moreover, Plano Clark and Creswell (2008, p. 349) discuss generalization decay, highlighting that generalizations that are valid at one point in time may not hold at another and may differ across historical contexts. Thus, a detailed description of the time period, when appropriate, is essential to help readers account for temporal changes in the context, such as conditions, practices, or societal norms, that may affect the generalizability and applicability of the findings.

Another potential temporal threat relates to the time elapsed between the implementation of the quantitative and qualitative components of a mixed methods study. When the two components are not carried out concurrently, the setting and population may change during the time lag between them. Such changes could affect the comparability of the quantitative and qualitative findings and the relevance of their integration. If there is a substantial delay between components, it is important to systematically document and report any changes in the context and population over the course of the study that may affect the results and their potential for generalization.

Ecological Threats

Ecological threats are factors that can affect the extent to which findings can be applied across different settings and contexts. In mixed methods research, qualitative methods play a crucial role in providing a deep understanding of context. This is particularly valuable in program evaluation because RCTs tend to prioritize standardization, which can lead them to neglect contextual factors that influence the implementation and effectiveness of a program (O'Cathain, 2018). Integrating qualitative methods helps to fill this gap by uncovering the rich contextual details that RCTs may overlook, thereby enriching the overall understanding and applicability of research findings. One threat to ecological generalizability is the tendency to provide only a superficial description of the program and the context in which the study takes place, which limits understanding of the phenomenon being studied. As presented in the previous section, some studies included in the review referred to ecological transferability. Yet several of these studies did not explain how and why their results could be applicable to other contexts, raising questions about their relevance.

As with population and temporal threats, it is key to provide a detailed description of the characteristics of the setting and program. Thick description offers a more comprehensive portrayal of the context, providing the rich, nuanced, and detailed insights that are essential for achieving naturalistic generalization (Hitchcock & Onwuegbuzie, 2022). This helps users judge the value and applicability of the program in their own settings. Several reporting guidelines can help ensure that all important information from studies is included in publications (Simera et al., 2010). For example, TIDieR (Template for Intervention Description and Replication) is a checklist designed to ensure that the interventions studied are described in sufficient detail to allow their replication (Hoffmann et al., 2014).

Integration Threats

Integration threats are factors that affect the effective integration of the qualitative and quantitative methods. Integration is a fundamental feature of mixed methods research that has been defined as the “optimal mixing, combining, blending, amalgamating, incorporating, joining, linking, merging, consolidating, or unifying of research approaches, methodologies, philosophies, methods, techniques, concepts, language, modes, disciplines, fields, and/or teams within a single study” (Hitchcock & Onwuegbuzie, 2022, p. 3). This definition underscores that integration can serve different purposes and operate at different levels. Effective integration and a clear description of the integration process are essential to demonstrate the enhanced insight and more comprehensive understanding that justify the use of mixed methods.

Several integration threats can be identified based on the study design. In convergent designs, failing to use parallel concepts or constructs when collecting the qualitative and quantitative data can complicate the integration of findings, especially if the goal is to assess convergence and divergence between the two components (Creswell & Plano Clark, 2018). The inability to resolve inconsistent findings between the quantitative and qualitative components is another threat in convergent designs (Creswell & Plano Clark, 2018). This threat can be addressed using different strategies such as verification, reconciliation, and initiation (Pluye & Hong, 2023). Exploring divergences can lead to the discovery of unanticipated insights that contribute to a more complete and deeper understanding of complex phenomena (Pluye & Hong, 2023). In exploratory sequential designs, failing to build the quantitative phase on the qualitative findings is a threat that can diminish the quality of integration (Creswell & Plano Clark, 2018). In explanatory sequential designs, using weak or unimportant quantitative results to inform the qualitative follow-up, reversing the order in which results are interpreted, and using inappropriate integration strategies can lead to inconsistent and incorrect conclusions (Ivankova, 2013). It is thus important to carefully identify the results that will inform the subsequent phase and to describe clearly how integration will take place.

Other integration threats relate to the quality of the quantitative and qualitative data and results, and to the ability of researchers to draw accurate and meaningful inferences and meta-inferences (Hitchcock & Onwuegbuzie, 2022). Meta-inferences that do not effectively integrate qualitative and quantitative data may have limited applicability beyond the specific conditions and methods used in the study. As noted by Tashakkori et al. (2021), inference transferability can only be claimed once the robustness and credibility of the quantitative, qualitative, and mixed methods inferences have been established. Hence, it is essential that all components of a mixed methods study adhere to the quality criteria of their respective traditions (Fàbregues & Molina-Azorín, 2017). Appropriate validation strategies specific to each mixed methods design should also be used. For example, in explanatory sequential designs, it is suggested that researchers apply “a systematic procedure for selecting participants for qualitative follow-up, elaborating on unexpected quantitative results, and observing interaction between qualitative and quantitative study strands” (Ivankova, 2013, pp. 41–42).

Another strategy for improving generalizability, suggested by Younas and Durante (2023), is to ensure that integration occurs at different levels of a mixed methods study. For example, Fetters and Molina-Azorín (2017) describe 15 dimensions in which integration can take place, such as the philosophical, theoretical, team, interpretation, research design, and research integrity dimensions. According to Younas and Durante (2023), applying integration across several of these levels or dimensions can enhance the credibility and generalizability of a mixed methods study.

Interpretive Threats

In mixed methods research, there is a risk of overgeneralizing from observed data, which can significantly affect the quality of meta-inferences (Onwuegbuzie et al., 2011). This can occur when findings from the qualitative and quantitative strands are extrapolated too broadly, leading to conclusions that may not accurately reflect the complexity or nuances of the phenomenon being studied. It can also result from the misapplication of logical generalization and a lack of critical thinking about generalization issues (Nastasi & Hitchcock, 2015). Moreover, as previously highlighted, Onwuegbuzie and Collins (2014) noted the possibility of a lack of interpretive consistency between the sampling design and the type of generalization made, such as inferring external statistical generalizability from a small sample. Mixed methods researchers should be cautious about the arguments they present and avoid mixing incompatible appeals (Plano Clark & Creswell, 2008).

To avoid a lack of interpretive consistency, Onwuegbuzie and Collins (2014) suggest that researchers plan for the expected generalizations from the beginning of the study. For example, if a project aims to make inferences about external statistical generalization, the protocol should include an appropriate probabilistic sampling strategy to gather a large, representative sample of participants. Conversely, if the inference concerns analytic generalization, researchers should plan for thorough immersion in the data to conduct an insightful analysis.

Younas and Durante (2023, p. 186) suggest “generating strong and plausible inferences and meta-inferences” as a strategy to enhance generalization in mixed methods research. These inferences should be consistent with the study aim, research questions, and findings (Fàbregues & Molina-Azorín, 2017). In addition, Teddlie and Tashakkori (2009, p. 298) specified that the inferences made on the basis of the results should be “consistent across variation in persons, settings, treatment variables, and measurement variables.”

Another strategy to help with the interpretation of the findings of a study and improve their generalizability is to involve stakeholders such as patients, families, clinicians, and decision-makers. Involving stakeholders in the research process can help improve generalization in several ways, including helping to shape relevant research questions, ensuring that recruited participants are representative of the target population, helping to interpret findings, contextualizing evidence, and providing insight into how study findings might be applied to different settings or populations (Esmail et al., 2015; O'Cathain, 2018).

Conclusion

In this paper, we have argued that the external validity, transferability, and generalizability of program evaluation findings are essential to enhancing their relevance and applicability in real-world settings. However, assessing these properties is challenging because contextual factors make it difficult to replicate, in other contexts, the cause-and-effect relationships identified in a program evaluation. In light of this challenge, mixed methods research can be particularly helpful in providing a comprehensive account of contexts, thus helping researchers and decision-makers assess the potential generalizability of findings. However, the methodological literature on mixed methods has provided limited discussion of external validity, transferability, and generalizability, with the work of Onwuegbuzie and colleagues, Tashakkori and Teddlie, and Younas and Durante being notable exceptions. This limited engagement is also evident in the empirical literature, as revealed by our review of generalization practices in mixed methods studies. We identified a large number of mixed methods studies that explicitly mentioned external validity, transferability, or generalizability, but only 10 discussed these concepts, which is a small number given the exponential growth of mixed methods research across disciplines. This omission may be due to the lack of methodological guidance in the mixed methods literature, but also to threats to achieving generalization and to other factors such as word limits in journals. Knowledge of these threats and the use of strategies to mitigate them, such as those proposed in the final part of this paper, are fundamental to improving the generalizability of findings from mixed methods evaluations and thus their potential use in practice. At a minimum, a thick description of the methods, context, population, time period, and program is needed to allow users to judge the applicability of the findings. 
It is hoped that this paper will increase awareness of the importance of generalization in mixed methods research and trigger fruitful discussion and future work toward improving the quality of mixed methods research.

Acknowledgments

We thank Dr. Burt S. Barnow for his constructive comments on the preliminary results of the review. Also, we would like to thank Dr. Anne Revillard for editing this special series on external validity. QNH holds a Junior 1 Research Scholar Award from the Fonds de recherche du Québec – Santé (FRQS).

Author Contributions

Both authors contributed equally to this paper.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.