Abstract

Background:

Many place-based randomized trials and quasi-experiments use a pair of cross-section surveys, rather than panel surveys, to estimate the average treatment effect of an intervention. In these studies, a random sample of individuals in each geographic cluster is selected for a baseline (preintervention) survey, and an independent random sample is selected for an endline (postintervention) survey.

Objective:

This design raises a question: Given a fixed budget, how should a researcher allocate resources between the baseline and endline surveys to maximize the precision of the estimated average treatment effect?

Results:

We formalize this allocation problem and show that although the optimal share of interviews allocated to the baseline survey is always less than one-half, it is an increasing function of the total number of interviews per cluster, the cluster-level correlation between the baseline measure and the endline outcome, and the intracluster correlation coefficient. An example using multicountry survey data from Africa illustrates how the optimal allocation formulas can be combined with data to inform decisions at the planning stage. Another example uses data from a digital political advertising experiment in Texas to explore how precision would have varied with alternative allocations.

Surveys are widely used to measure outcomes in randomized control trials (RCTs) and quasi-experiments. Although only endline (posttreatment) outcome data are required for the estimation of treatment effects in RCTs, baseline (pretreatment) survey data may be helpful for improving statistical precision and power. In panel surveys, a common set of respondents is tracked over time from baseline to endline, allowing researchers to assess how the trajectories of individual subjects’ outcomes in the treatment group compare with those of the control group. Optimizing the design of panel surveys for efficient estimation of average treatment effects (ATEs) has attracted increasing scholarly attention (McKenzie, 2012).

As Gail, Mark, Carroll, Green, and Pee (1996) discuss, panel surveys have important strengths and are often desirable for statistical precision, but they can also have important drawbacks in some contexts. Maintaining contact with baseline respondents may be costly or difficult, especially when tracking subjects who frequently change address or phone number (Parker & Teruel, 2005). A further concern is that the baseline interview may prime subjects in ways that alter their reaction to the treatment, distort their posttreatment survey responses, or cause nonresponse rates in the endline survey to differ between treatment and control groups (Flay & Collins, 2005; Solomon, 1949).

When treatments are administered to a set of geographic clusters (Boruch, 2005; Gail, Mark, Carroll, Green, & Pee, 1996), an alternative measurement design is to interview a random sample of individuals within each cluster at baseline and another random sample at endline. When researchers gather survey data using this repeated cross-section design with clusters of equal size, the ATE of the intervention may be estimated by comparing the average outcomes of treatment and control group clusters in the endline survey, adjusting for preexisting differences in the baseline survey.
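As an illustration of the adjustment described above, the following minimal sketch assumes the adjustment is done by ordinary least squares on cluster-level means: the endline cluster mean is regressed on an intercept, a treatment indicator, and the baseline cluster mean, and the coefficient on the treatment indicator is taken as the estimate. The function names and the toy data are hypothetical and are not drawn from any of the studies discussed here.

```python
from statistics import mean


def unadjusted_ate(endline_means, treated):
    """Difference in mean endline outcomes between treated and control clusters."""
    treat = [y for y, t in zip(endline_means, treated) if t == 1]
    control = [y for y, t in zip(endline_means, treated) if t == 0]
    return mean(treat) - mean(control)


def adjusted_ate(endline_means, baseline_means, treated):
    """Coefficient on the treatment indicator in an OLS regression of endline
    cluster means on an intercept, the indicator, and baseline cluster means."""
    y_bar, x_bar, t_bar = mean(endline_means), mean(baseline_means), mean(treated)
    # Centering every variable absorbs the intercept.
    y = [v - y_bar for v in endline_means]
    x = [v - x_bar for v in baseline_means]
    t = [v - t_bar for v in treated]
    s_tt = sum(a * a for a in t)
    s_xx = sum(a * a for a in x)
    s_tx = sum(a * b for a, b in zip(t, x))
    s_ty = sum(a * b for a, b in zip(t, y))
    s_xy = sum(a * b for a, b in zip(x, y))
    # Solve the 2x2 normal equations for the treatment coefficient.
    return (s_xx * s_ty - s_tx * s_xy) / (s_tt * s_xx - s_tx ** 2)


# Hypothetical toy data: six clusters, true effect of 3 and baseline slope of 0.5.
treated = [1, 0, 1, 0, 1, 0]
baseline = [2.0, 2.5, 3.0, 3.5, 4.0, 4.6]
endline = [2 + 3 * t + 0.5 * x for t, x in zip(treated, baseline)]

print(unadjusted_ate(endline, treated))
print(adjusted_ate(endline, baseline, treated))
```

In this noiseless toy example the adjusted estimate recovers the effect of 3, while the unadjusted difference in means is pulled away from it by the chance baseline imbalance between the two groups.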

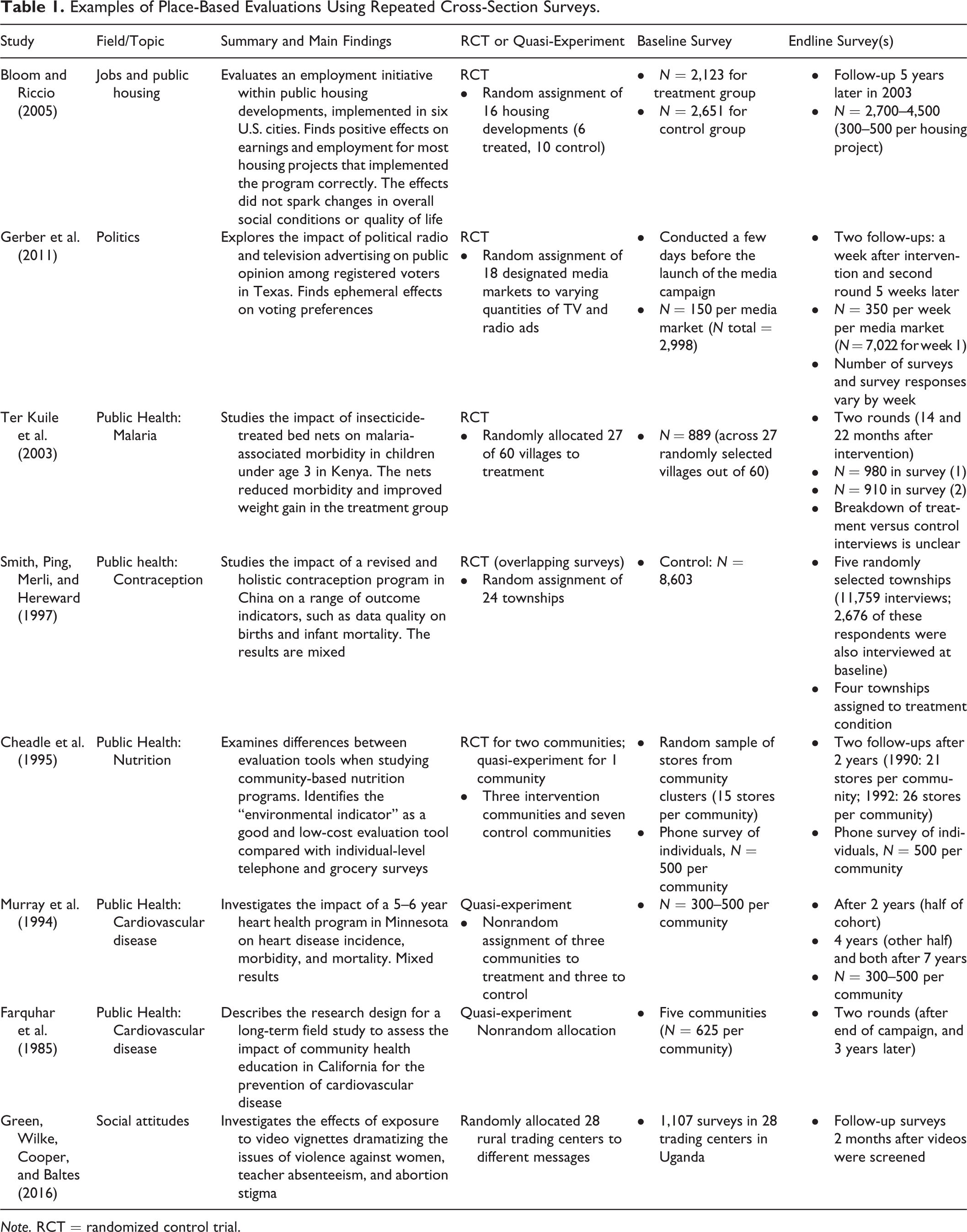

A wide array of applications have used this design. Table 1 presents illustrative examples of repeated cross-section designs from a variety of substantive domains. For example, Ter Kuile et al. (2003) assessed the effects of bed nets on malaria among young children by randomly assigning 60 Kenyan villages to treatment and control. Random samples of children in each village were given medical exams at baseline, and new random samples were examined at endline. Another example is Gerber, Gimpel, Green, and Shaw (2011), which assessed the persuasive effects of political advertisements across 18 television markets by conducting a baseline survey within each market before the advertising campaign and drawing new samples within each market for the endline surveys. Indeed, the use of this design is common among experiments that assess the persuasive effects of political advertising, where automated phone surveys are conducted with distinct random samples of registered voters during baseline and endline periods. These automated surveys are directed at landline phone numbers associated with a particular address rather than a specific person, which makes it impractical to conduct panel surveys that track the same respondents over time. One of the empirical applications described below (Turitto, Green, Stobie, & Tranter, 2014) uses this design to assess the effects of digital advertising on behalf of a candidate for lieutenant governor of Texas. Although such studies are common, political campaigns rarely make the results public.

Table 1. Examples of Place-Based Evaluations Using Repeated Cross-Section Surveys.

Note. RCT = randomized control trial.

When using the repeated cross-section design to estimate the ATE of an intervention, a resource allocation question arises: In order to maximize the precision of the estimated ATE, how much of the survey budget should be allocated to the baseline survey as opposed to the endline survey? To our knowledge, none of the studies listed in Table 1 discuss this allocation problem.

This article begins by formalizing the allocation problem in a balanced experimental design (where equal numbers of clusters are assigned to treatment and control) and deriving a result that expresses the optimal allocation as a function of the budgeted number of survey interviews per cluster, the cluster-level correlation between the baseline measure and the endline outcome, and the intracluster correlation coefficient (ICC). We then show how insights from the formal analysis can be applied in practice, using data from the Afrobarometer surveys (Afrobarometer, 2009, 2015) for an illustrative example. Next, we discuss survey allocation in an imbalanced design, where the expense associated with administering treatment leads researchers to assign more clusters to control than to treatment. In the concluding section, we summarize the main lessons and discuss possible extensions to address a wider range of design considerations.

Model and Notation

To keep the allocation problem tractable, we will make a number of simplifying assumptions. First, suppose that we are planning an experiment or quasi-experiment with J clusters and that we are willing to assume the clusters are randomly assigned to treatment or control—either because the study is in fact a cluster-randomized experiment or because we believe the treated and untreated clusters are similar enough that modeling treatment as cluster randomized is reasonable. (In many nonrandomized studies, this assumption is not reasonable, and our analysis would need to be extended to consider possible roles for baseline covariates in reducing bias.)

Assume that baseline and endline interviews are equally costly and that our survey budget allows a total of S interviews. One option is to allocate the entire budget to the endline survey, since treatment effects can be estimated without baseline data. Can precision be improved by allocating some interviews to a baseline survey and using the baseline data for blocking or covariate adjustment? If so, how many baseline and endline interviews should be conducted?

For now, we assume a balanced design in which

Our analysis assumes that the J clusters were randomly selected from a much larger superpopulation and that the goal is to estimate an ATE (defined below) in the superpopulation. In practice, many studies use clusters that are not randomly drawn from any superpopulation. Some researchers therefore prefer a “finite population” framework in which statistical inferences are limited to the actual clusters in the study. Others defend the superpopulation framework on the grounds that it is useful to make inferences about “a hypothetical infinite population, of which the actual data are regarded as constituting a random sample” (Fisher, 1922, p. 311). However, the two frameworks tend to yield similar or even identical results, and the superpopulation framework often makes the mathematics easier. For a helpful discussion, see Reichardt and Gollob (1999).

Suppose each cluster j has a population of

In each cluster j, the endline survey collects outcome data from a random sample

If

If

The allocation problem is to choose

To simplify the derivations and formulas, we will analyze the variances of

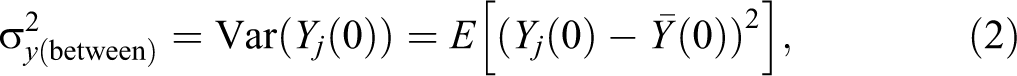

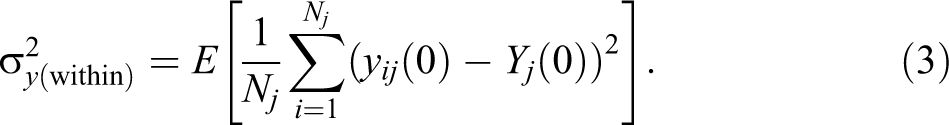

We now define several quantities that affect the variance of the estimated treatment effect. The between-cluster variance of the potential outcomes is the variance of

where

Define the covariate’s between-cluster variance

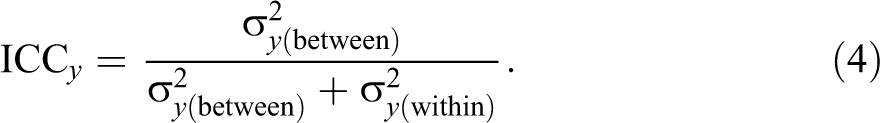

The ICC of the potential outcomes is given by:

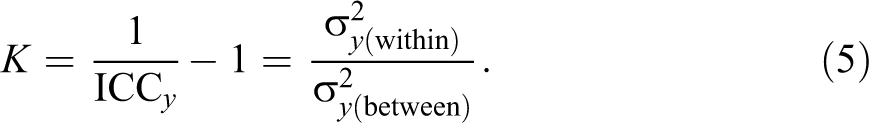

We define

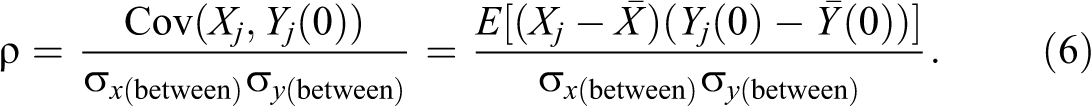

The between-cluster correlation between the covariate and each potential outcome is the correlation between

Equivalently, ρ is the square root of the R² that would be obtained if we could run a regression of

Results for Balanced Designs

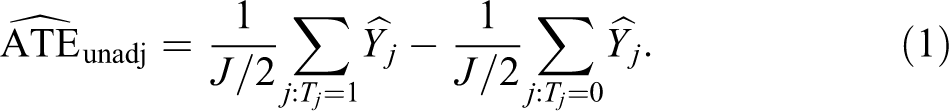

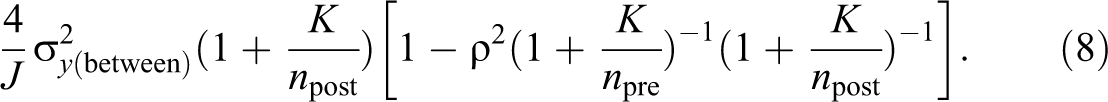

The Appendix shows that the variance of

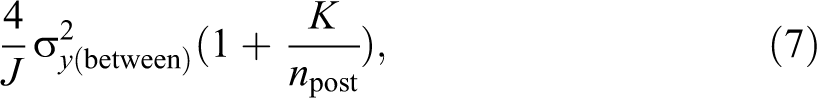

while, for large enough J, the variance of

The factor

The approximation to the variance of

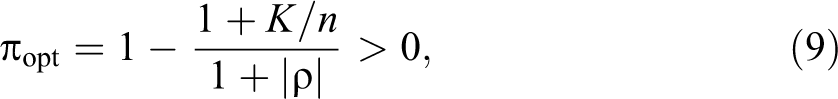

The optimal allocation of interviews between the baseline and endline surveys is derived in the Appendix. If

and, for large enough J, allocating

To interpret formula (9) and its requirement that

Related to the previous point, the condition

For any given values of ρ and K, as n goes to infinity,

Example Using Afrobarometer Data

When deciding how to allocate a survey budget between baseline and endline interviews, one does not know the values of ρ and the ICC, but it may be possible to form educated guesses using external data. This example illustrates the types of calculations involved, using data from the Afrobarometer, an ongoing series of cross-section public opinion surveys on democracy, governance, economic conditions, and related issues in African countries (Afrobarometer, 2009, 2015).

The first round (wave) of Afrobarometer surveys was conducted in 12 countries from 1999 to 2001. More recent rounds have included over 35 countries, with representative samples of 1,200 or 2,400 noninstitutionalized adult citizens in each country. The Afrobarometer surveys are useful for illustrative purposes given the large number of randomized trials conducted in Africa that use surveys to measure outcomes, the large number of respondents in each country at each point in time, and the wide array of outcomes measured (which allows us to consider outcomes with different ICCs and different values of ρ).

In order to simulate the country-level assignment typical of many quasi-experiments that estimate the effects of national policies on outcomes (e.g., Dorn, Fischer, Kirchgässner, & Sousa-Poza, 2007; Welsch, 2007), we use data from the 20 countries that were included in both the fourth (March 2008 to June 2009) and the fifth (October 2011 to September 2013) rounds of Afrobarometer surveys.

We focus on two outcome variables:

Economic optimism: “Looking ahead, do you expect the following to be better or worse: Economic conditions in this country in 12 months’ time?” (coded on a scale of 1 = “much worse” to 5 = “much better”).

Inclination to protest: “Here is a list of actions that people sometimes take as citizens. For each of these, please tell me whether you, personally, have done any of these things during the past year. If not, would you do this if you had the chance: Attended a demonstration or protest march?” (coded on a scale of 0 = “no, would never do this” to 4 = “yes, often”).

Consider the problem of allocating a survey budget in a cluster-randomized experiment or quasi-experiment where the main outcomes of interest resemble the economic optimism and protest inclination variables. Suppose it has already been decided that the experiment will include 20 clusters, with 10 clusters assigned to treatment and 10 to control, and the budget allows a total of n interviews per cluster. For illustrative purposes, we will show calculations for both

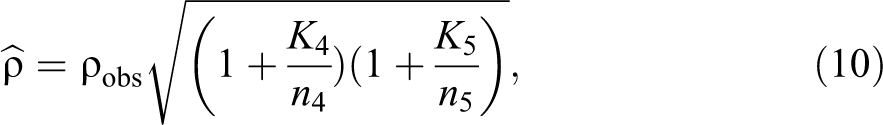

To apply formula (9), we have the number of interviews per cluster n, but we need to estimate

Here, we use the analysis of variance (ANOVA) estimator of ICC (Donner, 1986, p. 68; Ridout, Demétrio, & Firth, 1999, p. 138). The estimated ICCs for economic optimism are 0.180 and 0.231 in the fourth and fifth rounds of the survey, while for inclination to protest, the corresponding estimates are 0.0425 and 0.0458. These translate into estimates for K of 4.56 or 3.33 (economic optimism) and 22.5 or 20.8 (inclination to protest).
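The calculation in the preceding paragraph can be sketched as follows. The first function is the standard one-way ANOVA estimator of the ICC for clusters of possibly unequal size. The second converts an ICC into K using K = (1 − ICC)/ICC, a relationship inferred here from the fact that it reproduces the reported values (for example, 0.180 gives 4.56); it should be treated as an assumption. The function names and the toy data are hypothetical.

```python
from statistics import mean


def anova_icc(clusters):
    """One-way ANOVA estimator of the ICC.

    `clusters` is a list of lists, one list of individual responses per cluster.
    """
    k = len(clusters)
    sizes = [len(c) for c in clusters]
    total_n = sum(sizes)
    grand_mean = sum(sum(c) for c in clusters) / total_n
    cluster_means = [mean(c) for c in clusters]
    ss_between = sum(n * (m - grand_mean) ** 2 for n, m in zip(sizes, cluster_means))
    ss_within = sum((y - m) ** 2 for c, m in zip(clusters, cluster_means) for y in c)
    ms_between = ss_between / (k - 1)
    ms_within = ss_within / (total_n - k)
    # Adjusted average cluster size; equals the common size when clusters are equal.
    n0 = (total_n - sum(n * n for n in sizes) / total_n) / (k - 1)
    return (ms_between - ms_within) / (ms_between + (n0 - 1) * ms_within)


def k_from_icc(icc):
    """Assumed relationship K = (1 - ICC) / ICC, inferred from the reported values."""
    return (1 - icc) / icc


# Hypothetical toy data: three clusters of three respondents each.
toy = [[1, 2, 3], [4, 5, 6], [7, 8, 9]]
print(anova_icc(toy))

# ICC estimates reported in the text for the two outcomes and two survey rounds.
for icc in (0.180, 0.231, 0.0425, 0.0458):
    print(icc, round(k_from_icc(icc), 2))
```

A smaller ICC implies a larger K, which is why the protest inclination variable, with ICCs near 0.04, has values of K roughly five times those for economic optimism.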

The simplest way to estimate ρ is to just use the observed correlation between the fourth- and fifth-round country-level means of the relevant variable. These correlations are 0.578 for economic optimism and 0.681 for inclination to protest. However, ρ in formula (9) is the correlation between the cluster-level population means of the covariate and outcome in the absence of treatment, while the observed correlation

where

The boundary condition

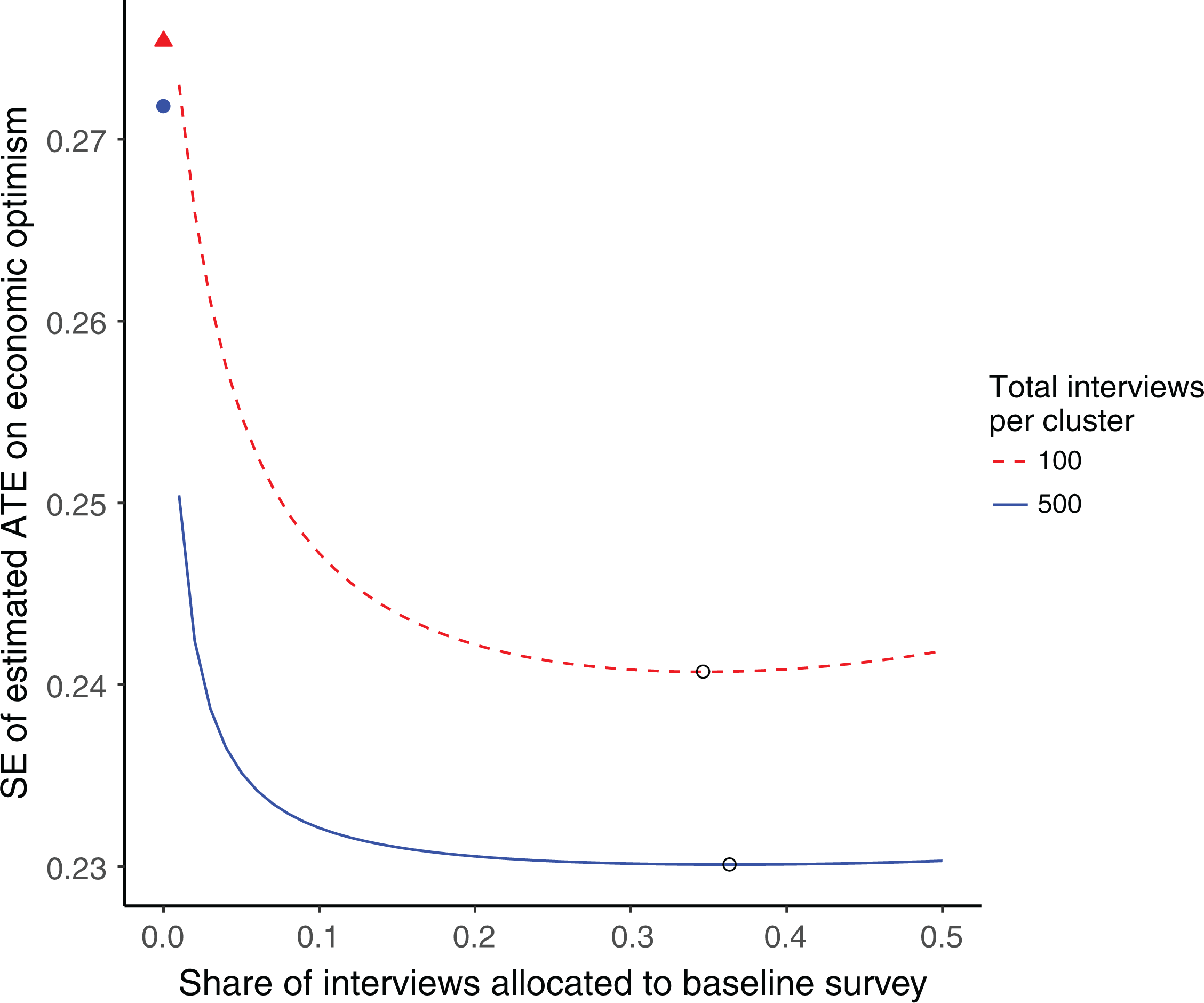

Figure 1 shows how the proportion of baseline interviews π affects the standard error (SE) of the estimated ATE on economic optimism:

Figure 1. Survey allocation and precision when the outcome variable is economic optimism. Near the top left corner, the filled triangle (for n = 100 interviews per cluster) and circle (for n = 500) show the standard error (SE) of the unadjusted estimate of average treatment effect when all interviews are allocated to the endline survey. The curves plot the SE of the regression-adjusted estimate against the share of interviews allocated to the baseline survey. The open circle on each curve marks the optimal baseline share. See text for details.

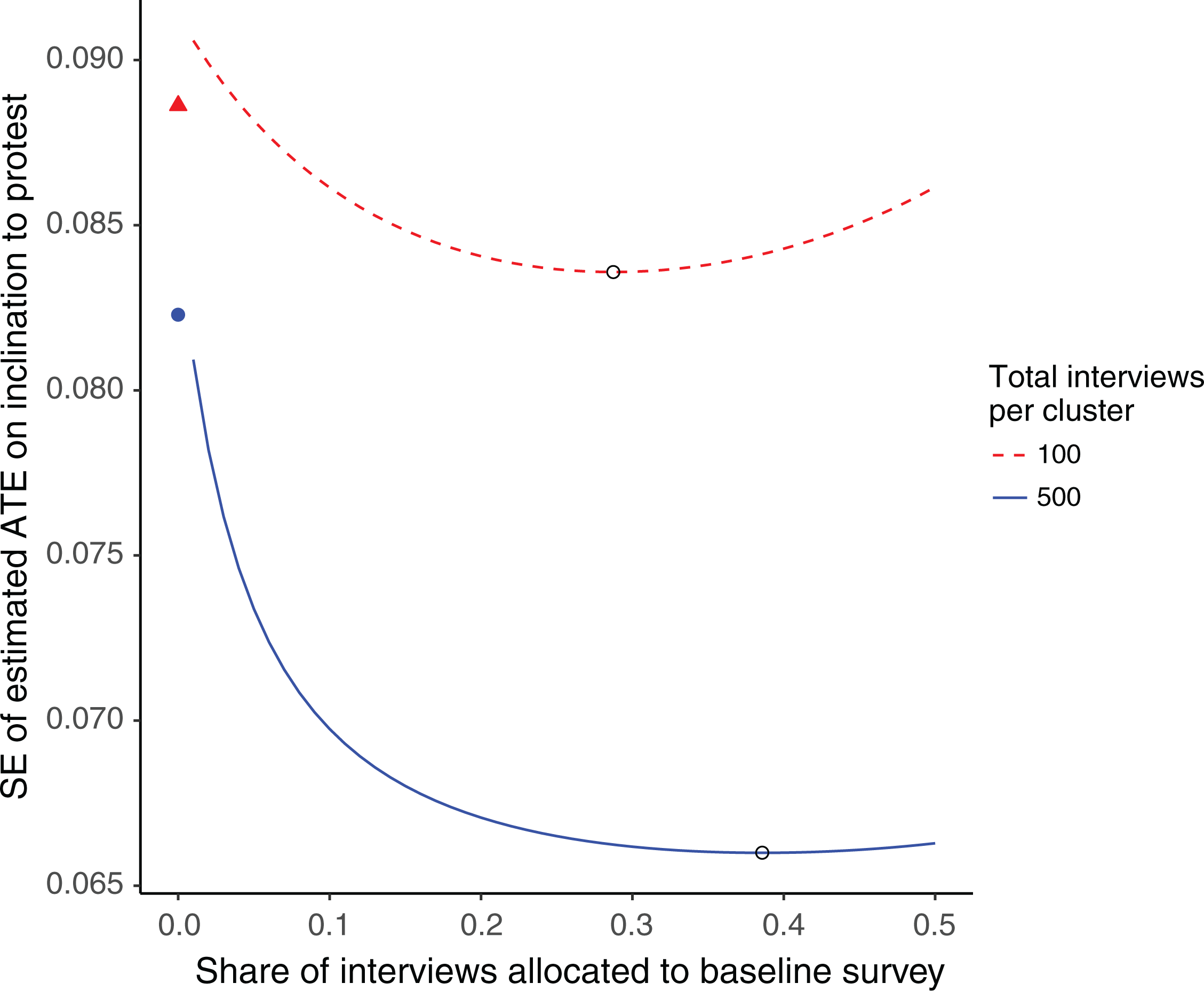

Near the top left corner, the two points marked with a filled triangle (for

The two curves (dashed for

The open circle on each curve marks the optimal allocation from formula (9). The optimal baseline shares are

In Figure 1, when

Figure 2 plots the analogous calculations for the protest inclination outcome variable. (The SEs are much smaller because the estimate of the between-cluster variance

Figure 2. Survey allocation and precision when the outcome variable is inclination to protest. Near the top left corner, the filled triangle (for n = 100 interviews per cluster) and circle (for n = 500) show the standard error (SE) of the unadjusted estimate of average treatment effect when all interviews are allocated to the endline survey. The curves plot the SE of the regression-adjusted estimate against the share of interviews allocated to the baseline survey. The open circle on each curve marks the optimal baseline share. See text for details.

Imbalanced Designs

Many experiments and quasi-experiments use imbalanced designs with unequal-sized treatment and control groups. For example, if the intervention is very costly, the researchers may decide to assign

We also assume that if a baseline survey is conducted, we will estimate the ATE using the coefficient on

While it appears to be difficult to solve for an exact optimum, numerical calculations (such as those in the example below) suggest that the following allocation performs well in many scenarios: Allocate half the interviews to the treatment group and half to the control group.

The number of interviews per cluster will then differ between the treatment and control groups: There will be

Let

to the baseline survey. (Although π could be allowed to differ between the treatment and control groups, in many scenarios, there is little gain from such fine-tuning. The suggested baseline allocation here mimics the one we derived for the balanced design in Equation 9 and uses

Example: A Digital Advertising Experiment

To illustrate the ideas discussed above, we consider an application to digital political advertising drawn from Turitto, Green, Stobie, and Tranter (2014). Ten of 30 noncontiguous midsized cities in Texas were randomly assigned to the treatment, a 7-day digital advertising campaign on behalf of David Dewhurst, the incumbent candidate for lieutenant governor in the 2014 Republican primary. Using a repeated cross-section design, a baseline survey of Republican voters was conducted during January 3–6 (just before the launch of the treatment) and an endline survey was conducted during January 14–17 (just after the treatment ended). These automated phone surveys asked respondents, “Thinking about the race for Texas Lieutenant Governor for a moment, if the primary election were held today, which of the following candidates would you vote for?” and presented a list of candidates in random order. The goal of the study was to estimate the effect of the treatment on the proportion of respondents who indicated that they would vote for Dewhurst.

The baseline survey was designed to obtain approximately 100 interviews in each treatment group city and 50 interviews in each control group city, while the endline survey was designed to obtain approximately 300 interviews in each treatment group city and 150 interviews in each control group city. Thus, out of a total of approximately 8,000 interviews, half were allocated to the treatment group and half to the control group (as suggested above), with 25% allocated to the baseline survey and 75% to the endline survey.
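The totals and shares in the preceding paragraph follow directly from the design targets. A short sketch of the arithmetic, using only the figures stated in the text (10 treatment cities, 20 control cities, and the approximate per-city interview targets); the variable names are ours:

```python
# Design figures stated in the text.
n_treat_cities, n_control_cities = 10, 20
baseline_per_city = {"treatment": 100, "control": 50}
endline_per_city = {"treatment": 300, "control": 150}

# Interviews by experimental group, summing over both survey waves.
treat_total = n_treat_cities * (baseline_per_city["treatment"] + endline_per_city["treatment"])
control_total = n_control_cities * (baseline_per_city["control"] + endline_per_city["control"])
total = treat_total + control_total

# Interviews in the baseline wave, summing over both groups.
baseline_total = (n_treat_cities * baseline_per_city["treatment"]
                  + n_control_cities * baseline_per_city["control"])

treat_share = treat_total / total
baseline_share = baseline_total / total

print(total, treat_share, baseline_share)
```

Because there are twice as many control cities as treatment cities, giving each control city half as many interviews as each treatment city is what equalizes the two groups' totals.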

We can explore in hindsight how the precision of the estimated treatment effect would vary with alternative allocations of the survey interviews.

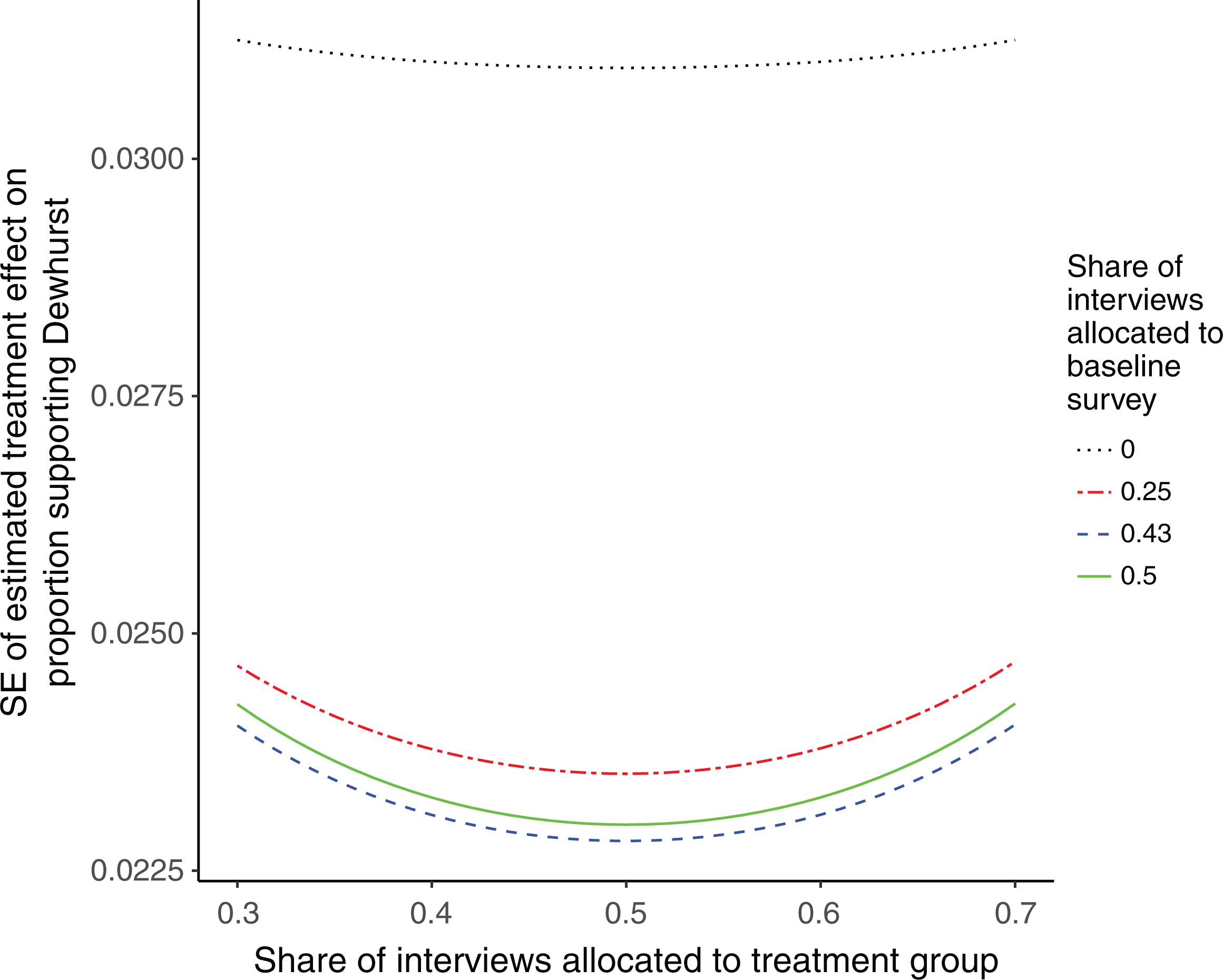

The budgeted total number of interviews is

Next, we calculate the suggested proportion of baseline interviews π from Equation 11. The suggested number of interviews per city is

Figure 3 explores how alternative allocations of the survey interviews would affect the SE of the estimated treatment effect. Each curve shows how the SE varies with the share of interviews allocated to the treatment group, holding the share allocated to the baseline survey (which is assumed to be the same across the treatment and control groups) constant at zero, 25% (the actual baseline share), 43% (the baseline share suggested above), or 50%. Comparisons within each curve show that the SE is minimized when half the interviews are allocated to the treatment group and half to the control group. Comparisons across the bottom three curves show that precision is only slightly better with the suggested 43% baseline share (yielding at best an SE of 2.28 percentage points, which implies an MDE of 5.68 percentage points) than a 25% or 50% baseline share (yielding an SE of 2.35 or 2.30 percentage points at best, which implies an MDE of 5.85 or 5.73 percentage points). Thus, the actual 25% baseline share appears to have been a reasonable choice. Finally, the topmost (dotted) curve shows that precision would be noticeably worse if all interviews were allocated to the endline survey (the SE for the unadjusted estimate is at best 3.1 percentage points, implying an MDE of 7.7 percentage points).
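Each MDE (minimum detectable effect) quoted above is roughly 2.49 times the corresponding SE. The text here does not state the convention behind that multiplier. The sketch below assumes a one-sided test at the 5% level with 80% power under a normal approximation, which gives a multiplier of about that size. This convention is an inference from the reported numbers, and small discrepancies are expected because the SEs are rounded.

```python
from statistics import NormalDist

# Assumed convention (not stated in the text): one-sided 5% test, 80% power.
ALPHA_ONE_SIDED = 0.05
POWER = 0.80

std_normal = NormalDist()
multiplier = std_normal.inv_cdf(1 - ALPHA_ONE_SIDED) + std_normal.inv_cdf(POWER)


def mde_from_se(se):
    """Minimum detectable effect implied by a standard error under the assumed convention."""
    return multiplier * se


# (SE, MDE) pairs reported in the text, in percentage points.
reported = [(2.28, 5.68), (2.35, 5.85), (2.30, 5.73), (3.1, 7.7)]
for se, reported_mde in reported:
    print(se, round(mde_from_se(se), 2), reported_mde)
```

Under a two-sided 5% test the normal-approximation multiplier would be about 2.80, which does not match the reported pairs.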

Figure 3. Survey allocation and precision in the digital advertising example. The top (dotted) curve plots the SE of the unadjusted treatment effect estimate against the treatment group’s share of interviews when all interviews are allocated to the endline survey. The other three curves plot the SE of the regression-adjusted estimate against the treatment group’s share of interviews, holding the baseline survey’s share constant at 25% (the actual allocation), 43% (the suggested allocation), or 50%. See text for details.

Discussion

When the outcomes of interest are relatively stable over time, a study design with repeated cross-section surveys can be an effective strategy for efficient estimation of ATEs. Our analysis is intended to sketch some of the key issues involved in cost-efficient allocation of survey interviews and to invite more complex formalizations of the allocation problem. For simplicity, we omitted a number of complications that researchers may want to consider in applications, such as multiple baseline or follow-up survey waves, fixed costs associated with each survey wave, asymmetric costs of interviews in treatment and control areas, use of multiple baseline covariates in regression adjustment, and motivations for conducting a baseline survey other than improving the precision of estimated ATEs. Also, we assumed that the estimand is an ATE that weights each cluster equally, but researchers may prefer to weight the clusters according to population size or other considerations. Furthermore, we took the numbers of clusters assigned to treatment and control as given, while a more sophisticated analysis would simultaneously optimize the allocation of clusters to treatment arms and the allocation of survey interviews, given information about treatment costs, survey costs, and the overall budget. Researchers may wish to use our framework as a starting point for more complicated analyses that consider such issues.

Because we omitted such complications, the formulas we have given for optimal allocation will not necessarily be optimal in practice, but the analysis may be of heuristic value. In a cluster-randomized experiment with repeated cross-section surveys, the optimal share of interviews to allocate to the baseline survey is less than one-half and tends to increase with the cluster-level correlation between baseline and endline measures of the outcome variable, the ICC, and the total number of interviews per cluster. In many scenarios, a wide range of baseline allocations yields approximately the same statistical precision. This suggests that researchers have quite a bit of latitude to accommodate other design considerations, such as fielding a baseline survey in order to train enumerators or pretest a survey instrument.
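The qualitative pattern summarized above can be explored numerically. The following minimal sketch does not implement formula (9). It assumes a simple variance proxy, (1 + K/n_e) − ρ²/(1 + K/n_b), where n_b = πn and n_e = (1 − π)n are the baseline and endline interviews per cluster. The proxy is our assumption, based on a standard measurement-error (attenuation) argument, and is stated up to a constant factor. The function names are hypothetical, and the inputs are illustrative values only.

```python
def variance_proxy(pi, n, rho, K):
    """Assumed proxy (up to a constant) for the variance of the ATE estimate.

    pi  : share of the n interviews per cluster allocated to the baseline survey
    rho : cluster-level correlation between baseline measure and endline outcome
    K   : (1 - ICC) / ICC
    """
    if pi == 0:
        # All interviews at endline: no baseline covariate, so no adjustment.
        return 1 + K / n
    n_b, n_e = pi * n, (1 - pi) * n
    # Sampling noise inflates the endline variance and attenuates the gain
    # from adjusting for the (noisy) baseline cluster mean.
    return (1 + K / n_e) - rho ** 2 / (1 + K / n_b)


def best_baseline_share(n, rho, K, steps=10_000):
    """Grid search over baseline shares in [0, 1) for the smallest proxy value."""
    grid = [i / steps for i in range(steps)]
    return min(grid, key=lambda p: variance_proxy(p, n, rho, K))


# Illustrative inputs only (hypothetical planning values).
for n in (100, 500):
    print(n, best_baseline_share(n, rho=0.578, K=4.56))

# When rho is small relative to K / n, the search returns a baseline share of zero.
print(best_baseline_share(10, rho=0.3, K=22.5))
```

Under this assumed proxy, the minimizing share stays below one-half and rises with n and ρ, in line with the pattern described above. Evaluating `variance_proxy` at shares around the minimum is one way to see how flat the curve is near the optimum.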

Footnotes

Appendix

Acknowledgments

The authors are grateful to Jacob Klerman (the editor), Peter Aronow, and three anonymous reviewers for very helpful comments.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.