Abstract

Following a field disaster, organizations must be able to adapt to complicated new requirements like improved safety standards by changing their existing routines. Post-disaster, fewer deviations attributed to internal factors are expected and seen as evidence of adaptation. Using a difference-in-differences approach with data on nuclear power plants (NPPs) in 33 countries from 1976 to 2004, this study finds that, contrary to expectations, operational deviations attributed to human factors at NPPs increased globally for a long period after the Chernobyl disaster. This study argues that this counterintuitive performance is a manifestation of adaptive routines. To adapt to the environmental requirements for heightened safety standards, organizations may tend to alter their routines for attributing deviation causes by facilitating and transparently reporting the classification of more deviation causes as internal factors. These arguments extend organizational adaptation theory by suggesting that explicit performance does not necessarily manifest as adaptive routines because of the potential conflict between explicit and implicit performance dimensions in the context of complicated adaptation goals.

Introduction

On 26 April 1986 at 1:24 am, the worst accident in the history of the nuclear power industry occurred in Unit 4 of the Chernobyl Power Complex. The accident started with a turbine generator test to investigate how much emergency power could be supplied during the rundown phase. The operators in charge of the test and those in charge of the safety systems did not coordinate their actions before conducting the test. The operators shut off the safety systems that should have provided cooling water, which would be lost during a power outage. This let the cooling water boil and become steam. As the amount of steam increased, the nuclear reaction increased abnormally, and the control rods used to slow down nuclear reactions malfunctioned. This eventually caused huge explosions inside the reactor, which consequently caused the unit to collapse. Thirty-one workers died directly from radiation poisoning; post-disaster, the cancer rate among children in Ukraine rose by approximately 90% (Plokhy, 2018). The disaster also caused serious contamination of several neighboring countries in Europe (European Commission, 1998).

The accident was directly attributed to a technical cause: a design flaw in the control rods. However, at the post-accident review meeting in August 1986, the International Atomic Energy Agency (IAEA) declared that the root cause of the disaster was human error, including ignorance of safety procedures, operators’ lack of knowledge, and insufficient and inadequate operator training that had been continuously overlooked (International Atomic Energy Agency [IAEA], 1986). Various stakeholders strongly criticized these preventable internal factors behind the disaster. These factors were sufficient for stakeholders to speculate about the potential risks of nuclear power plant (NPP) operations in the future, triggering several anti-nuclear movements and leading to stronger safety measures for NPPs worldwide (McDaniels, 1988).

As this example suggests, a field disaster (a transient accident or incident at the field level that significantly harms environmental, social, and human values) catalyzes a shift in stakeholder perceptions of a given field and sparks substantial environmental turbulence (Hällgren, Rouleau, & de Rond, 2018; Hoffman & Ocasio, 2001). According to organizational adaptation theory, in turbulent environments, organizations are required to change their routines to be congruent with new directives, and only well-adapted organizations will survive and achieve superior performance (Haveman, 1992; Meyer, 1982). Accordingly, to adapt after field disasters, organizations are expected to adopt changes to prevent similar disasters from occurring in the future by improving safety routines.

A key theoretical puzzle that has not yet been fully addressed in the literature on organizational adaptation is whether such adaptive routines indeed assure superior performance. Organizational adaptation refers to organizational congruence with the institutional or competitive environment. Since it is difficult to measure by this definition, the traditional approach presumes adaptation to be changes in a routine that improve organizations’ explicit performance, such as achieving financial growth or reducing the number of negative events (Furr & Snow, 2014; Haveman, 1992). Recent studies, however, point out that this assumption should be revisited because of its tautological nature (Sarta, Durand, & Vergne, 2021; Vergne & Depeyre, 2016). Indeed, some scholars report unintended inferior explicit performance, such as errors and financial losses, following organizational changes for adaptation (Geleilate, Fainshmidt, & Zollo, 2021). Despite the significant presence of counterintuitive adaptation performance, the literature lacks a sufficient theoretical explanation of the conditions and rationale for this phenomenon.

The aim of this study is to offer a perspective that explains inferior performance of organizational adaptation by highlighting the nature of performance complexity—potential conflicts between explicit and implicit performance dimensions. Organizational performance entails multiple dimensions that can sometimes conflict (Gaba & Greve, 2019; Richard, Devinney, Yip, & Johnson, 2009). For instance, adaptive routines that satisfy environmental requirements may represent inferior performance in explicit dimensions, but they may represent superior performance in implicit dimensions. Using the context of the Chernobyl disaster, this study shows that reports of human errors following a disaster have unexpectedly increased among the world’s NPPs over a long period. Given this long-term persistence, temporal coordination costs for adaptation cannot explain this phenomenon (Dutt & Lawrence, 2022; Geleilate et al., 2021). This study argues that this inferior explicit performance (increased ratios of human errors) represents successful adaptation in an implicit performance dimension. A disaster might have triggered organizations to alter their routines for attributing deviation causes, and have led organizations to classify more deviation causes as internal factors and to facilitate transparent reporting. Based on this interpretation, this study suggests that adaptive routines should prioritize an implicit meaning that reflects the adaptation goals.

Organizational adaptive responses to field disasters are an indispensable topic in debates on how to organize sustainably, particularly for high-reliability organizations (HROs). Disastrous events in the HRO context cause tremendous environmental, social, and organizational/individual trauma; thus, they reflect how organizations must change to improve their sustainability (George, Howard-Grenville, Joshi, & Tihanyi, 2016). Although it is evident that adaptive changes are necessary after field disasters, not all organizations engage in such changes. Previous studies have explained that this might be linked to organizations’ failure to understand potential risks in the post-disaster phase (Dwyer, Hardy, & Maguire, 2020) or their highly standardized previous routines (Macpherson, Breslin, & Akinci, 2022). This study explains it by highlighting the potential conflicts between explicit and implicit performance dimensions. Proactive detection and transparent reporting of deviations are routines through which organizations should seek to make improvements necessary for sustainability. However, they necessarily depress explicit organizational performance by increasing the reported number of deviations. This trade-off may hamper organizational routine changes despite their significant necessity.

This study makes two main contributions. First, the traditional perspective of adaptation studies relies on a single explicit performance dimension as adaptation evidence, whereby increases in human error or deviations can indicate organizational maladaptation (Furr & Snow, 2014; Haveman, 1992). By contrast, this study suggests that the same performance following field disasters, under complicated adaptation goals such as the pursuit of safety and sustainability, can be interpreted differently based on the implicit dimension: adaptive routine changes through proactive detection, and transparent disclosure of organizational deficiencies. This conflict between explicit and implicit performance dimensions offers a new explanation for the possible inferior performance of adaptive routines.

Second, organizational adaptation has deep relevance to the issue of sustainability evaluation. Sustainability (the organizational ability to protect the interests of future generations in terms of environmental, social, and human prospects) is one of the critical and complicated organizational adaptation goals (Bansal, 2005; George et al., 2016). Yet, despite its theoretical and practical importance, the existing literature on sustainability includes little discussion of appropriate measures and evaluations of organizational adaptation goals for sustainability. This study provides important insights into how sustainability should be evaluated from the perspective of organizational adaptation.

Theory and Hypotheses

Routine changes for adaptation

How organizations adapt to the requirements of external environments has been a major concern among organizational scholars. According to organizational adaptation theory, adaptation (the processes by which an organization converges towards a good fit with the external environment; Sarta et al., 2021) is a primary requirement if organizations are to survive and achieve superior performance. Organizational adaptation is obtained by developing or changing routines for congruency with a set of environmental requirements (Greve, 2017). Routines refer to repetitive, collective patterns of organizational decision-making and behaviors that act as assumptions or genetic material for daily operations (Cyert & March, 1963; Nelson & Winter, 1982). They serve as an organizational memory created through accumulated knowledge and experiences to deal with environmental requirements (Nelson & Winter, 1982). Hence, routines are differentiated from practices, that is, the use of the routines to conduct a particular function (Kostova & Roth, 2002). They become the fundamental basis of decision premises (Simon, 1947) or a stable template to guide practices (Levinthal & Marino, 2015).

Routines can hinder or help organizational adaptation (Hansson, Hærem, & Pentland, 2023; Yi, Knudsen, & Becker, 2016). Scholars have long emphasized inertia or a fixed reaction to operational stimuli as a routine’s nature (Levitt & March, 1988; March & Simon, 1958). Once organizations establish routines that fit the environmental requirements, they persist in following those routines. These hamper organizations’ progressive adaptation by forcing them to pursue the status quo. Instead, when organizations recognize non-adaptability of routines to the environmental requirements, they change the routines and attempt new adaptations (Newman, 2000). An external event that triggers environmental discontinuities, such as technological shifts or disastrous accidents, has been regarded as a typical source of routine changes (Meyer, 1982; Mithani, 2020). After these events, institutional or technological standards are tailor-fitted to the new requirements. These drive organizations to reevaluate existing routines and, if necessary, to move routines closer to the new set of environmental requirements.

Performance as evidence of adaptation

A recent critical debate in organizational adaptation theory dwells on performance as adaptation evidence. Adaptation is difficult to measure by definition—convergence towards a good fit with environmental requirements. Therefore, several scholars have analyzed adaptation outcomes by examining explicit organizational performance, such as measuring financial growth or the number of negative events (Sarta et al., 2021). They assume that superior explicit performance represents the appropriate fit between organizational routines and environmental requirements; accordingly, it could represent evidence of adaptation (Furr & Snow, 2014; Haveman, 1992; Moore & Kraatz, 2011).

However, some researchers argue that adaptation does not necessarily assure such superior explicit performance. These studies adopted one of two approaches. First, earlier studies suggested that a lack of certain organizational resources or capabilities can hamper adaptation processes, resulting in inferior explicit performance despite changes (Chakrabarti, 2015; Stan & Puranam, 2017). Second, recent studies suggested that inferior performance of adaptive routines is likely due to temporal coordination or adjustment costs. For instance, Geleilate and colleagues (2021) showed that after the deployment of new product development capabilities for adaptation, organizations reported increased manufacturing errors attributed to internal factors. The authors argued this was because adaptation entails coordination costs stemming from disruptions in existing routines. Similarly, Dutt and Lawrence (2022) suggested that adaptation can incur temporal adjustment costs; thus, an increased breadth of activities for better adaptation causes organizational performance to deteriorate. With these scholarly works, the literature has started acknowledging that adaptation does not necessarily equate to superior performance (Sarta et al., 2021, p. 60). However, it still relies on a tautology: adaptive routines eventually lead to superior performance at the end of the adaptation processes or when complemented with certain resources or capabilities; therefore, superior performance is evidence of adaptive routines.

This study offers an alternative viewpoint by arguing that performance is inherently complex. Organizational performance is a highly complicated construct with multiple dimensions and meanings (Levinthal & Rerup, 2020; Richard et al., 2009). Thus, one performance dimension might not fully reflect the holistic quality of adaptation, that is, the degree of convergence with environmental requirements. In particular, with complicated adaptation goals, an explicit performance dimension often distorts an evaluation of whether the organizations ultimately meet the adaptation goals (an implicit performance dimension), producing a spurious interpretation.

For instance, under an adaptation goal to improve sustainability (one of the complicated adaptation goals), some organizations may aim to improve quality for each sustainable activity by reducing the number of activities. For these organizations, the explicit number of activities might not be appropriate for evaluating adaptation. Indeed, sustainability scholars have argued that seemingly sustainability-related statistics (an explicit performance) might not fully reflect the real organizational movement to improve sustainability (an implicit performance) (Graafland & Smid, 2016). Such explicit performances could be manipulated by managers for organizational impression management, or they may fail to represent comprehensive organizational efforts to meet the adaptation goals. Accordingly, tension can exist between explicit and implicit performance dimensions aimed at adaptation. Due to this performance complexity, Levinthal and Rerup (2020, p. 528) noted that even a single performance can result in multiple interpretations. This leads organizations to face ambiguous performance feedback, and it is necessary to understand how such performances are interpreted (Gaba & Greve, 2019; Greve & Gaba, 2017).

Based on these arguments, the next subsection predicts how adaptive routine changes can take the explicit form of inferior (superior) performance while implicitly representing superior (inferior) performance in the context of periods following field disasters.

Performance of adaptive routines following field disasters

Field disasters are a major trigger of organizational adaptation. As the Bhopal disaster, the 9/11 terrorist attacks, the Challenger disaster, and the Chernobyl disaster showed, field disasters cause grave damage, significant harm to many people and the environment, and can erode financial and social value over the long term (Hällgren et al., 2018). The considerable harm results in institutional turbulence that introduces heightened safety standards and urges organizations to conform to such standards to prevent similar disasters from occurring in the future (Greenwood, Suddaby, & Hinings, 2002; Hoffman & Ocasio, 2001). Accordingly, after field disasters, organizations face new adaptation goals, which drive them to improve safety and take sustainable actions by considering future environmental, social, and human prospects in their routines (George et al., 2016). To meet these complicated goals, organizations are required to review existing routines and reconsider priorities for their behavior and decision-making (Kasbekar, 2020).

A change in the routines for attributing deviation causes is one type of adaptive response. Field disasters often highlight deviations caused by the organizations’ internal faults (hereafter, internally attributed deviations), such as human error or communication mistakes among organizational members, as root causes (Goodman, 2013; Sanger, 1986). Institutional environments after field disasters require organizations to address potential risks from these internally attributed deviations, which might have been continuously neglected. Hence, a decrease in such deviations is typically expected for organizations as evidence of adaptation. Nevertheless, counterintuitively, proactive learning behaviors to meet adaptation goals might lead to explicit inferior organizational performance in the following ways.

First, to adapt to strengthened safety standards, more controlled and deliberate information processes are likely (Morgeson, Mitchell, & Liu, 2015). Through these processes, organizational members make an effort to consider multiple internal causes to explain observed deviations (Gooding & Kinicki, 1995). They may recognize internal factors in the background of the deviations more often, and might look for hidden internal factors in those deviations. Consequently, organizational perceptions and definitions of internally attributed deviations could expand more broadly. This might lead to the reclassification of deviations previously viewed as caused by equipment or unforeseen external forces as caused instead by internal factors.

Second, under institutional environments in which safety issues are strongly emphasized, organizations’ transparent reporting of deviations is strongly encouraged (Cambraia, Saurin, & Formoso, 2010; Hession-Laband & Mantell, 2011). For instance, after the Chernobyl disaster, the IAEA recommended that the possibility of human error in NPP operations be considered by providing the means for detecting and correcting or compensating for human error (International Nuclear Safety Group, 1988). To adapt to this institutional emphasis, organizations are likely to engage actively in detection and transparent reporting of internally rooted errors to improve their reliability and sustainability.

Collectively, these adaptive routines can lead to inferior explicit organizational performance by increasing ratios of internally attributed deviations. Nevertheless, these results implicitly reflect the proactive learning of organizations to improve safety by changing their routines. Hence, this study proposes the following hypothesis:

Hypothesis 1. After field disasters, the ratios of operational deviations with internally attributed causes increase.

This study argues that inferior explicit performance of adaptive routines results from conflicts between explicit and implicit performance dimensions under complicated adaptation goals. However, the same results can be derived from coordination or adjustment costs owing to unfamiliarity with new routines, as existing adaptation studies have suggested (e.g., Geleilate et al., 2021). Grissinger (2011), using the example of a hospital, showed that although a new medical system was adopted to improve administrative safety, it increased the risk of mishaps for nurses by changing several previously well-rehearsed routines.

If this alternative explanation holds, then increased ratios of deviations attributed to internal factors should appear only for a short time immediately after a disaster, because coordination costs decline as new routines stabilize over time. Conversely, as this study argues, if inferior performance reflects the outcomes of adaptive routines, it should exert a long-term effect, because routines persist over time once they are established (Hannan & Freeman, 1984). To rule out this alternative explanation from the existing literature, this study suggests the following hypothesis:

Hypothesis 2. The significance of increases in the ratios of internally attributed operational deviations following field disasters is less likely to drop over time.

Methods

NPPs worldwide before and after the Chernobyl disaster

The world’s NPP industry provides a fruitful context to test the above hypotheses. Nuclear power has been considered an important provider of efficient energy and, since the 1970s, has been viewed as a viable source of electricity. Global warming from extreme reliance on fossil fuels has become a pressing global issue. Several countries have shifted their attention to nuclear power because nuclear fuel not only reduces CO2 emissions but is also cheaper and more abundant than other energy sources, such as wind and solar power (World Nuclear Association, 2008). Owing to these benefits, there was a remarkable increase in NPP development around the world in the 1970s and early 1980s, and a supportive institutional environment for NPPs was established worldwide.

Nevertheless, the sustainability of the nuclear power industry has always been controversial, especially after the 1986 Chernobyl disaster, which was rated at the highest level of nuclear hazards (level 7) on the International Nuclear and Radiological Event Scale (INES). This level denotes accidents accompanied by a major release of radioactive material with widespread health and environmental effects (IAEA, 2008). More than 100 people were killed or injured within a few weeks of the explosion owing to radiation poisoning, and around 335,000 people were evacuated (Blackmore, 2019). Environmental contamination was serious. According to the European Commission (1998), several countries around Ukraine, including Russia, Belarus, Austria, Finland, and Sweden, were seriously contaminated by cesium-137. This unprecedented accident seriously damaged the nuclear power industry’s legitimacy by proving its significant potential to harm future generations.

The IAEA, the field-structuring organization in the nuclear industry, played a central role in the institutional turbulence during the post-Chernobyl disaster period. In August 1986, the IAEA organized the Post-Accident Review Meeting on the Chernobyl Accident, during which it declared that the root cause of the Chernobyl accident was related to human-induced failures, such as ignorance of safety procedures and the operators’ lack of knowledge. The root internal cause of the disaster, along with its dramatic results, significantly contributed to loss of legitimacy in the field. It led stakeholders to shift their attention to nuclear risks, instead of economic benefits. The disaster also fueled numerous anti-nuclear movements around the world (McDaniels, 1988). Some countries decided to exit the industry and seek alternative energy sources. Under these circumstances, the IAEA introduced the concept of safety culture for NPPs worldwide (Strauch, 2015). Accordingly, global NPPs faced immense adaptation pressure to meet strengthened safety measures to ensure their survival.

Sample

The sample included 510 NPP units at 231 plants in 33 countries between 1976 and 2004. Data from 2005 were excluded owing to format changes in the sources. The major data sources were the annual Operating Experience with Nuclear Power Stations in the Member States reports published from 1976 to 2004 by the IAEA. Additional data on demographic characteristics of NPP units were obtained from the website of the Power Reactor Information System (https://pris.iaea.org/PRIS/home.aspx). Based on prior studies, the NPP unit (reactor)-year was used as the unit of analysis (David, Maude-Griffin, & Rothwell, 1996; Piazza & Perretti, 2015). This unit of analysis is more appropriate than alternatives such as NPP plant sites or operating (power) companies because, even within the same NPP site or operating company, the operational safety performance of NPP units varies widely owing to different technical capacities and working conditions, including different workers (David et al., 1996).

Setting

To test the influence of the Chernobyl disaster, this study applied a difference-in-differences approach. To calculate the difference-in-differences estimate for the Chernobyl disaster, the sample was separated into treatment and control groups. The treatment group was defined as NPP units operating in countries tremendously affected by the Chernobyl disaster (disaster-hit countries). In general, three countries where more than 70% of the released radioisotopes landed are considered to have been most affected: Belarus, Russia, and Ukraine (Baca & Florkowski, 2000). In addition to these countries, according to the European Commission (1998), the following 13 countries were considered significantly affected by severe levels of released radioisotopes after a considerable percentage of their land area was contaminated with cesium-137: Austria, Finland, Sweden, Italy, Slovenia, Norway, Switzerland, Greece, Romania, the Czech Republic, Poland, Germany, and the United Kingdom. Of all 16 countries, no data on NPP operations from Belarus, Austria, Norway, Greece, and Poland were available. Hence, the final treatment group included 150 NPP units from 11 countries. Following this definition, 360 NPP units operating in another 22 countries (Argentina, Armenia, Belgium, Brazil, Bulgaria, Canada, China, France, Hungary, India, Japan, Kazakhstan, Lithuania, Mexico, the Netherlands, Pakistan, South Korea, Slovakia, South Africa, Spain, Taiwan, and the United States) constituted the control group.
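The group assignment described above can be sketched as a simple lookup. This is only an illustration of the stated rule: the country lists are taken from the text, while the names `TREATMENT_COUNTRIES`, `CONTROL_COUNTRIES`, and `disaster_hit` are hypothetical and do not come from the study.

```python
# Disaster-hit countries named in the text for which NPP data were available (11).
TREATMENT_COUNTRIES = frozenset({
    "Russia", "Ukraine", "Finland", "Sweden", "Italy", "Slovenia",
    "Switzerland", "Romania", "Czech Republic", "Germany", "United Kingdom",
})

# Countries named in the text as the control group (22).
CONTROL_COUNTRIES = frozenset({
    "Argentina", "Armenia", "Belgium", "Brazil", "Bulgaria", "Canada",
    "China", "France", "Hungary", "India", "Japan", "Kazakhstan",
    "Lithuania", "Mexico", "Netherlands", "Pakistan", "South Korea",
    "Slovakia", "South Africa", "Spain", "Taiwan", "United States",
})


def disaster_hit(country: str) -> int:
    """Return 1 for a treatment-group country and 0 for a control-group country."""
    if country in TREATMENT_COUNTRIES:
        return 1
    if country in CONTROL_COUNTRIES:
        return 0
    # Countries outside the 33-country sample have no group assignment.
    raise ValueError(f"{country!r} is not in the sample")
```

Every unit inherits the indicator of the country in which it operates, so the indicator does not vary over time within a unit.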

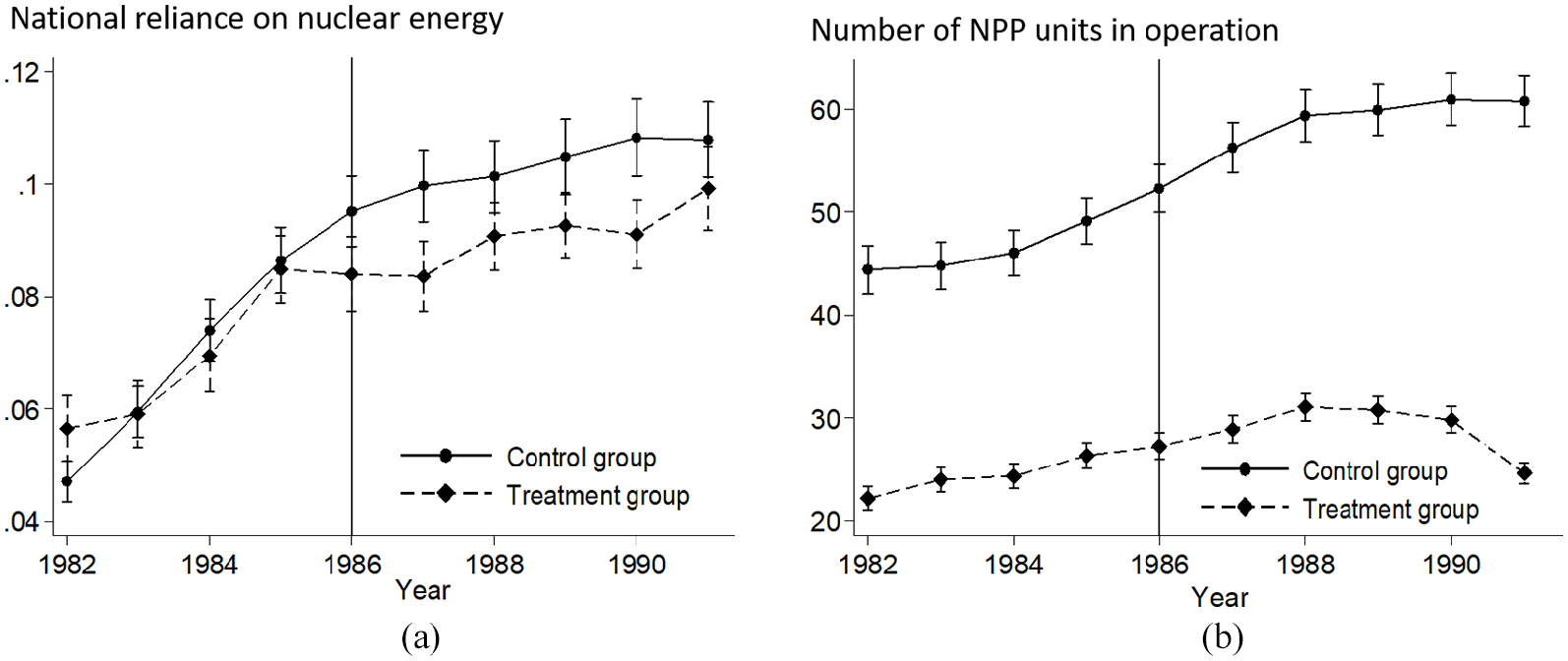

Figures 1(a) and 1(b) show the different effects of the Chernobyl disaster on these two groups with regard to their national reliance on nuclear energy and the number of NPP units in operation, respectively. According to Figure 1(a), national reliance dramatically increased pre-Chernobyl in both groups; however, after 1986, that tendency faltered for the treatment group. Similarly, in Figure 1(b), the number of NPP units in operation gradually increased pre-Chernobyl. This number displayed a downward trend around the late 1980s in the treatment group. A prompt reduction did not occur, possibly because of a gradual phasing out of NPPs. Overall, Figure 1 shows the significant impact of the Chernobyl disaster on this industry.

Figure 1. Worldwide nuclear industry development pre-/post-Chernobyl: (a) national reliance on nuclear energy; (b) number of NPP units in operation.

Hypothesis 2 predicts the lasting impact of adaptive routines. To reflect these effects over time, the main tests focused on the two five-year periods before and after the Chernobyl disaster (1981 to 1991). This 10-year period surrounding the disaster is appropriate for examining the immediate influence of the Chernobyl disaster. The industry’s self-regulatory body, the World Association of Nuclear Operators, was formalized in 1989, and the INES safety standards were implemented in 1990. To examine whether the influence persisted, this study also analyzed the period from 1976 to 1996 (20 years surrounding the disaster) and the full period from 1976 to 2004.

Variables

Dependent variable (t+1)

The dependent variable corresponds to each NPP unit’s operational deviations with causes categorized as internal factors in each year. To define internally attributed operational deviations, this study used unplanned outages caused by human factors that occurred during operations at each NPP unit. Unplanned outages occur with any deviation from safety standards in NPP operations. Although these events do not necessarily lead to nuclear accidents accompanied by radioactive emissions, they are obvious signals of inadequate safety conditions and operational malpractice in NPP units and therefore serve as a proxy for safety deviations (Cowing, Paté-Cornell, & Glynn, 2004; David et al., 1996).

For all unplanned outages occurring during operations, NPP operators are expected to code the major cause using formats provided by the IAEA, and they must submit these records to that regulating body. The IAEA categories for causes of unplanned outages include equipment error, human-related factors, refueling, maintenance/repair, testing of plant/components, training/licensing, stretch-out/cost-down operations, regulatory limitation, grid failure, and others. Of these categories, this study focuses on human-related factors, the most fundamental internal cause of deviations.

Semi-structured interviews were conducted with two NPP operators and one NPP inspector. Together with the annual reports, these interviews provided several specific examples of unplanned outages and offered justifications for the measurements. For instance, omissions of procedures by operators can contribute to these outages. Operators commit such deviations either unconsciously, owing to a lack of knowledge or miscommunication with other operators, or consciously, because they underestimate the risks and pursue operational convenience or efficiency. Additionally, cognitive failures, such as lack of attention or operational confusion, can cause this type of outage. For example, operators might conduct the same manipulations in two different control rooms owing to a high degree of similarity in these rooms.

The global inspector of NPPs mentioned in the interview that it is highly complex to define human factors in operational deviations. For instance, when certain parts in a unit of equipment cause trouble, the major cause could be an equipment failure. However, it could also be attributed to a human factor because it might have happened due to an operator’s ignorance and/or negligence when it comes to replacing the part. Indeed, one case in the global NPP annual reports showed that some NPP units attributed the cause of an unplanned outage during a fire to a human factor. In the report, this case was described as follows: “[A] cable tray fire occurred during a penetration leak test.” However, in other NPP units, similar cases were attributed to other causes without specific explanation. These examples imply that the manner of attributing causes of deviations (i.e., the decision to assign each deviation to a certain category) is determined by the operators based on the organizational safety routines for deviation attribution—what they have assumed when interpreting the given deviations.

To construct the dependent variable, this study used the ratio of the number of unplanned outages attributed to human factors to the total number of unplanned outages at each unit in a given year. Using a ratio rather than a count prevents the measure from being driven by the overall volume of unplanned outage reports in that year. Unplanned outages attributed to human factors were reported infrequently (4% on average).
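As a minimal sketch of this measure, the ratio for one unit-year can be computed as follows. The function name `human_factor_ratio` is hypothetical. The text does not say how unit-years without any unplanned outage were handled, so returning `None` in that case is an assumption made here only to avoid division by zero.

```python
from typing import Optional


def human_factor_ratio(human_factor_outages: int,
                       total_unplanned_outages: int) -> Optional[float]:
    """Share of a unit-year's unplanned outages attributed to human factors."""
    if human_factor_outages < 0 or total_unplanned_outages < 0:
        raise ValueError("outage counts must be non-negative")
    if human_factor_outages > total_unplanned_outages:
        raise ValueError("human-factor outages cannot exceed total unplanned outages")
    if total_unplanned_outages == 0:
        # Assumption: the ratio is treated as undefined (missing) here.
        return None
    return human_factor_outages / total_unplanned_outages
```

For instance, a unit-year with 25 unplanned outages, one of which was coded as human-related, would have a ratio of 1/25, which happens to equal the reported sample average of 4%.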

Independent variable (t)

The independent variable captures exposure to the Chernobyl disaster, the first disaster in this field to generate unprecedented worldwide institutional changes. To estimate the difference-in-differences effect, this study used the interaction term between the indicator for disaster-hit countries and the indicator for the post-Chernobyl period. As hypothesized, the coefficient on this interaction term was expected to be positive and significant.
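The logic of the interaction term can be illustrated with a raw two-by-two comparison of group means. This sketch is not the study's estimator, which also includes control variables and fixed effects. The function names are hypothetical, and treating years after 1986 as the post-Chernobyl period is an assumption, because the text does not state how the disaster year itself was coded.

```python
from statistics import mean

CHERNOBYL_YEAR = 1986


def post_period(year: int) -> int:
    """1 for years after the disaster, else 0 (assumed coding of 1986 itself)."""
    return 1 if year > CHERNOBYL_YEAR else 0


def did_term(disaster_hit: int, year: int) -> int:
    """Interaction of the disaster-hit indicator and the post-period indicator."""
    return disaster_hit * post_period(year)


def did_estimate(rows):
    """Raw difference-in-differences from (disaster_hit, year, ratio) rows."""
    cells = {(g, p): [] for g in (0, 1) for p in (0, 1)}
    for hit, year, ratio in rows:
        cells[(hit, post_period(year))].append(ratio)
    m = {key: mean(values) for key, values in cells.items()}
    # (treated post - treated pre) - (control post - control pre)
    return (m[(1, 1)] - m[(1, 0)]) - (m[(0, 1)] - m[(0, 0)])


# Purely hypothetical numbers, chosen only to show the arithmetic.
toy_rows = [
    (1, 1984, 0.02), (1, 1985, 0.04), (1, 1988, 0.08), (1, 1989, 0.10),
    (0, 1984, 0.03), (0, 1985, 0.03), (0, 1988, 0.04), (0, 1989, 0.04),
]
toy_did = did_estimate(toy_rows)
```

In the toy data, the treated group's mean ratio rises by 0.06 and the control group's by 0.01, so the raw estimate is about 0.05. A positive value of this kind is what Hypothesis 1 predicts for the coefficient on the interaction term.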

Control variables (t)

To control for heterogeneous characteristics across NPP units and countries, this study used the following control variables. At the unit level, young NPPs may experience more operator mistakes owing to a lack of experience. Therefore, the units’ operating age (unit age) was used to control for operating experience. Annual electricity production (in thousands of gigawatt hours) was used to control for unit capacity. Given that routines to detect safety problems in advance may affect ratios of unplanned outages caused by human factors, this study included a dummy variable representing whether a unit had a routine of scheduled outages for maintenance (planned outage for maintenance). The annual total numbers of planned and unplanned outages were entered separately to control for the general level of operational deviations in the NPP units. Given that the deviations categorized as other may be related to human error, this study used a dummy variable to indicate whether a unit had deviations from other causes in a given year (unplanned outages from other causes). The annual load factor for each NPP unit was used to control for its operational efficiency. Finally, the study used operating company age to control for management experience in the operating company.

At the national level, to consider industry maturity, the numbers of NPP units in the country (unit density) and units under construction or planned were included as control variables.

Skewed continuous variables were log-transformed.

Analytical model

For the analysis, generalized estimating equations were used. Such models are suitable for longitudinal panel data and are particularly effective for controlling potential autocorrelation and heteroskedasticity (Greve & Goldeng, 2004). Considering that the dependent variable is continuous, an identity link and a Gaussian distribution were specified for the tests.
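With an identity link and a Gaussian distribution, the mean model is linear, so one plausible way to write the specification, combined with the country and year fixed effects and the one-year lag used in this study, is the following. The notation is an assumption introduced here for illustration (unit i, country c, year t), and the exact parameterization in the estimated models may differ.

```latex
\[
Y_{i,c,t+1} = \alpha_c + \gamma_t
  + \delta \,(\mathrm{Treat}_c \times \mathrm{Post}_t)
  + \mathbf{x}_{i,c,t}^{\top}\boldsymbol{\beta}
  + \varepsilon_{i,c,t+1}
\]
```

Here Y is the ratio of unplanned outages attributed to human factors, the alpha and gamma terms are country and year fixed effects, x collects the control variables, and delta is the difference-in-differences coefficient of interest. In this form, the main effect of the post-disaster period is absorbed by the year effects, and a country-level treatment indicator is absorbed by the country effects.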

Some year-specific events in this industry, and country-specific policies or culture, may affect NPP safety routines. To address these issues, this study used fixed effects for the country and year in all models. Unit fixed effects could not be included because the major independent variables were time-invariant. All independent and control variables were lagged by one year relative to the dependent variable. All continuous variables were winsorized at the 1% and 99% levels to correct for outliers.
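The two preprocessing steps mentioned here, winsorizing and lagging, can be sketched as follows. This is a hedged illustration only: the function names are hypothetical, the nearest-rank percentile rule is an assumption (statistical packages differ in how they define percentiles), and the numbers are made up.

```python
import math


def winsorize(values, lower=0.01, upper=0.99):
    """Clip values to the nearest-rank `lower` and `upper` percentiles."""
    ordered = sorted(values)
    n = len(ordered)

    def nearest_rank(p):
        # Nearest-rank rule: take the value at position ceil(p * n), counting from 1.
        rank = max(1, math.ceil(p * n))
        return ordered[rank - 1]

    low_cut, high_cut = nearest_rank(lower), nearest_rank(upper)
    return [min(max(v, low_cut), high_cut) for v in values]


def lag_by_one_year(series):
    """Map {year: value} to {year: value observed in year - 1}.

    Years without an observation in the preceding year are dropped.
    """
    return {year: series[year - 1] for year in series if year - 1 in series}


# Made-up data: 100 ordinary values plus one extreme outlier.
values = list(range(1, 101)) + [10_000]
clipped = winsorize(values)
print(clipped[0], clipped[-1])  # 2 100

lagged = lag_by_one_year({1985: 0.3, 1986: 0.5, 1988: 0.7})
print(lagged)  # {1986: 0.3}
```

Winsorizing keeps every observation but pulls extreme values in to the cutoffs, whereas lagging pairs each year's outcome with the predictors observed one year earlier.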

Results

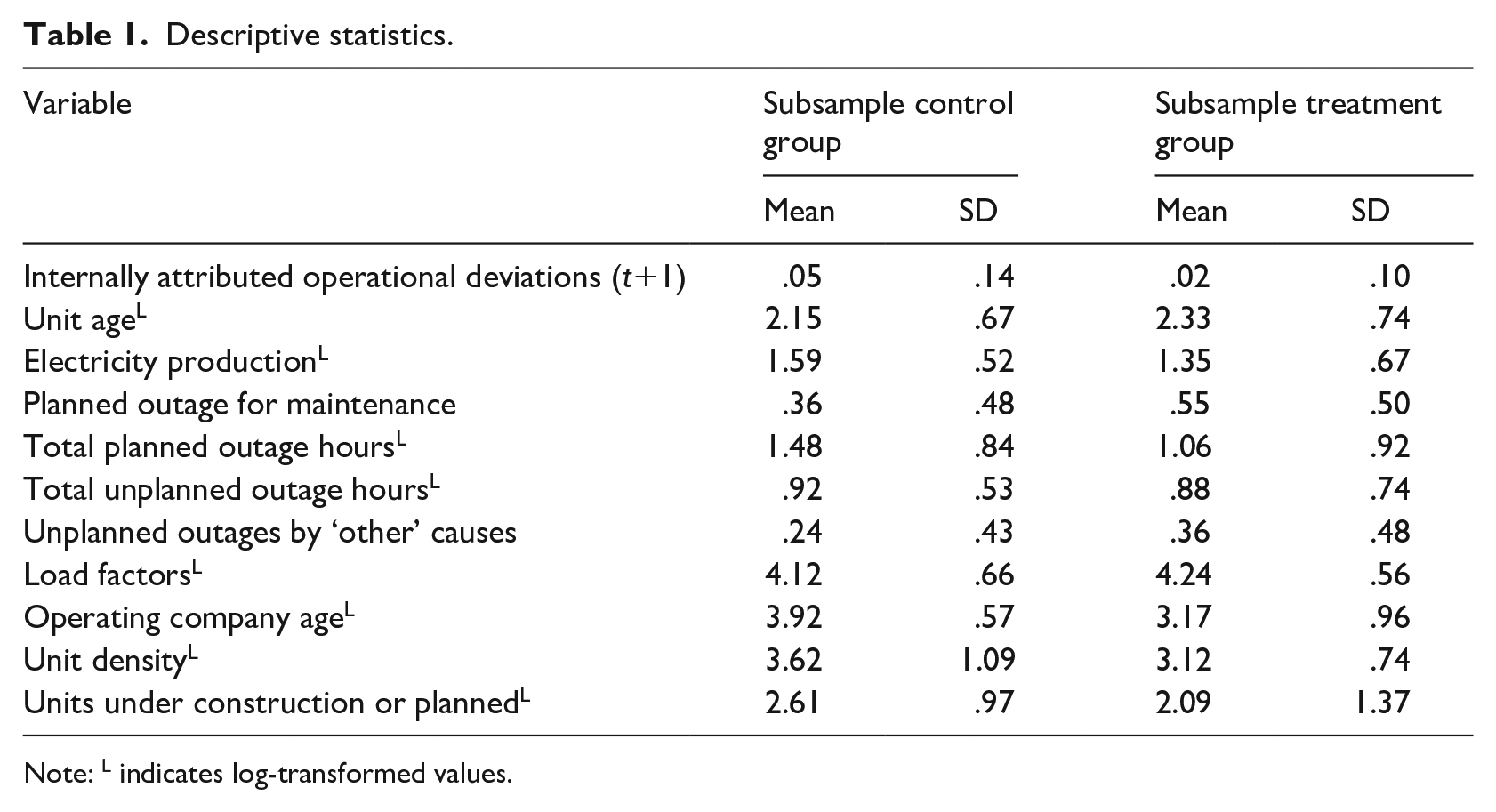

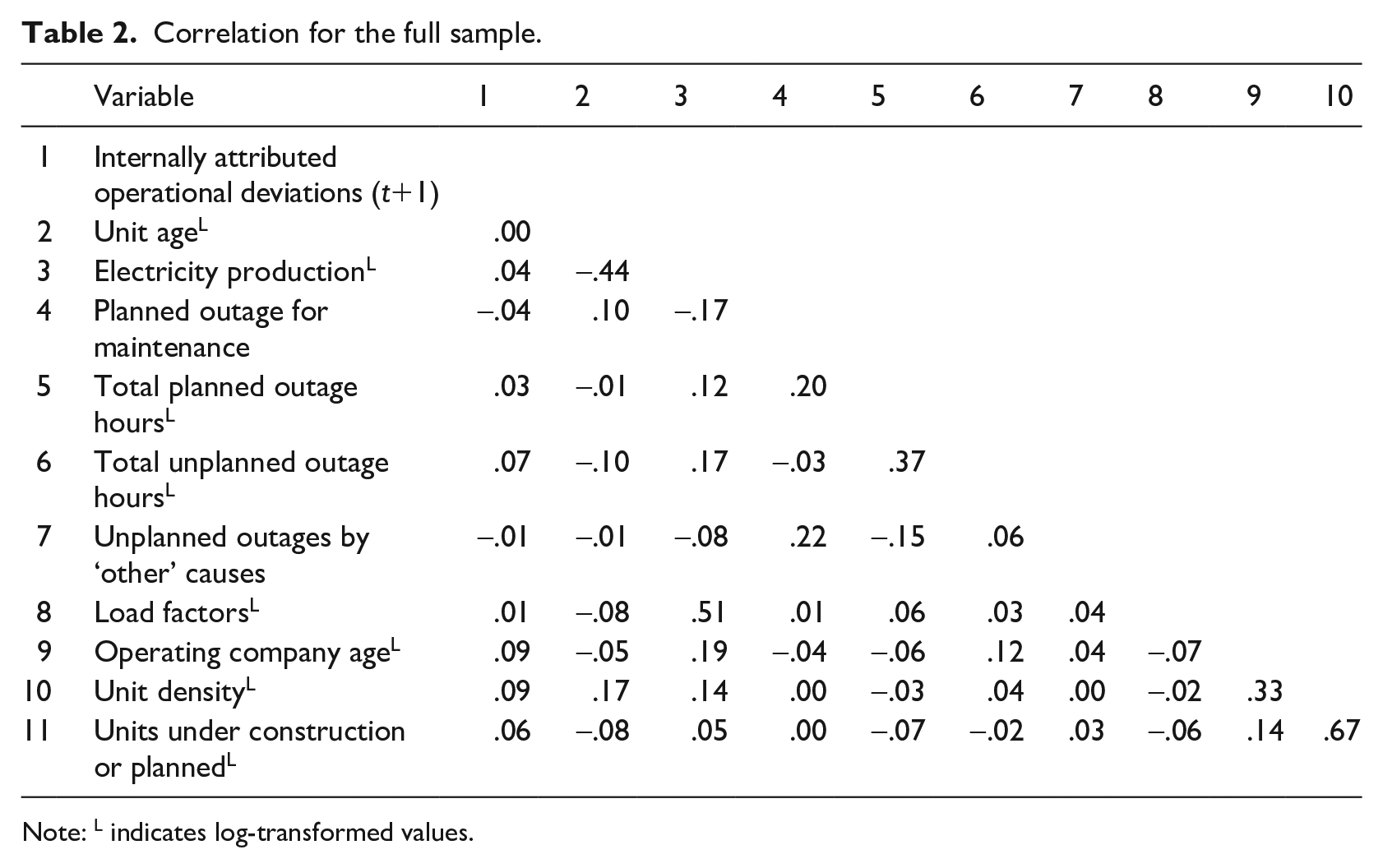

Tables 1 and 2 show descriptive statistics on treatment and control groups for the difference-in-differences analysis and the correlations among variables, respectively. On average, the ratios of deviations attributed to internal factors in the treatment group were 2.19 times lower than those in the control group. However, Figure 2(a) shows there was no significant difference in the trends of the ratios between these two groups immediately before the Chernobyl disaster, indicating that these groups satisfy the parallel trends assumption of the difference-in-differences analysis. The maximum variance inflation factor across all models was 1.53, indicating that multicollinearity among the variables was not a concern.

Table 1. Descriptive statistics.

Note: L indicates log-transformed values.

Table 2. Correlations for the full sample.

Note: L indicates log-transformed values.

Figure 2. Deviations categorized as caused by human factors pre- and post-Chernobyl.

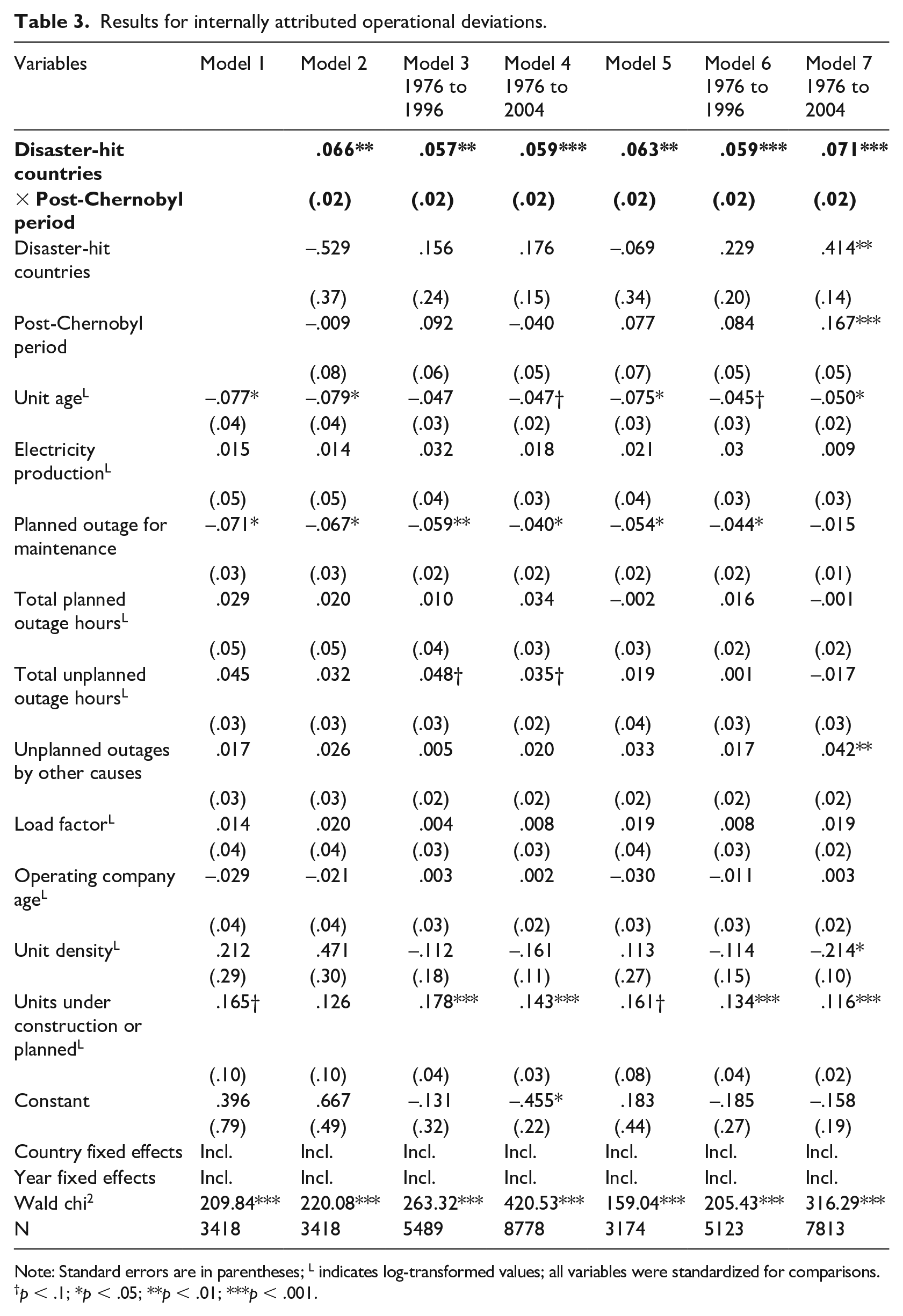

Table 3 shows the results for hypothesis 1. All variables were standardized to facilitate comparison of the results. Model 1 contained only the control variables. As predicted, newer NPP units experienced more unplanned outages due to human factors (β = –.077, p = .043). Proactive scheduled maintenance alleviated those outages (β = –.071, p = .011). NPP units in countries at an early industrial stage showed a higher ratio of unplanned outages from human factors, although this effect was only marginally significant (β = .165, p = .093). In general, these results are consistent with the expected directions.

Table 3. Results for internally attributed operational deviations.

Note: Standard errors are in parentheses; L indicates log-transformed values; all variables were standardized for comparisons.

p < .1; *p < .05; **p < .01; ***p < .001.

Model 2 shows the results for the Chernobyl disaster’s influence. In this model, the treatment group comprised NPP units in disaster-hit countries, and an interaction term between this group indicator and a post-Chernobyl dummy variable was added. According to model 2, the treatment group showed a higher ratio of unplanned outages due to human factors after the Chernobyl disaster, supporting hypothesis 1 (β for disaster-hit countries × post-Chernobyl period = .066, p = .002). The ratio of unplanned outages attributed to human factors increased by 6.6% for the NPP units in disaster-hit countries during the post-Chernobyl period. The chi-square test shows that model 2 was a significant improvement over model 1 (Pr > chi2 < .001). Figure 2(a) visualizes this tendency in the five years before and the five years after the Chernobyl disaster. In general, before 1986, there were no significant differences between the treatment and control groups in the ratios of reported unplanned outages caused by human factors. However, after the disaster, this ratio increased significantly for the treatment group. Figure 2(b) singles out NPP units in Russia and Ukraine, the countries most contaminated by the disaster, and shows that these countries had no such reports before the disaster, whereas reports appeared afterward.

Hypothesis 2 predicts that the results persist over time. Models 3 and 4 show that the Chernobyl disaster had a significant impact for a long time after the disaster. The ratio of unplanned outages attributed to human factors increased around 6% in disaster-hit countries for the 18 years following the disaster. These results support hypothesis 2.

Robustness checks

To check the robustness of the results, this study includes several additional analyses. First, because each NPP unit was nested within a specific NPP site or operating company, its safety routines may be correlated with those of other units at the same site or under the same operator. To reflect this nested structure, this study applied multilevel modeling using a generalized linear mixed model, estimated with the meglm command in Stata 14. Second, to redesign the difference-in-differences analysis, the NPP units that operated in the 10 countries where the cesium-137 deposits exceeded 0.6 PBq immediately after the Chernobyl disaster were defined as the treatment group, and the rest as the control group (European Commission, 1998). Third, the Three Mile Island accident in 1979 might have influenced US NPPs and their routine changes (although the accident was rated at INES level 5, and its influence was mainly limited to local areas). To rule out the influence of this other disastrous event, the US NPP units were removed from the sample. After each of these changes, the same regressions as those for Table 3 were run. The results replicated the original findings, confirming their robustness.

Finally, for further robustness checks, this study replaced the original dependent variable with several related variables. First, the original definition of deviations attributed to internal factors was based on a single cause: human factors. For the robustness check, this study added two more attribution causes of unplanned outages that seem to relate to internal factors: training/licensing and stretch-out. After calculating the ratio of the number of unplanned outages attributed to these two factors and the human factor to the total number of unplanned outages, the same regressions were run again. The results revealed the same tendencies as the original results, as shown in models 5 to 7 in Table 3. Second, unplanned outages caused by human factors are rare events, so using the ratio of these events may bias the analysis results. As an alternative dependent variable, the robustness check used a binary variable coded as 1 if an unplanned outage caused by human factors occurred in a given year, and as 0 otherwise. The results of a logistic model showed the same tendency as the original results. Third, this study proposes that the routines for attributing deviation causes were influenced by the disaster. To verify this proposition, this study defined the dependent variable as the total number of unplanned outages for each NPP unit, and tested whether the Chernobyl disaster affected deviations overall. As expected, the results showed that the disaster had an insignificant impact on total unplanned outages.

Additional analyses

The results of this study show a significant increase in deviations attributed to human error following the Chernobyl disaster. This study argues that this explicitly inferior performance reflects routine changes in interpreting and reporting deviation causes that classify more deviations as internally attributed. Nevertheless, the results are not based on the identification of specific, visible behavioral changes for adaptation. To provide further justification for the adaptive routine changes reflected by inferior performance, this study includes three sets of additional analyses that offer more specific and extensive evidence. This subsection summarizes them; the specific processes and results are described in the Appendix.

Changes in patterns of deviation descriptions

To examine the specific changes in the interpretation of deviations, the first analysis considers the changes in the assessment descriptions for human error. In this context, operators record a short description of the background information for each unplanned outage when coding the major cause. These descriptions include information on why operators categorize some deviations as caused by human factors, and reflect the efforts to analyze the causes. Using these descriptions, the first analysis identified the keywords that were extensively used to describe human errors post-Chernobyl, compared to pre-Chernobyl.

The increasing ratio of deviations attributed to human factors may have resulted from a broadened definition of human factor causes in organizations following heightened safety norms. If this argument holds, the descriptions of human errors post-Chernobyl should cover more heterogeneous backgrounds and should be accompanied by more comprehensive explanations than those pre-Chernobyl. To verify this, this study tested how the wording and length of descriptions have changed since the disaster. The results showed that, after the disaster, both the heterogeneity of wording and the length of descriptions increased significantly (see Table A and Figure A in the Appendix).
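To make the idea of wording heterogeneity and description length concrete, the following Python sketch compares two small sets of descriptions by mean length in words and by the number of distinct words used. The measures, function names, and example descriptions are all hypothetical and chosen only for illustration; the measures actually used in this study are those documented in the Appendix.

```python
import re


def tokenize(description):
    """Split a description into lowercase word tokens."""
    return re.findall(r"[a-z0-9']+", description.lower())


def period_summary(descriptions):
    """Return (mean description length in words, number of distinct words)."""
    token_lists = [tokenize(d) for d in descriptions]
    if not token_lists:
        return 0.0, 0
    mean_length = sum(len(tokens) for tokens in token_lists) / len(token_lists)
    vocabulary = set().union(*token_lists)
    return mean_length, len(vocabulary)


# Made-up descriptions, not taken from the annual reports.
pre = [
    "operator error",
    "operator error during test",
]
post = [
    "operator omitted valve check during turbine test",
    "miscommunication between shifts delayed part replacement",
]
print(period_summary(pre))   # (3.0, 4)
print(period_summary(post))  # (6.5, 13)
```

A raw count of distinct words grows with the number of descriptions, so any real comparison across periods would need to account for differences in the number of reports.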

Changes in patterns of deviation coding

This study predicts that, after the disaster, NPP operators would increase efforts to investigate internal causes of deviation occurrences to adapt to strengthened safety measures. These efforts might be reflected in the coding of deviation causes. In this context, when categorizing outages as equipment failure, operators have been required to note the specific serial number of the failed equipment, along with the category letter code A (the code for equipment failure), per the manual. This practice was not required when coding human factor causes, as these two categories (equipment failure and the human factor) are mutually exclusive in the manual. Nevertheless, some NPP units recorded these numbers for human error, linking the two categories by referring to the equipment failures caused by human factors.

The second analysis examined how this coding behavior changed after the disaster. The results showed that the ratio of such codings increased significantly in the treatment group post-Chernobyl. This may reflect increased efforts to view equipment failures as an outcome of human factors post-Chernobyl (see Figure B in the Appendix).

Changes in attribution to ambiguous causes

If, as argued, operators made efforts to view more deviations as internally attributed post-Chernobyl, the instances in which operators attributed deviations to the category other—often characterized by deviations with undefined or ambiguous causes—should decrease. Furthermore, such reductions should be related to increased reports of human error.

To explore these possibilities, the study plotted, over the years, the probability that each NPP unit categorized deviations under the other category, and found that this probability decreased noticeably in the treatment group post-Chernobyl (see Figure C in the Appendix). Then, to investigate whether the increase in causes attributed to human factors contributed to this reduction, this study examined whether the ratio of causes attributed to human error mediated the attribution of deviations to other causes during the post-Chernobyl period. The results show a significant mediating effect (see Table B in the Appendix).

Discussion

This study, based on worldwide NPPs operating before and after the Chernobyl disaster, showed that, after the disaster, deviations attributed to human factors increased over a long period. Institutional environments following field disasters compel organizations to take sustainable actions and satisfy heightened safety standards. To meet these adaptation goals, organizations might change routines by detecting more internally rooted deviations and reporting those deviations transparently (hypothesis 1). Thus, the explicit increments in those internally attributed deviations may represent adaptive routine changes, although they appear to indicate maladaptation. Furthermore, given that this tendency persists for a long time, the results are distinct from the temporary coordination costs that previous studies suggested (hypothesis 2) (e.g., Dutt & Lawrence, 2022; Geleilate et al., 2021).

Implications

The findings of this study contribute to the literature in the following ways. First, many organizational adaptation studies presume that adaptation represents routine changes that lead to superior performance (Haveman, 1992; Moore & Kraatz, 2011). This study suggests that such performance of adaptive routines should be interpreted based on implicit meanings for adaptation goals. An explicit performance might not fully reflect satisfaction with the underlying requirements for adaptation, particularly when the adaptation goals are complicated. For instance, during a pandemic, the number of new cases of infection is often considered a performance measure to evaluate organizational or national responses to the goals of a thorough quarantine. Clearly, the simplest way to reduce new cases is to decrease tests to detect them, or to not report the numbers publicly. In that case, would fewer cases of new infections fully represent adaptation? The study results indicate that when the adaptation goals are complicated, organizational adaptation should not be equated with apparent performance, but should be judged in implicit ways based on the original definition: congruence with environmental requirements (Vergne & Depeyre, 2016).

Second, the findings also provide important implications for the growing body of literature on sustainability (Howard-Grenville, 2021). Sustainability is one of the critical adaptation goals for organizations. Given a complicated goal, an explicit sustainability performance measurement might conflict with implicit performance dimensions. For instance, increased numbers of several types of harassment explicitly look like a lack of equality or justice; however, they may also reflect adaptive routines whereby people comfortably report those events in response to adaptive social norms. Conversely, a decrease in the number of complaints or reports, such as reports of harassment, might result from avoidance related to detecting or reporting those events, or the organizational culture may make it difficult to report unsustainable issues. In consideration of these dynamics, when evaluating adaptation for sustainability, potential conflicts between explicit and implicit performance dimensions should be carefully considered and, when such a conflict exists, implicit interpretation should be prioritized. These examples provide important insights into measuring and evaluating adaptation for sustainability.

Third, this study sheds new light on errors or deviations within organizations. The current literature, mainly based on organizational learning theory, has investigated how organizations reduce unnecessary errors based on previous negative events (Catino & Patriotta, 2013; Dahlin, Chuang, & Roulet, 2018). According to these studies, a disaster should decrease deviations by offering learning opportunities to prevent future negative events. The results of this study, however, showed an opposite trend in two ways. First, the Chernobyl disaster increased reports of human error for a long time in NPPs worldwide. This phenomenon can be interpreted as organizations conducting proactive detection and transparent reporting of internally rooted deviations. Thus, the phenomenon represents an adaptation—the accomplishment of learning (Greve, 2017). This suggests a different manner of interpretation to the typical literature on errors in organizations. Second, as noted in the subsection on robustness checks, even after the Chernobyl disaster the total number of deviations did not decrease significantly. These results contradict the previous notion of learning from failure in order to mitigate errors (e.g., Dahlin et al., 2018). Such learning might be a more complex matter and, thus, might require many boundary conditions that we must address. This has a critical implication for future research on organizational learning from disastrous accidents at the industry level.

Finally, this study has important implications for HROs. The results of hypothesis 1 call for scholars and practitioners to reconsider their interpretations of human error in organizations. As emphasized, these deviations can correspond not only to a lack of safety but also to an organizational response to strict safety standards. The increased number of unplanned outages attributed to human factors following the Chernobyl disaster might not indicate corruption in industrial safety regimes but rather adaptation of organizations conforming to heightened safety standards. This result implies that an absence of deviations does not necessarily guarantee safety. Thus, merely comparing the frequency of deviations across organizations may be inappropriate, but comparisons based on the context or timing of events may lead to more accurate interpretations.

Limitations

This study relied on a single empirical context and definition of routine changes. Although the context and definition represent the given constructs well, future efforts should replicate the analysis based on other empirical contexts to test the generalizability of the findings.

Moreover, this study mainly relied on quantitative analysis of archival data. This method is well suited to testing the longitudinal effects of organizational responses to a historical event. However, it is limited in showing specific routine changes or the internal processes and systems that attribute deviations to internally rooted causes. Given these limitations, this study assumed that the increased ratios of internally attributed deviations following field disasters reflect routine changes for adaptation. However, it could not directly examine the specific observable practices or interpersonal interactions that more closely reflect the routine changes (Breslin, 2022). To address this issue, several additional analyses were conducted to complement the results. Nevertheless, compelling qualitative analyses based on other contexts are required to address this limitation and provide greater robustness of the analysis results.

Conclusions

There may be numerous beneficial routines available to improve sustainability that have not yet been implemented because potential conflict between performance dimensions could induce unwanted effects. Such routines might not be developed despite strong social requirements until disastrous outcomes urge their development. Ideally, organizations would proactively explore hidden beneficial routines to achieve sustainability even if there were no disastrous outcomes or external pressures. To support this organizational adaptation for sustainability, we should recognize and understand performance complexity and separate an implicit performance for organizational adaptation from explicit performance.

The results of this study have implications for future creative theories about organizational adaptation and performance, and can be used by policymakers and managers to develop routines and policies to improve organizational sustainability.

Appendix: Complementary descriptions for additional analyses

Acknowledgements

The author would like to thank the special issue’s editors, Prof. Renate E. Meyer (Editor-in-Chief), and three anonymous reviewers for their enthusiastic review and very helpful suggestions. The author also thanks all interviewees for sharing their knowledge and kind cooperation.

Funding

The author disclosed receipt of the following financial support for the research, authorship, and/or publication of this article. The financial support for this research was granted by the JSPS KAKENHI (grant numbers 17K13783 and 19H01507) and the Inamori Research Grants from Inamori Foundation.