Abstract

How are scientists coping with misinformation and disinformation? Focusing on the triangle scientists/mis-disinformation/behaviour, this study systematically reviews the literature to answer three research questions: What are the main approaches described in the literature concerning scientists’ behaviour towards mis-disinformation? Which techniques or strategies are discussed to tackle information disorder? Is there a research gap in including scientists as subjects of research projects concerning information disorder tackling strategies? Following the PRISMA 2020 statement, a checklist and flow diagram for reporting systematic reviews, a set of 14 documents was analysed. Findings revealed that the literature can be interpreted following Wilson and Maceviciute’s model, using the categories of creation, acceptance and dissemination. Cutting across these categories, we propose three standpoints from which to analyse scientists’ positions towards mis-disinformation: inside, inside-out and outside-in. The stage ‘Creation/facilitation’ was the least present in our sample, but ‘Use/rejection/acceptance’ and ‘Dissemination’ were depicted in the literature retrieved. Most of the literature adopted inside-out perspectives, meaning that the topic is mainly studied as a communication issue. Regarding strategies against information disorder, findings suggest that preventive and reactive strategies are used simultaneously. A strong appeal for a multidisciplinary effort against mis-disinformation is widely present, but there is a gap in including scientists as subjects of research projects.

1. Introduction

In recent years, a growing amount of research has been produced about mis-disinformation phenomena [1–8]. The literature distinguishes the mis- and dis- prefixes by intention: disinformation (which includes fake news phenomena) involves an intention to cause harm behind the act of creation or dissemination, whereas misinformation refers to unintended behaviour. This work aggregates both meanings in one expression as a means of synthesis. In this mis-disinformation environment, science has been challenged and scientists’ role has been questioned on trust and denial issues within a post-truth era [9,10]. However, how are scientists coping with misinformation and disinformation? Are they vulnerable to information disorder (mis-, dis- and mal-information) [11] like everyone else? Or are they able and more prepared to discern and overcome the mis-disinformation pitfalls?

Scientists produce and communicate scientific achievements, but they are also common people with their information behaviour of acquisition, sharing, use or dissemination. Scientists’ high degree of training and qualifications, their expertise in a science field or their preparation to understand the complexity of the scientific process, are undoubtedly an advantage to navigating a mis-disinformation universe. Yet, preparation may not be sufficient for a complete immunity to information disorder: Appealing as it may be to view science as occupying a privileged epistemic position, scientific communication has fallen victim to the ill effects of an attention economy. (…) Like other information consumers and producers, researchers rely on search engines, recommendation systems, and social media to find relevant information. In turn, scientists can be susceptible to filter bubbles, predatory publishers, and undue deference to the authority of numbers, P values, and black box algorithms [12].

Moreover, scientists are ‘epistemic agents’ [13] with vulnerabilities and strengths. Understanding scientists’ vulnerability towards mis-disinformation is the first motivation for this research. The second motivation relies on scientists’ strengths and the role that scientists can play in tackling information disorder. Are they ready or interested in elaborating or cooperating in overall strategies to tackle these phenomena? Scientists consume all kinds of information in their private life, but their public role and their responsibilities regarding science production and communication add an extra layer of complexity. To disregard that social position could erode society’s trust in science as ‘science relies on public trust for its funding and opportunities to interface with the world. Misinformation in and about science could easily undermine this trust’ [12]. Nevertheless, ‘scientific facts have a hard time competing with misinformation and misleading claims’, but ‘science alone cannot navigate us out of this storm. But we can avoid making things worse’ [14].

Despite some quick looks at the behaviour of scientists towards mis-disinformation [14,15], research on this topic is still limited. Previous studies showed that scientists’ efforts in producing misinformation rebuttals or debunking [16] are probably the most relevant information practices [17], but these are considered somewhat ineffective [18]. It has been suggested that preventive action, such as prebunking or inoculation, could be more successful [19–21], as ‘explaining misleading or manipulative argumentation strategies to people (…) makes people resilient to subsequent manipulation attempts’ [22]. On the other hand, scientists may be susceptible to uncertainty-based argumentation, even when they recognise those arguments as false and are actively rebutting them: ‘the pressures of climate contrarians has contributed, at least to some degree, to undermining the confidence of the scientific community in their own theory, data, and models’ [23].

Human cognitive processes, such as motivated reasoning (biasing of judgements according to one’s beliefs), are also a relevant field of research, as ‘in politically contentious science debates, it is the best educated and most scientifically literate who are the most prone to motivated reasoning’ [24]. Polarisation seems to be also affected by literacy issues: general education, science education, and science literacy are associated with greater political and religious polarization, is consistent with both the motivated reasoning account, by which more knowledgeable individuals are more adept at interpreting evidence in support of their preferred conclusions, and the miscalibration account, by which knowledge increases individuals’ confidence more quickly than it increases that knowledge [25].

More recently, a study found that ‘basic knowledge of scientific facts was associated with proscience beliefs, even among politicised issues. Thus, with perhaps a few exceptions, focusing on teaching basic science is likely to yield increases in overall acceptance of science’ [26].

Regarding these psychological perspectives, one may ask: are scientists overconfident about their capacity to reject the mis-disinformation deluge? Or might laziness or lack of reasoning also play a relevant part [27]? Basic scientific knowledge may favour ‘discerning between true and false content (in terms of both accuracy and sharing decisions)’, but ‘inattention plays an important role in the sharing of misinformation online’ [28]. In fact, ‘overconfidence may contribute to susceptibility to false information, perhaps because it stops people from slowing down and engaging in reflective reasoning’ [21] and ‘people are often distracted from considering the accuracy of the content. Therefore, shifting attention to the concept of accuracy can cause people to improve the quality of the news that they share’ [29].

Since 2016 (US Presidential election), and mostly during the COVID-19 pandemic (2020–2023), the wave of mis-disinformation became a great concern for the scientific community. The call to arms [30,31] for a strategic response was made: ‘the best that can be done is for scientists and their scientific associations to anticipate campaigns of misinformation and disinformation and to proactively develop online strategies and Internet platforms to counteract them when they occur’ [32].

Several paths have been disclosed by the published literature, pointing out mandatory inter- or multi-disciplinary approaches [33]: Technological solutions deployed through social media and educational curriculum that explicitly address misinformation are two interventions with the potential to inoculate the public against misinformation. It is only through multi-disciplinary collaborations, uniting psychology, computer science, and critical thinking researchers, that ambitious, innovative solutions can be developed at scales commensurate with the scale of misinformation efforts [34].

A great number of solutions are technology-centred [35,36], including distributed computing (see European Research Council Project FARE) [37].

However, many of these studies did not focus on the information behaviour of scientists or their involvement in the fight against disinformation, such as the tendency to underestimate the impact of misinformation or to overlook the complexity of the misinformation phenomena: ‘As science continues to be purposefully undermined at large scales, researchers and practitioners cannot afford to underestimate the economic influence, institutional complexity, strategic sophistication, financial motivation and societal impact of the networks behind these campaigns’ [38].

Studies mostly focused their attention on inside perspectives, like changes within science ethos and the scholarly communication system, such as the retrieval and consumption of journal articles through social media, the pressure to publish and hype their results, the use of preprint servers exposed to the media attention, overstating the implications of scientific findings which may jeopardise accuracy, the replication crisis of science, the publication bias of positive results, predatory publishing, etc. [12,31,39]. Scientific misinformation ‘arises as a disorder of public science communication’, as ‘publicly available information that is misleading or deceptive relative to the best available scientific evidence or expertise at the time and that counters statements by actors or institutions who adhere to scientific principles’ [40]. Inside perspectives also denote the acknowledgement that ‘misinformation and disinformation often start with scientists themselves’ and ‘making the scientific process more transparent will expose flaws and may even beget controversy, but ultimately it will allow scientists to strengthen error-correcting mechanisms as well as build public trust’ [41].

Inside-out (scientists vs public) perspectives are also available in the literature, such as issues concerning science communication [42–44], science literacy [24,25,45], and low-quality research, pseudoscience or bad science [46,47]. However, how scientists behave towards mis-disinformation (an outside-in perspective), with scientists as research subjects, is still poorly studied.

The information behaviour framework might be adopted to deepen our knowledge about scientists’ behaviour. For instance, the avoidance and rejection of information may be interesting abilities to observe the relationship between scientists and mis-disinformation. Wilson [48], in his general theory of information behaviour, established a connection between need and information seeking; however, Information seeking is only one aspect of information behaviour: other activities (which may play a part in information discovery) include information exchange or sharing, information transfer to others whose needs are known, as well as the avoidance and rejection of information.

Another definition of information behaviour ‘encompasses information seeking as well as the totality of other unintentional or serendipitous behaviors (such as glimpsing or encountering information), as well as purposive behaviors that do not involve seeking, such as actively avoiding information’ [49].

The problem of truth is one key aspect. An attempt to combine Truth-Default theory [50] and information behaviour research questioned: ‘how individuals determine if others are truthful as well as which factors are most effective in identifying truthfulness?’ This might also be applied to scientists: The individual encounters an information source, based on previous IB [information behavior] and outputs, and presumes it to be truthful. This information source requires processing and evaluation in the specific context. Most instances of processing are cursory and superficial which, according to TDT, enables efficient communication but also makes the person more vulnerable to deceit. The result of information processing determines information use, either internal (trust v. do not trust) or external (investigate, support, refute) [51].

On the contrary, truth judgements are constructed, reflecting: inferences drawn from three types of information: base rates, feelings, and consistency with information retrieved from memory. First, people exhibit a bias to accept incoming information, because most claims in our environments are true. Second, people interpret feelings, like ease of processing, as evidence of truth. And third, people can (but do not always) consider whether assertions match facts and source information stored in memory [52].

This implies, among other things, that crowdsourced judgements about source trustworthiness are more accurate than individual ones and are contrary to most recommendations to an individual ‘mind the source’ [53]. In other words, besides nudging accuracy, ‘using crowdsourcing to add a “human in the loop” element to misinformation detection algorithms is promising’ [21].

Given the absence of models of information behaviour that specifically address the problem of mis-disinformation, an information behaviour framework to deal with this issue was proposed by Agarwal and Alsaeedi [54,55]. Equalising information and mis-disinformation, the proposal highlights the contexts, the mediation of algorithms, bots and social media in information creation, the confirmation biases and prejudices’ influence on the ability to evaluate the sources, the perception of genuine and false information, the decision to ignore, use or spread it and the effect of filter bubbles and echo chambers. The critical action is to puncture the bubble: through the concerted efforts and advocacy by individuals, groups, associations and organizations working on media literacy, LIS professionals teaching information literacy, and educators in schools, colleges, and universities, as well as others, training people on critical thinking and critical action [54].

Later, Wilson and Maceviciute designed a model depicting the motives underlying the creation, acceptance and dissemination of misinformation. With this proposal, the authors aim to answer a new and complex layer of information behaviour research: ‘The information behaviour of many actors in different situations is known, but what do we know about how we behave in relation to misinformation?’ [56]. They introduce the concept of information misbehaviour (another expression for misinformation): intending to typify the associated activities as pathological to some degree. That is, as untypical behaviour relative to norms of communication behaviour in society, where the information transferred by broadcast or print media, for example, is assumed to be reliable and true [56].

Although this model is not specifically designed to frame scientists’ information behaviour or misbehaviour, its phases were used to organise our systematic review of the literature concerning the information behaviour of scientists and the modalities of behaviour towards mis-disinformation.

Future research projects should focus on scientists’ behaviour and deepen the research participation of this population. Here, we aim to understand how scientists behave towards mis-disinformation and how they can contribute to the development of strategies to counter it. Based on the identified research challenges, the study addresses the following research questions:

RQ1. What are the main approaches described in the literature concerning scientists’ behaviour towards misinformation and disinformation?

RQ2. Which techniques or strategies are discussed to tackle information disorder?

RQ3. Is there a research gap in including scientists as subjects of research projects concerning the development of information disorder tackling strategies?

This article includes a research methodology section, explaining the procedures of data extraction and the final data set analysis. The findings section contains the qualitative analysis of the data set, which is discussed in the next section. The conclusion intends to answer the research questions of the study.

2. Methods

Systematic reviews of the literature are a useful research technique widely used across diverse disciplines [57]. These groups of techniques can ‘provide syntheses of the state of knowledge in a field, from which future research priorities can be identified; they can address questions that otherwise could not be answered by individual studies’ [58]. In the preparation of a research project, ‘a systematic review should typically be undertaken before embarking on new primary research. Such a review will identify current and ongoing studies, as well as indicate where specific gaps in knowledge exist, or evidence is lacking’ [59].

Among the several options available to guide the systematic review, we opted for the PRISMA 2020 statement, as it ‘provides updated reporting guidance for systematic reviews that reflects advances in methods to identify, select, appraise, and synthesize studies’ [58]. To properly answer RQ1 and RQ2, the PRISMA 2020 checklist was followed [58]. Concerning eligibility, the inclusion criteria for the review were: (1) empirical studies with scientists and/or academic members as research subjects; (2) studies of behaviour related to misinformation, disinformation and information disorder and (3) studies related to techniques or strategies for tackling information disorder. The exclusion criteria were: (1) reviews, essays, editorials or opinion papers; (2) studies with technological-only proposals; (3) studies with other or undisclosed research subjects, such as random participants or random recruitment through social media; (4) studies retrieved with the assigned search terms but inappropriate for the research objectives and (5) studies whose full text could not be obtained or which were written in a language inaccessible to the authors. Studies were grouped for the syntheses through a systematic application of these criteria.

Regarding information sources, the chosen databases were Web of Science, Scopus, LISA and LISTA. The first two are the main bibliographic databases available, and the latter two are the most relevant databases within information science. The extraction was performed on 20 January 2023. The search query comprised three thematic clusters, including their possible variations: scientists, behaviour and information disorder. The search strategies were as follows:

(a) Web of Science Core Collection: (scientist* OR researcher* OR facult* OR scholar*) AND (behavior OR behaviour) AND (misinformation OR disinformation OR ‘fake news’ OR ‘information disorder’), among term subjects, titles and abstracts;

(b) Scopus: TITLE-ABS-KEY-AUTH (scientist* OR researcher* OR facult* OR scholar*) AND (behavior OR behaviour) AND (misinformation OR disinformation OR ‘fake news’ OR ‘information disorder’);

(c) LISA: Anywhere except full text (scientist* OR researcher* OR facult* OR scholar*) AND (behavior OR behaviour) AND (misinformation OR disinformation OR ‘fake news’ OR ‘information disorder’);

(d) LISTA: All text (scientist* OR researcher* OR facult* OR scholar*) AND (behavior OR behaviour) AND (misinformation OR disinformation OR ‘fake news’ OR ‘information disorder’).
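The four strategies share one Boolean core: terms are joined with OR inside each thematic cluster, and the three clusters are joined with AND. As a minimal sketch, that core could be assembled as follows. The function and variable names are hypothetical, the use of straight double quotes for multi-word phrases is an assumption about database syntax, and the database-specific field restrictions (such as TITLE-ABS-KEY-AUTH in Scopus) are deliberately left out.

```python
# Term clusters copied from the search strategies above.
# The variable and function names are hypothetical, chosen for illustration.
CLUSTERS = {
    "scientists": ["scientist*", "researcher*", "facult*", "scholar*"],
    "behaviour": ["behavior", "behaviour"],
    "information disorder": [
        "misinformation",
        "disinformation",
        "fake news",
        "information disorder",
    ],
}


def format_term(term: str) -> str:
    """Wrap multi-word terms in straight double quotes (assumed phrase syntax)."""
    return f'"{term}"' if " " in term else term


def build_query(clusters: dict[str, list[str]]) -> str:
    """OR the terms inside each cluster, then AND the clusters together."""
    groups = []
    for terms in clusters.values():
        groups.append("(" + " OR ".join(format_term(t) for t in terms) + ")")
    return " AND ".join(groups)


if __name__ == "__main__":
    print(build_query(CLUSTERS))
```

Keeping the clusters in one place makes it easier to confirm that the same terms were submitted to every database, with only the field restriction changing.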

The Rayyan tool (https://www.rayyan.ai/) was used throughout the selection process, in a multistep approach. First, all the retrieved records were imported to the platform, and the authors independently reviewed all the titles, abstracts and keywords. All the keywords used in the search expression were also added to Rayyan, so that they appeared underlined during screening. To resolve conflicts, two in-person meetings were held to reach a consensus on the application of the eligibility criteria. Afterward, to perform a first full-text analysis of the sample, specific data were collected and organised from the eligible reports. The authors independently collected explicit research questions, sample characteristics, methods used and findings from each report, using a Microsoft Excel sheet, and the outcomes were later compared and merged by the authors. In the end, a synthesis of the included studies was produced. The following items of the PRISMA 2020 checklist were not considered: risk of bias assessment, effect measures, synthesis methods, reporting bias assessment and certainty assessment.
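The independent screening step can be illustrated with a short sketch that lists the records on which two reviewers disagree, which are the ones a consensus meeting would need to settle. This is not the Rayyan workflow itself; the record identifiers, decision labels and function name are hypothetical and the data are invented for illustration.

```python
def find_conflicts(decisions_a: dict[str, str], decisions_b: dict[str, str]) -> list[str]:
    """Return the ids of records that both reviewers screened but judged differently."""
    shared_ids = decisions_a.keys() & decisions_b.keys()
    return sorted(rid for rid in shared_ids if decisions_a[rid] != decisions_b[rid])


# Toy decisions, invented for illustration only.
reviewer_a = {"rec-001": "include", "rec-002": "exclude", "rec-003": "include"}
reviewer_b = {"rec-001": "include", "rec-002": "include", "rec-003": "exclude"}

# Records to discuss in the consensus meeting.
conflicts = find_conflicts(reviewer_a, reviewer_b)
print(conflicts)
```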

3. Findings

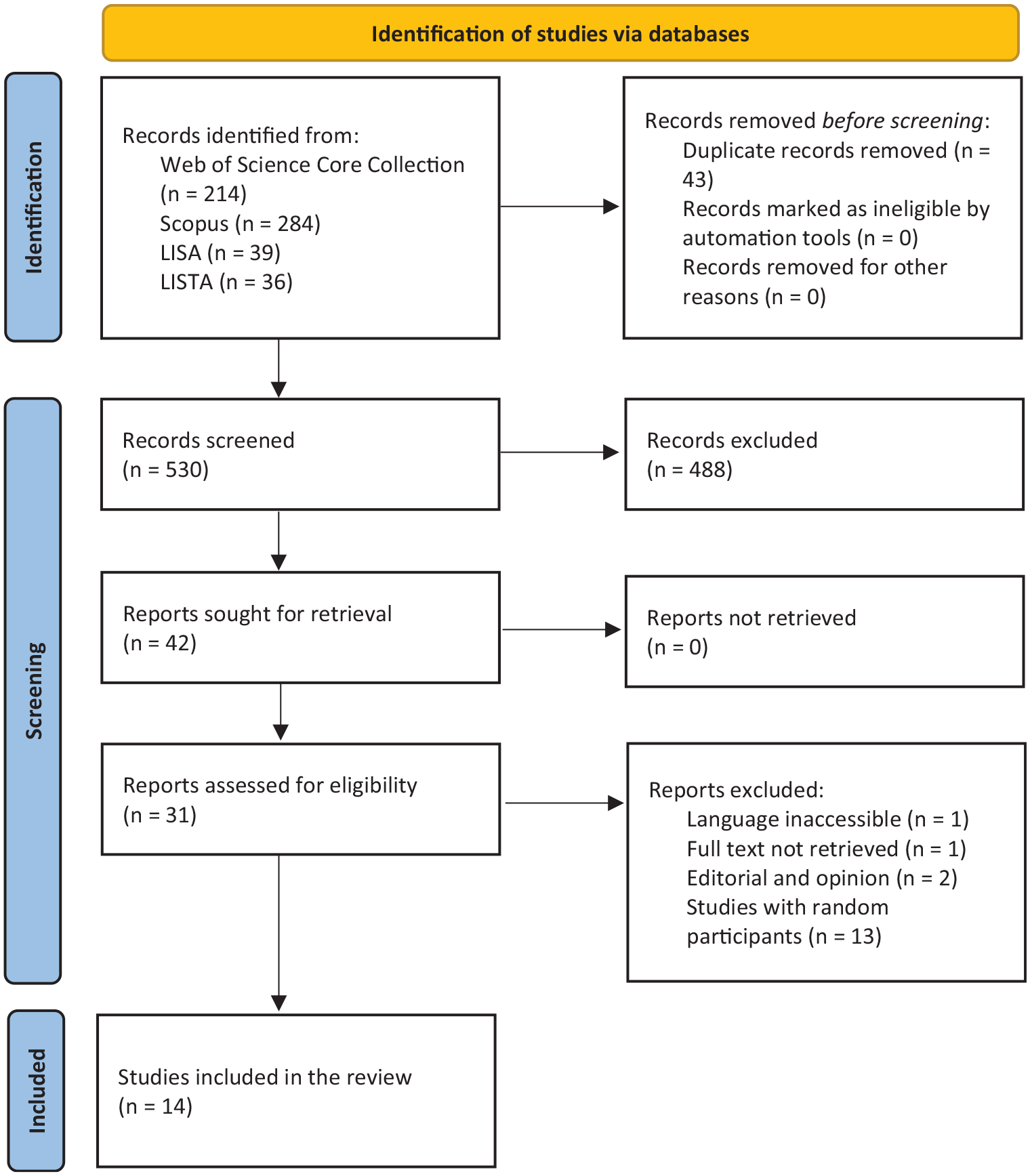

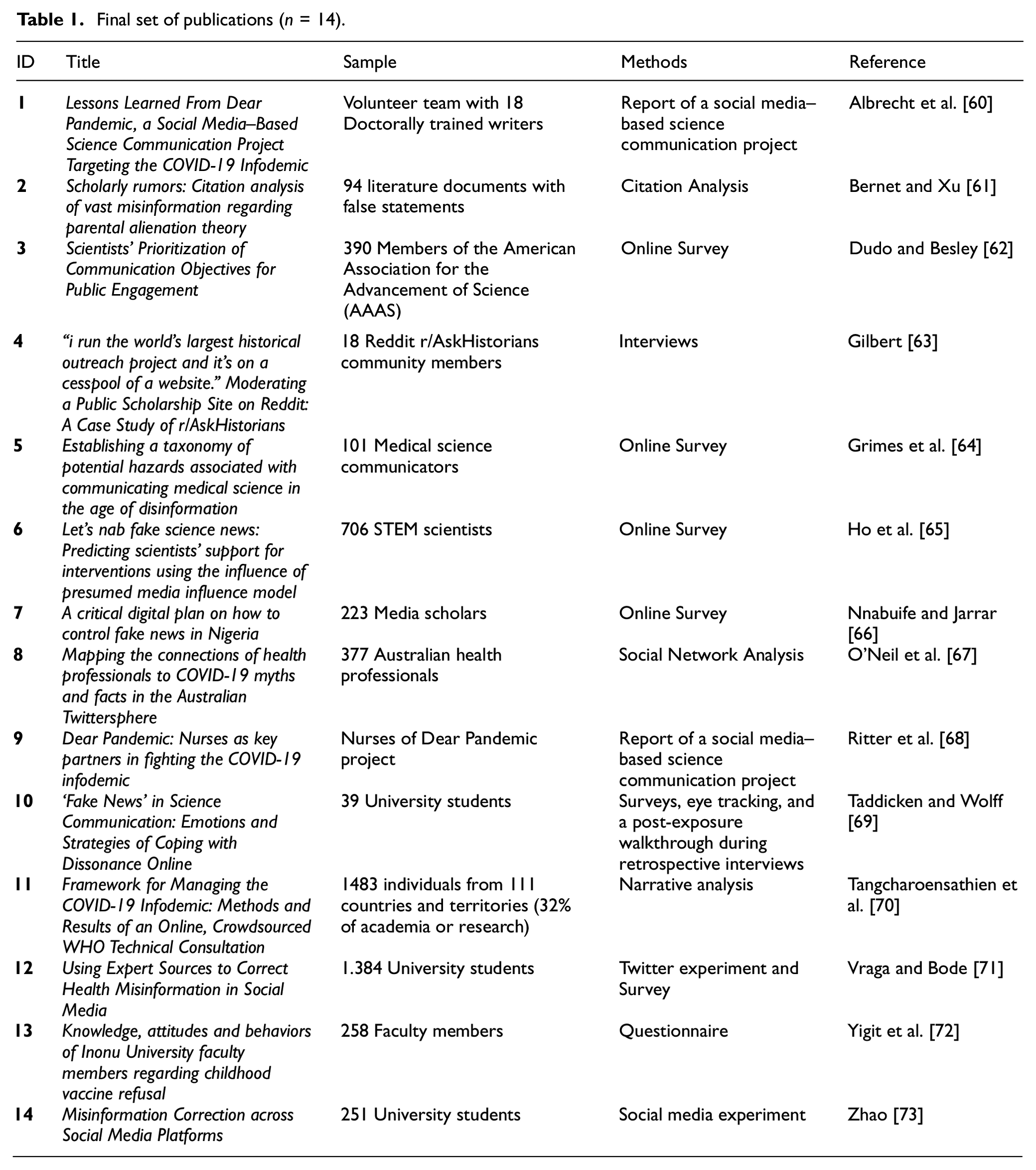

The results of the search and selection process are described below, from the number of records identified in the search to the number of studies included in the review, that is, the process leading to the final data set of 14 papers (see Figure 1). The content analysis was carried out on the data set listed in Table 1.

Figure 1. PRISMA flow diagram [58].

Table 1. Final set of publications (n = 14).

The disparity between the references originally retrieved and screened (n = 530) and the final sample (n = 14) is enormous, as it was hard to find studies, first, concerning information behaviour towards mis-disinformation, and second, with scientists as research subjects. The number of excluded papers is also related to the large spectrum of the search strings and the ambiguity of some of the words and expressions used. We also found studies that might appear to meet the inclusion criteria but which were excluded, mostly because they did not focus on scientists as their research subjects. One exception is Bernet and Xu’s [61] study, which did not focus on scientists but on scientific publications, regarded here as manifestations of behaviour. In addition, three studies focused on university students [69,71,73], and we opted to include them due to their relevance and the academic setting in which the research was performed. As Table 1 shows, survey methods are the most used in the literature. Publication dates range from 2016 to 2022.

As mentioned before, Wilson and Maceviciute [56] designed a model depicting the motives underlying the creation, acceptance and dissemination of misinformation. Grounded in the literature, the model contains the categories of motives to (1) create misinformation (mostly, ‘in-order-to’ motives, to reach a desired state, e.g. commercial gain, political power, personal gain, etc., except true belief which is a ‘because’, stemming from the person’s history); (2) accept misinformation (mostly ‘because’ motives, e.g. reduced analytical thinking, political partisanship, religious fundamentalism, delusional ideation, etc.) and (3) disseminate misinformation (again, mostly ‘because’ motives, e.g. social belonging, self-promotion, altruism, distrust, entertainment, perceived importance, reduced analytical thinking, etc.). These three stages are enriched, according to the authors’ model, with the acknowledgement of the role of ‘facilitation’ to extend the reach of ‘creation’. The facilitators (like social media platforms) also have underlying motives, primarily commercial gain. Thus, ‘facilitation’ enables ‘diffusion’, which enables ‘use’. The stage of ‘use’ may result in ‘rejection’ of misinformation or ‘acceptance’, and the latter may lead to ‘dissemination’. The scheme ends with ‘dissemination’ leading to ‘refutation’ or ‘persistence’.
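The sequence of stages described above can be read as a small directed graph. The sketch below encodes that reading and enumerates the possible trajectories from ‘creation’ to an end state. It is an illustrative simplification, not the authors’ own formalisation: the link from ‘creation’ to ‘facilitation’ is our reading of facilitation extending the reach of creation, every ‘may lead to’ is treated as a plain edge, and the names `MODEL` and `terminal_paths` are hypothetical.

```python
# Stage transitions as described in the paragraph above (a simplified reading).
MODEL = {
    "creation": ["facilitation"],
    "facilitation": ["diffusion"],
    "diffusion": ["use"],
    "use": ["rejection", "acceptance"],
    "acceptance": ["dissemination"],
    "dissemination": ["refutation", "persistence"],
}


def terminal_paths(graph: dict[str, list[str]], start: str) -> list[list[str]]:
    """List every path from `start` to a stage with no outgoing transition."""
    successors = graph.get(start, [])
    if not successors:
        return [[start]]
    paths = []
    for nxt in successors:
        for tail in terminal_paths(graph, nxt):
            paths.append([start] + tail)
    return paths


if __name__ == "__main__":
    for path in terminal_paths(MODEL, "creation"):
        print(" -> ".join(path))
```

Under these assumptions there are three trajectories, ending in ‘rejection’, ‘refutation’ or ‘persistence’.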

This model provided a framework for our content analysis in three different subsections: ‘Creation/facilitation’, ‘Use/rejection/acceptance’ and ‘Dissemination’, although some documents might fit in more than one subsection. Findings of individual studies will be presented within this framework to answer RQ1 (scientists’ behaviour) and RQ2 (tackling information disorder). The literature will be analysed focusing on the personal or collective information behaviour of scientists, although the research gap in including scientists as subjects of research projects (RQ3) concerning the development of information disorder tackling strategies already seems palpable.

3.1. Creation/facilitation

Following the framework proposed by Agarwal and Alsaeedi [54,55], which equalises information and mis-disinformation, the content analysis detected two documents concerning the same social media–based science communication project. Dear Pandemic [60,68] (currently, Those Nerdy Girls – https://thosenerdygirls.org) aims to create information to tackle misinformation, providing reliable information with a pre-emptive perspective. The scientists’ behaviour – initially infectious disease public health researchers, then extended to volunteers from other disciplines – reveals altruism and professional commitment to fact-based information creation.

The scientific expertise is used to create a science communication strategy within the same environment used by disinformation, ‘leveraging the same social media platforms where misinformation circulates’. This option makes ‘content as accessible and easily shareable as misinformation on social media’, ‘helping to flood the information ecosphere with fact-based information’, and ‘actively soliciting questions from readers (…) to track the rapidly changing currents of misinformation and provide responses that directly address myths and targeted attempts to undermine public health’ [60].

The second study about the Dear Pandemic project focuses on the role of nurses in this evidence-based communication strategy, ‘accelerating the spread of good information’ and grounded on the previous experience of the nurse–patient therapeutic relationship [68]. Using the same social media where mis-disinformation persists, vetted scientific information and conversational language are key elements of the project’s success: ‘Well-cited facts are not enough to change behavior. Instead, (…) [the team] situate facts in a low-barrier community of trust and interpersonal experience while making content easy to share within personal networks’ [68].

3.2. Use/rejection/acceptance

The COVID-19 pandemic was a pivotal moment of the mis-disinformation phenomena. During the public health crisis, an infodemic arose, causing large concern among governments and state authorities. The World Health Organization organised in April 2020 an international online consultation on how to tackle the infodemic, inviting a vast number of scientists, among other key partners. One of the main outputs of this coalition was a framework for managing the deluge of mis-disinformation [70]. Crowdsourcing strategies and the establishment of partnerships are mentioned as a strong multidisciplinary foundation for the road ahead. The knowledge translation process requires that scientists should translate knowledge ‘into actionable behavior-change messages, presented in ways that are understood by and accessible to all individuals in all parts of all societies’ [70]. Strategic partnerships ‘should be formed across all sectors, including but not limited to the social media and technology sectors, academia, and civil society’ (p. 6). The framework proposal encompasses five action areas: (1) strengthening the scanning, review and verification of evidence and information; (2) strengthening the interpretation and explanation of what is known, fact-checking statements and addressing misinformation; (3) strengthening the amplification of messages and actions from trusted actors to individuals and communities that need the information; (4) strengthening the analysis of infodemics, including analysis of information flows, monitoring the acceptance of public health interventions and analysis of factors affecting infodemics and behaviours at individual and population levels and (5) strengthening systems for infodemic management in health emergencies (see Annex 1, Tangcharoensathien et al. [70]). All the action areas rely on scientists’ contributions, for example, science communication improvements, open science adoption, debunking, health literacy promotion, etc.

Cognitive processes are key to understanding why humans reject or accept mis-disinformation. Using a sample of university students and grounded in the Theory of Cognitive Dissonance (a person’s mental discomfort that is triggered by a situation in which one is confronted with facts that contradict his or her beliefs, ideals and values), Taddicken and Wolff explored opinion-challenging disinformation and coping with the state of dissonance. Findings revealed coping strategies that people use to overcome cognitive dissonance when confronted with information on climate change. Some strategies include re-reading known information to confirm existing beliefs or seeking out information to refute disinformation claims. Surprisingly, for a brief period, some individuals decreased their awareness of climate change, with two different patterns emerging: frustration due to a lack of media literacy or a fascination with alternative worldviews. In the end, ‘individuals felt relieved and satisfied after being able to dissolve their dissonant state and negative arousal’, as ‘unfinished coping processes might be an explanation for disenchantment with the media as well as with scientific elites’ [69]. While studies about confirmation bias normally deal with how disinformation confirms one’s established views and opinions, the originality of this study is to investigate opinion-challenging disinformation in an academic setting.

Mis-disinformation is pervasive in online social media, appearing randomly and unexpectedly. A US experiment with university students found that online debunking and correction are more likely to be effective when a reputable source (like CDC) intervenes, rather than an anonymous user [71]. The credibility of the expert intervention was not harmed, although renowned institutions should improve dialogical science communication. Another US study found positive results for corrective messages when this technique was performed across different social media platforms, suggesting ‘the persuasive power of cross-platform exposure’ and that ‘practitioners can also benefit from this study by conducting social media campaigns with multiple platforms’ [73].

The intervention of specialists in a crowdsourced and dialogical environment seems to be an effective strategy against mis-disinformation proliferation. Considering online communities, moderation work was the focus of Gilbert’s article on a public scholarship platform about history. Reddit is based on a Q&A model; thus, the moderators’ role is critical to regulating mis-disinformation introduced by web users to ‘create and enforce rules and model normative behavior’. This moderation is visible, for example, when moderators answer members’ questions, which humanises the subjects and the process itself. They contribute to the use of accurate information (demanding sources) and the rejection of inaccurate or offensive content, ‘first, by removing questions and comments that promote hateful rhetoric, and second, by providing explanations and feedback to users who are asking questions in good faith’. Her study revealed that ‘visible moderation is often interpreted as censorship’; however, the r/AskHistorians Reddit community is seen ‘as a model for combating misinformation by building trust in academic processes’ [63].

A Singapore-based study analysed the presumption of media influence in establishing the linkages between scientists’ attention to fake science news and their intentions to address the issue, arguing that ‘scientists who perceive that fake science news would inflict harm may aim to limit it’ [65] and that ‘tackling fake science news is an altruistic behaviour aimed at reducing harm’ (p. 916). Findings show that scientists’ awareness of fake news and presumed harm to other scientists is greater than presumed harm to the public. To counter the phenomenon, ‘scientists showed more support towards educational efforts than the establishment of legislations’ (p. 920), as ‘setting up educational programmes for the public to learn about fake science news’ might be ‘a more productive strategy to curb the spread of fake science news’ (p. 921). This study highlights the importance of scientists in the global effort of tackling fake news and reveals the collaborative and educational dimensions activated by their ‘personal norms’, a moral obligation to adopt a behaviour after the awareness of consequences and the responsibility arousal, for example, altruistic behaviours.

The global dimension of mis-disinformation encompasses African countries, like Nigeria. Media scholars from the African Council for Communication Education were studied by Nnabuife and Jarrar [66]. Focusing on personal behaviour, findings suggest that human intervention in the use and rejection of fake news should be increased. Social media users should take responsibility for what they read, believe and share on social media platforms. The study also calls for social media organisations to improve regulation and prevent the generation of conflicts online. Furthermore, it suggests the creation of an International Fact Checking Network (IFCN) to monitor social media users’ posts and impose sanctions on organisations and bloggers who violate the regulations. It also recommends the organisation of seminars and workshops to educate people on detecting and avoiding fake news, as well as the implementation of a digital identification code for all social media account owners. Finally, the study proposes that social media owners should hire human editors to fact-check the contents of posts, which seems difficult to scale up and implement realistically.

Scholars’ vulnerability towards mis-disinformation was a topic poorly studied in our sample. A single example was found in a Turkish study about vaccine hesitancy and refusal among faculty members. Findings showed that almost one-third of the faculty members were hesitant about childhood vaccinations and that hesitancy was higher among social sciences faculty members than among their health sciences colleagues. Concerning their own children, almost all reported a complete vaccination status; however, nearly half of the faculty members stated that they had not read any scientific publications on vaccines. The media and the internet were sources that gave more weight to personal opinions, often not based on scientific knowledge, compared to scientific studies proving the safety and efficacy of vaccines [72].

The mis-disinformation wave might easily penetrate the academic setting when one is uninformed about the issues at stake or when it relates to other expertise areas than our own: ‘the hesitancy status of faculty members working in the field of health sciences and faculty members whose sources of information were scientific articles, school education and family doctors was lower’ [72].

3.3. Dissemination

Dissemination is strongly related to science communication and public engagement in science. Scientists have several communication objectives, such as ‘(1) informing (i.e. educating) others about science (2) exciting others about science, (3) ensuring others see scientists as trustworthy, (4) framing or shaping messages to resonate with people’s existing views and (5) defending science from perceived misinformation’ [62]. Educating the public and defending science were the most prioritised objectives in Dudo and Besley’s [62] study, although scientists might ‘engage in aggressive public communication aimed at individuals who reject the science’, leading to negative reactions by the public.

Strong positions taken by authorities or experts towards misinformation might have an undesired backfire effect. This is confirmed through a behaviour taxonomy of the adversarial reactions in the face of health communication. Negative experiences strongly affect the public outreach activity of science communicators. An international sample of health professionals revealed a range of abusive behaviours and negative repercussions of their work, including discrediting attempts, misrepresentation of scientific evidence/expertise, dubious amplification of pseudoscientific narratives, malicious complaints/abuse of regulatory frameworks and interpersonal harassment, like intimidation [64].

In the same line of inquiry, an Australian study related the COVID-19 mythical and factual hashtags with health professionals and researchers who engaged with COVID-19 tweets, finding that they ‘are much more engaged in the diffusion of evidence-based information and scientific facts than medical myths’, distinguishing ‘between a small minority of actors who endorsed COVID-19 myths, and the overwhelming majority who rebutted these myths’ [67]. However, the authors point out that to debunk conspiracy theories effectively, it is important to understand that people’s willingness to accept contradictory information matters more than just exposing them to it. People who believe in conspiracy theories may have a varied news diet, but effective debunking requires distinguishing between ‘echo chambers’ and ‘epistemic bubbles’. In ‘epistemic bubbles’, people can re-evaluate their beliefs if refuted, while in ‘echo chambers’, people trust one authority and dismiss anything that contradicts it as fake news. Scientists and health professionals are not exclusively responsible for debunking, but they are important voices and influencers in the social media landscape during a public crisis, a role that requires further research.

Dissemination of misinformation may lead to refutation or persistence, as Wilson and Maceviciute [56] pointed out. Through citation analysis, Bernet and Xu studied the persistence of a particular set of misinformation (regarding parental alienation (PA) theory) in scientific literature between 1994 and 2022. They found a systemic flaw in the literature (variations of the statement: ‘PA theory assumes that the favoured parent has caused PA in the child simply because the child refuses to have a relationship with the rejected parent, without identifying or proving alienating behaviours by the favoured parent’.) with three plausible explanations: the first pertains to the psychological mindset of the authors and presenters (i.e. confirmation bias); the second pertains to the authors’ writing skills (e.g. sloppy research practices, such as persistent use of secondary sources rather than original or primary sources for their information); and the third possible explanation for the epidemic of PA [parental alienation] misinformation is the adoption of typical cognitive processes within PA families by evaluators and attorneys and other individuals in their social network [61].

4. Discussion

Providing a general interpretation of the results, it seems clear that scientists, as research subjects, are still poorly studied. The few studies analysed reveal a research gap concerning this social and professional group. The mis-disinformation phenomena require more empirical research to understand the fragilities and strengths of scientists’ behaviour and their role in designing effective strategies to counteract the information disorder.

Concerning the ‘Creation/facilitation’ category, findings revealed a preventive perspective adopted by the scientists, also as a science communication strategy, confirming previous studies [19–22]. This category was the least used in our analysis. Little is known about scientists as creators of information specifically designed to tackle mis-disinformation or even about scientists as creators of mis-disinformation. We excluded here the reactive behaviour of the production of misinformation rebuttals or debunking, the most common and relevant scientists’ information practices, as we have considered it part of the ‘rejection’ perspective, or the intention to help others reject mis-disinformation.

The ‘Use/rejection/acceptance’ category showed the importance of the collective effort: collaboration, crowdsourcing, partnerships and dialogical actions, all highlighting the relevance of multidisciplinary approaches [34]. This cooperative attitude is essential to guide the scientists’ behaviour and the only viable way to overcome such challenges. Combined efforts and the acknowledgement that scientists alone are unable to overcome mis-disinformation confirm previous proposals [14]. Cognitive dissonance, and other cognitive processes, were studied in our sample, but the diversity of these psychological lines of inquiry remains open to further exploration. Psychology, together with Information Science and Social Studies of Science, and other fields’ contributions, are vital to understanding the complexity of these phenomena. Findings also suggest that debunking mis-disinformation by reputable sources and across different social media platforms could be effective [16]. Likewise, the intervention of specialists is often a significant sign of altruistic behaviour for the benefit of society, strengthening the social role of science and the public trust [12]. Findings additionally stress the importance of the educational dimension, as scientists should be more involved in science literacy activities [24]. On the other hand, the need for regulation or legislation against mis-disinformation should also be addressed, with scientists as relevant stakeholders and consultants.

Considering the ‘Dissemination’ category, our findings showed scientists’ commitment to educating the public and defending science. To do so, strong or aggressive positions should be avoided, as negative repercussions may discourage scientists from persisting in their public role. This is an inside-out perspective, claiming the social position of scientists and their responsibility towards society. In this line of inquiry, scientists must contribute to penetrating the mentioned ‘epistemic bubbles’ and ‘echo chambers’ [67], being the voices and influencers of a general debunking strategy. Findings also revealed inside perspectives on the science ethos, such as the systemic flaws of scholarly literature, already mentioned by Renstrom [41].

The limitations of the review processes are multiple. First, the inclusion and exclusion criteria led to a reduced final set of publications, thus some articles may have been missed because of those criteria. Second, some relevant articles may have been missed through the search terms used, possibly leading to flaws in the literature extraction. Third, the content analysis is limited to the final sample, probably not embracing the complexity of the mis-disinformation phenomena regarding scientists’ behaviour.

There are two main implications of the results for future research. On the one hand, research on this topic should be developed with multidisciplinary teams, combining knowledge of different areas of study. This is not a one-dimensional issue, and the convergence of different skills is mandatory. On the other hand, considering scientists as research subjects is essential to understand their specific behaviour towards mis-disinformation and to engage this group in the development of tackling strategies.

It should also be noted that Wilson and Maceviciute’s [56] model regards the underlying motives for the creation, acceptance and dissemination of misinformation and was not specifically designed to frame scientists’ information behaviour or misbehaviour. As the authors state, they ‘do not suggest that the model includes all possible motivations and an analysis of a larger body of documents would undoubtedly reveal more’ (p. 498). Using this model to organise the systematic review presented in this study made it apparent that the model is based on the scheme of Shannon and Weaver’s mathematical theory of information/communication. This train of thought raises the question of whether this categorisation is sufficient for future research concerning scientists’ behaviour and the tackling of mis-disinformation, and calls for further studies that allow the specification of categories for this (these) subject(s).

5. Conclusion

Providing a systematic literature review, this work aimed to understand how scientists behave towards mis-disinformation and how they can contribute to the development of strategies to counter it. However, the answer to the research questions was strongly conditioned by the size of the final set of publications.

How are scientists coping with misinformation and disinformation? Are they vulnerable to information disorder like everyone else? Or are they able and more prepared to discern and overcome the mis-disinformation pitfalls? Are they ready or interested in elaborating or cooperating in overall strategies to tackle these phenomena? There is not yet enough evidence available to answer these questions. It is not clear how academic preparation influences the scientists’ behaviour towards mis-disinformation or even the personal behaviour of this professional group in their private life. We still do not have a clear perception of their strengths and fragilities, and it would be relevant to acknowledge both. For instance, the tendency to underestimate the impact of misinformation or to overlook the complexity of the misinformation phenomena are issues far from being disclosed in the literature.

To properly answer RQ1 (What are the main approaches described in the literature concerning scientists’ behaviour towards misinformation and disinformation?), we used a model depicting the motives underlying the creation, acceptance and dissemination of misinformation [56], namely its three main stages, which provided a framework for our content analysis. This model proved to be useful to the task, as it encompasses the dynamics of information behaviour and misbehaviour. Crossing over these stages, our analysis also advanced three standing points to analyse scientists’ positions towards mis-disinformation: inside, inside-out and outside-in perspectives. The stage ‘Creation/facilitation’ was the least present in our sample, but ‘Use/rejection/acceptance’ and ‘Dissemination’ were depicted in the literature retrieved. Most of the literature approaches were about inside-out perspectives, meaning that the topic is mainly studied concerning communication and public engagement issues. Little is known about how scientists are coping with mis-disinformation in their private life. The domain of information behaviour concerning mis-disinformation also seems poorly understood.

Regarding RQ2 (Which techniques or strategies are discussed to tackle information disorder?), our findings suggest that preventive and reactive strategies are simultaneously used, confirming previous studies. A strong appeal to a multidisciplinary effort against mis-disinformation, combining different areas of knowledge, is widely present in the literature.

Finally, regarding RQ3 (Is there a research gap in including scientists as subjects of research projects concerning the development of information disorder tackling strategies?), the application of these criteria (including literature that empirically studied scientists and excluding other research subjects) led to a small final sample, which might indicate the need for further research and the existence of a large research gap.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship and/or publication of this article.