Abstract

The competitive intelligence (CI) construct must be scientifically defined, characterised, empirically validated and accurately measured to grow in science and business. This study aims at elevating the accuracy of the empirical validation of the CI construct suggested and confirmed by Madureira, Popovic and Castelli to serve as the scientific foundation for CI praxis. This construct is selected due to its unmatched recency, thoroughness and universality, and to the identified limitations of its empirical validation. We relied on a multistrand, fully sequential design with equivalent-status qualitative and quantitative mixed methods, followed by the triangulation of the findings and the development of the meta-inferences. Validity, reliability and applicability were tested using computer-aided text analysis and artificial intelligence methods based on 61 in-depth interviews with CI subject matter experts. Contributions to knowledge advancement and relevance to practice derive from the scientific-grade empirical construct validation, providing undisputed levels of accuracy, consistency, applicability, and triangulation of results. This study highlights three critical implications. First, the delimitation of the body of knowledge and recognition of the CI domain serve as the baseline for theory development. Second, the validated construct guarantees reproducibility, replicability and generalisability, laying the foundations for establishing CI science, practice and education. Third, creating a common language and shared understanding will drive the much-claimed definitional consensus. This study thus stands as a foundational pillar in supporting CI praxis in improving decision-making quality and the performance of organisations.

1. Introduction

In a breakneck-paced VUCA World [1], organisations increasingly struggle to sustain their performance. The decreasing average company lifespan on the Standard & Poor’s 500 Index from 1965 to 2030 provides such evidence [2,3]. In parallel, the PwC 22nd Annual Global CEO Survey [4] identified a critical gap between the data needed to make decisions about the long-term success and durability of the businesses and the ‘comprehensiveness’ of data received on essential business measures. The importance of this data gap is twofold. First, it might lead executives to think that Big Data volume alone is the solution to better decision-making. However, according to Cappa et al. [5], this could not be further from the truth. The promise of Big Data rests on ‘understanding how the volume, variety, and veracity dimensions function to ensure it is a valuable resource for a firm’. Second, the gap has not reduced between 2009 and 2019 [4] despite the extensive research on data analytics, business analytics and big data analytics [6–10]. As such, the current zeitgeist overwhelmingly calls for a new approach. E.O. Wilson [11] best summarises the situation, stating, ‘… we are drowning in information, while starving for wisdom. The world henceforth will be run by synthesisers, people able to put together the right information at the right time, think critically about it, and make important choices wisely’. Organisations must act wisely on intelligence rather than data, information or knowledge [12–19]. The latter have increasingly lost prominence to intelligence in sustaining organisational performance over the last few decades [20]. Prescott, Juhari and Stephens, and Marcial [20–22] synthesised the emergence of competitive intelligence (CI) as the kingpin to unlock Wilson’s timely and wise decision-making and Cappa et al.’s business value within a resource-based view theory of the firm [23–26].
But what exactly is CI, what does it entail and what are the recognised guiding principles for advancing its practice and theory?

The definition of the CI construct [27] should specify the conceptual underpinnings guiding its praxis – the practice of skill, art and science [28]. Despite scholars and practitioners having extensively defined CI for over a century, the lack of consensus led to the absence of a body of knowledge (CI BoK). The resulting void compromised the effectuation of the CI discipline, curricula and profession. Specifically, this inherent fuzziness impedes the translation into effective information management systems supporting CI capabilities, functions and programmes, negatively impacting decision-making and organisational performance. As a result, the practical, scholarly, educational, policy development and societal impact of CI have been considerably hindered [29]. Therefore, researching the empirical validity and application of a unified view of CI is a priority due to the severe impact on practitioners, researchers, executives, decision-makers, educators, students and policymakers.

Prior research addressing this issue is limited to a single scientific and empirically validated definition of CI. Madureira et al. defined CI as ‘the process and forward-looking practices used in producing knowledge on the competitive environment to improve organisational performance’ [30,31]. However, although trailblazing, the quality of this empirical evidence has some self-identified limitations [31]: first, the set of 20 in-depth interviews is below the recommended sample relevance threshold of above 30 [32]; second, the sample is potentially incomplete in thoroughly addressing all stakeholder cohorts and skewed regarding its geographical scope; and third, there is no triangulation of the results. Therefore, improving the validity, reliability and applicability of the CI unified view [30] is a critical research gap of the utmost importance for grounding future theory, practice and education.

This study aims to fill this gap through a conclusive empirical validation and triangulation of the CI construct proposed by Madureira et al. [31]. Our hypothesis is that a highly accurate scientific-grade empirical validation is necessary for delimiting the CI domain and BoK. Such consensus allows for creating a shared understanding and common language that will drive adoption and knowledge advancement in research, teaching and business. Therefore, the findings are expected to advance the theory by providing a solid scientific base for knowledge advancement and fostering future research and curriculum development for CI education. Business-wise, we foresee the establishment of the CI profession, function and programmes to improve decision-making quality and organisational performance. The findings advance CI science, education, business and society.

In researching this argument, this article starts with a theoretical framing, followed by a thorough description of the methodology, data improvements and methods used. Then, we share the results and their detailed interpretation. In the following section, we develop and discuss the meta-inferences, namely, their impact on the five validated dimensions of the CI construct and the knowledge advancement and relevance to practice framed by the four sets [33]. Finally, this article points out the limitations and future research paths.

2. Theoretical framing

2.1. CI construct

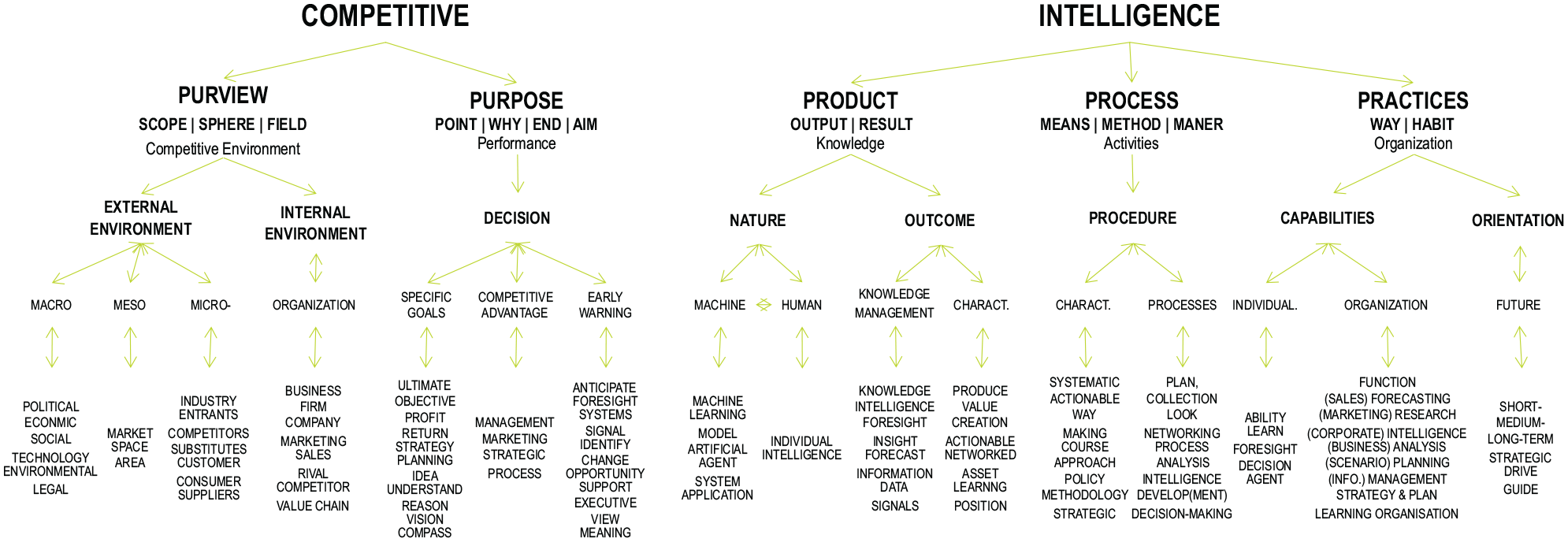

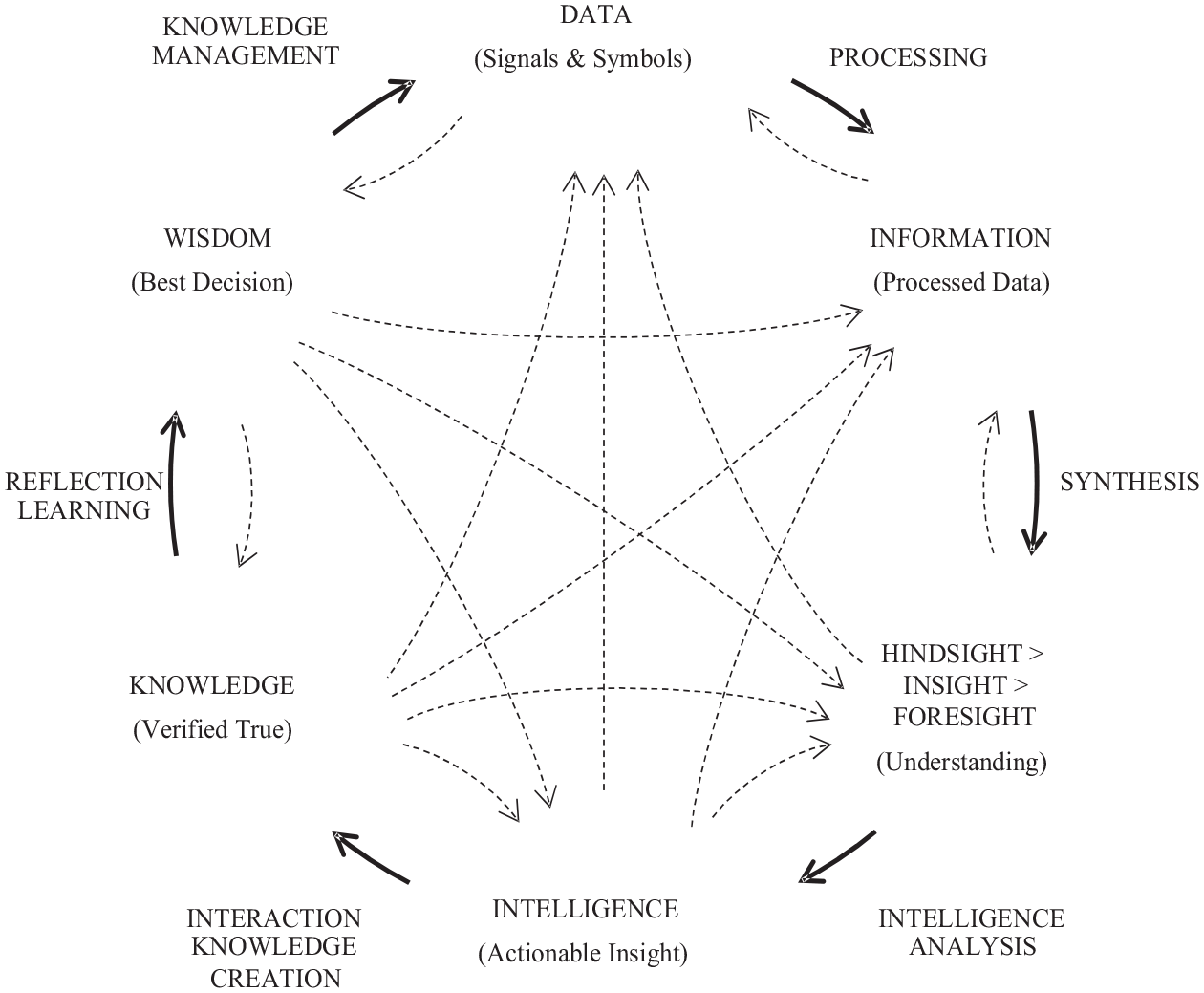

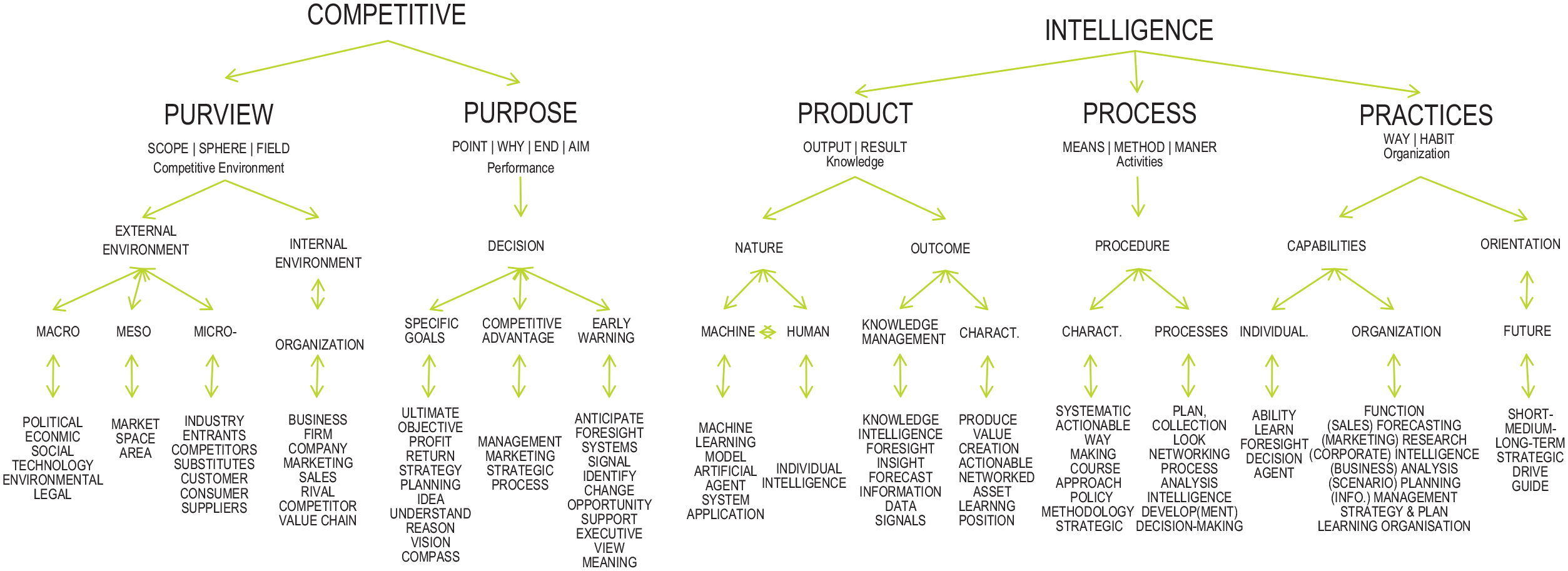

The CI construct has been defined in multiple ways to address specific contexts, resulting in a patchwork of different perspectives [30,34]. Madureira et al. [30] identified over 800 definitions. Since then, in exactly 2 years, we identified 26 new CI-termed definitions originating in various types of literature and fields of study: CI [35–44], Technology [45], Big Data Analytics [9], Entrepreneurship [46], National Intelligence [47], Library and Information Science [48], Economics and Finance [49,50] and Social Media [51]. The myriad of narrow-scope definitions provides incomplete and overlapping perspectives of the CI construct across its five dimensions – the 5Ps [30]. Considering the product dimension as an example (Figure 1), the definitional spectrum ranges from data (e.g. Big Data [5–7,52], Data Science [53,54], Market Research [55,56]), to information (e.g. Big Data Analytics [8,9,57,58], Information Science [10,16,17,59]), to insight (e.g. Competitive Insight [60,61], Futures & Foresight Science [62–64]), to intelligence (e.g. Decision Intelligence [65–67], BI [68,69], artificial intelligence (AI) [70,71], machine learning (ML) [72,73], generative artificial intelligence (GAI) [74,75]), to knowledge (e.g. Knowledge Management [76,77]) and wisdom [16,18,78,79].

Figure 1. CI product lifecycle [19].

The CI construct is the interdisciplinary glue, as is evident in the unified view and modular definition [30] (cf. Figure 6). As such, it leverages the exponential access to Big Data [80], building on top of the latest research from Data, Computer and Information Sciences, related technologies (e.g. AI, ML, 5G, Internet of Things (IoT)) and the humanities. This integrative role amplifies the latest developments in disciplines such as Big Data and Business Analytics or Generative Artificial Intelligence to develop actionable insights (Intelligence). This actionability differentiates CI from related fields, supporting superior decision-making and strategising. Consequently, CI allows organisations to successfully navigate the constantly changing competitive environment in becoming learning organisations. Therefore, CI plays an intermediation role between economic development and its factors [40].

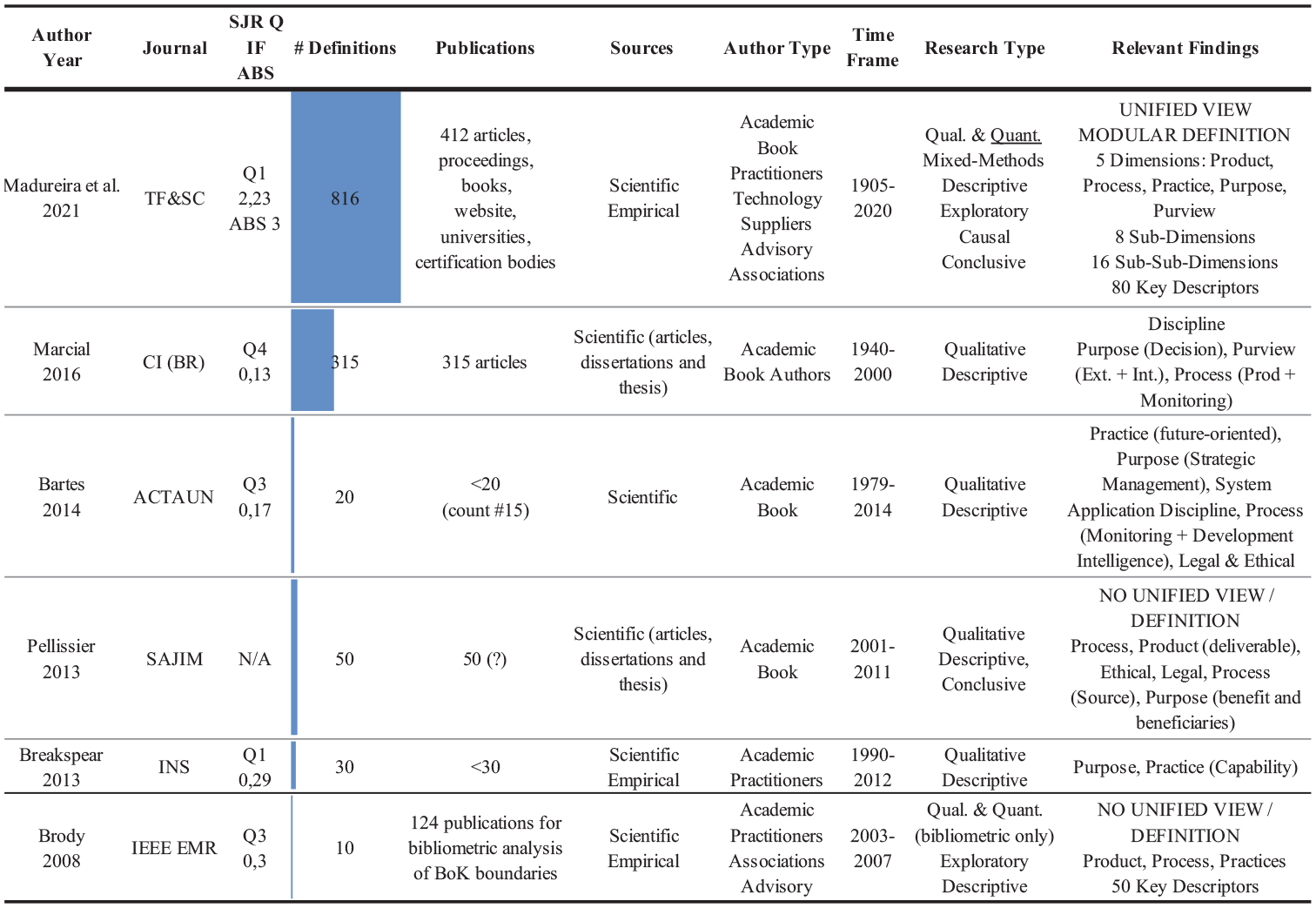

However, the lack of consensus on the CI construct scatters these relevant contributions across related research fields beyond Bradford’s Law [81], hindering its practice and theory advancement. Identifying this conceptual ambiguity led several authors from different disciplines to call for the development of a consensual definition of CI [34,37,47–49,82]. To the present day, there have been only six attempts to develop a unified view of the CI construct [22,30,83–86]. Among these, Madureira et al. [30] offer the greatest recency, thoroughness (dimensions, sub-dimensions and descriptors) and breadth of scope (816 definitions; fields of knowledge; business, science and education worlds; academic and practitioner cohorts). Moreover, the proposed modular definition is the least subjective, with the CI-defining dimensions deriving from a quantitative mathematical method (Figure 2). Therefore, the chosen definition of CI for empirical validation is ‘the process and forward-looking practices used in producing knowledge on the competitive environment to improve organisational performance’ [30].

Figure 2. Overview of universal CI definitions characteristics.

2.2. CI construct empirical validation

The critical challenges of CI theory construction are accuracy (validity), consistency (reliability) and relevance to practice (applicability) [87,88]. Overall, construct validity – the ‘approximate truth of the conclusion or inference that the operationalisation accurately reflects its construct’ [89] – ‘is one of the most significant advances of modern measurement theory and practice’ [90]. However, prior research related to the CI construct empirical validation is limited to a single effort by Madureira et al. with self-identified shortcomings [31]. Notably, the sample size of subject matter experts in in-depth interviews is below the recognised threshold for scientific relevance [32]. This fact speaks to the representativeness of the different stakeholder cohorts, geographic scope and construct accuracy. As a result, the overall construct validity and subsequent adoption are at risk. Therefore, increasing the CI construct empirical validation accuracy and demonstrating its relevance to practice is paramount.

3. Materials and methods

We assume that reality is continuously interpreted hence subscribing to subjectivism. This ontological perspective aligns with the multitude of CI interpretations deriving from the need to define its practice across time and space. Epistemologically, this study uses pragmatism [91] to determine the concepts and methods; therefore, the approach used is the one that best solves the research problem. The reasoning used to connect empirical data and theory logically is abductive [92,93]. Axiology-wise, the authors intend to develop an unbiased perspective despite the intangibility of the CI construct. This approach recognises that understandings and perceptions filter the actual reality. Regardless, objective facts are equally important since these understandings and perceptions can be quantised through quantitative methods such as natural language processing (NLP). Observations translate into theories, followed by their assessment through action. The inferences result from the triangulation of the results from different qualitative and quantitative theoretical and data approaches [93,94].

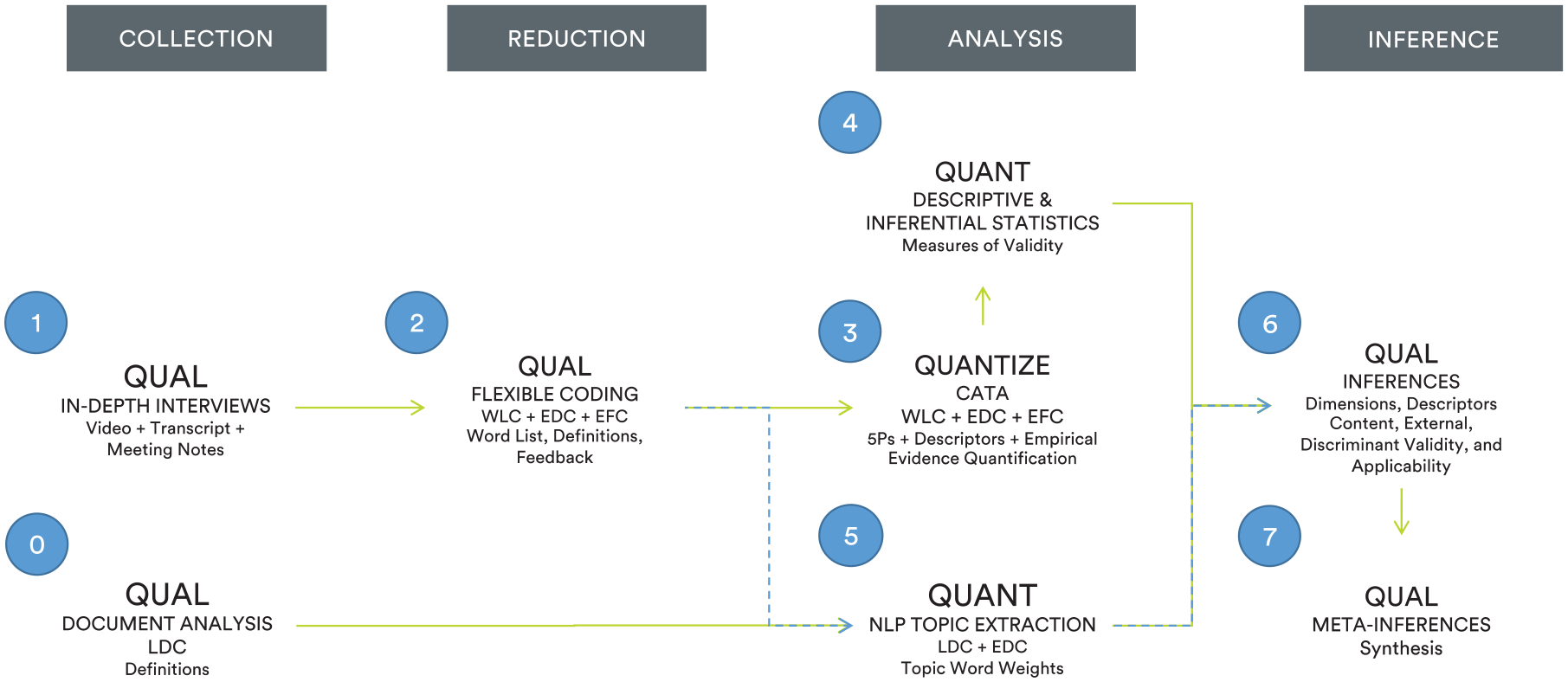

The investigation confirms the CI construct and explores its impact in practice. Based on a multiple-strand design with fully sequential, equivalent-status quantitative and qualitative mixed methods [91], we chose content analysis to apprehend and assess the CI latent construct. This systematic, objective, quantitative research method reliably classifies and categorises communication features from linguistic content [95]. In addition, the study follows two main computational intelligence methods for CI construct validation. Primarily, we used Short et al.’s [96] methodology for computer-aided text analysis (CATA). This method guarantees appropriate psychometric measurement by combining conceptual and empirical methods. Secondarily, the triangulation method follows the same procedure Madureira et al. [30] used in developing the original CI construct. This quantitative analysis uses NLP and topic extraction to identify the dimensions and descriptors that experts addressed in their in-depth interviews, which allows for assessing the CI construct reliability. Finally, qualitative meta-inferences are derived by integrating the results from the validation procedure with the empirical expertise shared by experts. A graphical overview of the overall methodology is shown in Figure 3.

Figure 3. Study research design: methodological and data sets overview.

3.1. Data collection

We started by sourcing the original Literature Definition Corpus (LDC N = 816) from Madureira et al. [30]. This rich data set was pivotal for testing construct reliability and validity, especially its dimensionality.

The data collection specific to this study produced 61 interviews, unparalleled in previous CI studies regarding the number and profile of participants, doubling the recommended threshold. We used in-depth interviews for greater data depth, discovery, value and speed [97]. Moreover, this approach enables us to factor in the practical expertise pivotal for evaluating the CI construct’s application in practice [88]. We used Ritchie’s [98] method, translating into the following steps: sampling strategy definition, interview protocol development, interviewing and data analysis.

3.1.1. Sample selection and protocol

A considerable effort was made to obtain a large sample to guarantee the unimpeachable accuracy of the results and inferences. A purposive and concurrent sampling strategy supported the data collection. The main objective was to secure the representativeness of the participants in recognition of their thought leadership, application in practice and cohort archetype. Experts’ profiles vary in demography, education, training, title, profession, experience and organisational levels in CI. The study covers all cohorts, namely, CI producers and users, as identified by Madureira et al. [31]. The selected sample followed several criteria: knowledge and awareness of the CI theory and practice, recognised thought leadership (e.g. Competitive Intelligence Fellowship), coverage of the different contexts (e.g. industries, academia, consulting) and type of expert (e.g. consultants, scholars, executives). Given the sample selection criteria, most notably the multidisciplinary nature of CI, the sample attributes are heterogeneous, representing different cohorts: Consultants, Scholars, Authors, Educators, Thought Leaders, Keynote Speakers, Community leaders, CI Technology Platform professionals, Practitioners, CI or Intelligence Professionals (CIP) and Business Executives. The experts cover the five continents and are identically distributed across the different cohorts (Table 1).

Table 1. List of subject matter experts, their country of activity and respective cohorts.

CIP: Intelligence Professional

The overall process took a full year ending in February 2022. All interviews were online, synchronous, relying on video communication tools, over approximately 1 h, and uploaded into ATLAS.ti 8.4.5 for content analysis. The experts agreed to be recorded and named as contributors but not cited verbatim. The semi-structured questions followed the protocol order, but we were flexible to achieve a better conversational flow [99]. The interviewer started by establishing rapport allowing for unbiased and richer feedback [100]. Next, the interviewer probed experts about what they consider to be CI today, followed by a working definition and its key dimensions. Next, the experts shared their feedback on the definition, dimensions and descriptors proposed by Madureira et al. [30]. Finally, time allowing, experts were asked to comment on the contributions and foreseen impacts of this study. Clarification was requested for further meaning, gap-filling or allowing for unrestricted feedback [101].

3.2. Data reduction: CATA and flexible coding

Data reduction is of pivotal importance to qualitative analysis [102] and, even more so, to enabling the subsequent quantitative strand of this study. We chose a CATA methodology based on deductive and factual coding – using actual and confirmative feedback from experts. We also used the flexible coding method as it ‘supports rigorous, transparent and flexible analysis’, perfectly fitting both the source (in-depth interviews) and the tool (qualitative data analysis software) of this research, according to Deterding and Waters [32]. This coding type contributes to the confirmatory pursuit of this study while mitigating potential issues of subjectivity and inter-rater reliability. In essence, this approach quantised the in-depth expert interviews, considerably reducing the content through automated word frequency computation. We considered the word as the unit of analysis for quantising the transcripts and applied the methodology in two ways: to convert the terms from the visual abstract of Madureira et al. [30] into the coding framework, and to statistically summarise the analytic codes present in the CI construct definitions and feedback shared by experts [103]. The overall process produced three additional data sets, for a total of four. First, the ‘dimension word lists’ data set (DWLs, N = 5) was imported from Madureira et al. [31] to benchmark the similarity between the experts’ interview transcripts and the construct dimensions word lists. Second, the ‘experts feedback corpus’ (EFC, N = 61) is the list of quantised terms that confirms the proposed CI construct per dimension. The final data set is the ‘experts definition corpus’ (EDC, N = 61), an individualised expert list of CI working definitions.
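The quantising step can be illustrated with a minimal sketch. It assumes that each dimension word list is a plain set of lower-case terms and that transcripts are tokenised on alphabetic characters. The word lists, the sample sentence and the function name `quantise` are hypothetical placeholders, not the DWLs, transcripts or software routine used in this study.

```python
import re
from collections import Counter

# Hypothetical, heavily shortened word lists; the actual DWLs are not reproduced here.
DIMENSION_WORD_LISTS = {
    "process": {"process", "collect", "analyse"},
    "practice": {"practice", "foresight", "anticipate"},
    "product": {"knowledge", "insight", "intelligence"},
    "purview": {"environment", "competitor", "market"},
    "purpose": {"performance", "decision", "advantage"},
}

def quantise(text, word_lists=DIMENSION_WORD_LISTS):
    """Count, per dimension, how many tokens of `text` appear in that dimension's word list."""
    tokens = re.findall(r"[a-z]+", text.lower())
    counts = Counter()
    for dimension, words in word_lists.items():
        counts[dimension] = sum(1 for token in tokens if token in words)
    return dict(counts)

sample = ("CI is the process of producing knowledge on the market "
          "environment to improve performance.")
print(quantise(sample))
```

Counting exact token matches keeps the sketch transparent; a fuller implementation would probably also need stemming or lemmatisation so that inflected forms map onto the same code.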

3.3. Data analysis: empirical construct validation

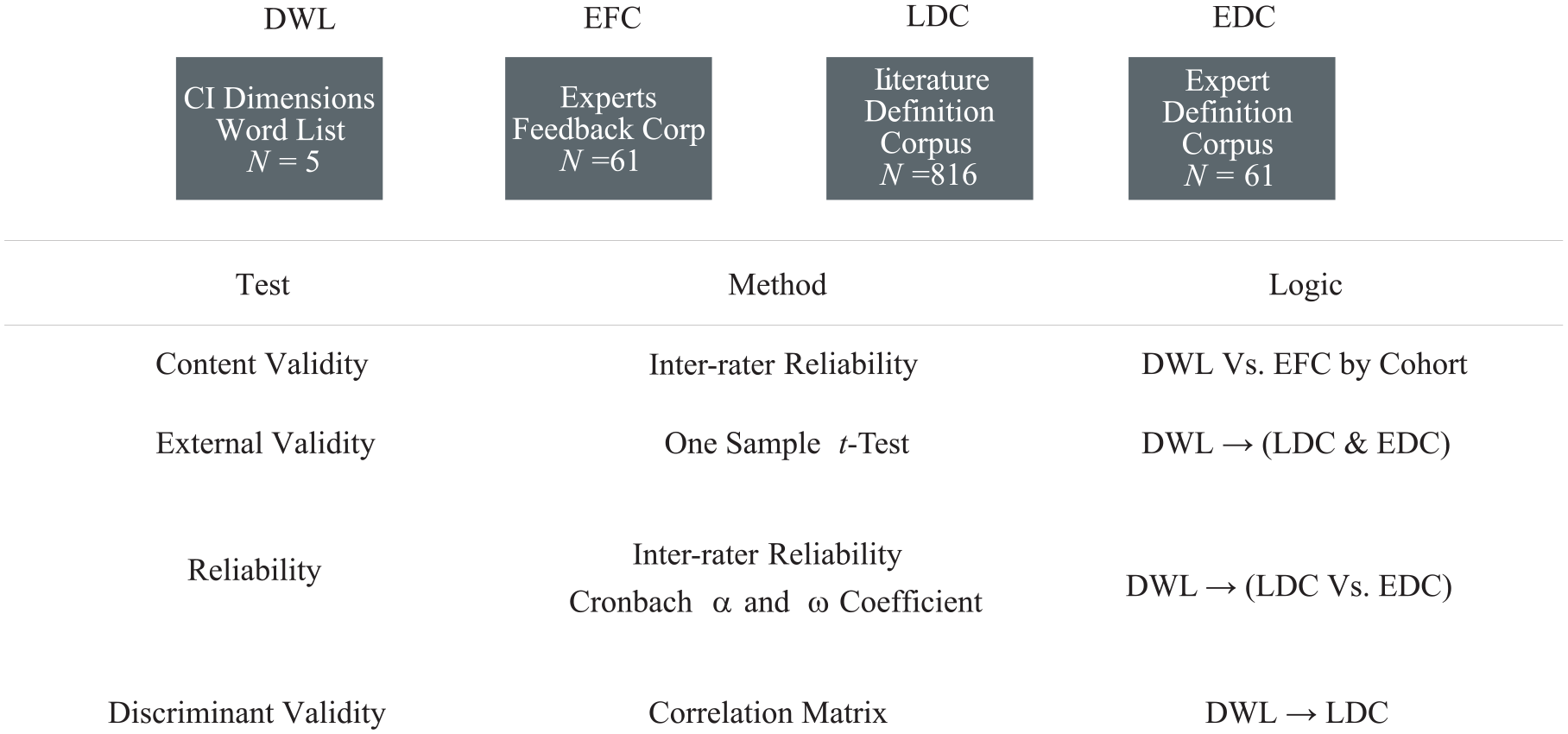

This section focuses on the validity, reliability and applicability of the CI construct to address the two most pivotal issues in research: rigour and relevance to the practice [88,89]. Rigour refers to guaranteeing the validation of the propositions as the consequence of applying a scientifically sound methodology [89]. Relevance refers to the internalisation of the research findings into praxis, namely, its importance (focused on needs and proposing a solution), accessibility (understandable) and applicability (concrete recommendations) [88]. Figure 4 summarises the overall procedure, data sets, validity tests, methods and respective logic.

Figure 4. Procedure and data sets for the construct validation.

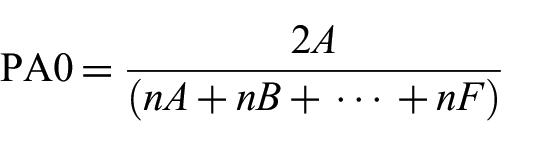

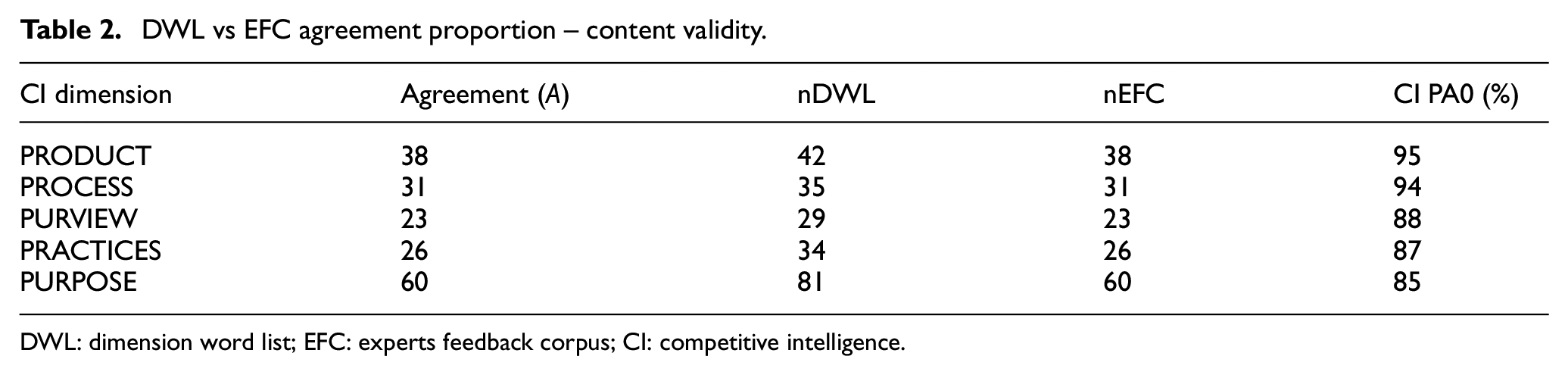

3.3.1. Content validity testing

Given that the CI construct working definition had already been chosen, we began content validity testing by assessing the CI dimensionality per Short et al.’s [96] procedure. Building on Madureira et al.’s [31] validation study for a smaller sample allowed us to skip a few redundant steps. We started by comparing the experts’ opinions (EFC) with the word lists (DWL). We then computed the inter-rater agreement using the formula [104]

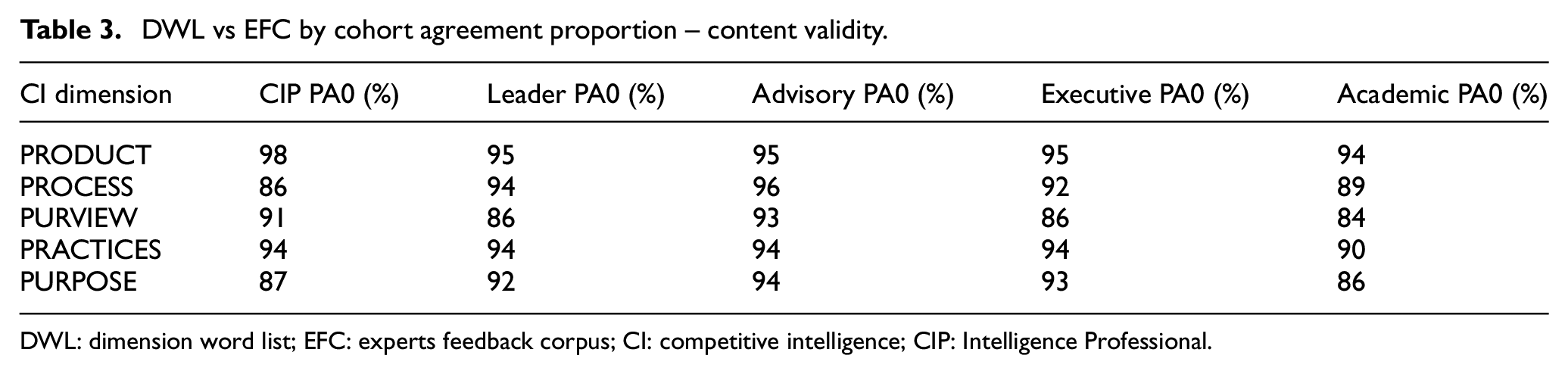

In the formula, PA0 measures the agreement proportion; A is the agreements’ count between all the raters or data sets, and nA, nB, …, and nF are the specific cohort or data set count of coded words per dimension. The comparison was also performed for each stakeholder cohort, reinforcing the content validity check (Tables 2 and 3).

Table 2. DWL vs EFC agreement proportion – content validity.

DWL: dimension word list; EFC: experts feedback corpus; CI: competitive intelligence.

Table 3. DWL vs EFC by cohort agreement proportion – content validity.

DWL: dimension word list; EFC: experts feedback corpus; CI: competitive intelligence; CIP: Intelligence Professional.
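As an illustration of the agreement proportion, the sketch below implements Holsti's coefficient, which for two raters is PA0 = 2A / (nA + nB). Using the number of raters or data sets as the multiplier when more than two are compared is an assumption of this sketch, not a form confirmed by the study; the function name and the numbers are hypothetical.

```python
def holsti_agreement(agreements, coded_counts):
    """Holsti's proportion of agreement.

    agreements   -- A, the number of coding decisions on which all raters agree
    coded_counts -- [nA, nB, ...], the number of coded words per rater or data set

    For two raters this is the standard 2A / (nA + nB). Using len(coded_counts)
    as the multiplier for more than two raters is an assumption of this sketch.
    """
    total = sum(coded_counts)
    if total == 0:
        raise ValueError("at least one coded word is required")
    return len(coded_counts) * agreements / total

# Illustrative numbers only, not taken from the study's tables.
print(round(holsti_agreement(45, [50, 52]), 3))  # 2 * 45 / 102 = 0.882
```

A value of 1.0 means that every coded word is shared by all data sets; values fall towards 0 as the overlap shrinks.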

3.3.2. External validity testing

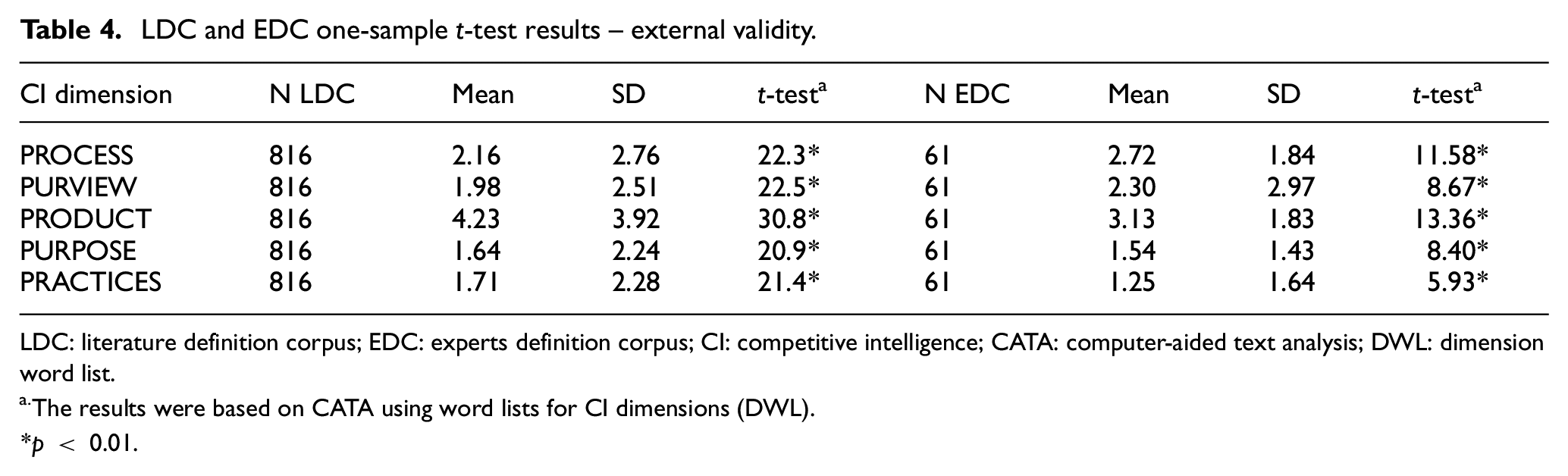

In continuation of Short et al.’s [96] recommendations, we selected the EDC and the LDC as the appropriate samples with the narrative texts for analysis, the latter serving as a baseline for comparison. The one-sample t-test (compared with a test value of zero) results highlight the presence of consistent language in the experts’ working definitions (EDC) with the literature review definition of CI (LDC) (Table 4). This step assesses the similarity of the construct definitions in two different contexts: literature vis-à-vis practice.

Table 4. LDC and EDC one-sample t-test results – external validity.

LDC: literature definition corpus; EDC: experts definition corpus; CI: competitive intelligence; CATA: computer-aided text analysis; DWL: dimension word list.

The results were based on CATA using word lists for CI dimensions (DWL).

p < 0.01.
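The logic of the one-sample t-test against zero can be sketched as follows. The example assumes that the input is a list of per-definition word counts for a single dimension. The counts and the function name `one_sample_t` are hypothetical and do not reproduce the study's data or software.

```python
import math
import statistics

def one_sample_t(values, test_value=0.0):
    """Return (t, degrees_of_freedom) for a one-sample t-test against `test_value`."""
    n = len(values)
    if n < 2:
        raise ValueError("at least two observations are required")
    mean = statistics.fmean(values)
    sd = statistics.stdev(values)  # sample standard deviation (n - 1 denominator)
    if sd == 0:
        raise ValueError("t is undefined when all observations are identical")
    t = (mean - test_value) / (sd / math.sqrt(n))
    return t, n - 1

# Hypothetical per-definition word counts for one dimension (not study data).
counts = [2, 0, 1, 3, 1, 2, 0, 1]
t, df = one_sample_t(counts)
print(f"t = {t:.3f}, df = {df}")
```

The p-value would then be read from a Student's t distribution with `df` degrees of freedom, for instance with `scipy.stats.ttest_1samp`, which is left out here to keep the sketch free of dependencies.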

3.3.3. Reliability testing

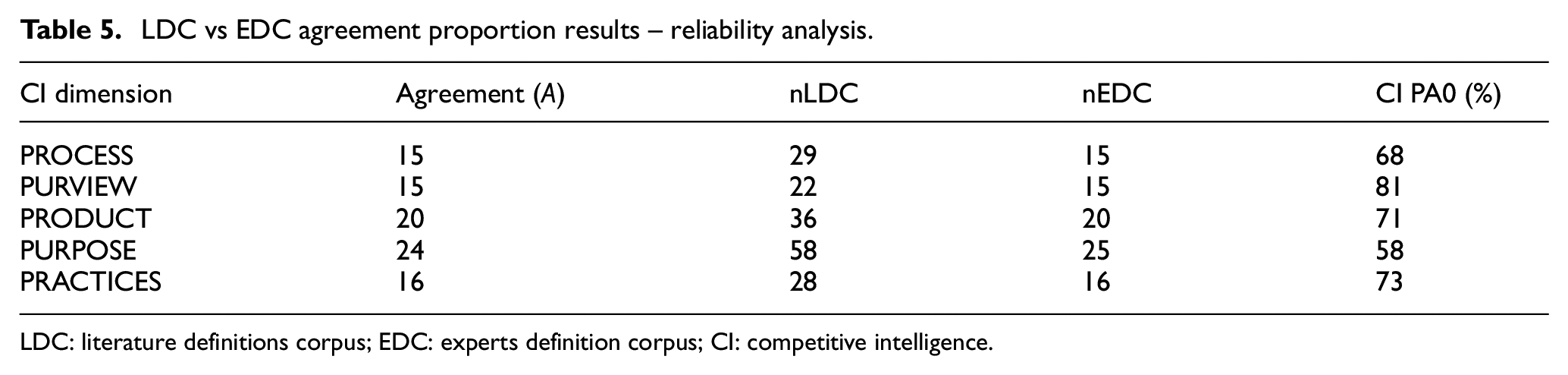

To measure the CI construct operationalisation in different contexts, Short et al. [96] recommend comparing the word counts between the LDC and EDC data sets using Holsti’s [104] inter-rater reliability for similarity (Table 5). This procedure assessed the similitude of discourses between literature definitions and experts’ working definitions.

Table 5. LDC vs EDC agreement proportion results – reliability analysis.

LDC: literature definitions corpus; EDC: experts definition corpus; CI: competitive intelligence.

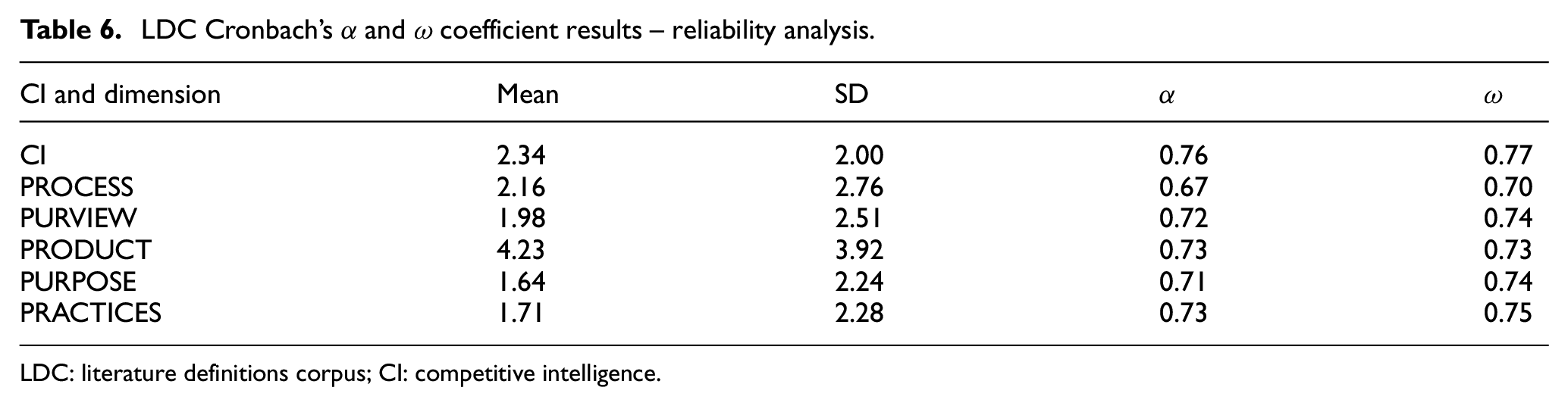

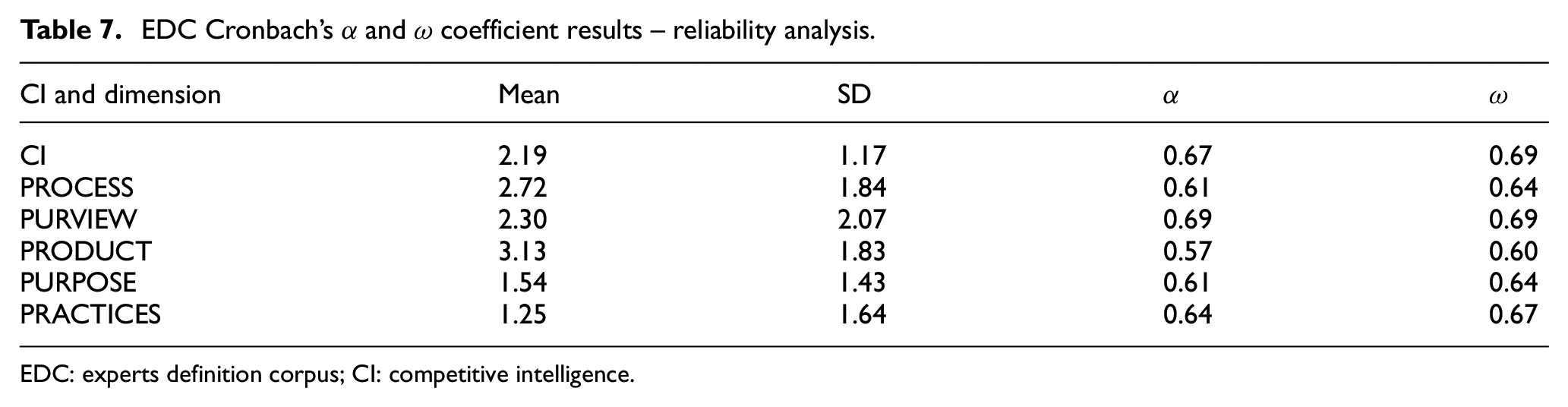

In addition, we computed Cronbach’s alpha (α) and omega (ω) coefficients for the construct and its dimensions relating to the LDC and EDC data sets. The coefficients allow for measuring the part of the total construct variance explained by a common source and the level of each dimension defined by the construct. Furthermore, according to Nunnally [105], calculating ω strengthens the construct’s acceptability by relaxing the tau-equivalent model assumption (Tables 6 and 7).

Table 6. LDC Cronbach’s α and ω coefficient results – reliability analysis.

LDC: literature definitions corpus; CI: competitive intelligence.

Table 7. EDC Cronbach’s α and ω coefficient results – reliability analysis.

EDC: experts definition corpus; CI: competitive intelligence.
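For readers less familiar with internal-consistency coefficients, the following sketch computes Cronbach's α from its textbook definition, α = k/(k − 1) · (1 − Σ item variances / variance of the summed scores). It assumes that each item is the count of one indicator word across the same set of definitions; that mapping, the data and the function name are hypothetical. The ω coefficient requires a factor model and is not covered here.

```python
import statistics

def cronbach_alpha(items):
    """Cronbach's alpha for `items`, a list of k equally long score lists (one per item)."""
    k = len(items)
    if k < 2:
        raise ValueError("at least two items are required")
    item_variances = [statistics.variance(scores) for scores in items]
    # Summed score per observation (here: per definition) across all items.
    totals = [sum(observation) for observation in zip(*items)]
    total_variance = statistics.variance(totals)
    return (k / (k - 1)) * (1 - sum(item_variances) / total_variance)

# Hypothetical counts of three indicator words across five definitions.
items = [
    [1, 2, 3, 4, 5],
    [2, 2, 3, 5, 5],
    [1, 3, 3, 4, 4],
]
print(round(cronbach_alpha(items), 3))
```

Sample variances (n − 1 denominator) are used throughout; the ratio is unaffected as long as the same estimator is applied to the items and to the totals.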

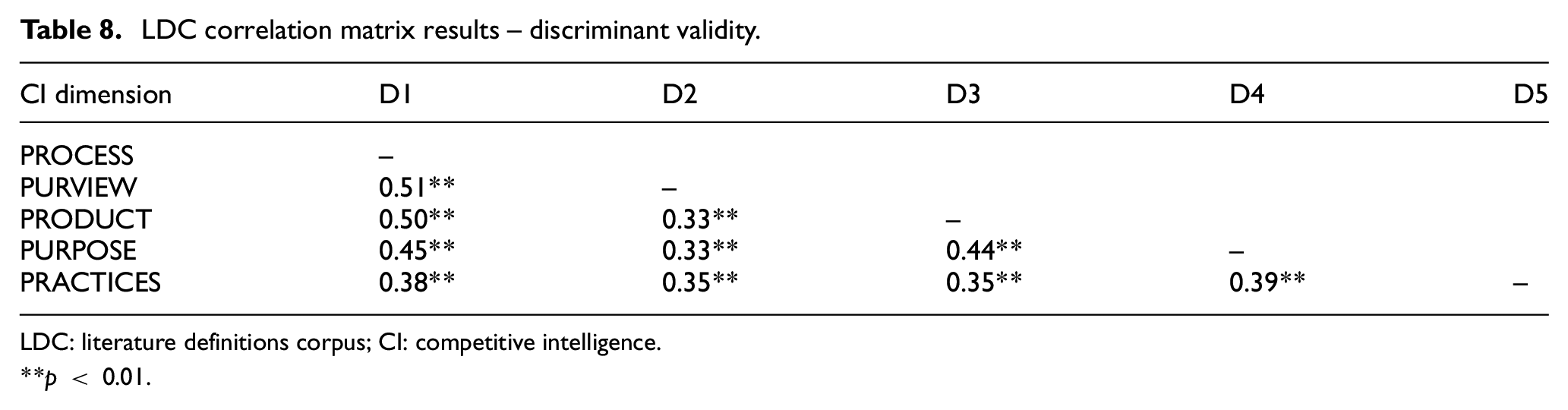

3.3.4. Discriminant validity testing

Discriminant validity assesses the dimensionality of the CI construct. The measurement is twofold: how many words of the same dimension are related and how many words from different dimensions are uncorrelated. The computation of the LDC correlation matrix (Table 8) allows us to assess the dimensionality of the construct as per Short et al.’s [96] recommended procedure.

Table 8. LDC correlation matrix results – discriminant validity.

LDC: literature definitions corpus; CI: competitive intelligence.

p < 0.01.
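A correlation matrix of this kind can be sketched as pairwise Pearson correlations between the per-definition word counts of each dimension. The counts, the choice of three dimensions and the function names below are hypothetical and serve only to show the computation.

```python
import math

def pearson(x, y):
    """Pearson correlation coefficient between two equally long sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    return cov / math.sqrt(var_x * var_y)

def correlation_matrix(counts_by_dimension):
    """Return {(dim_a, dim_b): r} for every unordered pair of distinct dimensions."""
    dims = list(counts_by_dimension)
    return {
        (a, b): pearson(counts_by_dimension[a], counts_by_dimension[b])
        for i, a in enumerate(dims)
        for b in dims[i + 1:]
    }

# Hypothetical word counts per definition for three of the five dimensions.
counts = {
    "process": [1, 0, 2, 1, 3],
    "product": [0, 1, 1, 2, 1],
    "purpose": [2, 1, 0, 1, 1],
}
for pair, r in correlation_matrix(counts).items():
    print(pair, round(r, 2))
```

Low correlations between different dimensions would support treating them as distinct; the sketch does not compute significance levels.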

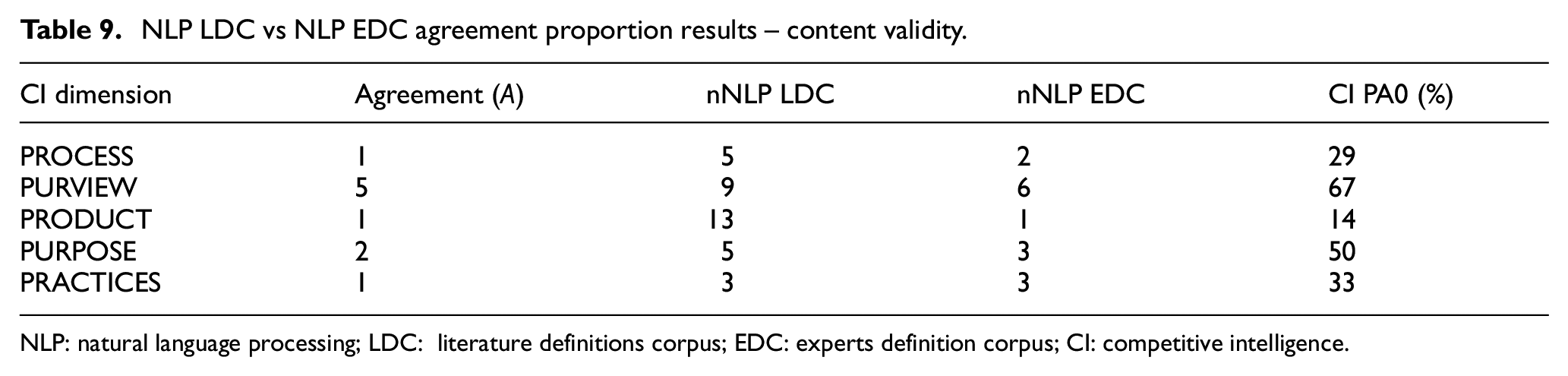

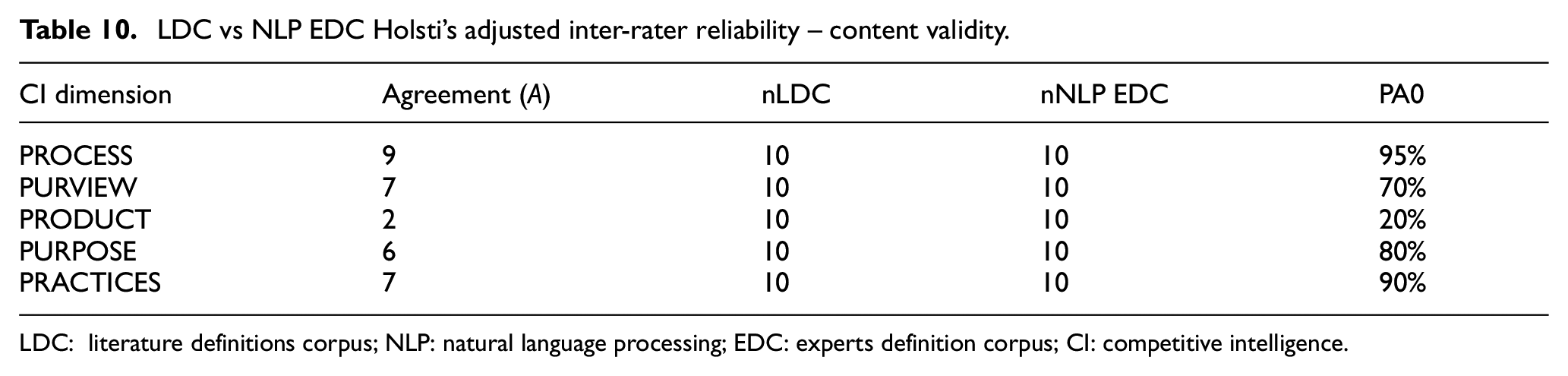

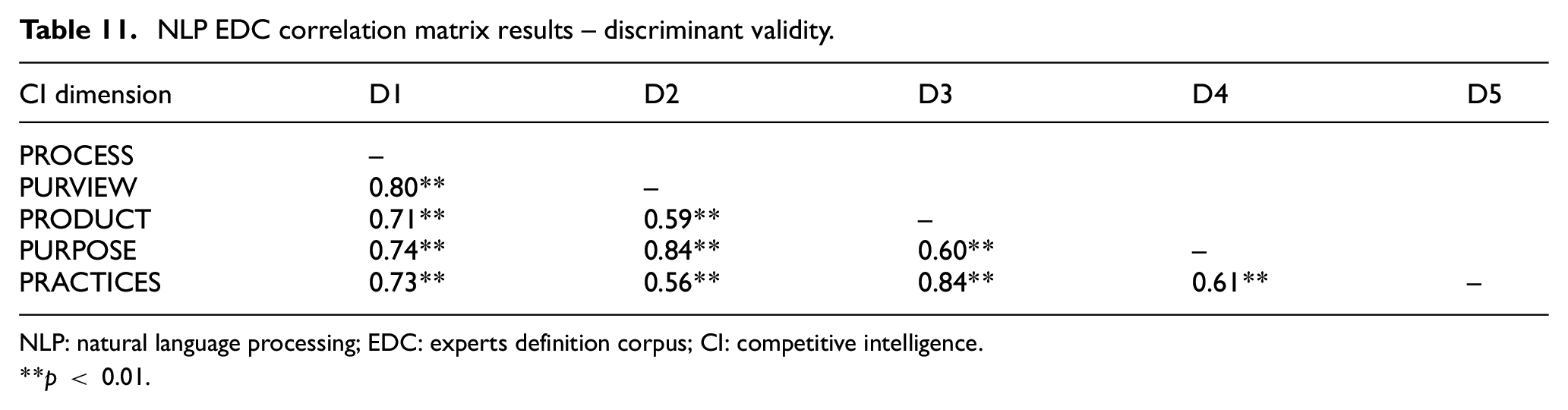

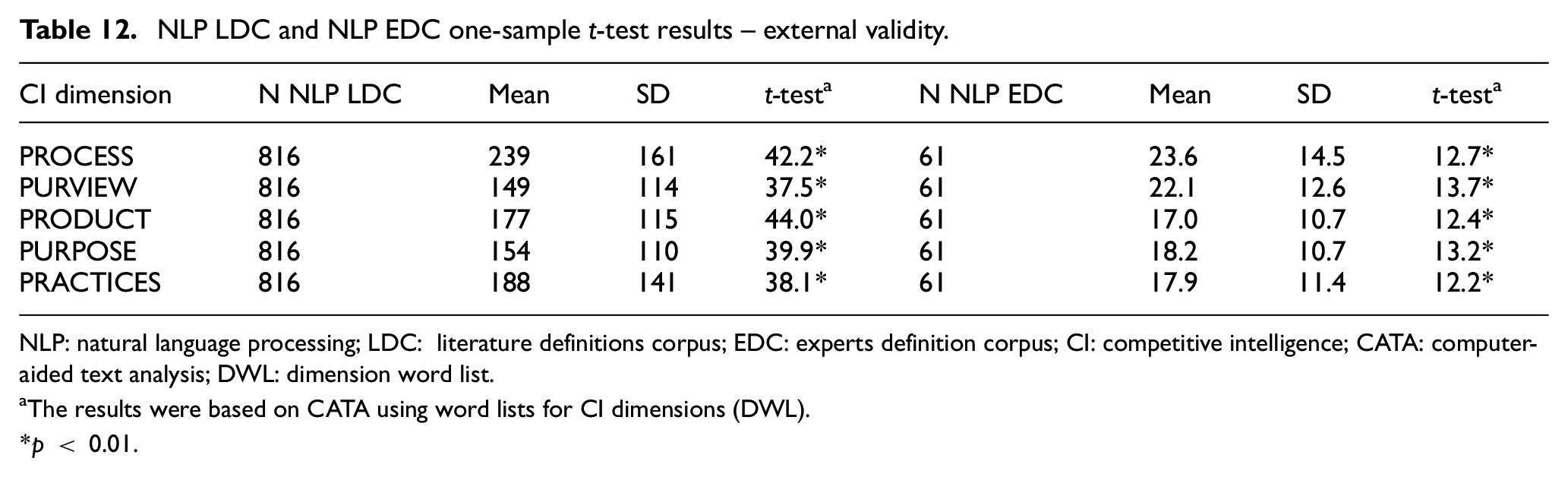

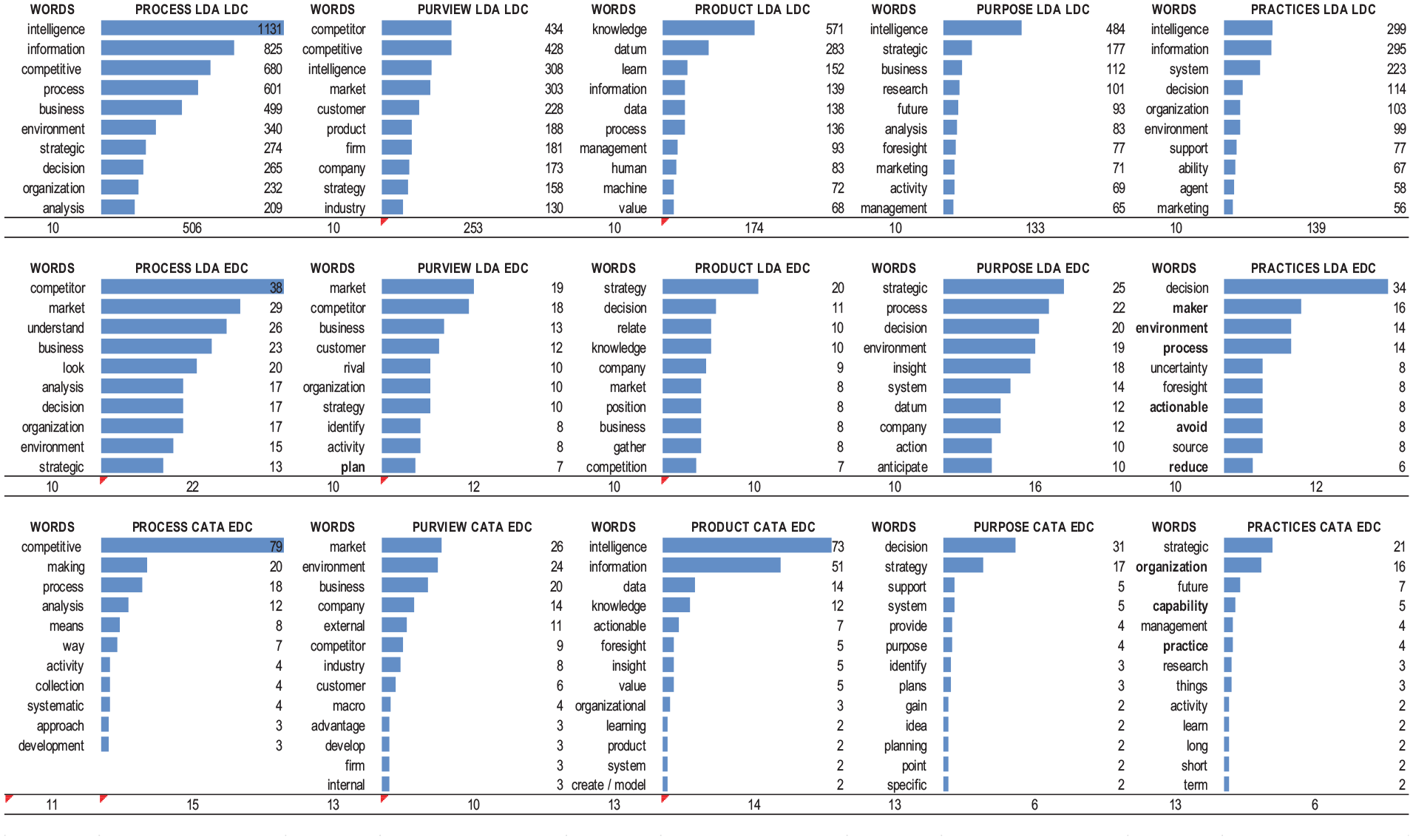

3.3.5. Construct empirical validity triangulation

We performed further due diligence vis-à-vis the existing empirical validation of the CI construct [31] by triangulating the procedure using the LDC and EDC data sets. Applying the same methodology (NLP LDA) used to develop the original CI construct [30] substantially increases the robustness and richness of the findings. First, we pre-set the number of topics to five, the same as the construct dimensions under validation (5Ps). We then compared the results of the NLP EDC and the original LDC data sets using Holsti’s [104] formula to evaluate the content validity (Table 9). Considering that we are comparing two data sets with different scopes of vocabulary (scientific literature and working definitions), we adjusted the computation of Holsti’s [104] formula. The new calculation accounted for the top 10 keywords of each data set and accommodated synonyms. For example, company, firm and organisation are considered the same keyword, hence counting for that specific dimension’s agreement (A) (Table 10). Finally, we triangulated discriminant validity using the NLP EDC correlation matrix and the external validity using the one-sample t-tests for LDC and NLP EDC resulting from the application of the original NLP LDA method (Tables 11 and 12).

Table 9. NLP LDC vs NLP EDC agreement proportion results – content validity.

NLP: natural language processing; LDC: literature definitions corpus; EDC: experts definition corpus; CI: competitive intelligence.

Table 10. LDC vs NLP EDC Holsti’s adjusted inter-rater reliability – content validity.

LDC: literature definitions corpus; NLP: natural language processing; EDC: experts definition corpus; CI: competitive intelligence.

Table 11. NLP EDC correlation matrix results – discriminant validity.

NLP: natural language processing; EDC: experts definition corpus; CI: competitive intelligence.

p < 0.01.

Table 12. NLP LDC and NLP EDC one-sample t-test results – external validity.

NLP: natural language processing; LDC: literature definitions corpus; EDC: experts definition corpus; CI: competitive intelligence; CATA: computer-aided text analysis; DWL: dimension word list.

The results were based on CATA using word lists for CI dimensions (DWL).

p < 0.01.
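The synonym-adjusted agreement can be sketched as follows. Only the company/firm/organisation example comes from the text; the rest of the synonym map, the keyword lists and the function names are hypothetical. Counting nA and nB after synonyms have been collapsed is an assumption of this sketch, since the exact adjustment is not spelled out.

```python
# Hypothetical synonym map; only the company/firm/organisation example comes from the text.
SYNONYMS = {"firm": "organisation", "company": "organisation"}

def canonical(keywords, synonyms=SYNONYMS):
    """Lower-case the keywords and collapse synonyms onto one canonical term."""
    return {synonyms.get(word.lower(), word.lower()) for word in keywords}

def adjusted_holsti(keywords_a, keywords_b, synonyms=SYNONYMS):
    """Holsti's 2A / (nA + nB) computed on synonym-normalised keyword sets."""
    set_a = canonical(keywords_a, synonyms)
    set_b = canonical(keywords_b, synonyms)
    if not set_a and not set_b:
        raise ValueError("both keyword lists are empty")
    agreements = len(set_a & set_b)
    return 2 * agreements / (len(set_a) + len(set_b))

# Illustrative top keywords for one dimension (not the study's topic output).
ldc_top = ["company", "performance", "advantage", "decision"]
edc_top = ["firm", "performance", "decision", "strategy"]
print(adjusted_holsti(ldc_top, edc_top))  # 2 * 3 / (4 + 4) = 0.75
```

Without the synonym map, "company" and "firm" would count as a disagreement and the same lists would score 0.5, which shows why the adjustment matters when two corpora use different vocabularies.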

4. Results and inferences

We expected to empirically validate the CI construct using Short et al.’s [96] procedure and triangulate this empirical validation with the NLP LDA procedure used to develop the CI construct under validation [30]. The results corroborated our expectations by significantly increasing the rigour and guaranteeing scientific significance while confirming the relevance to practice. The study outcomes should thus drive the future adoption of CI in business and academia.

4.1. Results detailed interpretation

The results confirm content validity, whichever perspective and stakeholder cohort we consider since values for all dimensions are above 0.80 (Tables 2 and 3). Inter-rater reliability coefficients have no consensual heuristic for acceptance. Regardless, Riffe et al. [106] and Krippendorff proposed coefficients greater than 0.80, while Ellis [107] relaxed the threshold to values between 0.75 and 0.80, indicative of high reliability.

We consider the t-test results (LDC and EDC) to confirm the CI construct external validity, indicating consistent rhetoric with the construct definition and all dimensions being significant and differing from zero (Table 4).

The LDC and EDC Holsti’s coefficients for process, product and purpose dimensions display moderate inter-rater reliability, while process and practice dimensions show high inter-rater reliability (Table 5). Most LDC Cronbach’s α and ω coefficient results are between 0.70 and 0.80, above the generally agreed threshold for acceptance of 0.70, showing increased reliability and internal consistency (Table 6). Furthermore, the EDC data set also displays good reliability (Table 7). CI construct discriminant validity is confirmed as the LDC results for the correlation between dimensions showcase an optimal level of close to, but no more than, 0.40 (p < 0.01) [96] (Table 8). In regard to the triangulation of empirical validation results, the application of the original NLP LDA model [30], a type of principal component analysis [108], guarantees a priori the discriminant, internal and external validities, as well as reliability. The content validity is also confirmed, although with some caveats. The adjusted Holsti’s inter-rater reliability results (LDC vis-à-vis EDC) are mostly above 0.80, except for product and purview (Tables 9 and 10). We anticipated these results when pre-setting the number of topics to match the five dimensions of the NLP LDA model. For a redundancy check, the Cronbach’s α and ω coefficient results are above 0.70, confirming the reliability and internal validity (Table 11). Although above the upper threshold, the correlation matrix results make sense (Table 12). The higher values may derive from the pre-set number of five dimensions, which is lower than the optimal number of topics for the EDC data set.

We developed a global visual overview of the keywords assigned to each dimension by validation procedure (the original NLP LDA model and the empirical validation CATA) and relevant data set (LDC and EDC). The visual representation allows for comparing the results across the different construct dimensions (Figure 5), supporting the discussion and deriving the impacts of these results within the next section.

Figure 5. Keywords frequency by dimension for NLP and CATA.

5. Discussion and meta-inferences

The improved rigour of the empirical validation, to which this study adds the assessment of relevance to practice, is foundational for CI praxis – theory and practice. The construct is empirically validated by 61 CI experts representing all geographies and segment types, significantly advancing the scientific rigour of the previous literature [31] and confirming the relevance to practice. Therefore, practitioners and scholars have a sound theoretical and empirical basis for delineating and developing a much-needed CI BoK and common language. Specifically, the CI BoK will drive the effectuation of business practice, scientific research and the teaching of the discipline to tackle the grand challenges of today and tomorrow. In short, the results ignite the CI virtuous cycle [30] and promote the establishment of the CI science, contributing to decision-making quality and organisational performance.

We derived a set of empirical meta-inferences based on the findings and expert feedback. These inferences are structured and detailed by each CI construct–validated dimension in the following sections as further clarifications and guidelines for practical application.

5.1. Purpose of CI

Experts agree that executives at all organisational levels use CI to inform decision-making, improve performance and strategies, obtain competitive advantages or avoid surprises. However, the purpose dimension is the one that raised the most debate. The debate concerns what the ultimate purpose of CI should be. Integrating the findings and the experts’ feedback was instrumental in confirming that performance is indeed the end purpose of CI. The reasoning is as follows. First, organisations develop competitive advantages to increase or sustain their performance. Second, by definition, strategy is the path to achieving a goal. As such, it cannot be the goal in itself. Finally, we could speculate that decision-making support could be the final goal of CI. Here again, we would be falling into the same fallacy. If decision-making is the precursor of performance, then the aim of CI in supporting decision-making must be to improve organisational performance. The validation findings also support this reasoning by showing that CI supports three types of decisions: (1) addressing specific goals through strategic, tactical and operational decisions informing strategy, planning and execution; (2) developing competitive advantages, such as in management by the allocation of resources or in marketing by developing the continued preference of existing and new customers; and (3) providing early warning, anticipating what is going to happen to make the decisions today that will allow the organisation to compete in the future.

In summary, CI aims to improve performance by maximising the effectiveness of managerial decision-making. The findings inform the effective implementation of the underlying information systems, the technology choice and its setup, enabling these three types of decisions to improve the strategic and tactical performance of organisations.

5.2. Purview of CI

The findings confirm that the CI scope encompasses the overall competitive environment in which organisations operate. Yet, most practitioners overlook its importance and rarely define it. According to the experts, there are three significant misconceptions. The first is that the competitive environment is exclusively external. In other words, intelligence solely focuses on the macro-, meso- and micro-environments. The oversight of the internal environment impedes the correct understanding of the organisation’s true strengths, weaknesses, capabilities and competencies. As a result, strategic alignment between the organisation and the competitive environment is faulty, leading to poor quality decision-making and strategy. The second is that CI focuses only on competitors. This highly restricted view leads to an inability to identify and leverage the organisation’s strengths and competitive advantages. Unlocking this critical effort is only possible through internal analysis and competitor benchmarking. In addition, it may lead to incorrect forecasts of the probable future direction of competitors, given the lack of external competitive environment context. The third and last misconception is that consumer and customer intelligence are outside the scope of CI. Although this type of intelligence is often outsourced and delegated to third parties, integrating it is critical for customer-centricity and relevant innovation.

Overall, the empirical validation of this dimension highlights the purview as a critical success factor for any CI programme [109]. In an era of extraordinary technological advancement, the importance of competitive technological intelligence [110,111] is one prominent example of the need to validate the relevant scope. Therefore, guaranteeing the appropriate depth and breadth of the intelligence scope is of the essence to support effective decision-making and sustained business performance.

5.3. CI practices

CI praxis, the practical application of the construct, depends on individual and organisational capabilities. Practitioners need to hone specific competencies on a broad set of topics, given the multidisciplinary nature of CI [112]. In addition, the CI practice calls for mastering technological, functional and business expertise. The technical challenge is twofold: enabling the underlying information systems and understanding the technology purview affecting the performance of organisations. The functional knowledge must cover the complete value chain [113]. The business expertise ranges from the industries to the geographies in which the organisation competes or will compete. Combining these three factors results in many ways of doing CI, from market research to financial intelligence [114], decision intelligence [115] or competitive technology intelligence [110]. The most critical and holistic way of enacting CI is still becoming a learning organisation. According to the experts, organisations increasingly compete on the learning rate [116–118]. Learning allows for better strategising, planning, innovating, value propositions and sustaining competitive advantages [113,119,120]. CI is the only discipline that enables the learning process by closing the loop on the impact evaluation of the decisions that it initially informed.

The other key characteristic of CI practices is the forward-looking orientation. Although decision-making is restricted to the immediate and the future, understanding the past is essential to managing the present and creating the organisation’s official future [121]. Thus, developing relevant foresight results from understanding the continuum between past learnings, present viable decisions and the design of the official futures [122,123]. CI practices should also enact the purposeful exploitation of this continuum through effective decision-making. In particular, an increasing area of focus is technological forecasting, which aims to explore innovation possibilities and adapt to a rapidly changing competitive environment. Finally, the strategic intent [124,125] should link these different time horizons and guide the CI practice.

Foreseeing and creating the official future is pivotal and notably requires abductive reasoning, an essential theme in the Design Thinking mind-set for CI [126,127]. In tandem with an intelligence-driven culture, this mind-set plays a critical role in business generally and in CI success in particular [33,128–132]. The CI culture will ultimately dictate the success of the organisation [109,133,134]. As highlighted by one expert, the role of values in an organisation can influence the way sense-making is done, finally conditioning the success of the organisation. For example, ethical values condition the understanding of competitors, affecting how intelligence is developed and used for decision-making. In the end, values can also inform the legal conduct of an organisation: illegal activities would move from the classification of CI to the field of Industrial Espionage [135–137]. A final highlight is that the CI practice follows defined scientific procedures and respects organisational policies, namely, ethical and legal [138].

5.4. CI process

The CI process is the set of activities that follow a specific overarching procedure in developing actionable insights that inform decision-making. The approach guides the integration of all the sub-processes to achieve the desired output. The process should be systematic, networked and actionable. Networked means this is a collective process, external and internal to the organisation, where the collective knowledge (implicit and explicit) is integrated, communicated and actioned in the decision-making. Internally, this is a value chain-wide process [113] addressing all people, functions, levels and supporting technology across the organisation. The subject matter experts challenged the systematic approach, namely, its cyclic nature and linearity [84]. Instead of a linear one-cycle process, the central realisation is that the overall process is multicycle and constituted of different sub-processes that may or may not be performed. The nonlinearity and iterative nature of the sub-processes [139] reflects rather faithfully the impact of the Design Thinking mind-set on the CI model studied by Madureira et al. [127]. These sub-processes range from identifying intelligence needs – for example, Key Intelligence Topics and Questions development [140] – to its usage – for example, decision-making in Business War Games [141]. Although the initial cycle used by national intelligence services had four steps [142,143], a more granular approach emerged. Guided by the experts’ feedback, we identified eight sub-processes: Intelligence Needs; Planning and Direction; Data and Information Collection; Data and Information Processing; Information Analysis; Intelligence Communication and Storage; Usage and Decision-Making; and Feedback and Knowledge Protection. The most controversial sub-process is counterintelligence or knowledge protection, since some experts argue that this is the responsibility of the organisational security function. Although this position is sensible and understandable, after weighing all inputs, we conclude that CI should, at the very least, inform the security efforts of the organisation relating to protecting its intelligence, knowledge and intellectual property. Experts highlighted the importance of the communication sub-process, especially compared with mere dissemination, as decision-makers will not act on intelligence they do not understand. As such, communication as an interactive process plays a particularly pivotal role. Finally, the increasing usage of information technology in supporting CI is a recurring key topic with two important caveats. First, there is the need for effective information systems in dealing with the mounting information overload and communication challenges, namely, through CI platforms. Second, technological forecasting capabilities are needed to compete on CI through developing competitive advantages vis-à-vis the competition.

5.5. The product of CI

The nature of the CI process output is either human or machine. While machines can help process big data [80] and the exponentially increasing global infosphere [144], natural human intelligence can address the ‘how’ and ‘why’ questions for a higher-level understanding of competitive dynamics. According to different authors [145–149], the trend is towards intertwining the two into integrated or augmented intelligence. Another emerging concept is human-centred/centric artificial intelligence (HAI) [150,151], which adds ethical augmentation on top of the technical functions of AI.

In its broadest form, the output is knowledge management (KM) [152]. The creation and management of knowledge go from identifying signals and data points to information, insight, intelligence, knowledge and wisdom. Contrary to the classic view of Ackoff’s [78] data, information, knowledge and wisdom (DIKW) pyramid with just four levels, our discussion expands this number significantly (cf. Figure 1). Notably, it recognises the cyclic nature of data as a pivotal contribution. As confirmed by the experts, the previous pyramid metaphor does not do justice to the feedback loop that initiates when insight is generated. An insight is a hypothesis at its core. Confirming insight turns it into knowledge, a potential data point for new insight. In fact, starting from insight onwards to higher-order outputs such as intelligence, knowledge and wisdom, all are potential future data points. In its most advanced form, the wisdom of today is the data of tomorrow. The underlying assumptions of this iterative approach are the creation of value and the acknowledgement of data as an asset. The formation of value derives from the actionability of the knowledge. When knowledge is networked, it furthers the organisation’s performance. Finally, considering data as an asset supports the resource-based and, most importantly, the knowledge-based view of the firm [24,153–155].

5.6. Meta-inferences: knowledge advancement and relevance to practice

The induction of insightful meta-inferences resulted from integrating the inferences from the previous sections with the practical expertise shared in the in-depth interviews [91].

The definition of a common language for CI is the most crucial impact, as unanimously identified by the experts. Soilen [156] highlighted that the semantic trap is real and has hindered CI development for too long. However, there is still room for misunderstanding in defining CI, namely, through polysemy and synonymy. The enhanced shared understanding should mitigate, if not eliminate, this critical issue. Therefore, a thoroughly improved unified view of the CI construct is proposed to guide future empirical application and knowledge advancement (Figure 6).

Figure 6. New empirically validated meta-inferred CI unified view.

Furthermore, developing the CI BoK and a common language should facilitate intra-, cross- and multi-disciplinary research involving CI and related disciplines. Some noteworthy examples are the foundational contribution to Collective Intelligence research, as defined by Mulgan [157] and Calof et al. [158]; the alignment and cross-pollination with Social Intelligence (SI) [159–164]; the empowerment of insights through collaboration [165] and knowledge advancement in decision sciences [166–168].

A second-order set of effects will improve the capability of addressing grand business challenges. CI enables a deeper understanding of pivotal issues, resulting in higher value creation, improved policy development and increased business competitiveness. These outcomes will promote future CI adoption, increased relevance, and the development of praxis and theory. But, again, this is only possible when accompanied by efficient information systems and technology implementation deriving from the holistic and empirically validated perspective this study offers. The overall impact on decision-making, leading to improved organisational performance, is leveraged by configuring the four sets: mind-sets, skillsets, toolset and data sets [33].

5.6.1. Mind-sets

A CI culture embedded with critical and design thinking mind-sets sits at the intersection between intellectual curiosity and empathy [127]. The immediate consequences are improved problem framing, solution development, better decision-making and value creation for better business performance. The subsequent development of the intelligence culture will improve business and society through intelligence-driven decision-making and better policy development. As a decision-support discipline, CI can inform the development of better policies, public and private.

5.6.2. Skillsets

CI literacy increases resource efficiency by reducing time to insight and improving decision quality. For example, an improved skillset in open-source intelligence (OSINT) helps tame information overload through noise reduction [169]. The impacts range from time savings in finding the correct information to curbing the disinformation/misinformation plaguing the social and business worlds.

Moreover, OSINT supports CI citizenship through more effective information collection and sharing or applying individual intellectual capacity to develop intelligence for society’s greater good [170]. This Collective Intelligence [157,158] can be vital in war or natural catastrophes, such as the Ukraine conflict, where civilians collect, process and share pivotal intelligence with the armed forces.

Societies compete for data as a critical resource in a world increasingly led by Data Capitalism [171] and complex geoeconomics [172]. Therefore, those mastering the intelligence skillset will thrive, converting data into knowledge at the highest learning rate and increasing their competitiveness and economic development. For example, the French initiative ‘Référentiel de formation en intelligence économique’ [173] delivers CI training to improve the performance of French businesses. The resulting CI literacy increased value creation, decreased unemployment and created higher wages and better quality of life. In short, CI literacy is of the essence to maximise knowledge creation and navigate the complex geoeconomics situation.

5.6.3. Data sets

The development of information and communication technologies, mainly Web 2.0, led to the emergence of the social consumer (SC) [174]. In this new paradigm, consumers crowdsource their buying and consumption decisions while simultaneously influencing the decisions of their peers through user-generated content. This behaviour is the primary change driver for society and business, forcing organisations to leverage digital technologies such as social listening and AI to address consumers’ changing needs and technology usage. To successfully navigate this transformation, known as Digital Transformation (DX), organisations need to make sense of social data (SD) – the rich data set made of the content generated and consumed by the SC. The challenge arises from continuously collecting and converting SD into intelligence, knowledge and wisdom, as discussed in section 5.5. Solving this challenge is where CI can exert its most noticeable effect. Furthermore, following the CI purview validation and the experts’ caveats from section 5.2, the holistic integration of different scopes, such as the consumer and technology, gives CI a pivotal role in developing Digital Transformation Intelligence (DXI) [175]. Therefore, this CI effect is a crucial example of the application and importance of this study’s findings for business and society.

5.6.4. Toolset

The sheer volume of SD requires that the technology and analysis toolset used in CI constantly evolve. AI, machine learning (ML) and deep learning are examples of how CI practitioners make sense of social Big Data. As a CI sub-discipline evolving from consumer and market research, SI develops social insights from identifying needs and wants, sentiment and emotion analysis and opinion mining to design more relevant offerings. SI aims to address the SC paradigm by supporting the development of competitive advantages through superior customer intimacy [176,177]. Ultimately, SI informs decision-making and strategy development, resulting in improved new product development and relevant innovation. Surprisingly, the relationship between CI and innovation has not been studied in sufficient depth, which is paradigmatic. CI for international business development is a prerequisite for any organisation expanding beyond borders. With increased protectionism and trade wars in recent years, global companies must be increasingly aware of geopolitical developments. Anticipation allows organisations and executives to act accordingly and better defend their interests.

5.7. Summary of the contributions and implications

This study significantly reinforced the empirical validity, reliability and applicability of the construct proposed by Madureira et al. [30], laying the scientific foundations for CI praxis. The findings confirmed our expectations in surpassing the shortcomings of extant validation studies in three ways: going above and beyond on sample representativeness (doubling the scientific threshold), avoiding potential biases (covering all stakeholder cohorts and geographies) and performing the triangulation of findings (using the same method used for the development of the initial construct under validation). The increased accuracy of the empirical validation of the CI construct contributes significantly to the theory, domain and practice:

The findings contribute to the literature by providing scientific-grade accuracy of content, external and discriminant validities, reliability and delivering the triangulation of results.

They challenge the assumptions of prior research by providing a new scientific-grade CI construct.

They confirm the applicability and relevance of the construct for CI practice.

Given the robustness of the results, the undisputed empirical validity means the construct is fully generalisable in advancing science, guiding the practice and teaching CI.

5.7.1. Implications to theory, from theory and to practice

A scientific-grade CI construct has several foundational implications. An immediate implication is the delimitation of the BoK. The inherent recognition of the domain will promote scientific research resulting in new CI literature. Therefore, the baseline for future theory development and practice should be the construct proposed by Madureira et al. [30].

In parallel, it guarantees reproducibility, replicability and generalisability, the fundamental preoccupations of any science [178]. As such, it lays the foundations for establishing CI as a science and discipline. The construct facilitates developing a curriculum leveraging the newly delimited BoK. CI education should increase awareness of the profession in the business world and increase the number of practitioners.

Finally, the much-claimed consensus for the definition of CI is provided. A common language and shared understanding should drive CI practice in organisations, which now comprehend what CI is, why and when to use it, where it can help, who does it and how to develop it. The obvious derivative is support for implementation in the effectuation of the CI practice, function and profession.

5.8. Strengths, limitations and future research agenda

The major strengths of this study are the increased accuracy and reliability of the findings resulting from the unmatched sample size and the quality of top subject matter experts, both producers and users of CI. In addition, a highly clarified visual instrument is provided to effectively guide theory and praxis. That said, there are some limitations that, although attenuated, can be further mitigated through additional research. Given that the expert sample size will always be a limitation, stretching it further towards a higher saturation point may present itself as a research path. From the opposite perspective, researchers may choose to disprove this empirical validation. An unquestionably critical research path is measuring the impact of this study on decision sciences and management research. More specifically, future work could study how CI can successfully contribute to making a difference in practice, science, education, policy development and society at large [29], researching its full applicability check [88]. Another undoubtedly valid research path for practitioners is developing a maturity model based on the validated dimensions and, most notably, identifying the critical success or failure factors in CI implementation in practice.

Furthermore, acknowledging the CI construct adaptation through time and space, developing new and enhanced CI constructs is yet another future research path. The longitudinal change of the CI construct highlights the need for the constant refreshment of the meaning, validation or confirmation of the results of this study. A complementary research path is the development of a CI ontology that contributes to the further clarification of concepts and reduces existing miscommunication within and between CI and related fields. Finally, generalising the results of this study can be a challenging but fruitful area for research or even analysing how the findings apply to specific industries and business ecosystems [116].

6. Conclusion

This expander study [179] draws from unparalleled empirical expertise across the CI field, increasing the scientific rigour of the construct’s empirical validation and offering practical guidance for its effectuation. By filling a foundational gap in a century-long history, this research allows the scientific grounding of empirical and theoretical advancements in CI and decision sciences. In practice, it enables igniting the virtuous cycle identified by Madureira et al. [30] in three ways. First, fostering a common language and offering an instrument that further clarifies the construct dimensions and descriptors creates a practical and rigorous blueprint for practitioners to successfully set up their CI functions and programmes in any organisation. Second, providing a scientific-grade construct validation and empirical feedback highlights the key issues scholars need to study in establishing CI science. Third, providing CI education with an empirically validated construct and instrument allows for developing a thorough curriculum that is de facto relevant to practice. Therefore, this study should effectively impact the practice, the theory and the education, elevating the CI science, contributing to the quality of managerial decision-making and improving the performance of organisations.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by national funds through FCT (Fundação para a Ciência e a Tecnologia), under the project - UIDB/04152/2020 - Centro de Investigação em Gestão de Informação (MagIC)/NOVA IMS