Abstract

Most research into online misinformation has investigated its direct effects—the impact it may have on citizens’ beliefs and behavior. Much less attention has been paid to how citizens themselves make sense of misinformation as a broader social problem. We integrate theories of narrative, identity, cultural capital, and social distinction to examine how people construct the problem of misinformation and their orientation to it. We show how people engage in everyday ontological narratives of social distinction. These involve making a variety of discursive moves to position one’s “taste” in information consumption as superior to others constructed as lower in a social hierarchy. This serves to enhance social status by separating oneself from misinformation, which is presented as “other people’s problem.” We argue that these narratives have significant implications not only for citizens’ vigilance toward misinformation but also their receptiveness to interventions by policymakers, fact-checkers, news organizations, and media educators.

Online misinformation is a significant problem globally. To date, most research has investigated its direct effects—the impact false or misleading information may or may not have on citizens’ beliefs and behavior. Much less attention has been paid to how citizens make sense of online misinformation as a social problem. In this exploratory study we examine how citizens construct the problem of misinformation and their orientation to it. We show how some people engage in what we term everyday ontological narratives of social distinction in relation to misinformation. Ontological narratives assemble and cohere the stories people tell about themselves and their experiences (Somers, 1994). While such narratives often animate interpersonal interactions, they are never merely personal but provide important connections to the public world of institutions, media, and politics. As such, ontological narratives play a key role in the formation of identity as people mobilize accounts of personal attributes and past experiences to make sense of public events. People fold this sense-making back into their everyday lives, in an ongoing, reflexive, and relational process (Somers, 1994). We argue that this process has significant implications not only for people’s vigilance toward online misinformation but also their receptiveness to anti-misinformation interventions by policymakers, fact checkers, news organizations, and media educators.

Our study aims to build new theory and expand the canvas of misinformation research. Empirically, we examine people’s everyday experiences of online personal messaging platforms. These technologies, such as WhatsApp and Facebook Messenger, are extremely popular in many countries and have become embedded in people’s lives. But they remain poorly understood by researchers, particularly when it comes to how misinformation spreads on them. Our data are from in-depth qualitative fieldwork we conducted in 2021 and 2022 with a sample (N = 102) of personal messaging users that roughly reflected the UK population on key demographic variables. The project is funded by the Leverhulme Trust. We focus here on one strand of the data concerning how people construct ontological narratives of social distinction to demonstrate their personal resilience to the online misinformation they encounter in personal messaging contexts and the broader media system. Among our participants, these narratives had common themes: the importance of a “good” upbringing; the acquisition of formal education; the possession of “natural,” “innate” personal qualities such as diligence; the cultivation of “savvy” media consumption routines; personal self-help and improvement over time; and, in some cases, a “critical outsider” orientation to science, public institutions, and professional news organizations. When it comes to misinformation, these everyday ontological narratives of social distinction enable some people to signal their social status by differentiating themselves from others in society they consider less resilient than themselves.

These narratives deserve attention for at least three reasons. First, they may undermine receptiveness not only to individual-focused digital literacy campaigns but also to broader efforts by public institutions, scientists, news organizations, and fact checkers to improve the epistemic bases of liberal democracies. Although the valorization of behaviors such as verification can build social norms that have a positive impact on combatting misinformation, the attribution of diminished social status to the “types of people” who need to improve their media literacy can stigmatize engagement with campaigns and participation in interventions.

Second, vulnerability to misinformation is multi-faceted, context-dependent, rooted in deeply held socio-political identities and cognitive biases, and often exploited by malicious illiberal actors skilled in leveraging digital affordances (Chadwick and Stanyer, 2022). While it is important not to exaggerate the threat of misinformation, the wide-ranging evidence suggests that most people are susceptible to online deception at least some of the time. Narratives that occlude this context need to be understood if we are to raise public awareness about the sophisticated and variegated nature of misinformation today.

Third, narratives of social distinction undermine anti-misinformation work because mitigating online harms is necessarily a collective social endeavor. It requires purposive social action in a range of settings including civil society and everyday interactions. As media systems transition from digital disruption to consolidation on an uneasy blend of centralized and decentralized information production and circulation, authoritative media and scientific organizations cannot do all the work in combating online harms. Misinformation that stokes class, ethnic, and religious divisions, causes mental health harms to vulnerable groups, or undermines public health and environmental protection policies ought to involve citizen responses in everyday settings. This requires decentralized participation in social endorsement and social correction, driven by a norm of collective, common purpose (Bode and Vraga, 2021; Chadwick et al., 2021). Narratives that valorize disengagement from the problem of misinformation, because susceptibility is discursively articulated with low social status and “other people’s problems,” undermine attempts to address online harms. When individuals distinguish themselves from others in whom they chiefly locate the problem of misinformation, they imply that challenging misinformation is not a common cause in which they need to play an active role but an issue that is only relevant for socially distant others. In sum, how people tell stories about their position in relation to misinformation matters for policy outcomes.

Conceptual framework: ontological narratives, cultural capital, and social distinction

To illustrate this in action, we adopt an inductive and interpretive research strategy. This enables us to unearth the social meanings of information production and consumption. Our approach adds to an emerging body of research that recognizes the importance of interpersonal and social relationship factors in the digital spread—and correction—of problematic content (Chadwick et al., 2023a; Malhotra, 2023; Masip et al., 2021; Tandoc et al., 2020). We contribute new thinking and evidence on the work that everyday narratives relating to misinformation do for social distinction and status.

Personal messaging as a distinctive misinformation arena

Personal messaging platforms have become wildly popular globally. WhatsApp has more than 2 billion users (WhatsApp, 2020). With 31 million users in the UK—about 60% of the adult population—it outstrips any of the public social media platforms. Facebook Messenger is also very popular, with 18.2 million UK adult users (OFCOM, 2021).

Studying personal messaging platforms is important not only because they are popular but also because they are unique communication environments. In addition to one-to-one chats, individuals join groups of varying sizes, organized around friendships, family, school, work, hobby, neighborhood, and diaspora relations. Yet misinformation may originate in the world of news and politics and then be exchanged in everyday conversations embedded in the high-trust, interpersonal contexts of family, friend, and community relationships (Chadwick et al., 2023a, 2023b). Recognizing how this unique context matters, we bring into focus how individuals relationally link their own experiences with the experiences of others. This relational nexus provides a fertile context for people to construct ontological narratives.

Everyday ontological narratives

As historical sociologist Margaret Somers has argued, ontological narratives are “the stories that social actors use to make sense of—indeed, to act—in their lives.” They are a source of identity, used “to define who we are” and “this in turn can be a precondition for knowing what to do” (Somers, 1994: 618, emphasis in original). Such narratives assemble and fix meaning in apparently disconnected historical experiences: “everything we know, from making families, to coping with illness, to carrying out strikes and revolutions is at least in part a result of numerous cross-cutting relational story-lines in which social actors find or locate themselves” (p. 607). In this way, ontological narratives are also future-oriented. They provide for “emplotment”: the transformation of events into episodes sewn together into a narrative plot that makes them consequential for individual agency. As Somers puts it, “ontological narratives make identity and the self something that one becomes” (p. 618, emphasis in original). Ontological narratives are guided by a system of normative values that assigns greater moral worth to some actors (Somers, 1994: 617).

Cultural capital and social distinction

Public awareness of the problem of misinformation has increased in the post-2016 era (Kearney, 2017; Mitchell et al., 2014). In 2021, the Reuters Digital News Report found that over half of their global sample were concerned about it (Newman, 2021). This awareness can be seen in the various uses and abuses of the term “fake news,” the deluge of reporting on conspiracy theories during the COVID-19 pandemic, and the widespread allegations of the Russian state’s fakery during the invasion of Ukraine. Identifying online misinformation is now often presented in news reports as essential for citizenship. This is most visible in the many “how-to” guides offered by news organizations and fact checkers. As Swart and Broersma (2022: 402) have shown, many citizens are becoming aware of the emergent “normative ideals of informed citizenship” they encounter in news reports about misinformation.

But whether and how these normative ideals interpellate citizens’ identities and are mobilized in everyday social relations has not been investigated. Some recent survey studies have revealed that the “third-person perception” (the tendency to perceive that media messages have a greater influence on others than on oneself) now extends to online misinformation (Corbu et al., 2020; Jang and Kim, 2018). This provides a descriptive measure of psychological perceptions, but not a framework to address the underlying social mechanisms or consider the broader social and policy consequences. Psychological motives such as “ego-enhancement” are drawn upon to explain the third-person perception (Yang and Tian, 2021), but these motives only acquire substantive meaning and impact in social contexts where some social practices are valorized over others.

How might emergent norms around information consumption and people’s awareness of them affect the way social value is ascribed to particular groups, and how does this interact with existing and newly-emerging systems of social value? How might this affect the ways people seek to understand and present themselves, and what are the consequences? One way of understanding this process is as a new augmentation of cultural capital. Bourdieu’s (1984) influential work on distinction argued that “taste,” in the form of credentials, cultural consumption, and personal dispositions cultivated by upbringing, acts as a marker of class, moral worth, and social position. Individuals come to internalize the meaning of cultural distinctions and how they relate to social classification. Certain tastes are ascribed greater social exchange value than others. Through performances of their cultural capital, including cultural practices and narratives, groups distinguish themselves from others in the social hierarchy and distance themselves from “distasteful” others (Lawler, 2005).

The concept of distinction has been used to analyze status within a wide range of cultural and social contexts (Ollivier, 2008). For example, regarding audience behavior, Friedman (2011) investigated the role cultural capital and related moral narratives play in how people construct their superior appreciation of comedy. Skeggs et al. (2008) illuminated how social groups ascribe different value to the practice of watching reality TV, which shapes how they distance themselves from that media genre.

Here we explore how people construct “responsible” and “respectable” orientations to misinformation. We show how people use narratives about their orientation to online misinformation to position their “taste” in information consumption and signal distinction from others they construct as lower in a social hierarchy. As our interview data indicate, this move serves to discursively enhance individuals’ social status by separating themselves from the problem of misinformation.

Research design, data, and method

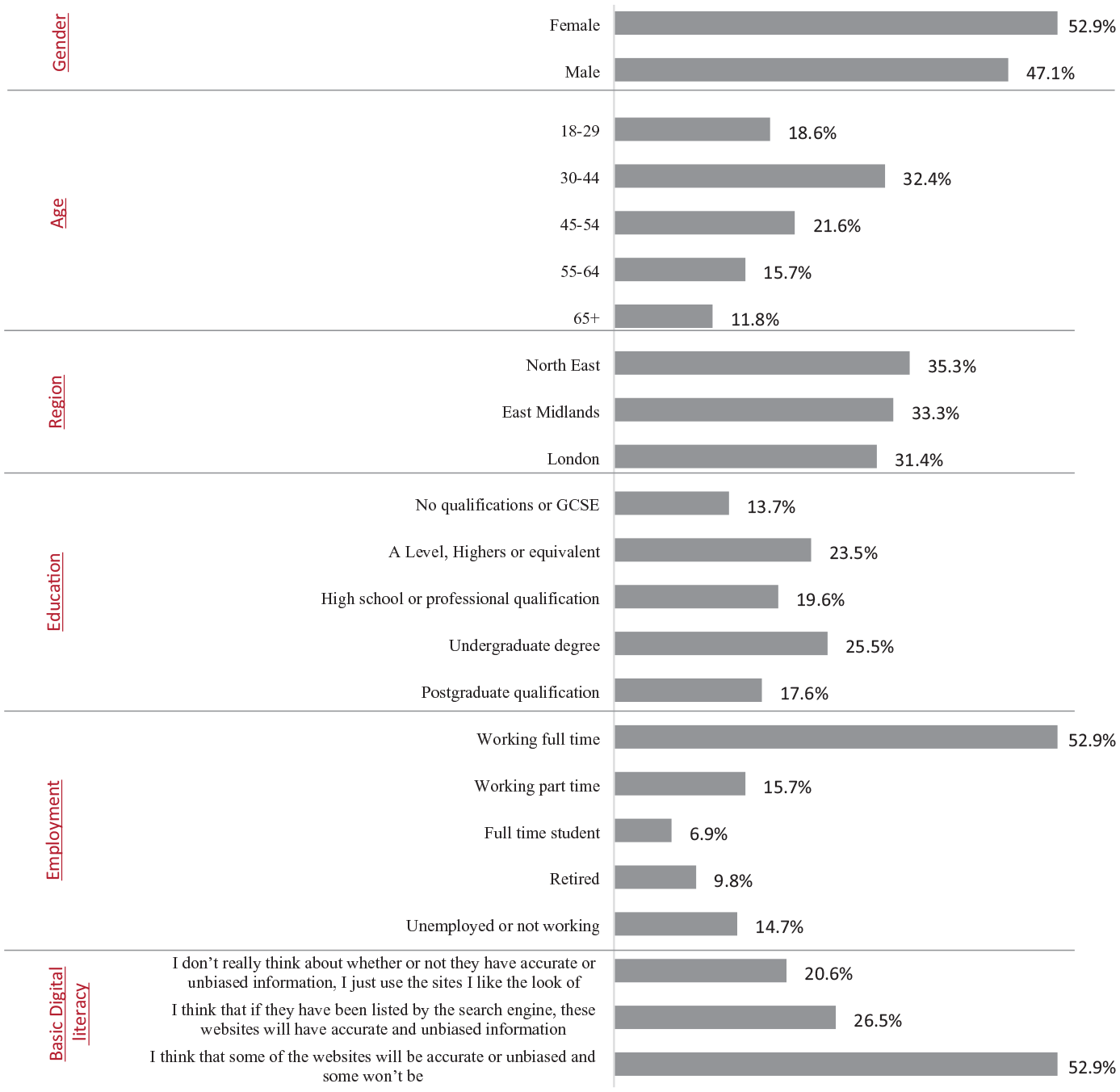

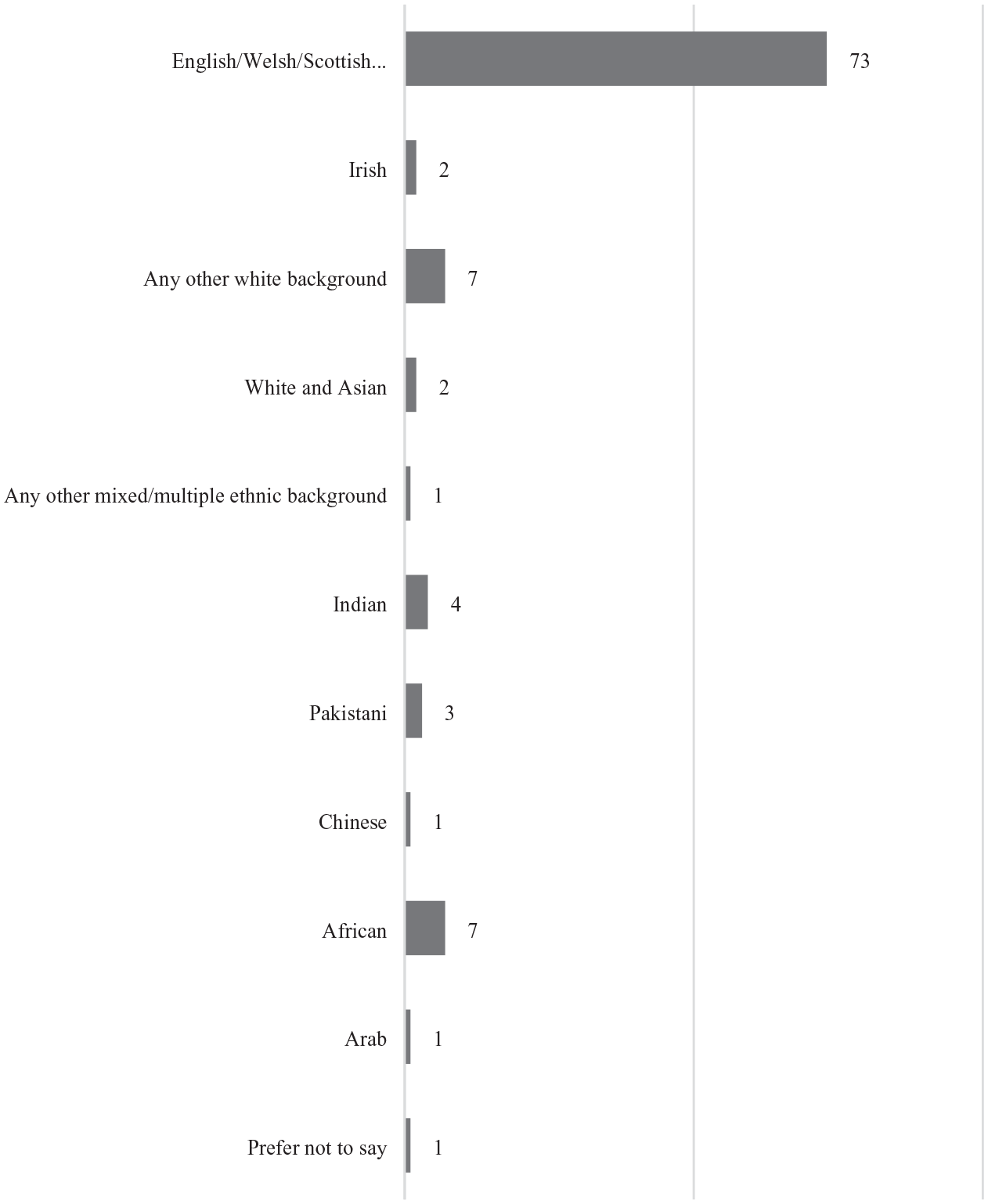

We conducted in-depth one-to-one interviews with a sample of the public whose characteristics roughly reflected key features of the UK population. To recruit participants, we used Opinium, a research company that maintains a panel of more than 40,000 participants. Our short recruitment questionnaire ensured approximate representativeness on gender, age, ethnicity, educational attainment, and basic digital literacy (see Figures 1 and 2), though we note that average educational attainment was moderately higher than the UK average. We recruited participants who used at least one of the following at least a few times a week: WhatsApp, Facebook Messenger, iMessage, Android Messages, Snapchat, Telegram, or Signal. While it is not possible with a sample this size to include a balanced representation of every geographical area of the UK, we used regional criteria to avoid over-representation of those residing in London and South-East England, from which it is often easier for survey companies to recruit participants. The three regions from which we recruited (London, the East Midlands, and the North East of England) have distinct local cultures and class profiles. This helped us include participants with a range of values and lifestyles.¹

Figure 1. Participant characteristics (N = 102).

Figure 2. Participant ethnicity breakdown (N = 102).

Interviewees granted informed consent and were compensated with a £35 payment for the first interview and £25 each for the second interview and the content uploads. Between the two interviews, participants could also engage in a series of optional, anonymized messaging content uploads using a customized smartphone application. Loughborough University granted ethical approval before fieldwork.

Our recruitment strategy allowed us to balance in-depth, interpretive analysis with covering the experiences of a reasonably representative group of people from a wide variety of backgrounds. To achieve the sample balance, we used six iterative recruitment and interview rounds on a rolling schedule for wave 1 from April to November 2021 (N = 102). We then recontacted participants and invited them to a second interview (N = 80, panel retention 78.4%). These ran from October 2021 to July 2022 and included discussion of the content 64 wave 2 participants voluntarily uploaded.

Procedure

As the COVID-19 pandemic restricted movement and in-person interactions, interviews were conducted on Zoom. They were semi-structured. We encouraged participants to speak in their own terms about matters they deemed important. We sought to capture everyday experiences and routines, how participants made sense of their social practices when using personal messaging, and how messaging layered into the texture of everyday life. We encouraged participants to check their smartphones during the interviews as an aide memoire. The pandemic inevitably loomed large as a theme, but we took care to tease out more general patterns; the 16-month duration of the fieldwork provided helpful variability of context. Interviewing most participants twice meant we could capture their experiences over time.

Analysis

Interviews lasted about an hour on average and were video recorded and fully transcribed. Participants’ content uploads were noted in the transcripts and NVivo was used to conduct emergent, interpretive coding (Corbin and Strauss, 1990). The principal investigator created the initial coding scheme. This was checked, discussed, and augmented by the other team members. In weekly team meetings we discussed coding consistency and added and refined codes over a period of about 12 weeks. We then moved to axial coding (Corbin and Strauss, 1990: 13) to explore intersections between themes. Finally, the selective coding phase focused on ontological narratives of social distinction as the central category and explored linkage with other themes in the data (Corbin and Strauss, 1990: 14). All team members agreed on the final coding scheme. Here we report the social experiences of some of the participants in detail to enable a rich, contextual understanding of the stories participants told us. This approach fits with our focus on narratives. We also include briefer testimony from some participants to further substantiate our claims. Interviews have been anonymized; all names are pseudonyms assigned by us.

Findings

Constructing binaries

A key theme in our interviews was the value of savvy information consumption. Certain participants constructed themselves as critically-minded, ever-skeptical individuals not vulnerable to being deceived by false information they might encounter. These participants claimed they set a high bar for how they established trustworthiness, painting a picture of constant caution.

For instance, Anthony, a part-time lecturer in his 60s living in London, told us, “I think my own nature is not to trust anything without looking at it first, so I wouldn’t implicitly trust anything even very trusted friends sent me or, you know, even if my partner sent me [something], I’d read it critically.” Anthony’s use of the term “my own nature” reflects a view of this critical thinking ability as an innate personality trait. This was echoed by Christine, an East Midlands resident in her 50s, who said that unless content was sent by her youngest daughter who had “three master’s degrees,” her first reaction would be to go and verify it herself: I think I’ve always been somebody who, you know, I don’t read something and necessarily immediately believe it. [. . .] I’ve always been quite questioning. [. . .] I wouldn’t just read a message that somebody had sent me [. . .] and think “oh, [. . .] it was The Huffington Post, so it must be true.” [. . .] If I was interested, I probably would go and look at different sources of information. [. . .] I wouldn’t have just forwarded that straight on. [. . .] I’m naturally a suspicious person, I think. (Emphasis added)

Christine is not only making claims about her own “naturally” suspicious attitudes and information consumption behavior; she is also constructing normative assumptions about desirable behavior. One should not make immediate judgments based on biases, she claims, but refer to a variety of sources to gain a balanced view. Christine acknowledges the importance not only of taking care when interpreting received content, but also of taking responsibility for verifying the accuracy of information before sharing it with others, which she says comes “naturally” to her. She claims to set a high bar for trustworthiness; only content sent from her most educated daughter qualifies, signaling how these narratives sometimes implicate educational credentials, a long-standing marker of cultural capital.

Christine’s comment also refers to the imagined behaviors or attitudes of others who take information at face value and implicitly trust particular sources. Her ontological narrative constructs a binary between herself and these others who lack media literacy or are naïve. We also saw this in Tahira’s case. A volunteer worker in her 40s living in the East Midlands, Tahira described herself as “a skeptical person” and said she felt others saw her this way too. She spoke about exchanges with a friend over Facebook Messenger at the beginning of the pandemic. This friend was relaying false claims from personal messaging about the relationship between COVID-19 and 5G technology. In contrasting her approach with her friend’s, Tahira revealed her negative view of “people” whose naivety in evaluating and sharing information she characterizes as worthy of ridicule: I’m more of a sort of, [. . .] if I read something on Facebook, I’ll go and look at like [. . .] fact-checkers [. . .] or I’ll go look at verified news like BBC, [. . .] and she was, [. . .] you know, saying she read [something], or this and that, and I’m thinking “well that’s not true.” [. . .] People send me stuff [. . .] like “why you shouldn’t use fluoride in toothpaste” [. . .] and I watch[ed] the video and I thought “that is totally ridiculous.” [. . .] I don’t want to sound like I’m looking down on people, but I think “how can you believe something so ridiculous?” [. . .] I just kind of think how naïve they are. (Emphasis added)

Tobe, a first-generation migrant in his 40s who had been living and working in London for several years, also distinguished himself from others based on his approach to media consumption. However, in his case, the narrative he constructed juxtaposed himself not just with certain naïve individuals with whom he was in touch, but with broader society. Tobe felt that his degree in law, together with his avid news consumption through the BBC, Sky News, and Twitter, equipped him with critical-thinking skills and an open-mindedness that others, regrettably, did not possess: I have a critical view of everything and have a rational view and [. . .] I don’t take things on the face of it, I’d say. I’ll always ask “what’s the reason for this?” [. . .] and I know this is lacking in society now, because I think a lot of people don’t want to take responsibility for their actions, so they tend to just form an opinion based on, you know, one very narrow view, but if you pull a lot of information together you’ll be able to form an opinion that is your own. And then you won’t point to someone else and say “Oh, but I heard it from you, you told me to go and attack the Capitol,” you know [chuckles]. [. . .] Because, I find that society these days, they tend to do that. (Emphasis added)

Tobe constructed a moral opposition between himself and broader society. Not only were others generally too trusting, they also lacked the skills required to “pull together” information and think independently, and this could result in harmful and even violent actions, such as participating in the January 2021 attack on the US Capitol. These naïve individuals are represented not just as uncritically accepting what they read online, but as failing in their personal responsibility.

Narrating—and responding to—the media trust crisis

In this comment, Tobe echoed depictions in news media of those who participated in the Capitol attack as influenced by conspiracy theories, as enshrined in the prominence of images of the now infamous bull-horn-wearing, spear-flag-wielding “QAnon shaman,” Jake Angeli, who was imprisoned for a felony in relation to the attack (BBC News, 2021). Such references to public media reports about misinformation in participants’ interviews reflect their awareness of the problem and the normative value such awareness is ascribed. Among our participants, there was a strong awareness of the trust crisis engulfing public debate. Like Tobe, other participants identified key political moments and issues that exemplified this trust crisis, particularly the 2016 EU Referendum (“Brexit”) campaign in the UK, Donald Trump’s presidency in the US, and the early period of the COVID-19 pandemic. Understanding the problem as going far beyond social media, many participants spoke of news “agendas” and acknowledged the problem of commercial interests driving news media organizations to adopt problematic tactics such as clickbait. British tabloid newspapers, particularly The Daily Mail and The Sun, which are partisan, right-wing outlets with a historically fraught relationship with truth (Jewell, 2014), were viewed with the most suspicion, and were often described in terms of “sensationalism” (Abigail, F, 70s, East Midlands) or as having “a bad reputation about being unreliable” (sic) (Bianca, F, 30s, East Midlands). However, to those who saw themselves as savvy information consumers, such as Tammy (F, 20s, North East), “no newspapers are perfect,” and skepticism extended to all news media.

Participants often narrated the unreliability of news media as a recent phenomenon, signaling awareness of the shifting status of critical information consumption. For instance, Christopher, a retiree in his 70s living in London, said, “increasingly and particularly over the last five years, you know, the sort of false news scenario, it’s just more and more and more.” Christopher went on: Just because it’s written by a journalist in a magazine or a newspaper, it doesn’t mean 100 per cent it is certainly correct. [. . .] I think I’m sort of savvy enough to say, if I was going to pass that on or do anything as a result of it, I would check it independently. (Emphasis added)

Christopher contrasted his outlook with the approach he said others took: “no doubt there will be people who would see it and believe it straight away and pass it on.” However, he also told of how his personal qualities had enabled him to adapt to the changing context over time: “I sort of worried quite a lot [but] I’m not concerned [any longer]. As I say, I take things with a pinch of salt and I like to think eventually I can work out whether something is correct or not.” In this way, Christopher distanced himself from the problem of misinformation because, as one of the savvy media consumers, he does not envisage being duped.

This resonated with a comment by Evan (M, 50s, East Midlands) who said when others shared misinformation it did not affect him personally: It wouldn’t bother me in the slightest because [. . .] whatever videos or links to things, I don’t have to actually believe it. I can look at it and decide for myself what I think of the article or the video. If I don’t particularly think it’s accurate, I’ll just dismiss it.

Another participant, Miles (M, 60s, London), was similarly confident but specifically justified his attitude based on the platforms he used: I’m not familiar enough with the sort of fake news syndrome, because I don’t use Facebook, or, you know, particularly, I don’t get dragged into that sort of miasma of reinforcing one’s opinion because of what you said, or whatever. [. . .] I don’t listen to people that I don’t know, really. But they have no access to me, and I don’t have any access to them, so it’s immaterial.

This perspective reflects the way public social media platforms such as Facebook, YouTube, and TikTok were frequently characterized by participants as arenas for problematic information, in contrast with personal messaging platforms, to which they saw the problem of misinformation as less relevant because they used them to connect with trusted others who were like themselves. Because Miles chose not to use Facebook, he saw the issue of misinformation as “immaterial” to him. Although misinformation does of course spread on personal messaging (Badrinathan and Chauchard, 2023; Chadwick et al., 2023a), Miles does not acknowledge this possibility. On personal messaging, he is able to limit his information sources to people he “knows,” and this is part of his ontological narrative—how his personal choices in his everyday relationships intersect with the public world outside in ways that, to him, enhance his status in both. He dismisses the possibility that misinformation might enter his world.

“Responsibility,” class, and status

The “miasma of reinforcing one’s opinion” Miles mentioned is a nod to the discourse of “echo chambers” that has become central to much news coverage and commentary on misinformation. Being information savvy means not falling into the supposed echo chamber trap, as Ken (M, 40s, London) also highlighted: As far as social media goes, I’m quite distrustful of those channels as reliable sources of information. [. . .] There’s a sort of like, I think the expression is “echo chamber,” so depending on where you are in the spectrum, you’re going to be gravitating towards the groups that it seems to be, that reflect your views and so on. I know where I am on the political spectrum, [. . .] but I really like to take my views from everywhere—right across—and I don’t like to have something that continually reflects a particular perspective. [. . .] You’ve got to actually have a responsibility to think it through, [. . .] try and have some sort of balanced trawl of the sources that you’re using rather than just relying on [Nigel] Farage or whatever else. [. . .] That requires a lot of investment which people aren’t prepared to make. (Emphasis added)

Ken attributes some responsibility for the spread of misinformation on social media to echo chambers, but only among other people. Importantly, he has built a narrative that presents echo chambers as resulting from other people’s lack of “investment” in balancing their information diets. By contrast, he sees himself as able to rise above his own political views and potential biases, because he thinks carefully and takes responsibility. Alongside his mention of some of his friends, Ken’s reference to Nigel Farage, arguably the UK’s most prominent Brexit campaigner and right-wing populist (Mondon and Winter, 2019), hints at another group in which he locates the problematic behaviors from which he distinguishes himself. The reference to Brexit is significant because of the widespread depiction in liberal media of Brexit supporters as emotional, irrational, racist, ignorant, or naïvely misinformed (e.g., Carl et al., 2019). In this way, while participants demonstrated awareness of the social problem of misinformation, their ontological narratives of social distinction located this problem in an othered group.

In that same vein, educational attainment—a well-known marker of cultural capital frequently, but subtly, tied to class in the UK—was often referenced when participants were asked to account for the difference between their media literacy skills and those of others. For instance, Ben (M, 30s, East Midlands), said: I think, without sounding too bad, just level of education seems to be a common theme, or like someone’s class. Well, that sounds really bad, but I think, a combination of that kind of thing, their kind of, what they do, their kind of family background. [. . .] If you are a bit more educated, you’re more likely to see through and be able to make your own judgments.

Ken also referred to class when discussing how his upbringing contributed to his taste in news consumption, separating himself from those he considers as lacking responsibility: I was brought up by my grandparents and [. . .] although he was a builder and she was a school cook, they’d get The Times² every day. [. . .] We’re all products of our environment and, for me, getting news from reputable news sources was always, you know, fundamental to how I collect it and interpret it. (Emphasis added)

Here, Ken’s grandparents’ sophisticated news behaviors existed despite their jobs and were passed on to Ken through his good upbringing. For Ken, the domestic environment that formed him, where news consumption was valorized, is assigned great social status and extraordinary social power. So much so that the “responsible” approach to news media consumption instilled in him serves in his ontological narrative to override other potential barriers to status and distinction that might conventionally be linked with his working-class background.

From skepticism to conspiracism

Janet is in her 60s, living in London. She compared what she presented as her own innately skeptical nature with the gullibility of her brother and neighbor: I’m worried about [my brother] because he’s a little bit more gullible than I am, you know. It’s like my neighbor, they believe everything they see online. I have to keep telling people, “Not everything on YouTube is true. Just take it with a pinch of salt or do your research and find out who’s behind this.” [. . .] I’ve always had a curious mind. I’ve always wanted to dig deeper into things. I’m always skeptical. I don’t always believe what people tell me. I question things. I’ve been like that since I was a child. (Emphasis added)

Based on this part of Janet’s testimony, one might assume she easily dismissed misinformation. However, in her initial interview Janet shared her belief in false claims about the COVID-19 vaccines’ long-term side effects, the inadequacy of their clinical trials, and their ingredients, which she told us included (in her words) “DNA from chimpanzees” and “cells from aborted fetuses.” The information Janet endorsed was in fact false.

Indeed, in her follow-up interview Janet further revealed that she believed in conspiracy theories such as the New World Order. This contrasted with her first interview, in which she pre-emptively deflected characterizations of herself as a conspiracy theorist when discussing the COVID-19 vaccine with statements such as: I mean there’s a lot of the conspiracy theories behind it that, you know, we’re going to grow two heads or what-have-you, that something’s going to get into our DNA and change our DNA, or it’s connected to 5G. I put all that to one side, because I think that’s just got out of control. So, when some people say “oh you’re just a conspiracy theorist,” it’s not that. It’s neither for the vaccination nor against it. I’m just a person who’s looking for accurate information.

Janet drew a further distinction, this time between herself and “out of control” conspiracy theorists. She was aware of the negative media coverage of conspiracy theories brought to the fore during the pandemic and was careful not to diminish her social status by allowing herself to be associated with this group. Janet thus simultaneously distinguished herself from both the uncritical “followers” of “mainstream media” and the uncritical consumers of conspiracy theories. Janet’s case was rare within our data: few participants expressed overt conspiracy theory beliefs. However, her comments demonstrate how an individual’s ontological narrative can stretch to the point that it conveys social distinction while directly contradicting the reality of their information consumption.

Justin represents another example of this contradiction. A delivery driver in his 30s in the North East of England, he also distinguished himself from others: “I think I can see through it better than other people. [. . .] Some other people don’t even think to question, [. . .] whereas I’d be more from the mantra of ‘question everything,’” he said (emphasis added). Justin also distrusted the COVID-19 vaccines. This attitude was reflected in the material he uploaded to us for our project, which included two pieces of false COVID-19-related conspiracist misinformation he had marked as “accurate and helpful.” One was a social media post, shared by his brother via personal messaging, that falsely claimed that the vaccines were connected to widespread sudden deaths of children.

Some of the anti-vaccination material Justin had seen had been posted in a WhatsApp group by close friends who had recently become engaged with conspiracy theories. However, Justin distinguished himself from these friends, using an ontological narrative of personal improvement over time: I personally try and stay away from it, because I’ve previously been quite into some conspiracies, and I was ahead of the curve, whereas there’s people who are in the group now who are bang into that way of thinking, whereas I’ve come through the other side of it now. [. . .] You can’t look at everything like it’s a conspiracy, cos some of them turned out to not be.

Although Justin still believed in some conspiracy theories, he distanced himself from his conspiracy-theory-believing friends whom he presented as naïvely and obsessively delving into every theory. One of Justin’s closest friends had been espousing conspiracy beliefs about the invasion of Ukraine, claiming that videos depicting Russian brutality were staged and circulating videos supposedly providing evidence of war crimes by Ukrainian soldiers. Justin purported to be able to see through these claims. He despaired of his friend: “He should know better. [. . .] He should see where I am with it.” In this way, Justin positioned himself as a bearer of cultural capital and social distinction within this conspiracy theory community. He did so through an emancipatory narrative, tracing his trajectory from being “ahead of the curve” of conspiracy thinkers to subsequently splitting from this community while preserving his social status within it and beyond, among the non-believers.

Justin’s and Janet’s cases were outliers in our data, but they demonstrate how the ontological narrative of distinction can stretch to encompass conspiracy beliefs and mask actual vulnerability to misinformation. The cases reveal an important tension. A strand of research highlights how the discourse of “doing your own research” has become prevalent among online conspiracy theory communities. This can lead to what Buzzell and Rini (2022) have termed “epistemic superheroism”: an unfounded sense of superiority that masks the realities of susceptibility. Conspiracy theories offer followers a feeling of uniqueness or superiority, even if they are stigmatized in broader society (Harambam and Aupers, 2017). The high value placed on skeptical information consumption means that, for some, it is possible to circumvent the stigma of being a conspiracy believer while still professing to “question everything,” and to further spread misinformation in the process.

Conclusion

Drawing on insights from theories of narrative identity (Somers, 1994) and cultural capital (Bourdieu, 1984), we have explored how increasing awareness of misinformation means that signaling skeptical information consumption can become a new marker of social status. Using relationally contextualized interpretive data from interviews with online personal messaging users, we have shown how some individuals try to define themselves as savvy and others as naïve.

In the examples we have discussed, participants associated negative connotations with being the sort of person who is deceived by misinformation. To avoid diminishing their social status by being attributed this poor “taste” in information consumption, participants sought to distance themselves from the problem. The result was that they constructed misinformation as a problem for others out there, not relevant to themselves and their everyday lives and social relationships. On messaging platforms, where content moderation and oversight are impossible, combatting misinformation relies on individuals’ agency and sense of responsibility. To the extent that users perceive themselves as information savvy and these platforms as trusted spaces where they can safely lower their critical guard, problematic content may go unnoticed and unchallenged.

This is a complex social terrain for those seeking to involve citizens in the fight against misinformation to navigate. On the one hand, the attribution of high status to critical information consumption is heartening; these attitudes and behaviors are important to combatting misinformation. On the other hand, when combined with narratives emphasizing one’s difference from naïve others, they also have important downstream implications for anti-misinformation interventions, particularly those based on encouraging social correction. If misinformation is perceived to be a result of individual naivety and lack of diligence, rather than a society-wide threat to which we are all vulnerable and which we should all help to combat, journalists, fact checkers, and civil society organizations may face difficulties reaching those who, for reasons of social distinction, seek to distance themselves from the problem and tune out attempts to combat it. It has previously been demonstrated in related contexts that stigma and class-based cultural boundaries can be barriers to campaigns reaching particular groups (e.g., Wachs and Chase, 2013; Wang, 1992), and raising awareness may fail to impact attitudes and behaviors of those who do not see themselves as “part of the problem” (Boring and Philippe, 2020).

If people disassociate themselves from the problem because susceptibility has negative status connotations, and they construct others as unwilling or helplessly unable to avoid deception, they may be reluctant to engage with much-needed misinformation correction in everyday interactions. In the context of recent threats to liberal democracy, social cohesion, and public health, combatting misinformation inevitably involves decentralized social and collective behavior (Bode and Vraga, 2021; Kligler-Vilenchik, 2022) and depends on engagement from broad sections of society. The more people wash their hands of this problem, even if they simply “live and let live” as one participant, Kurt (M, 40s, East Midlands), advocated, the harder it will be to tackle misinformation on a societal level.

These ontological narratives of social distinction could also lead to greater complacency and a failure to recognize one’s own susceptibility. Online misinformation is variegated and often sophisticated. No individual is capable of verifying everything they encounter, nor is an imagined innate intuition sufficient for identifying all forms of deception. Restricting one’s social media diet to personal messaging does not render one immune either, as misinformation can and does spread on these platforms including between trusted contacts (Andrey et al., 2021; Badrinathan and Chauchard, 2023; Chadwick et al., 2023a, 2023b).

In 2022, a UK Office of Communications study found that “although social media users were highly confident that they could judge the validity of online content, most did not spot the valid indicators of a genuine social media post” (OFCOM, 2022: 13). We saw evidence of this in our interviews, with some participants professing to never implicitly trust content and always being sure to “check it myself” (Farhan, M, 50s, London) while also admitting to experiences of forwarding content they later discovered to be false. In fact, the most confident individuals tend to be proportionately more likely to overestimate their abilities (Dunning, 2011; Motta et al., 2018). And, as Janet’s and Justin’s cases above demonstrate, a potential consequence of distinguishing one’s savvy self from naïve others is falling prey to conspiracy theory beliefs. This is consistent with recent work that finds knowledge over-confidence is associated with misperceptions on issues such as climate change, vaccination, and evolution (Light et al., 2022). Some who mobilize ontological narratives of social distinction might be more vulnerable to misinformation than they would otherwise be (Buzzell and Rini, 2022).

The kinds of discursive moves we have identified in this study deserve greater attention. Future research could investigate their consequences in more detail, including whether they could become a significant long-term barrier to reducing online harms.

Footnotes

Acknowledgements

We thank our project’s participants. Our thanks also go to participants in the American Sociological Association Communication, Information Technologies, and Media Sociology Section’s Symposium at the American Sociological Association Annual Meeting in August 2022, and to participants at the International Communication Association Annual Conference in Toronto in May 2023, where we first aired the ideas in this article. We would also like to thank the anonymous reviewers, and Dr Brendan Lawson for his helpful feedback on an earlier version of this paper.

Author’s note

Cristian Vaccari is now affiliated to The University of Edinburgh, UK.

Declaration of conflicting interests

We have no conflicts of interest to disclose.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: this research is supported by a Leverhulme Trust Research Project Grant (RPG-2020-019).