Abstract

This article draws on the framework of “folk theories” to analyze how people perceive algorithms in the media. Taking algorithms as a prime case to investigate how people respond to datafication in everyday media use, we ask how people perceive positive and negative consequences of algorithms. To answer our question, we conduct qualitative thematic analysis of open-ended answers from a 2019 representative survey among Norwegians, identifying five folk theories: algorithms are confining, practical, reductive, intangible, and exploitative. We situate our analysis in relation to different applications of folk theory approaches, and discuss our findings in light of emerging work on perceptions of algorithms and critiques of datafication, including the concept of digital resignation. We conclude that rather than resignation, digital irritation emerges as a central emotional response, with a small but significant potential to inspire future political action against datafication.

Algorithms are integral to media experiences, routinely encountered when navigating social media newsfeeds, targeted advertising, streaming services or personalized media. Algorithmic media draw on the systematic exploitation of user data often referred to as datafication, that is “the requirement, not just the possibility, that every variation in the texture of human experience be translated into data for counting and processing” (Couldry, 2019: 11; also Mayer-Schönberger and Cukier, 2013). Perceptions of algorithms are therefore a suitable case to study how datafication is experienced in everyday media use.

Gaining a deeper understanding of user experiences is essential as datafication produces complex and potentially problematic outcomes in society. Concerns have been raised about lacking accountability systems for regulating platforms (Poell et al., 2018), reinforcement of bias and oppression (Eubanks, 2017; Milan and Treré, 2019; Noble, 2018), or normalization of surveillance and resulting cynicism affecting the infrastructure of the public sphere (Zuboff, 2019). The potential consequences for media’s role in democracy, and for democratic society in general, are grave. To better understand such consequences, we need insights into how users interact with structures of datafication (Vaughan-Williams and Stevens, 2016), to study everyday experiences with data (Kennedy, 2018; Livingstone, 2018) and advance a social critique of datafication (Couldry, 2014). A central justification for analyzing users is that “a perspective on audiences and their sense-making allows for acknowledging the nuances of people’s agency beyond its metrification into computable data” (Mollen and Dhaenens, 2018: 44; also Kennedy and Hill, 2017).

In view of the gloomy prospects brought forward by datafication, concepts such as surveillance realism or digital resignation have emerged to characterize emotional and political attitudes, especially toward issues of privacy in datafied society. Surveillance realism is defined as a “lack of transparency and knowledge in conjunction with the active normalization of surveillance through discursive practices and institutional sanctions” (Dencik and Cable, 2017: 777), while digital resignation emphasizes that “feelings of resignation are a rational emotional response in the face of undesirable situations that individuals believe they cannot combat” (Draper and Turow, 2019: 5). But do these concepts grasp user reactions to datafication, and if so, are feelings of resignation more generalized than specific privacy issues? Taking algorithms as a prime case to investigate how people perceive datafication in everyday media use, we ask what people believe algorithms do. Are we right to assume that people feel algorithms to be opaque and unavoidable? Are algorithms considered mysterious, predictable – or useful? How do people perceive positive and negative aspects of algorithms?

To answer these questions, we have conducted qualitative analysis of open-ended answers from a 2019 representative survey on media literacy in Norway (N = 1363), asking respondents to describe in their own words positive and negative aspects of algorithms in the media. Our analytical approach builds on budding work on “algorithmic imaginaries” (Bucher, 2017) and decoding and hermeneutics of algorithms (Andersen, 2020; Lomborg and Kapsch, 2019). We draw inspiration from the field of Human–Computer Interaction, which studies these issues through the lens of folk theories (e.g. Eslami et al., 2016). But where this latter body of work focuses on improving software design, our aim is to feed into the critical discussion of user agency in response to datafication (Kennedy et al., 2015; Ytre-Arne and Das, 2020).

As such, the article adds a user perspective to discussions of political-economic and regulatory dimensions of datafication, while methodologically bolstering previous empirical user studies that have primarily been based on small-N interview data, or self-recruited surveys typically among students. Our selection of a small European case country also broadens the empirical scope, so far dominated by Anglo-American perspectives, while the Norwegian case might represent user practices associated with overall high ICT penetration. Theoretically, we further develop the framework of folk theories to advance conceptual understandings of user responses to datafication.

First, we review research on user perceptions of algorithms, before zooming in on and outlining our approach of “folk theories.” We discuss the survey material we draw on, and subsequently present our analysis in the form of five folk theories. We conclude that rather than resignation, digital irritation emerges as central to user perceptions of algorithms.

Understanding user experiences with datafication and algorithms

While structural concerns have been prominent in academic debates on datafication (Dencik and Cable, 2017; Draper and Turow, 2019; Van Dijck, 2014), some research has emerged that investigates user perspectives on datafication in general, and algorithms more specifically.

A recent meta-analysis of audience research highlights the need to understand experiences with intrusive media, a tendency accentuated by datafication (Das and Ytre-Arne, 2018). The idea of intrusive media refers to a dynamic between interfaces and users, in which experiences of technologies as pervasive and exploitative are reacted to with self- and technology management, as users seek to appropriate digital media to personal or collective needs (Mollen and Dhaenens, 2018: 52). More precise insight into user understandings of how technologies work is central to investigating the potential of such strategies.

Concerning algorithms specifically, a small number of studies have analyzed people’s perceptions. In media and communication research, a key contribution is Bucher’s (2017) study of how algorithms make people feel. She used tweets and follow-up interviews to understand “algorithmic imaginaries,” defined as “the way in which people imagine, perceive and experience algorithms and what these imaginations make possible” (Bucher, 2017: 31). With a similar interest, Lomborg and Kapsch (2019) interviewed a group of media users about how they obtain knowledge about algorithms, what they understood algorithms to do, and how they responded to them, in a framework developed from Hall’s (1980) Encoding–Decoding model. Andersen (2020) also fruitfully applies theories of interpretation to the understanding of what it means to live with algorithms, by developing a hermeneutics perspective suited to capture culturally and historically situated processes of interpretation. Some studies have analyzed user experiences with algorithms in specific contexts: recommendations in streaming services (Colbjørnsen, 2018; Siles et al., 2020) or news selection in social media (Fletcher and Nielsen, 2019). The latter study formulates a key finding of “generalized skepticism” that users feel toward any selection of news, enabling people to be critical toward functions of algorithms that they do not understand (cf. Ruckenstein and Granroth, 2019 for a corresponding approach focused on advertisements and surveillance).

Within the field of Human–Computer Interaction (HCI), related work is emerging that employs “folk theories” as a concept to study how people understand algorithms and personalization tools. This concept is also applied by Siles et al. (2020) in their study on Spotify recommendations, arguing for its relevance to discussions of user agency in critical data studies. We will discuss folk theories as a framework below, but note that the HCI studies produce findings similar to some of the communication studies already mentioned. For instance, Eslami et al. (2016) did a qualitative laboratory study finding that around half of their informants did not seem to understand algorithms, but also formulating 10 folk theories of how people understood automated curation to work. Rader and Gray (2015) studied how people make sense of their Facebook newsfeed, with data from a non-representative self-recruited population of Facebook users in the US. Similar to Eslami et al. (2016), Rader and Gray argue that the users mobilized a range of theories to explain the behavior of the feed: Some viewed it as a force of nature – a “flow” or “flood” – with little ability to change its course, while others displayed sophisticated ideas of informal reverse engineering.

Across the different studies, we find some shared tendencies: First, users’ sense-making of algorithms appears as an interpretative process rather than a question of mere technical understanding. Second, emotional reactions merit attention, as different contributions underline ambivalent feelings and contradicting experiences with datafication and algorithmic media, bringing out how we all have more or less implicit and imprecise notions about ourselves and the world we inhabit – and that these are valuable to analyze. To further systematize such understandings, we suggest that the framework of folk theories is particularly helpful. We will outline its conceptual roots to substantiate its relevance.

Folk theories as a framework

The notion of “folk theories” is an approach to analyze the understandings that people draw on in everyday life. In contrast to studies of how people actually use media in everyday situations, or analyses of interpretations of specific messages, a folk theory approach centers on revealing the conceptions people hold of how the media works – that is, their theories.

Cognitive psychologists call implicit and imprecise everyday notions “intuitive theories” and argue that these “organize experience, generate inferences, guide learning, and influence behavior and social interactions” (Gelman and Legare, 2011: 380). Examples differ: Intuitive theories about plants and animals are referred to as “folk biology” (e.g. Atran, 1998), while the “accessible, appealing ideas assuring people that they live under an ethically defensible form of government that has their interest at heart” can be said to constitute a folk theory of democracy (Achen and Bartels, 2017). Nielsen (2016: 840) develops the notion of folk theories of journalism to grasp “actually existing popular beliefs about what journalism is, what it does, and what it ought to do,” and situates this understanding of folk theories in light of culture as “toolkits of symbolic resource from which we can construct strategies for action” (842). Vaughan-Williams and Stevens (2016) use the label “vernacular theories” in their study of public security issues. These applications all share a recognition of the value such intuitive notions have for people, and how useful it is for research to understand them. 1 The perceptions that inform folk theories can be fleeting, inconsistent, only partly acknowledged or articulated, based on limited or misleading knowledge, and revised and developed over time in interchange with a range of experiences. Nevertheless, folk theories are integral to the foundations that users build on when interacting in media environments.

While analytical approaches seeking to identify “folk theories” or “imaginaries” could be similar, a distinction between the concepts lies in the scientific connotations of the word “theory,” hinting toward models or principles intended to hold up in the face of various empirical realities, even though this is certainly not always the case, and even though these theories are not necessarily coherent or formal (Nielsen, 2016). Toff and Nielsen (2018) argue that folk theories differ from algorithmic imaginaries by being rooted in experience, rather than mapping abstract explanations of how technology works (p. 639). However, this distinction appears to build on a fairly narrow reading of the imaginary concept as employed by Bucher (2017). In our understanding, folk theories of how media work are not necessarily abstract, but rooted in everyday experience as emphasized by Toff and Nielsen (2018). We further follow Gelman and Legare (2011) in highlighting the value of folk theories not just in guiding behavior, but also in making sense of experiences, generating inference and steering learning about the world. Our reason for analyzing folk theories as opposed to imaginaries lies primarily in the more extensive conceptual development of folk theories through applications in cognitive psychology, media research, and, in the case of algorithms, HCI.

As mentioned, HCI studies have fruitfully applied folk theory frameworks to understand perceptions of algorithms (Eslami et al., 2016; Rader and Gray, 2015; also French and Hancock, 2017), underlining the value of user perspectives and producing useful typologies. DeVito et al. (2017) separate “operational theories” of algorithms, which describe certain aspects of what the algorithm does, from “abstract theories” which are more generally mourning what was lost in a change. The latter “do not include specific attempts to theorize how an algorithm might actually operate. Instead, they rely on a more general sense that an algorithm is something that will, in turn, cause something to happen” (DeVito et al., 2017: np). The distinction between operational and abstract theories is useful, but the co-existence of these within one specific study underlines that users could blend them together. This signals a need to be open to both general and specific aspects of algorithms, which relates to a question raised by Fletcher and Nielsen (2019) on whether user interaction with algorithms is more or less context-specific. As we do not yet know if there are shared reactions to algorithms across a range of situations, we find that further research on perceptions of algorithms across contexts is called for. In this respect our study differs from, for instance, Siles et al.’s (2020) specific analysis of folk theories of Spotify recommendation algorithms.

Many of the HCI studies we have mentioned make no attempt at situating user experiences within a broader context of intrusive media. Our aim is to analyze folk theories of algorithms from a user perspective, across contexts of everyday media use, and bring these insights to the scholarly critique of datafication. In order to do so, we argue, it is important to take two steps methodologically: Moving beyond small-scale qualitative interview studies or non-representative survey data, and beyond a US focus to other social contexts.

Methods

Our analysis is based on data from a representative media literacy survey of the adult Norwegian population. The survey was conducted by Kantar TNS on behalf of the Norwegian Media Authority, and was in the field in early March 2019 (N = 1363). Respondents were 16 years and older, selected to form a representative sample and approached through e-mail to answer a web-survey. The data was weighted based on age, gender, and educational level. Our data thereby facilitates a varied intake of opinions, as well as insight into variations concerning demographics and media use variables. The Norwegian case expands empirical knowledge in debates on datafication that are often implicitly or explicitly based on Anglo-American settings, and raises issues of contextualization (as discussed by Boczkowski and Mitchelstein, 2019; Siles et al., 2020). Our case country Norway is a small, affluent, relatively egalitarian Northern European democracy, ranking high on international comparisons of ICT penetration (Syvertsen et al., 2014). Survey data from 2018 show that 79% have access to a tablet computer, 95% have access to a smartphone, and 98% have internet access at home (MedieNorge/SSB, 2019).

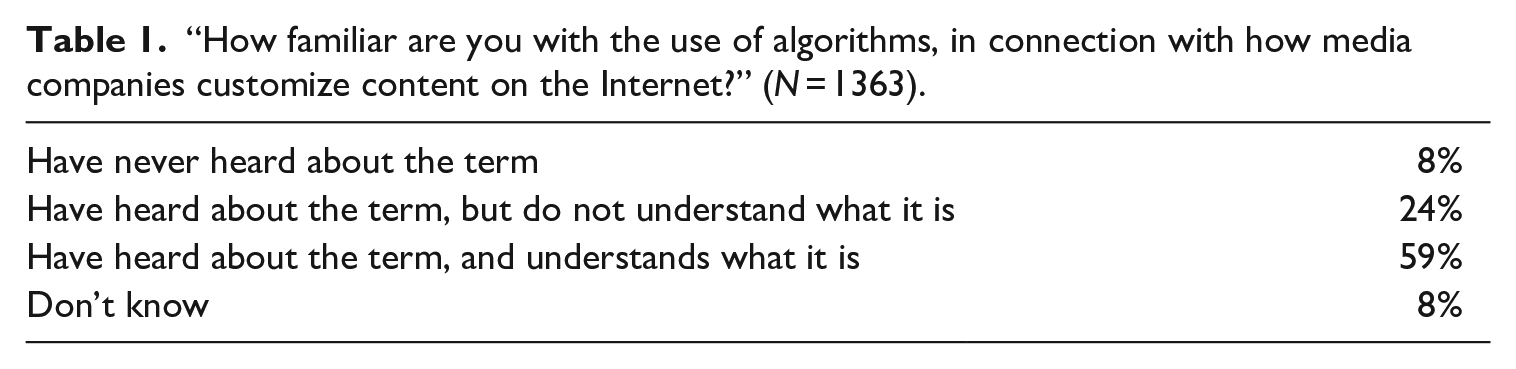

We base our analysis on open-ended answers in informants’ own words, solicited as part of a two-step question segment in the survey. The first step asked “How familiar are you with the use of algorithms, in connection with how media companies customize content on the Internet?” 2 The alternatives were intended to distinguish between respondents recognizing the term, and those declaring understanding of it. Table 1 shows the distribution of answers to this question.

Table 1. “How familiar are you with the use of algorithms, in connection with how media companies customize content on the Internet?” (N = 1363).

Closer inspection shows that the young are more likely to reply that they understand algorithms, and so are male respondents (66% vs 52%) and respondents with a university degree (68% vs 47%). While the question asks about algorithms in the context of customization of online media content, it seems likely that familiarity with algorithms from for instance work or education could strengthen the likelihood of claiming to understand. In the second step, those who declared not just recognition but understanding (59%, N = 804) were asked in an open-ended question “Do you see any negative or positive consequences of media companies’ use of algorithms?” They were given an open text field with instructions to “write down some keywords or move on if you do not have an opinion.” The question produced a total of 553 replies, ranging from one-word entries to full paragraphs.
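The relation between these figures can be checked with a few lines of arithmetic. The sketch below is only an illustration and not part of the survey analysis: the variable and function names are hypothetical, and the counts are the ones reported above.

```python
# Counts as reported in the text; names are illustrative assumptions.
total_respondents = 1363  # full survey sample
eligible = 804            # declared understanding of algorithms
open_replies = 553        # answered the open-ended question


def share(part: int, whole: int) -> int:
    """Return part/whole as a percentage, rounded to a whole number."""
    return round(100 * part / whole)


share_eligible = share(eligible, total_respondents)
share_replied_of_eligible = share(open_replies, eligible)
share_replied_of_total = share(open_replies, total_respondents)

print(f"Eligible for the open question: {share_eligible}% of the sample")
print(f"Replied, among those eligible: {share_replied_of_eligible}%")
print(f"Replied, among the full sample: {share_replied_of_total}%")
```

Read this way, roughly two in three of those who were asked the open question wrote something, which corresponds to about four in ten of the full sample.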

Before elaborating on how we analyzed this material, it is essential to reflect upon its strengths and limitations for analyzing folk theories of algorithms. As argued above, we consider it important to expand with empirical studies from non-US contexts, with a range of respondents as facilitated by a survey, and the possibility to assess findings in light of demographic divides. However, asking questions to facilitate the articulation of “folk theories” is a tricky matter. It would likely be counterproductive, particularly in a survey, to bluntly ask people to write down a “theory.” Instead, we argue, our question is fruitful to the articulation of folk theories because it asks respondents to envision consequences and normatively consider these. To envision consequences means that respondents have to consider if algorithms do something, and what this might be. As an inroad to analyze folk theories, this goes to the core of what people believe algorithms to be and do. To further ask for normative judgments could potentially push toward binary classifications (especially, we might consider if neutral judgments should have been more strongly invited). Yet, in light of the dark sides of datafication discussed above, the choice of “positive or negative” opens up a space for also seeing algorithms in a favorable light, and this might be needed to produce balanced responses.

In a survey, the wording of the question(s) and their placement in the questionnaire give cues to respondents. The survey included several other items related to online news, social media, advertising and entertainment, and to personalization of media content. As such, we believe the respondents were “primed” to think broadly about algorithms in the media. Still, the survey emphasized journalism and social media more than fiction or gaming, which, we suspect, might have colored responses. More fundamentally, the focus on media literacy could in itself prime informants toward demonstrating critical abilities.

We have conducted a qualitative thematic analysis with a flexible and predominantly inductive approach. First, replies were categorized according to whether their views on the effects of algorithms were positive (a minority), neutral (hardly any), mixed (many), or negative (a majority). Next, to identify “folk theories,” we read all entries again and pieced together full or partial responses that alluded to potentially shared understandings (following Nielsen, 2016). Our ambition was not to conduct a thematic categorization of all subcategories in the material, 3 but to group replies alluding to similar operational theories (DeVito et al., 2017) of what people believe algorithms do to media experiences, while also searching for more abstract theories of what algorithms are or mean to people. Categorizing these shared understandings, we formulated a first set of theories, then revised the theories over time, with both authors conducting further readings of the material.

Afterwards, we coded all replies to assess whether and how they corresponded to a set of five folk theories, seeking a more systematic overview of the prevalence of each theory, and to investigate if groups of respondents were prone to express particular theories. The authors coded the same 50 replies independently and discussed each of these to decide how to judge difficult cases, before one author coded the rest of the material. 4 Some respondents draw upon several theories at once, and we will comment on how such interplay appeared.

Five folk theories of algorithms

From our analysis we identify five folk theories of algorithms: (1) Algorithms are confining, (2) Algorithms are practical, (3) Algorithms are reductive, (4) Algorithms are intangible, and (5) Algorithms are exploitative. Following the distinction developed by DeVito et al. (2017), the first three theories are operational in describing workings of algorithms, while the latter two are less specific. However, as they appear in our material, the theories can be distinguished differently: The first two focus on what algorithms do to media experiences, the third on how algorithms represent users, while the latter two question power structures. We will present the theories one by one, before discussing the joint insights they bring forward regarding user reactions to datafication.

Folk theory 1: Algorithms are confining

The first folk theory is that algorithms are confining: They narrow your world view by feeding you more of what you have expressed interest in, more of what you already know, rather than expand your horizons or challenge your beliefs. This folk theory builds on insight into how algorithms work with digital traces, and posits that such functions capture users within an increasingly narrow frame of knowledge. The theory is clear and prevalent in the material, and examples include:

Narrows down the flow of information. We get lots of stories that confirm what we already believe and are interested in, maybe less that does not fit, and that leads to confirmation bias. We don’t get the same information, we get the information that confirms what we already know. Definitely negative because [algorithms] tend to steer the content on the websites I visit based on my prior actions. I get a narrowed down news flow with few objections and little information which challenges what I already have read, bought, clicked on, etc.

Some respondents frame this problem as confirmation bias or rephrase arguments from debates on selective exposure. Notions of echo chambers and filter bubbles, while academically contested (e.g. Bruns, 2019), are alive in the material. What is at stake, referred to explicitly by some, is the public sphere ideal of a shared meeting-place for a broad spectrum of ideas, or in the context of news, the general news agenda.

The theory of algorithms as confining is an operational theory describing a particular feature of algorithms, but one that is so central to their application that it appears generalized. Some replies describing the theory are neutral in tone, but many express that context is decisive for how problematic the generalized confining effect is. Following the wording of the question on positive and negative consequences of algorithms in the media, many replies provide examples of both:

I am in favor of targeted advertising. It is problematic if the visibility of news, and particularly opinions, is controlled by algorithms so that echo chambers are formed. I could be trapped in a silo, where only information that makes me stay with the media outlet is reported to me. That is good if it keeps irrelevant sport journalism away. Also good for avoiding gossip I don’t care about. Bad if it keeps me from important information. [. . .] Last but not least it could give me a wrongful impression of reality. If I only come across happy outdoorsy pictures of people on Facebook I might (and I have) think that everyone else is doing fine and my problems are weird.

As these replies indicate, some consider the confining effects to be beneficial for filtering away irrelevant advertising, as well as (softer) journalistic content beyond one’s interests. The same effect is considered harmful for news and public debate (mentioned by many), and for socio-cultural representation (mentioned by some), hinting at ideas of exposure diversity as particularly important to certain types of media content. It seems as though respondents draw on a distinction between news and debate as representing a public sphere where confining effects are problematic, contrasted with entertainment and particularly shopping, which are assumed to be already individualized.

“Algorithms are confining” is the most widespread theory in the material (41% of responses), more frequent among younger age groups, and among respondents with higher education. If we compare the proponents of each theory on a different survey question about the privacy settings on their Facebook account, we see a tendency that those finding algorithms to be confining more often claim to have strict privacy settings (26%). They also report comparatively high levels of other digital skills.

Folk theory 2: Algorithms are practical

Our second folk theory is that algorithms are practical: They are helpful in sorting through cascades of information to prioritize what is most relevant. This theory is closely related to the one we discussed above: The theory of algorithms as confining is the evil twin of the theory of algorithms as practical. Both build on the same operational understanding of what algorithms do, but “algorithms are practical” underlines that they are good for something:

No, I’d rather have ads based on interest and what I look for/search for online, instead of being loaded with ads I have no interest in whatsoever. That is how it is these days. Positive when I look for something specific. Negative: that conspiracy theories gain influence because they are put first in rankings after searches. Positive: you do not have to spend so much time in an enormous heap of information to find hits of relevance.

As indicated here, some respondents describe algorithms as general information-sorting devices. For most, however, the theory of algorithms as practical is connected to consumerist experiences with marketing, shopping, and online services. It seems that notions of individual personalization are already internalized as part of consumer culture, and therefore appear less problematic (Draper and Turow, 2019). Some express that ads are a necessary evil of the media, and of capitalist society, and that the algorithm might as well get you directly to the products most relevant for you. However, even located within shopping experiences, positive and negative effects are juxtaposed:

Adapting to users can be a good thing, but also very negative by creating pressures to buy, entice (ignorant) customers and so on. Positive: Offers adapted to the individual, that they might not know existed. Negative: A constant nagging to buy.

As these replies indicate, algorithms are considered practical, but not met with warm feelings. People understand that algorithms are there for a reason, and that they could make media use more efficient, productive or interesting – but also that this is a trade-off with other negative effects. Similar ambivalences are found in Siles et al.’s (2020) study of folk theories of Spotify recommendations, considering the algorithm as a “surveilling buddy,” helpful but at times too intense.

Almost as widespread as the first theory (34% of responses), “algorithms are practical” is more often stated by the younger age groups: 42% of the relevant responses are by people under 30 years old. There is a slight tendency that the theory is expressed more often by those with lower educational levels. Like the theory of algorithms being confining, the idea that they are practical is also linked to self-reports of strong digital skills.

Folk theory 3: Algorithms are reductive

The third folk theory is that algorithms are reductive: They work with stereotypes and simplified notions, they make stupid and annoying mistakes, and thereby give crude and limited portrayals of human experience and identity:

Negative to be put in a “box” and get adapted content depending on random clicks. We are placed in a “box,” targeted influence. Coincidences can “define” the user.

With the “box” as a prevalent metaphor, respondents describe uncomfortable experiences of being (wrongfully) represented through algorithms that paint a simplified image of their interests. These experiences relate to the confining but potentially practical effects we already described, but the theory of algorithms as reductive is focused on how these algorithmic effects represent users, seeing selection of media content as a mistaken image of user identity. In a related vein, one of Lomborg and Kapsch’s (2019) informants, a non-binary person, described “oversimplified, stereotyped boxing” (p. 10). In light of the current debate on how oppression can be reinforced by algorithms (Couldry and Mejias, 2019; Noble, 2018), we note that our informants do not necessarily voice their critique as political, nor as connected to social groups suffering greater injustices from stereotyping. Instead, they articulate a personal feeling of caring how data represents their identities (Rettberg, 2009).

A recurring example in the material concerns crude algorithmic representation through coincidental or embarrassing search histories serving up limited information:

What you click on the web doesn’t need to be in keeping with what you are interested in, and the algorithms can easily go wrong. In addition, you feel surveilled. It follows us around way too much. If I search for a place on the map for my mom, that doesn’t mean I’m interested in hotels and flight tickets for that place. Doesn’t separate between random online choices and deliberate clicks you make. Doesn’t separate between the intention behind e.g. a Google search. [. . .] The more I do, and leave traces of online, the more “food” does the algorithm get to base its decisions on, without separating between what I do with a purpose and not. Especially negative experience of “big brother” and that we are not alone on the Internet even though the algorithm is math, and not a physical person who watches you.

Here, the algorithm is portrayed as a stupid but persistent creature who follows you around the web, who gets things wrong, who neglects your intentions and deeper meanings, and nevertheless sends its own misconceptions back to haunt you. Respondents describe that they are followed and stalked, and refer to misleading interpretations of what they are truly interested in. The experience of being watched is uncomfortable, but it is particularly provocative because they feel misunderstood on a personal level. Even actively feeding the algorithm, an opportunity the last quote above suggests, will not help because there is no easy way of weeding out the crude miscalculations.

While this is a less frequently expressed theory (12% of the responses), it is interestingly the only theory with a gender bias: Women express it more often than men. It is rare among the oldest age groups, and we find no educational differences. Compared to the two previous theories, those who express that algorithms are reductive report lower digital skills.

Folk theory 4: Algorithms are intangible

The fourth folk theory is that algorithms are intangible: their workings and consequences are difficult to grasp, their power is opaque, and lack of precise insight causes negative feelings of suspicion and foul play.

Sneaky. You get affected by invisible forces. Influences the consumers without them thinking about it. Step inside a persons’ free will and borders without notice.

Here, phrases such as “free will” indicate that personal autonomy is at stake, while the forces behind the algorithm are “invisible” and “sneaky.” The material includes several short replies that can be interpreted as articulating such a theory – and it is understandably difficult to expand on vague suspicions of invisible manipulation.

Some informants draw upon historical or current examples of surveillance at a societal level to exemplify what these forces might be, particularly the notion of “big brother”:

Negative that “big brother” to a large extent influences what content you as reader get in your feed. That means commercial actors or actors with their own agenda can control the agenda for very many people. Not even the wet dream of totalitarian East Germany was as all-foreseeing as algorithms, because my private life can be harvested and used, cf. Carnegie Analytica [sic.] and Facebook.

Compared to theories whose proponents demonstrate knowledge about the workings of algorithms, the theory of intangibility emphasizes lack of precise understanding. It is articulated as a cultural condition focused on the possibility of manipulative surveillance. As the open survey question was only posed to respondents who first indicated knowledge of algorithms, this theory is not held by those who have never heard of them, but by those who feel they still know too little. A basic familiarity with algorithms does not preclude an imagination of indefinability.

Experiences of intangible algorithmic surveillance refer to conditions of limited transparency and technical features that are black-boxed (Bucher, 2017; Dencik and Cable, 2017). Some of these responses are akin to the affective encounters reported by Lomborg and Kapsch (2019), and the kind of emotional responses discussed by Ruckenstein and Granroth (2019). Colbjørnsen (2018) found that some users view intangibility as inherent to helpful algorithmic recommendations, although they articulate this in the context of finding relevant entertainment rather than news or social media. The theory of algorithms as intangible is not operational, but an abstract theory that articulates emotions in encounters with algorithms.

The theory of algorithms as intangible was fairly widespread (36% of responses) and more often found among those with lower educational levels. At the same time, many respondents who hold such views on algorithms report high digital skills and awareness of privacy issues. This speaks to the duality inherent in this theory: you express skepticism toward something you think is problematic, but have a hard time putting your finger on the exact spot.

Folk theory 5: Algorithms are exploitative

The fifth folk theory is that algorithms are exploitative: They are harvesting your information to use for their purposes – such as selling you things. While the theory of algorithms as practical recognized positive aspects of algorithmic selection, or was resigned to notions of ads as a necessary evil, the theory of algorithms as exploitative brings forward a stronger critique of capitalism and consumer manipulation:

Algorithms are things websites have found make consumers stay longer on the site. The website makes more money, people waste more time. From what I know/have understood about algorithms, the negative thing is that we are mapped (i.e. surveilled for registration of our habits, interests, preferences etc). This is done so that the market can tailor and hit the right people with advertising – this is the whole market value of Facebook – so that algorithms are positive to commercial interests – but really negative for us media users/ consumers considering our privacy.

In contrast to the theory of algorithms as intangible, proponents of the theory of exploitative algorithms claim or demonstrate insight into the workings of algorithms, and paint a clearer picture of the forces behind them. The quote above about the market value of Facebook is a case in point. More fundamentally, these replies indicate awareness that algorithms are part of a power dynamic between conflicting interests – the ordinary consumer versus the corporations. At times, these interests can appear to align, as when the algorithm informs you of a product you need, but the long-term goals are nevertheless different:

Advertising is a necessary evil to finance content on the internet. Algorithms ensure that recipients see more relevant ads and thereby increase the chances that advertising fulfils its goal: to lure the recipients into buying something they don’t need. I am completely aware of this and have trained myself to ignore all ads I see. They want to know everything about you to squeeze out as much money as possible. A dream scenario for capitalists! For the rest of us? We will see!

The first respondent quoted here is one of several who articulate third person effects – other people could be influenced – but this reply also suggests a potential for action, joking or arguing that it is possible to train oneself to resist algorithmic manipulation.

This theory (18% of responses) is the male equivalent of the theory of algorithms as reductive: it is more often held by men, and its proponents likewise report fairly low digital skills.

Concluding discussion: Irritating and inescapable

We have identified five folk theories of algorithms: they are confining, practical, reductive, intangible and exploitative. Across the various theories, a number of replies convey emotional reactions that can be summarized with a short and poignant reply from one respondent: “Irritating, but inescapable. . .”. A key finding is therefore that algorithms are perceived as annoying, but integral to media experiences and impossible to avoid.

This leads us to ask if “digital resignation,” a concept applied in discussions of the structural aspects of privacy, could capture more generalized reactions to algorithms, and what the potential for critique of datafication might be, if this is the case. Digital resignation is proposed (Draper and Turow, 2019) as an explanation of the so-called privacy paradox, that “although people say they care about information privacy, they often behave in ways that contradict those claims” (Draper and Turow, 2019: 2). This is one example of how user behavior in conditions of datafication might seem puzzling. Rather than contending that users conduct a rational cost-benefit analysis, Draper (2017) highlights the limited information people have when they make decisions such as accepting or declining a cookie warning. People do not have knowledge to foresee consequences, and do not see opting out as a choice, so that resignation appears as a rational response (Draper and Turow, 2019: 5).

Resignation is about relinquishing, about giving up. Some of the negative feelings expressed in our theories could be interpreted as resignation, ranging from concern for the result of algorithmic selection (“algorithms are confining”), critique of their opaqueness (“algorithms are intangible”), to their stereotyping portrayals (“algorithms are reductive”), and their cynical applications (“algorithms are exploitative”). However, these folk theories also signal an emotional engagement that fits uneasily with a state of resignation. This is not to deny the inaction of most people facing algorithms in the media, nor to challenge the argument that resignation is actively cultivated by the industry (Draper and Turow, 2019). Instead, the collective of our respondents appear resigned about the continued existence of algorithms, but not ready to accept every variety of their application. The theory of algorithms as reductive does not solely portray users as victims of algorithmic bias, but underlines that human identity is too complex for the algorithm to understand. This theory provides nuance to more general descriptions of “surveillance realism” (Dencik and Cable, 2017) by revealing which aspects of the surveillance practices respondents highlight as problematic. The theory of algorithms as practical, while not universally positive, nevertheless builds on an idea that the algorithm could work for you (as also found by Colbjørnsen, 2018). Context is key for our informants, in a way similar to what Nissenbaum (2009) highlights in her concept of “contextual integrity” (see also Lomborg and Kapsch, 2019).

Further, it is interesting to observe the relatively high level of knowledge regarding algorithms that is expressed in our material. As noted, it is probable that the priming of the broader survey would affect knowledge claims in our material, as would the specific empirical case context of Norway as a high ICT use country. However, the five folk theories demonstrate insight into how algorithms work, potentially relevant beyond this specific case, by pointing to challenges noted in prominent scholarship (e.g. Couldry and Mejias, 2019; Noble, 2018) on limited transparency, commercial co-option, and bias reinforcement. This is not to say that our respondents are always correct, or that the self-reported level of knowledge is accurate. It is, nevertheless, striking that so many replies express awareness of key challenges.

Both the emotional engagement and the level of knowledge indicate that user perceptions of algorithms are not fully grasped by the notion of “resignation.” The emotional reactions include some examples of anger, but frustration or annoyance appear more prevalent. We suggest the term “digital irritation” to capture these reactions. Coupled with some overall knowledge of what algorithms do and fail to do, this points to potentials for critical user engagement with algorithmic media. There appears to be a realm for user agency in criticizing algorithms, actively noticing their imperfections, rather than accepting them as seamlessly integrated into media experiences. Likewise, Andersen (2020) concludes that “while algorithms may seduce, oppress, or force us, at the same time, they also invite us to make sense of them” (p. 1492). To interpret is not the same as acting against or escaping datafication, but nevertheless constitutes a contribution to dominant critiques of datafication, which operate on a structural level with fairly sweeping recognition of the role of actual users. If we are interested in figuring out how to counter the problematic consequences of algorithmic biases, or the normalization of surveillance, we are well served by insights into which aspects of everyday experiences with algorithms people are actually concerned with, understand, or find troublesome.

Importantly, the critical potential is most specific concerning how algorithms work and how they could work better – while we find few signs of users proposing to get rid of algorithms. They are thereby resigned to the existence of algorithms, but irritated by their workings. In discussing “digital resignation” to understand users’ relation to privacy issues online, Draper and Turow (2019) refer to contexts where people meet extraordinary circumstances such as a natural disaster, or bad weather at an airport. Arguably, little can be done by the collective of passengers when snow closes down a major transport hub all of a sudden. But bad weather will pass. In contrast, algorithmic media are not a temporary occurrence, and we should take care not to compare datafication to a force of nature. This is perhaps also the most troubling aspect of the folk theories we have identified: people are irritated and critical, but still consider algorithms inescapable.

Acknowledgements

The authors would like to thank Jan Fredrik Hovden for valuable advice in the research process.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship and/or publication of this article: The authors received funding from The Research Council of Norway (Grant No. 247617).